Abstract

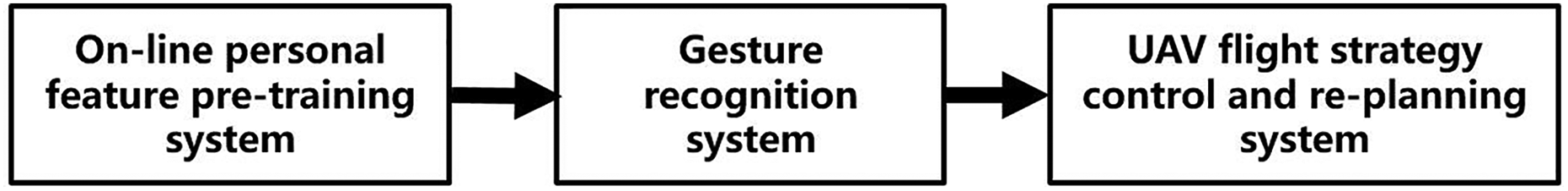

This article proposes an online control programming algorithm for human–robot interaction systems, in which robot actions are controlled by the recognition results of gestures performed by human operators and captured as visual images. In contrast to traditional robot control systems, which use predefined programs and therefore cannot change the robot’s tasks freely, this system allows the operator to train online and replan human–robot interaction tasks in real time. The proposed system comprises three components: an online personal feature pretraining system, a gesture recognition system, and a task replanning system for robot control. First, we collected and analyzed features extracted from images of human gestures and used those features to train the recognition program in real time. Second, a multifeature cascade classifier algorithm was applied to guarantee both the accuracy and real-time processing of our gesture recognition method. Finally, to confirm the effectiveness of our algorithm, we selected a flight robot as our test platform to conduct an online robot control experiment based on the visual gesture recognition algorithm. Through extensive experiments, the effectiveness and efficiency of our method have been confirmed.

Keywords

Introduction

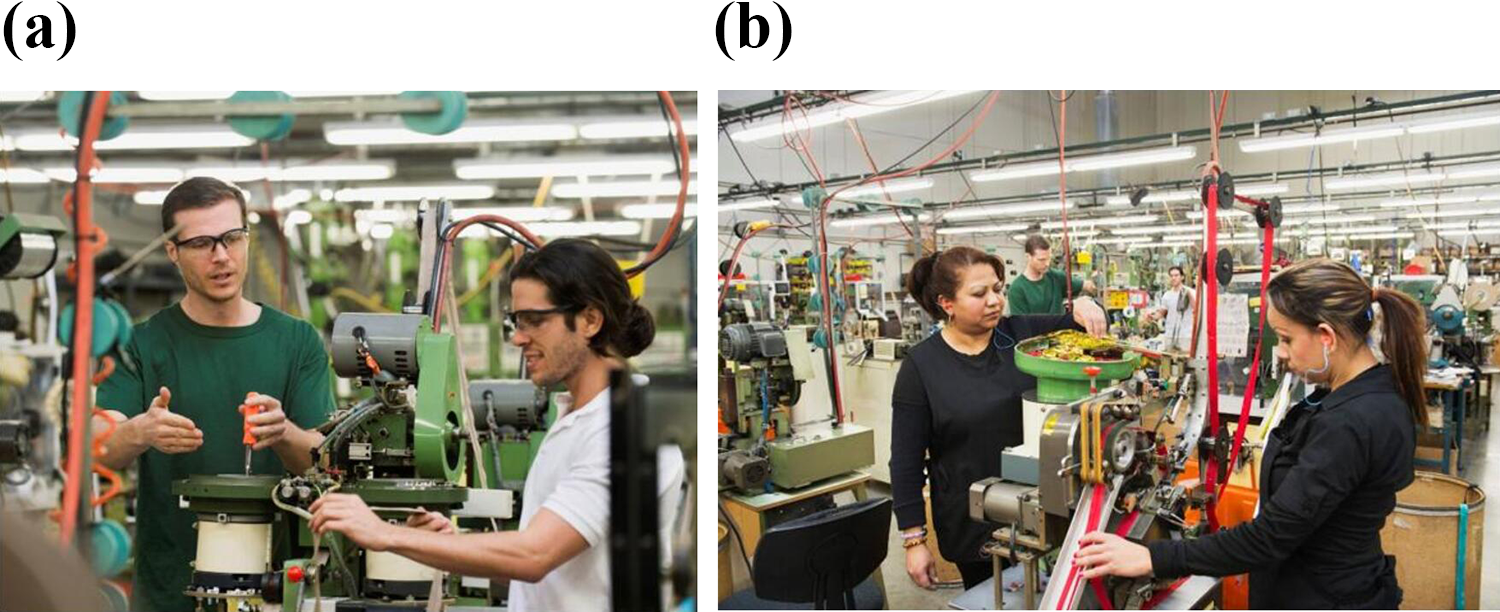

With the rapid development of robots, the demand for smart/intelligent robots and devices is growing quickly. 1 –3 One of the most important metrics for evaluating a smart robot is its ability to understand operator commands and follow directions, which is known as human–robot interaction (HRI). 4 –6 The earliest HRI systems were controlled through mechanical valves and rockers. 7 Later, computer-based graphical interfaces controlled by mouse and keyboard input greatly improved the usability of HRI. 8 Touch screen-equipped devices have provided further usability improvements for HRI tasks. 9 However, in contrast to interaction among humans, all of these methods require the operator to touch a device to control the robot (as shown in Figure 1), which is not in line with people’s interaction habits and requires a long period of specialized training when operating certain types of robots, such as industrial robots and unmanned aerial vehicles (UAVs). 10

Human–robot interaction system with touch-based manipulation methods. (a) Special task telecontrol robot. (b) Industrial remote control robot.

In addition to these touch-based manipulation methods, HRI could also be achieved by various non-touch methods, such as speech recognition, 11 electromyography (EMG) signal force sensors, 12 and vision-based gesture recognition. 13 –17 Speech recognition is one of the most convenient methods and can directly recognize human voices using a hidden Markov model method, among others. However, it also has problems such as high performance degradation due to the huge variety of human accents. Compared to speech recognition, EMG signals can achieve a higher recognition rate of human actions, but the operator needs to wear special equipment, which restricts this method from being applied to industrial environments, as complex electromagnetic signals produced by surrounding machines can easily interfere with the wireless signal transmission between the EMG sensor and the computer.

Compared with other human communication methods like facial expression, eye tracking, or head movement, human gestures are more easily understood, 18,19 and more complex information can be expressed with different gestures (as shown in Figure 2). Vision sensors are seldom affected by such electromagnetic interference, and vision-based methods have the advantages of convenient interaction, rich expression, and interactive nature. 20 –22

Human–robot interaction system with gesture recognition methods. (a) Gesture interactive industrial robot. (b) Aerial camera UAV with gesture control function. (c) Unmanned taxi with gesture recognition function. (d) Disaster rescue UAV with gesture control function. UAV: unmanned aerial vehicle.

However, most vision-based HRI technologies are difficult to apply to real-world applications that require high accuracy or have a low tolerance for interference, such as industrial robots and unmanned vehicles. 23,24 This may have a variety of causes, such as interference from complex illumination conditions, cluttered backgrounds that happen to contain hand-shaped components, the presence of the hands of people other than the operator within range of the sensors, and the different operating habits of each operator. 25 –28

To solve the problems mentioned above, we propose a novel vision-based HRI system. The main contributions of our work are as follows. First, by introducing an online training-based real-time visual gesture recognition method into the robot control system, personalized online training based on small samples can be realized. Second, we propose a novel real-time multifeature cascade classifier algorithm for gesture recognition, which considers both efficiency and accuracy. Third, we achieve an online task replanning function for robot control, which means the operator can flexibly alter robot tasks in the case of unforeseen events. This is in contrast to conventional robot control systems, in which the operator can only change robot task plans by changing the program, which is an additional challenge for operators without programming ability. Finally, we used a UAV platform to verify the reliability of the system. With the online pretraining and task replanning system, our gesture recognition system correctly recognized operator gestures at a rate of nearly 100% under complex illumination and cluttered backgrounds, and robot control policies could be changed in real time.

In this article, we selected a UAV 29 as a test platform for gesture recognition. We made this selection mainly for two reasons.

The first reason is the UAV’s low fault tolerance relative to other types of robots: a single operator input error could result in the robot crashing into the ground. 30 For this reason, the human gesture recognition system was designed to translate gestures to robot control commands such that each command corresponds to a unique human gesture, so that no false positives are produced by the gesture recognition method. We believe that if a control method based on gesture recognition can satisfy the safety requirement of a UAV flying task, it could also be applied to control other robots such as smart vehicles, industrial cooperation robots, and nurse robots.

The second reason is that traditional UAV control methods, including remote controls, rockers, ground stations, and other special equipment, must be operated by professionals, which is not conducive to the promotion of UAV applications. 31,32 Requiring no control equipment, a gesture-based UAV control method is more natural and more in line with people’s habits, so the application areas of UAVs can be greatly expanded. 33 As the examples in Figure 2(b) and (d) show, an aerial camera UAV can respond to people’s gestures, 34 and a disaster rescue UAV can respond to people who make rescue gestures without a remote control during the search process. 35

Methods

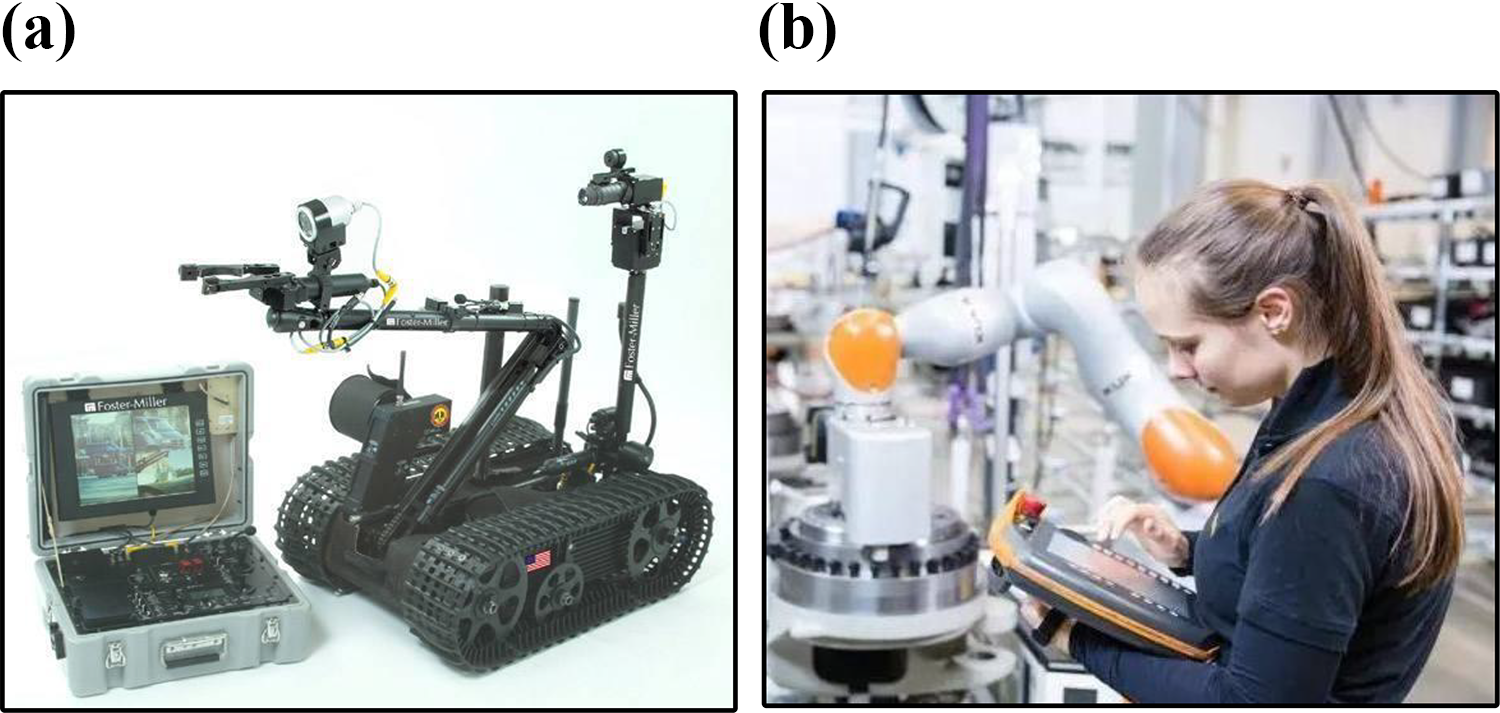

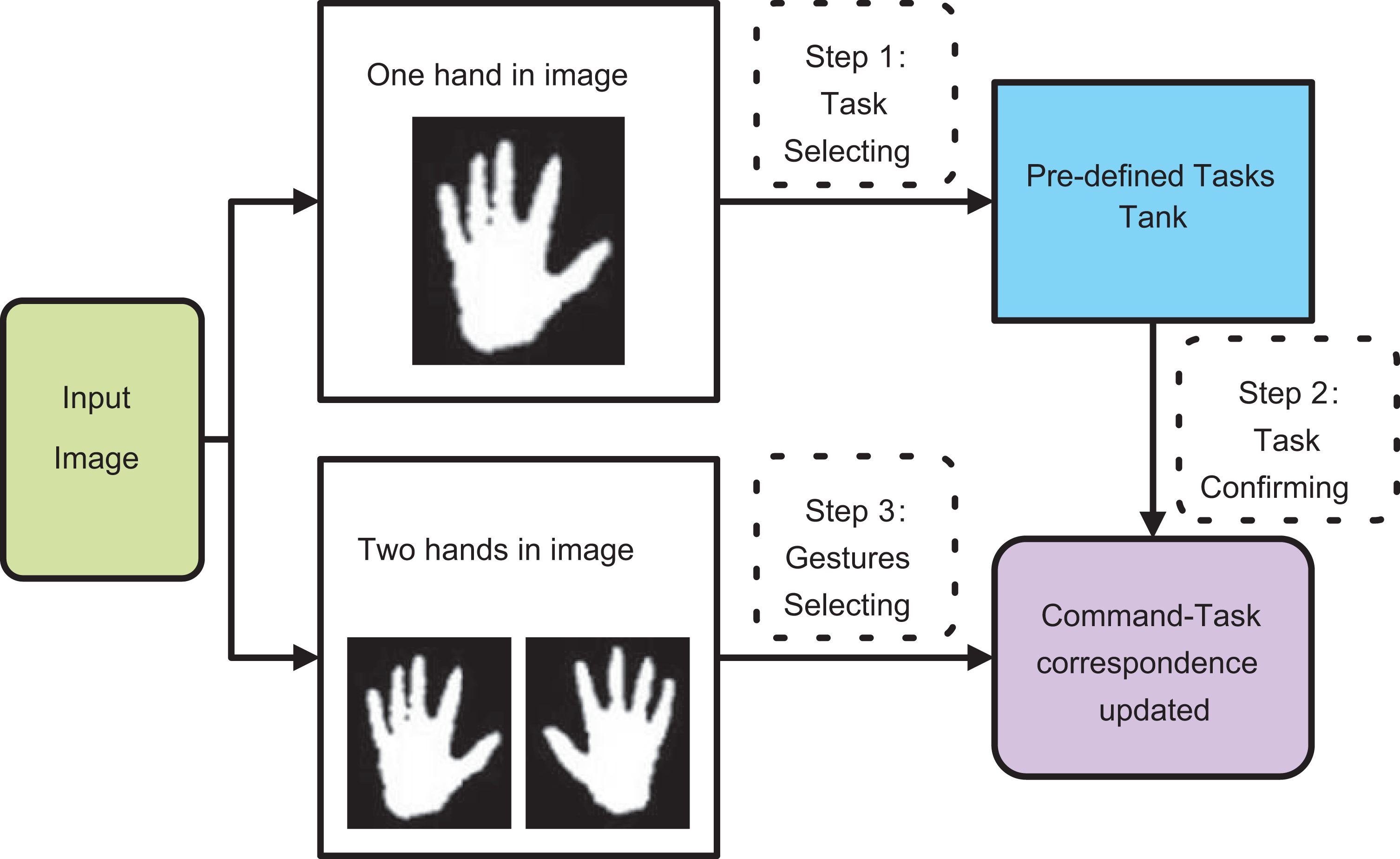

The flow chart of the proposed system is shown in Figure 3. The first part of the system is the online personal feature pretraining system. Before operation, the operator is requested to show a few gestures to be collected by the camera and analyzed by the online personal feature pretraining system. The processed feature data will then be stored into the operator’s personal feature library (PFL). The second part is the gesture recognition system, where a cascade classifier is applied to the PFL for gesture recognition. The third part is the UAV flight strategy control and replanning system, where each flight strategy corresponds to a unique gesture (or the unique combination of two-hand gestures), and the operator can switch control strategies by performing different gestures.

Flow chart of our system.

Online personal features pretraining system

As shown in Figures 4 and 5, the difficulties of applying gesture recognition-based HRI technology in many application fields include (1) interference from other persons, complex backgrounds, or complex lighting conditions, as shown in Figure 4; and (2) the difficulty for a gesture recognition system to cover the variation of human hands due to age, skin color, shape, and so on, as shown in Figure 5. In addition, even for the same gesture, each operator has their own unique operating habits. To solve these problems, we propose an online personal features extraction method that extracts each operator’s gesture features for recognition.

Interference from the complex background conditions. (a) Interference from other people’s hands. (b) Cluttered background makes gesture recognition difficult.

Huge variations of human hands. (a) Fat hand. (b) Thin hand. (c) Child’s hand. (d) Old hand. (e) Black man’s hand. (f) White man’s hand.

Online personal features pretraining system.

First, the system collects several images of different gestures of the operator (Figure 6). From these images, the mean values of hue, saturation, and value (HSV) are extracted from the inner region of the hand for every frame (Figure 7). The HSV space range of the region of interest (ROI) can be derived by calculating the mean and variance of the HSV values and restricting the result to a reasonable range (0–255). Based on the HSV space range, we can extract the hand region and generate a binary image, from which the mean and variance of the features of different gestures can be derived. Finally, the HSV space range and the mean and variance of the features are stored in the PFL.
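The HSV range step described above can be sketched as follows. This is only an illustrative sketch, not the authors' implementation: the function names `hsv_range` and `in_range`, the width factor `k`, and the use of the population standard deviation are assumptions made here, since the text only states that the range is derived from the mean and variance and clipped to 0–255.

```python
from statistics import mean, pstdev


def hsv_range(pixels, k=3.0):
    """Return per-channel (low, high) bounds from HSV samples.

    pixels: iterable of (h, s, v) tuples sampled from the inner hand region.
    k: hypothetical width factor in standard deviations (not from the article).
    """
    bounds = []
    for channel in zip(*pixels):
        mu = mean(channel)
        sigma = pstdev(channel)
        low = max(0, int(mu - k * sigma))             # clip to the 0-255 range
        high = min(255, int(mu + k * sigma + 0.5))
        bounds.append((low, high))
    return bounds


def in_range(pixel, bounds):
    """True if every channel of the pixel lies inside its (low, high) bound."""
    return all(lo <= c <= hi for c, (lo, hi) in zip(pixel, bounds))


# Usage: learn the range from a few glove pixels, then threshold new pixels.
samples = [(100, 200, 250), (110, 210, 255), (90, 190, 245)]
glove_bounds = hsv_range(samples)
mask_value = 255 if in_range((102, 205, 248), glove_bounds) else 0
```

Applying `in_range` to every pixel of a frame yields the binary image from which the shape features are later computed.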

Hand region.

Next, we extract short videos of several kinds of operator gestures using the online personal features pretraining system. In these experiments, we selected five gestures: vertical palm, vertical knife, horizontal palm, horizontal knife, and paper. The hand gesture feature information consists of angle, aspect ratio, and convex hull of the gestures (Figure 8). After color filtering and mean-shift 36 clustering, gesture angle and aspect ratio can be derived by applying principal component analysis 37 to the clustering area.
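As a rough illustration of how an angle and an aspect ratio can be obtained from principal component analysis, the sketch below works on the pixel coordinates of a segmented region. The function name `pca_angle_and_aspect` and the definition of the aspect ratio as the ratio of the standard deviations along the two principal axes are assumptions made here; the article does not specify the exact definitions.

```python
import math


def pca_angle_and_aspect(points):
    """Estimate orientation and elongation of a 2D point cloud.

    points: list of (x, y) pixel coordinates of the segmented hand region.
    Returns (angle in degrees of the major axis, major/minor spread ratio).
    """
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / n
    syy = sum((y - my) ** 2 for _, y in points) / n
    sxy = sum((x - mx) * (y - my) for x, y in points) / n
    # Eigenvalues of the 2x2 covariance matrix [[sxx, sxy], [sxy, syy]].
    half_trace = (sxx + syy) / 2
    root = math.sqrt(((sxx - syy) / 2) ** 2 + sxy ** 2)
    major, minor = half_trace + root, half_trace - root
    # Direction of the first principal component.
    angle = 0.5 * math.degrees(math.atan2(2 * sxy, sxx - syy))
    aspect = math.sqrt(major / minor) if minor > 0 else math.inf
    return angle, aspect
```

Note that in image coordinates the y axis points downward, so the sign of the angle is mirrored relative to the usual mathematical convention.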

Description for features. (a) Shape information of gesture. (b) Dip angle of gesture. (c) Convex hull of gesture.

Lastly, we use Graham’s scan method, 38 which is a method of finding the convex hull of a finite set of points. The algorithm proceeds by considering each of the points in the sorted array in sequence (Figure 9). For three points, it determines whether moving from the first two to the third constitutes a left turn or a right turn.

Graham’s scan method.
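For readers unfamiliar with the technique, a compact sketch of Graham’s scan is given below. It is a textbook formulation rather than the authors’ code; the helper names `cross` and `graham_scan` are chosen here for illustration. The turn direction for three points is taken from the sign of the z-component of the cross product of the two edge vectors.

```python
from functools import cmp_to_key


def cross(o, a, b):
    """z-component of (a - o) x (b - o); > 0 means a left (counterclockwise) turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def graham_scan(points):
    """Return the convex hull vertices in counterclockwise order."""
    pts = sorted(set(points))
    if len(pts) < 3:
        return pts
    # Pivot: lowest point, leftmost on ties.
    pivot = min(pts, key=lambda p: (p[1], p[0]))

    def by_angle(a, b):
        # Sort by polar angle around the pivot, nearer points first on ties.
        turn = cross(pivot, a, b)
        if turn != 0:
            return -1 if turn > 0 else 1
        da = (a[0] - pivot[0]) ** 2 + (a[1] - pivot[1]) ** 2
        db = (b[0] - pivot[0]) ** 2 + (b[1] - pivot[1]) ** 2
        return (da > db) - (da < db)

    rest = sorted((p for p in pts if p != pivot), key=cmp_to_key(by_angle))
    hull = [pivot]
    for p in rest:
        # Discard the last hull point while it would create a non-left turn.
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull
```

Interior points and points lying on a hull edge are dropped, so the result contains only the corner vertices of the hand contour.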

Based on equations (3) and (4), we can obtain the mean and variance of the operator’s feature information. Here, for one operator’s different gestures collected by the pretraining system, n is the ID of the hand gesture type, i is the ID of the ith feature of gesture n, Nn is the number of training samples of gesture n, μ ni and σ ni are the mean and variance of feature i of gesture n, and f nki is the value of feature i for the kth training sample of gesture n. We then store them in the operator’s PFL, which hosts the operator’s personal recognition features. These features will be substituted into equation (7) in the section “Gesture recognition system” as an important parameter of the recognition algorithm for this system.

The details of the online training function are shown in Figure 10; with it, the operator can train and update the PFL online or add gesture categories at any time. Each operator’s PFL consists of several sub-modules corresponding to gesture categories, and each sub-module contains data on the various features of that gesture. Whenever a new training sample arrives, μ ni and σ ni of the features in the sub-module corresponding to the sample’s category are updated online in real time based on equations (3) and (4). If the operator wants to add a gesture category, the PFL creates a new sub-module to store the feature interval of the new gesture. Based on this module, the online training function of our system is achieved.
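One way to update a mean and variance sample by sample, without storing past samples, is Welford’s running update, sketched below. This is an assumption about how such an online update could be realized, not the article’s equations (3) and (4); the class names `FeatureStats` and `PersonalFeatureLibrary` are hypothetical, and the population variance (division by the sample count) is assumed.

```python
import math


class FeatureStats:
    """Running mean and variance of one feature (Welford's method)."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the running mean

    def update(self, value):
        self.n += 1
        delta = value - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (value - self.mean)

    @property
    def variance(self):
        return self._m2 / self.n if self.n else 0.0

    @property
    def std(self):
        return math.sqrt(self.variance)


class PersonalFeatureLibrary:
    """Hypothetical PFL: one sub-module (dict of FeatureStats) per gesture."""

    def __init__(self):
        self.gestures = {}

    def add_sample(self, gesture, features):
        # An unseen gesture name creates a new sub-module automatically.
        sub = self.gestures.setdefault(gesture, {})
        for name, value in features.items():
            sub.setdefault(name, FeatureStats()).update(value)


# Usage: feed training samples one at a time as frames arrive.
library = PersonalFeatureLibrary()
library.add_sample("vertical palm", {"angle": 88.0, "aspect": 2.1})
library.add_sample("vertical palm", {"angle": 92.0, "aspect": 1.9})
```

Because each update touches only the statistics of one gesture category, adding a new category does not disturb the categories already trained.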

Online realtime training and PFL updating function. PFL: personal feature library.

In the experiments of this article, the operator wears a pair of gloves whose color differs from the color of other people’s hands, so that the operator can be distinguished and identified (Figure 11). Through color extraction and mean-shift clustering, we can extract and segment the operator’s hand region.

The operator wearing gloves of a certain color. (a) and (c) Scenes of operation with other people’s interaction and (b) and (d) the corresponding binary images, in which the operator has been distinguished.

Gesture recognition system

In our gesture recognition system, the possible regions of human hands are identified according to the gesture features extracted from images captured by a fixed camera. A multifeature cascade classification process is then applied to simultaneously identify the true hand region and classify its category in the PFL.

Multifeature cascade classifier algorithm

A cascade classification, shown in Figure 12, is applied to an ROI, which is considered a true hand region when the recognized gesture id is non-zero (id = 0 means an unrecognized gesture). To select the proper category, we apply equations (5)–(7) to find the ID of the gesture in the PFL with the maximum similarity. When the similarity to a gesture exceeds a predefined threshold, the ROI is classified as the corresponding gesture.

Gesture recognition system.

Here, xi is the value of feature i of the current gesture. P(x ni | μ ni, σ ni) is the similarity between feature i of the gesture in the current image and feature i of gesture n in the PFL. P(x n | μn , σn ) is the similarity between the current input gesture and gesture n in the PFL. Di is a constant for feature i. We use the normal distribution function instead of the Euclidean distance as the maximum probability criterion, so that feature values close to the mean of the basis features obtained from the operator interface receive a greater weight, whereas feature values far from the mean receive a smaller weight. Even so, ambiguity still exists, so we handle it with “0–1” processing: when xi is outside the range (μ ni − 3σ ni − Ni , μ ni + 3σ ni + Ni ), P(x ni | μ ni, σ ni) is set to 0. We substitute the current gesture features into equation (5) and calculate each similarity in turn. The similarity P(x n | μn , σn ) between the input gesture and the nth gesture in the PFL is calculated by equation (6), in which c is the number of feature categories. GN is the number of gesture types in the PFL, and our method selects the id corresponding to the maximum value of P(x n | μn , σn ) as the current gesture recognition result by equation (7). If every P(x n | μn , σn ) is 0, the current input is rejected as an unrecognized gesture (“rejected sub-window”).
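The decision rule described above can be sketched roughly as follows. Several details are assumptions made for illustration, because equations (5)–(7) are not reproduced here: the per-feature similarity is taken as an unnormalized Gaussian scaled by a constant `d`, the quantity Ni is treated as an extra margin on the 3σ interval, σ is treated as a standard deviation, and the per-feature similarities are combined by multiplication so that a single out-of-range feature rejects the gesture. The names `feature_similarity` and `classify` are hypothetical.

```python
import math


def feature_similarity(x, mu, sigma, margin=0.0, d=1.0):
    """Gaussian-shaped similarity with "0-1" processing outside the 3-sigma band."""
    if not (mu - 3 * sigma - margin < x < mu + 3 * sigma + margin):
        return 0.0
    if sigma == 0:
        return d  # degenerate case: only the margin defines the band
    return d * math.exp(-((x - mu) ** 2) / (2 * sigma ** 2))


def classify(features, pfl, threshold=0.0):
    """Return the id of the most similar gesture, or 0 if every gesture is rejected.

    features: {feature name: value} for the current ROI.
    pfl: {gesture id: {feature name: (mu, sigma, margin)}}.
    """
    best_id, best_score = 0, 0.0
    for gesture_id, stats in pfl.items():
        score = 1.0
        for name, (mu, sigma, margin) in stats.items():
            score *= feature_similarity(features[name], mu, sigma, margin)
            if score == 0.0:
                break  # cascade: stop at the first failed feature
        if score > threshold and score > best_score:
            best_id, best_score = gesture_id, score
    return best_id


# Usage with two hypothetical gestures that differ only in angle.
library = {
    1: {"angle": (90.0, 5.0, 0.0), "aspect": (2.0, 0.2, 0.0)},
    2: {"angle": (0.0, 5.0, 0.0), "aspect": (2.0, 0.2, 0.0)},
}
result = classify({"angle": 88.0, "aspect": 2.1}, library)
```

Stopping at the first zero similarity keeps the per-frame cost low, which is the usual motivation for a cascade structure.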

UAV flight strategy control and task replanning system

Because of the high speed and flexibility of UAV flight, the main difficulty in using visual recognition technology to control UAV motion lies in the requirements of reliability and real-time speed. The algorithm we chose to control the UAV is H-infinity control. 39 H-infinity techniques have an advantage over classical control techniques in that they are readily applicable to problems involving multivariate systems with cross-coupling between channels.

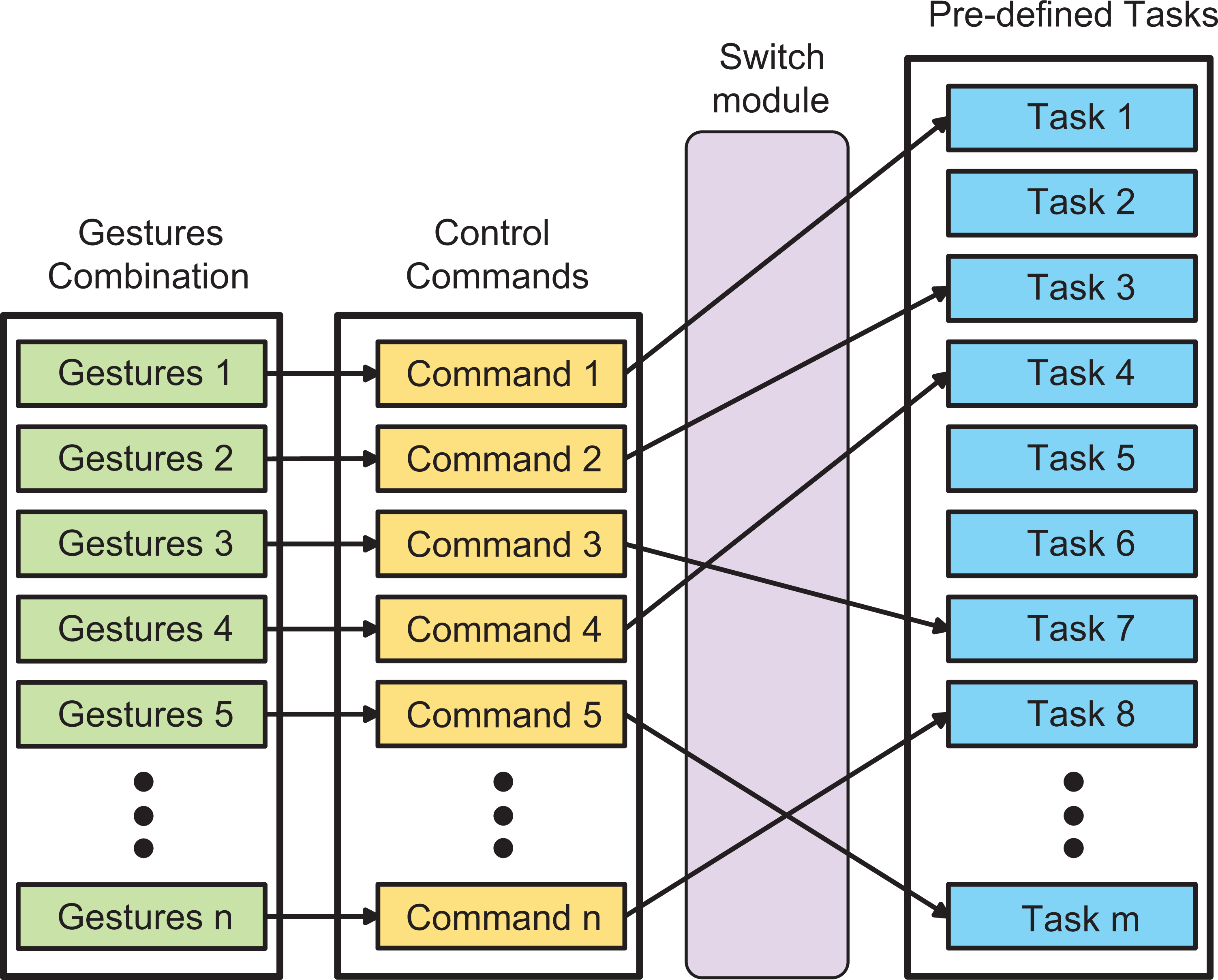

We proposed the online personal features pretraining system in the section “Online personal features pretraining system” and applied it to the robot control system. On the basis of the online pretraining system, we further designed an online task replanning module (as shown in Figure 13), with which operators can flexibly change the robot task, for example when they want to change the correspondence between gestures and tasks or add new gestures corresponding to new tasks.

Task replanning module.

This module consists of four parts (Figure 13). The gesture combination part is composed of the coded combinations of two-hand gestures, as shown in Table 1. Each gesture combination corresponds to a control command, and together these commands constitute the control commands part. Between the control commands part and the predefined tasks part is the switch module, whose details are shown in Figure 14. When the operator wants to change the control strategies while operating, he or she only needs to switch the gesture to “one-hand paper,” and the system immediately jumps to the online task replanning interface (shown in Figure 23 in the section “Experiments of online-training and task-replanning function”). The operator first selects the new task with one hand and confirms it, then shows the new coded gesture combination to the camera; in this way, the user can change the strategies in real time without coding. The predefined tasks part stores predefined robotic tasks; taking the UAV as an example, these include UAV flight attitude control, speed control, aerobatic flight, and other customizable tasks. Some of these tasks are shown in Table 1 and Figure 23.
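The relationship between gesture combinations, the switch module, and predefined tasks can be pictured as a rebindable lookup table, as in the sketch below. All names here (`TaskPlanner`, `bind`, `dispatch`, the gesture codes, and the task names) are hypothetical and chosen for illustration only; the actual command coding is the one given in Table 1.

```python
class TaskPlanner:
    """Hypothetical switch module: maps two-hand gesture codes to predefined tasks."""

    def __init__(self, tasks):
        self.tasks = dict(tasks)  # predefined tasks part: name -> callable
        self.bindings = {}        # (left gesture, right gesture) -> task name

    def bind(self, combination, task_name):
        """Assign (or reassign) a gesture combination to a predefined task."""
        if task_name not in self.tasks:
            raise KeyError(task_name)
        self.bindings[combination] = task_name

    def dispatch(self, combination):
        """Run the task bound to the combination; return None if it is unbound."""
        name = self.bindings.get(combination)
        return self.tasks[name]() if name is not None else None


# Usage: rebinding at run time changes behavior without editing any task code.
planner = TaskPlanner({"ascend": lambda: "up", "land": lambda: "down"})
planner.bind(("VP", "VP"), "ascend")
first = planner.dispatch(("VP", "VP"))
planner.bind(("VP", "VP"), "land")
second = planner.dispatch(("VP", "VP"))
```

Keeping the bindings separate from the task implementations is what allows replanning to happen through gestures alone.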

UAV command code.

UAV: unmanned aerial vehicle.

Switch module.

Experimental results

To evaluate the performance of our algorithm, we performed four experiments. The first experiment was conducted to find the optimal operating distance between the operator and the camera. The second experiment compared the proposed gesture recognition algorithm with other methods to evaluate its performance. The third experiment tested the performance of the online training and task replanning functions. The final experiment tested the performance of the gesture-based robot control function under different conditions.

The four experiments were performed on a notebook PC with an Intel Core i7 2.60 GHz CPU and 8 GB of memory. The images were captured by a Basler acA640 camera set to a resolution of 640 × 480. The composition of our system is shown in Figure 15.

System composition.

Experiment of gestures recognition and the distance

This experiment was carried out because the performance of a gesture recognition algorithm usually relies on the resolution of hand region, as a larger hand region will lead to better recognition performance. For this experiment, we collected four groups of samples against a simple background environment, with each group containing five kinds of gestures, which are shown in Table 1. The sample groups were collected with stepped distances between the operator and camera, at 1.0, 1.5, 2.0, and 2.5 m (Figure 16).

The sample groups were collected with a (a) distance of 1.0 m between the operator and camera, (b) distance of 1.5 m between the operator and camera, (c) distance of 2.0 m between the operator and camera, and (d) distance of 2.5 m between the operator and camera.

From this experiment, we found that when the operating distance was 1.0 m, our algorithm failed to recognize some gestures when the operator’s hands were out of frame, and when the operating distance was 2.5 m or more, the hand areas were too small to be recognized. We therefore conclude that the optimum operating range is between 1.5 m and 2.0 m, at which the precision of recognition reaches 100%. Based on this result, we set the operating distance between 1.5 m and 2.0 m for the experiments in the sections “Comparative experiments among our method and other gesture recognition algorithms,” “Experiments of online-training and task-replanning function,” and “Experiments of UAV gestures control under different conditions.”

Comparative experiments among our method and other gesture recognition algorithms

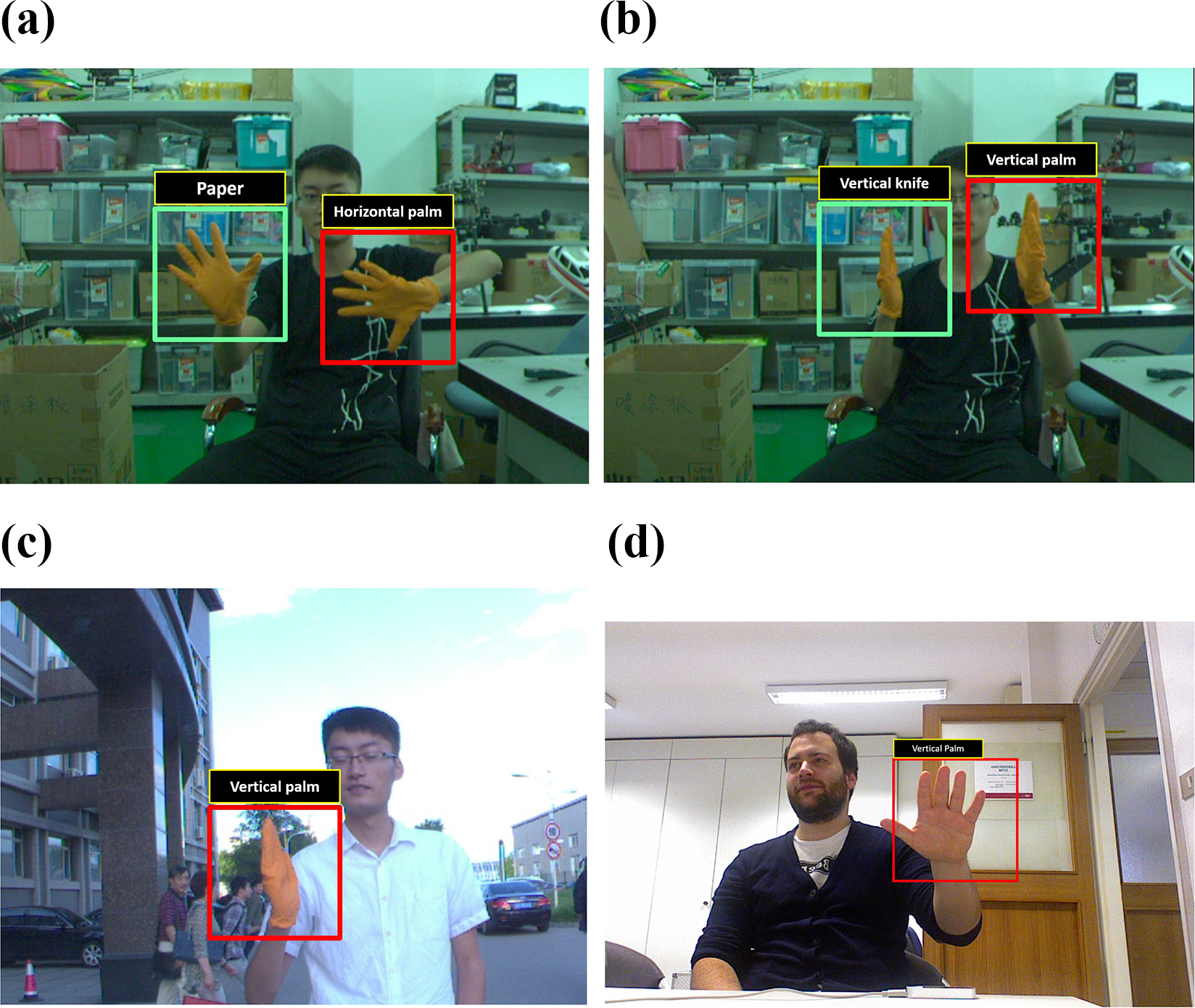

To confirm the effectiveness of our gesture recognition algorithm, in this section we compare our method with five other gesture recognition algorithms: (1) methods based on Convolutional Pose Machines 40 + Resnet34 41 ; (2) methods based on Convolutional Pose Machines + Inception-v3 41 ; (3) methods based on Convolutional Pose Machines + Inception-resnet-v2 41 ; (4) methods based on Convolutional Pose Machines + VGG16 41 ; and (5) methods based on Convolutional Pose Machines + Resnet101. 28 We collected four groups of images under different conditions, shown in Figure 17: (i) simple indoor background; (ii) complex indoor background; (iii) simple outdoor background; and (iv) complex outdoor background. Here, the VGG16, Resnet34, Resnet101, Inception-v3, and Inception-resnet-v2 methods were each trained with the same dataset, consisting of 2000 positive samples and 3272 negative samples selected from other images that were otherwise irrelevant to the test images. Then, we tested these methods on the Microsoft Kinect Leap Motion Dataset 42,43 and the NYU hand pose dataset. 44

(a) Samples under simple indoor condition. (b) Samples under complex indoor condition. (c) Samples under simple outdoor condition. (d) Samples under complex outdoor condition with other people’s interaction.

In detail, the Microsoft Kinect Leap Motion Dataset and the NYU hand pose dataset are composed of different gestures of multiple people collected by RGB-D cameras. Compared with our own dataset, there are two major differences. First, both public datasets were collected in simple indoor background environments, with gesture samples from 10–15 people. Second, the people in the pictures do not wear gloves of a particular color, but each RGB picture is accompanied by its depth map. As the hands are the areas closest to the RGB-D camera in these datasets, we use depth information filtering instead of an HSV threshold to extract the areas of the target hands, which is the only difference in the testing process between our dataset and the two public datasets.

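We used the recall precision curve (RPC) to evaluate all algorithms, where the recall rate and precision are calculated as

$$\text{Recall} = \frac{\sum(\text{CR})}{\sum(\text{GT})}, \qquad \text{Precision} = \frac{\sum(\text{CR})}{\sum(\text{TRR})}$$

Here, “∑(CR)” is the number of correct recognitions, “∑(GT)” is the number of ground-truth gestures, and “∑(TRR)” is the total number of recognition results.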

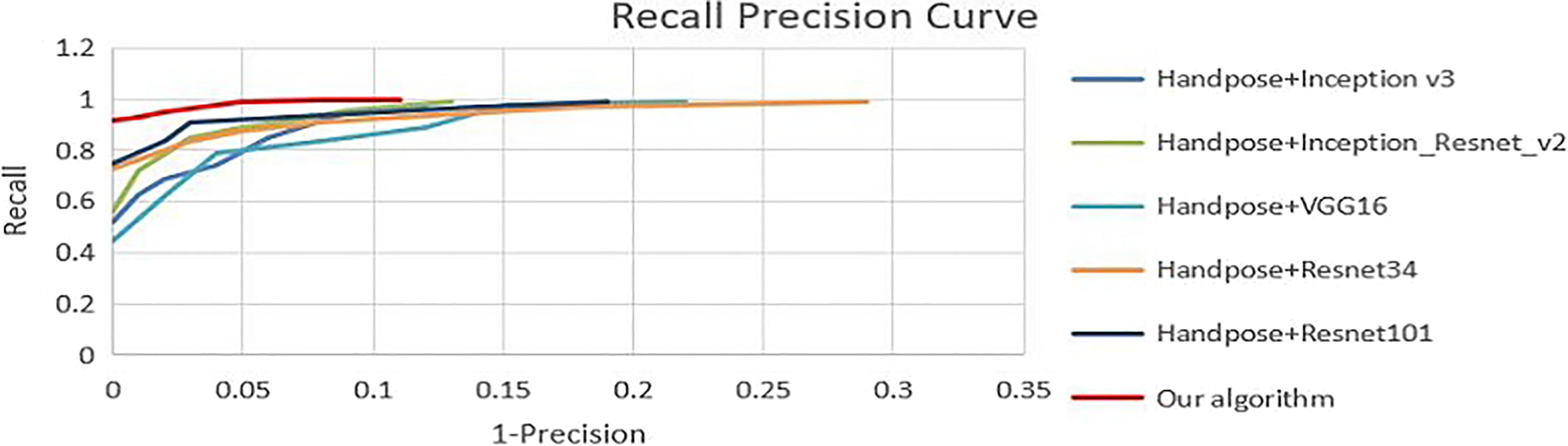

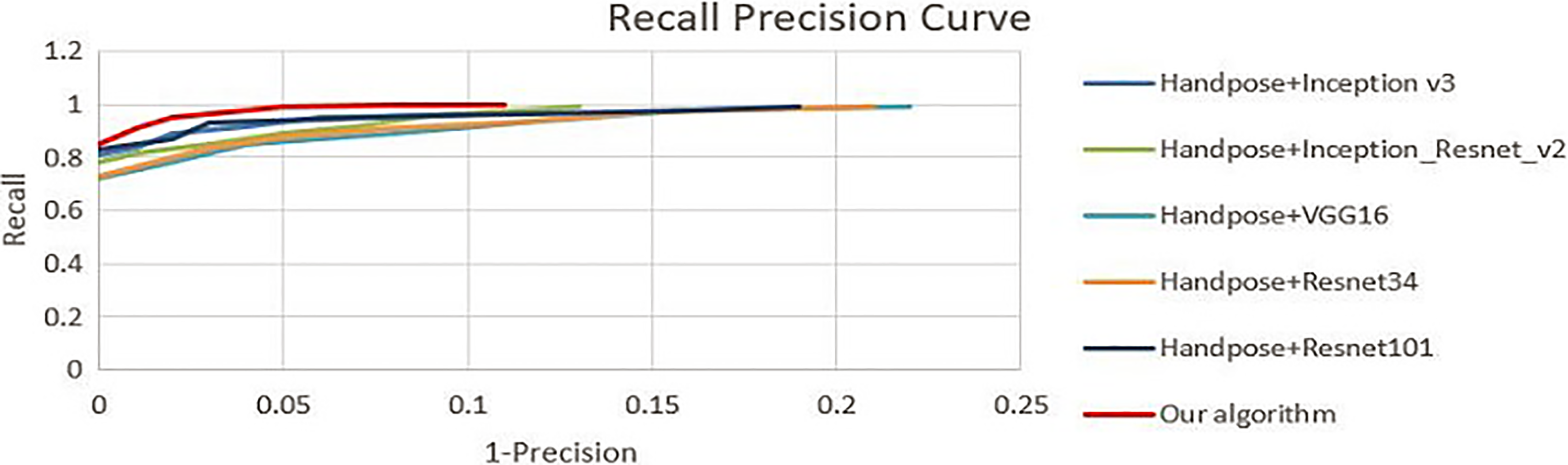

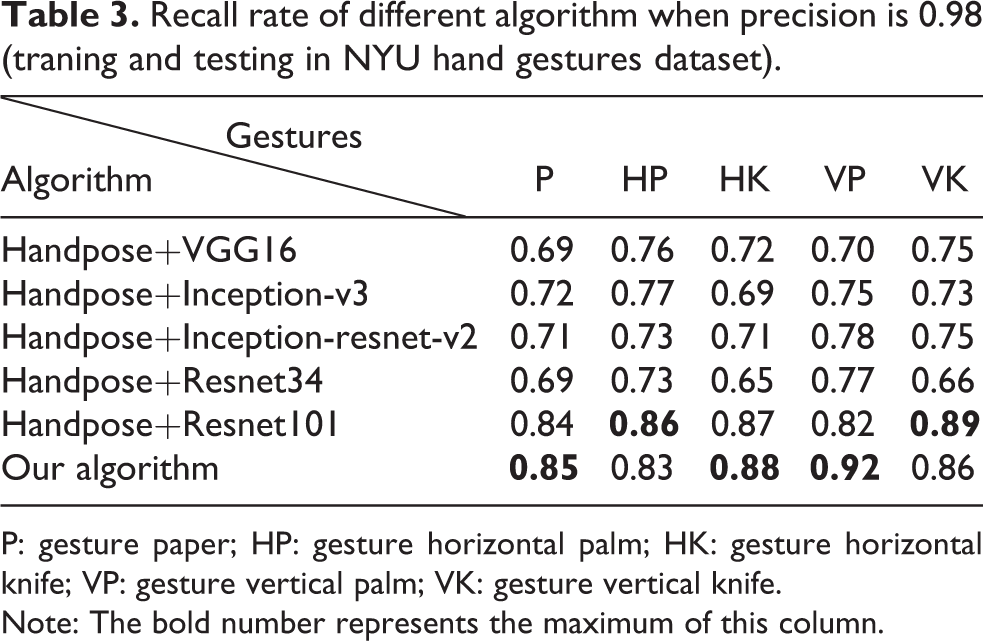

The RPC analysis results of the different methods are shown in Figure 18 (with the training and test datasets collected by us) and Figures 19 and 20 (with the open datasets). The details of the recall rates of the different algorithms when precision is 0.98 are shown in Tables 2 to 4 (“P” represents gesture paper, “HP” represents gesture horizontal palm, “HK” represents gesture horizontal knife, “VP” represents gesture vertical palm, and “VK” represents gesture vertical knife), and some typical cases of false or missed recognition are shown in Figure 21.

The RPC analysis results based on our own dataset. (The recall rate of our algorithm is much better than that of the other algorithms when precision is more than 0.98.) RPC: recall precision curve.

The RPC analysis results based on Microsoft Kinect Leap Motion Dataset.42,43 (The recall rate of our algorithm is 0.97 and that of the other algorithms is below 0.85 when precision is 0.98.) RPC: recall precision curve.

The RPC analysis results based on NYU hand gestures dataset.44 (The recall rate of our algorithm is similar to that of Handpose+Resnet101 and a little better than that of the other algorithms when precision is 0.98.) RPC: recall precision curve.

(a) False recognition based on Handpose+Inception-V3. (b) False recognition based on Handpose+Inception-Resnet-V2. (c) False recognition based on Handpose+Resnet34. (d) False recognition based on our algorithm in the Microsoft Kinect and Leap Motion Dataset. (The green boxes represent the correct and the red boxes represent false results.)

The RPC analysis results of the different methods are shown in Figures 18 to 20 and Tables 2 to 4. On our own training and testing dataset, our algorithm is far more effective (the recall rate is close to 0.97 when precision is 0.98) than the other algorithms (shown in Figure 18 and Table 4). On the Microsoft Kinect Leap Motion Dataset, our algorithm still maintains a certain superiority (shown in Figure 19 and Table 2). On the NYU hand gestures dataset, the performance of our algorithm is similar to that of Handpose+Resnet101 and slightly better than that of the other deep learning algorithms. The main reason for the difference between the results on our dataset and on the open datasets is that the backgrounds in our dataset are more complicated than those in the open datasets. Figure 21 shows some typical false recognition cases in our own and the open datasets.

Recall rates of the different algorithms when precision is 0.98 (training and testing on the Microsoft Kinect and Leap Motion Dataset).

P: gesture paper; HP: gesture horizontal palm; HK: gesture horizontal knife; VP: gesture vertical palm; VK: gesture vertical knife.

Note: The bold number represents the maximum of this column.

Recall rates of the different algorithms when precision is 0.98 (training and testing on the NYU hand gestures dataset).

P: gesture paper; HP: gesture horizontal palm; HK: gesture horizontal knife; VP: gesture vertical palm; VK: gesture vertical knife.

Note: The bold number represents the maximum of this column.

Recall rates of the different algorithms when precision is 0.98 (training and testing on our datasets).

P: gesture paper; HP: gesture horizontal palm; HK: gesture horizontal knife; VP: gesture vertical palm; VK: gesture vertical knife.

Note: The bold number represents the maximum of this column.

Although the algorithms based on deep learning already achieve considerable recognition performance, they are still unsuitable for certain HRI fields that require highly accurate control instructions, such as the UAV control system introduced here. To meet the safety requirement of the UAV control system, the error rate of the control system should approach zero and almost all of the commands sent by the operator should be correctly recognized, which requires both the precision and the recall rate of gesture recognition to exceed 98%. Given this requirement, and according to the comparative experimental results and the RPC charts, the method based on our algorithm fully meets the requirements. Operators only need to complete online training within 1 min before operation. In the section “Experiments of UAV gestures control under different conditions,” we combine the hand gesture recognition system with the UAV platform to test the effectiveness and efficiency of the proposed system.

Experiments of online-training and task-replanning function

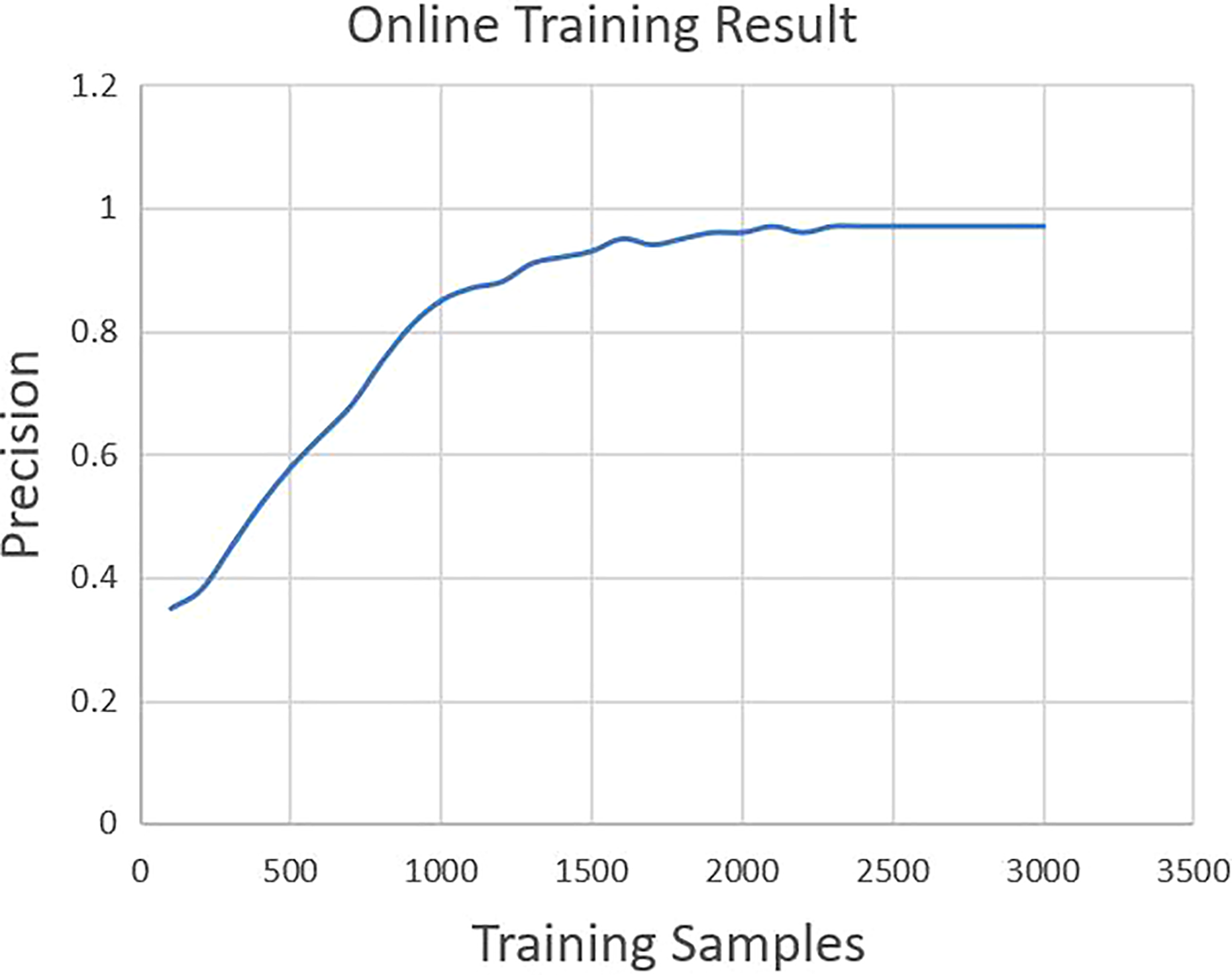

As online training is a training method that updates parameters in real time from the input image stream, we first designed an experiment to test the online training effect. The steps are as follows: we first randomly selected 3000 training samples and 300 testing samples (from our dataset introduced in the section “Comparative experiments among our method and other gesture recognition algorithms”) and input the training sample pictures one by one into the training module of the system. After 100 pictures had been sent into the online training system, we began to test the accuracy rate every 10 frames; the resulting accuracy curve is shown in Figure 22. The delay time of the online training process is between 0.10 and 0.15 s.

Online training result curve.

The experimental result in Figure 22 shows that the online training process does not fall into a local minimum, as the final precision is similar to the offline training results in the section “Comparative experiments among our method and other gesture recognition algorithms,” and the accuracy increases steadily and stabilizes once 2000 training samples are reached.

As illustrated in the section “UAV flight strategy control and task replanning system,” when the operator wants to change the control strategies while operating, he or she only needs to perform three steps in turn: switch to “one-hand paper” and select the task, confirm the task, and show the gesture combination. The interface for the task replanning operation is shown in Figure 23, with which the operator can replan tasks conveniently without coding or remote control switching.

Interface of task replanning function.

Experiments of UAV gestures control under different conditions

In these experiments, the operator’s gesture commands are captured and recognized by a notebook computer on the ground. The distance between the operator and the camera is about 2 m. The corresponding control signal is transmitted to the onboard flight control system by the data transmission device in real time. Pictures of the experiment scene are shown in Figure 24. After the online training of the PFL, the precision and recall rate of the operator’s gesture recognition both reached nearly 100%. Nevertheless, to further ensure the safety of gesture control of UAV flight, the system sends a command to the UAV flight control system 45,46 to control the UAV’s flying posture only after it has recognized the same gesture command n times consecutively (n was set to two indoors and three outdoors in our experiments). If the UAV fails to receive the control signal, it remains hovering.
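The consecutive-recognition safeguard can be sketched as a small filter placed between the recognizer and the flight controller. The class name `CommandFilter` and its interface are hypothetical; the sketch assumes that gesture id 0 denotes an unrecognized gesture, as in the gesture recognition section, and that returning `None` means no command is sent, so the UAV keeps hovering.

```python
class CommandFilter:
    """Forward a gesture command only after `required` identical consecutive results."""

    def __init__(self, required):
        self.required = required
        self._last = None
        self._count = 0

    def push(self, gesture_id):
        """Feed one per-frame recognition result; return a command id or None."""
        if gesture_id == 0:  # unrecognized gesture: reset the streak
            self._last, self._count = None, 0
            return None
        if gesture_id == self._last:
            self._count += 1
        else:
            self._last, self._count = gesture_id, 1
        return gesture_id if self._count >= self.required else None


# Usage: n = 2 as in the indoor experiments.
indoor = CommandFilter(required=2)
sent = [indoor.push(g) for g in [3, 3, 0, 3, 4, 4]]
```

A larger `required` value trades a longer control delay for a lower chance of acting on a single misrecognized frame, which matches the choice of a higher n outdoors.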

Experiment of UAV control indoor based on gesture recognition. UAV: unmanned aerial vehicle.

The experimental results in Table 5 show that the UAV control delay time is within 0.35 s, which means that this system has high real-time performance. There are two sources of delay. The process of hand gesture recognition takes about 0.08 s, as a signal is sent to the UAV only if our gesture recognition system outputs the same result in two consecutive frames. The response time from sending the signal to the UAV responding to that signal is about 0.1 s.

Precision and delay time results of the experiment in the motion capture system.

UAV: unmanned aerial vehicle.

To test the stability of the system, we conducted several experiments at different times and locations and under different lighting conditions and environments, as shown in Figure 25. The total number of frames captured and recognized by the system across all experiments is roughly 675,000, for which the accuracy of the control signal is more than 99.9%. The upper left picture in Figure 25 shows the experiment we performed with the help of a motion capture system, which replaced GPS in providing positioning information to the UAV. In this experiment, the precision of the UAV’s responding action was 100%.

(a) Operating in a simple indoor environment. (b) Operating on a cloudy day. (c) Operating in a complex outdoor environment. (d) Operating with other people’s interference.

Discussion and future works

Although our system has the advantage of a high detection and recognition rate, some problems remain, such as interference from background objects and rapid illumination changes, which may cause our system to fail to extract the correct mask for detected hands.

To solve these problems, we will add an automatic update function for the HSV threshold in the area of interest to improve the system’s adaptability to changing illumination. We will also look for more identifiable features to make the recognition system more accurate in recognizing more complex gestures. Further, we will extend our system with the ability to identify different dynamic actions, because the human operator usually expresses commands using gestures and actions simultaneously. Finally, we will extend our system from a monocular camera to a stereoscopic camera to separate the target hands from the surrounding background, preventing background objects or persons that happen to have the same color as our gloves from merging with the hand region in the picture.

Conclusion

In this article, we have proposed a new online training and programming system for controlling robots based on a visual gesture recognition algorithm and have confirmed the effectiveness of this system using a UAV platform to achieve flight control with human gesture recognition. The system is composed of three parts: an online personal feature pretraining system, a gesture recognition system, and a UAV flight strategy control and replanning system. We proposed a multifeature cascade classifier algorithm to guarantee both the accuracy and real-time processing speed of our gesture recognition method. We also designed a pretraining system that extracts operator gesture features and trains the recognition system online to ensure a correct gesture recognition rate that is close to 100%. Using the system’s gesture recognition capability, we can achieve task replanning while the robot is working according to its predefined program, which is essential for the development of intelligent robots that are expected to cooperate with human operators to finish complex and flexible work. The effectiveness and efficiency of the proposed system have been demonstrated through extensive experiments.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: National Natural Science Foundation of China (grant no. 61573338); National Natural Science Foundation of China (grant no. U1508208); Joint Research Fund of the National Natural Science Foundation of China (NSFC) and Liaoning Province (grant no. U1608253); National Natural Science Foundation of China (grant no. U1609210); and State Key Laboratory of Robotics (grant no. 2017-Z07).