Abstract

The facial expression of anger can be useful for directing the interaction between agents, especially in unclear and cluttered environments. When an angry face is present, the subject who notices it engages in a process of analysis and diagnosis, which can affect their behavior toward the one expressing the emotion. To study this effect in human–robot interaction, an expressive robotic face was designed and constructed, and its influence on human action and attention was analyzed in two collaborative tasks. The results of a digital survey, an experimental interaction, and a questionnaire indicated that anger is the best-recognized universal facial expression, that it has a regulatory effect on human action, and that it induces human attention when an unclear condition arises during the task. An additional finding was that the prolonged presence of an angry face reduces its impact compared with positive expressions.

Keywords

Introduction

Facial expression is a common mechanism for displaying emotional behavior during social interaction; it reveals individual motivation and makes behavior understandable and predictable. It also helps to synchronize, organize, and complete social interactions. 1 With this in mind, artificial emotional systems have been proposed to enhance the interaction between humans and robots. 2 The interpretation of facial expressions differs among cultural and geographical regions. 3 For this reason, many expressive robots focus on a set of universal facial expressions; Ekman proposed six: happiness, surprise, fear, disgust, sadness, and anger. 4 Expressive robotic systems have been shown to favor feedback and engagement with people. 3 In the present work, we focus on the angry facial expression and the elements that guide its activation during a collaborative human–robot task.

The outline is as follows. Related work is presented in the second section. The third section describes the design of the robotic face, and the fourth presents the experiment that validates its legibility. The fifth section analyzes the activation of the angry face linked to a robot failure during a task, the sixth presents the effect of the angry face during an unclear situation, and the seventh discusses the general results and conclusions.

Related work

There are several examples of artificial emotional systems that have been used to communicate emotions. Some have implemented animal-like designs to emphasize emotional behavior (e.g. Kismet 5 and Eddie 6 ). Others have focused on emulating the elements of the human face, such as the eyes, nose, lips, eyelids, and eyebrows. These humanoid designs commonly rely on electromechanical 7 or digital 8,9 robotic faces. There are two approaches to the appearance of a humanoid robotic face: the first mimics the look of the human face (e.g. the android Actroid-SIT 10 ), and the second uses a minimal number of facial elements (e.g. the robot MiRae 11 ). In either case, universal facial expressions must be legible, regardless of the nature of the robotic face. The first robots to explore facial expression legibility were WE-3RIV and WE-4RII, which focused primarily on universal emotions. 12,13 Legibility has also been studied in abstract cartoon-like robots such as Flobi 7 and in abstract systems such as MiRae. 11

Other humanoid robots extend their capabilities to diverse facial expressions and social applications, such as the comedian robot Kobian-R, 14 based on the WE-3RIV and WE-4RII robots, and Kaspar, 7 a robot that focuses on the expression of emotions for medical purposes. The expression of emotion also allows robots such as Lisa 8 and Bender 15 to communicate emotional states during the execution of a task.

In social interaction, facial expressions help to communicate an emotional and mental state that is expected to be consistent. A common problem is how to access other people’s mental states, 16 since these cannot be perceived directly and must be inferred by observers. 17 Thus, the activation of a recognized facial expression has a direct relation to the underlying conditions that prompt it (an extended analysis of these relations can be found in Plutchik’s study, and a summary is shown in Table 1 2,18 ). Facial expressions of emotion can therefore be a window to a mental state in the context of social interaction. Robots and artificial systems can display an emotional state in order to influence and persuade their users. 19

Conditions and functions of universal emotions. 18

Emotions are commonly classified into negative and positive categories. This categorization has its origin in ethics, and terms from other fields are used to point out the same dichotomy, for example, valence from chemistry and polarity from physics. 4,20,21 Alternatively, arousal and valence have been proposed as dimensions to distinguish emotions; together they compose the circumplex model. 22,23 For instance, anger is a high-arousal negative emotion, and its counterpart is a low-arousal positive state located between contented and relaxed. The positive activation–negative activation model 24–26 considers anger a high negative activation and places calm, a low positive activation, on the opposite side (see Figure 1).
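To make the circumplex description concrete, the sketch below places a few emotions on a valence–arousal plane and finds the counterpart of an emotion as the labeled point nearest to its diametrically opposite position. The coordinates in `CIRCUMPLEX` and the helper names are hypothetical illustrations chosen only to mirror the qualitative description above; they are not values from the cited models.

```python
import math

# Hypothetical (valence, arousal) placements on a unit circumplex, chosen only
# to mirror the qualitative description in the text (not values from the
# cited models).
CIRCUMPLEX = {
    "anger": (-0.6, 0.8),
    "happy": (0.8, 0.4),
    "sad": (-0.7, -0.4),
    "contented": (0.8, -0.4),
    "relaxed": (0.6, -0.7),
}


def valence_label(emotion: str) -> str:
    """Classify an emotion as positive or negative by the sign of its valence."""
    valence, _ = CIRCUMPLEX[emotion]
    return "positive" if valence > 0 else "negative"


def counterpart(emotion: str) -> str:
    """Return the labeled emotion closest to the point opposite `emotion`."""
    v, a = CIRCUMPLEX[emotion]
    opposite = (-v, -a)
    others = (e for e in CIRCUMPLEX if e != emotion)
    return min(others, key=lambda e: math.dist(CIRCUMPLEX[e], opposite))


print(valence_label("anger"), counterpart("anger"))  # negative relaxed
```

With these placements, the counterpart of anger lands in the low-arousal positive quadrant, next to relaxed and contented, as the text describes.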

In any case, the angry face could be used as a counterpart of positive emotions during interaction. The opposition between anger and positive emotions is explicitly exploited in this study and is examined in the fifth and sixth sections. More complex models have been proposed (e.g. three-dimensional (3-D) 2,5 or continuations 27 ) but are left unexplored in the present work.

Anger is a negative emotion associated with hate, failure, aggression, and contrariness. 20 It has been assumed to relate to a decrease in emotional self-control together with increased irritability, aggression, or dissatisfaction. 21 From the standpoint of biological adaptation, negative faces are best recognized because of their utility in transmitting a potential threat or danger. 28 Anger emerges when goals or plans fail, signaling that something is not right and has to be changed. 18 However, from the perspective of biological evolution and adaptation to the social context, anger can be useful for obtaining benefits in unclear and cluttered environments. 29 The recalibrational theory of anger assumes that the regulatory system that directs this emotion became a means to negotiate and resolve conflicts of interest, in addition to recalibrating the situation for the welfare of the subject. 29–31 In general, the angry robotic face is recognized with relatively good accuracy, 11 which has been exploited in the design of task-oriented robots (e.g. the robots Minerva and iCat 32,33 ).

The expression of negative emotions in robots is useful in short-term interactions and is commonly complemented with spoken messages and body language. For example, the mood of the robot Minerva was linked to its navigation behavior in a social setting: when museum visitors persistently blocked its way, Minerva activated an angry face and spoken messages that emphasized its intention to continue navigating. 32 In other research, Midden and Ham explored positive and negative social feedback, expressed through facial expressions and spoken messages in the iCat robot, to guide user actions. The results showed that negative social feedback improved performance in the task, which consisted of following simple instructions. 33

In previous research, 34 we explored the sad facial expression during a collaborative task with humans. That study focused on the activation of emotions in a robotic face, without other multimodal behaviors such as speech, gaze, or body language, and analyzed the capability of a sad face to communicate a condition derived from an unexpected failure of the robot.

Building on research on the effect of anger in social interaction, 29–31 the present investigation studies the influence of the angry face under two different conditions: (i) activation derived from an activity and (ii) activation during an unclear situation.

Minimalist robotics face

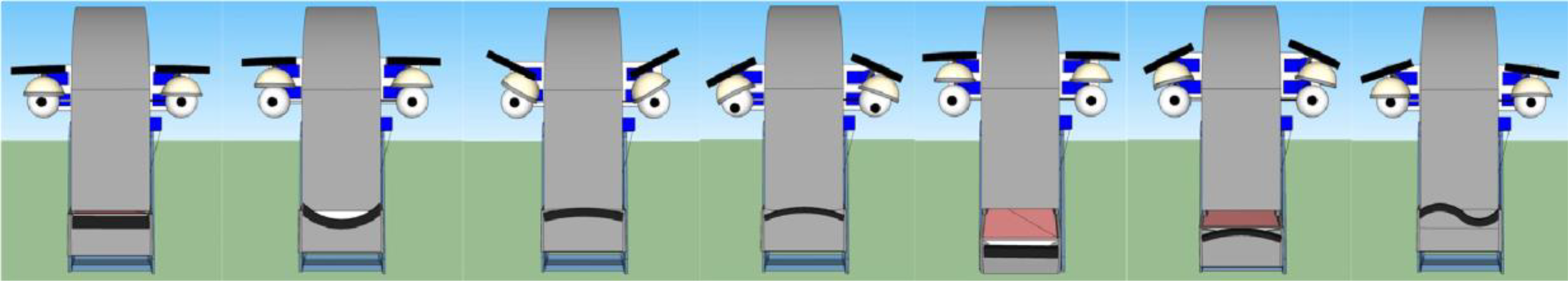

In the present article, we designed and constructed a robotic face (GolemX-1) to analyze the effect of emotional facial expressions on subjects (Figure 2). The prototype has a minimalist style 11,35 with only eight degrees of freedom and was designed using 3-D modeling software. It was built from electromechanical components and acrylic, using accessible, low-cost construction processes (laser cutting), and it features easy maintenance and basic electronic control (Arduino UNO). The system can display seven expressions: five of the six universal expressions (all but disgust), a neutral face, and an extra one, worried. Figures 3 and 4 show the neutral, happy, angry, sad, surprise, fear, and worried expressions in two versions, digital and electromechanical.

GolemX-1, an electromechanical minimalist robotic face.

Digital design; from left to right: neutral, happy, angry, sad, surprise, fear, and worried.

GolemX-1 robotic face. From left to right: neutral, happy, angry, sad, surprise, fear, and worried.

Static facial expressions legibility

The legibility of the robotic face was evaluated before performing the interaction tests with humans. The following subsections present the method and results for the static expressions.

Method

We adapted the robot facial expression positions proposed by Bennett and Šabanović 11 and performed preliminary tests with colleagues, in which the parameters of the face were slightly modified. We used a setting in which static images expressing an emotion were presented to subjects in two stages: (i) with a digital design (prior to manufacturing) and (ii) with pictures of the final robot’s head.

Experimental condition and hypothesis

The legibility of the facial expressions of our robot was tested with an online survey in which images of the faces were presented to subjects. The seven facial expressions were presented one at a time in random order, and for each image the subjects had to select exactly one emotion from a list of seven randomized labels shown on the screen. Eighty-eight subjects, who responded to a broad call to students and colleagues and had not seen the design beforehand, took part; their average age was 24 years. The results of the survey on the digital design were analyzed and used to implement the seven facial expressions in GolemX-1. The electromechanical robot head was then validated with a similar process, for which 98 different participants answered the call 2 years after the first survey.

The hypothesis was that the robotics face performs the negative facial expressions in a legible manner.
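The randomized presentation described above can be sketched as follows. The function name and the choice to reshuffle the label list for every image (rather than once per respondent) are assumptions; the text only states that the images were shown one at a time in random order and that the seven labels were randomized.

```python
import random

EXPRESSIONS = ["neutral", "happy", "angry", "sad", "surprise", "fear", "worried"]


def build_survey(rng: random.Random) -> list[tuple[str, list[str]]]:
    """Return the (image, label_list) trials for one respondent.

    Each expression image appears exactly once, in random order; the seven
    answer labels are reshuffled for every image (an assumption), and the
    respondent picks exactly one label per image.
    """
    images = EXPRESSIONS[:]
    rng.shuffle(images)
    trials = []
    for image in images:
        labels = EXPRESSIONS[:]
        rng.shuffle(labels)
        trials.append((image, labels))
    return trials


survey = build_survey(random.Random(42))  # seeded for a reproducible order
```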

Results

The legibility results for the digital version are presented in Table 2. The confusion matrix shows that the negative expressions were identified more accurately than the positive ones: anger obtained the highest identification accuracy, followed by sadness and neutral, then happy, surprise, and lastly fear.

Confusion matrix for digital design legibility survey (88 subjects).

From this experiment, we conclude that anger and sadness were highly legible, while the positive expressions performed relatively well. Worried had poor legibility, possibly because we used the lip-twisted position, in which one mouth corner is raised and the other dropped at the same time. The lip twist is not a standard cue for worry and is not registered in the Facial Action Coding System. 36 Several participants described the happy face as too calm or close to the neutral state. In the case of fear, further research is needed, since it was confused with other negative expressions and is generally difficult for most robotic faces to express. 11

Table 3 summarizes the results of the survey on the electromechanical head, which are consistent with our previous findings: anger again obtained the highest identification accuracy, with a notable increase, followed by sadness and neutral; happy also improved, surprise remained the same, and worried and fear remained at the bottom of the accuracy scores, with fear still performing poorly. In general, legibility increased compared with the digital version; we attribute this to the adjustments made to the face and to the effect of physical embodiment.

Confusion matrix for robot GolemX-1 legibility survey (98 subjects).
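The per-expression accuracies discussed for Tables 2 and 3 correspond to the diagonal of a row-normalized confusion matrix. The sketch below, with hypothetical helper names and a toy set of responses (not the survey data), shows that computation and the resulting ranking.

```python
from collections import Counter


def confusion_matrix(responses, labels):
    """Count (shown, chosen) pairs into a nested dict: matrix[shown][chosen]."""
    counts = Counter(responses)
    return {s: {c: counts[(s, c)] for c in labels} for s in labels}


def accuracy(matrix):
    """Identification accuracy per shown expression: diagonal / row total.

    Rows with no responses are reported as 0.0.
    """
    acc = {}
    for shown, row in matrix.items():
        total = sum(row.values())
        acc[shown] = row[shown] / total if total else 0.0
    return acc


# Toy responses for illustration only (not the data behind Tables 2 and 3).
labels = ["angry", "sad", "fear", "surprise"]
responses = (
    [("angry", "angry")] * 9 + [("angry", "sad")]
    + [("fear", "fear")] * 4 + [("fear", "surprise")] * 3 + [("fear", "sad")] * 3
)
acc = accuracy(confusion_matrix(responses, labels))
ranking = sorted(acc, key=acc.get, reverse=True)  # most to least legible
```

Off-diagonal cells, such as fear answered as surprise or sad, are where confusions like those reported for fear would show up.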

The regulatory effect of the angry face

One might think that anger would be associated with a failed interaction in which the parties stop collaborating once this emotion arises. However, this is not necessarily the case in a robotic task. On the contrary, since anger has been associated with signaling difficulty in achieving a goal, 18 the robot can harness this signal to regulate the execution of the task. We therefore propose expressing anger, rather than a positive emotion, when the task is disrupted: an activation of anger preceded by positive emotions emphasizes a problem in the task.

Method

In this experiment, the influence of a robotic angry face on the human user was analyzed. We asked whether the presence of this negative emotion contributes to completing a task correctly. To investigate this effect, a collaborative interaction was designed in which human and robot performed a noncomplex, repetitive task: placing 10 cylindrical objects in a container, as described in Reyes et al. (2015). 34 However, the robot was programmed to randomly interrupt the collaboration by failing to place one of the objects in the container. In this situation, the angry face was activated to denote the robot’s inability to perform the task. To contrast the valence of the expressions (positive to negative), the happy face was displayed whenever the robot placed an object in the container. This emotional behavior was compared with a neutral face (absence of emotion) throughout the task, which served as the control condition.

Experimental condition

Figure 5 shows the areas and elements of the experimental setting. The robot faces the human subject from the opposite side of the table, with its right hand beside the launching area, ready to push the objects into the container. The subject must wait for the access barrier to open and then place an object in front of the robot, one object at a time as instructed. After the access barrier closes, the robot pushes the object toward the ramp; the subject cannot access or intervene in the action unless the barrier is open. Two video cameras were used to record the interaction.

Workspace scenario. (Left) Interaction system and collaborative human–robot workspace diagram: (1) objects zone, (2) human access, (3) access barrier (red/closed, green/open), (4) launch area and robotic hand, (5) ramp, and (6) container. (Right) Human–robot setup and workspace.

In the experiment, written instructions were given to the users, and a qualified person placed the objects in front of the robot. Once an object is positioned, the barrier closes; the robot directs its gaze at the object, pushes it into the container, and returns to its initial position. The barrier then opens, the robot faces forward, the human subject takes his or her place, and the interaction starts. On the first try, the robot pushes the object into the container successfully; from the third execution onward, the robot’s actions are randomized. A failure occurs when the robot is not able to push the object (the hand movement is stopped). When this happens, the robot activates a facial expression according to the experimental sessions described below, followed by a 9-s pause. After the failed action, the access barrier is opened for 12 s. We focused on the first human intervention, which can occur during the pause; the failure is repeated two times in order to stimulate human actions. After the failures, the robot pushes the object into the container and a successful outcome occurs. The task ends when all objects have fallen into the container.

Control condition and hypothesis

There were two experimental settings, each with different feedback. In the first (the control task), the robot displays only a neutral expression throughout the session, including the failed action. In the second, the robot displays a happy expression during the task and an angry face when the failure occurs. The experimental settings were carried out with 15 naive human subjects, students and colleagues who responded to a broad call and had not seen the robot before.

The hypothesis was that a negative expression (i.e. sad or angry) has a regulatory effect on human actions during task performance.
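The trial structure of the two settings can be summarized as a schedule of push outcomes and the face shown after each push. In this sketch, `build_session`, `Push`, and `CONDITIONS` are hypothetical names. We assume that the failing object is drawn from the third object onward (one reading of "randomized from the third execution onward"), and we omit the 9-s pause and 12-s barrier timings.

```python
import random
from dataclasses import dataclass

N_OBJECTS = 10
FAILURE_REPEATS = 2  # "the failure is repeated two times"

# Face shown after each push outcome, per experimental setting.
CONDITIONS = {
    "control": {"success": "neutral", "failure": "neutral"},
    "emotional": {"success": "happy", "failure": "angry"},
}


@dataclass
class Push:
    obj: int         # 1-based index of the object being pushed
    outcome: str     # "success" or "failure"
    expression: str  # face the robot displays after this push


def build_session(condition: str, rng: random.Random) -> list[Push]:
    faces = CONDITIONS[condition]
    # Assumption: one object, drawn from the third onward, triggers the
    # failure; the robot fails on it twice and then succeeds.
    failing_obj = rng.randint(3, N_OBJECTS)
    session = []
    for obj in range(1, N_OBJECTS + 1):
        if obj == failing_obj:
            session.extend(
                Push(obj, "failure", faces["failure"]) for _ in range(FAILURE_REPEATS)
            )
        session.append(Push(obj, "success", faces["success"]))
    return session


for push in build_session("emotional", random.Random(7)):
    print(push)
```

Keeping the face mapping in one table makes the only difference between the control and emotional settings explicit: which expression accompanies each outcome.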

Results

By analyzing the video recordings, five types of actions performed by the subjects were identified: (i) changing the position of the object, (ii) replacing the current object with another one from the objects zone, (iii) throwing the object into the container, (iv) placing an extra object in the launching area, and (v) waiting.

The first three actions have a positive effect, since the human helps with the flow of the task and follows the instructions. The fourth and fifth, however, have a negative effect: the subject does not collaborate during the interruption, which makes the session longer and is not consistent with the rules.

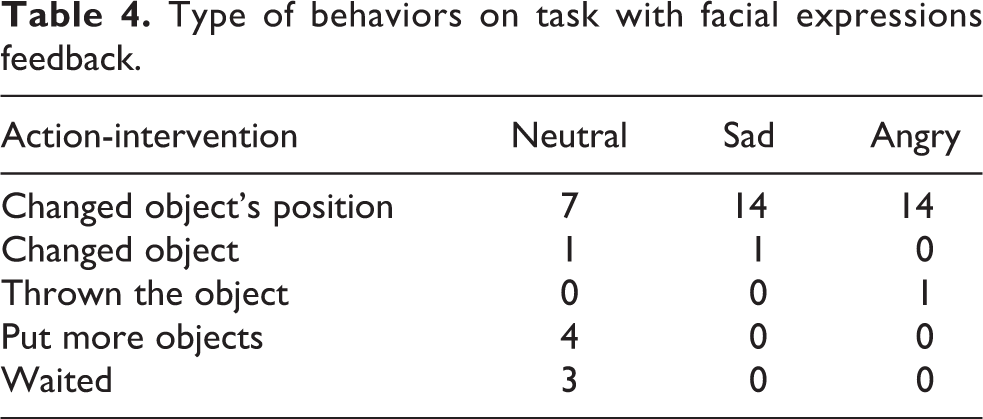

Table 4 presents the types of behavior and their frequencies for the angry case, together with the behaviors for the sad face from our previous experiment. 34 The results show that the angry face as feedback has a positive regulatory effect on the task. The frequencies for both emotions, sadness and anger, are presented in Table 5. The difference between anger and neutral was statistically significant (p ≤ 0.05 using a t-test; full analysis in https://goo.gl/pGE16K), whereas anger and sadness were not significantly different. However, the average time of human intervention decreased from 12 to 7 s when the angry expression was used. There is empirical evidence of a difference between sad and angry expressions, but this is left for further study.

Type of behaviors on task with facial expressions feedback.

Positive and negative effect in the experiment.

These results indicate that anger has a regulatory effect on the interaction, guiding the actions taken by the users. In this case, an unexpected failure is the cause of the emotion activation, which the human subject observes and reacts to accordingly. Since the sad and angry expressions are not statistically different, we argue that the positive effect is independent of the arousal level shown in the dimensional models of emotion (Figure 1).
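The behavior coding and the comparison against the neutral condition can be sketched as follows. The text reports a t-test without naming the variant, so Welch's unequal-variance t statistic is an assumption here. The keys in `BEHAVIOR_EFFECT` are hypothetical labels for the five observed actions, scored +1 (positive effect) or -1 (negative effect) for each subject's first intervention, and the example interventions are invented for illustration, not the recorded data.

```python
import math
import statistics

# +1: helps the task flow; -1: does not collaborate or breaks the rules.
BEHAVIOR_EFFECT = {
    "changed_position": +1,
    "replaced_object": +1,
    "threw_object": +1,
    "extra_object": -1,
    "waited": -1,
}


def score(first_interventions):
    """Map each subject's first coded intervention to +1 or -1."""
    return [BEHAVIOR_EFFECT[action] for action in first_interventions]


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = statistics.variance(a) / len(a)  # squared standard error of mean(a)
    vb = statistics.variance(b) / len(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va**2 / (len(a) - 1) + vb**2 / (len(b) - 1))
    return t, df


# Hypothetical first interventions (not the recorded data).
angry = score(["threw_object", "changed_position", "replaced_object", "waited", "threw_object"])
neutral = score(["waited", "extra_object", "waited", "threw_object", "waited"])
t, df = welch_t(angry, neutral)
print(f"t = {t:.3f}, df = {df:.1f}")
```

If SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` returns the same statistic together with a two-sided p-value. The sketch fails if both groups have zero variance, since the standard error is then zero.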

The attentional effect of the angry face

For this experiment, we assumed that facial expressions always occur during social activities. If an emotion is activated in a timely and salient way, its sudden presence can signal a change in the interaction. When faced with a negative facial expression, the observer tries to infer its motivation, triggering a cycle of analysis and diagnosis, 37 during which he or she may focus on the state of the task and its context. This section analyzes the effect of the angry face in inducing human attention during an unclear activity.

Commonly, the change between facial expressions occurs through transitions from one emotional state to another with different intensity and valence. A transitional, or dynamic, facial expression differs from a static one in that it consists of a sequence of expressions with incremental intensity. 38,39 Figures 6 and 7 show the transitional expressions of GolemX-1 from neutral to angry and from neutral to happy. To reach a high-intensity happy expression, the mouth and eyes of the face were modified to express a Duchenne smile, 40 which is similar to an ordinary smile but rated as more authentic, genuine, and trustworthy. 41 The goal was to simulate a higher arousal state according to the situation (getting happier or angrier).

Negative transitional set. Neutral to high arousal angry facial expression (right to left, neutral to higher intensity).

Positive transitional set. Neutral to high arousal happy facial expression (right to left, neutral to higher intensity).
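A transitional expression can be approximated by interpolating the face's actuator positions from the neutral pose toward the peak pose. The sketch assumes linear interpolation and uses hypothetical servo angles for GolemX-1's eight degrees of freedom; the actual poses, joint order, and number of intensity steps are not given in the text.

```python
# Hypothetical servo angles (degrees) for the eight degrees of freedom.
NEUTRAL = [90, 90, 90, 90, 90, 90, 90, 90]
ANGRY_PEAK = [60, 120, 70, 110, 90, 90, 40, 140]


def transition(start, target, steps):
    """Return `steps` poses of linearly increasing intensity, ending at `target`."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [
        [round(s + (t - s) * k / steps) for s, t in zip(start, target)]
        for k in range(1, steps + 1)
    ]


for pose in transition(NEUTRAL, ANGRY_PEAK, 4):
    print(pose)  # a real controller would send each pose to the servos in turn
```

The same function yields the neutral-to-happy set when given a happy peak pose; a nonlinear easing curve would be an equally plausible alternative.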

The sudden presence of a single (static) facial expression may occur within the context set up by a transitional one. A positive transitional expression can contextualize a sudden negative static one through the change in valence and the interruption of the positive sequence. To study the effectiveness of the angry face in inducing human attention, it was compared with the positive universal emotions happy and surprise.

Figure 8 shows three situations of a sudden activation of a static expression during a transitional one. Situation A shows an angry face during the transition from neutral to happy, situation B shows a happy face during the transition from neutral to angry, and situation C shows a surprise expression during the same neutral-to-angry transition.

Transitional facial expression context and the sudden activation for static facial expression.

Method

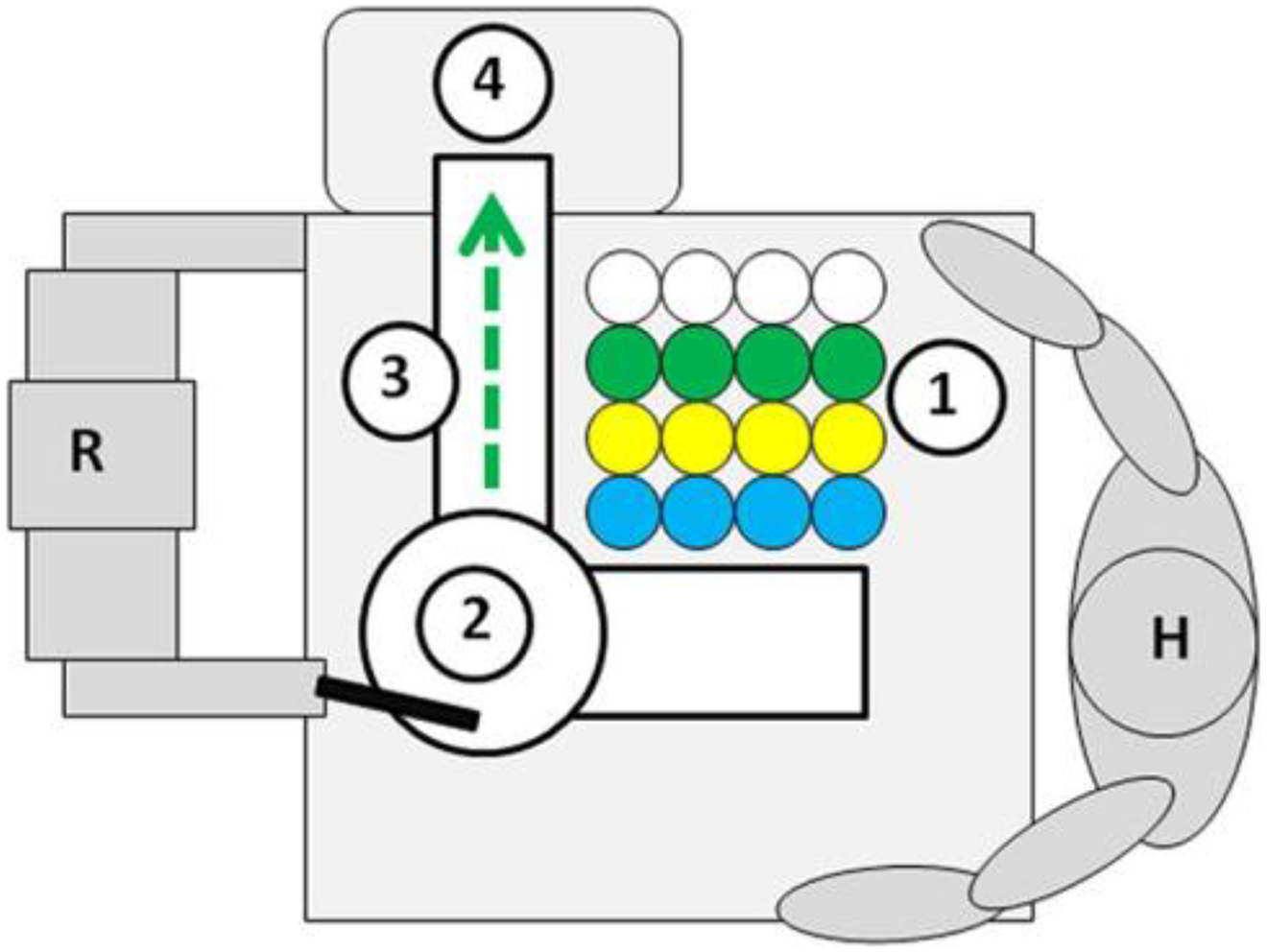

For this experiment, we extended the setting presented in the fifth section by adding new objects, as shown in Figure 9. Again, the human and the robot cooperated to place cylindrical objects in a container. This time, four columns of four objects each were included, colored blue, yellow, green, and white (all objects in a column had the same color). The columns were placed randomly on each trial. The subject chose any object and placed it in the launching area so that the robot could push it into the container. At the end of the session, a survey was applied to evaluate the robot’s performance, the legibility of the facial expressions, and the cause of their activation.

Workspace scenario. Interaction system and collaborative human–robot workspace diagram: (1) objects zone, (2) launch area and robotic hand, (3) ramp, and (4) container.

GolemX-1 performed a transitional facial expression when a yellow, green, or white object was in the launching area. To set up an unclear situation during the task, the robot activated the static face when a blue object was placed. The subject was not informed beforehand about the conditions for activating the expressions. If the human did not place an object, the robot also activated the static facial expression.

We chose the blue object to trigger the static facial expression because blue has not been linked to any of the emotions used (angry, happy, and surprise). Blue is associated with sadness (e.g. the “I feel blue” metaphor) 42,43 and has been used in robotic heads. 44
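The activation rule described above can be sketched as a small lookup. `SITUATIONS`, `layout`, and `select_expression` are hypothetical names. How the transitional sequence advances in intensity across successive objects is not specified in the text, so the sketch only decides which kind of expression (transitional or static) to show and which emotion it carries.

```python
import random

COLORS = ["blue", "yellow", "green", "white"]
STATIC_TRIGGER = "blue"

# Transitional set and sudden static expression for each situation.
SITUATIONS = {
    "A": {"transitional": "happy", "static": "angry"},
    "B": {"transitional": "angry", "static": "happy"},
    "C": {"transitional": "angry", "static": "surprise"},
}


def layout(rng):
    """Four columns of four same-colored objects, in random column order."""
    order = COLORS[:]
    rng.shuffle(order)
    return [[color] * 4 for color in order]


def select_expression(situation, color):
    """Return (kind, expression) for the object in the launching area.

    color=None models the case in which the subject places no object.
    """
    cfg = SITUATIONS[situation]
    if color is None or color == STATIC_TRIGGER:
        return "static", cfg["static"]
    if color in COLORS:
        return "transitional", cfg["transitional"]
    raise ValueError(f"unexpected object color: {color!r}")


print(select_expression("A", "blue"))  # ('static', 'angry')
```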

Experimental condition and hypothesis

The experiment was performed as follows. Written instructions are given to the user, and a qualified person performs a test session to show an example of the interaction. The researcher restarts the system and the subject places an object. The robot looks at the object, the emotion is activated, and the object is pushed into the container (the full cycle lasts about 3 s). The robot then waits about 4 s for the next object. This is repeated until all the objects have been launched into the container. Finally, the task ends and the subject answers the questionnaire.

The hypothesis was that a negative robotic facial expression of anger induces human attention when an unclear condition arises during the task.

Questionnaire

The experiment was carried out in Spanish (Table 6 shows the English translation). The first question asked about the easiness of the task, from very difficult to very easy, and the second about the speed of the robot’s performance, from very slow to very fast. Questions 1 and 2 used a five-point Likert-type scale whose five answer categories were scored from 0 to 100 points (0, 25, 50, 75, and 100).

Questionnaire in collaborative HRI test.

HRI: human–robot interaction.
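The scoring of questions 1 and 2 amounts to a linear mapping of the five categories onto 0–100 in steps of 25. The sketch below assumes the categories are numbered 1 (very difficult, very slow) to 5 (very easy, very fast); the function names are hypothetical.

```python
import statistics

POINTS = (0, 25, 50, 75, 100)


def likert_points(category: int) -> int:
    """Map a five-point category (1..5) to 0, 25, 50, 75, or 100 points."""
    if not 1 <= category <= 5:
        raise ValueError("category must be between 1 and 5")
    return POINTS[category - 1]


def mean_points(categories):
    """Average score of a group of answers on the 0-100 scale."""
    return statistics.mean(likert_points(c) for c in categories)


print(mean_points([4, 5, 3]))
```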

Questions 3 and 4 aimed to measure the legibility of the static and transitional robotic facial expressions. Question 3 asked whether the subjects noticed facial expressions during the interaction and was scored 0 (no) or 100 (yes). Question 4 was open and asked which emotions the subjects noticed on the robotic face; five options were provided: happy, angry, surprise, fear, and sad.

Question 5 was open and asked about the possible causes of the facial expression activation. We focused on the intention underlying the description of the cause rather than the literal wording employed by the subject (e.g. “The emotion was activated when the blue object was in the area,” “The facial emotion was activated when the yellow, green, and white objects were in the launching area,” “The facial expression was activated during the task,” “The blue one,” “The emotion was activated because the robot likes/dislikes the blue color,” etc.).

Results

There were 69 participants (23 naive human subjects per situation), all colleagues and undergraduate students recruited by personal invitation. Table 7 shows the results of questions 1–3. The first row shows that most users thought the task was easy, which corresponds to our design goal of a noncomplex, repetitive task. The second shows that the subjects considered the speed of the task normal, and the third that all subjects noticed facial expressions during the experiment.

Quantification of answers to questions 1–3.

The results of facial expression identification are presented in Table 8. For situations A, B, and C, the transitional expression was recognized with high accuracy (91.3%, 100%, and 100%, respectively). The static one was also highly recognized in the three situations (100%, 95.7%, and 95.7%, respectively). However, there was some confusion between surprise and happy in situations A and C: in the former, surprise received a relatively high score although it was not activated during the session, and in the latter, happy was frequently identified although it was not activated either. This may be due to the Duchenne smile used for the high-intensity happy expression. The confusion was not consistent across situations: in B, happy was better identified than surprise, and in C, surprise obtained a higher score than happy. Although fear and sad were not activated, some subjects identified them, albeit with a lower frequency.

Question 4 results and rating of facial expression identification.

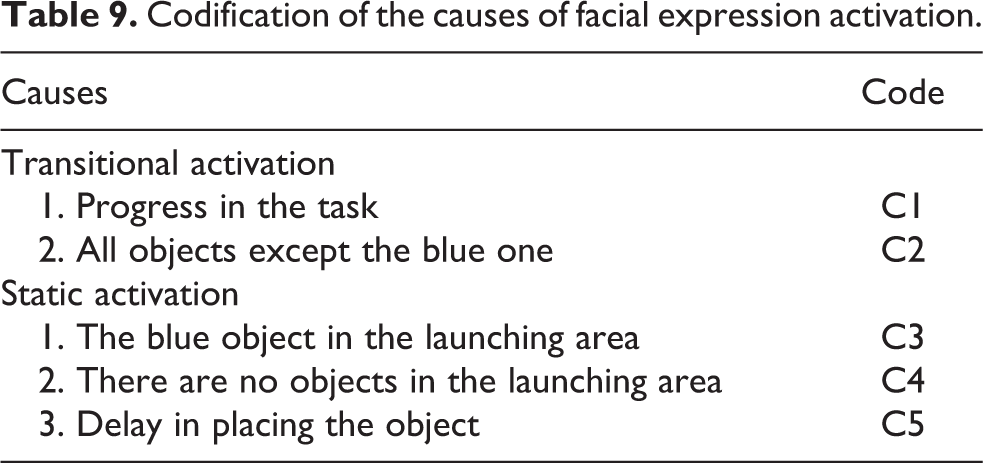

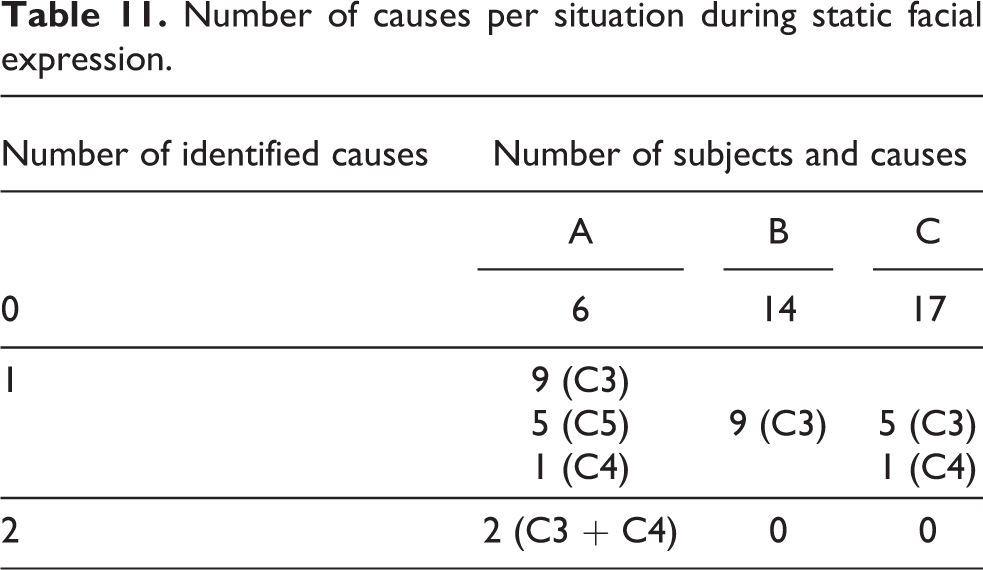

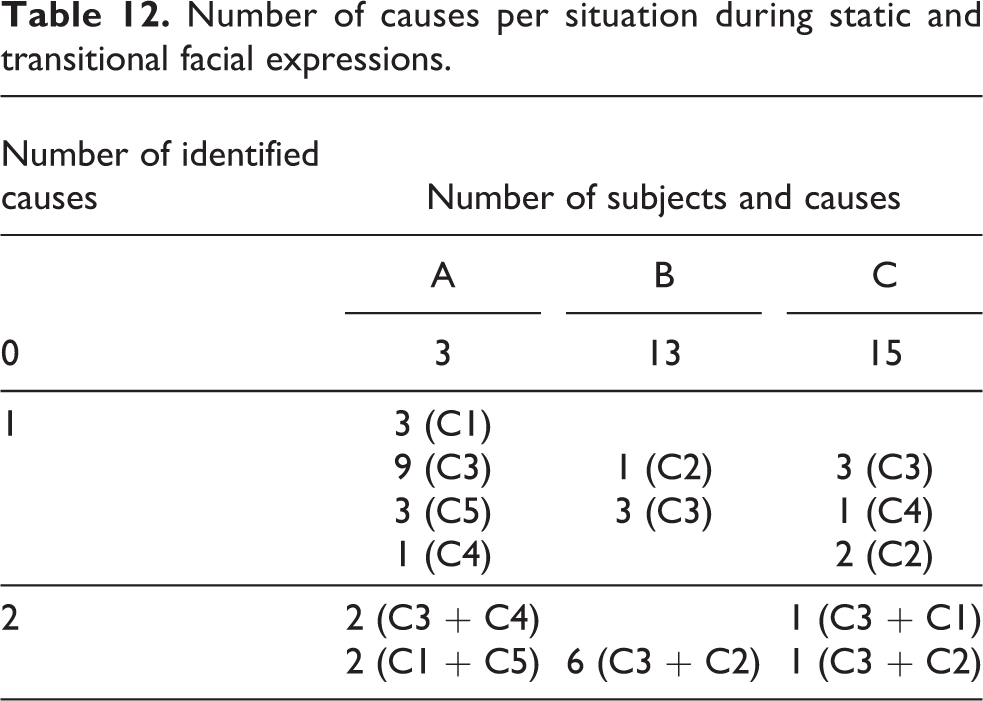

The registered causes of facial expression activation, with their identification codes, are shown in Table 9. All causes except C5 were anticipated in the experimental design; different or imprecise causes were not considered. The participants identified one or two causes, although in some cases they did not identify any cause at all. Figure 10 shows, for each session, the number of subjects who identified 0, 1, or 2 causes during the static facial expression, and Figure 11 shows these results for the complete session (including static and transitional expressions). The results for situation A are statistically different from those for B and C (p < 0.05 using a χ2 test; full analysis in https://goo.gl/pGE16K), while the last two cannot be distinguished (p > 0.05).

Codification of the causes of facial expression activation.

Sessions and the number of identified causes during static facial expression activation.

Sessions and the number of identified causes during static and transitional facial expression activation.
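The χ2 comparison between situations can be sketched as a Pearson test of independence on a situations × number-of-causes contingency table. The counts below are hypothetical (23 subjects per situation, columns for 0, 1, and 2 identified causes), not the values in Tables 11 and 12. To stay within the standard library, the p-value uses the closed-form survival function that holds for even degrees of freedom, which covers pairwise 2 × 3 comparisons; with SciPy, `scipy.stats.chi2_contingency` performs the same test for any table shape. With samples this small, expected counts below about 5 make the χ2 approximation less reliable.

```python
import math


def chi_square_independence(table):
    """Pearson chi-square test of independence for an r x c table of counts.

    Returns (statistic, df, p_value). The p-value is computed only for even
    df, where the chi-square survival function is a finite sum.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    if df % 2:
        raise ValueError("this sketch computes p-values for even df only")
    half = stat / 2
    p_value = math.exp(-half) * sum(half**i / math.factorial(i) for i in range(df // 2))
    return stat, df, p_value


# Hypothetical counts: rows are two situations, columns are subjects who
# identified 0, 1, or 2 causes.
situation_x = [5, 10, 8]
situation_y = [15, 5, 3]
stat, df, p = chi_square_independence([situation_x, situation_y])
print(f"chi2 = {stat:.3f}, df = {df}, p = {p:.4f}")
```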

The angry facial expression induced human attention better than happy and surprise, especially when it was suddenly activated during the transition of expressions. The subjects identified the causes of the static angry expression with high accuracy, but the causes of the transitional expressions were not identified as easily. We attribute this to the prolonged presence of the angry expression, which can be confusing and distract the subjects during the activation of positive faces.

Table 10 shows the number of causes stated by the subjects. Table 11 shows the number of subjects and causes per situation during the static activation, and Table 12 presents the results for the static and transitional facial expressions.

Registered causes of facial expression activation.

Number of causes per situation during static facial expression.

Number of causes per situation during static and transitional facial expressions.

Discussion

The present article analyzes the influence of angry robotic facial expressions on humans, that is, the effect of emotional feedback during a collaborative human–robot interaction (HRI) task. In the first experiment, the legibility of the facial expressions of a digital minimalist face, anger among them, was measured through a controlled online survey. These results were used to develop an electromechanical prototype called GolemX-1, whose legibility was better than that of the digital version.

The robotic head is able to communicate five of the six universal facial expressions (all but disgust). We found that the negative facial expressions (sad and angry) were the best expressed; in particular, the angry face was rated highest. Fear had the lowest identification accuracy and has proved difficult to reproduce in all types of robotic heads. 11 Changes in the position of the eyebrows and mouth of the digital face directly influenced legibility, regardless of the negative effect on humans.

The identification of the fear expression in robots has relatively low accuracy. Among several potential reasons is that fear is interpreted in relation to a specific context, such as dark places or situations involving danger. Without such context, the interpretation is underdetermined and the face is highly ambiguous. Moreover, this low legibility is independent of the type of design and construction method.

In the second experiment, the sad and angry expressions used as negative feedback were compared with a neutral face. This negative feedback was embedded in a simple, repetitive interaction task, and the negative facial expression was activated only when the robot was not able to push an object into the container. In previous work, we showed that the sad emotion had a positive effect on the task; 34 in the present research, a similar effect was observed with the expression of anger. Specifically, users tend to follow the instructions better when the robot expresses negative emotions. We argue that this happens because the activation of the expression signals the failure and prompts the subject to cooperate better with the flow of the task. This contrasts with the neutral face, with which the situation becomes uncertain and adverse to task progress. This supports the hypothesis that an angry face can trigger a higher level of analysis and diagnosis that is useful for influencing human attention and identifying unclear situations. 37

In the last experiment, a sequence of facial expressions was presented along the task, with transitions depicting incremental emotional intensity. Transitional expressions set up the context for interpreting a static facial emotion whose sudden presence highlighted a change in the context; the valence of the emotions was the basis for this change. We compared a transition of positive emotions with a static negative one, and vice versa, using transitions from neutral to positive (happy) or from neutral to negative (angry) faces.

The results show that the sudden presence of the angry face in the context of a positive transitional expression is effective in inducing attention in naive subjects. The angry emotion prompts an inference aimed at identifying the cause of the emotion activation. However, the continuous presence of the angry robotic face reduces its legibility.

The sudden activation of positive emotions in the context of the angry transitional expression was identified with a high score. However, the happy and surprise facial expressions were less effective at prompting explanations for the change in the robotic face, in terms of both the type and the number of causes identified. The statistical analysis shows that the effect of the angry expression is significantly different from the effects of happiness and surprise, whereas the effects of the two positive emotions do not differ significantly from each other.

Facial expressions are useful for coordinating interaction and making it understandable. When ambiguous conditions arise, an emotion highlights the unclear situation more smoothly than spoken messages do. During social communication, humans are expected to react in a similar manner whenever a particular facial emotion is activated. 2,18 Depending on the conditions that trigger facial expressions, emotions could regulate the subject's behavior. However, studies on the influence of emotional expressions have been carried out in human–human communication contexts, and their findings are assumed to hold when applied to HRI. A robot is commonly programmed on the basis of this assumed influence, and its emotional behavior is configured without knowing its actual impact on human subjects. As noted previously, the impact of an emotion is determined by several factors, such as the type of interaction, human actions, the environment, the situation, and even the design of the robotic face.

In line with the established hypothesis, negative expressions are better recognized in robotic faces, with the angry face being the most legible. Given the adverse conditions associated with the expression of anger, one might think that it is not advisable during human interaction. However, the present experiments and results show that this negative expression has a regulatory effect on human actions and influences attention when an unclear situation emerges during the task. Thus, when the emotional expression of anger is activated, the human rectifies the situation that hinders the progress of the task and becomes better at identifying the conditions that caused the expression of anger.

Our studies of emotions in service robots show advantages in integrating facial expressions into collaborative robotic tasks; doing so can improve the system's efficiency with minimal use of resources. The results have now been incorporated into the service robot Golem-III 45 (see Figure 12), using a robotic head with a lightweight, portable, easy-to-replicate, low-maintenance structure and low energy consumption. 46 The emotional expression is integrated into the robot's behavior and used as feedback during interactive tasks.

Figure 12. Golem-III, interactive service robot.

Finally, the importance of our findings lies in the implication that an angry face in an emotional service robot does not necessarily have an adverse effect on interaction with humans. On the contrary, this negative emotion can have a regulatory impact in a collaborative task if it is activated in a timely manner and is appropriate to the situation.

Footnotes

Acknowledgements

The authors would like to thank The Golem Group for their support during the robot implementation and Noé Hernández for his comments on earlier and final versions of the manuscript. We also thank CIDI, UNAM for their support during the research.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by CONACyT project 178673, PAPIIT-UNAM project IN107513, PCIC-UNAM, PASPA program, PAEP program and CIDI, UNAM.