Abstract

Robot human-like behavior can enhance the performance of human–robot cooperation with prominently improved natural interaction. This also holds for redundant robots with an anthropomorphic kinematics. In this article, we translated human ability of managing redundancy to control a seven degrees of freedom anthropomorphic robot arm (LWR4+, KUKA, Germany) during tele-operated tasks. We implemented a nonlinear regression method—based on neural networks—between the human arm elbow swivel angle and the hand target pose to achieve an anthropomorphic arm posture during tele-operation tasks. The method was assessed in simulation and experiments were performed with virtual reality tracking tasks in a lab environment. The results showed that the robot achieves a human-like arm posture during tele-operation, and the subjects prefer to work with the biologically inspired robot. The proposed method can be applied in control of anthropomorphic robot manipulators for tele-operated collaborative tasks, such as in factories or in operating rooms.

Introduction

During the past decades, the demand for robot assistance in human–robot sharing workspace has been more and more popular in various fields, from industry (e.g. manufacturing and assembly) to service robotics (e.g. robot waiter), medical sector (e.g. robotic nurse), where arms need to share the same workspace with humans in the operating room. 1,2 In these cases, robots are expected to behave in a social, predictable manner. 3

To ease the cooperation in the shared workspace for human and robotic collaborators, several studies have focused on human–robot cooperation analysis. No matter for robot appearance or behavior, human-likelihood attracts more attention for empowering human efficiency and stamina, relieving the human participants from tedious, hard, or even dangerous tasks. 3 With humanoid appearances, redundant anthropomorphic robot arms show their superiority in human–robot interaction, 4 for example, LWR4+ (KUKA, Augsburg, Germany), Justin robot (Institute of Robotics and Mechatronics, Wessling, Germany), and YuMi (ABB, Zurich, Switzerland). Humanoid robots with anthropomorphic structure are more appropriate when the task demands people working in the robots’ working space. 5

Several studies have been conducted to improve the assistance quality of humanoid robot manipulators, which also played a fundamental role in human–robot collaborative tasks. A second-order polynomial 6 was utilized to represent the relation between the wrist pose (referred to the ground) and the arm angle for realizing a human-like control of a redundant robot. The “tutor-tutee hand-in-hand” teaching scheme integrated the tutor’s motor functionalities into the robot’s control architecture, transferring human to human skill. 7 Natural redundancy resolution in dual-arm manipulation was achieved using humanoid manipulators. 8 Human impedance transfer skills 9 were adopted for the control of a dual-arm exoskeleton robot. 10 Human-like motion and compliance properties were achieved and assessed for robot-environment interactions. 11 An adaptive hands-on control was realized for reaching and targeting tasks in surgery with redundant robot. 12,13 A trajectory planning system for anthropomorphic robot arm was designed based on an artificial neural network (ANN) architecture 14 trained on human actions. 15 A wrist-elbow-in-line method was derived to map key positions of human demonstrations to the real robot for obtaining a human-like inverse kinematics solution. 16

Kinematic redundancy can also be exploited for achieving human-like behavior. 17 Many optimality criteria with different objective functions, including jerk minimization, joints constraints, and joints torque optimization, have been introduced to model natural trajectories and achieve human-like motion. 18 –20 However, based on the kinematic level, the joints distribution and structure of human arm is different from the real robot arm. In this way, to constrain the joint angles to achieve human-like motion cannot be viewed as an universal method for the redundant robot arm with different kinematic design. The pioneer works have investigated the relation between the swivel angle and the hand pose, synthesizing a human-like behavior for a dual-arm robotic anthropomorphic manipulator (FRIDA, ABB, Sweden). 21 The kinematic redundancy mapping strategy is thought to facilitate social acceptance, by reducing the stress in human–robot coexistence. 22 This method can be chosen as an universal method for redundant robot, because it is independent from joint configuration.

For tele-operated manipulators in industrial or medical area, the end-effector should be accurately controlled by remote master device. In this article, we investigate the redundancy problem resolution by utilizing a neural network (NN) to obtain the nonlinear relation between the 6-D task pose and the 1-D swivel angle of the elbow, based on actual human motion data studies. Then, we transferred the static mapping model for continuous movement control while handling tele-operation tracking task. Our approach aims to achieve human-like motion without violating the joint position and velocity limitations. The particular scenario considered in this article is to implement online human-like redundancy management module able to imitate the human motion, easing the cooperation in a shared human–robot environment, for example, operating room, or an industrial workspace. At the same time, manipulability performance of robot manipulator for online tele-operation was also analyzed after optimization.

Methods

To develop a biologically inspired tele-operated surgeon robot arm in Figure 1, the elbow swivel angle is adopted to achieve human-like arm pose. Human arm motion data (skeleton trajectory) are acquired with an external sensor and then analyzed and integrated in the human arm model in Figure 2.

23

The human arm can be simply modeled as a rigid kinematic chain connected by three joints (shoulder, elbow, wrist) with seven joints (

Robot human-like arm pose and swivel angle. S is the shoulder position, E is the elbow position, W is the wrist position, the vertical blue plane passing through SW represents reference plane, the red plane represents the arm plane passing through S, E, and W, ψ is the swivel angle between the reference plane and the arm plane.

Human arm model:

DH parameters of the human arm model.

DH: Denavit–Hartenberg.

Geometry of arm model:

Human movement acquisition and processing

With different subjects, the arm length is different. So the DH parameters of model should be also variable. This article focuses on how to transfer the human redundancy optimization ability to tele-operated robot arm. To ignore the influence of different limb length between separate subjects and simplify the model analysis, only one subject is involved in the data collection to acquire arm joints’ angles and hand poses, a human arm motion acquisition and analysis software was implemented with MATLAB 2017a. The software included the 7-degree-of-freedom human arm model in Figure 2 for online visualization of the arm movement. The left picture shows human arm model based on the DH parameters and the right picture shows the skeleton viewer. The human arm model can perform the same motion in the right figure. The hand poses (

The subject was instructed to perform natural reaching motions in the specified cubic task space (surgeons hand workspace) in Figure 4. Data acquisition and analysis were carried out in accordance with the recommendations of our institution with written informed consent from the subject in accordance with the Declaration of Helsinki. The data in Figure 4 were captured and saved with the optical acquisition sensor (Microsoft Kinect V2) placed in front of the subject.

Performed hand motion is limited in a constrained workspace defined in the front of the body: as it is shown in the picture, the distance from body to constrained workspace is

NNs architecture and training

Since the regression model between the swivel angle and the target pose of the end-effector has been introduced and solved in nonlinear description,

22,23

a feedforward back-propagation ANN with one hidden layer was implemented to train the regression mapping function in this article. It is known for approximating any function, regardless of its linearity. The nonlinear regression function for mapping the 6-D task pose of human arm model in Figure 2 (including Cartesian positions x,y,z and Euler angles

The number of neurons of the hidden layer was determined by assessing the performances of the regression network. By the comparison tests of motion data, two-layer NN are enough for prediction of the regression function. The final NN architecture including one input layer with 6 inputs (x, y, z,

Where

The training set was used to update the neurons weights with the predefined number of iterations. When the NN converged to its final configuration, the testing set was used to assess its actual ability to predict human-like swivel angle based on target pose. Two testing data sequences (around 5 min) were given as input for the trained NN.

Robot kinematic control and redundancy resolution

To test the human-like NN model and its feasibility on the control of real robot, a simulation was performed. In Figure 5, O is the intersection of OE and SW. The swivel angle is defined by the angle between the reference plane (BSW) and the actual arm plane (SEW). The swivel angle can be calculated by

where

Redundancy swivel solution.

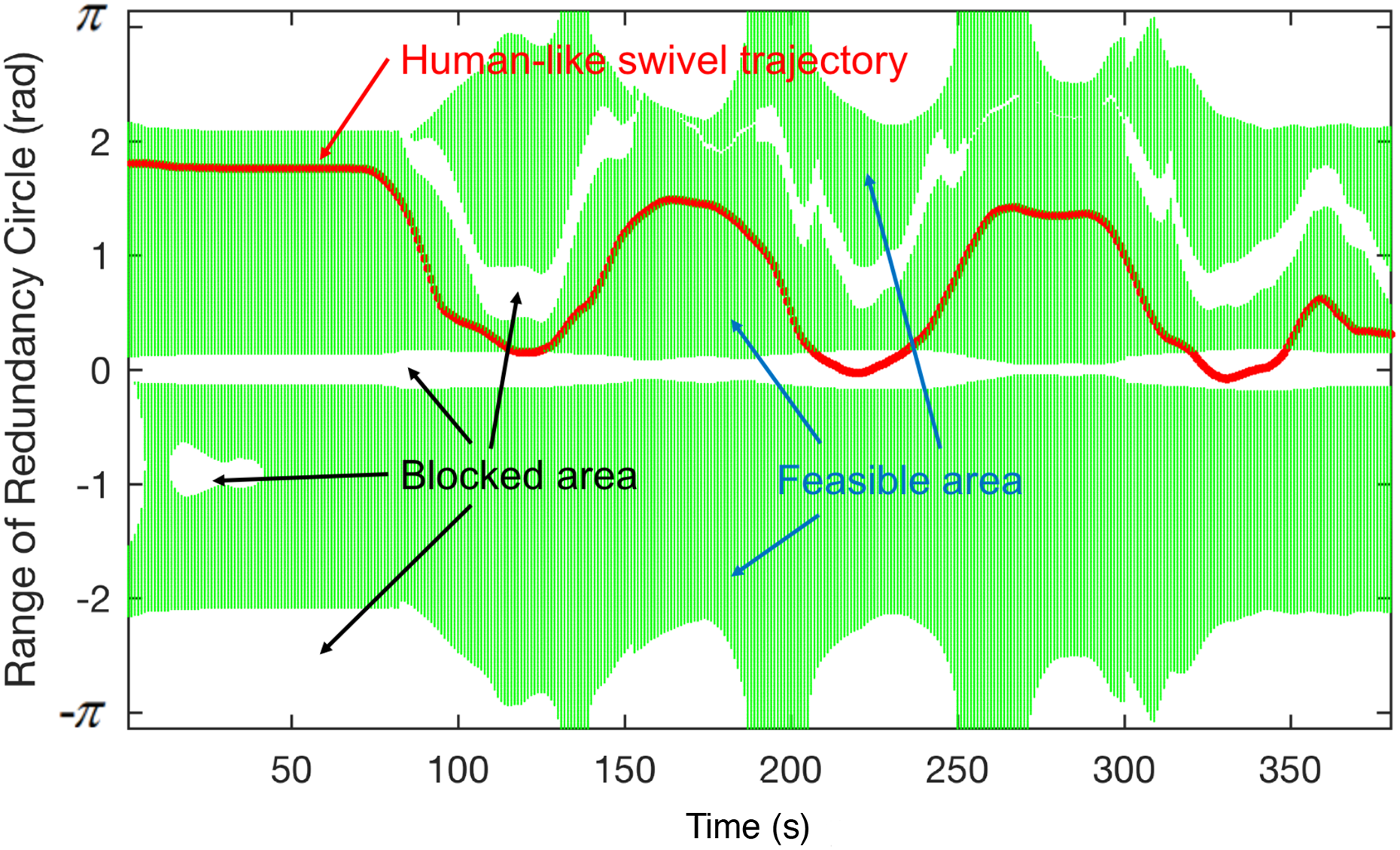

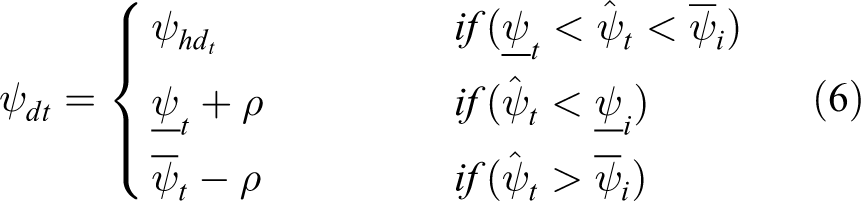

For robot tele-operation control, continuous trajectory interpolation should be introduced for online redundancy optimization, avoiding sudden jump in joint space. As it is shown in Figure 6, the trained NN model cannot be applied on the real robot control directly, because its results sometimes are not feasible for joint limitations during the continuous movement. As above, to make the predicted swivel angle from NN feasible for real robot control, the desired swivel angle must be constrained in continuous feasible area. As it can be seen in Figure 7, the redundant robot can reach an unique desired target pose with infinite joint configurations. Firstly, the target pose of end-effector can be acquired from remote master device. It can achieve the desired target pose with infinite swivel angle configuration for its redundancy. However, the swivel range is not always achievable due to kinematic limitations. Only the human-like swivel angle in feasible area can be mapped to feasible joints configurations, achieving human-like arm pose. But for online tele-operation, when there is no feasible human-like swivel solution, the optimization method should achieve the desired target pose with the feasible swivel area. Hence, for online tele-operation, the swivel configuration from NN will be optimized based on the kinematic limitations, constrained to the feasible area. Then, the inverse kinematics will be adopted to get unique joints’ configuration.

Redundancy optimization of continuous movement: the green areas represent the possible allowed values for the swivel angle during a reaching movement, the white area is blocked area which is not reachable, and the red line is human-like elbow swivel trajectory from NN. NN: neural network.

Mapping from task space to robot arm pose. The grey areas are feasible configuration for robot without violating kinematic limitations; the blue areas are human-like configuration for robot pose.

In Figure 6, the feasible area of real robot for fixed target pose can be calculated as [

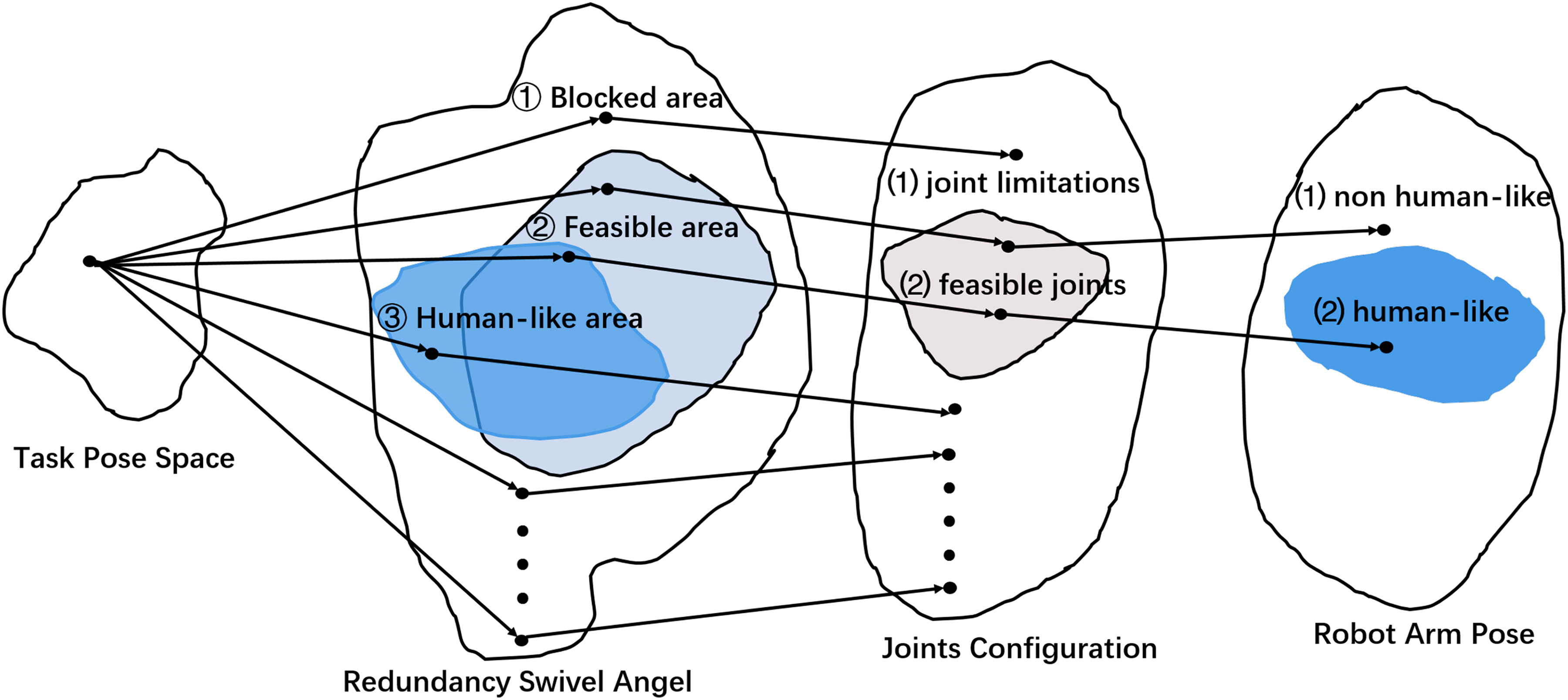

In this way, the desired swivel angle can be constrained in one continuous feasible area. In human-like redundancy resolution, the optimal swivel command

where

where ρ is a small positive constant to avoid reaching the boundary, it is set

System development and architecture

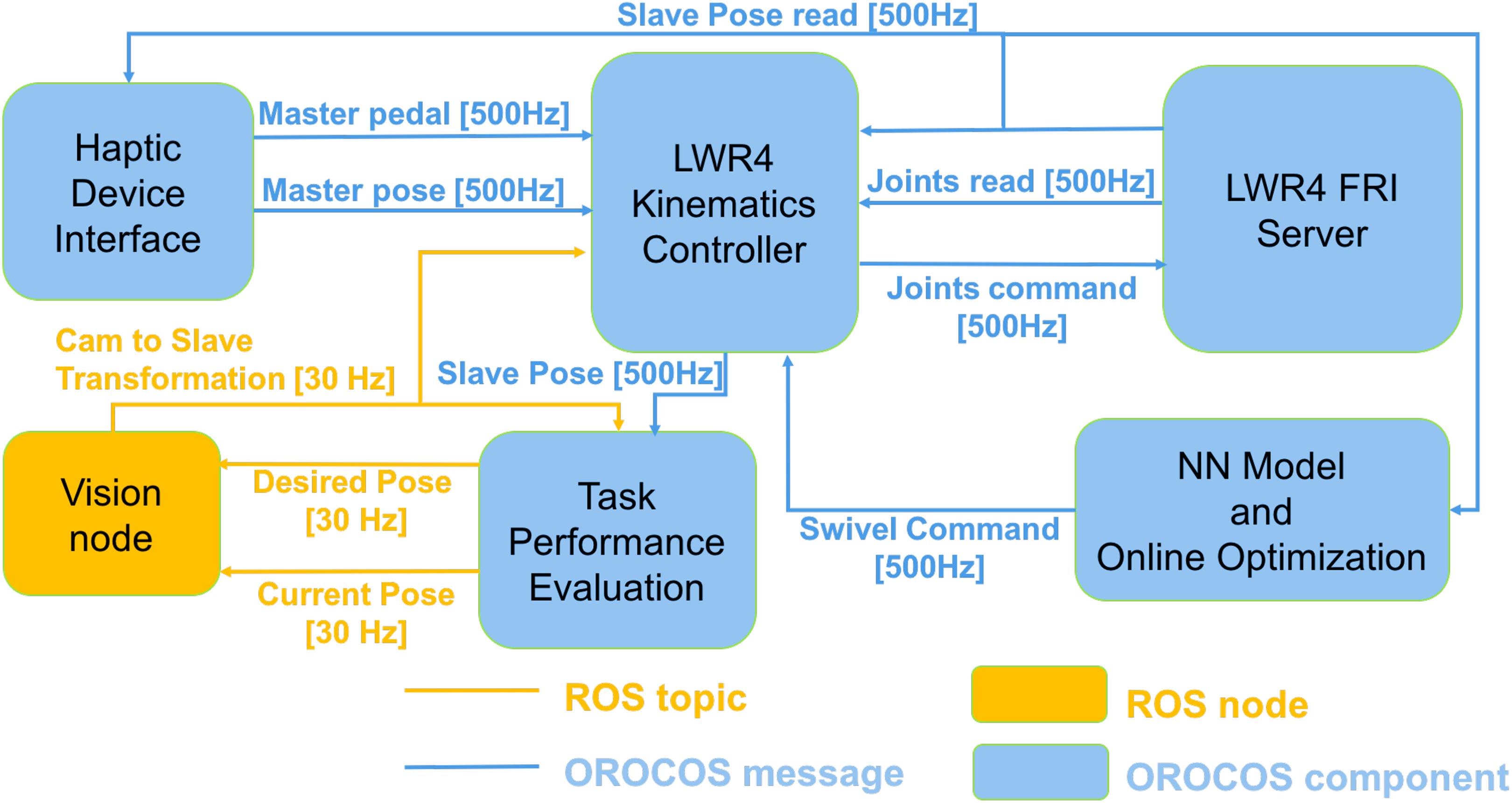

The tele-operated system in Figure 8 includes human motion data analysis and redundant robot control. This robot is kinematic-controlled with fast research interface (FRI) which provides direct low-level real-time access to the KRC (KUKA robot controller) at high rates of up to 1 kHz, 32 allowing to implement accurate control strategies. To realize the real-time tele-operation control, the architecture was developed with OROCOS (Open Robotic Control Software, http://www.orocos.org/) application in Ubuntu 12.04 with a real-time Xenomai-patched Linux kernel and robot operating system (ROS, http://www.ros.org/) kinetic in Ubuntu 16.04. The ROS and OROCOS system architecture can be seen in Figure 9. A 720p webcam (30 fps) is adopted for online visual feedback of task execution, providing the resolution of 1280 × 720 pixels in 8-bit per channel, delivering the three RGB components of the images. To ensure the control performance without affection from vision node, the ROS vision node and the control loop were executed on separate computers with UDP communication between each other: the control loop was developed using C++ on a desktop computer with 3.2 GHz Core i7 processor (Intel Corp) and the vision ROS node was developed using OpenCV on a laptop computer with Core i5 processor for 2-D display. 33 The master device working rate was around 1 kHz.

Flowchart of biologically inspired tele-operated surgeon system.

ROS and OROCOS architecture. ROS: robot operating system; OROCOS: Open Robotic Control Software.

Finally, the vision node displayed the camera images, messages, reference path, and draw paths on the display and estimated the pose of the camera with respect to the task frame by detecting a set of ArUco markers 33 attached to the task phantom. To make the tracking task safe for tele-operation, the designed curves were drawn with OpenCV in the vision interface, not on the real panel. The experimental setup in Figure 10 comprised a ROS camera vision node interface. Before tele-operation, a calibration procedure was conducted to get the transformation matrix from camera coordinate to robot end-effector frame. The tele-operation scheme implements 6-D pose control with a master device (Sigma 7, Force Dimension, Switzerland). 34,35 During the real-time tele-operation control, data such as the tools actual pose were sent through UDP communication to the laptop at around 30 Hz for drawing the actual trajectory curve with OpenCV on the vision interface.

The experimental setup: one camera, one master device, and one redundant robot for tele-operation are shown.

Experimental protocol and evaluation

Experiment 1

Experimental protocol

A subject (male, right-arm, 23 years old, 170 cm tall) was instructed for human movement acquisition. His arm link lengths in Figure 11 are measured as [0.2442 m, 0.2008 m, 0.0537 m] with recorded 3-D data from Kinect. Four groups of training data were collected:

six movements on the horizontal plane keeping the fingers parallel to the Z-axis (four on the perimeter and two on the diagonals); for example, A-B in Figure 4;

six movements on the horizontal plane rotating the wrist from an extended position to a flexed position (four on the perimeter and two on the diagonals); for example, C-D;

six movements from the bottom corners to the top corners keeping the fingers parallel to the Z-axis (four on the lateral planes and two on the diagonal planes); for example, E-F; and

six movements from the top corners to the bottom corners keeping the fingers parallel to the Z-axis (four on the lateral planes and two on the diagonal planes); for example, E-F.

Data acquisition and analysis interface.

Two other groups of data of the same subject involved in data acquisition were collected for NN performance evaluation with arbitrary long movements (around 5 min) in specified space. To train the regression model, the total acquired dataset was divided as: – training set (24 trajectories); and – testing set (2 arbitrary movements trajectories).

All movements for training (24 trajectories) were performed twice in natural manner with a speed around 0.05 m/s and repeated twice. Forty six data sets remained, as two were too noisy to be used. Before analysis on the data, a moving median filter (with windows length of six) and a moving average filter (with windows length eight) were applied to the data to smoothen the trajectories and reduce measurement noise.

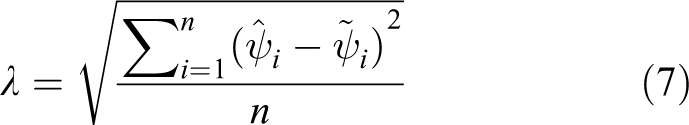

A NN model is trained to map the hand pose to the arm swivel angle, explicating human arm redundancy resolution method. For performance evaluation of the trained NN, prediction mean error of swivel angle was calculated, and the root mean square error (RMSE) for prediction of human swivel angle was calculated

where

NN regression training performance. NN: neural network.

Neural network regression training performance.

Results

The RMSE between the proposed NN output and the actual swivel trajectories of two acquired arbitrary hand motion is reported in Table 1.

The trained model is comparable with the subjects’ motion variability on the swivel trajectory.

Experiment 2

Experimental protocol

To evaluate the proposed human-like redundancy optimization method, three different curves in Figure 14 were designed for tele-operation tracking. 30 The corresponding actual Cartesian trajectory of end-effector in camera frame would also be drawn on the vision interface. The following two redundancy optimization algorithms were compared with the designed tele-operation experiments:

– mid-point redundancy optimization in equation (3); and

– human-like redundancy optimization in equation (4).

2-D tele-operation tracking task in vision node. Three curve tasks are designed in above pictures. The green line is desired trajectory, and the blue line is actual trajectory.

A subject (male, 28 years old) was instructed to perform tele-operation control for drawing the same curve on the original task curve using the vision interface shown in Figure 18. The videos of the robot arm movements during the tele-operated control were recorded for further subjective evaluation. For each designed curve task, tele-operation drawing was separately repeated five times with the above two redundancy resolution strategies.

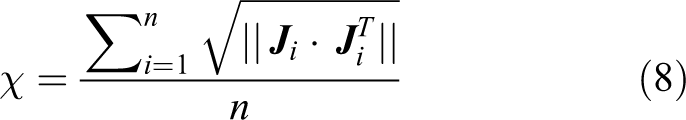

It is known that high manipulability in robotic manipulators could achieve high performance. 36 In this article, average manipulability indices χ of three tracking curves during tele-operation were also calculated to give a quantitative comparison of the ability to move in arbitrary directions, including translation and rotation manipulability defined by

where

Results

Swivel performance in Figure 15 and the corresponding joints trajectory in Figure 16 were recorded for comparison. During the tracking tasks, the continuous variation of swivel angle with time can be called as “swivel trajectory.” As can be seen from left picture in Figure 15, the desired swivel trajectory exists in the feasible area but was sudden jumps during the continuous movement. And for the right human-like optimizer, the human-like trajectory sometime is out of the feasible area. Both the actual swivel trajectories are constrained between the upper and lower boundary. Figure 16 shows that the joints trajectory of human-like optimizer is much more smooth than midpoint optimizer, because its swivel trajectory is much more smoother than the midpoint solution.

Swivel trajectory comparison. Left picture is the result of mid-point optimizer; right picture is human-like optimizer. The dark green line is the upper boundary of the feasible area, the blue line is lower boundary, the red line is desired swivel trajectory, and the green line is actual swivel trajectory.

Joints trajectory comparison: left picture is the result of midpoint optimizer; right picture is human-like optimizer. The seven curves with different color represent the seven joints trajectory of KUKA robot.

The comparison of manipulation indices in Table 3 between two different inverse kinematic solutions is shown in Table 2. The manipulation indices of human-like redundancy are bigger than the previous midpoint inverse kinematic solution. Thus, the human-like redundancy can achieve better manipulability performance than the midpoint solution.

RMSE between NN-predicted swivel trajectory and actual human swivel trajectory, based on the two testing data set.

RMSE: root mean square error; NN: neural network.

Average manipulability index χ on the same tasks.a

a Three corresponding tasks are shown in Figure 14. The manipulability indices are calculated with average value for each tracking task.

Experiment 3

Experimental protocol

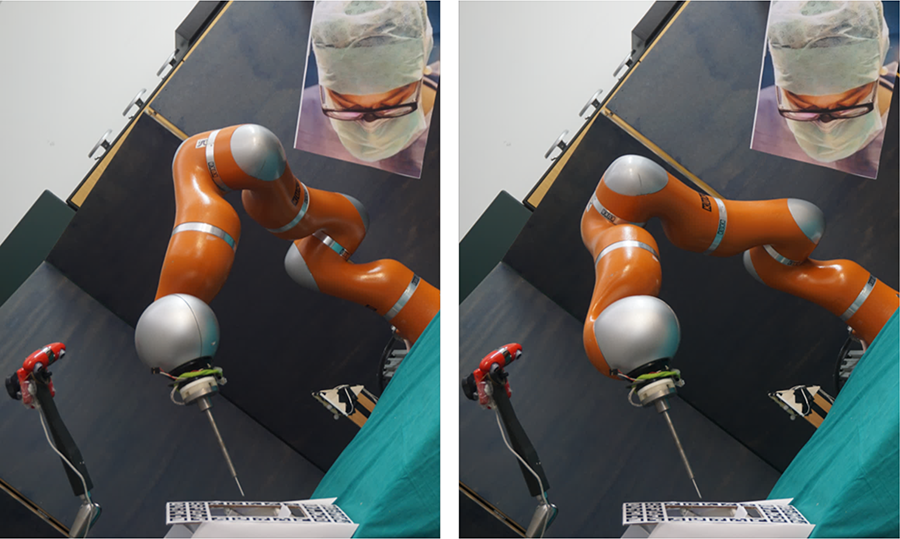

Twenty healthy naive subjects (10 females and 10 males, age between 21 and 29 years) were enrolled to evaluate the performance by watching videos without knowing anything about redundancy optimization in Figure 17. The subjects were instructed to compare which video was more human-like; which motion is more familiar; and which is more comfortable to work with in the same room. A noise-canceling headset is used to prevent the subject from being influenced by outside noise.

Each subject was seated in front of the screen and commented on 15 slides. In each slide, there were one human-like demo and one non-human-like demo, placed with random order.

Real-time robot control: the above pictures are robot arm poses optimized with two different optimizers; for one same target pose left picture is midpoint optimizer, right picture is with human-like optimizer. One surgeon head picture is placed on the head position and videos are taken in the view parallel to the task plane to reduce influence of robot displacement.

Results

As you can see in Table 4, most of the comments (91%) can tell the difference of human-like or not in motion manner. The comments (84.7%) are familiar with the human-like motion, and 89% of the comments are likely to work with the human-like redundancy-optimized robot arm in the same room.

Subjects’ comments on the slides.a

aFor performance: “Human-like” represents whether the motion is human-like or not; “familiar” represents whether the motion is familiar for subjects or not; “comfortable” represents whether it’s comfortable for subjects to work with the robot in the same room. For both optimizer, the number of recorded videos are

In conclusion, the proposed NN can achieve human-like arm pose for tele-operation task without violation of kinematic limitations. The subjects are more familiar with human-like motion and feel comfortable to work with the human-like redundancy optimized robot. At the same time, the proposed redundancy resolution method can also improve the manipulability performance for the end-effector. With the trained model, the robotic arm can replicate human kinematics strategies for performing tele-operation tracking task, achieving human-like arm posture during the whole procedure. The method also takes into account the robot kinematic limitations to ensure online motion smoothness and feasibility for tele-operation.

Conclusions

This article developed an online human-like redundancy resolution system for 2-D tele-operation tracking task based on NN. Joint limitations are considered in the redundancy optimization to make the trajectory feasible and smooth. Simulations and tele-operation tracking tasks were conducted with the redundant robot manipulator LWR4+ to verify the performance and feasibility of the proposed algorithm. Results showed that the robot can achieve human-like motion without exceeding the joint limitations. Tele-operator could just focus on the vision tasks, without paying attention to the real robot arm pose. The developed system could make people (e.g. human nurses) more familiar and comfortable to work with surgical robot in the same workspace. The online redundancy optimization model can also be applied in a wide variety of anthropomorphic robot arms with similar structure. However, the task is conducted in a specified task space that depends on the data acquisition, not working for the whole workspace for robot. For other different tasks or workspaces, the human-like model could be required based on human motion data in the new corresponding workspace. The NN model was only trained with data from one subject. In the near future, more subjects and data would be used to improve the model. And the results will be evaluated with surgeons working in the shared workspace with the popularity of surgical robots in the operating room, it can be expected to see more research efforts expended in this field, not only for tele-operation tracking but also for grasping and handing over objects in human-like manner. The future work could also evaluate the practicality of the proposed method with the participation of real nurse in the operating room.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project has received funding from the European Unions Horizon 2020 research and innovation program under grant agreement no. H2020-ICT-2016-732515.

Supplemental material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.