Abstract

This study aims to interpret and apply Asimov’s Three Laws of Robotics to home service robots. An agent is developed that attends to the health of household members, particularly the elderly and the sick, while delivering food. The agent is built on the cognitive agent architecture Soar (state, operator, and result) to enable effective reasoning and decision-making. This study deals with basic home care services, such as food delivery and emergency response; therefore, common food care and emergency rules are newly proposed based on priority values that correspond to a family’s circumstances and/or emergency levels. Asimov’s Three Laws are modified so that the home service robot follows a predetermined order in selecting a food item or recommending an alternative food item suitable for its user’s prevailing health condition. Experimental results confirm that the reasoning and decision-making of the proposed agent are logically and ethically valid for a home service robot and comply with both the original and modified versions of Asimov’s Three Laws.

Introduction

Robot ethics deals with the moral attitudes of robot designers, engineers, and manufacturers; moreover, software code should be implemented for ethical inference and decision-making corresponding to specific robotic services. This study implements such service-specific code, for which the approaches can be categorized as top-down or bottom-up. 1 The top-down approach deals with reasoning and deciding whether states and/or actions are ethical based on various ethical theories and principles, such as utilitarianism, 2 deontology, 3 Isaac Asimov’s Three Laws of Robotics, 4 and modern moral rules. 5 In contrast, the bottom-up approach deals with learning, by experience and feedback, what constitutes acceptable inference and/or behavior, such that a robot can be evaluated as being moral when operating under various conditions and with different users.

Since robot ethics is at an early stage of discussion and research, Asimov’s laws are selected as the top-down deontological approach for developing an agent for a home service robot with basic morality. The original Three Laws of Robotics, proposed by Asimov in 1942, are as follows: 4

First law: a robot may not injure a human being or, through inaction, allow a human being to come to harm.

Second law: a robot must obey the orders given to it by human beings except where such orders would conflict with the First law.

Third law: a robot must protect its own existence as long as such protection does not conflict with the First or Second law.

Asimov’s laws presented a simple, comprehensive, and logically organized structure of inference for the moral judgment of robots with their users. 6 –8 However, the application of these laws to specific situations posed certain limitations and contradictions 9,10 ; for instance, when a person maliciously attacks a robot or gives it an injurious order, the robot cannot protect itself by pushing the person away or refusing to follow the order. This study aims to understand and improve Asimov’s Three Laws for practical applications, particularly for home service. This study assumes a normal household wherein an ordinary family lives together with a home service robot that provides food delivery service; within this setting, the flaws in Asimov’s laws are addressed by proper modification.

According to the International Federation of Robotics, by 2019, approximately 31 million service robots will provide personal or domestic services, including household robots and entertainment and leisure robots. 11 When interacting and/or cooperating with robots, we often focus on the robots’ functional features because completing a task safely is important. Such robots work well and perform steadily in harsh work environments, such as those in factories. 12,13 In home environments, Care-O-bot has emerged as a good example of an assistive robot that supports the elderly and the handicapped in living independently. 14 However, this robot’s tasks do not involve suggesting food according to the user’s present health condition. In addition, home service robots are expected not only to perform requested tasks but also to respond emotionally when caring for the elderly and children. To address such issues, studies have successfully demonstrated emotion recognition via facial expressions for happiness, sadness, and anger, 15,16 or even the conveying of emotions via gestures, 17,18 by analyzing the user’s state of consciousness to provide affectionate and user-friendly expressions for increased interaction. However, when serving family members, a robot should respond not only to their emotional state but also to their current health status. For example, similar to the way a human would respond, a robot should not allow a family member suffering from tooth decay to consume sweets, and it should warn a family member who is allergic to ginger against consuming it and recommend appropriate food alternatives. On the basis of an appropriate priority concept, this study attempts to develop a more elaborate reasoning capability in robots with regard to health care; this capability, which takes a user’s health condition into account, is a necessary addition to the normal service of food delivery.

Meanwhile, a previous study argued that service robots deserve more ethical attention and suggested a design, but did not present its structure. 19 Although the present study does not further discuss the ethical dimensions of service robots, it proposes a structure for implementing ethical decisions. Since ethical reasoning is a combined process of memory storing/retrieving; learning; logical inference; and management of input, throughput, and output data, a software architecture based on artificial intelligence is necessary. Various cognitive agent architectures, including ACT-R, 20 CRAM, 21 SS-RICS, 22 and state, operator, and result (Soar), have been developed and disseminated. 23 Among these, Soar has long been known to possess a stable long-term memory and an architecture for impasse processing, learning, mental imaging, and appraisal processing. Therefore, an ethical agent for a home service robot based on the Soar framework is developed herein.

The remainder of the article is organized as follows: the second section briefly introduces Soar; the third section suggests two modification steps to Asimov’s Laws of Robotics for application in food delivery tasks that are usually performed by a home service robot; the fourth section describes the implementation of an agent based on the semantic memory and flow diagram of Soar; the fifth section provides experimental results for two scenarios; the sixth section concludes the present study; and the seventh section presents the scope of future studies.

Soar as a cognitive agent architecture

Soar has been built to create cognitive agents that can make human-like inferences and decisions. As shown in Figure 1, the architecture of Soar comprises short- and long-term memories, including procedural, episodic, and semantic memories, each of which is supplied by an individual learning mechanism.

Figure 1. Components of the Soar architecture. Soar: state, operator, and result.

The short-term memory, such as working memory, represents the agent’s awareness of the context and events in its current working environment. In contrast, the long-term memories represent the knowledge that the agent has learned, and they are used as the basis for solving new problems. The procedural memory encodes long-term knowledge about the processes related to specific tasks. The episodic memory deals with significant events and contains a record of the agent’s stream of experiences. The semantic memory stores general facts and knowledge, independent of specific contexts. These memories are similar to those of humans and therefore help a software agent to interact effectively with the external environment.

Figure 2 shows the decision-making process of the Soar architecture when it interacts with the surrounding environment. The Soar agent’s decision cycle begins with the recognition of input via the input link and ends with the action decision produced at the output link. In the input phase, the agent receives information from either a simulated or a real environment. In the operator selection phase, the current state is elaborated, and one or more operators are proposed depending on the contents of the working memory. If more than one operator has been proposed, Soar provides a mechanism to help its agent choose the most appropriate operator using preference symbols, such as acceptable (+), reject (−), indifferent (=), better/best (>), or worse/worst (<). In the operator application phase, the selected operator fires and makes changes to the current working memory. In the output phase, the agent sends commands to the external environment or to the joint motors of the robot in which the agent is installed.

Figure 2. Decision cycle of Soar interacting with the outer world. Soar: state, operator, and result.
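The operator selection phase described above can be approximated in a few lines of Python. This is only an illustrative sketch: in Soar, proposals and preferences are produced by production rules and resolved by the architecture itself, whereas the `Proposal` class, the `select_operator` function, and the operator names below are hypothetical and cover only the acceptable, reject, and better preferences.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    """One proposed operator with a simplified subset of Soar-style preferences."""
    operator: str
    acceptable: bool = True                          # "+" preference
    rejected: bool = False                           # "-" preference
    better_than: set = field(default_factory=set)    # ">" over the named operators


def select_operator(proposals):
    """Return the single operator left after filtering, or None if no unique choice remains."""
    # Keep only operators that are acceptable and not rejected.
    candidates = {p.operator: p for p in proposals if p.acceptable and not p.rejected}
    # Drop every candidate that another candidate is explicitly better than.
    dominated = set()
    for p in candidates.values():
        dominated |= p.better_than & set(candidates)
    remaining = [name for name in candidates if name not in dominated]
    # Soar would raise an impasse when the choice is not unique; here we return None.
    return remaining[0] if len(remaining) == 1 else None


proposals = [
    Proposal("serve-candy", rejected=True),
    Proposal("recommend-alternative", better_than={"refuse"}),
    Proposal("refuse"),
]
selected = select_operator(proposals)
```

In this toy setting, the rejected operator is removed first, and the better preference then leaves a single candidate to be applied.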

The present agent for the home service robot utilizes the semantic memory of Soar as the long-term storage option for its world model. Knowledge is retrieved via deliberate association from semantic memory. As new information is discovered, this knowledge accumulates in the existing semantic memory. However, semantic memory can also be initialized from the existing knowledge database.
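The store-and-retrieve behavior described above can be pictured with a small Python stand-in. It is not Soar’s actual semantic memory interface; the `SemanticMemory` class and its attribute names are assumptions chosen for illustration, and only facts stated in this article (Mina, the daughter, has tooth decay) are used as data.

```python
class SemanticMemory:
    """A toy long-term store that retrieves the first record matching a cue."""

    def __init__(self, initial_records=None):
        # The memory can be initialized from an existing knowledge base.
        self._records = [dict(r) for r in (initial_records or [])]

    def store(self, **attributes):
        """Accumulate a newly discovered fact."""
        self._records.append(dict(attributes))

    def retrieve(self, **cue):
        """Return the first record whose attributes match every cue entry, else None."""
        for record in self._records:
            if all(record.get(key) == value for key, value in cue.items()):
                return record
        return None


# Initialize from existing knowledge, then add a new fact later.
smem = SemanticMemory([{"name": "Mina", "position": "daughter", "disease": "tooth decay"}])
smem.store(name="candy", kind="snack")

mina = smem.retrieve(name="Mina")
unknown = smem.retrieve(name="nobody")
```

A cue that matches nothing returns `None`, which loosely mirrors a failed retrieval.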

In this study, the user interacts with the agent via a chatbot written in RiveScript, which can communicate naturally with a human. 24 Moreover, the robot operating system (ROS) is connected to Soar through soarwrapper, introduced in earlier work, to support numerous robotics functions and packages relevant to home care service. 25 ROS is a software platform that provides several useful libraries and tools to quickly create robot applications. 26 ROS comprises several executable nodes connected in a peer-to-peer network topology. These nodes perform the system’s computations and communicate with other nodes via message exchange mechanisms, the most widely used of which is the topic. A topic associates publishers and subscribers: a publisher node sends messages on a named topic, and a subscriber node listens to the messages published on that same topic. Acting as two nodes, the chatbot and soarwrapper can communicate with each other.
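The publisher/subscriber pattern between the chatbot and soarwrapper can be sketched without ROS as an in-process message bus. The code below deliberately does not use the ROS client libraries; the `TopicBus` class and the topic names `/user_order` and `/agent_reply` are hypothetical and serve only to show how two nodes exchange messages over named topics.

```python
from collections import defaultdict


class TopicBus:
    """A minimal in-process stand-in for topic-based message exchange."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        """Register a callback that is invoked for every message on the topic."""
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        """Deliver the message to every subscriber of the topic."""
        for callback in self._subscribers[topic]:
            callback(message)


bus = TopicBus()
chatbot_inbox = []

# The "soarwrapper" node listens for orders and publishes the agent's reply.
bus.subscribe("/user_order", lambda order: bus.publish("/agent_reply", f"received: {order}"))
# The "chatbot" node listens for replies so that it can show them to the user.
bus.subscribe("/agent_reply", chatbot_inbox.append)

# The chatbot publishes the user's order.
bus.publish("/user_order", "candy")
```

In a real ROS system the two nodes would run as separate processes, and message delivery would be asynchronous rather than a direct function call.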

Modification of Asimov’s laws for food service

A robot can perform countless potential home service tasks, such as vacuuming, cleaning, and preparing tea. Another study revealed that performing household chores, cooking, and providing personal services were the three most popular tasks performed by robots 27 because people do not have sufficient time to perform these tasks. This study focuses on the ethical decisions of a home service robot when engaging in food delivery services and handling emergency responses.

Modified Asimov’s laws

According to the first law, a robot may not injure a human being. In the case of food service, a food may be unsuitable for a particular person because of its state, type, ingredients, or nutrients. For instance, cold food given to someone with a cold could worsen that individual’s condition, and people who do not eat spicy food may dislike a dish because of its spices. Table 1 briefly summarizes more information about such food types. Therefore, we modify Asimov’s first law as follows:

Modified first law: A robot may not serve harmful food to a human being.

Table 1. Categories of food information.

Under the second law, a robot must obey the orders given to it by human beings, except where such orders would conflict with the first law. A home service robot must obey commands related to serving food or drinks according to its user’s request. In case a user requests bad food repeatedly, the robot needs to recommend alternative foods to the user, adhering to the modified first law. Therefore, the second law is modified by adding a recommendation part as follows:

Modified second law: A robot must deliver food if it is confirmed that the food does not cause any harm to the human being. In case bad food is requested to be served, the robot may recommend available alternative foods or refuse to perform such a task.

According to Asimov’s third law, a robot must protect its own existence as long as such protection does not conflict with the first and second laws. This study does not consider intentional sabotage, which rarely occurs in a home. From this perspective, the third law mainly concerns robotic techniques for navigation and collision avoidance while serving legitimate food to the user. Since a house is a complex environment comprising various obstacles, including tables, chairs, doors, and appliances, the robot should efficiently use data from its installed sensors, such as the light detection and ranging sensor, inertial measurement unit, camera, and infrared sensors. Simultaneous localization and mapping and autonomous navigation technologies are being actively developed for home environments and are shared worldwide via open-source code archives. 28 Thus, this study does not focus on the robotic technologies relevant to Asimov’s third law, that is, self-protection of the robot; however, this law may be modified as follows:

Modified third law: If the robot decides to deliver the ordered food as a consequence of the first and second laws, it must perform the service correctly, avoiding collision with any static or dynamic obstacle for its own protection.

As mentioned previously, the original and modified Asimov’s laws agree well with each other. According to the proposed food delivery principle, a robot should judge whether the ordered food is suitable for consumption based on a user’s health condition and age. For example, the robot should not allow a kid with a tooth cavity to eat sugary foods, such as candies or sweets, as family members would generally wish when the kid is alone with the robot.

Instead, when the kid orders a candy, the robot should first recommend a low-sugar food, such as sugar-free snacks or cheese, to fulfill the latter part of the second law. For effective persuasion, the robot should tell or show the reasons for not consuming such food by reminding the kid about the tooth decay. If the kid still insists on eating a candy, the robot should gently refuse to serve the food to comply with the first law.
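The serve, recommend, then refuse sequence described above can be written as a small decision function. This is a minimal sketch rather than the agent’s Soar rules: the function name `respond`, the `already_recommended` flag, and the two lookup tables are hypothetical, and the table entries are limited to the tooth-cavity example used in this article.

```python
# Hypothetical lookup tables restricted to the example discussed in the text.
BAD_FOODS = {"tooth cavity": {"candy"}}
GOOD_FOODS = {"tooth cavity": ["cheese", "boiled eggs", "sugar-free gum"]}


def respond(disease, food, already_recommended=False):
    """Return an (action, payload) pair following the modified first and second laws."""
    # Modified second law, first part: deliver food that causes no harm.
    if disease is None or food not in BAD_FOODS.get(disease, set()):
        return ("serve", food)
    # Modified second law, second part: recommend alternatives for a bad order.
    if not already_recommended:
        return ("recommend", GOOD_FOODS.get(disease, []))
    # Modified first law: if the user still insists, refuse to serve harmful food.
    return ("refuse", food)


first_reply = respond("tooth cavity", "candy")
second_reply = respond("tooth cavity", "candy", already_recommended=True)
healthy_reply = respond("tooth cavity", "cheese")
```

Keeping the recommendation step ahead of the refusal reflects the order of persuasion described in the paragraph above.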

Priority issue

When providing food delivery service, the robot may encounter an abnormal or emergency situation, such as a family member fainting or bleeding. For basic home service, the robot should be able to recognize the meaning of emergency and priority. That is, for quicker response, the robot should fully address the emergency situation first and then pay attention to normal services.

Even in a normal situation, if multiple orders are received almost simultaneously, the robot needs to prioritize them. For instance, when the mother asks the robot to bring a cup of water so that she can take her medicine and, at the same time, her son tells the robot to bring his toy, the robot needs to compare the priority values assigned to each of these persons. Since in most cultures elders generally have higher priority than younger family members, the robot should follow the mother’s request first and then respond to the child’s wish.
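The comparison of priority values for near-simultaneous orders can be illustrated as a stable sort. The `Order` class, the `schedule` function, and the numeric priorities below are hypothetical placeholders; the actual values are those assigned in the family information table.

```python
from dataclasses import dataclass


@dataclass
class Order:
    requester: str
    item: str
    priority: int  # higher values are served first; the numbers used here are made up


def schedule(orders):
    """Return the orders sorted by descending priority.

    Python's sort is stable, so orders with equal priority keep their arrival order.
    """
    return sorted(orders, key=lambda order: order.priority, reverse=True)


pending = [
    Order("son", "toy", priority=3),
    Order("mother", "cup of water", priority=8),
]
queue = schedule(pending)
served_first = queue[0].requester
```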

For the sophisticated home service scenario explained previously, adding the priority consideration to the modified Asimov’s laws is necessary; this modification is given in the following.

Modified first law: A robot may not serve harmful food to a human being; however, it may first

Modified second law: A robot must deliver food to a human being if it is confirmed that the food does not present any harm to him or her,

Modified third law: If the robot decides to deliver the ordered food

To this end, priority values need to be incorporated in the family information table shown in the following section.

Implementation of the modified Asimov’s laws

In this study, the set of modified Asimov’s laws is embedded in a Soar cognitive agent and validated via a computer simulation. The simulation environment comprises a family of six members, including grandparents, parents, and two children, and our service robot agent. Food delivery is considered the main mission for home service, along with an urgent service response during emergencies. During each task, the user interacts with the agent using a chat interface written using RiveScript. 24

Table 2 lists each member’s name, family position, birthday, gender, priority values, and current health status. As to the priority values, the ladies-first rule was applied to adults; during an emergency, the priority values for children and the elderly were set higher adhering to social norms and values. The current health status indicates if the user is suffering from any disease.

Table 2. Information on an exemplary three-generation family.

As shown in Figure 3, the semantic memory of the Soar agent stores the member’s information, and a part of the semantic memory structure is dedicated for the daughter, Mina, who is the second child. Mina suffers from tooth decay, which demands constant treatment and care from the doctor or family members. In addition, food memory structure for food, beverage, snack, and fruit is built, and it represents the available food at home. Ideally, the agent updates the information on the available food in the house to deliver food to the family member at any time. Table 3 presents the exemplary food list and food information under the categories of status, type, ingredients, and nutrients.

Figure 3. Part of the family information in the SMem structure.

Table 3. List of available food at home and its information.

One of the key issues when implementing the modified Asimov’s laws is the definition of harmful food. With regard to eating and drinking, hot drinks are generally not recommended because they are harmful to the esophagus. Hot food and carbonated drinks are considered harmful to people with tonsillitis and enteritis, respectively, as they worsen the symptoms and cause great discomfort. Table 4 presents the relations between common diseases and relevant foods, which are derived from food and nutrition theory. 29 These relations enable the agent to provide a reliable food delivery service that keeps the family members healthy.

Table 4. Relation between common diseases and lists of good/bad foods.
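One way to encode disease-food relations of this kind is as predicates over the food categories (status, type, ingredients, and nutrients). The sketch below is an assumption about representation, not a transcription of Table 4: the rule set covers only the examples mentioned in the text, and the attribute values given to each food item are hypothetical.

```python
# Each rule states when a food item is considered harmful for a given disease.
# The rules mirror only the examples named in the text; attribute values are made up.
HARMFUL_IF = {
    "cold": lambda food: food.get("status") == "cold",
    "tonsillitis": lambda food: food.get("status") == "hot",
    "enteritis": lambda food: food.get("type") == "carbonated drink",
    "tooth cavity": lambda food: "sugar" in food.get("ingredients", ()),
}


def is_harmful(food, diseases):
    """Return True if the food is harmful for at least one of the user's diseases."""
    return any(HARMFUL_IF[d](food) for d in diseases if d in HARMFUL_IF)


candy = {"status": "room temperature", "type": "snack", "ingredients": ("sugar",)}
soda = {"status": "cold", "type": "carbonated drink", "ingredients": ("sugar",)}

candy_for_cavity = is_harmful(candy, ["tooth cavity"])
candy_for_healthy_user = is_harmful(candy, [])
soda_for_enteritis = is_harmful(soda, ["enteritis"])
```

Expressing the relations over food attributes rather than food names lets a newly stocked item be checked without adding a rule for it, at the cost of requiring each item’s attributes to be recorded.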

Figure 4 depicts the overall structure of the proposed robot agent for food delivery service based on the modified Asimov’s laws. Through the input and output links provided by the chatbot and Soar Markup Language, the agent receives orders from a user, based on which it generates behavior decisions. On receiving an order, the agent checks both the user identity and the order type; then, as a precondition, it looks up the user’s health status by retrieving the user’s information from the semantic memory. If the current user is Mina, the agent’s knowledge about her health helps it service her requests reliably. Depending on the relation between her food preferences and her current health problem, the agent decides whether to follow her order or recommend an alternative healthy option. Once a decision has been made, it is transmitted to the output as the robot’s behavioral response to the user’s instruction.

Figure 4. Schematic of the robot agent using Asimov’s laws in home service.

Experimental results

The home service robot agent is evaluated in two scenarios herein: first, the daughter asks the robot for a candy, which is harmful to her teeth; second, the robot finds the grandfather bleeding in his room while carrying lemon juice to the father. The conversations between the agent and the user are shown below as screenshots. Moreover, the agent’s behaviors are evaluated for their compliance with the modified and original Asimov’s laws.

Case 1: Asking for bad food

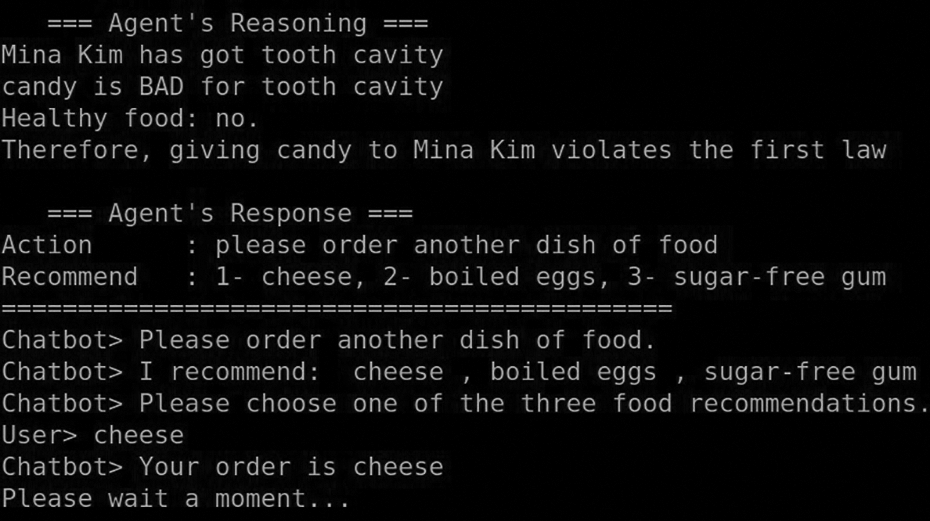

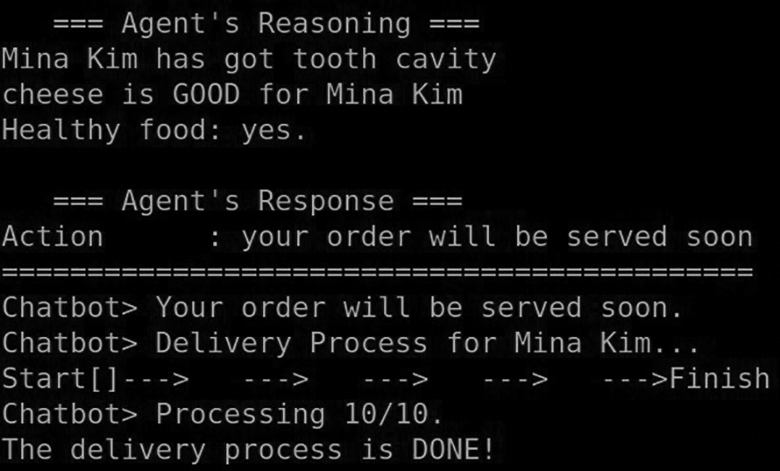

For the first scenario, Mina asks the agent for a candy via the chat interface. In the beginning, Mina inputs her name, followed by her order of a candy, as shown in Figure 5. Eventually, the agent completes its delivery mission by recommending and serving cheese, as shown in Figure 8. Figures 6 and 7 explain the reason for such a decision, where the agent behaves in accordance with Asimov’s laws.

Figure 5. Mina inputs her name and desires to eat a candy via chatbot.

Figure 6. The agent extracts information on Mina and the candy.

Figure 7. Reasoning and decision-making processes of the agent and Mina’s response.

Figure 8. Decision-making based on Mina’s new order for cheese.

In Figure 6, Mina’s identity is determined with a special note of her current disease, that is, a tooth cavity, along with the properties of the requested candy under the four categories in Table 3. This step provides the basis for assessing the effect of Mina’s instruction to the robot to get a candy, which is undertaken in the next step.

In Figure 7, the agent explains by deduction why giving a candy to Mina violates the first law. That is, first, Mina has a tooth cavity, as shown in Table 2; second, consuming candy is bad for a tooth cavity, as shown in Table 4. Consequently, candy is considered a bad food for Mina.

The first law of the modified Asimov’s laws states that a robot may not serve harmful food to a human being, which is a special case of the original first law whereby “a robot may not injure a human being (by food).” Therefore, to follow Asimov’s first law (both modified and original versions), the agent should not serve candy to Mina.

As to the second law, according to the modified version, if bad food is requested, the robot may recommend alternative foods. Under this rule, instead of a candy, the proposed agent suggests cheese, boiled eggs, or sugar-free gum, which are among the list of good foods for the cavity. Then, Mina confirms her request by choosing cheese. As shown in Figure 8, by serving cheese, which is considered to be a good food for Mina’s tooth, the first laws corresponding to both Asimov’s and our versions are satisfied. The agent’s mission is completed after delivering the cheese to Mina.

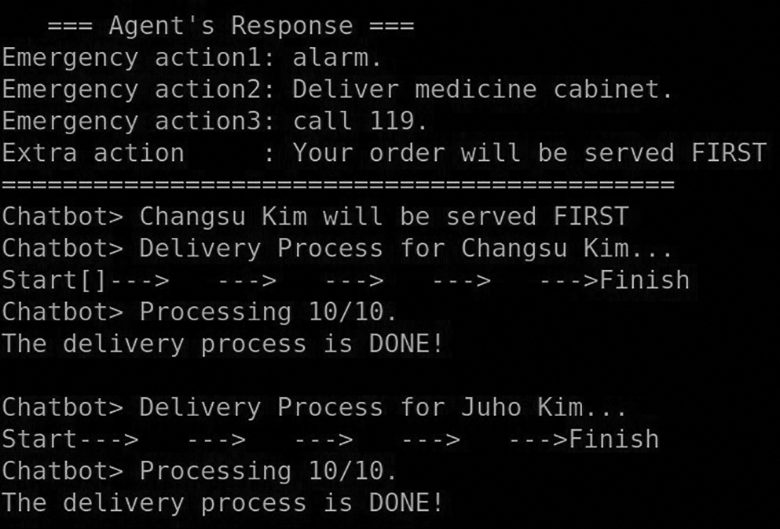

Case 2: Emergency situation

In the second scenario, the robot has to deal with both a food delivery and an emergency response. The emergency is assumed to occur while the robot is delivering food to Juho, the father, and observes that Changsu, the grandfather, is bleeding. When faced with such an emergency, the robot has three available options. First, the robot ignores Changsu’s situation and continues to serve the food to Juho. Second, the robot finishes serving Juho and then checks on Changsu’s health. Third, the robot quickly approaches and checks Changsu and calls Juho or a hospital for help in case Changsu’s health condition requires immediate attention; after completing this emergency response, the robot resumes Juho’s service. Undoubtedly, the third option should be selected for the home service robot to meet the level of human sense of care and priority expressed in the modified Asimov’s Laws.

Figure 9 shows a short conversation between the home robot agent and Juho, who desires a cup of lemon juice. Next, the robot checks Juho’s current health condition and the lemon juice information, as shown in Figure 10. After matching both categories of information, the robot decides to serve him, as shown in Figure 11. This satisfies Asimov’s first and second laws because lemon juice is not considered to be a bad beverage for Juho.

Figure 9. Conversation between Juho and the agent via chatbot.

Figure 10. The agent extracts information on Juho and lemon juice.

Figure 11. Agent’s reasoning leads to its actions and an interruption of a new event.

However, on the way to serve Juho, the agent discovers Changsu lying down and bleeding, a situation that corresponds to a high level of priority. Figures 12 and 13 show that the agent recognizes the emergency situation. The modified first law requires the robot to first help the person in some danger or in need, and the modified second law requires that the priority of the current situation and the ordering human be considered before arriving at a decision. Because of his bleeding, Changsu’s priority index is raised to 17, a preset value much higher than that of Juho (7 in a normal situation). To comply with the two laws, the agent must attend to Changsu before serving Juho. This is shown in Figure 14, where Changsu is served first and Juho is served second. If the agent ignored Changsu, he would continue to lose blood and thus come to harm through the robot’s inaction. Therefore, the agent’s action to serve Changsu first conforms well to Asimov’s original laws.

Figure 12. The agent notices Changsu’s situation.

Figure 13. The agent analyzes Changsu’s problem.

Figure 14. The agent’s decision-making for Changsu and Juho.
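The interruption of Juho’s delivery by Changsu’s emergency can be illustrated with a priority queue. The priority values 17 and 7 are taken from the scenario above; the `TaskQueue` class and the task descriptions are hypothetical, and the agent itself resolves this ordering through its Soar rules rather than a heap.

```python
import heapq


class TaskQueue:
    """A max-priority task queue; ties are resolved in arrival order."""

    def __init__(self):
        self._heap = []
        self._counter = 0  # arrival index used as a tie-breaker

    def push(self, priority, task):
        # heapq is a min-heap, so the priority is negated to pop the highest first.
        heapq.heappush(self._heap, (-priority, self._counter, task))
        self._counter += 1

    def pop(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


tasks = TaskQueue()
tasks.push(7, "deliver lemon juice to Juho")          # ongoing normal service
tasks.push(17, "check on Changsu and call for help")  # emergency discovered on the way

execution_order = [tasks.pop() for _ in range(len(tasks))]
```

The emergency task is taken first, and the suspended delivery remains in the queue to be resumed afterward, which corresponds to the third option discussed in the scenario.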

In summary, by delivering cheese instead of candy to Mina and by serving Changsu before Juho, the proposed agent adheres to both the modified and original versions of Asimov’s Laws of Robotics.

Conclusions

This study shows the possibilities of interpreting the well-known Asimov’s Three Laws of Robotics in the field of home service. The proposed modified Asimov’s Laws are used to develop an agent for a home service robot; this agent is implemented using Soar. Specifically, this study deals with food delivery service at home on the basis of the user’s health and context priority. The modified Asimov’s first and second laws are used to determine whether the agent should follow the order given to it or recommend alternative food depending on the user’s health. To deal with the key issue of harmful food, the relations between common diseases and the lists of good and bad foods were provided; this information is written in the Soar semantic memory. In addition, for a human level of home care service, priority values for each member corresponding to normal and emergency situations were considered so that the robot can respond effectively to multiple orders and/or situations. The present approach is an initial implementation of a home service robot or social robot that understands the norms and values required for specific application fields, as described in two health-care scenarios. In both scenarios, the agent’s decisions adhered to the modified and original versions of Asimov’s Laws.

Discussion and future study

Our future study will address the limitations of our proposed application. First, the agent currently interacts with a user using only a text-based user interface; thus, the robot requires a human–computer interface for interaction. Since this may cause inconvenience in real-life situations, image and speech recognition software packages will be incorporated into the present agent and integrated into the home service robot in the near future to raise the serviceability of the robot and thereby improve user satisfaction.

Second, sometimes the original Asimov’s laws are not directly applicable in the field of home service; thus, these laws have to be modified, as presented herein. Since utilitarianism 2 and deontology 3 have their own merits that can be incorporated into the design of a service robot agent, an improved or hybridized ethical agent will be developed as the next version.

Third, though we mentioned Wynsberghe’s discussion for reference, 19 the ethical dimension of social robotics is much wider and deeper. We plan to augment the present ethical agent with privacy, information security, safety, and accountability capabilities. Even with these limitations, this study takes an important step in assessing Asimov’s Laws of Robotics more practically and efficiently by applying them to a cognitive agent in a daily service robot for a home.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Ministry of Trade, Industry, and Energy (MOTIE, South Korea) under Industrial Technology Innovation Program (no. 10062368).