Abstract

To address the localization problem for a mobile robot in an indoor environment, this article proposes an improved visual localization system based on artificial markers. First, we present a novel artificial marker that can be detected and identified easily and correctly, and describe how it is designed, recognized, and verified. The markers are arranged on the ceiling, and the mobile robot moves around on the ground capturing images with an up-facing monocular camera. The markers’ information, including position and direction, is obtained by processing the images. An uncertainty model of the camera is then put forward based on the camera’s distortion, and the uncertainty of the obtained marker information is analyzed through this model. Finally, with the obtained information, the marker map in the global image coordinate system is established and optimized through a graph-based algorithm in which the edges are updated with the Bayes estimation method to reduce their uncertainty. Numerous experiments have been conducted to verify the effectiveness of the localization system. The proposed method has also been applied to an industrial forklift to test its robustness in a factory environment.

Introduction

In the field of mobile robotics, self-localization is one of the most challenging issues, particularly in dynamic environments. The localization problem has been widely investigated in autonomous robotics, and a wide range of solutions exists. 1 –4 During the last few decades, many techniques and methods have been developed with varying levels of acceptance, such as dead reckoning based on an odometer, 5 algorithms based on wireless sensor networks, 6 inertial sensor-based algorithms, 7 and map-matching algorithms based on vision. 8

Among all available sensors, cameras have been increasingly applied to vision-based mobile robot localization because of their low price, ease of use, and ability to offer abundant information. Generally, information about the environment can be extracted from the captured image and used to determine the position and orientation of a mobile robot through a visual positioning method. Recently, the vision-based simultaneous localization and mapping (VSLAM) approach has drawn a lot of attention in the mobile robotics field, because it gives an autonomous robot the ability to explore an unknown environment and localize itself while building a reliable map. Several visual simultaneous localization and mapping (SLAM) systems have been developed and widely used in applications, 9 including MonoSLAM, 10 PTAM, 11 FrameSLAM, 12 and systems based on the G2O framework. 13 Most of them use features of the environment 14 –16 and are usually carried out using natural or artificial markers. It is difficult to detect and compare natural features in a noisy environment. In contrast, the detection of artificial markers is much easier, as they are designed with a predetermined color, size, and shape. Therefore, artificial markers are commonly used for navigation and map building in indoor environments. 17,18 They can increase the accuracy of localization for vision-based mobile robot guidance systems.

Artificial marker-based localization systems have been developed in recent years. 19 Wen et al. 20 designed a ceiling- or wall-attached pentagonal landmark that is identified by a binary BCH code. 21 Their system located a mobile robot based on the homography between the landmark plane and the image plane, and the average speed of the robot could reach 0.18 m/s. Wu et al. 22 designed a new artificial landmark based on QR code technology and proposed a localization algorithm based on the vanishing line principle. Okuyama et al. 23 achieved positioning of a mobile robot through the VSLAM technique based on newly developed artificial markers. Aleksandrovich et al. 24 developed a machine vision system that identified artificial landmarks in images from a video camera with a pan–tilt mechanism and could eliminate the course deviation calculated from the landmark information. To reduce the interference of illumination variation, visual localization approaches based on infrared markers have been attracting more attention. 25,26 Ren et al. 27 realized the localization of the robot using light-emitting diodes as artificial landmarks. Sultan et al. 28 designed an artificial marker based on an infrared dot matrix and presented the corresponding algorithm to identify the marker. Zhou et al. 29 provided an embedded visual localization system composed of an infrared-reflective artificial marker pasted on the ceiling and an image acquisition and processing unit installed on the robot. This method calculated the position of the robot through the Perspective-3-Point algorithm.

In this article, we propose a novel localization system based on newly designed markers. The marker, which is attached to the ceiling, can be recognized and identified correctly at high speed. There is no strict requirement for the arrangement of the markers. When the robot moves around on the ground with an up-facing camera, the system learns the distribution of the markers and builds a corresponding marker map in the image coordinate system. The position of the robot can then be calculated through the map. In addition, the G2O framework is used to further optimize the accuracy of the marker map. The camera’s uncertainty model is built based on the camera distortion and is used to update the edges in the G2O framework. The new system has been evaluated experimentally, and the results show that it can meet industrial requirements for localization accuracy and frequency.

The article is organized as follows. In the next section, we present the new artificial marker and introduce how it is recognized and verified. The uncertainty model of the camera is defined in the third section. The localization system is described in the section “Multi-marker-based visual localization system”. In the section “Experiments and results”, experiments are conducted and the results are presented.

Design and recognition of artificial markers

For convenient localization, an artificial marker should have a unique identity (ID) and directionality. The markers must also be easy to detect and identify reliably. To improve the real-time performance of the localization system, the markers’ information should be extracted and recognized quickly and correctly. This section introduces a new kind of artificial marker that meets all these requirements. It also presents a robust marker recognition algorithm that takes external disturbances into account, such as varying illumination conditions and image blurring caused by the robot’s movement.

Design of artificial markers

In our design, an artificial marker is a planar square pattern consisting of a set of small squares that can be encoded. The outer part isolates the marker from the background: if the background is a dark color such as black, the outer part should be a light color such as white, and vice versa. In this article, we use two strongly contrasting colors to encode the ID of the marker. For example, in the factory experiments the ceiling is dark gray, so the outer part is white. For the inner part, a white square represents 0, while a black square represents 1. Unless otherwise stated, we take this marker as the default in the following sections.

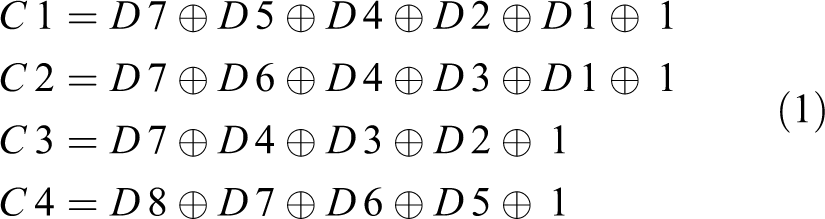

The four parts V1–V4 indicate the direction of the marker: V1 is colored black and the rest white. The marker is encoded with a 12-bit Hamming code, in which C1–C4 are parity bits and D1–D8 are data bits. With eight data bits, the total number of useful IDs is 2⁸ − 1 = 255, which could cover an area of 140,000 m². We present the ID number in binary, where D1 denotes the lowest bit and D8 the highest bit. As shown in Table 1, the parity bits are associated with the data bits. From the table, we can see that each of the parity bits C1–C4 is associated only with itself and represents one binary bit position: C1–C4 indicate the first, second, third, and fourth bit of the binary bit position from the left, respectively. The data bits D1–D8 are associated with the parity bits according to their bit positions in binary form. We then set each parity bit to the value that makes it and its associated data bits satisfy the parity check rule, for example, equation (2). For instance, the first bit from the left of the binary bit position of D1, D3, D4, D5, and D7 is one, so they are associated with C1. When we use the odd parity check, the parity check rule is set as

The relationship between data bits and parity bits.

× means that they are associated.

where the operator ⊕ denotes the exclusive-OR (nonequivalence) operation. If any one of D1, D3, D4, D5, and D7 is wrongly detected, the rule cannot be met.
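The odd parity rule for C1 described above can be illustrated with a short sketch. It assumes that the rule is C1 ⊕ D1 ⊕ D3 ⊕ D4 ⊕ D5 ⊕ D7 = 1, which is our reading of the text; the function names are hypothetical, and the groups for C2–C4 are omitted because they depend on Table 1.

```python
from functools import reduce
from operator import xor

# Data bits tied to C1 according to the text (1-based indices into D1..D8).
# The groups for C2-C4 come from Table 1 and are deliberately not guessed here.
C1_GROUP = (1, 3, 4, 5, 7)


def id_to_data_bits(marker_id):
    """Return [D1, ..., D8], with D1 as the lowest bit of the ID."""
    if not 0 <= marker_id <= 255:
        raise ValueError("marker ID must fit in eight bits")
    return [(marker_id >> i) & 1 for i in range(8)]


def group_xor(data_bits, group):
    """XOR of the data bits listed in `group` (1-based indices)."""
    return reduce(xor, (data_bits[i - 1] for i in group), 0)


def odd_parity_bit(data_bits, group):
    """Choose the parity bit so that parity XOR group bits equals 1."""
    return 1 ^ group_xor(data_bits, group)


def odd_parity_ok(parity_bit, data_bits, group):
    """Check the assumed odd parity rule for one parity bit."""
    return (parity_bit ^ group_xor(data_bits, group)) == 1


bits = id_to_data_bits(1)            # D1 = 1, D2..D8 = 0
c1 = odd_parity_bit(bits, C1_GROUP)  # value that satisfies the rule
corrupted = bits[:]
corrupted[2] ^= 1                    # flip D3 to simulate a detection error
print(c1, odd_parity_ok(c1, bits, C1_GROUP), odd_parity_ok(c1, corrupted, C1_GROUP))
```

A single flipped bit inside the C1 group changes the XOR of the group, so the check fails, which is the behavior the rule is meant to provide.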

In this way, the values of C1–C4 can be calculated by the following formulas

When the marker is encoded in the way described above, we obtain the 12 binary bits associated with the inner part. We then color a square black when the corresponding bit is 1, while the others are kept white. For example, the ID number of Figure 1(c) is 1. The outer contour of the outer part has four vertices, P1–P4. P1, which is nearest to the V1 part, is defined as the origin point. The direction of the X-axis is the same as that of the vector

Design of the artificial marker.

The size of the marker is determined by the height of the ceiling: the higher the ceiling, the larger the marker should be. There is no strict requirement on the size, but the marker’s edge should preferably be more than 80 pixels long in the image.

Extraction and recognition of the marker

In this process, we obtain the marker’s ID, origin point, and direction information in the image. The markers are identified in three main steps: (1) marker detection, which provides the marker candidates; (2) marker verification, which identifies the true markers among the candidates; and (3) information acquisition, which yields the ID number, position, and direction of each marker.

Marker detection: In this step, the image is analyzed to find square shapes that are candidates to be markers. First, we convert the captured color image into a grayscale image and turn it into a black-and-white image through adaptive thresholding. For each pixel in the image, the threshold value is set to the weighted sum of the neighborhood values, where the weights form a Gaussian window. If the pixel value is below the threshold value, it is set to the background value; otherwise, it is set to the foreground value. Then, the contours are extracted from the thresholded image, and those that are not convex or do not approximate a square are discarded. Some extra filtering is also applied to remove contours that are too small, too big, or too close to each other. Finally, the four vertices of each remaining contour are obtained for use in the next step.

Marker verification: After marker detection, it is necessary to judge whether the candidates are real markers by analyzing their coding parts. This step involves extracting the data bits of each marker. First, a perspective transformation is applied to obtain the marker in canonical form. The canonical image is binarized with Otsu’s method and divided into parts according to the marker size, as shown in Figure 2(e). The proportion of black or white pixels in each part is calculated to determine whether it is a white or a black bit. Then, the 16 bits corresponding to the 16 parts of the marker are extracted from the image. In addition, the 12 bits of inner data can be used to determine whether the ID is valid. If the candidate is a true marker, the conditions below should be satisfied

When the equations cannot be satisfied, the marker is invalid or has been wrongly recognized.

Information acquisition: After marker verification, we can calculate the marker ID according to the weight matrix of the marker

where

The steps of the marker detector.
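As an illustration of the bit read-out in the verification step, the following sketch splits an already binarized canonical image into cells and decides each bit by the proportion of black pixels. It assumes a 4 × 4 grid (16 parts) and a 0.5 decision ratio; the function names and the tiny test image are hypothetical. The ID is then formed from D1 (lowest bit) to D8 (highest bit), as stated in the marker design.

```python
def read_cell_bits(binary_img, cells_per_side=4, black_ratio=0.5):
    """Split a square binary image (0 = black, 1 = white) into a grid and
    return one bit per cell, row by row: 1 if the cell is mostly black."""
    size = len(binary_img)
    if size % cells_per_side != 0 or any(len(row) != size for row in binary_img):
        raise ValueError("image must be square and divisible into equal cells")
    step = size // cells_per_side
    bits = []
    for cy in range(cells_per_side):
        for cx in range(cells_per_side):
            black = sum(
                1
                for y in range(cy * step, (cy + 1) * step)
                for x in range(cx * step, (cx + 1) * step)
                if binary_img[y][x] == 0
            )
            bits.append(1 if black / (step * step) > black_ratio else 0)
    return bits


def data_bits_to_id(data_bits):
    """Combine [D1, ..., D8] into an ID, with D1 as the lowest bit."""
    return sum(bit << i for i, bit in enumerate(data_bits))


# 8 x 8 white image with a black top-left cell and one noisy white pixel in it.
img = [[1] * 8 for _ in range(8)]
for y in range(2):
    for x in range(2):
        img[y][x] = 0
img[1][1] = 1  # noise: the cell is still 75% black

cell_bits = read_cell_bits(img)
print(cell_bits[0], sum(cell_bits), data_bits_to_id([1, 0, 0, 0, 0, 0, 0, 0]))
```

Deciding by proportion rather than by a single sample pixel keeps the read-out tolerant of a few misclassified pixels per cell. Which of the 16 cells hold V1–V4, C1–C4, and D1–D8 depends on the marker layout in Figure 1 and is not assumed here.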

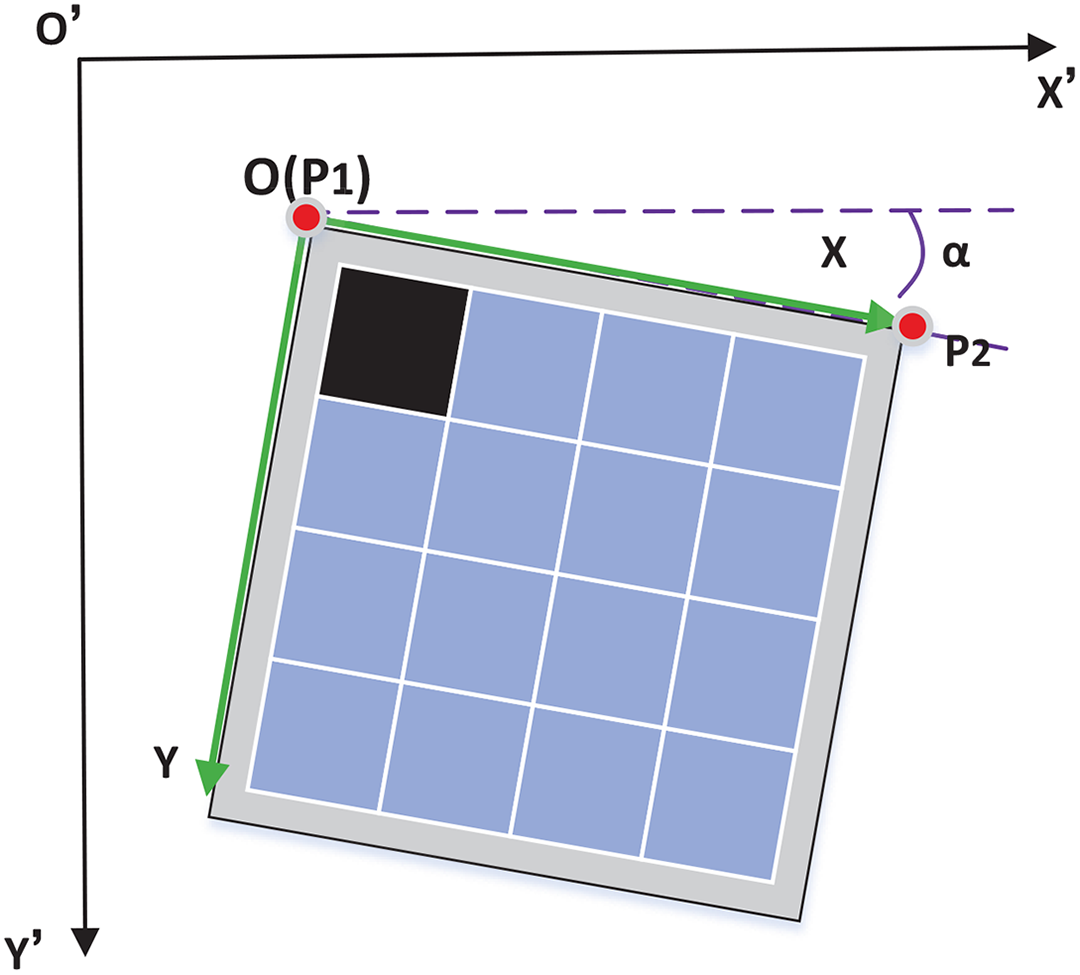

As shown in Figure 3, the marker coordinate system XOY is established in the current image plane X′O′Y′. The direction of vector

The relationship between the marker’s coordinate and the image frame.

The uncertainty model of the camera

Because of the distortion of the camera, the information obtained directly from the captured image is not accurate. Usually, the image is corrected before it is processed; however, this is complex and time-consuming. In this article, we instead represent each piece of information by the obtained data and a corresponding variance. In this section, we propose an uncertainty model of the camera according to its distortion.

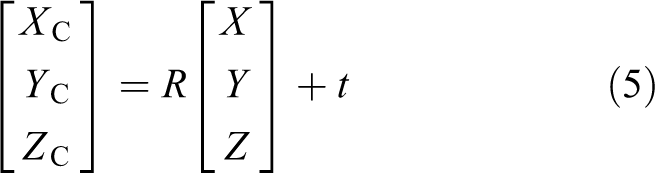

As we know, the relationship between the world coordinate system and the camera coordinate system can be described as

where

The transform relationship between the physics camera coordinate system and the pixel coordinate system is presented as follows

where

where

Then, we can get

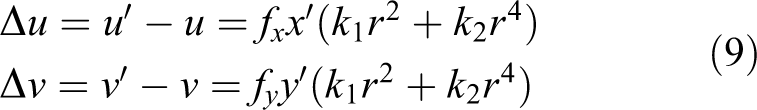

However, the camera lens introduces some distortion, including radial distortion and tangential distortion. The tangential distortion can be ignored, because it is very small relative to the radial distortion. The pinhole camera model is then extended as follows

where

The model of radial nonlinear distortion of the camera can be described as

From equation (9), we can see that the uncertainty of a pixel grows as its distance to the image center increases, and that the relationship is nonlinear.
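The nonlinear growth can be illustrated numerically. The sketch below assumes the common two-coefficient polynomial radial model, in which a normalized point (x, y) is scaled by 1 + k1·r² + k2·r⁴; this may differ in detail from equation (9), and the coefficient values are hypothetical, not the calibrated ones.

```python
import math

# Hypothetical radial distortion coefficients (not the calibrated values).
K1 = -0.2
K2 = 0.05


def radial_shift(x, y, k1, k2):
    """Distance between an ideal normalized point (x, y) and its radially
    distorted position under the factor 1 + k1*r^2 + k2*r^4."""
    r2 = x * x + y * y
    factor = k1 * r2 + k2 * r2 * r2
    return math.hypot(x * factor, y * factor)


radii = (0.0, 0.1, 0.2, 0.4)
shifts = [radial_shift(r, 0.0, K1, K2) for r in radii]
for r, s in zip(radii, shifts):
    print(f"r = {r:.1f}  shift = {s:.6f}")
```

With these assumed coefficients, doubling the radius from 0.1 to 0.2 increases the shift by roughly a factor of eight, which is why a variance that depends on the distance to the image center is a reasonable way to express the uncertainty.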

For a Gaussian distribution

For a vector, such as

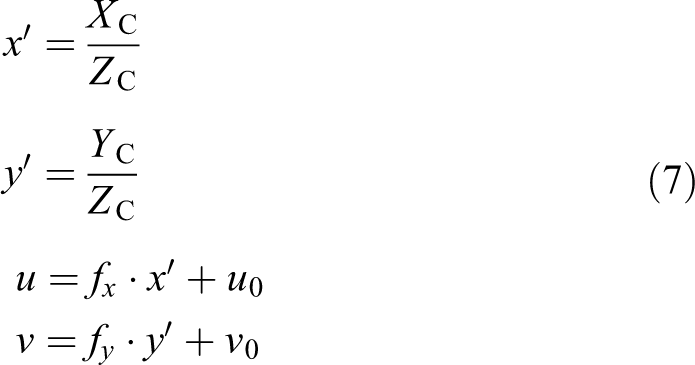

We define θ as an angle which indicates the direction of vector

The process to estimate the variance of θ.

where

Then, according to the 3σ rule, the variance of θ is as follows

The difference of two angles θ1 and θ2 whose variance are

And the variance of Δθ can be calculated as

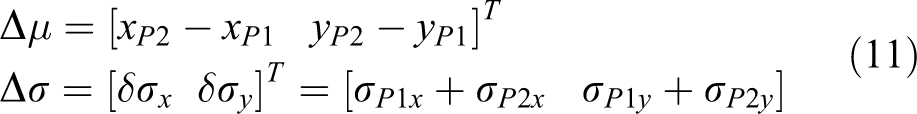

In this article, Δσ and

Multi-marker-based visual localization system

To realize indoor localization, the newly designed markers are fixed to the ceiling, while the mobile robot moves around with an up-facing camera mounted on top of it for image capture. In this article, we regard the camera’s location as the robot’s location and use SLAM technology for localization. Once the markers are arranged, their locations normally remain unchanged. On that basis, we put forward a localization system that simplifies the traditional SLAM method. As shown in Figure 5, the system only builds the marker map in the global image coordinate system, and a graph-based algorithm within the G2O framework is used to optimize the marker map. Here, the markers’ positions are the nodes of the graph, and the relations between different markers are its edges. In addition, to increase the positioning accuracy, an uncertainty model is proposed to take the influence of the camera’s distortion into account.

The flow chart of the localization system.

Parameter determination and coordinates establishment

For the indoor mobile robot working in planar floor, there are only the motions moving along

where

Above all, we define

The establishment of the coordinate systems.

After the coordinates are established, the first detected marker is added to the marker map. When a new marker is detected, there should be another marker, which is already in the marker map, in the same image. In this way, the initial position of the newly detected marker can be calculated and then it is added to the marker map.

The coefficient k can be calculated when the first marker is detected in the obtained image. As the actual size of the marker is known, we can easily get its area Sr in square millimeters. When the image is processed, the marker’s area SI in pixels can be obtained. Then, k can be calculated as follows
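A minimal sketch of this computation follows. It assumes that k is a length scale in millimeters per pixel, so that k = sqrt(Sr / SI); this is our assumption about the form of the formula. The marker side length, the vertex coordinates, and the function names are hypothetical.

```python
import math


def polygon_area(vertices):
    """Area of a simple polygon from its ordered vertices (shoelace formula)."""
    total = 0.0
    n = len(vertices)
    for i in range(n):
        x0, y0 = vertices[i]
        x1, y1 = vertices[(i + 1) % n]
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0


def scale_mm_per_pixel(area_mm2, area_px):
    """Assumed form of k: square root of the ratio of real to image area."""
    if area_mm2 <= 0 or area_px <= 0:
        raise ValueError("areas must be positive")
    return math.sqrt(area_mm2 / area_px)


# Hypothetical marker: 200 mm side, seen as a 100-pixel square in the image.
s_r = 200.0 * 200.0
s_i = polygon_area([(10, 10), (110, 10), (110, 110), (10, 110)])
k = scale_mm_per_pixel(s_r, s_i)
print(s_i, k)
```

Using the area rather than a single edge length averages over all four sides of the detected contour, which makes the estimate less sensitive to an error in any one vertex.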

Graph-based optimization

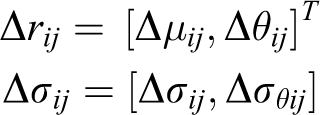

To improve the quality of the marker map, a graph-based optimization algorithm is proposed. Once the global image coordinate system is established, the marker map can be built in it. The positions of the markers are the nodes of the graph, while the relative locations between each pair of markers form the edges, or constraints, of the graph. Usually, there are several edges between each pair of markers, because the markers can be seen from different points of view. However, the computation time of the algorithm is proportional to the number of edges, which makes it too time-consuming for real-time application. On the other hand, if we randomly keep a single edge, it may carry a large error and produce an inaccurate marker map. Here, we propose a new method that keeps one edge between each pair of nodes while taking all the edge information into consideration, calculating the edge with the Bayes parameter estimation method.

As shown in Figure 7, the position and direction of

The building of the marker map through the graph-based method.

where

When we acquire another piece of information on the same edge presented by the measured data and uncertainty

In this method, we update the edges when new data are acquired, instead of adding all the edges into the formulation. This will lead to reduction of the processing time and improved accuracy of the marker map.
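The edge update can be sketched as follows for one scalar component of an edge. The sketch assumes independent Gaussian measurements and uses the standard Bayesian fusion of two Gaussian estimates, which may differ in notation from the formulas in this article; the function name and the numerical values are hypothetical.

```python
def fuse_gaussian(mean_a, var_a, mean_b, var_b):
    """Fuse two independent Gaussian estimates of the same quantity.
    The estimate with the smaller variance receives the larger weight."""
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    total = var_a + var_b
    mean = (var_b * mean_a + var_a * mean_b) / total
    var = var_a * var_b / total
    return mean, var


# Current edge component: 100.0 pixels with variance 4.0.
# New observation of the same edge: 104.0 pixels with variance 1.0.
mean, var = fuse_gaussian(100.0, 4.0, 104.0, 1.0)
print(mean, var)
```

The fused variance is always smaller than either input variance, so each new observation of an edge reduces its uncertainty while the graph still holds a single edge between the two nodes.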

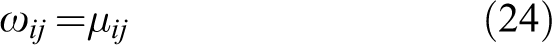

With the updated edges, the graph-based optimization can be carried out in the next step. A pose graph is a convenient and intuitive way to represent the relative positions of different markers in the global image coordinate system. In general, graph optimization is a nonlinear optimization problem, where each node represents a parameter (a marker’s pose) to be optimized, and each edge represents a constraint. Let

The error introduced by each constraint, weighted by its information matrix, can be calculated by

Therefore, assuming all of the constraints to be independent, the overall error is

Ultimately, each marker pose can be estimated by minimizing the cost function, and the solution to the graph-SLAM problem is to find a state

In our system, each marker’s pose and observation constraint is defined as

and

where ti denotes the marker’s position

The error function could be expressed by

where Ri is a 2 × 2 rotation matrix. It can be written as

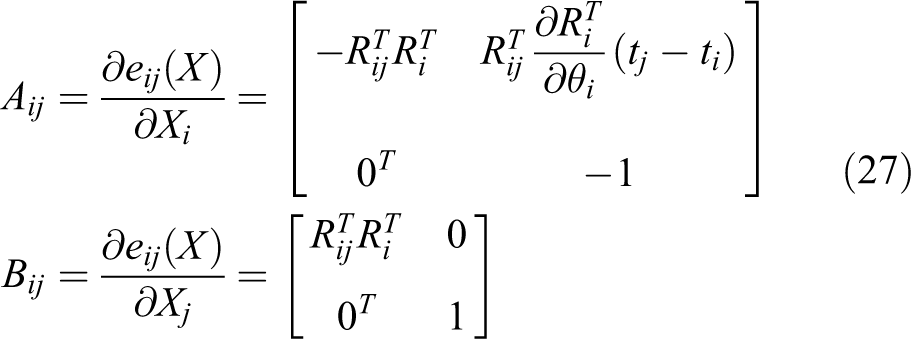

The corresponding error function Jacobian matrix is

Iterative approaches such as Gauss–Newton or Levenberg–Marquardt can be used to compute the optimal state estimate. G2O is an open-source C++ framework for optimizing graph-based nonlinear error functions, such as equation (25) in our method. It is usually used to find the configuration of parameters or state variables that maximally explains a set of measurements affected by Gaussian noise. G2O has been designed to be easily extensible to a wide range of problems, and a new problem can typically be specified within a few lines of code. The marker map is built as a graph, and it can be optimized using the G2O framework. After an optimization iteration, the positions of the markers in the map are modified. Whenever a new marker is added or an edge is updated, the graph is optimized again.
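To make the role of the edge error concrete, the following sketch evaluates one constraint between two marker poses (x, y, θ). It assumes the usual planar pose-graph form, in which the translation error is Ri^T (tj − ti) minus the measured offset and the angle error is θj − θi minus the measured angle; this is an assumption about the structure of the error function, not a reproduction of it. It does not use the G2O API, and all names and values are hypothetical.

```python
import math


def wrap_angle(angle):
    """Wrap an angle to the interval (-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def edge_error(pose_i, pose_j, measurement):
    """Error of one edge: predicted relative pose of j in the frame of i
    minus the measured relative pose (dx, dy, dtheta)."""
    xi, yi, thi = pose_i
    xj, yj, thj = pose_j
    mx, my, mth = measurement
    dx, dy = xj - xi, yj - yi
    c, s = math.cos(thi), math.sin(thi)
    # Apply R_i^T to (t_j - t_i), where R_i = [[c, -s], [s, c]].
    px = c * dx + s * dy
    py = -s * dx + c * dy
    return (px - mx, py - my, wrap_angle(thj - thi - mth))


def weighted_error(error, information):
    """Quadratic form e^T * Omega * e for a 3 x 3 information matrix."""
    return sum(
        error[r] * information[r][c] * error[c]
        for r in range(3)
        for c in range(3)
    )


pose_i = (0.0, 0.0, math.pi / 2)
pose_j = (0.0, 2.0, math.pi / 2)
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

exact = edge_error(pose_i, pose_j, (2.0, 0.0, 0.0))
cost = weighted_error(edge_error(pose_i, pose_j, (1.9, 0.0, 0.0)), identity)
print(exact, cost)
```

An optimizer such as Gauss–Newton adjusts the poses to minimize the sum of these weighted errors over all edges; a larger information matrix (smaller variance) makes the corresponding edge pull harder on the solution.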

Localization

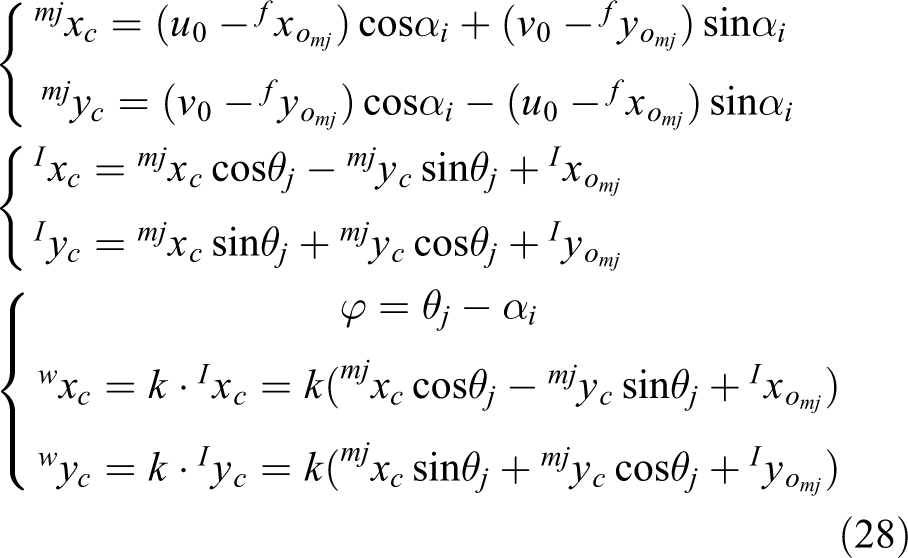

During the period of marker map establishment in global image coordinates, the robot can calculate its position at the same time. Figure 8 shows a sampled moment when the jth marker is viewed and the positioning process is shown below

Part of the marker map and its relationship with the current image frame at a sampled moment.

where

Experiments and results

To verify the accuracy and robustness of the proposed system, the experiments have been conducted in both laboratory and factory environments, as shown in Figure 9. They are indoor environments where ceiling and floor are parallel to each other. A set of newly designed markers were attached to the ceiling.

The localization system in laboratory (right) and factory (left) environment.

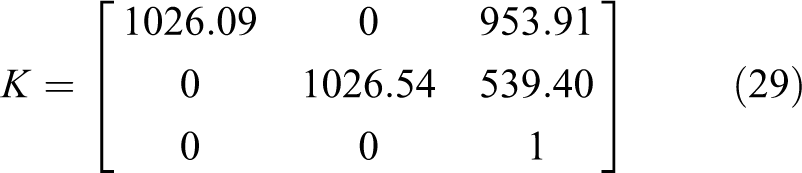

A general industrial camera (with a resolution of 1920 × 1080 pixels) was mounted on top of the mobile robot, facing upward to capture the markers attached to the ceiling. An on-board laptop computer, with an Intel Core 2 Duo 2.0 GHz CPU and 2 GB of RAM, was used to process the captured images, estimate the robot’s position and orientation, and thus control the movement of the robot.

The internal matrix of the camera needs to be calibrated before experiments. The camera calibration algorithm 30 is applied with MATLAB, using 20 chessboard images taken from different angles as a calibration template. After calibration, the internal matrix of the camera is

Artificial marker identification experiment

The reliability of the marker recognition has been tested by analyzing the marker detection rate and the marker identification rate. The detection rate is the ratio of detected markers to the total number of captured markers. The identification rate is the ratio of correctly identified markers to all detected markers. In this article, 856 images captured in both the laboratory and the factory environments were tested. These images contain 1216 markers, of which 1188 were detected (a detection rate of 97.7%), and all detected markers were identified successfully. The results are listed in Table 2. Some images were captured in challenging conditions, and the markers in them could hardly be detected, so the missed-detection rate reaches 2.3%. The marker identification rate is 100%, which means that the information of the finally recognized markers is highly reliable.

The reliability of marker recognition.

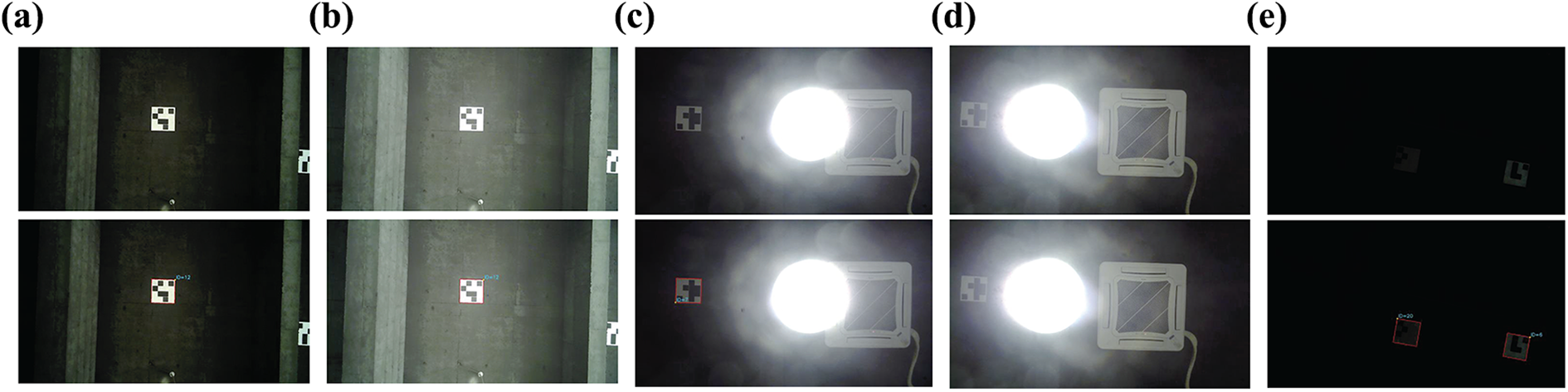

Furthermore, we analyzed the robustness of the recognition algorithm by processing images captured under different illumination conditions. The results are shown in Figure 10. Figure 10(a) shows the normal condition. Figure 10(b) shows the recognition results under the influence of sunlight shining in through the window. Because our system is used in indoor environments, the influence of sunlight is weak, so the markers can be recognized easily and accurately in this situation. The influence of lamp light was also tested in the experiment. We turned on the lamp hanging from the ceiling and moved the robot around to analyze its effect. Lamp light has a great impact on the recognition process, but our method can deal with most of the challenging situations. Figure 10(c) shows one sampled image, in which the marker is recognized correctly. However, when the robot got too close to the lamp, the marker could not be recognized, as shown in Figure 10(d). This factor should therefore be considered when planning the trajectory of the robot. We also processed images captured in a dark environment, and our recognition algorithm works well in usual situations, as shown in Figure 10(e). In practice, the lamp will be turned on when it is too dark, so there is no strict requirement for dark environments. From the experiments, we can conclude that the proposed recognition algorithm works well in most challenging illumination conditions.

Marker identification in different illumination conditions. (a) normal, (b) sunlight, (c) lamp light, (d) lamp light, and (e) dark.

In addition, we have tested the recognition algorithm on blurred images. Image blurring can have many causes, of which the most important is the movement of the mobile robot. When the robot moves at a higher speed, its vibration also becomes more pronounced, and both the speed and the vibration blur the image. As shown in Figure 11, the faster the robot moves, the more blurred the images are. However, our markers can be recognized even when the velocity is up to 2.5 m/s, which meets the application requirements. These evaluation results show the robustness and accuracy of our marker recognition algorithm.

The marker identification in blurred images of varying degrees. We processed the captured image when the velocity of the mobile robot was (a) 0 m/s, (b) 1 m/s, (c) 2 m/s, and (d) 2.5 m/s.

Marker recognition frequency and the mobile robot’s speed can affect the positioning accuracy. The positioning error caused by the two parameters can be described as

where

In industrial use, the maximum speed of the robot may exceed 1.5 m/s, and the positioning error at this speed should be less than 20 cm. So, Tm should satisfy the following condition

At the end of the trajectory, the localization error should be less than 10 cm, and the speed in this situation can be 0.5 m/s. So, Tm should satisfy the following condition

As a result, Tm should be less than 0.13 s. In the experiment, we recorded the marker recognition time of 530 images. The average processing time is 0.016 s per frame, which is well within the requirement.
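The bound on Tm can be checked with a short worked calculation. This is a sketch that assumes the positioning error is the distance travelled during one recognition period; the symbols e and v (error and speed) are introduced here for illustration only.

$$
e = v\,T_m \quad\Longrightarrow\quad T_m < \frac{e_{\max}}{v}
$$

$$
T_m < \frac{0.20\ \text{m}}{1.5\ \text{m/s}} \approx 0.133\ \text{s},
\qquad
T_m < \frac{0.10\ \text{m}}{0.5\ \text{m/s}} = 0.20\ \text{s}
$$

The tighter of the two bounds gives Tm < 0.13 s. Under the same assumption, the measured average of 0.016 s per frame corresponds to about 1.5 × 0.016 = 0.024 m (2.4 cm) of travel between recognitions at 1.5 m/s.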

Mapping and positioning experiment

A further experiment was conducted to test the accuracy of the localization system. In the experiment, a robot equipped with an up-facing camera moved around on the floor and traversed the working area. After that, the marker map was built using different methods. It was convenient to measure the real position of the robot in this environment: forty-three actual positions were measured with a ruler graduated in millimeters. The results of the experiment are shown in Figure 12, in which (a) shows the marker map and (b) shows the corresponding localization results at the 43 sampled positions. The green graph indicates the actual values.

The experiment results. (a) The marker map in global image coordinate established with different methods. (b) The corresponding positioning results. (c) The time consumption of different methods.

In Figure 12, the black ones are the localization results of the visual odometer method. This method determined the position and orientation of the robot by analyzing the associated two consecutive images. However, it did not consider the loop closure of the map. As a result, this led to a cumulative error in the localization process.

The other two methods took loop closure detection into account to reduce the accumulated error. The blue drawing shows the performance of the traditional G2O method, whose edges included all the obtained relative information between any two nodes. The red one presents the results of our proposed method, an improved G2O method that uses the Bayes estimation method to reduce the uncertainty of an edge as more information is gained. From the figures, we can see that both graph-based methods were more accurate than the visual odometer method. As shown in Table 3, the average positioning errors of the graph-based methods are 23.5 mm and 21.4 mm, which are much less than the 69.8 mm of the visual odometer method. The graph-based methods also performed better in orientation.

Localization accuracy.

As for the two graph-based methods, our proposed method performs better than the traditional G2O method in both accuracy and time efficiency. In our method, with the uncertainty model, information obtained with high confidence contributes more to the edge. In the traditional method, however, every edge has the same weight, including those with low confidence. So, our method has a more accurate localization performance, as shown in Figure 12(b) and Table 3.

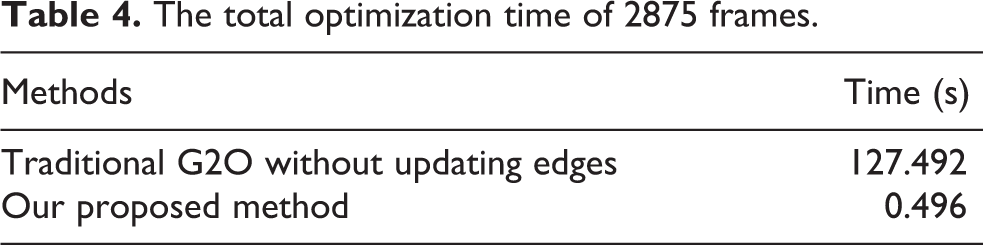

For more accurate localization, the traditional G2O method considers all the obtained information by adding every edge to the graph. That is to say, there is more than one edge between each pair of nodes. The more edges there are in the graph, the more time the optimization takes, so the optimization time of the traditional method keeps increasing while the map is being built. In our method, there is only one edge between any two nodes, and the edges take all the acquired information into account. Once all the nodes have been added to the graph, the number of edges is fixed and the optimization time of the proposed method no longer increases. In the experiment, we captured 2875 frames to compare the time consumption of the traditional G2O method and our proposed method. The results are shown in Figure 12(c) and Table 4. When the optimization time of the traditional G2O method reaches 0.1 s per frame, the optimization time of our method is still low, as shown in Figure 12(c). The total optimization time of our method over all 2875 frames is 0.496 s, far less than the 127.492 s of the traditional method, as shown in Table 4. So, we can conclude that our proposed method greatly improves the real-time performance of the robot by reducing the optimization time.

The total optimization time of 2875 frames.

From the experiments, we can see that our proposed system not only improves the localization accuracy but also has an improved time-efficiency.

Robustness test in a factory

In this part, our proposed system was installed on a forklift, where it provided the localization information. The forklift was required to pick up a goods shelf at one specified location and carry it to another. The location error needed to be less than 100 mm and the orientation error less than 2°.

In the experiment, we first used our proposed method to build the marker map of the factory. Then, a human driver drove the forklift to perform the transportation work, in which the starting and ending points were accurately measured. During this teaching period, the route was recorded by our localization system as the planned trajectory. The forklift then drove itself along the planned route, and the self-driven trajectory was recorded. The motion curve is shown in Figure 13. The black line indicates the real route edges in the factory. The red line represents the planned route, and the green line represents the self-driven trajectory. As shown in Figure 13, there is little variation between the planned trajectory and the self-driven trajectory. From observation of the forklift while it was working, the self-driven trajectory stayed within a reasonable range. From these observations and the experimental results, it can be concluded that our localization system and the navigation system based on it work well and can meet the requirements of real applications. The maximum speed of the forklift could reach 2.5 m/s, which is sufficient for normal industrial use.

The motion curve of the forklift.

Compared with the rest of the trajectory during transportation, the localization at the specified locations, such as the starting and ending points, is required to be more accurate and more robust. So the transportation was carried out 50 times (Figure 14) to test the repeated positioning accuracy at the two specified locations. The results are shown in Table 5. During the experiments, our system worked well. From the table, we can see that the maximum positioning error is 79.3 mm and the maximum orientation error is 1.36°. So, our system satisfies the requirements of the industrial environment.

The process for a forklift to carry goods shelf to specified place. (a) The location where to pick up goods shelf. (b) to (e) The process of carrying goods shelf. (f) The place where the goods shelf should be put on.

Localization accuracy in factory.

Conclusion

This article develops an improved artificial marker-based localization system for indoor operation of mobile robots. First, we designed a novel kind of marker that can be detected and identified rapidly and correctly. The markers are arranged on the ceiling, and a robot with an up-facing camera moves around on the floor capturing images. The captured images are processed to get the markers’ information. Second, the uncertainty of the camera has been modeled according to the distortion of the camera, and the uncertainty of the obtained marker information is analyzed through this model. Finally, a graph-based optimization algorithm is used to perform loop closure. In this process, the uncertainty of the edges is updated through the Bayes estimation method as more edge information is obtained. This process reduces the accumulated errors, thus improving the localization performance. The experimental results have verified that the newly designed marker is robust in different environments; in our tests, every detected marker was identified correctly, so the localization system is unlikely to make gross errors. Additionally, the accuracy of the system was demonstrated in a laboratory environment, and its robustness was tested in an industrial application.

In future work, we will analyze other factors that may affect the mapping and positioning, such as image blurring, illumination of the environment, and uneven ground. Other sensors, such as odometers, can also be used to improve accuracy.

Footnotes

Acknowledgements

The authors would like to thank the National Natural Science Foundation of China and the Hangzhou Civic Significant Technological Innovation Project of China for supporting this work.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was financially supported by the National Natural Science Foundation of China (grant no: 51521064), the Hangzhou Civic Significant Technological Innovation Project of China (nos: 20131110A04, 20142013A56).