Abstract

The Internet of Robotic Things is an emerging vision that brings together pervasive sensors and objects with robotic and autonomous systems. This survey examines how the merger of robotic and Internet of Things technologies will advance the abilities of both the current Internet of Things and the current robotic systems, thus enabling the creation of new, potentially disruptive services. We discuss some of the new technological challenges created by this merger and conclude that a truly holistic view is needed but currently lacking.

Introduction

The Internet of Things (IoT) and robotics communities have so far been driven by different yet highly complementary objectives: the former has focused on supporting information services for pervasive sensing, tracking and monitoring; the latter on producing action, interaction and autonomous behaviour. For this reason, it is increasingly claimed that the creation of an Internet of Robotic Things (IoRT) combining the results from the two communities will bring strong added value. 1 –3

Early signs of the IoT-robotics convergence can be seen in distributed, heterogeneous robot control paradigms like network robot systems 4 or robot ecologies, 5 or in approaches such as ubiquitous robotics 6 –8 and cloud robotics 9 –12 that place resource-intensive features on the server side. 13,14 The term ‘Internet of Robotic Things’ itself was coined in a report by ABI Research 1 to denote a concept where sensor data from a variety of sources are fused, processed using local and distributed intelligence and used to control and manipulate objects in the physical world. In this cyber-physical perspective of the IoRT, sensor and data analytics technologies from the IoT are used to give robots a wider situational awareness that leads to better task execution. Use cases include intelligent transportation 15 and companion robots. 16 Later uses of the term IoRT in the literature adopted alternative perspectives: for example, one that focuses on robust team communication, 17 –19 and a ‘robot-aided IoT’ view where robots are just additional sensors. 20,21

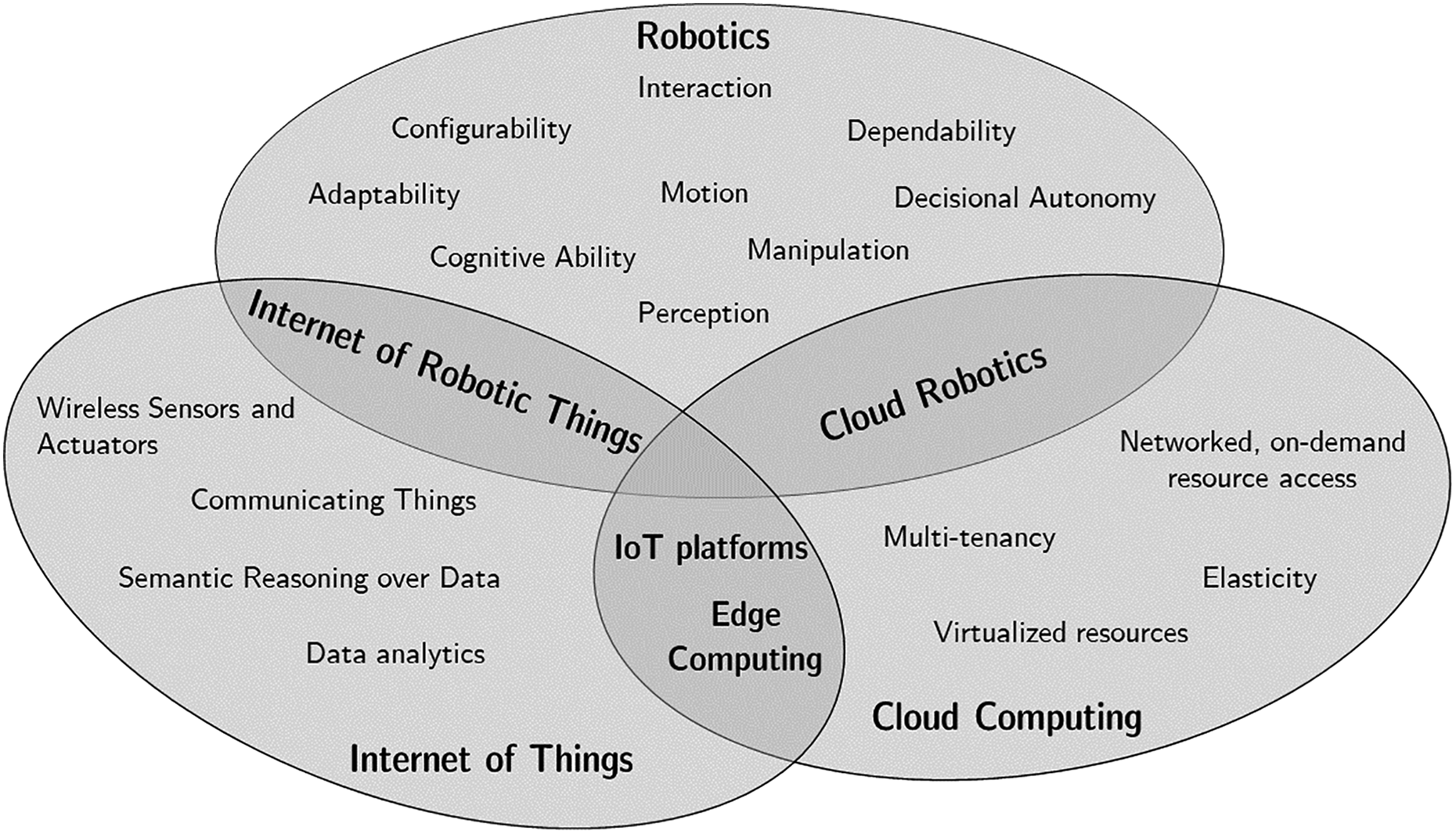

Cloud computing and the IoT are two non-robotic enablers in creating distributed robotic systems (see Figure 1). IoT technologies have three tenets 22 : (i) sensors proliferated in the environment and on our bodies; (ii) smart connected objects using machine-to-machine (M2M) communication; and (iii) data analytics and semantic technologies transforming raw sensor data. Cloud computing provides on-demand, networked access to a pool of virtualized hardware resources (processing, storage) or higher level services. Cloud infrastructure has been used by the IoT community to deploy scalable IoT platform services that govern access to (raw, processed or fused) sensor data. Processing the data streams generated by billions of IoT devices in a handful of centralized data centres raises concerns about response latency, massive ingress bandwidth needs and data privacy. Edge computing (also referred to as fog computing or cloudlets) brings on-demand and elastic computational resources to the edge of the network, closer to the producers of data. 23 The cloud paradigm was also adopted by the robotics community, under the name cloud robotics, 9 –12 for offloading resource-intensive tasks, 13,14 for the sharing of data and knowledge between robots 24 and for reconfiguration of robots following an app-store model. 25 Although there is an overlap between cloud robotics and IoRT, the former paradigm is more oriented towards providing network-accessible infrastructure for computational power and storage of data and knowledge, while the latter is more focused on M2M communication and intelligent data processing. The focus of this survey is on the latter, discussing the potential added value of the IoT-robotics crossover in terms of improved system abilities, as well as the new technological challenges posed by the crossover.

The scope of this review paper is the IoT as an enabler in distributed robotic systems. IoT: Internet of Things.

As one of the goals of this survey is to inspire researchers on the potential of introducing IoT technologies in robotic systems and vice versa, we structure our discussion along the system abilities commonly found in robotic systems, regardless of specific robot embodiment or application domains. Finding a suitable taxonomy of abilities is a delicate task. In this work, we build upon an existing community effort and adopt the taxonomy of nine system abilities, defined in the euRobotics roadmap, 26 which shapes the robotic research agenda of the European Commission. Interestingly, these abilities are closely related to the research challenges identified in the US Robotics roadmap 27 (see Figure 2).

Basic abilities

Perception ability

The sensor and data analytics technologies from the IoT can clearly give robots a wider horizon compared to local, on-board sensing, in terms of space, time and type of information. Conversely, placing sensors on-board mobile robots allows them to be positioned in a flexible and dynamic way and enables sophisticated active sensing strategies.

A key challenge of perception in an IoRT environment is that the environmental observations of the IoRT entities are spatially and temporally distributed. 28 Some techniques must be put in place to allow robots to query these distributed data. Dietrich et al. 29 propose to use local databases, one in each entity, where data are organized in a spatial hierarchy, for example, an object has a position relative to a robot, the robot is positioned in a room and so on. Other authors 30,31 propose that robots send specific observation requests to the distributed entities, for example, a region and objects of interest: this may speed up otherwise intractable sensor processing problems (see Figure 3).

Distributed cameras assist the robot in locating a charging station in an environment. The charging station was placed between a green and yellow visual marker (location A). Visual markers of the same colours were placed elsewhere in the environment to simulate distractors. Visual processing is performed on-demand on the camera nodes to inform the robot that the charging station is at location A and not at the distracting location B (Image from Chamberlain et al. 30 ) (c) 2016 IEEE.
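The spatial hierarchy discussed above (an object positioned relative to a robot, the robot relative to a room, and so on) can be illustrated with a minimal sketch. It is not the implementation of Dietrich et al.; the `Frame` class, the `resolve` function and all names and coordinates are hypothetical, and the sketch assumes pure translations in 2D, ignoring rotations between frames.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Vec = Tuple[float, float]


@dataclass
class Frame:
    """A node in the spatial hierarchy (hypothetical structure)."""
    name: str
    parent: Optional[str]  # None marks the root of the hierarchy
    offset: Vec            # translation relative to the parent, in metres


def resolve(frames: Dict[str, Frame], name: str, target: str) -> Vec:
    """Express the position of `name` in the ancestor frame `target`.

    Simplifying assumption: frames differ by translation only.
    """
    x, y = 0.0, 0.0
    current = name
    while current != target:
        frame = frames[current]
        if frame.parent is None:
            raise ValueError(f"{target!r} is not an ancestor of {name!r}")
        x += frame.offset[0]
        y += frame.offset[1]
        current = frame.parent
    return (x, y)


# Illustrative data: a cup seen by a robot that stands in a kitchen.
frames = {f.name: f for f in [
    Frame("building", None, (0.0, 0.0)),
    Frame("kitchen", "building", (10.0, 4.0)),
    Frame("robot", "kitchen", (2.0, 1.0)),
    Frame("cup", "robot", (0.5, -0.25)),
]}

cup_in_kitchen = resolve(frames, "cup", "kitchen")
```

In this toy layout, a query for the cup in the kitchen frame only needs the entries held by the robot and the room, which is the kind of locality a per-entity database would exploit.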

A key component of robots’ perception ability is getting knowledge of their own location, which includes the ability to build or update models of the environment. 32 Despite great progress in this domain, self-localization may still be challenging in crowded and/or Global Positioning System (GPS)-denied indoor environments, especially if high reliability is demanded. Simple IoT-based infrastructures such as a radio frequency identification (RFID)-enhanced floor have been used to provide reliable location information to domestic robots. 33 Other approaches use range-based techniques on signals emitted by off-board infrastructure, such as Wi-Fi access points 34 and visible light, 35 or by IoT devices using protocols such as Ultra-Wideband (UWB), 36 Zigbee 37 or Bluetooth Low Energy. 38,39
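To make the idea of range-based localization concrete, the following sketch estimates a 2D position from distances to fixed anchors (for instance, access points or UWB beacons) using a linearized least-squares formulation. It is a generic textbook technique, not the method of any of the cited works; the function name `trilaterate`, the anchor layout and the noise-free ranges are assumptions made for illustration.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def trilaterate(anchors: List[Point], ranges: List[float]) -> Point:
    """Estimate (x, y) from ranges to at least three known anchors.

    Subtracting the circle equation of the first anchor from the others
    gives a linear system, solved here through its 2x2 normal equations.
    """
    if len(anchors) != len(ranges) or len(anchors) < 3:
        raise ValueError("need at least three anchors with one range each")
    (x0, y0), r0 = anchors[0], ranges[0]
    rows, rhs = [], []
    for (xi, yi), ri in zip(anchors[1:], ranges[1:]):
        rows.append((2.0 * (xi - x0), 2.0 * (yi - y0)))
        rhs.append(r0**2 - ri**2 + xi**2 - x0**2 + yi**2 - y0**2)
    # Normal equations (A^T A) p = A^T b for the unknown p = (x, y).
    a11 = sum(a * a for a, _ in rows)
    a12 = sum(a * b for a, b in rows)
    a22 = sum(b * b for _, b in rows)
    b1 = sum(a * c for (a, _), c in zip(rows, rhs))
    b2 = sum(b * c for (_, b), c in zip(rows, rhs))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is ambiguous")
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)


# Illustrative, noise-free example with four hypothetical anchors.
anchors = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0), (4.0, 3.0)]
true_position = (1.0, 2.0)
ranges = [math.hypot(true_position[0] - ax, true_position[1] - ay)
          for ax, ay in anchors]
estimate = trilaterate(anchors, ranges)
```

With real measurements the ranges are noisy, so the least-squares estimate only approximates the true position, and more anchors generally help.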

Motion ability

The ability to move is one of the fundamental added values of robotic systems. While mechanical design is the key factor in determining the intrinsic effectiveness of robot mobility, IoT connectivity can assist mobile robots by helping them to control automatic doors and elevators, for example in assistive robotics 40 and in logistic applications. 41

IoT platform services and M2M and networking protocols can facilitate distributed robot control architectures in large-scale applications, such as last mile delivery, precision agriculture, and environmental monitoring. FIROS 42 is a recent tool to connect mobile robots to IoT services by translating Robot Operating System (ROS) 43 messages into messages grounded in Open Mobile Alliance APIs. Such an interface is suited for robots to act as a mobile sensor that publishes its observations and makes them available to any interested IoT service.
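The kind of translation described above can be pictured with a small sketch that maps a robot pose message, represented here as a plain dictionary shaped like a ROS `PoseStamped` message, onto a generic JSON entity that an IoT platform could store. This is not the FIROS API; the function `ros_pose_to_entity`, the entity layout and all field names on the IoT side are hypothetical.

```python
import json
from typing import Any, Dict


def ros_pose_to_entity(robot_id: str, msg: Dict[str, Any]) -> Dict[str, Any]:
    """Convert a pose message (as a dict) into an illustrative IoT entity.

    The output layout is an assumption made for this sketch, not a
    standardized schema.
    """
    position = msg["pose"]["position"]
    stamp = msg["header"]["stamp"]
    return {
        "id": robot_id,
        "type": "Robot",
        "position": {
            "type": "Point",
            "value": [position["x"], position["y"], position["z"]],
        },
        "frame": {"type": "Text", "value": msg["header"]["frame_id"]},
        "observedAt": {
            "type": "Number",
            "value": stamp["secs"] + stamp["nsecs"] / 1e9,
        },
    }


# Hypothetical message content, mimicking the fields of a stamped pose.
pose_msg = {
    "header": {"frame_id": "map", "stamp": {"secs": 100, "nsecs": 500000000}},
    "pose": {"position": {"x": 1.0, "y": 2.0, "z": 0.0}},
}
entity = ros_pose_to_entity("robot-1", pose_msg)
payload = json.dumps(entity)  # what would be sent to the IoT service
```

Once the robot state is available in such a platform-neutral form, any subscribed IoT service can consume it without knowing about ROS.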

In application scenarios such as search and rescue, where communication infrastructure may be absent or damaged, mobile robots may need to set up ad hoc networks and use each other as forwarding nodes to maintain communication. While the routing protocols developed for mobile ad hoc networks can be readily applied in such scenarios, lower overhead and increased energy efficiency can be obtained when such protocols explicitly take into account knowledge of the robots’ planned movements and activities. 44 Sliwa et al. 45 propose a similar approach to minimize path losses in robot swarms.

Manipulation ability

While the core motivation of the IoT is to sense the environment, that of robotics is to modify it. Robots can grasp, lift, hold and move objects via their end effectors. Once the robot has acquired the relevant features of an object, like its position and contours, the joint configurations needed to reach it can be calculated via inverse kinematics, from which the sequence of torques to be applied on the joints is then derived.
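As a minimal illustration of inverse kinematics, the sketch below computes the joint angles of a planar arm with two revolute joints so that its tip reaches a target point, and checks the result with forward kinematics. Real manipulators have more joints and need numerical solvers; the link lengths and function names here are assumptions chosen for the example.

```python
import math
from typing import Tuple


def inverse_kinematics(x: float, y: float,
                       l1: float, l2: float) -> Tuple[float, float]:
    """Return joint angles (theta1, theta2) in radians for a target (x, y).

    Of the two mirror-image solutions, the one with theta2 >= 0 is returned.
    """
    cos_t2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_t2) > 1.0 + 1e-9:
        raise ValueError("target is outside the reachable workspace")
    cos_t2 = max(-1.0, min(1.0, cos_t2))  # guard against rounding error
    theta2 = math.acos(cos_t2)
    theta1 = math.atan2(y, x) - math.atan2(l2 * math.sin(theta2),
                                           l1 + l2 * math.cos(theta2))
    return theta1, theta2


def forward_kinematics(theta1: float, theta2: float,
                       l1: float, l2: float) -> Tuple[float, float]:
    """Return the tip position reached with the given joint angles."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


# Hypothetical arm with 0.4 m and 0.3 m links reaching for an object.
angles = inverse_kinematics(0.5, 0.3, 0.4, 0.3)
reached = forward_kinematics(angles[0], angles[1], 0.4, 0.3)
```

The target position fed into such a computation is exactly the kind of object feature that either on-board sensing or the IoT environment has to supply.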

The added value of IoT is in the acquisition of the object’s features, including those that are not observable with the robot’s sensors but have an impact on the grasping procedure, such as the distribution of mass, for example, in a filled versus an empty cup. Some researchers attached RFID tags to objects that contain information about their size, shape and grasping points. 5 Deyle et al. 46 embedded RFID reader antennas in the finger of a gripper: differences in the signal strength across antennas were used to more accurately position the hand before touching the object. Longer range RFID tags were used to locate objects in a kitchen 47 or in smart factories, 48,49 as well as to locate the robots themselves. 50

Higher level abilities

Decisional autonomy

Decisional autonomy refers to the ability of the system to determine the best course of action to fulfil its tasks and missions. 26 This is mostly not considered in IoT middleware: 51 –53 applications just call an actuation API of so-called smart objects that hide the internal complexity. 28

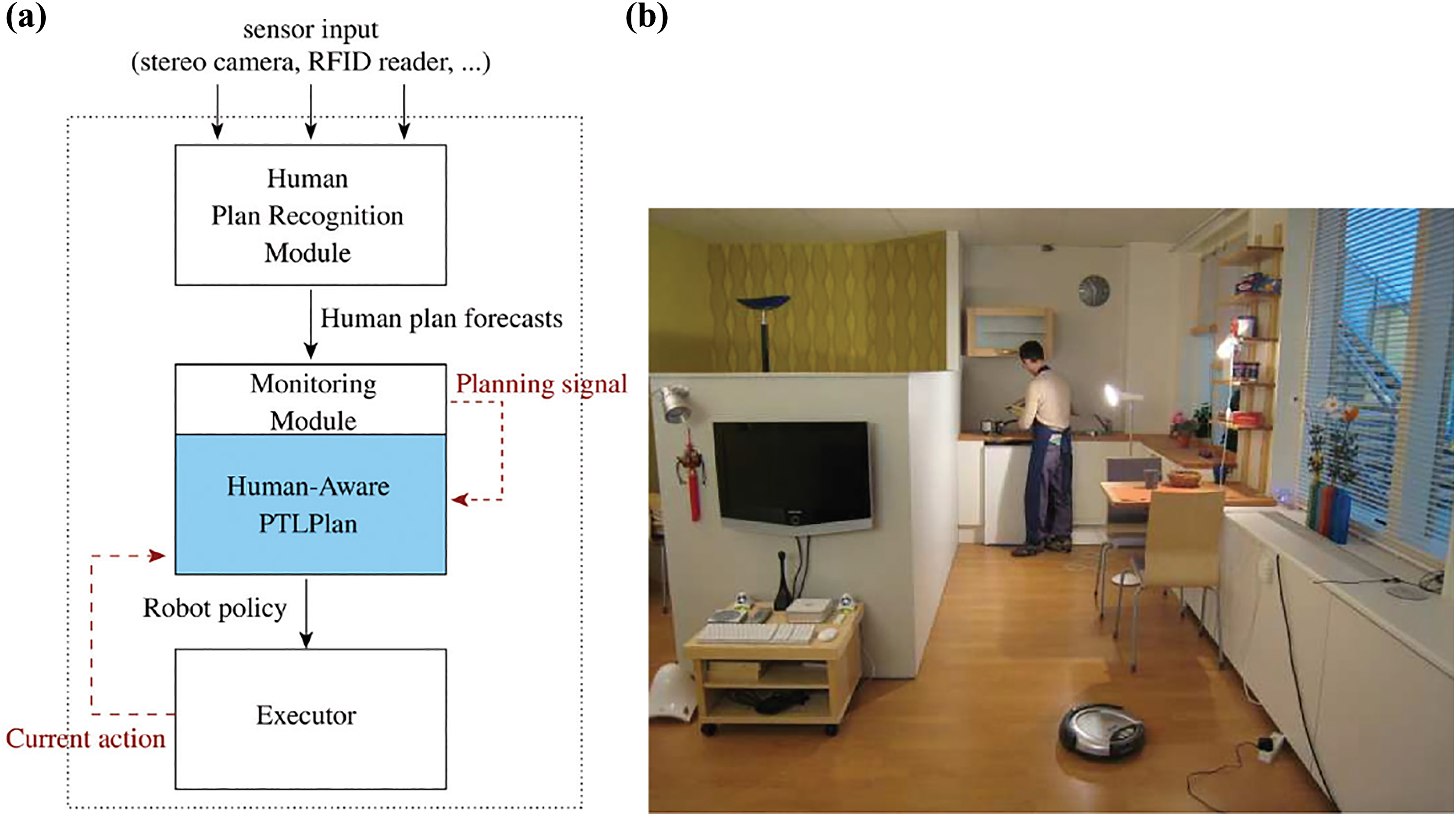

Roboticists often rely on Artificial Intelligence (AI) planning techniques 54,55 based on predictive models of the environment and of the possible actions. The quality of the plans critically depends on the quality of these models and of the estimate of the initial state. In this respect, the improved situational awareness that can be provided by an IoT environment (see “Perception ability” section) can lead to better plans. Human-aware task planners 56 use knowledge of the intentions of the humans inferred through an IoT environment to generate plans that respect constraints on human interaction (see Figure 4).

The vacuum cleaning robot adapts its plan to avoid interference in the kitchen (Figure from Cirillo et al. 56 ) (c) 2010 ACM.

IoT also widens the scope of decisional autonomy by making more actors and actions available, such as controllable elevators and doors. 40,57 However, IoT devices may dynamically become available or unavailable, 58 which challenges classical multi-agent planning approaches. A solution is to do planning in terms of abstract services, which are mapped to actual devices at runtime. 59
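Planning in terms of abstract services can be sketched as a late-binding step: the plan names only services, and each service is mapped to whichever suitable device is available when the plan is executed. The sketch below is an illustration under stated assumptions, not the approach of the cited work; the `Device` class, the `bind_plan` function and the device and service names are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass
class Device:
    """A hypothetical IoT device advertising the services it can provide."""
    name: str
    services: FrozenSet[str]
    available: bool = True


def bind_plan(plan: List[str], devices: List[Device]) -> List[Tuple[str, str]]:
    """Map each abstract service in the plan to an available device.

    The first matching device is chosen; a real system would likely rank
    candidates by cost, location or reliability.
    """
    bound = []
    for service in plan:
        provider = next(
            (d for d in devices if d.available and service in d.services),
            None,
        )
        if provider is None:
            raise LookupError(f"no available device offers {service!r}")
        bound.append((service, provider.name))
    return bound


devices = [
    Device("door_controller", frozenset({"open_door"})),
    Device("elevator_a", frozenset({"call_elevator"})),
    Device("elevator_b", frozenset({"call_elevator"})),
]
plan = ["open_door", "call_elevator"]

first_binding = bind_plan(plan, devices)
devices[1].available = False          # elevator_a drops out at runtime
second_binding = bind_plan(plan, devices)
```

Because the plan itself never names a device, the disappearance of `elevator_a` changes only the binding and does not force replanning.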

Interaction ability

This is the ability of a robot to interact physically, cognitively and socially either with users, operators or other systems around it. 26 While M2M protocols 60 can be directly adopted in robotic software, we focus here on how IoT technologies can facilitate human–robot interaction at functional (commanding and programming) and social levels, as well as a means for tele-interaction.

Functional. Pervasive IoT sensors can make the functional means of human–robot interaction more robust. Natural language instructions are a desirable way to instruct robots, especially for non-expert users, but they are often vague or contain implicit assumptions. 61,62 The IoT can provide information on the position and state of objects to disambiguate these instructions (see Figure 5). Gestures are another intuitive way to command robots, for instance, by pointing to objects. Recognition of pointing gestures from sensors on-board the robot only works within a limited field of view. 63 External cameras provide a broader scene perspective that can improve gesture recognition. 64 Wearable sensors have also been used: for example, Wolf et al. 65 demonstrated a sleeve that measures forearm muscle movements to command robot motion and manipulation.

Depending on the state of the environment, a natural language instruction results in different actions to be performed (Image from Misra et al. 62 ) (c) 2016 SAGE.

Social. Body cues like gestures, voice or facial expression can be used to estimate the user’s emotional state 66 and make the robot respond to it. 67,68 The integration with body-worn IoT sensors can improve this estimate by measuring physiological signals: Leite et al. 69 measured heart rate and skin conductance to estimate engagement, motivation and attention during human–robot interaction. Others have used these estimates to adapt the robot’s interaction strategy, for example, in the context of autism therapy 70 or for stress relief. 71

Tele-interaction. Robots have also been used alongside IoT technologies for remote interaction, especially in healthcare. Chan et al. 72 communicate hugs and manipulations between persons via sensorized robots. Al-Taee et al. 73 use robots to improve the tele-monitoring of diabetes patients by reading out the glucose sensor and vocalizing the feedback from the carer (see Figure 6). Finally, in the GiraffPlus project, a tele-presence robot was combined with environmental sensors to provide health-related data to a remote therapist.

The robot acts as a master Bluetooth device that reads out glucose sensors and transfers them to the caregivers. The robot is then used to provide verbal information concerning the patient’s diet, insulin bolus/intake, and so on (Image from Al-Taee et al. 73 ) (c) 2017 IEEE.

Cognitive ability

By reasoning on and inferring knowledge from experience, cognitive robots are able to understand the relationship between themselves and the environment, between objects, and to assess the possible impact of their actions. Some aspects of cognition were already discussed in the previous sections, for example, multi-modal perception, deliberation and social intelligence. In this section, we focus on the cognitive tasks of reasoning and learning in an IoRT multi-actor setting.

Knowledge models are important components of cognitive architectures. 74,75 Ontologies are a popular technique in both IoT and robotics for structured knowledge. Example ontologies describing the relationship between an agent and its physical environment are the Semantic Sensor Network, 76 IoT-A 77 and the IEEE Ontologies for Robotics and Automation 78 (ORA). For example, Jorge et al. 79 use the ORA ontology for spatial reasoning between two robots that must coordinate in providing a missing tool to a human. Recent works harness the power of the cloud to derive knowledge from multi-modal data sources, such as human demonstrations, natural language or raw sensor data observations, and to provide a virtual environment for simulating robot control policies. In an IoRT environment, these knowledge engines will be able to incorporate even more sources of data.

In the IoT domain, cognitive techniques were recently proposed for the management of distributed architectures. 80,81 Here, the system self-organizes a pipeline of data analytics modules on a distributed set of sensor nodes, edge cloud and so on. To our knowledge, the inclusion of robots in these pipelines has not yet been considered. If robots subscribe themselves as additional actors in the environment, then this gives rise to a new strand of problems in distributed consensus and collaboration for the IoT, because robots typically have a larger degree of autonomy than traditional IoT ‘smart’ objects, and because they are able to modify the physical environment leading to complex dependencies and interactions.

System level abilities

Configurability

This is the ability of a robotic system to be configured to perform a given task or reconfigured to perform different tasks. 26

IoT is mainly instrumental in supporting software configurability, in particular to orchestrate the concerted configuration of multiple devices, each contributing different capabilities and cooperating towards the achievement of complex objectives. However, work in IoT does not explicitly address the requirement of IoRT systems to exchange continuous streams of data while interacting with the physical world.

This requirement is most prominent in the domains of logistics and advanced manufacturing, where a fast reaction to disruptions is needed, together with flexible adaptation to varying production objectives.

Kousi et al. 82 developed a service-oriented architecture to support autonomous, mobile production units which can fuse data from a peripheral sensing network to detect disturbances. Michalos et al. 83 developed a distributed system for data sharing and coordination of human–robot collaborative operations, connected to a centralized task planner. Production lines have also been framed as multi-agent systems 84,85 equipped with self-descriptive capabilities to reduce set-up and changeover times.

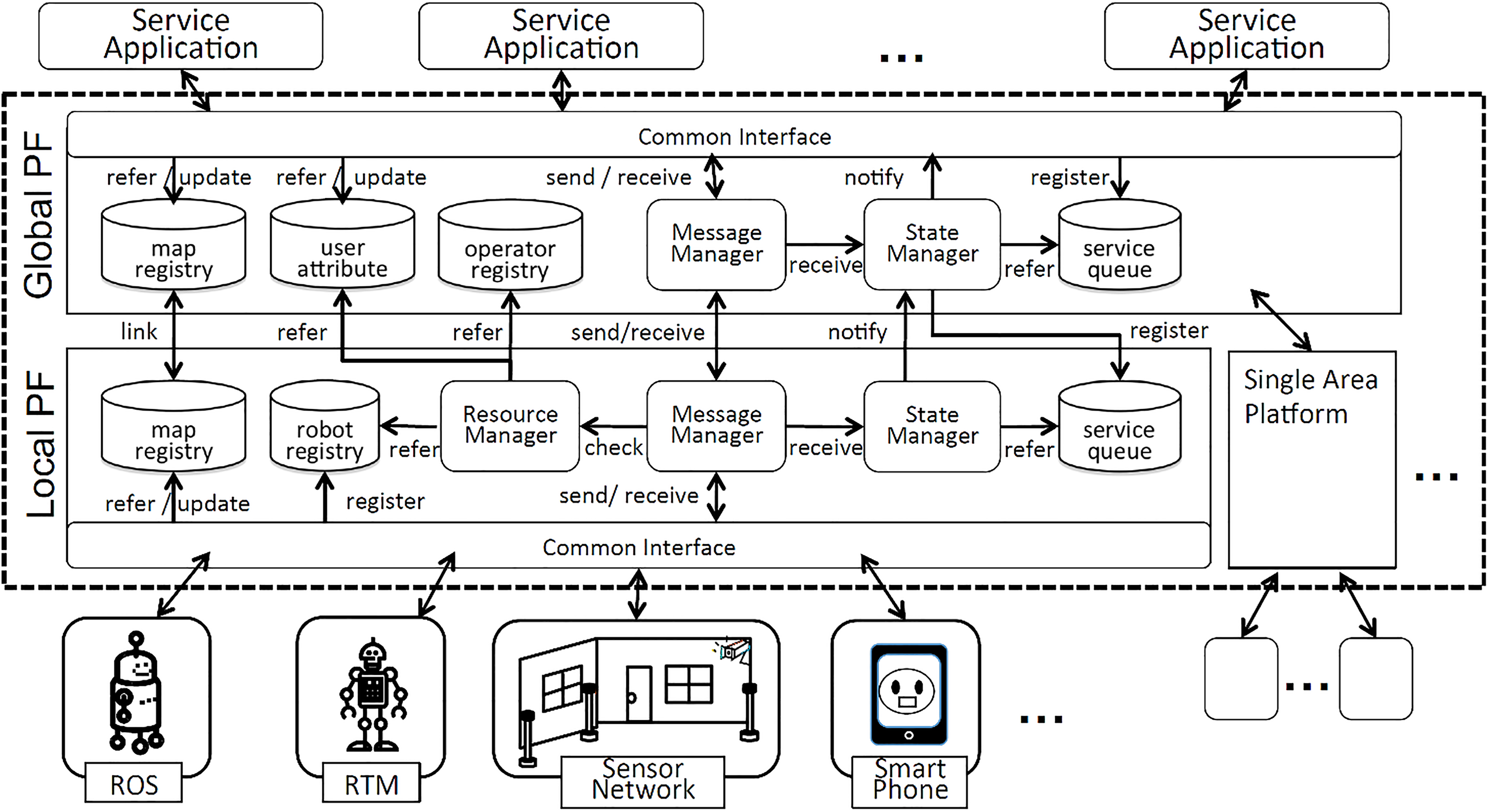

General purpose middleware has also been developed to support distributed task coordination and control in IoRT environments. One example is the Ubiquitous Network Robot Platform 86 (see Figure 7), which manages the handover of functionality for services using real and virtual robots, for example, reserving a real assistant robot using a virtual robot on the smartphone.

The Ubiquitous Network Robot Platform is a two-layered platform. The LPF configures a robotic system in a single area. The GPF is a middle-layer between the LPFs of different areas and the service applications (Image from Nishio et al. 86 ). LPF: local platform; GPF: global platform (c) 2013 Springer-Verlag.

Configurability can be coupled with decision ability to lead to the ability of a system to self-configure. Self-configuration is especially challenging in an IoRT system since the configuration algorithms must take into account both the digital interactions between the actors and their physical interactions through the real world. The ‘PEIS Ecology’ framework 5 includes algorithms for the self-configuration of a robot ecology: complex functionality is achieved by composing a set of devices with sensing, acting and/or computational capabilities, including robots. A shared tuple-space blackboard allows for high level collaboration and dynamic reconfiguration. 87
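The role of a shared tuple-space blackboard can be illustrated with a toy in-memory version: components write `(owner, key, value)` tuples, and other components read or subscribe using patterns with wildcards. This is only a sketch of the general idea, not the PEIS Ecology middleware or its API; the `TupleSpace` class and the example names are hypothetical.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

Entry = Tuple[str, str, Any]                   # (owner, key, value)
Pattern = Tuple[Optional[str], Optional[str]]  # None acts as a wildcard


class TupleSpace:
    """A toy, single-process blackboard shared by all components."""

    def __init__(self) -> None:
        self._tuples: Dict[Tuple[str, str], Any] = {}
        self._subscribers: List[Tuple[Pattern, Callable[[Entry], None]]] = []

    @staticmethod
    def _matches(pattern: Pattern, owner: str, key: str) -> bool:
        return all(p is None or p == v for p, v in zip(pattern, (owner, key)))

    def write(self, owner: str, key: str, value: Any) -> None:
        """Store or overwrite a tuple and notify matching subscribers."""
        self._tuples[(owner, key)] = value
        for pattern, callback in self._subscribers:
            if self._matches(pattern, owner, key):
                callback((owner, key, value))

    def read(self, pattern: Pattern) -> List[Entry]:
        """Return all stored tuples matching the pattern."""
        return [(o, k, v) for (o, k), v in self._tuples.items()
                if self._matches(pattern, o, k)]

    def subscribe(self, pattern: Pattern,
                  callback: Callable[[Entry], None]) -> None:
        """Register a callback for future writes matching the pattern."""
        self._subscribers.append((pattern, callback))


space = TupleSpace()
seen: List[Entry] = []
# A robot subscribes to position tuples from any producer.
space.subscribe((None, "position"), seen.append)
space.write("ceiling_camera", "position", (1.0, 2.0))
space.write("robot", "battery", 0.8)
```

Since consumers address tuples by key rather than by producer, a different device can take over writing `position` without the subscriber being reconfigured, which is what makes dynamic reconfiguration cheap in this style of architecture.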

Adaptability

This is the ability of the system to adapt to different work scenarios, environments and conditions. 26 This includes the ability to adapt to unforeseen events, faults, changing tasks and environments and unexpected human behaviour. The key enablers for adaptability are the perception, decisional and configuration abilities as described above. Hence, we will now discuss relevant application domains and supporting platforms.

Mobile robots are used in precision agriculture for the deployment of herbicide, fertilizer or irrigation. 88 These robots need to adapt to spatio-temporal variations of crop and field patterns, crop sizes, light and weather conditions, soil quality, and so on. 89 Wireless Sensor Networks (WSNs) can provide the necessary information; 90,91 for example, knowledge of soil moisture may be used to ensure accurate path tracking. 92,93 Gealy et al. 94 use a robot to adjust the drip rate of individual water emitters to allow for plant-level control of irrigation. This is a notable example of how robots are used to adjust IoT devices.

Some platforms supporting adaptation of IoRT have also been showcased in the context of Ambient Assisted Living (AAL). Building on OSGi, a platform for IoT home automation, AIOLOS exposes robots and IoT devices as reusable and shareable services, and automatically optimizes the runtime deployment across distributed infrastructure, for example, by placing a shared data processing service closer to the source sensor. 95,96 Bacciu et al. 97,98 deploy recurrent neural networks on distributed infrastructure to automatically learn user preferences, and to detect disruptive environmental changes like the addition of a mirror. 99

Dependability

Dependability is a multifaceted attribute, covering the reliability of hardware and software robotic components, safety guarantees when cooperating with humans and the degree to which systems can continue their missions when failures or other unforeseen circumstances occur.

In this section, we follow the classification of means of dependability identified by Crestani et al. 100

A first means of dependability is to forecast faults or conflicts. For instance, robots in a manufacturing plant must stop if an operator comes too near. IoT technology can provide useful tools to realize this. Rampa et al. 101 mounted a network of small transceivers in a robotic cell and estimated the user position from the perturbations of the radio field. Other researchers embedded sensors in clothing and on the helmet. Qian et al. 102 developed a probabilistic framework to avoid conflicts of robot and human motion, by combining observations from fixed cameras and on-board sensors with historical knowledge on human trajectories (see Figure 8).

Sensory data from laser and global cameras are fed to the perception module, together with human motion patterns learned by the modelling module. Then three types of abstracted observations are passed to the controller: PAO, PRO and RSO. Using a Partially Observable Markov Decision Process, a suitable navigational policy is generated (Image from Qian et al. 102 ). PAO: people’s action observation; PRO: people-robot relation observation; RSO: robot state observation (c) 2013 SAGE.

In a marine context, acoustic sensor networks have been used to provide information on water current and ship positions to a path planner for underwater gliders, to avoid collisions when they come to the surface 103 or to preserve energy. 104

A second means of dependability is robust system engineering. This can take new forms in an IoRT system. For instance, mobile wireless communication is a key enabler for Industry 4.0, where field devices, fixed machines and mobile automated guided vehicles (AGVs) are all connected. IoT protocols such as WirelessHART or Zigbee Pro were designed to address the industry concerns on reliability, security and cost. 105 When mobility is involved, however, these protocols must be complemented by meshing technologies to cope with handovers and with the massive presence of metal. 106,107

The last means is fault tolerance, which allows the system to keep working even when components fail. Redundancy is key to fault tolerance, and the IoRT enables redundancy of sensors, information and actuation. Data fusion from both on-board and environment sensors, however, requires a good understanding of the spatial and temporal relationship between the observations from different sensors. Such relationships have been explicitly modelled, for example, in the Positioning Ontology 79 (POS), or implicitly learned as part of a modular deep neural network controller. 108
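How sensor redundancy translates into fault tolerance can be shown with a simple inverse-variance fusion of scalar estimates: each available sensor contributes in proportion to its confidence, and the fused estimate degrades gracefully when a sensor drops out. This is a standard textbook technique, not the method of the cited works. The sketch assumes that the observations are independent and have already been aligned in space and time, which is precisely the difficult part noted above; the function name and the numbers are illustrative.

```python
from typing import Iterable, Tuple


def fuse(estimates: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. Assumes independent,
    unbiased estimates of the same quantity.
    """
    total_weight = 0.0
    weighted_sum = 0.0
    for value, variance in estimates:
        if variance <= 0.0:
            raise ValueError("variance must be positive")
        weight = 1.0 / variance
        total_weight += weight
        weighted_sum += weight * value
    if total_weight == 0.0:
        raise ValueError("at least one estimate is required")
    return weighted_sum / total_weight, 1.0 / total_weight


# Hypothetical distance-to-obstacle estimates in metres.
onboard_laser = (10.0, 1.0)    # (value, variance)
ceiling_camera = (12.0, 1.0)

both = fuse([onboard_laser, ceiling_camera])
camera_only = fuse([ceiling_camera])  # still works if the laser fails
```

With both sensors the fused variance is lower than either individual variance; with one sensor missing, the system still produces an estimate, only a less certain one.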

Conclusion

Robotics and IoT are two terms each covering a myriad of technologies and concepts. In this review, we have broken down the added value of the crossover of both technology domains along nine system abilities. The IoT advantages exploited by roboticists are mostly distributed perception and M2M protocols. Conversely, the IoT has so far mostly exploited robots for active sensing strategies. Current IoRT incarnations are almost exclusively found in vertical application domains, notably AAL, precision agriculture and Industry 4.0. Domain-agnostic solutions, for example, to integrate robots in IoT middleware platforms, are only emerging. It is our conviction that the IoRT should go beyond the readings of ‘IoT-aided robots’ or ‘Robot-enhanced IoT’. We hope that this survey may stimulate researchers from both disciplines to start work towards an ecosystem of IoT agents, robots and the cloud that combines both the above readings in a holistic way.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Pieter Simoens was partially funded through the imec ACTHINGS High Impact initiative.