Abstract

Automated guided vehicles require spatial representations of their working spaces in order to ensure safe navigation and carry out high-level tasks. Typically, these models are given by geometric maps. Even though these enable basic robotic navigation, off the shelf they lack the task-dependent information required to provide services. This article presents a semantic mapping approach augmenting existing geometric representations. Our approach demonstrates the automatic annotation of map subspaces using warehouse environments as an example. The proposals of an object recognition system are integrated into a graph-based simultaneous localization and mapping framework and eventually propagated into a global map representation. Our system is experimentally evaluated in a typical warehouse containing common object classes expected for this type of environment. We discuss the novel achievements and motivate the contribution of semantic maps toward the operation of automated guided vehicles in the context of Industry 4.0.

Introduction

Automated guided vehicles (AGVs) require spatial representations of their environment in order to enable autonomous navigation. Geometric maps are commonly used, with occupancy grid maps being the state of the art for robotic navigation. These enable basic robotic navigation, but off the shelf they lack environment-specific information. This article presents an approach to semantic mapping which augments common geometric representations with objects that are relevant for logistic environments.

Navigation algorithms such as path planning or obstacle avoidance benefit from the additional knowledge about obstacles in the surrounding environment. For example, the presence of

Semantic maps provide a fundamental resource for AGVs operating as mobile service robots in logistic environments. In this way, they enable a vehicle to go, for example, to the «

Close-to-market systems solve this with the help of a human supervisor who manually annotates maps or, in rather exceptional cases, uses voice and gesture commands. The workload induced by this process is substantial, particularly for the initial setup of such a system. The automatic annotation of such maps is therefore desirable for the introduction of AGVs in warehouses and is highly beneficial in the context of Industry 4.0.

Our approach aims at building semantic maps online while steering an AGV inside a warehouse (see Figure 1). This makes highly efficient object detection, simultaneous localization and mapping (SLAM), and map inference indispensable. The object detection utilizes efficient one-dimensional (1-D) segmentation over range as well as height information. These data are acquired through assumption of a 2.5-D world with end point of scans being described by a triplet of {

An automated guided vehicle in a warehouse environment.

Our object recognition exploits the fact that a comparably limited object diversity is expected in warehouses. We therefore utilize geometric features covering physical dimensions of objects such as width and height. In addition to that, we investigate the visual appearance of object observations using a pre-trained deep convolutional neural network (DCNN/CNN) whose features are subsequently evaluated by a multi-class support vector machine (SVM). Our system is trained using solely publicly available image data and is evaluated in an environment it has never seen before. We expect the outcome to be highly beneficial since it allows us to predict the system's overall ability to generalize from training data. The contributions of this article are: a highly efficient segmentation and object detection based on 1-D contours; a semantic mapping framework with near real-time performance; integration of a SLAM framework for estimating globally consistent semantic annotations; and experimental evaluation in an a priori unknown logistic environment.

Related work

The existing work in the area of semantic mapping can be categorized into different research fields in the computer vision and robotics communities, which are reviewed in the following.

Deep learning and 2-D segmentation

The advent of DCNNs/CNNs significantly pushed the development of object and scene recognition. Based on the early work of LeCun et al. in the late 1980s, 1 a large number of algorithms utilizing CNNs have been established in recent years. 2–4 The baseline recognition performance has increased tremendously compared, for example, to approaches based on SIFT and bag-of-words (BOW) classification. 5,6 In contrast to BOW-based approaches utilizing local features such as SIFT, CNNs use holistic images for feature extraction and classification. Out of the box, they are expected to be applied to images with the target object being centered and occupying the majority of the image. Prior image saliency estimation is required in order to make use of CNNs for analyzing images of complex scenes. Along this line, a substantial body of work has been established, with selective search 7 being a well-known representative. More recently, the methods Edge Boxes 8 and Bing 9 have proven capable of real-time application. All of these methods 7–9 search the input image for regions of interest (ROIs) occupied by objects using different heuristics such as the responses of edge detectors.

Semantic scene understanding

The research field of semantic scene understanding has been investigated by the computer vision community. The availability of consumer-grade RGB-D cameras has essentially supported this. Silberman et al. proposed to segment RGB-D images into classes such as floor, walls, and supportive elements and presented an inference model describing physical interactions of these classes. 10 Geiger et al. proposed a method for joint inference of 3-D objects and the layout of indoor scenes. 11 Zheng et al. suggest a conditional random field (CRF) for dense segmentation and semantic labeling. Each of these methods provides a powerful tool for semantic labeling and achieves outstanding results. However, these implementations require a long computation time. The well-known real-time segmentation approach

Object-based SLAM

In recent years, the development of algorithms for visual simultaneous localization and mapping (V-SLAM) has progressed considerably. Thanks to the availability of graph-based optimization libraries such as g2o 14 and iSAM, 15 the application of V-SLAM for online operation is rendered possible. Several SLAM implementations utilizing these libraries were presented. 16–18 The state of the art in SLAM focuses on the optimization of poses and landmarks which typically refer to local image features (e.g. SIFT 19 ), geometric features (e.g. GLARE 20 ), ConvNet landmarks, 21 or holistic image matching. 22 Only a small number of previous works utilize semantic information in SLAM. Strasdat et al. presented

Semantic mapping

Nüchter et al. proposed a semantic mapping approach that applies plane segmentation to 3-D laser data. 25 Though enabling high performance, it is restricted to planar regions. Stückler et al. presented an object-based extension for SLAM which is able to generate dense 3-D object maps at a relatively high frame rate. 26 The authors utilize simple region features extracted from RGB and depth data to segment object regions from point clouds. The objects are classified using random decision forests and subsequently used to concurrently track the camera’s pose and estimate the 3-D poses of the objects. Even though the approach is closely related to ours, since the authors also make use of the geometric properties of objects in classification, we expect that the classification accuracy obtained with simple RGB region features can be substantially increased by CNNs. Grimmett et al. investigate the automatic mapping of parking spaces in garages based on lane marking detection in camera images. 27 Beinschob et al. present an approach for mapping logistic environments including the recognition of storage places and the automated generation of road maps for AGVs. 28

Semantic place categorization

Semantic mapping in the context of labeling spatial subspaces with object categories has been investigated by several authors of the mobile robotics field. The early work of Mozos et al. extracts features from 2-D laser range data and trains Adaboost classifiers for distinguishing places of different categories. Pronobis et al. extended this by fusing data from 2-D laser range finders and cameras in an SVM-based place classification 29 which was extensively evaluated on a publicly available data set. 30 Hellbach et al. suggest the use of nonnegative matrix factorization (NMF) to automatically extract relevant features from occupancy grid maps which are subsequently utilized for identifying place categorizations. 31 More recently, Sünderhauf et al. proposed an approach to semantic mapping using visual sensors. 32 The authors make use of deep-learnt features extracted with a CNN which are passed to a random forest classifier. A continuous factor graph model is used to infer from the object observations. The area being covered by the camera’s field of view is labeled according to the output of the graphical model. The authors integrate their approach with state-of-the-art grid mapping solutions, such as GMapping 33 and Octomap, 34 in order to build geometric maps with the free space being supplemented by place category labels.

Summary

Computer vision research has yielded a number of high-level methods for feature extraction (CNNs), efficient segmentation, and semantic labeling. However, the use of solely 2-D image data for semantic labeling of holistic scenes entails the use of methods for generating region proposals. The measure of edgeness which is typically employed does not necessarily result in the object proposals that are expected or required, which was also reported by Sünderhauf et al. 35 The missing depth data also hamper the use of scaled geometric object properties and height segmentation techniques. The existing methods for SLAM and semantic mapping either focus on the use of objects as landmarks 23 or are likely to provide lower accuracy in large-scale object classification (36; 5) due to the image features being used. The related work in semantic mapping (2; 8) within the robotics community focuses on the extraction of one object class of interest for the investigated environment type (parking or storage spaces).

To our knowledge, there exists no prior work on online semantic mapping that yields dense geometric maps in which occupied space is assigned object labels with the accuracy of deep-learned models. Also, we expect the application of semantic mapping with recognition of multiple object classes in logistic environments to be novel in robotics research.

Object recognition

The input RGB-D data of a camera are searched for objects. Thanks to the range data, the detection of occupied space in close proximity of the vehicle is simplified. A point cloud

Illustration of the architecture of our object recognition. The left part of the graph demonstrates the processing of the depth images and the right part the processing of the RGB images.

Ground plane segmentation

At first we filter those points of

Range and height scan

A top-down projection of

where

Depending on the depth measuring sensor used and the resulting density of the obtained point cloud, it is recommendable to either exclude cones without depth values or interpolate the depth data with a Gaussian kernel around these locations.
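The second option, interpolating missing depth values, can be illustrated with a minimal Python sketch. It assumes the range scan is a plain list with `None` marking cones without a depth value; the function name `fill_missing_ranges` and the parameters `sigma` and `radius` are hypothetical and not taken from our implementation.

```python
import math


def fill_missing_ranges(ranges, sigma=1.5, radius=3):
    """Fill None entries of a 1-D range scan with a Gaussian-weighted
    average of the valid neighbours within `radius` bins."""
    filled = list(ranges)
    for i, value in enumerate(ranges):
        if value is not None:
            continue  # valid measurements are kept unchanged
        weight_sum = 0.0
        value_sum = 0.0
        lo = max(0, i - radius)
        hi = min(len(ranges), i + radius + 1)
        for j in range(lo, hi):
            if ranges[j] is None:
                continue
            # Gaussian weight decreasing with the distance in bins
            w = math.exp(-0.5 * ((j - i) / sigma) ** 2)
            weight_sum += w
            value_sum += w * ranges[j]
        # Without any valid neighbour the bin stays empty (excluded).
        filled[i] = value_sum / weight_sum if weight_sum > 0.0 else None
    return filled
```

Bins that have no valid neighbour inside the window remain `None`, which corresponds to the first option of excluding the cone.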

Let us assume that

In this way, we obtain the contour

Curvature detection

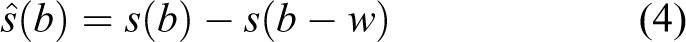

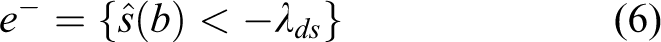

In order to detect objects,

for (

with

Segment estimation

Having obtained the putative object boundaries

The object proposal

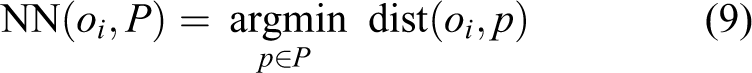

Object retrieval

The object observations are defined by the segments

The estimated height and width of an object are subject to uncertainties due to the range-dependent measurement accuracy of RGB-D sensors. We account for this by introducing the following covariance matrix M

with

The weighting factors

As a result of this step, we obtain a set of object class proposals

ROI estimation

The object proposal

CNN features

This processing unit extracts features from the RGB image data. This process is restricted to the areas defined by the ROIs of the prior detection step. For this purpose, we use a CNN. The principle of CNNs can be summarized as follows. A set of convolutional filters is repeatedly applied to the 2-D image data. The filters’ outputs are collected into nonoverlapping grids. The next layer subsamples the input data by applying pooling methods such as taking the maximum or average of each grid. The combination of convolving and subsampling the input data is repeatedly carried out at successive network layers. This allows features to be learned at different scales and spatial positions in the image. The complex fully connected layers of neural networks are typically found at the end of a CNN. The outputs of different CNN layers can be combined for the final output. CNNs differ significantly from other feature extraction methods used in computer vision since they learn features and their distributions at different levels (e.g. parts, objects, and local characteristics) given the training data. Depending on the depth, the layers respond to different scales of an object. The further a layer is located from the input layer, the more local will be the response and the smaller the affected area of a firing neuron. As a feature extractor, we make use of the pre-trained CNN
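The alternation of filtering and subsampling summarized above can be illustrated with a small pure-Python sketch. It is not the pre-trained network used in our system; the function names, the single hand-written filter, and the 2 × 2 pooling window are assumptions chosen for illustration only.

```python
def convolve2d_valid(image, kernel):
    """Slide the kernel over the image without padding.
    As in most CNN frameworks this is a cross-correlation
    (the kernel is not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]


def max_pool(feature_map, size=2):
    """Subsample by taking the maximum of nonoverlapping size x size grids.
    Rows and columns that do not fill a complete grid are dropped."""
    rows = len(feature_map) // size
    cols = len(feature_map[0]) // size
    return [[max(feature_map[r * size + i][c * size + j]
                 for i in range(size) for j in range(size))
             for c in range(cols)]
            for r in range(rows)]


# One convolution + pooling stage applied to a 5 x 5 test image.
image = [[r * 5 + c for c in range(5)] for r in range(5)]
diagonal_difference = [[1, 0], [0, -1]]
stage_output = max_pool(convolve2d_valid(image, diagonal_difference))
```

Stacking several such stages, each with many learned filters and a nonlinearity in between, gives the layered structure described in the text.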

Image/ROI classification

The content of each detected object is classified based on the ConvNet features extracted within its ROI. This procedure is applied to dynamic objects such as pallets, forklifts, and humans. In addition to those, we classify the feature vectors generated from the entire image in order to detect larger objects such as gates and racks. We train a multi-class SVM following a one-versus-all schema. Hence, we train one binary SVM for each class

We use a linear kernel which can be defined as the following cost function:

We observed that using a linear kernel provides promising classification results while keeping the computational costs at a minimum. This is necessary since a more complex system has to evaluate a large number of classifiers for each obstacle being detected at a high frequency. Each SVM is trained with positive samples of one target class and negative samples being randomly drawn from the other classes as well as images describing various objects not being recognized by our system such as walls, ladders, and windows. We use one set of binary SVMs for dynamic (ROI) and another set for static objects (entire image). It is possible to recognize multiple dynamic and one static object in a single image, for example, pallets placed inside a rack. The ROIs and images being evaluated are labeled with the class having the minimum distance to the input feature vector. Those object proposals exceeding a distance threshold
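The one-versus-all decision can be sketched in a few lines of Python. This is an illustration under assumptions, not our implementation: each binary linear SVM is represented by a hypothetical `(weights, bias)` pair, the class with the highest signed decision value is selected, and the rejection parameter `min_score` is a stand-in for the distance threshold, whose exact definition is not reproduced here.

```python
def linear_score(weights, bias, features):
    """Signed decision value w . x + b of one binary linear SVM."""
    return sum(w * f for w, f in zip(weights, features)) + bias


def classify_one_vs_all(classifiers, features, min_score=0.0):
    """Evaluate one binary classifier per class and return (label, score).

    classifiers: dict mapping class name -> (weights, bias).
    Returns (None, score) if even the best class falls below min_score,
    i.e. the proposal is rejected as an unknown object.
    """
    best_label, best_score = None, float("-inf")
    for label, (weights, bias) in classifiers.items():
        score = linear_score(weights, bias, features)
        if score > best_score:
            best_label, best_score = label, score
    if best_score < min_score:
        return None, best_score
    return best_label, best_score


# Two separate classifier sets, mirroring the split into dynamic (ROI)
# and static (entire image) objects; the weights are made-up toy values.
dynamic_svms = {"pallet": ([1.0, 0.0], -0.5), "forklift": ([0.0, 1.0], -0.5)}
static_svms = {"rack": ([1.0, 1.0], -1.0), "gate": ([-1.0, 1.0], 0.0)}
```

Keeping the two sets separate allows an image to receive several dynamic labels (one per ROI) and at most one static label, as in the example of pallets placed inside a rack.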

Range scan annotation

The range scan

Semantic mapping

The integration of our semantic mapping algorithm is illustrated in Figure 3 and described in depth in the following section.

Overview of our semantic mapping system with its components and their data flows.

Integration of object proposals in pose graph SLAM

Without loss of generality, we can describe the motion of loop closings detected for the poses xi and xj by uij, hence we can postulate the following state xj

Given all states

We can account for this by the following factorization

with

with

Even though it is generally possible to include the object points in the graph optimization, similar to our previously presented approach, 16 this additional optimization is quite expensive and hence might entail a significant performance loss for online mapping.
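One inexpensive way to keep object points consistent without optimizing them is to store them relative to the pose from which they were observed and to re-project them whenever the pose estimates change. The following Python sketch illustrates this idea under simplifying assumptions: poses are planar `(x, y, theta)` triples, and the names `compose` and `to_global` are hypothetical.

```python
import math


def compose(pose, motion):
    """Planar pose composition x_j = x_i (+) u_ij with poses (x, y, theta)."""
    x, y, theta = pose
    dx, dy, dtheta = motion
    c, s = math.cos(theta), math.sin(theta)
    # Wrap the resulting heading to (-pi, pi]
    new_theta = math.atan2(math.sin(theta + dtheta), math.cos(theta + dtheta))
    return (x + c * dx - s * dy, y + s * dx + c * dy, new_theta)


def to_global(pose, local_points):
    """Transform labeled points (px, py, label) from the frame of the
    observing pose into the global map frame."""
    x, y, theta = pose
    c, s = math.cos(theta), math.sin(theta)
    return [(x + c * px - s * py, y + s * px + c * py, label)
            for px, py, label in local_points]
```

After each graph optimization only `to_global` has to be re-evaluated with the updated poses, which is a set of rigid transformations rather than an additional optimization problem.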

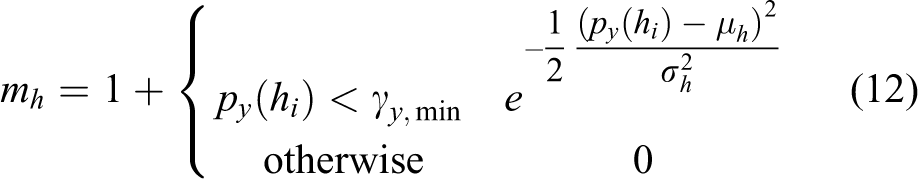

Probabilistic grid mapping with semantic information

This section explains our method for transferring a graph-based map structure into a global semantic grid map. First, we will introduce how a regular spatial structure is generated and the object observations are transferred. The subsequent inference on the initial estimate of the map will be discussed.

where

Each grid cell

where

Our approach implements an end point model in which each measurement point is directly assigned to the corresponding grid cell, omitting the cells inside the ray’s cone. This is necessary in order to ensure an efficient map update. A number of robotic navigation tasks such as path planning require more comprehensive environment descriptions which explicitly consider free space. For performance reasons, we avoid the expensive ray-casting models this entails and suggest building such maps subsequently or in parallel but slightly delayed.

Since the map size and the number of object likelihoods stored in the cells easily increase in large-scale environments, it is recommended to use sparse instead of dense matrices. Particularly, high-resolution maps usually encode a lot of free space. The point transformations being applied frequently are highly optimized thanks to efficient matrix operations.
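The end point update and the sparse storage can be sketched together in Python. This is a minimal illustration under assumptions: the class name `SparseSemanticGrid`, the dictionary-based storage, and the normalized-count estimate of the class distribution are hypothetical simplifications and do not reproduce the probabilistic update of our system.

```python
import math
from collections import defaultdict


class SparseSemanticGrid:
    """Grid map that only stores cells hit by at least one end point."""

    def __init__(self, resolution=0.1):
        self.resolution = resolution  # cell edge length in metres
        # (ix, iy) -> {class label: accumulated observation weight}
        self.cells = defaultdict(lambda: defaultdict(float))

    def cell_index(self, x, y):
        """Map a global point to its integer cell index."""
        return (math.floor(x / self.resolution),
                math.floor(y / self.resolution))

    def add_end_point(self, x, y, label, weight=1.0):
        """End point model: only the cell containing the measured point is
        updated; cells along the ray are not touched (no ray casting)."""
        self.cells[self.cell_index(x, y)][label] += weight

    def distribution(self, index):
        """Normalized class distribution of a cell, {} if never observed."""
        counts = self.cells.get(index)
        if not counts:
            return {}
        total = sum(counts.values())
        return {label: w / total for label, w in counts.items()}
```

Because free space never creates an entry, memory grows with the number of observed object cells rather than with the area of the warehouse.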

Due to the abovementioned reasons, it is necessary to explicitly account for the uncertainties in the semantic map estimation. For this purpose, we make use of the Shannon entropy which is separately estimated for each grid cell as follows
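For illustration, the per-cell entropy can be computed as in the following Python sketch, which uses the standard definition H = −Σ p_k log p_k over the class probabilities p_k of one cell. The base-2 logarithm, the dictionary layout, and the function names are assumptions made for this example.

```python
import math


def shannon_entropy(distribution):
    """Entropy in bits of one cell's class distribution {label: probability}.
    Classes with zero probability contribute nothing to the sum."""
    return -sum(p * math.log2(p) for p in distribution.values() if p > 0.0)


def normalized_entropy(distribution, num_classes):
    """Entropy scaled to [0, 1] by the maximum log2(num_classes),
    which makes values comparable across different numbers of classes."""
    if num_classes < 2:
        return 0.0
    return shannon_entropy(distribution) / math.log2(num_classes)
```

A cell whose observations all agree has an entropy of zero, whereas a cell with equally likely classes reaches the maximum and therefore carries the least reliable label.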

The Shannon entropy provides a beneficial measure of uncertainty as revealed by the probability distributions of the semantic grid cells. The measures of

The differentiation of high-, low-, and unconfident estimates is required to ensure robust class label assignments. Those cells possessing an entropy measure

Semantic grid cells providing a high confidence

The presented method enables efficient inference of final class labels

Experiments

This section presents the experimental results obtained using the object recognition and semantic mapping algorithms as explained earlier in this article. We will describe the experimental setup and the results for each component of the system, in particular the segmentation, classification, and the semantic map generation.

Setup

We evaluate the presented approach by experiments carried out in a warehouse which consists of a multitude of common objects expected for this type of environment. The data are collected with a reach truck which has been fully automated for a research project. For the purpose of generating a semantic map, we manually steered the vehicle inside the warehouse. The RGB-D image data utilized by our system are recorded using an Asus Xtion camera which is aligned sideward with respect to the vehicle’s direction of travel. We use the following parameters in our experiments:

Segmentation

This experiment analyzes the performance of the object detection system before the retrieval and classification components. We therefore run the detection and store the input RGB images along with the overlaid bounding boxes. The number of correct, incorrect, and missing detections is determined irrespective of the actual class labeling. Thresholds and parameters of the segmentation are kept fixed. We obtain an overall detection rate of 87.21%.

Classification

We further evaluated the classification accuracy achieved based on the object retrieval priors and the appearance-based classifier. Table 1 provides details about the training phases of the SVMs including iterations, number of support vectors, and number of training images. As already mentioned, we make use of a set of 500 negative training samples capturing objects not recognized by our system. From this set, we randomly sample about 150 images, and another 150 samples are selected from the training images of the other object classes. The data set is split into 50% training and 50% testing images. The entire training and testing data set is obtained from publicly available image sources supplemented by our own image collection. These images are edited such that only the object of interest is visible. We captured an additional validation data set consisting of RGB and depth images in the mentioned warehouse. The ground truth for the validation data set is obtained by manually labeling the input RGB images. We assume that at least 50% of an object of interest has to be visible in order to assign an image the corresponding class label. This addresses the classification of entire images (for gates and racks) as well as the ROI-based classification of objects such as forklifts, pallets, and humans. The object segmentation expects this amount in order to minimize false-positive detections while simultaneously enabling as many recognitions as possible of objects in the presence of clutter and close to the image boundaries. The results are shown in Figure 4 and Table 2. The values of the ROC curves are obtained by varying distance thresholds
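How a ROC curve and its AUC follow from sweeping a decision threshold can be sketched in Python as follows. The sketch assumes one confidence value per sample where higher means "more likely the target class" (for distances, the sign would be inverted); the function names are hypothetical and the AUC is obtained with the trapezoidal rule.

```python
def roc_points(scores, labels):
    """Return (false-positive rate, true-positive rate) pairs obtained by
    lowering the decision threshold over all observed scores.
    labels: True for samples of the target class, False otherwise.
    Requires at least one positive and one negative sample."""
    positives = sum(1 for is_pos in labels if is_pos)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, is_pos in zip(scores, labels)
                 if s >= threshold and is_pos)
        fp = sum(1 for s, is_pos in zip(scores, labels)
                 if s >= threshold and not is_pos)
        points.append((fp / negatives, tp / positives))
    return points


def auc(points):
    """Area under the ROC curve using the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area
```

An AUC of 1.0 corresponds to a classifier that ranks every positive sample above every negative one, while 0.5 corresponds to chance level.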

Details of the training phase: number of training, testing, and validation images; training iterations; number of support vectors; and area under the curve values on testing data are shown for each class.

AUC: area under the curve.

ROC curves for all object classes captured on the validation data set. The figures show the results of the prior training (red) and the ones of the validation (blue). ROC curve for object class (a)

Results of the SVM-based classification with priors obtained from the object retrieval.a

SVM: support vector machine; ROI: region of interest; AUC: area under the curve.

aThe table provides the AUC, the corresponding evaluation window, and the obstacle type.

SLAM

Our graph-based SLAM forms the foundation for the spatial mapping of objects and the estimation of the vehicle’s pose with respect to the environment. A number of metrics have been proposed for benchmarking SLAM algorithms, for example, those introduced by the Rawseeds project. 40 The ground truth is obtained using a laser range finder and the algorithms described by Himstedt et al. 16 The error of this ground truth trajectory is expected to be below 0.04 m, thanks to the high measurement accuracy of the laser range finder and a joint map and pose refinement. 16 The results of the presented camera-based SLAM approach are compared to the ground truth based on the absolute trajectory error (ATE). 40 The mean error is 0.26 m in position and about 0.07 rad in orientation.
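The reported mean errors can be illustrated with a short Python sketch of a mean absolute trajectory error. It assumes planar poses `(x, y, theta)` that are already time-associated and expressed in a common frame; the alignment step that usually precedes an ATE computation is omitted, and the function name is hypothetical.

```python
import math


def absolute_trajectory_error(estimated, ground_truth):
    """Mean position error (m) and mean absolute heading error (rad)
    between corresponding poses (x, y, theta) of two trajectories."""
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    position_sum = 0.0
    heading_sum = 0.0
    for (ex, ey, et), (gx, gy, gt) in zip(estimated, ground_truth):
        position_sum += math.hypot(ex - gx, ey - gy)
        diff = et - gt
        # Wrap the heading difference to (-pi, pi] before taking its magnitude
        heading_sum += abs(math.atan2(math.sin(diff), math.cos(diff)))
    n = len(estimated)
    return position_sum / n, heading_sum / n
```

Many benchmarks report the root-mean-square instead of the mean; replacing the sums by sums of squares and taking the square root of the averages yields that variant.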

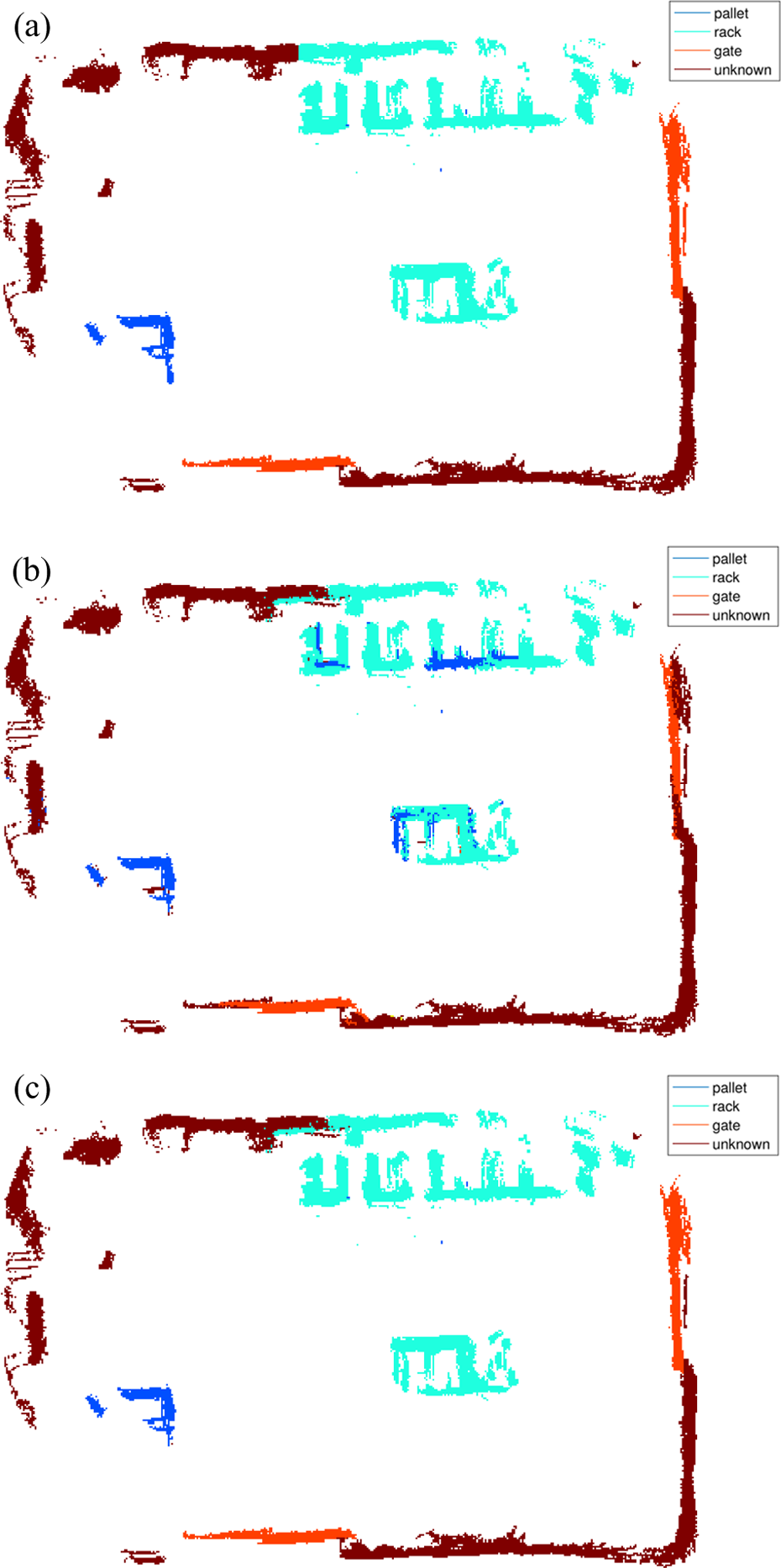

Semantic labeling

In addition to the evaluation of the accuracy of our SLAM framework, we investigated the key contribution of this article, which is the semantic annotation of the occupancy grid map. Our ground truth is generated by manually labeling the grid cells of our prior map provided by the SLAM algorithm. The semantic annotation is evaluated by comparing the ground truth to the labels obtained initially (unoptimized) and to the optimized labels. The results are visualized in Figure 5 and summarized in Table 3.
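The cell-wise comparison against the ground truth can be sketched in Python as follows. The dictionary representation of the maps and the function name `labeling_accuracy` are assumptions for illustration; cells without a predicted label count as incorrect.

```python
def labeling_accuracy(ground_truth, predicted):
    """Fraction of ground-truth cells whose predicted label matches.

    ground_truth, predicted: dicts mapping cell index (ix, iy) -> label.
    Only cells present in the ground truth (non-empty cells) are scored."""
    if not ground_truth:
        raise ValueError("ground truth must contain at least one cell")
    correct = sum(1 for cell, label in ground_truth.items()
                  if predicted.get(cell) == label)
    return correct / len(ground_truth)
```

Evaluating this once with the initial labels and once with the optimized labels gives the two figures being compared.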

The figures demonstrate our experimental results obtained in the warehouse environment. The ground truth labels are obtained by manually annotating all non-empty grid cells. (a) Semantic map with ground truth labels. (b) Initial semantic labels without optimization. (c) Semantic labels after optimization based on uncertainty estimation and incorporating adjacent cells.

Semantic labeling results.

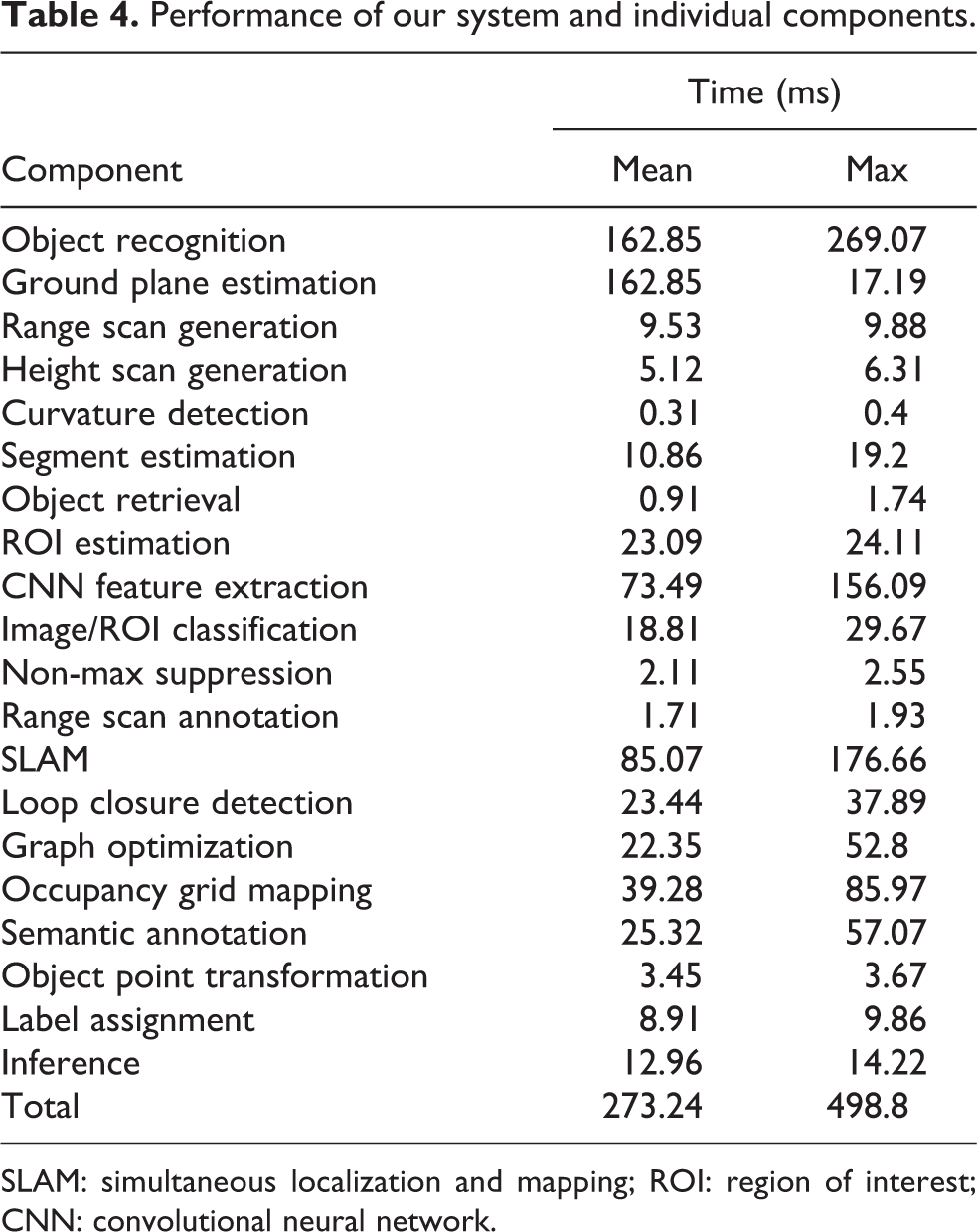

Performance

In our experiments, we used a Dell Latitude E6320 laptop equipped with an Intel Core i7 dual-core processor and 8 GB RAM. The processing time required by each system component is detailed in Table 4. Our system runs at about 4 Hz on average and never drops below 2 Hz. Note that some of the components do not necessarily have to run all the time; for example, the occupancy grid map can be re-estimated at a much lower rate or even only once at the end of the trajectory. The semantic map is estimated independently of the occupancy grid map and requires significantly less computation time because the end point model is used instead of expensive ray casting. The rates of the loop-closure detection and graph optimization can also be reduced to, for example, 0.5 Hz. Based on these simplifications and extensive use of parallel programming, we observed effective update rates of about 5–10 Hz.

Performance of our system and individual components.

SLAM: simultaneous localization and mapping; ROI: region of interest; CNN: convolutional neural network.

Discussion

Our object segmentation and retrieval provide an efficient solution for online semantic mapping. The classification results obtained for the validation data set demonstrate the high generalization performance of the CNN and SVM. This becomes particularly obvious since the appearance of many objects in the training images differs from that in the testing warehouse. The detection of pallets and gates is outstanding; that of racks is slightly worse. We expect that this is due to the fact that our training data mainly consist of racks fully equipped with pallets, which is not the case for all racks in our testing environment. Thanks to the descriptive power of the CNN features, we are able to achieve a high classification accuracy for SVMs with linear kernels given a relatively small amount of training data, especially compared to approaches based on HOG features. 41 The CNN features in combination with observations from multiple viewpoints, correctly referenced by our SLAM algorithm, enable the correct labeling of more than 97% of the grid cells.

Conclusion

We introduced a solution for semantic mapping of logistic environments. Our prior segmentation enables the efficient generation of object proposals by exploiting geometric properties of the objects observed in a scene. These geometric features are fused with textural properties of objects which are extracted based on deep-learned features from a CNN and subsequently evaluated by a set of multi-class SVMs. The entire training stage uses data solely obtained from publicly available image data and a priori known object boundaries. Our system is evaluated on sensor data originating from an environment which neither the CNN nor the SVM has ever seen before, which emphasizes our system’s strengths of generalization and potential application in a priori unknown logistic environments.

We presented how points of object observations can be integrated in a graph-based SLAM framework in order to enable online semantic mapping. It is further demonstrated how this graph can be transferred to a grid map with each cell being assigned an object label. Efficient inference of object proposals is carried out by analyzing observation uncertainties. The overall mapping system is evaluated in a warehouse with common object classes being relevant in this type of environment.

Our system runs at about 4–5 Hz, which is sufficient for online mapping with AGVs. Thanks to methods of deep learning, we are able to achieve a high object classification accuracy. It is the efficient object segmentation and the sparse pose graph optimization, however, that enable the high performance which is important for robotic applications. We expect that the presented system contributes to an emerging interest in AGVs for logistic environments. By bridging the gap between existing technologies enabling autonomous navigation and the significant initial expense of manual map annotations, we look forward to the upcoming fourth industrial revolution.

Footnotes

Acknowledgment

We acknowledge financial support by Land Schleswig-Holstein within the funding program Open Access Publikationsfonds.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was financially supported by Land Schleswig-Holstein within the funding program Open Access Publikationsfonds.