Abstract

To assess the performance of multisensor image registration algorithms used in multirobot information fusion, we propose a model based on structural similarity, named the vision registration assessment model. First, this article introduces a new image concept, the superimposed image, for testing subjective and objective assessment methods. We therefore assess the superimposed image rather than the registered image, which differs from previous image registration assessment methods that usually use the reference and sensed images. Then, we calculate eight assessment indicators that describe the superimposed image from different aspects. After that, the vision registration assessment model fuses the eight indicators using canonical correlation analysis to evaluate the quality of an image registration result from different aspects. Finally, three kinds of images (optical, infrared, and SAR images) are used to test the vision registration assessment model. After evaluating three state-of-the-art image registration methods, experiments indicate that the proposed structural similarity-motivated model achieves almost the same evaluation results as human observers, with a consistency rate of 98.3%, which shows that the vision registration assessment model is efficient and robust for evaluating multisensor image registration algorithms. Moreover, unlike human observers, the vision registration assessment model is independent of emotional factors and the outside environment.

Keywords

Introduction

A team of connected robots, each equipped with different kinds of sensors, can perform a better job than any individual robot is capable of. 1,2 To improve the abilities of multirobot systems, information fusion is a necessary task to suppress the noise in a multiagent environment. 3 Finding a way to most effectively utilize the information captured from these multiple sensors, possibly of different modalities, is of considerable interest. Image fusion provides one versatile solution, where multiple aligned images acquired by different sensors are merged into a composite image. A properly registered composite image is more informative than any of the individual input images and can thus better interpret the scene. 4,5 Consequently, accurate multisensor registration is a key procedure that transforms information provided by different sensors with multiple spatial and spectral resolutions into the same coordinate model for image fusion. 6–8 To date, surveys on image registration methods can be found in the literature. 9–13 Additionally, Pomerleau and Colas reviewed point cloud registration algorithms for mobile robotics. 14 Moreover, multisensor image registration is also important for simultaneous localization and mapping (SLAM), which is the core of robot self-localization. 15–18

To achieve multisensor image registration for different types of images, including visible light images, infrared images, and synthetic aperture radar (SAR) images, multisource sensor image registration quality evaluation methods must be able to handle image diversity. 19 On the other hand, due to the diversity and complexity of image scenes, the evaluation method must have a wide range of applicability and handle a variety of complex situations. This is one of the open problems in the field of image registration quality evaluation, namely universal applicability, 20 which poses a very high requirement for the performance of image registration quality evaluation methods.

In the study of image registration, scholars mainly focus on the registration algorithm itself. The evaluation of image registration results has not attracted much attention. Therefore, it is necessary to study assessment models for image registration algorithms. A study of existing image registration quality evaluation algorithms shows that the commonly used evaluation methods apply only to one or a few specific image registration scenarios. 21–23

At present, researchers have done a lot of work on image registration quality evaluation and have proposed image registration evaluation criteria for different fields. Bouchiha and Besbes used the recall, which was based on the number of interest points properly matched and the number of actually existing matches, to compare the remote sensing image registration performance of four different features (scale-invariant feature transform [SIFT], gradient location-orientation histogram [GLOH], speeded up robust features [SURF], and open-speeded up robust features [O-SURF]). 24 Thor and his colleagues proposed a quantitative measure that included a contour similarity measure, Dice’s similarity coefficient (DSC), for evaluating the quality of deformable medical image registration. 25 Bharatha and his coauthors used the feature matching rate and DSC to evaluate three-dimensional finite element-based deformable magnetic resonance image registration. 26 Liu and his colleagues used kernel sparse coding for object recognition. 27 However, those evaluation methods focused on specific application areas and were less robust under the complex conditions of multisensor image registration algorithms.
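The two criteria cited above have simple closed forms, which the following minimal sketch illustrates. It assumes binary masks stored as flat 0/1 sequences and match counts supplied by the caller; the function names `dice_coefficient` and `matching_recall` are hypothetical and do not come from the cited works.

```python
def dice_coefficient(mask_a, mask_b):
    """Dice similarity coefficient 2|A ∩ B| / (|A| + |B|) of two binary masks."""
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must have the same number of pixels")
    intersection = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    total = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    # Two empty masks are treated as identical (a convention assumed here).
    return 1.0 if total == 0 else 2.0 * intersection / total


def matching_recall(correct_matches, existing_matches):
    """Recall: properly matched interest points over actually existing matches."""
    if existing_matches <= 0:
        raise ValueError("existing_matches must be positive")
    return correct_matches / existing_matches


# Hypothetical example: two 4-pixel masks that share one foreground pixel.
print(dice_coefficient([1, 1, 0, 0], [1, 0, 1, 0]))
print(matching_recall(45, 60))
```

Both measures lie in [0, 1], with 1 indicating perfect overlap or a complete set of correct matches.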

Inspired by the human vision system (HVS) and the existing image registration quality evaluation criteria, 28,29 we built a quality assessment model of image registration with wide applicability and human visual characteristics. Experimental results show that the proposed evaluation model is robust to local distortion. Additionally, our model can be used to evaluate registration algorithms in a variety of application scenarios.

This article is organized as follows. In section “Related work,” we present several related works on subjective and objective evaluation methods. In section “Image registration assessment model based on SSIM,” we describe the proposed algorithm and its improvement by fusing eight indicators. In section “Experimental results and analysis,” we show numerical simulations that compare the evaluation results of our proposed model with those of the HVS. It is noted that three state-of-the-art image registration algorithms are introduced to register the multisensor images. Finally, conclusions and suggestions for further work are summarized in section “Conclusion and future work.”

Related work

According to our previous research, image registration quality evaluation is generally divided into two types: (1) subjective evaluation methods, which depend on human observers who view the images and rate the registered image quality according to the options provided; and (2) objective evaluation methods, which depend on a mathematical model that computes the mean value, variance, or gradient of an image.

Subjective assessment

The subjective image registration assessment methods based on HVS are fast and simple. 30 However, those methods are one-sided and poorly reproducible, because the quality of the image registration result is mainly determined by the observer. 31 Moreover, when the observer is affected by psychological changes and the observation environment, the evaluation results vary and their accuracy is reduced. 32 Therefore, objective evaluation methods have been developed, and a large number of objective evaluation models have been proposed.

Objective assessment

The objective assessment algorithms of image registration can be divided into two categories: (1) direct assessment method and (2) indirect assessment method. Christensen and Crum proposed a method based on inverse consistency error (ICE) for assessing the quality of image registration. 33,34 However, ICE will fail in evaluating the image registration methods when the images’ background is flat. In image registration, it is generally assumed that the cross-correlation mapping between the reference image and the image to be registered is one-to-one. In other words, any point in the sensed image

However, the objective image registration quality assessment algorithms that are currently used cannot meet the above two requirements, and their results are difficult to match with assessments made by human eyes. Inspired by the image quality evaluation criterion based on structural similarity (SSIM), we propose a new robust assessment model that combines the advantages of subjective and objective evaluation methods for multisensor image registration algorithms.

Image registration assessment model based on SSIM

Multisensor image registration is applied to different types of images, including optical images, infrared images, and SAR images. Therefore, an objective assessment method for multisensor image registration must be able to handle image diversity. On the other hand, due to the diversity and complexity of image scenes, the image registration assessment method must have a wide range of applicability and handle a variety of complex situations.

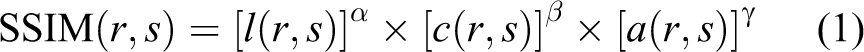

It is noted that our proposed assessment model is inspired by the structural similarity model, which approximates the processing of the human eye. With the development of HVS research, Wang and his coauthors proposed an image quality evaluation criterion based on structural similarity. 43 They argue that image structure information is independent of the brightness and contrast characteristics, so the evaluation of image quality can be approximated as the evaluation of image structure similarity, brightness, and contrast. 39 In other words, this method directly evaluates image quality by calculating the similarity of image structures, which can overcome the complexity of image scenes and the difficulty of multichannel decorrelation. Moreover, according to our previous research and analysis, we introduced eight evaluation factors as parameters of our proposed model, which assess the performance of image registration methods from different aspects. The vision registration assessment model (V-RAM) can then give readers a reasonable and robust assessment result.

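The SSIM model presented by Wang et al. describes the relationship among the luminance, contrast, and structure (correlation) comparisons of two image signals $x$ and $y$. In its standard form,

$$\mathrm{SSIM}(x, y) = [l(x, y)]^{\alpha}\,[c(x, y)]^{\beta}\,[s(x, y)]^{\gamma}$$

where $\alpha$, $\beta$, and $\gamma$ are positive parameters that adjust the relative importance of the three components, and

$$l(x, y) = \frac{2\mu_x\mu_y + C_1}{\mu_x^2 + \mu_y^2 + C_1}, \quad c(x, y) = \frac{2\sigma_x\sigma_y + C_2}{\sigma_x^2 + \sigma_y^2 + C_2}, \quad s(x, y) = \frac{\sigma_{xy} + C_3}{\sigma_x\sigma_y + C_3}$$

where $\mu_x$ and $\mu_y$ are the means, $\sigma_x$ and $\sigma_y$ the standard deviations, and $\sigma_{xy}$ the covariance of $x$ and $y$, and $C_1$, $C_2$, and $C_3$ are small constants that stabilize the divisions.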
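As an illustration of the SSIM criterion of Wang et al., the following minimal sketch computes a single-window (global) SSIM between two gray-level sequences. It assumes the commonly used simplification with unit exponents and $C_3 = C_2/2$, the conventional constants $K_1 = 0.01$ and $K_2 = 0.03$, and 8-bit data; the function name `global_ssim` is hypothetical, and practical implementations usually average SSIM over local windows instead of using one global window.

```python
def global_ssim(x, y, dynamic_range=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM between two equally sized gray-level sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have the same length (at least 2)")
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n

    def covariance(a, mu_a, b, mu_b):
        # Unbiased (n - 1) estimate; the variance is the covariance of a
        # signal with itself.
        return sum((p - mu_a) * (q - mu_b) for p, q in zip(a, b)) / (n - 1)

    var_x = covariance(x, mu_x, x, mu_x)
    var_y = covariance(y, mu_y, y, mu_y)
    cov_xy = covariance(x, mu_x, y, mu_y)

    c1 = (k1 * dynamic_range) ** 2
    c2 = (k2 * dynamic_range) ** 2

    # With unit exponents and C3 = C2 / 2, the contrast and structure terms
    # merge into a single factor.
    luminance = (2 * mu_x * mu_y + c1) / (mu_x * mu_x + mu_y * mu_y + c1)
    contrast_structure = (2 * cov_xy + c2) / (var_x + var_y + c2)
    return luminance * contrast_structure


# Hypothetical gray levels: identical signals score 1, a brightness shift
# lowers only the luminance term, and an inverted signal scores below 0.
reference = [10, 50, 90, 130, 170, 210]
print(global_ssim(reference, reference))
print(global_ssim(reference, [v + 30 for v in reference]))
print(global_ssim(reference, reference[::-1]))
```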

Superimposed image

Generally, the reference image

where rext(

where

SSIM-motivated registration assessment model

In our model, some previous indicators are introduced. In the first place, the function of this model is shown as follows

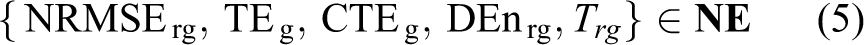

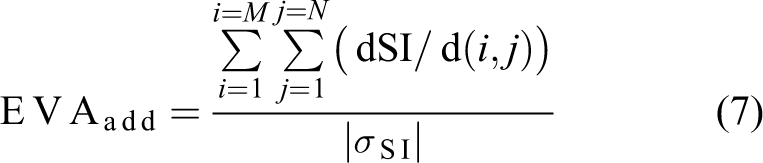

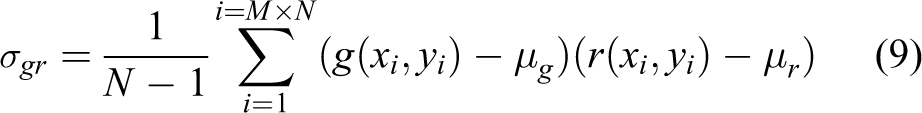

According to equation (3), we can see that there are eight indicators in V-RAM. According to the relationship between V-RAM and the registration algorithm, those indicators can be divided into two categories: (1) the indicator class

where ROgr is the relative overlap region between

where

where

where

where

where

where ImgN is the number of the test images,

where

It is noticed that we assume that

where

Weight acquisition

In order to determine the values of

where

The weights of the indicators are obtained by calculating equation (15)

where

Flowchart of our proposed algorithm

The outline of our algorithm is as follows:

Before evaluating the image registration result, the input image of V-RAM needs to be preprocessed. One of the key steps in this preprocessing stage is to obtain the image overlay, which evaluates the region of interest (ROI) of the overlay image. It is known that the difference of the gray level between the heterologous images is very large. If we calculate the evaluation index of

When evaluating the performance of color image registration, it is necessary to decompose the image into different channels for assessment.

After the above two-step processing, the evaluation indicators of each channel are calculated.

According to the application scenario of the image registration algorithms, we obtain the image registration evaluation results based on the V-RAM model.
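The channel-decomposition step can be sketched as follows. This is only an illustration under stated assumptions: images are nested lists of (R, G, B) tuples, and the per-channel indicator is a range-normalized root mean square error used as a stand-in, which is not necessarily the NRMSErg definition of V-RAM. The names `split_channels`, `nrmse`, and `per_channel_scores` are hypothetical.

```python
import math


def split_channels(image):
    """Split an H x W image of (R, G, B) tuples into three H x W gray planes."""
    return [[[pixel[c] for pixel in row] for row in image] for c in range(3)]


def nrmse(plane_a, plane_b, dynamic_range=255.0):
    """Root mean square error divided by the dynamic range (an assumed normalization)."""
    squared = [(a - b) ** 2
               for row_a, row_b in zip(plane_a, plane_b)
               for a, b in zip(row_a, row_b)]
    return math.sqrt(sum(squared) / len(squared)) / dynamic_range


def per_channel_scores(reference, registered, indicator=nrmse):
    """Evaluate one indicator separately on the R, G, and B planes."""
    return [indicator(ref_plane, reg_plane)
            for ref_plane, reg_plane in zip(split_channels(reference),
                                            split_channels(registered))]


# Hypothetical 2 x 2 color images that differ in one red value only.
reference = [[(0, 0, 0), (255, 255, 255)], [(10, 20, 30), (40, 50, 60)]]
registered = [[(51, 0, 0), (255, 255, 255)], [(10, 20, 30), (40, 50, 60)]]
print(per_channel_scores(reference, registered))
```

Any other indicator with the same two-plane signature can be passed through the `indicator` argument, so each channel is assessed in the same way before the results are combined.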

Experimental results and analysis

Materials

In our experiment, a database for image registration was created by our lab. The database has 1200 images, including optical images, infrared images (including near-infrared images with wavelengths of 780 to 3000 nm), and SAR images. Moreover, in order to test the V-RAM model for multisensor registration algorithms, we divided the database into 20 groups based on scenarios, which are described by {

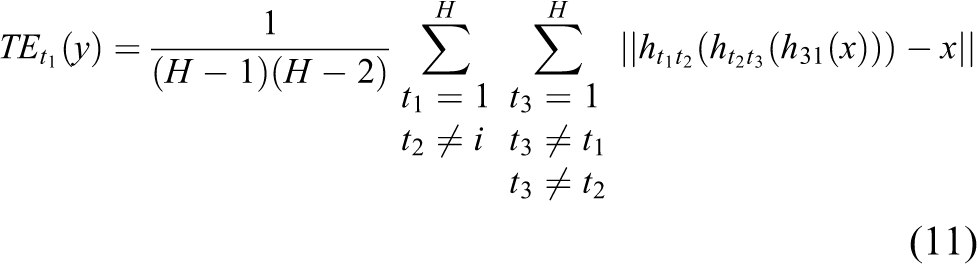

Example images of

Three registration algorithms are selected for testing our assessment model. The three algorithms are Scale invariable-Features from accelerated segment test (SI-FAST), 52 Fast Fourier-Mellin Transform (FAST-FMT), 40 and Difference of Gaussian-Local Binary Patterns (DoG-LBP). 31

Objective evaluation results and analysis of image registration quality

The images in {

After obtaining the registration results, we tested the superimposed images obtained by combining the reference image and the registered image. To test the subjective assessment method, 30 students in our lab were chosen to evaluate the multisensor image registration results by eye. The students spent 1 week completing the performance assessment of the image registration algorithms. It is noted that the subjective results also serve as the ground truth against which the objective results calculated by the V-RAM model are compared. The subjective evaluation results are shown in Figure 2.

The superimposed image and their zoom-in regions. (a) SI-FAST, (b) FAST-FMT, (c) DoG-LBP.

To obtain the subjective results, each student was required to observe the superimposed images and give a score for each one. Since 1200 images are registered by the three registration algorithms, the number of superimposed images is 3600. Moreover, 30 students were asked to watch and score the superimposed images, which yielded 10,800 subjective evaluation results. However, due to limited space, Figure 3 does not show every score for each superimposed image; it shows only the mean score that each student gave over all the superimposed images. The scores range from 0 to 10.

The subjective results based on human eyes.

According to Figure 3, the subjective evaluation results based on human eyes differ from one student to another when the students watched the superimposed images. These results imply that the subjective assessment method is easily affected by emotional factors. Moreover, the subjective assessment method is necessary for evaluating the multisensor image registration algorithms.

Objective evaluation for multisensor image registration algorithms

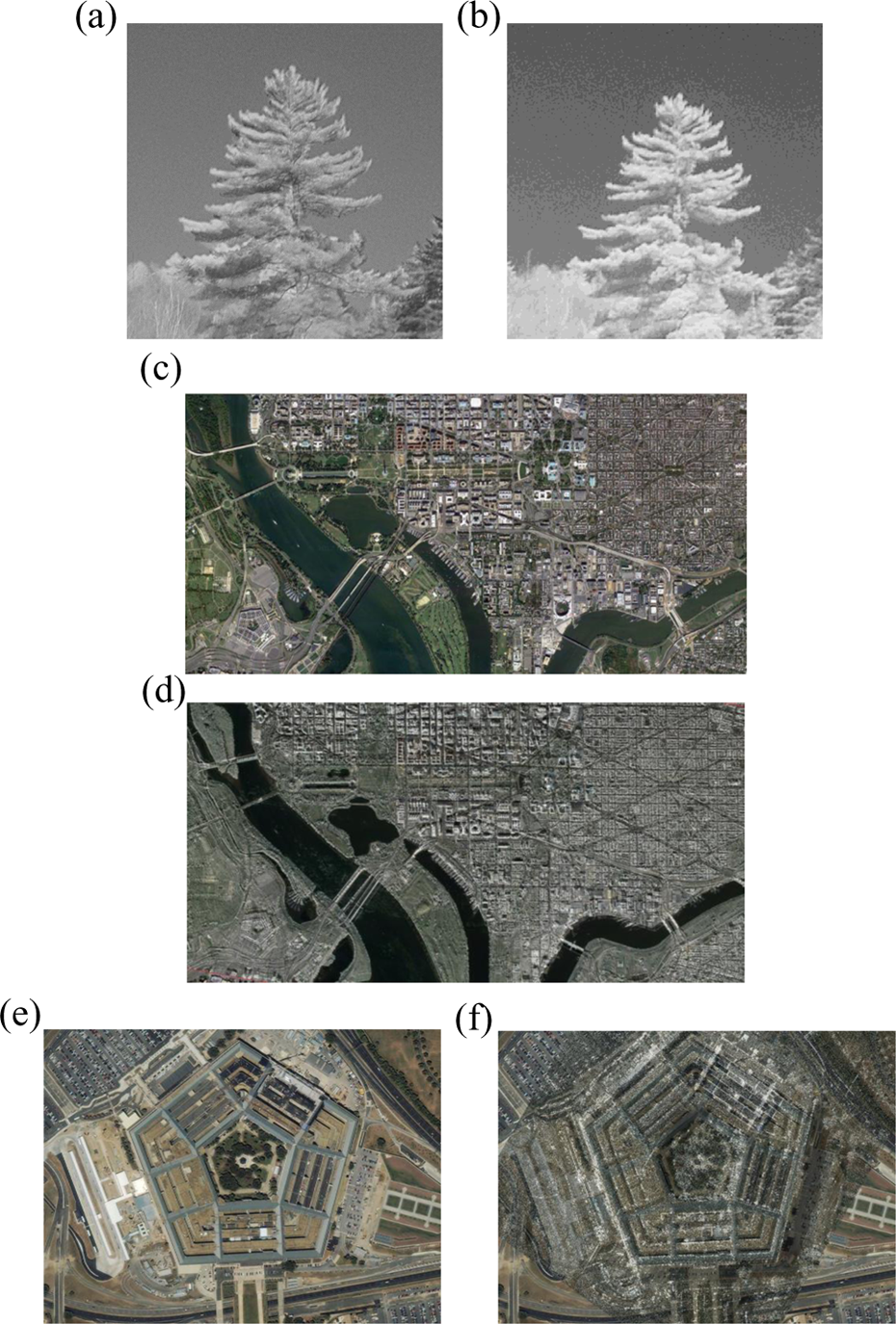

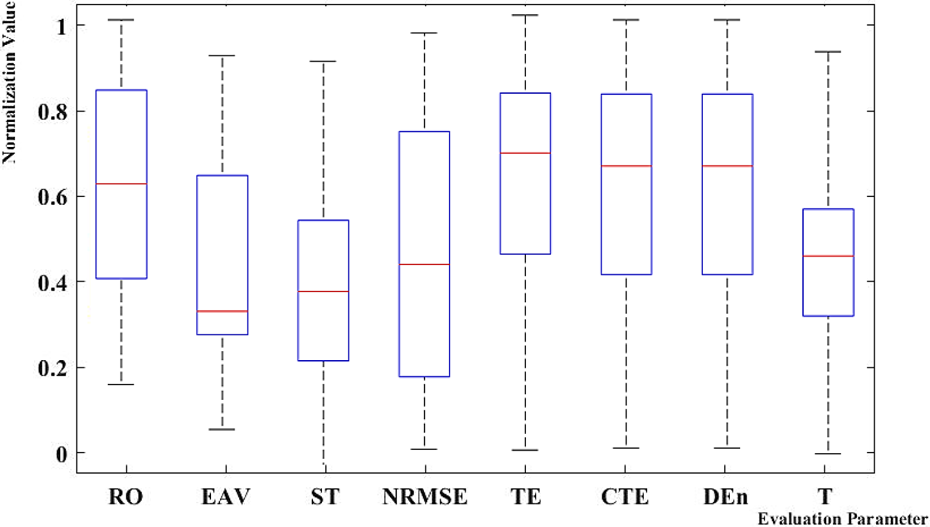

In this section, we first analyze the effect of the three registration algorithms introduced in this article on the eight indicators of the V-RAM model. The objective evaluation results of FAST-FMT, SI-FAST, and DoG-LBP are shown in Figures 4 to 6, respectively. It is noted that the results of V-RAM are described by box plots, which provide a visualization of summary statistics for sample data.
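For readers less familiar with box plots, the following minimal sketch computes the summary statistics that such a plot displays for one indicator. It assumes the common Tukey convention that values farther than 1.5 times the interquartile range from the box are drawn as outliers; the whisker rule actually used for Figures 4 to 6 is not stated here, and the function name `box_plot_summary` and the sample values are hypothetical.

```python
import statistics


def box_plot_summary(values, whisker=1.5):
    """Return mean, quartiles, IQR, and outliers of one indicator's samples."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence = q1 - whisker * iqr
    high_fence = q3 + whisker * iqr
    return {
        "mean": statistics.fmean(values),
        "median": median,
        "q1": q1,
        "q3": q3,
        "iqr": iqr,  # corresponds to the length of the box
        "outliers": [v for v in values if v < low_fence or v > high_fence],
    }


# Hypothetical indicator values with one abnormal sample.
summary = box_plot_summary([1, 2, 3, 4, 5, 6, 7, 8, 9, 50])
print(summary["median"], summary["iqr"], summary["outliers"])
```

A longer box (larger interquartile range) means the indicator varies more across scenes, and each listed outlier corresponds to an isolated marker drawn outside the whiskers.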

The evaluation result for FAST-FMT based on the V-RAM model. V-RAM: vision registration assessment model.

The evaluation result for SI-FAST based on the V-RAM model. V-RAM: vision registration assessment model.

The evaluation result for Difference of Gaussian-Local Binary Patterns (DoG-LBP) based on the V-RAM model. V-RAM: vision registration assessment model.

From Figure 4, we can find that:

The means of ROgr, EVAadd, STgr, NRMSErg, TEg, ICEg, DEnrg, and

The length of the box of

The red marker “+” in the corresponding box diagram of NRMSErg indicates that there is an abnormality in the data of the registration result, which is due to a mismatch of the image features. However, there is no data abnormality in the boxes of other indicators. Therefore, we consider that the NRMSErg of the V-RAM model is sensitive to the accuracy of registration results.

According to Figure 5, we can find that:

The means of ROgr, EVAadd, STgr, NRMSErg, TEg, ICEg, DEnrg, and

The box length of TEg is longer than the box length of other indicators, which indicates that this indicator is affected by the scene where the image is taken.

As can be seen from Figure 6:

The means of ROgr, EVAadd, STgr, NRMSErg, TEg, ICEg, DEnrg, and

The box length of NRMSErg is longer than the box length of other indicators, which indicates that NRMSErg is affected by the scene where the image is taken.

Comparison result of the objective observation and subjective calculation

To evaluate the performance of our proposed V-RAM model, we compare the subjective and objective assessment results of the same superimposed image. As noted above, there are 10,800 evaluation results for all the superimposed images. However, due to space limitations, only 30 of the evaluation results are shown in Figure 7.

V-RAM comparison results. (It is noted that the vertical axis is not the score of the image registration assessment.) V-RAM: vision registration assessment model.

In this article, we focus on the performance of our proposed assessment model, not the registration algorithms. In other words, we just compare the objective results of the three image registration algorithms based on the V-RAM model with the subjective results obtained by human eyes. Therefore, we use charts to show how the assessment results change between the subjective and the objective evaluation methods. According to Figure 7, we can come to two conclusions: (1) For the same image registration algorithm, the objective result approximates the subjective result for the same superimposed image. Moreover, the trend of the objective results is the same as that of the subjective results. (2) For the same scene, the objective result agrees with the subjective result for the superimposed images acquired using different registration methods. Taking the ninth results obtained by the three different registration methods as an example, the V-RAM model-based registration assessment result acquired by DoG-LBP is the best one, which is the same as the result based on human eyes.

Figure 8 shows that our proposed model obtains an assessment result that is closer to the result of human eyes than that of any single parameter. It also indicates that a single assessment parameter cannot provide an exhaustive evaluation of a multisensor image registration method, whereas our proposed model can achieve an objective and exhaustive assessment.

V-RAM comparison results among different existing assessment methods. V-RAM: vision registration assessment model.

Based on the above results, we found that our proposed V-RAM model achieved the same registration assessment performance as the human vision system. Although the assessment scores differ from the scores given by humans, the V-RAM model reaches the same conclusion as the human vision system when we compare different registration algorithms.
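One way to quantify this kind of agreement is to count how often the objective and subjective scores select the same best algorithm for a scene. The sketch below illustrates that idea only; it is an assumed reading and not necessarily how the consistency rate reported in this article was computed. It further assumes that higher scores are better on both scales, and the function names and all score values are hypothetical.

```python
def best_algorithm(scores):
    """Return the name of the algorithm with the highest score."""
    return max(scores, key=scores.get)


def ranking_agreement(objective, subjective):
    """Fraction of scenes where both methods pick the same best algorithm."""
    if len(objective) != len(subjective) or not objective:
        raise ValueError("need the same non-zero number of scenes")
    agree = sum(1 for obj, sub in zip(objective, subjective)
                if best_algorithm(obj) == best_algorithm(sub))
    return agree / len(objective)


# Hypothetical scores for four scenes (objective on 0-1, subjective on 0-10).
objective = [
    {"SI-FAST": 0.71, "FAST-FMT": 0.64, "DoG-LBP": 0.83},
    {"SI-FAST": 0.58, "FAST-FMT": 0.77, "DoG-LBP": 0.69},
    {"SI-FAST": 0.90, "FAST-FMT": 0.61, "DoG-LBP": 0.72},
    {"SI-FAST": 0.66, "FAST-FMT": 0.70, "DoG-LBP": 0.68},
]
subjective = [
    {"SI-FAST": 6.5, "FAST-FMT": 6.0, "DoG-LBP": 8.1},
    {"SI-FAST": 5.2, "FAST-FMT": 7.4, "DoG-LBP": 6.9},
    {"SI-FAST": 8.8, "FAST-FMT": 5.9, "DoG-LBP": 7.0},
    {"SI-FAST": 7.1, "FAST-FMT": 6.8, "DoG-LBP": 6.5},
]
print(ranking_agreement(objective, subjective))
```

Because only the ordering within each scene is compared, the two score scales do not need to match, which reflects the observation that the scores differ while the conclusions agree.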

Conclusion and future work

To improve the performance of multirobot information fusion systems, we proposed V-RAM, an SSIM-based assessment model for multisensor image registration algorithms that is motivated by the human vision system. V-RAM makes two contributions: (a) to evaluate the quality of image registration, V-RAM evaluates the registration results from eight aspects, and (b) we introduced the superimposed image for building our test database, which was used to test both the subjective image registration assessment method and the V-RAM model. Experimental results imply that our proposed assessment model obtains evaluation results close to those of human eyes but, unlike human observers, is not affected by emotional factors, which indicates that our model is robust and efficient for assessing multisensor image registration algorithms. In future work, we will introduce deep learning methods to search for the ideal weights of the V-RAM model.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The project sponsored by the National Natural Science Foundation of China (no. 61401040) and the National Key Research and Development Program (no. 2016YFB0502002).