Abstract

Human–robot collaboration is a key factor for the development of factories of the future, a space in which humans and robots can work and carry out tasks together. Safety is one of the most critical aspects in this collaborative human–robot paradigm. This article describes the experiments carried out and the results achieved by the authors in the context of the FourByThree project. The experiments aimed to measure workers' trust in fenceless human–robot collaboration in industrial robotic applications and to gauge the acceptance of different interaction mechanisms between robots and human beings.

Keywords

Introduction

Human–robot collaboration can contribute to the development of factories of the future, a space in which humans and robots can work and carry out tasks together. It allows human operators to focus on operations with high added value or demanding high levels of dexterity, thus freeing them from repetitive or potentially risky tasks. However, some tasks can be too complex to be performed by robots or too expensive to be automated, as they may require engineering special tools and systems. Therefore, a collaborative environment in which humans and robots can work side by side and share tasks in an open and fenceless environment is a relevant goal to reach.

Safety and interaction are key success factors for this vision of collaboration between humans and robots. On the one hand, the safety of human beings around the robot must be guaranteed during the execution of tasks. As physical safeguards may be impractical for real cooperation, either power (or force) limiting or speed and separation monitoring can be used according to the International Organization for Standardization (ISO). 1 In the second approach, the robot must be constantly aware of what is happening around it and has to monitor the workers’ actions in order to change its behaviour (speed and/or trajectory) according to the separation distance. On the other hand, effective bidirectional human–robot communication helps to make the collaboration more effective and safer.

This article presents the results of two experiments carried out to measure how workers trust the safety measures developed and implemented in the European Union (EU) funded

The article is organized as follows. It starts with an overview of the project, followed by the proposed safety strategy and interaction mechanisms. Then, the objectives of the experiment, related work and the experiment design are described. Finally, the results achieved and the conclusions are summarized.

Project context

Project overview

Since December 2014, the FourByThree 2 project (‘highly customizable robotic solutions for effective and safe human–robot collaboration in manufacturing applications’) has been developing a new generation of modular industrial robotic solutions that are suitable for efficient task execution in safe collaboration with humans and are easy for the factory worker to use and program. The four main characteristics of FourByThree are as follows:

Safety strategy

The safety strategy in FourByThree is based on five pillars:

– The series elastic actuators, which allow measuring force and torque values and provide redundant torque and position estimation. The torque is computed from two different sources: the motor currents, and the spring deflection together with its identified model. If the two torque estimates do not agree, the motor is disabled. The position sensors on the motor and gear sides are compared with each other to detect sensor failures.
– The robot design, which eliminates sharp edges, reduces trapping risks, and so on.
– The external monitoring system, which consists of projection and vision systems and monitors the space around the robot to detect any possible violation by the worker.
– Adjustable stiffness control, which adjusts the stiffness level based on different factors, such as the relative distance between robot and worker or the task at hand.
– The control architecture, as shown in Figure 1.

Safety strategy.
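The redundant torque check described for the first pillar can be illustrated with a minimal sketch. It assumes an idealised, lossless drive model (torque proportional to motor current) and a linear spring; the function names, the parameters (`torque_constant`, `gear_ratio`, `stiffness`, `tolerance_nm`) and all numeric values are hypothetical and are not taken from the FourByThree implementation.

```python
from dataclasses import dataclass


@dataclass
class JointReading:
    motor_current_a: float        # measured motor current [A]
    spring_deflection_rad: float  # measured spring deflection [rad]


def torque_from_current(current_a: float, torque_constant: float, gear_ratio: float) -> float:
    """Joint torque estimated from the motor current (assumed lossless model)."""
    return current_a * torque_constant * gear_ratio


def torque_from_spring(deflection_rad: float, stiffness: float) -> float:
    """Joint torque estimated from the spring deflection (assumed linear spring)."""
    return deflection_rad * stiffness


def motor_enabled(reading: JointReading, torque_constant: float, gear_ratio: float,
                  stiffness: float, tolerance_nm: float) -> bool:
    """Return False (disable the motor) when the two torque estimates disagree."""
    tau_current = torque_from_current(reading.motor_current_a, torque_constant, gear_ratio)
    tau_spring = torque_from_spring(reading.spring_deflection_rad, stiffness)
    return abs(tau_current - tau_spring) <= tolerance_nm
```

In a real controller the two estimates would also differ because of friction, sensor noise and model error, so the tolerance would have to be identified experimentally for each joint.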

The proper use of these features makes it possible to satisfy the operating conditions established in ISO 10218 parts 1 and 2 and ISO/TS 15066, once the mandatory risk assessment has been performed in each scenario.

In brief, the FourByThree safety strategy allows implementing speed and separation monitoring and force limiting collaboration modalities.

In fact, it is possible to define a protective area around the robot for coexistence and interference situations (i.e., when the human moves through the robot workspace but does not interact directly with the robot, or when the human reaches into the robot working area or obstructs the robot workspace in a non-planned task). The projection 3 and vision systems are in charge of monitoring the robot workspace and triggering the safety signal when there is a violation in the area.

For co-operation activities (i.e., when the human has to interact with the robot in a productive way), the system’s capability to monitor and limit force and torque is used to guarantee safety.

Interaction mechanisms

A requirement for natural human–robot collaboration is to endow the robot with the capability to capture, process and understand human requests accurately and robustly. Voice and gestures are key channels that humans use to communicate naturally with each other. By analogy, they can be considered relevant for achieving such natural communication between humans and robots. In this multimodal scenario, the information coming from the different channels can be complementary or redundant: A worker says ‘ The worker says ‘

The need for complementary channels is clear, but redundancy can also be beneficial, 4 for example, in industrial scenarios in which noise and variable lighting conditions may reduce the robustness of each channel when considered independently. The FourByThree project has designed and developed a semantic approach that supports multimodal (voice and gesture based) interaction between humans and robots in real industrial settings. For such semantic interpretation, four main modules have been developed: a

These main modules are described in detail in the following subsections.

Knowledge manager

The knowledge manager uses an ontology to model the environment and the robot capabilities, as well as the relationships between the elements in the model, which can be understood as implicit rules that the

Ontologies are reusable and flexible in adapting to dynamic changes, thus avoiding the need to recompile the application and its logic whenever a change is needed. Through ontologies, we model the industrial scenarios in which robots collaborate with humans. The model includes robot behaviours, the actions they can accomplish and the objects they can manipulate or handle. It also considers features and descriptors of these objects.

Voice interpreter

Given as input a human request in which a person indicates the desired action via voice, the purpose of this module is to understand exactly what the person wants the robot to do and, if the information is complete, to generate the corresponding command for the robot. For instance, if a worker says ‘

Gesture interpretation

The gesture interpreter module recognizes two different types of gestures: pointing gestures and command gestures used to request a specific and predefined action (e.g.,

For the pointing gesture, a point cloud processing approach has been used. An RGB-D camera is used to acquire information about the working environment and the worker (in particular, the arms and hands). The camera is placed in the region above the human operator, pointing towards the working area of the robot. The camera is calibrated with respect to the robot base, and the point cloud is referred to the robot frame. Two cuboid regions of interest (ROIs) are then defined in the point cloud: one for the detection and processing of the operator's pointing gesture (basically his/her forearm) and a second ROI in which the intersection area has to be identified.

In the ROI where the pointing gesture has to be detected, the forearm of the operator is modelled as a cylinder and its axis taken as the pointing line.
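The geometry of this step can be sketched as follows. The sketch assumes that the forearm points have already been cropped from the first ROI and expressed in the robot frame, and that the target surface in the second ROI is a known plane. The axis is estimated here with a principal-direction fit (power iteration on the scatter matrix), which is only one possible stand-in for a cylinder fit; all function names are hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def centroid(points: Sequence[Vec3]) -> Vec3:
    n = len(points)
    return (sum(p[0] for p in points) / n,
            sum(p[1] for p in points) / n,
            sum(p[2] for p in points) / n)


def principal_axis(points: Sequence[Vec3], iterations: int = 100) -> Vec3:
    """Unit vector along the dominant direction of the points (sign is arbitrary)."""
    c = centroid(points)
    d = [(p[0] - c[0], p[1] - c[1], p[2] - c[2]) for p in points]
    scatter = [[sum(q[i] * q[j] for q in d) for j in range(3)] for i in range(3)]
    v = [1.0, 0.7, 0.3]  # arbitrary start vector; must not be orthogonal to the axis
    for _ in range(iterations):
        w = [sum(scatter[i][j] * v[j] for j in range(3)) for i in range(3)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            raise ValueError("points do not define a direction")
        v = [x / norm for x in w]
    return (v[0], v[1], v[2])


def intersect_line_plane(origin: Vec3, direction: Vec3, plane_point: Vec3,
                         plane_normal: Vec3, eps: float = 1e-9) -> Optional[Vec3]:
    """Intersection of the pointing line with a plane, or None if they are parallel."""
    denom = sum(direction[i] * plane_normal[i] for i in range(3))
    if abs(denom) < eps:
        return None
    t = sum((plane_point[i] - origin[i]) * plane_normal[i] for i in range(3)) / denom
    return (origin[0] + t * direction[0],
            origin[1] + t * direction[1],
            origin[2] + t * direction[2])
```

Because the gesture is treated as an infinite line rather than a ray, the sign ambiguity of the fitted axis does not change the intersection point. A practical system would additionally have to reject intersections that fall outside the second ROI.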

The pointing gesture is modelled as a straight line, while the intersection area can be assumed to be a planar surface (tables, working surfaces, etc.) in most of the cases. The intersection point (

Fusion engine

The fusion engine is in charge of merging the information provided by the voice interpreter, the gesture interpreter and the part identification module to identify the worker intention and send the corresponding command to the robot.

The engine considers different situations regarding the complementary and/or contradictory levels of both sources. It was decided that the text interpreter output will prevail over the gesture information. When no contradiction exists between the two sources, the gesture information is used either to confirm the text interpretation (redundant information) or to complete it (complementary information).
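The precedence rule above can be illustrated with a minimal sketch. It assumes that each interpreter produces a partial command made of an action and a target; the `Interpretation` type, its fields and the example values are hypothetical simplifications, not the project's actual data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interpretation:
    action: Optional[str] = None  # e.g. "pick" (hypothetical label)
    target: Optional[str] = None  # e.g. "part_a" (hypothetical label)


def fuse(text: Interpretation, gesture: Interpretation) -> Optional[Interpretation]:
    """Merge both sources; the text interpreter output prevails on contradiction."""
    # A field set by the text interpreter is always kept (confirmation or
    # contradiction); the gesture only fills fields that the text left empty.
    action = text.action if text.action is not None else gesture.action
    target = text.target if text.target is not None else gesture.target
    if action is None or target is None:
        return None  # request still incomplete, no command is sent
    return Interpretation(action=action, target=target)
```

For example, a spoken action without a target combined with a pointing gesture yields a complete command, whereas a spoken target that contradicts the pointed one is kept as spoken.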

Related work

Human–robot collaboration has been a significant research topic since the beginning of robotics. The constant introduction of robots in industrial environments, the creation of new compliant robots and the sensors available nowadays in the market (cheaper and more accurate) make human–robot interaction an even more active and exciting research subject. 5,6

The different approaches for safe human–robot interaction can be classified as either pre-collision or post-collision strategies.

Post-collision methods detect a collision as it occurs and attempt to minimize the resulting damage. Commercial robots that are purposely designed for collaborative applications fall mainly in this category. The implemented methods may vary. The more common ones are power and force limiting, use of series elastic actuators that minimize the force of impact and the use of protective skins that detect the collision or the proximity of the worker. These approaches have been used in COBOTs available in the market, such as in Nextage (KAWADA), LBR iiwa (KUKA), Roberta (ABB/GOMTEC), Yumi (ABB), Apas (BOSCH), UR3/5/10 (Universal Robots), CR-35iA (FANUC), Baxter (Rethink Robotics) or Franka.

In this field, the work described in Haddadin, 7 which builds on previous works such as Haddadin et al., 8,9 is particularly relevant. These works focus mainly on identifying, from real human impact experiments, the limit values of force and power that a robot may exert upon a person without causing severe injuries. The authors were able to drive the robot 8 times faster and cause 13 times higher dynamic contact forces than were suggested by the first version of the standard for the static case. As the impact experiments yielded such low injury risks, the question arose whether this standard was too conservative.

Pre-collision strategies, which attempt to prevent collisions by detecting them in advance, are also very relevant. They not only avoid the collision itself but can also be applied to robots already installed: there are more than 1.5 million industrial robots in use worldwide, and there is great interest in designing solutions that can turn those robots into human-safe platforms.

There are several approaches endowing robotic cells with sensors to determine that a human is present in the vicinity of the robot. Some add-on solutions have been developed for robots that have not been designed as inherently human-safe robots, such as ABB’s SafeMove. 10

In Przemyslaw et al., 11 a PhaseSpace motion capture system, which has the drawback of requiring the worker to wear active light-emitting diode (LED) markers, was used to sense the position of the human worker within the workspace of an industrial robot with no built-in safety features and no compliant joints. In Lasota and Shah, 12 the same authors evaluate through human subject experimentation whether this motion-level adaptation leads to more efficient teamwork and a more satisfied human co-worker. The results indicated that people learn to take advantage of human-aware motion planning even when performing novel tasks with very limited training and with no indication that the robot's motion planning is adaptive.

In Vogel et al., 3 a projector emitting modulated light patterns into the shared human–robot workspace is used. The light reflected from the environment is detected by a camera, being able to detect any intrusion. The system is also used to provide visual information to the worker.

In Rybski et al., 13 the authors fuse data from multiple three-dimensional imaging sensors of different modalities (two time-of-flight cameras and two stereo cameras) into a volumetric evidence grid and segment the volume into regions corresponding to background, robots and people that have been previously modelled. The system allows slowing down the robot and even stopping the motion when people and robot come close to each other. Also relevant, Morato et al. 14 present an exteroceptive sensing framework based on multiple Kinects to achieve safe human–robot collaboration during assembly tasks.

Different approaches 15,16,11 proposed the use of particle filtering and statistical data association for tracking multiple targets, adding robustness to the tracking process.

Factors affecting trust in human–robot interaction (HRI) have been the subject of analysis in some works, in particular for high-risk situations such as military applications. Hancock et al. 17 evaluated and quantified the effects of human, robot and environmental factors on perceived trust in HRI. They concluded that factors related to the robot itself, specifically its performance, had the greatest current association with trust, and that environmental factors were moderately associated. There was little evidence for effects of human-related factors.

Bainbridge et al. 18 and Tsui et al. 19 explained how the type, size, proximity and behaviour of the robot affect trust. Park et al. 20 described that trust can be dynamically influenced by factors (or antecedents) within the robotic system itself, the surrounding operational environment and the nature and characteristics of the respective human team members. Sadrfaridpour et al. 21,22 proposed a model for the dynamic trust of a human in a robot based on the robot performance and the human performance. They simulated the human performance, the robot performance and the corresponding trust during a typical work day spent on a collaborative manufacturing task.

Experiment objectives and description

Objective

The objective of the experimentation has been to obtain valuable information about two key aspects in a human–robot collaborative environment:

Most papers dealing with perceived safety and interaction satisfaction use a similar approach: participants are asked to execute a collaborative task with a robot, and observation and questionnaires are used to measure different features. This is the case of the experiment described in Przemyslaw et al., 11 in which participants worked with a robot to perform a collaborative task, placing eight screws at designated locations; human satisfaction and perceived safety and comfort were evaluated through questionnaires. As recruiting neutral participants is difficult, they followed the common practice of using participants affiliated to the institution, in this case 20 MIT affiliates. In our experiments, by contrast, participants had no relationship with the experimenters: they were attendees of the two fairs who agreed to participate. A second differentiating aspect of our experiments was that the proposed tasks required participants to use both the interaction mechanisms and the safety features to complete them.

Experiment design

FourByThree has had the opportunity to be present in two important Trade Fairs in 2016:

In each of these events, we conducted user experiments with attendees who agreed to take part voluntarily. We chose to conduct studies at these events because, in this way, we could gain access to participants professionally involved in industrial processes from different sectors, with a variety of levels of experience with automation technologies. Such a mix of profiles would have been difficult to recruit otherwise.

The common objective of these experiments was to gain insight into human attitudes with respect to fenceless robotics and some selected interaction mechanisms. By means of the tests, observation and questionnaires, we obtained valuable feedback about the following aspects:

Safety:
– acceptance of vision-based human detection,
– force control in case of collision.
Interaction mechanisms:
– pointing gesture,
– manual guidance,
– tapping.
Overall attitudes with respect to COBOTs in the workplace.

The technologies and tasks used in both experiments were similar, although with complementary focus on the interaction techniques employed. In both cases, an introductory explanation was provided by the experimenter regarding the technologies employed and the sequence to be followed by the participant in the experiment.

TECHNISHOW: study 1

This experimental study took place during the TECHNISHOW fair in Utrecht, May 2016, in the booth run by STODT, one of the partners in FourByThree. The experimental set-up consisted of a Universal Robot, an RGB-D vision system to monitor the environment and capture data for the pointing gesture, a table and two trays, one of which held some parts. The participants in the experiment had to perform three tasks and answer a questionnaire afterwards.

Pointing gesture

The first task demonstrated collaborating with the robot using a pointing gesture. There were three different parts on tray1, as shown in Figure 2. The participant pointed at one of them with the finger (extended arm). Following the gesture, the robot identified the part that was being pointed at, grasped it and placed it on the corresponding position on the second tray. The set-up and pointing gesture are shown in Figure 3.

The three different objects in one of the two trays.

Experiment set-up at TECHNISHOW and participant pointing at a target object.

Safety monitoring

The second task demonstrated the safety feature of the robot interrupting its movement whenever the relative distance between a person and the robot was below a threshold. While the robot was moving a part, the participant was invited to reach out to the robot with their hands without touching it. The monitoring system detected this intrusion of the robot’s space and interrupted its movement, so as to avoid a possible collision.
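The stopping rule of this task can be sketched as a distance threshold check. The sketch assumes that the vision system delivers the robot and the person as sets of 3D points in a common frame; the function names and the threshold values used in the example are hypothetical, since the threshold applied at the fair is not reported here.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def min_separation(robot_points: Sequence[Point], human_points: Sequence[Point]) -> float:
    """Smallest Euclidean distance [m] between any robot point and any human point."""
    return min(
        (math.dist(r, h) for r in robot_points for h in human_points),
        default=math.inf,  # nobody detected: separation is unbounded
    )


def must_stop(robot_points: Sequence[Point], human_points: Sequence[Point],
              threshold_m: float) -> bool:
    """True when the relative distance is below the threshold and motion must be interrupted."""
    return min_separation(robot_points, human_points) < threshold_m
```

The brute-force pairwise search is adequate for a handful of points; a dense point cloud would call for a spatial index or a voxel grid.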

Manual guidance

The third task demonstrated programming the robot by moving it with the hands. The participant dragged the robot arm’s gripper to a position near one of the objects on one of the trays. The position was recorded and afterwards the robot executed the program going to the recorded point, taking the part and placing it on the second tray.

Collision

In addition to the tasks above, participants were invited to be ‘hit by the robot’: The monitoring system was disabled and the robot collided against the participant’s arm. The robot detected the collision (force exerted) and it stopped as a result.

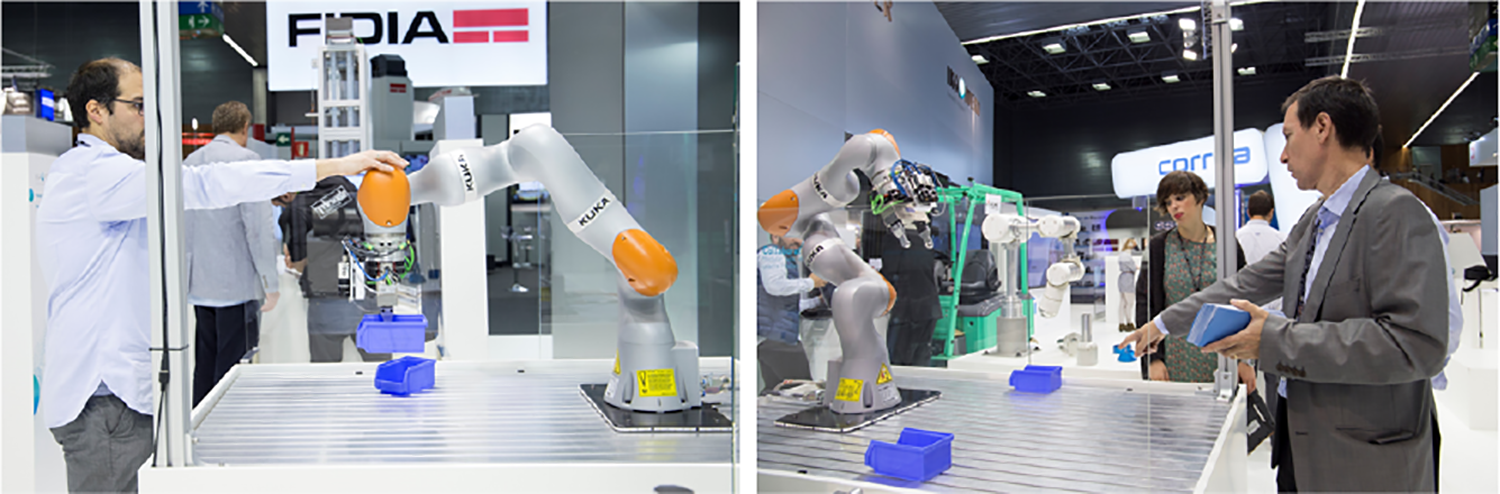

BIEMH: study 2

For the experimental study at the BIEMH fair (Bilbao, June 2016), a different set-up was used, consisting of a KUKA LBR iiwa robot placed on a table, two plastic storage bins and the RGB-D visual monitoring system. This second experiment consisted of the following tasks in the sequence described below.

Safety monitoring

The sequence of the experiment was as follows: While the robot moved one of the bins continuously from side to side (see Figure 4), the participant was invited to bring their hands close to the robot. The monitoring system detected that the hand was close to the robot and interrupted the movement. When the visitor moved his/her hand away, the robot resumed its movement.

Robot moving a plastic drawer.

Tapping and pointing gestures

Once the robot was still after detecting the participant’s hand nearby, he/she could apply a light downward force with the hand on the robot arm (tapping gesture). The robot reacted to this by leaving the bin on the table and withdrawing to a resting position. There, the robot waited for the participant to signal which of the two bins on the workbench (which could be placed in an arbitrary arrangement) it had to grasp. The participant then selected one of the bins on the table by pointing at it with the finger (extended arm). The robot used the camera placed on its flange to analyse the actual position of the bin that had been pointed at, computed a grasping strategy and took the bin from the surface of the workbench, lifting it up and resuming the continuous sideways movement while carrying it. The next cycle of interaction was then ready to begin with a new participant. Both interaction mechanisms are shown in Figure 5.

(left) Visitor exerting a force on the robot to interact with it. (right) A participant pointing at a container bin that he wanted the robot to take.

In order to understand the results presented in the next section, it is important to point out a difference introduced in this set-up with respect to the previous one in TECHNISHOW: in BIEMH (study2), we added to the base of the robot a band of LEDs designed to inform the user about the robot’s status in the interaction, in other words, to improve situational awareness for the human actor in the collaborative interaction. Specifically, this band lit up while the robot was waiting for the person to perform a pointing gesture, and for as long as the gesture had not been recognized by the vision system.
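The interaction cycle of study2, including the behaviour of the LED band, can be summarised as a small state machine. This is only a sketch of the sequence described in the text: the state and event names are hypothetical, and the grasping phase is folded into the moving state for brevity.

```python
from enum import Enum, auto


class State(Enum):
    MOVING = auto()                # carrying a bin from side to side
    STOPPED = auto()               # interrupted because a hand is close
    WAITING_FOR_POINTING = auto()  # at rest position, expecting a pointing gesture


class Event(Enum):
    HAND_NEAR = auto()
    HAND_AWAY = auto()
    TAP = auto()
    POINTING_RECOGNIZED = auto()


TRANSITIONS = {
    (State.MOVING, Event.HAND_NEAR): State.STOPPED,
    (State.STOPPED, Event.HAND_AWAY): State.MOVING,
    (State.STOPPED, Event.TAP): State.WAITING_FOR_POINTING,
    # After the gesture, the robot grasps the selected bin and resumes moving.
    (State.WAITING_FOR_POINTING, Event.POINTING_RECOGNIZED): State.MOVING,
}


def step(state: State, event: Event) -> State:
    """Next state; events that are not expected in the current state are ignored."""
    return TRANSITIONS.get((state, event), state)


def led_on(state: State) -> bool:
    """The LED band is lit only while a pointing gesture is awaited."""
    return state is State.WAITING_FOR_POINTING
```

Driving the sketch with the events hand near, tap and pointing recognized reproduces one full cycle, with the LED band lit only between the tap and the recognition of the gesture.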

For the rest of the interaction cycle, the luminous band remained switched off. This simple information helped the person to understand when the robot was expecting an input gesture, as well as to know when a pointing gesture had been held long enough for the robot to have registered it.

In both studies, participants had to fill in a questionnaire at the end of each session. It included five-point Likert scale questions as well as multiple choice, closed-ended and open-ended questions, divided into four sections: demographics, interaction, safety and usability.

In the demographic section, participants were asked about purely demographic issues such as age, gender, academic level, the company they work for (sector, size, main activities, current use of robots and plans for introducing robots) and experience in working with robots. They were also asked for their opinion on the impact of robotics on cost, employment, working conditions, efficiency and so on, the main barriers to the introduction of COBOTs and the key requirements for COBOTs. In the interaction and usability sections, workers had the opportunity to provide feedback on the interaction mechanisms implemented, that is, pointing gesture (reaction time, naturalness, etc.), hand guiding (effort needed, speed of the movements, safety perception, etc.) and tapping (included only in study2). In the safety section, participants evaluated which safety feature was the most relevant, their safety perception and their acceptance of fenceless collaborative environments.

Finally, participants were interviewed about the most relevant aspects observed in the experiments and about the most salient responses they had entered in the questionnaires.

Participants

Altogether, 115 participants took part in the two studies: 38 participants in study1 (TECHNISHOW), 2 of whom were women, and 77 participants in study2 (BIEMH), 13 of whom were women (see Figure 6).

Demographics of participants.

The prior experience of the participants in these studies is presented in Figure 7, which shows the relative proportions of type and extent of experience. More specifically, 13% of the participants had more than 10 years of experience as machine operators, production system designers, or in the commissioning and maintenance of automation systems; 8% and 37% had worked with robots for between 6 and 10 years and between 1 and 5 years, respectively; and the remaining 48% had no previous direct experience with robots at work.

Participants’ prior experience with robots.

It is also worth noting that a significant number of the participants with previous experience working with robots had acquired their experience in the automotive sector (see Figure 8).

Participants’ experience by business sector and size of company.

Experiment results

Interaction mechanisms

Participants were asked about naturalness, reliability, usefulness, ease of use and response time of the pointing gesture interaction mechanism.

Figure 9 suggests a clear improvement between study1 and study2 in the users’ subjective experience of the pointing gesture-based interaction modality, in particular in the perceived response time. This improvement could be due to the feedback mechanism introduced in study2 (the LED luminous band designed for situational awareness). As explained before, in this second set-up a lighting system on the base of the robot was switched on whenever the robot was waiting for a pointing gesture and was immediately switched off once the gesture was identified.

Feedback on pointing gesture.

In both experimental set-ups, a period of time passed between the identification of the gesture and the actual start of the robot movement (during which the planning of the trajectory and the acceleration ramp took place). From the participants’ subjective perspective, the response time in study1 included the complete time until the robot started to move. In contrast, in study2 only the actual pointing detection time was displayed to the participant through the lighting pattern, which resulted in the system being perceived as responding faster. This factor may also explain the lower score for usefulness in study1. The answers recorded for the rest of the parameters (naturalness, ease of use and reliability) were very positive (Figure 9).

In study1, participants were asked to drag the robot arm’s gripper to teach the grasping position. To do that, we used the gravity compensation feature of the Universal Robot. We asked the participants about three aspects of this hand guiding interaction mechanism (Figure 10): effort needed to drag the robot, usefulness and difficulty to perform. Although the answers were positive for the three factors, it should be stressed that a significant number of participants found the effort needed to move the robot to be at the limit of acceptability. Based on this, we consider that there is still room for improving the control algorithms needed to implement this functionality.

Feedback on hand guiding.

In study2, we implemented the tapping functionality: users lightly tapped downwards on the robot arm to indicate that it could resume its movement and watch for a pointing gesture. The acceptance by the participants was positive overall (Figure 11) if we look at the answers to the same four questions (naturalness, reliability, usefulness and ease of use), even though 8% of the participants did not find this way of interaction natural. Nonetheless, as this is a feature that does not demand additional sensors (we used the force feedback provided by the COBOT), it is worth considering its use in the future as an additional interaction mechanism.

Feedback on tapping.

Safety

Participants were asked about their safety perception when working and interacting with the robot without any physical barrier between them (see Figure 12). In both studies, the safety measure that created the ‘safe’ collaborative environment was the proximity monitoring system based on an RGB-D vision system, which measured the relative distance between the robot and a person and stopped the movement of the robot when this distance was below a threshold value.

Feedback on perceived safety.

It was encouraging to observe a clearly positive acceptance of the idea that COBOTs will bring many benefits both to workers and to the processes in which they are integrated. However, it was striking to observe the widespread opinion that such robots will have a negative effect on jobs. The robotics community needs a clear and convincing answer to this recurring general opinion, as well as the motivation to generate supporting evidence through the creation of successful case studies.

While feedback was positive overall in both cases, it is worth analysing more closely the near-unanimity (97%) achieved in study1. There are two possible reasons for it. First, the participants in that study were younger than those in study2; in fact, 50% of them were under 30. Second, in study1 participants were shown how the robot reacted to an unexpected collision (and some of them tried it first hand), that is, how the robot stopped when it detected a collision (following the implementation of the force and torque limiting approach from ISO 10218).

Workers’ opinion

At the end of each session, participants were asked for their opinion on the consequences of introducing robots in factories as well as on the key elements to make this introduction a success. The answers to the first question (see Figure 13) were as expected: we observed the widespread opinion that robots will result in a reduction in the number of jobs. In contrast, there was a broad consensus on the benefits that this introduction will bring to productivity, quality of production, competitiveness and the working conditions of the workers.

Negative and positive effects of introducing robots at work.

Safety was considered the key requirement for succeeding in the introduction of the human–robot collaborative paradigm, followed by usability, flexibility and efficiency (see Figure 14).

Key success factors for HRC. HRC: human-robot collaboration.

Conclusion and future work

The perception of trust in the safety strategy tested in the experimental studies described above was positive overall. According to our data, no special objections should be expected from users. This finding is in line with the fact that 97% of the participants in study1 declared that collaboration between robots and workers will be possible in the future and that they would accept working together with robots in this way. In addition, gesture-based and hand-guiding interaction mechanisms were also rated positively by participants, as potential future users.

As a limitation of the work presented here, this analysis needs to be revisited in more realistic scenarios, in which workers perform real tasks with the support of robots over a longer period of time. Such a study should be considered as follow-up work. In fact, the authors will conduct a further analysis in five real industrial pilot studies in which workers and robots will have to accomplish deburring, assembling, welding, riveting and machine tending operations. This work will be part of the validation process of the FourByThree project.

Additionally, the projection-based safety mechanism, which is also part of the FourByThree project but was not available when these experiments were carried out, will provide an additional mechanism that can improve the trust of workers.

We also expect that the two additional mechanisms included in FourByThree (using the projection system and the gestures to command basic actions of the robot, such as stop and resume, including voice-based interaction) will contribute to a richer interaction experience. Both aspects will be tested with a wide spectrum of real workers at STODT in the coming months.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: The FourByThree project has received funding from the European Union’s Horizon 2020 research and innovation program, under Grant agreement no. 637095.