Abstract

Bone removal is essential in cranial and orthopedic surgery but remains time-consuming and highly operator-dependent. We present Cranibot, a surgeon-in-the-loop robotic platform for precision bone removal that integrates an interactive control scheme with a force–position hybrid controller. The system combines computed tomography-based navigation, a custom end effector with dual six-axis force/torque sensors, and an ergonomic handle for surgeon intent input. We extend the instantaneous task specification using constraints framework to fuse surgeon commands with real-time force regulation, enforcing pose constraints while maintaining safe contact forces. Preliminary validation on ex-vivo skull models and an in-vivo porcine feasibility study yielded an average placement error of 2.6 ± 0.56 mm and an average drilling time of under 30 s. Operator forces remained below 5 N, substantially reducing physical load. These initial results indicate that Cranibot can improve efficiency and lessen operator burden, offering a viable pathway toward clinically deployable collaborative bone-removal surgery.

Keywords

Introduction

The field of surgical robotics has recently transitioned from rigid, pre-programmed execution toward adaptive, human-centric collaboration, a shift driven by the convergence of embodied intelligence and advanced robot learning architectures. 1 Contemporary research focuses on developing control frameworks capable of dynamic intention recognition and autonomous skill acquisition. Advanced neural–machine interfaces and data-driven learning paradigms now enable robotic assistants to interpret subtle surgeon inputs and optimize tool trajectories in real time, substantially improving the transparency of collaborative tasks in stochastic surgical environments. 2 These advancements facilitate a symbiotic interaction in which the robot mitigates physiological tremor and fatigue while the surgeon maintains high-level cognitive oversight.

Parallel to the evolution of intelligent control, the emergence of “smart” instrumentation and multi-sensor fusion has redefined precision in hard-tissue procedures. 3 Modern bone-removal platforms increasingly employ high-fidelity feedback loops (i.e. integrating haptic sensing, vibration analysis, and real-time tissue characterization) to navigate complex anatomical structures. Recent approaches in smart robotic milling emphasize active constraint enforcement and predictive breakthrough detection, using hybrid sensing to distinguish bone-layer transitions with sub-millimeter resolution. 4 Despite these advances in sensor-driven autonomy and learning-based optimization, seamlessly merging a surgeon's nuanced intent with compliant, robust robotic assistance remains a significant challenge for clinical translation.

Surgical treatment continues to be a fundamental option for numerous bone pathologies. 5 Procedures such as craniotomy, arthroplasty, and laminectomy require sub-millimeter bone removal, tasks that are demanding, time-intensive, and ergonomically taxing for surgeons. 6 Conventional craniotomy, for example, depends on manual drilling and milling to create a cranial window—a process that can exceed an hour and impose substantial physical and cognitive strain on the surgical team. 7 A central intraoperative challenge is navigating the narrow gap, often less than 1 mm, between the skull's inner table and the dura mater, making dural preservation and soft-tissue protection exceptionally difficult. 8 This challenge is compounded by the skull's multilayered architecture, variable bone density, and anatomical irregularities, all of which heighten the risk of complications. 9

Robotic assistance in surgery has a long history, beginning with the adaptation of industrial arms for stereotactic biopsies and early CT-guided systems that first demonstrated the benefits of robotic precision. 10 Subsequent image-guided robots improved the reproducibility of frameless stereotactic tasks. 11 More recent platforms, including ROSA and NeuroArm, have expanded indications beyond stereotaxy to incorporate complex path planning and intraoperative guidance.12,13 In orthopedic and maxillofacial surgery, semi-active “hands-on” robots such as Acrobot and MAKO have shown that constrained, surgeon-in-the-loop milling can enhance bone-cutting accuracy while preserving essential surgeon control. 14

Recent research has increasingly focused on automated and vision-guided cranial milling platforms that integrate surface contour analysis, image guidance, and closed-loop sensing, enabling highly repeatable craniotomies in small animals and phantom models.15,16 Mechanically precise robotic systems have demonstrated how automated surface mapping combined with computer numerical control (CNC)-style milling can achieve accurate craniotomies, while computer-vision and optical coherence tomography (OCT)-guided robots have shown rapid, sub-100-µm control of residual bone thickness in experimental settings.17,18 These systems highlight how advanced sensing (e.g. OCT, stereo or structured light, and microscopic imaging) can be tightly integrated with motion control to reduce operator variability and attain micron-scale safety margins above the dura. 19

Robotic systems must still overcome several technical challenges, including accurate patient registration, reliable intraoperative sensing of bone-layer transitions and breakthrough events, precise estimation of cutting forces, and safe human–robot interaction strategies using haptic or impedance control, active constraints, or shared control frameworks.20,21 Advances in real-time breakthrough detection and force-based feed control have reduced the risk of sudden penetration during drilling, while progress in multi-axis force/torque (F/T) sensing (including fiber Bragg grating-based designs) and learning-based bone recognition has improved layer discrimination and adaptive control.22,23 Thermal effects during high-speed milling also remain an active research area, with recent studies modeling heat generation and proposing machining parameters that minimize osteonecrosis near delicate neural tissue.24–26

Within the current research landscape, two principal paradigms coexist: autonomous systems that execute preoperative plans with minimal surgeon input, and interactive systems that emphasize surgeon-in-the-loop collaboration through haptic teleoperation and active constraints.27,28 Shared-control approaches are particularly valuable because they leverage surgeon expertise for critical decisions while the robot compensates for tremor and fatigue by enforcing geometric constraints.29,30 Despite these gains, a major research gap persists in integrating customized, task-specific end-effector designs with hybrid control schemes capable of fusing surgeon intent and compliant robotic assistance. 31

In this paper, we present a robotic system designed for bone removal with three core contributions. First, we introduce a patient-adaptable end effector engineered for safe, efficient drilling and milling. Second, we design a collaborative control architecture that integrates impedance control with real-time force sensing for bone-layer recognition and breakthrough detection. Finally, we provide comprehensive experimental validation using craniotomy as a representative procedure, evaluating accuracy, safety, and collaborative performance in a clinically relevant context. The remainder of this paper is organized as follows: Section “System description” details the system architecture, focusing on the dual-sensor end-effector design. Section “Control scheme” establishes the theoretical foundation, deriving the instantaneous task specification using constraints (iTaSC)-based kinematic modeling and interactive force–position hybrid control scheme. Section “Experimental verification” reports quantitative results from ex-vivo skull phantom trials and the in-vivo porcine feasibility study, focusing on placement accuracy and force metrics. Section “Discussion” offers a comprehensive discussion, addressing clinical safety margins, benchmarking against state-of-the-art autonomous platforms, and outlining future research directions.

System description

System overview

Our proposed Cranibot comprises an interactive device, a robotic arm, and a navigation system. Figure 1(a) illustrates the overall layout. Three-dimensional (3D) reconstruction of the patient's bone, surgical planning, and robotic arm control are executed within the robot's controller. The navigation system provides the pose of the surgical tool, while the human–robot interaction device enables the surgeon to perform the operation.

Figure 1. Cranibot system demonstration. (a) System overview; (b) human–robot interactive device.

Interactive device

Interactive control between the surgeon and the robot is achieved through reverse driving, in which the robot senses the force exerted by the surgeon and generates a corresponding motion response. 28 Simultaneously, the contact force between the robot's end effector and the bone must also be detected. To meet these requirements, we designed a flange (Figure 1(b)) housing two mutually perpendicular six-dimensional force/torque sensors. Sensor I (M3701b, nonlinearity <1%) detects the surgeon's operating force, and Sensor II (M3703c, nonlinearity <0.5%, SRI International) measures the contact force. A posture-adjustment mechanism permits orientation changes of the surgical tools, and a flexible mechanism provides compliance during skull milling to buffer sudden force fluctuations.

During drilling and milling, the robot's end effector is positioned at the target region with the appropriate pose. The robotic arm is then passively guided by the operator to perform the procedures. Task execution is shared: the surgeon controls the end-effector speed, while the robot controller regulates tool pose and contact force. A mechanical interface allows manual replacement of surgical tools, such as drills and milling cutters, during the operation.

Controller

The controller consists of a personal computer (PC), a control board, and a navigation system. The PC software employs the Visualization Toolkit (VTK) for 3D medical image reconstruction, registration between the robotic arm and the 3D model, and preoperative planning. The Qt framework provides a user interface that displays tool status and target regions. A control board (Cortex-A15, ARM) collects force sensor signals through two data acquisition cards and transmits them to the PC via TCP/IP. Motion commands generated by the PC are relayed to the robotic arm through the control board. The navigation system (Polaris Spectra, Northern Digital Inc.) includes a binocular vision device and passive markers. Markers affixed to the surgical tools and the patient's bone (e.g. skull) are tracked through their retroreflective spheres, enabling real-time pose estimation.

The operation procedure includes preoperative 3D image processing, path planning, and intraoperative navigation-guided execution. Specifically: (1) patient CT data are obtained and reconstructed into a 3D model; (2) the target surgical area is defined, the robotic arm's planned pose is set, and a kinematics-based motion trajectory is generated; and (3) under navigation-system guidance, the procedure is performed using the proposed interactive control algorithm.

Control scheme

Overall

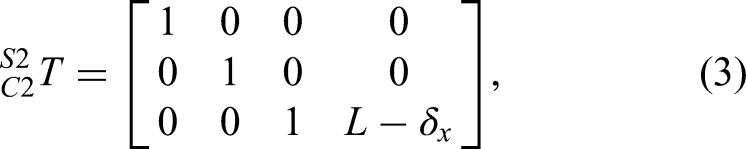

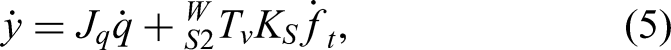

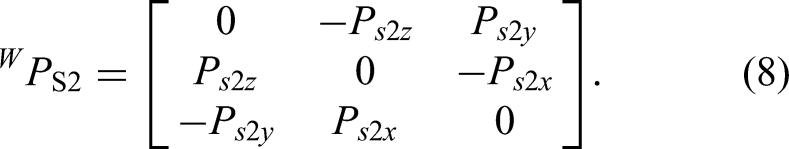

iTaSC is a velocity-based control method for specifying control tasks in complex situations,32,33 including transformations between different control frameworks.32,34–37 In this paper, we extend iTaSC to the interactive control of a surgical robot, a new application involving the execution of multiple interactive tasks. The framework comprises scene graphs, robots, tasks, target objects, and motion solvers. The mathematical formulations for the kinematic modeling and task specifications in equations (1)–(6) follow the systematic constraint-based approach established by Merckaert et al.32 and De Schutter et al.,33 adapted here to the specific geometry and compliance characteristics of the Cranibot end effector.

The scene is illustrated in Figure 2. The world coordinate system {

Figure 2. Relationship among the robot's coordinate frames.

The position of the contact point between the tool and the bone in the world coordinate system is defined as y, and the forward kinematics are expressed as

Interactive control

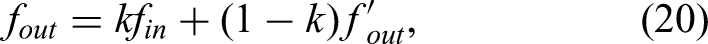

The relevant force for control is the contact force, which is difficult to regulate directly under speed control.29 The relationship between force and speed is defined as:

Because milling is executed quasi-statically and the robotic end effector has a single degree of flexibility, only the static wrench is considered the dominant component of interaction. The impedance model of the robot joint is described as

Substituting equations (11) and (12) into equation (10) yields

From equation (9), the robot's compliance matrix becomes

Robot flexibility also includes deformation of the flexible member and skull deformation. As the rigid craniotomy tool contacts the skull at a point, the relationship between

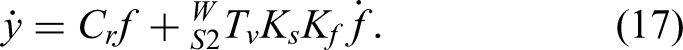

Substituting equations (13) and (15) into equation (5), the velocity of the tool and skull contact point in the world frame becomes:

By setting

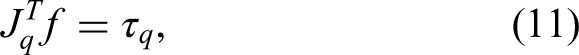

Surgeon–robot interaction is captured through the robot's perception of surgeon-applied forces on the handle. By interpreting this force, the system performs motion analysis and decision-making to provide power assistance and reduce operator effort. The proposed control framework operates at the kinematic level: the algorithm computes desired joint velocities (

Although equation (9) describes the relationship between robot velocity and external force, the compliance matrix varies with robot configuration, which can make the robot's response to the operator inconsistent. To avoid this, a stiffness matrix is used to define the interaction model between surgeon and robot:

Adjusting the matrix

Force–position hybrid control

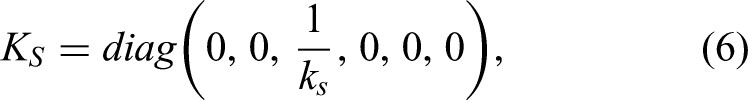

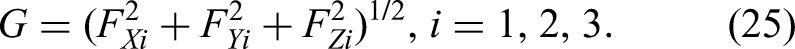

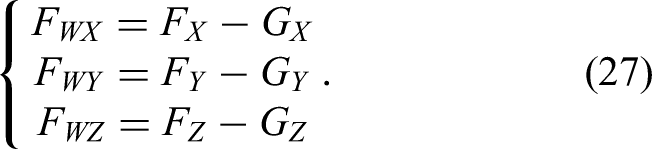

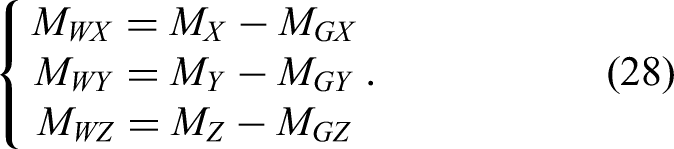

The interaction force, which serves as both feedback and input for interactive control, is the key variable driving the robot's movement. Here, it comprises the surgeon's operating force and the tool–bone contact force. To measure both accurately, gravity compensation must be applied to the force-sensor data, removing the gravitational effects of the handle and tool to recover the true interaction force.

Gravity compensation is described here for the surgical tool; the operating-handle unit follows the same principle. When no external force is applied to the surgical tool, all force and moment values measured by the six-dimensional force sensor arise solely from the tool's gravity. By driving the robotic arm through multiple spatial poses, several sets of force/moment measurements can be collected; from these, the weight and center of gravity of the tool can be identified via the least-squares method.

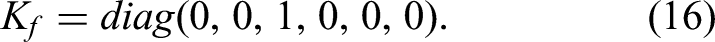

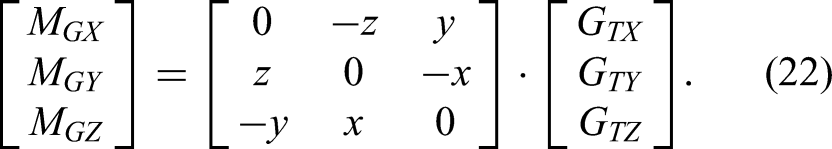

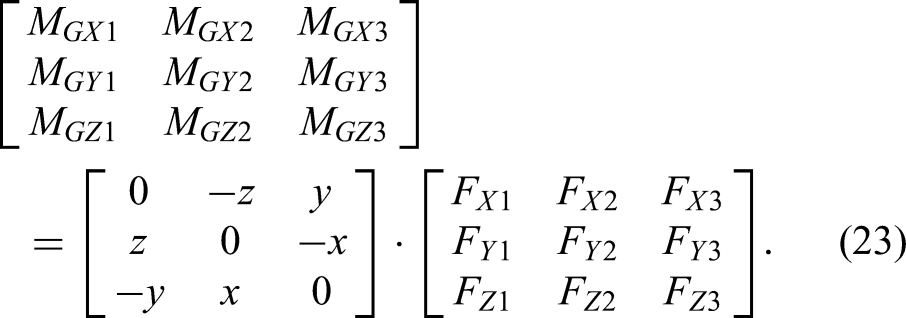

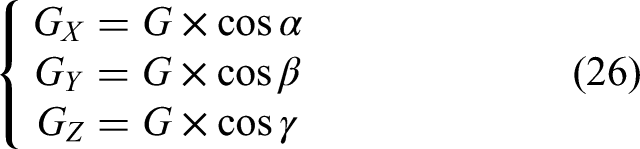

The six-dimensional force sensor frame is a rectangular coordinate frame (Figure 3). Its axes are X, Y, and Z, and the tool gravity is

Figure 3. Gravity compensation diagram.

Because

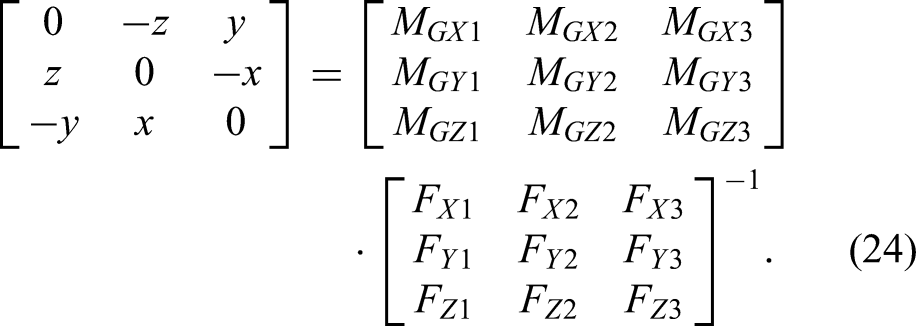

Theoretically, three different poses are sufficient to compute the tool's center of gravity and weight. Let the three measurement sets be (

If matrix

Thus, the coordinates

During craniotomy, the load at the tool tip must be detected. The six-dimensional force sensor measures force components

As the robot operates, the direction of the tool's gravity in the sensor frame changes with the pose of the end effector. After calibration, the angles

The corresponding load-moment components on each axis are

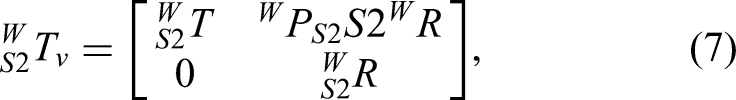
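The identification and compensation steps described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the authors' implementation: sensor zero offsets are assumed to have been removed beforehand, each unloaded reading is assumed to satisfy $M_i = r \times F_i$ with $r$ the tool's center of gravity in the sensor frame, and the function names (`identify_tool`, `compensate`) are hypothetical.

```python
import math

def cross(a, b):
    """3D cross product a x b."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def solve3(A, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("poses are degenerate; add more orientations")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, 3):
            factor = M[r][col] / M[col][col]
            for c in range(col, 4):
                M[r][c] -= factor * M[col][c]
    x = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        x[i] = (M[i][3] - sum(M[i][j] * x[j] for j in range(i + 1, 3))) / M[i][i]
    return x

def identify_tool(forces, moments):
    """Least-squares tool weight and center of gravity from unloaded readings.

    Assumed model per pose: M_i = r x F_i. The normal equations reduce to
    sum(|F_i|^2 I - F_i F_i^T) r = sum(F_i x M_i).
    """
    N = [[0.0] * 3 for _ in range(3)]
    rhs = [0.0, 0.0, 0.0]
    for F, Mo in zip(forces, moments):
        f2 = sum(f * f for f in F)
        for i in range(3):
            for j in range(3):
                N[i][j] += (f2 if i == j else 0.0) - F[i] * F[j]
        rhs = [a + b for a, b in zip(rhs, cross(F, Mo))]
    # With no external load, every force reading has magnitude equal to the weight.
    weight = sum(math.sqrt(sum(f * f for f in F)) for F in forces) / len(forces)
    return weight, solve3(N, rhs)

def compensate(F_meas, M_meas, weight, r, g_dir):
    """Subtract tool gravity; g_dir is the unit gravity direction in the sensor frame."""
    Fg = [weight * g for g in g_dir]
    Mg = cross(r, Fg)
    return ([f - g for f, g in zip(F_meas, Fg)],
            [m - g for m, g in zip(M_meas, Mg)])
```

In use, `identify_tool` would be called once during calibration with readings from several arm poses, and `compensate` would run every control cycle with `g_dir` obtained from the robot's forward kinematics. Collecting more than the minimum number of poses lets the least-squares fit average out sensor noise.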

The control framework for Cranibot milling is shown in Figure 4. The first control flow acquires the surgeon's operating force through the handle-mounted force sensor and uses a force–speed conversion module to realize surgeon-driven control of the craniotomy operation. The second control flow reflects the robot's motion path generated through preoperative surgical planning, which constrains the allowable workspace. The third control flow regulates the separation of skull and dura by enforcing the target contact force

Figure 4. Interaction control architecture.

The three layers of the control loop provide an orthogonal force/position control strategy implemented through the six-degrees-of-freedom manipulator. To accurately estimate interaction forces, a gravity compensation algorithm operates in an open loop supporting the remaining control layers. Trajectory-following errors, converted to joint-speed commands in the

The design concept of the force/position hybrid control is as follows: after planning the working path in the craniotomy area, the robot controls the position of the craniotomy tool tip on the tangent plane of the planned trajectory, while the surgeon controls the milling speed. The data measured by the force sensor are compared in real time with the target contact force and adjusted to maintain stable force regulation. When the tool encounters a rigid surface, it transmits only the environmental interaction force. Assuming friction between the tool and environment is negligible, the normal information of the contact surface can be derived from the sensor's force feedback.

During milling, the presence of a milling feed force makes it difficult to compute the surface normal solely from force-sensor values obtained without cutting load. Therefore, the normal direction is determined primarily from preoperative medical imaging, and its representation in the robot frame is obtained through coordinate transformation.
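The decoupling described above can be illustrated with a short Python sketch. It is an illustration under stated assumptions rather than the controller used in Cranibot: the surgeon's handle force is mapped to velocity through a single scalar admittance (the paper uses a matrix-valued interaction model), the contact-force loop is a plain PD law on the normal force error, the low-pass filter is a first-order exponential filter, and all names and gains (`hybrid_velocity`, `low_pass`, `admittance`, `kp`, `kd`) are hypothetical. The unit normal is assumed to point outward from the bone surface, as it would after transforming the image-derived normal into the robot frame.

```python
def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(x * y for x, y in zip(a, b))

def low_pass(sample, previous, alpha):
    """First-order low-pass filter; alpha in (0, 1], smaller means heavier smoothing."""
    return alpha * sample + (1.0 - alpha) * previous

def hybrid_velocity(f_handle, normal, f_n, f_n_prev, f_target,
                    admittance, kp, kd, dt):
    """Combine surgeon-driven tangential motion with force regulation along the normal.

    f_handle : gravity-compensated handle force (3-vector, N)
    normal   : unit outward surface normal in the robot frame
    f_n      : filtered pressing force along the normal, current sample (N)
    f_n_prev : same quantity at the previous sample (N)
    """
    # Surgeon intent: force -> velocity, then keep only the tangent-plane part.
    v_surgeon = [admittance * f for f in f_handle]
    along_n = dot(v_surgeon, normal)
    v_tangent = [v - along_n * n for v, n in zip(v_surgeon, normal)]

    # Robot-regulated normal motion: PD law on the contact-force error.
    err = f_target - f_n
    err_prev = f_target - f_n_prev
    v_press = kp * err + kd * (err - err_prev) / dt

    # Pressing harder means moving against the outward normal.
    return [vt - v_press * n for vt, n in zip(v_tangent, normal)]
```

Because the tangential and normal components are built in orthogonal subspaces, the surgeon's push along the normal never changes the commanded depth, and the force loop never alters the commanded feed along the surface.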

Stability considerations

The stability of the proposed hybrid control scheme is grounded in the passivity of the interaction dynamics. For the surgeon–robot interaction loop (equation (21)), the controller renders the robot as a passive mechanical admittance. By selecting a positive definite stiffness matrix

For the contact-force regulation loop (equation (19)), stability is achieved through appropriate tuning of the PD gains,

Experimental verification

As shown in Figure 5, the prototype of Cranibot was developed based on the proposed design. Two experiments, a skull-model test and an in-vivo validation, were conducted to evaluate system performance.

Figure 5. Cranibot prototype.

Tests on the skull model

We performed skull-model tests according to the following procedure:

(1) The skull model was scanned to obtain CT data, which were reconstructed into a 3D model for navigation in Cranibot.

(2) To establish the spatial relationship between the robotic arm and the virtual model, the skull surface was contacted with a probe tip to collect registration data.

(3) Four drilling points and their corresponding milling trajectories were defined in the virtual image interface (Figure 6(a)).

(4) Based on the planned drilling points, the robotic arm was positioned at each target with the drill oriented normal to the skull surface, and drilling was performed under robotic control (Figure 6(b)).

(5) After drilling, the robotic arm was retracted, the drill was replaced with a milling tool, and the control mode was switched to milling. Milling was then performed on the drilled openings.

During drilling, the drill motion and skull-model state were displayed in real time in the virtual interface, providing direct navigation feedback to the surgeon.

Figure 6. Drilling procedure during tests. (a) 3D model-based interface for navigation; (b) drilling operation.

A total of 15 cases were performed on four skull models. With Cranibot assistance, surgeons completed all procedures successfully. Quantitative measurement indicated a mean placement error of 2.6 ± 0.56 mm between the planned trajectories and the actual drilled holes. Minor displacements of the skull model were observed during drilling due to the applied forces.
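For readers who wish to reproduce this kind of metric, the following Python sketch shows one way a mean ± standard deviation placement error could be computed from paired planned and measured hole positions. The paper does not state how the error was measured or which standard deviation was used; the Euclidean distance, the sample standard deviation, and the function name `placement_error_stats` are assumptions, and no coordinates from the study are reproduced here.

```python
import math
import statistics

def placement_error_stats(planned, actual):
    """Return (mean, sample SD, per-hole errors) of Euclidean distances in mm.

    planned, actual : equal-length sequences of (x, y, z) points in the same frame.
    """
    errors = [math.dist(p, a) for p, a in zip(planned, actual)]
    return statistics.mean(errors), statistics.stdev(errors), errors
```

Both point sets must be expressed in a common frame, for example the CT image frame after registration, for the distances to be meaningful.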

In-vivo experiment

An in-vivo experiment was conducted to further verify Cranibot performance. A pig was selected because the structure and tissue properties of the porcine head closely resemble those of the human head. The head was CT-scanned to obtain image data and then fixed in a supine position on the operating table after anesthesia. Preoperative preparation included epilation, decortication, and hemostasis to expose the skull (Figure 7(a) and (b)).

Figure 7. In-vivo craniotomy experiment. (a) Decortication; (b) exposure of the skull; (c) registration of the skull with the model; (d) display of the surgical tool and skull positions in the software; (e) surgeon-controlled robotic drilling of the skull; (f) group of holes drilled in the skull; (g) milling of the skull; (h) bone window formed in the skull.

The surface data of the skull model and the data acquired by contacting the skull surface with the probe were used to complete registration (Figure 7(c) and (d)). After registration, the target region was selected and the robotic arm path was planned. Drilling mode was activated with the robotic arm equipped with the drilling tool. The operator conducted the procedure by manipulating the handle, and drilling was executed jointly by the operator and Cranibot (Figure 7(e) and (f)). After drilling, milling mode was enabled, and the drilling tool was replaced with the milling tool to perform the milling operation (Figure 7(g) and (h)).

The average drilling time was less than 30 s, more than 1 min shorter than manual drilling. Both drilling and milling proceeded smoothly and were easy for the operator to control. The required handle force was less than 5 N, well below the force typically needed for manual drilling.

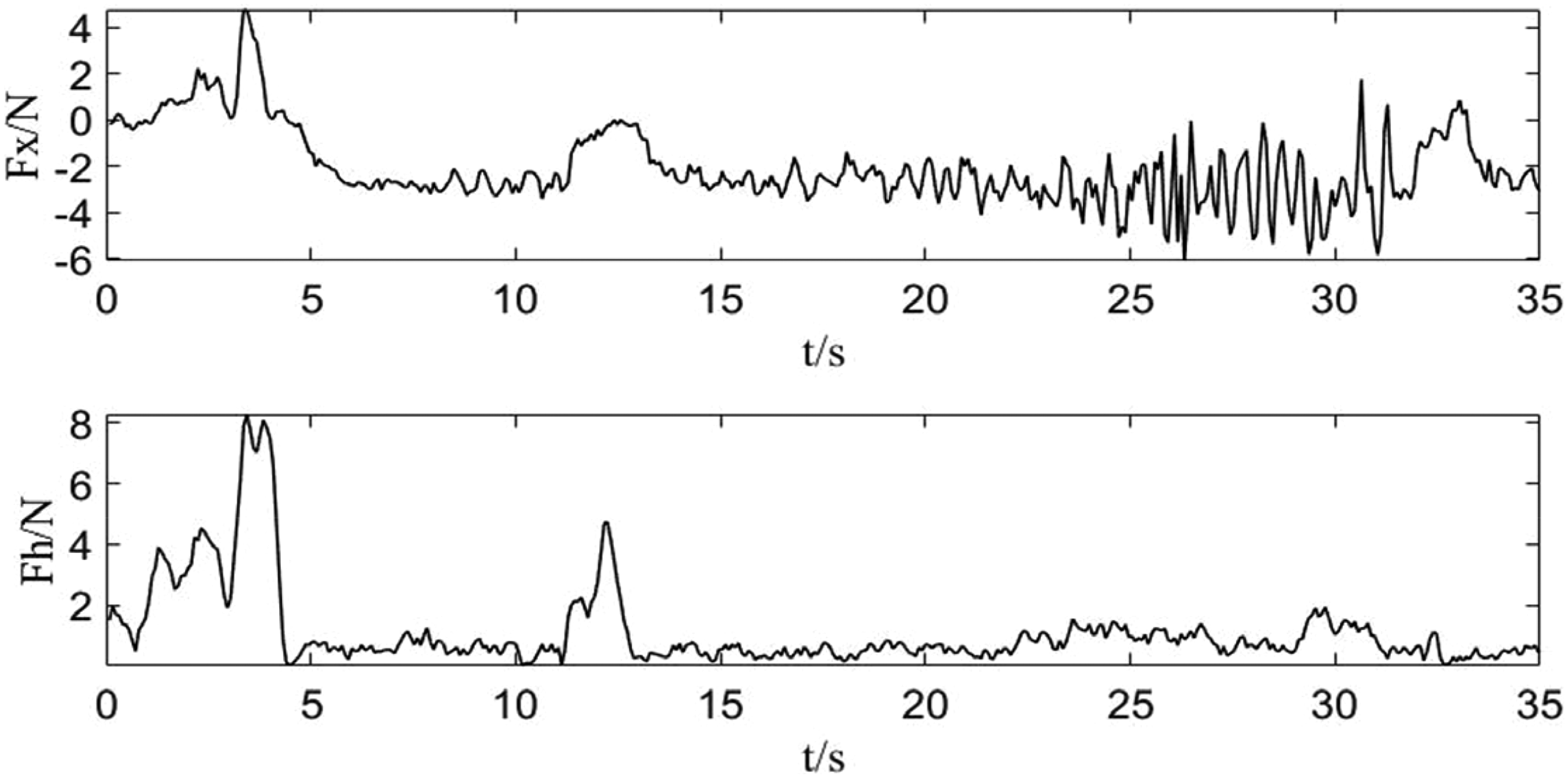

The feedback force and trajectory data of the drilling tool were recorded. In Figure 8, the sharp jump in the force trace between 25 and 30 s indicates that the drill penetrated the skull. At this moment, the operator was alerted to stop the operation for safety.

Figure 8. Interactive force during drilling.
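The sharp jump in the drilling force trace suggests a simple rate-based check for flagging penetration. The Python sketch below is only an illustration of that idea: the paper does not describe its alerting logic, so the use of the force rate of change, the threshold value, and the function name `detect_breakthrough` are all assumptions.

```python
def detect_breakthrough(times, forces, rate_threshold):
    """Return the time of the first sample whose |dF/dt| exceeds rate_threshold.

    times          : sample timestamps in seconds, strictly increasing
    forces         : filtered contact-force samples in newtons
    rate_threshold : force rate in N/s treated as a breakthrough (hypothetical value)
    Returns None when no sample exceeds the threshold.
    """
    for k in range(1, len(forces)):
        dt = times[k] - times[k - 1]
        if dt > 0 and abs(forces[k] - forces[k - 1]) / dt > rate_threshold:
            return times[k]
    return None
```

A rate threshold reacts to the jump itself rather than to the absolute force level, which varies with bone density; in practice the trace would be low-pass filtered first so that measurement noise does not trigger false alerts.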

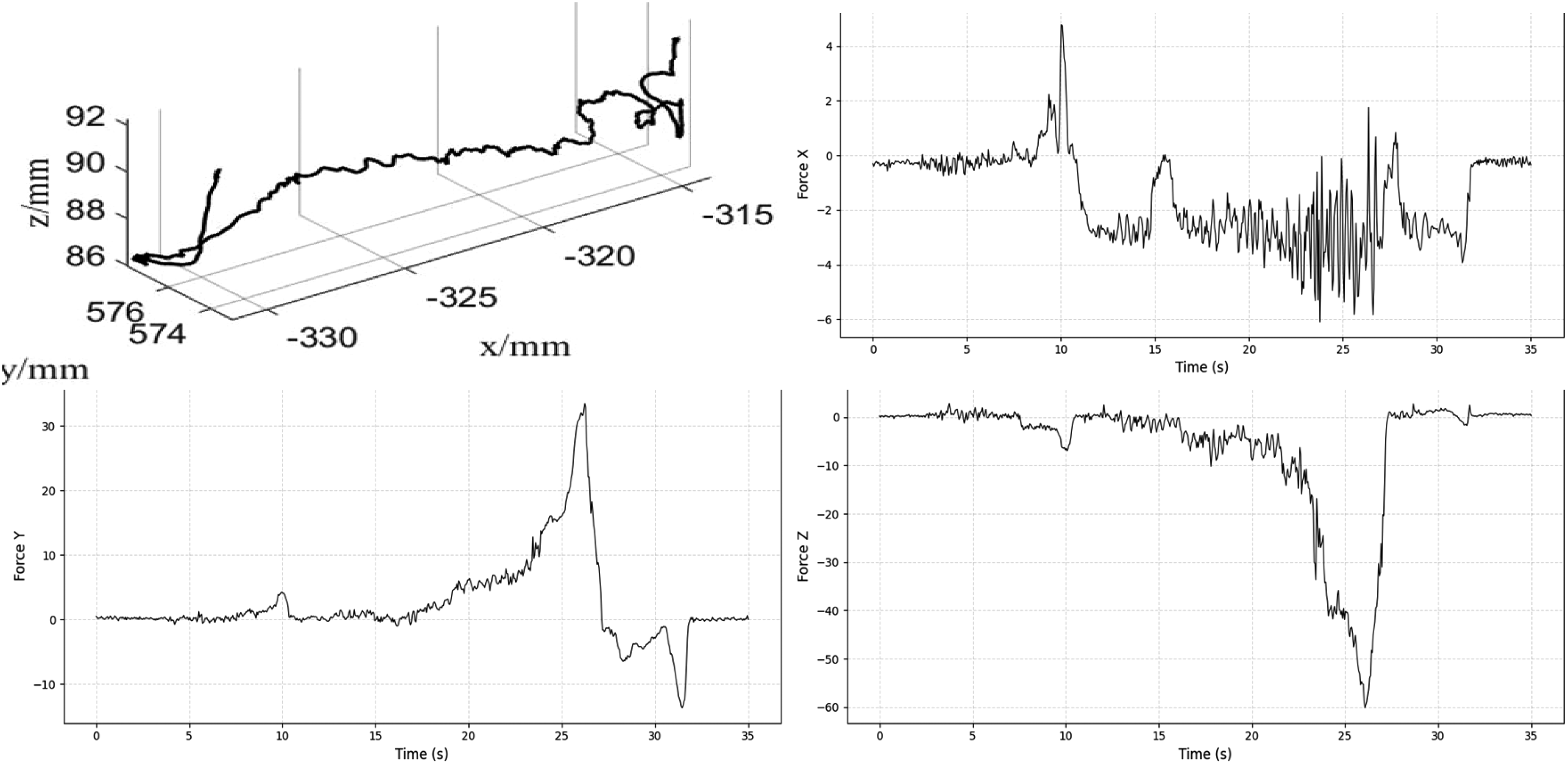

The interactive force during milling is shown in Figure 9. The

Figure 9. Interactive force during milling.

The milling trajectory of the tool tip is analyzed in the time domain in Figure 10. The plot presents displacement along the X, Y, and Z axes on a common time scale. The

Figure 10. Time-series trajectory of the milling tool tip.

Discussion

Manual bone-removal procedures such as craniotomy or osteotomy remain highly dependent on surgeon skill and endurance. Prolonged tool handling under constrained visibility and narrow operating margins limits achievable precision and increases the risk of neural or vascular injury. Although robotic technology has been widely adopted, with systems like ROSA, Mazor-X, MAKO, and TIANJI enhancing precision in complex pelvic and spinal procedures,11,38–41 meeting the specific requirements of interactive cranial surgery remains challenging. To address this gap, the Cranibot system integrates an iTaSC-based hybrid force–position controller that decouples tangential motion (surgeon-driven) from normal force regulation (robot-controlled), ensuring stable contact and safe depth control.

Comparative analysis and novelty

The novelty and clinical relevance of the Cranibot system are best appreciated when compared with existing bone-removal robotics. Unlike conventional image-guided platforms such as ROSA 12 or NeuroArm, 13 which primarily provide passive stereotactic guidance, Cranibot introduces an active interactive control layer enabling real-time human–robot collaboration. While semi-active systems like MAKO 14 employ “hands-on” active constraints for orthopedic applications, our framework extends the iTaSC constraint-based task specification 33 to the demands of cranial surgery. This extension supports a more sophisticated multi-objective control strategy, preserving the surgeon's tangential intent while allowing autonomous robot regulation of normal contact forces—an advance beyond the simpler haptic boundaries of earlier collaborative systems. Moreover, compared with highly autonomous CNC-style platforms such as the original Craniobot, 7 which follow rigid pre-programmed trajectories, our approach provides greater intraoperative flexibility. The dual six-axis force/torque sensing architecture (Sensors I and II) offers a hardware-level advantage by cleanly separating operator input forces from tool–tissue interaction forces. With drilling times under 30 s and high responsiveness to user input, the system bridges the gap between the rigid precision of autonomous methods and the intuitive workflow required in clinical cranial procedures.

Interpretation of experimental accuracy

The observed average placement error of 2.6 ± 0.56 mm requires contextualization relative to state-of-the-art performance. As described by Kazanzides et al., total system error in image-guided robotics consists of registration error, tracking error, and application error (mechanical compliance). In our setup, application error was the dominant contributor, reflecting the inherent compliance of the iTaSC-based collaborative control. In contrast, the computer-vision-guided system of Navabi et al. achieved sub-millimeter accuracy on rigidly fixed murine skulls, operating in a highly constrained microsurgical environment. While a 2.6-mm deviation exceeds the <1-mm precision typically required for stereotactic neurosurgery, it remains acceptable for gross bone-removal tasks such as craniotomy, where dura preservation and depth control are the primary safety constraints rather than exact lateral contour accuracy. Nonetheless, to extend Cranibot's applicability to microsurgical tasks, future versions must reinforce mechanical rigidity and refine registration techniques, addressing the contributors to compliant deviation identified by Kazanzides et al.

Robustness to uncertainty and disturbance

The experimental results also highlight the system's ability to manage operational uncertainties, particularly the heterogeneous density of skull bone and sensor signal noise. Variations in local bone structure introduce continuous force disturbances during milling. As shown in Figure 9, the force–position hybrid controller effectively attenuated these disturbances. After the initial entry phase (0–5 s), the interactive forces stabilized within a safe ±5 N envelope despite the naturally uneven porcine bone texture. This stability is attributed to two factors: the low-pass filtering in equation (20), which suppresses high-frequency measurement noise, and the inherent compliance of the admittance control scheme, which absorbs impact forces rather than resisting them rigidly. These results confirm that the system can maintain safe operation in the presence of unmodeled environmental dynamics.

Generalization and future outlook

Although validation was limited to cranial bone, the modular control framework and end-effector design can be generalized to other bone-removal procedures such as spinal decompression or joint resurfacing. Future work will focus on improving the mechanical rigidity of supporting fixtures to enhance positioning accuracy and on integrating learning-based models for adaptive stiffness tuning and environmental perception. The combination of AI-driven intent recognition with safety-certified real-time control is expected to advance human–robot collaboration in surgical settings.

Limitations

Despite promising results, this study has several limitations requiring future attention. First, regarding collision avoidance: the current kinematic framework relies on surgeon supervision to prevent collisions between the robot elbow and the surrounding workspace. Although the “surgeon-in-the-loop” approach mitigates this risk, future iterations will incorporate redundancy-resolution algorithms to optimize joint configurations for obstacle avoidance without altering the end-effector pose. Second, regarding long-term accuracy: the present validation focuses on short-duration drilling tasks (typically under 30 s). The long-term effects of sensor thermal drift and cumulative registration error during prolonged milling operations (>1 h) remain unquantified. Planned endurance tests will examine calibration stability over extended periods. Third, regarding system rigidity: as noted in the error analysis, compliance in the experimental fixture contributed to positioning deviations. Clinical translation will require rigid head-fixation systems (e.g. Mayfield clamps) to minimize relative motion.

This study also presents a preliminary evaluation involving 15 phantom trials and a single (n = 1) in-vivo porcine subject. Although these experiments validated the interactive control and force-sensing logic, the sample size is insufficient for statistically meaningful conclusions regarding clinical efficacy or safety rates. These results should be interpreted as proof-of-concept; extensive pre-clinical testing with a larger cohort is needed to assess robustness across biological variability.

Conclusion

In summary, this study presents Cranibot, an interactive surgical robotic system that performs precision bone removal through an iTaSC-based hybrid force–position control strategy. Validation on skull phantoms and an in-vivo model indicates that the control strategy successfully decouples surgeon intent from autonomous force regulation. The implemented control framework yielded a mean placement error of 2.6 ± 0.56 mm and shortened drilling, which was completed in under 30 s on average. The strategy also kept operator handle forces below 5 N, reducing physical load on the operator. These preliminary results suggest that the proposed control strategy provides a workable foundation for collaborative bone surgery, balancing trajectory tracking with safe human–robot interaction. Future work will focus on increasing mechanical rigidity and integrating multimodal sensing to further improve accuracy and adaptability across diverse clinical scenarios.

Footnotes

Author contributions

Conceptualization: Xingguang Duan and Tengfei Cui; methodology: Xingguang Duan and Weijun Zhang; software: Tengfei Cui, Weijun Zhang, Jiapeng Wang, and Huanyu Tian; validation: Tengfei Cui and Huanyu Tian; data curation: Weijun Zhang and Tengfei Cui; writing—original draft preparation: Weijun Zhang, Tengfei Cui, and Jiapeng Wang; writing—review and editing: Xingguang Duan and Shikui Jia; visualization: Tengfei Cui and Shikui Jia; supervision: Shikui Jia; project administration: Shikui Jia; funding acquisition: Xingguang Duan. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the National Key Research and Development Program of China (No. 2024YFC3016700) and the National Natural Science Foundation of China (No. 62473053).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.