Abstract

Stroke threatens human life and health. After a stroke, patients often experience varying degrees of depression, anxiety, and other emotional symptoms alongside physical hemiplegia. A patient's emotional and psychological states significantly affect their motivation for rehabilitation training. Current robot-assisted stroke rehabilitation focuses more on physical recovery, with little consideration given to the emotional factors affecting patients. This paper proposes an upper-limb mirror rehabilitation training robot system that integrates emotional factors. First, a visual method for motion intention recognition is designed to estimate the end effector pose using visual tags, along with an emotion recognition algorithm based on the Yolo_V11n and ResNet_50 neural network models. Second, emotional factors are incorporated into rehabilitation training to develop a rehabilitation optimization strategy tailored to each patient. Finally, a prototype system is constructed based on a six-degree-of-freedom robotic arm and a binocular vision system. Experimental results show that the proposed system can monitor the subject's facial expressions during rehabilitation training and automatically adjust the training strategy based on their emotional state. The system's average recognition error for the unaffected side's movement trajectory is 4.4 mm, the robot's average tracking error is 4.3 mm, and the emotion recognition model achieves an accuracy of 70% on the validation dataset. The proposed upper-limb mirror rehabilitation training robot integrates the patient's emotional factors into the rehabilitation process, enabling rehabilitation therapists to analyze the patient's psychological activities during training and design personalized physiological and psychological intervention plans.

Introduction

Stroke is the second leading cause of death and the third leading cause of death and disability combined in the world. 1 By 2050, the number of people dying from stroke globally is expected to increase by 50%. 2 More than 80% of patients affected by stroke experience upper-limb motor dysfunction, 3 which severely affects their daily activities, such as eating, grasping, and object placement. Mirror rehabilitation robots help improve limb function and motor control after strokes.4–7 In 2000, Stanford University, United States, proposed an upper-limb mirror rehabilitation training robot system called MIME. 8 It incorporates the PUMA560 industrial robot, which uses a six-degree-of-freedom position digitizer to detect the movement trajectory of the patient's unaffected upper-limb and then drive the affected side to perform mirror movements within a fixed spatial range at a certain speed. Various upper-limb rehabilitation robot systems integrating mirror therapy have since been rapidly developed. Cui et al. designed a method for identifying upper-limb action intentions based on a combination of inertial motion units (IMUs) and machine learning algorithms. 9 Wang et al. proposed a control framework for robot-assisted motor learning based on an adaptive frequency oscillator. 10 Lukas Tappeiner et al. developed an upper-limb mirror rehabilitation training system consisting of a cable-driven robot and a visual system. 11 Florin Covaciu et al. proposed a combined mirror-EMG robot-assisted therapy system for lower limb rehabilitation, which achieves personalized and immersive rehabilitation through the dual-modal interaction of “mirror therapy + EMG-driven training.” 7

However, the main focus of existing upper-limb mirror rehabilitation robots is patients’ physical rehabilitation, with psychological aspects receiving inadequate attention. Anxiety and depression are common psychological health issues occurring after strokes, 12 affecting not only patients’ daily mindset but also their rehabilitation progress. Stroke rehabilitation should focus not only on the body's recovery but also on the patient's psychological state, aiming for rehabilitation training that integrates emotional factors.

Emotion-optimized rehabilitation strategies for robotic systems remain in the research phase, with several studies exploring the integration of emotion analysis and affective interaction with rehabilitation protocols. For example, Ghazali et al. proposed a model for emotional states based on human electromagnetic signal information and applied it to rehabilitation robots. 13 They verified the feasibility of integrating an emotion recognition system with a rehabilitation robot platform. Pepper, developed by SoftBank Robotics, is a robot with emotional recognition and human–robot interaction capabilities. 14 Although it was not specifically designed for rehabilitation, it is often used for the cognitive rehabilitation of elderly and chronic disease patients. These preliminary studies and applications demonstrate the feasibility of integrating emotion into motor and cognitive rehabilitation robots. However, research on upper-limb rehabilitation robots that integrate emotional factors remains limited.

Recognition of body movement intentions and emotional states is a key component in mind–body integration in robot-assisted rehabilitation. Regarding movement intention recognition, Lu et al. proposed a method for predicting motion intention using deep neural networks combined with one or two IMUs, 15 but the IMU position affects model performance, and drift may occur during prolonged use. Zhang et al. proposed a dynamic adaptive neural network algorithm based on the fusion of multiple features from surface electromyography (sEMG) signals, accurately recognizing eight lower limb movements. 16 Chen et al. decoded human motion intentions using sEMG signals and proposed a hierarchical dynamic Bayesian learning network model. 17 Dong et al. proposed a technique based on the classification of motor imagery electroencephalogram (EEG) signals, achieving high-precision control of lower limb rehabilitation robots and a series of motion intention recognition methods. 18 However, the resolution of EEG limits its independent use for complex motor tasks; multimodal signal fusion is a feasible approach.

Emotional state recognition mainly relies on physiological signals, such as EEG, electrocardiogram (ECG), and sEMG signals, and methods such as machine vision and multimodal signal fusion. 19 A typical example is the emotion recognition model developed by Elif et al., which combines EEG and physiological data using multimodal signal fusion, machine learning, and deep learning for use in robot-assisted rehabilitation systems. 20 Sayed Ismail et al. built an emotion recognition model using support vector machines and a decision tree classifier and evaluated its effectiveness in emotion recognition using ECG signals and photoplethysmography (PPG). The accuracy of using ECG to recognize arousal was 68.75%, whereas the accuracy of using PPG to recognize emotional dimension categories reached 37.01%. 21 Chanel et al. fused EEG, heart rate, blood pressure, and respiration signals using naive Bayes and Fisher discriminant analysis to recognize the arousal dimension of emotions, achieving an accuracy of 50%–72%. 22

Motion intention and emotion recognition methods based on 1D sensor signals, such as IMU, EEG, and ECG signals, mainly rely on signal features for indirect estimation; however, these features are susceptible to interference, and the signal acquisition cost is high. Capturing the unaffected side's movement trajectory and recognizing facial expressions through machine vision are more intuitive and have lower acquisition costs. To address the above limitations, this paper proposes a vision-based recognition algorithm for upper-limb motion intention, along with an upper-limb mirror rehabilitation training robot system that integrates emotional factors. The patient's emotional changes during mirror rehabilitation training are monitored to determine their willingness to participate in the rehabilitation process, so that the training can be adapted to their emotional state.

The rest of this paper is structured as follows: Section 2 describes the hardware architecture design of the system. Section 3 introduces the key technologies of the system, including the motion intention recognition algorithm, emotion recognition algorithm, and rehabilitation optimization strategy. Section 4 validates the system's performance and functionality. Section 5 discusses the implications of the research findings, analyzes the limitations of the current system, and puts forward prospects for future improvement directions. Section 6 summarizes the core conclusions of the entire study, emphasizing the innovation points and practical value of the system.

System overview

This system has two key functions: recognition of motion intention and real-time emotion monitoring. First, the patient's motion intention on the unaffected side is recognized accurately. Using the mirror therapy principle, the robot system precisely replicates the unaffected limb's motion trajectory on the affected side, thereby guiding the affected side in rehabilitation training. Second, the patient's emotional state is monitored in real time. According to the patient's emotions, different rehabilitation tasks can be selected to optimize the rehabilitation strategy.

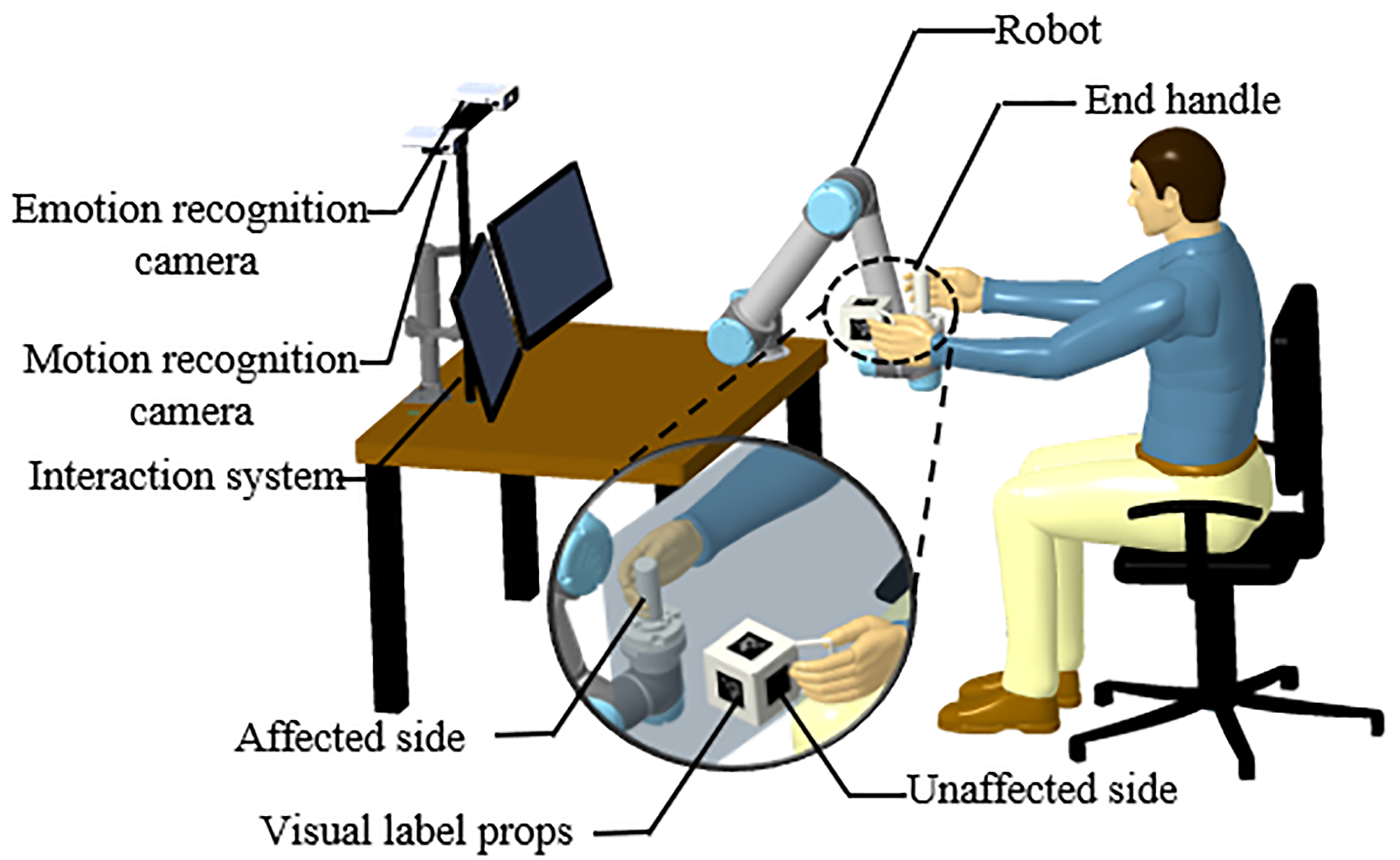

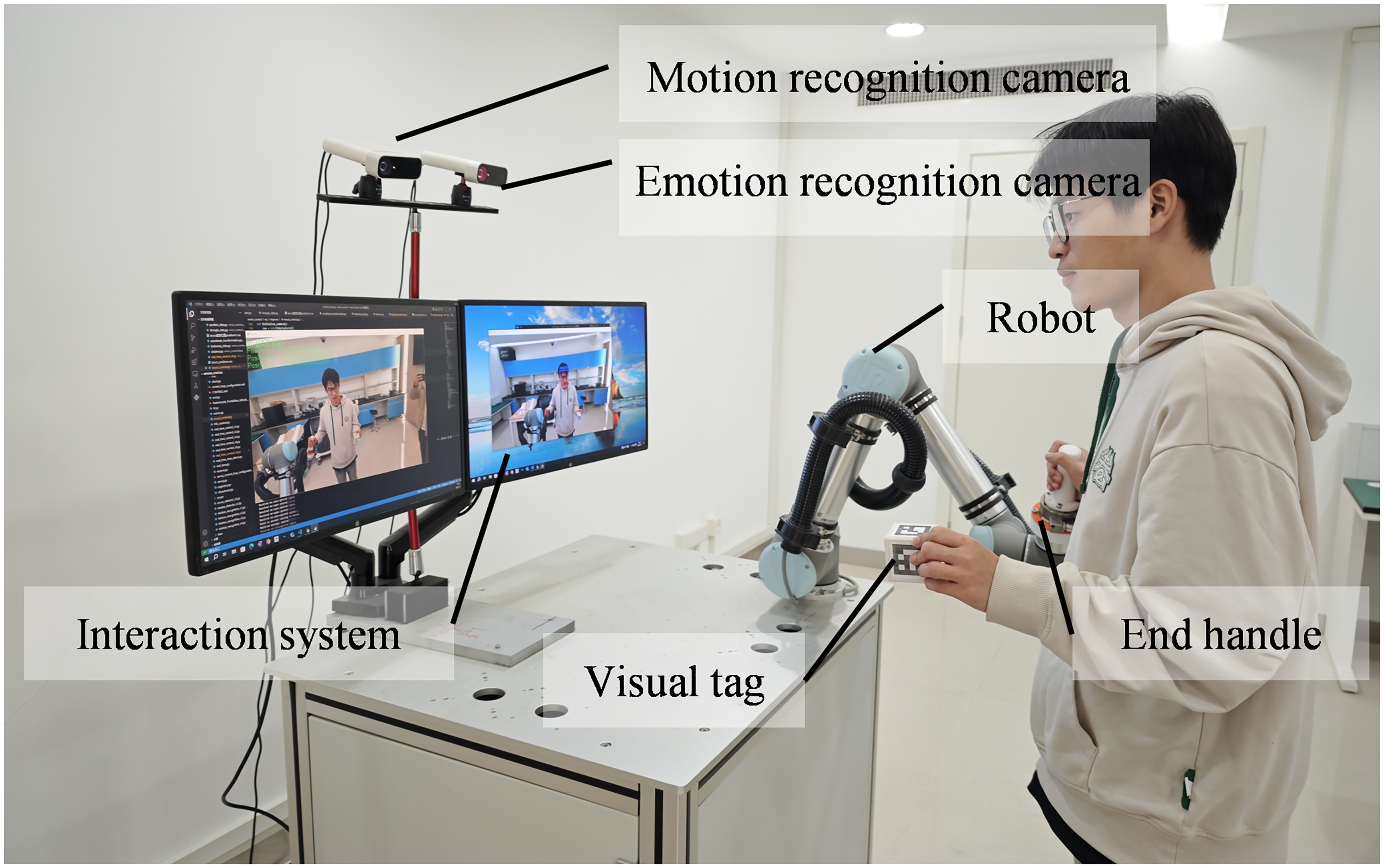

The system structure is shown in Figure 1. It mainly consists of a six-axis robotic arm, two depth cameras, and a workstation. The six-axis robotic arm is the actuator, the cameras are used for motion intention recognition and emotional state monitoring, and the workstation controls the robotic arm and analyzes the emotional states. The workstation is also used to upload the collected training information to a cloud server, allowing rehabilitation therapists to view the patient's emotional state changes and rehabilitation training data remotely.

Overall system design diagram.

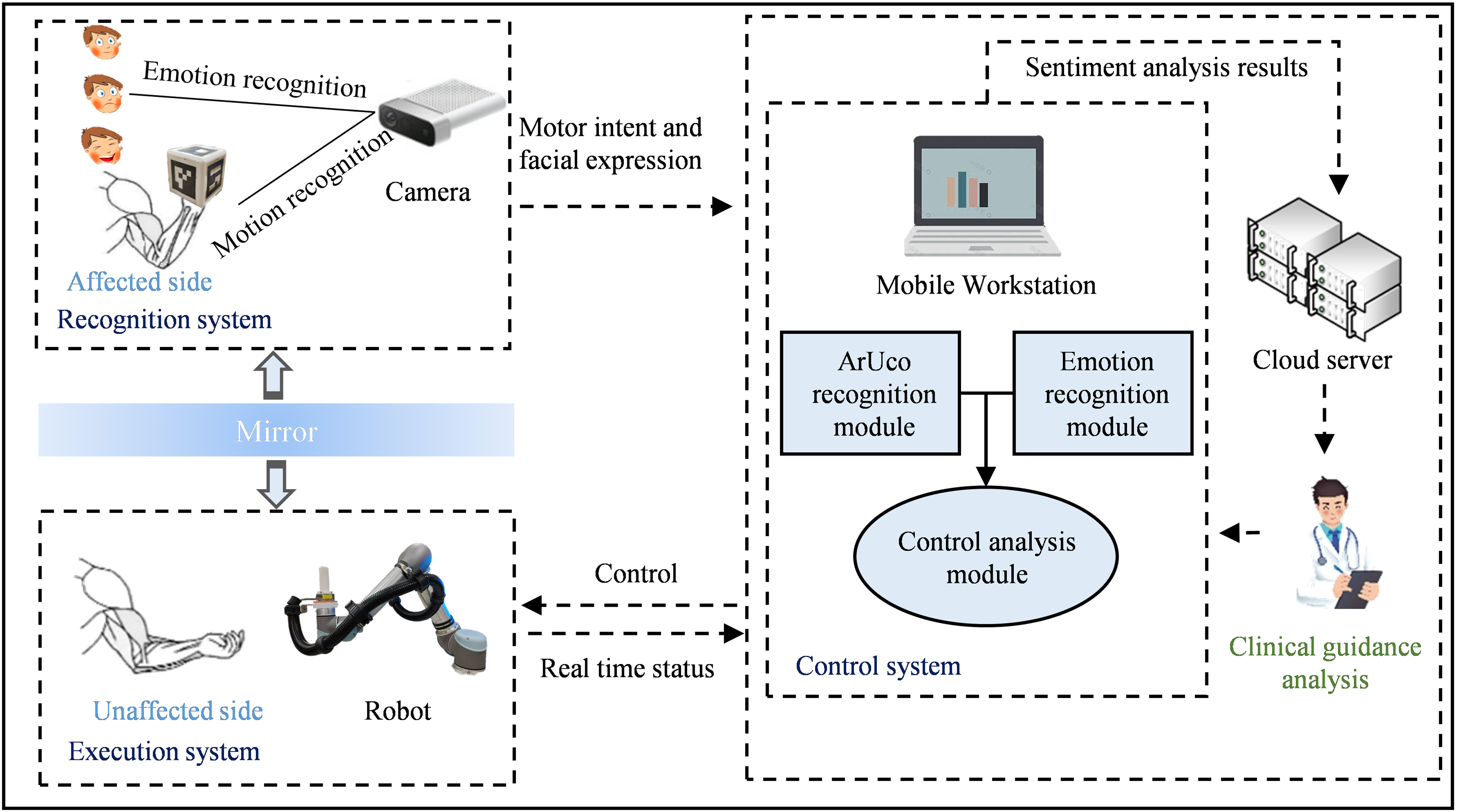

The overall working principle of the system is mainly divided into three parts: the recognition, control, and execution systems (Figure 2). The recognition system acquires the patient's motion intention on the unaffected side and a video stream of their facial expression during rehabilitation. The control system analyzes the unaffected side's motion intention and the facial emotions in the video stream, adjusts the training tasks, and sends control commands to the execution system. Finally, the execution system drives the patient's affected side to perform mirror rehabilitation training.

Conceptual diagram of the upper extremity mirror rehabilitation training.

Method

Motion intention recognition method

In mirror rehabilitation training, the motion intention of the patient's unaffected side is used as the reference for training the affected side. Here, a vision system is used to monitor the patient's upper-limb motion intention. As shown in Figure 1, the system captures the motion trajectory of the patient's unaffected side in real time as the patient holds a customized tool with visual tags in 3D space, and estimates the end effector pose through image processing.23,24 The occlusion problem caused by the rotation of the visual tag during movement is solved using a 5 cm × 5 cm × 5 cm cubic tool with corresponding visual tags on its surfaces. As long as one face of the cube is not occluded during use, our recognition algorithm can calculate the pixel coordinates of the four corner points of each visible tag, determine the tag center from them, and estimate the 3D pose using the corresponding OpenCV modules. 25 Furthermore, our rehabilitation training tools can be designed according to different rehabilitation scenarios. As long as the visual tags are attached to the tools, the motion intention of the patient's unaffected side can be detected in real time.

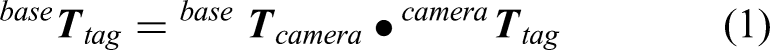

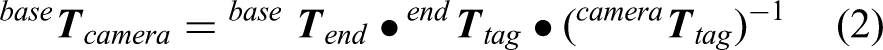

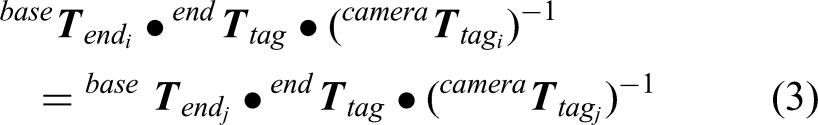

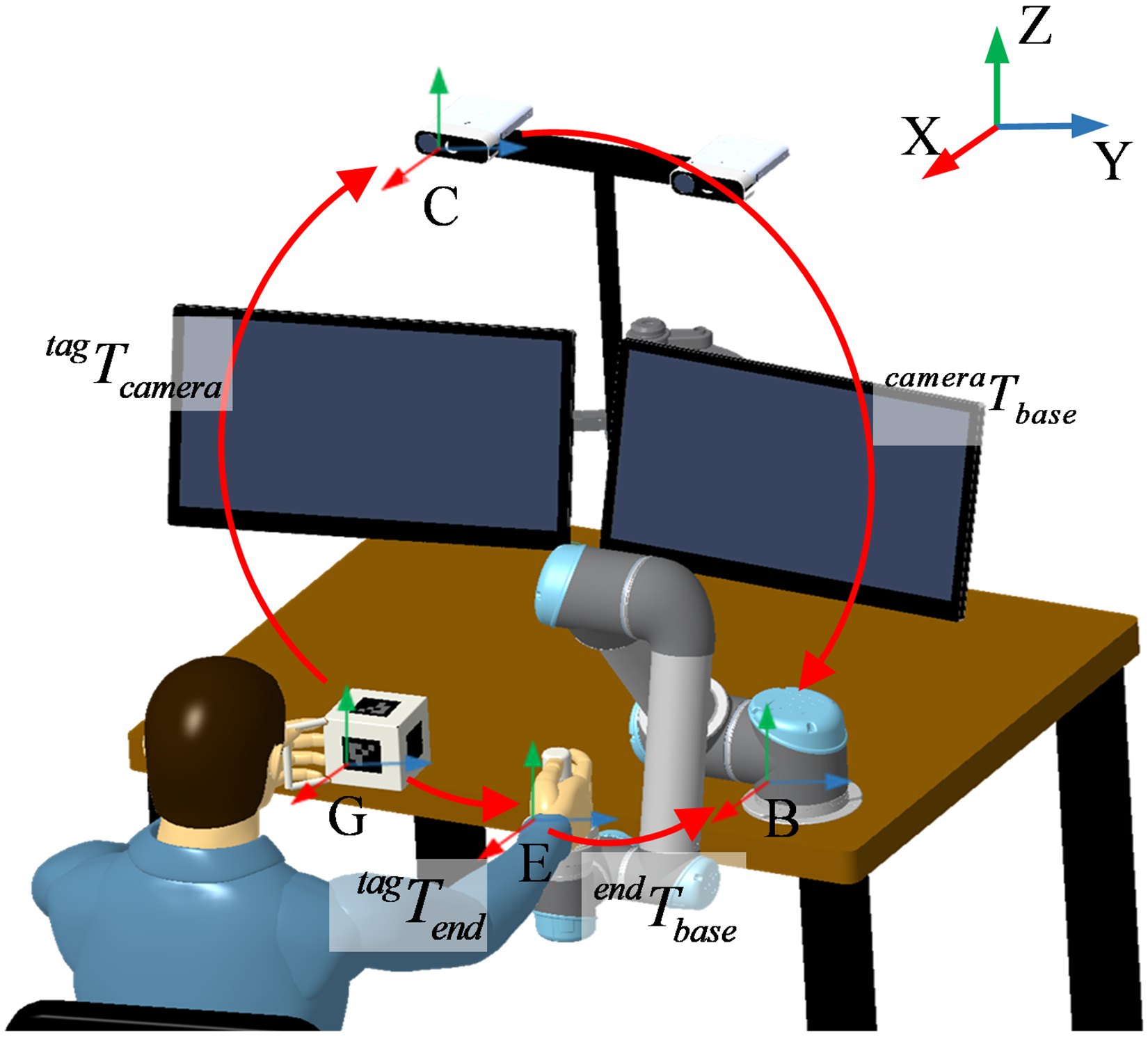

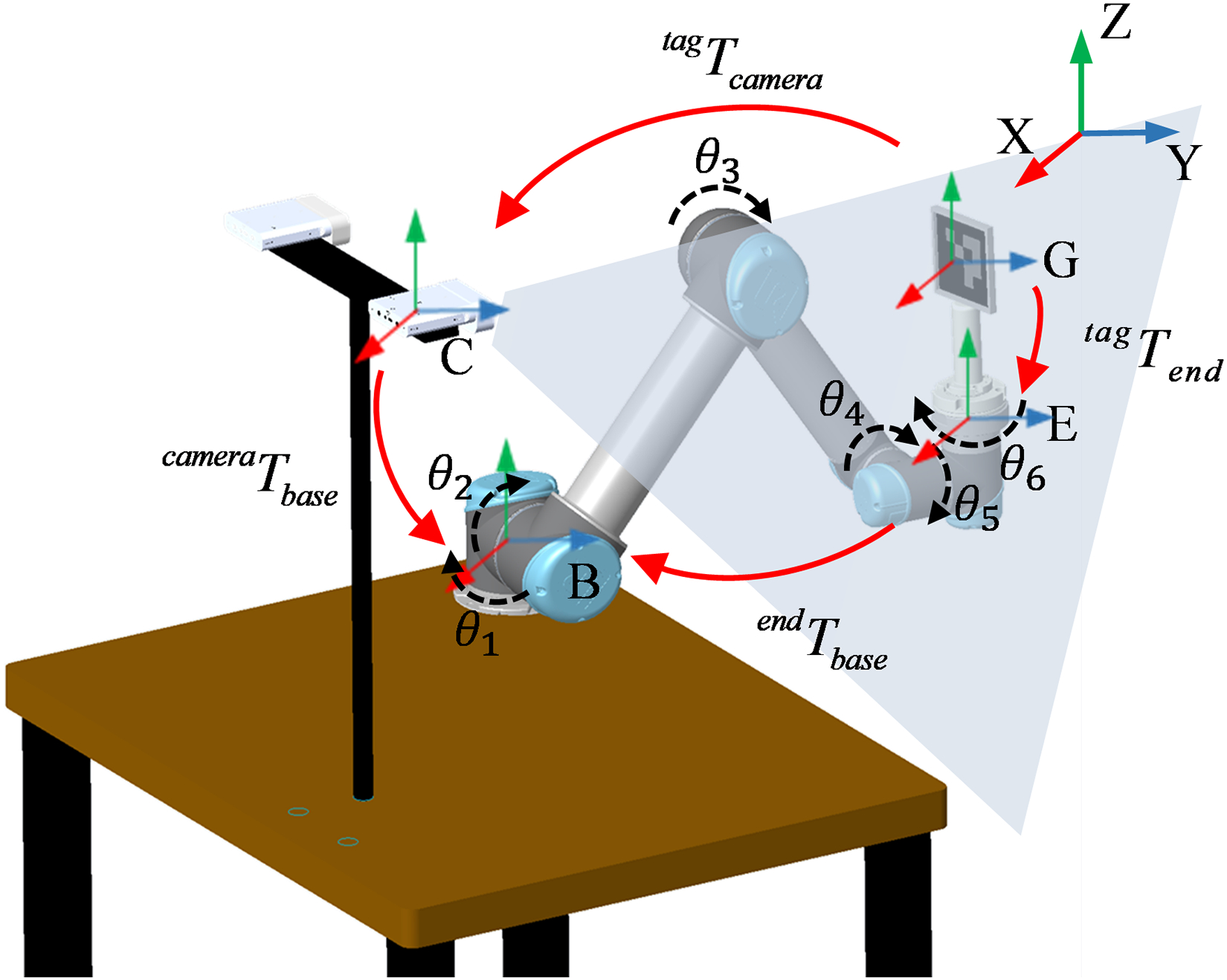

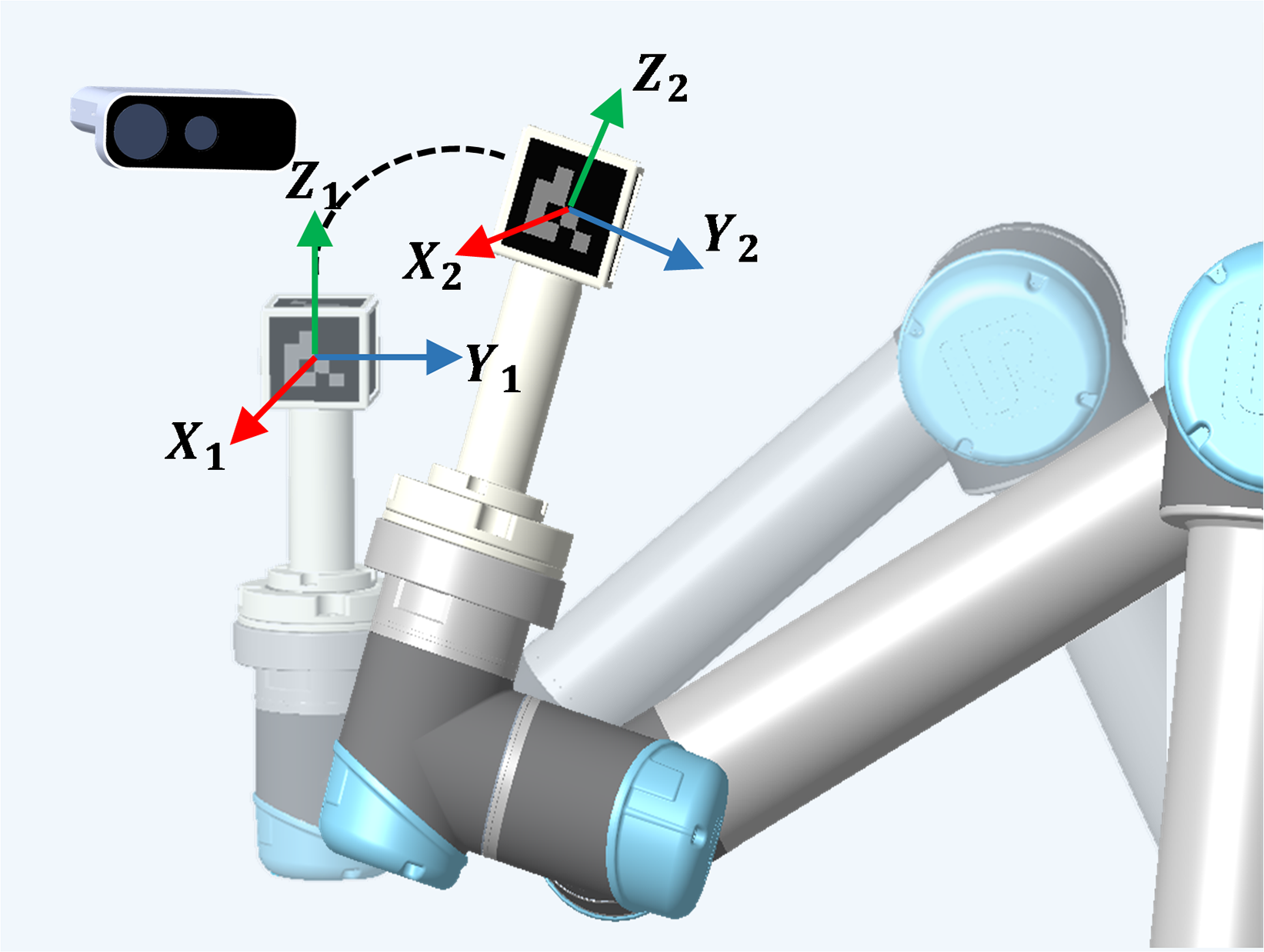

Construction of coordinate system relationship for mirror rehabilitation robot

The operation of the robotic arm is closely related to the coordinate system, which determines the position, posture, and trajectory of each joint and the end effector of the robotic arm. This system relies on the coordinate relationships shown in Figure 3, which mainly include the camera, visual tag, robotic arm base, and robotic arm end effector coordinate systems. The pose of the visual tag in the camera coordinate system is represented by the matrix

System coordinate transfer diagram.

In this study,

Diagram of hand–eye calibration coordinate relationship.

Given

Mirror trajectory following control

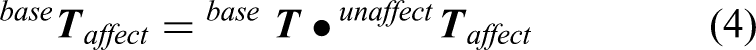

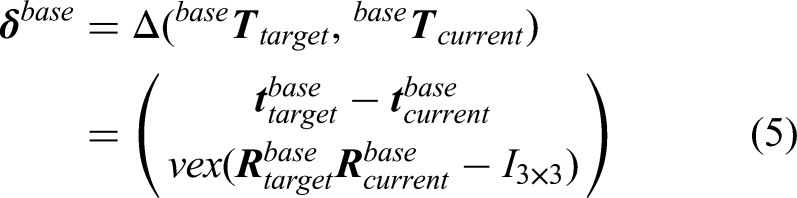

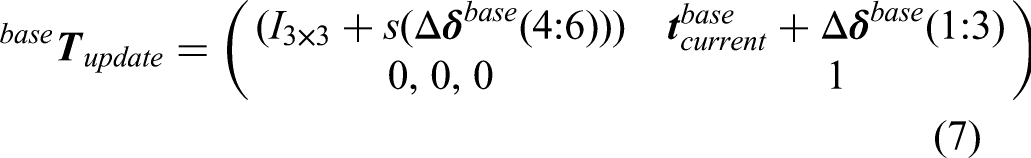

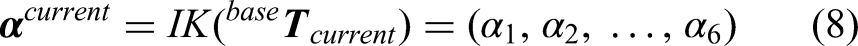

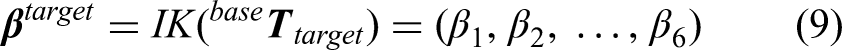

According to the above results, the system obtains the transformation matrix

After the mirror transformation is performed on the spatial pose of the unaffected side (holding the tag), it serves as the target pose

The mirrored position of the unaffected side (holding the tag in the robotic arm base) is calculated using (4).
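As an illustration of the mirror transformation, the following minimal sketch reflects a pose across a plane. It assumes, purely for illustration, that the mirror plane is x = plane_x in the robotic arm base coordinate system; the plane and the exact formulation used by the system are those of (4). The function names `matmul` and `mirror_pose` are hypothetical. The position is reflected directly, whereas the rotation is conjugated by the reflection matrix so that the result remains a proper rotation that the robotic arm can execute.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]

# Reflection across the plane x = 0 (assumed mirror plane, hypothetical choice).
MIRROR: Matrix = [[-1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 3x3 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def mirror_pose(rotation: Matrix, position: Vector,
                plane_x: float = 0.0) -> Tuple[Matrix, Vector]:
    """Reflect a pose (rotation, position) across the plane x = plane_x.

    The rotation is computed as M R M, which keeps determinant +1,
    so the mirrored orientation is still a valid rotation.
    """
    mirrored_rotation = matmul(matmul(MIRROR, rotation), MIRROR)
    mirrored_position = [2.0 * plane_x - position[0], position[1], position[2]]
    return mirrored_rotation, mirrored_position
```

With this convention, a 90° rotation about the z axis on the unaffected side becomes a −90° rotation about the z axis on the affected side, which matches the intuition of a mirrored wrist turn.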

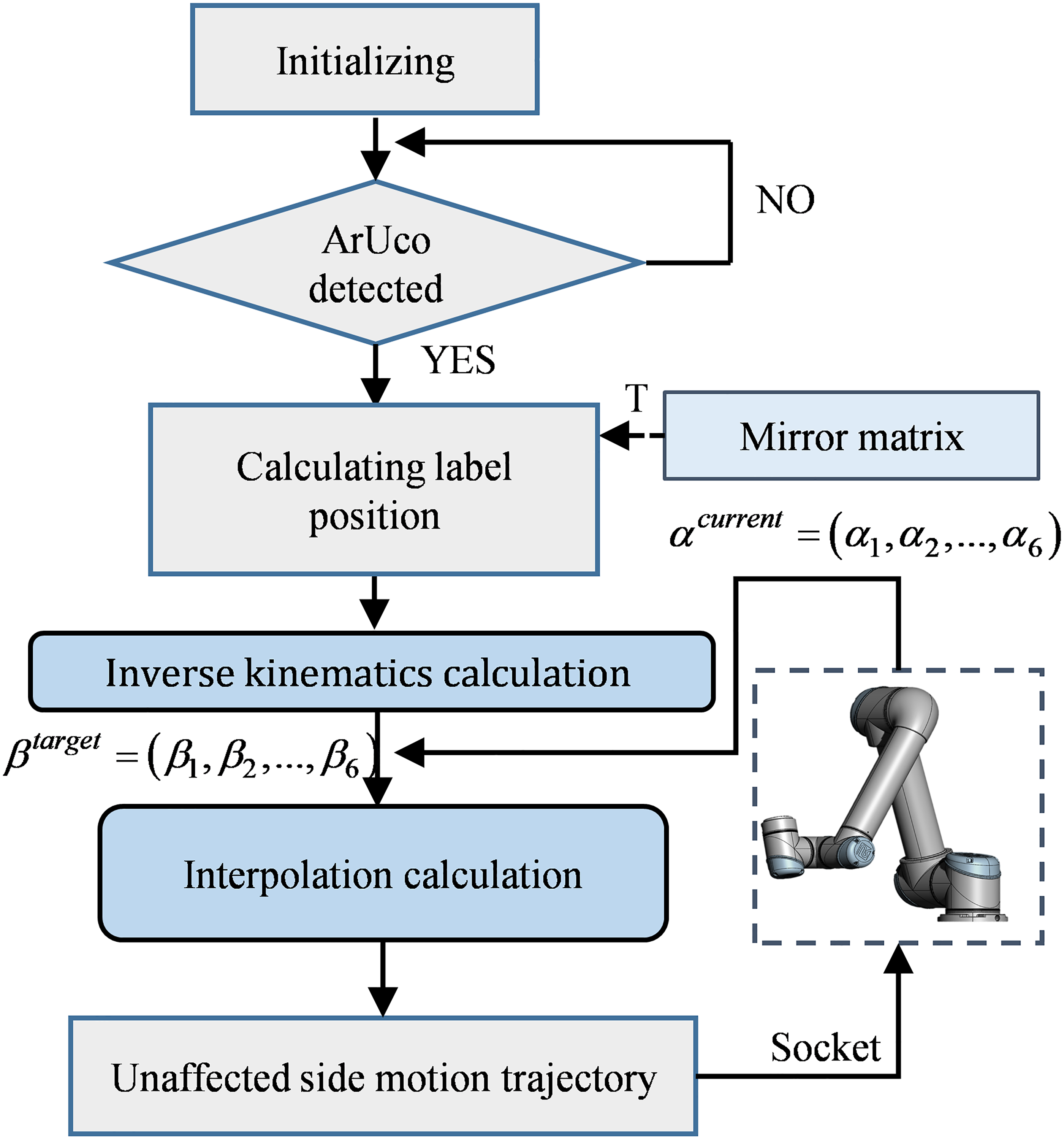

The robotic arm's mirror-following control is implemented as follows:

The pose of the unaffected side (holding the tag in the robotic arm base coordinate system)

The deviation vector

The incremental control step size is denoted as λ, which is then used to compute the next control target increment.

Once the next control target increment is known, the robotic arm's target position is updated using (7).

Interpolation planning is performed between

Finally, the system dynamically updates the control variable

Mirror rehabilitation control framework.
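The incremental following steps can be sketched as below. This is a simplified, position-only illustration under stated assumptions: the deviation is taken as the difference between the mirrored target position and the current position, the increment is the deviation scaled by the step size λ (named `step` here), and linear interpolation is used between consecutive targets. The function names, the tolerance, and the choice of linear interpolation are hypothetical and may differ from the system's implementation.

```python
import math
from typing import List


def next_target(current: List[float], target: List[float],
                step: float, tolerance: float = 1e-3) -> List[float]:
    """Return the next control target, moving a fraction `step` toward `target`.

    deviation = target - current; increment = step * deviation (0 < step <= 1).
    If the deviation norm is below `tolerance`, the current position is kept.
    """
    deviation = [t - c for t, c in zip(target, current)]
    if math.sqrt(sum(d * d for d in deviation)) < tolerance:
        return list(current)
    return [c + step * d for c, d in zip(current, deviation)]


def interpolate(start: List[float], end: List[float],
                segments: int) -> List[List[float]]:
    """Linearly interpolated waypoints from `start` to `end` (start excluded)."""
    return [[s + (e - s) * i / segments for s, e in zip(start, end)]
            for i in range(1, segments + 1)]
```

A smaller step size gives smoother but slower following, whereas a step size of 1 jumps directly to the mirrored target; the appropriate value depends on the patient and the task.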

Patient emotional state recognition algorithm

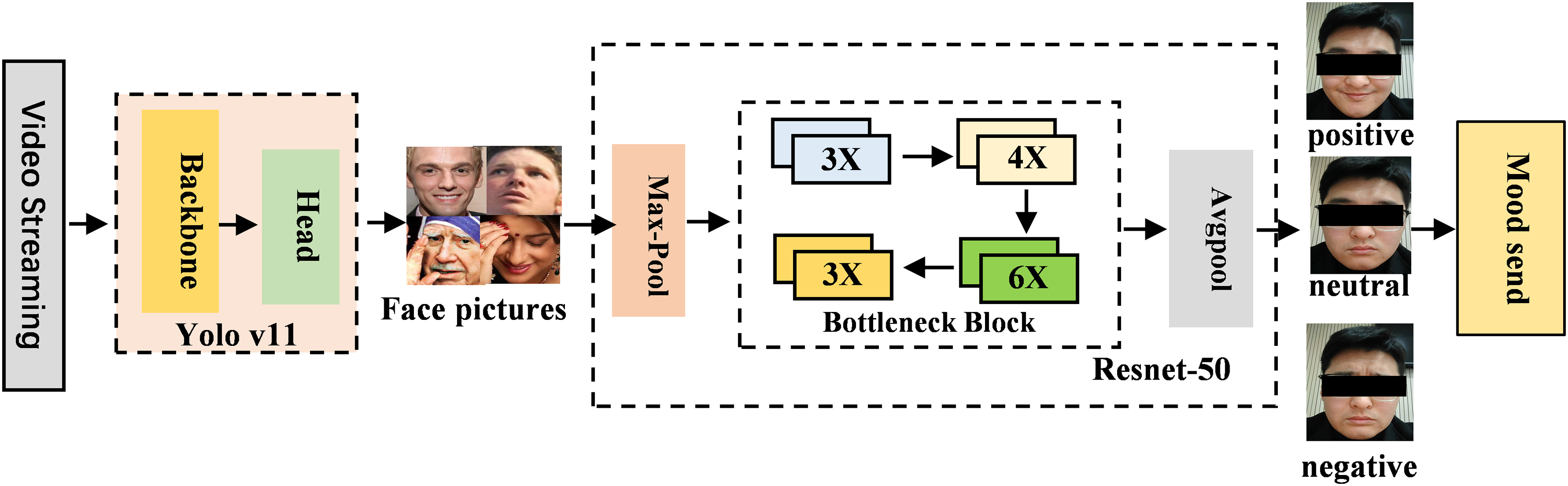

This system combines the Yolo_V11n (nano version) network model31,32 with the ResNet_50 network model 33 to recognize the patient's emotional state during rehabilitation based on their facial expressions. We adopted the official implementation of Yolo_V11n released by Ultralytics, with the specific version specified as Ultralytics Yolo_V11n v8.3.0. Yolo_V11n is commonly used for object detection but can be applied to face detection tasks. It not only recognizes faces in images but also estimates poses, such as facial orientation, making it highly suitable for emotional state recognition in this study. In this research, we convert the bounding box annotations from the WIDER_FACE dataset 34 from absolute pixel coordinates to the relative coordinate format required by the Yolo_V11n model. Specifically, we compute and transform each bounding box's center coordinates and dimensions into proportions relative to the image's width and height. The converted label files adhere to the Yolo_V11n format and are saved alongside the image files, providing a standardized dataset for the subsequent object detection task. ResNet_50 is widely used for image classification. It excellently recognizes object categories in images and can be used for facial feature extraction, capturing subtle differences in facial images. Therefore, it is appropriate for predicting emotional states based on facial expressions in this study.
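The label conversion described above can be sketched as follows. The sketch assumes that each WIDER_FACE bounding box is given as the top-left corner (x, y) plus width and height in pixels, and that faces are assigned class index 0; the function name `wider_to_yolo` is hypothetical.

```python
def wider_to_yolo(x: float, y: float, w: float, h: float,
                  img_w: int, img_h: int, class_id: int = 0) -> str:
    """Convert an absolute-pixel box (top-left x, y, width, height) into a
    YOLO label line: class, center x, center y, width, height, all relative
    to the image size."""
    cx = (x + w / 2) / img_w
    cy = (y + h / 2) / img_h
    return f"{class_id} {cx:.6f} {cy:.6f} {w / img_w:.6f} {h / img_h:.6f}"


# Example: a 200 x 100 px face box at (100, 50) in a 1000 x 500 px image.
print(wider_to_yolo(100, 50, 200, 100, 1000, 500))
```

Each image then receives one text file containing one such line per annotated face.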

The emotion recognition process is shown in Figure 6. First, the video stream captured by the camera is input into the program, where the trained Yolo_V11n model detects and labels the face in the video. Then, the labeled face image is fed into the trained ResNet

Architecture of emotion recognition program.

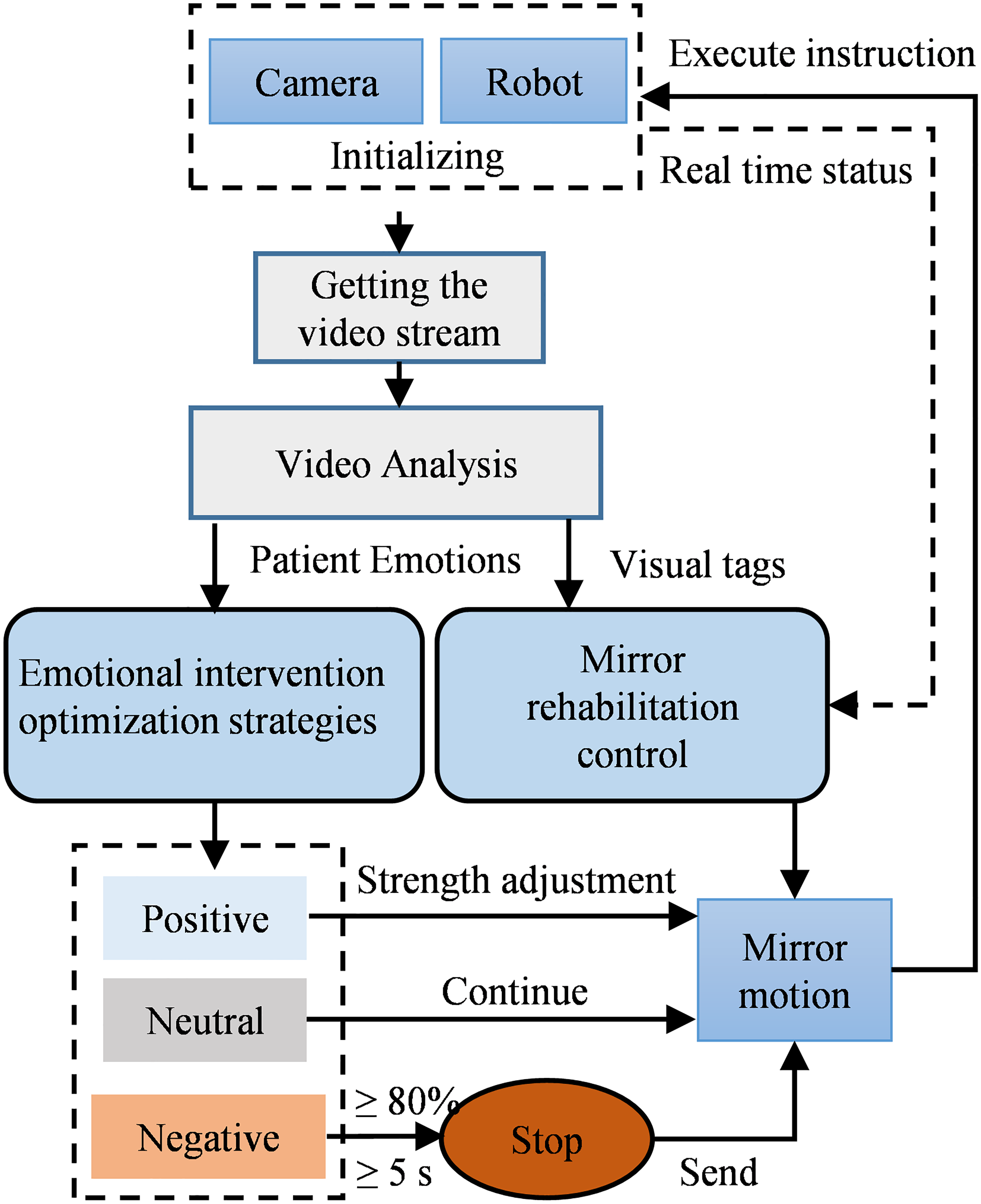

Rehabilitation optimization strategy integrating emotional factors

The patient's emotions influence their level of participation and effort in rehabilitation tasks, thus affecting the effectiveness and progress of rehabilitation. A patient who is in a positive, optimistic state will be more willing to participate in rehabilitation training and less likely to develop resistance. However, a patient in a negative state will exhibit low cooperation in rehabilitation tasks and be more likely to develop resistance. Therefore, this study focuses on ensuring patient safety during rehabilitation, and an initial attempt is made at integrating emotional factors into rehabilitation optimization strategies. However, extensive clinical exploration and practice are required for the rational, effective integration of emotions into rehabilitation optimization.

Three emotional states, namely, positive, neutral, and negative, are used as conditions in this study, with each emotion corresponding to a unique adjustment strategy. If the patient is in a positive emotional state, then the system will appropriately increase the training intensity or duration. If the patient is in a neutral state, then the system will continue the established training plan to ensure rehabilitation effectiveness. If the system detects negative emotions lasting more than 5 s or accounting for over 80% of the emotional state within 10 s, it will immediately stop the training and issue a warning message. Figure 7 illustrates the control logic through which the system exerts a key role in rehabilitation optimization by incorporating emotional factors, thereby providing patients with a safer, more efficient, and personalized rehabilitation experience.

Logic diagram of emotional control strategies.
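The decision rule can be illustrated with the following minimal sketch. It assumes that one emotion label arrives per video sample at a fixed rate (8.33 Hz is used as the default), that the 80% criterion is evaluated only once a full 10 s window is available, and that the most recent label decides between increasing and continuing the training. The class name `EmotionStrategy` and the returned action strings are hypothetical.

```python
from collections import deque


class EmotionStrategy:
    """Map a stream of emotion labels to a training action."""

    def __init__(self, sample_rate_hz: float = 8.33, streak_s: float = 5.0,
                 window_s: float = 10.0, ratio: float = 0.8) -> None:
        # Number of consecutive negative samples that corresponds to 5 s.
        self.streak_limit = int(streak_s * sample_rate_hz)
        # Sliding window holding the labels of the last 10 s.
        self.window = deque(maxlen=int(window_s * sample_rate_hz))
        self.ratio = ratio
        self.streak = 0

    def update(self, label: str) -> str:
        """Feed one label ('positive', 'neutral', or 'negative')."""
        self.window.append(label)
        self.streak = self.streak + 1 if label == "negative" else 0
        window_full = len(self.window) == self.window.maxlen
        negative_share = self.window.count("negative") / len(self.window)
        if self.streak > self.streak_limit or (
                window_full and negative_share > self.ratio):
            return "stop"      # stop training and issue a warning
        if label == "positive":
            return "increase"  # raise intensity or duration
        return "continue"      # keep the established plan
```

In practice, the thresholds would be exposed to the therapist so that they can be tuned for each patient.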

In addition, we have implemented two supplementary safety measures. First, an emergency stop button is installed. In the event of a potential hazard during training, the therapist can press the emergency stop button promptly to shut down the system immediately. Furthermore, the end-effector handle is designed to be detachable at any time, allowing the patient to disengage from the system quickly. Second, a safety force threshold is preset in the system, with an initial value of 40 N, which can be adjusted according to each patient's condition. If the patient's resistance force exceeds this threshold, the system will trigger an emergency shutdown. Moreover, throughout the entire training process, a therapist is assigned to provide full-time supervision to prevent hazardous situations. These measures help prevent potential risks caused by emotional fluctuations.

Result

Prototype construction

As shown in Figure 8, the system uses the six-axis robotic arm UR5 (Universal Robots) as the actuator. The depth-sensing sensor Azure Kinect DK (Microsoft) is used as the video frame acquisition device for the motion intention and emotion recognition programs. The video stream is transmitted to a mobile workstation, which communicates with the actuator through a router. The mobile workstation also uploads patient training data and emotional state information during rehabilitation to the server, allowing rehabilitation therapists to access various data, such as emotional changes and rehabilitation progress.

Prototype of overall system.

Performance validation

Motion intention recognition accuracy test

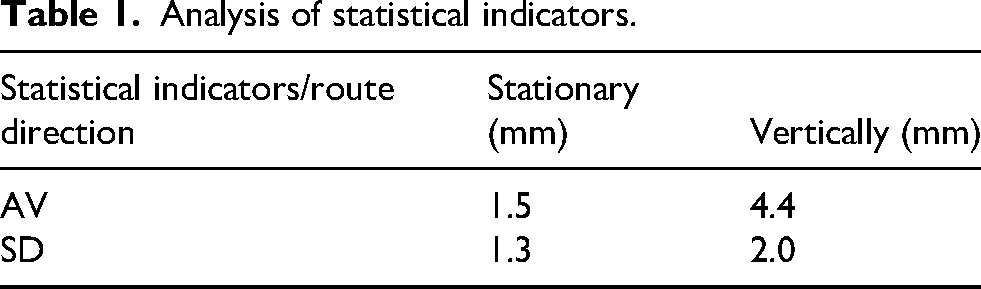

To assess the performance of our visual tag recognition system, we install the visual tag on the end effector of the robotic arm, record 120 data points of the visual tag's position in a stationary state, and analyze them. As shown in Figure 9, the system is then set to perform periodic repetitive motion along a closed rectangular path with a preset width of 200 mm and a length of 250 mm. Using the depth camera as the recognition tool and our motion intention recognition method, we calculate and record the coordinates of the tag center in the camera coordinate system at four fixed key points per motion cycle. This process covers 35 complete motion cycles, with a total of 140 key points of data collected. Subsequently, we calculate the distances between adjacent key points in the lateral and longitudinal directions for each cycle.

Motion intention recognition accuracy test.

Within the preset experimental range, the recognition program's average error of position recognition in the static state is 1.5 mm. During motion, the average error of motion recognition per path segment is 4.4 mm (Table 1). Therefore, the accuracy of visual tag recognition meets the technical requirements of this study.

Analysis of statistical indicators.
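One way to compute the per-segment error from the four key points of a cycle is sketched below. It assumes that the key points are ordered around the rectangle, starting with a 200 mm side, and that the error of a segment is the absolute difference between the measured and preset side lengths; the function names are hypothetical, and the exact error definition used for Table 1 may differ.

```python
import math
from typing import List, Sequence


def segment_errors(corners: Sequence[Sequence[float]],
                   width: float = 200.0, length: float = 250.0) -> List[float]:
    """Absolute side-length errors (mm) for one cycle of four key points."""
    nominal = [width, length, width, length]
    errors = []
    for i in range(4):
        measured = math.dist(corners[i], corners[(i + 1) % 4])
        errors.append(abs(measured - nominal[i]))
    return errors


def mean_segment_error(cycles: Sequence[Sequence[Sequence[float]]]) -> float:
    """Average segment error (mm) over all cycles."""
    all_errors = [e for cycle in cycles for e in segment_errors(cycle)]
    return sum(all_errors) / len(all_errors)
```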

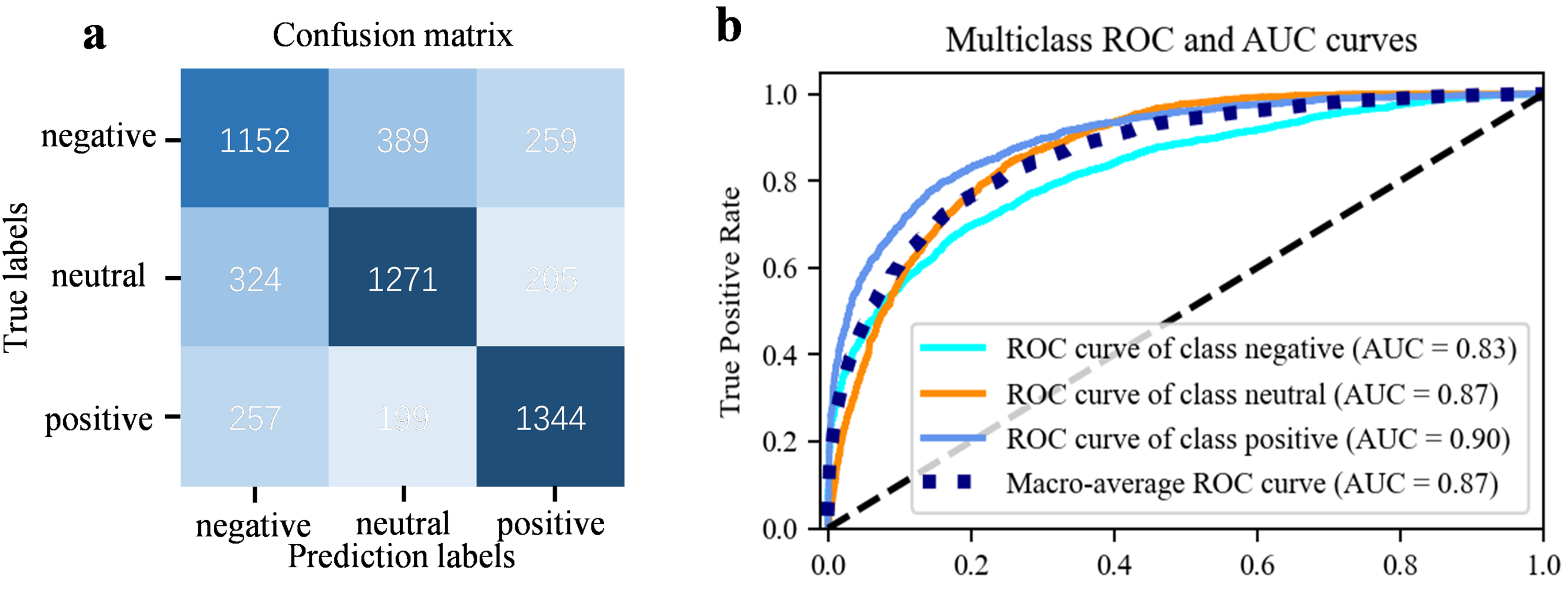

Emotion recognition validity test

For the training of the emotion recognition model in this study, the public WIDER FACE dataset 34 and preprocessed AffectNet dataset 35 were adopted as the training data for face detection and emotion recognition tasks, respectively. For the Yolo_V11n model, the training process was configured with 50 epochs and a batch size of 32, and the SGD optimizer was employed to enhance convergence efficiency, utilizing an initial learning rate of 0.01 and a momentum of 0.9. Prior to the training of the ResNet_50 model, preprocessing operations on the dataset had been completed in advance. Specifically, the eight-class facial images in the AffectNet dataset are reclassified: happiness and surprise as positive emotions; sadness, anger, disgust, fear, and contempt as negative emotions; and neutral as a neutral emotion. Each category contains 12,000 images, which serve as the training data for the model. The ResNet_50 model was trained for 40 epochs using a batch size of 8. The training employed the SGD optimizer with an initial learning rate of 0.001 and a momentum of 0.9. The training results show an accuracy of 70%; Figure 10 shows the confusion matrix of the model and the multiclass receiver operating characteristic (ROC) and area under the ROC curve.
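The regrouping of the eight AffectNet categories into three classes can be sketched as follows. The mapping follows the description above; the balancing step, which draws a fixed number of images per class by random sampling, is an assumption, since the selection procedure for the 12,000 images per category is not specified. The names `EMOTION_TO_GROUP` and `regroup_and_balance` are hypothetical.

```python
import random
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Eight-class labels mapped to the three classes used in this study.
EMOTION_TO_GROUP = {
    "happiness": "positive", "surprise": "positive",
    "sadness": "negative", "anger": "negative", "disgust": "negative",
    "fear": "negative", "contempt": "negative",
    "neutral": "neutral",
}


def regroup_and_balance(samples: Iterable[Tuple[str, str]],
                        per_class: int, seed: int = 0) -> Dict[str, List[str]]:
    """Group (image_path, emotion) pairs into three classes and draw at most
    `per_class` images per class (random sampling is an assumed choice)."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for path, emotion in samples:
        grouped[EMOTION_TO_GROUP[emotion]].append(path)
    rng = random.Random(seed)
    return {group: rng.sample(paths, min(per_class, len(paths)))
            for group, paths in grouped.items()}
```

Calling `regroup_and_balance(samples, per_class=12000)` would yield the class-balanced training set described above, provided that each class contains at least 12,000 images.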

(a) Confusion matrix of the ResNet50-based emotion recognition model, and (b) the multiclass ROC curves and corresponding AUC values.

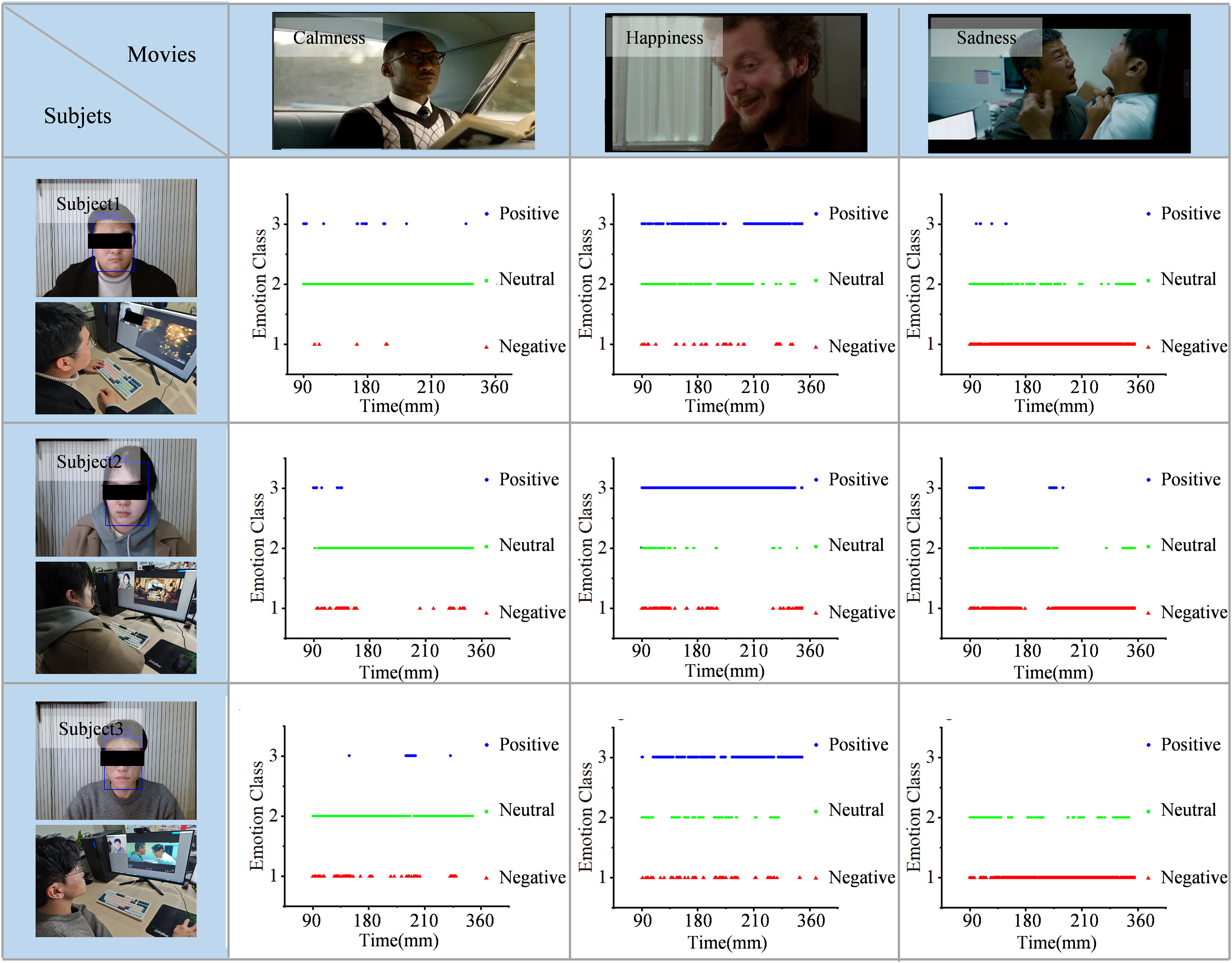

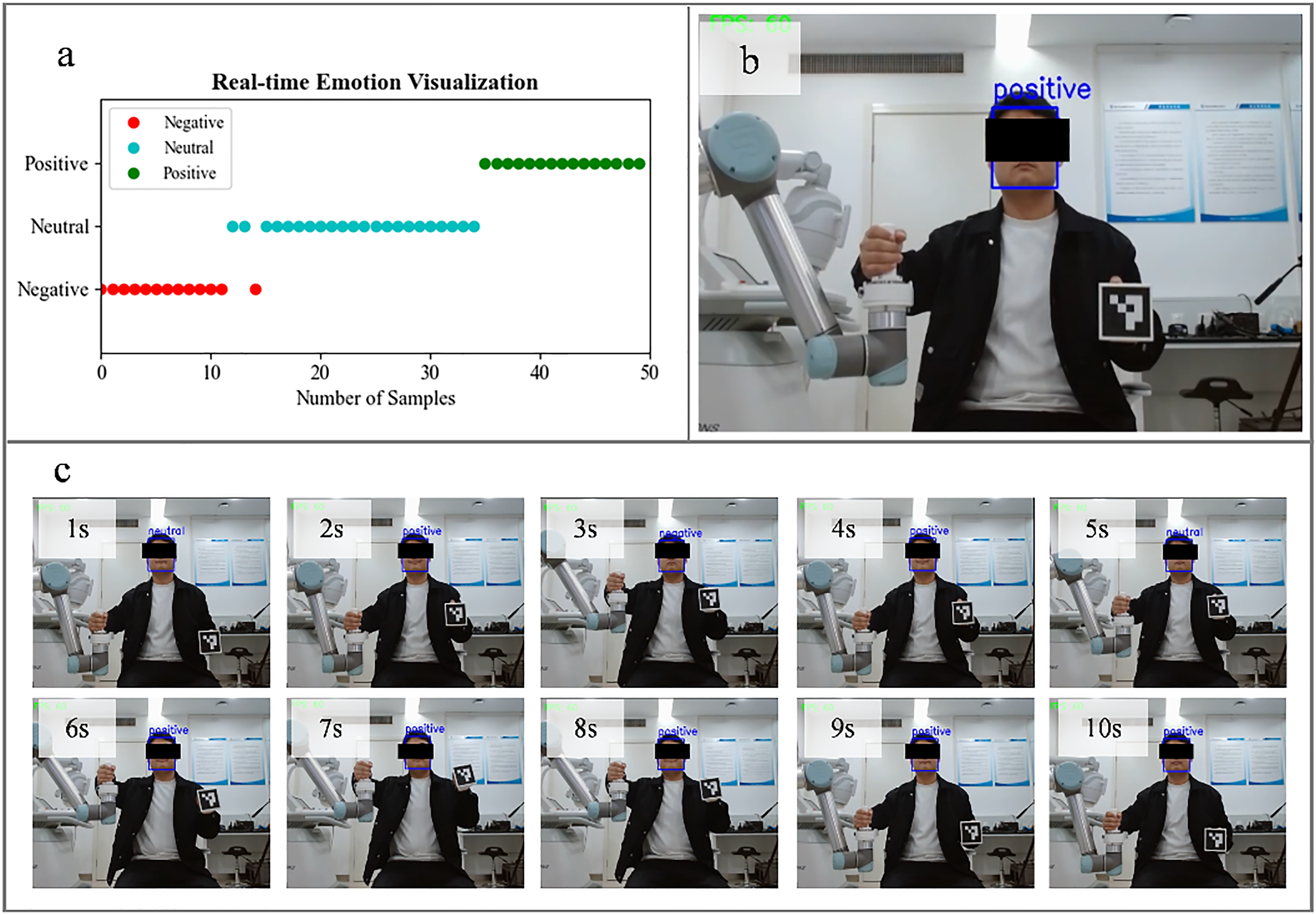

To validate the effectiveness of the emotion recognition algorithm in this study, we edited movie clips with three different emotional tones (calmness, sadness, and happiness) from the films Green Book, Manslaughter, and Home Alone, each approximately 6 min long. Three participants in a calm state watched the three movie clips while wearing headphones. Meanwhile, our emotion recognition system analyzed and recorded their emotional states at a sampling frequency of 8.33 Hz during the viewing process, and the emotional fluctuations of the three participants were then compared to validate the program's effectiveness. The results (Figure 11) indicate that the emotional fluctuations of the participants while watching the three movie clips match the emotional tones of the clips, with the algorithm performing particularly well in analyzing negative emotions.

Emotion recognition feasibility analysis for watching different movie clips. The rows show the emotional changes of three subjects watching movie clips with three different emotional tones.

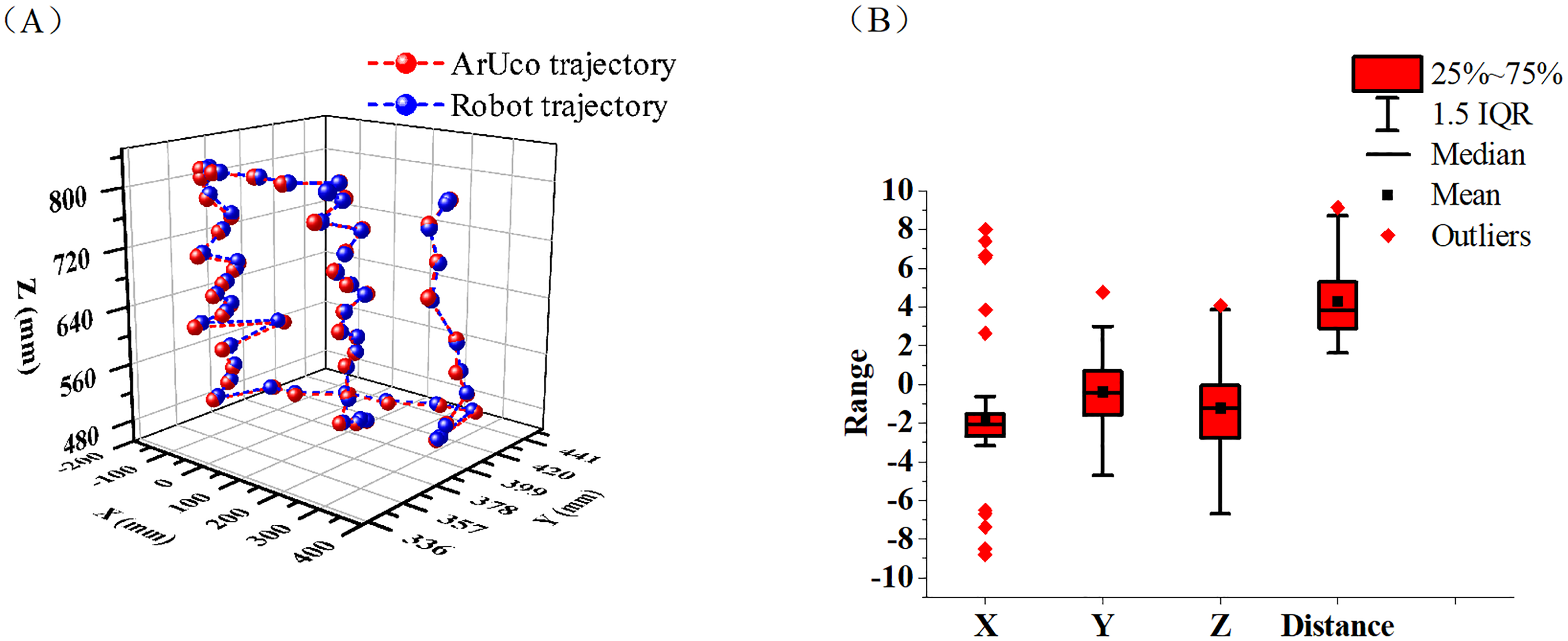

Robotic arm trajectory mirror tracking accuracy test

To test the trajectory tracking accuracy of the robotic arm, we place the visual tag tool on a stand and move it in space, with the robotic arm following the movement in real time. The system recognizes and records the motion trajectory of the visual tag at a sampling rate of 60 Hz. The mirrored trajectory of the tag over time is then obtained through hand–eye calibration and the mirror transformation matrix. Figure 12 compares the trajectory of the visual tag with the robotic arm's tracking trajectory and presents the statistical analysis of the tracking error.

(a) Comparison graph between prop motion trajectory and tracking trajectory of end of robotic arm after origin alignment. (b) Result of statistical analysis of tracking error.

In the selected area of this experiment, the average distance error of the robotic arm's trajectory tracking is 4.3 mm, with a standard deviation of 1.9 mm (Table 2). Therefore, the robotic arm's tracking precision in this system roughly meets the accuracy requirements for the rehabilitation training outlined in this study.

Statistical indicators of X, Y, and Z axis tracking errors for props and robotic arm ends.
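The distance error statistics can be computed along the following lines. The sketch assumes that the two trajectories are sampled at the same instants, that origin alignment means subtracting each trajectory's first point, and that the population standard deviation is reported; the function name `tracking_error_stats` is hypothetical.

```python
import math
import statistics
from typing import Sequence, Tuple


def tracking_error_stats(reference: Sequence[Sequence[float]],
                         tracked: Sequence[Sequence[float]]
                         ) -> Tuple[float, float]:
    """Mean and standard deviation of the point-wise distance between two
    trajectories after aligning their origins (first samples)."""
    ref0, trk0 = reference[0], tracked[0]
    errors = []
    for p, q in zip(reference, tracked):
        p_aligned = [a - b for a, b in zip(p, ref0)]
        q_aligned = [a - b for a, b in zip(q, trk0)]
        errors.append(math.dist(p_aligned, q_aligned))
    return statistics.mean(errors), statistics.pstdev(errors)
```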

Functional validation

In order to verify whether the system meets the expected standards, we invited three adults as subjects to conduct functional verification of the system. During the trial, the subjects’ limb movements and emotional states during training were recorded via cameras. Results within a 10-s window were extracted and presented. In this process, to test the feasibility of emotional state recognition during system operation, we required the subjects to intentionally exhibit three types of emotions (negative, neutral, and positive) in sequence. Before the experiment began, the participants were informed of the specific functions of this system and signed informed consent documents. This study has passed the review of the Ethics Committee of Nanjing Qixia District Hospital (Report No. 20220609), and the Ethics Committee approval date is June 9, 2022. Figure 13 shows the system assisting patients with rehabilitation training while its emotion recognition module analyzes, displays, and records the patients’ real-time emotional states. Findings suggest that this system can aid in robot-assisted mirror rehabilitation training while monitoring the subject's facial expressions and implementing emotion-based intervention during training, ensuring patient safety throughout the activity. This result indicates the initial success of our approach in integrating emotional factors into upper-limb mirror rehabilitation training.

System function verification: (a) Real-time visualization of emotional states during training; (b) backend display of subjects’ training videos; and (c) overview of upper-limb rehabilitation tasks performed by subjects in 1–10 s.

Discussion

This paper proposes an upper-limb mirror rehabilitation training robotic system integrated with emotional factors and verifies its performance and feasibility. The system monitors patients’ emotional states while providing robot-assisted rehabilitation training support. Specifically, machine vision is adopted to track the motion trajectory of the patient's unaffected limb, and facial expression analysis is implemented to assess emotional state. The emotion recognition module consists of two sequential steps: first, Yolo_V11n is used to detect and localize human faces in video streams, which is a well-established and mature technology; second, ResNet_50 is employed to analyze the detected facial regions and determine the corresponding emotional states, which reduces the interference of environmental factors on the recognition results. Through the above mechanisms, the system achieves upper-limb mirror rehabilitation training that incorporates emotional factor regulation.

This study is a first attempt to incorporate emotional factors into robot-assisted upper-limb mirror rehabilitation by using machine vision to capture motion intentions and emotional states, thereby realizing a closed-loop intervention mode of “motion intention capture – real-time emotional state monitoring – dynamic adaptation of rehabilitation strategies.” This design goes beyond traditional rehabilitation robots, which focus only on motor function recovery. The system can not only respond accurately to the patient's movement instructions but also adjust training or trigger safety protection mechanisms according to emotional fluctuations. In terms of safety assurance, the system has established a triple protection mechanism, which includes an emergency stop button, a personalized safety force threshold, and an emotion-driven emergency shutdown measure, forming a comprehensive safety barrier for patients during training.

It is worth noting that the determined motion intentions and emotional states can only partially reflect the true thoughts of patients. Physiological signals, such as IMU, EEG, and ECG signals, can reflect a patient's motion intention and emotional state,16–18 but relying on a single modality may compromise accuracy and make precise recognition difficult. Therefore, multimodal fusion techniques for motion intention and emotional state recognition should be explored for accurate identification.

Performance and functional verification results demonstrate that the system's motion intention recognition and emotion recognition accuracy meet the expected standards and can satisfy the requirements of routine upper-limb rehabilitation training. Notably, integrating emotional factors into the rehabilitation process and optimizing interventions based on emotions is a complex and challenging research direction, and the research team is still in the preliminary exploration stage of this field. Therefore, in the initial phase of the research, we completed the system's functional verification by recruiting healthy subjects. The core goal was to verify the feasibility and safety of the system's core technologies, laying a foundation for their subsequent clinical translation. Meanwhile, recognizing the critical significance of clinical verification, the research group has initiated cooperation with relevant clinical institutions to prepare clinical trials and has completed the safety and performance tests conducted by third-party institutions. At this stage, we are recruiting clinical subjects among patients with upper-limb hemiplegia after stroke, aiming to evaluate the system's practical effectiveness in real clinical scenarios.

In future research, we will further integrate multimodal physiological data to improve the accuracy of emotion analysis and will supplement the clinical trial data to continuously enhance the clinical value and translational potential of the research results. In addition, we will iteratively upgrade the emotion intervention optimization strategy in response to the practical problems of clinical diagnosis and treatment, better adapting the technology to clinical needs.

Conclusion

This paper proposes an upper-limb mirror rehabilitation training robot system that integrates emotional factors. The system uses machine vision to recognize the patient's emotional state and motion trajectory on the unaffected side while incorporating emotional factors into the rehabilitation process to optimize their rehabilitation experience. The patient's facial expressions and motion trajectory on the unaffected side are visually observed using this system. By analyzing emotional states through the system's emotion recognition algorithm as a monitoring indicator, rehabilitation therapists can identify and analyze the patient's psychological state and then design personalized physiological and psychological intervention plans. In the future, we will incorporate physiological electrical signals as assessment indicators into the motion intention recognition and emotion recognition modules to improve the system's accuracy in motion intention and emotion recognition. Clinical trials will also be conducted to refine the emotion-integrated rehabilitation optimization strategy and enhance rehabilitation outcomes.

Footnotes

Ethical approval

This study has passed the review of the Ethics Committee of Nanjing Qixia District Hospital (Report No. 20220609), and the Ethics Committee approval date is June 9, 2022.

Consent for publication

The consent for publication of this article has been obtained from the volunteers who participated in the experiment.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article. This work was supported in part by the National Key Research and Development Program of China (2022YFC2405600), in part by the National Natural Science Foundation of China (62075098, 61401217, W2433193), in part by the Leading-edge Technology and Basic Research Program of Jiangsu (BK20192004D), in part by the Key Research and Development Program of Jiangsu (BE2022160), and in part by the Natural Science Foundation of Jiangsu Province China (BK20230301). This work was also supported by Major Project of Basic Science (Natural Science) Research in Higher Education Institutions of Jiangsu Province (25KJA416001).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The raw data analyzed in this study are publicly available from the AffectNet dataset, 35 accessible via its DOI.