Abstract

In small- and medium-sized enterprises, machine tools generate significant quantities of various types of dust, including plastic, iron, and other materials. To facilitate efficient cleaning or recycling of these materials, workers are required to manually vacuum the machines, directly targeting the accumulated dust. This paper introduces a novel approach for automated cleaning in industrial settings, diverging from the common practice of systematic surface coverage. Our method, mobile arm vision-based adaptive cleaning, employs a two-stage adaptive strategy that necessitates only a coarse representation of the machine. First, an offline process optimizes the positions of the robot’s mobile base and arm configurations to maximize the visibility of machine sections from the camera’s perspective at the end effector. Then, an online process detects dust accumulations and adjusts its trajectory based on their locations. We demonstrate the capability of our approach by cleaning three machines with intricate geometries. Our results indicate that our method can clean approximately 84% of the dust heaps present on the machines.

Introduction

Cleaning workstations is a common yet complex task in industrial settings, typically requiring customization for each space. Factories often accumulate dust from fabrication equipment, such as cutting and grinding machines, which can slow production and adversely affect the environment. Dust that cannot be removed continuously poses a potential health risk to workers and represents a form of waste that could be recycled or reused. Under these conditions, cleaning must be both efficient and thorough, as dust accumulation can harm workers’ health and hinder production goals. To overcome these problems, factories can install static vacuum cleaners as needed along the production line, but this is typically a costly and cumbersome solution. When connected to a central vacuum system, this setup also limits reconfigurability. Automated cleaning methods have also been proposed, typically relying on systematic surface coverage.1,2 Although these methods can ultimately achieve the cleaning objective, they are often highly inefficient in practice, as they do not distinguish between clean and dusty areas. Other works have explored artificial intelligence (AI)- or reinforcement learning (RL)-based techniques,3–5 but these approaches demand large datasets and long training times, and have been tested mainly on simple geometries such as tables or floors, making them unsuitable for industrial machines with complex structures.

To address these challenges, this article presents a robotic cleaning platform that includes a serial manipulator equipped with an RGB-D camera, mounted on an automated guided vehicle mobile base (Figure 1). This robot is specifically designed to facilitate the cleaning of industrial machines with diverse geometries.

The system cleaning a machine using mobile arm vision-based adaptive cleaning (MAVAC).

This paper introduces mobile arm vision-based adaptive cleaning (MAVAC), a novel two-stage framework that bridges the gap between systematic coverage and AI-based methods. Unlike traditional automated cleaning approaches1,2 that rely on monotonous, progressive surface scanning within predefined cleaning areas, our method is vision-based and adaptive: dust is detected using an onboard camera, and targeted motions are planned to vacuum the identified dust accumulations. We assume that at least a coarse representation of these machines is available. Should such a representation be lacking, our approach can use a basic environmental model obtained by scanning the machine. As an example, a machine with an approximate volume of 1.3 m³ and an exposed surface area of about 4.97 m² can be reconstructed using photogrammetry or algorithms such as RTAB-Map with a standard smartphone in about 10 min. 6 First, an offline process optimizes the positions of the robot’s mobile base and arm configurations to maximize the visibility of machine sections from the camera’s perspective at the end effector. Then, the online process uses the camera to efficiently scan the machine from strategic viewpoints. Starting with the end effector poses that offer the best coverage, vision algorithms are employed to detect dust and formulate cleaning trajectories. This adaptive, vision-based approach ensures that dust is cleaned from all detected locations, creating a comprehensive map of dust distribution on the machine. Impedance control is implemented in the robot to facilitate efficient cleaning by enabling direct interaction between the suction hose and the machine, much like a human cleaner would. Moreover, this approach provides the compliance the robot needs to make contact with the machine’s surfaces and adapt to their geometry.

Overall, the contributions presented in this paper are threefold:

We introduce an adaptive strategy in which online dust detection exploits offline path planning for inspection to adjust trajectories and target only dirty surfaces.

We introduce a refined definition of accessible surfaces, combining reachability and visibility, to more realistically evaluate what parts of the machine can be cleaned.

We validate MAVAC on real machines with concavities and irregular geometries, while impedance control ensures safe interaction with machine surfaces.

This paper is organized as follows: the “Related work” section examines existing methods and research relevant to our study, and the “Cleaning task formulation” section defines the problem of robotic cleaning. The “Mobile arm vision-based adaptive cleaning (MAVAC)” section presents our adaptive vision-based cleaning approach. The “Experimental setup” section details our experimental setup, including the description of the robotic platform, testbeds, and data collection. The “Results” section details our experimental findings. The paper concludes by summarizing our findings and their implications, along with discussing limitations and suggesting avenues for future work.

Related work

Kim et al. 7 provide an insightful review of methods utilized for cleaning with mobile manipulator robots. The challenges involved are commonly categorized into three subproblems: path planning, dust detection, and trajectory generation for the cleaning task.

Mobile base placement for coverage path planning

Methods determining optimal base placement for effective manipulation or coverage often employ reachability maps to evaluate the maneuverability of the robot arm. Prior studies8–10 have demonstrated the value of these maps, which identify positions where the robotic arm can operate in the best possible conditions. For instance, Vahrenkamp et al. 9 study the inverse reachability map to position the mobile base for object grasping. By dividing the workspace, Porges et al. 10 suggest using a capability map, which is essentially a reachability map with a quality index for every position, to provide a more accurate assessment. Makhal et al. 8 expand on this work to present a real-time robot base placement method. These studies primarily focus on mobile base placement for object grasping at a specific position. In the context of coverage path planning, the objective is not to reach specific positions but to cover a surface efficiently using a trajectory that minimizes mobile base movements. To address this issue, Paus et al. 1 present a greedy method to select multiple local positions to cover a machine entirely. By iteratively exploring different positions around the machine and estimating the accessibility of poses for the robotic arm, this approach aims to achieve the most complete coverage possible. However, this method has only been tested in simulation and never in real environments.

Other techniques, such as stochastic and genetic algorithms, 11 as well as hybrid solutions, 12 have been explored to improve machine coverage and cleaning process efficiency. However, these studies generate trajectories to cover the machine according to the action range of the tool center point (TCP).1,2,13,14 Systematically cleaning the entire machine using only the action range of the TCP is not efficient enough, as only dirty machine parts should be vacuumed.

Industrial dust detection

Two main approaches are commonly used for dust detection. The first relies on classical image segmentation to identify visually distinctive types of dust.14,15 These techniques typically use color-based segmentation and background subtraction. Since highly precise three-dimensional (3D) cameras cannot easily be mounted on the robot, and because dust particles are too small for reliable depth sensing, only two-dimensional images can be used for detection.

The second approach leverages convolutional neural networks (CNNs) to identify dirty or contaminated surfaces. Ramalingam et al. 16 employ a YOLOv3-based CNN to detect door handles and autonomously disinfect them. Yin et al. 5 train a DarkFlow-based CNN to detect food debris on tables and prompt wiping actions, distinguishing between liquid and solid residues. More recently, Chen et al. 17 introduce the use of S-YOLOv5s for dust detection in real-world environments.

The literature emphasizes the significance of CNN-based detection for effective cleaning, as it accommodates adaptation to different environments and lighting conditions.

Trajectory generation for cleaning

Cleaning methods using either RL or CNN have been proposed to generate robot movements. Lew et al. 4 use an RL method to wipe dust on a table. The robot is trained according to a dust model and controlled in admittance. More recently, Gaurav et al. 3 combine a time contrastive network and RL to mimic human mopping behavior from a video. Using demonstration learning, Jaeseok et al. 18 and Cauli et al. 19 present methods to clean a table. Other methods, such as that of Saito et al., 20 extract features from video with a CNN and feed them to a deep neural network together with the robot’s force and joint information. However, these machine learning methods require a large set of data or extensive training time, and have only been tested on tables or flat floors. Cleaning complex machines would most likely require significantly more data and time.

Various methods employing classical control have also been investigated to generate cleaning trajectories using traveling salesman problem (TSP) algorithms. Wakayama et al. 14 leverage RoboDK for trajectory generations in cleaning applications. More recently, Qi et al. 2 and Vatavuk et al. 21 segment specific areas such as furniture surfaces or areas occupied by grapevines, and then apply an algorithm to cover these surfaces. Similarly, Zhang et al. 22 segment the surface to be cleaned into small circles and generate a path using a TSP algorithm. These approaches have been primarily developed for specific domestic contexts where the entire surface needs to be cleaned.
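To make the idea of TSP-based ordering concrete, the following minimal sketch orders a set of target points with a nearest-neighbour heuristic. This is a deliberately simple stand-in and not the algorithm used in any of the cited works; the function name and the example coordinates are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def nearest_neighbour_tour(points: Sequence[Point], start: int = 0) -> List[int]:
    """Return a visiting order (list of indices) built greedily.

    Nearest-neighbour is only a heuristic for the travelling salesman
    problem: it is fast but gives no optimality guarantee.
    """
    if not points:
        return []
    unvisited = set(range(len(points)))
    unvisited.discard(start)
    tour = [start]
    while unvisited:
        last = points[tour[-1]]
        # Pick the closest unvisited point; ties are broken by index.
        nxt = min(unvisited, key=lambda i: (math.dist(last, points[i]), i))
        unvisited.remove(nxt)
        tour.append(nxt)
    return tour


# Hypothetical target positions on a flat surface (metres).
targets = [(0.0, 0.0), (2.0, 0.0), (1.0, 0.0), (5.0, 0.0)]
order = nearest_neighbour_tour(targets)
```

In practice, a dedicated TSP solver would usually replace this heuristic when path length matters.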

In industrial settings, dust can be segmented, and the robot can directly target dust heaps, 5 thereby improving the cleaning process by reducing unnecessary cleaning time and enhancing efficiency.

For all the aforementioned tasks, such as wiping, scrubbing, or vacuuming, the robot has to be in contact with the surface during the execution of the cleaning trajectory. With a rigid tool, the robot may damage the surface or itself. To reduce this risk, Yuang et al. 23 and Wakayama et al. 14 use highly compliant tools adapted to their environments. Another solution is to use impedance control, as in Lew et al. 4

Cleaning task formulation

As a general observation, systematic methods presented in the “Related work” section tend to be slow because they do not specifically target regions where dust is present. AI-based methods, such as CNN or RL, use a camera to detect dust and guide robot movements. However, these methods have, so far, only been applied to cleaning simple structures, such as tables. Most industrial machines, unlike these simple geometries, are not solely made of flat surfaces but also complex shapes, including concavities and hard-to-reach surfaces.

To design and evaluate our dust cleaning method, some information must be available regarding the cleaning task. In addition, at least a basic model of the machine should be readily available. Moreover, human presence during navigation within the factory is not considered.

The cleaning process requirements are determined as follows:

The machine should be cleaned as thoroughly as possible.

The robot must adapt its trajectories to avoid damaging the machine and the surrounding environment.

The robot should minimize cleaning time.

The robot must be compliant when in contact with the machine.

The approach should be generalizable to different machines and environments.

The machine’s surface is discretized into a point cloud, denoted as a set

During dust detection, one of the main objectives is to minimize the amount of required movements, either by the arm or the mobile base. This is achieved by minimizing the number of spots

Mobile arm vision-based adaptive cleaning (MAVAC)

Our approach is divided into two interconnected parts, each targeting specific aspects of the cleaning process: offline computation, which generates trajectories for the mobile base and the arm to inspect the entire machine; and online execution, which adapts the arm’s trajectory to the real environment and to the detected dust.

Offline computation

The objective of the offline computation is to generate a set of poses

First, the spot with the highest score is added to the set. Then, accessible surface elements belonging to this spot are removed from the accessible surfaces for all remaining spots. This ensures that only unaddressed surface elements remain for consideration. Potential spots are rescored, and the process is repeated until the new highest score is lower than a predefined threshold.
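The iterative selection can be sketched as a greedy set-cover loop. The sketch below is illustrative only: it assumes that a spot's score is simply the number of surface elements it can still add, which may differ from the scoring used in MAVAC, and it fixes the stopping threshold at the first iteration as the highest score multiplied by a user-defined coefficient. The function name, the spot labels, and the coefficient value are hypothetical.

```python
from typing import Dict, List, Optional, Set


def greedy_spot_selection(
    accessible: Dict[str, Set[int]],
    coefficient: float,
) -> List[str]:
    """Select spots greedily until the best score falls below the threshold.

    `accessible` maps each candidate spot to the indices of the surface
    elements it can access. Score = number of not-yet-covered elements
    (an assumption made for this sketch).
    """
    remaining = {spot: set(elems) for spot, elems in accessible.items()}
    selected: List[str] = []
    threshold: Optional[float] = None

    while remaining:
        # Rescore and take the best spot; ties are broken by name.
        best = min(remaining, key=lambda s: (-len(remaining[s]), s))
        best_score = len(remaining[best])
        if threshold is None:
            # Threshold fixed once, from the highest initial score.
            threshold = coefficient * best_score
        if best_score == 0 or best_score < threshold:
            break
        selected.append(best)
        covered = remaining.pop(best)
        # Remove the newly covered elements from all remaining spots.
        for elems in remaining.values():
            elems.difference_update(covered)
    return selected


# Hypothetical candidate spots and the surface elements each one accesses.
candidates = {"A": {1, 2, 3, 4}, "B": {3, 4, 5}, "C": {5, 6}, "D": {1}}
chosen = greedy_spot_selection(candidates, coefficient=0.5)
```

A larger coefficient stops the loop earlier and yields fewer spots, at the cost of leaving more surface elements unaddressed.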

The threshold is fixed at the first iteration by multiplying the highest score by a user-defined coefficient,

Overview of the offline algorithm: spots are selected from the machine model and the reachability map; for each spot, guard configurations are selected to inspect the machine; a special exploration is done for non-observed surface (NoS) to get the final trajectory.

Illustration of the visible point definition used in the MAVAC algorithm. Purple points represent the set of surface points visible from the current camera position, as determined by the HPR algorithm. The white translucent pyramid represents the emulated camera FoV; all points within this volume are considered visible by the MAVAC method. The red point indicates the target surface used to determine the camera’s guard position. MAVAC: mobile arm vision-based adaptive cleaning; HPR: hidden point removal; FoV: field of view.
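The pyramid test in this visibility definition can be illustrated with a short sketch. It covers only the FoV check and leaves the HPR step out. It assumes that points are already expressed in the camera frame with the z axis pointing forward, and the field-of-view angles and range used in the example are hypothetical values, not the parameters of the camera used in this work.

```python
import math
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


def in_camera_pyramid(
    point: Point, h_fov_deg: float, v_fov_deg: float, max_range: float
) -> bool:
    """Check whether a camera-frame point lies inside the FoV pyramid."""
    x, y, z = point
    if z <= 0.0 or z > max_range:
        return False  # behind the camera or beyond the sensing range
    # Half-extent of the pyramid cross-section at depth z.
    half_w = z * math.tan(math.radians(h_fov_deg) / 2.0)
    half_h = z * math.tan(math.radians(v_fov_deg) / 2.0)
    return abs(x) <= half_w and abs(y) <= half_h


def filter_visible(
    points: Iterable[Point], h_fov_deg: float, v_fov_deg: float, max_range: float
) -> List[Point]:
    """Keep only the points that fall inside the emulated FoV."""
    return [
        p for p in points
        if in_camera_pyramid(p, h_fov_deg, v_fov_deg, max_range)
    ]


# Hypothetical camera-frame points (metres) and FoV parameters.
cloud = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0), (0.0, 0.0, -1.0), (0.5, 0.5, 1.0)]
visible = filter_visible(cloud, h_fov_deg=90.0, v_fov_deg=60.0, max_range=2.0)
```

An occlusion test such as HPR would then be applied to the points that survive this check.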

where

Steps of the iterative selection process are applied similarly for the guards’ selection. The threshold is defined at the first iteration by multiplying the highest score by the user-defined coefficient

Illustration of the method to find the best gateway for a non-observed surface (NoS). (a) Rays (red) cast outward the machine mesh (gray) and (b) rays (green) exiting from the best gateway of the NoS (blue).

Subsequently, a collision-free path is sought for the guard point resulting from this operation, called NoS guard. If a path is valid, the NoS guard is incorporated into the offline trajectory.

Online execution

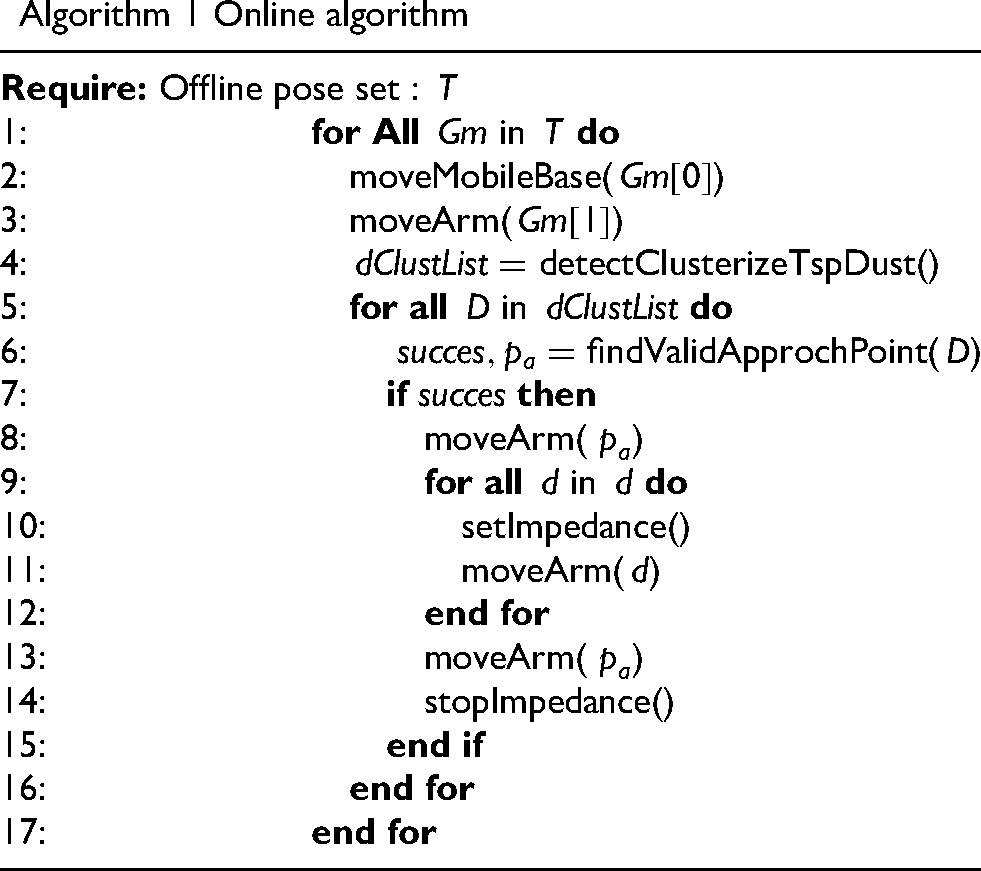

Using the offline trajectory, the online execution follows several steps essential for efficient inspection and cleaning: dust detection, local trajectory generation, and dust collection. Algorithm 1 summarizes these steps.

Algorithm 1 Online algorithm

Robot tool with the Azure Kinect camera on the top.

Experimental setup

The mobile manipulator used in the trials consists of a MiR200 mobile robot and a Doosan M1013 manipulator arm. The manipulator arm is positioned at the center of the MiR200, thereby avoiding forward tilting and maximizing its motion range. Our approach is implemented on a computer equipped with an i9-12900H processor and an Nvidia RTX A2000 8 GB graphics card. The operating system used is Ubuntu 20.04. For real-time control, the system relies on the MoveIt! library, which runs on the ROS Noetic middleware. MoveIt! is employed with real-time collision detection, using an octomap with a resolution of 2 cm. The TCP-mounted camera configuration, together with the offline exploration strategy (including the NoS method for concavities), ensures that all surfaces/obstacles are observed from appropriate viewpoints before trajectory generation. This approach enables the robot to safely vacuum even in geometrically challenging areas.

To ensure both the safety of the robot and the integrity of the machine, we use impedance control when operating close to the machine.
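As a generic illustration of why impedance control gives compliance, the sketch below computes the force commanded by a one-dimensional spring-damper impedance law. It is a textbook form and not the controller implemented on the robot; the stiffness, damping, and positions in the example are hypothetical values.

```python
def impedance_force(
    stiffness: float,
    damping: float,
    x_desired: float,
    x_actual: float,
    v_desired: float = 0.0,
    v_actual: float = 0.0,
) -> float:
    """Force from a 1-D spring-damper impedance law (N).

    F = K * (x_d - x) + D * (v_d - v)
    The tool behaves like a spring-damper attached to the desired pose,
    so the contact force grows gradually with the position error instead
    of the robot rigidly enforcing the commanded position.
    """
    return stiffness * (x_desired - x_actual) + damping * (v_desired - v_actual)


# Hypothetical case: the commanded position lies 1 cm inside the surface,
# while the tool is stopped at the surface (x = 0, v = 0).
force = impedance_force(stiffness=500.0, damping=40.0, x_desired=-0.01, x_actual=0.0)
```

With these illustrative gains, a 1 cm penetration command produces a contact force of about 5 N in magnitude; lowering the stiffness makes the contact softer.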

To benchmark our approach, a self-made test bench measuring 2 m

For evaluation purposes, green dust heaps are evenly distributed at intervals of 10 cm on the surface, including within the concavities. Green dust detection is performed using an Azure Kinect camera and a simple HSV filter with fine-tuned parameters. For real-world industrial cleaning applications, other detection methods could be implemented, as outlined in the “Industrial dust detection” section.
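A simple HSV filter of this kind can be sketched with the Python standard library. The thresholds below are hypothetical placeholders, not the fine-tuned parameters used in the experiments, and a real pipeline would typically apply the same test to whole camera frames with an image-processing library.

```python
import colorsys
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]


def is_green(
    rgb: RGB,
    hue_range: Tuple[float, float] = (0.22, 0.45),  # hypothetical bounds, hue in [0, 1]
    min_saturation: float = 0.4,                     # hypothetical
    min_value: float = 0.3,                          # hypothetical
) -> bool:
    """Classify one 8-bit RGB pixel as green using HSV thresholds."""
    r, g, b = (channel / 255.0 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return hue_range[0] <= h <= hue_range[1] and s >= min_saturation and v >= min_value


def green_mask(image: Sequence[Sequence[RGB]]) -> List[List[bool]]:
    """Return a boolean mask with True where a pixel passes the filter."""
    return [[is_green(pixel) for pixel in row] for row in image]


# Tiny hypothetical 2x2 image: green, red, gray, very dark green.
image = [[(0, 200, 0), (200, 0, 0)], [(128, 128, 128), (0, 40, 0)]]
mask = green_mask(image)
```

The saturation and value bounds reject gray metal surfaces and dark shadows, which is why such thresholds usually need tuning to the lighting of each site.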

Results

This section evaluates the offline computation method used to configure the parameters for the online execution phase, which is also analyzed.

Offline computation

Offline computation relies on four key parameters:

To evaluate

The optimal value of

The parameter

Average percentage of coverage (green) and overlap (red) per

Following the same tradeoff principles applied to

Parameters

For a fixed

Average percentage of coverage and overlap per non-observed surface

As a result, to inspect machines following the requirements from the “Cleaning task formulation” section, we suggest to set

Online execution

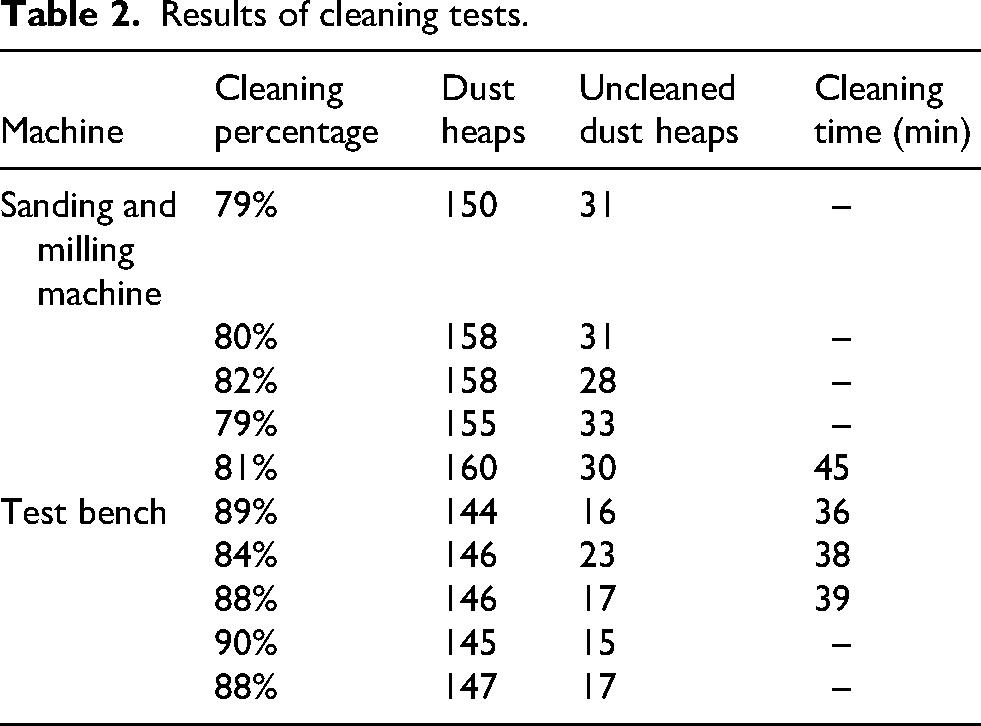

Ten trials were carried out on three scenarios as shown in Figures 8(a) to 8(c). Table 2 presents the percentage of dust heaps vacuumed, calculated at the end of each trial. The milling and sanding machines were cleaned during the same cleaning test. To provide a general indication of the task completion time, a few trials were timed and are presented as illustrative examples. Machine sizes are reflected by the number of dust heaps since they are evenly distributed. For these cleaning trials, the robot achieved an average cleaning efficiency of 84%, indicating that a significant portion of the dust on the machines was removed, although some areas remained uncleaned. The 4% standard deviation of cleaning efficiency across 10 trials conducted on three machines with significantly different morphologies suggests that our method can adapt to various types of machines.

While MAVAC is slower than manual cleaning, direct comparison with other robotic methods is difficult, as most studies report only simulated coverage rather than real cleaning times.

Result of the cleaning process. The top images show the tested machines. Lower images show the cleaned areas in green and uncleaned areas in red. (a) Test bench, (b) sanding machine, and (c) milling machine.

Results of cleaning tests.

Two implementation issues were identified. First, during the milling machine cleaning, the robot detected dust inside the box. However, the path planner failed to generate a valid route due to the tool’s large size. Extending the suction tube could allow access to this area, but this might introduce new issues, such as a wider camera blind spot. The second issue concerns the RRT-connect path planning algorithm, which is highly CPU-intensive and requires a significant amount of time to generate a trajectory in densely cluttered environments. Newly designed path planners for highly cluttered environments, such as the one proposed by Balakumar et al., 30 could provide a potential solution. These planners appear to be specifically tailored for such environments, incorporating real-time collision detection with an improved resolution of around 1 cm.

Discussion

The primary research contribution of MAVAC is the combination of offline reachability/visibility-based planning with online dust-adaptive cleaning, validated experimentally on real machines with complex geometries.

While a direct quantitative comparison of time savings and motion reduction against systematic coverage methods would be valuable, such benchmarking is challenging due to the lack of established baselines in the literature. Previous work on robotic cleaning typically reports coverage percentages in simulation rather than actual cleaning times or motion metrics.1,11,22 Qualitatively, MAVAC’s adaptive approach provides inherent efficiency advantages over systematic coverage methods: the robot only generates cleaning trajectories to detected dust locations rather than sweeping entire surfaces. By avoiding redundant motion over clean areas, the method prioritizes efficiency without sacrificing cleaning effectiveness. This distinction becomes increasingly significant for large or complex machines where dust distribution is sparse or localized. The primary tradeoff lies in the computational overhead of dust detection and adaptive trajectory planning. However, as demonstrated in our experiments, the online detection and planning process operates efficiently enough to maintain practical cleaning times (36 to 45 min for machines with 144 to 160 dust heaps distributed across surfaces ranging from 5 to 6 m

Another important contribution of MAVAC lies in its definition of “accessible surfaces.” By evaluating surfaces that can truly be reached and cleaned, MAVAC provides a more realistic assessment than methods relying solely on mesh collision checks or nominal reachability maps. This refined definition explains why MAVAC’s reported coverage percentages may be lower than those typically found in the literature. Part of this discrepancy is due to occasional misclassifications by the HPR method, which can incorrectly distinguish between visible and non-visible surfaces. However, these lower coverage values do not necessarily mean that dust remains uncleaned; on the contrary, our experiments show that MAVAC can reach challenging areas (e.g. inside concavities) that standard methods might miss.

A further strength of MAVAC is its reliance on observation trajectories planned with respect to joint configurations, rather than solely on Cartesian paths or static assumptions about machine geometry. This design choice, differing from many existing methods,1,22 enables the cleaning trajectories to adapt seamlessly to real-world changes in the workspace. Such adaptability is particularly beneficial in small- and medium-sized enterprises, where machine layouts are frequently modified. Indeed, between our tests in the fabrication laboratory, the machines were slightly rearranged, yet no additional scanning was required to update the cleaning plan.

In contrast to AI- or RL-based cleaning approaches,3,4 MAVAC does not require large datasets or long training phases, and it has been validated on industrial machines with complex geometries. Previous learning-based approaches have primarily been demonstrated on simple flat surfaces such as tables or floors, whereas MAVAC addresses the challenges of concavities and irregular machine structures. This distinction highlights MAVAC’s potential for practical deployment in real industrial environments.

While the current implementation still leaves room for improvement, particularly in visibility classification and trajectory computation, these challenges are shared by most state-of-the-art methods. The main opportunities for enhancement are:

Overall, MAVAC advances robotic cleaning by introducing an adaptable and experimentally validated framework that directly addresses the limitations of both systematic coverage and learning-based approaches.

Conclusion

This paper introduces an approach for cleaning machines in industrial environments. By cleaning only the detected dust, this method is designed to be inherently more efficient than systematic covering methods and suitable for complex machines. The approach involves the inspection of machines using an RGB-D camera attached to a mobile robot manipulator’s end effector. The offline inspection path, informed by a coarse model of the machine, facilitates precise dust detection and the generation of an online cleaning path. The approach has been successfully tested on a test bench as well as on two real machines from a fabrication laboratory. The experimental results demonstrate that the cleaning task can be accomplished with an 84% average success rate. By developing suitable detection techniques, this method could be adapted to various types of dust or even extended to other tasks, such as painting or sanding.

Future work will focus on solving the problem of unreachable areas. A solution could be to adapt the reachability map of the robot arm by moving the mobile base during the cleaning process.

Footnotes

Acknowledgements

This work was supported by the Natural Sciences and Engineering Research Council (NSERC) through the Collaborative REsearch And Training Experience (CREATE) program, specifically the Collaborative Robotics for Manufacturing (CoRoM) program, Sycodal Electrotechnique Inc., and by Mitacs via the Mitacs Accelerate program.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Natural Sciences and Engineering Research Council of Canada (NSERC) through the CREATE Program on Collaborative Robotics for Manufacturing (CoRoM), and by Mitacs and Sycodal Electrotechnique Inc. through the Mitacs Accelerate program (Grant IT25843).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.