Abstract

Objective

This study aims to develop and evaluate an autonomous surgical system based on the Toumai laparoscopic surgical robot, focusing on improving the precision and reliability of automated cutting and suturing operations.

Methods

The proposed system integrates three key components: (1) robotic arms and their associated control systems; (2) an endoscopic system supporting advanced visual image algorithms; and (3) specialized surgical instruments for cutting and suturing. A binocular stereo matching algorithm is employed to obtain depth information within the binocular camera's field of view. The DarkPose keypoint localization algorithm and the YOLOv5 object detection algorithm are used to accurately determine the positions of surgical instruments, suture needles, and target points. Additionally, an image classification discriminator is introduced to assess whether each surgical task succeeded. A finite state machine (FSM) model guides the robotic arm's end-effector through real-time trajectory planning and execution so that the surgical tasks are completed precisely.

Results

Experimental evaluation demonstrated that the autonomous system achieves high precision and reliability in both cutting and suturing tasks. Quantitative analysis shows that the system maintains an 85% success rate in automatic cutting, with a mean time of 5.10 s per cutting action. The automatic suturing task achieves a 92% accuracy rate in instrument positioning and a 90% success rate in needle grasping.

Conclusion

The developed system shows significant promise in automating key laparoscopic surgical tasks, with the potential to enhance surgical efficiency and improve outcomes in clinical practice. Further development and validation of this system could lead to its broader adoption in the field of autonomous surgery.

Keywords

Introduction

Advancements in visual positioning and navigation technology have propelled the development of automated surgical systems, enabling various levels of autonomy.1–5 While earlier robotic applications focused on low-level automation in tasks like puncture and hair transplantation,6–8 this study introduces a highly automated surgical system employing supervised autonomy. The system, based on the Toumai laparoscopic surgical robot developed by Shanghai MicroPort MedBot (Group) Co., Ltd., enhances surgical precision through visual servoing.

By automating repetitive and fundamental surgical tasks such as cutting and suturing, the system aims to improve accuracy, reduce surgery time, and lower the cognitive burden on surgeons. Future work will extend automation to additional tasks, including tissue retrieval, blood suction, organ resection, and wound closure, ultimately forming a comprehensive library of autonomous surgical procedures.9–15 Such a library would allow complex surgical workflows to be executed more efficiently in robotic-assisted surgery. Automating fundamental tasks also reduces dependence on human intervention and improves consistency and precision, which are critical in procedures that demand high accuracy. Integrating machine learning-driven motion planning and force feedback mechanisms could further allow robotic systems to adapt dynamically to variations in tissue properties and improve procedural outcomes.16–19

Integrating these tasks will facilitate complex operations such as nephrectomy, cholecystectomy, and colorectal resections. The ability to perform multistep procedures autonomously with minimal human oversight represents a significant milestone in surgical robotics, and as robotic systems become more proficient in real-time decision making and adaptive control, the feasibility of fully autonomous surgery continues to increase. Recent progress in artificial intelligence (AI) and robotics has significantly enhanced the precision and efficiency of minimally invasive surgical procedures. Advanced visual recognition algorithms, particularly those run at FP16 (half-precision floating point) for faster inference, improve real-time decision making and contribute to more reliable autonomous surgical systems.20 These AI-driven models enable real-time identification of anatomical structures, prediction of surgical tool interactions, and optimization of motion trajectories, all of which are crucial for safety and efficiency.

Beyond reduced-precision inference, deep learning-based computer vision techniques such as convolutional neural networks and transformer models have refined robotic perception, enabling precise localization of surgical targets and real-time correction of motion deviations. Depth perception from stereovision and sensor fusion further strengthens the accuracy of robotic systems in dynamic surgical environments. These innovations are paving the way for greater automation in surgery, with the potential to improve patient outcomes by reducing complications, minimizing human error, and optimizing procedural efficiency. As the field evolves, the integration of haptic feedback, robotic learning from demonstration, and augmented reality guidance is expected to further enhance the adaptability and autonomy of surgical robots, making them increasingly valuable tools in the operating room.21

Compared to traditional techniques, robotic surgery offers substantial advantages, utilizing high-resolution three-dimensional (3D) imaging to enhance visualization and precision. The robotic system's wristed instruments provide greater flexibility and dexterity than the human hand, allowing for precise execution of complex surgical maneuvers. These features have led to widespread adoption in various specialties, including urology, gynecology, ophthalmology, cardiothoracic surgery, neurosurgery, and orthopedics.22–29

Additionally, in the Fundamentals of Laparoscopic Surgery training program, the pattern cutting task requires residents to use surgical scissors and tissue forceps to accurately cut a circular pattern out of a piece of surgical gauze suspended at its corners.30 When surgeons operate surgical robots to complete cutting tasks, the gauze tends to deform under the action of the instruments. Cutting deformable materials is an active research topic in robotic surgery, computer graphics, and computational geometry. Because reconstructing viscoelastic tissues with finite element analysis is computationally expensive, researchers are exploring non-model-based methods. Learning from demonstration is a common approach, using human demonstrations to achieve autonomous motion in robotic systems.31 Thananjeyan et al. 32 studied gauze deformation and cutting using deep reinforcement learning and direct policy search: they learned tensioning strategies in a finite element simulator and then transferred them to the physical system. Their results showed that learning tensioning policies with deep reinforcement learning significantly improved performance and robustness to noise and external forces. Murali et al. 33 used a “learning by observation” method, identifying, segmenting, and parameterizing motion sequences and sensor conditions to construct a finite state machine (FSM) for each subtask, and validated it in 3D debridement and 2D pattern cutting tasks. Osa et al. 34 proposed a framework for online trajectory planning and force control under dynamic conditions, using Gaussian process regression to model the conditional distribution of demonstrated trajectories given the situation, which enables real-time planning of spatial motion and force profiles.

Moreover, needle insertion,2,35,36 trajectory planning,37,38 and knot tying39–42 are crucial substeps of suturing, and many studies have examined them. Bauzano et al. 43 introduced collaborative human–machine systems to meet the demands of suturing tasks; however, this robot-assisted mode still relies on the manual skills of individual surgeons. By contrast, in the automatic mode of the STAR system, the surgeon manually outlines the incision in the image, and STAR then automatically calculates the 3D position of each stitch at fixed time intervals. In experiments, STAR performed planar suturing tasks five times faster than surgeons using the da Vinci Surgical System, nine times faster than experienced surgeons using manual laparoscopic tools, and four times faster than surgeons using the manual Endo360°, demonstrating its effectiveness.

Methods and materials

As shown in Figure 1, the proposed vision-based automatic surgical system includes three core components: the vision system, robotic system, and experimental environment. Communication between the vision and motion control systems is handled via Transmission Control Protocol/Internet Protocol (TCP/IP). The user initiates the selected surgical task through the doctor–end interface, activating the corresponding vision and control algorithms.

Automatic surgical system with the Toumai robot.

The vision system is equipped with a 3D electronic endoscope and automated surgical vision algorithms. The 3D electronic endoscope calibrates the captured environmental images and relays them to the vision algorithm system. This system is responsible for extracting the positions of targets, instruments, and needles from the images, utilizing a classifier to categorize the experimental outcomes.

The robotic system includes a robotic arm and an automated surgical motion control system. The motion control system generates a specific automatic surgical FSM model tailored to the selected surgical task. Based on the feedback from the vision system, the automated surgical logic controller plans and controls the robot's movement path, transmitting these plans to the robotic arm for execution.

To accommodate the diverse requirements of real surgical procedures, it is essential to develop task-specific vision algorithm systems and customized FSM models that can adapt to different surgical scenarios. This study presents two specialized frameworks: a vision algorithm system and FSM model for automatic cutting and a vision algorithm system and FSM model for automatic suturing. These models are designed to optimize the execution of their respective tasks by integrating real-time visual feedback with precise motion control. The following sections will provide a detailed explanation of their design and implementation.

The system comprises a vision system (3D endoscope and vision algorithms for cutting and suturing) and a robotic system (doctor–end user interface (UI), motion control system, and robotic arm). The motion control system integrates state machine models for both tasks. Experimental scenarios validate the system's effectiveness.

Vision subsystem

The vision subsystem is a critical component of the proposed automatic surgical system, providing the necessary visual feedback and data for precise surgical operations. It comprises two main parts: the 3D endoscope system and the vision algorithm system.

3D endoscope system

The 3D endoscope system is responsible for capturing high-resolution, 3D images of the surgical environment. This system includes a 3D electronic endoscope that is calibrated to ensure accurate and reliable image data. The captured images are then transmitted to the vision algorithm system for further processing.

Automatic surgical vision algorithms

The surgical images from the 3D electronic laparoscope are processed by the automatic surgical vision algorithm system, and the results are sent to the motion control system. All automatic surgical vision algorithms are deployed on a dedicated algorithm server equipped with an NVIDIA A100 Tensor Core graphics processing unit (GPU), which provides 40 GB of HBM2 memory, 6912 Compute Unified Device Architecture (CUDA) cores, and 432 Tensor Cores. The GPU supports acceleration across a range of numerical precisions (FP32, FP16, INT8, and INT4); for this task, inference is run at FP16 precision.

FP16 (half-precision floating point) was selected for its computational efficiency, reduced memory footprint, and fast real-time inference, which are crucial for autonomous surgical systems. Several studies have shown that FP16 offers a favorable balance between accuracy and efficiency, making it well suited to deep learning applications in medical imaging and real-time decision making.

FP16 significantly reduces computational overhead compared to FP32 while maintaining near-equivalent accuracy. Mao et al. 44 evaluated FP32, FP16, and INT8 for real-time anatomical structure localization in endoscopic pituitary surgery and found that FP16 effectively accelerated inference speed without significant loss in precision, making it ideal for high-speed medical imaging tasks.

Similarly, Miraliev et al. 45 conducted an ablation study comparing FP32, FP16, and INT8 in a real-time multitask learning model. Their results indicated that FP16 offered superior memory efficiency while maintaining high inference accuracy, supporting its use in real-time autonomous applications.

Medical AI models often require substantial computational resources, and FP16 halves the memory needed to store each value compared with FP32 (2 bytes instead of 4). Huang et al. 46 explored FP16 in intraoperative optical coherence tomography (OCT) segmentation and found that it significantly decreased memory usage while achieving inference speeds 1.9× faster than FP32 models.

Additionally, Zhang et al. 47 analyzed FP16 and INT8 quantization for neural network inference and concluded that FP16 maintained better numerical precision than INT8 while achieving significant speedup over FP32, making it ideal for applications requiring high precision and efficiency.

While INT8 can further reduce memory and increase speed, it often sacrifices numerical accuracy, which can degrade performance in critical medical tasks. Zhang et al. 47 highlighted that FP16 preserves more precision than INT8, making it preferable for deep learning applications where numerical precision matters. Likewise, Mao et al. 44 showed that INT8 models had lower accuracy in real-time anatomical recognition, whereas FP16 balanced speed and predictive performance, supporting reliable autonomous surgical decision making.
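The memory and precision trade-off described above can be illustrated with Python's standard `struct` module, whose `'e'` format code packs IEEE 754 half-precision values. This is a minimal sketch, not the authors' deployment code: the tensor shape is hypothetical, and the relative-error bounds used when checking it (2^-11 for FP16, 2^-24 for FP32) are the unit roundoffs of the two formats.

```python
import struct

def to_fp16(x: float) -> float:
    """Round x to IEEE 754 half precision and back ('e' format, 2 bytes)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_fp32(x: float) -> float:
    """Round x to IEEE 754 single precision and back ('f' format, 4 bytes)."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

BYTES_FP32 = struct.calcsize("<f")  # 4
BYTES_FP16 = struct.calcsize("<e")  # 2

def tensor_megabytes(num_values: int, bytes_per_value: int) -> float:
    """Storage for a tensor of num_values elements, in megabytes (1e6 bytes)."""
    return num_values * bytes_per_value / 1e6

# Hypothetical activation tensor of shape 1 x 64 x 256 x 256.
n = 1 * 64 * 256 * 256
print(f"FP32: {tensor_megabytes(n, BYTES_FP32):.2f} MB, FP16: {tensor_megabytes(n, BYTES_FP16):.2f} MB")

# Relative rounding error of one sample value in each format.
x = 0.1234567
print(f"FP32 rel. error: {abs(to_fp32(x) - x) / x:.2e}, FP16 rel. error: {abs(to_fp16(x) - x) / x:.2e}")
```

Halving the bytes per value halves weight and activation storage, while the larger FP16 rounding error is the reason the cited studies validate accuracy after conversion. Values above 65504 do not fit in FP16, and `struct` raises an error when asked to pack them.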

Automatic cutting vision algorithm

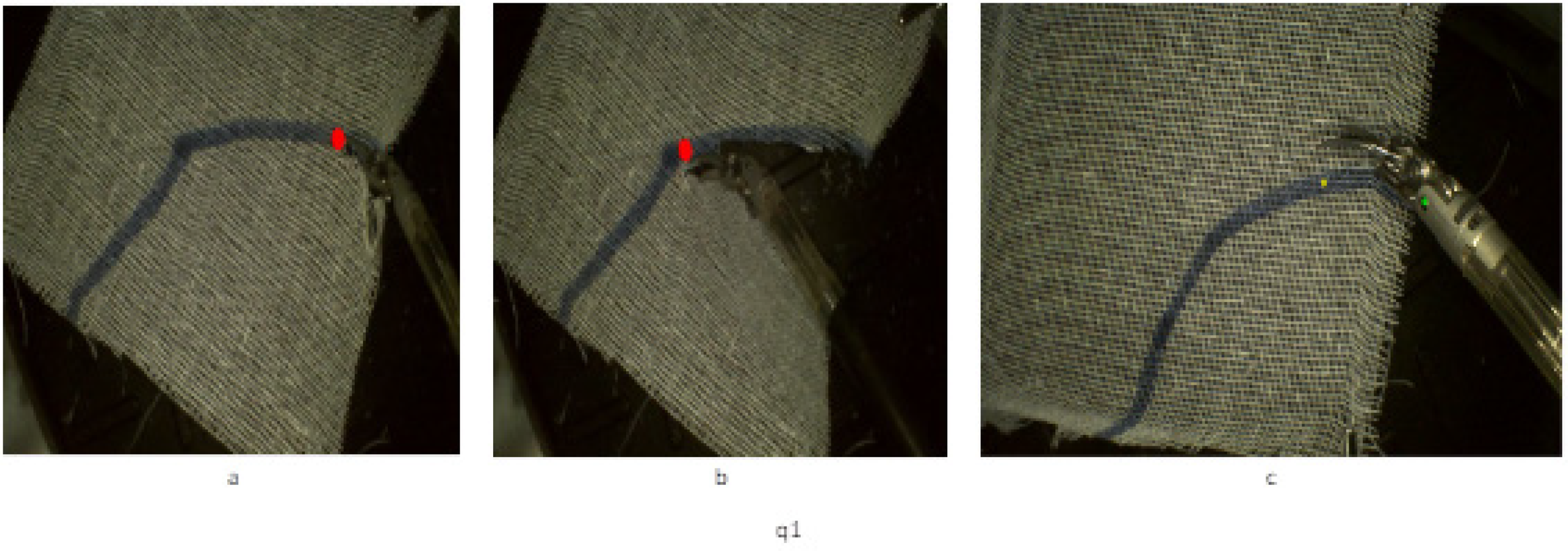

Automatic cutting along a predefined path requires the positions of the path targets, the position of the instrument, and the depth of both the targets and the instrument within the stereo camera's field of view. The position of each path target is obtained by projecting the instrument tip onto the path line, as shown in Figure 2(a) and (b). The current position of the instrument is determined from the coordinates of the pivot point (Figure 2(c)).
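Projecting the instrument tip onto the path can be sketched as a nearest-point query on a polyline. This sketch assumes, hypothetically, that the drawn path has already been extracted as an ordered list of 2D points in image coordinates; the source does not specify how the path line is represented.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]

def project_onto_segment(p: Point, a: Point, b: Point) -> Tuple[Point, float]:
    """Orthogonally project p onto segment ab, clamped to its endpoints.
    Returns (projected point, squared distance from p)."""
    ax, ay = a
    bx, by = b
    px, py = p
    dx, dy = bx - ax, by - ay
    seg_len2 = dx * dx + dy * dy
    if seg_len2 == 0.0:
        t = 0.0  # degenerate segment: both endpoints coincide
    else:
        t = ((px - ax) * dx + (py - ay) * dy) / seg_len2
        t = max(0.0, min(1.0, t))  # clamp so the target stays on the path
    q = (ax + t * dx, ay + t * dy)
    return q, (px - q[0]) ** 2 + (py - q[1]) ** 2

def project_onto_path(p: Point, path: List[Point]) -> Point:
    """Return the point on the polyline path that is closest to p."""
    best, best_d2 = path[0], math.inf
    for a, b in zip(path, path[1:]):
        q, d2 = project_onto_segment(p, a, b)
        if d2 < best_d2:
            best, best_d2 = q, d2
    return best
```

Clamping the projection parameter keeps the projected target on the drawn path even when the tip lies beyond an endpoint, which matches the start and end cases shown in Figure 2(a) and (b).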

The positioning of the path target. (a) Projecting the instrument's tip onto the starting point of the path line, (b) projecting the tip onto the endpoint, and (c) determining the instrument's position using pivot point coordinates.

To determine the positions of the target points and pivot points, we use HRNet, a deep learning keypoint detection network. Its structure is shown in Figure 3.

The network structure of the deep learning algorithm.

Horizontally, the network maintains high-resolution feature maps, ensuring that the original image information is not lost due to downsampling. Vertically, it performs multiscale fusion through repeated parallel convolutions to enhance high-resolution representation features. The network ultimately outputs heatmaps of the key points, thereby achieving keypoint localization.

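The loss function for the HRNet network model49 is given below; in the original HRNet formulation, it is the mean squared error between the predicted and ground-truth keypoint heatmaps.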

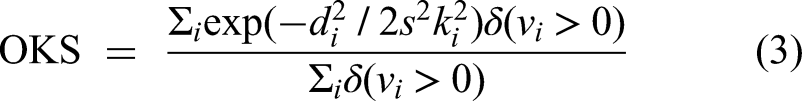

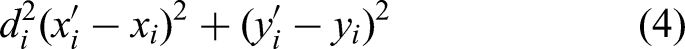

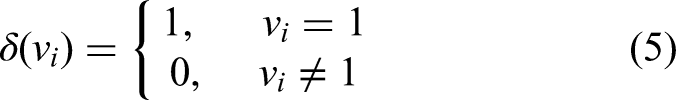
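A minimal sketch of the heatmap regression objective used by the original HRNet (mean squared error between predicted heatmaps and Gaussian ground-truth heatmaps). The Gaussian width `sigma=2.0` and the masking of unlabeled keypoints are common implementation choices assumed here, not values reported in the source; real training code would operate on batched tensors.

```python
import math
from typing import List

Heatmap = List[List[float]]  # indexed as hm[y][x]

def gaussian_target(width: int, height: int, cx: float, cy: float, sigma: float = 2.0) -> Heatmap:
    """Ground-truth heatmap: an unnormalized 2D Gaussian centered on the keypoint."""
    return [[math.exp(-((x - cx) ** 2 + (y - cy) ** 2) / (2.0 * sigma ** 2))
             for x in range(width)] for y in range(height)]

def heatmap_mse(pred: List[Heatmap], target: List[Heatmap], visible: List[bool]) -> float:
    """Mean squared error over every pixel of every visible keypoint heatmap."""
    total, count = 0.0, 0
    for p, t, v in zip(pred, target, visible):
        if not v:
            continue  # unlabeled keypoints contribute no loss (assumed masking)
        for prow, trow in zip(p, t):
            for pv, tv in zip(prow, trow):
                total += (pv - tv) ** 2
                count += 1
    return total / count if count else 0.0
```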

Object keypoint similarity (OKS)50 is an evaluation metric for keypoint algorithms; in its definition, i represents any keypoint.
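For reference, the widely used COCO definition of OKS is $\mathrm{OKS} = \sum_i \exp\!\big(-d_i^2/(2s^2k_i^2)\big)\,\delta(v_i>0) \,/\, \sum_i \delta(v_i>0)$, where $d_i$ is the distance between the predicted and ground-truth positions of keypoint $i$, $s^2$ is the object area, $k_i$ is a per-keypoint falloff constant, and $v_i$ is the visibility flag. The sketch below computes this standard form; the paper's own notation may differ, and any $k_i$ values for surgical keypoints would be hypothetical.

```python
import math
from typing import Sequence, Tuple

def oks(pred: Sequence[Tuple[float, float]], gt: Sequence[Tuple[float, float]],
        visible: Sequence[bool], area: float, k: Sequence[float]) -> float:
    """COCO-style object keypoint similarity for one object.
    area plays the role of s^2; k holds the per-keypoint constants k_i."""
    num, den = 0.0, 0
    for (px, py), (gx, gy), v, ki in zip(pred, gt, visible, k):
        if not v:
            continue  # only labeled keypoints are scored
        d2 = (px - gx) ** 2 + (py - gy) ** 2
        num += math.exp(-d2 / (2.0 * area * ki ** 2))
        den += 1
    return num / den if den else 0.0
```

An OKS of 1.0 means every labeled keypoint is predicted exactly; "AP at OKS = 0.5" counts a prediction as correct when its OKS is at least 0.5.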

A stereo matching algorithm is used to obtain a depth map within the binocular camera's field of view. First, the binocular camera is calibrated using a chessboard calibration board captured in different poses, as shown in Figure 4(a). The resulting left and right images are processed with the MATLAB (Matrix Laboratory) calibration toolbox to obtain the intrinsic matrices and distortion coefficients of both cameras, together with the rotation matrix and translation vector of the right camera relative to the left camera. Finally, stereo rectification is performed so that corresponding pixels lie on the same row in both images; that is, the left and right images are row-aligned, as shown in Figure 4(b) and (c).

Stereo matching algorithm for depth mapping in binocular vision.

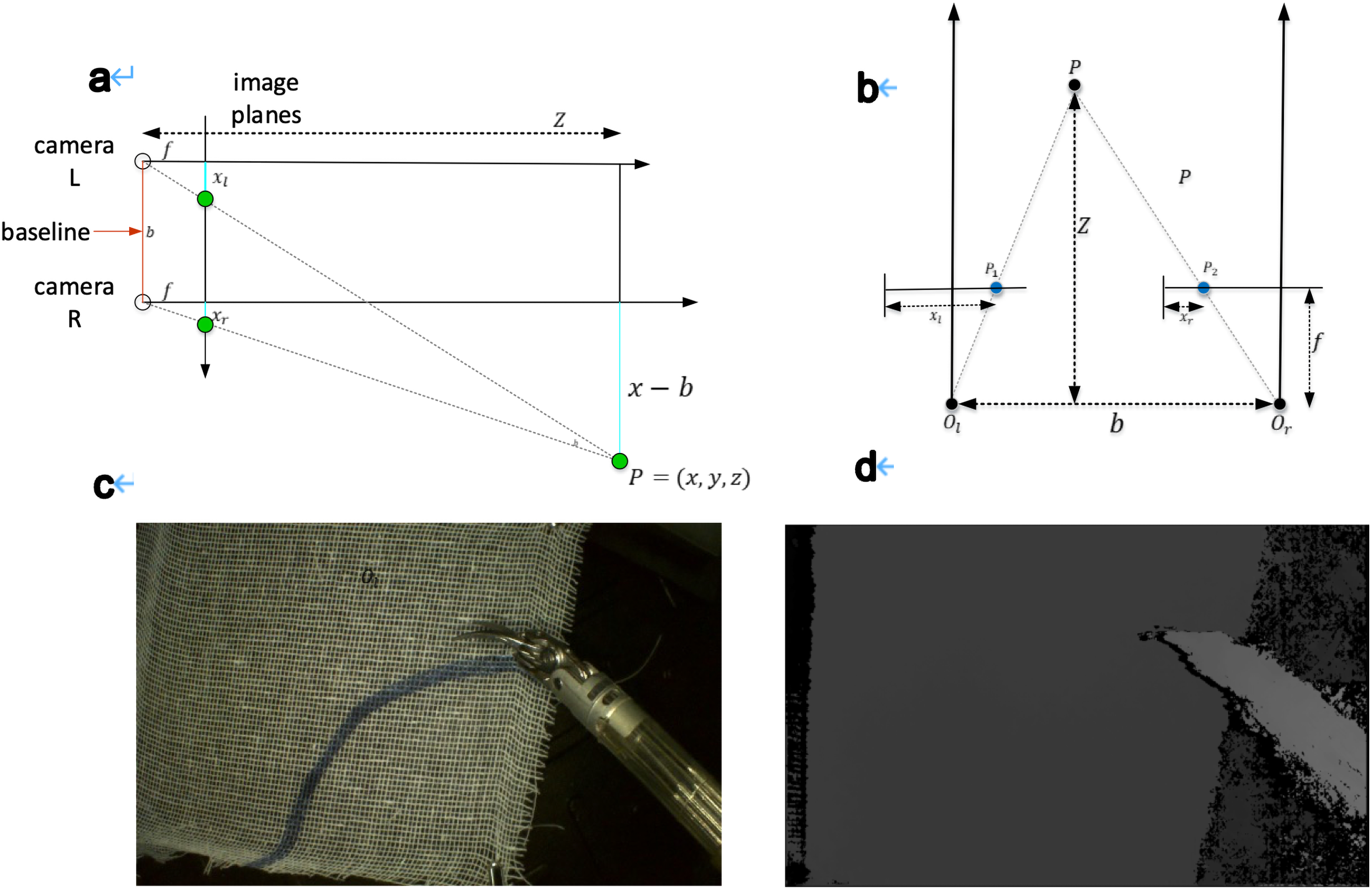

After stereo rectification, corresponding feature points in the left and right images lie at the same height (image row). A stereo matching algorithm is then used to compute the disparity, and the spatial depth map is obtained by triangulation, as shown in Figure 5(a) and (b).
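The source does not name its stereo matching algorithm. As a minimal illustration of what disparity computation on a rectified pair involves, the sketch below uses plain sum-of-absolute-differences (SAD) block matching with a winner-take-all choice; this is a simple stand-in, and practical systems usually rely on more robust methods such as semi-global matching.

```python
from typing import List

Image = List[List[float]]  # grayscale, indexed as img[y][x]

def sad_disparity(left: Image, right: Image, max_disp: int, half_win: int = 1) -> List[List[int]]:
    """Winner-take-all SAD block matching on a rectified stereo pair.
    For each left pixel (y, x), candidates are right pixels (y, x - d) on the
    same row, d in [0, max_disp]; the d with the smallest window SAD wins."""
    h, w = len(left), len(left[0])
    disp = [[0] * w for _ in range(h)]
    for y in range(half_win, h - half_win):
        for x in range(half_win, w - half_win):
            best_d, best_cost = 0, float("inf")
            for d in range(max_disp + 1):
                if x - d - half_win < 0:
                    break  # the matching window would leave the right image
                cost = 0.0
                for dy in range(-half_win, half_win + 1):
                    for dx in range(-half_win, half_win + 1):
                        cost += abs(left[y + dy][x + dx] - right[y + dy][x + dx - d])
                if cost < best_cost:
                    best_d, best_cost = d, cost
            disp[y][x] = best_d
    return disp
```

Because rectification puts corresponding points on the same row, the search is one-dimensional, which is what makes dense disparity estimation tractable.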

Instrument position determined by pivot point coordinates. (a, b) Stereo camera depth estimation for the instrument and path targets, where P represents a point in space, p1 and p2 are its projections on the left and right image planes, f is the focal length, ol and or are optical centers, and xl, xr denote distances from the image edges; (c) determining instrument position using pivot point coordinates; and (d) depth map visualization with color-coded depth variations.

The camera imaging model is shown in Figure 5(b). Point P in space is imaged as p1 and p2 on the left and right image planes, respectively; f is the focal length of the camera, and ol and or are the optical centers of the left and right cameras. The optical axes of the two cameras are parallel. $x_l$ and $x_r$ are the distances of p1 and p2 from the left edge of the left and right image planes, respectively. By similar triangles, the relationship between disparity and object depth is given by equation (1), where b is the baseline of the binocular camera and z is the depth of point P to be determined:

$$\frac{b-(x_l-x_r)}{z-f}=\frac{b}{z} \tag{1}$$

Solving equation (1) for z gives equation (2), where $d = x_l - x_r$ is the disparity:

$$z=\frac{f\,b}{x_l-x_r}=\frac{f\,b}{d} \tag{2}$$

Combining equation (2) with the pinhole projection model, the x and y coordinates of point P are obtained as shown in equations (3) and (4), where $y_l$ is the vertical image coordinate of p1 and $(c_x, c_y)$ is the principal point of the rectified left image:

$$x=\frac{z\,(x_l-c_x)}{f} \tag{3}$$

$$y=\frac{z\,(y_l-c_y)}{f} \tag{4}$$

The depth map from the stereo camera is shown in Figure 5(c) and (d), where different colors represent varying depths.
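Triangulation on a rectified pair follows from similar triangles: with focal length $f$ (in pixels), baseline $b$, and disparity $d = x_l - x_r$, the depth is $z = fb/d$, and the lateral coordinates follow from the pinhole model. The sketch assumes image coordinates in pixels and a principal point $(c_x, c_y)$ of the rectified left image known from calibration; the numbers in the usage line are illustrative only.

```python
from typing import Tuple

def depth_from_disparity(f_px: float, baseline: float, x_left: float, x_right: float) -> float:
    """z = f * b / d with disparity d = x_l - x_r (rectified, parallel optical axes).
    z comes out in the units of the baseline."""
    d = x_left - x_right
    if d <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return f_px * baseline / d

def point_from_pixel(f_px: float, baseline: float, x_left: float, y_left: float,
                     x_right: float, cx: float, cy: float) -> Tuple[float, float, float]:
    """Back-project a matched left-image pixel to 3D in the left camera frame."""
    z = depth_from_disparity(f_px, baseline, x_left, x_right)
    return (z * (x_left - cx) / f_px, z * (y_left - cy) / f_px, z)
```

For example, with a 500-pixel focal length, a 10 mm baseline, and a 50-pixel disparity, `point_from_pixel(500.0, 10.0, 420.0, 290.0, 370.0, 320.0, 240.0)` gives a depth of 100 mm and returns `(20.0, 10.0, 100.0)`. Since depth is inversely proportional to disparity, a one-pixel matching error costs more depth accuracy the farther the target is from the cameras.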

Automatic suturing vision algorithm system

The automatic suturing task requires obtaining the positions of the surgical instruments, the position of the surgical needle, and the depth of the target and instruments within the field of view of the binocular camera. The position of the surgical instruments can be determined using the coordinates of the pivot points. The position of the surgical needle can be obtained from the 1/3 and 2/3 points between the needle tip and the needle tail, as indicated by the green circles in Figure 6.
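The 1/3 and 2/3 clamping points can be computed by linear interpolation between the needle tip and tail. This minimal sketch assumes, hypothetically, interpolation along the straight tip-to-tail chord in 3D; for a curved suture needle, points on the needle arc itself would lie off this chord, and the source does not state which definition is used.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]

def lerp(a: Vec3, b: Vec3, t: float) -> Vec3:
    """Point a fraction t of the way from a to b."""
    return (a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1]), a[2] + t * (b[2] - a[2]))

def needle_clamp_points(tip: Vec3, tail: Vec3) -> Tuple[Vec3, Vec3]:
    """Clamping points at 1/3 and 2/3 of the tip-to-tail chord."""
    return lerp(tip, tail, 1.0 / 3.0), lerp(tip, tail, 2.0 / 3.0)
```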

Surgical instrument positioning using pivot point coordinates.

The depth map under the binocular camera is shown in Figure 7, where different colors represent different depths.

The depth map under the binocular camera.

The positions of the instrument pivot points and of the needle tip and tail are obtained using the DarkPose keypoint detection algorithm and the YOLOv5 object detection algorithm. DarkPose was chosen for its strong performance in human pose estimation, and it is adapted here to localize surgical instruments with high accuracy. Accurate keypoint localization is particularly important in dynamic surgical settings, where occlusions and complex instrument movements are common. With DarkPose, the system can monitor the orientation and movement of instruments in real time and make the adjustments needed to maintain precision during automated procedures. Combined with binocular stereo matching, DarkPose thus provides an accurate, responsive, and adaptable visual system for automated surgical tasks.

YOLOv5, a widely used model in the You Only Look Once (YOLO) family, is known for its speed and accuracy, which suits the fast-paced environment of automated surgery. It detects and classifies multiple objects within a frame, which is critical for identifying surgical instruments, tissues, and other relevant structures during an operation. Its real-time performance allows the system to process visual data quickly and reliably, enabling rapid decision making and adjustment of the surgical procedure as needed.

First, the endoscopic images of the surgical instruments are processed using the YOLOv5 object detection algorithm to obtain the bounding box positions of the surgical instruments and the needle. The surgical instruments and needle are then cropped from the original images and input into the DarkPose keypoint detection algorithm to determine the positions of the instrument pivot points and the needle tip and tail.
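The two-stage detect-then-localize pipeline needs bookkeeping between crop coordinates and full-image coordinates. A minimal sketch, assuming axis-aligned boxes in pixels and a keypoint network that resizes each crop to a fixed input size `net_w` × `net_h`; the padding amount and input size are hypothetical parameters, not values from the source.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in full-image pixels

def expand_box(box: Box, pad: int, width: int, height: int) -> Box:
    """Pad a detector box by `pad` pixels and clip it to the image bounds,
    so keypoints near the box edge are not cut off."""
    x0, y0, x1, y1 = box
    return (max(0, x0 - pad), max(0, y0 - pad), min(width, x1 + pad), min(height, y1 + pad))

def crop(image: List[List[float]], box: Box) -> List[List[float]]:
    """Cut the boxed region out of a row-major image."""
    x0, y0, x1, y1 = box
    return [row[x0:x1] for row in image[y0:y1]]

def to_full_image(kp: Tuple[float, float], box: Box, net_w: int, net_h: int) -> Tuple[float, float]:
    """Map a keypoint predicted on the resized crop back to full-image pixels."""
    x0, y0, x1, y1 = box
    sx = (x1 - x0) / net_w  # crop pixels per network-input pixel, horizontally
    sy = (y1 - y0) / net_h  # and vertically
    return (x0 + kp[0] * sx, y0 + kp[1] * sy)
```

Keeping the crop box alongside each detection is what lets the keypoints from the second stage be combined with the full-frame depth map.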

The network structure used with DarkPose is shown in Figure 3. The advantage of DarkPose lies in modeling the heatmaps output by the network as Gaussian distributions, which allows the heatmap peaks to be located accurately at sub-pixel level, as illustrated in Figure 8.
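DarkPose (distribution-aware coordinate representation) refines the heatmap maximum by assuming the heatmap is Gaussian around its peak: on the log-heatmap $D$, a second-order Taylor expansion at the integer maximum $m$ gives the sub-pixel estimate $\mu = m - (\nabla^2 D(m))^{-1}\nabla D(m)$. The minimal sketch below implements that step with finite differences; it omits DarkPose's Gaussian heatmap-smoothing (modulation) step and is not the authors' code.

```python
import math
from typing import List, Tuple

Heatmap = List[List[float]]  # indexed as hm[y][x]

def gaussian_heatmap(width: int, height: int, cx: float, cy: float, sigma: float) -> Heatmap:
    """Unnormalized 2D Gaussian heatmap peaked at (cx, cy)."""
    return [[math.exp(-((x - cx) ** 2 + (y - cy) ** 2) / (2.0 * sigma ** 2))
             for x in range(width)] for y in range(height)]

def dark_refine(hm: Heatmap, eps: float = 1e-10) -> Tuple[float, float]:
    """Integer argmax followed by one Newton step on the log-heatmap (DARK-style decoding)."""
    h, w = len(hm), len(hm[0])
    my, mx = max(((y, x) for y in range(h) for x in range(w)), key=lambda p: hm[p[0]][p[1]])
    if not (0 < mx < w - 1 and 0 < my < h - 1):
        return float(mx), float(my)  # no room for central differences at the border

    def log_hm(y: int, x: int) -> float:
        return math.log(max(hm[y][x], eps))  # eps guards against log(0)

    # Gradient and Hessian of the log-heatmap at the peak (central differences).
    gx = 0.5 * (log_hm(my, mx + 1) - log_hm(my, mx - 1))
    gy = 0.5 * (log_hm(my + 1, mx) - log_hm(my - 1, mx))
    hxx = log_hm(my, mx + 1) - 2.0 * log_hm(my, mx) + log_hm(my, mx - 1)
    hyy = log_hm(my + 1, mx) - 2.0 * log_hm(my, mx) + log_hm(my - 1, mx)
    hxy = 0.25 * (log_hm(my + 1, mx + 1) - log_hm(my + 1, mx - 1)
                  - log_hm(my - 1, mx + 1) + log_hm(my - 1, mx - 1))
    det = hxx * hyy - hxy * hxy
    if hxx >= 0.0 or det <= 0.0:
        return float(mx), float(my)  # not a proper local maximum; keep the integer peak
    # offset = -H^{-1} g, using the closed-form inverse of the 2x2 Hessian
    ox = -(hyy * gx - hxy * gy) / det
    oy = -(-hxy * gx + hxx * gy) / det
    return mx + ox, my + oy
```

Because the log of a Gaussian is exactly quadratic, a single Newton step recovers the true peak of an ideal Gaussian heatmap; on real network outputs, the smoothing step that this sketch omits makes the heatmap closer to that ideal.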

Gaussian distribution applied to heatmaps for peak localization.

The network structure of the YOLOv5 algorithm is shown in Figure 9. YOLOv5 uses a spatial pyramid pooling (SPP) module in its backbone feature extraction network; this module performs feature extraction through max pooling with different kernel sizes, which enlarges the network's receptive field.

The network structure of the YOLOv5 algorithm.
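The spatial pyramid pooling idea can be shown on a single-channel feature map: the input is max-pooled with several kernel sizes at stride 1 with "same" padding, and the results are stacked with the input as extra channels. The kernel sizes (5, 9, 13) match the default of YOLOv5's SPP module; the 1×1 convolutions that YOLOv5 places around the pooling are omitted, so this sketches the pooling structure only.

```python
from typing import List, Sequence

FeatureMap = List[List[float]]  # single channel, indexed as f[y][x]

def max_pool_same(f: FeatureMap, k: int) -> FeatureMap:
    """k x k max pooling, stride 1, 'same' output size.
    Cells outside the map are ignored, which equals padding with -inf."""
    h, w, r = len(f), len(f[0]), k // 2
    return [[max(f[yy][xx]
                 for yy in range(max(0, y - r), min(h, y + r + 1))
                 for xx in range(max(0, xc - r), min(w, xc + r + 1)))
             for xc in range(w)] for y in range(h)]

def spp(f: FeatureMap, kernels: Sequence[int] = (5, 9, 13)) -> List[FeatureMap]:
    """Spatial pyramid pooling: the input plus one pooled map per kernel size,
    returned as a list of channels to be concatenated."""
    return [f] + [max_pool_same(f, k) for k in kernels]
```

Larger kernels let each output position summarize a wider neighborhood, which is how the module enlarges the receptive field without changing the spatial resolution.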

To increase the success rate of the experiment, a SEResNeXt101 image classification discriminator was introduced to determine whether the surgical needle was successfully grasped. As shown in Figure 10(a), the surgical needle is successfully grasped, while Figure 10(b) shows that the needle was not grasped.

Surgical needle grasp detection using SEResNeXt101.

Motion control system

Surgical robotic system

The experimental setup for this study includes three robotic arms and needle holder instruments, all mounted on a steel frame. One arm holds the endoscope and remains stationary during the experiment. The robotic arms and instruments were manufactured by Shanghai MicroPort MedBot.

The control software is built on Beckhoff TwinCAT 3, in which separate software modules manage different aspects of motion control. Software and hardware communicate over EtherCAT, with data sent and received every 250 ms to execute control commands.

Automatic surgical control framework

As shown in Figure 11, the automatic surgical motion control framework incorporates task-specific FSMs (cutting and suturing) managed by a surgical logic controller. The controller oversees system operation, state transitions, and motion parameter updates. A trajectory planner generates real-time position and posture trajectories, while visual feedback refines end-effector pose accuracy. Planned Cartesian velocities are converted to joint velocities via Jacobian inversion and executed by the velocity controller.
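The Cartesian-to-joint velocity conversion $\dot{q} = J(q)^{-1}\dot{x}$ can be illustrated on a two-link planar arm, whose 2×2 Jacobian has a closed-form inverse. The Toumai arm's actual kinematics (including its remote-center-of-motion structure) are not given in the source, so the geometry and link lengths here are hypothetical; non-square or redundant arms would use a pseudoinverse or damped least squares instead, with a singularity guard like the one shown.

```python
import math
from typing import Tuple

Mat2 = Tuple[Tuple[float, float], Tuple[float, float]]

def jacobian_2link(q1: float, q2: float, l1: float, l2: float) -> Mat2:
    """Jacobian of the end-effector position of a planar 2-link arm."""
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return ((-l1 * s1 - l2 * s12, -l2 * s12),
            (l1 * c1 + l2 * c12, l2 * c12))

def joint_velocities(q1: float, q2: float, l1: float, l2: float,
                     vx: float, vy: float, min_det: float = 1e-6) -> Tuple[float, float]:
    """Solve J(q) * qdot = (vx, vy) for qdot."""
    (a, b), (c, d) = jacobian_2link(q1, q2, l1, l2)
    det = a * d - b * c  # analytically l1 * l2 * sin(q2)
    if abs(det) < min_det:
        raise ValueError("near-singular configuration; Jacobian cannot be inverted safely")
    # Closed-form inverse of a 2x2 matrix: [[d, -b], [-c, a]] / det
    return ((d * vx - b * vy) / det, (-c * vx + a * vy) / det)
```

Near a singularity (here, a fully stretched arm with q2 = 0), small Cartesian velocities demand very large joint velocities, which is why some guard or damping is needed before sending commands to the velocity controller.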

Automatic surgical motion control framework.

FSM model

The single cutting process and the single suturing process are each modeled as an FSM, whose states are described below.

Automatic cutting state machine model

Arc cutter blade direction adjustment: Before cutting begins, the instrument's orientation is adjusted so that the arc blade at the tip of the monopolar arc cutter is parallel to the gauze plane. In addition, the blade is aligned with the line connecting the current instrument position to the next cutting target.

Arc cutter position adjustment: The instrument is moved to the target location by calculating the required displacement between its current position and the cutting target. A trajectory planning algorithm is utilized to generate the movement path for the instrument.

Cutting: The arc cutter's end rapidly opens and closes three times in succession to effectively cut through the gauze.
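The cutting state machine above can be sketched as a loop over three states per target. This is a minimal skeleton with perception and motion stubbed out (every adjustment is assumed to succeed); the state names are hypothetical labels for the states described in the text, and a real controller would wait on the trajectory planner and vision feedback at each transition.

```python
from enum import Enum, auto
from typing import List, Tuple

class CutState(Enum):
    ADJUST_DIRECTION = auto()  # blade parallel to the gauze, aligned toward the next target
    ADJUST_POSITION = auto()   # move the tip to the target via the trajectory planner
    CUT = auto()               # open and close the jaws CUTS_PER_TARGET times
    DONE = auto()

CUTS_PER_TARGET = 3  # three open/close cycles per target, as stated in the text

def run_cutting_fsm(num_targets: int) -> Tuple[List[Tuple[CutState, int]], int]:
    """Step the cutting FSM over num_targets targets.
    Returns the visited (state, target index) pairs and the total jaw cycles."""
    log: List[Tuple[CutState, int]] = []
    jaw_cycles = 0
    target = 0
    state = CutState.DONE if num_targets <= 0 else CutState.ADJUST_DIRECTION
    while state is not CutState.DONE:
        log.append((state, target))
        if state is CutState.ADJUST_DIRECTION:
            state = CutState.ADJUST_POSITION
        elif state is CutState.ADJUST_POSITION:
            state = CutState.CUT
        else:  # CutState.CUT: cut, then move on to the next target or finish
            jaw_cycles += CUTS_PER_TARGET
            target += 1
            state = CutState.DONE if target >= num_targets else CutState.ADJUST_DIRECTION
    log.append((state, target))
    return log, jaw_cycles
```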

Automatic suturing state machine model

Needle grasping: The posture of the left-hand instrument is adjusted so that the needle holder clamp aligns parallel to the horizontal plane. Visual feedback then guides the instrument to the left clamping point of the suture needle to secure it.

Needle pulling: After the needle is securely grasped by the left hand, a 90-degree rotation is performed to smoothly pull the needle tail through the tissue. The suture needle is then moved to the next needle exchange point.

Needle exchange: The posture of the right-hand instrument is adjusted so that the needle holder clamp aligns parallel to the horizontal plane. Visual feedback guides the instrument to the right clamping point of the suture needle to grasp it.

Needle insertion: Once the right hand has secured the needle, the left hand releases it and moves back to the initial position. The right hand then rotates 90 degrees to position the needle tip perpendicular to the tissue. Using visual feedback, the instrument is guided to the next suturing target point to pass the needle through the tissue.

Needle regrasping: A specialized visual feedback component is designed to ensure the success of the automatic suturing process. When the tool arm attempts to grasp the needle, the visual system assesses whether the needle has been successfully secured. If the grasping fails, the tool arm withdraws and attempts to regrasp the needle using updated visual information.
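The suturing cycle and its regrasp branch can be sketched in the same way. Here `grasp_ok` is a hypothetical hook standing in for the SEResNeXt101 grasp classifier; the retry limit `max_attempts` is an assumption (the source does not state one), and applying the check at both the grasping and exchange steps is an assumption based on the description above.

```python
from enum import Enum, auto
from typing import Callable, List

class SutureState(Enum):
    NEEDLE_GRASPING = auto()
    NEEDLE_PULLING = auto()
    NEEDLE_EXCHANGE = auto()
    NEEDLE_INSERTION = auto()
    DONE = auto()
    FAILED = auto()

# States after which the grasp classifier is consulted (assumption).
GRASP_STATES = (SutureState.NEEDLE_GRASPING, SutureState.NEEDLE_EXCHANGE)

def run_suture_cycle(grasp_ok: Callable[[SutureState], bool],
                     max_attempts: int = 3) -> List[SutureState]:
    """Run one stitch through the suturing FSM and return the visited states.
    grasp_ok(state) is a hypothetical classifier hook: True means the needle
    is judged to be securely held."""
    trace: List[SutureState] = []
    for state in (SutureState.NEEDLE_GRASPING, SutureState.NEEDLE_PULLING,
                  SutureState.NEEDLE_EXCHANGE, SutureState.NEEDLE_INSERTION):
        trace.append(state)
        if state in GRASP_STATES:
            attempts = 1
            while not grasp_ok(state):
                if attempts >= max_attempts:
                    trace.append(SutureState.FAILED)
                    return trace
                # Regrasp: withdraw, refresh the needle pose from vision, try again.
                attempts += 1
                trace.append(state)
    trace.append(SutureState.DONE)
    return trace
```

Bounding the number of retries keeps a persistent grasp failure from looping forever and hands control back to the supervising surgeon.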

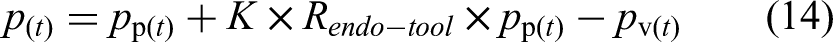

Slave trajectory planner

Surgical robotic systems are typically master–slave systems. The Toumai (TMI) laparoscopic surgical robotic system used in this study is likewise a master–slave robotic system, consisting of a master system and a slave system. Movements input by the operator at the master system are scaled by a motion-scaling ratio and transmitted to the slave robotic arm for execution. The standard approach in robotic surgery is rate control, in which the motion of the slave robotic arm is proportional to the motion input at the master system.

The motion planning system developed in this study calculates real-time motion targets from the instrument position and the key target positions provided by the vision system, and from these targets it computes a control velocity for the robotic arm.

The motion trajectory of the robotic arm is planned using a quintic polynomial. The planned result ensures that the robotic arm moves to the target position at the end of the motion, with both the velocity and acceleration being zero at the start and end of the motion, and maintaining continuity throughout.
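For a single axis, a rest-to-rest quintic with zero velocity and acceleration at both ends has the closed form $p(t) = p_0 + (p_1 - p_0)(10\tau^3 - 15\tau^4 + 6\tau^5)$ with $\tau = t/T$. The sketch evaluates position, velocity, and acceleration; the duration `T` is a free parameter here because the source's timing rule is not given, and each Cartesian axis would be planned independently.

```python
from typing import Tuple

def quintic(p0: float, p1: float, T: float, t: float) -> Tuple[float, float, float]:
    """Position, velocity, and acceleration at time t of a rest-to-rest quintic
    from p0 to p1 over duration T (t is clamped to [0, T])."""
    tau = min(max(t / T, 0.0), 1.0)
    dp = p1 - p0
    s = 10 * tau**3 - 15 * tau**4 + 6 * tau**5              # normalized position
    ds = (30 * tau**2 - 60 * tau**3 + 30 * tau**4) / T      # ds/dt
    dds = (60 * tau - 180 * tau**2 + 120 * tau**3) / T**2   # d2s/dt2
    return p0 + dp * s, dp * ds, dp * dds
```

The polynomial's six coefficients are exactly enough to fix position, velocity, and acceleration at both endpoints, which is why a fifth-order profile is the lowest order that starts and stops with zero velocity and acceleration.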

Here,

For the real-time position information

For some posture adjustment tasks in surgical operations, the current rotation matrix

Here,

To calculate the transformation from the initial rotation matrix Use the planned target position
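For posture adjustment, one common approach is geodesic interpolation: compute the relative rotation $R_0^\top R_1$, convert it to axis-angle form, and scale the angle by a normalized profile $s \in [0, 1]$, such as the quintic time scaling. The source does not spell out its orientation formulas, so this is an assumption, shown as a minimal pure-Python sketch that does not handle relative rotations close to 180°.

```python
import math
from typing import List, Tuple

Mat = List[List[float]]
Vec3 = Tuple[float, float, float]

def matmul(a: Mat, b: Mat) -> Mat:
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def transpose(a: Mat) -> Mat:
    return [list(row) for row in zip(*a)]

def rot_axis_angle(axis: Vec3, angle: float) -> Mat:
    """Rodrigues' formula for a rotation of `angle` about a unit axis."""
    x, y, z = axis
    c, s = math.cos(angle), math.sin(angle)
    C = 1.0 - c
    return [[c + x * x * C, x * y * C - z * s, x * z * C + y * s],
            [y * x * C + z * s, c + y * y * C, y * z * C - x * s],
            [z * x * C - y * s, z * y * C + x * s, c + z * z * C]]

def axis_angle(r: Mat) -> Tuple[Vec3, float]:
    """Inverse of Rodrigues' formula for angles in [0, pi); the near-pi case is not handled."""
    cos_a = max(-1.0, min(1.0, (r[0][0] + r[1][1] + r[2][2] - 1.0) / 2.0))
    angle = math.acos(cos_a)
    if angle < 1e-9:
        return (1.0, 0.0, 0.0), 0.0  # no rotation: any axis works
    s = 2.0 * math.sin(angle)
    return ((r[2][1] - r[1][2]) / s, (r[0][2] - r[2][0]) / s, (r[1][0] - r[0][1]) / s), angle

def interpolate_rotation(r0: Mat, r1: Mat, s: float) -> Mat:
    """R(s) = R0 * exp(s * log(R0^T R1)) for s in [0, 1]."""
    axis, angle = axis_angle(matmul(transpose(r0), r1))
    return matmul(r0, rot_axis_angle(axis, s * angle))
```

Interpolating about a single fixed axis yields the shortest rotation between the two postures, so the instrument does not swing through unnecessary intermediate orientations.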

Quantitative validation criteria

To rigorously evaluate the accuracy and precision of the automatic cutting and suturing system, a set of quantitative validation metrics was established for both tasks.

For the cutting task, the following criteria were applied:

Positional error: Deviation between the actual cutting path and the predefined trajectory, measured via image analysis, with an acceptable threshold of ≤0.5 mm per target.52

Cutting completion rate: Percentage of target points fully severed after three consecutive cuts, ensuring complete gauze separation along the designated path.

Edge deformation analysis: The degree of unintended gauze displacement caused by instrument movement, quantified by comparing the gauze's final and initial positions.

Depth consistency: Variability in cutting depth assessed through stereo vision measurements, with a maximum allowable variation of 0.3 mm.53

For the suturing task, the following metrics were used:

Positional error: Difference between the actual and predefined suture point positions, assessed using image analysis, with an error limit of 0.5 mm.

Needle insertion angle consistency: Deviation in the needle's entry and exit angles, with a maximum allowable difference of 5° to ensure proper tissue penetration.

Suture spacing accuracy: Distance between consecutive sutures, with an allowable variation of 0.3 mm for uniform stitch spacing.

Suturing success rate: Proportion of suture points placed successfully without misalignment or failure, indicating overall task completion reliability.

Tissue deformation measurement: Degree of ham deformation during needle manipulation, used to assess the mechanical stability of the suturing process.

Force consistency analysis: Uniformity of the force applied during needle insertion and pull-through, aimed at minimizing the risk of stitch breakage or slack.
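Several of the criteria above reduce to simple computations on matched point sets. A minimal sketch, assuming the actual and planned points are already expressed in millimeters in a common frame (for example, after stereo back-projection); the function names are hypothetical, and the tolerances passed in would be the thresholds stated above (0.5 mm for position, 0.3 mm for spacing).

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]

def positional_errors(actual: Sequence[Point], planned: Sequence[Point]) -> List[float]:
    """Per-target Euclidean error between actual and planned points (input units, e.g. mm)."""
    return [math.dist(a, p) for a, p in zip(actual, planned)]

def within_tolerance_rate(errors: Sequence[float], tol: float) -> float:
    """Fraction of targets whose error is at or below tol."""
    return sum(e <= tol for e in errors) / len(errors) if errors else 0.0

def spacing_deviations(points: Sequence[Point], nominal: float) -> List[float]:
    """Absolute deviation of each consecutive stitch spacing from the nominal spacing."""
    return [abs(math.dist(a, b) - nominal) for a, b in zip(points, points[1:])]
```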

Data collection and analysis

Data collection

Data collection was designed to ensure reliable and accurate results. Data were gathered from a series of controlled surgical simulations using the proposed automatic cutting and suturing control system. The experimental setup covered a range of surgical scenarios, including different tissue types, varying lighting conditions, and distinct cutting and suturing patterns, to approximate real-world surgical environments. A 3D electronic endoscope captured high-resolution images and video sequences during these procedures. The vision system (binocular stereo matching, DarkPose, and YOLOv5, running at FP16 precision) processed these images to extract relevant data, such as instrument positions, tissue boundaries, and needle trajectories. Each surgical task was repeated multiple times to ensure data consistency, and the results were automatically logged to a database for further analysis.

Statistical analysis

To analyze the collected data, a combination of descriptive and inferential statistical methods was employed. Descriptive statistics, such as mean, standard deviation, and range, were used to summarize the performance metrics of the system, including accuracy, precision, and time efficiency for each surgical task. Inferential statistics were applied to compare the performance of the proposed system against baseline methods and existing surgical systems. Paired t tests and analysis of variance were conducted to determine the statistical significance of the differences observed between the systems. Additionally, regression analysis was used to explore the relationships between various factors, such as the type of tissue and the success rate of the suturing process.
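The paired t test compares matched measurements (for example, the same targets handled by two methods) through their per-pair differences: $t = \bar{d} / (s_d / \sqrt{n})$ with $n - 1$ degrees of freedom. A stdlib-only sketch of the statistic; the sample values used to check it are synthetic, and in practice `scipy.stats.ttest_rel(a, b)` returns the same statistic together with a p-value.

```python
import math
import statistics
from typing import Sequence, Tuple

def paired_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, int]:
    """Paired t statistic and degrees of freedom for matched samples a and b."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    mean_d = statistics.mean(diffs)
    sd_d = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd_d == 0:
        raise ValueError("all differences are identical; t is undefined")
    return mean_d / (sd_d / math.sqrt(len(diffs))), len(diffs) - 1
```

Working on differences removes between-target variability, which is why a paired test is more sensitive than an unpaired one when the same targets are measured under both conditions.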

Handling potential data biases and outliers

Given the complexity and variability of surgical environments, the potential for data biases and outliers was carefully considered throughout the study. To minimize selection bias, a diverse range of surgical scenarios was included, covering different tissue types, instrument positions, and lighting conditions. Randomization was employed in the selection of these scenarios to prevent any systematic bias in the data collection process.

Outliers were identified through a combination of visual inspection of scatter plots and the application of statistical techniques, such as the Grubbs’ test and Z scores. Any data points that fell beyond three standard deviations from the mean were flagged as potential outliers. Once identified, these outliers were scrutinized to determine whether they resulted from experimental error, system malfunction, or genuine variability in the surgical process. Depending on the findings, outliers were either excluded from the analysis or included with appropriate adjustments to the statistical models to account for their impact.
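The three-standard-deviation rule described above can be sketched as follows, using the sample mean and sample standard deviation. One caveat: with $n$ samples, no point can lie more than $(n-1)/\sqrt{n}$ sample standard deviations from the mean, so for $n \le 10$ this rule can never flag anything, which is one reason small samples are better served by Grubbs' test. The data used to check the sketch are synthetic.

```python
import statistics
from typing import List, Sequence

def zscore_outliers(values: Sequence[float], threshold: float = 3.0) -> List[int]:
    """Indices of values more than `threshold` sample standard deviations from the mean."""
    if len(values) < 3:
        return []
    mu = statistics.mean(values)
    sd = statistics.stdev(values)
    if sd == 0:
        return []  # all values identical: nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sd > threshold]
```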

Instrument

All surgical instruments used in this study are Toumai endoscopic robotic surgical instruments produced by MicroPort (Shanghai) Medical Robotics Group. The automatic cutting experiment uses monopolar curved shears to cut gauze. The automatic suturing experiment uses needle holders to grasp a medical suture needle for suturing.

Experiments and results

Automatic cutting experiment

Visual results: For path points and instrument axis points, the HRNet-based detector achieved an accuracy of 0.95 (AP at OKS = 0.5) in this experiment. With the binocular camera positioned 10–15 cm above the gauze, the positional error remained within 1 mm.
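The reported accuracies are AP values at an object keypoint similarity (OKS) threshold of 0.5. The sketch below computes OKS as defined for the COCO keypoint benchmark, OKS = Σᵢ exp(−dᵢ²/(2s²kᵢ²))·δ(vᵢ > 0) / Σᵢ δ(vᵢ > 0), where dᵢ is the pixel distance for keypoint i, s² the object area, kᵢ a per-keypoint constant, and vᵢ its visibility flag. It is a simplification: full AP also ranks detections by confidence and integrates the precision-recall curve, and the values of `kappas` and `area` are assumptions, because the study does not report them.

```python
import math


def oks(pred, gt, visible, area, kappas):
    """COCO-style object keypoint similarity between one predicted and one ground-truth pose.

    pred, gt: lists of (x, y) pixel coordinates; visible: list of bools;
    area: object scale s^2 in pixels^2; kappas: per-keypoint constants k_i.
    """
    num, den = 0.0, 0
    for (px, py), (gx, gy), v, k in zip(pred, gt, visible, kappas):
        if not v:
            continue  # unlabeled keypoints do not contribute
        d2 = (px - gx) ** 2 + (py - gy) ** 2
        num += math.exp(-d2 / (2.0 * area * k ** 2))
        den += 1
    return num / den if den else 0.0


def fraction_correct(oks_values, threshold=0.5):
    """Share of matched detections whose OKS meets the threshold (not full COCO AP)."""
    return sum(o >= threshold for o in oks_values) / len(oks_values)
```

A keypoint displaced by d with d² = 2s²k²·ln 2 scores exactly 0.5, so the OKS = 0.5 threshold corresponds to an error radius that scales with object size.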

Motion control system verification

A continuous cutting experiment was conducted on the setup shown in Figure 12. A curved path was drawn on a 6 cm × 8 cm white medical gauze using a blue marker, starting from one edge and ending at the opposite edge. The gauze was secured at the four corners using clamps to maintain a stable horizontal plane. An endoscope was fixed vertically on a steel column, with its height controlled between 8 and 15 cm above the gauze, ensuring a clear field of view where all corners were visible.

Automatic cutting experiment.

Cutting targets were placed every 0.3 cm along the curved path. The instrument executed sequential cuts at each target point, adjusting its position dynamically after each cut. To ensure complete separation of the gauze, the system performed three consecutive cuts per target.
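Placing cutting targets every 0.3 cm amounts to resampling the detected path by arc length. A minimal sketch, assuming the vision system returns the marked path as an ordered polyline of (x, y) points in centimetres on the gauze plane (the study does not state this representation); `resample_path` is a hypothetical name.

```python
import math


def resample_path(points, spacing):
    """Place targets every `spacing` units of arc length along an ordered polyline.

    The start point is always the first target and the end point the last one.
    """
    targets = [points[0]]
    carried = 0.0  # arc length travelled since the last placed target
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        seg = math.hypot(x1 - x0, y1 - y0)
        pos = spacing - carried  # distance into this segment of the next target
        while pos <= seg:
            t = pos / seg
            targets.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
            pos += spacing
        carried = seg - (pos - spacing)  # leftover length after the last target
    if math.dist(targets[-1], points[-1]) > 1e-9:
        targets.append(points[-1])
    else:
        targets[-1] = points[-1]  # snap a near-coincident last target onto the end point
    return targets
```

For a straight 1.0 cm path and a 0.3 cm spacing this yields targets at 0, 0.3, 0.6, 0.9, and 1.0 cm; resampling by arc length rather than by vertex index keeps the spacing uniform even where the detected polyline is unevenly sampled.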

The starting point and ending point are the beginning and end of the cutting path. The robotic arm starts from the starting point and moves continuously along a series of target points provided by the vision system, cutting until it successfully reaches the ending point, thereby completing the automatic cutting task along a specific path. The current target is the target position of the cutting task that the robotic arm is currently executing, while the next target is the target position for the subsequent cutting task.
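The progression from the starting point through successive current and next targets maps naturally onto the finite state machine mentioned in the abstract. The states, the `move`/`cut` callbacks, and the fixed target list below are hypothetical. In the described system the next target is supplied by the vision system after each cut, which a real implementation would query in the `ADVANCE` state.

```python
from enum import Enum, auto


class CutState(Enum):
    MOVE_TO_TARGET = auto()  # position the shears at the current target
    CUT = auto()             # close the shears once
    ADVANCE = auto()         # switch from the current target to the next one
    DONE = auto()            # end point reached


def run_cutting_fsm(targets, move, cut, cuts_per_target=3):
    """Cut along `targets` (starting point first, ending point last).

    move(target) and cut(target) are hypothetical stand-ins for robot commands.
    Returns the total number of cut actions issued.
    """
    if not targets:
        return 0
    state = CutState.MOVE_TO_TARGET
    index = cuts_done = total_cuts = 0
    while state is not CutState.DONE:
        current = targets[index]
        if state is CutState.MOVE_TO_TARGET:
            move(current)
            cuts_done = 0
            state = CutState.CUT
        elif state is CutState.CUT:
            cut(current)
            cuts_done += 1
            total_cuts += 1
            if cuts_done == cuts_per_target:
                state = CutState.ADVANCE
        elif state is CutState.ADVANCE:
            index += 1
            state = CutState.DONE if index == len(targets) else CutState.MOVE_TO_TARGET
    return total_cuts
```

Keeping motion and cutting as separate states makes the three-cuts-per-target rule explicit and gives a single place (the transition out of `CUT`) to insert a vision check before advancing.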

Experimental result

A total of 10 experiments were conducted; failed experiments are marked with an asterisk (*) (Figure 13(a)). Failures fell into two categories, “up” and “down.” In an “up” failure, the monopolar scissors moved off the gauze toward its upper side; in a “down” failure, they moved off the gauze toward its lower side. “Cutting time” refers to the total number of cuts, and “total time” to the total time required to complete one C-shaped cutting task along the path. “Mean time” is the average time required to complete a single cutting action.

Results of continuous cutting experiments along the path. (a) The results for all experiments; (b) the number of cuts (“cutting time”) for each trial; (c) the mean time per cutting action (successful trials only); (d) the total time for each trial.

The C-shaped cutting task is designed to evaluate the accuracy, efficiency, and consistency of the automated cutting system when following a predefined curved trajectory.54 In this experiment, a C-shaped path is drawn on a 6 cm × 8 cm medical gauze using a blue marker, ensuring a controlled and repeatable cutting trajectory. The cutting task involves a sequence of target points, spaced 0.3 cm apart, along the curve. The robotic instrument executes three consecutive cuts at each target point to ensure complete separation of the gauze, dynamically adjusting its position after each cut. The system relies on visual feedback and real-time motion control to track and maintain precision throughout the cutting process.

On average, completing a C-shaped cutting task required 24 cuts (SD = 4.58) and took 122.5 s (SD = 22.5), with a maximum completion time of 128 s. The average time per cutting action was 5.1 s (SD = 0.17). A one-way analysis of variance comparing total cutting times across successful trials showed no significant difference in mean completion time, F(7, 2) = 1.45, P = .27, suggesting consistent system performance. A linear regression analysis revealed a strong correlation (r = 0.89, P < .05) between trajectory length and the number of required cuts, indicating that longer cutting paths require more cuts.

The average success rate of the automatic cutting experiments along the path was 85%, with each complete cutting action taking about 5.10 s on average. The total number of cuts required to complete a continuous cutting task along the path increased with the length of the cutting trajectory. Murali et al.33 conducted arc cutting experiments in which all trials were completed within 300 s, and Dulan et al.30 indicated that a task completed within 162 s without errors could be awarded “expert proficiency.” The mean total time of 122.5 s observed here falls below both reference times, although those benchmarks were obtained under different task setups. These preliminary experiments support the effectiveness of the developed automated surgical system for automatic cutting tasks.

Automatic suturing experiment

In this experiment, the accuracy of the DarkPose algorithm for the positions of the surgical instrument and the surgical needle reached 0.92 (AP at OKS = 0.5). The accuracy of the SEResNeXt101 classifier in determining whether the surgical needle was successfully grasped reached 0.90.

Validation of the motion control system

The automatic continuous suturing experiment was conducted on the experimental platform shown in Figure 14. Two 1-mm-thick pieces of ham were secured on a 6 cm × 4 cm rectangular hollow steel frame using pink sutures that matched the color of the ham. The ham was commercially available and was selected because its properties resemble those of soft tissue.

Automatic suturing workflow. (1) Initial state before the experiment starts, with the left and right needle holders positioned on either side of the suturing tissue. (2) The left needle holder adjusts the instrument's posture, then moves to the left needle holder point and grasps the needle. (3) The left needle holder rotates the suture needle so that the needle tail is parallel to pass through the tissue. (4) The instrument lifts above the tissue, and the experimenter manually pulls the suture. (5) The right needle holder grasps the tail of the suture needle. (6) The left needle holder releases the suture needle and retracts. (7) The right needle holder adjusts the instrument's posture and position to the needle-passing preparation position. (8) The right needle holder passes the needle through the tissue.

To ensure a stable experimental setup, the steel frame was fixed in place with two clamps, maintaining a perpendicular orientation to the horizontal plane. An endoscope was positioned directly above the ham, perpendicular to the ground, with a controlled height of 10–15 cm from the ham surface. The visual system identified target positions at the top surface of the ham, and the final suturing target was determined by moving 1 cm along the positive z axis in the endoscope coordinate system, as shown in Figure 14.
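The target computation above (a surface point recovered from the stereo images, then shifted 1 cm along +z of the endoscope frame) can be sketched with the standard disparity and pinhole relations Z = f·B/d, X = (u − cₓ)Z/fₓ, Y = (v − c_y)Z/f_y. This assumes rectified stereo images and an OpenCV-style camera frame in which +z points out of the lens toward the tissue, so the +1 cm shift places the target below the tissue surface. The intrinsics, baseline, and function names are hypothetical, since the study does not report its calibration.

```python
from dataclasses import dataclass


@dataclass
class StereoIntrinsics:
    fx: float           # focal length in pixels (x)
    fy: float           # focal length in pixels (y)
    cx: float           # principal point x (pixels)
    cy: float           # principal point y (pixels)
    baseline_mm: float  # distance between the two camera centres


def pixel_to_camera(u, v, disparity, cam):
    """Back-project a rectified left-image pixel with known disparity into camera coordinates (mm)."""
    if disparity <= 0:
        raise ValueError("disparity must be positive")
    z = cam.fx * cam.baseline_mm / disparity  # depth by triangulation
    x = (u - cam.cx) * z / cam.fx
    y = (v - cam.cy) * z / cam.fy
    return (x, y, z)


def suturing_target(surface_point, offset_mm=10.0):
    """Shift a surface point along +z of the endoscope frame (1 cm by default)."""
    x, y, z = surface_point
    return (x, y, z + offset_mm)


def depth_resolution(z_mm, disparity_error_px, cam):
    """First-order depth error for a disparity error: dZ = Z^2 / (fx * B) * dd."""
    return z_mm ** 2 / (cam.fx * cam.baseline_mm) * disparity_error_px
```

With placeholder values fx = 1000 px and B = 5 mm, a 0.25-pixel disparity error at 125 mm already corresponds to about 0.8 mm of depth error, which illustrates why sub-pixel disparity estimation matters for a sub-millimetre error budget at 10–15 cm.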

Experimental result

The complete sequence of the suturing task is shown in the figure. The robotic system is programmed to complete the entire suturing cycle from grasping the needle to passing the needle. In the initial state, the needle tip passes through the tissue, and the suture thread is tightened by the experimenter. After the experiment starts, the left needle holder first grasps the needle and smoothly passes it through the tissue, followed by the experimenter manually tightening the suture thread. Then, the left and right needle holders exchange the suture needle, the right needle holder adjusts its posture, and finally, the needle is passed through the tissue. These steps are repeated to complete multiple sutures. Specifically, after each needle-grasping action by the robotic arm, the visual system determines whether the needle grasping was successful. If it fails, the needle is regrasped.
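The regrasp rule described above can be expressed as a verification loop. The study states that a failed grasp triggers a regrasp but gives no retry limit; `max_attempts` and raising an error to return control to the supervising operator are assumptions added for illustration, and `is_grasped` stands in for the SEResNeXt101 grasp classifier.

```python
from typing import Callable


def grasp_with_verification(
    grasp: Callable[[], None],
    is_grasped: Callable[[], bool],
    max_attempts: int = 3,
) -> int:
    """Repeat the grasp until the vision classifier confirms success.

    Returns the number of attempts used. Raises RuntimeError if every attempt
    fails, so that control can be handed back to the supervising operator.
    """
    for attempt in range(1, max_attempts + 1):
        grasp()  # hypothetical robot command: close the needle holder on the needle
        if is_grasped():  # hypothetical wrapper around the grasp classifier
            return attempt
    raise RuntimeError(f"needle not grasped after {max_attempts} attempts")
```

Bounding the number of retries keeps a persistently misclassified or unreachable needle from trapping the system in an endless loop.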

Discussion

The development of an automated cutting and suturing control system utilizing an improved FP16 visual recognition algorithm represents a significant leap forward in the field of robotic surgery. The results from our study indicate that the integration of advanced visual recognition algorithms into surgical systems can enhance the precision and reliability of critical tasks, such as cutting and suturing, which are essential in various minimally invasive procedures.

The primary contribution of this work lies in the application and refinement of the FP16 visual recognition algorithm within a surgical context. Our findings demonstrate that the FP16 algorithm, with its reduced memory footprint and increased processing speed, offers substantial improvements over conventional FP32 (single-precision) implementations, particularly in real-time surgical scenarios where both time and accuracy are critical. Using the FP16 algorithm, we observed a significant enhancement in the system's ability to accurately detect and track tissue boundaries, which is crucial for precise cutting and suturing. One of the most important outcomes of this research is the demonstration that the FP16 algorithm can maintain high accuracy without compromising the system's efficiency. Traditional challenges in robotic surgery, such as latency in visual processing and its impact on surgical precision, were effectively mitigated by our approach. The algorithm's ability to operate with lower-precision arithmetic while still delivering high accuracy underscores its potential for application in surgical tasks beyond cutting and suturing.
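The memory and precision trade-off behind FP16 can be illustrated with Python's `struct` module, whose `'e'` format packs IEEE 754 half-precision values. This sketch only illustrates the numeric format (halved storage, a relative rounding error of at most 2⁻¹¹ for normal values); it is not the authors' FP16 network, whose implementation the study does not detail, and the helper names are hypothetical.

```python
import struct

# Unit roundoff of IEEE 754 half precision (11-bit significand):
# for normal values, |fp16(x) - x| / |x| <= 2**-11.
FP16_UNIT_ROUNDOFF = 2.0 ** -11


def to_fp16(x: float) -> float:
    """Round a Python float to half precision and convert it back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def storage_bytes(n_values: int, fmt: str) -> int:
    """Bytes needed for n values in struct format 'e' (FP16) or 'f' (FP32)."""
    return n_values * struct.calcsize("<" + fmt)


print(to_fp16(1234.56))  # 1235.0
```

The example shows a practical caveat: between 1024 and 2048 the spacing of FP16 values is 1.0, so a pixel coordinate near 1234.56 loses its sub-pixel part, which is one reason to keep final keypoint coordinate arithmetic in FP32 even when the network itself runs in FP16.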

The automatic cutting and suturing control system presented in this study offers several key advancements over currently available similar systems. One of the most significant improvements is the integration of the improved FP16 visual recognition algorithm, which enhances the system's ability to accurately detect and track tissue boundaries in real time. This increased precision reduces the margin of error during critical surgical tasks, such as cutting and suturing, which are highly dependent on accurate visual input. Furthermore, the system's use of a 3D electronic endoscope and advanced motion control algorithms allows for more precise instrument positioning and movement, resulting in smoother and more consistent surgical outcomes. Unlike many existing systems that struggle with latency and adaptability in dynamic surgical environments, this system is designed to quickly adjust to changes in tissue appearance and positioning, minimizing the risk of errors. The combination of these features makes the automatic cutting and suturing control system more reliable, efficient, and capable of handling complex surgical procedures with a higher degree of accuracy than current alternatives.

Current commercial robotic surgical systems, such as the da Vinci Surgical System and Hugo RAS, primarily function as teleoperated platforms, where a surgeon maintains full control over the robotic arms while benefiting from enhanced precision, tremor reduction, and improved visualization. These systems integrate high-definition 3D imaging, motion scaling, and wristed instruments to facilitate complex procedures but rely heavily on human intervention. Existing commercial platforms lack full automation and primarily assist rather than autonomously perform surgical tasks. While some semiautomated features, such as suturing assistance or robotic stapling, are available, these systems do not include fully autonomous cutting and suturing capabilities.

This study introduces a novel vision-based automation framework that enables autonomous execution of fundamental surgical tasks, specifically automatic cutting and suturing. Unlike commercial systems, the proposed method integrates a suite of task-specific vision algorithms with a finite state machine (FSM) model, allowing for real-time adaptive motion planning and decision making. The introduction of FP16-based image recognition, DarkPose keypoint localization, and stereo depth estimation enhances instrument tracking accuracy, while automated trajectory execution ensures consistent precision without the need for direct surgeon control. Additionally, the implementation of quantitative validation criteria, such as positional error thresholds, cutting depth consistency, and suturing accuracy metrics, further distinguishes this study by establishing objective performance benchmarks for autonomous robotic surgery. By addressing these gaps, this research contributes to the advancement of fully autonomous robotic surgery, paving the way for greater efficiency, consistency, and reduced cognitive load on surgeons.

However, while the improved FP16 visual recognition algorithm has shown great promise, it has limitations. One key challenge identified in our study is the algorithm's sensitivity to variations in lighting conditions and tissue appearance. Inconsistent lighting or unexpected tissue deformations can reduce the accuracy of tissue boundary detection, which could affect surgical outcomes. Another limitation is that the system relies on predefined suturing patterns, which may not adapt to the complex and dynamic nature of real surgical procedures.

Before this system can be applied in actual surgeries, several key technical and operational challenges must be addressed. One of the primary concerns is the need for enhanced real-time adaptability. While the system performs well in controlled environments, real-life surgeries involve unpredictable variables such as sudden tissue movements, unexpected bleeding, and varying anatomical structures. The system must be equipped with more advanced algorithms that can dynamically adapt to these changes in real time without compromising precision or safety. Additionally, the integration of this system with existing surgical workflows poses a challenge, as it requires seamless communication between the robotic system and other surgical tools and technologies. Ensuring the system's compatibility and ease of use for surgeons is crucial for its adoption. Another significant challenge is the rigorous validation and certification process that must be undertaken to meet regulatory standards for medical devices, which involves extensive testing to ensure the system's reliability and safety across a wide range of surgical scenarios. Addressing these challenges is essential for the successful implementation of the system in clinical practice.

The potential clinical implications of our findings are significant. As robotic surgery continues to evolve, systems that can perform automated tasks with high precision and reliability will become increasingly important. Looking ahead, the future direction for this automatic cutting and suturing system involves several exciting avenues of research and development. One of the key areas is the enhancement of the system's AI capabilities, particularly in machine learning and deep learning, to improve its ability to learn from each surgical procedure and adapt to new and complex scenarios. This could involve developing more sophisticated models for real-time decision making and incorporating more extensive datasets from diverse surgical cases to increase the system's robustness and generalizability. Another promising direction is the integration of haptic feedback and augmented reality to provide surgeons with a more immersive and intuitive interface, allowing them to better monitor and control the robotic system during procedures. Additionally, exploring the potential for cloud-based data storage and processing could enable real-time updates and collaboration across different surgical centers, leading to more consistent and standardized surgical outcomes.

Conclusion

This study presents a novel vision-guided robotic system capable of autonomously performing cutting and suturing tasks with high precision and reliability. By integrating an improved FP16 visual recognition algorithm with advanced stereo depth estimation, keypoint detection, and real-time motion planning, the proposed system achieves robust visual perception and accurate instrument control in dynamic surgical environments. Experimental results demonstrate an 85% success rate in automatic cutting with consistent performance metrics, and a 90% success rate in needle grasping during suturing, confirming the effectiveness of the control framework and visual subsystem.

Compared to existing teleoperated or semiautonomous surgical systems, this automated platform offers significant advancements in task accuracy, consistency, and execution efficiency. The system's modular architecture, incorporating task-specific FSMs and visual feedback loops, provides a scalable foundation for extending autonomy to other surgical tasks. Future work will focus on enhancing adaptability to variable anatomical conditions, integrating haptic feedback, and validating performance in preclinical models to pave the way for clinical translation.

Overall, this work represents an important step toward the realization of fully autonomous surgical systems and highlights the potential of AI-driven automation in improving surgical outcomes and reducing procedural burden on clinicians.

Footnotes

Author contributions

Jiayin Wang and Jiayin Wang conceptualized and designed the study; Jiayin Wang did the experiments and drafted the article; Jiayin Wang reviewed and revised the article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

All data relevant to this study are available upon request. Please contact the corresponding author to access the data.