Abstract

Aerial-ground robotic systems are promising candidates for autonomous applications such as target tracking, inspection, agriculture, and environmental mapping. Tracking between a UAV (unmanned aerial vehicle) and an AGV (autonomous ground vehicle) is one of the basic collaborative tasks of such a system during a mission. A UAV is spatially omnidirectional and can therefore readily track an AGV to provide navigation guidance from a vantage point. The AGV, in turn, is required to track the UAV to provide continuous support (payload and tethered charging) during a mission. However, tracking of an aerial vehicle by a ground vehicle has received little attention in the literature. Moreover, nonholonomic AGVs are poorly suited to effective collaboration because of their limited mobility, which reduces the overall efficiency of collaborative missions. In this work, we therefore introduce the combination of a 4-WISD (4 wheeled independent steering and driving) vehicle and a UAV to address these problems. Because commercially available 4-WISD ground vehicles are expensive for research use, a 4-WISD vehicle is also developed in this work, together with a ROS (Robot Operating System)-based control package for general use. Vision-based strategies are then developed for ground vehicles (nonholonomic and 4-WISD) to track an aerial vehicle in GPS (Global Positioning System)-denied and outdoor conditions. The ground vehicles are localized using the monocular camera of the UAV and IMU (inertial measurement unit) sensors. Kinematic tracking controllers are developed for the ground vehicles using the sliding mode control method, and Lyapunov stability is proven for the controllers. Hardware experiments are performed to validate the tracking strategies. The tracking controllers show satisfactory performance and are suitable for outdoor and GPS-denied conditions. A basic qualitative comparison of the tracking performance of the two ground vehicles is also presented. The combination of a UAV and a 4-WISD vehicle is partially omnidirectional, which improves the mobility and overall collaboration of the system.

Keywords

Introduction

Multi-agent robotic systems, especially heterogeneous ones, can automate spatially complex tasks. 1 Heterogeneous systems are combinations of dissimilar robots, and an aerial-ground robotic system, which pairs one aerial and one ground robot, is a typical example. The UAV–AGV (unmanned aerial vehicle–autonomous ground vehicle) combination is a promising candidate for automating tasks that involve aerial and ground interactions. The UAV offers agility and perception, while the AGV offers payload capacity and computation power; hence, the system is well balanced for many industrial applications.2–4 The wide field of perception of the UAV can help in the navigation of the AGV, and the AGV can support long-range missions of the UAV in terms of payload and charging.1–7 Thus, the abilities of the two robots complement each other. A UAV–AGV system may be communicative or noncommunicative, depending on whether data communication is present between the vehicles. Autonomous inspection, construction site preparation, surveillance, exploration, and mapping of environments are some applications of the system.8–13 A recent review identifies the different roles of the UAV and AGV for a given task and surveys the field from an application perspective. 2 Either agent can act as a sensor, actuator, auxiliary facility, or decision maker, or as a combination of these, depending on the task. A kinematics framework specific to the vision-based UAV–AGV system (in which the UAV carries a down-facing camera) is reported in reference [5], which introduces forward and inverse kinematics notions for the system. A few researchers have applied the system to inspection, 8 indoor data collection, 10 construction site preparation, 9 and mapping. 14 Collaborative navigation of the system is crucial during the execution of any mission; collaborative target tracking and surveying in unstructured environments are typical examples. 10 Aerial vehicles are agile; however, the mobility of the ground vehicle becomes critical for such applications. Hence, improving the collaborative motion and mobility of the system is an important problem that requires the attention of the research community. In general, when multiple agents are involved in a task, suitable agents must be combined to maximize the output. In this case, a suitable ground agent has to be combined with the UAV to improve the mobility and navigation of the system for any application. However, researchers have not focused on this direction. This is one of the problems considered in this work.

Autonomous collaboration between the two vehicles is also an important aspect of any mission. Vision-based techniques (with a camera attached to the UAV, the AGV, or both) are well studied in the literature; typically the UAV carries a down-facing camera to relatively localize the AGV.5–10,14–17 The UAV–AGV system is required to function in GPS (Global Positioning System)-inaccessible and outdoor areas.15,16 Vision-based UAV–AGV collaboration is well known for such scenarios and has been the focus of many researchers. Autonomous tracking and landing of the UAV are fundamental collaborative functions of the system during missions, and vision-based solutions have been provided so that these tasks can be executed in GPS-denied conditions. Vision-based tracking can be either the UAV tracking the AGV or vice versa: one robot is required to track the motion of the other. In the first case, the UAV localizes the AGV and follows its motion; for relative localization, the UAV can use a down-facing camera to track the AGV in GPS-denied conditions. In the second case, the AGV is required to relatively localize the UAV, track its motion, and stay directly below it. The AGV can carry a sky-facing camera to localize and track the UAV. Alternatively, the UAV can localize the AGV with its down-facing camera and communicate the result to the AGV; this method requires data communication between the UAV and the AGV.

As discussed earlier, tracking can take place in either direction. However, most of the literature on vision-based UAV–AGV systems focuses on tracking of the AGV by the UAV.6,13,15,17 The two robots play various functional roles depending on the task, as identified in reference [2]. The UAV can fly over the AGV and provide navigation guidance from a vantage point, while the AGV can provide payload support to the UAV. 13 A specific configuration of the system is one in which the UAV acts as the leader and the AGV acts as the follower. If the role of the AGV is to continuously support (through payload and tethered charging) a longer mission of the UAV, the AGV must track the motion of the UAV to assist it. Agricultural harvesting is one such example, in which the UAV requires continuous payload support from the AGV. Thus, the AGV tracking the motion of the UAV is as important as the UAV tracking the motion of the AGV in GPS-denied and outdoor conditions. However, it has received less attention in the state of the art, and existing approaches are insufficient for outdoor and GPS-denied conditions. Moreover, when a ground vehicle tracks an aerial vehicle, poor mobility of the ground vehicle decreases the efficiency of the task, so the right ground agent must be chosen to improve the mobility of the system. These two problems are considered and addressed in this work.

Background work and present contributions

State of the art is discussed in three subsections to highlight the problems addressed in the present work.

Ground vehicle tracking aerial vehicle

Multiple AGVs reaching the projection of the center of a static (hovering) UAV on the ground plane is presented in reference [18]. That study is not vision-based, the UAV is not dynamic, and the work is limited to simulations. Wu et al. 19 present motion capture system-based tracking, which is not suitable for outdoor and GPS-denied conditions. Wei et al. 20 and Guérin et al. 21 deal with waypoint navigation of an AGV in the image plane of the UAV camera. Sutera et al. 22 present a simulation study of an AGV tracking a UAV by localizing both with respect to a common fixed frame in the simulation environment. Thus, as per the literature survey, an AGV providing payload support by continuously tracking a UAV in GPS-inaccessible areas has not been addressed. Moreover, the overall mobility of the UAV–AGV system is affected by the poor mobility of nonholonomic AGVs. For example, consider a task in which the AGV must track the random or noncooperative motion of the UAV during a mission. Nonholonomic (Ackermann and skid-steering) AGVs may need to execute complex trajectories even to reach nearby goal points during tracking.23–25 In addition, skid steering may damage the terrain and cause tyre wear. Hence, it is necessary to investigate how the collaborative navigation of the system can be improved by integrating a suitable ground agent with the aerial agent. The research gap tackled in this work is divided into two problems: first, a vision-based control strategy for a ground vehicle to track an aerial vehicle (carrying a down-facing camera) in GPS-denied zones; and second, the combination of a suitable ground agent with the aerial agent to improve the collaboration and tracking task of the system. To address both, the present study first deals with vision-based tracking of an aerial vehicle by a ground vehicle, and then implements the tracking strategy on both nonholonomic and flexible-mobility ground vehicles. In leader-follower terminology, the UAV is the leader and the AGV is the follower during tracking.

Development of ROS-based 4-WISD ground vehicle

From the above discussion, it is necessary to explore how collaboration can be improved by integrating a suitable AGV with the UAV. A vision-based UAV–AGV testbed is built in reference [20]; however, the AGV is based on front-wheel steering (nonholonomic). A few researchers combine a UAV with Mecanum-wheeled AGVs and present vision-based tracking control for the UAV to track the AGV.26,27 However, vision-based tracking of the aerial vehicle by the Mecanum-wheeled robot is not the focus of their studies. Moreover, Mecanum-wheeled AGVs are not suitable for outdoor missions. Hence, the combination of a 4-WISD ground vehicle with a UAV is required, which, as per the authors' survey, has not been highlighted in the literature. We therefore propose the combination of a flexible ground vehicle and an aerial vehicle for effective collaboration. This work experimentally investigates the combination of a UAV and a 4-WISD AGV, considering the task of the AGV tracking the motion of the UAV. 4-WISD vehicles based on standard and caster wheels have been developed for different applications; these are also referred to as partially holonomic vehicles. In reference [28], researchers developed a reconfigurable floor-cleaning robot (hTetro platform) adopting the 4-WISD mechanism. In reference [29], a bin-dog robotic system based on a 4-wheel independent steering (4WIS) mechanism is developed for agricultural applications. The 4WIS concept has also become a good choice for mobile manipulators (iMoro) and electric vehicles because of its efficient navigation and maneuverability.25,30,31 Thus, 4-WISD ground vehicles have been well addressed independently for different applications, which motivates the authors to study the combination of a UAV and a 4-WISD ground vehicle. However, the 4-WISD AGVs available in the market are expensive for research purposes, and it may not be appropriate to procure high-end AGVs for research in educational institutes. 32 This has led researchers to develop their own AGVs purely for research purposes.32–34 It likewise motivates the present work to develop a 4-WISD vehicle to combine with a UAV. In addition, we have developed ROS-based control for the vehicle.

Motion control of 4-WISD ground vehicle under external camera

To combine the 4-WISD vehicle with a UAV for GPS-denied applications, vision-based motion control of the 4-WISD vehicle under an external camera has to be studied. A few authors have presented vision-based control for road lane and target tracking by a 4-WISD vehicle, but through the on-board camera of the vehicle.35,36 GPS navigation control for a 4-WISD vehicle is reported in reference [29]. Kinematic and dynamic modeling and control are reported in reference [37]; however, that is a simulation-based study. Path tracking control is also studied through simulations in reference [38]. Hence, as per the authors' survey, motion control of a 4-WISD ground vehicle under an external camera (image frame) is yet to be studied experimentally, which is also an opportunity for the present work. This capability is a prerequisite here because the UAV localizes the 4-WISD vehicle using its down-facing camera during tracking.

Overall, our contributions in this work are summarized as follows:

An autonomous vision-based tracking strategy is developed for ground vehicles to track an aerial vehicle and provide continuous support in outdoor and GPS-denied missions.

A ROS-based flexible ground vehicle (4-WISD vehicle) is developed for research and development purposes, and the combination of a UAV and a 4-WISD vehicle is proposed for effective collaboration.

Vision-based motion control of the 4-WISD vehicle is studied under externally fixed and moving (UAV) monocular cameras. The general kinematic model of the vehicle is simplified for this purpose.

The tracking controllers are verified through real-world experiments, and a basic qualitative comparison of the tracking motion of the two ground vehicles is provided.

The rest of the article is organized as follows. The third section presents the design, fabrication, and control of the 4-WISD ground vehicle developed in this work. The fourth section discusses the relative localization of the ground vehicles in the image frame of the UAV, and the fifth section presents the design of the tracking controllers. Hardware and data communication between the aerial and ground vehicles are discussed in the sixth section. Experimental results are presented in the seventh section, and conclusions are drawn in the eighth section.

4-WISD vehicle design and development

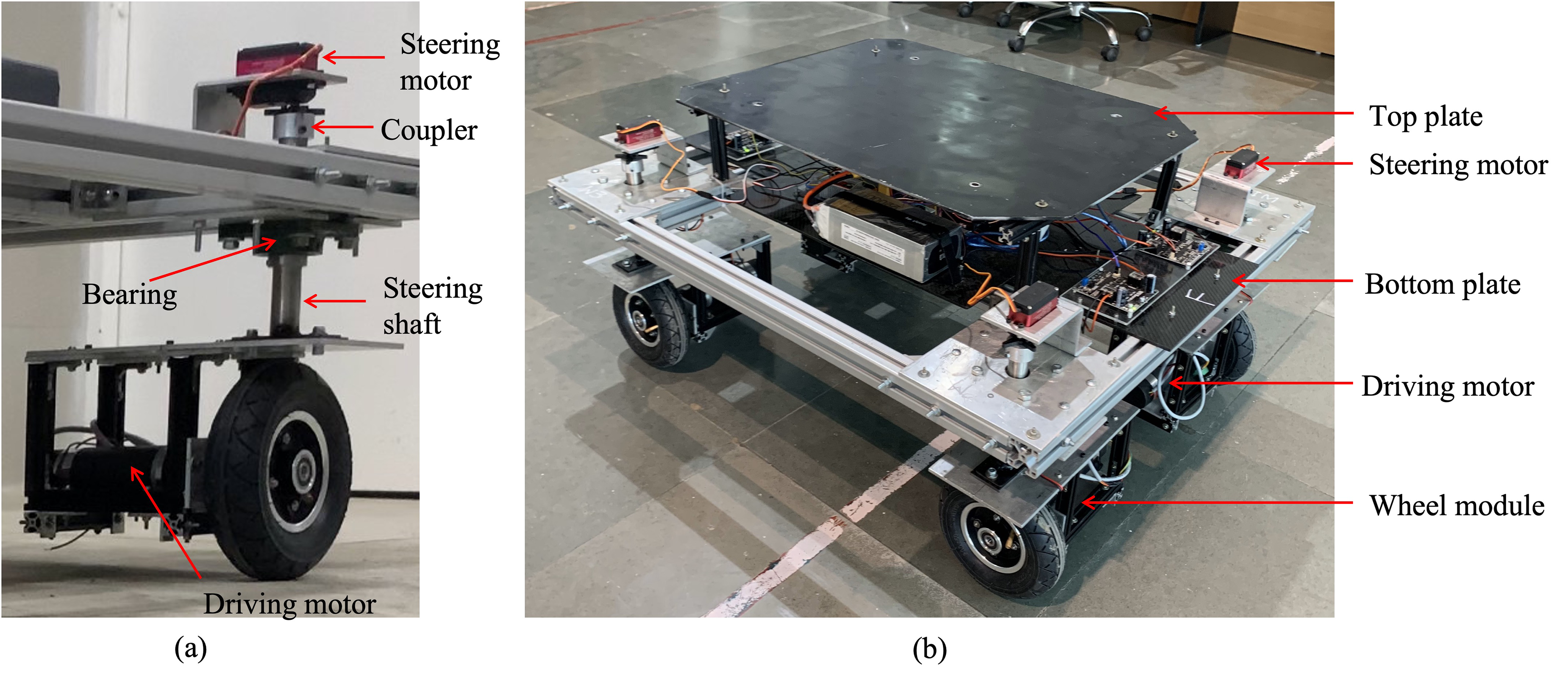

There is research interest in the development of 4-WISD ground vehicles for outdoor and indoor applications, as discussed in the second section. 4-WISD ground vehicles are expensive in the global market, and procuring them for research purposes may not be appropriate. Moreover, 4-WISD AGVs are not available in the local market (our country). Thus, in this work, we have designed and developed a 4-WISD ground vehicle. A payload of 50 kg and a maximum velocity of 1 m/s are the major design specifications, and the steering and driving motors have been selected accordingly. The maximum torque of the steering motors is 6 Nm. The rated speed and torque of the driving motors are 120 RPM and 9 Nm, respectively. Figure 1 shows the solid model of the ground vehicle, which has undergone structural analysis. Figure 2 shows the completely assembled ground vehicle. A carbon fiber sheet is fixed as the bottom plate to accommodate the power electronic components, such as batteries, DC–DC converters, a power distribution board, and DC motor drivers. The Nvidia Jetson Nano and Arduino controller boards are also fixed on the bottom plate (hidden under the aluminium top plate). Each battery is rated at 22 V, 22 Ah, and two batteries are connected in parallel to power the vehicle. The total mass of the vehicle is 50 kg, and it can carry a payload of 50 kg. The length, width, and height of the vehicle are 96, 80, and 50 cm, respectively. The vehicle has a modular design with four wheel modules. Figure 2(a) shows the wheel module assembled to the chassis.

Solid model of the 4-WISD ground vehicle. 4-WISD: 4 wheeled independent steering and driving.

(a) Wheel module and (b) fully assembled 4-WISD ground vehicle. 4-WISD: 4 wheeled independent steering and driving.

Figure 3 shows the hardware architecture of the vehicle. An Arduino Mega commands the DC motors (DCM), and an Arduino Uno commands the steering motors (SM) and reads the orientation sensor. Each DCM is connected to a motor driver (MD) with a current capacity of 30 A. Both Arduino boards are connected to the Nvidia Jetson Nano, which serves as the main controller of the vehicle. The ROS serial package is used to establish data communication between the Jetson Nano and the Arduino boards. The software stack of the vehicle is built on ROS 1, and an SDK (software development kit) in the form of a ROS control package is developed for the vehicle. This package can be used on any Linux-based on-board computer to control the 4-WISD ground vehicle.

Hardware architecture of

This vehicle has been developed only for research purposes, with limited capabilities, and may not be suitable for commercial use. GPS and camera sensors can be interfaced to the vehicle depending on the application. The existing 4-WISD AGVs in the global market are useful for both research and commercial purposes; however, they are very expensive if the need is only for research. Table 1 compares the most suitable 4-WISD vehicles available in the global market with the vehicle developed in this work. Only major specifications have been selected for the comparison, and the data are taken from the datasheets available on the corresponding websites. Our vehicle has limited capabilities but offers more scope for research and development, which is why we have developed our own 4-WISD vehicle.

Comparison of major specifications with most suitable market products.

GPS: Global Positioning System.

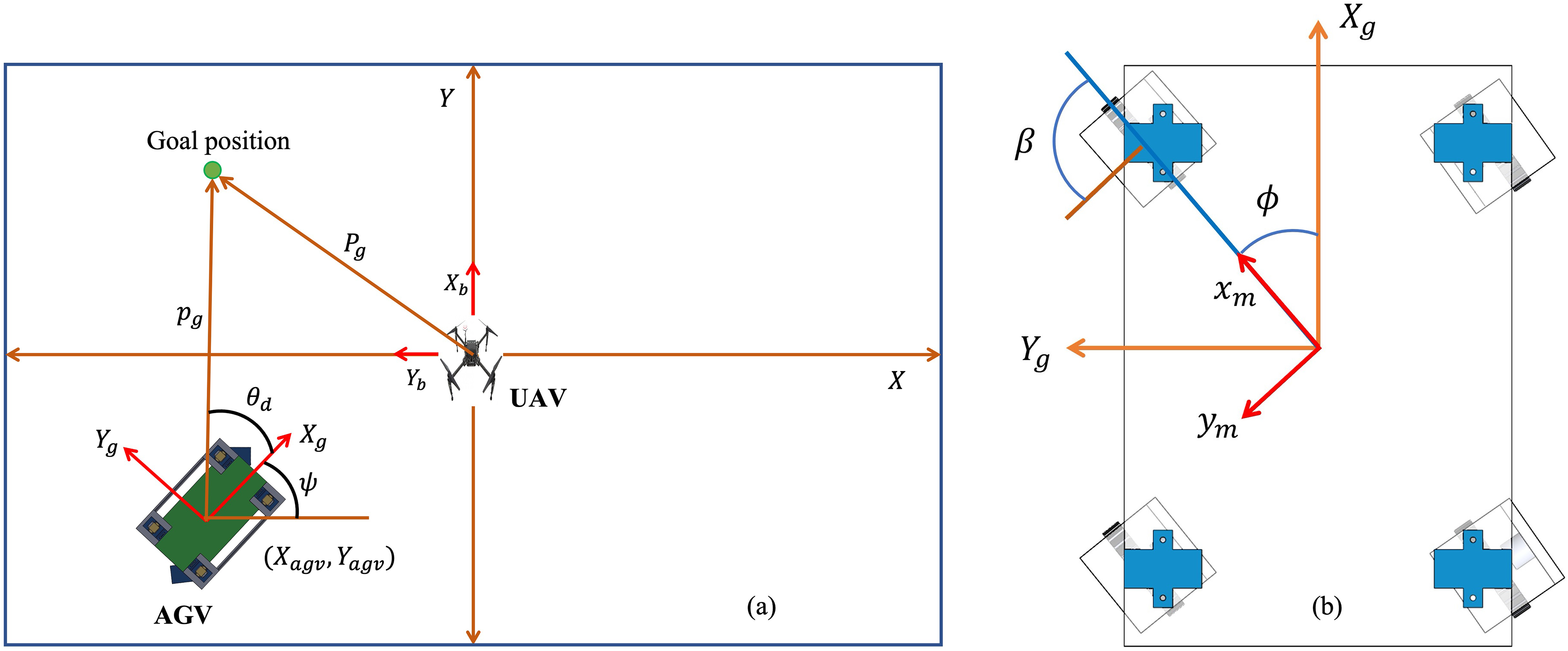

Relative localization of AGV and UAV

A down-facing camera is mounted at the center of the underside of the UAV. Its intrinsic parameters are obtained by camera calibration. ArUco markers are placed on top of the ground vehicles so that they can be uniquely localized using the down-facing camera of the UAV. The pose of the AGV in the image plane is calculated, and the apparent position of the UAV with respect to the AGV is then obtained by applying a homogeneous transformation. The OpenCV (Open Source Computer Vision) Python library is used for detecting and localizing the ArUco markers in the image plane. The RGB (red-green-blue) image is first blurred for denoising and then converted to a grayscale image before ArUco marker detection is performed. The sequence of image processing operations is shown in Figure 4.
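As a minimal sketch of the final step of this pipeline, the four corner points reported for a detected marker can be reduced to a centroid, a heading angle, and an offset from the image center. The function names, the corner ordering (clockwise from top-left, as OpenCV reports ArUco corners), and the heading convention are assumptions made for illustration; they are not taken from the authors' implementation.

```python
import math

def marker_pose(corners):
    """Return ((u, v) centroid, heading in radians) of a square marker.

    corners: four (u, v) pixel points ordered top-left, top-right,
    bottom-right, bottom-left (assumed ordering).
    """
    cu = sum(u for u, _ in corners) / 4.0
    cv = sum(v for _, v in corners) / 4.0
    tl, tr, br, bl = corners
    # Midpoints of the top and bottom edges of the marker.
    top = ((tl[0] + tr[0]) / 2.0, (tl[1] + tr[1]) / 2.0)
    bottom = ((bl[0] + br[0]) / 2.0, (bl[1] + br[1]) / 2.0)
    # Heading = direction from the bottom edge to the top edge, measured in
    # image axes (u to the right, v downward). This convention is assumed.
    heading = math.atan2(top[1] - bottom[1], top[0] - bottom[0])
    return (cu, cv), heading

def offset_from_center(centroid, width, height):
    """Pixel offset of the marker centroid from the image center."""
    return (centroid[0] - width / 2.0, centroid[1] - height / 2.0)

# Example with a hypothetical axis-aligned marker in a 640x480 image.
centroid, heading = marker_pose([(100, 100), (200, 100), (200, 200), (100, 200)])
print(centroid, heading, offset_from_center(centroid, 640, 480))
```

The offset from the image center is the quantity a tracking controller would try to drive to zero, since the image center corresponds to the optical axis of the down-facing camera.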

Flow chart of image processing and relative localization of UAV with respect to AGV. AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.

The apparent positions of the ArUco markers give the centroid coordinates of the ground vehicles. Figure 5 shows the detection of an ArUco marker placed on a ground vehicle. The bounding box coordinates (highlighted in yellow in Figure 5) are used to obtain the apparent position of the ground vehicle. The pitch and roll angles of the UAV change the orientation of the camera. This changes the apparent position of the marker and results in false localization of the AGV. To overcome this, a homographic transformation has been implemented in the present work using equation (1).

39

It transforms the coordinates of a feature point from the actual, tilted image plane to a virtual image plane. The roll and pitch angles of the UAV change the orientation of the actual image plane, whereas the virtual image plane remains parallel to the ground plane.
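The idea can be illustrated with the standard rotation-induced mapping for a pinhole camera: a pixel is back-projected to a viewing ray, the ray is rotated into the level (virtual) camera frame, and the result is re-projected. The sketch below is not a reproduction of equation (1); the function name, the intrinsic parameters `fx`, `fy`, `cx`, `cy`, and the assignment and sign of the two tilt angles about the camera x and y axes are assumptions that depend on how the camera is mounted on the UAV.

```python
import math

def level_pixel(u, v, fx, fy, cx, cy, ang_x, ang_y):
    """Map pixel (u, v) from the tilted image plane to a virtual level one.

    ang_x, ang_y: camera tilt (rad) about the camera x and y axes, assumed
    to be derived from the UAV pitch and roll angles.
    """
    # Back-project the pixel to a viewing ray in the camera frame.
    x, y, z = (u - cx) / fx, (v - cy) / fy, 1.0
    # Rotate about the camera x axis.
    ca, sa = math.cos(ang_x), math.sin(ang_x)
    x1, y1, z1 = x, ca * y - sa * z, sa * y + ca * z
    # Rotate about the camera y axis.
    cb, sb = math.cos(ang_y), math.sin(ang_y)
    x2, y2, z2 = cb * x1 + sb * z1, y1, -sb * x1 + cb * z1
    if z2 <= 0.0:
        raise ValueError("point falls behind the virtual image plane")
    # Re-project the rotated ray with the same intrinsics.
    return fx * x2 / z2 + cx, fy * y2 / z2 + cy

# With zero tilt the pixel is unchanged; with a small tilt it shifts.
print(level_pixel(400.0, 300.0, 500.0, 500.0, 320.0, 240.0, 0.0, 0.0))
print(level_pixel(320.0, 240.0, 500.0, 500.0, 320.0, 240.0, 0.1, 0.0))
```

Because the mapping depends only on the camera rotation and intrinsics, it can be applied to the marker corners before the centroid is computed, without knowledge of the UAV altitude.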

Detection of aruco marker in the image plane using OpenCV library.

Figure 6 shows the image frame and apparent pose of AGV in the image frame.

AGV localization in image frame of UAV camera. AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.

Thus, the pose of AGV (

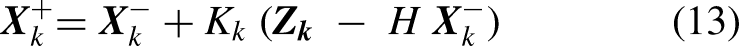

Kalman filter implementation

Kalman filter is implemented for noise elimination and robust position estimation of AGV in the image frame. This operation is carried out after the homographic transformation as shown in Figure 6. The state of the system is

Tracking controller's design

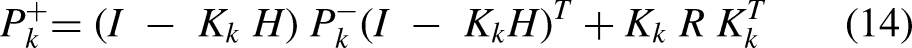

This section presents the design of the tracking controllers that enable the ground vehicles to track the UAV. Linear and angular velocities are the control commands for the nonholonomic AGV, whereas the steering angles of the wheels and the PWM signals of the driving motors are the control commands for the 4-WISD vehicle. The tracking controllers have been designed accordingly, as detailed below. The goal of a tracking controller is to drive the AGV to follow the center of the UAV projected on the ground plane, or the point where the optical axis intersects the ground plane. Kinematic controllers are developed for linear velocity and steering in the case of the 4-WISD vehicle, and for linear and angular velocities in the case of the skid-steering vehicle. The sliding mode control technique has been successful for motion control of ground vehicles as well as drones.11,41,42 The advantages of this technique are robustness and ease of implementation. 43 Thus, in the present work, sliding mode theory is used to develop kinematic controllers for position control of the AGV in a fixed image frame (overhead fixed camera) as well as for tracking the aerial vehicle (overhead moving camera).

Tracking controller for nonholonomic AGV

A Clearpath Husky A200 is used as the nonholonomic AGV. A ROS control package is provided for the vehicle, through which its linear and angular velocities can be commanded. Hence, controllers for linear and angular velocity have been designed for tracking the aerial vehicle. A communicative vision-based UAV–AGV system is considered in this work, in which data are shared between the two vehicles: the UAV communicates its velocity, its attitude, and the apparent position of the ArUco marker to the AGV. The task of the AGV is to track the motion of the UAV, which includes both its position and its velocity; thus, the motion of the UAV influences the controller design. The complete tracking motion can be described as reaching the point under the UAV (minimizing the position offset in the image plane) and matching the velocity of the UAV.

5

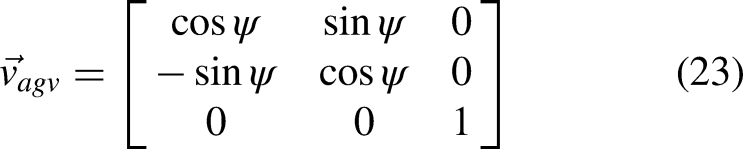

Thus, the AGV requires the velocity information of the UAV for smooth and continuous tracking, and the UAV continuously sends its velocity to the AGV during the tracking task. However, certain transformations are necessary because the velocities are available in the respective body frames of the vehicles, as detailed below. The tracking controller combines a position control part with the velocity of the UAV expressed in the body frame of the AGV. Figure 7 illustrates the pose of the AGV in the image plane, where both the position and angle offsets of the AGV are indicated.
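The frame change mentioned here amounts to a planar rotation. The following minimal sketch assumes that both body frames are level, that `rel_yaw` is the yaw of the UAV body frame relative to the AGV body frame (counterclockwise positive), and that only the horizontal velocity components matter; the function name and sign convention are illustrative and not taken from the original controller.

```python
import math

def uav_velocity_in_agv_frame(vx_uav, vy_uav, rel_yaw):
    """Rotate the UAV horizontal body velocity into the AGV body frame.

    rel_yaw: yaw of the UAV frame relative to the AGV frame in radians
    (assumed convention).
    """
    c, s = math.cos(rel_yaw), math.sin(rel_yaw)
    return (c * vx_uav - s * vy_uav, s * vx_uav + c * vy_uav)

# A UAV moving along its own x axis while yawed 90 degrees relative to the
# AGV appears to move along the AGV y axis.
print(uav_velocity_in_agv_frame(1.0, 0.0, math.pi / 2))
```

The relative yaw itself would come from combining the UAV attitude with the marker heading seen in the image, which is why both quantities are communicated to the AGV.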

Relative localization of AGV in the image frame of UAV. AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.

The kinematic model which explains the tracking motion between UAV and AGV is,

5

Body velocity of UAV in the body frame of AGV can be expressed as,

A novel switching control method is proposed for the angular velocity control of the skid-steering AGV. If the apparent distance of the AGV in the image plane is greater than 1.5 cm, the apparent position of the UAV with respect to the AGV is used to compute the angle error. If the distance lies below this threshold, the direction of motion of the UAV in the body frame of the AGV is used to compute the desired heading angle of the AGV. Thus, the angular velocity control of the AGV switches dynamically between position-based and velocity-based modes during tracking. The threshold value has been chosen on the basis of thorough experiments, taking the slight hovering instability of the UAV into account. The final control law for the angular velocity of the AGV is obtained as,

If |
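The switching logic described above can be sketched as follows. This illustrates only the selection of the angle error, not the sliding mode control law itself. The function name, the tuple return value, and the rule of holding the current heading when the UAV is nearly stationary are assumptions; the default threshold of 1.5 uses the units stated in the text.

```python
import math

def heading_error(offset_xy, uav_vel_xy, threshold=1.5, min_speed=1e-3):
    """Select the angle error for the angular velocity controller.

    offset_xy: apparent position of the UAV relative to the AGV, expressed
    in the AGV body frame. uav_vel_xy: UAV velocity in the AGV body frame.
    Returns (angle error in radians, name of the active mode).
    """
    distance = math.hypot(offset_xy[0], offset_xy[1])
    if distance > threshold:
        # Far from the target point: steer toward the apparent UAV position.
        return math.atan2(offset_xy[1], offset_xy[0]), "position"
    speed = math.hypot(uav_vel_xy[0], uav_vel_xy[1])
    if speed < min_speed:
        # Assumed behaviour: keep the current heading if the UAV is hovering.
        return 0.0, "hold"
    # Near the target point: align with the UAV direction of motion.
    return math.atan2(uav_vel_xy[1], uav_vel_xy[0]), "velocity"

print(heading_error((3.0, 3.0), (1.0, 0.0)))   # position-based mode
print(heading_error((0.5, 0.0), (0.0, 1.0)))   # velocity-based mode
```

Switching to the velocity direction near the target avoids the rapid changes in bearing that occur when the position offset is small and noisy.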

Stability analysis

The stability of the control law for the linear motion of the AGV can be verified as follows. Choosing the following Lyapunov candidate function,

41

Tracking controller for 4-WISD vehicle

A similar procedure is applied to develop the kinematic controllers for position control of the 4-WISD ground vehicle in the image frame and for tracking the motion of the UAV. In this case, however, both the steering angle and the speed of each wheel have to be computed. Kinematic controllers have therefore been developed for the servo angles of the steering motors and the PWM signals of the driving motors.
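To make the per-wheel computation concrete, the sketch below uses the textbook rigid-body inverse kinematics of an independently steered vehicle: each wheel velocity is the body velocity plus the contribution of the yaw rate at the wheel position. It is not the simplified model used in this work. The wheel coordinates, the function name, and the assumed steering range of ±90° (handled by reversing the drive direction) are hypothetical, and the mapping from wheel speed to a PWM signal is omitted.

```python
import math

# Hypothetical wheel positions (x forward, y left) in metres, measured from
# the vehicle centre. These are not the dimensions of the developed vehicle.
WHEELS = {
    "front_left": (0.4, 0.3), "front_right": (0.4, -0.3),
    "rear_left": (-0.4, 0.3), "rear_right": (-0.4, -0.3),
}

def wheel_commands(vx, vy, omega, wheels=WHEELS):
    """Return {wheel: (steering angle in rad, signed speed in m/s)}."""
    commands = {}
    for name, (x, y) in wheels.items():
        # Velocity of the wheel contact point for a rigid body.
        wx = vx - omega * y
        wy = vy + omega * x
        speed = math.hypot(wx, wy)
        angle = math.atan2(wy, wx) if speed > 1e-9 else 0.0
        # Keep the steering angle within +/-90 degrees (assumed servo range)
        # by turning the wheel the short way and driving it in reverse.
        if angle > math.pi / 2:
            angle, speed = angle - math.pi, -speed
        elif angle < -math.pi / 2:
            angle, speed = angle + math.pi, -speed
        commands[name] = (angle, speed)
    return commands

# Pure sideways translation: all wheels steer to 90 degrees.
print(wheel_commands(0.0, 0.5, 0.0))
```

For pure translation (`omega = 0`) all four wheels receive the same angle and speed, which is the behaviour that lets the vehicle move toward a goal in any direction without rotating its chassis.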

General forward kinematic model of the vehicle is,

37

(a) Schematic representation of position control of 4-WISD ground vehicle in image frame and (b) steering angle convention. 4-WISD: 4 wheeled independent steering and driving.

Figure 8(a) shows the position control situation of ground vehicle in the image frame.

Control architecture of 4-WISD ground vehicle to track UAV. 4-WISD: 4 wheeled independent steering and driving; UAV: unmanned aerial vehicle.

Stability analysis

A similar procedure (see the “Stability analysis” section, 5.1.1) is followed to prove the stability of the tracking control law of the 4-WISD vehicle. Considering the following Lyapunov candidate function,

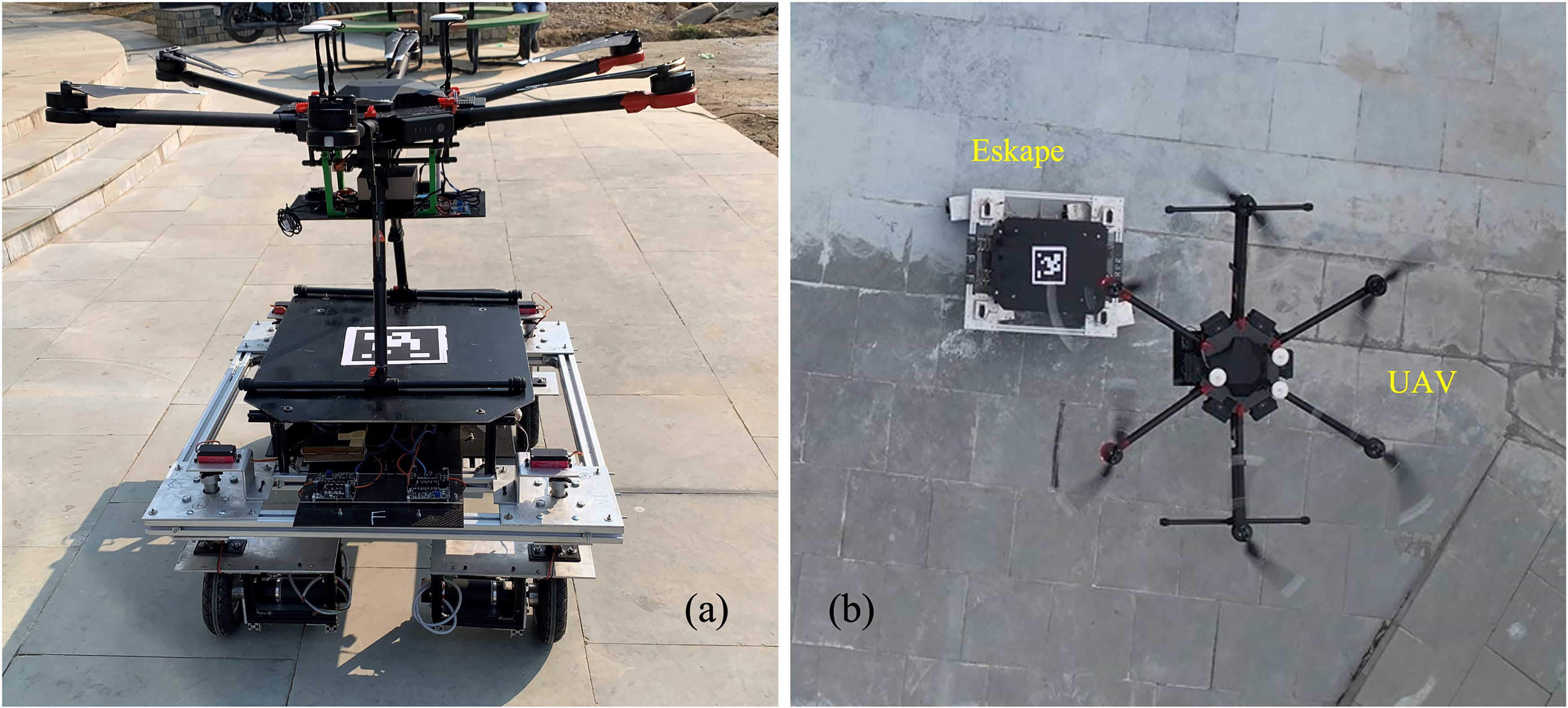

Hardware and data communication

A hexacopter is the UAV hardware platform used in the present work, as shown in Figure 10. It carries an IMU, a GPS receiver, and a monocular down-facing camera (USB camera). The down-facing camera is used to localize the ground vehicles with respect to the UAV. An Nvidia Jetson AGX Orin serves as the on-board computer of the UAV and runs Ubuntu 20.04 with ROS Noetic. Figure 11 shows the ground vehicles used in this study. Figure 11(a) shows the Clearpath Husky A200, which contains an IMU sensor and also runs ROS Noetic on Ubuntu 20.04. Figure 11(b) shows the developed ground vehicle in the present work which is called

Hexacopter aerial platform.

(a) Clearpath Husky A200 (nonholonomic) and (b) 4-WISD ground vehicle (

The Jetson AGX Orin on the UAV runs Ubuntu 20.04 with ROS Noetic, while the 4-WISD vehicle runs ROS Melodic on a Jetson Nano. ROS master export is used to establish communication between the ROS systems of the UAV and the AGV: a common ROS master runs on the on-board computer of the AGV and establishes communication among all the ROS nodes. The UAV detects the ArUco markers placed on the AGVs using its down-facing camera. The attitude and velocity of the UAV and the apparent position of the marker (AGV) are sent to the AGV through ROS communication. Figure 12 depicts the communication among the ROS nodes of the system. Image processing, homographic transformation, and Kalman filtering are performed on the UAV, and the computed apparent position of the marker is then communicated to the pose node of the AGV. The pose node receives the data sent from the UAV as well as from the AGV's own IMU and computes the relative yaw angle of the AGV with respect to the image frame. The control commands are calculated in the controller node and then sent to the actuator node, as shown in Figure 12. For the skid-steering vehicle, the control commands are linear and angular velocities; for the 4-WISD vehicle, they are PWM signals for the DC motors and servo angles for the steering motors.

Data communication and ROS architecture of aerial-ground system. ROS: Robot Operating System.

Experiments and discussion

First, indoor position control experiments have been performed under an overhead fixed camera. These experiments verify the controllers and show the paths selected by the two vehicles. Note that position control and waypoint navigation of a nonholonomic AGV under an external image frame have already been reported in the literature.9,20,21 After indoor testing, outdoor tracking experiments have been performed by combining each vehicle with the UAV, as detailed in the following sections.

Position control in the fixed external image frame

Figure 13 shows position control of the nonholonomic AGV (Figure 11(a)) under the fixed overhead camera. The second part of equation (20) is zero for these experiments because the camera does not move. When the goal position initially lies on

Apparent position control of nonholonomic AGV under fixed overhead camera for different initial and final conditions. AGV: autonomous ground vehicle.

Apparent position control of 4-WISD vehicle under fixed overhead camera for two different initial and final conditions. 4-WISD: 4 wheeled independent steering and driving.

Figure 14(b) shows path of the 4-WISD vehicle when initial and goal position are lying on a line parallel to the

Tracking experiments

Tracking experiments have been performed with both the nonholonomic and the partially holonomic ground vehicle. ArUco markers are placed on the ground vehicles so that the UAV can localize them using its down-facing camera. The AGV receives the apparent position of the ArUco marker and the yaw angle and velocity of the UAV, as discussed earlier and depicted in Figure 12. Each ground vehicle then computes control commands to track the UAV according to the controller designed for it. The tracking algorithm for both vehicles is described below. Initially, the UAV hovers 4 m above the ground over the ground vehicle, keeping it in its field of view (FOV). The UAV is then given random velocity commands, and the task of the ground vehicle is to track the UAV and stay in its FOV. The altitude of the UAV is held constant at 4 m during the tracking experiments. The UAVs used in this work are older DJI models that require a GPS sensor for localization and stability. However, state-of-the-art UAVs can localize and stabilize without GPS sensors in both indoor and outdoor conditions.44,45 The proposed tracking strategy of the AGVs relies only on the vision sensor of the UAV and does not require a GPS sensor. Hence, the tracking strategies are suitable for GPS-denied missions.

Tracking algorithm for both ground vehicles

Figure 15 illustrates the functioning of the tracking strategies of both ground vehicles. The overall strategy is the same for both vehicles; it differs only in the tracking control module of each. The nonholonomic AGV computes linear and angular velocities, and the 4-WISD vehicle computes linear velocity and steering angles to track the UAV. Communication between the vehicles is established over a Wi-Fi connection. To avoid communication delays and losses, a safe distance is maintained from the Wi-Fi router as well as between the two vehicles. The rate at which data are sent from the UAV and the rate at which they are received at the AGV were verified to be equal before the actual experiments. The data rate between the UAV and the AGV is 30 Hz, which is sufficient for real-time tracking.

Overall functioning of tracking strategies of ground vehicles.

Autonomous tracking of UAV by nonholonomic AGV

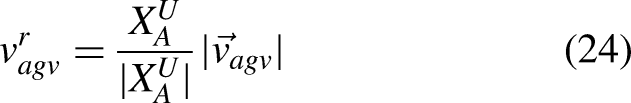

Figure 16 shows aerial image during tracking of UAV by nonholonomic AGV. Quadcopter (DJI M100) drone is used to perform tracking experiments due to unavailability of DJI M600 at the time. Initially, UAV hovers above AGV and keeps AGV in the FOV. Then, UAV is manually operated to give random velocity commands. AGV calculates linear and angular velocity commands to track the motion of UAV. Figures 17 and 18 show the results of tracking experiment with nonholonomic AGV. Figure 17(a) shows the velocity of UAV in the body frame of AGV during tracking and Figure 17(b) shows linear and angular velocities of the AGV during tracking. Figure 18(a) shows the variation of apparent position of AGV in the image frame and Figure 18(b) shows comparison of linear velocity of UAV and AGV during tracking. During 2.5 to 5 seconds, AGV position in the image plane is under the threshold condition. However, due to the velocity of UAV along the positive

Nonholonomic AGV and UAV during tracking experiment. AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.

(a) UAV velocity in body frame of AGV during tracking and (b) linear and angular velocities of AGV during tracking. AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.

(a) Apparent position of AGV in the image frame during tracking and (b) comparison of linear velocity of UAV and nonholonomic AGV during tracking. AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.

From the above discussion, the proposed tracking strategy enables the nonholonomic AGV to track the drone continuously without requiring a GPS sensor. Hence, the nonholonomic AGV can support longer outdoor/indoor missions of the UAV by tracking it. Classical machine vision techniques have been implemented in this work for detecting the AGV. However, CNN (convolutional neural network) methods can be implemented to make AGV detection robust in outdoor conditions.46 Due to the random motion of the UAV, the chassis of the AGV rotates frequently (because of the skid-steering mechanism). This is harmful to the terrain and possibly also to the payload. It can be addressed by choosing the right mobility type of ground vehicle.

Autonomous tracking of UAV by 4-WISD ground vehicle

Figure 19 shows the combination of the UAV and the 4-WISD ground vehicle, from which the relative dimensions of both vehicles can be seen. A steering test of the 4-WISD ground vehicle is performed before the actual tracking experiments. The UAV is manually controlled while keeping the ground vehicle in the FOV of the UAV camera. The ground vehicle is stationary, and only steering control is active during this test. Figure 20 shows the steering of the wheels at different instants. The alignment of the wheels toward the UAV can be observed. Figure 21 shows variation of apparent position (

(A) Combination of

Vision-based steering test of

Apparent position of UAV and corresponding steering angle of wheels during vision-based steering test. UAV: unmanned aerial vehicle.

The tracking experiment was performed after confirming proper steering action as discussed above. For the tracking experiment, the ground vehicle is initially kept in the FOV of the hovering UAV. Random velocity commands are then given to the UAV, and the ground vehicle computes speed (PWM) and steering angle commands to track the UAV as depicted in Figure 15. Figure 22(a) shows the PWM (speed) and steering angles of the AGV during tracking. Figure 22(b) shows the pose of the AGV in the image frame of the UAV during tracking. The apparent position of the AGV in the image plane changes continuously because of the random motion of the UAV. However, the orientation (

(a) Speed (PWM) and steering commands during tracking and (b) pose of AGV in the image frame during tracking. AGV: autonomous ground vehicle; PWM: pulse width modulation.

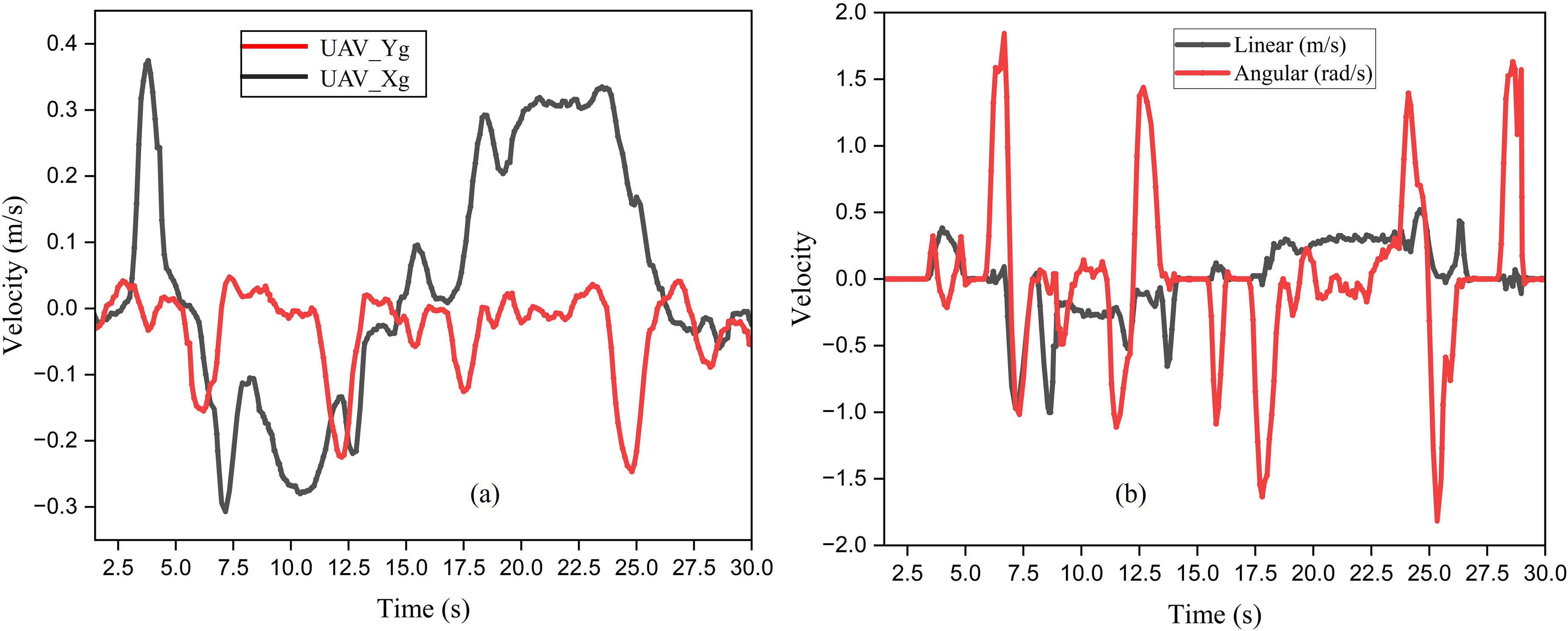

Figure 23(a) shows variation of velocity of UAV in the body frame of AGV during tracking. The velocity of UAV is completely random as can be observed from Figure 23(a). Figure 23(b) depicts comparison of velocity of UAV and AGV during tracking. Component of velocity of both vehicles along

(a) Velocity of UAV in the body frame of AGV and (b) comparison of velocity of UAV and AGV during tracking. AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.

The correspondence between the steering angle of the wheels and the apparent position of the UAV can be observed from Figure 22(a) and (b). At around 10 seconds, the UAV lies in the first quadrant (both coordinates positive) of the AGV body frame according to its apparent position. This is because the AGV is in the third quadrant of the image plane of the UAV, as seen from the pose of the AGV in Figure 22(b). The corresponding positive steering angle can be seen at the same time in Figure 22(a). Similarly, at 30 seconds, the UAV lies in the third quadrant of the AGV body frame (both apparent coordinates of the AGV are positive). Even in this case, the steering angle of the wheels should be positive, and this can be seen in Figure 22(a). It is also evident that the plane of the wheels always points toward the aerial vehicle during tracking.
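The quadrant behaviour described above, with a positive steering angle when the UAV lies in either the first or the third quadrant of the AGV body frame, follows if the steering angle is restricted to a range of ±90° and the drive direction is reversed when the UAV is behind the vehicle. The sketch below illustrates that idea only. It assumes a single common steering angle for all four wheels, and the function name, the angle range and the reversal rule are assumptions rather than the controller designed in this work.

```python
import math


def steering_and_direction(uav_xy_in_agv):
    """Common wheel steering angle in degrees within [-90, 90] and drive direction.

    uav_xy_in_agv: apparent (x, y) position of the UAV in the AGV body frame.
    Returns (angle_deg, direction) with direction +1 (forward), -1 (reverse)
    or 0 when the UAV is directly overhead.
    """
    x, y = uav_xy_in_agv
    if x == 0.0 and y == 0.0:
        return 0.0, 0  # UAV directly overhead: nothing to steer toward
    bearing = math.degrees(math.atan2(y, x))  # bearing in [-180, 180]
    if bearing > 90.0:
        return bearing - 180.0, -1  # second quadrant: negative angle, reverse
    if bearing < -90.0:
        return bearing + 180.0, -1  # third quadrant: positive angle, reverse
    return bearing, 1  # first or fourth quadrant: drive forward
```

Under these assumptions, a UAV at (1, 1) and a UAV at (-1, -1) both give a steering angle of +45°, and they differ only in the sign of the drive direction.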

From the above results, the proposed tracking strategy of the 4-WISD vehicle is experimentally verified in outdoor conditions. Thus, the 4-WISD vehicle can support longer outdoor/indoor missions of the UAV by tracking it. Furthermore, the 4-WISD vehicle can perform the task effectively owing to its better maneuverability compared to the skid-steering AGV. However, the proposed tracking strategy (for both AGVs) requires communication between the aerial and ground vehicles. Communication failures can occur during outdoor missions and cause the mission to fail. This can be tackled by mounting the camera on the ground vehicle instead of the aerial vehicle. In this configuration, the AGV detects the UAV using its sky-facing camera and computes control commands to track it, which does not require communication between the two vehicles. Hence, the proposed tracking strategy can be improved further for outdoor missions as discussed above.

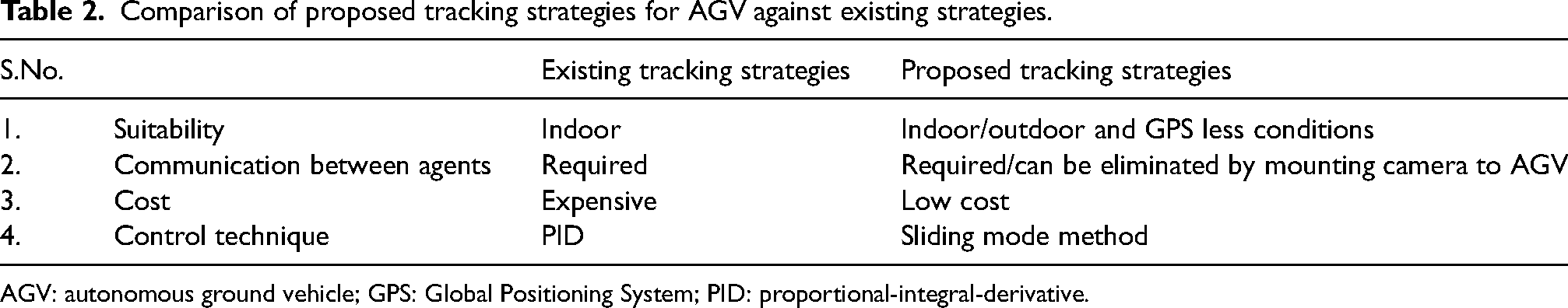

Qualitative comparison of tracking experiments

The experimental results show that the tracking controllers perform satisfactorily. The proposed tracking strategies allow the ground vehicle to continuously assist the aerial vehicle by tracking it, especially in outdoor and GPS-denied missions. They are suitable for such situations because the on-board camera of the UAV is used for relative localization. The proposed tracking strategy is also cost-effective and easy to use because it relies on an on-board camera instead of a motion capture system. Further, the tracking strategies can be made more robust by implementing deep learning techniques for detection of the AGV and for control. Table 2 shows the advantages of the proposed tracking strategies for AGVs with respect to existing strategies. Data communication between the aerial and ground vehicles is necessary for the proposed tracking strategies. This requirement can be eliminated by mounting the camera on the AGV instead of the UAV, so that the AGV localizes the UAV with its sky-facing camera in order to track it. Thus, the proposed tracking strategies for AGVs advance the literature19,21,22 on AGVs tracking the motion of a UAV in GPS-denied and outdoor conditions to support longer UAV missions. The methods can be advanced further by employing learning-based techniques such as deep neural networks (DNNs).

Comparison of proposed tracking strategies for AGV against existing strategies.

AGV: autonomous ground vehicle; GPS: Global Positioning System; PID: proportional-integral-derivative.

Tracking strategies have been developed for two mobility types of ground vehicle: nonholonomic and partially holonomic. The nonholonomic AGV must rotate its chassis to change its direction of motion during tracking. This may damage the terrain as well as the payload. The 4-WISD ground vehicle (partially holonomic) is able to track the UAV without changing its orientation because all wheels are steered independently. Hence, owing to the quick steering of its wheels, this vehicle takes shorter paths to track the UAV or to reach goal points, which results in lower time and energy consumption. This steering mechanism does not disturb the chassis and therefore ensures the safety of the payload during collaborative missions. The combination of UAV and 4-WISD vehicle would thus leverage the mobility of the system. Skid-steering of the nonholonomic vehicle is very frequent during tracking, as discussed earlier. Unlike the 4WIS mechanism, it rotates the whole vehicle during tracking. Thus, the torque requirement for skid-steering is relatively higher than for the 4WIS mechanism (comparing the effective moments of inertia and assuming the same frictional torque in both cases). The moment of inertia (MOI) of the whole vehicle influences skid-steering, whereas for the 4-WISD vehicle only the MOI of the wheels about the steering axis passing through the center of each wheel is involved. Hence, the UAV-4-WISD system may also be more energy efficient during tracking than a skid-steering vehicle of similar scale.47 However, a complete quantitative evaluation against the combination of UAV and nonholonomic AGV is necessary, and this work is in progress. In the present work, only a translational model of the 4-WISD vehicle is considered, so the full advantage of independent control (4 steering and 4 speed control variables) is not taken. Tracking can be further improved by controlling all eight variables independently.
It is straightforward to eliminate the communication requirement between the two vehicles by mounting the camera on the AGV instead of the UAV. The AGV then localizes the drone relative to itself using its on-board sky-facing camera in order to track it. Table 3 shows the qualitative comparison of the proposed system against the combination of UAV and nonholonomic/skid-steering AGV.

Qualitative comparison of proposed system against combination of UAV and nonholonomic AGV.

4-WISD: 4 wheeled independent steering and driving; AGV: autonomous ground vehicle; UAV: unmanned aerial vehicle.
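The torque comparison between skid-steering and 4WIS made in the discussion can be written compactly as a rough sketch. The symbols are introduced here only for illustration and do not appear elsewhere in this work: $I_v$ is the yaw moment of inertia of the whole vehicle, $I_w$ is the moment of inertia of one wheel about its steering axis, $\alpha > 0$ is the magnitude of the angular acceleration (assumed equal in both cases), and $\tau_f$ is the frictional torque (assumed equal in both cases, as in the text).

```latex
\begin{align}
\tau_{\mathrm{skid}} &= I_v\,\alpha + \tau_f, \\
\tau_{\mathrm{4WIS}} &= 4\,I_w\,\alpha + \tau_f, \\
\tau_{\mathrm{skid}} - \tau_{\mathrm{4WIS}} &= \left(I_v - 4\,I_w\right)\alpha > 0
\quad \text{whenever } I_v > 4\,I_w .
\end{align}
```

Under these assumptions, the skid-steering vehicle needs more torque for the same change of heading whenever the yaw inertia of the whole vehicle exceeds the combined steering-axis inertia of the four wheels. Whether this translates into a measurable energy saving is left to the quantitative evaluation mentioned in the text.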

Although the proposed system is effective, as summarized in Table 3, a further improvement is possible by choosing a more suitable ground vehicle. The 4-WISD vehicle is partially omnidirectional, meaning that a steering action is required before the drive action. A fully omnidirectional ground vehicle with indoor and outdoor capabilities can be combined with the UAV to make the system fully omnidirectional. This is taken up as a separate study and an extension of the present work.

Conclusions

The problem of a ground vehicle tracking the motion of an aerial vehicle to support its long-range GPS-denied missions is considered in the present work. Two kinds of ground vehicles are considered to analyze the tracking motion. A 4-WISD ground vehicle is designed and developed for this purpose. Four wheel modules are assembled to the chassis. Each wheel module has 2 DOF (degrees of freedom), with steering and driving motors. A magnetometer is fixed to the vehicle to measure its yaw with respect to the inertial frame. A ROS-based SDK is developed for controlling the vehicle. The developed 4-WISD vehicle is integrated with the UAV to study tracking performance. This combination offers better functionality because of the better mobility of the 4-WISD vehicle compared to the nonholonomic ground vehicle. The relative apparent position of the ground vehicles is obtained using the UAV camera, and the relative orientation (yaw) is obtained by comparing the IMU data of the UAV and the AGV. The apparent relative position of the UAV with respect to the ground vehicle is then obtained by applying a homogeneous transformation.

Vision-based strategies for both ground vehicles to track the aerial vehicle are developed. Kinematic controllers are designed for the ground vehicles to track the motion of the UAV using sliding mode theory. The Lyapunov stability criteria of the control equations are verified. Initially, position control of both vehicles in the fixed image frame is performed to differentiate the paths taken by the two vehicles. The quick steering action of the 4-WISD vehicle results in shorter paths and shows good potential for effective tracking. The steering action of the 4-WISD vehicle has been verified experimentally prior to the tracking experiments. Tracking experiments have been performed combining the nonholonomic and 4-WISD ground vehicles with the UAV. The tracking controllers show satisfactory performance. The proposed tracking strategies do not depend on external motion capture systems or GPS for localization. Hence, they are well suited to outdoor and GPS-denied missions in which the ground vehicle is required to continuously track the aerial vehicle. The proposed tracking strategies require communication between the two vehicles. This can be eliminated by using an on-board sky-facing camera on the AGV to localize the drone. In the present work, marker-based detection of the ground vehicle is implemented; however, learning-based methods would make the algorithms more robust for outdoor situations.

A basic qualitative comparison is drawn based on the tracking experiments. The nonholonomic vehicle tracks the UAV with a combination of linear and angular motion. Because of this, it takes longer paths and consequently may go out of the FOV of the UAV. The 4-WISD ground vehicle takes the shortest paths to track the UAV owing to its flexible mobility and quick steering action. Thus, this combination may offer better collaborative motion/mobility than the UAV and nonholonomic AGV combination, and the proposed system is expected to perform better in complex terrains because of its improved maneuverability. Moreover, the 4WIS mechanism is also more energy efficient than a skid-steering mechanism of similar scale, because its effective moment of inertia is lower. Consequently, the combination of UAV and 4-WISD vehicle can improve the effectiveness of the tracking task. This combination is partially holonomic, which accounts for the flexible mobility/maneuverability of the system. Thus, the UAV and 4-WISD system offers better functionality and reduced energy consumption compared to the nonholonomic combination. Only a preliminary investigation of the system (UAV and 4-WISD vehicle) is presented in this work. A complete quantitative evaluation against the combination of UAV and nonholonomic AGV is necessary and is taken up as an extension of the present work. Moreover, the maneuverability of the system can be improved further by choosing a fully holonomic ground vehicle suitable for both indoor and outdoor conditions.

Supplemental Material

Footnotes

Abbreviations

Acknowledgements

The authors would like to acknowledge the Central Workshop, Indian Institute of Technology Mandi, India.

Author contributions

Idea proposal, algorithms, coding, experimentation and initial draft preparation are done by AKS. Supervision, editing and conceptualization are done by AS.

Consent to publish

Consent was obtained from colleagues/participants to publish experiment videos containing their identifying information.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Data availability statement

Not applicable for this study.

Supplemental material

Supplemental material for this article is available online.

References
