Abstract

Visual simultaneous localization and mapping (SLAM) remains a focal point in robotics research, particularly in the realm of mobile robots. Despite the existence of robust methods such as ORBSLAM3, their effectiveness is limited in dynamic scenarios. The influence of moving entities in these scenarios poses challenges to data association, leading to compromised pose estimation accuracy. This paper proposes a novel approach that utilizes spatial reasoning to reduce the influence of dynamic entities present in an environment. Our approach, known as human–object interaction detection, identifies the dynamic nature of an object by evaluating the intersecting area between the bounding boxes of a person and the object. We tested our approach by extending the ORBSLAM3 RGB-D SLAM algorithm. Consequently, all ORB features associated with dynamic objects are filtered out from the ORBSLAM3 tracking thread. To validate our approach, we conducted evaluations on highly dynamic sequences extracted from the TUM RGB-D dataset. Our results exhibited a significant performance enhancement over ORBSLAM3. Furthermore, in comparison to other state-of-the-art research, our results remained competitive, given the simplicity of our approach.

Introduction

Simultaneous localization and mapping (SLAM) is the problem of navigating an unknown environment while simultaneously self-localizing and generating a map. 1 In general, SLAM is used either to provide detailed maps (or models) of the surrounding environment or to produce accurate positions of robots within a given environment. 2

A continuously growing topic of interest has been the concept of visual SLAM. As opposed to the conventional use of light detection and ranging (LiDAR) systems, which provide trajectory and distance measurements through data association, visual SLAM introduces the application of cameras to provide pose estimates. The three dominant approaches of visual SLAM are feature-based, direct, and red–green–blue-depth (RGB-D) SLAM. The feature-based approach was founded on tracking and mapping feature points, while direct methods considered the whole image as an input, specifically the photometric consistency between images, thus making it more computationally demanding. 3

Davison et al. 4 developed monoSLAM, a feature-based method that used the extended Kalman filter to estimate camera motion and feature positions of an unknown environment. One of the key issues of this method was the scale of the environment, which was largely related to the extent of computation required. The parallel tracking and mapping method introduced the concept of using parallel threads for tracking and mapping, thus reducing the computational cost of the approach. 5 With the introduction of utilizing different threads for the different modules of the visual SLAM process, later approaches adopted the technique, such as ORBSLAM. 6 A common example of the direct method approach is LSD-SLAM, 7 whereby a three-dimensional (3D) environment is constructed as a pose-graph of keyframes related to semi-dense depth maps.

With the development of RGB-D cameras, improved visual SLAM approaches were introduced. These cameras produce both RGB and depth images. Common RGB-D cameras determine depth through the projection of infrared patterns, constraining their usage to indoor environments. 3 In addition, depth ranges are often limited to 5 m. 8 Examples of RGB-D SLAM include the work of Endres et al., 9 who used the Kinect camera to simultaneously provide camera trajectory and generate dense 3D models of indoor environments, and Salas-Moreno et al., 10 who developed SLAM++, a method that registered prior 3D objects to replace existing detected objects, leading to increased mapping efficiency.

Most of these methods are limited in two key areas: lack of interpretation of the environment and the inability to cater to dynamic entities. The robustness and precision of state estimation have largely been attributed to the base assumption of a static environment. Feature extraction is dependent on recognizing stable and unique visual elements to facilitate tracking. Dynamic objects introduce complications such as occlusion of other objects, changes in lighting conditions, motion blur, variations in scale, erroneous data associations, and deformations. Consequently, the presence of dynamic entities frequently disrupts this process, resulting in system failure.

Visual SLAM methods have seen rapid progress over the last decade in conjunction with the development of both computer vision and machine learning algorithms. Several approaches 11,12 make use of convolutional neural networks to detect and identify dynamic objects within a scene, leading to the removal of dynamic feature points. However, these approaches are limited to their predetermined definition of dynamic objects. Whether an object is static or dynamic depends largely on the environment. A key assumption in our work is that potentially dynamic objects normally become dynamic through human interaction. We recognize that many other factors can cause objects to behave dynamically (wind, animals, moving machinery, etc.), but we limit the scope of this work to human-induced dynamics.

The current research proposes an approach that extends ORBSLAM3 13 by filtering ORB features when extracted from environments consisting of human motion and object interaction. The approach utilizes the recently developed YOLOv8 14 object detection models to assist in determining a spatial relationship between humans and objects within an environment. The contributions of the current research include:

1. The human–object interaction detection (HOID) method, which utilizes spatial reasoning in either the two-dimensional (2D) or 3D perspective to identify human–object interactions.

2. An extended RGB-D SLAM algorithm, utilizing our HOID method, to identify and remove dynamic objects in an environment.

Related work

Dynamic and semantic SLAM approaches

To address dynamic environments in visual SLAM methods, various strategies incorporate visual methods that include both object detection and semantic segmentation. Bao Ai et al. 11 employed YOLOv4 15 with a dynamic object probability to mitigate the impact of dynamic entities. Similarly, Guan et al. 16 enhanced the ORB-SLAM3 framework by integrating YOLOv5 17 for object detection in dynamic indoor scenes.

Kaneko et al. 18 presented a monocular visual SLAM algorithm incorporating DeepLab v2 12 for semantic segmentation, selectively excluding feature points in outdoor dynamic areas to enhance stability. Xiao et al. 19 proposed Dynamic-SLAM, utilizing a custom single shot multibox detector (SSD) 20 object detection model based on a convolutional neural network, coupled with a missed detection compensation algorithm. This method emphasizes the critical role of recall rate, which is particularly significant when employing object detection or segmentation methods in SLAM algorithms.

In another approach, Zhong et al. 21 proposed Detect-SLAM, which integrates object detection and SLAM to mutually enhance each other. This method implemented a deep neural network, SSD, 20 to detect both static and dynamic objects. The detection of dynamic objects, combined with a moving probability, was used to reduce their influence. An object map generated by the SLAM process was utilized as prior knowledge to enhance the object detector.

DS-SLAM 22 and DE-SLAM 23 are similar in their approach to enhancing robustness in dynamic environments. DS-SLAM applies a semantic segmentation model (SegNet 24 ) to filter features observed from dynamic objects, combining semantic segmentation with a moving consistency check (based on an optical flow technique) to omit feature points related to dynamic objects. DS-SLAM generates an octo-tree map 25 representing colored voxels with corresponding semantic labels. Similarly, DE-SLAM focuses on highly dynamic environments by employing a combination of semantic detection with MobileNet V2 SSD 26 and adaptive particle filtering for dynamic feature rejection, enhancing robustness against moving objects in real-time applications. Islam et al. 27 introduced MVS-SLAM, which utilizes enhanced multiview geometry to improve semantic RGB-D SLAM performance in dynamic scenarios. This approach leverages semantic segmentation to refine feature matching, thereby increasing the system’s robustness in environments characterized by significant motion.

More recently, Li et al. 28 proposed YVG-SLAM, an advancement of the ORB-SLAM3 algorithm that integrates view geometry with the YOLOv5 algorithm to dynamically remove feature points without relying on a priori assumptions. This method effectively reduces the impact of dynamic elements by employing a feature recognition strategy that determines geometric consistency in detected bounding boxes. Similarly, Zhong et al. 29 introduced DynaTM-SLAM, a method that integrates visual and semantic information for SLAM in dynamic environments. They utilize YOLOv7 30 for object detection and apply template matching within a sliding window to efficiently filter dynamic feature points. Additionally, their approach includes building an online object database to maintain consistent data association for static objects, which are then used to optimize camera poses through bundle adjustment with semantic constraints.

Furthermore, Wang et al. 31 proposed VIS-SLAM, an innovative approach that integrates visual, inertial, and semantic information for enhanced SLAM performance. Their method employs a non-blocking model to extract semantic information and assigns prior motion probabilities to feature points based on object detections. A propagation model is also utilized to estimate motion probabilities for frames without semantic information. The integration of inertial measurement unit (IMU) data assists in robot localization by correcting errors inherent in visual data. Experimental results demonstrate significant improvements in localization accuracy, emphasizing the significance of sensor fusion methods.

Fang et al. 32 presented a novel visual SLAM method that uses semantic segmentation and a knowledge graph to create a semantic descriptor. The knowledge graph represents the relationships between objects in the environment. The study was motivated by the application of robotics as an aid against cross-contamination that may occur during the COVID-19 pandemic.

The semantic descriptor was described as a

Consider Figure 1, in which the region around a random key point k contains a human, represented by the scalar quantity 1, and a moveable object (e.g. a book), represented by the scalar quantity 0. The two are close to each other, and thus the book can be considered dynamic. Therefore, the influence of the human and the object (in terms of feature points) can be filtered out. The size of the descriptor and the proximity (distance) at which entities are considered close are defined in the study based on an experimental threshold.

Semantic descriptor.

Fang’s descriptor introduced the concept of using masks around a key point to discern meaningful relations between objects and individuals. However, it was observed to be limited by the contouring issues generated by Mask R-CNN. To our knowledge, this is the only approach to consider spatial reasoning, which is in relation to key points. In contrast, our approach aims to define human–object interactions through the intersection between object-bounding boxes. Furthermore, our method incorporates depth information to ascertain the significance of a 3D perspective in Visual RGB-D SLAM.

Limitations

These methods effectively utilize neural networks through object recognition models to remove features associated with predefined dynamic objects. Although these approaches have enhanced the robustness of SLAM systems, their performance is highly sensitive to the specific detection models used. To improve the overall robustness of the SLAM system, methods utilizing detection models make use of accompanying geometry methods, such as optical flow techniques or probability checks. Our approach seeks to mitigate the dependency by being independent of any particular detection model. This design allows for greater flexibility and scalability, making our method adaptable to various contexts and environments of the intended applications. Furthermore, our work places significant emphasis on the concept of human–object interaction. By focusing on the spatial relationships and interactions between humans and objects, our method can discern dynamic and potentially dynamic elements in the environment, potentially improving the accuracy and robustness of the SLAM system.

Method

The current section details our method in three subsections. First, the overview of the extended ORBSLAM3 approach is given. We then present the use of YOLOv8 and the definition of dynamic objects. Finally, we describe the HOID method in depth.

System overview

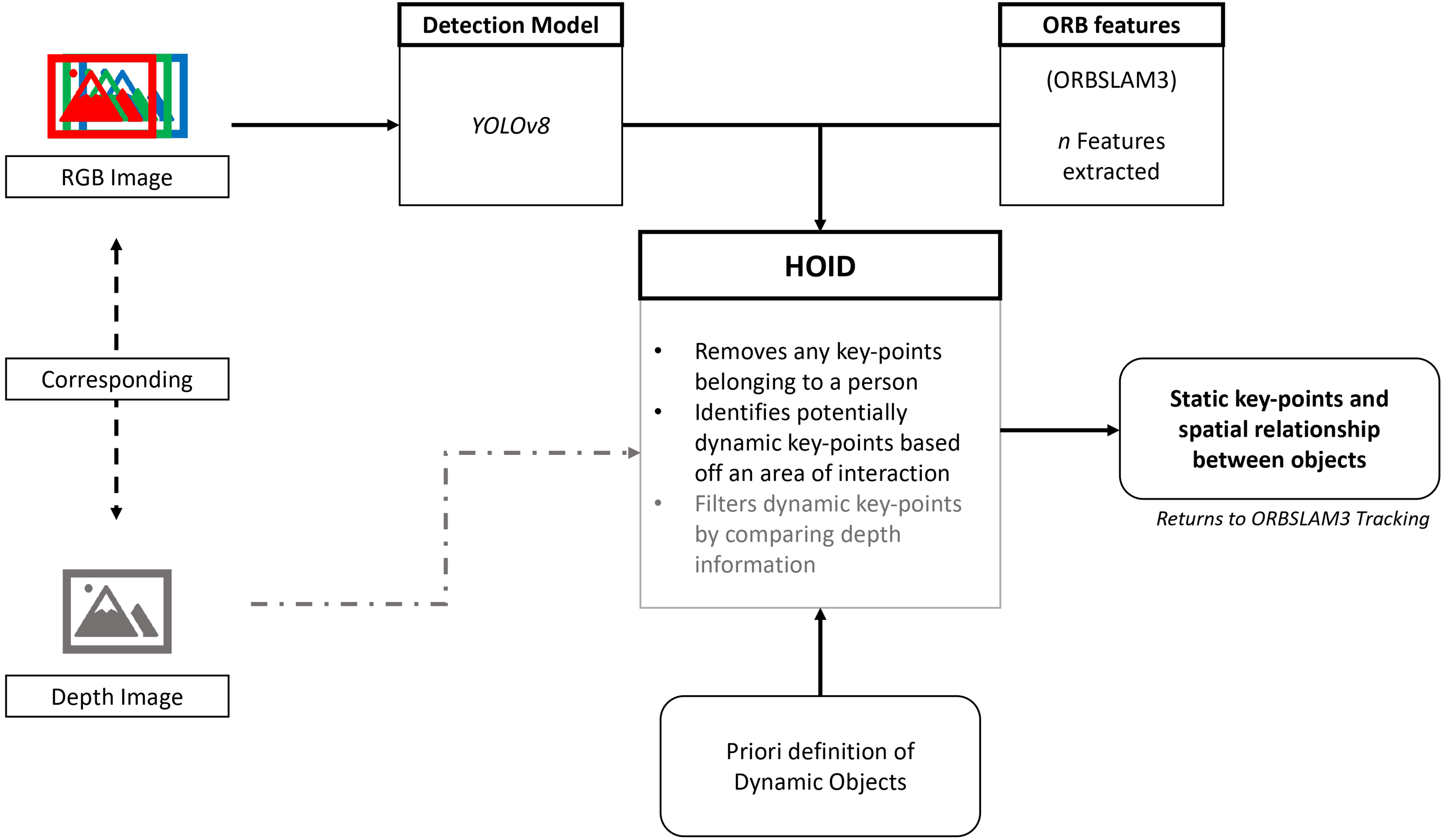

Our approach extends the use of ORBSLAM3, which was built on the preceding ORBSLAM2. 34 The algorithm supports both visual and visual–inertial modes on monocular, stereo, and RGB-D systems. In principle, our method will be able to extend various visual SLAM algorithms and is not limited to specific features. We aimed to improve the RGB-D system and make use of the depth information. The system architecture is presented in Figure 2.

System overview.

The integration of the HOID method into the ORBSLAM3 framework involves specific modifications to the tracking thread to filter dynamic features. The following steps outline the detailed integration process:

Object detection

Object detection is a vast field, encompassing various algorithms and datasets. There have been a number of object detection models that have been applied within the SLAM and robotics field. Some of the popular approaches include Faster R-CNN 35 and YOLO. 36 In addition to classifying and localizing objects using bounding boxes, segmentation models have gained favor for their ability to preserve object shapes through masking.

In the work presented by Fang et al., 32 Mask R-CNN exhibited issues along the contours of the classified objects. This is not ideal, as it may result in errors when determining whether objects are interacting with one another. Therefore, our implementation utilized the latest version of the YOLO models, YOLOv8 by Ultralytics. 14

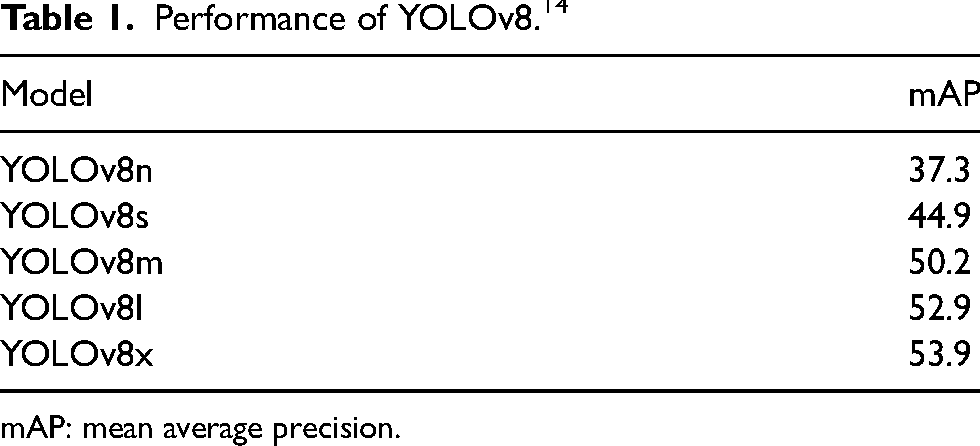

YOLOv8 is not limited to detection but also includes models that incorporate segmentation, pose estimations, and tracking. Our method requires bounding box information and will therefore focus on detection. Table 1 represents the performance of pre-trained YOLOv8 models, on the COCO dataset. As the mean average precision (mAP) increases, so does the inference time. Furthermore, while the choice of model can impact object identification precision, our approach is versatile and the model can be changed to suit the desired performance.
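As an illustration of how bounding-box detections could be prepared for the later interaction test, the following minimal sketch separates people from candidate objects. It is not the authors' implementation: the `Detection` type, the function names, the confidence cut-off, and the candidate label set passed by the caller are all hypothetical. The `detect_with_yolov8` helper assumes the Ultralytics Python package and a pre-trained `yolov8n.pt` weights file are available.

```python
from typing import Iterable, List, NamedTuple, Set, Tuple


class Detection(NamedTuple):
    label: str                               # class name, e.g. a COCO label
    confidence: float                        # detector score in [0, 1]
    box: Tuple[float, float, float, float]   # (x1, y1, x2, y2) in pixels


def split_detections(
    detections: Iterable[Detection],
    candidate_labels: Set[str],
    min_confidence: float = 0.5,             # hypothetical cut-off
) -> Tuple[List[Detection], List[Detection]]:
    """Return (people, candidate objects), dropping low-confidence boxes."""
    people, objects = [], []
    for det in detections:
        if det.confidence < min_confidence:
            continue
        if det.label == "person":
            people.append(det)
        elif det.label in candidate_labels:
            objects.append(det)
    return people, objects


def detect_with_yolov8(image, weights: str = "yolov8n.pt") -> List[Detection]:
    """Run a pre-trained YOLOv8 detector and convert its output to Detection tuples."""
    from ultralytics import YOLO  # imported lazily so the helper above has no dependency

    result = YOLO(weights)(image)[0]         # one Results object per input image
    boxes = result.boxes
    return [
        Detection(result.names[int(cls)], float(conf), tuple(float(v) for v in xyxy))
        for xyxy, conf, cls in zip(
            boxes.xyxy.tolist(), boxes.conf.tolist(), boxes.cls.tolist()
        )
    ]
```

Keeping the candidate label set as a caller-supplied argument mirrors the point that the detection model and the class selection can be changed to suit the application.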

Using object detection, it is possible to remove objects from a scene based on selecting classes of interest that have been defined as dynamic. Our approach only assumes humans as a dynamic class and that objects are dynamic solely due to human interaction. Given that the YOLOv8 models were pre-trained on the COCO dataset, the following objects were chosen to be potentially dynamic:

Human–object interaction detection

This paper introduces a novel approach for deducing human–object interactions by leveraging spatial reasoning. Figure 3 provides an overview of the HOID method. We utilized two distinct versions of the method. The initial version relied solely on the RGB frame, the generated ORB features, and a list of potentially dynamic objects. Similar to Fang’s descriptor, these relationships were detected from a 2D standpoint. In a subsequent iteration, we incorporated the depth image to refine the selection of object feature points. This was achieved by discerning relationships in the 3D space.

Human–object interaction detection.

The method is founded on the interacting area between the bounding box of a person and that of a potentially dynamic object. The interacting area is the region shared by the two bounding boxes. If the ratio between the overlapped area and the object area is greater than a certain threshold, referred to as the minimum area of interaction, a human–object interaction is assumed to be detected and the object is therefore determined to be dynamic.

In Figure 4, three example object scenarios are presented. Object A is completely encompassed within a person’s bounding box, classifying it as dynamic. Object B exhibits an overlap surpassing the designated minimum interaction area, hence it is also classified as dynamic. In contrast, Object C displays an overlap below the specified threshold for interaction, rendering it non-dynamic. Consequently, ORB features from the bounding boxes of Objects A and B would be removed.

Interacting area scenarios.
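The interaction test can be sketched in a few lines. The example below is a minimal illustration, assuming axis-aligned boxes given as (x1, y1, x2, y2) in pixels; the function names and the box coordinates are hypothetical, and only the ratio of overlapped area to object area and the 0.4 threshold come from the text.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def box_area(box: Box) -> float:
    """Area of a box; degenerate or inverted boxes count as zero."""
    x1, y1, x2, y2 = box
    return max(0.0, x2 - x1) * max(0.0, y2 - y1)


def interaction_ratio(person: Box, obj: Box) -> float:
    """Overlapped area divided by the object's own area."""
    overlap = (
        max(person[0], obj[0]),
        max(person[1], obj[1]),
        min(person[2], obj[2]),
        min(person[3], obj[3]),
    )
    obj_area = box_area(obj)
    return box_area(overlap) / obj_area if obj_area > 0 else 0.0


def is_dynamic(person: Box, obj: Box, min_interaction_area: float = 0.4) -> bool:
    """An object is treated as dynamic when the ratio exceeds the threshold."""
    return interaction_ratio(person, obj) > min_interaction_area


# Hypothetical boxes mimicking the three scenarios described above.
person = (100.0, 50.0, 300.0, 450.0)
object_a = (150.0, 200.0, 250.0, 300.0)   # fully inside the person box
object_b = (250.0, 100.0, 350.0, 200.0)   # half of the object overlaps
object_c = (280.0, 300.0, 380.0, 400.0)   # one fifth of the object overlaps

for name, box in [("A", object_a), ("B", object_b), ("C", object_c)]:
    print(name, round(interaction_ratio(person, box), 2), is_dynamic(person, box))
```

Normalizing by the object area rather than by the union of the two boxes means a small object fully covered by a person scores 1.0 regardless of how large the person box is.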

In order to potentially enhance the precision of the proposed SLAM method, we considered depth data. Once an object met the criteria for being considered dynamic in relation to the interaction area, a second filtering step was implemented. This involved comparing depth data using sample boxes positioned at the center of both the object and person bounding boxes. If the disparity between these sample boxes fell below a predefined depth threshold, it signified that the object was in close proximity to the person. Consequently, such an object was classified as dynamic, and its associated features were subsequently removed. A significant assumption of this approach is that the object is ideally positioned at the center of the corresponding bounding box. However, this assumption may not hold true, especially for narrower objects. A flow diagram representing the feature removal process for both the 2D and 3D methods is shown in Figure 5.

Feature removal process.
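The second, depth-based filtering step might look like the sketch below. Several details are assumptions made for illustration only: the depth image is taken to be a NumPy array in meters, the sample box half-size is a hypothetical value, the median is used to summarize each sample box, and an object is kept as dynamic when no valid depth is available. Only the comparison of centered sample boxes against a 0.2 m limit comes from the text.

```python
from typing import Optional, Tuple

import numpy as np

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def sample_depth(depth_m: np.ndarray, box: Box, half_size: int = 5) -> Optional[float]:
    """Median valid depth in a small window centered on the bounding box."""
    height, width = depth_m.shape
    cx = int((box[0] + box[2]) / 2)
    cy = int((box[1] + box[3]) / 2)
    x1, x2 = max(cx - half_size, 0), min(cx + half_size + 1, width)
    y1, y2 = max(cy - half_size, 0), min(cy + half_size + 1, height)
    patch = depth_m[y1:y2, x1:x2]
    valid = patch[np.isfinite(patch) & (patch > 0)]  # zero usually marks missing depth
    return float(np.median(valid)) if valid.size else None


def is_close_in_depth(
    depth_m: np.ndarray,
    person_box: Box,
    object_box: Box,
    depth_limit_m: float = 0.2,
    half_size: int = 5,
) -> bool:
    """True when the person and object sample depths differ by less than the limit."""
    person_depth = sample_depth(depth_m, person_box, half_size)
    object_depth = sample_depth(depth_m, object_box, half_size)
    if person_depth is None or object_depth is None:
        return True  # assumption: fall back to the 2D decision when depth is missing
    return abs(person_depth - object_depth) < depth_limit_m
```

Because the sample is taken at the box center, a thin object whose center pixel shows the background would be measured at the wrong depth, which is the limitation noted above.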

We demonstrate our method using a single RGB-D frame extracted from a sequence within the TUM dataset. In Figure 6, we present the observed detections and 1000 generated ORB features. Implementing the HOID method with a minimum interaction area of 0.4 eliminates the dynamic features, as depicted in Figure 7(a). Introducing depth into the analysis, with a corresponding limit of 0.2 m, retains additional features, as illustrated in Figure 7(b). Together, the two figures show how including depth preserves features. Whether retaining or removing these features is more effective is discussed in the next section.

Sample frame with generated ORB features and YOLOv8 detections.

Sample frames from our approach.
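Once the dynamic boxes are known, removing the associated features reduces to a point-in-box test. The sketch below is a NumPy illustration of that idea and not the ORBSLAM3 tracking code; the function name and the sample coordinates are hypothetical.

```python
from typing import Iterable, Tuple

import numpy as np

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def static_keypoint_mask(keypoints_xy, dynamic_boxes: Iterable[Box]) -> np.ndarray:
    """Boolean mask that is True for keypoints outside every dynamic box."""
    points = np.asarray(keypoints_xy, dtype=float).reshape(-1, 2)
    keep = np.ones(len(points), dtype=bool)
    for x1, y1, x2, y2 in dynamic_boxes:
        inside = (
            (points[:, 0] >= x1) & (points[:, 0] <= x2)
            & (points[:, 1] >= y1) & (points[:, 1] <= y2)
        )
        keep &= ~inside
    return keep


# Hypothetical keypoints and one dynamic box (for example, a person).
keypoints = [(10.0, 10.0), (50.0, 50.0), (200.0, 200.0)]
mask = static_keypoint_mask(keypoints, [(40.0, 40.0, 60.0, 60.0)])
print(mask)  # the second keypoint lies inside the box and is dropped
```

The same mask can be applied to the descriptor array so that keypoints and descriptors stay aligned after filtering.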

Experimental results and discussion

To assess the performance of our proposed RGB-D SLAM method, we utilized the TUM dataset. This dataset is a valuable resource containing both RGB-D images and corresponding ground-truth data, specifically designed for evaluating visual SLAM techniques. It encompasses a diverse range of scenes, including static and dynamic scenarios.

For our research, we focused on three primary subsets within the dataset: f3-walking-static, f3-walking-xyz, and f3-walking-halfsphere. These subsets represent high-dynamic scenarios featuring two individuals walking in an office environment. This selection allows us to rigorously evaluate the robustness and effectiveness of our approach in real-world, highly dynamic settings. Each set consists of a sequence of images recorded at a frame rate of 30 fps with a resolution of

Prior to comparing ORBSLAM3 with our method, it was necessary to determine the minimum area of interaction. The resulting root mean square error (RMSE) of the absolute trajectory error (ATE) for each candidate value is shown in Table 2. Throughout the experimental runs, the lowest errors occurred with values between 0.2 and 0.4. Specifically, an interacting area of 0.3 resulted in the lowest ATE for the f3-walking-static sequence, whereas an area of 0.4 yielded the lowest ATE for the f3-walking-xyz and f3-walking-halfsphere sequences. Consequently, the minimum interaction area was set at 0.4.

Trajectory errors [m] on the minimum area of interaction.
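For reference, the ATE RMSE reported here can be computed as in the sketch below. It assumes the estimated and ground-truth positions have already been associated by time stamp and rigidly aligned, which standard evaluation tooling normally handles; the function name and the sample values are hypothetical.

```python
import numpy as np


def ate_rmse(estimated_xyz, ground_truth_xyz) -> float:
    """RMSE of the per-pose translational error, in the units of the input."""
    estimated = np.asarray(estimated_xyz, dtype=float)
    ground_truth = np.asarray(ground_truth_xyz, dtype=float)
    if estimated.shape != ground_truth.shape:
        raise ValueError("trajectories must contain the same number of poses")
    errors = np.linalg.norm(estimated - ground_truth, axis=1)  # one distance per pose
    return float(np.sqrt(np.mean(errors ** 2)))


# Two hypothetical poses with position errors of 3 m and 4 m.
estimated = [[0.0, 0.0, 3.0], [1.0, 4.0, 0.0]]
ground_truth = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]
print(ate_rmse(estimated, ground_truth))
```

Because the errors are squared before averaging, a few large deviations raise the RMSE more than they would raise the mean or median error.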

The determination of the depth limit is inherently contextual, as it defines the proximity at which a human is deemed to be aligned with an object. We set this limit at 0.2 m, a threshold we consider close enough for meaningful human–object interaction. This parameter is compared directly against the measured difference in depth between the person and the object.

Table 3 shows the performance comparison between ORBSLAM3 and our method. Figures 8 and 9 show the trajectories for ORBSLAM3 and our approach, respectively, on the f3-walking-xyz subset. The gaps at the initial and final segments of the generated trajectories are attributed to the time-stamp associations between RGB and depth images. Because our work targets RGB-D SLAM, where each RGB frame must be paired with a depth frame, such gaps occur consistently. The subsets f3-walking-static and f3-walking-xyz demonstrated an improvement of 98%, while the f3-walking-halfsphere subset showed an improvement of 87%. Our method therefore clearly outperforms ORBSLAM3 in highly dynamic environments.
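Improvement percentages of this kind are commonly computed as the relative reduction in error with respect to the baseline. The sketch below assumes that definition; the function name is hypothetical and the input values are placeholders chosen for illustration, not results from this study.

```python
def improvement_percent(baseline_error: float, method_error: float) -> float:
    """Relative error reduction with respect to the baseline, in percent."""
    if baseline_error <= 0:
        raise ValueError("baseline error must be positive")
    return (baseline_error - method_error) / baseline_error * 100.0


# Placeholder values only: a baseline error of 0.50 m reduced to 0.01 m.
print(round(improvement_percent(0.50, 0.01), 1))
```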

ORBSLAM3 trajectory from f3-walking-xyz sequence.

Our approach trajectory from f3-walking-xyz sequence.

ATE represented in meters as the RMSE, mean and median values.

ATE: absolute trajectory error; RMSE: root mean square error.

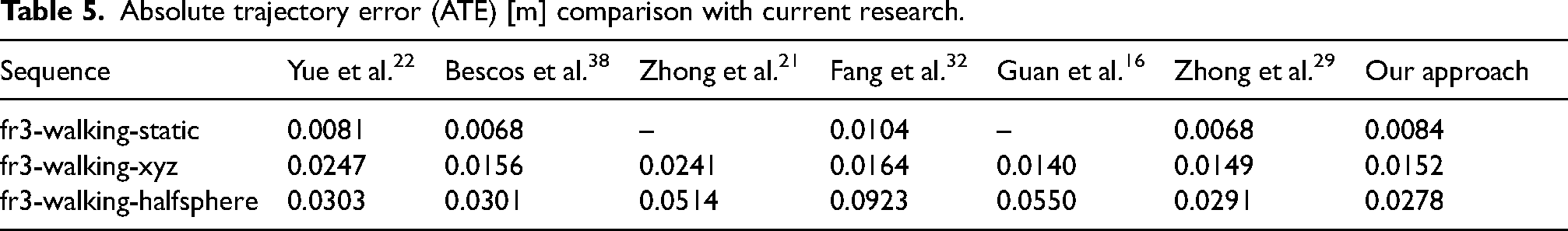

Our approach has been compared to current research methods that aim to improve the robustness and performance of visual SLAM within dynamic environments. The comparison can be seen in Table 5. Our results are competitive with the literature, achieving the lowest error in the f3-walking-halfsphere subset. Furthermore, our method stands out by eliminating the need for supplementary motion checks or optical flow, relying instead on spatial reasoning within a frame. It is worth noting that the method’s performance is contingent upon the speed and efficiency of the object detection model. Therefore, the speed of our method is bounded by the inference speed of the selected model. Ignoring the detection model, the process of filtering features and removing dynamic objects averaged 12 ms per frame. The computation speed is based on the following laptop specifications: 7th Generation Intel i7-7700HQ 3.8 GHz processor with four cores and eight threads, 16 GB RAM, and an NVIDIA GeForce GTX 1060 graphics card with 6 GB GDDR5. All processing involved frames of

Computation analysis.

HOID: human–object interaction detection.

Absolute trajectory error (ATE) [m] comparison with current research.

In the second iteration of our method, we explored the integration of associated depth images to assess the potential enhancement of 3D spatial reasoning on performance. This entailed a comparison of depth data, where sample boxes positioned at the center of both the object and person bounding boxes were compared. A sample box size of

Table 6 presents a comparison between the 2D and 3D methods. Notably, the differences between the two approaches are minimal, with the most significant gap being 14.7%, favoring the 2D method in the f3-walking-halfsphere sequence. It appears that the inclusion of depth data does not necessarily improve the approach, as it retains features not aligned with the people within the scene. This suggests that the removal of features through a 2D perspective might yield more benefits. In a scene where a person moves across objects, their movement can negatively influence the features being tracked on an object. The HOID approach employs a minimum area of interaction, removing these influences shortly before and after a person has moved across it.

ATE RMSE for 2D and 3D method.

ATE: absolute trajectory error; RMSE: root mean square error; 2D: two-dimensional; 3D: three-dimensional; HOID: human–object interaction detection.

The comparison of both methods is constrained to the TUM dataset; a more comprehensive assessment could be achieved with a dataset containing more instances of human–object interaction. Additionally, both methods face limitations concerning the space a human occupies within a given frame, as the corresponding bounding box removes a considerable number of feature points. Arguably, this might not be a large drawback, given that scenes where humans dominate the frame would inherently pose challenges to tracking regardless.

The 3D method should become more beneficial in scenes containing clear interactions between humans and objects. Moreover, this approach holds promise for applications in semantic SLAM, where the extraction of detailed scene information is highly desirable. We intend to further explore these scenarios in future research.

Conclusion

This paper introduces a novel approach that leverages spatial reasoning to mitigate the influence of dynamic entities within an environment. Our method was tested by building upon the open-source visual SLAM algorithm, ORB-SLAM3. Referred to as HOID, our approach determines whether an object is dynamic by analyzing the shared intersection between the bounding boxes of the object and a person. If the interacting area exceeded an experimentally defined threshold, the object was classified as dynamic. Consequently, the associated features were eliminated, and only the remaining features were passed on to the tracking thread of the algorithm.

To evaluate the effectiveness of our method, we conducted tests on dynamic sequences from the TUM dataset. Our method significantly outperformed ORBSLAM3 in dynamic scenarios. Furthermore, our results were competitive with the literature. Considering the simplicity of our method, human–object spatial reasoning proved capable of effectively improving pose estimation.

Our findings highlight several broader implications for the field of robotics and autonomous systems. By effectively filtering out dynamic features, the HOID method enhances the accuracy and reliability of visual SLAM systems in dynamic environments, which is crucial for the deployment of autonomous robots in real-world scenarios where dynamic interactions are common. In addition, the ability to detect and exclude dynamic human–object interactions can improve the safety and efficiency of robots operating in close proximity to humans, with important implications for collaborative robots (cobots) in industrial and service applications. Finally, our research lays a foundation for further studies into dynamic SLAM, encouraging the development of more advanced algorithms that incorporate additional dynamic factors and improve computational efficiency.

However, while our study presents promising results, several limitations should be acknowledged. The evaluation was primarily conducted using the TUM RGB-D dataset, which, while widely recognized and extensively used, may not fully encompass the range of dynamic scenarios encountered in real-world environments. Our focus on human–object interactions as the primary source of dynamics excludes other potential dynamic factors such as moving machinery and environmental conditions.

A second iteration of our method incorporated depth information. However, no significant change was found that would warrant integrating depth perception. Instead, our findings suggest that retaining features of objects not aligned with a human led to a higher level of error. The inclusion of depth may be beneficial for semantic SLAM applications, where extracting more contextual information about an environment is desirable. We aim to investigate this further.

Future research should aim to incorporate more diverse and highly dynamic datasets to understand the full potential and limitations of our approach in various real-world scenarios. This will help assess the scalability of the method in handling more complex dynamic environments. Additionally, we aim to extend the application of HOID to encompass both semantic and risk-aware navigation, to potentially improve the overall reliability and safety of autonomous systems. We plan to investigate this further and implement our approach in real-world scenarios involving increased human–object interaction to generate semantic-based maps.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.