Abstract

Loop closure detection is a key technique for robots to minimize the localization and mapping errors that accumulate during long-term simultaneous localization and mapping. However, the efficiency and accuracy requirements of mobile robot applications are not yet well satisfied. In this article, we propose a fast and accurate loop closure detection method that exploits both pose-based and appearance-based information in a probabilistic manner, inspired by the complementarity between the two. Our approach formulates a probabilistic framework combining the pose-based loop closure detection probability and the appearance-based loop closure detection probability. In the proposed framework, the pose-based loop closure detection model is first derived from the nonlinear optimization model of odometry. Then the appearance-based loop similarity and the pose-based loop similarity are combined into a joint framework to improve the loop closure detection performance. We implemented our approach using C++ and ROS and thoroughly tested it on publicly available datasets. The experiments presented in this article suggest that the proposed method can achieve high efficiency and accuracy in loop closure detection.

Introduction

Visual loop closure detection is “the revisiting problem” for a robot to recognize a previously visited area. 1 So the loop closure detection (LCD) issue is mostly regarded as a scene matching or image retrieval problem. 2 An image acquired by a camera is matched to a map, where the map contains a set of previously acquired images. Once the match is successful, a loop closure event is confirmed. However, in contrast to general scene matching issues, the LCD method must deal with a growing dataset as the robot explores more scenes, leading to a linear increase in the computational cost, as shown in Figure 1(a). Therefore, the LCD algorithm must be sufficiently efficient in consideration that there are always newly acquired images waiting to be processed with the ongoing application of real-time simultaneous localization and mapping (SLAM).

Time consumption of loop closure detection. (a) The computational cost of traditional loop closure detection increases linearly as SLAM proceeds, while that of the proposed method remains stable and low. (b) Different procedures of loop closure detection take different amounts of time to process a loop candidate. The proposed pose-based probability module (PP) runs faster than the other procedures (SC is the sequential consistency check, and GC is the geometrical consistency check). SLAM: simultaneous localization and mapping.

To perform faster and more accurate LCD, we present a novel approach that exploits both pose-based and appearance-based information, inspired by the complementarity between these two kinds of information. Image similarity works well in most cases but may confuse different places with similar scenes. Consider how human beings recognize previously visited places. People can recognize a previously visited place on the basis of its location, even if the scene has changed greatly. Conversely, people do not confuse two different places with similar scenes during a journey. In other words, both kinds of information contribute to the LCD task, and they are complementary to each other.

Inspired by the complementarity of pose- and appearance-based information, we propose a novel pose-appearance-based probabilistic LCD method. This study is the first attempt to combine these two kinds of information into a joint framework to improve the LCD performance.

Consequently, this work is an actual “loop” closure detection method, rather than a general re-localization method or scene matching method. In other words, the kidnapping issue is not taken into account.

The joint framework of the proposed method is illustrated in Figure 2. The pose-based method calculates the probability of loop closure using the proposed probability model. In this process, a fast pose-based filter is also designed to efficiently acquire the loop closure candidates. The appearance-based method is a replaceable component built on an existing appearance-based LCD method, such as bag-of-words (BoW) or convolutional neural networks (CNN). The product of the pose-based probability and the appearance-based similarity is then used as the final loop probability. A sequential consistency (SC) check and a geometrical consistency (GC) check, both commonly used in LCD applications, are also introduced into the proposed framework as optional modules. The SC check is very efficient, while the GC check achieves remarkable precision at the cost of considerable time consumption.

The joint LCD framework combining the pose-based method and the appearance-based method. The first block indicates the proposed pose-based loop closure probability calculation. The appearance-based method is based on other appearance-based LCD algorithms, such as BoW and CNN. The dashed blocks are optional for different applications. LCD: loop closure detection; BoW: bag-of-words.

The main contribution of this article is the probabilistic combination of pose and appearance in LCD. This allows the proposed method to achieve higher efficiency and accuracy in LCD. In sum, we make two key claims: (i) The introduction of pose-based information into the framework accelerates the LCD process. (ii) The proposed LCD pipeline is compatible with many other methods and easy to use in real-time SLAM applications. The rest of this article mainly focuses on the probabilistic derivation of the pose similarity, so that the appearance similarity and the pose similarity can be combined to form a novel LCD method.

The remainder of this article is structured as follows. The related work is introduced in section “Related works,” followed by the relevant mathematical concepts and notations in section “Preliminaries.” As the main mathematical derivation of this article, in section “Pose-based loop closure probability,” the pose similarity is derived probabilistically, where the accumulative pose covariance matrix is incrementally calculated and used for this pose similarity. Then, in section “Pose-appearance-based LCD,” the pose similarity is introduced in the new LCD pipeline, named as the pose-appearance-based LCD. Finally, section “Experimental evaluation” presents the experimental settings and results to validate the contributions of the proposed method, and this article is concluded in section “Conclusions.”

Related works

After years of research, LCD methods have formed four main types: traditional feature based, learning based, sequence based, and pose based.

Methods based on traditional feature

Visual features can well represent the appearance information of an image frame and are widely used for image matching. So it is a natural idea to detect loop closures using visual features. 3 Visual features can also be coded as words, named BoW, to accelerate the feature matching process. 4 It represents image features as words of a dictionary, which is generated offline using a large number of visual features with the k-means algorithm. 5 The key idea is then to accelerate the image matching procedure by comparing words instead of raw feature descriptors.

Based on the BoW model, the appearance-based LCD methods proposed by Angeli et al. 6 , Cummins and Newman 7 , Schöps and Cremers 8 , Gálvez-López and Tardós 9 , and Labbé and Michaud 10 are widely used. Incremental appearance-based mapping (IAB-MAP) 6 extends the BoW method by adding color histogram features together with keypoint features in the dictionary. The epipolar constraint between the similar images is checked, and this procedure is referred to as the GC check. Fast appearance-based mapping (FAB-MAP) 7,8 also adopts the BoW idea and uses the Chow–Liu tree 11 to model the distributions of words, which in turn provides a way of calculating the probability of a loop closure event. DLoopDetector 9 employs both SC and GC checks after the BoW image match. The SC check keeps a series of images matching the same scene in the map as a candidate loop closure, while the GC check employs random sample consensus (RANSAC) to find a fundamental matrix between the image pair. The loop closure is confirmed only if both consistency checks pass. Real-time appearance-based mapping (RTAB-MAP) 10 focuses on memory management for large-scale and long-term online SLAM applications; it also proposes a Bayesian estimation approach for LCD problems. ORB-SLAM 12 improves on DLoopDetector by replacing the binary robust independent elementary features (BRIEF) with the rotation-invariant oriented FAST and rotated BRIEF (ORB) features, which can better deal with perspective changes when performing LCD. Bampis et al. 13 use the BoW to produce a general vector representing the whole scene and improve the ability to handle LCD for image sequences.

The BoW model can be extended to be incremental, to avoid the vocabulary training stage. 14 The robot can then detect loop closures for image sequences online and incrementally. 15 –17

Methods based on learning

In addition to the methods based on traditional features, with the fast development of machine learning, LCD based on deep learning is also gaining in popularity. 18 –20 For example, Jégou et al. proposed the vector of locally aggregated descriptors (VLAD) algorithm 21 based on the Fisher kernel, which computes the gradients of the input features in a trained model and generates a joint fixed-size vector as the representation of the input image. 22,23 NetVLAD 24 applies soft assignment of VLAD descriptors to the offline learned clusters as an end-to-end visual place recognition method. Merrill and Huang proposed the CALC 25 method by training a network comprising a semantic segmentation and auto-encoder to extract descriptors. Based on the traditional histogram-of-oriented-gradients (HOG) LCD method, 26 Zaffar et al. proposed CoHOG 27 by integrating the convolutional scanning and region-based feature extraction of CNN. Zhang et al., on the other hand, proposed a CNN-based LCD method named CNN-LCD. 28 An et al. combined the CNN global features and CNN local features to improve the LCD performance. 29

Methods based on sequential information

Since the image frames are captured sequentially, the loop closure images are also sequentially consistent. Then the SC check or temporal consistency check can be employed to filter the LCD results to improve the accuracy.

SeqSLAM 30 is a typical representative method using sequential information. Instead of matching to a single image, SeqSLAM calculates matching location within every local navigation sequence to enhance the performance in challenging environments.

DOseqSLAM 31,32 uses a dynamic rather than fixed sequence length to check the SC, achieving better adaptability to different applications. Incremental LCD methods, which use sequential information in a similar way, have also been proposed. 29,33,34

Methods based on pose information

Although the appearance-based LCD methods are powerful and successful, they suffer from high computational demands, especially since the computational cost increases linearly with the map size. In contrast, pose-driven methods, on the other side of the spectrum, can provide an efficient solution with less computational demand. 35,36 For example, Neira and Tardos 35 recognized the importance of the pose-based information and used it for the data association in the mapping process. Li et al. 36 model the accumulated error of visual odometry based on the Kalman filter and the RGB-D camera model. The error in the pose is used as a constraint in LCD. The disadvantage of these two approaches is the limiting Kalman filter model, which is not applicable to nonlinear-optimization-based SLAM, such as the sparse methods 37 –40 and dense methods. 41 –43

In this article, we introduce a novel pose-appearance-based method by exploiting both pose-based and appearance-based information in a probabilistic manner, which performs fast and accurate LCD.

Preliminaries

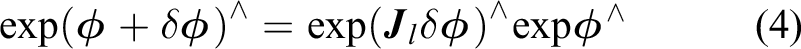

This work combines the pose-based loop probability and the appearance-based loop probability. The calculation of the pose-based loop probability needs the estimation of the covariance matrix of poses, where the pose is represented as Lie algebra, and its covariance matrix is calculated using nonlinear optimization. So in this section, some basic formulas are introduced as preliminaries.

Lie algebra

Consider the 3D rotation matrix of

where

The operator

If a small increment is added to

On the contrary, the Lie algebra has the linear approximation in equation (5) with the small increment of

where

Nonlinear optimization for pose estimation

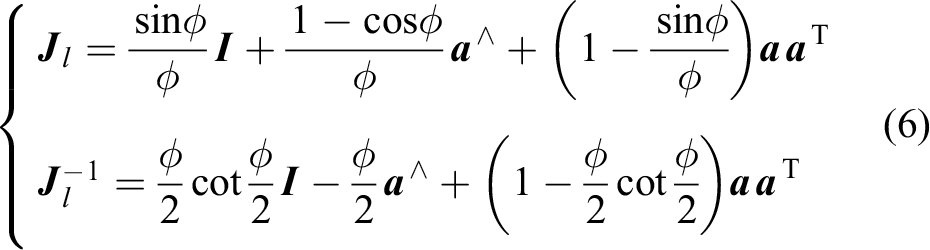

Consider a six-dof camera pose

The landmarks observed by the camera are 3D points in the map,

The

Then, equation (8) can be rewritten in the matrix form as follows

where

Based on the Gauss–Newton nonlinear optimization algorithm, equation (9) can be iteratively solved, and the t-th iterative increment of

where

After several iterations, the optimal pose of

Covariance of pose

The

The

where

Equation (11) is a fast but low-accuracy first-order estimate of the incremental covariance. More accurate methods 45 are available as alternatives. For the joint optimization of sequential frames, there are also methods for calculating the pose covariance, 46,47 which can be used for global trajectory optimization.

In this work, the covariance of the pose is employed for LCD, but how the covariance is calculated is not a major concern. Users may adopt any of the methods mentioned above for a specific SLAM system. However, computational efficiency should be taken into consideration when choosing a covariance calculation method, since the LCD process is executed in real time alongside SLAM.
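To make the idea of a first-order covariance estimate concrete, the following is a minimal sketch on a toy one-parameter problem, not the pose estimation problem of this article. It assumes the common first-order approximation in which the covariance of the estimate is the measurement variance divided by the Gauss–Newton normal matrix J^T J; whether this coincides exactly with equation (11) is an assumption. The function name `gauss_newton_1d` and the exponential model are hypothetical and chosen only for illustration.

```python
import math

def gauss_newton_1d(ts, ys, x0=0.0, sigma=1.0, iters=20):
    """Fit y = exp(x * t) by Gauss-Newton (toy model, hypothetical).

    Returns the estimate x and its first-order variance sigma^2 / (J^T J).
    """
    x = x0
    for _ in range(iters):
        preds = [math.exp(x * t) for t in ts]
        # Residuals r_i = y_i - f(x, t_i) and their derivatives J_i = dr_i/dx.
        r = [y - p for y, p in zip(ys, preds)]
        J = [-t * p for t, p in zip(ts, preds)]
        H = sum(j * j for j in J)               # normal matrix J^T J (a scalar here)
        g = sum(j * ri for j, ri in zip(J, r))  # gradient term J^T r
        dx = -g / H                             # Gauss-Newton increment
        x += dx
        if abs(dx) < 1e-12:
            break
    # First-order variance, evaluated at the final estimate.
    H_final = sum((t * math.exp(x * t)) ** 2 for t in ts)
    return x, sigma ** 2 / H_final

ts = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [math.exp(0.5 * t) for t in ts]  # noise-free data generated with x = 0.5
x_hat, var_hat = gauss_newton_1d(ts, ys)
```

The estimate only needs quantities that the optimizer already computes, which is why this kind of approximation is cheap enough to run alongside odometry.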

Pose-based loop closure probability

In this section, we propose the pose-based method to calculate the loop closure probability of any two poses, shown as the first block in Figure 2.

Two images with exactly the same pose definitively indicate a loop closure. However, in most cases, these two poses are not exactly the same, but they share similar views. In this case, they are called images of covisibility, and they can generate a loop.

Consider the current pose as

All the variables in equation (13) are related to poses and covariance matrix. The derivation is detailed as follows.

Probability distribution of pose

To calculate the pose-based loop closure probability, the probabilistic distribution model of the pose needs to be derived first. For any pose of

Covisibility of two poses

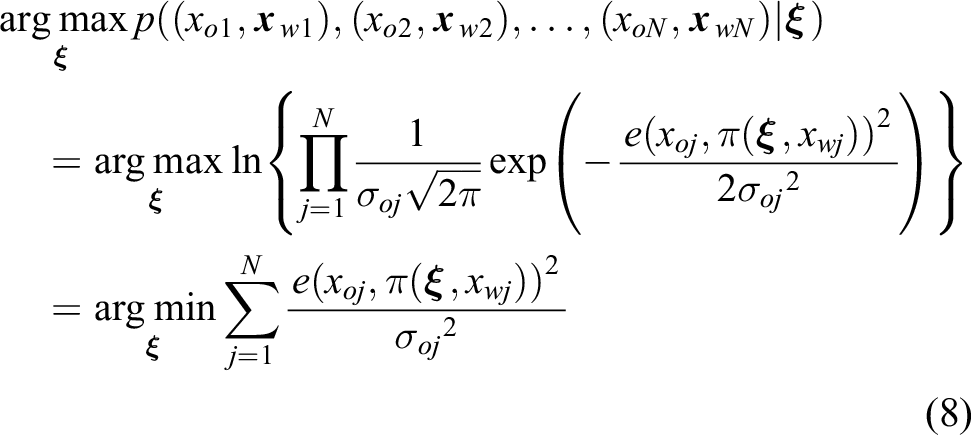

The covisibility of two poses is the basis for visual odometry to calculate their incremental transformation. So the covisibility matrix can be estimated during the odometry process, and then be employed to detect pose-based loop closure.

The covisibility matrix

The

Pose-based loop closure probability

Then we can calculate the loop closure probability of any two poses based on the covariance matrix of pose and the covisibility matrix. In other words, the two poses are considered as loop closure only when they are covisible under the uncertainty of

Based on the marginal probability model, the probability of loop closure, that is, the probability of covisibility, can be calculated as

Note the difference between

Based on Bayes’ theorem of

Considering that the probability of covisibility should be normalized to be 1 when the two poses are exactly the same, that is,

Finally, the probability of loop closure can be calculated as

Let

Fast loop closure candidate filtering

To improve the efficiency of the LCD procedure, the loop closure candidates are selected using a simple threshold operation based on the pose and the covariance matrix, before calculating the probability of equation (20).
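A minimal sketch of such a threshold operation is given here under simplifying assumptions that are not taken from the derivation in this article: only the translation is tested, the three axes are treated as independent Gaussians, a two-sided 95% interval (z ≈ 1.96) is used, and the covisibility is reduced to a fixed per-axis range. The names `filter_candidates`, `rel_var`, and `covis_range` are hypothetical.

```python
import math

Z_95 = 1.96  # two-sided 95% quantile of the standard normal distribution

def filter_candidates(current_t, keyframes, covis_range):
    """Keep keyframes whose translation lies inside the 95% box.

    current_t:   (x, y, z) translation of the current pose
    keyframes:   list of (frame_id, (x, y, z), rel_var), where rel_var holds
                 the per-axis variance of the relative translation
                 (hypothetical input supplied by the odometry)
    covis_range: per-axis distance within which two poses are assumed covisible
    """
    kept = []
    for frame_id, t, rel_var in keyframes:
        inside = True
        for axis in range(3):
            # Half-width of the interval: uncertainty plus covisibility range.
            bound = Z_95 * math.sqrt(rel_var[axis]) + covis_range[axis]
            if abs(current_t[axis] - t[axis]) > bound:
                inside = False
                break
        if inside:
            kept.append(frame_id)
    return kept

keyframes = [
    ("A", (1.0, 0.0, 0.0), (1.0, 1.0, 1.0)),      # close, small uncertainty
    ("B", (10.0, 0.0, 0.0), (1.0, 1.0, 1.0)),     # far, small uncertainty
    ("C", (10.0, 0.0, 0.0), (25.0, 25.0, 25.0)),  # far, large uncertainty
]
candidates = filter_candidates((0.0, 0.0, 0.0), keyframes, (0.5, 0.5, 0.5))
```

In this toy example, keyframe B is rejected while keyframe C at the same distance is kept, because its larger accumulated uncertainty widens the interval. The test costs only a few comparisons per keyframe, so it can run before any probability or appearance computation.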

We set a probabilistic threshold of 95%. According to the Gaussian integration function, the 95% confidence interval of

Thus, the current pose of

Then, we can obtain the final 95% confidence interval of loop closure according to

Pose-appearance-based LCD

Based on the pose-based loop closure probability, the pose-appearance-based LCD framework can be built, as illustrated in Figure 2. The appearance similarity method, such as BoW-based or CNN-based appearance similarity, can be employed as the appearance-based model in the joint framework.

Pose-appearance-based loop closure probability

To detect the loop closures using both the appearance and the pose information, the appearance similarity s is multiplied by the pose-based loop closure probability pp, after which we can obtain the pose-appearance-based loop closure probability:

For the BoW-based appearance similarity, let v and w be the two BoW vectors that are pre-normalized. Then, the fast

Since

For CNN-based appearance similarity, the output of CNN, that is, the output of the activation function, can be also normalized to be the substitution of appearance similarity s.
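The combination can be sketched as follows. The BoW similarity is assumed here to be the L1 score used by DBoW-style methods, s = 1 - 0.5 * ||v - w||_1 for L1-normalized vectors, which lies in [0, 1]; this choice is an assumption. The sparse dictionary representation and the function names are hypothetical.

```python
def l1_normalize(vec):
    """Scale a sparse BoW vector {word_id: weight} to unit L1 norm."""
    total = sum(abs(x) for x in vec.values())
    if total == 0:
        return dict(vec)
    return {k: x / total for k, x in vec.items()}

def l1_score(v, w):
    """L1 similarity in [0, 1] between two L1-normalized BoW vectors."""
    keys = set(v) | set(w)
    dist = sum(abs(v.get(k, 0.0) - w.get(k, 0.0)) for k in keys)
    return 1.0 - 0.5 * dist

def joint_probability(s, p_pose):
    """Pose-appearance-based loop probability as the product of both terms."""
    return s * p_pose

v = l1_normalize({1: 1.0, 2: 1.0})
w = l1_normalize({2: 1.0, 3: 1.0})
s = l1_score(v, w)               # the two images share one of two words
p = joint_probability(s, 0.8)    # 0.8 is an arbitrary pose-based probability
```

Because both factors lie in [0, 1], the product is never larger than either factor, so a candidate needs support from both the pose and the appearance to obtain a high score.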

Finally, the pose-appearance-based loop closure probability of

SC check and the GC check

Similar to the appearance-based LCD method, the SC check and the GC check are introduced in the proposed method. These two checks are optional and commonly used for LCD methods. 48

The SC check is designed to work very efficiently but with limited performance. A loop candidate is kept only if two or more sequential images are detected as the same loop closure candidate. The SC check is an optional module for most LCD methods, as well as for the proposed method. It only works for continuous SLAM without kidnapping. Since the proposed method relies on continuous pose estimation and does not deal with the kidnapping case, the SC check is recommended.

The GC check is mostly considered as the preprocessing step of the global optimization after LCD, rather than part of the loop detection itself. During the GC check, the fundamental matrix of the image pair is checked based on their keypoint matches. The loop closure candidate is confirmed only if there are enough inliers for the fundamental matrix. The keypoint matches are then employed as the constraints of the global optimization. For this reason, regardless of whether the GC check is part of the LCD method, it is always necessary for LCD tasks. The GC check performs remarkably well at filtering out false loops, albeit at a high computational cost.
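A minimal sketch of such a check is shown here. It assumes that each query frame yields at most one matched map frame index, and that two consecutive matches belong to the same loop when their map indices differ by at most `max_gap`. The function name and both parameters are hypothetical.

```python
def sc_check(matches, min_run=2, max_gap=3):
    """Sequential consistency check over consecutive query frames.

    matches: matched map frame index per query frame, or None if no match.
    Returns one boolean per query frame: True when the match is supported
    by at least min_run consecutive, mutually consistent matches.
    """
    accepted = []
    run = 0
    prev = None
    for m in matches:
        if m is None:
            run = 0                      # no candidate: the run is broken
        elif prev is not None and abs(m - prev) <= max_gap:
            run += 1                     # consistent with the previous match
        else:
            run = 1                      # start of a new run
        accepted.append(run >= min_run)
        prev = m
    return accepted

result = sc_check([None, 10, 11, 50, None, 20, 21, 22])
```

The isolated match to map frame 50 is rejected because neither neighbor supports it, while the runs 10, 11 and 20, 21, 22 are accepted from their second frame onward.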

Experimental evaluation

The main focus of this work is to improve the efficiency and accuracy of LCD process using the complementarity of pose and appearance. Our experiments are designed to show the capabilities of our method and to support our key claims, which are: (i) The introduction of pose-based information into the framework will accelerate the LCD process. (ii) The proposed LCD pipeline is compatible with many other methods and easy to be used in real-time SLAM applications.

Methodology

Datasets

Two publicly available datasets are used in the experiments, namely, the KITTI dataset 49 and the TUM dataset. 50 The KITTI dataset is a dataset of outdoor driving, and the TUM dataset is captured in an indoor environment with a handheld RGB-D camera. Note that only some image sequences of the two datasets consist of loop closures: KITTI00, KITTI02, KITTI05, TUMfreiburg2desk, and TUMfreiburg3longoffice. These image sequences are employed for the LCD experiments. All loop image pairs of the ground truth are manually labeled; some image pairs are illustrated in Figure 3.

Some image pairs of the loop closure ground truth, where the ground truth is manually labeled. (a) KITTI dataset and (b) TUM dataset.

Metrics

The performance of the proposed method is evaluated with the precision and recall metrics. The precision is defined as the ratio of true positive LCDs to the total number of detections. The recall is defined as the ratio of true positive LCDs to the number of ground truth loop closures. An LCD result is considered to be truly positive only if the loop image pair is listed in the ground truth; otherwise, this LCD result is considered to be false. The threshold of the pose-appearance-based probability is continuously tuned during the experiments, and the precision–recall curves are drawn and analyzed.

For the precision–recall curve, the area under the curve (AUC) represents the comprehensive performance of the method. So the AUC is calculated for every comparing LCD method. The average time consumption per frame is also recorded to evaluate the real-time performance.
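The metrics above can be sketched as follows. Loop closures are represented as (query, match) index pairs, the AUC is approximated with the trapezoidal rule, and precision is set to 1 when nothing is detected. The last two points are conventions assumed here, and all names and sample values are hypothetical.

```python
def precision_recall(detections, ground_truth):
    """Precision and recall of detected loop pairs against labeled pairs."""
    tp = sum(1 for d in detections if d in ground_truth)
    precision = tp / len(detections) if detections else 1.0  # convention
    recall = tp / len(ground_truth) if ground_truth else 0.0
    return precision, recall

def pr_curve(scored_pairs, ground_truth, thresholds):
    """One (precision, recall) point per probability threshold."""
    points = []
    for th in thresholds:
        detections = [pair for pair, score in scored_pairs if score >= th]
        points.append(precision_recall(detections, ground_truth))
    return points

def auc(points):
    """Area under the precision-recall curve by the trapezoidal rule."""
    pts = sorted((r, p) for p, r in points)  # order by recall
    area = 0.0
    for (r0, p0), (r1, p1) in zip(pts, pts[1:]):
        area += (r1 - r0) * (p0 + p1) / 2.0
    return area

ground_truth = {(10, 2), (11, 3), (40, 1)}
scored_pairs = [((10, 2), 0.9), ((11, 3), 0.8), ((30, 7), 0.6), ((40, 1), 0.3)]
points = pr_curve(scored_pairs, ground_truth, [1.0, 0.85, 0.5, 0.2])
area = auc(points)
```

Sweeping the threshold from high to low moves along the curve from the high-precision end to the high-recall end, which is how the precision–recall curves are obtained.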

Program and settings

We implement the proposed LCD method in the framework of ORB-SLAM2, 39 where the LCD process is replaced by the proposed method. The proposed method and all methods used for comparison run together in the program, and the trajectory optimization of the loop closure is disabled in the program to maintain consistency within the experimental conditions among all methods. The program runs on a computer with a 2.4 GHz quad-core processor. The ORB feature is used in all experiments, and the number of features per image is set to be 1000 in consideration of the computational capability of the computer. The program runs at 30 FPS with these settings. The code is open source and can be downloaded from

Efficiency

The first experiment is designed to show the efficiency of our approach and to support the claim that the introduction of pose-based information will accelerate the LCD process. The time consumption of each of the four components is recorded during the LCD, and the average time consumption per loop image pair is illustrated in Figure 1(b).

As shown in Figure 1(b), the time consumption of the GC check is excessively high. Thus, the total time consumption increases linearly with the number of loop candidates when detecting loop closures, as shown in Figure 1(a). Note that most LCD methods do not include a GC check module themselves. However, the GC check is necessary for LCD in real-time SLAM, because it provides the observation constraints for the global optimization after the loop closure. There are therefore as many GC checks as loop candidates. That is why the time consumption of the GC checks is taken into consideration in this real-time LCD experiment.

With the introduction of pose-based information into the framework, the loop candidates are efficiently refined, and the time consumption of the proposed method remains much lower.

The pose-based loop candidates together with the appearance-based loop candidates for one current frame are illustrated in Figure 4. Both the pose-based and the appearance-based modules detect many loop candidates. But the two kinds of candidates are distributed differently with complementarity: The pose-based candidates congregate around the current pose, while the appearance-based candidates disperse over the whole map. The two kinds of candidates overlap each other around the ground truth. Then with the help of the pose-based fast loop closure candidate filtering, only part of the map is necessary for the loop closure consistency check. And thus, the proposed method combining the pose-based information and the appearance-based information has a good efficiency performance.

Loop closure candidates of one demonstrative query frame using the pose-based probability module (PP) and the appearance-based appearance similarity module (AP). The pose-based candidates and the appearance-based candidates are distributed differently with complementarity. (a) Query frame, (b) correct frame of GT, (c) wrong frame of BoW, and (d) wrong frame of PP. GT: ground truth; BoW: bag-of-words.

The pose covariance matrix is calculated along with the odometry. We visualize the calculation results of the pose variance to validate the algorithm. The 95% translation confidence interval is drawn in Figure 5.

The 95% confidence intervals of the pose illustrated as cubes along the trajectory.

The pose variance increases with the progression of the odometry, as shown in Figure 5. This means that more loop candidates are kept by the pose-based module over time, which increases the time cost. However, the time cost is still low compared with the appearance-based module, as shown in Figure 1. Note that if the robot estimates the pose variance with low precision in some challenging environments, the 95% threshold can be tuned higher to obtain a larger confidence interval. Note also that a higher threshold increases the time cost; the extreme case is an infinite confidence interval, which reduces the method to the traditional appearance-based global LCD. This case mostly occurs when localization fails, which results in an infinite localization error. On the other hand, a smaller threshold may make the confidence interval too small to detect any loop closures. All the experiments in this work use 95% as the threshold.

Accuracy

The next set of experiments is designed to show the accuracy of our approach and to support the claim that the proposed LCD pipeline is compatible with many other methods and easy to use in real-time SLAM applications. We therefore tested our approach on two public datasets, running in real time together with a state-of-the-art SLAM method, ORB-SLAM. 39

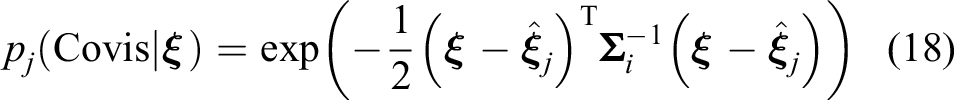

The LCD methods used for comparison are employed as the appearance-based module in the proposed framework, namely FAB, 51 DBOW, 9 CNN-LCD, 28 NetVLAD, 24 AlexNet, 25 CALC, 25 HOG, 26 and CoHOG. 27 The LCD experiments are conducted for the three pose-appearance-based examples compared with the original methods. The experimental results are listed in Table 1.

The area under curve (AUC) and the average time consumption performance of different LCD methods with/without the proposed LCD pipeline.

TpF: time consumption per frame; LCD: loop closure detection; HOG: histogram-of-oriented-gradients. The bold values indicate the outstanding results.

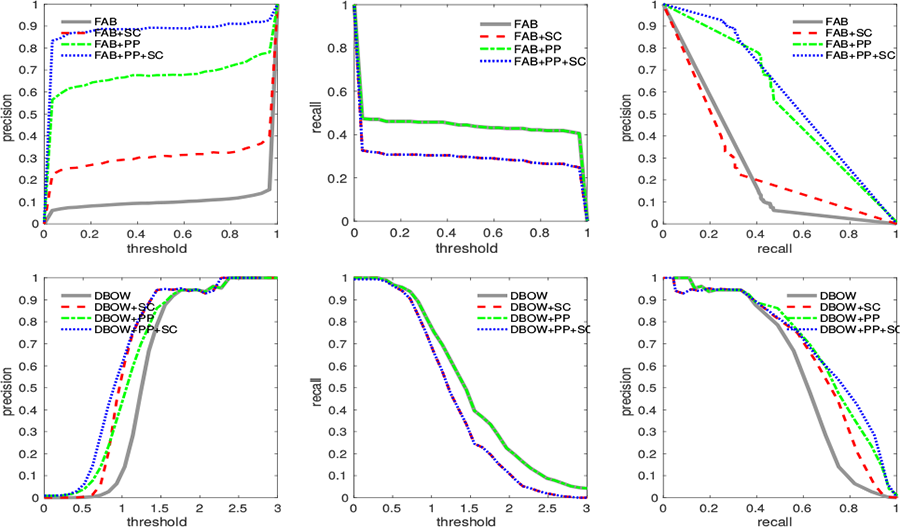

Some precision and recall curves are illustrated in Figures 6 and 7. The precision curves and the recall curves are also provided together with the precision–recall curves to analyze the details of the precision and recall performance, respectively.

Precision, recall, and precision–recall curves of different methods on the KITTI00 image sequence (PP indicates the pose-based probability. SC indicates the sequential consistency check).

Precision, recall, and precision–recall curves of the CNN-based method on the TUMfreiburg2desk (the top row) and the TUMfreiburg3longoffice (the bottom row) image sequences.

Note that the SC check and GC check modules are optional and are widely used in many other methods. These two modules are not contributions of this work, so they are not discussed in this experiment. Moreover, the GC module is a strict but time-consuming module. Introducing the GC check yields 100% precision for most methods at the cost of a heavy computational burden, which is already illustrated in Figure 3.

As shown in Table 1, the introduction of the pose-based probability can greatly improve the LCD performance. The AUC (i.e., precision and recall performance) is greatly increased for all the experimental methods. The time consumption per frame (TpF) is decreased by orders of magnitude, showing strong real-time performance. Note that this TpF is the average time over the whole SLAM run, and the time consumption increases as the map grows, as shown in Figure 1. On the other hand, the introduction of the SC check can also improve the LCD performance, but the improvement is limited compared with the pose-based probability.

The precision curves in Figures 6 and 7 show that the pose-based probability and the SC check can improve the precision performance of the appearance-based method. However, the recall performance, as shown in the recall curves in Figures 6 and 7, cannot be better than the appearance-based results, because any component introduced after the appearance-based component can only filter out candidates and cannot add candidates. Overall, the large precision increase and the small recall decrease result in an improvement of the precision–recall performance.

In summary, our evaluation suggests that our method provides competitive efficiency and accuracy in LCD. The introduction of pose-based information into the framework will greatly accelerate the LCD process. And meanwhile, the proposed method achieves good precision–recall performance in real-time SLAM applications.

Conclusions

In this article, we presented a novel approach to detect loop closures for SLAM. Our approach exploited both pose-based and appearance-based information in a probabilistic manner, inspired by the complementarity between these two kinds of information. This allows the proposed method to achieve higher efficiency and accuracy performance in LCDs. We implemented and evaluated our approach on different datasets and provided comparisons to other existing techniques. The experimental evaluation suggests that our method provides competitive efficiency and accuracy in LCDs.

However, there are certain aspects of the proposed method that need to be improved. First, the introduction of the pose information limits the application of the method, making it unable to deal with the kidnapping of the robot. Our plan is to introduce a more powerful but slower appearance method, such as a deep-learning-based method, to re-localize in the global map. Second, online incremental updating of the appearance vocabularies or other appearance models can be adopted to enhance the adaptability to long-term SLAM.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Fund for key Laboratory of Space Flight Dynamics Technology (2022-JYAPAF-F1028), Major Project of Natural Science Foundation of Hunan Province (No. 2021JC0004), and the National Science Foundation of China (U1913202, U22A2059, and 62203460).