Abstract

Light Detection and Ranging (LiDAR)-visual-inertial odometry can provide accurate poses for the localization of unmanned vehicles working in unknown environments in the absence of Global Positioning System (GPS). Since the quality of poses estimated by different sensors in environments with different structures fluctuates greatly, existing pose fusion models cannot guarantee stable performance of pose estimations in these environments, which brings great challenges to the pose fusion of LiDAR-visual-inertial odometry. This article proposes a novel environmental structure perception-based adaptive pose fusion method, which achieves the online optimization of the parameters in the pose fusion model of LiDAR-visual-inertial odometry by analyzing the complexity of environmental structure. Firstly, a novel quantitative perception method of environmental structure is proposed, and the visual bag-of-words vector and point cloud feature histogram are constructed to calculate the quantitative indicators describing the structural complexity of visual image and LiDAR point cloud of the surroundings, which can be used to predict and evaluate the pose quality from LiDAR/visual measurement models of poses. Then, based on the complexity of the environmental structure, two pose fusion strategies for two mainstream pose fusion models (Kalman filter and factor graph optimization) are proposed, which can adaptively fuse the poses estimated by LiDAR and vision online. Two state-of-the-art LiDAR-visual-inertial odometry systems are selected to deploy the proposed environmental structure perception-based adaptive pose fusion method, and extensive experiments are carried out on both open-source data sets and self-gathered data sets. 
The experimental results show that the proposed environmental structure perception-based adaptive pose fusion method can effectively perceive changes in environmental structure and execute adaptive pose fusion, improving the pose estimation accuracy of LiDAR-visual-inertial odometry in environments with changing structures.

Introduction

Autonomous navigation of unmanned vehicles such as Unmanned Ground Vehicles (UGVs) and Unmanned Aerial Vehicles (UAVs) in unknown environments has always been a major challenge in robotics. 1 Localization is the foundation of autonomous navigation, and the accuracy of a vehicle’s pose determines how well its assigned tasks are completed. 2 Because stable and accurate satellite positioning signals are difficult to obtain in most unknown environments, sensor-fusion odometry based on LiDAR, vision, and the Inertial Measurement Unit (IMU) has gained the attention of researchers in recent years. Utilizing the pose estimations from the measurement models of different sensors, such as LiDAR point cloud registration, 3 visual bundle adjustment, 4 and IMU pre-integration, 5 LiDAR-visual-inertial odometry can provide stable relative pose transformations for unmanned vehicles under limited satellite positioning signals. In addition, since all the sensors of LiDAR-visual-inertial odometry are installed on the vehicle’s body, the system can be deployed rapidly for real-time pose estimation in unknown environments.

Although LiDAR-visual-inertial odometry combines the advantages of LiDAR odometry, visual odometry, and inertial information for pose estimation in various scenarios, it also introduces noise from multiple sensors’ measurements, resulting in significant uncertainty in the output fused pose. 6 Therefore, how to eliminate sensor measurement noise to minimize the uncertainty of the fused pose has always been the primary consideration for pose fusion in LiDAR-visual-inertial odometry. Existing pose fusion methods are mostly based on the Kalman filter 7 –10 and factor graph optimization. 11 –13 R2LIVE 10 is a LiDAR-visual-inertial odometry built upon the Kalman filter. It models the poses estimated from visual measurements 14 and LiDAR measurements 15 as normal distributions with different uncertainties, and covariance matrices are used to represent these uncertainties. The fusion of poses can thus be seen as the fusion of multiple normal distributions, and the optimization goal is to maximize the posterior probability of the final fused pose. LVI-SAM 13 is a typical factor-graph-based LiDAR-visual-inertial odometry. The poses from visual-inertial odometry 14 and LiDAR-inertial odometry 16 are abstracted as items in the factor graph, represented by nodes or edges with different weights: the nodes represent the fused pose at different instants, and the edges represent the pose constraints introduced into the odometry system by the sensors’ measurements. The factor graph is solved by nonlinear optimization to obtain a globally optimized estimate of the fused pose.
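The view of pose fusion as a fusion of normal distributions can be illustrated with a minimal sketch. It assumes two independent scalar Gaussian estimates of the same pose component (for example, one translation axis estimated from LiDAR and from vision). The function name `fuse_gaussians` and the numbers in the usage lines are illustrative assumptions and are not taken from R2LIVE.

```python
def fuse_gaussians(mean_a, var_a, mean_b, var_b):
    """Fuse two independent scalar Gaussian estimates of the same quantity.

    Each estimate is weighted by its inverse variance (its "information"),
    so the less uncertain estimate pulls the fused mean toward itself.
    """
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    info_a = 1.0 / var_a
    info_b = 1.0 / var_b
    fused_var = 1.0 / (info_a + info_b)
    fused_mean = fused_var * (info_a * mean_a + info_b * mean_b)
    return fused_mean, fused_var


# Hypothetical values: a LiDAR estimate with variance 1.0 and a noisier
# visual estimate with variance 3.0 of the same translation component.
mean, var = fuse_gaussians(0.0, 1.0, 4.0, 3.0)
print(mean, var)
```

The fused variance is never larger than the smaller input variance, which is why the choice of the covariance values directly controls how much each sensor contributes.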

Whether based on filtering or on graph optimization, existing pose fusion relies on a priori knowledge of the environmental structure to determine some parameters of the pose fusion model. Since these parameters usually remain unchanged while the odometry system runs, the performance of the pose fusion model is limited in environments with varying structures. The Kalman filter predicts and updates the vehicle’s state vector according to the covariance matrix of the pose uncertainty, and this covariance matrix is derived from fixed sensor noise items that are calibrated in advance. Factor graph optimization requires the weights of each node or edge, and these weights are also determined in advance according to engineering experience. However, in unknown and unstructured environments, the environmental information is difficult to perceive a priori, and due to odometry degeneration and other factors caused by changes in environmental characteristics, 17,18 the quality of the vehicle’s poses estimated from LiDAR point clouds and vision varies across environmental structures. 19 Therefore, the performance of a sensor-fusion odometry system is more sensitive to the mathematical modeling and parameter configuration of the pose fusion model in unstructured environments than in structured ones.

To solve the above problems, existing methods use various optimization strategies to correct the degraded poses in LiDAR or visual odometry, for example, by automatically adjusting 20 the sensor fusion mode of visual-inertial odometry according to the pose uncertainty 19 and by shielding the pose output of the degraded module of LiDAR-visual-inertial odometry through a degeneration perception strategy. 21 However, all these methods act only after the corresponding pose has been estimated, so they cannot perceive the environmental information or optimize the pose in advance.

To reduce the pose errors induced by unexpected noise and inaccurate noise propagation models of different sensors, smooth variable structure filter (SVSF)-based estimation methods 22 –24 are leveraged to guarantee the robustness of vehicles’ poses from various sources. Since SVSF ensures robustness by forcing the vehicle’s pose state to switch between an upper and a lower bound, the optimal accuracy of the estimated poses cannot be reached. Though SVSF can be used in conjunction with the Kalman filter 25 to improve accuracy, an inappropriate smoothing boundary may lead to a chattering effect. 26

Based on the above analysis, this article proposes ESP-APF, a novel adaptive optimization method of pose fusion based on environmental structure perception. ESP-APF quantitatively perceives the environmental structure and optimizes the pose fusion module of the LiDAR-visual-inertial odometry in advance, so as to enhance the accuracy and robustness of pose estimation in environments with changing structures. It includes the methods of environmental structure perception and the strategies of adaptive pose fusion. Figure 1 shows the schematic diagram of ESP-APF, including several key steps. The main contributions of this article are summarized as follows.

(1) Since existing pose fusion models in LiDAR-visual-inertial odometry cannot respond to changes in the vehicle’s pose quality induced by unexpected changes in environmental structure, the odometry system needs the capacity to perceive the structure of the environment in real time. In ESP-APF, a novel quantitative perception method of environmental structural complexity is proposed, which perceives the LiDAR point cloud/visual environmental structure by constructing and analyzing a specially designed LiDAR point cloud histogram and visual bag-of-words vector.

(2) The current mainstream pose fusion approaches rely on fixed noise propagation models or fixed pose weights to estimate the fused pose, which restricts the accuracy and robustness of the poses estimated by LiDAR-visual-inertial odometry in environments with changing structures. In ESP-APF, two adaptive pose fusion strategies for two mainstream pose fusion models (Kalman filter and factor graph optimization) are proposed based on the perceived environmental structural complexity. They adaptively fuse the poses estimated by LiDAR and vision online through the dynamic configuration of the noise items in the Kalman filter and the pose weights in the factor graph.

(3) ESP-APF is deployed on two state-of-the-art LiDAR-visual-inertial odometry systems with different pose fusion models, and experiments are conducted on open-source data sets and self-gathered data sets to validate its effectiveness. The experimental results verify that ESP-APF improves the accuracy of the vehicle’s poses estimated by LiDAR-visual-inertial odometry: compared with the original odometry without ESP-APF, the pose translational errors are reduced by around 15%–20% in the data sets with changing environmental structures.

Figure 1. The schematic of the proposed ESP-APF. ESP-APF: environmental structure perception-based adaptive pose fusion method.

Overview of the proposed method

The framework of the proposed ESP-APF is shown in Figure 2. The basic working process of ESP-APF and the organization of this article are introduced briefly in the following paragraphs.

Figure 2. The framework of the proposed ESP-APF. ESP-APF: environmental structure perception-based adaptive pose fusion method.

Firstly, the visual images and the LiDAR point clouds are used for the perception of environmental structure, and two quantitative perception methods are designed for vision and LiDAR point clouds in the third section. (1) For the visual environmental structure, a complexity calculation method based on the visual bag-of-words vector 27 is proposed. The basic visual bag-of-words vector is improved so that it can represent the complexity of the visual environmental structure. (2) For the LiDAR point cloud environmental structure, a complexity calculation method based on a 2D point cloud feature histogram 28,29 is proposed. A 2D histogram is constructed from the normal distributions of the local point cloud map to represent the environmental structure of the point cloud, and the corresponding complexity is calculated from this point cloud feature histogram.

Secondly, based on the quantitative perception of the two environmental structures, two adaptive pose fusion strategies are proposed in the fourth section to optimize the two mainstream pose fusion methods (Kalman filter and factor graph optimization). (1) For Kalman-filter-based pose fusion, the dynamic configuration of pose uncertainty is leveraged at the pose update stage of Kalman filter. (2) For graph-optimization-based pose fusion, the dynamic configuration of the weights of pose constraints is leveraged before the nonlinear optimization of factor graph is conducted.

Finally, the proposed ESP-APF is deployed on two different types of the state-of-the-art LiDAR-visual-inertial odometry systems (R2LIVE 10 and LVI-SAM 13 ), and the improvements of ESP-APF on the accuracy of the pose estimation of LiDAR-visual-inertial odometry are verified in the open-source data set and the self-gathered data set through a series of experiments in the fifth section.

Environmental structure perception

In this section, the method of environmental structure perception is introduced in detail, including the perception for visual environmental structure and LiDAR point cloud environmental structure.

Visual environmental structure perception

Construction of visual bag-of-words vector

Since visual bag-of-words can represent the type and quantity of visual features, it is often used to compare the similarity of two frames of images for place recognition. Here the traditional visual bag-of-words 27 is utilized to construct the vector used to perceive environmental structure with a series of improvements.
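The basic idea of representing an image by the type and quantity of its visual words, and of comparing two frames through these vectors, can be sketched as follows. This is a generic illustration and not the improved vector of ESP-APF: it assumes that every detected feature has already been assigned an integer word ID from a trained vocabulary, and it uses cosine similarity as one common choice of similarity score. The names `bow_vector` and `cosine_similarity` are hypothetical.

```python
import math
from collections import Counter


def bow_vector(word_ids, vocabulary_size):
    """Count how often each visual word occurs in one image."""
    for word_id in word_ids:
        if not 0 <= word_id < vocabulary_size:
            raise ValueError("word ID outside the vocabulary")
    counts = Counter(word_ids)
    return [counts.get(i, 0) for i in range(vocabulary_size)]


def cosine_similarity(vec_a, vec_b):
    """Similarity in [0, 1] for non-negative count vectors; 0 if one is empty."""
    dot = sum(a * b for a, b in zip(vec_a, vec_b))
    norm_a = math.sqrt(sum(a * a for a in vec_a))
    norm_b = math.sqrt(sum(b * b for b in vec_b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical word IDs for two frames and a 5-word vocabulary.
frame_1 = bow_vector([0, 2, 2, 3], vocabulary_size=5)
frame_2 = bow_vector([0, 2, 3, 3], vocabulary_size=5)
print(frame_1, frame_2, cosine_similarity(frame_1, frame_2))
```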

Firstly, the training images are used to construct the visual vocabulary, the detected visual features (ORB or SURF feature) are transformed into

Then, the current image

Visual environmental structural complexity calculation

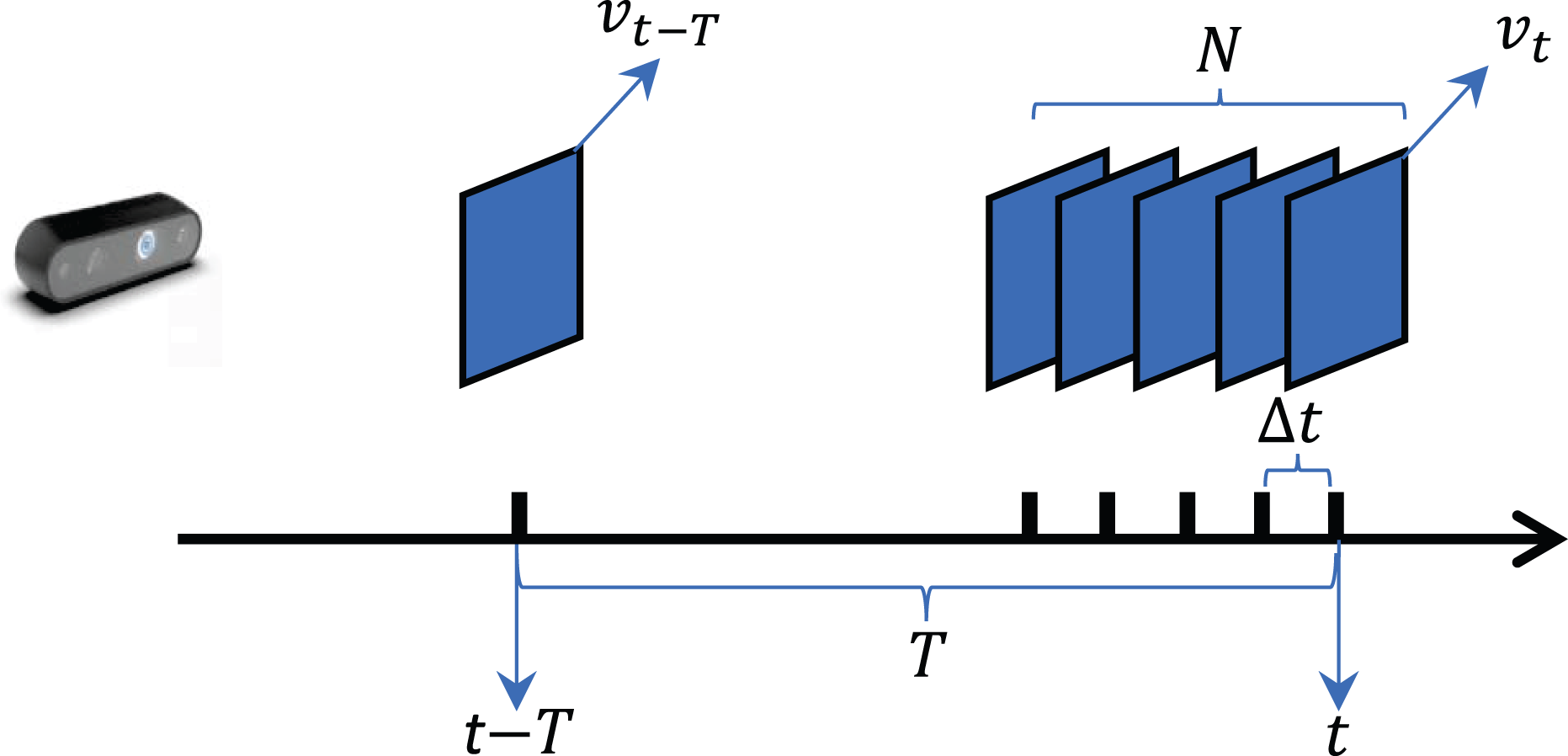

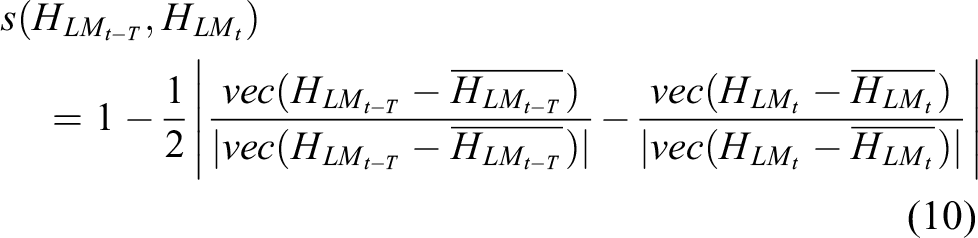

As shown in Figure 3, for two visual images received at a fixed time interval

Figure 3. The schematic of visual environmental structural complexity calculation.

For the sake of quantifying the changes in environmental structure accurately in the time period

Then the average similarity

If

LiDAR point cloud environmental structure perception

Construction of local point cloud map and 2D point cloud feature histogram

For

For each fitted normal distribution

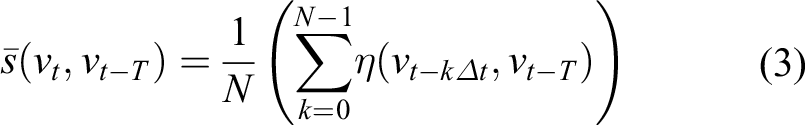

Then the rotational matrix

Given the plane normal vectors

Supposing the size of the histogram

LiDAR point cloud environmental structural complexity calculation

As shown in Figure 4, to quantify the changes in LiDAR point cloud structure accurately within the time period

Figure 4. The schematic of LiDAR point cloud environmental structural complexity calculation.

Like the visual environmental structure, if the similarity

Adaptive pose fusion strategy

Pose fusion for LiDAR-visual-inertial odometry can be divided into two main categories: Kalman filter and factor graph optimization. This section introduces the pose fusion strategy of ESP-APF for each type of pose fusion model.

Adaptive pose fusion strategy for Kalman filter

Figure 5 shows the dataflow of the pose fusion process of LiDAR-visual-inertial odometry based on the Kalman filter. High-frequency inertial information acquired from the IMU is used for pose propagation at the prediction stage of the Kalman filter, and pose fusion with low-frequency LiDAR/visual measurements is performed at the update stage. In the update stage, the Kalman gain is calculated to obtain the final fused pose. Because the Kalman gain depends on the covariance of the pose uncertainty derived from the LiDAR/visual measurement models of poses, it can be seen as a pose weight configuration based on pose uncertainty. Therefore, adaptive pose fusion in ESP-APF is achieved by adjusting the pose uncertainty derived from LiDAR/visual measurements.

Figure 5. The dataflow of LiDAR-visual-inertial pose fusion based on Kalman filter.

The noise items from LiDAR/visual measurements are added to the current pose uncertainty each time the pose update is performed, and they are always fixed values in existing Kalman filters. ESP-APF therefore configures these noise items dynamically, so that the pose uncertainty is adjusted indirectly according to the complexity of the environmental structure. We argue that the noise items in a well-structured environment should have smaller values than those in an unstructured environment.
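The effect of a complexity-dependent noise item on the update stage can be illustrated with a scalar sketch. It is not the ESP-APF configuration rule: the linear mapping from complexity to noise variance, the bounds `c_min`/`c_max` and `var_min`/`var_max`, and both function names are assumptions made only for illustration. The update itself is the standard scalar Kalman update.

```python
def adaptive_noise_variance(complexity, c_min, c_max, var_min, var_max):
    """Map structural complexity to a measurement noise variance.

    Assumed rule: complexity at or below c_min gives var_max, complexity
    at or above c_max gives var_min, with linear interpolation in between.
    """
    if c_max <= c_min:
        raise ValueError("c_max must be larger than c_min")
    ratio = (complexity - c_min) / (c_max - c_min)
    ratio = min(1.0, max(0.0, ratio))  # clamp to [0, 1]
    return var_max - ratio * (var_max - var_min)


def kalman_update(x_pred, p_pred, z, r):
    """Scalar Kalman update with predicted state x_pred, predicted variance
    p_pred, measurement z, and measurement noise variance r."""
    gain = p_pred / (p_pred + r)
    x_new = x_pred + gain * (z - x_pred)
    p_new = (1.0 - gain) * p_pred
    return x_new, p_new, gain


# Hypothetical numbers: the same measurement is trusted more when the
# perceived complexity is high (small noise variance) than when it is low.
r_structured = adaptive_noise_variance(0.9, 0.0, 1.0, 0.01, 0.09)
r_unstructured = adaptive_noise_variance(0.1, 0.0, 1.0, 0.01, 0.09)
print(kalman_update(0.0, 0.05, 1.0, r_structured))
print(kalman_update(0.0, 0.05, 1.0, r_unstructured))
```

A smaller noise variance yields a larger Kalman gain, so the measurement from the better-structured modality moves the fused pose more strongly.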

The noise from visual measurement model is from the 2D pixel positions

In the proposed adaptive pose fusion strategy of ESP-APF for Kalman filter, the noise covariances

The noise

The noise covariance is configured dynamically based on

Adaptive pose fusion strategy for factor graph optimization

Figure 6 shows the dataflow of the pose fusion process of LiDAR-visual-inertial odometry based on factor graph optimization. The poses estimated by IMU pre-integration, visual odometry, and LiDAR odometry are transformed into constraints represented by edges in the factor graph with different weights, and the optimized fused poses are given as nodes after the nonlinear optimization of the factor graph is solved. In existing factor graph solvers (such as GTSAM 31 and G2O 11 ), the weight matrix

Figure 6. The dataflow of LiDAR-visual-inertial pose fusion based on factor graph optimization.

In existing LiDAR-visual-inertial odometry with factor graph optimization, such as the study by Shan et al., 13 the weights of pose constraints from different sources are manually preconfigured based on engineering experience and remain static throughout the whole working process of the odometry system. For the purpose of adaptive pose fusion, we apply dynamic configuration of the weights of the constraints from LiDAR/visual odometry based on the complexity of environmental structure calculated in the third section. We argue that the weights of pose constraints in a well-structured environment should have larger values than the weights in an unstructured environment. The adaptive pose fusion strategy of ESP-APF for factor graph optimization is designed as follows.
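As a rough illustration of the principle only (not the ESP-APF weighting rule, whose equations are not reproduced in this text), the influence of constraint weights can be shown on a one-dimensional toy problem. The sketch assumes a single scalar pose and scalar weights, so the weighted least-squares problem has a closed-form solution, whereas a real factor graph is solved iteratively over many poses with weight matrices. The function name and numbers are hypothetical.

```python
def fuse_weighted_constraints(constraints):
    """Solve min_x sum_i w_i * (x - z_i)^2 for a scalar pose x.

    `constraints` is a list of (measurement, weight) pairs with
    non-negative weights; the minimizer is the weighted mean.
    """
    if any(weight < 0 for _, weight in constraints):
        raise ValueError("weights must be non-negative")
    total_weight = sum(weight for _, weight in constraints)
    if total_weight == 0:
        raise ValueError("at least one weight must be positive")
    return sum(weight * z for z, weight in constraints) / total_weight


# Hypothetical constraints: LiDAR odometry suggests 1.0, visual odometry 2.0.
# Raising the LiDAR weight pulls the fused pose toward the LiDAR constraint.
print(fuse_weighted_constraints([(1.0, 3.0), (2.0, 1.0)]))
print(fuse_weighted_constraints([(1.0, 1.0), (2.0, 3.0)]))
```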

Experiment

Data set and experimental platform

To verify the proposed adaptive pose fusion method (ESP-APF) for LiDAR-visual-inertial odometry, the data set should include the raw LiDAR point cloud, visual image, and high-frequency inertial information synchronized by time stamp, and the covered environment of the data set should include enough environmental structural changes to reflect the effectiveness of this method. Therefore, the self-gathered data set containing scene-switching with different environmental structures is used for the following experiments; at the same time, the open-source KITTI data set 32 and the data set used in LVI-SAM 13 are also selected to expand the experimental scenarios. The basic information of these data sets is shown in Table 1.

Table 1. Basic information of the data sets used in the experiments.

The proposed ESP-APF is lightweight and does not require a high-performance computing unit, so low-cost computing platforms can be used for the experiments. The hardware computing platform is an i5-9300 CPU with 16 GB of RAM, running the Ubuntu 18.04 operating system and Robot Operating System (ROS) Melodic.

Effectiveness evaluation of the environmental structure perception of ESP-APF

To verify that the environmental structure perception method proposed in the third section (the LiDAR point cloud environmental structural complexity calculated from the point cloud feature histogram, and the visual environmental structural complexity calculated from the visual bag-of-words vector) can accurately quantify the complexity of the surrounding environmental structure, experiments are carried out on the self-gathered data set. The self-gathered data set covers the vehicle’s transition from an unstructured environment (field) to a structured environment (sidewalk), during which the environmental structure changes greatly. Figure 7 shows the satellite map of the self-gathered data set and the vehicle’s trajectory measured by RTK-GPS, and the specific details of the different environmental structures (LiDAR point cloud information and visual information) are also given to show the change of environmental structure.

Figure 7. LiDAR point cloud and visual details of the self-gathered data set. The upper right pictures show the field scenario, which is an unstructured environment, while the lower right pictures show the sidewalk scenario, which is a structured environment.

Figure 8 visualizes the point cloud feature histogram and visual bag-of-words vector constructed in the corresponding scenes in Figure 7, and the related construction parameters are listed in Table 2. In the structured region, the features of the surrounding environment are diverse, and the normal directions of the planes fitted from the normal distributions in the local point cloud map are also diverse, which is conducive to pose estimation by point cloud registration algorithms such as point-to-plane Iterative Closest Point (ICP). In the unstructured region, by contrast, the features of the surrounding environment are not diverse enough, and the normal directions of the planes are concentrated in a limited range, which may lead to the degradation of pose estimation. The histogram thus gives an intuitive representation of the structural characteristics of the surrounding environment. Similarly, the visual bag-of-words vector constructed in the structured environment contains more types and larger numbers of visual words than that in the unstructured region, which indicates a more diverse and complex visual environmental structure and is also conducive to pose estimation based on visual feature matching and bundle adjustment.

Figure 8. Point cloud feature histogram and visual bag-of-words vector constructed in the corresponding scenarios of Figure 7.

Table 2. Parameter configuration for environmental structural complexity perception.

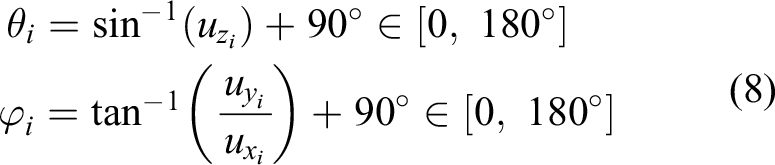

Figure 9 shows how the point cloud/visual environmental structural complexity changes along the vehicle’s trajectory, and the specific values of the complexity calculated in different scenarios are also included. The complexity of the point cloud environmental structure (calculated in equation (11)) is derived from the standard deviation of the point cloud feature histogram. It represents the average difference of the quantities of planes with different normal directions in a local point cloud map, reflecting the diversity of the planes’ normal directions. The complexity of the visual environmental structure is calculated in equation (4) using the standard deviation of the visual bag-of-words vector. It represents the average difference of the quantities of different types of visual words contained in a visual image, reflecting the diversity of visual features. It can be seen from Figure 9 that in the field, with open planar areas and few geometric features, the complexities of both the point cloud environmental structure and the visual environmental structure are lower than those calculated on the sidewalk with rich features, indicating that the environmental structure perception method of ESP-APF can provide continuous indicators that abstract the complexity of the environmental structure. This method is not only in line with the intuitive human understanding of environmental structure but also indirectly indicates the quality of the poses estimated by LiDAR/visual odometry, providing an a priori basis for the pose fusion of LiDAR-visual-inertial odometry.
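The two ingredients named here, a 2D histogram over plane normal directions and a standard deviation taken over its bins, can be sketched as follows. This is a simplified stand-in and not equations (4) or (11): it assumes that normals are binned by azimuth and elevation angle, treats a normal and its opposite as different directions, and takes the plain population standard deviation of raw bin counts without any normalization the original equations may apply. The function names and bin counts are hypothetical.

```python
import math
from statistics import pstdev


def normal_histogram(normals, azimuth_bins, elevation_bins):
    """Bin 3D normal vectors into an elevation-by-azimuth count grid."""
    hist = [[0] * azimuth_bins for _ in range(elevation_bins)]
    for nx, ny, nz in normals:
        length = math.sqrt(nx * nx + ny * ny + nz * nz)
        if length == 0.0:
            continue  # skip degenerate normals
        azimuth = math.atan2(ny, nx)  # in [-pi, pi]
        elevation = math.asin(max(-1.0, min(1.0, nz / length)))  # [-pi/2, pi/2]
        col = int((azimuth + math.pi) / (2.0 * math.pi) * azimuth_bins)
        row = int((elevation + math.pi / 2.0) / math.pi * elevation_bins)
        # The upper edge of each angular range falls into the last bin.
        hist[min(row, elevation_bins - 1)][min(col, azimuth_bins - 1)] += 1
    return hist


def histogram_std(hist):
    """Population standard deviation of all bin counts."""
    return pstdev([count for row in hist for count in row])


# Hypothetical plane normals from a local map.
normals = [(1.0, 1.0, 1.0), (-1.0, -1.0, -1.0), (1.0, 1.0, 0.9)]
hist = normal_histogram(normals, azimuth_bins=4, elevation_bins=2)
print(hist, histogram_std(hist))
```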

Figure 9. The environmental structural complexity calculated in the self-gathered data set. The color of the trajectory in (a) and (b) represents the complexity of the environmental structure, and the four points A, B, C, and D mark the types of scenarios in the data set: the trajectory between A and B is in the field (unstructured area, duration 172 s, distance 164 m), while the trajectory between C and D is on the sidewalk (structured area, duration 167 s, distance 186 m). (c) and (d) show the distributions of the environmental structural complexity values calculated in different scenarios as boxplots.

Effectiveness evaluation of the adaptive pose fusion strategy of ESP-APF

Based on the complexity of the point cloud/visual environmental structure derived from the point cloud feature histogram and visual bag-of-words vector, ESP-APF proposes different pose fusion strategies for the mainstream pose fusion frameworks, namely Kalman filter and factor graph optimization. To verify the effectiveness of the pose fusion strategy proposed in “Adaptive pose fusion strategy for Kalman filter” section in improving the pose estimation performance of LiDAR-visual-inertial odometry based on Kalman filter, the strategy is applied to the R2LIVE 10 system for experimental evaluation. To validate the pose fusion strategy proposed in “Adaptive pose fusion strategy for factor graph optimization” section in improving the pose estimation performance of LiDAR-visual-inertial odometry based on factor graph optimization, the strategy is applied to the LVI-SAM 13 system for experimental evaluation. To isolate the effect of the proposed method, loop-closure modules are disabled in the following experiments.

Ablation study of ESP-APF for the pose fusion strategy for Kalman filter

In this experiment, the pipeline of pose fusion in R2LIVE is utilized, and the pose estimations from LiDAR measurement (point-to-plane error), visual measurements (visual feature reprojection error), and inertial information (IMU pose propagation) are fused through the iterative error state Kalman filter framework. The pose fusion strategy of ESP-APF for Kalman filter introduced in “Adaptive pose fusion strategy for Kalman filter” section is applied as an extra functional module of R2LIVE. The noise items are dynamically configured according to the complexity of the environmental structure to adjust the pose uncertainties adaptively, so as to realize the adaptive pose fusion. Ablation study of adaptive pose fusion strategy is performed on the data set mentioned in “Data set and experimental platform” section. We select some representative data sets and divide these data sets into two parts. One part contains obvious changes of environmental structures, including KITTI-00, KITTI-09, LVI-SAM-Jackal and self-gathered data set. KITTI-00 and KITTI-09 contain scene-switching from urban roads to rural roads, LVI-SAM-Jackal contains a variety of unstructured scenes (forests, fields, lawns, and asphalt roads), and the self-gathered data set includes the changes from unstructured environment (field) to structured environment (sidewalk). The other part contains a relatively simple environmental structure, including KITTI-01, KITTI-10, and LVI-SAM-Handheld, where KITTI-01 is a highway scene, KITTI-10 is a residential road, and LVI-SAM-Handheld is a field. The detailed configuration of related parameters of the adaptive pose fusion strategy is shown in Table 3.

Table 3. Parameter configuration of adaptive pose fusion strategy for Kalman filter.

The average sequence translational errors and rotational errors of the vehicles’ poses obtained in the ablation study are shown in Table 4, these indicators are calculated using the KITTI odometry evaluation metric,

26

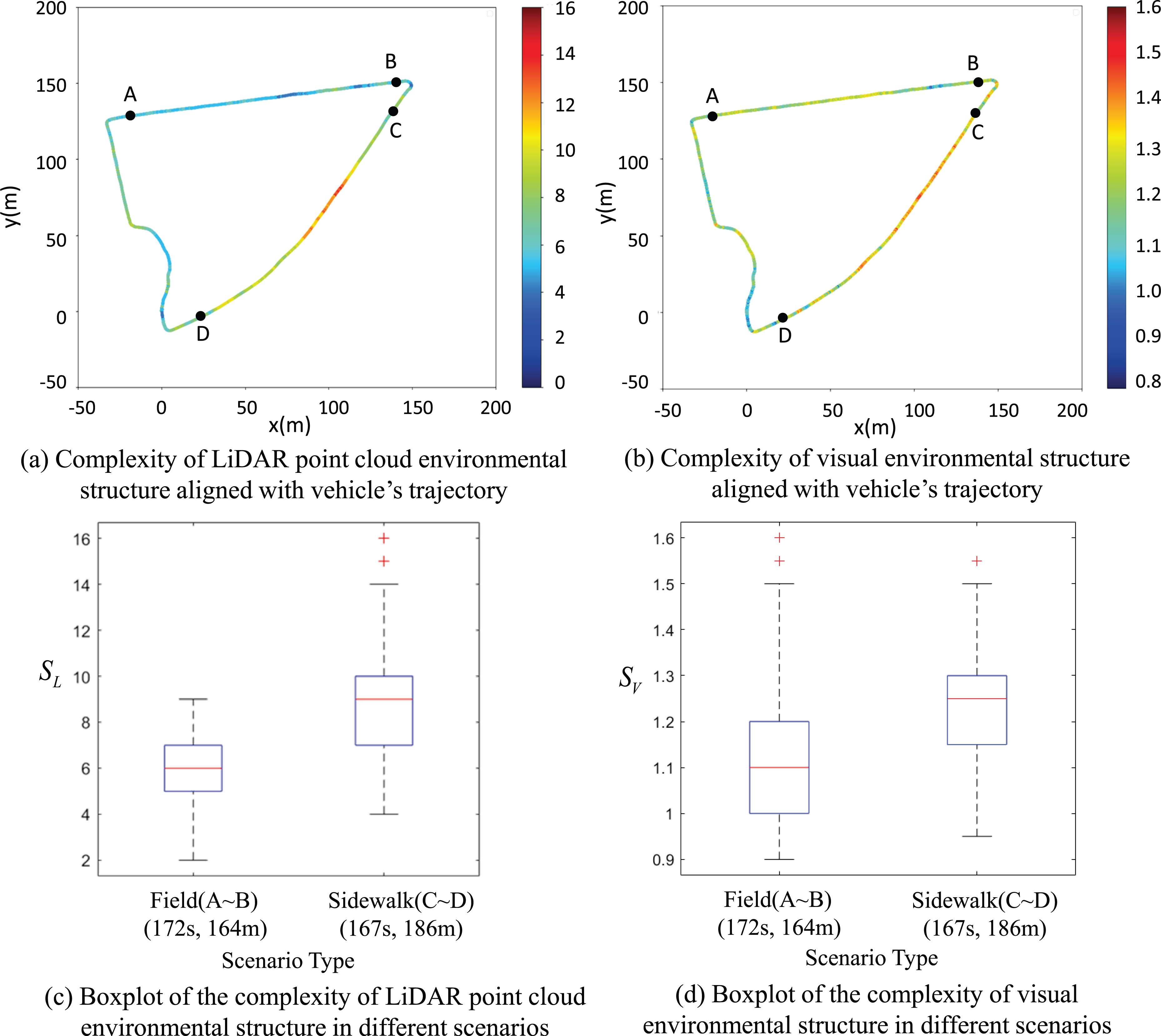

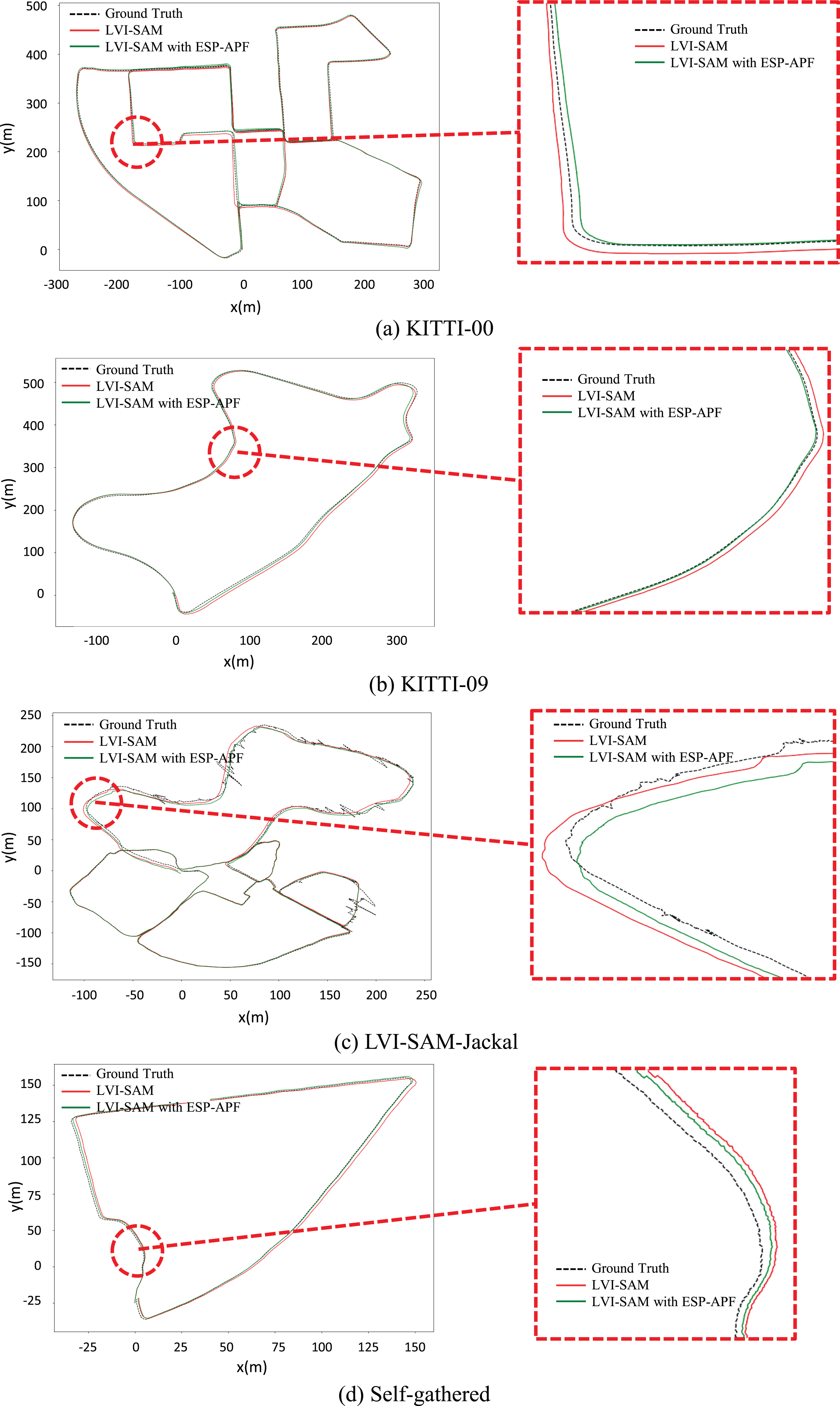

and they are represented as the Root Mean Square Error of pose errors compared with GPS-RTK ground truth. It can be found from the experimental results that in the data sets with relatively simple environmental structures, the improvement of pose accuracy brought by the proposed adaptive pose fusion strategy of ESP-APF is relatively limited. Taking the average translational errors as an example, in KITTI-01, KITTI-10, and LVI-SAM-Handheld, compared with the original R2LIVE, the average translational errors achieved by R2LIVE with ESP-APF are reduced by 7.50%, 6.85%, and 8.30%, respectively. However, in the areas with large changes in environmental structure, the proposed adaptive pose fusion strategy of ESP-APF can significantly improve the pose estimation accuracy of R2LIVE. In KITTI-00, KITTI-09, LVI-SAM-Jackal, and self-gathered data sets, the average translation errors are reduced by 16.05%, 14.89%, 16.31%, and 15.10%, respectively, and the pose trajectories estimated in these data sets are shown in Figure 10. The pose trajectory after the optimization of ESP-APF is more consistently aligned with the GPS-RTK ground truth. The average rotational error also shows greater improvement in the data sets with changing environmental structures than that in the data sets with simple environmental structures. To better demonstrate the overall improvement of ESP-APF on Kalman-filter-based pose fusion model, Figure 11(a) and (b) shows the changing process of the adaptive parameters (the items of the noise covariance matrices

Table 4. Accuracy evaluation of poses estimated by different methods.

ESP-APF: environmental structure perception-based adaptive pose fusion method.

Figure 10. The pose trajectories estimated in the ablation study on the adaptive pose fusion strategy for Kalman filter in parts of the data sets. The enlarged pictures on the right show the details of the trajectories in the regions within the red circles of the trajectories on the left. The fluctuation of the ground truth of LVI-SAM-Jackal is due to GPS signal blockage.

Figure 11. The changing process of the adaptive parameters in R2LIVE with ESP-APF and the APEs of the poses estimated by R2LIVE and R2LIVE with ESP-APF in the self-gathered data set. The four time instants A, B, C, and D in (a), (b), and (e) indicate the types of scenarios, the same as in Figure 9 (the interval A∼B is in the unstructured area, and the interval C∼D is in the structured area). The continuous APEs are mapped onto the pose trajectory using the color bar in (c) and (d), the APEs over time are shown in (e), and the unit of APE is m. APE: absolute pose error; ESP-APF: environmental structure perception-based adaptive pose fusion.

Ablation study of ESP-APF for the pose fusion strategy for factor graph optimization

The pipeline of LVI-SAM’s pose fusion framework is leveraged to validate the effectiveness of the pose fusion strategy of ESP-APF for factor graph optimization, and the pose constraints from LiDAR odometry, visual odometry, and IMU pre-integration are fused into the factor graph for optimal pose estimation. The pose fusion strategy introduced in “Adaptive pose fusion strategy for factor graph optimization” section is applied as an extra functional module of LVI-SAM, and the weights of LiDAR/visual pose constraints are dynamically configured to realize adaptive pose fusion. The same data sets as in the last section are selected for the ablation study of the pose fusion strategy for factor graph optimization. The detailed configuration of the related parameters of the adaptive pose fusion strategy is shown in Table 5.

Table 5. Parameter configuration of adaptive pose fusion strategy for factor graph optimization.

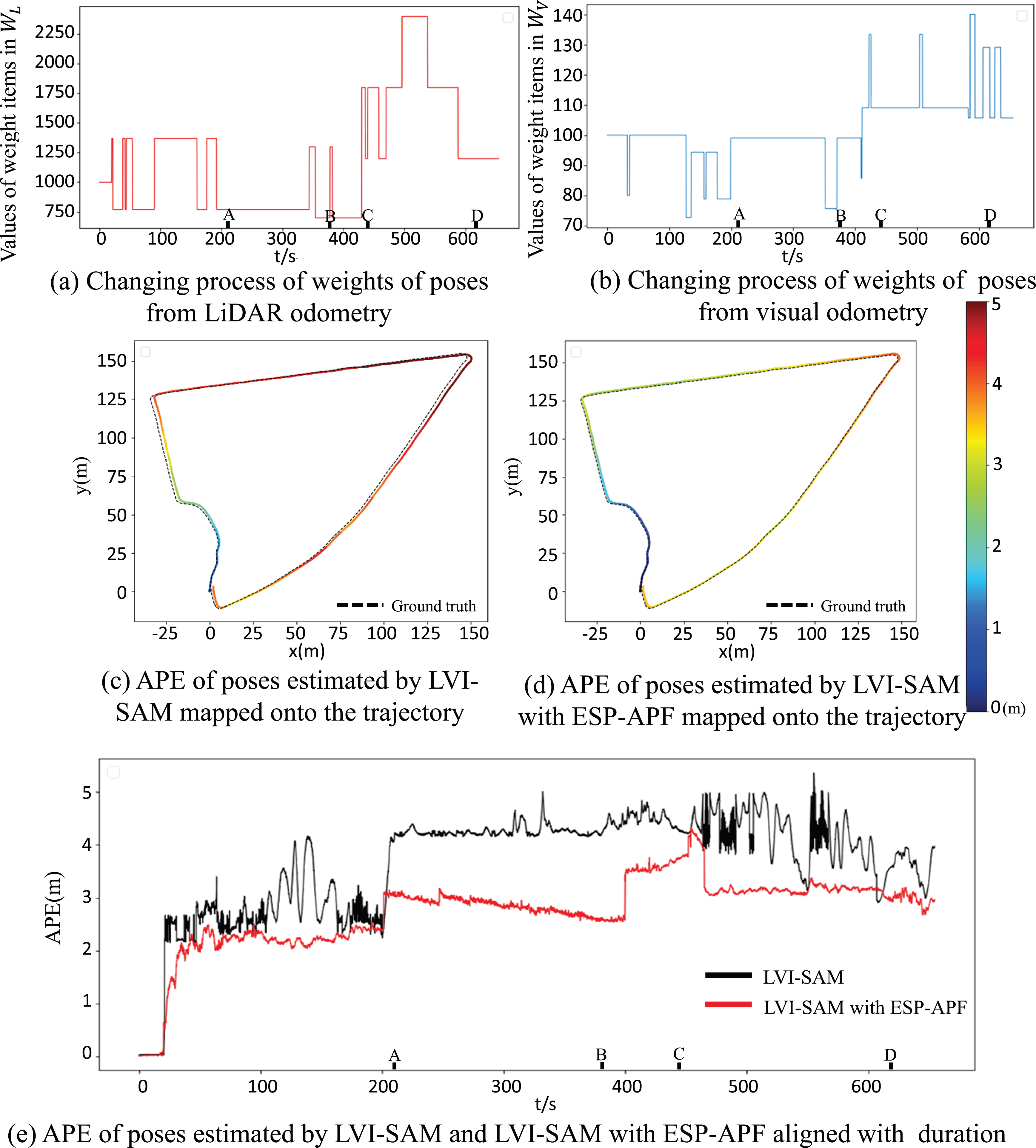

The related pose translational and rotational errors are shown in Table 4. In the data sets with simple environmental structures, compared with the original LVI-SAM, the adaptive pose fusion strategy using dynamic weights of pose constraints reduces the average translational errors of poses by 4.93%, 9.09%, and 8.39% in KITTI-01, KITTI-10, and LVI-SAM-Handheld, respectively. In the data sets with changing environmental structures (KITTI-00, KITTI-09, LVI-SAM-Jackal, and the self-gathered data set), the average translational errors are reduced by 15.38%, 16.67%, 18.77%, and 18.41%, respectively, which is a more significant reduction. The pose trajectories of the ablation study in parts of the data sets are shown in Figure 12, and the trajectories with ESP-APF are better aligned with the GPS-RTK ground truth. Figure 13(a) and (b) shows the changing process of the adaptive parameters (the items of weight matrices

Figure 12. The pose trajectories estimated in the ablation study on the adaptive pose fusion strategy for factor graph optimization in parts of the data sets. The enlarged pictures on the right show the details of the trajectories in the regions within the red circles of the trajectories on the left. The fluctuation of the ground truth of LVI-SAM-Jackal is due to GPS signal blockage.

The changing process of the adaptive parameters in LVI-SAM with ESP-APF and the APEs of the poses estimated by LVI-SAM and LVI-SAM with ESP-APF in the self-gathered data set. The four time instants A, B, C, and D in (a), (b), and (e) mark the same scenario types as in Figure 9 (the interval A∼B lies in an unstructured area, and the interval C∼D lies in a structured area). The continuous APEs are mapped onto the pose trajectory using the color bar in (c) and (d), the APEs over time are shown in (e), and the unit of APE is m. APE: absolute pose errors; ESP-APF: environmental structure perception-based adaptive pose fusion.

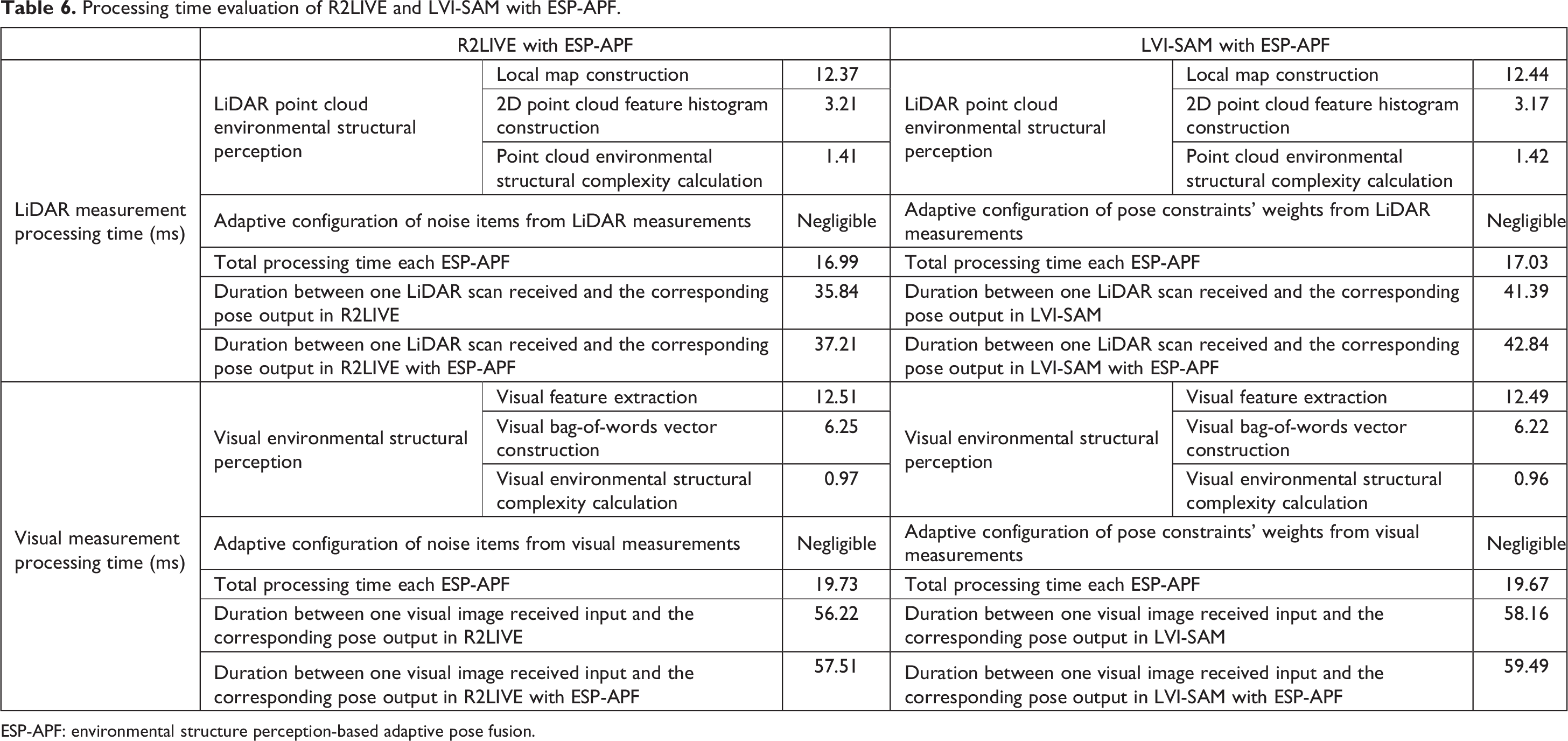
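The translational APEs and the percentage reductions quoted against the original LVI-SAM can be computed along the following lines. This is a minimal sketch, assuming that the estimated and ground-truth trajectories are already time-associated and expressed in the same frame. The function names are hypothetical, and the toy trajectories are illustrative values, not data from the experiments.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def translational_ape(estimated: Sequence[Point], ground_truth: Sequence[Point]) -> List[float]:
    """Per-pose translational error: Euclidean distance to the ground truth (m)."""
    if len(estimated) != len(ground_truth):
        raise ValueError("trajectories must have the same number of poses")
    return [math.dist(p, q) for p, q in zip(estimated, ground_truth)]


def mean(values: Sequence[float]) -> float:
    """Arithmetic mean of a non-empty sequence."""
    return sum(values) / len(values)


def relative_reduction_percent(baseline_error: float, improved_error: float) -> float:
    """Error reduction relative to the baseline, in percent."""
    return 100.0 * (baseline_error - improved_error) / baseline_error


# Toy trajectories (hypothetical): the baseline is 2 m off, the adaptive variant 1 m off.
ground_truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
baseline = [(0.0, 0.0, 2.0), (1.0, 0.0, 2.0)]
adaptive = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]

baseline_ape = mean(translational_ape(baseline, ground_truth))
adaptive_ape = mean(translational_ape(adaptive, ground_truth))
reduction = relative_reduction_percent(baseline_ape, adaptive_ape)
```

The per-pose errors returned by `translational_ape` are the kind of values that can be color-mapped onto a trajectory or plotted over time, while the mean is the quantity compared between the baseline and the adaptive variant.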

Processing time evaluation

Since ESP-APF works as an extra functional module (see Figure 2) in LiDAR-visual-inertial odometry, its computing cost needs to be as low as possible to meet the real-time requirement of pose estimation. In this experiment, the time cost of the key phases of ESP-APF is evaluated and analyzed to validate that it is a lightweight module assisting pose fusion for LiDAR-visual-inertial odometry.

Using the self-gathered data set and the experimental platform described in the “Data set and experimental platform” section, the average processing time of the key modules of ESP-APF and of the two selected odometry systems (R2LIVE and LVI-SAM) is listed in Table 6. The average total time cost of ESP-APF is around 17 ms for the dataflow from LiDAR measurements and around 20 ms for the visual measurements. Both are clearly shorter than the time that R2LIVE and LVI-SAM need to estimate poses from LiDAR scans (around 36 ms for R2LIVE and around 41 ms for LVI-SAM) and from visual images (around 56 ms for R2LIVE and around 58 ms for LVI-SAM), which validates that ESP-APF is a lightweight module assisting the original odometry systems. In addition, since ESP-APF works as an extra module of LiDAR-visual-inertial odometry, it runs in two separate threads that process the LiDAR and visual dataflows in parallel at low frequency, so only a small part of its time cost is added to the pose estimation of the original odometry system. For R2LIVE with ESP-APF, the average total processing time for LiDAR and visual measurements is 1.37 ms and 1.29 ms longer than that of the original R2LIVE, respectively. For LVI-SAM with ESP-APF, the average extra time consumption for LiDAR and visual measurements compared with the original LVI-SAM is 1.45 ms and 1.33 ms, respectively. Besides, to compare the total time consumption of the proposed method with other state-of-the-art odometry systems, the average duration between a sensor’s measurement and the corresponding pose output, regardless of the sensor’s type, is shown in Table 7, with VINS, 14 FAST-LIO, 15 and LIO-SAM 16 as the comparative odometry systems. Even though the processing time of R2LIVE with ESP-APF and LVI-SAM with ESP-APF is slightly longer than that of the original R2LIVE and LVI-SAM, it remains comparable to that of the other odometry systems. According to this analysis, the extra time consumption introduced by ESP-APF is small enough to maintain the real-time performance of the odometry systems.
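The two-thread arrangement described above can be sketched as follows. This is a structural illustration only, under stated assumptions: the names `perception_worker` and `run_parallel_perception` are hypothetical, the scoring functions stand in for the environmental structure perception steps, and in CPython the threads show the decoupled structure rather than true CPU parallelism.

```python
import queue
import threading
from typing import Any, Callable, List, Sequence, Tuple

STOP = object()  # unique sentinel telling a worker to finish


def perception_worker(
    name: str,
    inbox: "queue.Queue[Any]",
    outbox: "queue.Queue[Tuple[str, Any]]",
    score_fn: Callable[[Any], Any],
) -> None:
    """Consume measurements from one sensor and publish a score for each."""
    while True:
        measurement = inbox.get()
        if measurement is STOP:
            break
        outbox.put((name, score_fn(measurement)))


def run_parallel_perception(
    lidar_scans: Sequence[Any],
    images: Sequence[Any],
    lidar_score: Callable[[Any], Any],
    visual_score: Callable[[Any], Any],
) -> List[Tuple[str, Any]]:
    """Process LiDAR and visual dataflows in two independent threads."""
    lidar_q: "queue.Queue[Any]" = queue.Queue()
    visual_q: "queue.Queue[Any]" = queue.Queue()
    outbox: "queue.Queue[Tuple[str, Any]]" = queue.Queue()
    workers = [
        threading.Thread(target=perception_worker, args=("lidar", lidar_q, outbox, lidar_score)),
        threading.Thread(target=perception_worker, args=("visual", visual_q, outbox, visual_score)),
    ]
    for worker in workers:
        worker.start()
    for scan in lidar_scans:
        lidar_q.put(scan)
    for image in images:
        visual_q.put(image)
    lidar_q.put(STOP)
    visual_q.put(STOP)
    for worker in workers:
        worker.join()
    results = []
    while not outbox.empty():  # safe here: both workers have finished
        results.append(outbox.get())
    return results


# Placeholder scores (hypothetical): point count for scans, pixel sum for images.
results = run_parallel_perception([[1, 2, 3], [4]], [[4, 5]], len, sum)
```

Because each sensor has its own queue and worker, a slow step on one dataflow does not block the other, and the odometry thread only needs to read the most recent scores.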

Processing time evaluation of R2LIVE and LVI-SAM with ESP-APF.

ESP-APF: environmental structure perception-based adaptive pose fusion.

Average processing time of sensor’s measurement in different state-of-the-art odometry systems.

ESP-APF: environmental structure perception-based adaptive pose fusion.

Conclusion and future work

Based on the quantitative analysis of the LiDAR point cloud/visual environmental structure and the characteristics of two mainstream pose fusion models, this article proposes ESP-APF for mainstream LiDAR-visual-inertial odometry. The experimental analysis shows that the environmental structure perception method of ESP-APF can accurately quantify the visual structure and point cloud structure of the surrounding environment, providing a priori environmental structure perception that the subsequent pose fusion uses to predict the quality of the poses estimated from LiDAR and vision. With the adaptive pose fusion strategies of ESP-APF, the accuracy of pose estimation is improved in environments with changing structures, giving the odometry the ability to pre-optimize pose fusion adaptively according to the environmental structure. In addition, at the system level of LiDAR-visual-inertial odometry, the proposed ESP-APF is general and decoupled from the host system, which implies that it can be easily migrated and adapted to various LiDAR-visual-inertial odometry systems as a separate functional module, providing accurate and robust pose estimations for mobile vehicles working in unknown and challenging environments with changing structures.

To further enhance the pose estimation performance of sensor-fusion odometry systems and expand their application scenarios, future work may consider adding new types of sensors, such as radar and thermal-infrared cameras, and developing more sophisticated pose fusion techniques. Since filter-based pose fusion models such as the Kalman filter, particle filter, and SVSF each have their own advantages in improving the accuracy, robustness, and stability of the estimated vehicle pose, combinations of these models also need to be developed to fuse the multisource heterogeneous data from different sensors and balance the overall performance of sensor-fusion odometry. Moreover, our future work will also address adaptive techniques in sensor-fusion odometry that extract semantic environmental information using machine-learning methods for complicated and changing environments.

Footnotes

Authors’ contributions

Conceptualization: ZZ; methodology: ZZ; software programming: ZZ and CL; data curation: CL and WY; validation: ZZ, CL, and WY; writing – original draft: ZZ, CL, and WY; writing – review and editing: CL and JS; supervision: JS and DZ.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research and/or authorship of this article: This research was supported by the National Key R&D Program of China, No. 2022YFC3320800 and Zhejiang Provincial Key R&D Plan of China, No. 2021C01040.