Abstract

A key capability lacking in most humanoid grasping robots is the ability to accurately predict what they are holding. This article presents new functions for a robotic hand: vision-based shape analysis and tactile sensing–based object identification. The vision-based shape analysis uses contour approximation–based algorithms to distinguish between soft and hard objects by comparing their contours. Tactile sensors attached to the fingertips of the robotic hand are used to predict the hardness or softness of the grasped object by analyzing the pressure data. An algorithm to control the robotic hand and acquire pressure and vision data is presented. With these added functions, the hard robotic hand is well suited to the control of active prostheses and to other engineering applications where delicate handling of objects is needed. The results show that hard objects have a steeper pressure slope than soft objects. Analysis of the pressure values from the touch surface was used to predict the hardness or softness of the object. The contours of soft and hard objects grasped under the same conditions showed different patterns, which can be used to differentiate between soft and hard objects.

Introduction

A dexterous robotic hand is a necessity for humanoid robots that must carry out a variety of functions. A robotic hand is generally expected to look and function like a human hand. However, designing and controlling it to mimic human hand functions is a challenging task. Human hands are the most articulated parts of the human body, and grasping is a common but important gesture that humans use when interacting with surrounding objects.1 Research in grasping robots has slowly moved from handling objects in a structured environment to manipulating objects in an unstructured environment. A number of publications have shown that soft robotic grippers can hold objects and identify them.2–4

Since the first full-scale humanoid intelligent robot, WABOT-1, was completed in 1972,5 researchers have been trying to develop robotic hands that resemble the human hand in shape and dexterity.

The main requirements for grasping robots are to detect the object and to manipulate it safely by deciding where to grasp it. This is a formidable challenge for the robotic system because of complex actuation, sensing, and control.6 Because this requires a sophisticated sensor network, control, and complex deep learning models, existing grasping robots have limited provision for determining the surface texture and recognizing the physical properties of the grasped object.7,8

Object hardness is generally measured by comparing the contact pressure and indentation depth of the contact object. However, different object geometries give rise to different contact forces, correlating the two is complicated, and measuring the object shape with sufficient precision is difficult for most touch sensors.9 Vision feedback has proven to be an important source of information for grasping and control.10,11 Although vision provides important information, it is not always sufficient, as its accuracy may be limited by imperfect calibration. Tactile and pressure sensors can be used as additional sensors to improve performance during grasp execution.12 However, when used alone, these sensors require precise contact point and slip conditions.

Bandyopadhyaya et al. 13 implemented a grasping system with a pressure sensor and a machine learning approach that classified vegetables as ripe, moderate, or green. N Jamali 14 constructed an artificial finger incorporating strain gauges and polyvinylidene fluoride (PVDF) and used naïve Bayes, decision trees, and naïve Bayes trees to recognize relatively coarse textures. Luo et al. 15 presented an extensive review of tactile recognition of object properties and of available tactile sensing technologies. Manickavasagan et al. 16 used computer vision to extract textural features from monochrome images to classify dates by hardness. Gao et al. 17 reported that combining visual and haptic data leads to superior performance in determining haptic properties.

Robots with an object identification function are crucial in systems that deal with uncertain and dynamic task environments, for example, grasping and manipulating unknown objects, locomotion over rough terrain, and physical contact with living cells and human bodies. Their applications range from helping patients with haptic sensitivity impairments to collecting rock samples for geotechnical investigations that help determine tunnel excavation methods.

Despite the high volume of published work on grasping robots, very few papers have achieved integrated sensing, actuation, control, and computer vision.18–21

In this study, we present a robotic hand, actuated by servo motors, that is capable of grasping an object and determining its hardness or softness using computer vision and haptic sensors integrated into the hand. The proposed method is simple, yet faster, cheaper, and more reliable than other methods of identifying the haptic properties of an object. It can find wider application in unstructured environments such as medical surgery, service robots, or product handling.

Model description

Robotic hand design

The InMoov robot 22 is the first life-size robot that can be produced by three-dimensional (3D) printing, and its design is open to the public. Most of the 3D-printed parts of the robotic hand were adapted from InMoov. Some parts were redesigned for better accuracy and control, namely the pulley, the spring-loaded tendon, the slot in the fingertip for inserting tactile sensors, and the alignment of the thumb, as shown in Figure 1.

A robotic hand with actuation mechanisms and MEMS pressure sensor.

Tactile sensor

Tactile sensors are important for measuring a robot's physical interaction with its environment, such as contact and forces that are difficult to detect by vision alone. The tactile sensors used in robots should be highly sensitive, low in cost, and easy to integrate into standardized manufacturing processes.23,24

Microelectromechanical system (MEMS) barometers are used as pressure sensors in cell phones and Global Positioning System (GPS) devices to measure altitude because they are produced in large volumes and are low in cost despite their high performance. For this study, a TakkTile strip was used, which is built on MPL115A2 sensors from Freescale Semiconductor Inc. (Austin, TX, USA). The chip consists of a MEMS diaphragm with a Wheatstone bridge, an instrumentation amplifier, a temperature sensor, a multiplexer, an analog-to-digital converter, and an I2C bus. One feature of the TakkTile strip is that it requires only five connections to a microcontroller, which makes it easy to connect multiple sensors at the same time. The sensor wiring is detailed in Figure 2.

TakkTile sensor wiring diagram.

The TakkStrip consists of five sensors placed end-to-end at a spacing of 8 mm, along with a “traffic cop” microchip that allows the entire strip to be accessed over I2C without address conflicts.13 Two such sensors were placed at the tips of the thumb and index finger, as both fingers play a significant role in grasping objects.
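As a rough illustration of how a compensated pressure value is obtained from an MPL115A2, the sketch below applies the compensation formula given in the MPL115A2 datasheet to raw 10-bit pressure and temperature counts. The function name and the example coefficient values are illustrative assumptions, not values from this study, and whether the study used exactly this form is also an assumption. Because the sensors are cast in rubber, the result is better read as a relative contact signal than as an absolute air pressure.

```python
def compensate_pressure(p_adc, t_adc, a0, b1, b2, c12):
    """Return (p_comp, pressure_kpa) from raw 10-bit counts.

    p_adc, t_adc    : raw pressure and temperature ADC counts (0..1023)
    a0, b1, b2, c12 : per-chip calibration coefficients read from the sensor
    """
    # Datasheet compensation: Pcomp = a0 + (b1 + c12 * Tadc) * Padc + b2 * Tadc
    p_comp = a0 + (b1 + c12 * t_adc) * p_adc + b2 * t_adc
    # Pcomp spans 0..1023 counts over the 50..115 kPa range of the device
    pressure_kpa = p_comp * (115.0 - 50.0) / 1023.0 + 50.0
    return p_comp, pressure_kpa


# Illustrative inputs only (not measurements from this study)
p_comp, pressure_kpa = compensate_pressure(
    p_adc=410, t_adc=507, a0=2009.75, b1=-2.37585, b2=-0.92047, c12=0.000790
)
print(round(p_comp, 2), round(pressure_kpa, 2))
```

The coefficients are stored per chip, so each of the five sensors on a strip would need its own set.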

The compensated pressure output (

where

Control

Arduino is an open-source electronics platform based on easy-to-use hardware and software. The Arduino Uno is a microcontroller board based on the ATmega328P and developed by Arduino.cc. The board has 14 digital pins and 6 analog pins, and it can be easily programmed with the Arduino IDE. Each finger of the robotic hand was actuated by an MG996R servo motor controlled by an Arduino microcontroller.

The servo motors have a native swing of 180 degrees. The tendon wires were arranged in such a way that the tip of the finger was connected to the motor pulley through the phalangeal bones.

TakkTile sensor characterization

The sensitivity of the cast sensors depends on the type, stiffness, and thickness of the rubber used and on the location of the load over the sensor.13 The rubber thickness of the cast sensor was measured as 2.41 mm, which differs from the value given in the official documentation (3.5 mm). The experimental setup used to determine the relation between the force and the sensor output value is shown in Figure 3.

TakkTile sensor sensitivity characterization setup.

A controlled external load was applied directly on top of the cast rubber covering the sensor, which was soldered to the “traffic cop” microchip. The load was applied incrementally, and the corresponding stabilized sensor value was recorded. The experiment was continued until the sensor output saturated, that is, until it no longer changed when the load was increased. An Arduino Uno was used as the I2C interface between the sensor and the computer. The sensor output showed a linear relation with the load, as shown in Figure 4.

Sensor output values versus applied surface load for TakkTile sensor with 2.41 mm rubber thickness.

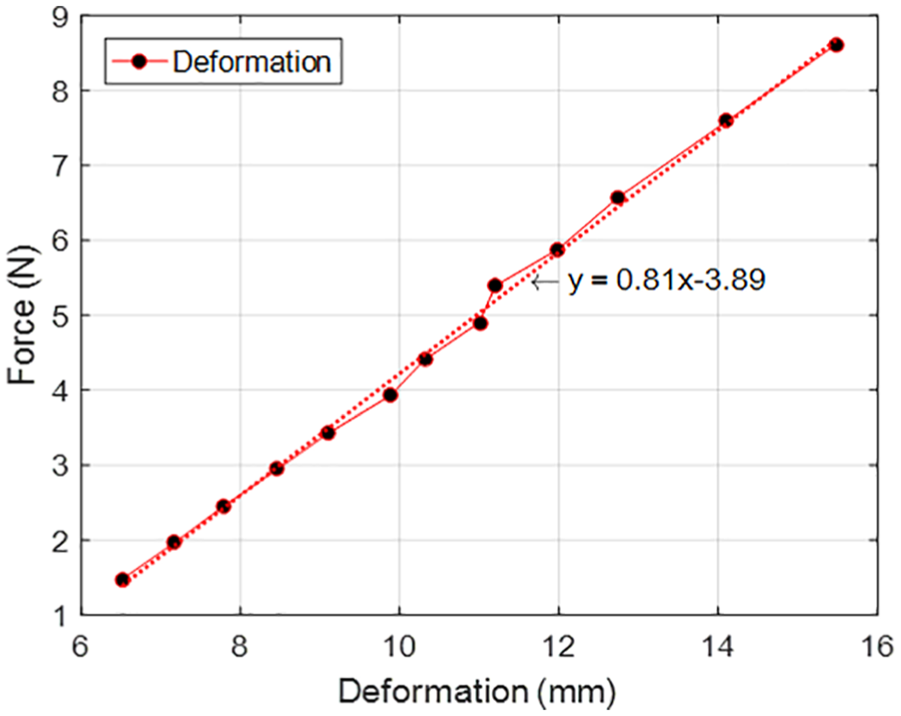
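The characterization above amounts to fitting a straight line to the sensor output over the range before saturation. The following is a minimal sketch of that idea, assuming NumPy is available. The function name, the saturation tolerance, and the load and output values are hypothetical and are not data from this study.

```python
import numpy as np


def fit_sensitivity(load, output, saturation_tol=1.0):
    """Fit output = gain * load + offset on the samples before saturation.

    A sample counts as saturated once the output rises by less than
    saturation_tol between consecutive load steps (an assumed criterion).
    Returns (gain, offset, n_used).
    """
    load = np.asarray(load, dtype=float)
    output = np.asarray(output, dtype=float)
    steps = np.diff(output)
    flat = np.where(steps < saturation_tol)[0]
    # Keep everything up to and including the last sample before the plateau
    n_used = int(flat[0]) + 1 if flat.size else load.size
    gain, offset = np.polyfit(load[:n_used], output[:n_used], 1)
    return gain, offset, n_used


# Hypothetical readings: load in grams, output in raw sensor counts
load_g = [0, 100, 200, 300, 400, 500, 600]
counts = [10.0, 60.0, 110.0, 160.0, 210.0, 210.2, 210.3]
gain, offset, n_used = fit_sensitivity(load_g, counts)
print(gain, offset, n_used)
```

With a fitted gain and offset, a raw reading can be mapped back to an approximate load as `(reading - offset) / gain`, but only within the linear range.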

Spring constant determination

KastKing Mega (80LB) eight-strand braid was used for the tendons. To keep the tendon wires in tension, each tendon was spring loaded, as shown in Figure 1, using stainless steel springs with an outer diameter of 3.1 mm and a length of 6.7 mm. However, the disadvantage of a spring-loaded tendon is that it can only bear loads up to the limit set by the stiffness of the spring.

Thus, an experiment was conducted to determine the stiffness of the spring using the standard experimental procedure. The experimental result is shown in Figure 5 and stiffness (

Spring constant experimental data.
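A spring constant is commonly estimated from force and extension measurements using Hooke's law, F = kx. The sketch below shows a least-squares estimate of k for a line constrained to pass through the origin. That the study followed this exact procedure is an assumption, and the function name and the data values are hypothetical.

```python
def spring_constant(extension_mm, force_n):
    """Least-squares estimate of k in F = k * x (line through the origin).

    extension_mm : spring extensions in millimetres
    force_n      : corresponding applied forces in newtons
    Returns k in N/mm.
    """
    numerator = sum(x * f for x, f in zip(extension_mm, force_n))
    denominator = sum(x * x for x in extension_mm)
    if denominator == 0:
        raise ValueError("at least one non-zero extension is required")
    return numerator / denominator


# Hypothetical measurements, not data from this study
extension_mm = [0.5, 1.0, 1.5, 2.0]
force_n = [0.25, 0.5, 0.75, 1.0]
k = spring_constant(extension_mm, force_n)
print(k)  # N/mm
```

Multiplying k by the largest extension the spring allows gives a rough upper bound on the force the spring-loaded tendon can transmit.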

System integration and testing

A test frame was designed to fix all hardware in the correct position and to conduct the experiment with ease. The robotic hand was fixed to the test frame using a 3D-printed bracket. It was then rotated 90 degrees so that it was in full supination, with the thumb upward and the palm open. Three cameras were positioned in the

Experimental setup for robotic hand grasp, manipulation, and computer vision accessories.

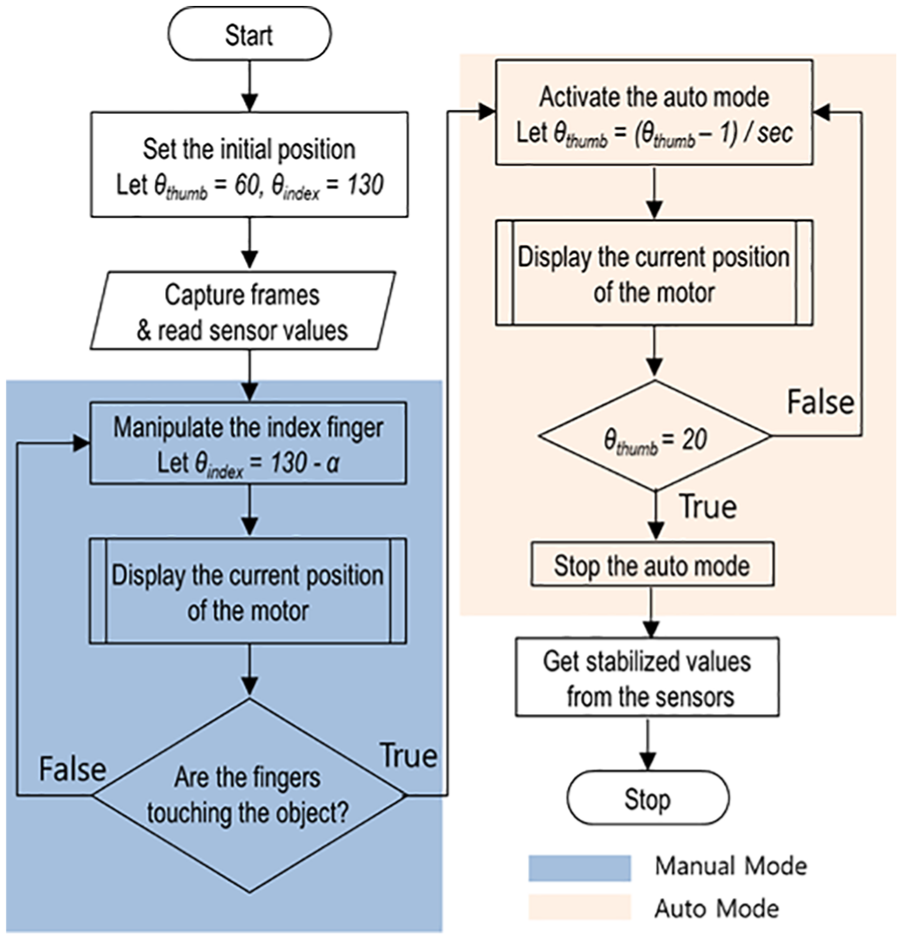

Two dedicated Arduino Uno boards were used, one for sensor data acquisition and one for controlling the robotic fingers. An Arduino program (sketch) was developed to control the hand actuation in two modes: manual mode and auto-mode. In manual mode, various motor speeds could be selected with keys on the keyboard, while in auto-mode the servo motor attached to the thumb was actuated by 1 degree per second, starting at 130 degrees, until it reached 20 degrees, at which point the actuation stopped automatically. Although the servo motors have a native swing of 180 degrees, the total control range was limited to 130 degrees in consideration of the tensile strength of the tendon wires. Manual mode was primarily used to position the robotic fingers precisely, so that they just touched the object at two points without transmitting any contact force, as shown in Figure 7.

The fingers of the robotic hand just touching the object. The sensor is tangent to the object surface.

In this state, the orientation of the two fingers (index and thumb) is denoted by the angle (

An integrated algorithm for controlling finger motion.
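The auto-mode described above is a simple ramp: the thumb servo starts at 130 degrees and moves 1 degree per second until it reaches 20 degrees. The following sketch generates that setpoint schedule. It is written in Python for illustration only (on the hardware this logic runs in the Arduino sketch), and the function and parameter names are hypothetical.

```python
def auto_mode_schedule(start_deg=130, stop_deg=20, step_deg=1, period_s=1.0):
    """Yield (time_s, angle_deg) setpoints for the thumb servo.

    The angle decreases by step_deg every period_s seconds and the
    schedule ends once stop_deg has been commanded.
    """
    angle = start_deg
    time_s = 0.0
    while angle >= stop_deg:
        yield time_s, angle
        angle -= step_deg
        time_s += period_s


schedule = list(auto_mode_schedule())
first_setpoint = schedule[0]
last_setpoint = schedule[-1]
print(first_setpoint, last_setpoint, len(schedule))
```

Each setpoint would be sent to the servo at its scheduled time while the second board logs the tactile sensor values, so that the pressure readings can be aligned with the commanded angle.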

Results and discussion

For our experiments, the grasping force was approximated from the tactile sensor values. A hard plastic ball and a soft sponge ball were used as the hard and soft objects, respectively. The hard plastic ball weighed 3 g and had an outer diameter of 55.5 mm, while the soft sponge ball weighed 4.5 g and had an outer diameter of 62.5 mm.

The tendon wire played a vital role in the grasping force. Dennerlein 25 studied external fingertip forces and internal tendon forces, and the results show that the ratio of tendon force to fingertip force reached as high as 7. This clearly shows that the tendon force is higher than the fingertip force. In addition, the tendon wires of a robotic hand without a tension-adjusting mechanism loosen over time. For these reasons, the grasping force was compared for tendon wires with and without tension springs.

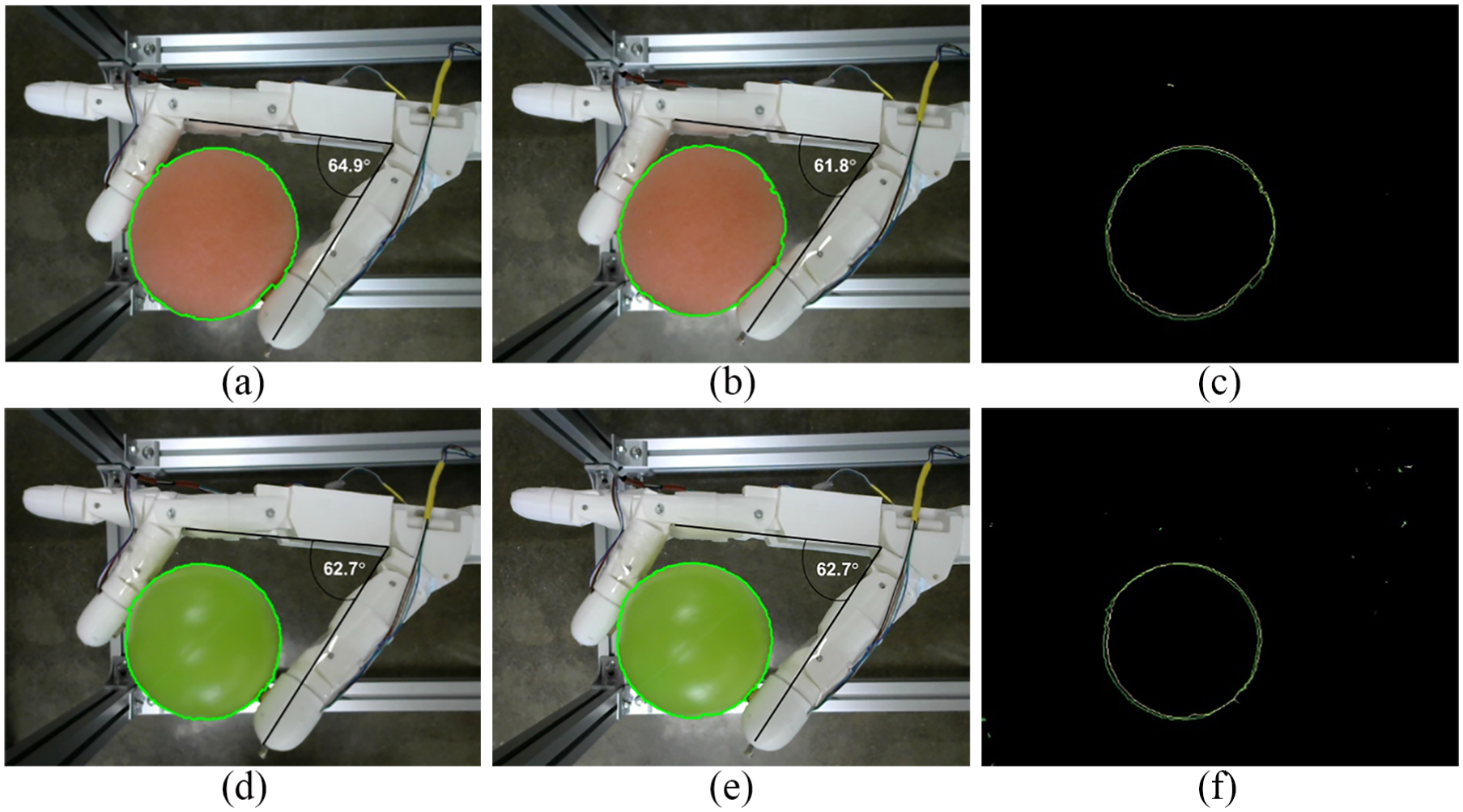

In our study, we took advantage of the vision data and the tactile sensor time series data, which provided detailed information about the properties of the object being grasped. OpenCV (Open Source Computer Vision Library), a free and popular computer vision library, was used in a Python programming environment to determine the softness or hardness of the object. The camera attached to the test frame (

Experimental results with tendon wires only; the first row corresponds to the soft sponge ball and the second row to the hard plastic ball: (a, d) just held by the fingers, (b, e) fully grasped position, and (c, f) contour plot comparison for each case.

Experimental results with tension spring–loaded tendon wires; the first row corresponds to the soft sponge ball and the second row to the hard plastic ball: (a, d) just held by the fingers, (b, e) fully grasped position, and (c, f) contour plot comparison for each case.

In Figures 9 and 10, the pink ball in the first row is the soft sponge ball, while the green ball in the second row is the hard plastic ball. Figure 9 shows the experimental results for the soft and hard balls grasped by the robotic hand with tendon wires only. The contours at the touch position and the grab position are plotted in green and yellow, respectively, in the third column.

The contour plot comparison clearly shows that the contour of the soft ball changes significantly, while the hard ball shows no visible change in its contour profile. In addition, the angle between the fingers (index and thumb) changes significantly for the soft ball, whereas for the hard ball the angle change is negligible.
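One simple way to quantify the contour comparison described above is the relative change in enclosed area between the touch contour and the grasp contour. The sketch below uses that metric as an illustrative stand-in; it is not necessarily the comparison used in this study. It assumes NumPy and assumes the contours have already been extracted as ordered lists of (x, y) points, for example with OpenCV's `findContours`. All function names, the synthetic contours, and the 5% threshold are hypothetical.

```python
import numpy as np


def contour_area(points):
    """Area enclosed by an ordered, closed contour (shoelace formula)."""
    pts = np.asarray(points, dtype=float)
    x, y = pts[:, 0], pts[:, 1]
    return 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))


def relative_area_change(touch_contour, grasp_contour):
    """Fractional change in enclosed area from touch to full grasp."""
    area_touch = contour_area(touch_contour)
    return abs(area_touch - contour_area(grasp_contour)) / area_touch


def ellipse_contour(rx, ry, n=200):
    """Synthetic contour used only to exercise the functions above."""
    t = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
    return np.column_stack((rx * np.cos(t), ry * np.sin(t)))


# Synthetic stand-ins: the soft ball is squeezed, the hard ball is not
soft_change = relative_area_change(ellipse_contour(30, 30), ellipse_contour(30, 24))
hard_change = relative_area_change(ellipse_contour(30, 30), ellipse_contour(30, 30))

THRESHOLD = 0.05  # hypothetical decision threshold
print("soft" if soft_change > THRESHOLD else "hard", round(soft_change, 3))
print("soft" if hard_change > THRESHOLD else "hard", round(hard_change, 3))
```

An area-based metric ignores where along the contour the deformation occurs, so a point-wise comparison of the two contours may be preferable when the deformation is localized near the fingertips.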

Figure 10 shows the experimental result for the robotic hand actuated via tendon wires with tension springs; the patterns are similar. However, the change in the contour is smaller in this case. This is because the tension force transmitted via the tendon wire is limited by the stiffness of the spring used, which reduces the grasping force. The result matches well with the finding 21 of in vivo experiments during human open carpal tunnel release surgery.

In addition to the OpenCV contour plot, the sensor output values were processed to determine the pressure applied to the fingertips. Although tactile sensors were attached to both the index finger and the thumb, only the sensor output values from the thumb were used, for two reasons: the limited degrees of freedom of the index finger, and the sensor sensitivity, which was mainly affected by the contact point between the sensor and the object.

Figures 11 and 12 show the comparison plots of the sensor output for the hard ball and the soft ball, respectively, with and without a tension spring attached to the tendon wire. The plotted sensor values are taken between the beginning and the end of the auto-mode, during which the grasp is controlled (1 degree/s). For the first few seconds, the sensor values decrease until the hand securely holds the ball. The values then increase steadily until they reach a maximum, after which they stabilize. As shown in Figures 11 and 12, it takes about a minute to determine softness or hardness. However, the time to grasp and ungrasp may differ depending on the size and shape of the object being grasped and the initial position of the fingers.

Comparison of sensor output of the hardball when the tendon wire is loaded with/without tension spring.

Comparison of sensor output of the softball when the tendon wire is loaded with/without tension spring.

In both cases, the sensor value for the hard ball increased rapidly. The slope of the curve was approximated by drawing a straight line connecting the minimum and maximum points of the experimental results recorded after the fingers had securely grasped the object.
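The slope approximation described above can be sketched as follows: the minimum of the series is taken as the point where the hand securely holds the ball, the maximum is searched for after that point, and the slope is the rise over the elapsed time between the two. The function name and the sample series are hypothetical and are not data from this study.

```python
def min_max_slope(times_s, values):
    """Slope of the straight line joining the minimum and the later maximum.

    The minimum is taken as the start of the secure grasp; the maximum is
    searched only from that sample onward.
    """
    i_min = min(range(len(values)), key=values.__getitem__)
    i_max = max(range(i_min, len(values)), key=values.__getitem__)
    if i_max == i_min:
        raise ValueError("no rise after the minimum; slope is undefined")
    return (values[i_max] - values[i_min]) / (times_s[i_max] - times_s[i_min])


# Hypothetical sensor series sampled once per second
times_s = [0, 1, 2, 3, 4, 5, 6]
hard_values = [100, 95, 90, 130, 170, 210, 210]
soft_values = [100, 96, 92, 100, 108, 116, 124]

hard_slope = min_max_slope(times_s, hard_values)
soft_slope = min_max_slope(times_s, soft_values)
print(hard_slope, soft_slope)
```

A steeper slope indicates a harder object. Any threshold separating the two classes would have to be calibrated against the sensor and tendon configuration in use.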

Conclusion and future work

A robotic hand capable of grasping an object and predicting its softness or hardness was developed using tactile sensors and computer vision. Depending on the softness or hardness of the object, the robotic hand can be easily programmed to handle delicate and soft objects without damaging them. However, this article is limited to holding static spherical objects, because the tactile sensors were placed only on the tips of the fingers. The study can be generalized by placing a matrix of sensors at various locations on the fingers and palm, which would allow a more general estimate of the grasping force and thus a more accurate prediction of the softness or hardness of the object.

A more compliant robotic hand, which can grasp objects of various shapes in static and dynamic conditions, can be the subject of future research. Similarly, simultaneous processing of vision and pressure sensor data by a feedback control system could give greater control and accuracy in applying the proper contact and grasping forces and so avoid damage, for example, when handling ripe fruit. This can also be the subject of future research.

In addition, a lighter robotic hand with improved tendon forces for grasping is possible using artificial muscles instead of bulky servo motors. Further improvement can be achieved by integrating deep learning with computer vision, which could predict the shape and texture of the object in addition to its softness or hardness. Another dimension of improvement is the grasping frequency. Since the current robotic hand takes about 90 s to grasp and an equivalent time to ungrasp, a higher grasping/ungrasping frequency would help it find application in production and packaging jobs.

Footnotes

Handling Editor: Guanglei Wu

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education (2017R1D1A1B03034437).