Abstract

What is the future of tactile robotics? To help define that future, this article provides a historical perspective on tactile sensing in robotics, drawing on the wealth of knowledge and expert opinion in nearly 150 reviews spanning almost half a century. This history is characterized by a succession of generations: 1965–79 (origins), 1980–94 (foundations and growth), 1995–2009 (tactile winter), and 2010–2024 (expansion and diversification). Recent expansion has led to the emergence of diverse themes: e-skins, tactile robotic hands, vision-based tactile sensing, soft/biomimetic touch, and the tactile Internet. In the next generation, from 2025 onward, tactile robotics could mature to widespread commercial use, with applications in human-like dexterity, understanding human intelligence, and telepresence impacting all of robotics and AI. By linking past expert insights to present themes, this article highlights recurring challenges in tactile robotics, showing how the field has evolved, why progress has often stalled, and which opportunities are most likely to define its future.

Introduction

“Study the past if you would define the future.” (Quote by Confucius, Kong Fuzi ∼500 BCE)

Ever since the modern concept of a “robot” entered popular culture in the early 20th century, anticipation has steadily grown that industrial technology will culminate in machines with human-like abilities. Human dexterity, and arguably human intelligence, originated from the evolution of hands adapted to manipulating tools (Wilson, 1999). For that manipulation, the senses of touch and proprioception are essential to provide the force and geometric feedback needed for fine sensorimotor control of the fingers. Therefore, robots will also need these senses to attain human-like dexterity, motivating tactile robotics: the study, development, and use of tactile sensing for robotics.

For nearly half a century, there have been many excellent surveys of progress in tactile robotics, beginning with “Touch-Sensing Technology: A Review” (Harmon, 1980). Subsequently, new reviews were published on average every year or two until 2010, with progress over that period captured in the landmark article “Tactile Sensing – From Humans to Humanoids” (Dahiya et al., 2010). Reviewing has since proliferated, with 15 published in 2024 alone. Yet, even though some major progress has been reported, tactile robotics still does not seem to have transformed robot dexterity. Indeed, one authoritative review began with a wry comment: “Tactile sensing always seems to be a few years away from widespread utility” (Cutkosky et al., 2008). Therefore, it seems a good time to ask again: When will tactile sensing transform robotics?

How do you ask about the future? You can study the past. When studying tactile robotics, the past views of experts are valuable because many of their original motivations still hold. However, people tend to devalue older knowledge, especially when the technology appears new, such as robotics, and when the pace of change seems rapid. The aim of this article is to give voice to those experts, so that today’s researchers may better define the future.

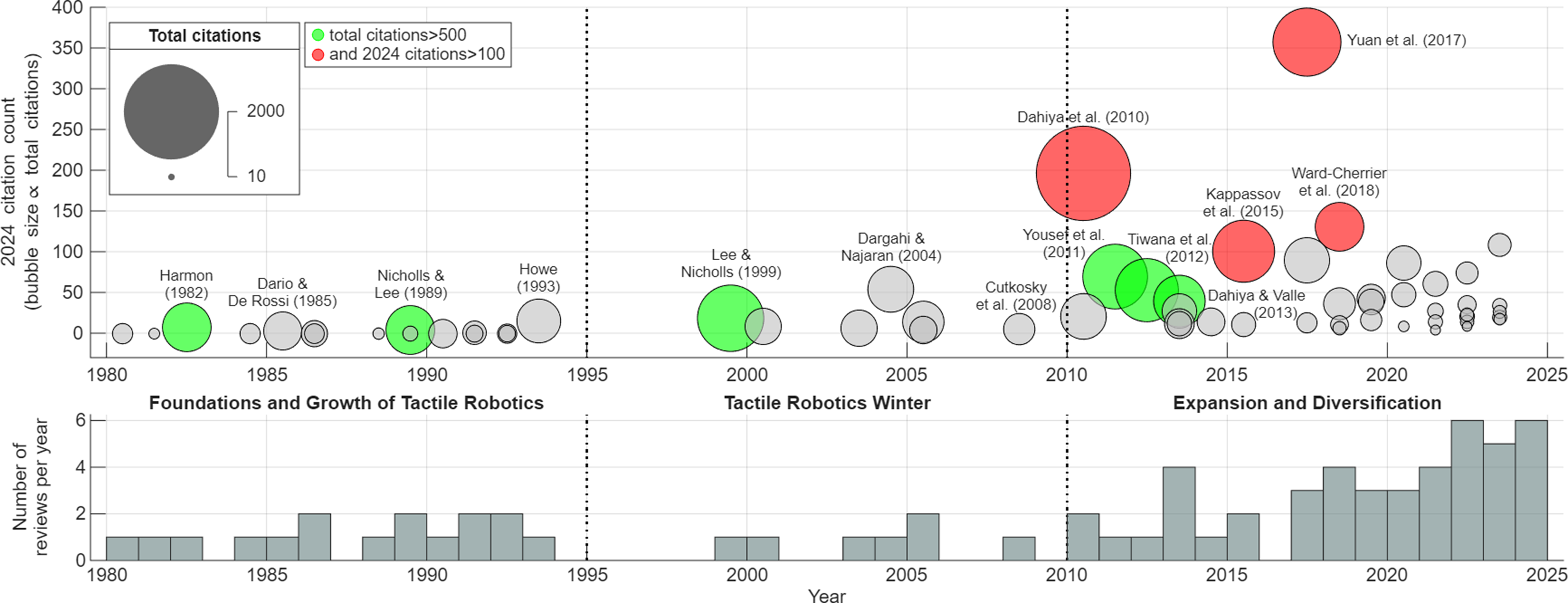

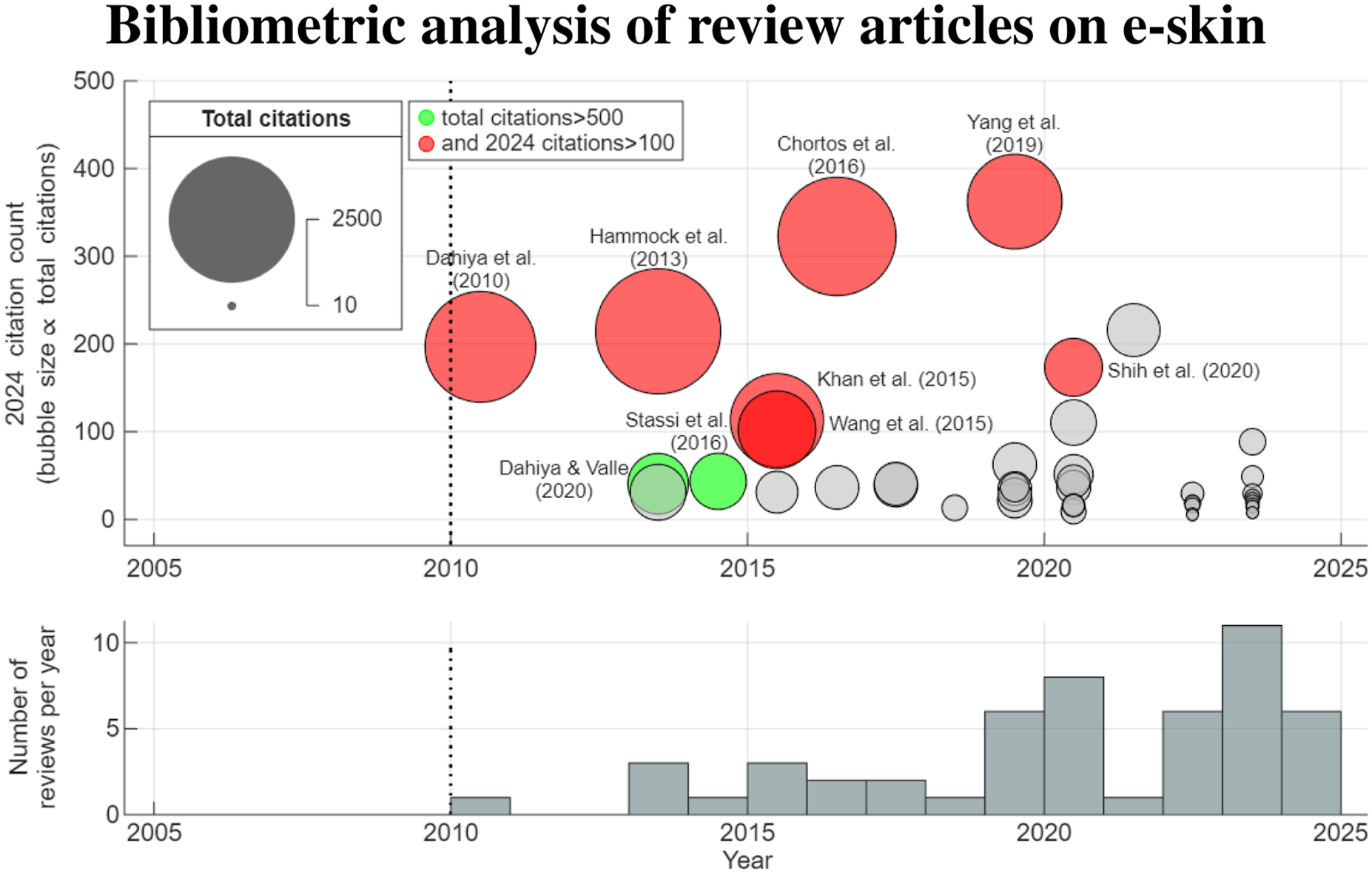

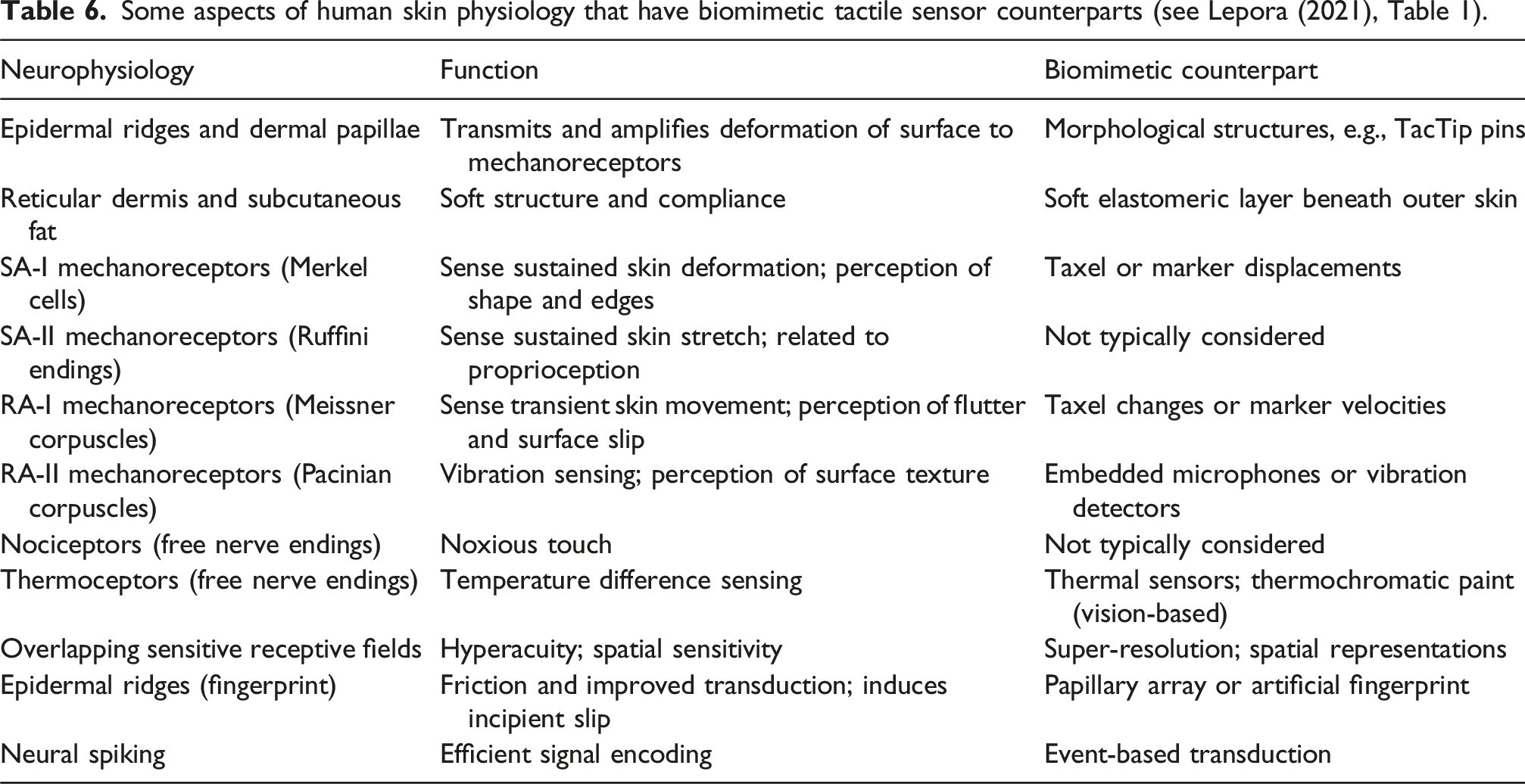

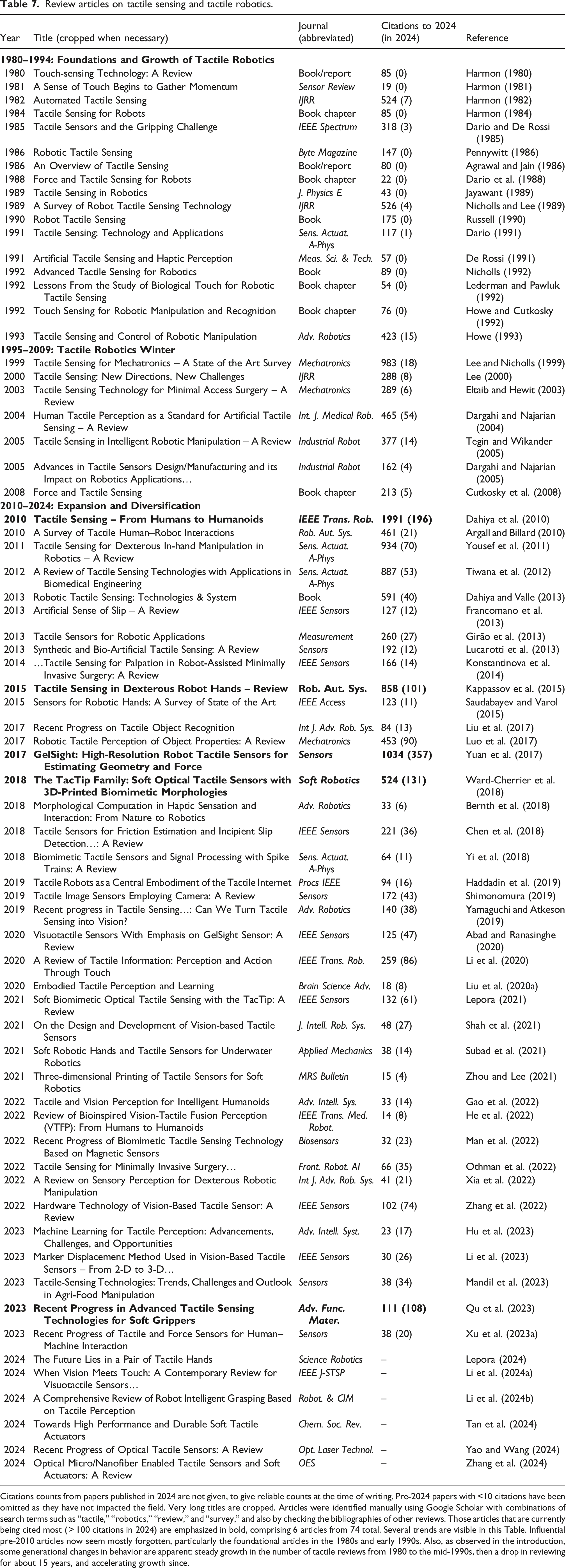

This article will provide a historical perspective on tactile robotics using the wealth of knowledge and expert opinion from past review articles: 69 on tactile robotics in Table 7 and another 54 on e-skins in Table 8, up to 2024. Visually representing their publication dates (Figure 1) immediately reveals several trends: steady growth in the number of tactile reviews from 1980 to the mid-1990s, then a drop in reviewing for about 15 years, and accelerating growth since. These changes in behavior suggest three distinct periods in the 45 years from 1980 to 2024, which we term historical generations (like societal generations, such as “Gen Z”).

Figure 1. Bubble plot for the citation counts of review papers in tactile robotics (using the tabularized list of papers in Table 7). The bubble height is the number of citations in 2024 and the bubble size is proportional to the total citations up to 2024. Those with more than 500 citations are colored green, and those that also have more than 100 citations in 2024 are colored red, to indicate past and present impact. The expert opinion in those papers guides this historical perspective, with author names from key papers displayed.

The structure of this article is organized by these historical generations of tactile robotics. The first section covers the period 1965–1979: the origins of tactile robotics. The following two sections cover the periods 1980–1984 and 1985–1994: the foundations of a research field and the growth of tactile robotics. The next section covers a period of slower progress from 1995–2009, termed the tactile robotics winter. The penultimate section covers the recent period 2010–2024, termed expansion and diversification, which separates into five distinct themes: e-skins, tactile robotic hands, vision-based tactile sensing, soft/biomimetic technologies, and the tactile Internet. Lastly, this article considers the future of tactile robotics in the next generation, from 2025 onward. These five themes may continue initially, after which disruptive technological change affecting all of robotics and AI is expected. In this future, the original motivations retain their immediacy: to enable human-like dexterity, better understand human intelligence, and implement telepresence.

Methodology

The tactile robotics research surveyed here was identified through a Google Scholar search for review articles, followed by a manual search of their bibliographies. Given the very large number of papers now being published in tactile robotics, it would be infeasible for a single researcher to collate information across the entire field by surveying individual research papers; any such review could cover only a narrow area within the authors’ own interests. For that reason, reviews are treated here as the only source of information. The exception is the period before the first review in 1980, for which original articles and technical reports were used. However, academic publishing was very different in that era, with relatively few articles, many of which are difficult to find.

The details of the reviews were collated into tables with summary information such as journals, titles, and overall or recent citations (Appendix: Tables 7–9). This information is shown diagrammatically in Figure 1 (tactile robotics) and later in Figure 15 (e-skins) to indicate which reviews have been influential and whose expert opinion should guide this historical perspective. Accordingly, the author names of key articles are displayed on the plots, along with selected articles from important times in the evolution of tactile robotics that came close to meeting the criteria we used to identify key articles. Several other trends evident in Figure 1 also helped guide the structure of this article.

Most prominently, there appear to be three distinct generations of tactile robotics research: an initial sustained period from 1980 to 1994, then a drop in output over 1995–2009, and rapid growth since 2010. Interestingly, these periods seem analogous to the generations of social change (“Gen X,” “Millennials,” “Gen Z”) that also occur roughly every 15 years. In the author’s opinion, this similarity reflects that research is, of course, done by people whose attitudes to research are influenced by their society. In addition, technologies such as ubiquitous access to information on the Internet can underlie changes in both society and research culture.

Two other trends stood out when considering all tactile robotics reviews (see Figure 1): first, early (pre-1995) reviews now tend to be forgotten; and second, reviewing has proliferated since about 2018. These trends were accompanied by a change in who was writing the reviews. The earliest reviews tended to be written by recognized experts who presented their views with authority. This gradually shifted to students writing with an experienced supervisor, and more recently to new teams entering the research area.

The problem with these trends is that it is now very difficult to gain a complete perspective over the field in the way that was possible in the past. As a result, important motivations for progressing tactile robotics can be forgotten, and popular areas of research can become overcrowded while fewer researchers work on the topics that may actually drive progress. By giving voice to past experts and placing those voices within both a historical and a modern context, this article aims to help redress those imbalances as the field enters a new generation of tactile robotics.

1965–79: Origins of tactile robotics

Like modern robotics, which has many origin stories (Mason, 2012), tactile robotics has more than one. One origin story is shared with robotics: the development of teleoperator systems beginning in the late 1940s, which led to servo-driven telemanipulators and the first tactile sensors to relay a sense of touch. Another origin lies within 1960s AI, where early pioneers such as Marvin Minsky recognized the importance of human dexterity for understanding intelligence but faced insurmountable challenges from the technology of that time. The motivations for these early attempts remain insightful today and give context for later research in tactile robotics.

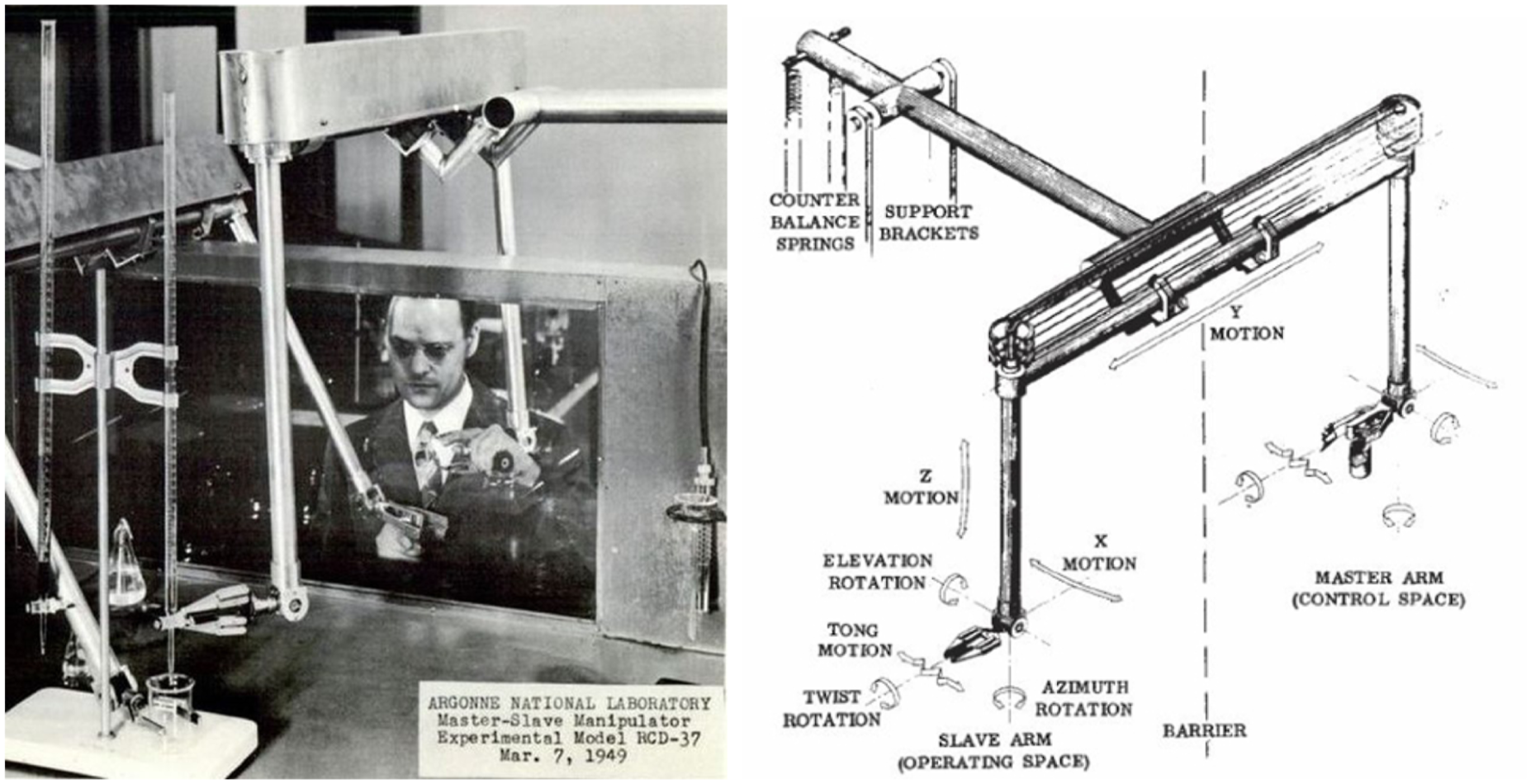

The Atomic Age following World War II created a need for teleoperator-controlled systems to handle radioactive materials. These early telemanipulators were purely mechanical, beginning with Raymond Goertz’s Model-1 Manipulator (1948) from Argonne National Laboratory, Illinois (Figure 2). A pair of mechanical arms with pinch grippers were coupled bilaterally through metal tape cabling so that a human operator could guide all six degrees of freedom of the gripper pose along with its closure. Because of this mechanical coupling, these telemanipulators provided excellent force feedback to the operator through the cabling, and are also an early example of haptic feedback devices (Sheridan, 1997). Such telemanipulators achieved remarkable feats of physical dexterity; for example, a film reel from the 1950s shows a manipulator placing a cigarette in a secretary’s mouth, striking a match, and lighting the cigarette (British Pathe Archives, 1957). Although the choice of task has not dated well, it shows a level of dexterity that remains impressive a human lifetime later.

Figure 2. Left: Raymond Goertz demonstrating his mechanical telemanipulator. Right: Design of his ANL Model-1 manipulator: motions of the controlling and operating arms follow each other bilaterally. Images from University of Chicago Library.

Progress in the use of electric servomotors in the early 1950s led to telemanipulators in which the controlling and operating arms were connected electrically, rather than mechanically. The first of these was Goertz’s E-1 Manipulator (1954), which was also bilateral in that any difference in pose between the controlling and operating grippers drove opposing electric motor forces on the two arms. This mechanism gave force feedback on the operator’s hand that mirrored the forces exerted on the remote gripper (Sheridan, 1997). Although this ingenious device initiated much of modern telerobotics and haptics, electric motor feedback could not transmit forces to the operator as effectively as direct mechanical coupling (Minsky, 1980). Nevertheless, the advantages of electric servomotor-based systems for precision and control are the basis of almost all industrial robotics today (Gasparetto and Scalera, 2019).

The arrival of the space age, with the 1957 launch of Sputnik and the 1958 formation of the National Aeronautics and Space Administration (NASA), created a need for teleoperation over long distances. However, because of the resulting time delays, operators would adopt a slow and cumbersome “move-and-wait” strategy to avoid control instabilities. Ferrell and Sheridan (1967) addressed this problem by developing supervisory control, in which a human operator guides a semi-autonomous robotic system, later called a telerobot (Sheridan, 1989; Hokayem and Spong, 2006). Supervisory control made robot teleoperation useful in practice by helping to compensate for limited sensory feedback and by allowing faster-than-human reactions for smooth contact.

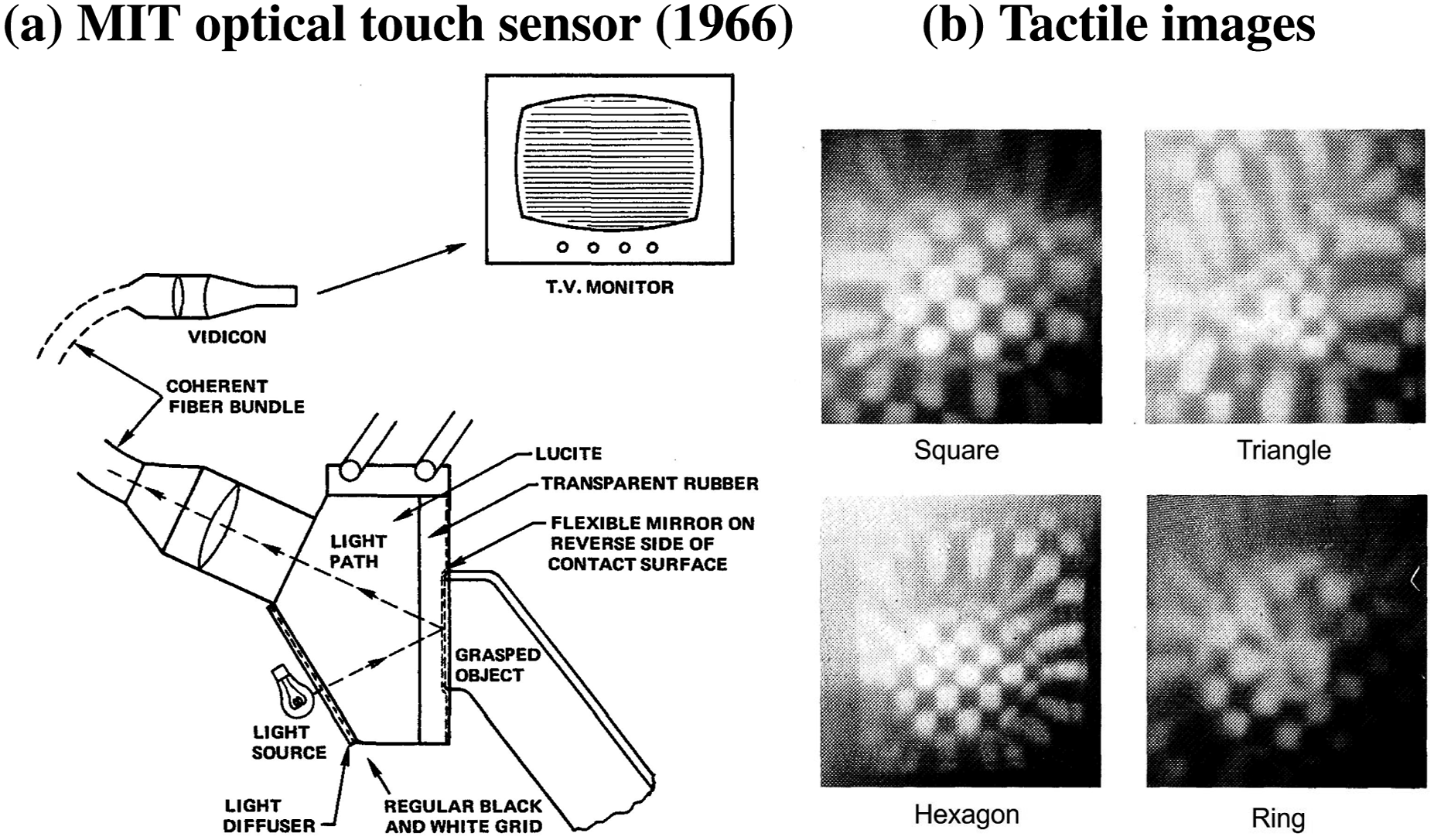

In an attempt to relay tactile sensation during teleoperation, the first artificial tactile sensors to transmit spatial patterns of contact were invented in Thomas Sheridan’s Man-Machine Systems Laboratory (Strickler, 1966a). The MIT optical touch sensing system used a TV camera fitted to a fiber-optic bundle to send detailed images of the inner surface of a deformable skin to a TV monitor (Figure 3). Several skin designs were explored to visually represent the contact, for example, a photoelastic material (Kappl, 1963) and moiré interference patterns (Strickler, 1966a, 1966b). Thomas Strickler finalized the tactile sensor with a checkerboard pattern on a flexible mirror, giving a high-contrast image of the deformation that was relayed to a human operator on a television monitor (example tactile images in Figure 3). However, this early type of “teletouch” did not attract much attention (Sheridan, 1989), and the research was abandoned.

Figure 3. MIT optical touch sensing system (Strickler, 1966a). Left: the imprint on the tactile skin is imaged via a fiber-optic bundle viewing a checkerboard pattern on a flexible mirror. Right: tactile images of contacts with various planar shapes. The system transduces touch into a picture on a TV for a human operator. Images from a report by Corliss and Johnsen (1968).

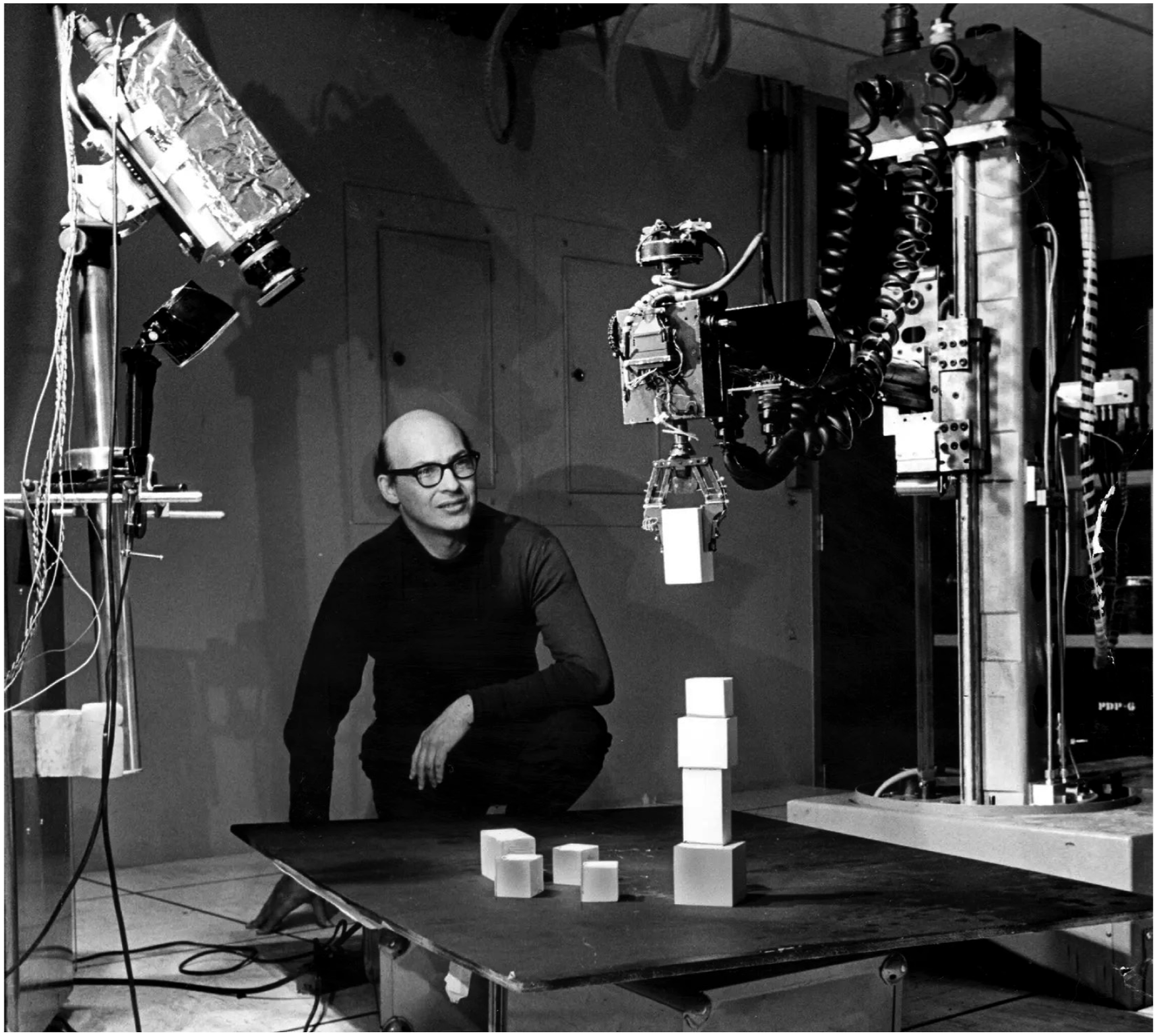

The earliest investigation of tactile sensing for robotics was in Marvin Minsky’s Artificial Intelligence (AI) group, also at MIT. A pioneer of AI, Minsky recognized the importance of human dexterity for human intelligence and proposed a project to develop “techniques for machine perception, motor control and coordination that are applicable to performing real-world tasks of object recognition and manipulation” such that “the system needs visual and tactile input devices capable of unusual discrimination ability” (Minsky, 1966). This project resulted in a mechanical hand and arm with which Minsky intended to stack blocks autonomously (Figure 4). Only hints of this research now remain, such as a 1969 report describing how a graduate student, David Waltz, “engineered a grasping surface with good pressure sensitivity at a great many points by wrapping a coaxial cable connected to a time-domain reflectometer” (Minsky and Papert, 1969). However, the practical issues in creating a tactile robot manipulator proved insurmountable, motivating a change to purely computational studies of intelligence.

Figure 4. Marvin Minsky with a robot arm and gripper. The robotic system was constructed with Seymour Papert in Minsky’s Artificial Intelligence (AI) Laboratory at MIT. It was originally intended to instantiate a “blocks world” task where cubes could be stacked. Image from the MIT Museum.
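The report gives no details of Waltz’s apparatus, but the general principle of time-domain reflectometry (TDR) explains how one cable could sense “a great many points”: a test pulse sent down the cable partly reflects wherever pressure locally changes the cable’s impedance, and the round-trip delay of that echo locates the press. The sketch below illustrates only this generic TDR principle. The function names are hypothetical, and the velocity factor of 0.66 (typical of solid-polyethylene coax) is an assumption, not a property of the original cable.

```python
# Minimal sketch of time-domain reflectometry (TDR) localization.
# Assumption: a press deforms the coax locally, changing its impedance and
# reflecting part of a test pulse; the echo's round-trip delay locates the press.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def press_position_m(round_trip_delay_s: float, velocity_factor: float = 0.66) -> float:
    """Distance along the cable (metres) to the impedance discontinuity.

    velocity_factor is the signal speed as a fraction of c; 0.66 is an
    assumed value typical of solid-polyethylene coax.
    """
    if round_trip_delay_s < 0:
        raise ValueError("delay must be non-negative")
    propagation_speed = velocity_factor * SPEED_OF_LIGHT_M_PER_S
    # The pulse travels out to the press and back, so halve the path length.
    return propagation_speed * round_trip_delay_s / 2.0


def delay_for_position_s(position_m: float, velocity_factor: float = 0.66) -> float:
    """Inverse mapping: expected echo delay for a press at position_m."""
    return 2.0 * position_m / (velocity_factor * SPEED_OF_LIGHT_M_PER_S)


if __name__ == "__main__":
    # An echo 10 ns after the pulse corresponds to a press about 0.99 m along the cable.
    print(f"{press_position_m(10e-9):.3f} m")
```

With these assumed values, two presses 1 cm apart differ in echo delay by only about 0.1 ns, which shows how fine the timing of such a skin must be.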

Thus, in the early 1970s, Minsky’s “blocks world” became a purely computational construct of a robot and its environment (Minsky and Papert, 1971). Nevertheless, this led to seminal research on understanding natural language (Winograd, 1972) and to Minsky’s acclaimed theory of natural intelligence in his book The Society of Mind (Minsky, 1988). In the chapter “Easy Things Are Hard,” Minsky reflects that he and Seymour Papert had “long desired to combine a mechanical hand, a television eye, and a computer into a robot that could build with children’s building-blocks,” and to do that they “had to build a mechanical hand, equipped with sensors for pressure and touch at its fingertips.” But, he said, they had to keep adding more programs to handle an ever-increasing universe of complications: “in attempting to make our robot work, we found that many everyday problems were much more complicated than the sorts of problems, puzzles and games adults considered hard.” This observation parallels its contemporary, Moravec’s paradox: “it is comparatively easy to make computers exhibit adult-level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility” (Moravec, 1988).

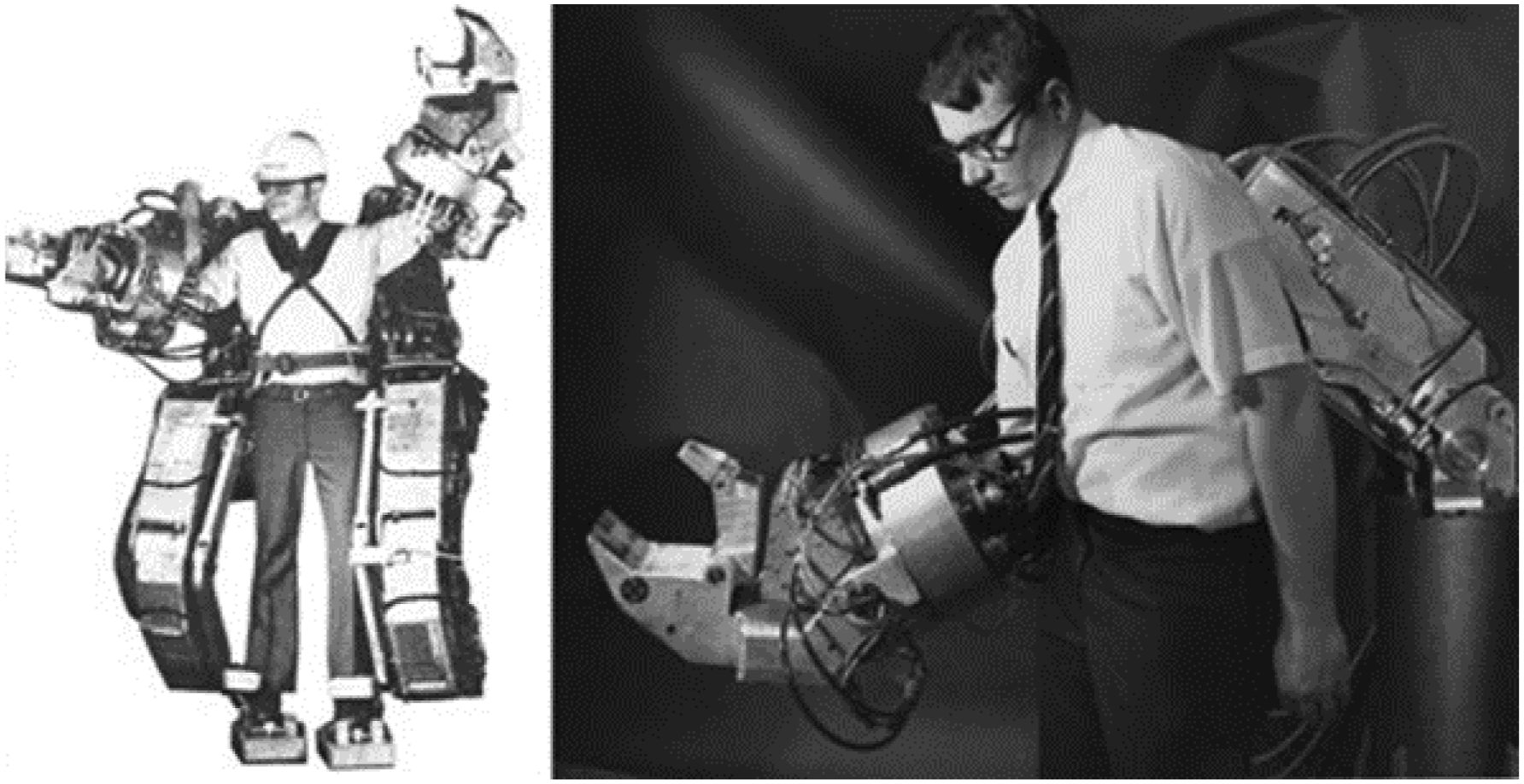

At the end of that decade, Minsky’s last contribution to the story of tactile robotics was to bring teletouch and telemanipulation together as telepresence. He popularized this term in his article of the same name in Omni Magazine (Minsky, 1980): “Telepresence emphasizes the importance of high-quality sensory feedback and suggests future instruments that will feel and work so much like our own hands that we won’t notice any significant difference.” Minsky envisioned a revolutionary transformation of energy production, manufacturing, and medicine, and the creation of entire new industries in a telerobot-based economy. Alongside human augmentation (Figure 5), he called for investment to solve the engineering challenges, including: “very little is known about tactile sensations. It seems quite ironic that we already have a device that can translate print into feel, but we have nothing that can translate feel into feel.” Yet, despite progress in tactile robotics and haptics, Minsky’s manifesto remains a compelling vision of a future that has not yet come to pass.

Figure 5. Left: Ralph Mosher in the G. E. HARDIMAN Exoskeleton (Human Augmentation Research and Development Investigation MANipulation). Right: one arm of the exoskeleton showing the powered gripper. Images from the miSci, Museum of Innovation and Science; reprinted in Minsky (1980).

It is hard to assess how much these original studies directly influenced later research in tactile robotics. Not least, most were reported in internal memos rather than in journals, and so may not have been known to many researchers. However, their importance is to show that tactile robotics was deeply intertwined with the roots of artificial intelligence and robotics a human lifetime ago. Also, the original motivations for these fields of study retain their immediacy today: to enable human-like dexterity, better understand human intelligence, and implement telepresence. The difference now is that these aims may soon be realizable given the progress over three intervening generations (45 years) of tactile robotics research.

1980–84: Foundations of a research field

In the early 1980s, tactile robotics was established as a distinct research discipline through a series of four reviews by Harmon (1980, 1981, 1982, 1984). Of these, the 1982 article on “Automated Tactile Sensing” was published in the first volume of the International Journal of Robotics Research (IJRR). The launch of IJRR, the first robotics journal, marks a beginning of robotics as a research field (Mason, 2012), with a new working definition: “Robotics is the intelligent connection of perception to action” (Brady, 1985).

The author of these reviews, Leon Harmon, brought insight into artificially recreating the human senses as a pioneer in computer vision. He was a free thinker: a mosaic image of Abraham Lincoln that he published in Scientific American inspired Salvador Dali’s “Lincoln in Dalivision” (1977), and he also created the “Computer Nude” (1966), which was famously reprinted in the New York Times. In the last few years of his life, Harmon became convinced of the need for a robotic sense of touch in manufacturing and related industries, taking inspiration from the human tactile sense. He conveyed this conviction to others in four papers: “Touch-sensing Technology: A Review” (Harmon, 1980), “A Sense of Touch Begins to Gather Momentum” (Harmon, 1981), “Automated Tactile Sensing” (Harmon, 1982), and “Tactile Sensing for Robots” (Harmon, 1984).

Harmon was motivated by his view that tactile sensing could transform industrial robotics. He drew support from influential industry leaders of the time, such as Unimation co-founder Joseph Engelberger, who “laid heavy stress on tactile sensing” for robot physical interactions (Harmon, 1981). His views were also based on findings from teleoperation research; he quoted Antal Bejczy that “Manipulation-related key events are not contained in visual data,” specifically the “geometric and dynamic reference data for the control of [pose], adaptive and soft grasping of objects, [and] gentle load transfer.” This led Harmon to conclude that the technology of that time was too crude for robotic manipulation and that a new generation of sophistication was beginning to emerge.

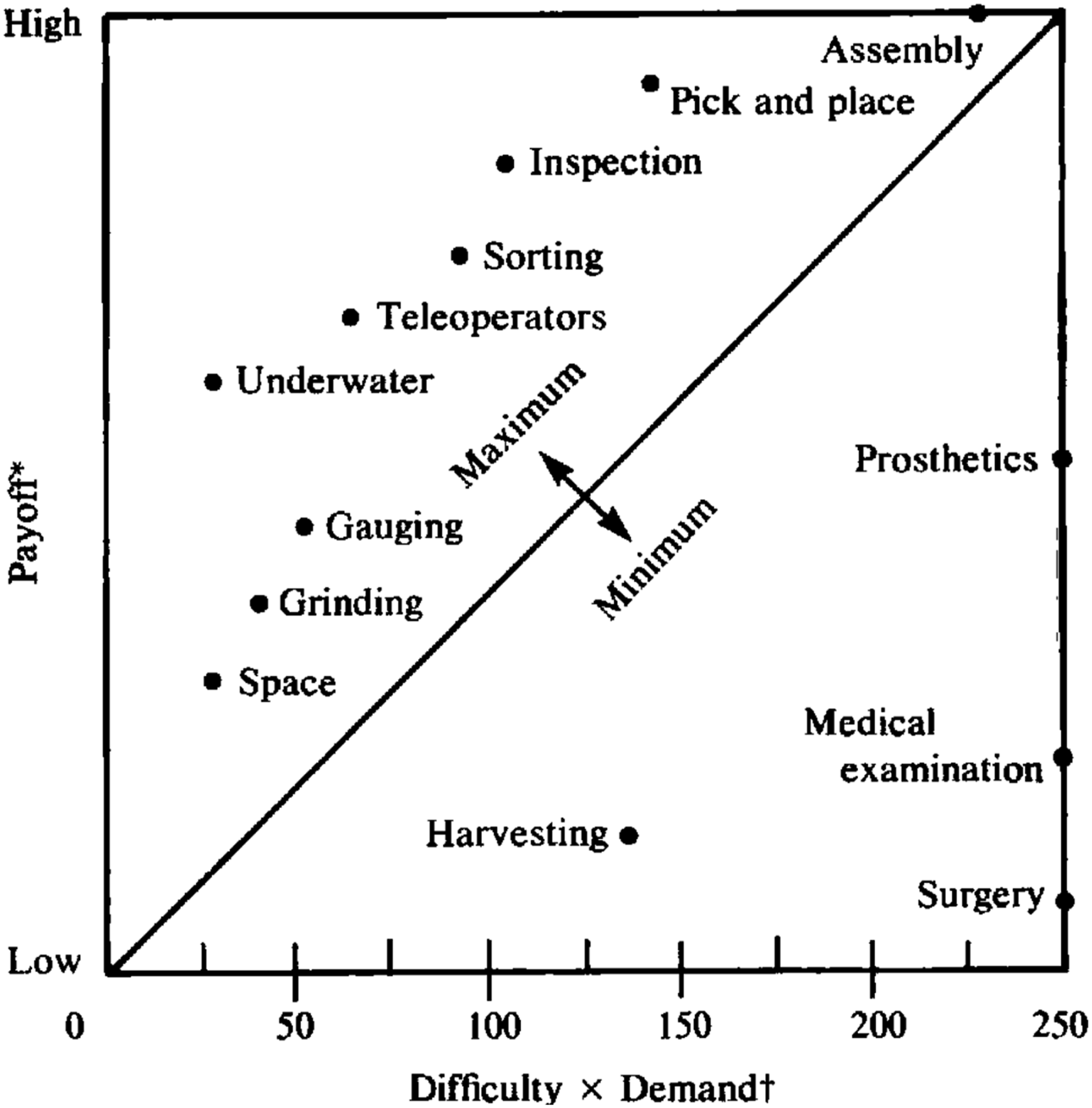

For context, industrial robotics became well established in the 1970s with several leading companies (Unimation in the US, Hitachi in Japan, and ASEA/ABB in Europe). These companies were experiencing a surge in demand for advanced robotic arms from the automotive and other manufacturing industries (Gasparetto and Scalera, 2019), which created a need for better interaction with the operator and the environment to perform more complex tasks. The companies responded by making robots easier to program, with improved sensing of themselves and their surroundings. However, these advances were insufficient for many commercially valuable tasks (see, e.g., Figure 6), motivating Harmon to survey artificial tactile sensing for robotics.

Figure 6. Harmon’s cost-benefit analysis to characterize the commercial feasibility of touch sensing. The payoffs were estimated from 1979 robotics sales, had tactile sensing been integrated, along with commercial need and technical maturity. The costs, in terms of difficulty × demand, were likewise estimated by scoring these aspects of the tasks. Full details are given by Harmon (1982), whose Figure 5 is reprinted above.

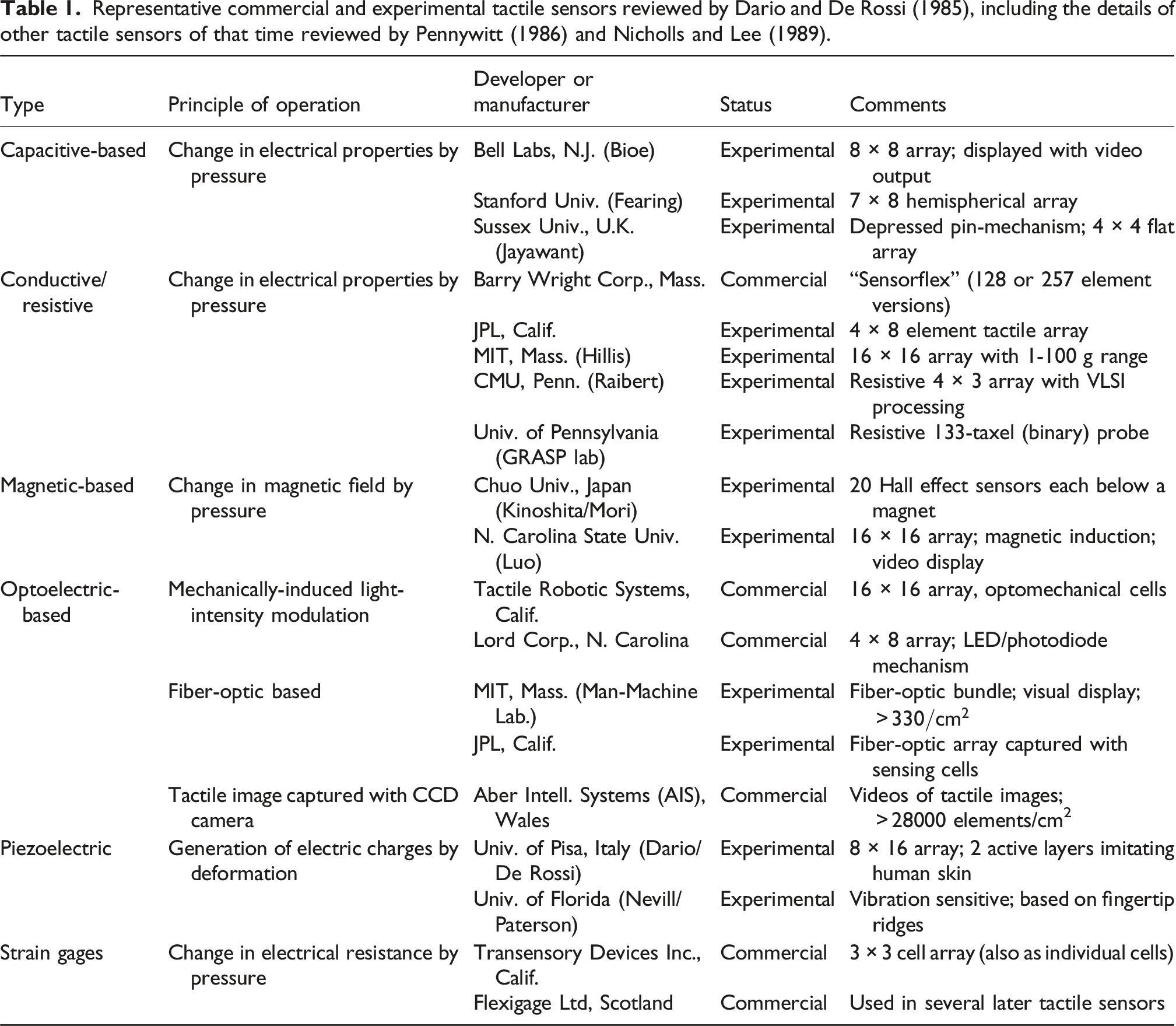

Table 1. Representative commercial and experimental tactile sensors reviewed by Dario and De Rossi (1985), extended with details of other tactile sensors of that time reviewed by Pennywitt (1986) and Nicholls and Lee (1989).

Harmon (1982) also gave the first working definition of a robot sense of touch: “Tactile sensing refers to skin-like properties, with which areas of force-sensitive surfaces are capable of reporting graded signals and parallel patterns of touching.” He further clarified a distinction between simple contact and tactile sensing: “When reference is made to simpler contact sensing [at one or a few points], the term simple touch will be used.” Although this term is no longer in use, the distinction captures well the spatial aspects of tactile sensing.

His clarification of simple contact remains insightful in distinguishing between force-torque and tactile sensing (Harmon, 1981, 1982). Force-torque sensors measure contact through a single point, with six axes (three forces and three torques) matching the degrees of articulation of a robot arm. However, force sensing is subject to the “unwelcome fact” that force cannot be measured directly, but only inferred from displacement, so its measurement is subject to errors, hysteresis, and delays from its transmission through compliant structures. Tactile measurements that relate directly to skin surface displacement do not suffer from these limitations of indirect force-torque measurement.
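Harmon’s “unwelcome fact” can be made concrete with a simple model. Assuming, for illustration only, that the sensing element behaves as a linear spring of stiffness $k$ in parallel with a viscous damper $c$ (a Kelvin–Voigt model chosen here, not one taken from Harmon), the force estimate $\hat{F}$ is computed from a measured displacement $\delta + \varepsilon$. Any displacement error $\varepsilon$ is therefore scaled by $k$, and under a step load $F_0$ the estimate lags the true force with time constant $\tau = c/k$:

```latex
\begin{aligned}
\text{static:}\quad & F = k\,\delta, \qquad \hat{F} = k\,(\delta + \varepsilon) \;\Rightarrow\; \hat{F} - F = k\,\varepsilon,\\
\text{dynamic:}\quad & F(t) = k\,\delta(t) + c\,\dot{\delta}(t), \qquad F(t) = F_0 \ \ (t \ge 0),\ \delta(0) = 0\\
& \Rightarrow\; \hat{F}(t) = k\,\delta(t) = F_0\left(1 - e^{-t/\tau}\right), \qquad \tau = c/k.
\end{aligned}
```

Hysteresis, which this linear model omits, would add a further path-dependent error on top of these two effects.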

Another way that Harmon sought to bring coherence to tactile robotics was by gathering questionnaire responses from over 50 academic, industrial, and other researchers (Harmon, 1982). He asked about the need for next-generation robots, finding that “90% of the respondents felt strongly that tactile sensing is needed.” Views ranged from one industry executive’s feeling that tactile sensing “would open the manipulator market by perhaps an order of magnitude” to a chief engineer’s statement that “sensors are not the key element in [the near future]” and that the need was for improved electronic control using existing technology such as strain gauges and limit switches.

Some of the respondents gave cautionary comments that remain pertinent today. For example, one respondent said “household robotics probably would not be enthusiastically received [because] people did not want competition from machines at home (neither did they want to be watched or followed by robots).” To this, Harmon retorted that “this respondent may not have been familiar with toilet-, window- or oven-cleaning; floor scrubbing, dish washing, snow shoveling, or diaper changing.” The outlook was that robots would ultimately replace humans in “grungy, hazardous, dull, repetitive tasks,” a view that has become a cliché as the “3 Ds” (dirty, dangerous, and dull) of robotics.

Harmon also surveyed the tactile sensing needs of robotic tasks and their probable market importance, along with the technological developments required to achieve these functions over the decade 1980–1989. He synthesized the data as a cost-benefit analysis (reprinted in Figure 6). His conclusions included: (1) nine applications (top left) seem commercially feasible, with the really big returns being in factory automation; (2) the four applications (bottom right) that seem unlikely to return the investment all concern living systems; and (3) if “technological problems are solved for one particular task, those to the left of it [in difficulty] will also be solved implicitly.” Thus, an all-out effort to solve assembly would likely solve all the other applications.

Why, four decades later, has none of this come to pass? There is still minimal use of tactile sensing in industrial automation, and many of these tasks remain unsolved (e.g., assembly) or have only partial solutions using force-torque sensing or computer vision (e.g., inspection or pick and place). In part, the issue is that fabricating practical high-performance tactile skins has proven far more challenging than first envisioned; even today, there is no agreement on which tactile sensing technology to use. Also, the mundane answer from one engineer, on the need for improved electronic control using existing technology, proved correct in predicting the improvements required for industrial robots in the 1980s. Nevertheless, given the arguments in Harmon’s paper, it is surprising that these tactile robotic applications were not just delayed by a decade or two but remain unsolved today.

1985–94: Growth of tactile robotics

By the mid-1980s, the sense of touch had gathered momentum. A new generation of researchers was drawn to tactile robotics with optimism about enabling human-like capabilities. This growth resulted in many new biomimetic and engineered tactile sensor designs, with some becoming commercial products. Meanwhile, the nature and complexity of a tactile sense for robotics was beginning to be understood, with insights entering the field from physiology and neuroscience. This progress was captured in a succession of review articles, including “Tactile Sensing and the Gripping Challenge” (Dario and De Rossi, 1985), “Tactile Sensing in Robotics” (Nicholls and Lee, 1989), and “Tactile Sensing and Control of Robotic Manipulation” (Howe, 1993).

Two early researchers of that generation, Paolo Dario and Danilo De Rossi, were inspired by “increasing the performance of [tactile sensors as] a first step towards robotry that can hold and manipulate objects as humans do” (Dario and De Rossi, 1985). They formed a cluster of interdisciplinary research in Pisa, Italy, where progress in robotics combined knowledge from the biological sciences with mechanical engineering (research now known as biorobotics or neurobotics). The goal of their research was to create an artificial sense of touch integrated with its motor control, extending from a tactile fingertip to an actuated finger to a functional robotic hand as a complete system.

Motivated by the functionality of human skin, Dario and De Rossi (1985) placed emphasis on grasping and manipulating objects safely: “the primary goal of an ideal tactile sensor is to measure variable contact forces on a sensing area,” including to “control the delicate manipulation of objects by providing information on the tangential forces.” Other useful measurements included “the hardness and thermal properties of different materials” and “whether an object has been grasped safely and when slippage occurs.”

They surveyed the main types of tactile sensor of that time with an emphasis on commercialization (see Table 1). Here, their table has been extended to include other contemporary tactile sensors of the 1980s, using the review articles by Pennywitt (1986) and Nicholls and Lee (1989). As part of their survey, Dario and De Rossi also listed the prices of commercial tactile sensors, which were $2 000–$4 000 (equivalent to $6 000–$12 000 in 2025). This range is similar to that for the later tactile sensors in Table 5.

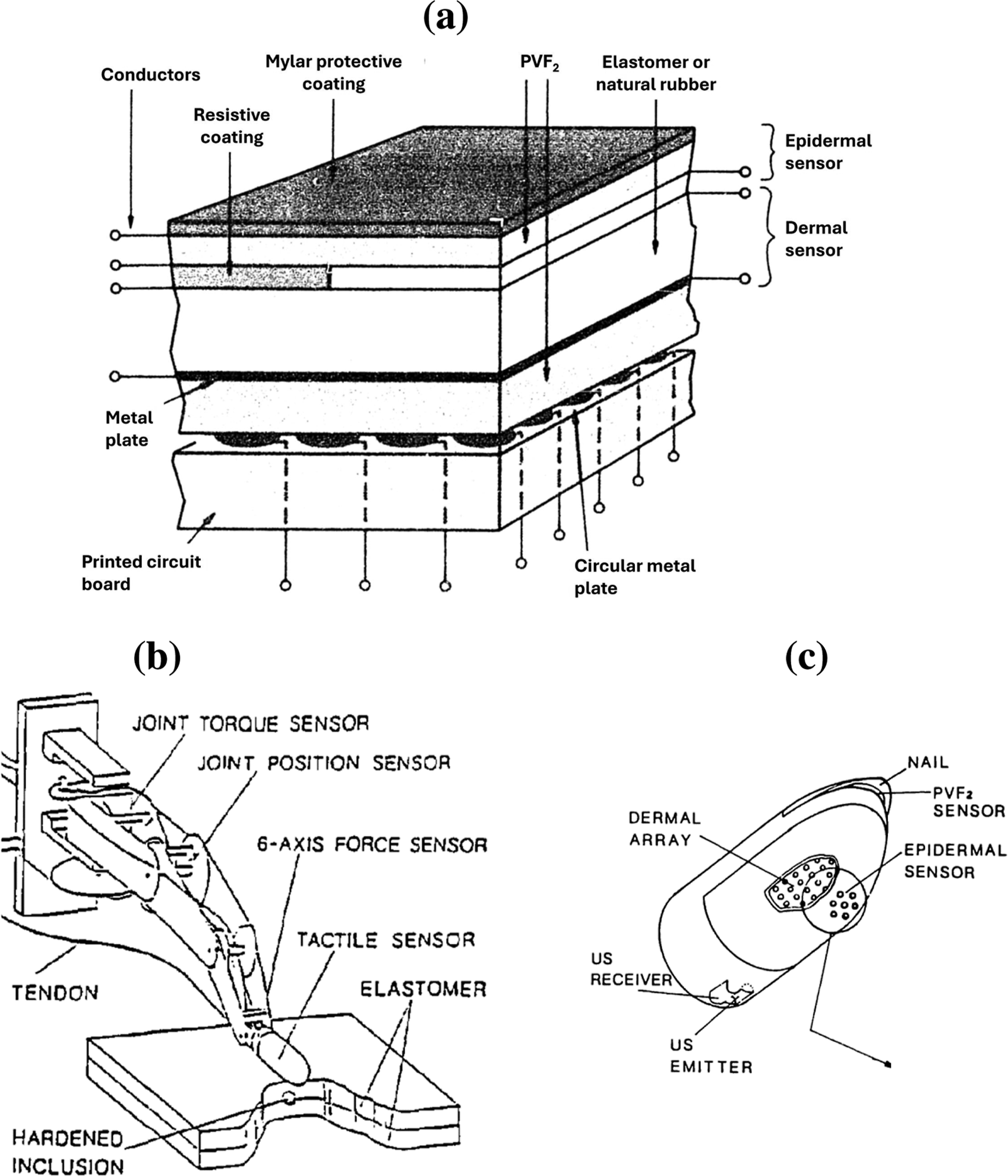

One incentive to write a review arises when one’s research gives insight into the promises and challenges of a research area. Dario and colleagues were developing a biomimetic tactile sensor based on the layered structure of human skin (Figure 7(a)), using a piezoelectric material that generates an electrical charge under contact pressure. Their design was one of the first multi-modal tactile sensors, combining a deeper dermal layer using an array of pressure-sensitive conductive plates with a shallower epidermal layer composed of a thin Mylar film sensitive to gross deformation changes and temperature. A later fingertip design included a tactile “fovea” sensitive to rapidly changing contacts at the epidermis (Figure 7(c); Dario et al., 1988). This biomimetic fingertip was part of a body of research from the University of Pisa on the creation and control of an anthropomorphic finger (Figure 7(b); Dario and Buttazzo, 1987) to mimic aspects of how humans explore and interact with objects (see reviews by Dario et al., 1988; Dario, 1991).

Figure 7. Biomimetic tactile skin and fingertip from the mid-1980s. (a) Biomimetic piezoelectric tactile sensor (1985): layered epidermal (outer) and dermal (inner) tactile skin using a piezoelectric material (PVF2). (b) An anthropomorphic finger with (c) a biomimetic tactile fingertip (1986) using a modified version of the piezoelectric skin. Images from Dario and De Rossi (1985) and Dario (1991).

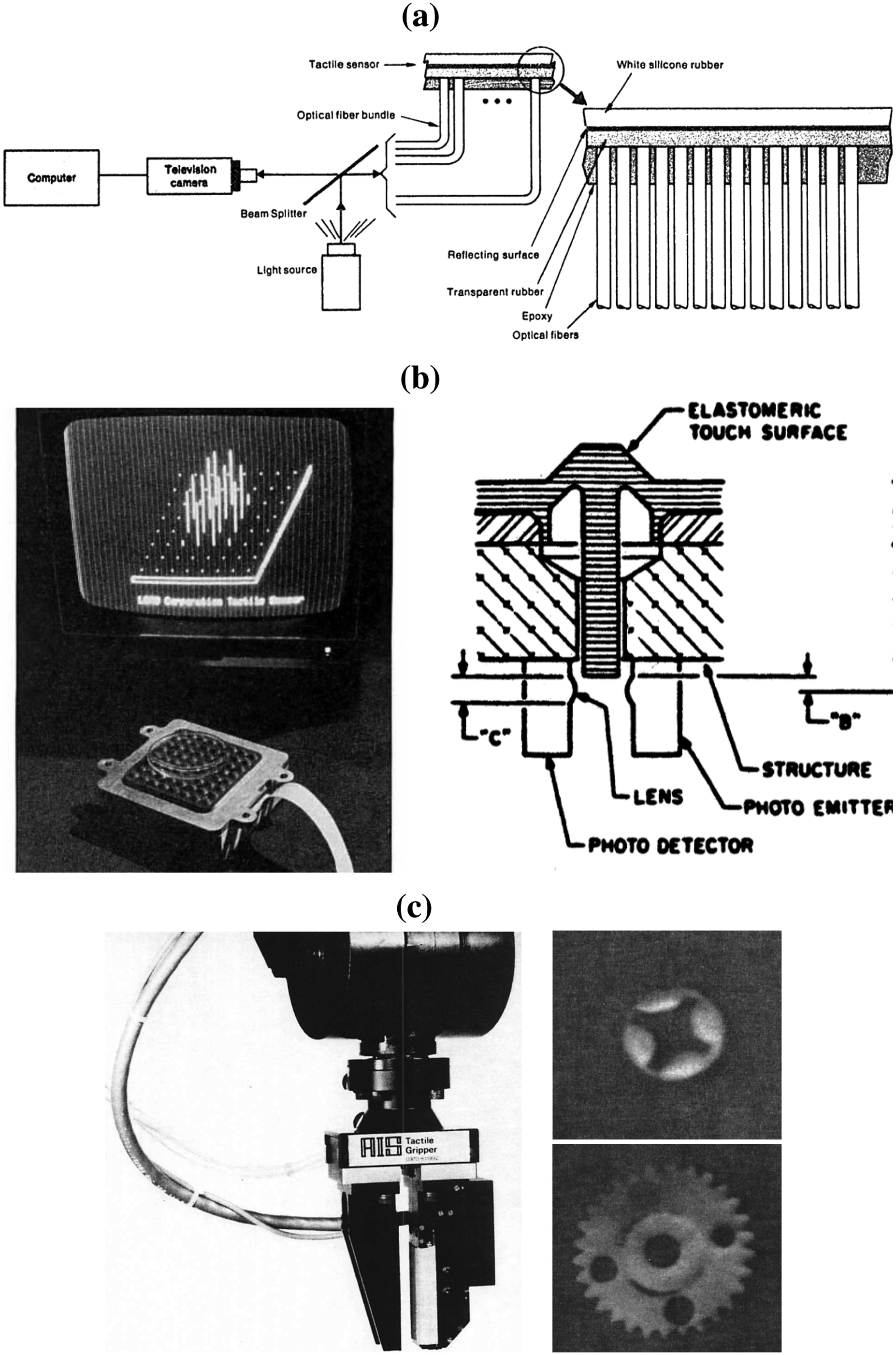

Another tactile sensing technology that was well covered in reviews at that time was optoelectronics (Dario and De Rossi, 1985; Pennywitt, 1986; Nicholls and Lee, 1989). Optical tactile methods were considered promising because of their high sensitivity, in that a “small change in force results in a relatively large change in light intensity.” Some examples are shown in Figure 8, including: (a) a fiber optic tactile sensor from MIT (Schneiter and Sheridan, 1984), which both emitted and received light down the same fibers to image a deformable reflective layer underneath an opaque outer rubber surface; (b) a commercial optoelectronic tactile sensor with an array of pin-like tactile elements connected to an elastomeric pad, where depressed pins partially block light between paired LEDs and photodetectors; and (c) an early vision-based tactile sensor using a CCD camera to capture a high-resolution (128 × 256 pixels) tactile image of light internally refracted from a deformable membrane (Mott et al., 1984). This latter work, by Mott, Lee and Nicholls from Aberystwyth, Wales, pioneered vision-based tactile sensing 30 years before it became a main theme of tactile robotics.

Figure 8. Optical tactile sensors from the mid-1980s. (a) Fiber optic tactile sensor (MIT Man-Machine Systems Laboratory, 1984); (b) commercial optoelectronic tactile sensor (Lord Corporation, 1986) with display and mechanism; (c) commercial vision-based tactile sensor mounted on a gripper (Aber Intelligent Systems, 1987) with high-resolution tactile images. Images from Agrawal and Jain (1986), Dario and De Rossi (1985), and McClelland (1987).
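Because optical methods turn a small change in force into a large change in light intensity, a tactile image can be converted into a contact map by comparing each frame with a reference frame captured while nothing touches the membrane. The sketch below shows this generic baseline-subtraction idea in pure Python. The threshold, the function names, and the toy 4 × 4 image are illustrative assumptions, and this is not presented as the processing used by Mott et al. (1984).

```python
# Minimal sketch: contact detection in a vision-based tactile image by
# baseline subtraction. Images are lists of rows of pixel intensities.
from typing import List, Optional, Tuple

Image = List[List[float]]


def contact_map(frame: Image, baseline: Image, threshold: float) -> List[List[bool]]:
    """Mark pixels whose intensity changed by more than `threshold`
    relative to the unloaded baseline (threshold is an assumed tuning value)."""
    if len(frame) != len(baseline) or any(len(a) != len(b) for a, b in zip(frame, baseline)):
        raise ValueError("frame and baseline must have the same shape")
    return [
        [abs(f - b) > threshold for f, b in zip(frame_row, base_row)]
        for frame_row, base_row in zip(frame, baseline)
    ]


def contact_centroid(mask: List[List[bool]]) -> Optional[Tuple[float, float]]:
    """(row, col) centroid of contact pixels, or None if nothing is touching."""
    points = [(r, c) for r, row in enumerate(mask) for c, hit in enumerate(row) if hit]
    if not points:
        return None
    n = len(points)
    return (sum(r for r, _ in points) / n, sum(c for _, c in points) / n)


if __name__ == "__main__":
    baseline = [[10.0] * 4 for _ in range(4)]
    frame = [row[:] for row in baseline]
    frame[1][2] = frame[2][2] = 60.0  # a press brightens two neighbouring pixels
    mask = contact_map(frame, baseline, threshold=20.0)
    print(contact_centroid(mask))  # (1.5, 2.0)
```

At the 128 × 256 resolution cited for the Aberystwyth sensor, each frame holds 32,768 pixels, far more sensing sites than the 6 × 6 capacitive array of the same period.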

Nicholls and Lee (1989) also wrote an authoritative review in IJRR of the tactile sensing technology of that time, as a successor to the review by Harmon (1982). They extended the definition of tactile sensing to cover five main categories of transduced data (simple contact, force, shape, slip, and thermal properties) and stated that “the acquisition, processing and manipulation of this data constitutes tactile sensing.” Their definition is broader than Harmon’s original “graded signals across force-sensitive surfaces,” which had become too narrow for the advances they surveyed over that decade.

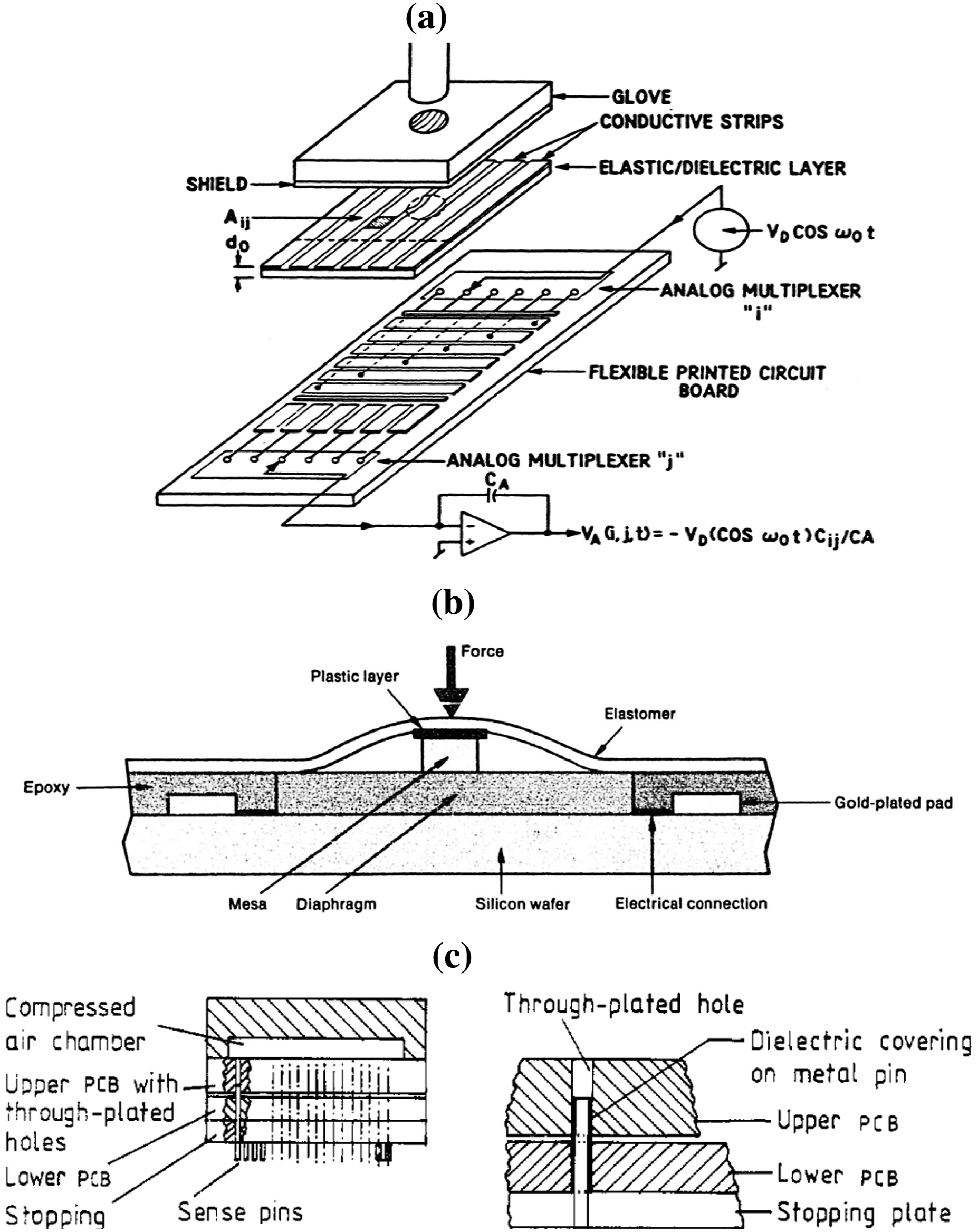

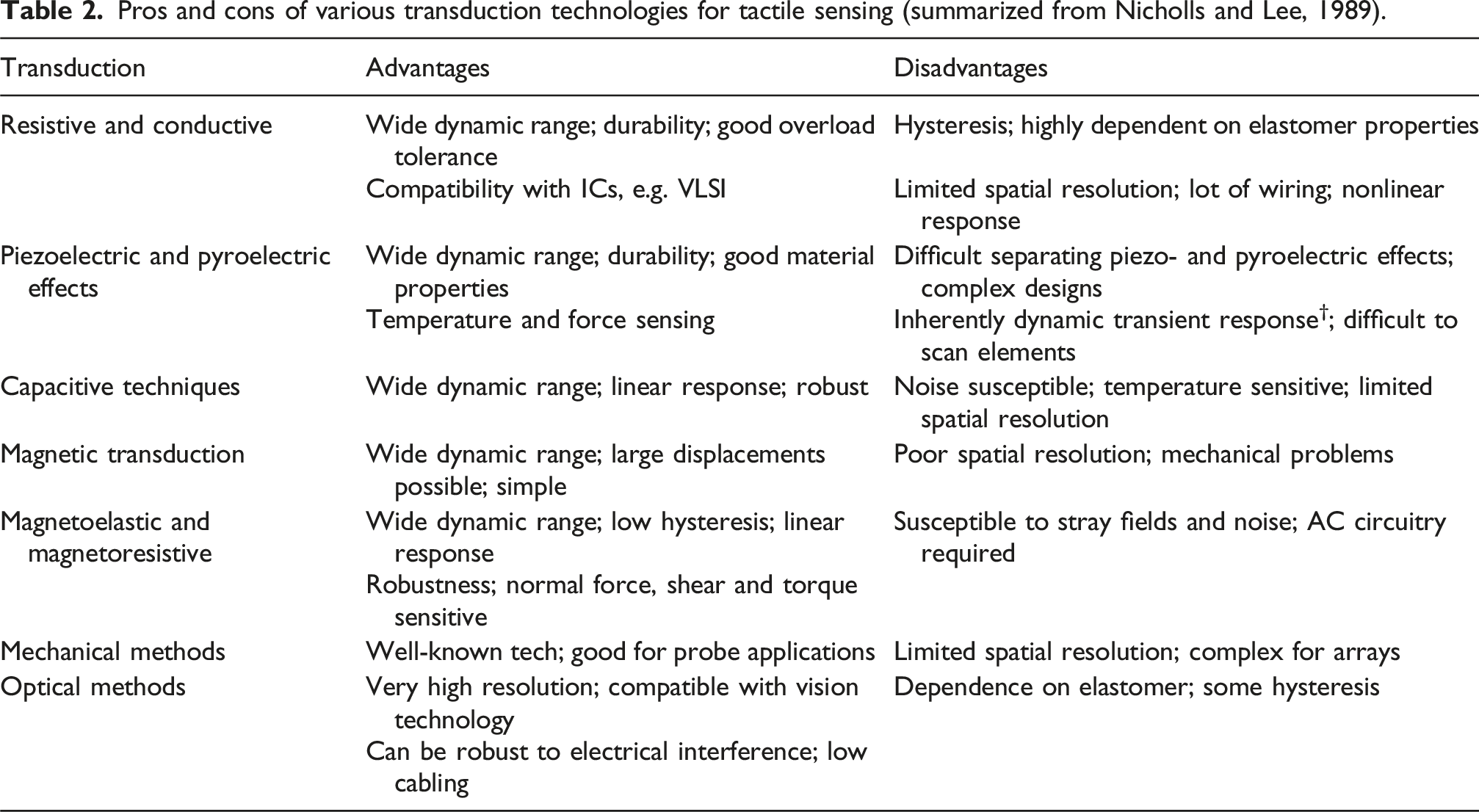

By the late 1980s, the methods for tactile transduction had separated into distinct classes that remain the main methods in development and use today. These include piezoelectric sensors (e.g., Figure 7) and various types of optical tactile sensor (Figure 8), alongside other technologies such as capacitive and strain-gage-based sensors (examples in Figure 9). After surveying the technologies being considered for tactile sensing, Nicholls and Lee (1989) usefully distilled the pros and cons of each transduction technology (summarized here in Table 2). These benefits and limitations still seem relevant today; for example, many of the mechanisms are limited by low resolution or complex designs.

Electromechanical tactile arrays from the mid-1980s. (a) Capacitive tactile sensor (1985): a 6 × 6 capacitive array. (b) Strain gage-based tactile sensor (1985): a strain gage deployed as a tactile sensor. (c) Capacitive pin-based tactile sensor (1985): a capacitive transducer using a pin mechanism to measure normal contact. Images from Agrawal and Jain (1986), Dario and De Rossi (1985), and Jayawant (1989).

Pros and cons of various transduction technologies for tactile sensing (summarized from Nicholls and Lee, 1989).

The 1980s were still a time when topical reviews of leading research could be published in general-interest magazines. In 1986, Byte, a highly successful microcomputer magazine, ran a special issue on robotics (with accompanying artwork, still available online). Its content was guided by the questions: “What makes robotics so hard? Why is it taking so long to develop this technology?” The editors commissioned articles on “Machine vision,” “Robotic tactile sensing,” “Multiple robotic manipulators,” “Autonomous robot navigation,” “AI in computer vision,” and “Automation in organic synthesis.” Among these, Pennywitt (1986) gave an early review of tactile sensing up to the mid-1980s. Although much of its material overlaps with other reviews of the time, it stands out for its quality and professionalism.

From the mid-1980s to mid-1990s, a new review of tactile sensing was published every year or so. Their content overlapped, particularly when surveying tactile technologies, but they all contributed new perspectives. In a NASA-commissioned report, Agrawal and Jain (1986) gave an account of tactile and vision integration, proposing that “if the object recognition system makes a hypothesis about [an] object, tactile sensing can be used to verify model features.” In a book on “Robot Tactile Sensing,” Russell (1990) surveyed artificial tactile sensing for undergraduate students. In a review of “Artificial Tactile Sensing and Haptic Perception,” De Rossi (1991) gave a new treatment of tactile transduction and introduced models of contact mechanics, friction, texture, and rheology.

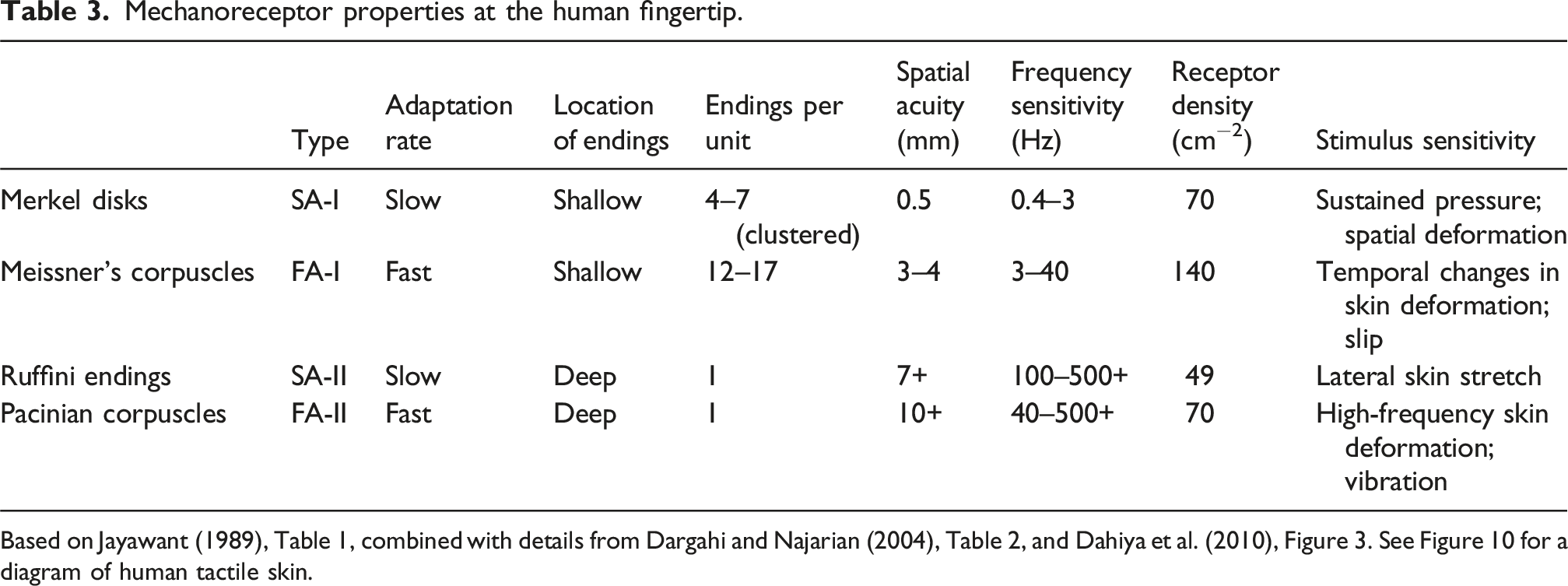

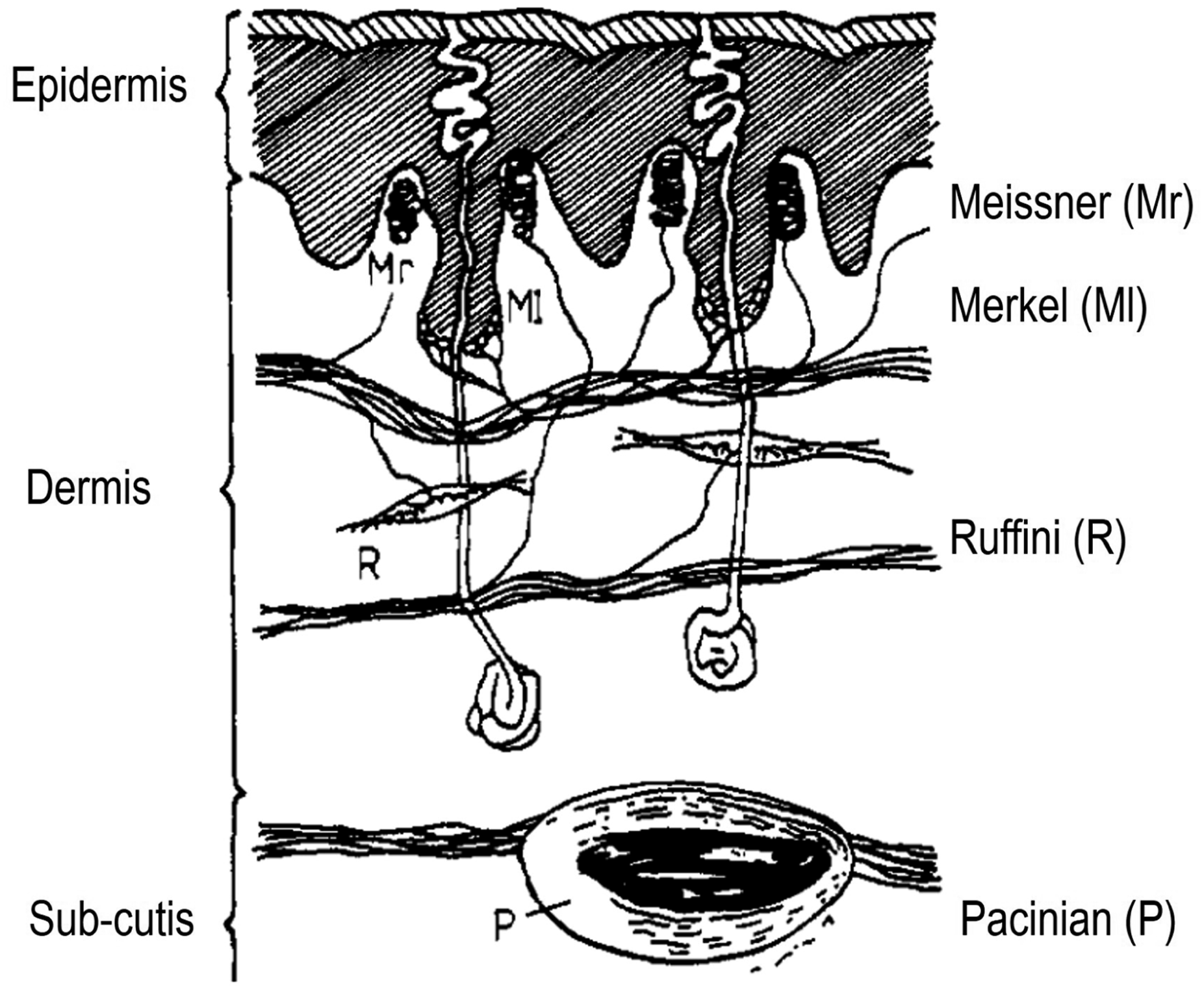

A central theme that inspired artificial tactile sensing in this period and beyond was the physiology and function of the human sense of touch. Harmon (1984), in his last article “Tactile Sensing for Robots,” covered the physiology of the human tactile sense in some detail, including the types and properties of tactile mechanoreceptors. In his view, “consideration of some of what we know about ourselves could illuminate or at least stimulate our machine design.” He also conveyed that human touch is fundamentally an active sense, in that “the hand should properly be considered the sense organ for touch rather than the mechanoreceptors, and…emphasis ought to be on the active seeking of information by the exploring hand.” At that time, these aspects of human touch were being explored in the life sciences, some of which translated to robotics (e.g., Lederman and Pawluk (1992) in a book by Nicholls (1992)).

Mechanoreceptor properties at the human fingertip.

Based on Jayawant (1989), Table 1, combined with details from Dargahi and Najarian (2004), Table 2, and Dahiya et al. (2010), Figure 3. See Figure 10 for a diagram of human tactile skin.

The layered structure of fingertip skin. MI, Merkel’s neurite complex; Mr, Meissner corpuscle; R, Ruffini cylinder; P, Pacinian corpuscle. Image from Jayawant (1989), Figure 1.

Howe (1993) also described some lessons from human tactile sensing for robot dexterity, including that a diversity of receptors in skin and muscle respond to a variety of stimuli. He emphasized that these receptors can be highly sensitive (e.g., ∼1 μm perceivable static height change), but are also hysteretic, nonlinear, time-varying and respond to many physical parameters simultaneously. In addition, nerve conduction is slow (usually <60 m/s), so animals use feedforward control for voluntary actions in tasks requiring quick reactions. Although robots can use faster signaling, anticipatory control may likewise help perform such tasks.
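Howe’s point about slow conduction can be made concrete with rough arithmetic. Assume, for illustration only, a fingertip-to-brain path of about 1 m and the 60 m/s conduction speed quoted above. Synaptic and central processing delays are ignored here, and they would only add to the total:

$$
t_{\text{one-way}} \approx \frac{d}{v} = \frac{1\ \text{m}}{60\ \text{m/s}} \approx 17\ \text{ms},
\qquad
t_{\text{loop}} \approx \frac{2d}{v} \approx 33\ \text{ms}.
$$

Even this lower bound on a sense-and-respond loop is tens of milliseconds. That is consistent with feedforward control being needed for tasks that demand quick reactions.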

His focus was on “tactile sensing and control of robot manipulation” (Howe, 1993), related to a chapter by Howe and Cutkosky (1992) that is now out of print. Specifically, dexterous manipulation “requires control of forces and motions at the contact [with] the environment, which can only be accomplished through touch.” This perspective contrasted with earlier reviews, which focused mainly on hardware and applications but did not address how tactile feedback could be used to control a robot in performing those tasks. The observation that “we lack a comprehensive theory that defines sensing requirements for various manipulation tasks” remains true today, as do the sources of knowledge he identified: “investigations of human sensing and manipulation, and mechanical analyses of grasping and manipulation.”

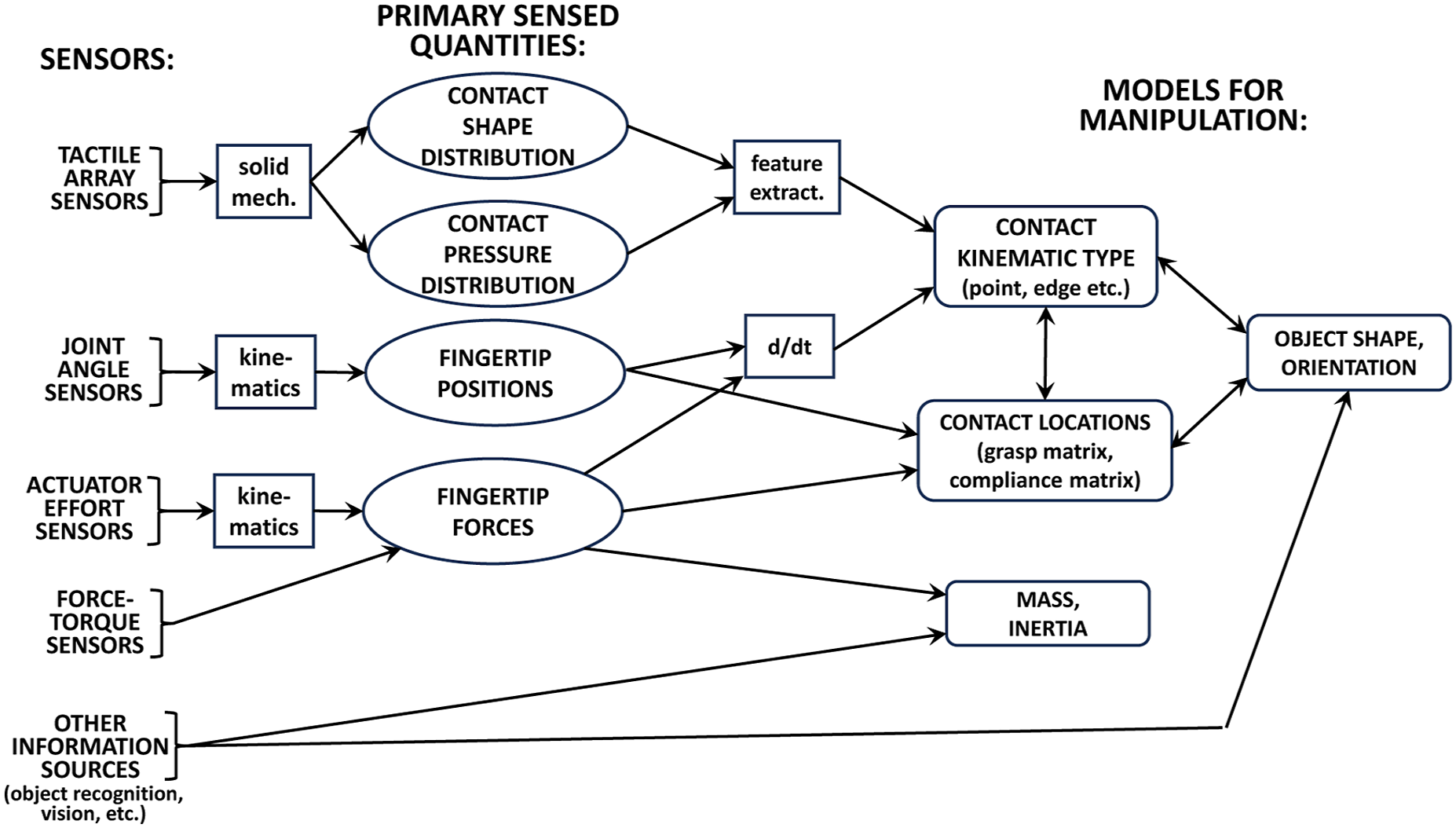

In considering control as the primary use of tactile sensing, Howe (1993) distinguished two ways in which tactile sensing can provide useful information for manipulation: (1) geometric features, including contact shapes and forces; and (2) contact-condition features, including local friction, slip, and transitions between different stages of the task (e.g., making contact or losing grip). Touch-derived quantities such as contact shape/pressure and fingertip positions/forces can then be input to models used in controlling the manipulation of object shape and pose (see Figure 11). Similar connections can be made to models for detecting task phases, using dynamic information such as contact vibration and slip detection (Howe, 1993, Figure 4).

Use of geometric touch information in manipulation. The diagram links types of sensor (left) to their primary sensed quantities (middle) and how they update models for manipulation (right). Based on Howe (1993), Figure 2.
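As a rough illustration of Howe’s second category, the sketch below detects two task-phase transitions (making contact and losing contact) from a stream of total contact force. It is a minimal sketch under stated assumptions. It uses simple force thresholds with hysteresis rather than the vibration-based dynamic sensing Howe describes, and the function name and threshold values are hypothetical.

```python
from typing import List, Tuple


def detect_contact_events(
    total_force: List[float],
    on_threshold: float = 0.5,   # hypothetical contact-onset threshold (N)
    off_threshold: float = 0.2,  # hypothetical contact-release threshold (N)
) -> List[Tuple[int, str]]:
    """Return (sample index, event) pairs marking task-phase transitions.

    Two thresholds give hysteresis, so a noisy signal hovering near a
    single threshold does not produce rapid spurious on/off events.
    """
    if off_threshold >= on_threshold:
        raise ValueError("off_threshold must be below on_threshold")
    events: List[Tuple[int, str]] = []
    in_contact = False
    for i, f in enumerate(total_force):
        if not in_contact and f >= on_threshold:
            in_contact = True
            events.append((i, "contact_made"))
        elif in_contact and f <= off_threshold:
            in_contact = False
            events.append((i, "contact_lost"))
    return events


# Usage: a press-and-release whose plateau dips briefly to 0.4 N.
signal = [0.0, 0.1, 0.6, 0.9, 0.4, 0.8, 0.1, 0.0]
print(detect_contact_events(signal))
```

With a single 0.5 N threshold, the brief dip at sample 4 would register as a lost grip. The lower release threshold absorbs it, at the cost of reacting slightly later to a genuine release.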

These reviews by Nicholls and Lee (1989), Howe (1993), and others led to a more realistic view of tactile sensing technology. The final theme of this foundational generation of tactile robotics was a dawning realization of the major challenges in making these systems a practical reality, displacing the optimistic forecasts of the first reviews. This sentiment is expressed in the closing comments of the last few surveys of this generation, quoted below. “Despite the findings of the Harmon (1982) survey, tactile sensing has not made any significant contribution to real applications in factory systems” (Nicholls and Lee, 1989). “Although the rapid growth in interest in the field of tactile sensing has initiated significant progress in [tactile] hardware, very little fall-out in real applications has occurred…Future widespread use of [tactile] systems is still foreseen, but the time scale for these events to occur should be realistically correlated with the great theoretical and technical difficulties associated with this field, and the economic factors that ultimately drive the pace of its development” (De Rossi, 1991). “Ten years ago, a commonly cited impediment to progress in tactile sensing was the lack of suitable [devices] and algorithms…The primary issues in touch sensing are [now] the integration of these devices and algorithms into practical manipulation systems.” Moreover, “contact tasks where small forces and displacements must be controlled” are “beyond the capability of even laboratory robots, and understandably industry avoids these tasks” (Howe, 1993).

1995–2009: Tactile robotics winter

There followed a long “tactile robotics winter” from the mid-1990s to the late 2000s when academic activity dropped compared to the earlier foundational generation (Table 1). This slowdown was against a background of growing robotics research (e.g., the primary robotics conference, ICRA, grew from under 900 to over 2100 submissions). It is difficult to attribute the cause, but perhaps a realistic assessment of the challenges dispelled the early optimism that attracted researchers to tactile robotics. Also, the major research efforts to explore tactile sensing technologies (see Tables 1 and 2) had not resulted in a clear leader, but rather many candidates that all had problems.

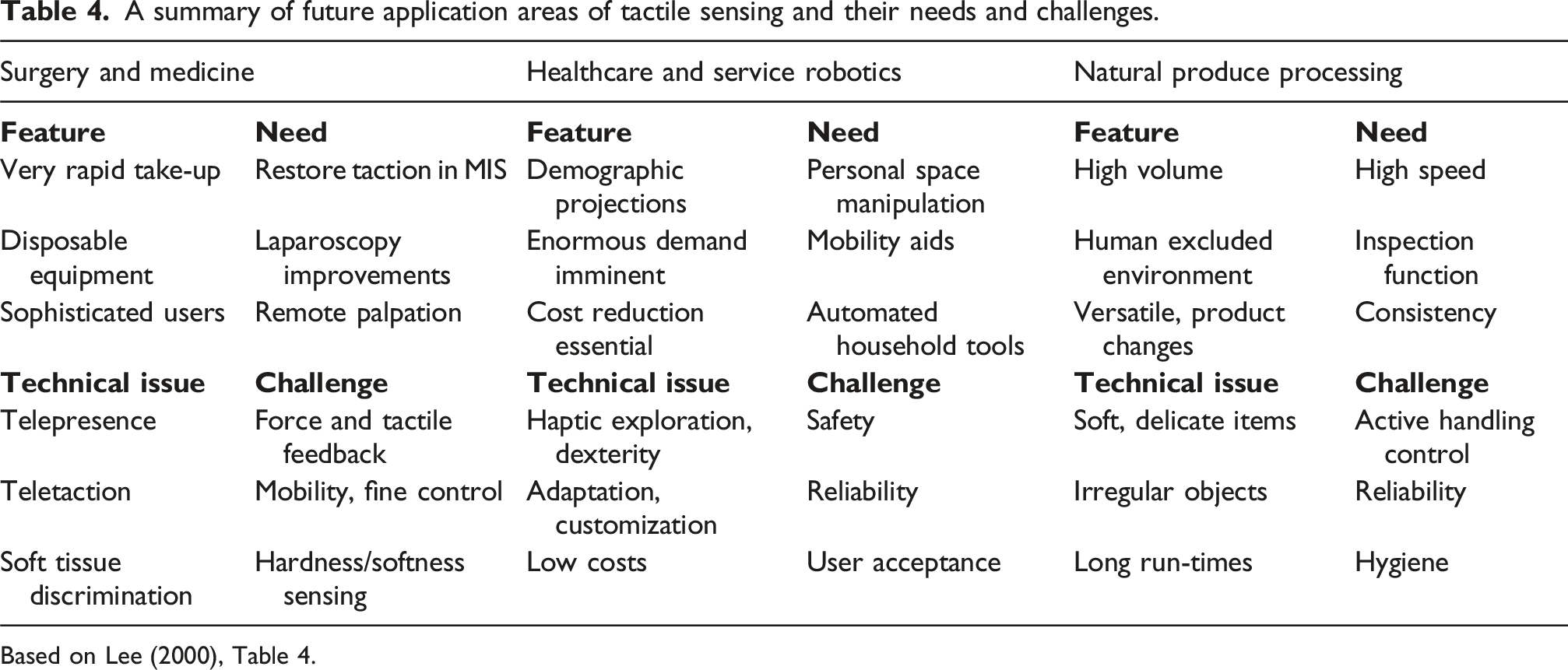

A summary of future application areas of tactile sensing and their needs and challenges.

Based on Lee (2000), Table 4.

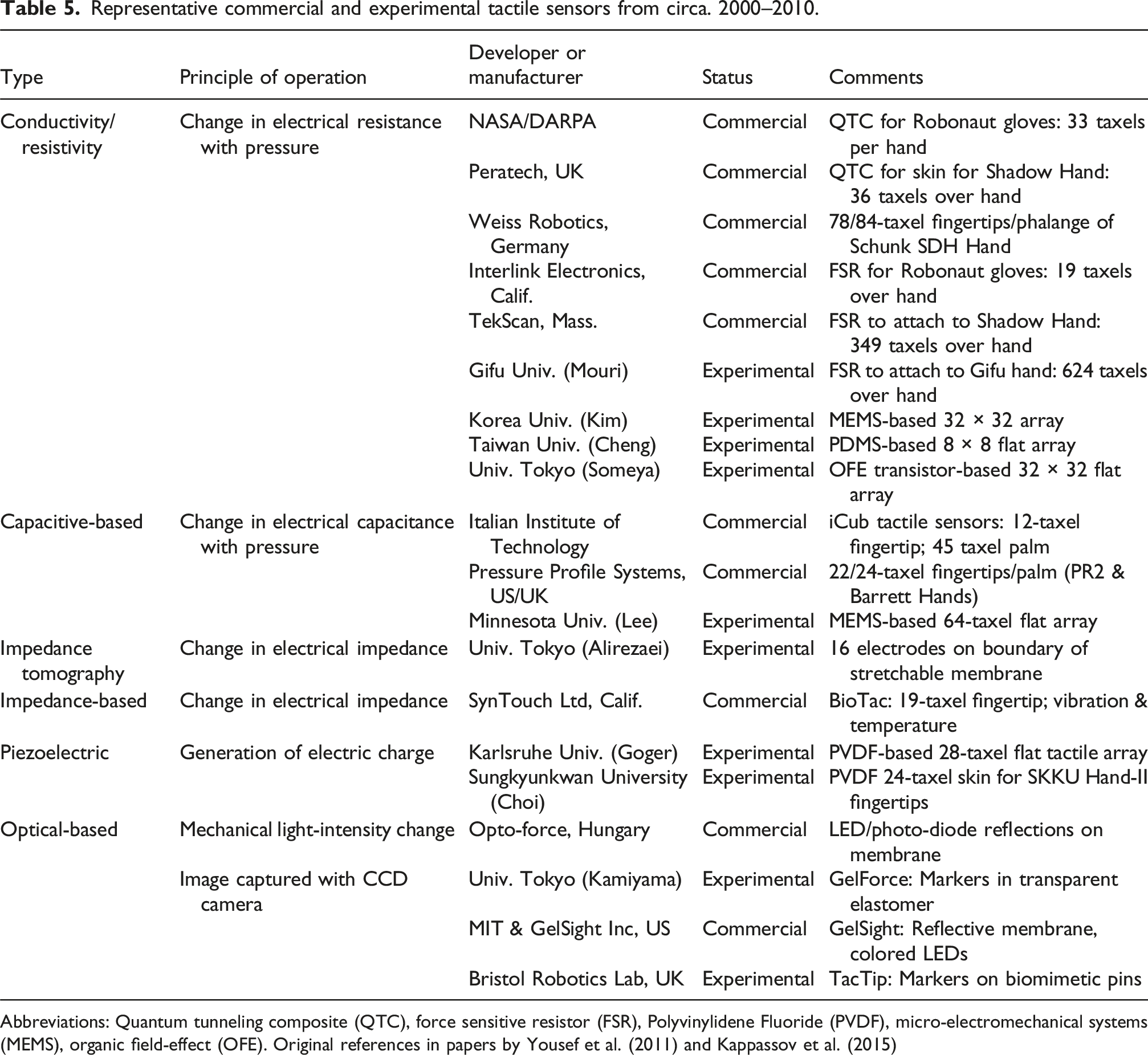

Representative commercial and experimental tactile sensors from circa 2000–2010.

Abbreviations: quantum tunneling composite (QTC), force sensitive resistor (FSR), polyvinylidene fluoride (PVDF), micro-electromechanical systems (MEMS), organic field-effect (OFE). Original references are given in the papers by Yousef et al. (2011) and Kappassov et al. (2015).

The slow progress in tactile robotics was well described by Cutkosky et al. (2008): “Tactile sensing has been a component of robotics for roughly as long as vision. However, [unlike] vision, …tactile sensing always seems to be a few years away from widespread utility.” As to why, they suggested that “one reason for the slow development of tactile sensing technology, compared to vision, is that there is no tactile analog of the CCD or CMOS optical array.” This remains largely the case, although in vision-based tactile sensing the tactile analog of the CCD or CMOS array is arguably that very array.

Similarly, Lee and Nicholls (1999) said that “despite repeated suggestions in many papers regarding the potential importance of tactile sensing to industrial automation and other application areas, the literature reported very few applications beyond the experimental prototype stage and hardly any in serious regular use.” Reflecting on the “reasons for this slow commercial exploitation,” they made four observations. (1) No localized sensory organ: transduction of tactile signals is distributed over a wide area, so creating an artificial tactile skin is more difficult than creating a discrete sensor. (2) Complex sensing: tactile sensing does not simply transduce one physical property into an electronic signal but takes many forms, such as shape, texture, friction, force, pain, and temperature. It is not well understood how these are related, and it is not easy to find suitable analogues within a single device. (3) Difficult to imitate: it is unclear which tactile signals are most worth developing further for applications. In contrast, computer vision research can focus on understanding images because visual data capture is solved, whereas tactile sensing has not yet reached this point and is still concerned with data capture. (4) Lack of availability: in addition to, and because of, these difficulties, there is a “lack of availability of commercial sensors with suitable configurations and characteristics.”

Even though they recognized that “the predicted growth of applications in industrial automation has not eventuated,” Lee and Nicholls (1999) were optimistic about the future of tactile sensing. They saw promising new applications in three main areas: medical procedures, especially “keyhole” or minimal access surgery; rehabilitation/health care and service robotics; and food processing and agriculture. Likewise, Lee (2000) saw that “tactile sensing has undergone a major change of direction” and that it will “soon play a major role in unstructured environments” for those same three application areas. For concreteness, he listed the main characteristics and needs to be satisfied by appropriate technological developments (Table 4), concluding that “we can look forward to the realization of this potential.”

Interest in medical applications followed, inspiring focused reviews of “Tactile sensing technology for minimal access surgery” (Eltaib and Hewit, 2003) in Mechatronics and “Human tactile perception as a standard for artificial tactile sensing” (Dargahi and Najarian, 2004) in Medical Robotics and Computer Assisted Surgery. These were motivated by the views that “restoring a tactile capability to MAS [minimal access surgery] surgeons by artificial means would bring immense benefits in patient welfare and safety” and that “tactile feedback to medical robotic systems [will help] approach the process demonstrated by bare-handed operations.” The latter article also renewed interest in the biomimetics of artificial touch, where it continues to influence new audiences (Table 1). (See the previous section for prior surveys of this topic.)

Similar interest followed in industrial applications, inspiring two reviews in the journal Industrial Robot on “Tactile sensing in intelligent robotic manipulation” (Tegin and Wikander, 2005) and “Advances in tactile sensors design/manufacturing and its impact on robotics applications” (Dargahi and Najarian, 2005). The latter article extended the authors’ earlier treatment (Dargahi and Najarian, 2004) to applications in farming, the food industry, service robotics, and the environment.

More fundamentally, this period also saw progress in understanding the foundations of tactile robotics. The definition of artificial tactile sensing continued to be refined. Lee (2000) redefined it as follows: “tactile sensing is a form of sensing that can measure given properties of an object through physical contact between the sensor and the object” (based on a similar statement by Lee and Nicholls (1999) a year earlier). Both articles rejected the overly narrow earlier definitions, which considered only force sensing over an area, and instead accepted “any property that is measured through contact, including the shape of an object, texture, temperature, hardness, moisture content, etc.”

Lee and Nicholls (1999) also highlighted a theoretical issue for tactile sensing research, the inverse tactile transduction problem. The forward problem of modeling a tactile sensor’s output given an object shape and contact can be well posed. However, the inverse problem of inferring those low-dimensional object features from high-dimensional tactile data can be ill-posed (Nowlin, 1991). Traditionally, this problem motivated the design of sensors that give sharp tactile images with minimal “cross-talk,” analogous to CCD imaging arrays or touch-screens. However, such tactile arrays can be low-resolution (Table 5) or too stiff to use as robotic skins. More recently, the inverse problem has been largely forgotten, since data-driven methods (e.g., deep learning) can accurately model complex statistical relationships within tactile data.
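To make the inverse problem concrete, the sketch below assumes a hypothetical one-dimensional taxel array whose elastomer spreads each point of pressure over neighboring taxels (Gaussian cross-talk). Computing the sensor output from a known pressure profile is a stable matrix product, but directly inverting that matrix amplifies even tiny sensor noise. Tikhonov regularization is used here for illustration because it is a classical, generic remedy for ill-posed inverse problems. It is not the method of Nowlin (1991) or Lee and Nicholls (1999). The example uses NumPy.

```python
import numpy as np


def crosstalk_matrix(n: int, sigma: float) -> np.ndarray:
    """Hypothetical forward model of a 1D taxel array: taxel i sees a
    Gaussian-weighted blend of the surface pressure at nearby positions j
    (cross-talk through the elastomer), so the output is s = K @ p."""
    idx = np.arange(n)
    dist2 = (idx[:, None] - idx[None, :]) ** 2
    return np.exp(-dist2 / (2.0 * sigma**2))


def regularized_inverse(K: np.ndarray, s: np.ndarray, lam: float) -> np.ndarray:
    """Tikhonov estimate: argmin_p ||K p - s||^2 + lam * ||p||^2."""
    n = K.shape[1]
    return np.linalg.solve(K.T @ K + lam * np.eye(n), K.T @ s)


n = 32
K = crosstalk_matrix(n, sigma=2.0)
p_true = np.zeros(n)
p_true[10:14] = 1.0                     # a small indenter over taxels 10-13
s_clean = K @ p_true                    # forward problem: a stable product
rng = np.random.default_rng(0)
s_noisy = s_clean + 1e-3 * rng.standard_normal(n)  # tiny sensor noise

p_naive = np.linalg.solve(K, s_noisy)   # direct inverse: amplifies noise
p_reg = regularized_inverse(K, s_noisy, lam=1e-2)

err_naive = np.linalg.norm(p_naive - p_true)
err_reg = np.linalg.norm(p_reg - p_true)
print(f"condition number of K: {np.linalg.cond(K):.1e}")
print(f"error, direct inverse:      {err_naive:.2e}")
print(f"error, regularized inverse: {err_reg:.2e}")
```

In this setup, the direct inverse typically yields errors orders of magnitude larger than the pressure itself. The regularized estimate trades some blurring (bias) for stability, and the weight `lam` sets that trade-off. This is one way to see why low cross-talk arrays were traditionally favored.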

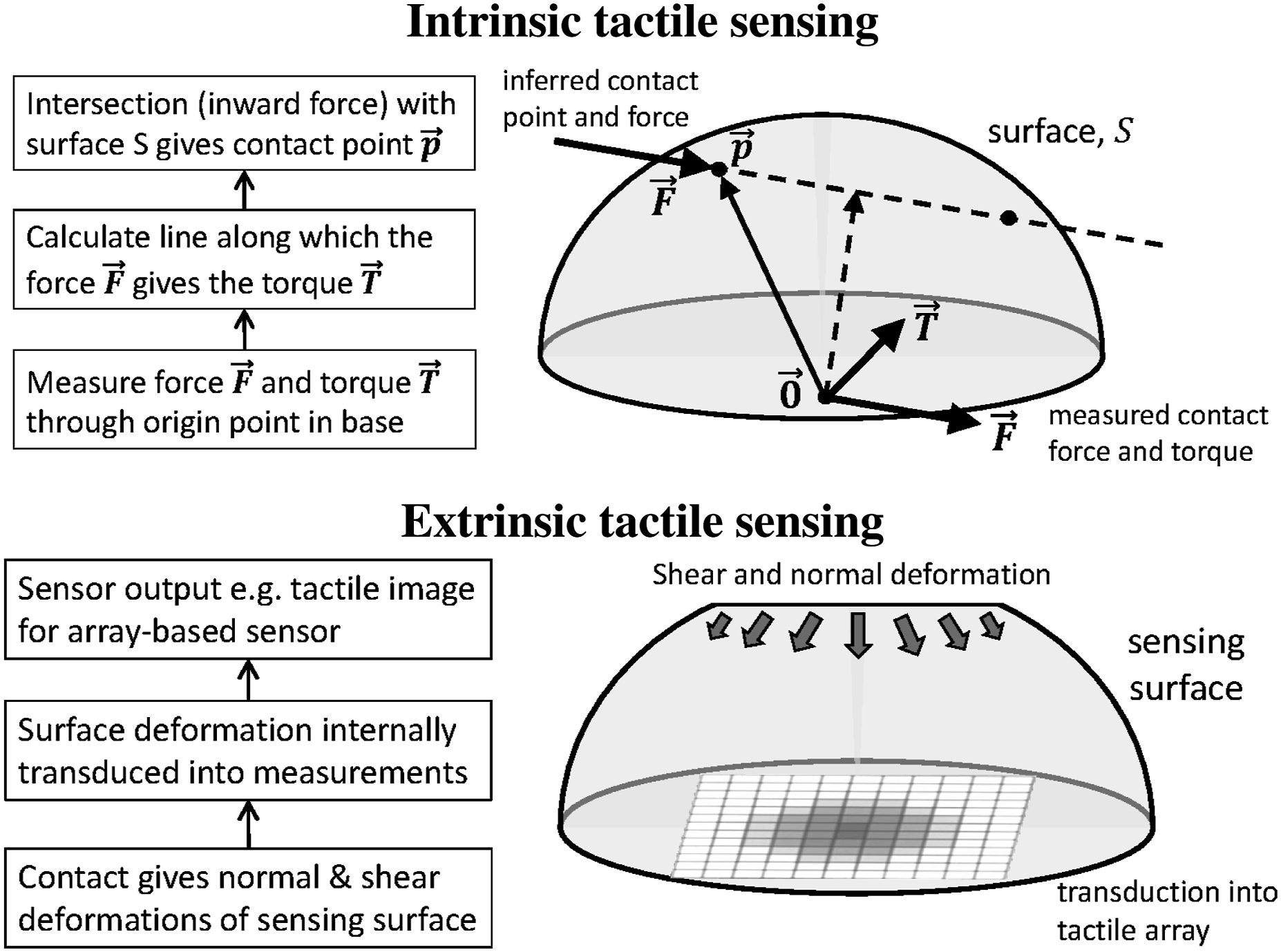

Likewise, Cutkosky et al. (2008) set out the foundations of tactile robotics in a thorough treatment of “Force and tactile sensing.” In particular, they clarified the relation between force, tactile, and touch sensing, which is still often confused and which earlier definitions had addressed only indirectly. They noted that the most important quantities measured by touch sensing are shape and force, and that tactile quantities are averages or spatial distributions of these over a contact area. Devices that measure an average or resultant quantity are intrinsic tactile sensors and are based on force sensing (Bicchi et al., 1989). Extrinsic tactile sensors derive measurements from the contact interface, most commonly using one of the many types of tactile array (illustrated in Figure 12).

Types of tactile sensing. Top: Intrinsic tactile sensing using force and torque measurements, assuming a known sensor surface shape (see Cutkosky et al. (2008), Figure 19.7). Bottom: Extrinsic tactile sensing with a tactile array, where contact is transduced into an array output.

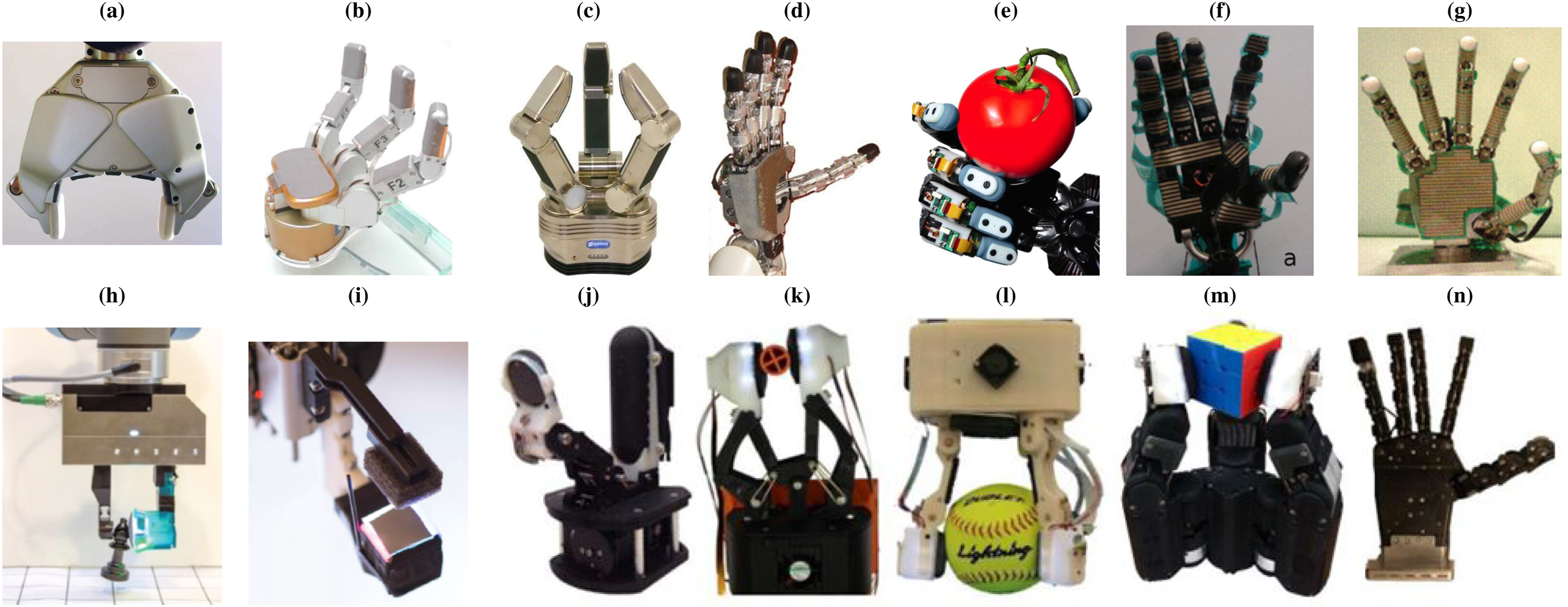
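Intrinsic tactile sensing, as described above, can be illustrated with the simplest known surface shape. The sketch below makes three assumptions. First, the fingertip surface is flat, at height `h` above the origin of a six-axis force/torque sensor. Second, there is a single point contact. Third, that contact transmits a pure force, so the moment measured about the sensor origin is m = r × f. Solving the x and y components of that equation for the contact location then gives a closed form. Curved surfaces generally require solving a nonlinear equation instead. The function names are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Vector cross product a x b."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def contact_point_on_plane(force: Vec3, moment: Vec3, h: float) -> Vec3:
    """Locate a single point contact on the plane z = h (sensor frame).

    Assumes the contact transmits a pure force f (no contact torque), so the
    moment measured about the sensor origin is m = r x f with r = (x, y, h).
    """
    fx, fy, fz = force
    mx, my, _mz = moment  # _mz is not needed for a flat surface
    if abs(fz) < 1e-9:
        raise ValueError("normal force too small to locate the contact")
    # x-component: mx = y*fz - h*fy  ->  y = (mx + h*fy) / fz
    # y-component: my = h*fx - x*fz  ->  x = (h*fx - my) / fz
    return ((h * fx - my) / fz, (mx + h * fy) / fz, h)


# Usage: simulate a reading from a known contact, then recover it.
r_true = (0.01, -0.005, 0.02)   # meters; surface 20 mm above the sensor origin
f = (0.3, -0.2, -2.0)           # newtons; pressing down into the surface
m = cross(r_true, f)
print(contact_point_on_plane(f, m, h=0.02))
```

For a pure point force, the unused z-moment should equal x·f_y − y·f_x. Comparing the two offers a simple check for contact torques or multiple contacts, which violate the single-contact assumption.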

Even though academic progress seemed slow in this middle generation of tactile robotics, the period produced many new commercial and experimental sensors. Some of these are collected in Table 5 and are presented in a similar way to Table 1, which showed tactile sensors from the mid-1980s. Many of the commercial tactile sensors of the 2000s were integrated with robot hands, along with some from academic laboratories (see Figure 13).

Tactile-sensorized robot grippers and hands. Top row: examples circa 2000–2010 from Table 5. (a) PR2 gripper with 2 PPS RoboTouch 22-taxel fingertips (circa 2009). (b) Barrett hand with 3 PPS RoboTouch 22-taxel fingertips and 24-taxel phalanges, and a 24-taxel palm (circa 2009). (c) Schunk Dexterous Hand with 3 Weiss 78-taxel piezoresistive tactile fingertips and 84-taxel phalanges (circa 2008). (d) iCub humanoid robot hand with 5 capacitive 13-taxel fingertips and a 44-taxel palm (circa 2010). (e) Shadow Hand with 5 SynTouch multimodal 19-taxel BioTac tactile fingertips. (f) Shadow Dexterous Hand with TekScan 355-taxel FSR tactile skin (circa 2010). (g) Gifu hand III with 624-taxel FSR tactile skin (circa 2002). Bottom row: examples with vision-based tactile sensors, circa 2015–2020. (h, i) Grippers with a GelSight fingertip. (j) Model-M2 (2016), (k) Model-GR2 (2018), and (l) Model-O (2020) Yale Open Hand grippers, (m) Shadow Modular Grasper (2019), and (n) Pisa/IIT SoftHand (2020), with 1, 2, 3, 3, and 5 TacTip fingertips, respectively. Top-row images from Girão et al. (2013) and Saudabayev and Varol (2015); bottom-row images from Lepora (2021) and Yuan et al. (2017); the original references can be found in those papers. See Figure 16 for more examples.

A comparison of tactile sensors from successive generations (Tables 1 and 5) shows a consolidation that resulted in many commercial products. Most technology types were the same, such as resistive, capacitive, and piezoelectric tactile sensors. Some types became more prevalent; for example, there were many FSR technologies, and optical sensing began to be established. A few technology types were new, such as impedance-based tactile sensing. In particular, the BioTac would become influential in the early part of the next generation of tactile robotics but was ultimately discontinued by its end. Overall, this diversity of technology types, and their compatibility with integration into robot hands, would help drive the expansion in the generation that followed.

2010–24: Expansion and diversification

The recent period from 2010 is characterized by a rapid expansion and diversification of tactile robotics. This change is indicated in part by the large increase in the number of reviews (on average 2–3 per year up to 2018, increasing to

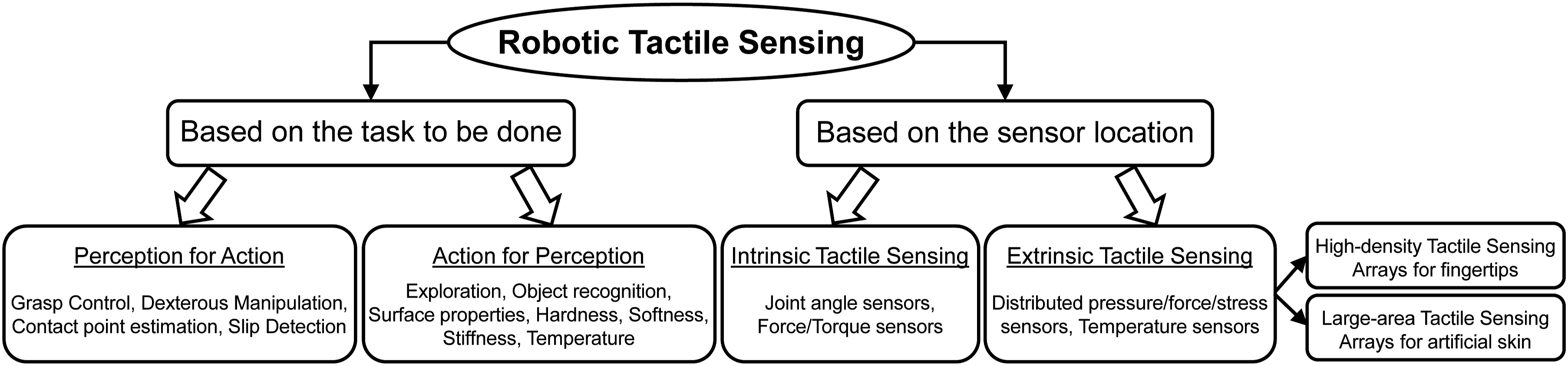

This generation began with several activities that helped unify and accelerate tactile robotics research. The landmark review by Dahiya et al. (2010) on “Tactile sensing – From humans to humanoids” authoritatively covered all of tactile robotics at that time, from the human sense of touch to the role, importance, and state of tactile sensing in robotics (see Figure 14 for their categorization of robotic tactile sensing). The authors were motivated by the ambition to cover the bodies of humanoid robots with tactile skin. Humanoids such as the iCub (Metta et al., 2010) were gaining interest at the time, for example for human–robot interaction (Argall and Billard, 2010). These activities led to a “2011 Special Issue on a Robotic Sense of Touch” in Transactions on Robotics (Dahiya et al., 2011), which drew further research attention to tactile robotics.

Dahiya et al. (2010) separated robotic tactile sensing by the tasks to be accomplished (left side) and the sensor location within the robot body (right side). Perception for action covers tasks where tactile sensing is used to control the end effector as it interacts with objects. Action for perception covers tasks where control is used to move the tactile sensor to explore or better recognize object properties. Intrinsic tactile sensors are placed within the mechanical structure (body) of the robot, for example, force sensors at the joints or fingertip base, and are akin to kinesthetic or proprioceptive sensing. Extrinsic tactile sensors are sited at or near the contact interface, for example, high-density tactile fingertips or large-area tactile skin, and are analogous to cutaneous sensing.

Between the review by Dahiya et al. (2010) and 2024, there were no other surveys of the whole of tactile robotics. Instead, there have been influential treatments of themes within tactile robotics. Notable examples from Figures 1 and 15 (Tables 7–9) include: “Evolution of electronic skin (e-skin)” (Hammock et al., 2013), “Tactile sensing in dexterous robot hands” (Kappassov et al., 2015), “GelSight: High-resolution tactile sensors for estimating geometry and force” (Yuan et al., 2017), “TacTip family: Soft optical tactile sensors with 3D-printed biomimetic morphologies” (Ward-Cherrier et al., 2018), and “Tactile Internet: Applications and challenges” (Fettweis, 2014).

The prevalent themes of those reviews appear to capture the main areas of activity in tactile robotics over this generation well: e-skins, tactile robot hands, vision-based tactile sensing, soft/biomimetic technologies, and the tactile Internet. In particular, these five themes usefully partition the articles listed in Tables 7–9 and will form the basis for describing the expansion and diversification of this recent generation of tactile robotics.

Separation of tactile fingertips and e-skins

An important and timely insight made by Dahiya et al. (2010) is: “extrinsic tactile sensing is further categorized in two ways – first, for highly-sensitive parts (e.g., fingertips), and second, for less-sensitive parts (e.g., palm)” (see Figure 14, right). This separation into fingertips and artificial skins has proved insightful for the progress of tactile sensing since.

During this recent generation, the development of tactile skins has gained a momentum that is distinct from other areas of tactile robotics. More than half of the research activity on tactile sensing has focused on artificial skins or e-skins, with a rapid expansion of the research in those areas (Figure 15). Researchers in materials science have been attracted to tactile robotics, growing the field with publications in influential materials science journals such as Advanced Materials and Nature Materials (Table 8). This includes “Evolution of electronic skin (e-skin)” (Hammock et al., 2013), “Pursuing prosthetic electronic skin” (Chortos et al., 2016), and “Electronic skin: Recent progress and future prospects…” (Yang et al., 2019).

This diversification of tactile sensing into materials science has resulted in two separate research communities that have distinct priorities and research methods. Although there is some overlap in publishing venues, such as IEEE Sensors, much of the traditional tactile robotics community has continued with robotics and engineering journals and conferences, while the artificial skin community is based within electronics and materials science. Accordingly, the present article splits contributions in tactile fingertips and e-skins between Figures 1 and 15 (Tables 7 and 8). Currently, judging by the number of citations, electronic skin research comprises the larger and more active community.

In the next generation of tactile robotics, the two communities have the potential to achieve a far higher impact if they converge. For example, the potential benefits of e-skins, such as wide skin coverage and seamless integration onto the surface of robots, could be combined with the advances in dexterity from robot learning currently being developed for robotic hands with tactile fingertips.

Tactile robotic hands

Much of the foundational research on tactile sensing was motivated by a desire to create human-like robot hands. For example, in their early review of “Tactile sensing and the gripping challenge,” Dario and De Rossi (1985) concluded that “at some point in the future, the solution may be an artificial hand with intelligent control, able to process and use tactile information adaptively.” However, there was little research on multi-fingered tactile robotic hands until the 2000s, after which several commercial options became available (see Figure 13, top row). According to the article “A century of robot hands” (Piazza et al., 2019), the decade after 2010 saw as many new robotic hands as the 90 years before it, and interest in tactile hands has increased likewise.

To show the variety of tactile robotic grippers and hands in use during that period, photos of some of the tactile sensing technologies collected in Table 5 are presented together in Figure 13. Interestingly, most of these grippers and hands (panels (a)–(e)) have dedicated tactile sensors for the fingertips, but some also have them in the phalanges (panels (b) and (c)) and/or palms (panels (b) and (d)). Two of the anthropomorphic hands are covered in tactile skin arrays (panels (f) and (g)). Impressively, the Gifu Hand shown in panel (g) had the most tactile coverage and was also the earliest (from 2002).

This availability of new tactile robotic hand technologies led to their adoption in many laboratories and to growth in this research area. This interest was reflected in two influential surveys of dexterous tactile robot hands: “Tactile sensing for dexterous in-hand manipulation in robotics” (Yousef et al., 2011) and “Tactile sensing in dexterous robot hands” (Kappassov et al., 2015). The expanding activity in this area was also evident in other reviews of tactile hands, such as Girão et al. (2013) and Saudabayev and Varol (2015). Since then, tactile hands have mainly been covered within reviews of other topics, first vision-based tactile sensing (Yuan et al., 2017; Ward-Cherrier et al., 2018), then soft robotics (Wang et al., 2018; Subad et al., 2021; Zhou and Lee, 2021; Qu et al., 2023).

Reflecting on why progress in the use of tactile robotic hands has been challenging, Yousef et al. (2011) offered several insights. First, even the simplest tasks that humans perform, such as multi-finger in-hand manipulation, are difficult to study in practice. Consequently, neither robotics nor neuroscience has a full understanding of the requirements that tactile skins for hands must meet. Second, tactile sensing technologies are limited in their force range, spatial/temporal resolution, sensing area, and shear force sensing. Therefore, robot hand control has instead relied on traditional kinematic models, complicated offline planning, and overuse of external sensors such as vision. These challenges must be overcome to achieve reactive manipulators that can handle objects with ease despite uncertainty, modeling inaccuracies, and nonlinear dynamics.

Kappassov et al. (2015) expressed a complementary view that future research work in dexterous manipulation should focus on the investigation of autonomous control algorithms that apply tactile servoing and force control. Combined with tactile-based object recognition and grasp stabilization, this would allow robot hands to operate in real-world scenarios.
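Tactile servoing, in its simplest form, moves the sensor so that a tactile feature tracks a reference. The sketch below is a minimal proportional version under stated assumptions. The feature is the pressure-weighted centroid of a 2D taxel array plus its total pressure. A positive velocity along a grid axis is assumed to move the sensor toward higher indices, so the contact shifts toward lower indices in the sensor frame. The gains and function names are hypothetical. Practical controllers would add filtering, saturation, and proper force control.

```python
from typing import List, Tuple

Grid = List[List[float]]


def pressure_centroid(taxels: Grid) -> Tuple[float, float, float]:
    """Pressure-weighted (row, col) centroid and total pressure of a taxel grid."""
    total = sum(sum(row) for row in taxels)
    if total <= 0.0:
        raise ValueError("no contact detected")
    r = sum(i * p for i, row in enumerate(taxels) for p in row) / total
    c = sum(j * p for row in taxels for j, p in enumerate(row)) / total
    return r, c, total


def tactile_servo_step(taxels: Grid, target_rc: Tuple[float, float],
                       target_total: float, k_pos: float = 0.1,
                       k_force: float = 0.05) -> Tuple[float, float, float]:
    """One proportional tactile-servoing step with hypothetical gains.

    Returns (v_row, v_col, v_normal). Assumed conventions: moving the sensor
    toward +row/+col shifts the contact toward -row/-col in the sensor frame,
    and positive v_normal presses the sensor further into the object.
    """
    r, c, total = pressure_centroid(taxels)
    v_row = k_pos * (r - target_rc[0])           # move toward the contact to center it
    v_col = k_pos * (c - target_rc[1])
    v_normal = k_force * (target_total - total)  # press harder if contact is too light
    return v_row, v_col, v_normal


# Usage: contact at the corner of a 3 x 3 array; goal is the center, total 2.0.
grid = [[0.0, 0.0, 0.0],
        [0.0, 0.0, 0.0],
        [0.0, 0.0, 1.0]]
print(tactile_servo_step(grid, target_rc=(1.0, 1.0), target_total=2.0))
```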

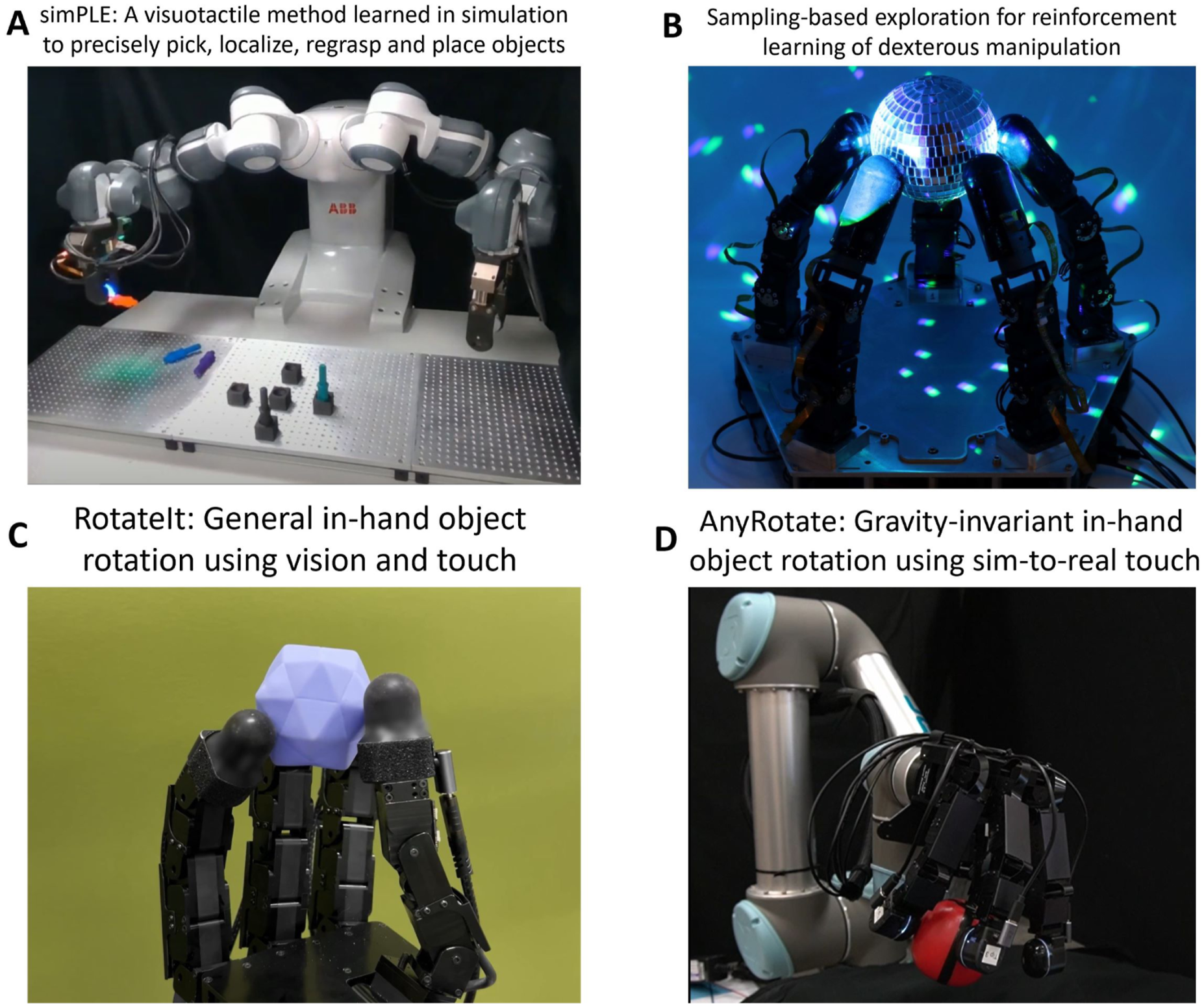

Very recently, the field has undergone a step change, with multiple demonstrations of in-hand object manipulation by multi-fingered robotic hands (Figure 16), as surveyed in a commentary in Science Robotics (Lepora, 2024). All of those hands used high-resolution tactile sensing in the fingertips, consistent with comments by Yousef et al. (2011). They also used variants of tactile control trained via deep reinforcement learning in a physics simulation. By encountering many objects in simulation, the learned controllers can manipulate new objects while maintaining a stable grasp.

State of the art in robot dexterity using sim-to-real methods with deep reinforcement learning applied to tactile data from optical tactile sensors. From Lepora (2024), where references to the individual studies can be found.

Vision-based tactile sensing

Vision-based tactile sensing has been a promising technology since the origins of tactile robotics. The first tactile sensing system was vision-based (Strickler, 1966a, 1966b), using a fiber-optic bundle to transmit an internal view of a deformable skin surface to a TV camera (shown in Figure 2). By the mid-1980s, many different optoelectronic technologies for tactile sensing were being explored, including fiber optics, photodetector/LED pairs, and the integration of a high-resolution CCD camera within the fingertip of a gripper (see Figure 8 and Table 1). As noted earlier, this last approach, by Mott, Lee, and Nicholls from Aberystwyth, Wales, anticipated by 30 years the emergence of vision-based tactile sensing as a main theme of tactile robotics (Mott et al., 1984).

In the mid-2010s, vision-based tactile sensing began to expand rapidly as a research field. For example, the first two dedicated reviews, “GelSight: High-resolution tactile sensors for estimating geometry and force” (Yuan et al., 2017) and “TacTip family: Soft optical tactile sensors with 3D-printed biomimetic morphologies” (Ward-Cherrier et al., 2018), now count among the most cited reviews of that generation of tactile robotics (Table 7). Each focused on a specific vision-based tactile sensor design and was written by the team developing that technology. The GelSight uses graded light intensities, like Mott et al. (1984), whereas the TacTip uses discrete markers. Both types of design can be effective for robot dexterity: two examples of state-of-the-art manipulation in Figure 16 use GelSight-type sensors (top and bottom left), and another uses TacTips (bottom right).

As interest in these technologies grew, there followed many reviews of vision-based tactile sensing (e.g., Shimonomura (2019); Abad and Ranasinghe (2020); Shah et al. (2021); Lepora (2021); Zhang et al. (2022); Li et al. (2023)) that covered a diversity of vision-based tactile sensor variants. Examples include ChromoTouch, DIGIT, DigiTac, FingerVision, F-Touch, GelForce, GelTip, GelSlim, MultiTip, NeuroTac, OmniTact, Soft-bubble, and Tac3D (references in the reviews). All of these tactile sensors use camera images to represent contact information (Li et al., 2025) in one of two ways: intensity-based, where continuous variations in light intensity show the skin indentation, or marker-based, which images discrete features coupled to the skin deformation. These mechanisms can be further subdivided: intensity-based may use reflected light (as in the GelSight) or refracted light (Mott et al., 1984); marker-based may use simple markers inside the skin, or morphological structures that transform the skin deformation (as in the TacTip). Other options (e.g., transparency) and combinations also exist.
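For marker-based sensors, the raw tactile signal is the motion of markers between a reference (undeformed) frame and the current frame. The sketch below assumes that marker centroids have already been detected in both images (for example by blob detection, not shown). It also assumes that markers move less than about half their spacing between frames, so nearest-neighbor matching is valid. The mean displacement gives a crude shear cue and the mean magnitude a crude contact cue. These summaries, the `max_jump` parameter, and the function names are illustrative, not the processing pipeline of any particular sensor.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def marker_displacements(ref: List[Point], cur: List[Point],
                         max_jump: float = 5.0) -> List[Point]:
    """Displacement (pixels) from each reference marker to its nearest
    detected marker in the current frame; matches farther than max_jump
    (hypothetical) are treated as lost markers and skipped."""
    disp: List[Point] = []
    for rx, ry in ref:
        cx, cy = min(cur, key=lambda p: (p[0] - rx) ** 2 + (p[1] - ry) ** 2)
        d = (cx - rx, cy - ry)
        if math.hypot(d[0], d[1]) <= max_jump:
            disp.append(d)
    return disp


def summarize(disp: List[Point]) -> Tuple[float, float, float]:
    """Mean displacement vector (crude shear cue) and mean displacement
    magnitude (crude contact/indentation cue)."""
    if not disp:
        return 0.0, 0.0, 0.0
    n = len(disp)
    mean_x = sum(d[0] for d in disp) / n
    mean_y = sum(d[1] for d in disp) / n
    mean_mag = sum(math.hypot(d[0], d[1]) for d in disp) / n
    return mean_x, mean_y, mean_mag


# Usage: a 3 x 3 marker grid (10 px spacing) sheared by (1.0, 0.5) px.
ref = [(10.0 * i, 10.0 * j) for i in range(3) for j in range(3)]
cur = [(x + 1.0, y + 0.5) for x, y in ref]
print(summarize(marker_displacements(ref, cur)))
```

Intensity-based sensors would replace the matching step with per-pixel comparisons of light intensity against the reference image. In both cases, learned models increasingly take over the step from raw images to contact features.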

Why this growing interest? At heart, it is because vision-based tactile sensing is proving effective in enabling robot dexterity, for example in-hand manipulation (Figure 16). This appears to be due to an alignment of several technologies: (1) miniature digital cameras, first webcams, which allowed cheap and easy construction of test sensors, then smartphone cameras, which have led to smaller designs suitable for fingertips; (2) 3D printers, first for making the sensor body and molds for the skin, then multi-material printing for rapid design and fabrication of the skin and other non-electronic parts; and (3) neural network software libraries, which can extract useful information from high-resolution images, specifically contact-related features from tactile images.

Because these constituent technologies are still improving, vision-based tactile sensing will also continue to improve (e.g., through the use of multiple cameras). However, further progress could come from integrating it with other themes of tactile robotics, such as complementary aspects of e-skin technology.

Soft and biomimetic technologies

Soft and biomimetic approaches have a long history of inspiring robotic touch, dating back to the beginnings of the research field. Harmon (1984) first considered how human touch might guide robot touch, from mechanoreceptor transduction to the active control of touch, taking the perspective that “natural systems provide existence proofs of engineering success.” This theme of drawing on the biology of human skin to design artificial sensors has continued throughout the history of tactile robotics (Dario and De Rossi, 1985; Jayawant, 1989; Howe and Cutkosky, 1993; Lee and Nicholls, 1999; Dargahi and Najarian, 2004; Cutkosky et al., 2008; Dahiya et al., 2010; Yousef et al., 2011). Meanwhile, soft robotics often draws on nature for novel material properties and body morphologies (Pfeifer et al., 2007; Kim et al., 2013). This leads to a natural synergy between soft and tactile robotics, as skin is itself a soft biological structure.

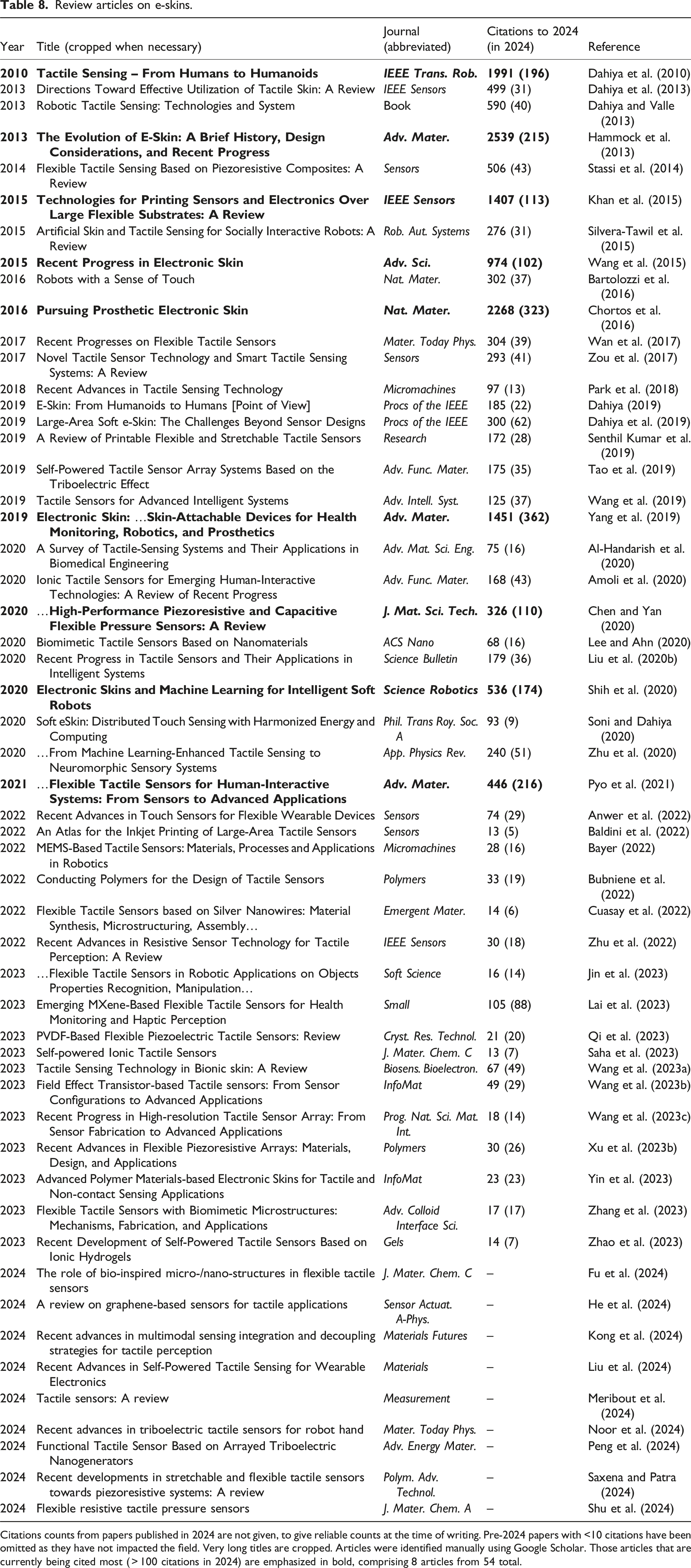

Some aspects of human skin physiology that have biomimetic tactile sensor counterparts (see Lepora (2021), Table 1).

Embodied intelligence combines the intelligence that arises from a body's morphology with that from traditional computation. Its central consideration is the physical interaction between a robot and its environment (Liu et al., 2020a; Li et al., 2020), as in tactile object recognition (Luo et al., 2017; Liu et al., 2017). These topics, and their origins in active touch, are understudied yet core to a future AI of touch.

This combination of the computational and morphological aspects of tactile sensing is central to many works. In “Tactile sensors for friction estimation and incipient slip detection,” Chen et al. (2018) considered how the tactile skin surface facilitates grip control. Human fingertips have papillary ridges (fingerprints), one function of which is to help signal a loss of grip before an object slips. This understanding has inspired the design of some tactile sensors, such as the PapillArray covered in that review. More generally, there has been sustained interest in tactile slip detection, because it is fundamental to secure grasping and manipulation (Francomano et al., 2013).
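As a minimal sketch of how tactile force readings could support friction estimation and grip control, the following assumes a simple Coulomb friction model, in which gross slip begins when the tangential force F_t reaches μF_n. The `ContactSample` type and its slip flag, the 20% warning margin, and the 1.5 safety factor are hypothetical choices for illustration. The `near_slip` check is only a force-ratio proxy for incipient slip, not the skin-surface mechanism of the PapillArray or any other sensor in that review.

```python
from dataclasses import dataclass


@dataclass
class ContactSample:
    """One hypothetical tactile reading at a fingertip contact."""
    normal: float      # normal force F_n in newtons (positive while in contact)
    tangential: float  # magnitude of the tangential force F_t in newtons
    slipping: bool     # assumed to come from a separate slip detector


def estimate_friction(samples: list[ContactSample]) -> float:
    """Estimate the friction coefficient mu from the first observed slip.

    Under Coulomb friction, gross slip starts when F_t = mu * F_n,
    so the force ratio at slip onset approximates mu.
    """
    for s in samples:
        if s.slipping and s.normal > 0:
            return s.tangential / s.normal
    raise ValueError("no slip observed; friction cannot be estimated")


def near_slip(sample: ContactSample, mu: float, margin: float = 0.2) -> bool:
    """Warn when F_t exceeds (1 - margin) of the friction limit mu * F_n."""
    return sample.tangential > (1.0 - margin) * mu * sample.normal


def required_grip_force(load: float, mu: float, safety: float = 1.5) -> float:
    """Normal force needed to hold a tangential load without slipping,
    multiplied by a safety factor (1.5 is an arbitrary illustrative value)."""
    if mu <= 0:
        raise ValueError("friction coefficient must be positive")
    return safety * load / mu


# Usage: the ratio at the slipping sample gives mu = 1.2 / 2.0 = 0.6
readings = [
    ContactSample(normal=2.0, tangential=0.5, slipping=False),
    ContactSample(normal=2.0, tangential=1.0, slipping=False),
    ContactSample(normal=2.0, tangential=1.2, slipping=True),
]
mu = estimate_friction(readings)
print(f"mu = {mu:.2f}")
# -> mu = 0.60
print(f"grip force for a 3 N load: {required_grip_force(3.0, mu):.1f} N")
# -> grip force for a 3 N load: 7.5 N
```

Here the second reading (F_t/F_n = 0.5, above 80% of μ = 0.6) would already trigger `near_slip`, illustrating why an early warning is useful: the grip can be tightened before the object actually slides.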

In considering “perceptive soft robots,” Wang et al. (2018) observed that “a variety of soft sensing technologies are available today, but there is still a gap to effectively utilize them in soft robots for practical applications.” Furthermore, proprioception is far more challenging for soft robots than for “hard” ones, “because they have almost infinite degrees of freedom and can be deformed by both internal driving and external loads.” These challenges are magnified for tactile sensing, where the sensor must also form a flexible outer skin for the robot. Consequently, there has been interest in tactile sensing suited to the outer structure of a soft body, using piezoresistive, capacitive, piezoelectric, and triboelectric transduction (Subad et al., 2021; Qu et al., 2023), with an emphasis on 3D-printing (Zhou and Lee, 2021). These areas are among the most active in tactile robotics.

Toward a tactile Internet

A new theme that emerged in tactile robotics in the mid-2010s is the vision of a tactile Internet: a telecommunications network that connects tactile robots and human operators to relay physical touch experiences and human manipulation skills. In essence, this idea dates back to telepresence and the applications of it that Minsky (1980) popularized. However, modern telecommunications open up many new applications for a prospective tactile Internet (e.g., telemedicine, avatars), along with new challenges (e.g., data security).

The term “Tactile Internet” was introduced by Fettweis (2014) to describe mobile applications that become viable when round-trip latencies drop below 1 ms, since “this is the typical interaction latency required for tactile steering and control of real and virtual objects without creating cybersickness.” Reasoning that wireless data rates follow Moore’s law, doubling every 18 months, he predicted in 2014 that this progress would soon “revolutionize education, mobility and traffic, health care, sports, entertainment, gaming and the smart grid.” 5G networks with latencies of 1 ms have been widely available since 2020, making this vision a reality.
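The two numbers in this argument can be unpacked with simple arithmetic. Here G(t) is the growth factor in data rate after t years of doubling every 18 months (1.5 years), v is the signal speed, and T_RTT is the round-trip latency. Doubling every 1.5 years compounds to roughly a hundredfold increase over a decade, and a 1 ms round-trip budget bounds the distance between the two ends of a link even before any processing or switching delay is counted. The signal speed of about 2 × 10^8 m/s in optical fiber is a standard approximation introduced here, not a figure from Fettweis (2014).

```latex
G(t) = 2^{t/1.5}, \qquad G(10\ \mathrm{yr}) = 2^{20/3} \approx 102

d_{\max} = \frac{v \, T_{\mathrm{RTT}}}{2}
         = \frac{(2 \times 10^{8}\ \mathrm{m/s}) \times (10^{-3}\ \mathrm{s})}{2}
         = 10^{5}\ \mathrm{m} = 100\ \mathrm{km}
```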

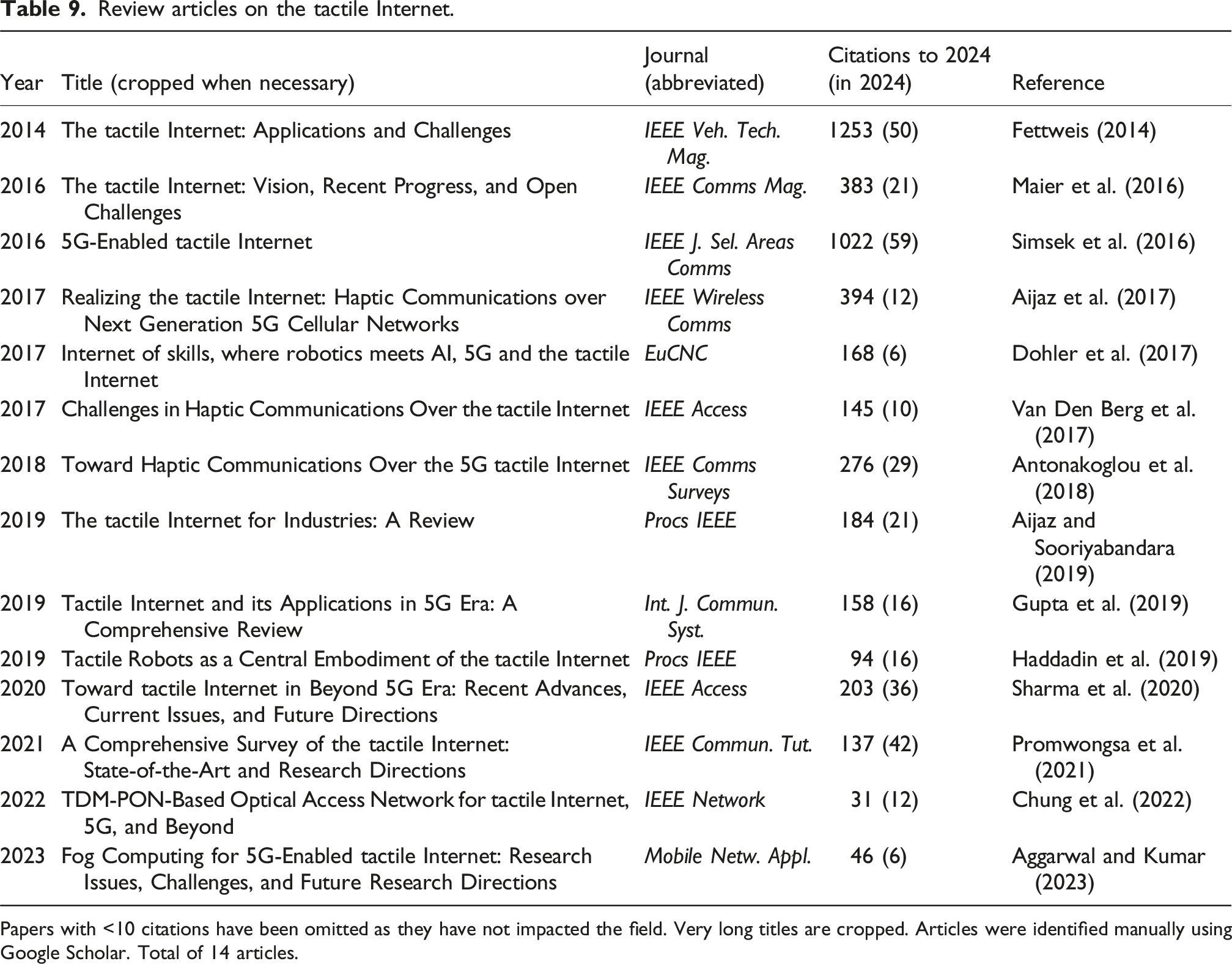

In the past decade, there have been many surveys with overlapping content on the technical requirements for the tactile Internet and its applications (e.g., Maier et al. (2016); Simsek et al. (2016); Aijaz et al. (2017); Dohler et al. (2017); Antonakoglou et al. (2018); Gupta et al. (2019); see Table 9 for a list). One trend is that, in contrast to other areas of tactile robotics, the number of new review articles has dropped since 2020, when the citations per year of the early articles peaked. This may be because 5G is a mature technology, so the bottleneck for a tactile Internet is now the need for improved haptics and tactile robotics.

This need for improved technologies was recognized by Haddadin et al. (2019), who considered “Tactile robots as a central embodiment of the tactile Internet.” In their view, tactile robots will constitute the next generation of robots, with capabilities beyond those of the current generation, whose active compliance stems from knowledge of their kinematics and intrinsic joint forces/torques. However, some “missing technologies” are needed for that new generation: (1) an affordable, easy-to-use artificial skin that enables tactile sensing for robotic platforms; (2) wearables that are intuitive to use and connect seamlessly to immerse the human user in the tactile avatar; and (3) exoskeletons as human system interfaces that provide realistic sensations of force or contact and proprioceptive information. All of these areas are being actively investigated, but the research has not yet moved beyond the preliminary stage, and commercial products are still lacking.

2025–: The future of tactile robotics

Like society itself, tactile robotics has cycled through a succession of historical generations, here referred to as: 1965–79 (origins), 1980–94 (foundations and growth), 1995–2009 (tactile winter), and 2010–2024 (expansion and diversification). This cycle suggests that tactile robotics is entering a new generation, raising the question: What is the future of tactile robotics from 2025 onward?

Presented below are some views of the future, separated into (i) near-horizon trends that may continue along somewhat predictable paths from recent history, and (ii) disruptive changes that may unfold unpredictably, for which the entire history of the field informs what may occur. Near-horizon trends are extrapolated from the five themes that emerged in 2010–24, as surveyed in the previous section. Some potentially disruptive changes are then considered, such as the impact of artificial intelligence on tactile robotics.

(1) Tactile e-skins: As research diversified in the recent generation of tactile robotics, an e-skin community formed around progress in materials science and electronics. Currently, the e-skin community is more active than the original tactile robotics community (cf. Tables 7 and 8), and this will continue for the near future. New skin-like tactile arrays will have novel capabilities, such as low- or self-powered integrated sensing and computation, and will use novel sensors fabricated from advanced materials such as graphene.

(2) Tactile robot hands: Many new grippers and multi-fingered robot hands have emerged in the past decade, and it is now routine to integrate tactile sensors into their fingertips (e.g., Figure 13). For a long time, a main goal of the field was to reach human-like capabilities, such as in-hand and bimanual dexterous manipulation. Very recently, the field has undergone a step change, with multiple demonstrations of such capabilities (see Figure 16) that apply state-of-the-art AI methods, such as sim-to-real deep reinforcement learning, to tactile robotics. In the future, this dexterity will become routine, and other examples of in-hand dexterity, such as tool use or manual assembly, will follow.

(3) Vision-based tactile sensing: Progress has recently accelerated with the rapid miniaturization and falling cost of camera technology and the translation of AI software for computer vision to tactile robotics for dexterous manipulation (Figure 16). The design and fabrication of suitable tactile contact surfaces for imaging will continue to be explored and improved. Furthermore, future advances that ease the manufacture and adoption of vision-based tactile sensing will encourage commercialization and transfer to industry. In the longer term, the technology will become ever smaller and easier to integrate, for example, extending from fingertips to the phalanges and palms of robot hands.

(4) Soft and biomimetic tactile robots: There is a natural and established synergy between tactile sensing, biomimetics, and soft robotics. Areas of activity include tactile skins designed to instantiate biologically inspired processing that simplifies downstream perception and control, and attempts to combine tactile sensing with soft robots. In the future, these topics will influence novel e-skins, tactile robot hands, and vision-based tactile sensors. However, major progress is needed before soft robots can be used in practical applications, given that research in many areas remains preliminary. Specific aspects, such as morphological computing and the use of compliance, can be more readily applied to conventional “hard” robots with soft tactile components.

(5) Tactile Internet: Excitement about a future tactile Internet stemmed from the anticipation of telecommunication latencies dropping below the 1 ms threshold for remote touch. That threshold was passed with 5G from 2020 onward, and 5G is now a mature technology. Therefore, the key enabling technologies now needed for a tactile Internet are consumer-ready tactile robots and haptic feedback devices.

The next generation of tactile robotics could finally reach the maturity needed to span the applications envisaged for industrial robotics (Harmon, 1982) and telepresence (Minsky, 1980).

However, the above predictions do not say what will truly happen in the next generation of tactile robotics; they only say how the current generation will continue. What might a retrospective on tactile robotics reasonably say after another generation has passed, in 2040?

The 2010s saw unprecedented progress in AI, particularly in computer vision, extending to generative AI and language models in the 2020s. Underpinning that progress has been ever-increasing compute power, which has become both more accessible and an energy burden. Among other technologies, automated fabrication has steadily improved, with affordable high-quality 3D-printing, high-end metal printing, and additively manufactured electronics. The life sciences have advanced in gene editing and synthetic biology, although these advances are unlikely to affect tactile robotics except as a use case in automated laboratories. Virtual reality is now an established commercial product that should drive progress in affordable haptic glove technology. Telecommunications continues to advance from 5G to 6G, offering a tactile Internet if haptics and tactile robotics can come together.

How will technological progress affect tactile robotics? Certainly, no one expects another tactile winter, and we are long past the early optimism of the 1980s. The greatest impact will probably come from synthesis: combining domains of technology to create something new. Currently, tactile robotics is fragmented. Artificial skin researchers may view vision-based tactile sensors as bulky and impractical, while those applying AI to vision-based tactile sensing see years of unmet promises from other sensor types to transform robot dexterity. Likewise, the soft robotics community may eschew traditional electromechanical robots to focus on early-stage sensor and actuator research, while those building highly dexterous robot hands see their technologies as close to deployment.

The greatest progress in robot dexterity may come from unifying the diverse themes of tactile robotics. To some extent, this is already happening, as vision-based tactile sensing and robotic hand research combine for in-hand and bimanual dexterity (Figure 16). Other opportunities for synthesis include combining e-skins over large surfaces with vision-based tactile fingertips, or deploying the AI methods used for in-hand and bimanual robot dexterity on soft robots or with artificial skins. Still other key areas of synthesis exist, and moving beyond siloed approaches will be important.

Clearly, advances in AI have the potential for major disruptive impact on all of tactile robotics. However, tactile sensing has been a blind spot for AI research. In assessing the “Potentials and limits for deep learning in robotics,” Sünderhauf et al. (2018) did not mention tactile sensing. OpenAI’s influential work on in-hand manipulation (Andrychowicz et al., 2020) used about 20 cameras for a task that would seem more suited to touch. It then took several years for university research laboratories to surpass that dexterity using tactile sensing (see Figure 16). Tactile robotics is still a small community. It would be transformative if even a fraction of the researchers applying AI to vision turned their focus to touch. Perhaps it is fanciful, but touch could become as central to future AI research as sound/speech and vision are now. Rather than vague claims about “Artificial General Intelligence,” an AI of tactile robotics could give tangible insight into human intelligence through understanding how our nervous systems are wired for dexterity.

Finally, humanoids will clearly have an important role in the future of tactile robotics. Currently, in the mid-2020s, many tens of billions of dollars are being invested in their development. One output of this investment is a stream of videos showing sleek-looking androids walking or dancing on stage and in other human-centric environments. This is very impressive, but apart from some side applications in entertainment or education, the main purpose of a humanoid robot is to save labor. To perform human labor, a humanoid must have useful hands that function with near-human dexterity. As we have seen, that goal remains some way off, and the technology may prove harder to develop than walking robots. That said, a humanoid is well-suited to transporting a pair of dexterous tactile robot hands to where they are needed in human environments. When tactile robotics results in robots with human-like dexterity, humanoids could become commonplace. In the meantime, though, they may not be particularly useful in our daily lives.