Abstract

With the wide application of simulation and optimization tools in engineering problems, how to build a metamodel, which can satisfy the high accuracy requirements of real working conditions and realize fast optimization in the entire design space, becomes a hot issue. On the basis of sequential sampling optimization and updating design space optimization, a two-level global optimization method based on hybrid metamodel is developed. In this method, the hybrid metamodel is constructed using random sampling. In the first level, a space reduction strategy is proposed to reduce the design variable space and guide the search to the promising region. In the second level, Adaptive simulated annealing algorithm is integrated with metamodels to search the global optimal value in the promising region. Several global optimization problems and a real industrial design optimization example are utilized to demonstrate the superior performance of the proposed method.

Keywords

Introduction

At present, optimization tools such as finite element analysis (FEA) and computational fluid dynamics (CFD) have been widely used in product optimization design. However, the optimization accuracy and efficiency are still the bottleneck for its development. It is reported that it takes Ford Motor Company about 36–160 h to run one crash simulation; 1 One run of a whole car impact simulation still costs more than 10 h to simulate a 0.1-s impact process. 2 It can be seen from the literature that a cheap approximate model is often used as a surrogate of the expensive model to carry out optimization design. 3 Approximate model, which is also known as metamodel, surrogate model or response surface, can relieve the computing budget and improve the design efficiency of simulation-based problem. 4 The commonly used approximate models include quadratic response surface model (QRSM),5,6 kriging (KRG),7–9 radial basis function (RBF),8,10,11 and multivariate adaptive regression spline (MARS) 12 . When the accuracy of metamodel meets the design requirements, the optimization design can be conducted based on the model. In order to establish high accuracy of approximate model with sampling points as less as possible, there are two commonly used methods: the sequential sampling and updating optimization space.

Sequential sampling optimization

Traditional sampling methods can be divided into one-stage sampling and sequential sampling. Because it is difficult for one-stage sampling to obtain suitable sample size, in order to address this issue, researchers seek to improve model accuracy through sequential sampling. 13 Currin 14 carried out sequential sampling based on entropy criterion to maximize the information amount of sample points in the framework of Bayesian method. Osio and Amon 15 proposed Bayesian surrogate models and a sequential sampling method based on the A-optimality criterion. Lin et al. 16 proposed the sequential exploratory experimental design (SEED) method, in which data points are identified sequentially based on Bayesian entropy sampling by removing the stationary assumption and introducing correction factors in the calculation of correlations between points. Yao et al. 17 proposed a gradient-based sequential radial basis function neural network (RBFNN) modeling method, which utilizes the gradient information of the present model to expand the sample set and refine the model sequentially. Kitayama et al. 11 presented a sequential approximate optimization (SAO) procedure, in which the optimum of the response surface and the global minimum of the density function are taken as new sample points to improve the model accuracy. Aute et al. 18 developed a KRG metamodel for leave-one-out (LOO) error, point with highest estimated value of LOO error is added to the sample set, and maximum entropy design is integrated with maximin distance to improve the model accuracy. Xu et al. 13 used Voronoi diagram to partition the design space; the error behavior of each cell is estimated through LOO cross-validation approach; new points are sampled sequentially in a Voronoi cell with the largest error value to rapidly improve the metamodel accuracy.

The sequential sampling methods for single metamodel mentioned above can effectively improve the accuracy of approximate model. However, single metamodel has its specific applicable conditions. In order to exploit the approximate performance, the hybrid metamodel has gradually become a research focus. Zhang et al. 19 determined the weight of each contributing surrogate model based on the local measure of accuracy for that surrogate model in the pertinent trust region and selected weights for the three component surrogate models adaptively to improve the overall accuracy of the model. Tang et al. 20 constructed hybrid metamodel for black box functions by combining quadratic polynomial response surface with RBF and conducted optimization design by means of particle swarm algorithm. Sun et al. 21 considered and compared typical surrogate approaches such as response surface methodology, KRG, RBF, and support vector regression for the best modeling accuracy. Yin et al. 22 integrated the dynamic ensemble metamodeling method with the multi-objective particle swarm optimization (MOPSO) algorithm to improve the accuracy of multi-objective crashworthiness optimization. Fang et al. 23 established the ensemble of surrogates to approximate the fatigue life function of a truck cab for the purpose of decreasing the modeling error. Ferreira and Serpa 24 created ensemble of metamodels based on least squares (LS) which is used to estimate the ensemble weights without using any explicit error metrics. Gu et al. 25 proposed a hybrid and adaptive metamodel (HAM)-based global optimization method, which applies three metamodels concurrently, sample data points are selected adaptively according to the calculated values of the three metamodels to gradually improve modeling accuracy. Li et al. 26 concentrated on the high-dimensional optimization problems. Multi-surrogate sampling strategy is utilized to construct more reliable model, and the two-level optimization strategy is adopted to improve the efficiency of the surrogate with limited samples. Qi et al. 27 claimed the accuracy of the surrogate model is improved by constructing ensemble surrogates, and the reliability of the solution obtained by the optimization model could be improved using sequential sampling. Ye et al. 28 created an ensemble of surrogates with optimized weight factor to provide robust prediction performance and selected the promising point to supplement the sample set to improve the accuracy of the model.

By adopting sequential sampling method, the accuracy of single metamodel or hybrid metamodel can be improved. For single metamodel, the model accuracy is easily influenced by the characteristics of the approximated object. For example, it is difficult for high-dimensional non-linear function to get high-accuracy model with QRSM, which is more suitable for low-order function. As for sequential sampling method based on hybrid metamodel, difficulty is encountered in trying to obtain proper weight value for each model when setting weight and conducting linear combination. Meanwhile, for sequential sampling method, the clustering situation is easy to appear with the increase in the sample points. Jin et al. 5 claimed the accuracy of an approximate model is not decided by the sample size. An interpolation method, like RBF, tends to over-fit the performance, which may lead to large local errors. However, even the large sample size does not necessarily reduce local errors. Therefore, with a certain sample size, reducing and partitioning design variable space becomes another important direction to improve the optimization accuracy and efficiency.

Updating design space optimization

Updating design space optimization is a strategy which gradually updates optimization design variable space and focuses on the key concerned area. Srinivas and Patnaik 29 improved the optimization efficiency of genetic algorithm by reducing variable space. Chen and Smith 30 used representation-level constraints to beneficially reduce the search space and improve the search efficiency of genetic algorithm. Wang et al. 31 proposed adaptive response surface method (ARSM), in which the quadratic approximation model is created for computation-intensive design objective function, a threshold or a cutting plane is used to reduce the design space to approach optimal value. In order to address the issue that ARSM requires a great amount of design experiments for high-dimensional design problems, Wang 32 took a general sampling strategy which inherits sample point as long as it falls into the updated design space. Stander and Craig 33 constructed a series of linear metamodels in a subregion of the design space and iteratively contracted the size of this subregion to approach the optimum. Wang et al. 34 proposed a new global optimization method, in which quadratic regression is performed to detect the key region, and mode-pursuing sampling method is used to generate more sample points in the concerned area. Melo et al. 35 developed domain optimization algorithm (DOA), which can efficiently eliminate search-space regions where there is low probability in containing a global optimum and speed up the search to the global optimum. Wang and Timothy 36 proposed an intuitive methodology which the fuzzy c-means clustering method is utilized to cluster sample points, and optimization design is carried out after systematically reducing the design space to a relatively small region. Zhu et al. 37 applied fuzzy c-means clustering method to identify the reduced attractive regions in the original design space, which accelerates the search of the global optimum. Li et al. 38 proposed a new metamodel-based global optimization method, in which fuzzy c-means method and Gath-Geva clustering method are applied to divide the design space into several small interesting cluster spaces for low- and high-dimensional problems, respectively, the modeling efficiency is improved by means of the reduction principle. Wu et al. 39 divided the entire domain of design variables into multiple cells, and identified rougher cells with large modeling error, and then added a small number of additional sample points into these cells to improve the model accuracy. Long et al. 40 integrated ARSM with intelligent space exploration strategy (ISES) to carry out space reduction for higher global optimization efficiency. Gan et al. 2 applied two different search strategies, respectively, inside and outside the promising region to improve optimization efficiency. Dong et al. 41 classified design variable space into global space, reduced medium space, and local space, and the search process is carried out alternately in three spaces to improve search efficiency. Wang et al. 42 discussed several novel space reduction methods and their application in sheet metal forming design due to their high efficiency.

By reducing variable space or emphatically searching in the promising region after partitioning the design space, the metamodel-based optimization efficiency can be improved effectively. The optimization method based on space updating can accelerate convergence to the optimal solution. However, the accuracy is influenced by the method of space reduction or space partition.

On the basis of the research on these two methods which aim to improve optimization accuracy and efficiency, this article proposes a hybrid metamodel–based two-level global optimization (HMTGO) method. In the first level, considering the diversity of surrogates, a space reduction strategy based on hybrid metamodel is proposed to reduce variable space and guide the search to the promising region. Compared with the existing space reduction strategy, in which single metamodel and clustering method are utilized, the space reduction strategy proposed in this article can simplify the search process and ensure the reliability of optimization results. In the second level, adaptive simulated annealing (ASA), 43 which can effectively avoid being trapped into the local minima, is integrated with hybrid metamodel to search the global optimal value and ensure the accuracy of optimization results.

Through space reduction strategy and two-level optimization, the method maintains a balance between optimization accuracy and efficiency, and conducts a beneficial research on the engineering optimization problem.

The hybrid metamodel-based two-level global optimization method

In recent years, Latin hypercube design (LHD) sampling is considered as one of the most popular design of computer experiments methods. Optimal Latin hypercube design (OLHD) not only has the projective characteristics of LHD but also has a good space-filling property. 44 Therefore, OLHD is adopted to generate sample points in two-level optimization.

Haftka et al. 45 claimed that fitting multiple surrogates to the same data is very straightforward in approaches that zoom on promising regions of the design space to restrict the search space. Hence, multiple approximate models are used for space reduction and promising region searching in this article. Two metamodels are not enough to ensure the robust of prediction performance, and more metamodels will increase the amount of computation. According to the test results and the existing literatures, three to five metamodels are suggested. The selected metamodel depends on the type of objective functions and the characteristic of the metamodel itself, and the hybrid metamodel should be suitable for the pre-optimized function. Jin et al. 46 pointed out that QRSM is very accurate for low-order non-linear functions and insensitive to the numerical noise; KRG is better for high-order functions; the average accuracy and robustness of RBF are influenced only slightly by sample size. So, these three approximate models can cover quite a few types of functions and are utilized to construct the hybrid metamodel.

ASA is an efficient stochastic global optimization algorithm. This article utilizes this algorithm in the second-level optimization to search the optimum for the following reasons: (1) ASA is not only especially suitable for the optimization of multi-modal, discrete, non-linear, and non-differentiable target functions but also ensures a high search efficiency. (2) Compared with other optimization algorithms, ASA can avoid getting trapped in local optimal effectively. 47

Methodological model

The hybrid metamodel-based two-level global optimization method includes two-level search process. As shown in Figure 1, first, conduct data sampling using OLHD, establish three approximate models of QRSM, KRG and RBF, respectively, for time-consuming practical problems. In the first level, evaluate sample points generated from OLHD via these three approximate models; after obtaining the approximate response values, select partial sample points with the lowest response values to reduce variable space. With the continuous reduction of space, the sample points for establishing approximate model are gradually approaching to the true optimal value. In addition, the accuracy of the approximate model is being improved constantly. In the second level, obtain a small variable space after dealing with the reduced space of the first level and conduct optimization search through ASA in the small space by means of three latest approximate models established in the first-level optimization, and then obtain the approximate optimal value of each model. Make a comparison between the approximate optimal value of the latest QRSM figured out by ASA in the small space and the approximate response value of QRSM founded from the space center sample point. If the difference of these two approximate values meets the accuracy requirements, then the searching optimization is terminated; the sample point corresponded to the optimal solution of the latest QRSM figured out by ASA in the small space is the global optimal point; if not, then confirm the updated variable space based on the approximate values founded through ASA on the latest three models. Reduce the space via the latest QRSM model which was constructed in the first-level optimization. Construct updated QRSM in the reduced space and obtain the optimal value via the space reduction strategy again. Repeat the second-level optimization until the optimal value meets the accuracy requirements and then the search process terminates.

Methodological model of HMTGO.

The specific implementation process

Step 1. Set the pre-optimized function

where n is the number of design variables. Evaluate these sample points using the real function and establish QRSM, KRG, and RBF, respectively.

Step 2. Continue to generate

where

Step 3. Rank the approximate response values according to the results received from metamodels in ascending order, then select the first

Step 4. In

where

Step 5. When

Step 6. Increase and decrease the optimal values of each design variable in step 5 in a certain proportion, then obtain a small variable space. By reference to the literature, 49 the variation equals to 2.5% of the entire variable space for each design variable. Specific implementation strategies are as follows:

Set

In the updated space, on the basis of the approximate models constructed in the latest iteration, take ASA algorithm to search for the optimal value and obtain the optimization result. If it meets the termination criterion, the entire search process terminates; else, go to step 7.

Step 7. In the previous step, among the optimization results received in the updated space with ASA, with the result of QRSM as a reference, select the optimal value between KRG and RBF which is closer to the result of QRSM and then determine the new variable space based on the optimal values of the selected two approximate models. If any design variable has the same boundary value, then decrease the number of decimal places of boundary value according to the optimization accuracy. Since the number of decimal places is decreased by the premise of ensuring accuracy, it has little impact on optimization results. More importantly, it is convenient for OLHD to generate new sample points. Generate 100 sample points using OLHD in new space, obtain the approximate response values according to the results of QRSM, select 10 sample points with the minimum response values, determine the new upper and lower boundary value of the design variable via these sample points, and obtain the updated variable space.

Step 8. Construct updated approximate models by sampling data points in the updated variable space in step 7, generate 100 sample points using OLHD. After evaluated by QRSM, the sample point with the minimum approximate response value is chosen as the optimal point for the second-level optimization. Process the optimal value in accordance with step 6, if the optimal value meets the termination criterion, the entire optimization process terminates; else, repeat step 7 and step 8 until the termination criterion is met.

The flowchart of the method is given in Figure 2.

Procedures of HMTGO.

Termination criterion

Among the optimization results, the approximate response value of QRSM is adopted for reference, when

is met, where

Case study

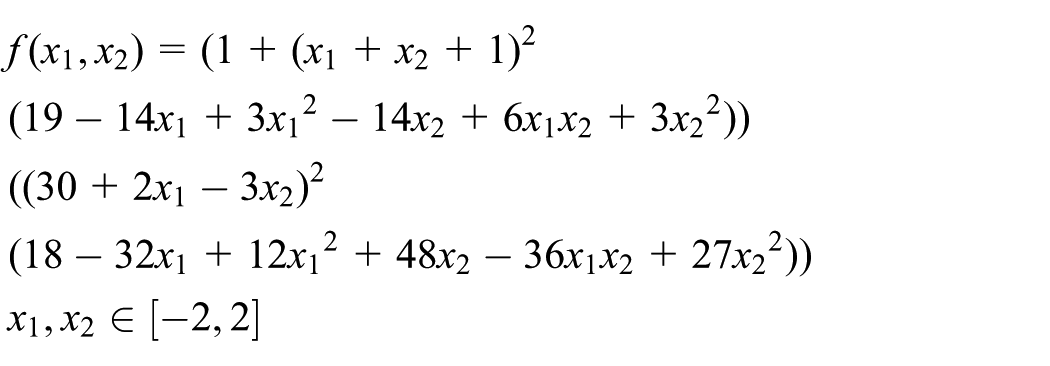

At present, there is not a standard set of test problems for global optimization algorithms, some test functions are widely used.4,11,25,31 Among those, the Goldstein and Price (GP) function is a high-order polynomial function which is frequently used to test new optimization algorithms for their convergence characteristics.

50

This function rises up to higher than

This article takes one set of test data of GP function as an illustration and explains the space reduction strategy for inheriting sample points and the two-level optimization strategy. First, due to GP function has two design variables; generate seven initial sample points using OLHD according to formula (1) to construct QRSM, KRG, and RBF model; consider the variable space

GP function optimization data for the first level.

GP: Goldstein and Price.

GP function optimization data for the second level.

GP: Goldstein and Price.

As can be seen from Tables 1 and 2, the second-level optimization is conducted from the 10th iteration. Carry out variable space updating for the optimal value obtained in iteration 9 according to formula (4) and formula (5), then take ASA algorithm to search for the optimal value in this space based on the three approximate models, obtain the updated variable space according to step 7, and then obtain the updated optimal value after further searching optimization. Iteration 10 does not meet the termination criterion yet, so continue searching in accordance with the above steps. Until termination criterion is met in the 12th iteration, the search process terminates. Take the optimal value, for which ASA algorithm searches based on QRSM model, as the global optimum. The optimum and the updated variable space in the second-level optimization are seen in Figure 3.

The illustration of the second optimization stage.

Because the updated space is too small in some iterations, Figure 3 just shows the expanded and the updated variable space after searching optimization for iteration 9 and iteration 11 for convenience.

It is easy to find that the optimal point

Test and verify of HMTGO

Function tests

In order to verify the validity of the proposed method, this article randomly selects three two-dimensional (2D) functions, one six-dimensional (6D), and one 16-dimensional (16D) benchmark functions to test the searching ability of this method in low-dimensional optimization. Make a comparison with existing global optimization methods mode-pursuing sampling (MPS), 34 HAM, 25 and SAO 51

1. Six-hump camel-back (SC) function

2. GP function

3. Himmelblau (HM) function

4. Hartman (HN) function

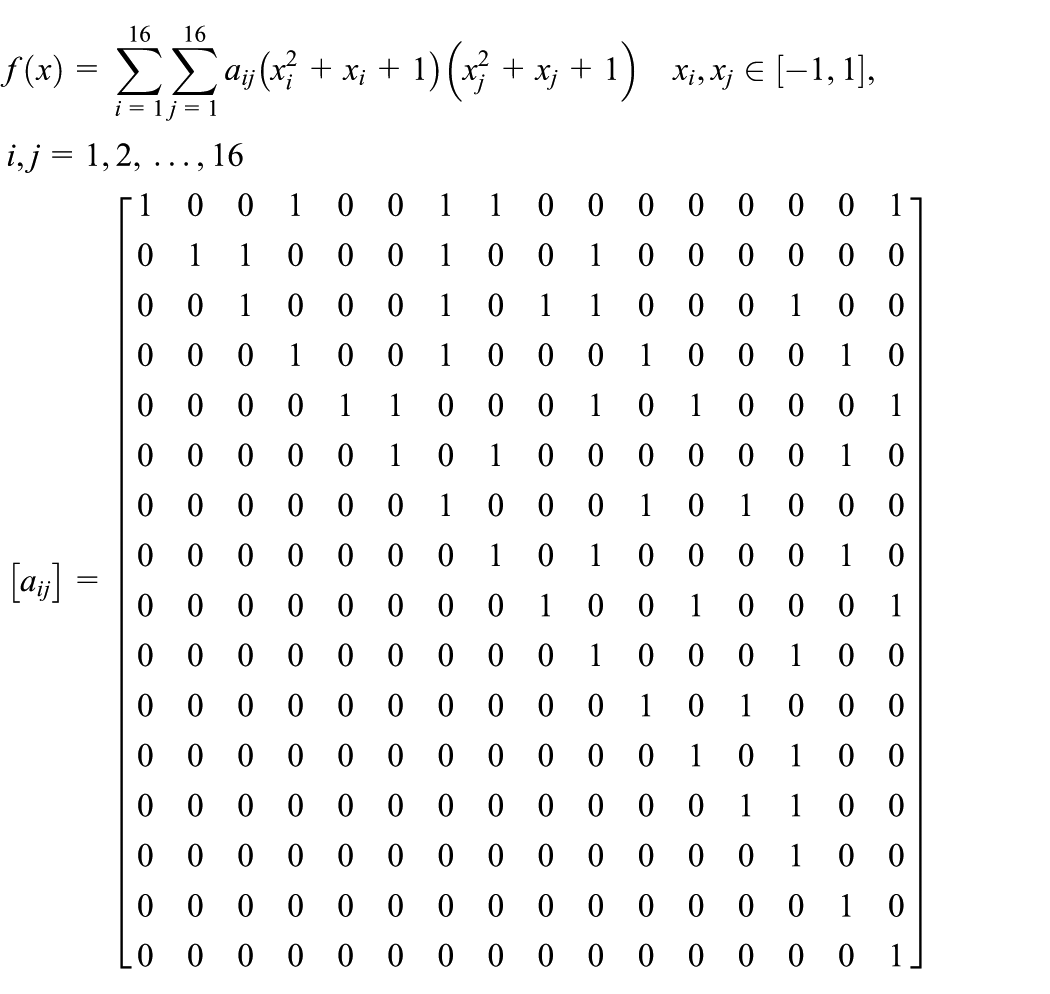

5. 16 variables function (F16)

Take the calculation of GP, HM, and HN as examples to study the influence of different

Comparison of the results for different

NIT: number of iterations; NFE: number of functions evaluations; GP: Goldstein and Price; HM: Himmelblau; HN: Hartman.

As Table 3 shows, compared to

For easy to compare, 10 runs on each of these benchmark functions are carried out in this article like MPS, HAM, and SAO. By comparison, Table 4 lists the optimization results obtained from the proposed method in this article and the existing methods.

Comparing of test results for low-dimensional functions.

NIT: number of iterations; NFE: number of functions evaluations; HMTGO: hybrid metamodel–based two-level global optimization method; SC: six-hump camel-back; GP: Goldstein and Price; HM: Himmelblau; HN: Hartman; F16: 16 variables function.

The data previously presented in the literature. 25

The data previously presented in the literature. 51

As shown by the results in Table 4, for HM, GP, and SC, the proposed method is better than MPS on accuracy and NIT; compared with HAM method, HMTGO has advantages in accuracy and the NFE, especially for GP function, the proposed method has obvious advantages. In comparison with SAO method, although HMTGO needs more numbers of functions evaluations for SC function and HM function, the optimization accuracy is improved. In addition, the NFE and the optimization accuracy still have obvious advantages for GP function. For HN function and F16 function, the proposed method is better than MPS and HAM on optimization accuracy. Compared with SAO, the optimization accuracy is roughly equal. Meanwhile, HMTGO needs more numbers of functions evaluations than HAM and SAO, but less than MPS.

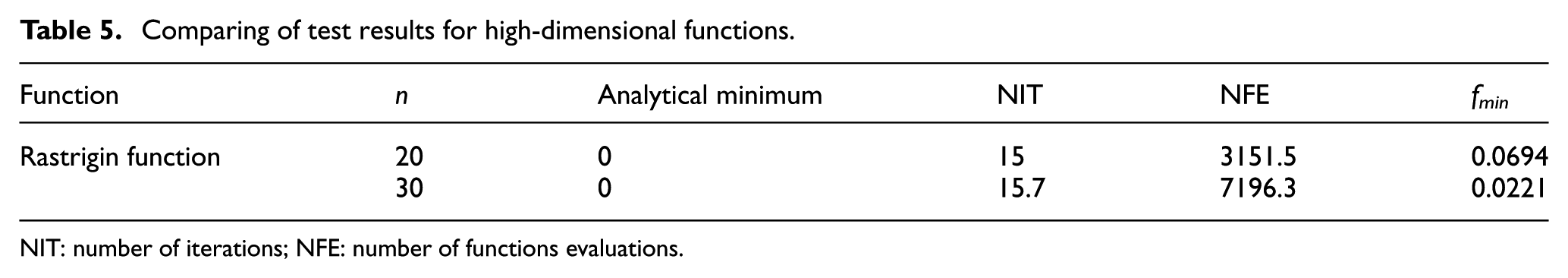

Next, one 20-dimensional (20D) and one 30-dimensional (30D) Rastrigin function are used to test the searching ability of HMTGO in high-dimensional problems. The results are presented in Table 5. Rastrigin function

Comparing of test results for high-dimensional functions.

NIT: number of iterations; NFE: number of functions evaluations.

As seen in Table 5, the proposed method finds the optimal value with a few iterations. However, it is noticed that the NFE non-linearly grows with the increase in the spatial dimension in Tables 4 and 5. It restricts the optimization efficiency of the proposed method in some extent. As is stated above, if parallel computation is available, function evaluation and searching iteration are decoupled, and the NIT can better represent the search efficiency. 25 Meanwhile, the influence of the NIT and the NFE on the search efficiency is related to the amount of simultaneous computation. Assuming the availability of computers, the total time needed by the optimization will be represented by the NIT, rather than the number of total functions evaluations. 34 Although the proposed method needs more evaluation numbers for high-dimensional functions, the NIT for searching optimization is greatly decreased, so the optimization efficiency is also improved for high-dimensional functions.

As shown in the test results of several benchmark functions, the proposed method is suitable for multi-dimensional problems. It successfully finds the global optimum in 10 trials and avoids being trapped into the local minima. Compared with the existing global optimization methods, the proposed method improves the optimization accuracy with the reduction of the NIT, which also maintains a balance between the optimization accuracy and efficiency. The test results demonstrate the superior performance of the proposed algorithm over existing search methods.

Different from the method that improves the model accuracy through sequential sampling, HMTGO method needs to reconstruct approximate model in each updated variable space. It is not difficult to find from formula (1) that the sample points needed to construct approximate models grow non-linearly with the increase in the number of design variables. Especially for the searching optimization of high-dimensional design problems, a large number of new sample points are needed to reconstruct approximate models in each iteration, which leads to a small NIT will need a large number of function evaluations.

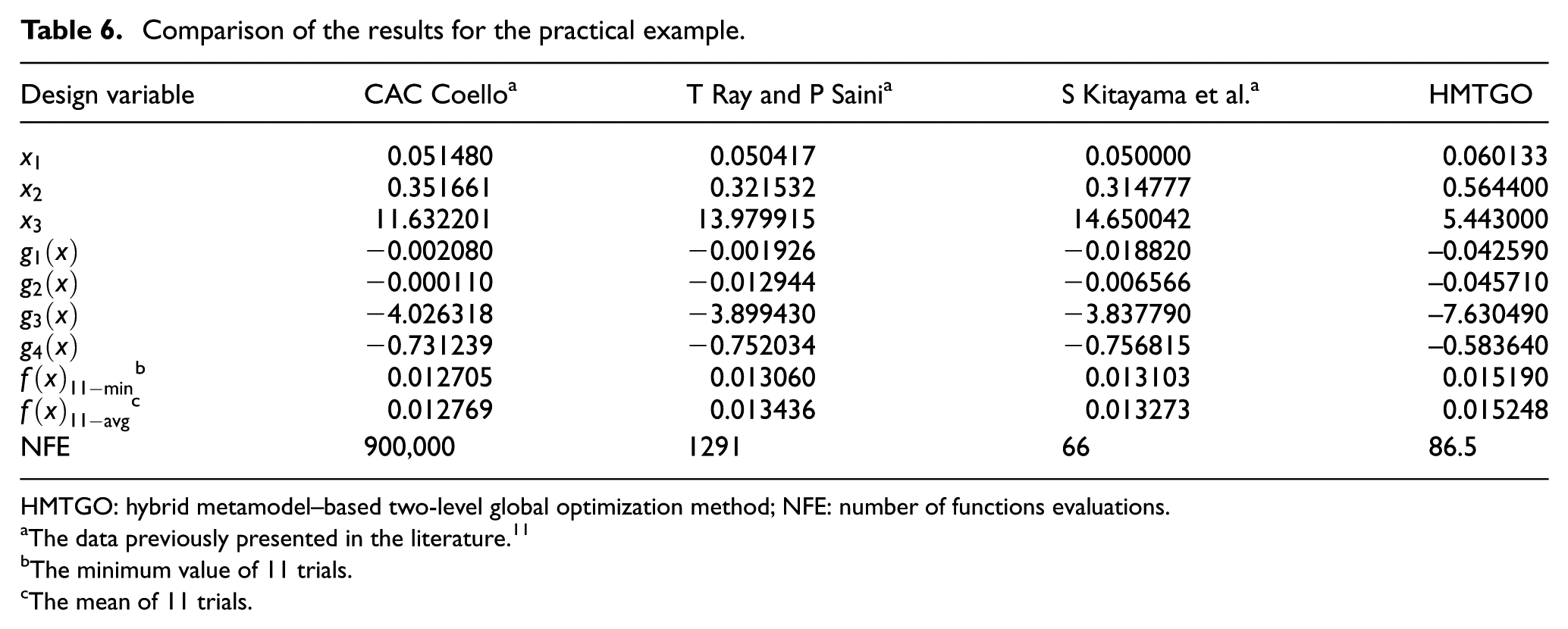

Optimum design of tension/compression spring

The optimum design of the tension/compression spring is adopted to explain the practical application of HMTGO method in engineering field.

The popular test problem is proposed by Arora.

52

As shown in Figure 4, many researchers have used it as a benchmark problem in the structural optimization.11,53,54 The minimization of the weight of a tension/compression spring is considered subject to constraints on minimum deflection, shear stress, surge frequency, and limits on the outside diameter and design variables. The design variables

The illustration of tension/compression spring.

The mathematical model is as follows

where

It can be clearly observed from the mathematical model that the target and constraints are highly non-linear, so it is considered as a time-consuming problem. Construct QRSM, KRG, and RBF approximate models for

Comparison of the results for the practical example.

HMTGO: hybrid metamodel–based two-level global optimization method; NFE: number of functions evaluations.

The data previously presented in the literature. 11

The minimum value of 11 trials.

The mean of 11 trials.

The results can be seen from Table 6 that the proposed method can obtain the optimum value which satisfies the constraints with greatly decreasing the NFE. Meanwhile, it is not difficult to find that the optimization accuracy of HMTGO is worse than the existing methods. It depends on when the constraints are considered in some extent. The existing literature 32 pointed out that if constrains are explicitly given or are of first-order or second-order for which accurate surrogates are easy to obtain, the optimum found from the approximate models of target and constraints is guaranteed to satisfy the constraints which are not approximated. For highly non-linear and computation-intensive constraints, if the space reduction strategy is adopted, then whether the real constraints are satisfied will depend on the accuracy of approximate models for constraints in the reduced variable space. In the process of reducing variable space, when the approximate models of constraints can meet the accuracy requirement, when the constraints are considered with the decrease in the variable space, and whether the real constraints are still satisfied when the variable space is small enough become problems which will have an impact on the final optimization results. This article adopts space reduction strategy to reduce the variable space and then selects partial sample points with the lowest response values under the premise of satisfying all constraints. The optimization result shows that each corresponding design variable of the found optimum values meets the real constraints. However, the accuracy is slightly lower than the existing methods. Therefore, in the global optimization process based on metamodel and space reduction strategy, when the constraints should be considered or how to deal with the constraints to obtain the optimal value with higher accuracy while satisfying all the real constraints becomes an urgent problem to be solved in the future research.

Conclusion

This article proposes a two-level global optimization method based on hybrid metamodel. This method establishes the three approximate models of QRSM, KRG, and RBF for time-consuming problems. After obtaining the approximate response value of sample points, the space reduction strategy is utilized to select partial sample points with the lowest response values to reduce variable space and guide the search to the promising region. Then, ASA algorithm is integrated with the latest approximate models to update the optimization space and obtain the final optimal value. On the basis of the hybrid metamodel and two-level optimization strategy, the optimization accuracy is guaranteed; with space reduction strategy based on hybrid metamodel, the search process is simplified and the optimization efficiency is improved. Several benchmark functions are adopted to test the searching ability of this method, and the optimum design of the tension/compression spring explains the effectiveness of the proposed method in engineering application.

As shown in the experimental results, the NFE grows exponentially with the increase in function dimension. It is mainly influenced by the minimum sample size of constructing QRSM model. Therefore, how to decrease the NFE by selecting approximate models reasonably or reducing the NIT remains to be solved. For optimization problems subject to constraints, how to deal with the constraint conditions in the process of optimization still needs further study, which will be the direction of further researches.

Footnotes

Handling editor: Xichun Luo

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China (grant number 51435011) and the Science & Technology Ministry Innovation Method Program, China (grant number 2017IM040100).