Abstract

The success of grasping and manipulation tasks with commercial prosthetic hands depends mainly on the visual feedback of the amputee, since these hands are provided with neither tactile sensors nor sophisticated control. As a consequence, slippage and object falls often occur. This article addresses the specific issue of enhancing the grasping and manipulation capabilities of existing prosthetic hands by changing the control strategy. For this purpose, it proposes a multilevel control based on two distinct levels consisting of (1) a policy search learning algorithm combined with central pattern generators in the higher level and (2) a parallel force/position control managing slippage events in the lower level. The control has been tested on an anthropomorphic robotic hand with prosthetic features (the IH2 hand) equipped with force sensors. Bi-digital and tri-digital grasping tasks with and without slip information have been carried out. A KUKA-LWR robotic arm has been employed to perturb grasp stability by inducing controlled slip events. The acquired data indicate that the proposed control has the potential to adapt to changes in the environment and to maintain grasp stability, avoiding object falls thanks to prompt detection of slippage events.

Introduction

Commercial prostheses are typically velocity controlled or position controlled; no tactile system is integrated in the hand, and the success of the grasp relies on the visual feedback of the amputee.1,2 Control solutions for prosthetic hands based on tactile feedback, on the other hand, are borrowed from robotics, where tactile sensing endows robotic hands with autonomous dexterous manipulation capabilities. In robotic applications, tactile systems are used to recognize objects, control forces, grasp objects, and servo surfaces. 3 The control approaches can regulate finger torque, force, velocity, and trajectory and include classical proportional–integral–derivative (PID), adaptive, robust, neural, fuzzy sliding mode, and their combinations.4,5 In addition, a well-consolidated approach to ensure grasp stability relies on the concept of friction cone, which implies that the ratio between the normal force and the tangential force during grasping, multiplied by the static coefficient of friction, has to exceed 1. This method is very effective; however, it suffers from some limitations that make it unsuitable for prosthetics. For example, it requires sensors able to measure both normal and tangential forces (although estimations of the latter can be used as in Wettels et al. 6 ), and it also requires a priori knowledge of the static friction coefficient.
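The friction cone condition stated above can be written as a one-line check. The following is a minimal sketch; the function name and the numerical values in the usage note are illustrative assumptions and are not taken from the cited works.

```python
def grasp_is_stable(normal_force: float, tangential_force: float, mu_static: float) -> bool:
    """Friction-cone check: the contact holds while mu * Fn / |Ft| > 1."""
    if normal_force <= 0.0:
        return False  # no contact force, so the object cannot be held
    if tangential_force == 0.0:
        return True   # no tangential load, so nothing tends to make the object slip
    return mu_static * normal_force / abs(tangential_force) > 1.0
```

For instance, with an assumed friction coefficient of 0.5, a normal force of 2 N withstands a tangential load of 0.5 N, whereas a normal force of 1 N does not withstand 0.6 N. The check needs both force components and the friction coefficient, which is exactly the limitation noted above for prosthetic use.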

Alternative and more recent approaches that can still guarantee grasp stability rely on force control schemes that recognize slippage events from the decrease in the measured normal force. An example of this approach can be found in Cipriani et al., 7 where a PID control is used to preshape a multi-fingered underactuated hand, and a simultaneous force control is applied to all the fingers during grasping. In Jo et al., 8 a proportional–integral (PI) force control with an inner velocity loop has been proposed, but no experimental tests on real prosthetic hands have been done. A hybrid approach has been tested in Zhu et al., 9 where a PI force control is adopted for the outer loop, while a fuzzy position control is used in the inner loop. In Pasluosta and Chiu, 10 a control strategy based on a neural network has been implemented in order to compensate for sensor and hardware non-linearities. The control was divided into two stages: the former was a velocity control to grasp the object, and the latter was a force control. Lately, further studies have been proposed where controls based on slippage prevention are implemented. The idea of preventing slippage in robotics is not new; 11 however, the first example of a prosthetic device with force control and a slip prevention algorithm has been presented in Engeberg and Meek. 12 There, a sliding mode force control with slippage detection was successfully compared with a simple proportional–derivative (PD) force control and a sliding mode control with no slippage detection. In Sriram et al., 13 a proportional position control has been used to grasp the object, and a fuzzy logic-based algorithm was additionally implemented to prevent slippage. Recent works have proposed the use of neural networks 14 and fuzzy logic 15 to modulate the grasping force of a prosthetic hand.

In this work, a new control architecture for prosthetic hands is proposed with the following two main features:

It is distributed on two levels: a high level based on learning, for trajectory planning and finger coordination, and a low level for force and position control;

The low level is made of a parallel force/position control endowed with slippage prevention capability.

A preliminary feasibility study of such a control architecture has been provided in Ciancio et al. 16 An actor-critic reinforcement learning paradigm and a classical parallel force/position control have been implemented. The architecture was validated in simulation in a cyclic manipulation task with a multi-fingered prosthetic hand.

Here, the high-level control has been grounded on a policy search learning algorithm (Policy Improvement Black Box (PIBB)); the low-level control has been based on a parallel force/position control that is also able to manage slip events. Moreover, the control architecture is implemented and experimentally validated on a real multi-fingered prosthetic hand, i.e. the IH2 hand (by Prensilia s.r.l.), purposely equipped with force sensors on the distal phalanxes.

In the literature, different control architectures based on learning algorithms have been proposed to control robotic hands during grasping or manipulation tasks.17–20 Such architectures have been typically based on hierarchical reinforcement learning, 21 policy search,18–20 and other learning paradigms (e.g. ligand-receptor 17 and neuro-fuzzy inference system 22 ). Although these architectures have been conceived to confer a good level of autonomy upon the robot, they typically yield a large increase in the computational burden of the control architecture, thus causing long learning phases, which can hardly be implemented on real robots and cannot be used for online learning.

The learning paradigm adopted in this work, based on PIBB, has been demonstrated to have a moderate computational burden19,20 and high efficiency, with the consequent advantage of enabling online learning. 23 In Meola, 23 central pattern generators (CPGs) have been demonstrated to generate simple motor primitives able to produce both rhythmic and discrete movements. Furthermore, in Meola et al., 23 first experimental evidence is provided of the learning algorithm coupled with CPGs in discrete and rhythmic tasks such as finger tapping and object rotation.

For the low-level control, a parallel force/position control is adopted; such a control is able to manage forces as well as motion during interaction and track the desired motion when interaction does not occur (e.g. during preshaping). 25 The usefulness of this control strategy has been studied in the prosthetic field through simulation tests. 24 Here, it is tested in a real experimental setting and enriched with the slippage information provided by force/tactile sensors in order to enable slippage prevention. For this purpose, force sensors have been embedded into the distal phalanxes of the multi-fingered hand. Moreover, in order to demonstrate the potential of the proposed two-level control, comparative experimental tests with respect to a traditional position control (without learning capabilities) have been carried out.

Strain gauges have largely been utilized on prostheses, but more than one sensor per finger is often needed. 26 Furthermore, temperature compensation is always required to achieve correct force measurements. 7 Force sensing resistors (FSRs) represent an alternative solution, although their functioning principle requires additional sensing units (e.g. piezoelectric 11 ), supplementary equipment, 13 or signal processing for slippage detection. For example, in Pasluosta and Chiu, 10 signal derivatives have been computed for slip detection, with non-negligible noise. In some cases, force/torque (F/T) sensors have been employed, 8 although they are costly and provide complex information that is not required by the application. In this article, one FSR sensor has been positioned on the fingertip of each of the thumb, index, and middle fingers and used for the twofold purpose of measuring the normal force component and detecting slippage. To this end, the FSR force signal is processed to extract information about slippage events.

The article is organized as follows: section “Materials and methods” introduces the two-level control architecture and presents the IH2 hand, the implementation of the control architecture on the anthropomorphic hand, and the tactile sensory system. Section “Results and discussion” illustrates the experimental setup and main results, and finally, section “Conclusion” draws the conclusions and outlines future work.

Materials and methods

Control architecture

The control architecture distributed on high- and low-level control is shown in Figure 1.

Figure 1. Control architecture characterized by the following components: Policy Improvement with Black Box, central pattern generators, parallel force/position control, and sensorized hand and object.

The high-level control has the aim of planning the desired grasping task and identifying the optimal strategy for finger coordination. The PIBB learning algorithm has been employed in order to autonomously search the CPG parameters necessary to define the desired finger trajectory suitable for the grasping task. The learning phase has been characterized by several repetitions of the trial, grouped into periods. During one period, for each trial, the corresponding set of CPG parameters has been tested, thus producing the desired trajectories the hand needs to interact with the object.

The parallel force/position control (i.e. the low-level control) has been devoted to the definition of the motor commands for the hand actuation system starting from force and position references and proprioceptive and tactile sensory feedback.

The proposed architecture aims at being used both during grasping and (rhythmic and discrete) manipulation tasks. The desired forces have been experimentally retrieved with the prosthetic hand while the position references have been generated by the high-level control. The control aims to regulate (1) finger motion during preshaping and (2) force applied to the object during grasping based on position, force and slippage information coming from the sensorized hand.

High-level control

The PIBB algorithm has been used to search the CPG parameters necessary to define the optimal trajectories for the desired task. The learning phase has been characterized by several repetitions of the trial.

At the beginning of learning, an initial set of CPG parameter

The PIBB learning method grounds its functioning on the following phases:19,20,23

1. Parameter set: in this phase, the

with

2. Test: each possible set of CPG parameters (

where

3. Performance evaluation and parameters update: the cost value

where the “temperature parameter”

Phases 1–3 are iterated for all the
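The three phases can be illustrated with a generic black-box policy-improvement update. This is a minimal sketch of the general technique, not the exact formulation used in this work: the Gaussian exploration with fixed standard deviation `sigma`, the min-max normalization of the costs, and all function and parameter names are assumptions made for the example. The default of eight trials per period mirrors the K = 8 adopted in the implementation on the IH2 hand.

```python
import math
import random

def pibb_update(theta, cost_fn, sigma=0.1, n_trials=8, temperature=0.1, rng=None):
    """One learning period: perturb, test, and re-estimate the parameters."""
    if rng is None:
        rng = random.Random()
    # Phase 1 (parameter set): perturb the current parameters K times.
    candidates = [[p + rng.gauss(0.0, sigma) for p in theta] for _ in range(n_trials)]
    # Phase 2 (test): run one trial per candidate set and record its cost.
    costs = [cost_fn(c) for c in candidates]
    # Phase 3 (evaluation and update): exponential weights, low cost -> high weight.
    lowest, highest = min(costs), max(costs)
    span = (highest - lowest) or 1.0  # avoid dividing by zero when all costs are equal
    weights = [math.exp(-(j - lowest) / (span * temperature)) for j in costs]
    total = sum(weights)
    new_theta = [
        sum(w * c[i] for w, c in zip(weights, candidates)) / total
        for i in range(len(theta))
    ]
    return new_theta, sum(costs) / n_trials
```

Calling `pibb_update` repeatedly, each time with the parameters returned by the previous period, reproduces the iteration over periods; a smaller `temperature` concentrates the update on the best trials of the period.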

In grasping tasks, the cost function needs to take into account the contact points between fingers and object. During bi-digital and tri-digital grasps, only the fingertips of the thumb, index, and (for the tri-digital grasp) middle fingers are in contact with the object for the whole task. For this reason, the cost function needs to know whether contact has occurred and how long it lasted during the interaction between object and fingers. Three counters have been used to calculate the contact time between each fingertip (index, thumb, and middle) and the object. The lowest cost has been obtained when the fingers involved in the task have been in contact with the object for the whole grasp. The counters have updated their values at each step. The maximum performance (

where

The current performance value has been defined as the sum of the counters related to each contact point (

The counters have been updated at each trial step for each possible hand contact point, increasing the counter if the correct contact point is in contact with the object and decreasing it otherwise. This penalizes contact between the object and the wrong fingers.
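The counter mechanism can be sketched as follows. This is an illustrative reading of the description above and not the cost function actually used: the sketch assumes that a counter is decreased both when a required finger is not touching and when a non-required finger is touching, and that the maximum performance equals the number of required fingers multiplied by the number of steps. The function name and the finger labels are hypothetical.

```python
from typing import Dict, Iterable, Set

def contact_cost(contact_log: Iterable[Set[str]], required: Set[str]) -> float:
    """Cost of one trial from per-step fingertip contacts (lower is better)."""
    counters: Dict[str, int] = {}
    n_steps = 0
    for touching in contact_log:
        n_steps += 1
        # Consider every finger that either should touch or actually touches.
        for finger in touching | required:
            correct = finger in required and finger in touching
            counters[finger] = counters.get(finger, 0) + (1 if correct else -1)
    # Assumed maximum: every required finger in contact at every step.
    max_performance = len(required) * n_steps
    performance = sum(counters.values())
    return float(max_performance - performance)
```

With this convention, a bi-digital grasp in which thumb and index touch the object at every step has zero cost, and the cost grows if a required finger loses contact or if the middle finger also touches the object.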

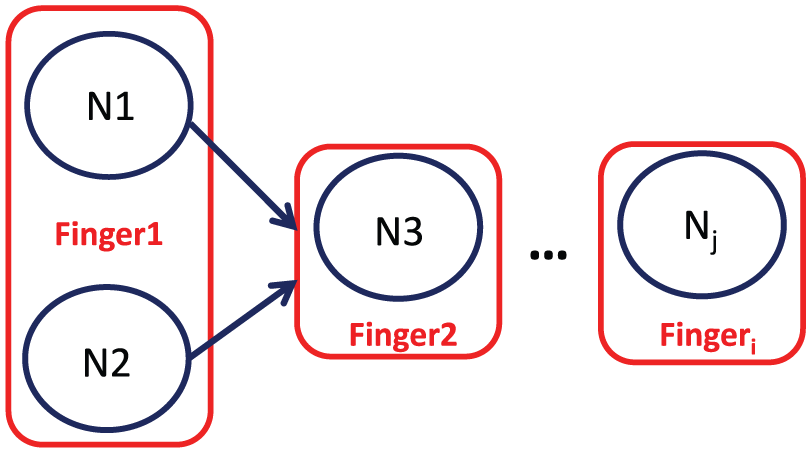

The CPGs have been modeled as coupled oscillators, and the theoretical formulation has been presented in Ciancio et al. 27 As detailed in Meola et al., 23 where a first application of the high-level control is presented, the CPG algorithm has been preferred to dynamic movement primitives (DMPs) to facilitate the initialization and the transition between discrete and rhythmic movements, for example, between grasping and manipulation tasks. The number of adopted oscillators has been directly related to the number of degrees of actuation (DoAs) of the prosthetic hand (Figure 2). The trajectory of each DoA has been defined by the output of the corresponding oscillator.

Figure 2. Central pattern generator architecture: the number of oscillators has been directly related to the number of degrees of actuation of the prosthetic hand.

Low-level control

The implemented low-level control is a parallel force/position control equipped with slippage detection and control. Figure 3 shows the block diagram of the control.

Figure 3. Parallel force/position control scheme with slippage prevention feature.

The proposed control law for each finger is expressed as follows

The parallel force/position control consists of two loops: the outer, with
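As an illustration of the parallel scheme, the sketch below combines a PD action on the position error with a PI action on the force error and raises the force reference when a slip flag is received. The gain names, the PI structure of the force loop, and the fixed force increment on slip are assumptions made for this example; they are not the control law or the gains used on the IH2 hand.

```python
from dataclasses import dataclass

@dataclass
class ParallelForcePositionController:
    kp: float                    # proportional gain on the position error
    kd: float                    # derivative gain on the position error
    kf: float                    # proportional gain on the force error
    ki: float                    # integral gain on the force error
    slip_boost: float            # assumed force increment per detected slip event [N]
    dt: float                    # control period [s]
    force_integral: float = 0.0
    prev_pos_error: float = 0.0
    force_offset: float = 0.0

    def step(self, pos_ref, pos, force_ref, force, slip=False):
        """Return the actuator command for one control step."""
        if slip:
            # Slippage prevention: tighten the grip by raising the force reference.
            self.force_offset += self.slip_boost
        pos_error = pos_ref - pos
        d_pos_error = (pos_error - self.prev_pos_error) / self.dt
        self.prev_pos_error = pos_error
        force_error = (force_ref + self.force_offset) - force
        self.force_integral += force_error * self.dt
        # The position and force actions are summed (parallel composition).
        return (self.kp * pos_error + self.kd * d_pos_error
                + self.kf * force_error + self.ki * self.force_integral)
```

In free motion the measured force is zero and the position action drives the finger along the reference; once contact is made, the integral force action makes the force error prevail at steady state, which is the usual rationale for the parallel composition.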

Implementation on the prosthetic hand

IH2 hand

In this study, the IH2 anthropomorphic robotic hand (by Prensilia s.r.l.) has been used (Figure 4). It is a five-finger biomechatronic human-sized hand. Five direct current (DC) brushed motors integrated in the palm provide five non-backdrivable DoAs and 11 degrees of freedom. Each finger has two phalanxes and two degrees of freedom of flexion/extension (F/E); the thumb additionally has the abduction/adduction (A/A) movement.

Figure 4. IH2 prosthetic hand with FSRs mounted on the fingertips of the thumb, index, and middle finger (a). The sensorized fingertips are covered with prosthetic silicone caps, as detailed in (b).

An underactuated mechanism is adopted for all the fingers to enable phalanxes F/E; it consists of a tendon that runs inside the phalanxes and is wrapped around the pulleys placed at the joints. Each tendon is pulled by a slider actuated by one motor. The fingers automatically adapt to the object shape while closing. Incremental encoders measure finger positions, and the output varies between 0 (totally open) and 255 (totally closed).

Implementation of the control architecture on the IH2 Hand

The learning phase (high-level control) has been carried out in simulation with the defined simulated objects in order to avoid overworking the IH2 hand and to exclude unexpected behavior or damage in the real setting. The learned parameters have then been tested on the real robotic hand during the object grasp. The learning phase is organized in periods of K = 8 trials each, a value that has been experimentally retrieved. At each trial, a different set of CPG parameters has been tested, and at the end of each period, K new parameter sets have been calculated on the basis of the performance of the previous K trials.

The adopted CPG is presented in Figure 5. It consists of five oscillators, each devoted to the definition of the desired trajectory for one DoA involved in the task, with a total of 15 parameters to search (five amplitudes, five centers of oscillation, four phases, and a common frequency). As shown in Figure 5, oscillator N1 has been associated with index flexion/extension, N2 with middle flexion/extension, N3 with thumb flexion/extension, N4 with thumb adduction/abduction, and N5 with ring/little flexion/extension.
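To make the parameter count concrete, the sketch below maps 15 parameters to five reference trajectories using plain sinusoids. It is an illustrative closed-form stand-in for the steady-state output of the oscillators, not the coupled-oscillator dynamics of the actual CPG; taking the first oscillator as the phase reference (hence four free phases) and the function name are assumptions.

```python
import math

DOAS = ["index_fe", "middle_fe", "thumb_fe", "thumb_aa", "ring_little_fe"]  # N1..N5

def cpg_trajectories(amplitudes, centers, phases, frequency, times):
    """Return one row per time sample with the reference value of each DoA."""
    if not (len(amplitudes) == len(centers) == len(phases) + 1 == len(DOAS)):
        raise ValueError("expected 5 amplitudes, 5 centers, and 4 phases")
    # Assumption: oscillator N1 is the phase reference, so its offset is zero.
    offsets = [0.0] + list(phases)
    return [
        [c + a * math.sin(2.0 * math.pi * frequency * t + phi)
         for a, c, phi in zip(amplitudes, centers, offsets)]
        for t in times
    ]
```

The parameter vector searched by the learning algorithm then has 5 + 5 + 4 + 1 = 15 entries, in agreement with the count given above.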

Figure 5. Central pattern generator scheme composed of five oscillators, each defining the trajectory of one degree of actuation: N1 defining index flexion/extension trajectory, N2 defining middle flexion/extension trajectory, N3 defining thumb flexion/extension trajectory, N4 defining thumb adduction/abduction trajectory, and N5 defining ring/little flexion/extension trajectory.

Because of the underactuation, the control law has been reformulated in terms of slider variable

with

The parallel force/position control law suited to the IH2 Hand can be expressed as follows

being

Tactile sensing

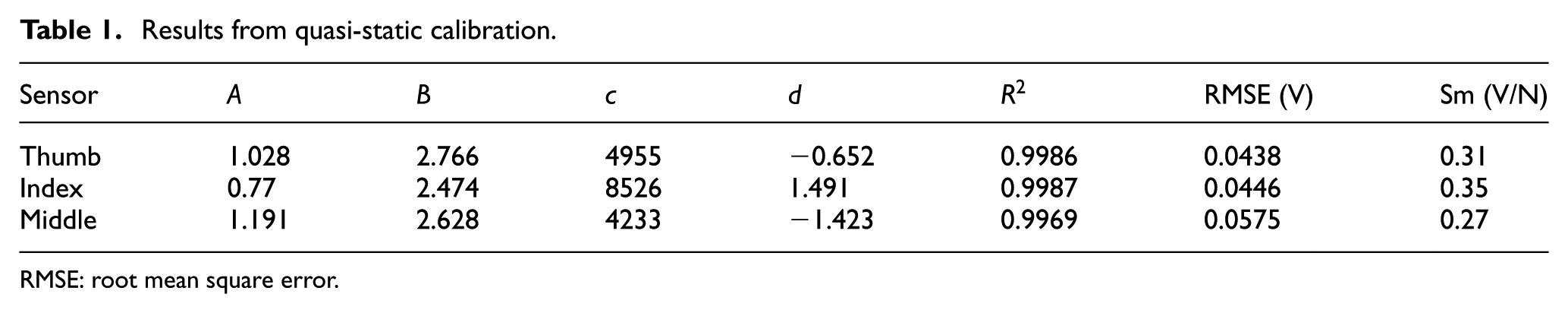

Forces exerted by the prosthetic fingers onto the objects during the experimental trials have been collected by three FSR sensors (Model 400 by Interlink Electronics, Inc.), each one mounted, respectively, on the distal phalanx of thumb, index, and middle finger (Figure 4). The selected FSR model has a circular active area with a diameter of 5.08 mm, a discrimination threshold of 0.2 N, and a measurement range up to 20 N.

Voltage,

where a, b, c, and d are constants that depend on the sensor response, whereas

Table 1. Results from quasi-static calibration.

RMSE: root mean square error.

Figure 6. Best fitting curves for the three FSRs placed on the prosthetic hand and covered with silicone caps.

As regards the slip signal, after preprocessing and rectification, a threshold mechanism has been adopted to extract a binary slip signal.
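The processing chain for the slip signal can be sketched as below. The preprocessing step is not detailed in the text, so the sketch assumes a first difference of the force samples to isolate fast fluctuations; the function name and the threshold value used in the usage note are likewise assumptions.

```python
def binary_slip_signal(force_samples, threshold):
    """Return one boolean per sample: True where a slip event is flagged."""
    if not force_samples:
        return []
    slip = []
    previous = force_samples[0]
    for sample in force_samples:
        fluctuation = sample - previous      # preprocessing (assumed): first difference
        rectified = abs(fluctuation)         # rectification
        slip.append(rectified > threshold)   # threshold mechanism -> binary signal
        previous = sample
    return slip
```

For example, a force trace that drops from 1.0 N to 0.7 N between two consecutive samples is flagged at the sample where the drop occurs when the threshold is 0.1 N, and nowhere else.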

Experimental setup

A first experimental setup has been realized for validating the high-level layer and demonstrating its potential, especially for managing coordinated finger grasping and achieving a successful grasp even when experimental conditions vary (e.g. size or shape of the objects). For this purpose, the preshaping phase has been investigated in depth (thus not including the force control) and compared with a traditional position control made of a PD action on the slider position error. In the case of the learning architecture, the CPGs generated proper joint reference trajectories (converted into slider position references) for a given object, thanks to the training performed in simulation. No a priori knowledge of the precise location of the object was needed. In the case of the traditional PD control, slider position references were planned using a priori knowledge of the object size and position.

In order to demonstrate the added value provided by the high-level control with learning, the effect of size changes on the control performance was investigated. In particular, cylindrical objects of different sizes were tested with a tri-digital grasp. It is worth observing that, due to the hand underactuation, the traditional PD control is expected to also grasp objects larger than those used for trajectory planning. On the other hand, it is expected to fail with objects of smaller size. The objects considered for these experimental tests were a plastic cup with a diameter of 7 cm and three other cylindrical objects with diameters of 8, 3.4, and 2 cm, respectively. Control performance has been measured by means of the success rate during the different grasps.

The experimental setup for the validation of the complete two-level control is depicted in Figure 7. Three objects widely used during activities of daily living (ADLs) have been selected for performing two kinds of grasp: bi-digital and tri-digital (Figure 8). The former has been performed with an egg (Figure 8(b)) and a highlighter (Figure 8(d)), the latter with the same egg (Figure 8(a)) and with a cylindrical plastic cup (Figure 8(c)). The masses of the objects were approximately 60 g (egg), 50 g (cup), and 10 g (highlighter). The purpose of the experimental tests was to investigate the stability of the grasp when an external perturbation is applied, with and without slip detection, and to verify system robustness with respect to the type of perturbation. Slippage has been automatically induced by means of a 7-degree-of-freedom robotic arm (KUKA-LWR 4+), whose end effector was a thin, cylindrical probe. Slip experiments have been executed by displacing the probe by 1 cm at two different speeds, i.e. 2 and 4 cm/s (the maximum speed possible), in order to assess control stability and robustness at increasing velocities.

Figure 7. Experimental setup: DC power supply for electronics, sensors, and prosthetic hand (a); NI DAQ device for data acquisition (b); prosthetic hand grasping a cup (c); end effector inducing slippage (d); KUKA-LWR 4+ robotic arm (e).

Figure 8. Grasps performed by the IH2 hand during the experiments: tri-digital with egg (a) and cup (c), bi-digital with egg (b) and highlighter (d).

Experiments without the sensory slip information (i.e. relying only on the force/position control) have been carried out only at 4 cm/s, in order to demonstrate the efficacy of the proposed slippage control in conditions where control laws that do not manage slippage fail. In all cases, six repetitions have been performed, resulting in a total of 72 trials (four combinations of grasp and object, three test conditions, namely slip control on at 2 and 4 cm/s and slip control off at 4 cm/s, and six repetitions each). All the data have been acquired at a sampling frequency of 2 kHz by a NI DAQ (NI-6009) device, and the slip signal has been recorded even when not employed for the control; the prosthetic hand has been powered by a DC power supply at 8 V, while all the sensors and acquisition electronics have been powered at 5 V. The gains of the PD position control loop have been set to the following values:

Results and discussion

High-level control

Training results

The training phase has been carried out in simulation, by modeling the IH2 hand and the objects, with the same tools described in Ciancio et al. 16 The training of the high-level control has been performed for the tri-digital grasp of a plastic cup with a diameter of 7 cm. Afterward, the high-level control has also been applied to three other objects with smaller and larger diameters (i.e. 3.4, 2, and 8 cm).

The performance of the high-level control architecture has been evaluated in two cases: (i) learning is carried out for each object separately (namely learning from zero) and (ii) learning of smaller objects benefits from the learning of the plastic cup (namely learning from plastic cup) (Table 2). Control performance has been assessed through the mean cost value (the lower the value, the better the hand behavior) and the number of trials, which provides a measure of the time needed for learning.

Table 2. Mean cost values and number of trials at the end of the learning (two types of learning have been carried out: learning starting from zero and learning starting from plastic cup).

The training of the high-level control for the grasp of the larger object (i.e. 8 cm diameter) has been carried out only in the case of learning from zero. As for the traditional PD control, the underactuation of the IH2 fingers is expected to make the hand adapt to larger objects.

As shown in Table 2, the performance of the task decreased with the object size. Moreover, the number of trials required for training the high-level control increased during grasp of smaller objects. It is interesting to observe that although the training was always successful, the incremental training of the high-level control architecture from the plastic cup learning led to improved performance with respect to learning from zero, with lower values of both the mean cost and the number of trials necessary to conclude the training phase.

The training results demonstrate that the high-level control is able to successfully face changes in the experimental scenario, such as object dimensions, with a quicker learning phase if it starts from previously trained parameters. Furthermore, performance obtained with the incremental training from the previously learned situation also exceeded performance achieved by a new learning from zero.

Preshaping results

Experimental tests have been carried out in order to verify that the trajectories provided by the high-level control were able to correctly grasp the objects (i.e. plastic cup, cylinders with diameters of 3.4 and 2 cm, respectively) and to compare results with a traditional PD control with predefined slider positions (and correspondingly joint positions) required to stably grasp the object. In particular, Table 3 reports the predetermined slider positions to grasp the object (experimentally retrieved), the slider positions provided by the high-level control, the slider positions actually achieved by the fingers, and the success rate of the grasping task.

Table 3. Predetermined slider positions to grasp the object, slider positions actually achieved, and success rate of the grasping tasks for the traditional PD control and the high-level control.

SD: standard deviation; PD: proportional–derivative.

The hand has been able to grasp the four objects using trajectories furnished by the high-level control with a success rate of 100% during 10 trials. As expected, for the traditional PD control, the same results have been achieved only in the case of plastic cup (as the real slider positions correspond to the planned ones) and larger object (thanks to the under-actuation). The experimental results confirm the correctness of simulation outcomes after re-learning process and demonstrate the applicability of a high-level structure to provide suitable trajectories during grasps of objects with different dimensions.

Results of the complete control architecture and discussion

Results of the training phase

The evolutions of the cost during the learning of bi-digital and tri-digital grasp of an egg are shown in Figure 9. The decrease in the cost values during training clearly indicates the improvement of the performance during learning.

Figure 9. Cost during the learning of two grasping tasks of an egg: bi-digital grasp (blue) and tri-digital grasp (green). The stars indicate the cost value at each period of the training.

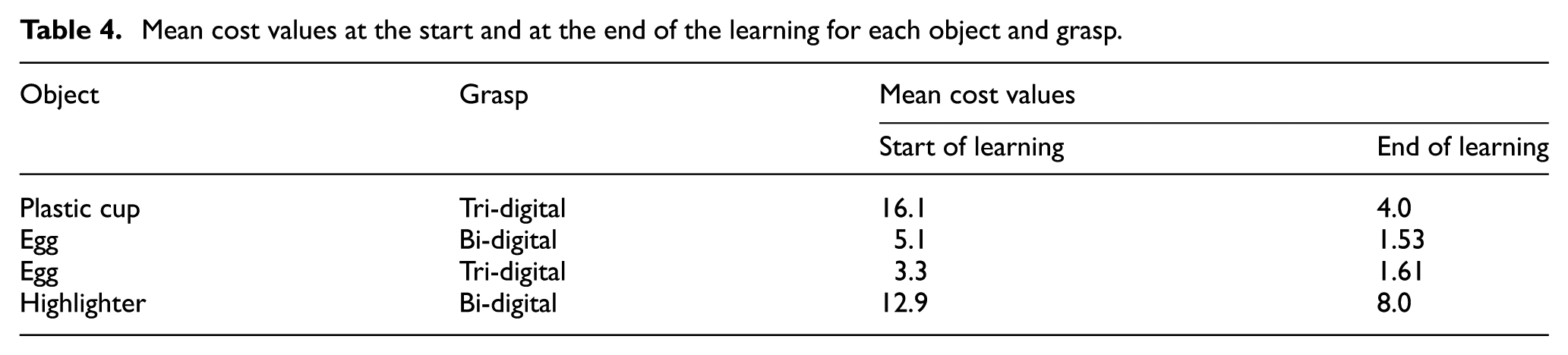

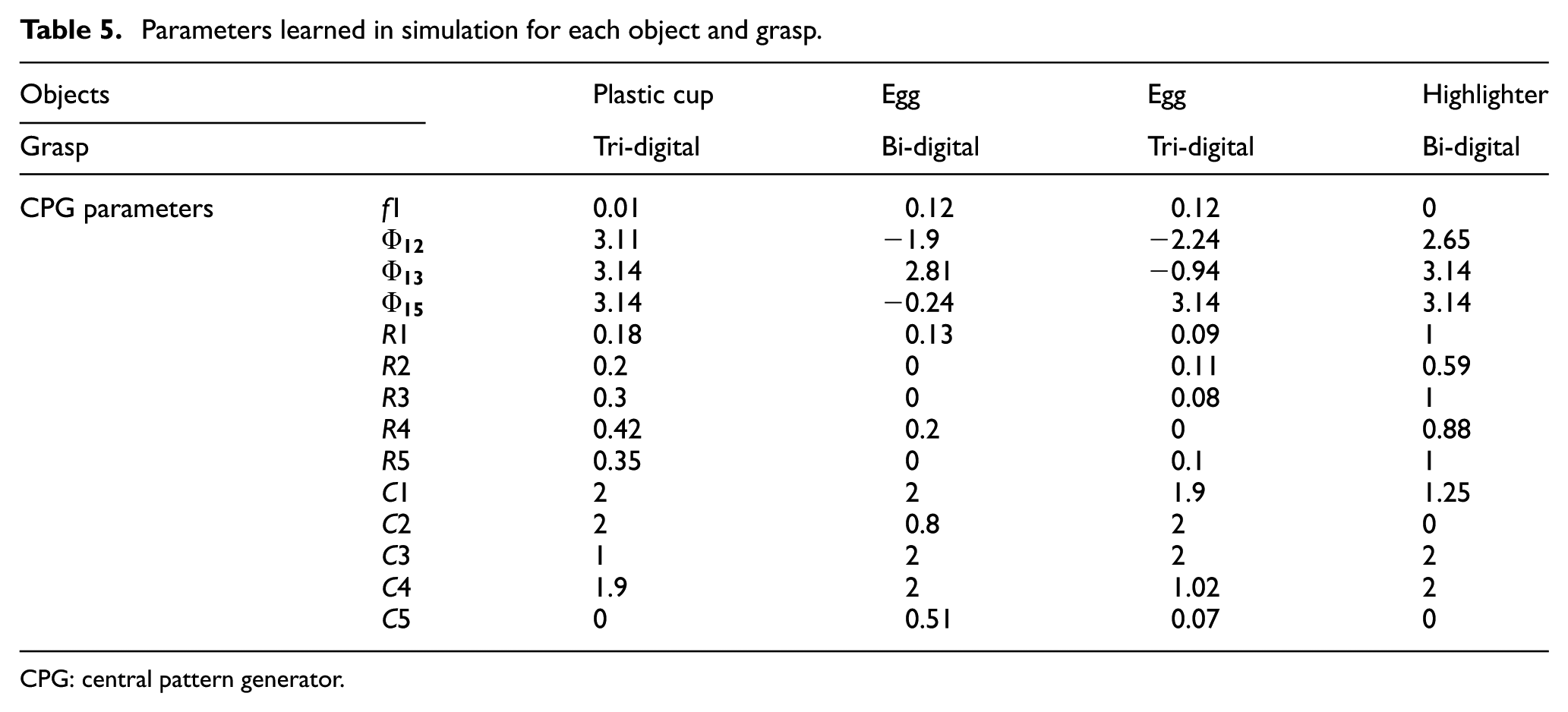

The performance of the high-level control architecture during each grasping task before and after the learning has been summarized in Table 4. Mean values have been calculated on the first and last five periods of the training. In Table 4, the increase in the performance during the learning of the four grasping tasks can be observed. Table 5 reports the CPG parameters extracted at the end of the learning in simulation and adopted in the test phase on the real hand.

Table 4. Mean cost values at the start and at the end of the learning for each object and grasp.

Table 5. Parameters learned in simulation for each object and grasp.

CPG: central pattern generator.

Experimental results of the test phase

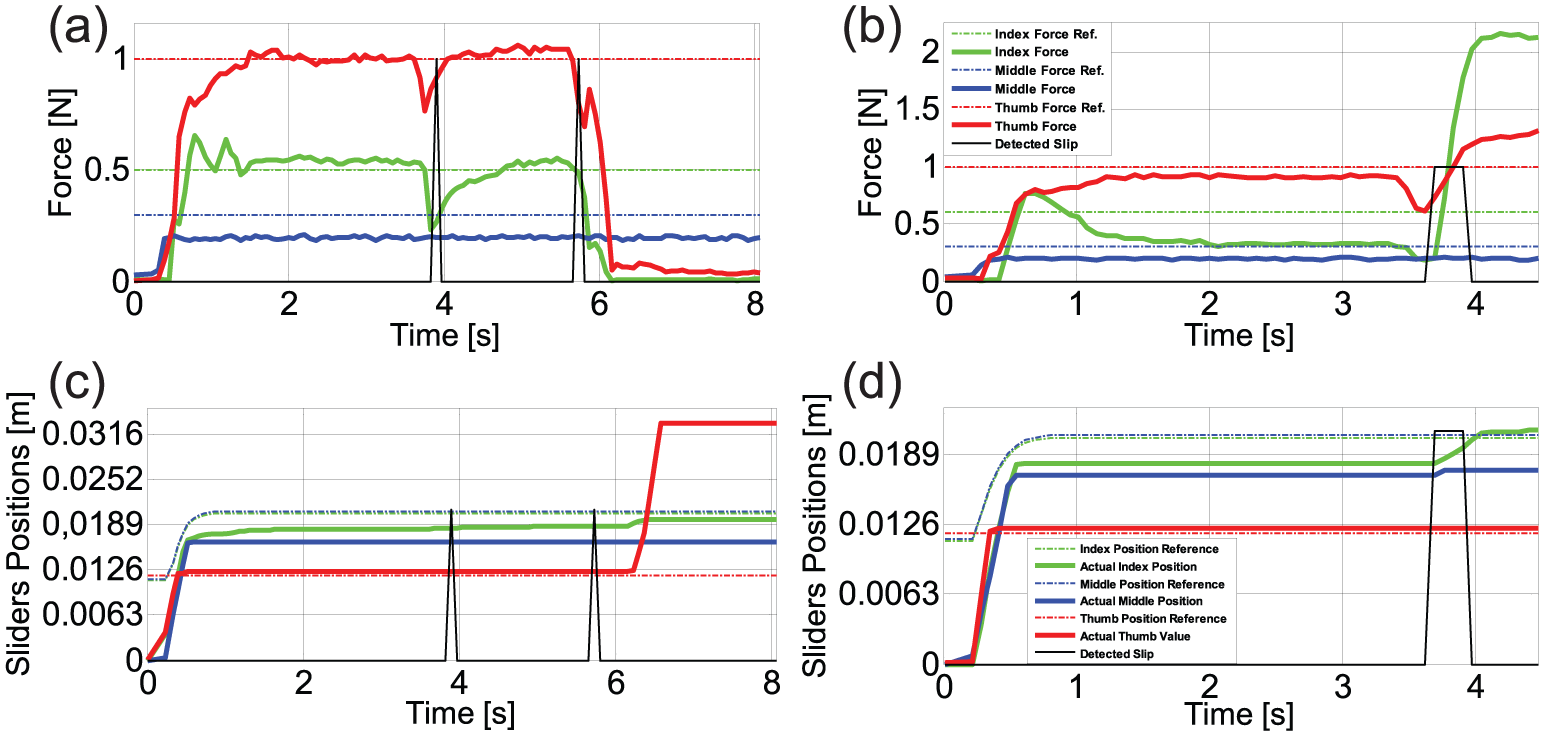

In this section, the results of the experimental tests on grasping are shown. For the sake of brevity, the plots of four trials are reported, as the trend is similar across the different grasps of the different objects. Figures 10 and 11 show (a) and (c) the measured forces compared with the desired force and (b) and (d) the slider positions compared with the desired positions. When slippage control is off, the grasped object is subjected to two identical perturbations of 1 cm at the maximum speed possible (4 cm/s) so as to demonstrate that the object falls, or at least is unstably grasped. When the slippage control is active, one perturbation of 1 cm is applied at 2 or 4 cm/s; as expected, the system is able to immediately detect slippage, as shown in Figures 10 and 11. The activation command for the robotic arm was given as soon as the forces reached a steady state; hence, perturbations were not applied at the same time instants in all the trials.

Figure 10. Experimental results with the plastic cup: (a) and (b) slippage control off; (c) and (d) slippage control on.

Figure 11. Experimental results with the highlighter: (a) and (b) slippage control off; (c) and (d) slippage control on.

Figure 10(a) and (b) show a trial of the tri-digital grasp of the plastic cup with the slip prevention algorithm disabled and the perturbation set to 4 cm/s. After the forces reach the steady state, a first disturbance is applied, and the controller avoids the cup fall thanks exclusively to the parallel force/position control. The grasp is maintained, but it is no longer stable. In fact, when the subsequent perturbation occurs, the index and thumb fingertips lose contact with the object and the precision grasp fails. The middle fingertip accidentally continues touching the object because the object remains unstably grasped. The force references have been deliberately chosen quite low in order to achieve a precarious grasp and to demonstrate the effectiveness of the slippage control. The position graph highlights the precariousness of the grasp, since after the second perturbation the thumb is completely flexed.

Figures 10(c) and (d) show a further trial of the tri-digital grasp of the cup, but this time the slippage prevention algorithm is active and the perturbation is set to 2 cm/s. As soon as the slip event due to the perturbation is detected, the grip is strengthened and the grasp becomes stable. From both the force and position graphs, it can be noticed that the index finger, on which the slippage prevention algorithm is computed, contributes most to grasp stability; its applied force clearly rises and, as a reaction, the thumb also increases its force, while the middle finger does not modify its action.

In Figure 11, the bi-digital grasp of the highlighter is reported. In this case, when the slippage control is inactive (i.e. Figures 11(a) and (b)), the force references are well tracked after the first as well as the second perturbation, but there is no marked increase in the grasp forces. Rather, a decrease in the forces can be observed in the force graph, confirming that the object is not grasped in a stable manner as a consequence of the perturbations. Slider positions remain unchanged; the change in the forces is solely due to the underactuated behavior of the fingers. Figures 11(c) and (d) illustrate the reaction of the controller to a disturbance as fast as 4 cm/s, in order to show its capability of reacting also at the maximum velocity of the robotic arm. More time is needed to reach the steady state. In this trial, the slip event is followed by a quick, large response of the index finger force, and the thumb also increases its force in reaction. After the adjustment due to slippage, a stable grasp of the object is clearly reached.

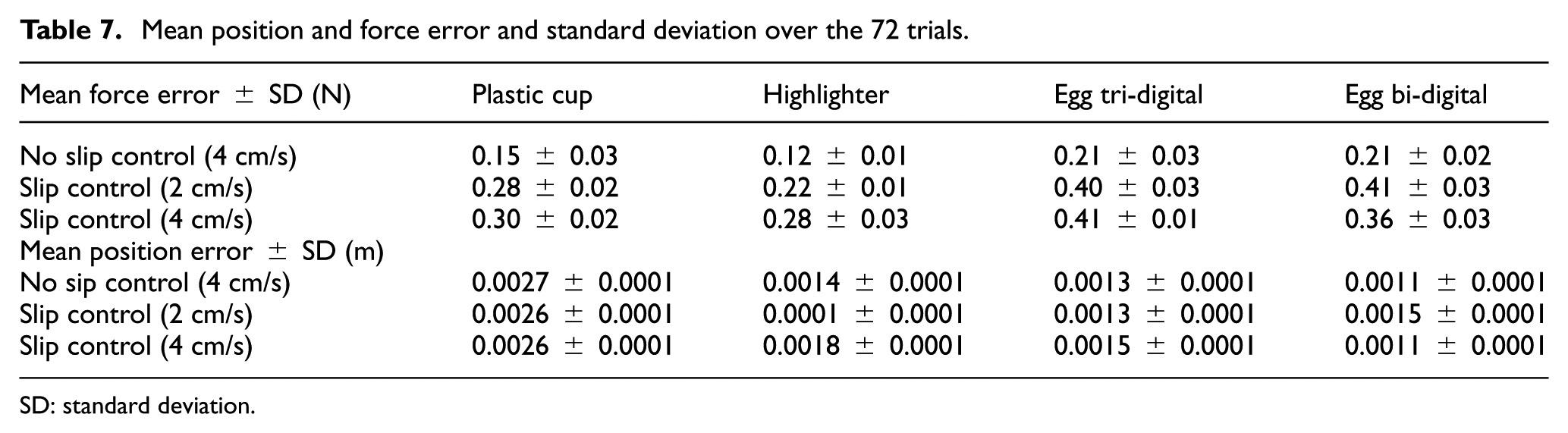

Table 6 summarizes the overall performance of the control architecture for all the 72 trials. As a confirmation of the results depicted in Figures 10 and 11, it can be seen that when the slippage control is off, the failure percentage is often high. Conversely, the enabled slippage control allows the prosthesis to stably hold the object in every perturbation condition, with rare exceptions. Table 7 reports the mean force and position errors along with the standard deviation computed for all the 72 trials. The most influential loop of the control is the position one, as its gains are much higher than the force gains. Indeed, the fingers closed quickly, taking around 0.5 s to reach a steady position; thus, the mean position error is always quite low. Force errors were small when slip control was off but rose significantly when the control could rely on the slip information; this can be explained by the force increase commanded upon slip detection.

Table 6. Overall results over the 72 trials.

Table 7. Mean position and force error and standard deviation over the 72 trials.

SD: standard deviation.

Conclusion

A novel control architecture to improve grasp stability has been tested on an anthropomorphic hand for prosthetic applications. The control was distributed on two different levels; the high-level control provided the fingers with the optimal trajectories learnt by a PIBB algorithm, whereas the low-level control was directly interfaced with the hand actuators. The potential of the high-level control has been analyzed and compared with a traditional approach based on PD control; in particular, the generalization capabilities of a hierarchical control architecture were verified by means of experimental trials involving grasping tasks of objects with different sizes.

FSR sensors placed on the fingertips of the thumb, index, and middle fingers and covered with silicone caps have been employed for force estimation as well as for slippage detection. An ad hoc experimental setup exploiting a robotic arm has been built to evaluate system performance: results confirmed the capability of the architecture to stably grasp objects of different sizes and shapes, also in the presence of slippage. The added value of the two-layer architecture is especially evident in scenarios where the experimental conditions cannot be predefined and the environment is highly unstructured, as in ADLs. In these situations, learning is crucial, above all if grounded on incremental learning with respect to an already performed offline training. Future work will aim to (1) extract the slip sensory information from more than one sensor, paying attention not to excessively slow down the control loop; (2) add more force sensors on the prosthetic hand in order to extend the approach to other grasping tasks and to manipulation; and (3) implement online learning on the prosthetic hand and possibly test the developed architecture on different prosthetic hands.

Footnotes

Academic Editor: Nicolas Garcia-Aracil

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Italian Ministry of Instruction, University and Research with PRIN project “HANDBOT—bio-mechatronic hand prostheses endowed with bio-inspired tactile perception, bidirectional neural interfaces and distributed sensori-motor control” (CUP: B81J12002680008), by the Italian Institute for Labour Accidents (INAIL) with PPR 2 project (CUP: E58C13000990001), by the European Project H2020/AIDE: Multimodal and Natural computer interaction Adaptive Multimodal Interfaces to Assist Disabled People in Daily Activities, CUP J42I15000030006.