Abstract

To address the coupled geometric and deformation errors in RV reducers, this study proposes a high-precision transmission error prediction method based on Stacking ensemble learning, overcoming the limitations of rigid-body assumptions and computationally expensive finite element analysis. A finite element model incorporating machining errors is built to generate a dataset via Latin hypercube sampling. Based on orthogonal experiments and analysis of variance, eight typical machining errors of the RV reducer are analyzed to identify the three that have the greatest impact on the peak-to-peak value of the transmission error. On this basis, a Stacking model is constructed with SVR, XGBoost, RF, and KNN as base learners and XGBoost as the meta-learner. Experimental results show that this model increases the coefficient of determination $R^2$ by 14.3% and reduces RMSE by 27.4% compared with the best single model. Furthermore, combined with differential evolution optimization of the machining errors, it enables an average 52.48% reduction in the peak-to-peak value of the transmission error, providing an accurate and robust basis for RV reducer performance optimization.

Introduction

As the core transmission joint of industrial robots, the performance of the RV reducer directly affects the motion accuracy and stability of robots.1–3 High-end equipment represented by six-axis industrial robots imposes requirements of large transmission ratio and high output torque on RV reducers. To address the shortcomings of traditional reducers such as insufficient transmission ratio and limited output torque, the RV reducer adopts a composite structure of planetary gear transmission and cycloidal pinwheel transmission, combining the advantages of large transmission ratio and high torque output.4–6 During the assembly process of the RV reducer, machining errors of components (such as pin machining error and crankshaft eccentricity error) will change the geometric relationship and stress state of the RV reducer, resulting in transmission errors,7–9 which are manifested as motion lag and increased backlash. This further leads to problems such as decreased repeat positioning accuracy, increased trajectory tracking error, and intensified vibration and noise, seriously affecting the operational performance of robots. Taking a certain type of welding robot as an example, measured data show that when the joint transmission error exceeds 15 arcsec, the weld seam trajectory deviation increases by 35%, directly affecting the welding quality. In application scenarios such as precision assembly, transmission errors can also cause inaccurate positioning of the end effector, resulting in assembly failure or product damage. Therefore, studying the influence law of assembly errors on the transmission accuracy of RV reducers and realizing the accurate prediction of transmission accuracy are of great significance for improving the motion performance and operational reliability of industrial robots.10–12

Currently, three main methods (analytical modeling, numerical simulation, and experimental testing) are adopted to analyze the influence of reducer machining errors on transmission accuracy. In terms of analytical modeling, Xue et al. 13 established an analytical methodology for predicting the correlation between transmission error and tooth pitch error for face-hobbed hypoid gears. The variable-step Runge-Kutta algorithm was used to solve the dynamic response of the system, revealing the influence of carrier machining errors in different directions on the dynamic transmission error and meshing force of the system. For numerical simulation, Huang et al. 14 presented a dynamic modeling and response analysis of cracked herringbone gear transmission systems that accounts for installation errors. Based on orthogonal tests, the axial deviation most sensitive to meshing characteristics was identified from machining errors in different directions. Regarding experimental testing, Palermo et al. 12 proposed a simplified measurement scheme for gear transmission error (TE) based on low-cost digital encoders, and used this scheme to analyze the influence of gear machining errors on TE.

The analytical modeling method can reveal the causal relationships between error sources (such as eccentricity and pitch error) and transmission errors, offers high computational efficiency without requiring complex computational resources, and enables rapid evaluation of error influence laws.15–17 The numerical simulation method considers nonlinear factors in gear transmission (elastic deformation, backlash, damping) and intuitively demonstrates motion deviations or stress concentrations caused by errors.18–20 The experimental testing method is based on real data and directly reflects the comprehensive error effects of actual systems.21–23 However, these methods all have obvious limitations in transmission error prediction: analytical modeling suffers from insufficient accuracy due to simplified assumptions and struggles to handle complex nonlinear problems; numerical simulation has high computational costs and can hardly support rapid iterative optimization; experimental testing relies on expensive equipment, has a long cycle, and is poorly suited to the early design stage. In contrast, the surrogate model-based transmission error prediction method establishes a mathematical mapping between error parameters and transmission responses, enabling fast and high-precision prediction with only a small number of samples. It not only significantly improves computational efficiency but also supports sensitivity analysis and multi-objective optimization. Willecke et al. 24 reduced the parameter dimension by constructing a comprehensive gear deviation surface and trained a deep learning model on 3000 sets of data, realizing faster-than-real-time prediction of the loaded transmission error characteristics of gear pairs and significantly enhancing the efficiency of optimization in the gearbox manufacturing process. Xu et al. 25 proposed an improved method based on the Kriging surrogate model and Sobol sensitivity analysis. By replacing time-consuming simulations, screening key parameters, and combining genetic algorithm optimization, the calibration efficiency and accuracy of the dynamic model of multi-stage gearboxes were significantly improved, achieving more accurate prediction of dynamic characteristics. Sakaridis et al. 26 adopted a neural network method to predict the static transmission error of spur gears. A three-layer fully connected network model with periodic symmetry was trained using 20,000 sets of physical simulation data containing 17 parameters, achieving an average error of 0.075% on the test set. This verified the reliability of using neural networks instead of traditional nonlinear solvers for modeling gear static responses.

Geometric modeling and dynamic motion simulation of RV reducer

The RV reducer is a closed-type compound planetary transmission mechanism composed of two-stage reduction mechanisms: the first-stage reduction mechanism is an involute planetary gear mechanism, and the second-stage reduction mechanism is a cycloidal gear-pin gear transmission mechanism. This section mainly conducts geometric modeling and model simplification of the RV reducer to facilitate the subsequent simulation analysis of the entire reducer.

Geometric modeling

First, the overall geometric model of the RV reducer is built, covering both the involute planetary first stage and the cycloidal gear-pin gear second stage. The main structural parameters of the RV reducer are listed in Table 1, and the overall geometric model is illustrated in Figure 1.

Table 1. Main structural parameters of the RV reducer.

Figure 1. Geometric model of the RV reducer.

Finite element modeling and dynamic motion simulation

The FEA model in this study is based on the following core assumptions: (a) all materials are homogeneous, isotropic, and linear elastic; (b) gear meshing contact is dry and unlubricated; (c) micro-slip at joint interfaces such as bolt connections is neglected; (d) simulations are conducted under constant rated loads. These assumptions aim to construct a controllable benchmark model for studying the propagation of geometric errors. To reduce the number of elements in the finite element model and improve computational efficiency, appropriate simplifications are made to the geometric model of the RV reducer, including integrating the crankshaft with the synchronizing gear, removing redundant threaded holes, simplifying the sun gear, synchronizing gear and cycloidal gear, removing the rigid disk, and simplifying the output disk structure. The simplified model of the RV reducer is then imported into finite element preprocessing software for mesh generation. The mesh models of key structures (sun gear, integrated crankshaft and synchronizing gear, cycloidal gear, pins, and pin gear housing) are shown in Figure 2. The sun gear model contains 19,600 elements, the integrated crankshaft and synchronizing gear 34,490 elements, and the cycloidal gear 41,544 elements; the complete RV reducer finite element model contains 368,830 elements. The overall finite element model of the RV reducer is shown in Figure 3.

Figure 2. Finite element models of each component of the RV reducer.

Figure 3. Overall finite element model of the RV reducer.

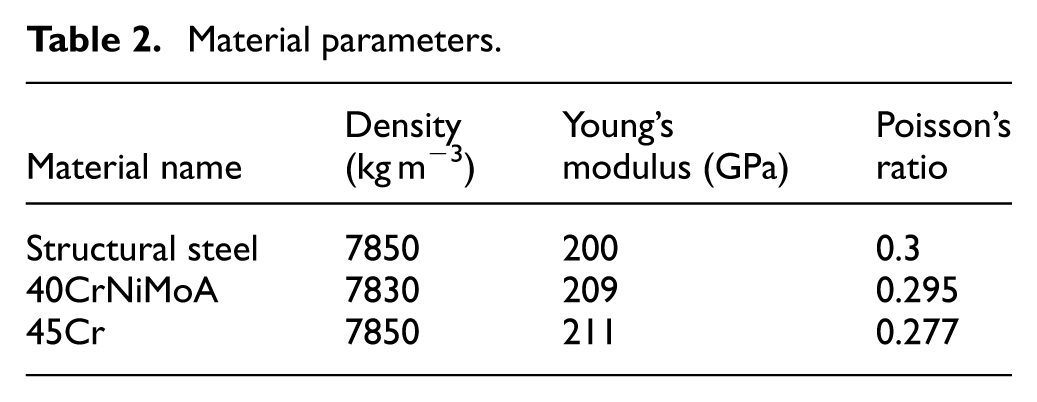

In terms of material settings, the pin gear housing and output disk of the RV reducer are made of structural steel, while the cycloidal gears, crankshaft, pins, and sun gear are made of 40CrNiMoA alloy steel to meet the requirements of high toughness, fatigue resistance, and mechanical strength. The mechanical parameters of each material are detailed in Table 2. The dynamic motion simulation of the RV reducer involves a total of six contact pairs: the three contact pairs between the sun gear and the synchronizing gears are set as frictional contacts with a friction coefficient of 0.15; the contacts between the two cycloidal gears and the pins are also frictional contacts, with a friction coefficient of 0.1; the contacts between the pins and the pin gear housing are set as bonded contacts so that the pins are fixed to the pin gear housing. Nine kinematic joints are set to constrain the system motion: 1 fixed joint to ground (for the pin gear housing), 2 revolute joints to ground (for the sun gear and output disk), and 6 body-to-body revolute joints (three between each cycloidal gear and the crankshafts). The simulation process is divided into two substeps: in the first substep (0–1 s), the sun gear rotates by 18° and the load on the output disk is ramped to 100 N·m; in the second substep (1–2 s), the load on the output disk remains unchanged and the sun gear rotates to 180°.

Table 2. Material parameters.

Transmission error calculation

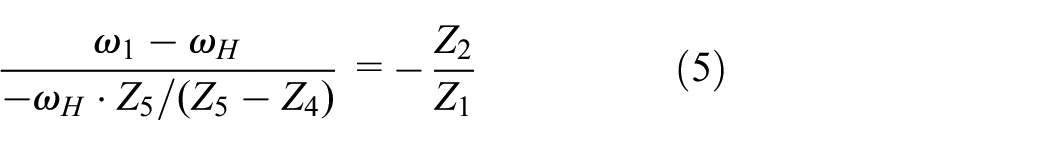

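The gear train of the RV transmission can be regarded as a closed planetary differential gear train. Here, the planet carrier is denoted by the letter H, and a common angular velocity $-\omega_H$ is applied to the entire gear train, converting the first-stage reduction mechanism of the RV reducer into a fixed-axis gear train. Therefore, the converted transmission ratio of the sun gear and the planetary gear relative to the planet carrier is:

$$ i_{12}^{H} = \frac{\omega_1 - \omega_H}{\omega_2 - \omega_H} = -\frac{Z_2}{Z_1} \tag{1} $$

Where: $\omega_1$, $\omega_2$, and $\omega_H$ are the angular velocities of the sun gear, the planetary gear (crankshaft), and the planet carrier; $Z_1$ is the number of teeth of the sun gear; $Z_2$ is the number of teeth of the planetary gear.

Similarly, for the second-stage reduction mechanism, the converted transmission ratio of the cycloidal gear and the pin gear relative to the crankshaft is:

$$ i_{45}^{2} = \frac{\omega_4 - \omega_2}{\omega_5 - \omega_2} = \frac{Z_5}{Z_4} \tag{2} $$

Where: $\omega_4$ and $\omega_5$ are the angular velocities of the cycloidal gear and the pin gear; $Z_4$ is the number of teeth of the cycloidal gear, and $Z_5$ is the number of teeth of the pin gear.

Furthermore, as the pin gear housing is fixed ($\omega_5 = 0$) and the cycloidal gear rotates together with the planet carrier ($\omega_4 = \omega_H$), equation (2) becomes:

$$ \frac{\omega_H - \omega_2}{-\omega_2} = \frac{Z_5}{Z_4} $$

Rearranging gives:

$$ \omega_2 = -\frac{Z_4}{Z_5 - Z_4}\,\omega_H \tag{3} $$

From equations (1) and (3), we obtain:

$$ i = \frac{\omega_1}{\omega_H} = 1 + \frac{Z_2}{Z_1}\cdot\frac{Z_5}{Z_5 - Z_4} \tag{4} $$

Since $Z_5 - Z_4 = 1$ for a one-tooth-difference cycloidal drive,

$$ i = 1 + \frac{Z_2 Z_5}{Z_1} \tag{5} $$

where $i$ is the total transmission ratio of the RV reducer.

Based on the dynamic motion simulation method of the RV reducer, the rotation angle of the sun gear $\theta_{in}$ and the rotation angle of the output disk $\theta_{out}$ are obtained at each time step, and the transmission error is calculated as:

$$ TE = \theta_{out} - \frac{\theta_{in}}{i} \tag{6} $$

The peak-to-peak value of the transmission error is the difference between the maximum and minimum of $TE$ over the simulated rotation. Subsequently, this method can be used to obtain the transmission error data of the RV reducer under different machining errors.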
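As an illustration of this calculation, the following minimal Python sketch computes the total ratio and the peak-to-peak transmission error from paired input and output angles. It assumes the standard closed-form ratio for an RV reducer with a fixed pin gear housing, $i = 1 + (Z_2/Z_1)\,Z_5/(Z_5 - Z_4)$, and the definition $TE = \theta_{out} - \theta_{in}/i$. The tooth numbers and the sinusoidal output deviation are hypothetical values chosen only for demonstration; they are not taken from Table 1 or from the simulation results.

```python
import math


def rv_ratio(z1, z2, z4, z5):
    """Total ratio of an RV reducer with fixed pin gear housing (assumed form)."""
    return 1.0 + (z2 / z1) * z5 / (z5 - z4)


def transmission_error(theta_in_deg, theta_out_deg, ratio):
    """Transmission error in arcmin for paired angle samples given in degrees."""
    return [(out - inp / ratio) * 60.0
            for inp, out in zip(theta_in_deg, theta_out_deg)]


def peak_to_peak(values):
    """Difference between the largest and smallest value of a series."""
    return max(values) - min(values)


# Hypothetical tooth numbers (illustration only).
Z1, Z2, Z4, Z5 = 16, 32, 39, 40
i_total = rv_ratio(Z1, Z2, Z4, Z5)

# Synthetic stand-in for simulation output: ideal motion plus a
# sinusoidal deviation with an amplitude of 0.5 arcmin.
theta_in = [float(k) for k in range(0, 181)]  # sun gear angle, degrees
theta_out = [t / i_total + (0.5 / 60.0) * math.sin(math.radians(40.0 * t))
             for t in theta_in]

te = transmission_error(theta_in, theta_out, i_total)
ppte = peak_to_peak(te)
print(f"ratio = {i_total:.1f}, peak-to-peak TE = {ppte:.3f} arcmin")
```

In practice, `theta_in` and `theta_out` would be replaced by the sun gear and output disk angle histories exported from the finite element solver.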

Grid-independence and experimental verification

To balance the efficiency and accuracy of finite element simulation in calculating the transmission error of an RV reducer, this paper refines the contact area of the RV reducer’s finite element model. The sparse grid RV reducer finite element model is shown in Figure 4(a), with a grid count of 208,862. The denser grid RV reducer finite element model is shown in Figure 4(b), with a grid count of 368,830. Based on the method described in Section “Transmission error calculation,” the peak-to-peak transmission error values for the two simulation results are calculated. The peak-to-peak transmission errors are 1.3 and 1.1 arcmin, respectively, and remain unchanged after further grid densification.

Figure 4. Grid refinement results.

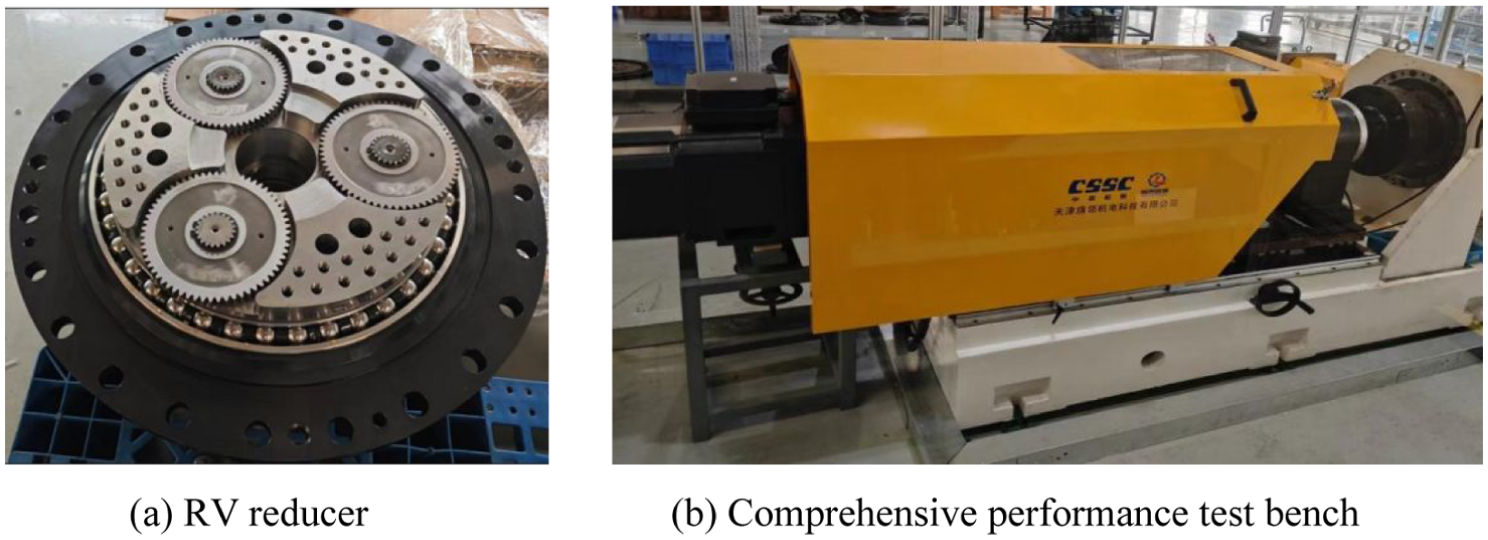

Meanwhile, as shown in Figure 5, a loaded running-in experiment was conducted on the RV reducer under the same load on the test bench. Compared with the measured value of 0.9 arcmin, the result of the refined model (1.1 arcmin) is closer than that of the coarse model (1.3 arcmin). Balancing computational cost against mesh density, the finite element model with 368,830 elements is adopted as the final finite element model for constructing the surrogate model.
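The comparison with the measurement can be quantified with a short calculation. The sketch below uses only the values reported above (1.3 and 1.1 arcmin from the two meshes, 0.9 arcmin measured); the helper name `relative_deviation` is introduced here for illustration.

```python
def relative_deviation(simulated, measured):
    """Relative deviation of a simulated value from the measurement, in percent."""
    return abs(simulated - measured) / measured * 100.0


measured_pp = 0.9  # arcmin, test bench
simulated_pp = {208_862: 1.3, 368_830: 1.1}  # element count -> arcmin

deviations = {n: relative_deviation(pp, measured_pp)
              for n, pp in simulated_pp.items()}
for n, dev in deviations.items():
    print(f"{n} elements: {dev:.1f}% deviation from measurement")
# 208862 elements: 44.4% deviation from measurement
# 368830 elements: 22.2% deviation from measurement
```

Refining the mesh thus halves the deviation from the measured value, although a gap of about 0.2 arcmin remains.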

Figure 5. Performance test of RV reducer.

Transmission error prediction of RV reducers based on stacking ensemble learning

The machining errors of RV reducers have a significant impact on transmission errors. This study selects eight typical machining errors of RV reducers, conducts a sensitivity analysis, and identifies the three that have the greatest impact on the peak-to-peak value of the transmission error (PPSTE) for the subsequent construction of a surrogate model. The eight selected machining errors are the crankshaft eccentricity error and cylindricity error $f_a$, $f_b$; the distribution circle radius error and radius error of the pin holes in the pin gear housing $f_c$, $f_d$; the distribution circle radius error and radius error of the pins $f_e$, $f_f$; and the distribution circle error and radius error of the cycloidal gear bearing holes $f_g$, $f_h$. The three selected machining errors are then sampled within their tolerance ranges, and the corresponding peak-to-peak transmission error values are obtained through the dynamic motion simulation method described in Section “Geometric modeling and dynamic motion simulation of RV reducer” to construct a dataset. Subsequently, a high-precision and robust Stacking ensemble learning model is constructed to achieve accurate prediction of transmission errors of RV reducers.

Definition of machining error and sensitivity analysis

Based on the definition of machining errors and on long-term engineering practice and manufacturing process capabilities, this study defines and assigns tolerances to the following eight basic machining errors. The crankshaft eccentricity error $f_a$ and cylindricity error $f_b$ are defined as 0–0.02 mm; the distribution circle radius error $f_c$ and radius error $f_d$ of the pin holes in the pin gear housing are defined as 0–0.02 mm; the distribution circle radius error $f_e$ and radius error $f_f$ of the pins are defined as 0–0.02 mm; the distribution circle error $f_g$ and radius error $f_h$ of the cycloidal gear bearing holes are defined as 0–0.02 mm.

The influence of the eight machining error factors on PPSTE was analyzed using an orthogonal experimental design. According to the manufacturing tolerance standards for RV reducers, three levels were set for each factor (0, 0.01, and 0.02 mm), as shown in Table 3.

Table 3. Orthogonal experiment table.

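Next, hypotheses are proposed. The null hypothesis (H0): different levels of each machining error factor have no significant effect on PPSTE; the alternative hypothesis (H1): different levels of at least one machining error factor have a significant effect on PPSTE. Then, the total sum of squares ($SS_T$) is calculated:

$$ SS_T = \sum_{i=1}^{n} \left( y_i - \bar{y} \right)^2 $$

In the formula: $n$ represents the number of trials; $y_i$ denotes the observed value of the $i$th trial, and $\bar{y}$ is the mean of all trial observations $y_i$.

The between-group sum of squares of factor $j$ ($SS_j$) is:

$$ SS_j = \sum_{k=1}^{m} n_{jk} \left( \bar{y}_{jk} - \bar{y} \right)^2 $$

In the formula: $n_{jk}$ is the number of trials in which factor $j$ takes level $k$, and $\bar{y}_{jk}$ is the mean of the observations in those trials.

The within-group (error) sum of squares ($SS_E$) is:

$$ SS_E = SS_T - \sum_{j} SS_j $$

In the formula, the sum runs over all factors.

Then, the between-group mean square ($MS_j$) and the within-group mean square ($MS_E$) are calculated:

$$ MS_j = \frac{SS_j}{df_j}, \qquad MS_E = \frac{SS_E}{df_E} $$

In the formula, $df_j$ and $df_E$ are the between-group and within-group degrees of freedom. The between-group degrees of freedom are:

$$ df_j = m - 1 $$

In the formula, $m$ represents the level number. The within-group degrees of freedom are:

$$ df_E = (n - 1) - \sum_{j} df_j $$

The formula for calculating the F statistic is

$$ F_j = \frac{MS_j}{MS_E} $$

Finally, given a significance level $\alpha$, the p-value corresponding to $F_j$ is obtained from the F distribution with $(df_j, df_E)$ degrees of freedom. If $p < \alpha$, the null hypothesis is rejected and factor $j$ is considered to have a significant effect on PPSTE.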
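The variance decomposition for an orthogonal design can be sketched in a few lines of Python. The example below is a minimal illustration, not the analysis of Table 3: it uses the standard L9(3^4) orthogonal array with three hypothetical factors A, B, and C assigned to the first three columns (the fourth column is left empty and absorbs the error), and the nine response values are invented for demonstration. Each factor sum of squares is the group-size-weighted squared deviation of the level means from the grand mean, and the error term is the remainder of the total sum of squares.

```python
# Standard L9(3^4) orthogonal array, levels coded 0..2.
L9 = [
    [0, 0, 0, 0], [0, 1, 1, 1], [0, 2, 2, 2],
    [1, 0, 1, 2], [1, 1, 2, 0], [1, 2, 0, 1],
    [2, 0, 2, 1], [2, 1, 0, 2], [2, 2, 1, 0],
]

# Hypothetical responses (e.g. PPSTE in arcmin), one per trial.
y = [1.00, 1.08, 1.10, 1.31, 1.36, 1.41, 1.62, 1.64, 1.72]


def anova_orthogonal(design, response, factor_cols, levels=3):
    """Return (SS_T, SS per factor, SS_E, F per factor) for an orthogonal design."""
    n = len(response)
    grand_mean = sum(response) / n
    ss_total = sum((v - grand_mean) ** 2 for v in response)

    ss, df = {}, {}
    for name, col in factor_cols.items():
        ss_factor = 0.0
        for level in range(levels):
            group = [response[r] for r in range(n) if design[r][col] == level]
            group_mean = sum(group) / len(group)
            ss_factor += len(group) * (group_mean - grand_mean) ** 2
        ss[name] = ss_factor
        df[name] = levels - 1

    # Error term: what is left after removing all factor contributions.
    ss_error = ss_total - sum(ss.values())
    df_error = (n - 1) - sum(df.values())
    ms_error = ss_error / df_error
    f_stat = {name: (ss[name] / df[name]) / ms_error for name in ss}
    return ss_total, ss, ss_error, f_stat


ss_total, ss, ss_error, f_stat = anova_orthogonal(L9, y, {"A": 0, "B": 1, "C": 2})
for name in sorted(f_stat, key=f_stat.get, reverse=True):
    print(f"factor {name}: SS = {ss[name]:.4f}, F = {f_stat[name]:.1f}")
```

For these invented data, factor A dominates (F of about 900), B is moderate (about 25), and C is indistinguishable from the error term (about 1). Converting F to a p-value additionally requires the F distribution, which is omitted here.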

The results of the variance analysis are presented in Table 4. According to these results, the pin radius error $f_f$ (F = 15.8, p = 0.002128), the crankshaft eccentricity error $f_a$ (F = 12.87, p = 0.00171), and the pin distribution circle radius error $f_e$ (F = 4.86, p = 0.03354) have a significant impact on PPSTE, while the other five factors have a relatively minor influence. These three machining errors, $f_f$, $f_a$, and $f_e$, are therefore used for the subsequent construction of the RV reducer transmission error surrogate model.

Table 4. Analysis of variance results.

The transmission precision of RV reducers largely depends on the manufacturing precision of their core components. Among the various manufacturing error sources, the machining error of the pin hole system on the pin gear housing, the dimensional error of the pins themselves, and the eccentricity error of the crankshaft are the most critical factors affecting meshing quality. Based on long-term engineering practice and analysis of manufacturing process capability, this study assigns the following tolerances to the three selected machining errors.

As intermediate transmission components between the cycloidal gear and the pin gear housing, the pins cause unbalanced load distribution, increased transmission backlash, and intensified vibration and noise when they carry machining errors. To ensure a good fit of the meshing pair, the manufacturing tolerance for the pin radius is defined as 0–0.01 mm. The eccentricity of the crankshaft is a core kinematic parameter that determines the correct meshing relationship between the cycloidal gear and the pins. If its precision is not guaranteed, the ideal meshing state is destroyed, which may lead to overload of some tooth surfaces, accelerated wear, or even interference. For this reason, the manufacturing tolerance for the eccentricity is defined as 0–0.015 mm. The radius of the pin distribution circle is the radius of the ideal circle on which the centers of all pin holes on the pin gear housing are located. An error in this radius systematically displaces the meshing positions of all pin teeth, which is equivalent to changing the theoretical center distance between the cycloidal gear and the pin gear housing, thereby affecting transmission precision and torsional stiffness as a whole. Based on the process capability of high-precision coordinate boring or machining centers for the pin gear housing, the manufacturing tolerance for the radius of the pin distribution circle is defined as 0–0.02 mm.
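These three tolerance ranges define the design space that is later sampled with Latin hypercube sampling to build the dataset. A minimal sketch of that sampling step is given below, assuming a plain (non-optimized) Latin hypercube written with the Python standard library; the function name `latin_hypercube` and the random seed are illustrative choices, and the authors' actual sampling tool is not specified.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Plain Latin hypercube: each dimension is cut into n_samples equal
    strata and every stratum is hit exactly once, in shuffled order."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)
        column = [low + (s + rng.random()) / n_samples * (high - low)
                  for s in strata]
        columns.append(column)
    # Transpose so that each sample is one tuple of coordinates.
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


# Tolerance ranges in mm: pin radius, crankshaft eccentricity,
# pin distribution circle radius.
bounds = [(0.0, 0.01), (0.0, 0.015), (0.0, 0.02)]
samples = latin_hypercube(60, bounds)

for f_f, f_a, f_e in samples[:3]:
    print(f"f_f = {f_f:.5f} mm, f_a = {f_a:.5f} mm, f_e = {f_e:.5f} mm")
```

Each of the 60 resulting error combinations would then be applied to the finite element model to obtain one PPSTE value.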

Stacking ensemble learning method

Stacking (Stacked Generalization) is a heterogeneous ensemble learning framework that was first proposed by Wolpert in 1992.19,20 Its core idea is to achieve hierarchical fusion of prediction results through a two-layer model architecture: Base-learners generate diverse prediction outputs in the first layer, while the Meta-learner constructs an optimal combination strategy based on the outputs of the Base-learners in the second layer. The Stacking ensemble learning framework has demonstrated stronger generalization potential in dealing with complex nonlinear relationships. The algorithm flow of Stacking ensemble learning is shown in Figure 6, which includes the following steps:

Step 1: Data Preparation. Divide the original dataset into a Train Set and a Test Set, where the Train Set is used to train the model, and the Test Set is used to evaluate the model accuracy.

Step 2: Train Base-learners. First, select multiple different machine learning algorithms as base-learners (e.g. Support Vector Machine (SVM), K-Nearest Neighbors (KNN), Random Forest (RF), etc.). These base-learners can be combinations of different parameters of the same type of algorithm or combinations of different types of algorithms. Next, split the Train Set into K folds (K-Fold). For each base-learner, use K−1 folds to train the model in turn, and use the trained model to make predictions on the remaining 1 fold. Repeat this process K times to ensure each fold is predicted exactly once, then combine the prediction results of all folds to generate the prediction results of the base-learner on the Train Set. Finally, retrain each base-learner using the complete Train Set, and use the trained base-learners to make predictions on the Test Set to generate the prediction results of the Test Set.

Step 3: Train the Meta-learner. First, take the aforementioned prediction results as new features and combine them column-wise to form a Meta-training Set. Next, select several machine learning algorithms as meta-learners (e.g. Linear Regression (LR), Logistic Regression, Gradient Boosting Decision Tree (GBDT), etc.). The task of the meta-learner is to generate the final prediction based on the prediction results of the base-learners. Finally, train the meta-learner using the Meta-training Set.

Step 4: Evaluation of Prediction Results. Combine the prediction results of each base-learner on the Test Set column-wise to form a Meta-test Set. Input the Meta-test Set into the trained meta-learner to generate the final prediction results. Use the true labels of the Test Set and the final prediction results to calculate the model’s prediction performance metrics, such as the coefficient of determination (R2), Root Mean Square Error (RMSE), etc.

Figure 6. Stacking ensemble learning workflow.
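The four steps above can be sketched with scikit-learn as follows. This is a minimal illustration under stated assumptions rather than the implementation used in this study: the data are synthetic, the hyperparameters are arbitrary, and scikit-learn's `GradientBoostingRegressor` is used as a stand-in wherever a gradient boosting learner such as XGBoost would appear, so that the example depends only on NumPy and scikit-learn.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import KFold, train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.svm import SVR

# Synthetic stand-in data: 60 samples, 3 normalized error parameters.
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(60, 3))
y = (1.0 + 2.0 * X[:, 0] + np.sin(3.0 * X[:, 1]) + 0.5 * X[:, 2] ** 2
     + rng.normal(0.0, 0.02, size=60))

# Step 1: split into Train Set and Test Set.
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

# Step 2: base-learners (factories so that every fit starts from a fresh model).
base_factories = [
    lambda: SVR(C=10.0),
    lambda: GradientBoostingRegressor(random_state=0),  # stand-in for XGBoost
    lambda: RandomForestRegressor(n_estimators=100, random_state=0),
    lambda: KNeighborsRegressor(n_neighbors=3),
]
kfold = KFold(n_splits=5, shuffle=True, random_state=0)
meta_train = np.zeros((len(X_tr), len(base_factories)))
meta_test = np.zeros((len(X_te), len(base_factories)))

for j, make_model in enumerate(base_factories):
    # Out-of-fold predictions: each training sample is predicted exactly once
    # by a model that has not seen it.
    for fit_idx, hold_idx in kfold.split(X_tr):
        model = make_model().fit(X_tr[fit_idx], y_tr[fit_idx])
        meta_train[hold_idx, j] = model.predict(X_tr[hold_idx])
    # Retrain on the complete Train Set to predict the Test Set.
    meta_test[:, j] = make_model().fit(X_tr, y_tr).predict(X_te)

# Step 3: train the meta-learner on the Meta-training Set.
meta_learner = GradientBoostingRegressor(n_estimators=100, max_depth=2,
                                         random_state=0)
meta_learner.fit(meta_train, y_tr)

# Step 4: evaluate on the Meta-test Set.
y_pred = meta_learner.predict(meta_test)
rmse = float(np.sqrt(np.mean((y_te - y_pred) ** 2)))
print(f"stacked RMSE on the test set: {rmse:.4f}")
```

Using out-of-fold predictions for the Meta-training Set keeps the meta-learner from being trained on base-learner outputs that were fitted to the same samples, which would otherwise leak the training labels into the second layer.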

Selection of base-learners and meta-learner

In the selection of base-learners, both algorithm diversity and performance balance are considered. Support Vector Regression (SVR), eXtreme Gradient Boosting (XGBoost), Random Forest (RF), and K-Nearest Neighbors (KNN) are selected as base-learners. These algorithms differ significantly in hypothesis space, optimization objective, and data sampling, enabling them to provide differentiated and complementary predictive features. For the meta-learner, three candidate algorithms (XGBoost, CatBoost, and LightGBM) are compared. Through their respective modeling strategies (such as complex interaction learning, local dependency capture, and global-local pattern correlation mining), they integrate the outputs of the base models. Meanwhile, an overfitting prevention mechanism is established to ensure that the model generalizes well. The prediction accuracy of all base models is checked through cross-validation, so that noise from weak models does not degrade the ensemble.

Dataset construction and model training

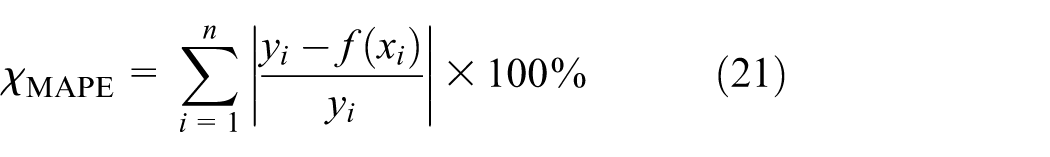

First, 60 sets of machining error data of RV reducers are obtained through Latin Hypercube Sampling (LHS) within the tolerance ranges defined above: 0–0.01 mm for the pin radius, 0–0.015 mm for the crankshaft eccentricity, and 0–0.02 mm for the radius of the pin distribution circle. The corresponding peak-to-peak values of transmission error are acquired through dynamic simulation. To mitigate the risk of overfitting with a small sample, all data are divided into a training set (70%) and an independent test set (30%). All reported performance metrics are computed on the test set, which is not involved in training, so that they reflect the model’s generalization ability. A 5-fold cross-validation strategy is adopted to train the four base-learners (SVR, XGBoost, RF, and KNN) and to generate the meta-training set and meta-test set. The diversity of the base-learners is ensured both by the differences in algorithm principles and by a correlation analysis of their prediction results. At the meta-learner layer, XGBoost, CatBoost, and LightGBM are trained separately. The hyperparameters of all models are tuned through Bayesian optimization. Finally, the prediction performance of the model is evaluated by RMSE, Mean Absolute Percentage Error (MAPE), and $R^2$, and compared with that of the individual base-learners. The calculation formulas of the indicators are as follows:

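$$ RMSE = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2} $$

$$ MAPE = \frac{100\%}{n}\sum_{i=1}^{n}\left|\frac{y_i - \hat{y}_i}{y_i}\right| $$

$$ R^2 = 1 - \frac{\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}{\sum_{i=1}^{n}\left(y_i - \bar{y}\right)^2} $$

Where: $n$ is the number of samples in the test set; $y_i$ is the true peak-to-peak value of transmission error; $\hat{y}_i$ is the predicted value; and $\bar{y}$ is the mean of the true values. Through the aforementioned indicators, the prediction accuracy and generalization ability of the model are verified.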
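For reference, the three indicators in their standard form can be computed as in the following minimal sketch. The three sample values are invented for illustration and are not taken from Table 5.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def mape(y_true, y_pred):
    """Mean absolute percentage error, in percent (true values must be nonzero)."""
    n = len(y_true)
    return 100.0 / n * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred))


def r2_score(y_true, y_pred):
    """Coefficient of determination."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical true and predicted peak-to-peak values (arcmin).
y_true = [1.0, 2.0, 4.0]
y_pred = [1.1, 1.8, 4.4]

print(f"RMSE = {rmse(y_true, y_pred):.4f}")    # RMSE = 0.2646
print(f"MAPE = {mape(y_true, y_pred):.1f}%")   # MAPE = 10.0%
print(f"R2   = {r2_score(y_true, y_pred):.3f}")  # R2   = 0.955
```

RMSE keeps the unit of the target and penalizes large deviations, MAPE expresses the error relative to each true value, and $R^2$ measures the share of variance explained, which is why the three are reported together.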

Discussion and analysis of results

Using the aforementioned process, the Stacking ensemble learning model is trained, and the prediction results of the four base-learners (SVR, XGBoost, RF, and KNN) as well as the Stacking ensemble model on the training set and test set are obtained. With the sorted sample indices as the horizontal axis, the variation curves of the true values and the predicted values of each model are plotted, as shown in Figure 7. In the visualization graph, the base-learners are represented by dashed lines with medium transparency to reflect the prediction fluctuations of individual models; the Stacking ensemble model uses solid lines with increased line width to highlight the stable trend after integration; the true values are used as the reference benchmark, represented by black thick solid lines with dot markers. It can be observed from the figure that the prediction trajectories of individual base-learners show obvious oscillatory deviations (e.g. local overfitting or underfitting), while the curve of the ensemble model is closer to the true values in the overall trend with a smaller fluctuation range.

Figure 7. Comparative analysis of training set prediction.

Figure 8 compares the prediction performance of each base-learner and the three Stacking ensemble models on the test set. The prediction trajectories of the base-learners (SVR, KNN, XGBoost, and RF, represented by dashed lines) generally exhibit relatively large fluctuations. In contrast, the three Stacking models using XGBoost, CatBoost, and LightGBM as meta-learners (represented by solid lines) fit the data better, and their prediction curves overlap the true value trajectory much more closely.

Figure 8. Comparative analysis of test set prediction.

The prediction results of the base-learners and the final ensemble learning models are shown in Table 5. First, comparing the ensemble models with different meta-learners, the Stacking_XGB model (using XGBoost as the meta-learner) performs best on all three indicators. Compared with the second-ranked Stacking_LGB (using LightGBM as the meta-learner), its $R^2$ increases by 14.4%, while RMSE and MAPE decrease by 27.5% and 27.0%, respectively. This indicates that the Stacking_XGB model, through the regularization terms and boosting mechanism of XGBoost, effectively integrates the nonlinear feature capture capabilities of base-learners such as SVR and KNN, demonstrating stronger generalization performance in handling complex data relationships.

Table 5. Prediction accuracy metrics of the model.

Second, among the individual base-learners, SVR performs best in terms of $R^2$ and RMSE. The Stacking_XGB model increases $R^2$ by 14.3% and decreases RMSE by 27.4% compared with SVR. KNN performs best in terms of MAPE, and the Stacking_XGB model reduces MAPE by 23.9% compared with KNN. This substantial improvement verifies the advantage of the ensemble learning model. The base-learner layer covers the feature space from multiple angles through diverse modeling strategies such as SVR and KNN, while the meta-learner layer suppresses the overfitting tendency of individual models through the gradient boosting mechanism of XGBoost, ultimately achieving a significant improvement in prediction accuracy. It should be noted that the maximum value of $R^2$ in the table is 0.8775. This may be due to insufficient training data, which prevents the model from fully capturing complex nonlinear features and results in some overfitting. At the same time, finite element simulation is itself an approximation of the real physics and involves simplifications (such as ignoring lubricating oil films and microscopic surface morphology). These unmodeled physical factors also contribute to part of the prediction deviation.

Transmission error optimization of RV reducers based on differential evolution algorithm

Differential Evolution (DE) is a population-based heuristic search algorithm for solving continuous optimization problems. Proposed by Rainer Storn and Kenneth Price in 1995, it is inspired by genetic algorithms but adopts unique mutation and crossover mechanisms. The overall flow of the differential evolution algorithm is shown in Figure 9, which mainly includes the following five steps:

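(1) Initialize the population as:

$$ X^{0} = \left\{ x_1^{0}, x_2^{0}, \ldots, x_{NP}^{0} \right\}, \qquad x_{i,j}^{0} = x_j^{L} + \mathrm{rand}(0,1)\left( x_j^{U} - x_j^{L} \right) $$

Where $NP$ is the population size; $i = 1, \ldots, NP$ indexes the individuals; $j = 1, \ldots, D$ indexes the $D$ design variables; $x_j^{L}$ and $x_j^{U}$ are the lower and upper bounds of the $j$th variable; and $\mathrm{rand}(0,1)$ is a uniformly distributed random number in $[0, 1)$.

(2) Mutation: Mutation is the key operation of the differential evolution algorithm. Individual mutation is achieved through a differential operation: randomly select two different individuals from the population, scale their vector difference, and then add it to the individual to be mutated. Common differential strategies are as follows:

$$ \text{DE/rand/1:} \quad v_i^{g} = x_{r_1}^{g} + F\left( x_{r_2}^{g} - x_{r_3}^{g} \right) $$

$$ \text{DE/best/1:} \quad v_i^{g} = x_{best}^{g} + F\left( x_{r_1}^{g} - x_{r_2}^{g} \right) $$

Where $F$ is the scaling factor, and $r_1$, $r_2$, $r_3$ are mutually distinct random indices different from $i$; $x_{best}^{g}$ is the best individual of the $g$th generation.

The gth generation population after mutation can be expressed as:

$$ V^{g} = \left\{ v_1^{g}, v_2^{g}, \ldots, v_{NP}^{g} \right\} $$

(3) Crossover: Perform crossover using the gth generation population and the mutation intermediates. The process can be expressed as:

$$ u_{i,j}^{g} = \begin{cases} v_{i,j}^{g}, & \mathrm{rand}_j(0,1) \le CR \ \text{or} \ j = j_{rand} \\ x_{i,j}^{g}, & \text{otherwise} \end{cases} $$

Where $CR$ is the crossover probability; $\mathrm{rand}_j(0,1)$ is a uniformly distributed random number drawn for each component, and $j_{rand}$ is a randomly chosen index that guarantees at least one component is inherited from the mutant.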

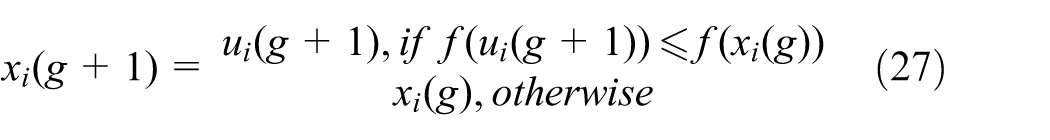

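(4) Selection: A greedy rule is adopted to select individuals for the next-generation population. The process can be expressed as:

$$ x_i^{g+1} = \begin{cases} u_i^{g}, & f\left(u_i^{g}\right) \le f\left(x_i^{g}\right) \\ x_i^{g}, & \text{otherwise} \end{cases} $$

where $f$ is the objective function to be minimized.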

(5) Termination Condition: Repeat steps 2–4 until a certain termination condition is met, such as reaching the maximum number of iterations or the quality of solutions meeting the predetermined threshold.

Based on the ensemble learning model with the highest prediction accuracy in Section “Transmission error prediction of RV reducers based on stacking ensemble learning,” the differential evolution algorithm is adopted to optimize machining errors, taking minimizing the peak-to-peak value of transmission error as the objective. The parameters of the optimization algorithm are set as follows: the maximum number of iterations is 1000, the population size is 30, the scaling factor F is determined by the mutation parameter (with a range of (0.5, 1)), the crossover probability CR is set to 0.7, and the convergence tolerance of the algorithm is set to 0.01.
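A compact sketch of this optimization loop is given below, using the parameter values stated above (population size 30, at most 1000 iterations, F drawn from (0.5, 1) in each generation, CR = 0.7, tolerance 0.01). Several points are assumptions made for illustration: the DE/rand/1 mutation with binomial crossover, the clipping of mutants to the tolerance bounds, the stopping rule (standard deviation of the population costs no larger than the tolerance times their mean), and above all the objective `toy_ppste`, a hypothetical quadratic stand-in for the trained Stacking surrogate.

```python
import random
import statistics

# Tolerance ranges in mm: pin radius, eccentricity, pin distribution circle radius.
BOUNDS = [(0.0, 0.01), (0.0, 0.015), (0.0, 0.02)]
CENTER = (0.004, 0.006, 0.012)  # hypothetical optimum of the stand-in objective


def toy_ppste(x):
    """Hypothetical stand-in for the surrogate's PPSTE prediction."""
    return 1.0 + sum(((v - c) / (hi - lo)) ** 2
                     for v, c, (lo, hi) in zip(x, CENTER, BOUNDS))


def differential_evolution(objective, bounds, pop_size=30, max_iter=1000,
                           f_range=(0.5, 1.0), cr=0.7, tol=0.01, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    # (1) Initialization: uniform random individuals inside the bounds.
    pop = [[lo + rng.random() * (hi - lo) for lo, hi in bounds]
           for _ in range(pop_size)]
    cost = [objective(x) for x in pop]
    for _ in range(max_iter):
        f = rng.uniform(*f_range)  # scaling factor redrawn every generation
        new_pop, new_cost = [], []
        for i in range(pop_size):
            # (2) Mutation, DE/rand/1: three distinct individuals other than i.
            r1, r2, r3 = rng.sample([k for k in range(pop_size) if k != i], 3)
            mutant = [pop[r1][j] + f * (pop[r2][j] - pop[r3][j])
                      for j in range(dim)]
            mutant = [min(max(v, lo), hi) for v, (lo, hi) in zip(mutant, bounds)]
            # (3) Binomial crossover with one guaranteed mutant component.
            j_rand = rng.randrange(dim)
            trial = [mutant[j] if (rng.random() <= cr or j == j_rand)
                     else pop[i][j] for j in range(dim)]
            # (4) Greedy selection between trial and current individual.
            trial_cost = objective(trial)
            if trial_cost <= cost[i]:
                new_pop.append(trial)
                new_cost.append(trial_cost)
            else:
                new_pop.append(pop[i])
                new_cost.append(cost[i])
        pop, cost = new_pop, new_cost
        # (5) Termination: population costs have (almost) collapsed.
        if statistics.pstdev(cost) <= tol * abs(statistics.fmean(cost)):
            break
    best = min(range(pop_size), key=cost.__getitem__)
    return pop[best], cost[best]


best_x, best_cost = differential_evolution(toy_ppste, BOUNDS)
print("best machining errors (mm):", [round(v, 4) for v in best_x])
print(f"predicted PPSTE: {best_cost:.4f}")
```

In the actual workflow, `toy_ppste` would be replaced by a call to the trained Stacking model's prediction for a given triple of machining errors.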

Figure 9. Overall flow of the differential evolution algorithm.

To demonstrate the accuracy and robustness of the optimization results, a simple local sensitivity analysis was conducted on the optimal machining error combination obtained through the differential evolution algorithm. The error values were slightly perturbed near the optimal solution and the resulting change in PPSTE was observed. The results indicate that PPSTE is relatively insensitive to error changes at the optimal solution, which supports the robustness of the solution. Additionally, four sets of machining errors were randomly generated, and the meshing processes for these four sets were modeled and simulated. Their PPSTE values were calculated and compared with that of the optimized machining errors. The machining errors and their corresponding PPSTE values are presented in Table 6.

Optimized machining errors.

It can be seen that the optimized gear pair has the smallest transmission error fluctuation, with a PPSTE of only 1.2 arcmin. Compared with the gear pairs with the other four sets of machining errors, the PPSTE is reduced by 52.48% on average, which significantly improves the vibration and noise reduction performance of the gear pair. This supports the reliability and accuracy of the method proposed in this paper.
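One plausible way to read the average reduction is as the mean of the relative reductions against each comparison set. This definition is an assumption, since the text does not state whether the average is taken over individual ratios or over the mean PPSTE. Here $\mathrm{PPSTE}_k$ denotes the value for the k-th randomly generated set, $\mathrm{PPSTE}_{\mathrm{opt}}$ the optimized value, and $N = 4$:

$$
\overline{\Delta} = \frac{1}{N} \sum_{k=1}^{N} \frac{\mathrm{PPSTE}_k - \mathrm{PPSTE}_{\mathrm{opt}}}{\mathrm{PPSTE}_k} \times 100\%
$$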

Conclusions and prospects

(1) This study proposes a high-precision, fast prediction method for the transmission error of RV reducers based on Stacking ensemble learning. The ensemble model uses Support Vector Regression (SVR), XGBoost, Random Forest (RF), and K-Nearest Neighbors (KNN) as base learners, with XGBoost as the meta-learner. Experimental results show that, compared with the best single model, the coefficient of determination R² of the ensemble model increases by 14.3% and the root mean square error (RMSE) decreases by 27.4%, effectively addressing the insufficient generalization ability of a single surrogate model.

(2) The differential evolution algorithm is adopted to optimize the machining errors of RV reducers. The peak-to-peak value of the transmission error of the optimized RV reducer is reduced by an average of 52.48%, which significantly improves vibration and noise reduction. The core value of the method lies in providing engineers with an efficient “digital testbed” for quickly evaluating the final transmission accuracy under different combinations of tolerance levels, so that the most cost-effective accuracy allocation decisions can be made during the design phase. The single-objective optimization presented in this paper has its limitations: its primary purpose is to demonstrate the effectiveness of the proposed framework in minimizing the core indicator of transmission error, laying the foundation for subsequent multi-objective optimization.

(3) By combining finite element simulation and machine learning, a method for predicting the transmission errors of spatially driven components has been developed, encompassing geometric modeling, mesh generation, dynamic simulation, and data-driven analysis. This study focuses on geometric errors as the core factor and does not yet consider complex factors such as clearance, dynamic load changes, friction, and thermal effects, because introducing them would greatly increase model complexity and computational cost. Accounting for the coupled effects of multiple physical fields is an important direction for future research.

Footnotes

Handling Editor: Cuneyt Fetvaci

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.