Abstract

Tool wear is a critical factor that directly impacts product performance, making accurate and timely detection essential for ensuring machining quality. In particular, at shallow cutting depths, tool tip wear significantly exceeds edge wear, yet the detection of tool tip wear has received little attention. This paper therefore proposes an image segmentation algorithm for detecting milling cutter tip wear, enabling precise measurement of tool tip wear. First, the Valley-emphasis (VE) method is used for initial segmentation of the bottom-edge images to detect and separate the bottom edges. Subsequently, the detected edges serve as masks for parallel computation, achieving precise edge segmentation. Finally, the XOR of the finely segmented edges and the mask is used to determine the wear region. Compared to existing detection algorithms, this method enhances edge detection accuracy without increasing detection time. Compared with manual measurement, the average error is 0.007 mm and the maximum error is 0.036 mm; the average accuracy is 97.92% and the minimum accuracy is 90.94%. Additionally, the algorithm’s runtime has been reduced to 15.53 s, a decrease of approximately 94.68%. These results substantiate the efficacy of the proposed approach.

Introduction

With the continuous development of China’s aerospace industry, there is an increasing demand for materials that are difficult to machine, complex structural components, and thin-walled parts, which have higher performance requirements. These components are typically processed using milling operations. In particular, thin-walled parts made of aircraft aluminum alloys are machined using high-speed, low-feed, and low-depth-of-cut techniques, enabling the complete processing of all relevant dimensions in a single clamping operation and requiring more precise dimensional and positional tolerances. During the milling process, friction between the milling cutter and the workpiece generates a significant amount of heat, resulting in the conversion of mechanical energy into plastic deformation. As the duration of friction increases, the tool experiences prolonged pressure, leading to tool wear. The performance of the milling cutter deteriorates with increasing tool wear and significantly affects surface integrity, machining efficiency, and surface properties during the machining process, often leading to process failure. According to surveys, the costs associated with tooling and tool changes account for 3%–12% of the total machining cost, and tool inspection contributes to downtime, accounting for 7%–20% of the total machining time. 1 Abnormal tool conditions cause 10%–40% of machine downtime, 2 with less than 38% of industrial tools reaching their expected service life. 3 Therefore, online detection of milling tool wear, also known as tool condition monitoring (TCM), is currently one of the research hotspots in academia and industry.

Currently, most methods for detecting milling tool wear in industrial production rely on empirical observation and offline measurements. Manual inspection is not only costly but also subjective, lacking the ability to quantify the degree of wear. In offline measurements, the tool and tool holder need to be repeatedly clamped and positioned, significantly reducing efficiency and introducing additional assembly errors and changes to the wear mechanism, which outweigh the benefits. Both methods require machine downtime for inspection, further decreasing machining efficiency and increasing enterprise costs.4,5

In recent years, many solutions for wear detection have been proposed, which can be broadly divided into indirect and direct methods. The indirect approach uses suitable sensors to collect signals such as cutting forces, 6 acceleration and vibrations,7,8 and temperature, 9 and establishes a relationship between these single or combined signals and the condition of tool wear.10,11 Li and Zhu 12 proposed an empirical statistical model that estimates the in situ area of tool wear, incorporating both process parameters and force characteristics. Zhou et al. 13 introduced a TCM method that combines the optimal feature parameters of acoustic sensor signals with a dual-layer kernel extreme learning machine. Twardowski et al. 14 established a link between the vibration acceleration signal, serving as the model input data, and the wear value of the end-milling tool VBc. He et al. 9 proposed a stacked sparse autoencoder model to predict tool wear, utilizing raw temperature signals and backpropagation neural network regression. Stavropoulos et al. 15 simultaneously monitored acceleration and spindle drive current sensor signals, accomplishing tool wear prediction through a cubic regression model and a pattern recognition system. Lei et al. 16 proposed physically based models, statistically based models, data-driven models, and hybrid models. The indirect approach may be beneficial in practical applications; however, signal analysis cannot provide direct and accurate measurements of tool wear values, because the signals are influenced by industrial environmental factors.17,18

The direct approach is commonly referred to as a machine vision system, primarily implemented through charge-coupled device (CCD) cameras or optical microscopes. Direct tool condition monitoring focuses on the surface wear profile of the tool, the integrity of the workpiece surface, the morphology of the chips, and finer classification based on these outcomes. Fernández-Robles et al. 19 used various image processing techniques to assess the area of flank wear using micro-milling tool images. Li et al. 20 established a probabilistic function based on the correlation principle between the grayscale variations along the cutting edge and the relative positions of the original and worn boundaries, reconstructing the curved original tool boundary through Bayesian inference. Lim et al. 21 utilized a deep learning regression model to predict tool wear states from features extracted from 2D images of the workpiece surface profile. Pagani et al. 22 extracted different indicators from the RGB and HSV image channels to guide a neural network in chip classification, indirectly assessing tool wear. Zhang et al. 23 proposed a theoretical approach to monitor ultra-precision rake face milling tool wear by observing changes in chip morphology. García-Ordás et al. 24 classified the wear of form milling tools using machine learning and computer vision techniques. The direct method has the advantage of non-contact and accurate values, but has three major problems: difficult image acquisition, inability to detect online, and complex algorithms.

In this paper, the real-time online detection of milling cutter bottom tip wear area is achieved through machine vision algorithms. The milling cutter bottom images are collected in real time by designing CNC programs and controlling tool installation. Environmental light interference is reduced by adjusting the combination of light sources. An improved image processing algorithm is employed to detect the wear area of the tool tip, ensuring measurement accuracy while reducing computational time and addressing the issue of limited tool sample quantity. This provides a novel approach to online detection using machine vision.

Related works

Image segmentation is a fundamental task in many image processing and computer vision applications.25–27 Its objective is to transform an image into a collection of pixel regions with specified labels, enabling the localization, differentiation, and measurement of labeled objects. In practical applications of tool wear detection, accurate segmentation becomes challenging due to issues such as complex tool structures, uneven illumination within the machine tool, and excessive edge fluctuations during the acquisition process, leading to significant measurement errors and unreliable results.

Currently, image segmentation algorithms can be classified into two main categories: deep learning algorithms and foundational algorithms. Deep learning has proven to be a powerful tool in signal detection and image processing, allowing for pixel classification based on training data. However, deep learning typically involves millions of trainable parameters, and successful training heavily relies on large, accurately labeled datasets. Additionally, deep learning lacks a solid mathematical foundation and often suffers from unexpected side effects, such as adversarial attacks. 28 In contrast, foundational algorithms, such as various thresholding methods and variational energy functional methods, exhibit strong stability and adaptability. Thresholding methods generally segment images based on pixel grayscale values, including Otsu, K-means, and KSW entropy algorithms. These methods are simple, computationally efficient, and can be flexibly customized to meet different requirements. Variational energy functional methods and their numerical solution strategies are easy to control and implement, suitable for various tasks. 29 Among them, active contour models (ACMs) have been proposed by numerous researchers, and effective numerical computation formulas have been provided.

The Otsu method and its improvements

The Otsu method is a commonly used generic image thresholding method. By selecting the threshold with the largest inter-class variance or the smallest intra-class variance from the image histogram, the Otsu method can obtain satisfactory segmentation results when the target and the image background have similar variances. However, the method fails if the target and the image background differ significantly in size. In other words, the Otsu method works well for bimodal histograms but fails for images with a single-peaked distribution or near-single-peaked histogram.

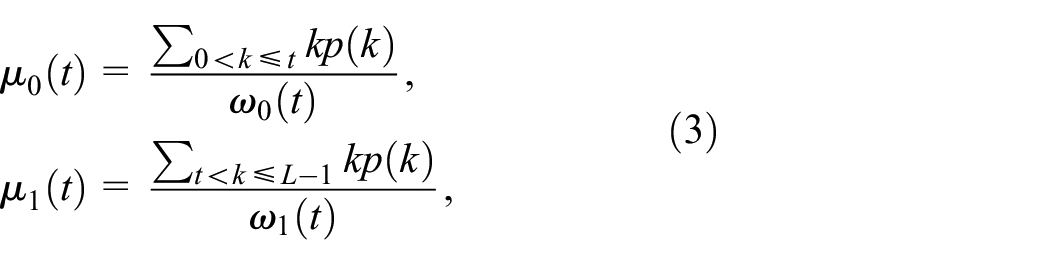

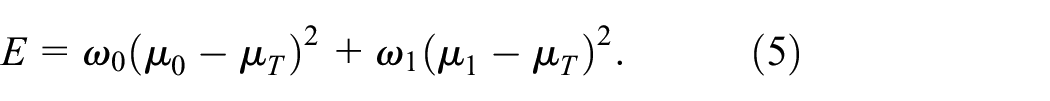

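The image can be described as follows. Let a grayscale image contain $N$ pixels with gray levels $\{0, 1, \ldots, L-1\}$, and let $n_i$ be the number of pixels at gray level $i$. The probability of gray level $i$ is

$$p_i = \frac{n_i}{N}, \qquad \sum_{i=0}^{L-1} p_i = 1.$$

If the image is divided into two classes, $C_0 = \{0, \ldots, t\}$ and $C_1 = \{t+1, \ldots, L-1\}$, by a threshold $t$, the class probabilities are

$$\omega_0(t) = \sum_{i=0}^{t} p_i, \qquad \omega_1(t) = \sum_{i=t+1}^{L-1} p_i.$$

Let

$$\mu_0(t) = \frac{1}{\omega_0(t)} \sum_{i=0}^{t} i\,p_i$$

and

$$\mu_1(t) = \frac{1}{\omega_1(t)} \sum_{i=t+1}^{L-1} i\,p_i$$

be the mean gray levels of the two classes. Then for a threshold $t$, the between-class variance is

$$\sigma_B^2(t) = \omega_0(t)\,\mu_0^2(t) + \omega_1(t)\,\mu_1^2(t) - \mu_T^2,$$

where $\mu_T = \sum_{i=0}^{L-1} i\,p_i$ is the mean gray level of the whole image and does not depend on $t$.

The optimal threshold $t^*$ maximizes the between-class variance:

$$t^* = \arg\max_{0 \le t < L-1} \left\{ \omega_0(t)\,\mu_0^2(t) + \omega_1(t)\,\mu_1^2(t) \right\}. \tag{5}$$

The image $f(x, y)$ is then binarized according to the optimal threshold $t^*$:

$$g(x, y) = \begin{cases} 1, & f(x, y) > t^*, \\ 0, & \text{otherwise}. \end{cases}$$

The idea of the Valley-emphasis (VE) method is to attach a weight to Otsu’s objective function and express equation (5) as follows:

$$t^* = \arg\max_{0 \le t < L-1} \left\{ (1 - p_t) \left( \omega_0(t)\,\mu_0^2(t) + \omega_1(t)\,\mu_1^2(t) \right) \right\},$$

where $p_t$ is the probability of gray level $t$. The smaller $p_t$ is, the larger the weight $1 - p_t$, so the selected threshold is drawn toward the valley of the histogram.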
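As an illustration of how the two criteria differ, the following is a minimal sketch that selects a threshold from a gray-level histogram with both the Otsu objective and the VE-weighted objective. It assumes the standard formulations, with the Otsu objective written as $\omega_0\mu_0^2 + \omega_1\mu_1^2$ and the VE weight as $1 - p_t$; the function names `class_stats`, `otsu_threshold`, and `valley_emphasis_threshold` are hypothetical and are not taken from the authors' implementation.

```python
def class_stats(hist, t):
    """Return (w0, w1, mu0, mu1) for classes [0..t] and [t+1..L-1], or None."""
    total = sum(hist)
    n0 = sum(hist[: t + 1])
    n1 = total - n0
    if n0 == 0 or n1 == 0:
        return None  # one class is empty, so this threshold is not usable
    s0 = sum(i * h for i, h in enumerate(hist[: t + 1]))
    s1 = sum(i * h for i, h in enumerate(hist)) - s0
    return n0 / total, n1 / total, s0 / n0, s1 / n1


def otsu_threshold(hist):
    """Maximise w0*mu0^2 + w1*mu1^2 (equivalent to the between-class variance)."""
    best_t, best_score = 0, -1.0
    for t in range(len(hist) - 1):
        stats = class_stats(hist, t)
        if stats is None:
            continue
        w0, w1, mu0, mu1 = stats
        score = w0 * mu0**2 + w1 * mu1**2
        if score > best_score:
            best_t, best_score = t, score
    return best_t


def valley_emphasis_threshold(hist):
    """Maximise (1 - p_t) * (w0*mu0^2 + w1*mu1^2), favouring histogram valleys."""
    total = sum(hist)
    best_t, best_score = 0, -1.0
    for t in range(len(hist) - 1):
        stats = class_stats(hist, t)
        if stats is None:
            continue
        w0, w1, mu0, mu1 = stats
        score = (1 - hist[t] / total) * (w0 * mu0**2 + w1 * mu1**2)
        if score > best_score:
            best_t, best_score = t, score
    return best_t


# Toy 8-level histogram with two peaks (levels 1 and 6) and an empty valley.
hist = [0, 10, 0, 0, 0, 0, 10, 0]
t_otsu = otsu_threshold(hist)
t_ve = valley_emphasis_threshold(hist)
```

On this toy histogram the Otsu score is identical for every threshold between the two peaks, whereas the VE weight penalizes a threshold that sits on a peak and pushes the choice into the valley.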

Active contour models (ACMs)

Based on the characteristics of active contours, ACMs can be categorized into edge-based models31,32 and region-based models.33,34 Edge-based models primarily rely on edge gradient information and are suitable for segmenting images with high contrast and well-defined edges, but they often fail on noisy images. Region-based models are less sensitive to initialization and perform better with noise and weak edges by using information such as the region mean, median, variance, and texture distributions.

In region-based models, the Chan-Vese (CV) model 34 simplifies the Mumford-Shah (MS) model by assuming each segmented subregion is homogeneous, allowing for the segmentation of piecewise constant images. The model employs the level set method (LSM) 35 to represent regions and boundaries, exhibiting favorable mathematical properties. Building upon this, Jung 36 proposed a piecewise smooth model to address intensity inhomogeneity and employed an $L^1$ data-fidelity term.

MS model

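Let $\Omega \subset \mathbb{R}^2$ be the image domain and $f: \Omega \to \mathbb{R}$ the observed image. The MS model seeks a piecewise-smooth approximation $u$ of $f$ and an edge set $C$ by minimizing

$$E_{MS}(u, C) = \int_{\Omega} (u - f)^2 \, dx + \mu \int_{\Omega \setminus C} |\nabla u|^2 \, dx + \nu\,|C|,$$

where $\mu > 0$ and $\nu > 0$ are weighting parameters and $|C|$ denotes the length of the edge set $C$.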

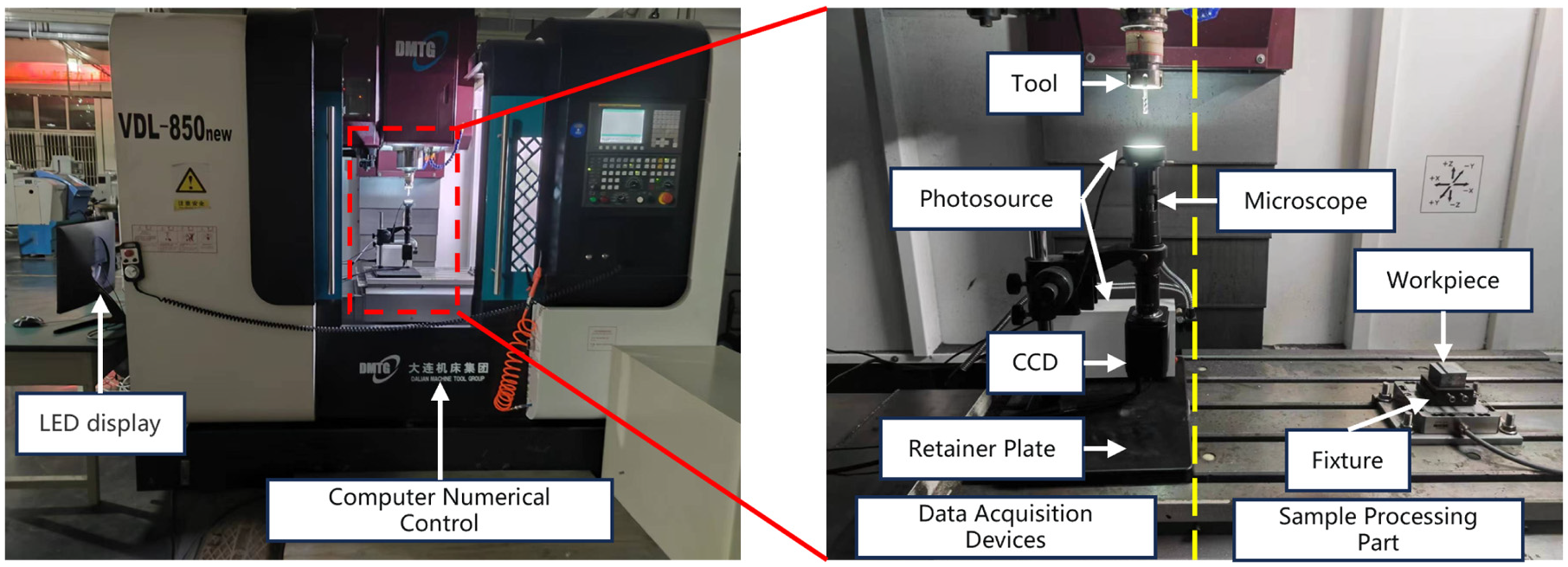

L1PS model

By assuming that the true image is the sum of a piecewise-constant function and a smooth function, a piecewise-smooth image segmentation model with

where

In equation (11), the

Methodology

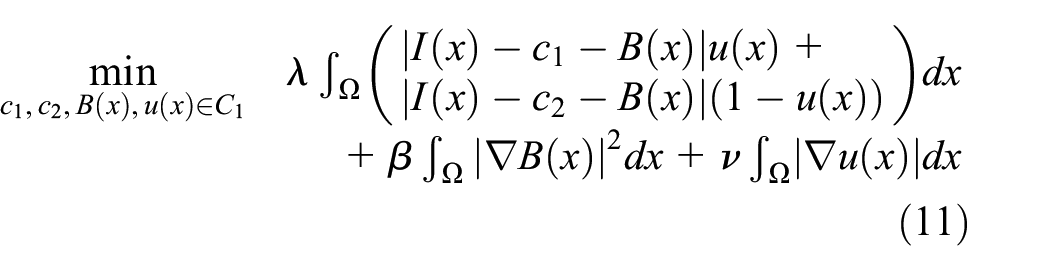

The tool wear online detection system is composed of an image acquisition module and an image processing module, as shown in Figure 1. The image acquisition module divides the workbench into two parts: the detection area and the processing area, and utilizes the detection area to set up the image acquisition equipment for image capture. The image processing module is based on a self-matching algorithm. The image processing is performed in two steps: segmenting the multi-edge image into single-edge images and segmenting the worn region of each individually segmented edge. The flowchart of the image processing module is shown in Figure 2.

Figure 1. Experimental setup used for obtaining tool wear images.

Figure 2. Overall flowchart of image processing module.

Image acquisition

This paper presents an online detection approach that divides the image acquisition module into an acquisition system and a control system. The acquisition system consists of a camera, a microscope, an adjustable LED ring light, and an adjustable linear cold light source, enabling the capture of cutting edge images in a single pass. The intensity of the light source is pre-set based on the ambient light and the pre-experimental image capture results, as illustrated in Figure 3. The control system utilizes numerical control machine tools and programming codes to implement spindle speed reset and tool position movement. The programming code follows the rule of setting a fixed processing time in the code, during which the tool disengages from the work surface, moves to a fixed coordinate above the camera, the spindle stops rotating (n = 0), the feed speed becomes 0 (F = 0), and a pause of 5 s is specified. After the image capture is completed, the spindle starts rotating again, and the tool returns to its original position to continue executing the remaining program.

Figure 3. Results of online image acquisition: (a) Roberts and (b) acquisition effect with fixed extension length.

Image processing

L1PS can flexibly handle irregular boundaries and shapes in images, providing excellent segmentation performance for blurry edges, particularly suitable for edge wear regions with highly blurred boundaries and smooth transitions in base edge images. However, L1PS has some limitations. The method is not suitable for multi-object segmentation and is sensitive to the initial region. Additionally, it has high computational complexity, requiring excessive computation time when applied to the entire base edge image. Therefore, this paper proposes an improved method, where the image is first segmented using the VE algorithm. The segmentation results not only transform the image into single-object tasks but also provide initial masks for L1PS. The detailed steps are as follows:

Initial image segmentation

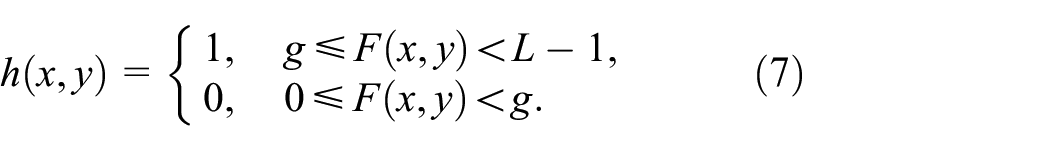

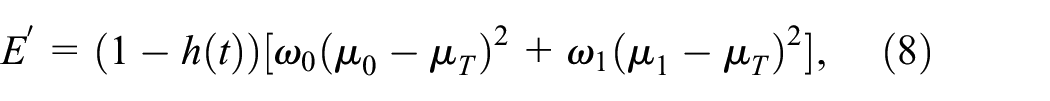

This study adopts the Valley-Emphasis (VE) algorithm for initial segmentation, where the threshold

To ensure consistency of symbols throughout the text, the segmentation results of (7) have been renamed as shown in equation (13).

Milling cutter number identification

To accurately identify the number of cutting edges on a worn milling cutter, an improved method is proposed that combines morphological operations and Hough Transform. This method involves applying threshold segmentation, followed by morphological processing, Hough Transform line detection, and finally analyzing the angle distribution to determine the number of cutting edges.

Morphological operations

Morphological opening and closing operations are performed on the binary image using the same structuring element to remove noise and enhance the features of the cutting edges.
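To make the effect of these operations concrete, here is a minimal pure-Python sketch of binary opening and closing with a square structuring element. The structuring element size, the treatment of pixels outside the image as background, and the function names are assumptions for illustration; the paper does not specify them.

```python
def erode(img, k=1):
    """Binary erosion with a (2k+1) x (2k+1) square; outside pixels count as 0."""
    h, w = len(img), len(img[0])
    return [[int(all(0 <= y + dy < h and 0 <= x + dx < w and img[y + dy][x + dx]
                     for dy in range(-k, k + 1) for dx in range(-k, k + 1)))
             for x in range(w)] for y in range(h)]


def dilate(img, k=1):
    """Binary dilation with a (2k+1) x (2k+1) square; outside pixels count as 0."""
    h, w = len(img), len(img[0])
    return [[int(any(0 <= y + dy < h and 0 <= x + dx < w and img[y + dy][x + dx]
                     for dy in range(-k, k + 1) for dx in range(-k, k + 1)))
             for x in range(w)] for y in range(h)]


def opening(img, k=1):
    """Erosion followed by dilation: removes objects smaller than the element."""
    return dilate(erode(img, k), k)


def closing(img, k=1):
    """Dilation followed by erosion: fills holes smaller than the element."""
    return erode(dilate(img, k), k)


# A 3x3 block plus one isolated noise pixel in the corner.
noisy = [[0] * 7 for _ in range(7)]
for y in range(2, 5):
    for x in range(2, 5):
        noisy[y][x] = 1
noisy[0][0] = 1
opened = opening(noisy)

# A 5x5 block with a one-pixel hole in the middle.
holed = [[0] * 7 for _ in range(7)]
for y in range(1, 6):
    for x in range(1, 6):
        holed[y][x] = 1
holed[3][3] = 0
closed = closing(holed)
```

Opening removes the isolated pixel while preserving the block, and closing fills the hole, which is the behaviour relied on when cleaning the thresholded cutter image.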

Hough Transform for line detection

The Hough Transform is used to detect lines in the edge-detected image. This technique works by transforming points in the Cartesian coordinate system into the parameter space, where lines are represented by their distance and angle from the origin, facilitating the identification and analysis of straight line features.
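The voting scheme can be sketched as follows, using the normal form $\rho = x\cos\theta + y\sin\theta$. This is a simplified illustration, not the authors' implementation: the one-degree angular resolution, integer rounding of $\rho$, the `min_votes` parameter, and the function name `hough_lines` are all assumptions.

```python
import math


def hough_lines(points, n_theta=180, min_votes=3):
    """Vote in (rho, theta) space and return cells with at least min_votes votes.

    points: iterable of (x, y) edge-pixel coordinates.
    Returns a list of (rho, theta_degrees, votes), strongest first.
    """
    thetas = [math.pi * i / n_theta for i in range(n_theta)]
    votes = {}
    for x, y in points:
        for i, th in enumerate(thetas):
            # Each edge pixel votes for every line that could pass through it.
            rho = int(round(x * math.cos(th) + y * math.sin(th)))
            votes[(rho, i)] = votes.get((rho, i), 0) + 1
    lines = [(rho, math.degrees(thetas[i]), v)
             for (rho, i), v in votes.items() if v >= min_votes]
    lines.sort(key=lambda line: -line[2])
    return lines


# Ten collinear edge pixels on the horizontal line y = 5.
edge_points = [(x, 5) for x in range(10)]
lines = hough_lines(edge_points)
```

For the horizontal line $y = 5$, the accumulator cell at $\rho = 5$, $\theta = 90^\circ$ collects one vote from every point. Neighbouring angles collect similar counts because of the coarse rounding, which is one reason a subsequent filtering and merging step is needed.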

Filtering and merging lines

To filter out short lines and merge closely spaced lines with similar angles, the following criteria are used:

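First, the length $l_i$ of each detected line segment with endpoints $(x_{i,1}, y_{i,1})$ and $(x_{i,2}, y_{i,2})$ is calculated as $l_i = \sqrt{(x_{i,2} - x_{i,1})^2 + (y_{i,2} - y_{i,1})^2}$.

Second, only lines longer than a specified minimum length $l_{\min}$ are retained.

Third, lines that are close to each other and have similar angles within a threshold $\Delta\theta$ are merged into a single line.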
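The three criteria above can be sketched as follows. This is one possible reading under stated assumptions: closeness is measured between segment midpoints, merging keeps the longest segment of each group as its representative, and the parameter names `min_length`, `angle_tol`, and `dist_tol` are hypothetical. The paper does not give the actual threshold values or the merging rule.

```python
import math


def segment_length(seg):
    x1, y1, x2, y2 = seg
    return math.hypot(x2 - x1, y2 - y1)


def segment_angle(seg):
    """Orientation in degrees, folded into [0, 180)."""
    x1, y1, x2, y2 = seg
    return math.degrees(math.atan2(y2 - y1, x2 - x1)) % 180.0


def midpoint_distance(a, b):
    ax, ay = (a[0] + a[2]) / 2, (a[1] + a[3]) / 2
    bx, by = (b[0] + b[2]) / 2, (b[1] + b[3]) / 2
    return math.hypot(ax - bx, ay - by)


def filter_and_merge(segments, min_length, angle_tol, dist_tol):
    """Drop short segments, then merge near-duplicates into one representative."""
    kept = [s for s in segments if segment_length(s) > min_length]
    merged = []
    # Visit long segments first so that each group is represented by its longest member.
    for seg in sorted(kept, key=segment_length, reverse=True):
        for rep in merged:
            d_angle = abs(segment_angle(seg) - segment_angle(rep))
            d_angle = min(d_angle, 180.0 - d_angle)  # orientations wrap at 180 degrees
            if d_angle <= angle_tol and midpoint_distance(seg, rep) <= dist_tol:
                break  # absorbed by an existing representative
        else:
            merged.append(seg)
    return merged


segments = [(0, 0, 10, 0), (0, 1, 9, 1), (0, 0, 1, 1), (0, 0, 0, 10)]
result = filter_and_merge(segments, min_length=5, angle_tol=5, dist_tol=3)
```

In this toy input the short diagonal segment is discarded, the two nearly coincident horizontal segments collapse into one, and the vertical segment is kept as a separate line.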

Analyzing angle distribution

The angle distribution of the lines is analyzed, and the number of significant peaks in the histogram is identified, corresponding to the number of cutting edges on the milling cutter (N).
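A minimal sketch of the peak-counting step is given below. It assumes that each merged line has been assigned a direction in degrees over a full turn (for example, measured outward from the cutter center), that the histogram is circular, and that a peak is a bin that meets a minimum count and exceeds its neighbours. The bin width, `min_count`, and the function name are hypothetical choices, not values from the paper.

```python
def count_angle_peaks(angles_deg, bin_width=10, period=360, min_count=2):
    """Count local maxima in a circular histogram of line angles."""
    n_bins = int(period // bin_width)
    hist = [0] * n_bins
    for a in angles_deg:
        hist[int((a % period) // bin_width) % n_bins] += 1
    peaks = 0
    for i, count in enumerate(hist):
        left = hist[i - 1]              # index -1 wraps to the last bin
        right = hist[(i + 1) % n_bins]  # wrap around at the end
        # Strict on the left, non-strict on the right: a flat plateau counts once.
        if count >= min_count and count > left and count >= right:
            peaks += 1
    return peaks


# Line directions clustered around 0, 90, 180, and 270 degrees.
angles = [1, 3, 2, 91, 89.5, 92, 181, 179, 183, 271, 268, 272]
n_edges = count_angle_peaks(angles)
```

Here four clusters of directions yield four peaks, corresponding to a four-edge cutter.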

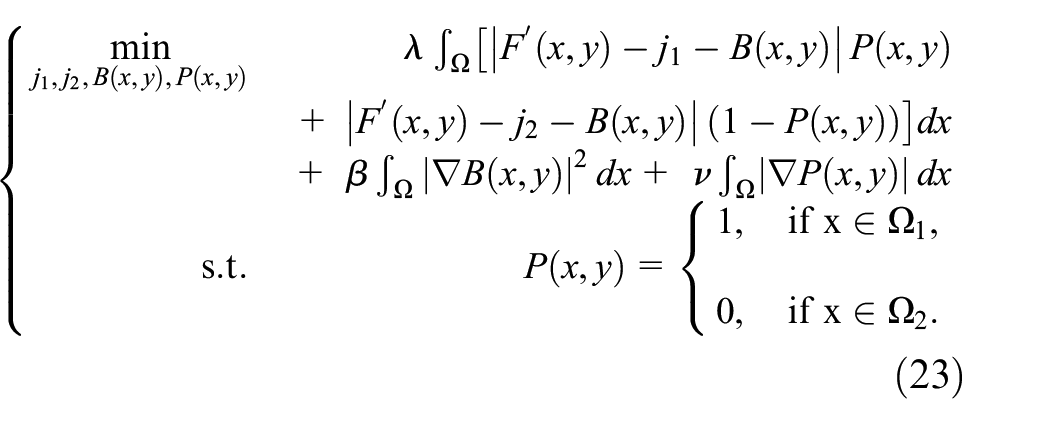

Single-edge image extraction

After identifying the number of cutting edges on the milling cutter, the complete image of the cutter is segmented into parts, each containing a single cutting edge. The segmentation results are shown in Figure 4.

Figure 4. The result of single-objective image extraction: (a) grayscale image, (b) segmented cutting edge result, and (c–f) sub-images of the cutting edges corresponding to the numbers in the image.

Calculate segment boundaries

For a cutter with

Create masks for each segment

For each segment, a binary mask is created. The mask covers the angular range from

where

Apply masks to the image

The image is segmented by applying each mask to the original image

where

The edge image of a single cutting edge is given by:

where
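The mask construction and application can be illustrated with the sketch below. It assumes that the segments are equal angular sectors of width $360^\circ/N$ around a known cutter center and that the first boundary lies at a hypothetical offset `start_deg`; how the paper actually places the segment boundaries is not recoverable from the text, so this is only one plausible instance.

```python
import math


def sector_masks(height, width, center, n_edges, start_deg=0.0):
    """Build one binary mask per angular sector around center = (cy, cx)."""
    cy, cx = center
    step = 360.0 / n_edges
    masks = [[[0] * width for _ in range(height)] for _ in range(n_edges)]
    for y in range(height):
        for x in range(width):
            # Polar angle of the pixel about the center, shifted by the start offset.
            angle = (math.degrees(math.atan2(y - cy, x - cx)) - start_deg) % 360.0
            k = min(int(angle // step), n_edges - 1)
            masks[k][y][x] = 1
    return masks


def apply_mask(image, mask):
    """Element-wise product of an image and a binary mask."""
    return [[p * m for p, m in zip(img_row, mask_row)]
            for img_row, mask_row in zip(image, mask)]


masks = sector_masks(5, 5, center=(2, 2), n_edges=4)
image = [[7] * 5 for _ in range(5)]
first_edge = apply_mask(image, masks[0])
```

Each pixel falls into exactly one sector, so the masked sub-images partition the original image and each contains a single cutting edge when the sector boundaries lie between the edges.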

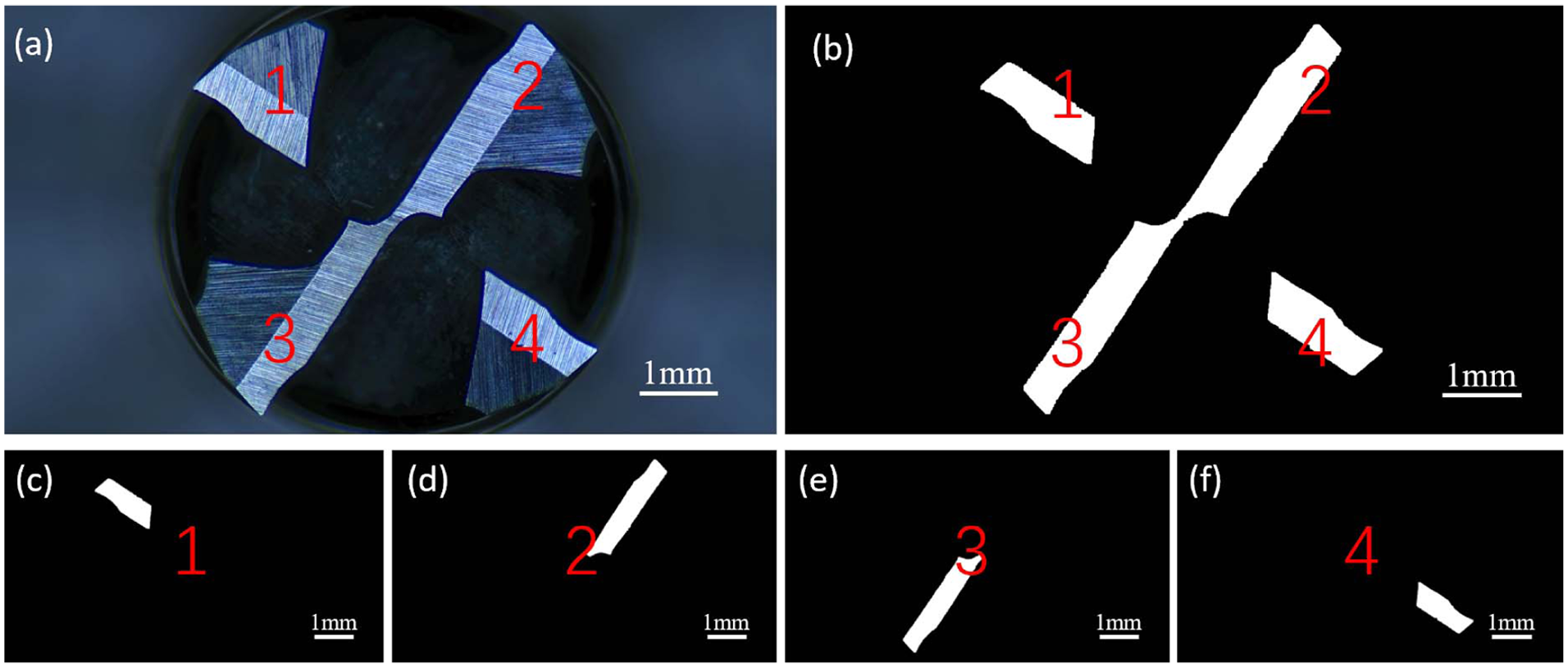

Detailed image segmentation

Using edge images as masks

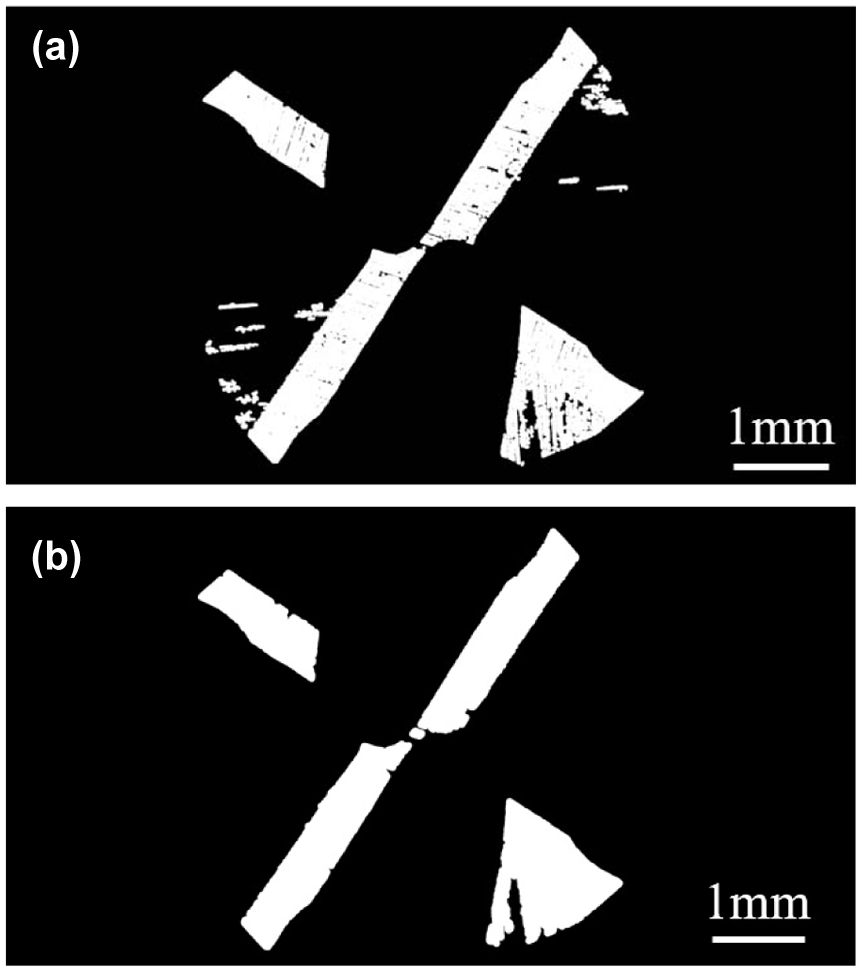

As shown in Figure 5, global thresholding methods may fail to provide accurate segmentation in complex structures, especially when the tool is worn. The L1PS algorithm, which accounts for intensity inhomogeneity, provides superior edge segmentation results. The edge image

Figure 5. The segmentation results of the proposed algorithm: (a) original image, (b) the single-objective mask, (c) VE segmentation result, and (d) final segmentation results.

L1PS algorithm rewrite

The L1PS algorithm uses the L1 norm (Manhattan norm) to effectively handle outliers and noise in image decomposition, addressing intensity inhomogeneity and demonstrating robustness to noise and outliers. It ensures smooth transitions between worn and unworn cutting edges.

Given the edge image

Let

where

The L1PS model minimizes the following objective function to obtain the wear edge image

where

Optimization process

To solve the above model, one main difficulty of the numerical implementation is the binary constraint. To this end, we define a convex set

The minimization problem is not convex with respect to

Calculating the wear area

The number of pixels within the wear region of the milling cutter tip is determined by comparing the wear edge image

where

In the initial step, camera calibration was performed to determine the actual size represented by each pixel, enabling the conversion of pixel count to actual physical dimensions. This calibration allows for precise measurement of wear based on the area. The discrepancies between the
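The pixel-counting and unit-conversion steps can be sketched as follows, assuming the wear region is the pixel-wise XOR of the initial mask and the finely segmented wear edge image, and that calibration yields a single scale factor in millimetres per pixel. The function name `wear_area` and the calibration value in the example are hypothetical.

```python
def wear_area(mask, wear_edge, mm_per_pixel):
    """Return (pixel count, area in mm^2) of the XOR between two binary images."""
    n_pixels = sum(m ^ e
                   for mask_row, edge_row in zip(mask, wear_edge)
                   for m, e in zip(mask_row, edge_row))
    # Each pixel covers mm_per_pixel x mm_per_pixel of the tool surface.
    return n_pixels, n_pixels * mm_per_pixel ** 2


mask = [[1, 1, 1],
        [1, 1, 1],
        [0, 0, 0]]
wear_edge = [[1, 1, 0],
             [1, 0, 0],
             [0, 0, 0]]
n_pixels, area_mm2 = wear_area(mask, wear_edge, mm_per_pixel=0.01)
```

With three differing pixels and an assumed scale of 0.01 mm per pixel, the wear area is 3 × 10⁻⁴ mm².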

Experimentation and discussion

Test equipment

In this study, a three-axis machining center was employed for tool wear testing, achieving a milling accuracy of 1 µm. The milling path followed a parallel reciprocating motion along the

Figure 6. Tool path.

The quality of the collected images is a key factor in ensuring the effectiveness of the algorithm. Therefore, during the equipment setup process, special attention was given to controlling the lighting conditions and the machining environment.

Lighting conditions: Although the machine operates in a closed environment, external light interference cannot be completely eliminated. To mitigate this, we combined a ring light source with a linear light source. The ring light helps to supplement and reduce ambient light disturbances, while the linear light source enhances the contrast between the tool’s cutting edge and the background.

Processing environment: During normal machining, the tool is influenced by cutting fluids and chips, which can significantly affect image quality. In our study, we first employed a dry environment to avoid the effects of cutting fluids, and second, we continuously blew away debris to keep the tool surface clean.

In this research, the hardware environment is Intel® Core™ i5-6600K @ 3.50GHz × 4 CPU, 8GB of memory, and the development environment is MATLAB 2018b. The image acquisition equipment is two strip light sources and an industrial camera with a resolution of 1200 × 1600.

To ensure data reliability and consistent working conditions, each milling cutter machines a total of three workpiece layers, after which it is deemed scrapped, and both the cutter and the workpiece are replaced with new ones. The specific machining parameters are detailed in Table 1.

Table 1. Detailed machining parameters.

Algorithm analysis

Segmentation evaluation method

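To quantitatively evaluate the segmentation results of the various segmentation models, this study employs several metrics to assess the accuracy of image segmentation: Jaccard similarity (JS), Misclassification Error (ME), Pixel Accuracy (PA), F1-Score (F1), and the Kappa coefficient ($\kappa$).

Jaccard similarity (JS) measures the similarity between two sets by comparing the number of elements they share with the total number of elements in either set. The formula is defined as follows:

$$JS(A, B) = \frac{|A \cap B|}{|A \cup B|},$$

where $A$ is the set of foreground pixels in the segmentation result and $B$ is the set of foreground pixels in the ground truth.

Misclassification Error (ME) measures the percentage of background pixels misclassified as foreground and foreground pixels misclassified as background. For a binary segmentation problem, the ME can be simply expressed as:

$$ME = 1 - \frac{|B_O \cap B_T| + |F_O \cap F_T|}{|B_O| + |F_O|},$$

where $B_O$ and $F_O$ are the background and foreground of the ground truth, and $B_T$ and $F_T$ are the background and foreground of the segmentation result.

Another measure is to simply calculate the percentage of correctly classified pixels in the image. This measure is commonly referred to as Pixel Accuracy (PA) and is defined as:

$$PA = \frac{TP + TN}{TP + TN + FP + FN},$$

where TP represents the number of correctly segmented foreground pixels, TN represents the number of correctly segmented background pixels, FP represents the number of background pixels incorrectly segmented as foreground, and FN represents the number of foreground pixels incorrectly segmented as background. In binary classification problems, PA is equal to $1 - ME$.

F1-Score (F1) is a measure of classification accuracy in statistical analysis. It takes into account both the precision and the recall of the classification model and can be seen as their harmonic mean. The formula is defined as follows:

$$F1 = \frac{2 \cdot P \cdot R}{P + R},$$

where $P = TP / (TP + FP)$ is the precision and $R = TP / (TP + FN)$ is the recall.

The Kappa coefficient ($\kappa$) measures the agreement between the segmentation result and the ground truth while correcting for the agreement expected by chance:

$$\kappa = \frac{p_o - p_e}{1 - p_e},$$

where $p_o$ is the observed agreement (equal to PA) and $p_e$ is the agreement expected by chance.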
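For reference, the five metrics can be computed from the confusion counts of two binary images as in the sketch below. It uses the standard definitions of these metrics, which are assumed to match those used in the paper; the function names are hypothetical. F1 is returned as `None` when precision or recall is undefined, which mirrors the cases reported later where the F1-Score could not be calculated.

```python
def confusion_counts(pred, truth):
    """Count TP, TN, FP, FN over two equally sized binary images."""
    tp = tn = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif not p and not t:
                tn += 1
            elif p:
                fp += 1
            else:
                fn += 1
    return tp, tn, fp, fn


def segmentation_metrics(pred, truth):
    tp, tn, fp, fn = confusion_counts(pred, truth)
    n = tp + tn + fp + fn
    pa = (tp + tn) / n
    js = tp / (tp + fp + fn) if tp + fp + fn else 1.0
    precision = tp / (tp + fp) if tp + fp else None
    recall = tp / (tp + fn) if tp + fn else None
    if precision is None or recall is None or precision + recall == 0:
        f1 = None  # undefined, e.g. when nothing is predicted as foreground
    else:
        f1 = 2 * precision * recall / (precision + recall)
    # Chance agreement from the marginal foreground/background frequencies.
    p_e = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / n**2
    kappa = (pa - p_e) / (1 - p_e) if p_e != 1 else None
    return {"JS": js, "ME": 1 - pa, "PA": pa, "F1": f1, "Kappa": kappa}


pred = [[1, 1, 0, 0]]
truth = [[1, 0, 1, 0]]
metrics = segmentation_metrics(pred, truth)
```

In this example half of the pixels agree, which is exactly what chance would produce for these marginals, so the Kappa coefficient is zero even though PA is 0.5. A negative Kappa therefore indicates agreement below the chance level.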

Analysis of initial image segmentation algorithm

Upon observing the structure of the cutting tool, (1) noticeable wear marks are generated due to friction at the bottom of the blade, with significant and uneven distribution of these marks. Denoising algorithms fail to effectively address this issue, resulting in the formation of holes on the surface during the segmentation process. (2) The cutting tool consists of a handle and a blade, causing the blade’s helical line and the outline of the handle to not overlap, creating background regions for both the handle and the chip flute, with some grayscale differences between them.

Figure 7 and Table 2 show the segmentation results of different algorithms. Figure 7(a) shows the original collected image of the milling cutter, which is used for comparing the effects of various segmentation algorithms and does not participate in the evaluation of segmentation algorithms.

Figure 7. Comparison of segmentation results of different methods: (a) original collected image, (b) OTSU algorithm, (c) VE algorithm, (d) Adaptive Local Thresholding, (e) Fast K-Means algorithm, and (f) Canny Edge Detection with Filling.

Table 2. Evaluation metrics for different segmentation algorithms.

OTSU algorithm

The OTSU algorithm’s evaluation metrics all performed poorly, as shown in Figure 7(b). The Jaccard Similarity (JS) and F1-Score (F1) are almost zero, indicating that this algorithm almost failed to identify the correct regions in the segmentation task. The Misclassification Error (ME) is close to 50%, indicating a very high rate of misclassification. The Pixel Accuracy (PA) is slightly above 50%, showing the randomness of the algorithm. The Kappa coefficient is negative, indicating that the OTSU algorithm’s segmentation performance is even worse than random guessing, showing serious systematic errors. In summary, the OTSU algorithm performs extremely poorly in segmenting the milling cutter image and cannot effectively distinguish between the blade and the background.

VE algorithm

The VE algorithm performs best in all evaluation metrics, as shown in Figure 7(c). The Jaccard Similarity (JS) and F1-Score (F1) are both above 0.9, indicating that the algorithm can accurately identify most of the correct segmentation regions. The Misclassification Error (ME) is very low, and the Pixel Accuracy (PA) is close to 100%, demonstrating the high precision of this algorithm. The Kappa coefficient is close to 1, further verifying the high reliability and consistency of the VE algorithm. In summary, the VE algorithm excels in segmenting the milling cutter image and is the most recommended method.

Adaptive Local Thresholding

The performance of the Adaptive Local Thresholding algorithm is moderate, as shown in Figure 7(d). The Jaccard Similarity (JS) and F1-Score (F1) indicate that the algorithm can correctly identify segmentation regions to a certain extent, but there is room for improvement. The Misclassification Error (ME) and Pixel Accuracy (PA) show that the algorithm can correctly classify most pixels, but some misclassifications still exist. The Kappa coefficient shows that the algorithm has a certain level of consistency, but improvement is needed. In summary, the Adaptive Local Thresholding algorithm performs generally in segmenting the milling cutter image and requires further optimization.

Fast K-means algorithm

The performance of the Fast K-means algorithm is poor, as shown in Figure 7(e). The Jaccard Similarity (JS) and F1-Score (F1) indicate that the algorithm has limited ability to identify correct segmentation regions. The Misclassification Error (ME) is high, and the Pixel Accuracy (PA) shows that the algorithm correctly classifies most pixels but still has many misclassifications. The Kappa coefficient is low, indicating poor consistency of the algorithm. In summary, the Fast K-means algorithm performs poorly in segmenting the milling cutter image and needs improvement in segmentation accuracy.

Canny Edge Detection with Filling

The Canny Edge Detection with Filling algorithm performs well, as shown in Figure 7(f). The Jaccard Similarity (JS) and F1-Score (F1) indicate that the algorithm can identify many correct segmentation regions. The Misclassification Error (ME) is low, and the Pixel Accuracy (PA) is high, showing that the algorithm can correctly classify pixels in most cases. The Kappa coefficient is high, indicating good consistency between the segmentation results and the ground truth. In summary, the Canny Edge Detection with Filling algorithm performs well in segmenting the milling cutter image, but from a visual inspection, it introduces redundant information on each tooth, which is not conducive to further processing.

Overall, the VE algorithm performs best across all evaluation metrics, demonstrating high precision and consistency. The Canny Edge Detection with Filling algorithm performs well and the Adaptive Local Thresholding algorithm performs moderately, but both introduce too much redundant information, which is not beneficial for subsequent calculations. The OTSU and Fast K-means algorithms perform poorly, with the OTSU result showing significant systematic errors, and are unsuitable for the method in this paper.

The primary reason for the poor performance of the comparison algorithms is that this study focuses on tool tip wear, while most previous research has concentrated on edge wear. In edge wear studies, the region of interest is typically larger, and by controlling data acquisition methods, the gray value difference between worn and unworn areas is more pronounced. In contrast, the region of interest in tool tip wear images occupies only a very small portion of the overall image, as shown in Figure 5, and has gray values similar to the background, making most algorithms ineffective for segmenting tool tip images.

Based on the structural analysis, the VE algorithm was chosen to segment the cutter teeth. However, the results of the VE algorithm still contain some redundant data. After segmentation using the VE algorithm, morphological opening operations are employed to disconnect the connections between wear marks, as shown in Figure 8(a). Subsequently, isolated points in the binary image are removed, and morphological closing is performed to fill in the holes, completing the segmentation of the cutter teeth, as shown in Figure 8(b).
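The removal of isolated points between the opening and closing steps can be sketched as a connected-component size filter. This is an assumed interpretation: the paper does not state the connectivity or the size threshold, so 8-connectivity and the `min_size` parameter are illustrative choices, and the function name is hypothetical.

```python
from collections import deque


def remove_small_components(img, min_size):
    """Clear 8-connected foreground components with fewer than min_size pixels."""
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    seen = [[False] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if not img[y][x] or seen[y][x]:
                continue
            # Breadth-first search collects one connected component.
            component, queue = [], deque([(y, x)])
            seen[y][x] = True
            while queue:
                cy, cx = queue.popleft()
                component.append((cy, cx))
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = cy + dy, cx + dx
                        if (0 <= ny < h and 0 <= nx < w
                                and img[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            if len(component) < min_size:
                for cy, cx in component:
                    out[cy][cx] = 0
    return out


binary = [[1, 1, 0, 0],
          [1, 1, 0, 0],
          [0, 0, 0, 1]]
cleaned = remove_small_components(binary, min_size=2)
```

The 2 × 2 block survives, while the single pixel in the corner is treated as an isolated point and removed.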

Figure 8. Morphological processing result: (a) morphological opening operation and (b) removal of isolated points.

L1PS algorithm analysis

Figure 9 and Table 3 show the influence of different initial contours on the L1PS algorithm.

Figure 9. The influence of different initial contours on L1PS algorithm.

Table 3. Evaluation metrics for different initial contours in L1PS algorithm.

Square – Left

The segmentation result with this initial contour is extremely poor. The Jaccard Similarity (JS) is 0, indicating no overlap between the segmentation result and the ground truth. The Misclassification Error (ME) is close to 47%, and the Pixel Accuracy (PA) is only 53%, indicating that the algorithm’s performance is almost random. The F1-Score could not be calculated, and the Kappa coefficient is negative, showing that the segmentation result is even worse than random guessing, indicating significant systematic errors.

Square – Center

The segmentation result with this initial contour is the best, with excellent performance in all metrics. The Jaccard Similarity (JS) is 0.609793, indicating a high overlap between the segmentation result and the ground truth. The Misclassification Error (ME) is only 3.26%, and the Pixel Accuracy (PA) is as high as 96.74%. The F1-Score is 0.765677, and the Kappa coefficient is 0.748903, demonstrating high accuracy and consistency of the algorithm with this initial contour.

Square – Top

The segmentation result with this initial contour is moderate. The Jaccard Similarity (JS) is 0.178185, indicating a certain degree of overlap between the segmentation result and the ground truth. The Misclassification Error (ME) is 7.82%, and the Pixel Accuracy (PA) is 92.18%. The F1-Score is 0.276128, and the Kappa coefficient is 0.247424, indicating that the segmentation performance is acceptable but still has room for improvement.

Rectangle – Horizontal

The segmentation result with this initial contour is poor, similar to Square – Left. The Jaccard Similarity (JS) is 0, the Misclassification Error (ME) is 46.58%, and the Pixel Accuracy (PA) is 53.42%. The F1-Score could not be calculated, and the Kappa coefficient is negative, indicating that the segmentation performance is worse than random guessing and has systematic errors.

Rectangle – Vertical

The segmentation result with this initial contour is the same as Rectangle – Horizontal, also showing poor performance. The Jaccard Similarity (JS) is 0, the Misclassification Error (ME) is 46.58%, and the Pixel Accuracy (PA) is 53.42%. The F1-Score is undefined, and the Kappa coefficient is negative, indicating that the segmentation performance is poor and has significant systematic errors.

In summary, the segmentation results with Square – Left, Rectangle – Horizontal, and Rectangle – Vertical initial contours are poor, even worse than random guessing, indicating systematic errors. The segmentation result with the Square – Top initial contour is moderate. The best segmentation result is achieved with the Square – Center initial contour, demonstrating that the L1PS algorithm performs best under this initial contour. Nevertheless, it is evident that the L1PS algorithm alone is not suitable for multi-object segmentation, highlighting the effectiveness of the proposed algorithm in this paper.

It is evident that the L1PS model produces varying segmentation outcomes under different initial contours. Among them, the results obtained from (a), (d), and (e) exhibit similarities but fall short of achieving the desired segmentation. On the other hand, (b) and (c) reveal that although the L1PS model may not be suitable for multi-target segmentation tasks, it performs well in terms of single-object segmentation when appropriate initial contours are provided. Table 4 shows the running time and iteration counts of the L1PS and proposed methods. It can directly reflect the effectiveness of the method in this paper.

Table 4. Iterations and CPU time of the L1PS and proposed method.

Verification of wear area

Figure 10 illustrates the wear curve of a milling cutter tip over time, showing the area of tip wear (in mm²) against working time (in hours). The curve includes the actual wear data, the proposed method’s prediction, and the deviation. The red dashed lines indicate thresholds for different stages of wear, such as initial wear, slight wear, tip breakage, and tool damage. Additionally, the figure includes images of the tool tip wear at various stages.

Initial wear (0–1.1 h): In the initial stage, when the tool travel is within the range of 2736 m, the wear area of the tool tip gradually increases, representing the normal wear period where the cutting edge adapts to the cutting conditions.

Slight wear (1.1–3.6 h): When the tool travel is between 2736 and 7776 m, the wear area increases more significantly but remains within controlled limits, marking the transition from initial wear to steady-state wear.

Breakage initiation (3.6–4.5 h): When the tool travel is between 7776 and 9720 m, the wear area increases significantly, indicating that wear has become more severe and the tool tip has begun to break down.

Tool damage (4.5–5 h): After the tool travel exceeds 9720 m, the wear area reaches its peak, indicating severe wear and damage. This suggests that the tool is no longer effective and must be replaced.
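The stage boundaries listed above can be encoded as a simple lookup, sketched below. The boundaries are the working times quoted in the text; treating each upper bound as inclusive is an assumption, since the text does not say which stage a boundary value belongs to, and the names are hypothetical.

```python
import bisect

# Upper time limits (hours) of the first three stages, as quoted in the text.
STAGE_LIMITS_H = [1.1, 3.6, 4.5]
STAGES = ["initial wear", "slight wear", "breakage initiation", "tool damage"]


def wear_stage(hours):
    """Map a working time in hours to the wear stage described in the text."""
    if hours < 0:
        raise ValueError("working time must be non-negative")
    # bisect_left places a boundary value in the earlier stage (inclusive upper bound).
    return STAGES[bisect.bisect_left(STAGE_LIMITS_H, hours)]


stages = [wear_stage(t) for t in (0.5, 2.0, 4.0, 4.8)]
```

In an online setting such a lookup would more naturally be driven by the measured wear area than by elapsed time, but the area thresholds are shown only in Figure 10 and are not stated numerically in the text.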

Figure 10. Milling cutter wear curve.

The proposed method’s prediction closely follows the actual wear trend with only slight deviations, demonstrating high accuracy and reliability. The wear morphology changes from initial wear to slight wear, followed by tip breakage, and finally tool damage. Tracking these stages makes it possible to schedule maintenance and replacement of the milling cutter in a timely manner.

Conclusion

This paper designs a machine-vision-based system for accurate online measurement of tool wear. The end-to-end design, spanning machine control, light source combination, equipment selection, and algorithm design, provides a new approach to online machine vision monitoring. Specifically, the machine tool path and NC code are controlled to achieve online acquisition of tool bottom-edge images; a ring light source is combined with a fiber optic light source to attenuate the influence of ambient light; and a multi-edge-to-single-edge approach is adopted, in which the VE results serve as masks and L1PS performs the fine segmentation for accurate measurement. Experimental verification shows that the maximum deviation from the actual value is 0.036 mm and the average deviation is 0.007 mm, while the minimum detection accuracy is 90.94% and the average is 97.92%, which verifies the effectiveness of the method. Furthermore, the algorithm’s runtime has been reduced to 15.53 s, a reduction of approximately 94.68%.

Footnotes

Handling Editor: Chenhui Liang

Author contributions

Funding acquisition: Jing Ni, Zhen Meng, and Zefei Zhu; Investigation: Ruizhi Li, Xunsong Liu, and Zuji Li; Methodology: Ruizhi Li and Yanjun Ren; Resources: Jing Ni; Supervision: Jing Ni and Zhen Meng; Validation: Ruizhi Li; Visualization: Jing Ni; Writing – original draft: Ruizhi Li.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Key R&D Program of China (Grant No. 2022YFB3401900).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.