Abstract

One of the challenges facing widespread adoption of electric vehicles (EVs) is their short driving range. To address this challenge, various EV transmissions are under development. In transmissions, clutches are used to connect and disconnect the drive source, and a violent vibration called judder may occur when the clutch slips on its friction surface. To resolve this problem, this study focuses on deep reinforcement learning, which has been applied and advanced in areas such as machine control in simulation. The purpose of this study is to suppress clutch judder using deep reinforcement learning. A deep reinforcement learning model was developed for seamless gear shift control, and gear shift results without control were compared with those obtained after training. By applying deep reinforcement learning to gear shift control, a control rule was obtained that achieves seamless gear shifting while suppressing judder. In addition, seamless gear shifting was achieved for various friction coefficient patterns, yielding a controller that is robust to changes in the friction coefficient.

Keywords

Introduction

In recent years, automobiles have been shifting from internal combustion engine vehicles to electric vehicles (EVs) owing to stricter fuel efficiency and emission regulations in various countries. However, the short driving range of EVs remains an obstacle to their widespread adoption, and various EV transmissions are being developed to address this problem. EV transmissions can be broadly classified into those using planetary gears (Xinxin et al. 1, Yuhanes et al. 2, and Jae et al. 3), dual clutch transmissions (DCTs) (Paul et al. 4 and Hong et al. 5), continuously variable transmissions (Theo et al. 6), and synchronizers (Md. Ragib et al. 7 and Gao et al. 8). Because an EV motor covers a wider speed range than a gasoline engine, fewer gear shifts are needed and the transmission can be made compact; however, the shock during each gear shift becomes more noticeable. The magnitude of this shifting shock can be controlled by adjusting the motor torque together with the torque transmitted through the clutch friction elements.

Mousavi et al. devised a transmission composed of a two-speed planetary gearbox with a common ring gear and a common sun gear. Two friction brakes were used to control the torque flow during shifting, achieving quick and smooth gear shifts by adjusting the speeds of the sun gear and ring gear. Validation using a simulation model showed that the output torque variation could be kept within approximately 10% of the target output torque. 9 Kim et al. implemented a new shift control strategy to improve shift quality in a parallel hybrid electric vehicle (HEV) equipped with a DCT, using feedback of both speed and torque states to coordinate control of the drive motor and clutch. Validated through simulations and test-bench experiments, the strategy reduced jerk to less than half that of conventional shift control. 10 Mantriota et al. devised a dual-motor transmission mechanism in which two small motors are coupled via a planetary gear, in contrast to the standard configuration using one large motor. The mechanism keeps both motors near their optimal operating range, improving overall energy efficiency. Extensive simulations across various standard driving cycles showed an average efficiency improvement of approximately 9% in both power delivery and regeneration compared with equivalent single-motor transmissions. 11 Ruan et al. proposed a new dual-motor, two-speed direct drive system to optimize the efficiency and performance of battery electric vehicles (BEVs). This drive system uses two motors of different sizes to achieve optimal output and efficiency under different driving conditions. At low speeds, the smaller motor drives the vehicle, while at high speeds the larger motor adds its optimal torque, enabling efficient power transmission over a wide speed range. Additionally, the two-speed transmission allows the motor speed to be adjusted, balancing efficiency and power performance. 12 Cheng proposed an optimized design and mode-switching strategy for a plug-in hybrid electric vehicle (PEV) equipped with a new three-axis Simpson-type dual-motor coupling drive system. The study used an Improved Simulated Annealing (I-SA) algorithm to optimize the drive system design, with the aim of improving electric drive efficiency and overall performance. In the proposed system, two different motors work in coordination, each controlled to operate within its most efficient range. While one motor carries the load, the other gradually increases or decreases its torque to achieve smooth shifting. 13

Hu et al. proposed a new dual-motor coupled powertrain (DMCP) with speed and torque coupling. This system uses real-time control to optimally adjust motor operation based on driving conditions, optimizing shift timing and torque distribution. This minimizes power loss during shifting and ensures efficient energy use. Moreover, the combination of an electric clutch and torque control enables gradual torque transition, reducing shock during shifting. 14

In transmissions, clutches are used to connect the transmission to the drive source, and judder, a violent vibration, may occur when the clutch slips on its friction surface. Judder is transmitted through the drivetrain to the vehicle body and degrades passenger ride comfort. Many previous studies have investigated the mechanism and suppression of judder (Rosbi et al. 15, Dan et al. 16, and Cameron et al. 17). Most of these approaches were taken from a tribological perspective and achieved some effect; however, judder remains an important issue. Therefore, this study addresses the suppression of clutch judder using a control method based on deep reinforcement learning. In a previous study, Gerrit et al. 18 reduced clutch judder by appropriately controlling the pressing force on the clutch plates, showing that control can also be effective. The difficulty in suppressing judder through control lies in the friction coefficient, which varies with the speed difference between the clutch plates, the pressure on the clutch plates, and the oil temperature (Bezzazi et al. 19 and Steven et al. 20). To address this problem, we focus on deep reinforcement learning, which has been applied and advanced in areas such as machine control in simulation (Runsheng et al. 21, Vassilios et al. 22, and Gyeong et al. 23). This AI technique allows a computer to teach itself without a human providing the correct answer. Its advantage is that it automatically learns control strategies adapted to the control target through trial and error. Therefore, control rules can be learned without explicitly modeling uncertain changes in the state of the control target. In this study, the control target was the DCT, and uncertain state changes were assumed to occur in its gears and clutches as well as in the attached motor.
Based on this background, this study focuses on applying deep reinforcement learning to the gear shift control of a two-speed DCT for EVs.

A continuous deep reinforcement learning model was developed to automatically learn shift control rules that achieve seamless shifting and suppress clutch judder. First, a transmission model that reproduces judder was developed. Then, deep reinforcement learning was applied to this model to suppress shock and judder during gear shifting. The results of gear shifting without control were compared with those after learning to verify the usefulness of the approach. The evaluation focused on the output torque fluctuation during gear shifting.

Two-speed DCT for EVs

Mechanism

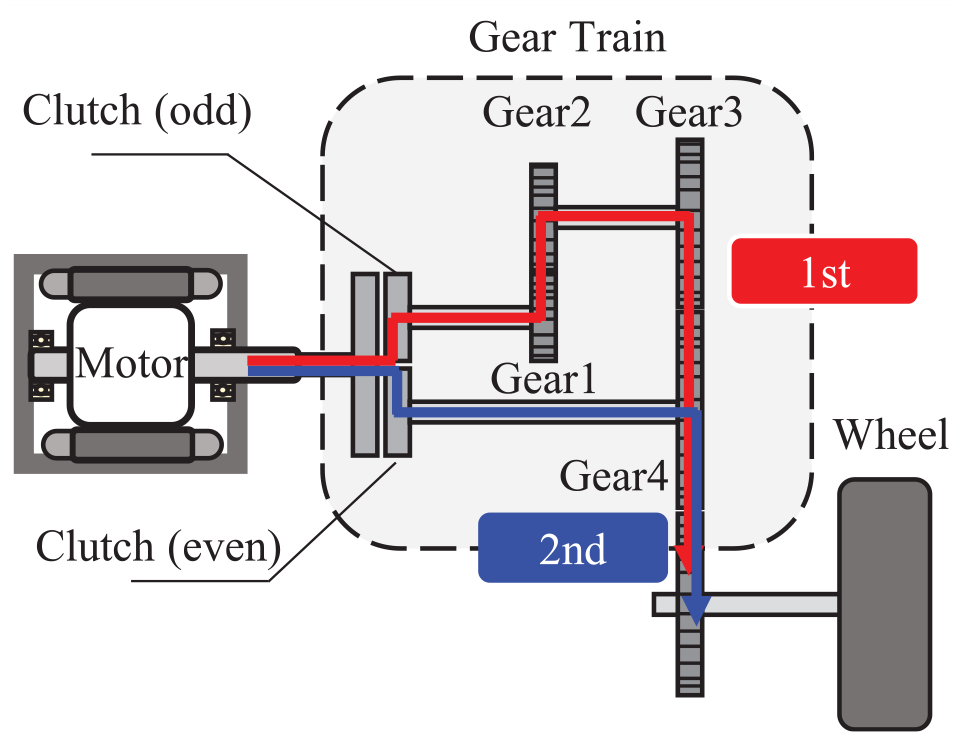

Figure 1 shows the configuration of the two-speed DCT for EVs evaluated in this study. The motor is connected to the gearbox, in which a double clutch distributes power to a gear train of four gears. The reduction ratio is 3.0 for gears 1 and 2 and 1.0 for gears 3 and 4. Figure 2 shows the torque flow in the first and second gears of this system. The clutch engaged in the first gear is defined as the “odd-numbered clutch,” and the clutch engaged in the second gear as the “even-numbered clutch.” The motor side of each clutch is defined as the “drive plate” and the gear train side as the “driven plate.”

Concept of two-speed transmission.

Torque flow diagram of two-speed transmission in low and high modes.

The upshift in the transmission used in this study proceeds in the following order: first gear, torque phase, inertia phase, and second gear. In first gear, the odd-numbered clutch is engaged and the even-numbered clutch is released, so power is transmitted only through the odd-numbered clutch. In the torque phase, the even-numbered clutch begins to engage and torque is gradually transferred from the odd-numbered clutch to the even-numbered clutch; the phase ends when the torque carried by the odd-numbered clutch reaches 0. In the inertia phase, the angular velocity ratio changes owing to the torque imbalance between the transmission elements; the phase is complete when the even-numbered clutch is engaged and the rotational speeds of the motor and gear 4 become equal. In second gear, the odd-numbered clutch is released and the even-numbered clutch is engaged, so power is transmitted only through the even-numbered clutch.
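The phase sequence described above can be expressed as a small classifier over clutch signals. The sketch below is illustrative only: the signal names (`odd_torque_share`, `even_slip`, `even_engaging`) and the slip tolerance are hypothetical and are not taken from the simulation model used in this study.

```python
from enum import Enum


class ShiftPhase(Enum):
    FIRST_GEAR = "first gear"
    TORQUE_PHASE = "torque phase"
    INERTIA_PHASE = "inertia phase"
    SECOND_GEAR = "second gear"


def classify_phase(odd_torque_share: float, even_slip: float,
                   even_engaging: bool, slip_tol: float = 1e-3) -> ShiftPhase:
    """Return the upshift phase from hypothetical clutch signals.

    odd_torque_share: fraction of transmitted torque carried by the odd clutch (0..1)
    even_slip: drive-plate minus driven-plate speed of the even clutch [rad/s]
    even_engaging: True once the even clutch has started to engage
    """
    if not even_engaging:
        return ShiftPhase.FIRST_GEAR      # power flows only through the odd clutch
    if odd_torque_share > 0.0:
        return ShiftPhase.TORQUE_PHASE    # torque handover odd -> even in progress
    if abs(even_slip) > slip_tol:
        return ShiftPhase.INERTIA_PHASE   # motor speed pulled toward gear 4 speed
    return ShiftPhase.SECOND_GEAR         # speeds synchronized, even clutch locked
```

Ordering the checks this way mirrors the text: the torque phase ends when the odd clutch's torque share reaches 0, and the inertia phase ends when the slip of the even clutch vanishes.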

Coefficient of friction of clutch plates

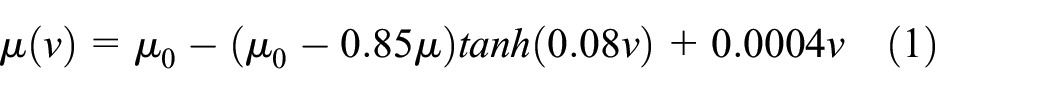

Previous studies have shown that clutch judder occurs when the μ-v characteristic of the friction surface has a negative gradient with respect to the sliding velocity (9). In this study, the friction coefficient is modeled by equation (1).

where,

Frictional characteristics.
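Equation (1) and the values in Table 1 are not reproduced in this text, so the following sketch does not implement them. It assumes an exponential-decay μ-v curve, one common way to obtain a negative gradient, to show why such a gradient promotes judder: linearizing the friction torque about the slip speed yields an equivalent damping proportional to dμ/dv, which becomes negative. The per-episode sampler mimics the random variation of the friction coefficient during training, with placeholder ranges rather than the ranges of Table 1.

```python
import math
import random


def mu(slip: float, mu_s: float, mu_d: float, v_c: float) -> float:
    """Hypothetical mu-v curve: mu_s at zero slip, decaying exponentially toward mu_d."""
    return mu_d + (mu_s - mu_d) * math.exp(-abs(slip) / v_c)


def dmu_dslip(slip: float, mu_s: float, mu_d: float, v_c: float) -> float:
    """Slope of mu() for slip > 0; negative whenever mu_s > mu_d."""
    return -(mu_s - mu_d) / v_c * math.exp(-slip / v_c)


def friction_damping(slip, normal_force, mean_radius, n_surfaces, mu_s, mu_d, v_c):
    """Equivalent viscous damping of the clutch friction torque about a slip speed.

    With T_f = n * mu(slip) * F * r, linearizing gives dT_f/dslip = n * F * r * dmu/dslip.
    A negative value acts as negative damping on the driveline torsional mode,
    the mechanism usually associated with self-excited judder.
    """
    return n_surfaces * normal_force * mean_radius * dmu_dslip(slip, mu_s, mu_d, v_c)


def sample_friction_pattern(rng: random.Random) -> dict:
    """Draw one friction pattern per training episode (placeholder ranges, not Table 1)."""
    return {
        "mu_s": rng.uniform(0.12, 0.16),
        "mu_d": rng.uniform(0.08, 0.11),
        "v_c": rng.uniform(2.0, 10.0),  # slip-speed scale [rad/s]
    }
```

Because the placeholder ranges keep `mu_s` above `mu_d`, every sampled pattern has a negative gradient, so each episode exposes the agent to a judder-prone friction surface.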

Proposed method

Figure 4 shows the proposed method. Control rules that command the motor torque to suppress judder and achieve seamless gear shifting were obtained using deep reinforcement learning. During training, the friction coefficient was randomly changed in each episode. After training, a test model was used to verify that gear shift control was possible for various friction coefficient patterns; if seamless shifting is achieved for these patterns in the test model, the controller can be regarded as robust. Based on a previous study, 9 gear shifting is considered seamless if both the output torque fluctuation during gear shifting and the torque step between before and after gear shifting are 10% or less of the target output torque.

System configuration of two-speed transmission by deep reinforcement learning for seamless shifting.
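The 10% seamlessness criterion above can be checked directly on a sampled output-torque trace. The sketch below is a minimal interpretation under stated assumptions: the shift window is given by sample indices, "fluctuation" is taken as the largest deviation from the target within that window, and the "torque step" compares the samples just before and just after the window.

```python
from typing import Sequence


def is_seamless(torque: Sequence[float], start: int, end: int,
                target: float, tol: float = 0.10) -> bool:
    """Apply the 10 % seamless-shift criterion to a sampled output-torque trace.

    torque: output torque samples [N m]
    start, end: indices of the first and last samples of the gear shift
    target: target output torque [N m]
    """
    limit = tol * abs(target)
    # (a) fluctuation during the shift: largest deviation from the target
    fluctuation = max(abs(t - target) for t in torque[start:end + 1])
    # (b) torque step: sample just after the shift versus sample just before it
    before = torque[max(start - 1, 0)]
    after = torque[min(end + 1, len(torque) - 1)]
    step = abs(after - before)
    return fluctuation <= limit and step <= limit
```

With the 30 Nm target used in this study, both quantities must stay within 3 Nm.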

System configuration

Condition for shifting gear

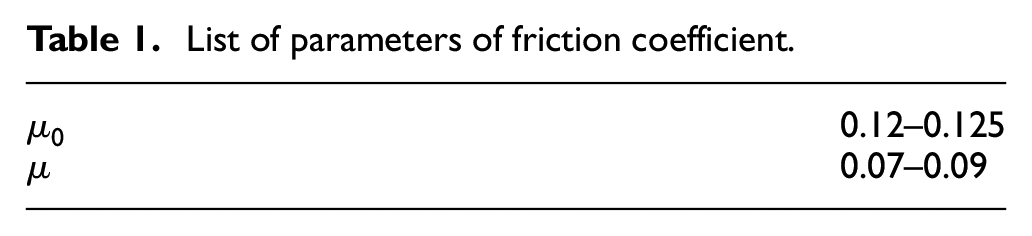

A deep deterministic policy gradient (DDPG) algorithm (Timothy et al. 24), which can handle continuous states and actions, was employed to develop the control model. The transmission to be trained was modeled in SimulationX, a general-purpose 1D CAE software, and training was performed in MATLAB and Simulink. In the simulation, each element was initialized to its ideal value before the gear shift: the motor rotational speed was set to 36.0 rad/s and the output shaft rotational speed to 12.0 rad/s. The target output torque was set to 30 Nm. Table 1 shows the ranges within which the friction coefficients of the clutch plates were randomly varied. The simulation assumed no delay in the motor torque response. The usefulness of deep reinforcement learning as a gear shift control method was verified by comparing the results obtained with and without the proposed control.

List of parameters of friction coefficient.
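The initial speeds are consistent with the gear ratios given in Section “Mechanism”: 36.0 / 12.0 = 3.0, the first-gear ratio. The sketch below works through the resulting speed bookkeeping for an ideal upshift. It assumes a rigid, lossless drivetrain, a constant output shaft speed during the shift, and that the driven plate of the even-numbered clutch rotates with gear 4; the function name is hypothetical.

```python
def upshift_speeds(omega_motor_0: float, omega_out: float,
                   r_first: float = 3.0, r_second: float = 1.0) -> dict:
    """Speed bookkeeping for an ideal (rigid, lossless) upshift.

    Assumes the output shaft speed stays constant during the shift and that
    the even-clutch driven plate rotates with gear 4 at omega_out * r_second.
    """
    if abs(omega_motor_0 / omega_out - r_first) > 1e-9:
        raise ValueError("initial speeds are inconsistent with the first-gear ratio")
    omega_even_driven = omega_out * r_second  # even-clutch driven plate / gear 4
    return {
        # slip the even clutch must absorb when it starts to engage
        "even_clutch_slip_at_start": omega_motor_0 - omega_even_driven,
        # synchronization target at the end of the inertia phase
        "motor_speed_after_shift": omega_even_driven,
    }


speeds = upshift_speeds(36.0, 12.0)
# {'even_clutch_slip_at_start': 24.0, 'motor_speed_after_shift': 12.0}
```

Under these assumptions, the even-numbered clutch starts the shift slipping at 24 rad/s, and this slip must be driven to zero during the inertia phase, the region in which the negative μ-v gradient is encountered.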

Without control

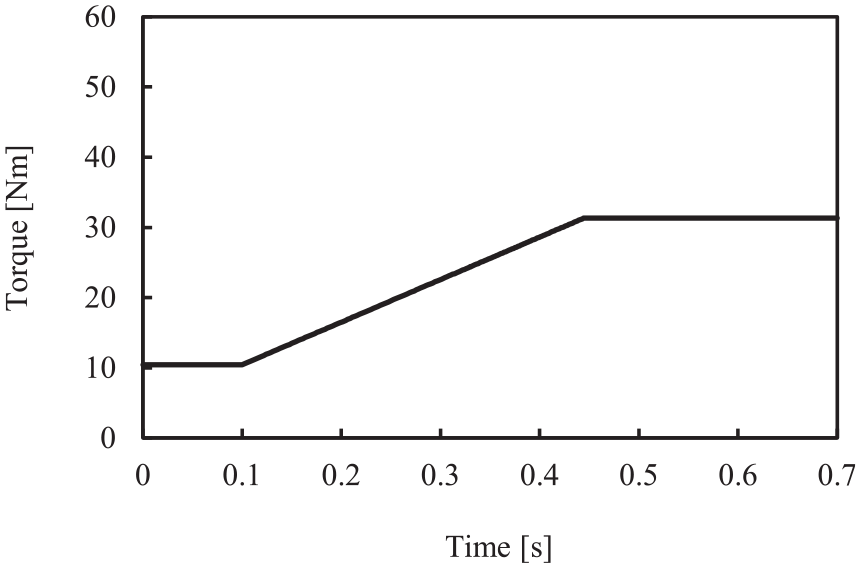

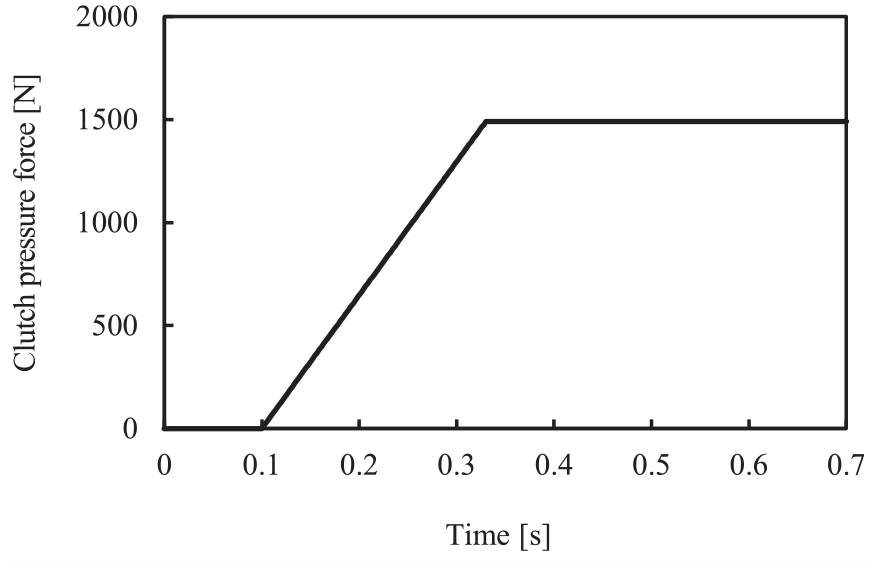

The gear shift was set to begin at 0.1 s, and the motor torque was increased as the clutch pressure increased. Figure 5 shows the motor torque, and Figures 6 and 7 show the pressing forces of the odd-numbered and even-numbered clutches, respectively, for the no-control condition.

Motor torque without control.

Clutch pressure of the odd-numbered clutch.

Clutch pressure of the even-numbered clutch.

Control by deep reinforcement learning

Under these conditions, the judder reduction achieved by deep reinforcement learning (DRL) was verified. The motor output torque was constrained to values between 0 and 60 Nm. The pressing forces of the odd-numbered and even-numbered clutches followed the same profiles as in the no-control case.

Advanced settings for deep reinforcement learning

Several settings were required for the gear shift control model; five groups of settings, namely the input layer, the deep learning hyperparameters, the time steps and number of episodes, the network parameters, and the reward, are detailed in this section.

Input layer

The input layer contained nine values: the motor torque, the output torque, the error from the target torque, the engagement signals of the odd-numbered and even-numbered clutches, and the rotational speeds of the motor, gear 1, gear 4, and the driven plate.
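A fixed ordering of the nine input values is needed so that each maps to the same input node at every time step. The sketch below shows one way to assemble the observation; the field names, the sign convention of the torque error (target minus actual), and the choice of the even-numbered clutch's driven plate are assumptions, and the numeric values are illustrative first-gear values only.

```python
from dataclasses import dataclass, astuple


@dataclass(frozen=True)
class ShiftObservation:
    """The nine input-layer values listed in the text (field names are hypothetical)."""
    motor_torque: float         # N m
    output_torque: float        # N m
    torque_error: float         # target minus actual output torque [N m] (assumed sign)
    odd_clutch_engaged: float   # engagement signal of the odd-numbered clutch
    even_clutch_engaged: float  # engagement signal of the even-numbered clutch
    omega_motor: float          # rad/s
    omega_gear1: float          # rad/s
    omega_gear4: float          # rad/s
    omega_driven_plate: float   # rad/s (assumed: even-numbered clutch driven plate)

    def to_vector(self) -> list[float]:
        # Field order fixes the order of the nine actor/critic input nodes.
        return [float(v) for v in astuple(self)]

    @classmethod
    def from_signals(cls, motor_torque, output_torque, target_torque, odd, even,
                     omega_motor, omega_gear1, omega_gear4, omega_driven_plate):
        return cls(motor_torque, output_torque, target_torque - output_torque,
                   odd, even, omega_motor, omega_gear1, omega_gear4,
                   omega_driven_plate)


# Illustrative first-gear state: 30 Nm output, odd clutch engaged.
obs = ShiftObservation.from_signals(10.0, 30.0, 30.0, 1.0, 0.0, 36.0, 36.0, 12.0, 12.0)
```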

Deep learning hyperparameters

Table 2 lists the learning rate, discount factor, variance of the noise model, and decay rate of the variance for the actor–critic network employed in this study. The discount factor, noise variance, and variance decay rate can be adjusted to accelerate learning when it is not progressing at the desired rate.

List of multiple parameters.
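DDPG explores by adding noise to the deterministic actor output, and the variance and its decay rate in Table 2 govern how this exploration shrinks over training. The sketch below uses an Ornstein-Uhlenbeck process, a common choice for DDPG, with its variance decayed multiplicatively at each sample. How the variance and decay rate enter the update here, and all default values, are assumptions; the values of Table 2 are not reproduced.

```python
import math
import random


class DecayingOUNoise:
    """Ornstein-Uhlenbeck exploration noise whose variance decays each step (sketch)."""

    def __init__(self, mean=0.0, theta=0.15, variance=0.3, decay_rate=1e-5,
                 variance_min=0.0, dt=1e-3, seed=None):
        self.mean = mean
        self.theta = theta              # mean-attraction constant
        self.variance = variance        # treated here as sigma**2 (assumption)
        self.decay_rate = decay_rate
        self.variance_min = variance_min
        self.dt = dt                    # matches the 0.001 s control step
        self.x = mean
        self.rng = random.Random(seed)

    def sample(self) -> float:
        sigma = math.sqrt(self.variance)
        # Euler discretization of dx = theta * (mean - x) dt + sigma dW
        self.x += (self.theta * (self.mean - self.x) * self.dt
                   + sigma * math.sqrt(self.dt) * self.rng.gauss(0.0, 1.0))
        # Multiplicative decay, floored at variance_min
        self.variance = max(self.variance * (1.0 - self.decay_rate), self.variance_min)
        return self.x
```

In use, the noisy command would be the actor output plus `sample()`, clipped to the 0 to 60 Nm motor torque range. A larger decay rate shortens exploration, which is one way the hyperparameters can be tuned when learning stalls.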

Time steps and number of episodes

The total simulation time was set to 0.7 s and the time step to 0.001 s. One episode was a single simulation run lasting up to the maximum number of steps (700). Training was terminated when the reward reached 1965, as explained in Section “Reward.”
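The episode length follows from 0.7 s / 0.001 s = 700 steps, and the shift start at 0.1 s corresponds to step 100. The sketch below encodes these values and the stopping rule. Whether the 1965 threshold applies to a single episode's cumulative reward or to a running average is not stated; a single-episode reward is assumed here.

```python
SIM_TIME = 0.7           # s, total simulation time (from the text)
TIME_STEP = 0.001        # s, time step (from the text)
SHIFT_START = 0.1        # s, gear shift start (from the text)
REWARD_THRESHOLD = 1965  # training stops when this reward is reached (from the text)

# These quotients are not exact in binary floating point, so round() rather than int()
MAX_STEPS = round(SIM_TIME / TIME_STEP)
SHIFT_START_STEP = round(SHIFT_START / TIME_STEP)


def training_finished(episode_reward: float, threshold: float = REWARD_THRESHOLD) -> bool:
    # Assumption: the threshold is compared with one episode's cumulative reward.
    return episode_reward >= threshold
```

Using `int()` instead of `round()` would risk truncating a quotient that lands just below an integer and silently shortening the episode by one step.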

Network parameters

Figure 8 shows the baseline actor–critic network used in this study. The input layer of the actor network contained the nine nodes defined in Section “Input layer,” and its output layer contained one node, the motor torque. The hidden layer was a fully connected layer of 100 nodes. The tanh activation function was used for the output layer, and a rectified linear unit (ReLU) was used for the remaining layers. The critic network had the same input layer as the actor network, and its hidden layer was a fully connected layer of 100 nodes with ReLU activation.

Layout of actor and critic network.
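The network layout above can be sketched as a forward pass in NumPy. Several details are assumptions not stated in the text: the tanh output is rescaled linearly to the 0 to 60 Nm motor torque range, the critic receives the action concatenated with the nine observations, the critic output layer is linear, and the weights use He initialization. Training (gradient updates, target networks, replay buffer) is omitted.

```python
import numpy as np

N_OBS, N_HIDDEN = 9, 100
T_MIN, T_MAX = 0.0, 60.0  # motor torque command range [N m]


def init_layer(rng, n_in, n_out):
    # He-style initialization for ReLU layers (assumption)
    w = rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out))
    return w, np.zeros(n_out)


def relu(x):
    return np.maximum(x, 0.0)


class Actor:
    """9 -> 100 (ReLU) -> 1 (tanh), rescaled to the motor torque range."""

    def __init__(self, rng):
        self.w1, self.b1 = init_layer(rng, N_OBS, N_HIDDEN)
        self.w2, self.b2 = init_layer(rng, N_HIDDEN, 1)

    def __call__(self, obs):
        h = relu(obs @ self.w1 + self.b1)
        a = np.tanh(h @ self.w2 + self.b2)                 # in [-1, 1]
        return T_MIN + (a + 1.0) * 0.5 * (T_MAX - T_MIN)   # mapped to [0, 60] N m


class Critic:
    """Q(s, a): observations and action concatenated -> 100 (ReLU) -> linear scalar."""

    def __init__(self, rng):
        self.w1, self.b1 = init_layer(rng, N_OBS + 1, N_HIDDEN)
        self.w2, self.b2 = init_layer(rng, N_HIDDEN, 1)

    def __call__(self, obs, action):
        x = np.concatenate([obs, np.atleast_1d(action)])
        h = relu(x @ self.w1 + self.b1)
        return float((h @ self.w2 + self.b2)[0])


rng = np.random.default_rng(0)
actor, critic = Actor(rng), Critic(rng)
```

Bounding the actor with tanh guarantees that every command respects the motor torque limits without a separate clipping step.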

Reward

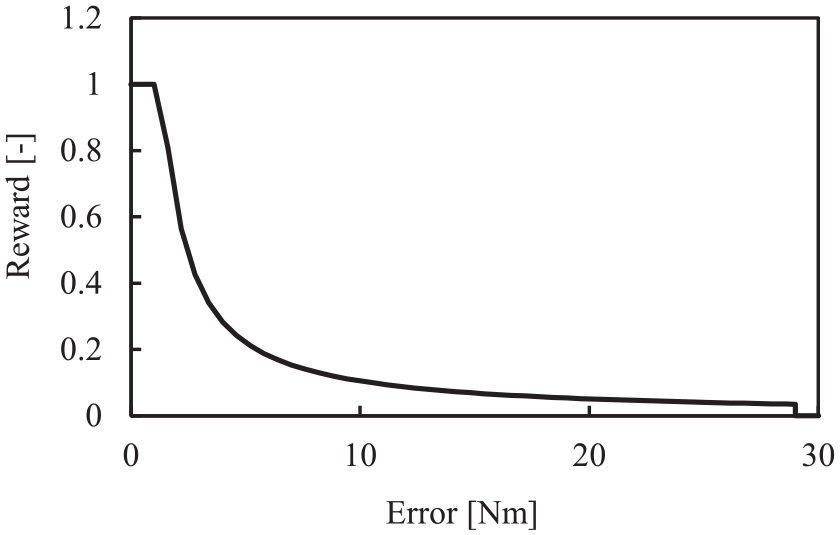

The reward function is set as follows:

Equation (3) is the reward function for the error from the target torque during gear shifting, equation (4) is the reward function for the error from the target torque before and after gear shifting, and equation (5) is the reward given when the error from the target torque exceeds a certain value, where e is the error between the target and actual output torque. The reward was 5/2 for each time step once clutch engagement was completed after 0.6 s, whereas the reward in equation (3) was set to 0 or −1 for each time step when engagement was not completed. The objective of this research was to shift gears while minimizing this error.

Reward graph of output torque error of r_1.

Reward graph of output torque error of r_2.
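Equations (3) to (5) are not reproduced in this text, so the following is only a hypothetical per-step reward with the qualitative features described: an exponential term that rewards small errors e from the 30 Nm target, and a fixed penalty once |e| exceeds a limit. The scale `sigma`, the limit (set here to 10% of the target), and the penalty of −1 are assumptions, not the values used in this study.

```python
import math

TARGET_TORQUE = 30.0  # N m (from the text)


def step_reward(output_torque: float, target: float = TARGET_TORQUE,
                sigma: float = 1.0, error_limit: float = 0.1 * TARGET_TORQUE,
                penalty: float = -1.0) -> float:
    """Hypothetical exponential reward on the output torque error e = target - actual."""
    e = target - output_torque
    if abs(e) > error_limit:
        return penalty  # large-error penalty, in the spirit of equation (5)
    return math.exp(-abs(e) / sigma)  # 1 at e = 0, decaying quickly with |e|
```

With `sigma = 1.0`, reducing |e| from 1 Nm to 0 Nm gains about 0.63 reward per step, whereas reducing it from 2 Nm to 1 Nm gains only about 0.23. This steeper payoff near zero error is what makes an exponential shape push the agent toward tight torque tracking.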

Results

Figures 11–14 show the results of the gear shift simulations. In each figure, the horizontal axis represents the simulation time, and the vertical axes represent the rotational speeds of the elements, the rotational speeds of the even-numbered clutch plates, the output torque, the motor torque, and the clutch transmission torque. Figure 11 shows the case without control, and Figures 12–14 show the cases controlled by deep reinforcement learning with different friction coefficient patterns.

Simulation results without control.

Control results by deep reinforcement learning.

Control results by deep reinforcement learning.

Control results by deep reinforcement learning.

Figure 11 shows that shock and judder occur during the gear shift when no control is applied. The shift shock appears as a large fluctuation in the output torque once the clutch begins to engage at 0.1 s. To maintain the output torque in the torque phase, the motor torque must be increased gradually to match the clutch transmission torque. In this simulation, the motor torque, which is the input torque to the transmission, was insufficient relative to the clutch pressing force, so the output torque decreased. Conversely, if the motor torque is increased too much, the output torque becomes excessively high. Therefore, an appropriate motor torque must be supplied. Judder occurred after 0.33 s, when the angular velocity ratio began to change, and vibration appeared as the sliding speed between the clutch plates decreased.
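Why the output torque drops when the motor torque is not raised can be seen from a quasi-static torque balance during the torque phase. The sketch below assumes a lossless, rigid drivetrain and a constant motor speed while the odd-numbered clutch is still locked. Under these assumptions the motor torque equals T_odd + T_even and the output torque equals 3.0·T_odd + 1.0·T_even, using the ratios from the text.

```python
R_FIRST, R_SECOND = 3.0, 1.0  # reduction ratios from the text


def required_motor_torque(even_clutch_torque: float, target_out: float = 30.0,
                          r1: float = R_FIRST, r2: float = R_SECOND) -> tuple[float, float]:
    """Motor and odd-clutch torque that hold the output at target (quasi-static sketch)."""
    t_odd = (target_out - r2 * even_clutch_torque) / r1
    return t_odd + even_clutch_torque, t_odd


def output_torque(motor_torque: float, even_clutch_torque: float,
                  r1: float = R_FIRST, r2: float = R_SECOND) -> float:
    """Output torque if the motor torque is held while the even clutch takes over."""
    t_odd = motor_torque - even_clutch_torque
    return r1 * t_odd + r2 * even_clutch_torque
```

As the even-numbered clutch takes over, the required motor torque rises linearly from 10 Nm (`required_motor_torque(0.0)`) to 30 Nm (`required_motor_torque(30.0)`). If the motor torque is instead held at its first-gear value of 10 Nm while the even-numbered clutch transmits 6 Nm, `output_torque(10.0, 6.0)` gives 18 Nm instead of 30 Nm. This is the torque dip that appears without control.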

Figures 12–14 show that control by deep reinforcement learning reduced the gear shift shock and judder to less than 10% of the target output torque, satisfying the criterion adopted in this study. The shock between 0.1 s and 0.33 s is reduced by increasing the motor torque to match the clutch transmission torque, and judder is suppressed by controlling the motor torque where it occurs. In addition, through repeated learning, the controller outputs an appropriate motor torque even though the friction coefficient changes in various patterns as the angular velocity changes during gear shifting. Based on a previous study 12 in which seamless gear shifting was achieved, gear shifting was defined as seamless when the output torque fluctuation during gear shifting and the torque difference before and after gear shifting are 10% or less. Because the output torque fluctuation in this study is less than 10%, the proposed method is considered useful. These results show that clutch judder can be suppressed and seamless gear shifting achieved using deep reinforcement learning. The proposed method achieved the control performance shown in the validation results after approximately 7000 learning iterations.

Conclusions

In this study, deep reinforcement learning was applied to the gear shifting control of a two-speed DCT for EVs with μ-v characteristics, and a controller was developed to suppress clutch judder through iterative learning. The primary findings of this study are as follows:

(1) Deep reinforcement learning was applied to the gear shift control of a two-speed DCT for EVs. As a result, the shock and judder caused by the gear shift could be suppressed to less than 10% of the target output torque by controlling the motor torque.

(2) Deep reinforcement learning successfully provided an appropriate motor torque input even though the friction coefficients are difficult to estimate, and it was effective for various friction coefficient patterns.

(3) An exponential reward function was shown to be effective for suppressing judder, because it weights the reward so that the smaller the error from the target output torque, the more reward is obtained.

(4) In this study, only the motor torque was learned; in future work, the clutch pressing force should also be learned so that gear shifting becomes even more seamless.

Footnotes

Handling Editor: Sharmili Pandian

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.