Abstract

In order to reduce the incidence of traffic accidents caused by the emotional state of drivers, this study proposes an emotion recognition algorithm based on vehicle noise environment. This algorithm can effectively identify the emotional state of drivers and provide support for further improving their emotions. To address challenges in existing research on speech emotion recognition, such as excessive model parameters, poor generalization, and suboptimal performance in noisy environments, this paper proposes a lightweight network model suitable for small datasets. The model utilizes Power Normalized Cepstral Coefficients (PNCC) as input features, and employs parallel feature extraction layers at different scales. These features are then fed into a feature learning module for in-depth extraction, with the final determination of the driver’s emotional state made by the output layer. Experimental results show that the model achieves an accuracy of 96.08% on the EMO-DB speech dataset. Even in simulated in-vehicle noise environments, the model exhibits high accuracy and robustness. Moreover, compared to other lightweight models, it has fewer training parameters and faster processing speed, making it suitable for deployment on edge devices in mobile applications.

Keywords

Introduction

In the real world, human emotions play a crucial role in communication, and individuals can express their emotions through various means, including gestures, facial expressions, bodily postures, and speech. Speech, as the most direct way to express emotions, is an important prerequisite for achieving natural human-computer interaction. 1 The in-car speech emotion recognition algorithm utilizes vocal signals emitted by the driver to assess their emotional state. It then adjusts accordingly to mitigate negative emotions, minimizing their detrimental impact on driving behavior. This proactive approach aims to reduce casualties and property damage in traffic accidents. Therefore, actively engaging in research within the field of speech emotion recognition under car noise environments holds significant developmental potential and practical value.

In the realm of speech emotion recognition algorithms, machine learning models such as Support Vector Machine, Gaussian Mixture Model, Hidden Markov Model, and others represent the foundational frameworks for speech emotion classification. While these classification models have made significant contributions to the field of speech emotion recognition, the accuracy of the aforementioned models still requires improvement. 2

In recent years, deep learning has significantly propelled advancements in speech emotion recognition. There has been an increasing amount of research on deep learning techniques such as Deep Belief Networks,3,4 Recurrent Neural Networks,5,6 Convolutional Neural Networks,7,8 and others in the realm of speech emotion recognition. 9 Compared to traditional machine learning, neural networks, through learning from a vast amount of samples, have the ability to extract deep and intricate features, enabling them to perform complex classifications.

Promod et al. 10 enhance classification accuracy by employing several parallel pathways with large convolutional kernels and phoneme embeddings. The use of large convolutional kernels makes the model less sensitive to slight variations in input, but it may lose some local detailed information, which is not suitable for small datasets. While phoneme embeddings can improve classification accuracy to some extent, they are sensitive to noise and environmental changes, making them susceptible to interference in recognition results. Chen et al. 11 utilize Mel spectrograms, deltas, and delta-deltas as feature inputs for the model. They introduce first-order and second-order differences as dynamic features, proposing a convolutional recursive neural network based on three-dimensional attention to reduce the impact of irrelevant emotions. However, this model has a larger size, making real-time inference challenging on edge devices with limited computational resources. Aftab et al. 12 extract features with different properties from MFCC using three parallel CNN blocks, which are then concatenated and fed into deep convolutional layers for deep feature extraction. This model exhibits noticeable lightweight characteristics. Although MFCC features show good recognition performance for speech signals related to the training dataset, their correlation weakens if the input signals are affected by other factors, leading to a significant decrease in recognition accuracy. The robustness of the model still needs improvement.

The above approaches are based on clean speech data as experimental subjects, and the experimental results in an ideal environment may not necessarily meet real-world conditions. Due to reverberation or background noise, the speech signals received by devices may be unclear or contaminated. It is challenging to adapt to the impact of noise in a vehicular environment, leading to suboptimal recognition performance. Deploying the model in an in-car system requires addressing the low computational power characteristics of in-car edge devices and ensuring model lightweightness. To address these issues, the following is a list of the main contributions of this paper:

Taking into account the impact of noise in vehicular environments, the model utilizes Power-Normalized Cepstral Coefficients (PNCC) as the primary feature for input. This choice aims to mitigate the influence of noise on the model’s recognition results, ensuring robust recognition performance in less-than-ideal acoustic environments.

Considering the computational constraints of in-car edge devices, the model has been optimized for lightweight deployment to ensure real-time inference without compromising recognition accuracy.

The introduction of the Efficient Channel Attention (ECA) mechanism is aimed at optimizing the model’s recognition performance. This module, by avoiding dimensionality reduction, effectively captures inter-channel interactions, reducing the impact of irrelevant emotions. While ensuring model lightweightness, the ECA mechanism further enhances the recognition effectiveness of the model.

Dataset

EMO-DB

Berlin Emotional Database (EMO-DB) was recorded in 2005 with German as the language. It features 10 professional actors (five male, five female) delivering statements in different emotions, including anger, boredom, disgust, fear, happiness, neutrality, and sadness. The database comprises a total of 535 utterances collected from everyday conversations. This study focuses on five representative emotions (anger, fear, happiness, neutrality, and sadness) for analysis.

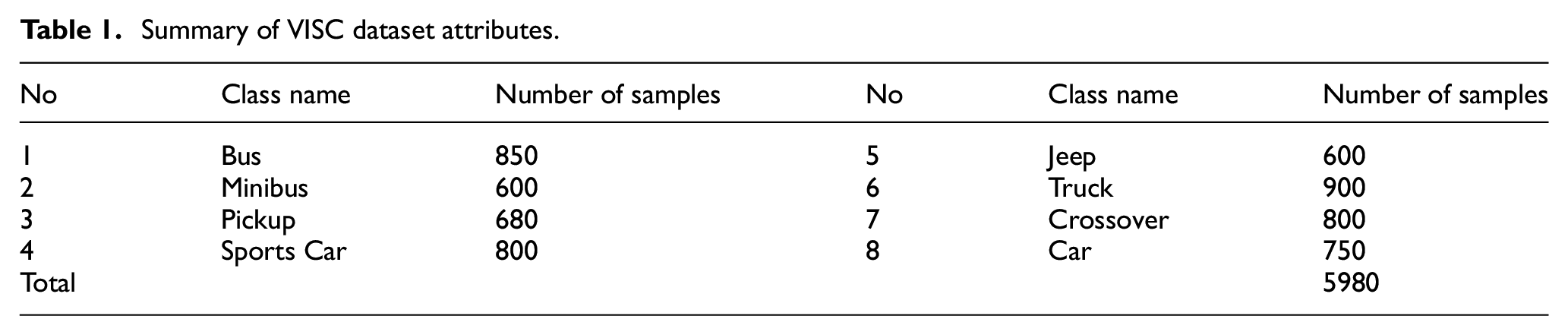

VISC

Vehicle Interior Sound Dataset (VISC) 13 is collected from various perspectives within the cabins of different vehicle types, including buses, jeeps, trucks, sedans, and more, totaling eight vehicle categories. Each vehicle type consists of 600–900 audio segments, with each segment lasting 3–5 s. In this study, the dataset is utilized as a source of vehicular noise. It is important to note that these sounds exclusively represent the interior noise of the vehicles and do not include any driver or human voice. The summarized attributes of the VISC dataset are provided in Table 1.

Summary of VISC dataset attributes.

Feature

In the task of speech emotion recognition, most researchers employ clean speech datasets to train their models, extracting features such as spectrograms, power spectra, FBank features, etc. Currently, MFCC features are widely used and considered standard features for many speech emotion recognition systems. While MFCC performs well in clean speech and requires fewer feature dimensions, it lacks robustness in noisy environments, making it challenging to achieve satisfactory recognition results in practical in-car applications. To address this issue, this paper adopts Power-Normalized Cepstral Coefficients (PNCC) as the input features for the model, enhancing the accuracy of speech emotion recognition tasks in the context of in-car noise environments.

Power-normalized cepstral coefficients

Power-Normalized Cepstral Coefficients (PNCC) is an audio feature proposed by Kim and Stern 14 as a replacement for MFCC, aiming to enhance speech recognition capabilities in noisy and reverberant environments. The computation process of PNCC closely resembles that of MFCC, with key innovations focused on incorporating short-term and mid-term processing. Notably, changes include the transformation of Mel cepstral coefficient filters to Gammatone, the adoption of an extended time window for smoothing each frame of speech data, and the implementation of asymmetric noise suppression. Asymmetric noise suppression involves calculating power over a period to suppress excitation signals in the background and eliminate low-frequency noise. PNCC utilizes a nonlinear power function in place of the nonlinear logarithmic function found in MFCC, aligning more closely with the auditory neural characteristics of the human ear. The feature extraction process for PNCC is illustrated in Figure 1.

PNCC extraction process.

Dynamic feature extraction

Speech signals are non-stationary signals, and commonly extracted features such as MFCC and PNCC only capture the static characteristics of the speech signal. 15 By extracting first and second-order differential features and concatenating them with PNCC features, a more comprehensive set of emotional features for the speech signal is obtained. The first-order differential coefficients of PNCC are expressed as shown in equation (1).

Where D(n) is the first-order differential of PNCC coefficients, C(n + i) represents the set of PNCC parameters for a frame, and k is set to 2. Substituting the result D(n) into equation (2) yields the second-order differential coefficients of PNCC.

Where D2(n) represents the second-order differential of PNCC coefficients.

Feature processing

Firstly, the speech signal passes through a bandpass filter with a cutoff frequency of 10 Hz and 4 kHz. The speech data is then framed using a 64 ms Hamming window with a 16 ms frame shift. Due to the fact that only the initial dimensions of the first and second-order differential features are prominent, PNCC features are extracted to yield 60-dimensional feature parameters. These parameters consist of 47 dimensions of PNCC features, 8 dimensions of first-order differential features, and 5 dimensions of second-order differential features.

Model and method

The model is divided into three parts, consisting of a parallel feature combination module, a local feature learning module, and an output layer. In order to maintain the lightweight characteristics of the model and further improve its performance, this paper inserts the ECA module into the local feature learning module.

Parallel feature combination module

As illustrated in Figure 2, the parallel feature combination module consists of four CNN paths. The first and second CNN paths employ 3 × 3 convolutional kernels for feature extraction. After Batch Normalization (BN) and ReLU activation, the first path uses Max-Pooling to capture essential information in the features, while the second path uses Avg-Pooling to extract spectral-time correlations. The third and fourth CNN paths use 9 × 1 and 1 × 11 convolutional kernels, respectively. After BN and ReLU processing, they employ Avg-Pooling to extract spectral and temporal features. The outputs from the four CNN paths are then combined and fed into the local feature learning module for further extraction of deeper emotional features. This structure is designed to balance spectral and temporal information, extracting more crucial information effectively.

Parallel feature combination module.

Local feature learning module

The local feature learning module consists of several Local Feature Learning Blocks (LFLB), 16 as depicted in Figure 3. These LFLBs possess distinct configuration parameters and are applied to the shallow features extracted by the parallel feature combination module, aiming to capture deeper characteristics.

Local feature learning module.

Each LFLB is structured with convolutional layers, BN, ReLU activation, and pooling. The convolutional layers in LFLB1-LFLB4 have a kernel size of 3 × 3, employing Avg-Pooling, while LFLB5 uses a 1 × 1 convolutional kernel and applies Global Pooling. This design allows the model to train on datasets of varying lengths without altering the architecture. The BN layer is employed to enhance the network’s performance and stability. The deep emotional features extracted by the local feature learning module are then passed to the output layer for emotion classification.

Output layer

The output layer consists of a fully connected layer, as illustrated in Figure 4. The emotional features from the local feature learning module undergo a Dropout layer to mitigate the risk of overfitting and enhance the model’s generalization capability. Subsequently, the features pass through a fully connected layer with a softmax activation function, ultimately achieving emotion classification and prediction.

Output layer.

Lightweight attention mechanism

Efficient Channel Attention (ECA) 17 is an attention mechanism designed to effectively capture inter-channel interaction information. It achieves this by conducting global average pooling across all channels without reducing dimensions. Afterward, it considers each channel and its k neighbors to capture local inter-channel information interaction, ensuring both efficiency and effectiveness. ECA employs one-dimensional convolution, effectively avoiding side effects brought by dimension reduction in fully connected layers. With only a minimal increase in parameters, ECA significantly enhances model performance, making it an extremely lightweight channel attention mechanism. By inserting the ECA module between LFLB4 and LFLB5 in the local feature learning module helps avoid the learning of redundant features without significantly increasing memory overhead and network depth. This ensures the model’s lightweight design and more effectively balances the learning of important features, thereby enhancing the recognition performance without compromising the model’s efficiency. The schematic diagram of the ECA structure is depicted in Figure 5.

ECA structural diagram.

Experiments

Metrics

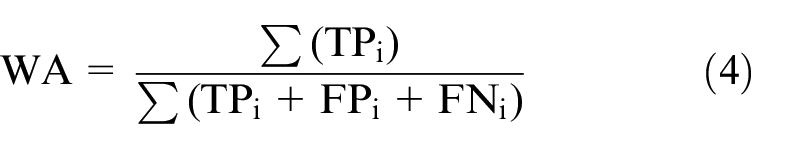

Due to the imbalanced nature of the EMO-DB dataset, this paper primarily employs Unweighted Average Recall (UAR) and Weighted Accuracy (WA) to evaluate the model’s performance. The training process adopts a 10-fold cross-validation. The formulas for calculating UAR and WA are as follows:

Where Recall i is the recall for the i-th class, N is the total number of classes. TP i is the true positive count for the i-th class, FP i is the false positive count for the i-th class, and FN i is the false negative count for the i-th class. The ∑ denotes summation over all classes.

Experimental setup

This paper implemented the proposed model using the TensorFlow 2.4 deep learning framework. The training hyperparameters were set as follows: the loss function utilized the cross-entropy loss, Adam optimizer for optimization with an initial learning rate of 1 × 10−4, which decayed at a e−0.18, and a batch size of 32. The training was conducted for 400 epochs. To prevent overfitting, a Dropout layer with a rate of 0.3 was applied, and L2 regularization was employed in all LFLBs.

Results and discussions

Experiment on a clean dataset

The sampling rate of the speech signals in the experimental dataset is 16 kHz, all single-channel, with varying time lengths ranging from 1.23 to 8.98 s. The length of speech signals can impact the accuracy of emotion prediction, making it crucial to select an appropriate duration. Given that, in real-world scenarios, the duration of a driver’s single speech signal varies, this study chooses speech signals with durations between 1 and 10 s as inputs, comparing the emotion prediction accuracy for different speech lengths. For speech lengths greater than the predetermined length, the speech is truncated to match the predetermined length. For speech lengths shorter than the predetermined length, zero-padding is applied to the beginning and end of the speech signal. We observe that as the input speech length increases, the accuracy quickly improves, reaching a peak at a certain point. Subsequently, if the length continues to increase, the accuracy gradually declines. With the increase in the length of the speech, the accurate emotional information contained in the speech signal also tends to rise. Typically, when confronted with richer emotional information in longer speech segments, models tend to produce more accurate results. However, when the duration of the speech exceeds a certain range, the substantial increase in information may lead to several issues: Firstly, longer speech durations imply a greater potential for emotional variations over a broader range, yet the model’s single output may not adequately reflect the entire emotional content of the speech. Secondly, the extended speech duration may result in a significant increase in the number of features input to the model, potentially giving rise to overfitting. Additionally, as the speech duration increases, the computational cost also rises. Constrained by the limited computational power of edge devices in mobile settings, the algorithm may face challenges in running smoothly or meeting real-time requirements.

Figure 6 illustrates the variation in model accuracy as the length of the speech signal ranges from 1 to 10 s. From the graph, it is evident that when the length is set to 5 and 6 s, their WA remains consistent. Considering the increase in computational cost with the extension of speech signal input time, we choose 5 s as the input time for the speech signal.

Experimental results of different speech lengths.

Figure 7 provides the confusion matrix from the experiments conducted on the EMO-DB dataset using the model. Through the analysis of the confusion matrix, we observe that the model performs relatively accurately in recognizing angry, sad, and neutral emotions, while the recognition accuracy for happy emotions is lower. Angry, sad, and neutral emotions exhibit distinct and prominent PNCC (Power Normalized Cepstral Coefficients) features in the speech signal, making it easier for the model to capture and differentiate these emotions. In contrast, the features of happy emotions are more intricate and subtle, posing a greater challenge to the model.

Confusion matrix on EMO-DB dataset.

To validate the effectiveness of the attention layer and compare the impact of the number of LFLBs in the local feature learning module, this study conducted ablation experiments. The experimental results demonstrate that increasing the number of LFLBs improves the accuracy of emotion recognition. Additionally, with the introduction of the ECA mechanism, the recognition accuracy is further enhanced. The results are shown in Table 2.

Ablation experiments.

Experiment on noise-blended dataset

This section explores the impact of in-vehicle noise on speech emotion recognition. In recent years, the use of MFCC as a feature input has been prevalent in speech emotion recognition research. To validate the robustness of the proposed method, both MFCC and PNCC were used as features to train the model. The accuracy was compared for testing data on both clean datasets and mixed VISC datasets. The introduction of the IEMOCAP dataset further demonstrates the model’s generalization capability. The experimental results are presented in Figure 8.

Accuracy of Clean MFCC and PNCC models on different datasets. (a) The accuracy on the EMO-DB dataset and (b) the accuracy on the IEMOCAP dataset.

In two different datasets, the MFCC and PNCC models perform similarly on the clean dataset; however, in the mixed dataset, the accuracy of the PNCC model is significantly higher than that of the MFCC model, with an improvement of 29.67% in the EMO-DB dataset and 6.01% in the IEMOCAP dataset. This indicates that the PNCC model, trained using a clean dataset, demonstrates better robustness in handling car noise, ensuring a maintained accuracy level to some extent in emotion recognition.

Some studies incorporate noise by mixing it with the dataset during model training, resulting in models that are somewhat resilient to noise. To investigate the accuracy of models trained on a blended dataset for emotion recognition, the VISC dataset was merged with the clean dataset. MFCC and PNCC models were trained using the mixed dataset, and their accuracy was compared. The experimental results are illustrated in Figure 9.

The accuracy of mixed noise models on different datasets. (a) The accuracy on the EMO-DB dataset and (b) the accuracy on the IEMOCAP dataset.

In two different datasets, for the clean dataset, the recognition accuracy of the PNCC mixed model is significantly higher than the MFCC model, with an increase of 33.6% and 13.38% in the EMO-DB and IEMOCAP datasets, respectively. However, for the mixed dataset, the accuracy of the PNCC model is slightly lower than the MFCC model, with a decrease of 2.98% and 10.15% in the EMO-DB and IEMOCAP datasets, respectively.

In the four experimental conditions, under the clean model-clean dataset condition, the accuracy of the PNCC model is roughly equivalent to the MFCC model, indicating that the performance of the two models is similar under ideal conditions. Under the clean model-mixed dataset and mixed model-clean dataset conditions, the accuracy of the PNCC model is much higher than the MFCC model.

However, under the mixed model-mixed dataset condition, the accuracy of the PNCC model is slightly lower than the MFCC model, which is related to the characteristics of the MFCC features. The MFCC mixed model is trained using a dataset with mixed noise, and during testing on the mixed dataset, it exhibits good performance because of the strong correlation among the data in the noise dataset. However, the MFCC mixed model performs poorly on the clean dataset, showing a significant performance gap compared to the mixed dataset. In contrast, the performance gap of the PNCC clean model and mixed model on both clean and mixed datasets is smaller than that of the MFCC model. The overall accuracy of the PNCC model is higher than the MFCC model, demonstrating that the PNCC model exhibits better robustness.

The MFCC feature model can effectively capture speech signals and maintain good performance even in low dimensions. However, the robustness of MFCC is insufficient in noisy environments, leading to low recognition accuracy. In practical application scenarios, its effectiveness often falls short of expectations. On the other hand, without sacrificing recognition performance and computational complexity, the PNCC feature model significantly enhances the robustness of the speech emotion recognition system. This improvement allows the system to adapt to the influence of various noises in real-world scenarios.

Comparison with other methods

While achieving excellent emotion recognition accuracy and robustness, the proposed model in this paper also exhibits the characteristics of lightweight design. This makes it easy to deploy on edge devices, reducing model validation time and facilitating integration with in-vehicle systems designed to guide drivers’ emotions, ultimately enhancing driving safety. The parameter and performance comparison is illustrated in Table 3.

Comparison of relevant studies on the EMO-DB dataset. .

Conclusion

This paper introduces an efficient model for speech emotion recognition, which demonstrates good performance even in the presence of in-car noise, showcasing notable robustness. The model extracts features such as PNCC from the speech signal, utilizing a parallel feature combination module to capture low-level features of the speech signal. These features are then fed into a local feature learning module for extracting deep emotional features, and the output layer classifies the emotion category. In comparison to existing models in recent years, the proposed model in this paper maintains both generalization capability and accuracy while having fewer parameters than other lightweight models. This further enhances the real-time application of the model and makes it more suitable for deployment on edge devices in mobile applications. However, this study also has some limitations. Firstly, the emotion recognition discussed in this research adopts a discrete emotion model rather than a continuous one, which may not fully encompass the various emotions that could exist in a single speech signal, thereby impacting the recognition effectiveness of the model to some extent. Future studies could explore the use of different emotion models to enhance the accuracy of emotion recognition. Secondly, there is still room for further improvement in the accuracy of recognizing the driver’s emotional category in the presence of car noise. Additionally, future research could consider the differences in emotional expression across various languages and cultural backgrounds to better adapt the speech emotion recognition model to diverse language recognition tasks.

Footnotes

Handling Editor: Sharmili Pandian

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.