Abstract

Policymakers and regulators often commission researchers to provide evidence to help understand potential policy changes and their implications. Rapid evidence appraisal is critical to informing policy making in situations where there are urgent or unexpected changes, i.e. those that are not the result of long-term planning but instead respond to events or crises. In these situations, answers are often desired within timeframes typically seen as unworkable by academics. We report a novel rapid realist synthesis method, originally devised for delivering a realist review in under one month for the Care Quality Commission (a health and adult social care regulator in England). Key considerations were the need to maintain rigour and transparency whilst working at speed. The method comprises a nine-step process: (1) reverse engineering research questions from policymaker priorities; (2) setting up an expert panel; (3) formulating a data extraction template; (4) building a systematic search strategy and running the searches; (5) sense-checking the strategy and focus areas with the funder and expert panel; (6) performing screening, relevance and rigour assessment, and data extraction in parallel; (7) data synthesis and theory generation; (8) sense-checking initial findings with funder(s) and expert panel; and (9) report writing and dissemination. This method achieves its rapidity through parallelised review processes and adaptations to traditional methods, such as highly purposive but still systematic literature searching and theory-informed data extraction. It also relies on an engaged team with methodological and subject matter expertise who are ready to use collaborative technology to deliver on time. 
This method should be further tested for answering different research questions in different settings, for hypothesis generation, for diagnosing issues in clinical trials, and for use in rapid evaluation centres or by independent consultants.

Background

Policymakers often turn to academics for evidence-based answers to policy questions in rapid timescales. Rapid reviews are commonly used to inform policymaking decisions, often in tight timescales of 12-18 months (Saul et al., 2013), but current review methodology is often unworkable when evidence is required to inform urgent decision-making. As others have highlighted, policymakers make decisions based on available evidence “whether imperfect or not” (Duncan & Harrop, 2006; Ellins et al., 2025). Timescales for ‘answers’ desired by policymakers vary by context and area, but it is becoming increasingly common in healthcare that policymakers and regulatory bodies are commissioning urgent reports for delivery in timescales of 1-2 months or less. In such cases, a different approach to evidence synthesis needs to be adopted, particularly where using traditional methods would be unfeasible. Delivering rapid reviews in such timescales is challenging and necessitates compromise. Academics may find that there is a tension between the desire of the funder to get answers as quickly as possible (with less focus on method and more on the utility of the results), and the desire of the academics to maintain methodological rigour and ensure they provide answers rooted in evidence (an overriding concern about quality or approach as well as results) (Ellins et al., 2025). This requires careful communication with the funder to ensure they have realistic expectations for what can be delivered and what the evidence can and cannot reliably inform (Ellins et al., 2025).

There is a relationship between the ways in which research findings are used, how those using research engage with research, and how research is initially produced. These relationships between the use and the production of research can be seen as a system or an ecosystem (Gough et al., 2019). A key dynamic in the ecosystem is the stance taken by users of evidence - such as those in decision-making contexts - towards the evidence that they use, evidence which is usually provided by those in academia. While traditionally, ‘evidence producers’ and ‘evidence users’ have been seen as distinct groups, the increasing demand for timely and actionable research has led to greater engagement and influence from policymakers in shaping the research agenda. The push for rapid reviews as a means of providing quick yet reliable insights has grown in response to these pressures. However, the constraints of accelerated timelines, coupled with artificial intelligence assisted review processes, present new challenges for maintaining methodological rigour in rapid evidence synthesis.

This challenge is further complicated by the dynamic nature of evidence ecosystems, which consist of interconnected processes of evidence generation, synthesis, dissemination, and use within policy and practice (Oliver & Boaz, 2019). In an effective evidence ecosystem, evidence reviews should seamlessly integrate into decision-making processes, ensuring that timely and rigorous insights inform urgent policy needs (Ellins et al., 2025). This form of knowledge synthesis should not replace or displace deeper, more extensive research into questions. Yet, the demand for accelerated timelines places strain on this ecosystem, often leading to trade-offs in comprehensiveness, transparency, and methodological robustness. Others have noted that “dangerous is a rapid review that maintains a large ambition but compromises rigour so that any evidence claims are not really justifiable” (Gough et al., 2019, p. 13). However, this paper asserts that both rapidity and rigour have their place, providing both the questions (Q) being asked and the outcomes (O) are of utility and mapped to the choice of methods (CoM) being used. Similarly, both must be scalable (S - able to obtain the evidence needed) and feasible (F - manageable within the timescales required). This differs from a traditional approach where your questions dictate your method and result in your outcomes. We have expressed this as:

Whilst others have developed rapid realist review (also called realist synthesis) methodologies (Saul et al., 2013), the speed of these reviews is typically in the six months to one-year range, although in some cases they have taken as little as two months (Jones et al., 2024; Saul et al., 2013). Since these methods have developed, the standards for rigour and transparency have increased within the realist community. The main example is the publication of the RAMESES guidelines which are now widely used across realist syntheses (Wong et al., 2013). Compromises in rapid realist reviews have included not conducting systematic searches, not performing independent screening of any kind (Saul et al., 2013), sacrificing transparency (i.e., ability to trace theory back to data) (Bergeron & Gaboury, 2020; Dalkin et al., 2021), and requiring all members of study teams to be familiar with realist methodologies (Saul et al., 2013). Despite the limitations and drawbacks of rapid approaches, there have also been advances in improving and honing methods as evidence ecosystems continue to evolve, and new approaches and technological advancements offer opportunities to address these tensions. The development of realist synthesis quality standards, such as those introduced with the RAMESES projects (Wong et al., 2013), as well as tools like NVivo to aid data synthesis (Dalkin et al., 2021) and Covidence and Rayyan to support literature screening (Kellermeyer et al., 2018), demonstrate how technology and methodological innovation can further enhance both speed and rigour.

Given both tightening deadlines and increasing standards of rigour, a new method for accelerated rapid realist-informed review is required - one that uses novel technologies, adheres to RAMESES guidelines, and is responsive to the demands of policymakers while maintaining the integrity of the evidence ecosystem. To address this, as part of a project we delivered for Care Quality Commission (a health and adult social care regulator) in England, we developed this novel Accelerated Parallelised Realist-Informed Literature review (APRIL) methodology. The regulator required answers to support a policy change, to be delivered in less than a one-month period. This article outlines the methodological innovations we had to make to the rapid realist review process including an “assembly line” type approach that drew on parallelisation. We sought to maintain rigour and transparency by (1) drawing on recent technological innovations to enable greater collaboration, (2) parallelising study processes, (3) consulting closely with stakeholders and (4) combining various aspects of the study.

Describing the Method

Why Choose Realist Review?

Realist Concepts and Their Definitions. Reproduced With Permission From Maben et al. (2023)

Realist reviews are also inherently flexible and iterative. They allow researchers to refine their theories and focus as new information emerges, which is particularly advantageous within tight deadlines (Pawson et al., 2004; Wong et al., 2013). This adaptability ensures that the review remains relevant and responsive to the evolving needs of policymakers, without the rigidity often associated with traditional systematic reviews. Another critical aspect of these reviews is the engagement with diverse sources of evidence, including qualitative studies, grey literature, and stakeholder perspectives. This inclusivity enriches the evidence base and ensures that the review captures a wide range of insights. Adopting a realist methodology also facilitates a more efficient use of resources. By prioritising the most relevant literature and focusing on explanatory insights rather than exhaustive data aggregation, the review process can become more streamlined. In our case study of the APRIL method, the need to produce relevant and responsive findings for policymakers, coupled with the commitment to stakeholder engagement to nuance these findings, meant that a rapid realist approach was appropriate for the research question (and not only because of the timescales) – in particular the need to understand how context influences outcomes in terms of patient and public safety.

Parallelising Study Processes

A core change in the APRIL method over other methods was the review process itself. Review processes are often performed sequentially, e.g. authors will discuss the research questions, then move onto designing a search strategy, then running the search strategy, etc. Instead, to maximise throughput, we needed to take a parallel approach to deliver on time. We wanted to ensure that all members of the team were working on something simultaneously, rather than waiting for others to finish their tasks - which leads to time inefficiencies. We would argue that while sequential delivery of review processes is traditionally ‘how it is done’, sequential delivery does not necessarily confer any methodological advantage or benefit to rigour.

The aspects of the review we were able to parallelise are shown in Figure 1. For example, we were able to work on the following aspects concurrently:
• Data extraction template development and search strategy development.
• Title and abstract screening and full-text screening. Multiple authors worked on screening, so as titles and abstracts were confirmed as included, we moved those entries to full-text screening, even while other titles and abstracts were still awaiting a decision.
• Data extraction, synthesis, and theory generation. As soon as full texts were confirmed as included, we were able to move to extracting data, synthesising findings, and generating theories. As realist review processes are often iterative, this was not too significant a deviation from a traditional realist synthesis approach.
• Writing the report in sections, while theories and synthesis were being finalised for other sections.

Example study flow diagram for this mental health focused review created by the study authors (Fenton et al., Unpublished)

Parallelisation is scalable, but it can only be of benefit when multiple people are available. As such, projects relying on single researchers to do much of the work may not realistically benefit from this process. Also critical to the success of this process (in terms of timeliness and validity of insights) was parallel and sequential consultation with the expert stakeholders to refine and iterate ideas with and alongside the research team (see Figure 1).
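The time saving from parallelisation can be illustrated with a minimal sketch. It assumes, purely for illustration, one reviewer per stage, a fixed number of minutes per record at each stage, and that every record passes through every stage; the function names and all figures are hypothetical and are not drawn from the project data.

```python
from typing import Sequence


def sequential_finish(n_records: int, stage_minutes: Sequence[float]) -> float:
    """Total time when each stage starts only after the previous stage
    has finished ALL records (one worker active at a time)."""
    return n_records * sum(stage_minutes)


def pipelined_finish(n_records: int, stage_minutes: Sequence[float]) -> float:
    """Total time when a record moves to the next stage as soon as it is
    confirmed at the current one (one dedicated worker per stage)."""
    free_at = [0.0] * len(stage_minutes)  # when each stage's worker is next free
    done = 0.0
    for _ in range(n_records):
        available = 0.0  # when this record is ready for the next stage
        for stage, minutes in enumerate(stage_minutes):
            start = max(available, free_at[stage])
            available = start + minutes
            free_at[stage] = available
        done = available
    return done


if __name__ == "__main__":
    # Hypothetical minutes per record: title/abstract screening,
    # full-text screening, data extraction.
    stages = [2, 5, 10]
    print(sequential_finish(3, stages))  # 51
    print(pipelined_finish(3, stages))   # 37.0
```

In this toy model the slowest stage sets the pace, so the gain depends on having enough people to staff each stage at once, which is consistent with the point above that single-researcher projects are unlikely to benefit.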

Assembling a Team

There are practical considerations essential to delivering a review in such a short timescale and having the 'right' team is a core component of this. Considerations include having a team of sufficient size with (1) multiple members with the skills needed to deliver a review (at least some familiarity with more than one review process, e.g. screening and data extraction), (2) at least one member with subject-matter expertise, (3) sufficient time or willingness to work extra to deliver the review, (4) all members with outcome-oriented mindsets and (5) ability to leverage technologies e.g. Office 365 to enable real-time collaboration. Having the ‘right team’ has been highlighted as essential in other rapid evaluation methods, and APRIL is no exception (Ellins et al., 2025). Our team comprised five people: two information specialists (one dedicated to searching academic databases and another to grey literature), a student research associate with experience of systematic review processes such as screening and data extraction, a research fellow with significant realist review experience, and our project lead with significant realist review experience and subject matter expertise. We recommend approaching this methodology with an ‘assembly line’ mindset.

APRIL Steps

The APRIL methodology uses a nine-step parallel process. The steps can be outlined as follows:
1. Reverse engineering research questions from policymaker priorities.
2. Setting up an expert panel.
3. Formulating a data extraction template.
4. Building a systematic search strategy and running the searches.
5. Sense-checking the strategy and focus areas with the funder and expert panel.
6. Performing screening, relevance and rigour assessment, and data extraction in parallel.
7. Data synthesis and theory generation.
8. Sense-checking findings with funder(s) and expert panel.
9. Report writing and dissemination.

Step 1 & 2: Reverse Engineering Research Questions and Setting up an Expert Panel

The review was commissioned by the Care Quality Commission in November 2024 in response to a public safety incident in Nottingham in 2023 involving a mental health patient who did not receive adequate care.

To address this issue adequately, we set up an expert stakeholder panel as soon as we were notified that the tender had been successful. We were not able to convene a full PPIE/stakeholder or advisory group; instead, we drew on a few expert panel members already known to the team. This was necessary due to the timelines. Our expert panel comprised academics and members with lived experience of mental health issues; however, rapidly setting up a group may be difficult for others seeking to replicate our strategy, especially if they are not sufficiently networked or close to the subject of review, and this limitation needs to be considered when adopting this method.

Traditionally, a review would start in a ‘bottom-up’ manner with the development of the research question(s), potentially using frameworks such as PICO (Patient, Intervention, Comparator, Outcome). In our case, we needed to deliver a review which worked backwards from the serious incident and case the review was intended to respond to. The initial tender contained four questions, but in the inception meeting with the funder it became clear that there were five diffuse - but related - main areas of focus that were of interest. Whilst we were provided the purpose of the review by the funder and an initial set of broad research questions to answer, these questions were not formulated in a manner appropriate to be answered via realist (or other) methods, and each one alone could have lent itself to an extensive review process. That being said, the questions originated in a lived reality. Thus, holding fast to a reified review process which did not engage with the policy or practice reality of the questions of interest would have been both unethical (as it would have been unable to provide the intelligence needed from evidence synthesis) and unfeasible (as the areas did not lend themselves to existing methods). As such, we had to formulate new research-informed questions ourselves by working backwards from the funder priorities in relation to the outcomes of interest (see Table 2). The areas of focus for the review that emerged in the inception meeting held to clarify the tender questions were:
1. Patient & Public Safety
2. Working Together
3. Good/Poor Practice
4. Inequalities
5. Medicine Optimisation

Research Questions From Tender vs. Those Reverse-Engineered

These thematic areas of focus were discussed with the funder at the inception meeting to ensure proper scope of the project. Once we understood the areas of research focus required, it then made practical sense to identify which data we required to provide insight into these areas. This led to the development of the following research-informed questions (Table 2).

Step 3 and 4: Formulating a Data Extraction Template and Building a Systematic Search Strategy

Once we had agreed the research questions, we moved to formulating a data extraction template oriented around several themes. Unlike traditional realist reviews, construction of the data extraction template was conducted in parallel with developing and running the searches. Rather than performing data extraction in multiple stages, we created a data extraction template that also assisted us with the first step of analysis. This involved categorising data into larger themes that were more specifically related to the broader literature and evidence than the five major areas of focus provided by the funder. This was done to enable better tracking of data through to theory generation. The new themes we used were: patient safety, public safety, access to services, timeliness of access, responses to incidents, inequalities, system capacity, long-term care planning, integration/communication/transitions, medicine optimisation, regulatory considerations, policy and practice recommendations, knowledge gaps (from literature), and other aspects considered as ‘good’ or ‘poor’ practice.
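As an illustration of how such a theme-oriented template might be set up, the following is a minimal sketch rather than the template used in the project. The theme labels are taken from the list above; the metadata columns (`source_id`, `citation`, `reviewer`) and the function names are assumptions added for the example.

```python
import csv
import io

# Theme columns, as listed in the text above.
THEMES = [
    "patient safety",
    "public safety",
    "access to services",
    "timeliness of access",
    "responses to incidents",
    "inequalities",
    "system capacity",
    "long-term care planning",
    "integration/communication/transitions",
    "medicine optimisation",
    "regulatory considerations",
    "policy and practice recommendations",
    "knowledge gaps (from literature)",
    "good or poor practice",
]

# Hypothetical bookkeeping columns; not specified in the source.
META_FIELDS = ["source_id", "citation", "reviewer"]


def blank_row(source_id: str, citation: str, reviewer: str) -> dict:
    """One row per included source, with an empty cell for each theme."""
    row = {"source_id": source_id, "citation": citation, "reviewer": reviewer}
    row.update({theme: "" for theme in THEMES})
    return row


def template_csv() -> str:
    """Header-only CSV that can be opened in a shared spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=META_FIELDS + THEMES)
    writer.writeheader()
    return buffer.getvalue()


if __name__ == "__main__":
    print(template_csv())
```

Extracting each passage directly into a theme column is what lets extraction double as the first step of analysis, since every extracted passage can later be traced from a theory back to its source row.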

At the same time, we built a systematic search strategy drawing on these themes. For this method, we recommend a systematic search strategy that comprises purposive searches of the academic literature, and at least one purposive search of the grey literature – in line with contemporary expectations for other non-rapid realist reviews. Assistance from information specialists or experts in systematic searching can significantly help to ensure timely delivery, to ensure accuracy and completeness of searches, and to narrow down the results while maintaining rigour and reproducibility. We also needed to ensure reasonable limits were placed on the searches to support the feasibility of the project.
I. We limited the search results to the last 10 years (i.e. 2014 onwards). This also ensured we gathered evidence that was most relevant to the recent policy context.
II. Secondly, we conducted five smaller literature searches that were more specific in scope and oriented around the themes we constructed for the data extraction template, rather than one large search. This allowed precision around the literature we identified, while being narrower in scope than a true exhaustive search would have been. These were:
III. Thirdly, we focused on the most relevant databases (rather than adopting an exhaustive approach). Searches of the academic literature were therefore limited to Medline and PsycINFO, and a grey literature search was also undertaken on Google and the Health Management Information Consortium (HMIC) database. Searches were undertaken using combinations of both exploded and focused subject headings, multi-purpose keywords, and title/abstract searches.

Step 5: Sense-Checking the Strategy and Focus with the Funder and Expert Panel

The extraction template, the revised research questions, the search strings and the approach being adopted were all discussed with both the funder and the expert panel. This enabled us to refine and take advice on areas of key interest and emphasis. Their feedback was used to refine the template and approach.

Step 6: Screening, Assessing Relevance and Rigour and Parallel Data Extraction

Screening

We relied heavily on piloting as a strategy for independent screening, searching, and data extraction, to ensure congruence between reviewers. Although piloting is less rigorous than full independent double screening, it was not possible to independently screen or extract all the studies within this timeframe due to the parallelisation of other study processes. For APRIL, we propose initially independently screening 10% of titles and abstracts before coming together for discussion. After screening the first 10%, we found that there were significant differences in interpretation between reviewers. We therefore discussed the criteria further to ensure congruence, before screening a further 10% independently, after which we found that alignment was much closer (<10% discrepancies). Once the screening criteria were being applied uniformly, the remaining 80% of titles and abstracts were divided amongst team members. All full texts were screened separately.
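The piloting procedure above can be sketched in code. This is an illustrative sketch only: the function names are hypothetical, the 10% pilot fraction follows the text, and treating 10% discrepancies as a cut-off for moving on is an assumption based on the figure we observed rather than a formal threshold.

```python
import random
from typing import Dict, List, Sequence

PILOT_FRACTION = 0.10      # share of records screened independently per round
MAX_DISCREPANCY = 0.10     # assumed cut-off, based on the "<10%" reported above


def pilot_sample(record_ids: Sequence[str], seed: int = 0) -> List[str]:
    """Draw the records that all reviewers screen independently."""
    rng = random.Random(seed)
    k = max(1, round(len(record_ids) * PILOT_FRACTION))
    return rng.sample(list(record_ids), k)


def discrepancy_rate(a: Dict[str, bool], b: Dict[str, bool]) -> float:
    """Proportion of shared records on which two reviewers disagree."""
    if not a or a.keys() != b.keys():
        raise ValueError("Both reviewers must screen the same non-empty sample")
    disagreements = sum(a[rid] != b[rid] for rid in a)
    return disagreements / len(a)


def split_remaining(record_ids: Sequence[str],
                    reviewers: Sequence[str]) -> Dict[str, List[str]]:
    """Divide the remaining records between reviewers, round-robin."""
    n = len(reviewers)
    return {r: list(record_ids[i::n]) for i, r in enumerate(reviewers)}


if __name__ == "__main__":
    first = {"r1": True, "r2": False, "r3": True, "r4": True}
    second = {"r1": True, "r2": True, "r3": True, "r4": True}
    rate = discrepancy_rate(first, second)
    # A rate above the cut-off would prompt discussion and another pilot round.
    print(rate, rate < MAX_DISCREPANCY)
```

Records flagged as ‘challenging’ during the divided screening would still be discussed together in real time, which this sketch does not model.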

We worked closely using Microsoft Teams and any ‘challenging’ titles and abstracts, or full texts, were discussed together on an ‘on-demand’ basis to ensure congruence, rather than in a post-hoc manner. This allowed any conflicts and difficult-to-screen papers to be discussed in real time, reducing the likelihood of type I and II errors in the screening process. Despite these restrictions in scope, we still ended with 81 included sources, a number not uncommon in full-scale realist reviews. This suggests that we could have ended up with significantly more papers had we not applied these restrictions, which would not have been workable given our time limitations.

Since this project was completed, there has also been the publication of the Reverse Chronology Quota Record Screening (RCQRS) approach for realist syntheses, which could also be used as a part of APRIL to identify the most rich and contemporaneously relevant literature in time-pressured situations (Jagosh et al., 2026).

Relevance and Richness

Strict relevance (presence of “evidence relevant to theory development, refinement or testing”) and richness (i.e. the degree of theoretical and conceptual development that explains how an intervention is expected to work) criteria were employed. Rationale for these choices, and which sources were excluded on this basis, were also recorded in line with guidance (Dada et al., 2023).

Rigour

Realist syntheses also assess the rigour of sources and/or programme theories arising from the analysis. This assessment can be used to exclude sources, or otherwise understand how much support particular theories have. Rigour refers to “whether the method used to generate that particular piece of data is credible and ‘trustworthy’”. Rigour can be applied at the level of the source, for example, to understand if the source is credible, or if methods used were appropriate and trustworthy. However, rigour can also be applied at the level of the programme theory – for example, to understand how well the theory is supported across sources, and whether it has internal validity (Dada et al., 2023). As the focus in realist synthesis is on generating theory, it is possible that otherwise methodologically ‘weak’ studies can contain useful insights. Therefore, although formal checklists such as the Mixed Methods Appraisal Tool (MMAT) can be used to assess rigour, as is done in systematic reviews, the RAMESES guidelines recommend against this (Wong et al., 2013). In the APRIL method, to streamline our rigour assessment process, we assessed rigour at the level of the source and excluded articles on this basis. We applied a subjective assessment of rigour which took into account the source’s level of detail in describing methods used, and how generalisable and trustworthy their findings were based on those methods (e.g. did their conclusions visibly follow from the data). In our initial project using APRIL, outright exclusion mostly applied to certain grey literature sources that, for example, were from third sector organisations evaluating their own initiatives and appeared to lack impartiality. These sources were deemed to have the potential to affect the integrity of our programme theories. Adopting this method of rigour assessment enabled us to slim down the included sources further.

Data Extraction

We extracted study information into an Excel spreadsheet stored in Microsoft Teams. We chose Excel because all team members were already familiar with it, and because it enabled us all to collaborate on the same file in real time, streamlining parallelised data extraction processes.

In addition to our Excel form, which was stratified by thematic area, we made use of journalling techniques to track and record key insights or ‘narratives’ as we read each study, to assist in theory generation. This then helped us in our team discussions to form initial theories.

Step 7 and Step 8: Generating Initial Programme Theories From Data Synthesis and Sense-Checking Initial Findings with Funder(s) and Expert Panel

Given the timescales, we focused only on initial theory generation. This enabled future follow-up work, building on this review, to focus on testing and refining the theories.

As data were extracted into a number of deductively-created ‘themes’ from the outset, this provided a loose structure of stakeholder priorities within which theories needed to be generated. Theories were generated in a manner similar to normal realist reviews; i.e. extracted passages were read and re-read within each ‘theme’ to identify patterns termed ‘demi-regularities’, around which theories were formulated. These theories were discussed within the team for clarity, as well as presented later to our expert panel and the funder. Overarching substantive theories of resource depletion and intersectionality were used to add further nuance and commonality across initial programme theories (IPTs).

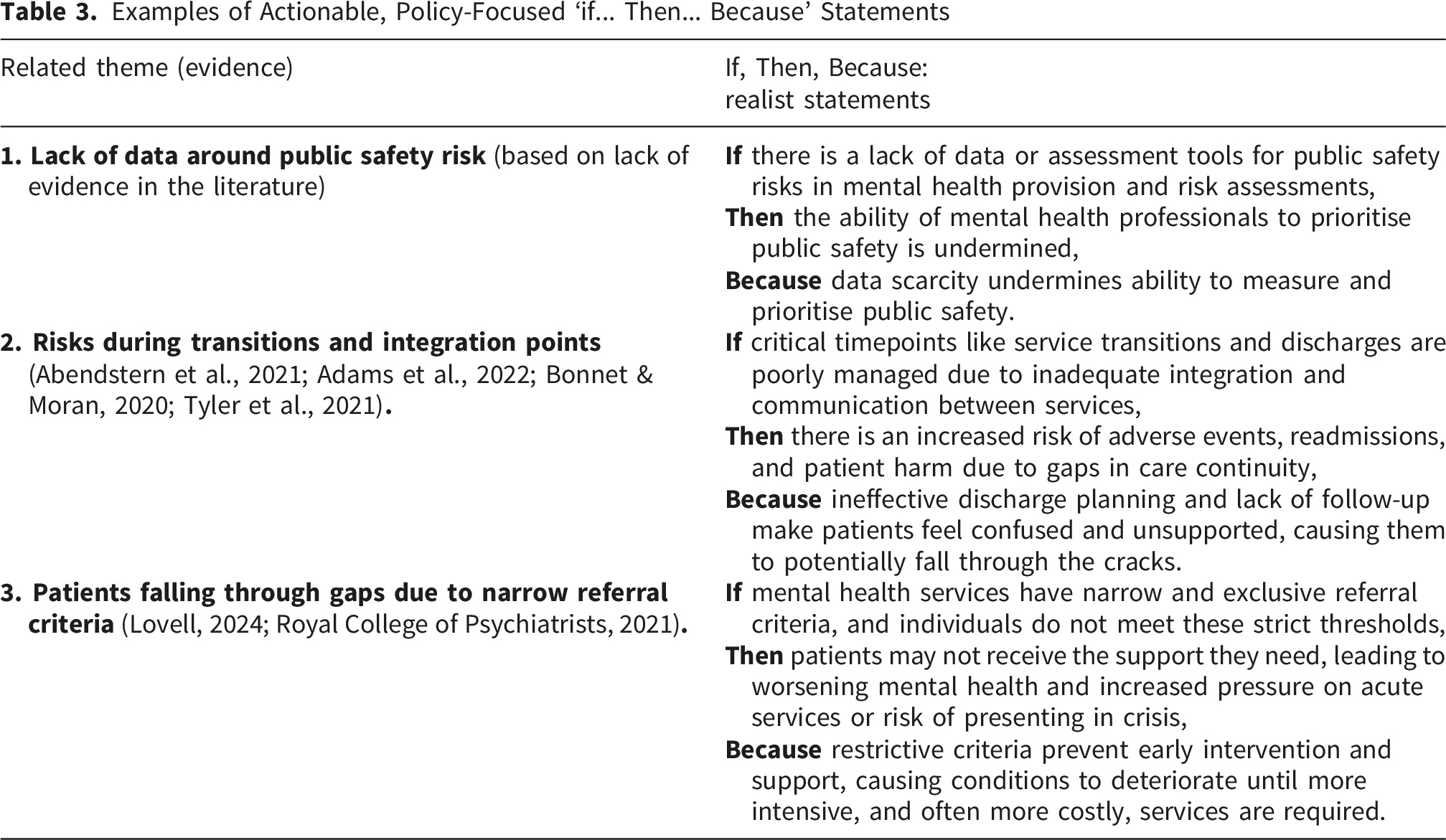

Examples of Actionable, Policy-Focused ‘if... Then... Because’ Statements

Step 9: Report Writing and Dissemination

We parallelised the writing of the report between two team members (SJF and JA) who had experience writing up realist studies for different funders. Each had performed data extraction and theory generation for themes they had subject knowledge of. For example, JA is a patient safety researcher and therefore wrote the patient safety section, among others, and SJF is a mental health researcher and wrote sections on inequalities and more. As each conducted data extraction for their respective themes, they had the requisite knowledge to facilitate write-up. The research associate (BU) worked across the themes and closely with SJF and JA around data extraction and synthesis. Short, focused meetings were held between the whole research team at frequent intervals to check the evidence being extracted and discuss demi-regularities or other items of interest in the data as they arose. This was also to ensure that all voices were equally reflected and that multiple perspectives on the data were drawn on as theories emerged.

Reporting of Studies Using APRIL

We have formulated a reporting template for studies using APRIL based on elements essential to the APRIL method, drawing on the relevant components of the RAMESES publication standards and PRISMA reporting checklist. This is intended to improve high-quality reporting of studies based on this method, and is available in Supplemental File 2 (Accelerated Parallelised Realist-Informed Literature review (APRIL): Reporting Checklist).

Discussion

Summary of Method

Summary of APRIL Method

Comparison with Other Realist Review Methods

The RAMESES quality standards for realist reviews suggest a broad six-step process comprising clarifying the scope of the review, searching for evidence, appraising evidence, extracting and synthesising data, developing explanatory accounts, and disseminating findings (Wong et al., 2013). We have included these steps in this rapid parallelised process, but refined our contribution and limitations in relation to these. For example, we arguably included more steps thanks to incorporating stakeholder input into findings at two timepoints.

In terms of rapid realist reviews, perhaps the most well-known approach is by Saul et al., who originally developed a 10-step sequential process for rapid realist reviews (Saul et al., 2013). In contrast, our process comprises nine steps that are parallelised in nature. While it is still used frequently to this day, we argue that the method by Saul et al. required updating due to increased demands for rigour (e.g. from RAMESES guidelines), technological progress enabling greater rapidity, greater expectations for realist reviews in terms of searching and transparency, and changing policymaker demands. Figure 2 shows how our methodology differs from the rapid review method outlined by Saul et al.

Differences in method

Implications of This Method

Utility to Policy and Practice of Adopting This Method

One of the advantages of rapid policy-focused reviews is that, if the findings are relevant, useful and based on high quality research and rigorous methods, and if they are adopted, they have the potential to shape the uptake of research evidence into practice, generating impact. In this instance, the report findings were used in multiple ways (see Supplemental File 1 for how they were used) to inform new inspection frameworks for services and to guide priorities for internal organisational change or further work. It is rare when conducting ‘desk research’ that there is such opportunity for research impact – and so the more relevant, useful, and focused the methods are, the better the potential for generating impactful work becomes. If we had not innovated methodologically to deliver the report within the timeframe available, then there would not have been the opportunity to inform the organisational changes with the evidence identified in this review. Without this innovation, the choices are stark – policy makers and organisations risk making decisions based on limited or inaccurate information. This could result in unintended policy consequences or mis-tailoring of policy solutions that fail to address the causes or fail to activate the mechanisms by which successful outcomes are achieved.

Potential Future Uses for This Novel Method

Projects That Have Successfully Used the APRIL Method

We also could foresee the utility in others using this approach to generate testable hypotheses for other types of research e.g. quantitative studies. This could be done while producing publishable outputs from the process. If capacity is available, such a rapid method could be used to lay the groundwork for arguing why a particular grant or funding application is needed, or to generate initial theories for a follow-up realist programme of work that tests those theories in practice.

There is also potential for this approach to be used in rapid evaluation centres (such as the Birmingham, RAND and Cambridge Evaluation (BRACE) Centre, funded by the National Institute for Health and Care Research (NIHR)). These research centres typically have more staff available to work on rapid evaluations and the literature review portions of evaluations could benefit from a methodology such as that described here, particularly when determining how, why and for whom programmes may work.

Independent consultants with smaller teams, even those with contracted employees who work on an ad hoc basis, could also make use of this methodology, in a manner similar to rapid evaluation centres, to deliver evidence-informed reviews to commissioners. This method could also be piloted by those conducting trials of interventions who want to understand, based on existing literature and within the lifespan of the trial, what might be going wrong or well.

Strengths and Limitations of This Method

The key strengths of this method were (1) the ability to deliver within such rapid timescales; and (2) the ability to search across diffuse literatures for a focused purpose. We are not aware of others in the literature reporting delivery of reviews of this kind in health services research within such timescales. We would also argue that the method prioritises speed and actionable insights while maintaining much of the academic rigour required. Few compromises are made that would undermine the reproducibility of the review or the transparency of its findings.

Although this new method has made a key contribution to realist science and rapid research methods, there are a series of limitations that need to be considered in relation to this work.

The Resource Implications of APRIL

This method still requires significant resource to deliver properly, and team members need to have sufficient time available to support the intensity of the approach. To complete this review in the timescales required, given that team members each had significant other portfolios of work, we needed to dedicate extra time outside of typical working hours. Therefore, future planning for such reviews should consider their intensity and pace and the impact of these on staff. We were able to work with skilled staff on casual contracts who had more time available to help shoulder the more procedural aspects of the review, such as screening and data extraction. Furthermore, we worked with information specialists who were able to rapidly develop a robust search strategy within <1-week timeframes. Whilst the scope of the review is reduced in comparison to an exhaustive search of the literature (as may be required in a systematic review), resourcing still needs to be carefully considered before undertaking such a review.

Although we made use of parallelisation, technology, and diligent assignment of tasks to staff members skilled in different areas, the time this method takes still depends on the number of sources included in the review. The more studies there are to screen, extract data from, and theorise about, the longer it will take to complete. Therefore, although parallelisation helps significantly with rapidity, diligent crafting of search strategies, inclusion criteria, requirements for data extraction, and research questions remains an area where significant time savings can be made, as long as the aims of the project are still being fulfilled. This may necessitate pilot searching and screening of literature to understand how search terms and inclusion criteria need to be tweaked to maintain feasibility. If policy timelines are longer (for example, four months rather than one), then wider searching and inclusion criteria could be considered.

Depth of Searching in APRIL

We focused on only the most relevant databases, which risked missing some literature. The time-pressured, pragmatic emphasis therefore has drawbacks in terms of thoroughness that need to be weighed against considerations of speed. As a result, APRIL may not be suitable for exploratory research where evidence may be diffuse or limited, nor in cases where the research question necessitates a deeper focus on specific topics for which a more exhaustive search is essential. To mitigate the risk of missing key literature, searches should be honed and tested to ensure they successfully identify a curated set of pre-identified key articles provided by subject experts and/or the commissioner of the project. This complements the piloting also performed to optimise timeliness, as discussed in the previous section.
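The check described above, testing whether a pilot search retrieves the pre-identified key articles, can be sketched as a simple recall calculation. This is a minimal illustration and not the procedure used in this review: the function name `benchmark_recall` and all record identifiers are hypothetical, and in practice the identifiers would come from a reference manager export.

```python
def benchmark_recall(retrieved_ids, benchmark_ids):
    """Return (recall, missed) for a pilot search against key articles.

    retrieved_ids: identifiers of records returned by the pilot search.
    benchmark_ids: identifiers of key articles nominated by subject
                   experts and/or the commissioner.
    """
    # Normalise identifiers so case and stray whitespace do not hide matches.
    retrieved = {i.strip().lower() for i in retrieved_ids}
    benchmark = {i.strip().lower() for i in benchmark_ids}
    if not benchmark:
        raise ValueError("The benchmark set of key articles is empty.")
    missed = sorted(benchmark - retrieved)
    recall = (len(benchmark) - len(missed)) / len(benchmark)
    return recall, missed


# Hypothetical identifiers, for illustration only.
benchmark = ["key-article-1", "key-article-2", "key-article-3", "key-article-4"]
pilot_results = ["key-article-1", "KEY-ARTICLE-3", "other-record-9", "key-article-4 "]

recall, missed = benchmark_recall(pilot_results, benchmark)
print(f"Recall of key articles: {recall:.2f}; missed: {missed}")
```

Any article listed as missed would prompt a look at which search terms or database choices excluded it before the full search is run.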

Although piloting can help ensure congruence between reviewers and reduce human error in screening, it is likely still less reliable than having two reviewers work independently on the full set of titles and abstracts, full texts, and data extraction. As such, the piloting approach could still result in human error and missed literature or theoretical insights. Lastly, in this case study of APRIL, we were not able to preregister the review on PROSPERO, in part because we had to innovate the methods as we were working, and preregistration may not have made sense given the responsive methodological changes we had to make. However, future APRIL reviews may still be able to register with PROSPERO during the initial phases of the project while following this methodology.
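Returning to the piloting of screening discussed above, one way a team might quantify congruence on a pilot batch is with a chance-corrected agreement statistic. The sketch below uses Cohen's kappa purely as an illustrative assumption; the source does not state how congruence was assessed, and the decisions shown are invented for the example.

```python
def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two reviewers' decisions on the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both reviewers must rate the same non-empty set.")
    n = len(rater_a)
    # Observed agreement: share of records with identical decisions.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement by chance, from each reviewer's label frequencies.
    labels = set(rater_a) | set(rater_b)
    expected = sum(
        (rater_a.count(label) / n) * (rater_b.count(label) / n)
        for label in labels
    )
    if expected == 1.0:
        # Both reviewers used a single identical label throughout.
        return 1.0
    return (observed - expected) / (1 - expected)


# Invented pilot decisions on ten titles and abstracts.
reviewer_1 = ["include", "include", "exclude", "exclude", "include",
              "exclude", "exclude", "exclude", "include", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "exclude", "include",
              "exclude", "exclude", "include", "include", "exclude"]

kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"Pilot agreement (Cohen's kappa): {kappa:.2f}")
```

A low value on the pilot batch would suggest that inclusion criteria need to be discussed and refined before reviewers proceed to screen separately.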

Dissemination and Impact of APRIL

Despite the APRIL model being successfully adopted in this instance and being used immediately to support real-world impact, delivering such projects for policymakers or similar funders can impose limitations. Reports for policymakers can often be embargoed and, as a result, dissemination can initially be difficult. Which outputs are allowed, and the timescales for these, must often be negotiated with the funder. Sometimes the content may also need to be approved by the funder, as policymakers may not want their positions to be misrepresented or misinterpreted. Whilst in this instance there was broad agreement about the report contents and findings, funder approval could be a potential source of reporting bias if it is not openly discussed at the start of the research process.

Conclusion

In a classic realist or rapid realist review, what is often emphasised is the depth of exploration of the Context-Mechanism-Outcome configurations, with the ultimate goal of refined programme theory development. We developed and implemented the novel APRIL method to enable diffuse literature to be synthesised rapidly to inform contextually driven theory generation. This research has shown that completing policy-focused rapid realist reviews is possible within timeframes that were previously considered too rapid to be academically feasible. This is possible thanks to parallelisation of review processes, use of modern collaborative technologies, constrained search scopes, and efficient allocation of team expertise. Strategies such as initially piloting screening to ensure congruence between reviewers, rather than performing full independent screening, can make screening and data extraction more time efficient while retaining rigour. The APRIL method should be further tested by other teams to answer different research questions in different settings, including for hypothesis generation, diagnosing issues in clinical trials, and use in rapid evaluation centres or by independent consultants.

Supplemental Material

Supplemental Material - Accelerated Parallelised Realist-Informed Literature Review (APRIL): A Novel Method for Informing Policy Decision-Making in Accelerated (<1 Month) Timescales

Supplemental Material for Accelerated Parallelised Realist-Informed Literature Review (APRIL): A Novel Method for Informing Policy Decision-Making in Accelerated (<1 Month) Timescales by Justin Aunger, Bianca Ungureanu, Rachel Posaner, Christian Bohm, Ross Millar, Sarah-Jane Fenton in International Journal of Qualitative Methods.

Footnotes

Acknowledgements

We would like to thank our expert panel for their contributions, without which this project would not have been as relevant, focused or useful. We would also like to acknowledge the Care Quality Commission for commissioning this research to ensure their decision making is informed by the best available evidence.

Ethical Considerations

This study did not require ethical approval as it was a literature review.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by CQC EP&S 087 Adult Community Mental Health Literature Review through Cambridge University Technical Services. Justin Aunger was also supported by the National Institute for Health and Care Research (NIHR) Midlands Patient Safety Research Collaboration. The views expressed are those of the author(s) and not necessarily those of the NIHR or the Department of Health and Social Care.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

This research did not generate or use datasets.

Supplemental Material

Supplemental material for this article is available online.

References
