Abstract

Keywords

Introduction

Construct validity is a foundational concept in social science research, ensuring that empirical measures accurately capture the theoretical constructs they are intended to represent (Cronbach & Meehl, 1955). While widely developed in quantitative research, its systematic application in qualitative methodologies remains underexplored (Gibbert & Ruigrok, 2010; Yin, 2018). In qualitative studies, where variables are often operationalized through themes, categories, or case comparisons, construct validity requires deliberate adaptation to preserve rigor, transparency, and credibility. Strategies such as triangulating evidence, maintaining a clear chain of evidence, and explicitly defining constructs have been recommended (Eisenhardt, 1989; Yin, 2018). As established in IJQM, rigorous mechanisms such as investigator responsiveness and methodological coherence should be embedded within qualitative inquiry to ensure reliability and validity (Morse et al., 2002). However, application of these verification strategies in multi-case construct validation remains underdeveloped.

The absence of such methodological exemplars is especially notable in domains characterized by institutional complexity and cross-border dynamics. Conceptual models are frequently employed to structure inquiry and guide decision-making in these environments, but they are often insufficiently validated, limiting both their credibility and their practical relevance. This article addresses that gap by presenting a methodological roadmap for applying construct validity in qualitative multiple-case research.

The approach is demonstrated through an applied example of sovereign wealth fund (SWF) investments in healthcare. SWFs are increasingly influential actors in health systems, particularly in countries seeking innovative financing solutions (Eastburn et al., 2024; McClellan et al., 2019). Their cross-border investments, varied governance environments, and hybrid mandates provide a rich setting in which to test methodological strategies for construct validation. Drawing on six qualitative case studies, this paper shows how constructs were defined, operationalized, and validated across diverse institutional contexts.

The contribution of this study lies not in the substantive findings on SWFs, but in advancing methodological practice. By embedding construct validity into the design and evaluation of a conceptual model, the article demonstrates how qualitative researchers can enhance transparency, replicability, and empirical soundness in model-building. The framework is transferable beyond the investment and health sectors, offering guidance for scholars developing conceptual models in other complex, multi-actor, multi-country settings.

Background and Gap

Construct validity is a core principle in research methodology, referring to the extent to which a study’s operational measures accurately capture the theoretical constructs they are intended to represent (Cronbach & Meehl, 1955). In practical terms, it addresses whether a researcher is truly measuring the concepts they claim to be studying. Originating in psychometric testing, the concept has been adapted across disciplines including management, political science, and health policy to assess the rigor of both quantitative and qualitative research designs (Sinkovics et al., 2008). In applied fields such as sovereign investment and healthcare financing, where conceptual models are used to guide strategic and policy decisions, ensuring construct validity is vital for both scholarly credibility and practical relevance. This need echoes broader concerns regarding the evaluation of qualitative research in international business contexts (Welch & Piekkari, 2017).

Applying construct validity in qualitative research presents challenges. Qualitative studies often involve context-rich data and interpretive analysis, where the pathway from concept to observation is less standardized than in quantitative approaches. This increases the importance of establishing a transparent chain of evidence linking theoretical constructs to the empirical data that supports them. Yin (2018) emphasizes that construct validity in qualitative case study designs can be achieved through multiple sources of evidence, explicit operational definitions, and clear documentation of the evidence trail. Similarly, Eisenhardt (1989) highlights the importance of defining constructs in advance and grounding them in both literature and empirical data through iterative comparison. Gibbert and Ruigrok (2010) synthesize these perspectives into three strategies for construct validity in case-based research: construct clarity, data triangulation, and alignment of constructs with multiple data sources.

Despite this methodological guidance, few applied studies in sovereign finance or healthcare investment systematically apply these principles. Much existing research in these domains is conceptual, descriptive, or narrowly empirical, focusing on isolated indicators or single-case narratives without an underlying model that is both theoretically grounded and empirically validated. In the SWF literature, numerous studies examine macroeconomic stabilization, financial returns, or sectoral diversification (Clark et al., 2013; Megginson & Fotak, 2015), yet few provide frameworks explaining how SWFs behave in specific sectors such as healthcare, particularly in relation to host country institutional context. Similarly, global health systems research often addresses investment decisions in terms of needs, outcomes, or governance, but rarely through validated conceptual models linking capital deployment to governance, institutional quality, and developmental priorities (McClellan et al., 2019; World Health Organization, 2021).

This lack of methodological rigor has two consequences. First, it weakens the explanatory power of conceptual models by leaving it unclear whether their components accurately reflect real-world dynamics. Second, it limits practical relevance, as policymakers and investment managers are less likely to trust frameworks that have not been evaluated across diverse environments. These issues are particularly significant in the context of SWF healthcare investments, where decisions carry high public accountability and are influenced by institutional variation and evolving health needs.

To address this gap, the present study applies a transparent and replicable construct validity process to a six-construct conceptual model for SWF healthcare investment. The model was developed and evaluated through six case studies involving host countries with different economic classifications (factor-driven, efficiency-driven, innovation-driven) and governance quality (weak, moderate, strong). Constructs were defined using established literature and theoretical frameworks, notably Agency Theory and Whetten’s (1989) model development criteria, and operationalized through secondary data sources such as SWF annual reports, international governance indices, healthcare investment databases, and policy analysis. Validation followed protocols outlined by Yin (2018) and Eisenhardt (1989), combining pattern matching, cross-case analysis, and data triangulation.

By applying these principles in a complex, cross-national setting, this study offers a methodological roadmap for researchers working at the intersection of sovereign finance, health systems, and international development. It shows that construct validity is not only achievable in qualitative research but also essential for enhancing the credibility, transferability, and utility of conceptual models in applied global policy domains. By documenting the process in detail, the study promotes transparency and replicability, two qualities often cited as weaknesses in qualitative research yet crucial for building cumulative knowledge in emerging fields such as sovereign investment in healthcare.

Conceptual Model Development

Conceptual models are essential for mapping abstract constructs into structured frameworks that guide empirical inquiry and practical decision-making. In developing a model to assess SWF investments in healthcare, this study drew upon literature from international business, development finance, public administration, and global health systems. The framework integrates six constructs: SWF type, host country economic classification, host country governance quality, healthcare priorities, stakeholder collaboration, and SWF motivation. These constructs were identified for their consistent presence in the literature, explanatory power in sovereign investment behavior, and relevance to both principal–agent dynamics and healthcare investment contexts.

The model is grounded in Agency Theory, which conceptualizes the relationship between sovereign principals (governments or citizens) and SWF managers as a principal–agent problem characterized by information asymmetry, incentive misalignment, and varying managerial autonomy (Eisenhardt, 1989; Jensen & Meckling, 1976). While Agency Theory is often applied to private-sector governance, here it is extended to state-owned investment entities, emphasizing the role of institutional context in shaping agency dynamics. Following Whetten’s (1989) criteria for theory development, each construct was defined conceptually, operationalized with measurable indicators, and linked to the overall framework to ensure coherence and empirical testability.

SWF Type

This construct categorizes funds by investment mandate and institutional objectives. Drawing on global classifications (Bortolotti et al., 2015; Das et al., 2010), three types are distinguished: stabilization funds, aimed at buffering short-term macroeconomic volatility; savings funds, focused on long-term wealth preservation; and development funds, prioritizing domestic economic growth. Fund type shapes risk appetite, investment horizons, and sectoral engagement. Within Agency Theory, it reflects the incentive structure and constraints set by the principal.

Host Country Economic Classification

Based on the World Economic Forum’s typology (Schwab & Zahidi, 2020), this construct distinguishes between factor-driven, efficiency-driven, and innovation-driven economies. Classification signals a country’s developmental stage, absorptive capacity, and corresponding healthcare needs, from basic infrastructure in factor-driven economies to advanced innovation in innovation-driven contexts. Economic classification also influences investment feasibility, expected returns, and the complexity of aligning strategies with local realities.

Host Country Governance Quality

Governance quality refers to institutional and regulatory capacity, measured using composite indices such as the World Bank’s Worldwide Governance Indicators (World Bank, 2024). It is treated as a moderating variable, shaping agency risk and stakeholder relationships. Strong governance reduces information asymmetry and aligns agent actions with societal objectives, whereas weak governance increases risk and necessitates alternative oversight mechanisms.

Healthcare Priorities

This construct captures the strategic focus areas targeted by SWFs, such as infrastructure development, service delivery expansion, preventative care, and research and innovation. Priorities are influenced by economic classification, governance capacity, national health policy, demographic trends, and disease burden. They reveal how sovereign mandates are translated into sector-specific investment logic.

Stakeholder Collaboration

Healthcare investments often require coordination across governments, private firms, providers, and civil society (Hafner & Shiffman, 2013). This construct assesses the extent of alignment and engagement among actors. High collaboration mitigates agency risk, builds legitimacy, and enhances impact, especially in weaker governance contexts. It reflects Agency Theory’s emphasis on monitoring and feedback mechanisms to manage principal–agent tensions.

SWF Motivation

Motivation refers to the strategic intent underlying healthcare investments, whether driven by financial returns, social impact, or a blended mandate. Motivation influences subsector selection, risk tolerance, and performance metrics. It also shapes willingness to pursue investments with lower financial yield but higher public value, revealing the degree of mission alignment between principal and agent.

Together, these constructs form a coherent framework for analyzing SWF healthcare investment across varied institutional settings. Each was defined through literature, operationalized using secondary data, and applied consistently across six case studies. Grounding the model in Agency Theory and Whetten’s development principles ensures theoretical robustness and empirical applicability, enabling both scholarly refinement and practical use in global health investment strategy.

Methodological Framework: Construct Validity in Practice

Applying Construct Validity

In qualitative, case-based research, construct validity refers to the degree to which operational measures faithfully capture the theoretical constructs they are intended to represent (Gibbert & Ruigrok, 2010). This is especially critical when developing conceptual models for multi-country studies, where institutional variation can complicate the comparability of cases. Establishing construct validity ensures that observed patterns are grounded in theory rather than artefacts of context or researcher interpretation.

Unlike quantitative approaches, where validity can be statistically evaluated (e.g., factor analysis), qualitative construct validity depends on rigorous design, transparent coding, and explicit linking of theory to evidence. Yin (2018) identifies construct validity as one of four core tests for case study research, noting it is often the most challenging to achieve. Eisenhardt (1989) similarly stresses the importance of defining constructs a priori and grounding them in both literature and data.

This study operationalizes construct validity by applying a six-construct conceptual model to six SWF healthcare investment cases. Each construct was defined from literature, operationalized with clear indicators, and applied consistently across cases.

Techniques to Strengthen Construct Validity

Following established best practice in qualitative methodology (Eisenhardt, 1989; Yin, 2018), five interrelated techniques were used to ensure the model’s constructs were both theoretically grounded and empirically robust.

Audit Trail and Data Mapping

To ensure methodological transparency and replicability, a detailed audit trail was maintained throughout the research process, documenting the evidence base, coding logic, and validation procedures for each construct across the six cases. This approach enhances trustworthiness in qualitative research by enabling other scholars to trace the chain of evidence from theoretical constructs to empirical indicators, consistent with Yin’s (2018) recommendations and Sinkovics et al.’s (2008) trustworthiness criteria. The audit trail was structured around five interlinked elements.

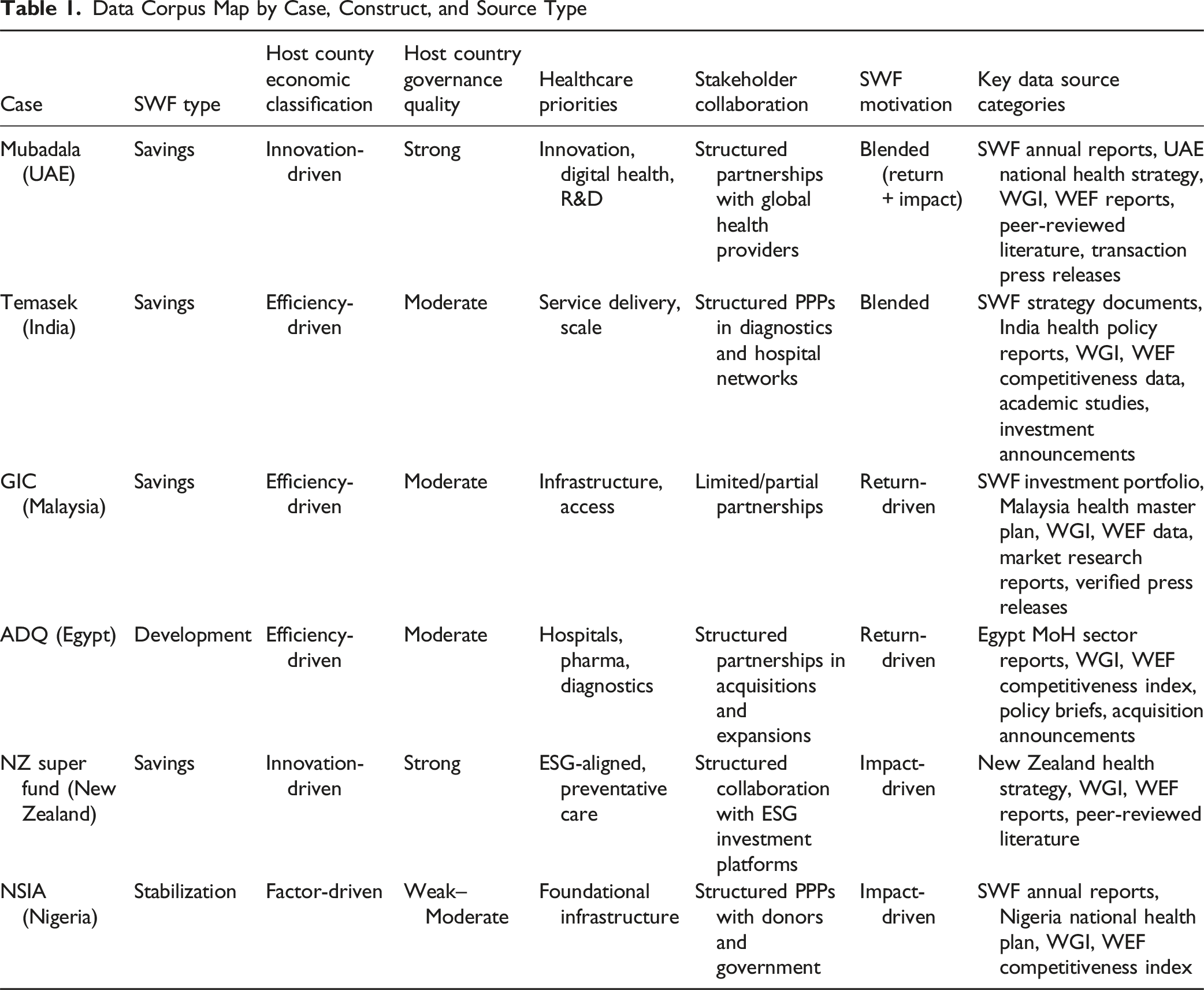

Data Corpus Mapping

A comprehensive corpus map was maintained to record all data sources, systematically organized by case and by construct. Sources fell into five primary categories:
• Official SWF materials – annual reports, fund charters, investment strategy documents, and portfolio disclosures.
• Host country policy and governance data – national health strategies, Ministry of Health reports, healthcare infrastructure plans, and regulatory frameworks.
• International datasets – World Bank Worldwide Governance Indicators, World Economic Forum Global Competitiveness Reports, UNDP Human Development Reports.
• Academic and policy literature – peer-reviewed articles, think tank reports, development finance studies, and market intelligence briefs.
• Press releases and transaction announcements – used to verify deal timing, scale, and sectoral scope, and to cross-check against other sources.

Each source was entered into a master evidence log with bibliographic metadata, date of publication, construct and case tags, thematic descriptors, and a quality appraisal rating (high/medium/low) based on provenance, recency, and methodological transparency.
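As an illustration only, one entry in such an evidence log could be represented as follows. The field names, the `source_id` identifier, and the `entries_for` helper are assumptions introduced here for the sketch; the text specifies which attributes were recorded, not how the log was stored or how a quality rating was derived.

```python
from dataclasses import dataclass
from datetime import date

# Rating levels named in the text; the appraisal rule itself is not specified.
QUALITY_RATINGS = ("high", "medium", "low")


@dataclass(frozen=True)
class EvidenceLogEntry:
    """One row of a master evidence log (field names are illustrative)."""
    source_id: str                # hypothetical local identifier
    citation: str                 # bibliographic metadata
    published: date               # date of publication
    constructs: tuple[str, ...]   # construct tags
    cases: tuple[str, ...]        # case tags
    descriptors: tuple[str, ...]  # thematic descriptors
    quality: str                  # "high", "medium", or "low"

    def __post_init__(self):
        if self.quality not in QUALITY_RATINGS:
            raise ValueError(f"quality must be one of {QUALITY_RATINGS}")
        if not self.constructs or not self.cases:
            raise ValueError("an entry needs at least one construct tag and one case tag")


def entries_for(log, case, construct):
    """Return the entries tagged with both the given case and the given construct."""
    return [e for e in log if case in e.cases and construct in e.constructs]
```

Rejecting entries without tags at creation time is a design choice of this sketch: it keeps every logged source retrievable by case and by construct.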

Search and Selection Logic for Secondary Sources

Secondary data were identified through a combination of:
• Structured database searches (Scopus, Web of Science, ProQuest),
• Institutional repositories (IMF, OECD, SWF Institute), and
• Targeted Google Scholar queries.

Search strings combined SWF name, host country, healthcare terms (e.g., hospital, diagnostics, digital health), governance terms (rule of law, regulatory quality), and economic classification keywords (factor-driven, efficiency-driven, innovation-driven).
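A search string of this kind might be assembled as in the sketch below. The Boolean layout (OR within a term group, AND between groups) and the function names are assumptions made for illustration; the exact syntax used in each database is not reported, and the fund and country names are placeholders rather than actual cases.

```python
def or_group(terms):
    """Join terms into a parenthesised OR group, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_search_string(fund, country, healthcare, governance, economic):
    """Combine the five term groups named in the text, skipping empty groups."""
    groups = [[fund], [country], healthcare, governance, economic]
    return " AND ".join(or_group(g) for g in groups if g)


query = build_search_string(
    fund="Example Sovereign Fund",   # placeholder, not a real case
    country="Exampleland",           # placeholder
    healthcare=["hospital", "diagnostics", "digital health"],
    governance=["rule of law", "regulatory quality"],
    economic=["factor-driven", "efficiency-driven", "innovation-driven"],
)
print(query)
```

Requiring every group at once can be restrictive in practice; passing an empty list for a group drops it from the query.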

Selection criteria included: (i) Direct and substantive relevance to the case or construct; (ii) Publication within the past 10 years (older sources included only if providing essential historical or institutional context); (iii) Credible provenance from recognized institutional, peer-reviewed, or verified commercial sources.
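The three criteria can be expressed as an explicit screening rule, sketched below under stated assumptions: relevance and the "essential context" exception are researcher judgments recorded as Boolean flags, provenance is reduced to three labels taken from criterion (iii), and the class and function names are hypothetical.

```python
from dataclasses import dataclass

CREDIBLE_PROVENANCE = {"institutional", "peer-reviewed", "verified commercial"}
MAX_AGE_YEARS = 10


@dataclass
class CandidateSource:
    title: str
    year: int
    provenance: str                  # compared against CREDIBLE_PROVENANCE
    directly_relevant: bool          # criterion (i), judged by the researcher
    essential_context: bool = False  # exception allowed under criterion (ii)


def meets_selection_criteria(src, reference_year):
    """Apply criteria (i)-(iii); return (included, reasons for exclusion)."""
    reasons = []
    if not src.directly_relevant:
        reasons.append("not directly relevant")
    too_old = reference_year - src.year > MAX_AGE_YEARS
    if too_old and not src.essential_context:
        reasons.append("older than 10 years without essential context")
    if src.provenance not in CREDIBLE_PROVENANCE:
        reasons.append("provenance not credible")
    return (not reasons, reasons)
```

Returning the reasons alongside the decision means each exclusion can be written straight into an audit trail.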

Coding Procedures and Construct Alignment

A structured codebook, derived from the six construct definitions, guided coding. Each construct was assigned subcodes for its indicators (e.g., Host Country Governance Quality = Weak, Moderate, Strong; SWF Motivation = Return-driven, Impact-driven, Blended).
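A codebook of this form can be held as a simple mapping from construct to permitted subcodes, which lets every coding decision be checked against the predefined categories. The sketch below uses the categories named in this article; the Healthcare Priorities entries are the examples the text lists rather than a confirmed closed set, and the `check_code` helper is hypothetical.

```python
CODEBOOK = {
    "SWF Type": ("Stabilization", "Savings", "Development"),
    "Host Country Economic Classification": (
        "Factor-driven", "Efficiency-driven", "Innovation-driven"),
    "Host Country Governance Quality": ("Weak", "Moderate", "Strong"),
    # Illustrative focus areas named in the text; the full subcode set is assumed.
    "Healthcare Priorities": (
        "Infrastructure development", "Service delivery expansion",
        "Preventative care", "Research and innovation"),
    "Stakeholder Collaboration": ("None", "Partial", "Structured"),
    "SWF Motivation": ("Return-driven", "Impact-driven", "Blended"),
}


def check_code(construct, subcode):
    """Return (construct, subcode) if it is in the codebook, else raise ValueError."""
    if construct not in CODEBOOK:
        raise ValueError(f"unknown construct: {construct!r}")
    if subcode not in CODEBOOK[construct]:
        allowed = ", ".join(CODEBOOK[construct])
        raise ValueError(f"{subcode!r} is not a subcode of {construct!r} (allowed: {allowed})")
    return (construct, subcode)
```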

Coding followed a two-stage process:
1. Within-case coding – assigning source excerpts to relevant construct subcodes.
2. Cross-case matrix compilation – aggregating coded segments to identify convergence/divergence patterns and contextual variations.
This approach aligns with established qualitative data analysis practices (Miles et al., 2020).
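The two stages can be sketched as a pair of small transformations, shown below. This is a minimal illustration: the tuple layout for coded segments, the function names, and the rule that a construct is "convergent" when all cases share a single subcode are assumptions, and the case labels are placeholders.

```python
from collections import defaultdict


def within_case_codes(segments):
    """Stage 1: group (case, construct, subcode) segments as {case: {construct: [subcodes]}}."""
    coded = defaultdict(lambda: defaultdict(list))
    for case, construct, subcode in segments:
        coded[case][construct].append(subcode)
    return coded


def cross_case_matrix(coded):
    """Stage 2: one row per construct, one cell per case holding the set of subcodes."""
    constructs = sorted({c for by_construct in coded.values() for c in by_construct})
    return {
        construct: {case: set(coded[case].get(construct, [])) for case in sorted(coded)}
        for construct in constructs
    }


def pattern(row):
    """Label a matrix row; cases with no coded segment for the construct are ignored."""
    distinct = set().union(*row.values())
    return "convergent" if len(distinct) <= 1 else "divergent"


segments = [
    ("Case A", "SWF Motivation", "Blended"),
    ("Case B", "SWF Motivation", "Blended"),
    ("Case A", "Host Country Governance Quality", "Strong"),
    ("Case B", "Host Country Governance Quality", "Weak"),
]
matrix = cross_case_matrix(within_case_codes(segments))
for construct, row in matrix.items():
    print(construct, pattern(row), row)
```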

Chain of Evidence

For each coded excerpt, the exact source reference (document, page number, or section) was retained in the evidence log. This allowed backward tracing from any analytical claim in the findings to its empirical origin, fulfilling Yin’s (2018) chain of evidence requirement and ensuring analytical transparency.
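Backward tracing of this kind can be sketched as a lookup from claim to excerpts to source references that fails loudly when a link is missing. The identifiers, dictionary layout, and `trace` function below are hypothetical, and the documents named are placeholders.

```python
# Excerpt ID -> source reference retained in the evidence log (placeholder entries).
EVIDENCE_LOG = {
    "EX-001": {"document": "Fund annual report (placeholder)", "locator": "p. 12"},
    "EX-002": {"document": "National health strategy (placeholder)", "locator": "Section 3"},
}

# Analytical claim -> excerpt IDs that support it.
CLAIMS = {
    "claim-1": ["EX-001", "EX-002"],
    "claim-2": ["EX-003"],  # deliberately points at an excerpt missing from the log
}


def trace(claim_id, claims=CLAIMS, log=EVIDENCE_LOG):
    """Return (excerpt, document, locator) for a claim; raise if the chain is broken."""
    excerpt_ids = claims.get(claim_id, [])
    if not excerpt_ids:
        raise LookupError(f"{claim_id}: no supporting excerpts recorded")
    missing = [e for e in excerpt_ids if e not in log]
    if missing:
        raise LookupError(f"{claim_id}: excerpts missing from the log: {missing}")
    return [(e, log[e]["document"], log[e]["locator"]) for e in excerpt_ids]
```

Running `trace` over every claim before write-up is one way to confirm that no finding rests on an untraceable excerpt.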

Reliability and Stability Checks

Although coding was conducted by a single researcher, reliability was strengthened through a stability check: 10% of the dataset was re-coded after a two-week interval, yielding consistent categorization across constructs.

This measure ensured that construct application was consistent, theoretically aligned, and transferable to other institutional or sectoral contexts.
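A stability check of this kind can be quantified, as in the sketch below. The text reports consistent categorization without naming a statistic, so both percent agreement and Cohen's kappa (applied here to one coder at two time points) are assumptions, as are the function names, the seeded random sampling, and the example codes.

```python
import random
from collections import Counter


def draw_recode_sample(excerpt_ids, fraction=0.10, seed=0):
    """Pick a reproducible random subset of excerpts to re-code (at least one)."""
    ids = sorted(excerpt_ids)
    k = max(1, round(len(ids) * fraction))
    return random.Random(seed).sample(ids, k)


def percent_agreement(first, second):
    """Share of excerpts given the same subcode in both coding rounds."""
    if not first or len(first) != len(second):
        raise ValueError("both rounds must code the same non-empty set of excerpts")
    return sum(a == b for a, b in zip(first, second)) / len(first)


def cohens_kappa(first, second):
    """Agreement corrected for chance, using each round's category frequencies."""
    n = len(first)
    p_o = percent_agreement(first, second)
    c1, c2 = Counter(first), Counter(second)
    p_e = sum(c1[k] * c2[k] for k in c1) / (n * n)
    if p_e == 1.0:  # both rounds used a single identical category throughout
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical governance codes for ten re-coded excerpts.
round_1 = ["Weak", "Weak", "Strong", "Moderate", "Strong",
           "Weak", "Moderate", "Strong", "Weak", "Strong"]
round_2 = ["Weak", "Weak", "Strong", "Moderate", "Strong",
           "Weak", "Strong", "Strong", "Weak", "Strong"]
print(percent_agreement(round_1, round_2), cohens_kappa(round_1, round_2))
```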

Data Corpus Map by Case, Construct, and Source Type

Construct Operationalization and Validation

To ensure transparency, replicability, and adherence to Yin’s (2018) construct validity principles, each of the six constructs in the conceptual model was explicitly defined, linked to theoretical foundations, and operationalized using a structured evidence-gathering process.

The operationalization process followed three interlinked steps:
1. Definition and Theoretical Anchoring – Each construct was defined with reference to existing literature in sovereign investment, economic development, and health systems governance.
2. Evidence Mapping – For each construct, primary evidence types were identified and mapped (see 4.3), ensuring that data collection drew from multiple independent secondary sources.
3. Indicator Development and Validation – Indicators were selected based on their recurrence in the literature, measurability across all six cases, and capacity to capture both cross-country comparability and local specificity.

Construct Validation Framework for the Six Constructs

This approach not only standardizes measurement across diverse institutional environments but also preserves sensitivity to contextual variation. For example, while “Stakeholder Collaboration” was consistently coded using a three-level ordinal scale (None, Partial, Structured), the evidence sources for that construct varied by case, from formal government–SWF memoranda in some contexts to joint venture press releases in others. Such variance was documented in the audit trail to maintain an unbroken chain of evidence from source to coding decision.

By integrating theoretical definition, explicit evidence mapping, and indicator-based coding, the Construct Validation Framework provides a methodological template for qualitative model testing that can be replicated, extended, and stress-tested in future multi-country or cross-sector studies.

Construct Operationalization with Example Evidence from the Six Cases

Integration and Replicability

By defining constructs explicitly, applying consistent indicators, and triangulating evidence across independent data sources, the study meets Yin’s (2018) criteria for construct validity in qualitative case study research. The approach demonstrates that abstract theoretical concepts such as governance quality or stakeholder collaboration can be reliably measured across diverse institutional contexts.

The result is a methodological framework that is transparent, replicable, and adaptable. Researchers can apply the six-construct model to other sectors or geographies, while policymakers and fund managers can use it to assess the strategic alignment of sovereign investment with health system priorities. This explicit linkage between theory, data, and coding enhances both the scholarly credibility and the practical utility of the model.

Researcher Positionality and Reflexivity

Qualitative research, particularly in cross-country, multi-case designs, is unavoidably shaped by the researcher’s interpretive stance. In line with QROM’s emphasis on reflexivity as part of research trustworthiness, this subsection makes explicit how my professional identity, epistemological stance, and methodological choices influenced construct operationalization and interpretation.

I approached this study from a pragmatic qualitative position, prioritizing methodological rigor, cross-case comparability, and real-world applicability over purely interpretive or ethnographic depth. My professional background in healthcare operations and policy provided sectoral insight while also posing a risk of bias. This positionality enhanced my ability to accurately interpret sector-specific terminology, investment logics, and policy frameworks. It was particularly valuable in defining constructs such as healthcare priorities and stakeholder collaboration in ways that reflected both formal policy commitments and practical implementation realities.

However, this insider familiarity also carried the risk of reinforcing existing assumptions about institutional effectiveness or investment rationales, particularly in high-governance contexts where I have worked extensively. To mitigate such bias, I:
• Developed explicit coding rules grounded in published literature.
• Triangulated every construct with independent secondary sources before assigning categorical codes.
• Cross-checked interpretations with region-specific studies to account for variation in institutional norms, regulatory cultures, and policy environments.

The cross-country scope of the research required sensitivity to linguistic nuance, institutional diversity, and differences in data transparency. Governance quality, for example, is not uniformly understood across political systems. My expectations, shaped by high-governance environments, could have biased assessments of lower-governance contexts. To address this, I systematically consulted comparative governance indices and treated any single-source claim with caution unless corroborated by additional independent evidence.
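The corroboration rule described here can be written down as a simple check. The sketch below is an illustration under assumptions: independence is approximated by distinct publishers, two is taken as the minimum number of independent sources, and the function name and status labels are hypothetical.

```python
def corroboration_status(claim_sources, minimum=2):
    """Classify a claim by the number of independent publishers supporting it.

    claim_sources is a list of (source_id, publisher) pairs. Two documents from
    the same publisher count once, since they are not independent of each other.
    """
    publishers = {publisher for _, publisher in claim_sources}
    if len(publishers) >= minimum:
        return "corroborated"
    if len(publishers) == 1:
        return "single-source: treat with caution"
    return "unsupported"


# Hypothetical example: two documents from one publisher plus one independent index.
sources = [
    ("S1", "Fund X"),
    ("S2", "Fund X"),
    ("S3", "Governance index publisher"),
]
print(corroboration_status(sources))      # two distinct publishers
print(corroboration_status(sources[:2]))  # one publisher only
```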

Because the study relied entirely on secondary data, I did not directly interact with SWF managers, policymakers, or local stakeholders. This reduced the potential for interpersonal bias but limited my ability to probe ambiguous or contradictory information. To address this limitation, I maintained a detailed audit trail documenting:
• Rationale for each coding decision.
• Criteria for inclusion or exclusion of specific sources.
• Resolution of conflicting evidence.

This record-keeping enabled retrospective review of interpretive choices and ensured transparency in the movement from raw data to construct assignment.

Finally, my commitment to construct validity, shaped by engagement with the work of Eisenhardt (1989), Gibbert and Ruigrok (2010), and Yin (2018), informed the study’s emphasis on comparability, replicability, and systematic cross-case pattern matching. While this choice strengthened the analytical framework and its applicability across contexts, it necessarily reduced the narrative depth of individual cases. This was a deliberate trade-off to serve the article’s methodological contribution.

By articulating these positional considerations, the study acknowledges that neutrality is neither possible nor the goal. Instead, it demonstrates how conscious methodological choices, coupled with systematic mitigation strategies, can reduce bias while preserving the interpretive insight essential to qualitative inquiry in complex, multi-actor, and multi-country environments.

Discussion

The application of construct validity in this study provides important methodological lessons for qualitative researchers developing and empirically evaluating conceptual models in complex, multi-country environments. By grounding the framework in established theory and systematically validating constructs across six diverse cases, the research contributes to the ongoing call for rigor, transparency, and replicability in qualitative inquiry.

Strengths of the Approach

One of the primary strengths of this study lies in its demonstration of how construct validity can serve as a bridge between theory development and empirical testing in qualitative research. In many applied domains, field experiments or randomized designs are unfeasible, leaving conceptual models as vital tools for organizing knowledge and guiding decision-making. Yet such models are too often presented without transparent validation. This study addresses that gap by applying a replicable construct validation strategy, guided by Yin’s (2018) construct validity test and consistent with the trustworthiness principles outlined by Lincoln and Guba (1985).

The framework also aligns with verification strategies articulated within IJQM. As Morse et al. (2002) argue, rigor in qualitative research depends on embedding verification mechanisms such as methodological coherence, investigator responsiveness, and iterative validation throughout the research process. This study operationalizes those principles in a multi-case context, demonstrating how triangulation, cross-case comparison, and explicit construct definition can achieve methodological coherence while preserving contextual sensitivity.

The model’s application across factor-driven, efficiency-driven, and innovation-driven economies further illustrates its adaptability. This diversity enhanced the robustness of the validation process and highlighted how governance quality and economic classification mediate the relationship between conceptual constructs and observed outcomes. The methodological lesson here is that qualitative model-building, when paired with structured validation techniques, can produce insights that are both generalizable and sensitive to local context (Eisenhardt, 1989; Gibbert & Ruigrok, 2010; Maxwell, 2013).

Limitations and Boundaries

Several limitations must also be acknowledged. First, the study relies entirely on secondary data, including annual reports, investment portfolios, governance indices, and health policy documents. While triangulated across multiple independent sources to enhance dependability, such data may not fully capture internal decision-making processes or informal stakeholder dynamics. Future research could address this by incorporating primary interviews or embedded fieldwork.

Second, the generalizability of the findings is constrained by the scope of the case studies. Although cases were selected for diversity, they do not represent all SWF types or all regional contexts. The framework’s applicability to Latin American, Central Asian, or European settings remains untested. Nevertheless, the explicit construct definitions and transparent coding logic provide a foundation for replication and extension, consistent with Lincoln and Guba’s (1985) criteria of transferability and confirmability.

Finally, the tension between abstraction and specificity in model development remains a methodological challenge. Constructs must be broad enough to allow cross-case comparability, yet precise enough to capture meaningful distinctions. In balancing this tension, certain nuanced drivers, such as geopolitical factors or reputational motivations, were necessarily excluded, highlighting areas for further theoretical elaboration.

Advancing Transparency in Qualitative Model-Building

The most significant methodological lesson is the value of transparency and systematic validation in qualitative model development. As global policy challenges increase in complexity, the demand for models that are both theory-informed and empirically grounded will continue to grow. Qualitative researchers must therefore be explicit about how constructs are defined, validated, and tested. By applying Agency Theory, Whetten’s (1989) model-building principles, and systematic construct validation, this study offers one approach for embedding rigor and verification in qualitative conceptual models.

The contribution extends beyond the empirical illustration of sovereign wealth funds and healthcare. The framework is broadly relevant to qualitative inquiries in other high-complexity, multi-actor domains. By showing how verification strategies (Morse et al., 2002) can be operationalized in multiple-case research, the study demonstrates how methodological transparency can enhance both scholarly credibility and practical applicability.

Implications for Future Research

This study underscores the central role of construct validity in enhancing the credibility and transferability of qualitative research. Future studies can build on this framework in several important ways.

First, there is scope to extend the use of construct validation beyond the illustrative domain of sovereign wealth funds and healthcare. Conceptual models are widely employed in areas such as education, environmental governance, organizational studies, and public policy, yet their validation often remains implicit or underdeveloped. Applying the structured framework outlined here would allow scholars in these fields to test whether theoretical constructs hold across diverse institutional or cultural contexts, strengthening both methodological rigor and practical relevance.

Second, future research should integrate construct validation into mixed-method designs. While this study relied exclusively on secondary qualitative data, combining multiple-case analysis with survey instruments, econometric testing, or experimental simulations could extend generalizability. Such integration would allow researchers to assess construct boundaries statistically, examine hypothesized relationships, and explore causal mechanisms. This kind of methodological layering would address Morse et al.’s (2002) call for verification strategies to be embedded throughout the research process, ensuring that validation is ongoing rather than retrospective.

Third, researchers can explore how construct validation can be adapted to emergent forms of qualitative inquiry. Digital ethnography, participatory action research, and collaborative policy analysis increasingly generate complex, multi-actor data. Embedding explicit construct definitions and transparent verification strategies in these approaches would enhance credibility and facilitate replication across contexts.

Finally, there is a need for further exploration of the tension between abstraction and specificity in model-building. Constructs must remain precise enough to capture local realities while generalizable enough to enable cross-case comparison. Future work could develop adaptive coding frameworks or hybrid validation protocols to better manage this balance, advancing both theory-building and methodological practice.

In sum, construct validity should not be treated as a peripheral or optional step, but as a foundational principle in qualitative research. By extending and refining the roadmap presented here, future studies can strengthen the transparency, credibility, and impact of qualitative conceptual models across disciplines.

Conclusion

This paper has demonstrated how construct validity can be systematically embedded in qualitative research through a structured, replicable framework. Using a six-case illustration, the study showed how constructs can be explicitly defined, triangulated across multiple data sources, and validated through pattern matching and cross-case comparison. The purpose of this example was not to draw substantive conclusions about sovereign wealth funds, but to demonstrate a transferable methodological process for enhancing rigor in qualitative model-building.

Construct validity is central to elevating the credibility of qualitative inquiry, particularly in multi-country and multi-actor contexts where replication is challenging and data are heterogeneous. As established in IJQM, strategies such as methodological coherence and ongoing verification are essential for ensuring reliability and validity in qualitative research (Morse, 2002; Morse et al., 2002). This study extends those strategies into the domain of multiple-case construct validation, providing a practical roadmap for researchers who seek both transparency and replicability in model development.

The contribution of this framework is not confined to one sector. It offers a transferable blueprint for scholars working in diverse fields who need to operationalize abstract constructs in complex institutional environments. By integrating explicit construct definitions with systematic verification and triangulation, the approach strengthens methodological transparency and enhances the trustworthiness of qualitative conceptual models.

Ultimately, the study reinforces that validation is not a peripheral technical step but a foundational principle for qualitative research. Embedding construct validity in model-building can improve practice, inform policy, and advance scholarly understanding across disciplines.

Footnotes

Acknowledgements

The author thanks her dissertation chair for guidance during the doctoral research on which this article is based, and her family for their encouragement and support.

Author Contribution

RM conceptualized the study, developed the methodology, conducted the analysis, and wrote the manuscript.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All data were drawn from publicly available sources.