Abstract

The application of realist-informed approaches to implementation research can produce answers to why, for whom and under what circumstances social determinants of health interventions work. In the context of a study to develop and test EHR-based clinical decision support tools that suggest adjusting care plans in response to patient-reported financial, housing, food, transportation, and utilities insecurity, the authors applied an innovative use of realist principles in a bounded, mid-study task. This paper demonstrates how realist retroduction can be applied in intervention development processes. Retroduction proved useful in identifying the often intangible clinical needs and preferences that affected decision support tool desirability and use, which then guided the revision of five tools prior to a formal trial. This paper illustrates how data from the study development phases were put in service of retroductive steps that, through the identification of tentative program theories, guided revision of the pilot electronic tools to better meet clinic needs in the study trial phase. Applying retroductive thinking to establish what may be more or less effective under real-world conditions before participants are recruited is a productive, pragmatic form of researcher/stakeholder co-design that seeks to achieve results without wasting clinical teams’ time.

Introduction

Social determinants drive health outcomes via complex pathways (Adler & Stewart, 2010; Cole & Nguyen, 2020). Healthcare delivery systems, therefore, have begun to target patients’ social risks, often defined as the downstream and adverse manifestations of social, political, and economic determinants, as one part of comprehensive strategies to improve health. Increasingly, electronic health records (EHRs) are seen as sources of information about patient social determinants, yet such EHR data often lack consistency, usefulness and accuracy (Mehta et al., 2023). Interventions to increase the use of electronic tools to screen for, document and respond to patient social needs are impacted by variable clinical roles and workflows, time constraints, limited training, and clinician burdens. Research examining the implementation of social risk-focused interventions must account for the complexities involved in introducing new activities into healthcare settings (Gold et al., 2017a, 2017b; Wang et al., 2021). Realist evaluation, which foregrounds the relationship between individual actors (e.g., patients, clinicians) and their environments to identify underlying causal processes that drive or inhibit change (Jagosh, 2019; Smeets et al., 2022), is well-suited to this task. The realist focus on unearthing these intangible forces can help researchers determine how and why implementation interventions work (Dalkin et al., 2015; Haynes et al., 2021). Realist evaluation has emerged as a pragmatic and meaningful approach to implementation science (Dalkin et al., 2015; Eldh et al., 2020; McHugh et al., 2016; Rycroft-Malone et al., 2015; Sarkies et al., 2022) due to its ability to assess and account for the nonlinear, context-dependent causality inherent to an intervention’s impact.
It can be especially useful for characterizing complexities within health systems settings because it uses multiple, concurrent modes of inference to understand change drivers in real-world conditions (Shearn et al., 2017). Realist evaluation is typically applied to understand how, why and for whom an intervention succeeds or fails (Punton et al., 2020).

The COntextualized care in cHcs’ Electronic health REcords (COHERE) study developed and tested EHR-based clinical decision support (CDS) tools in community-based health centers (CHCs) engaged in addressing social determinants of health (SDH). Specifically, study aims were to: develop CDS tools that offer social risk-informed care plan adaptations; test whether EHR-embedded CDS enhances social risk-informed care provision in CHCs; and assess care team perceptions of the tools’ usability and impacts on care quality and patient-provider interactions. Central to the intervention was the introduction of CDS tools that prompt adjustments to patient care plans in response to awareness of their social needs; through the suggestion of care plan adjustments, care team members would be reminded and encouraged to make such adjustments. The overall study involved three phases: (1) a formative, advisor engagement phase to develop the content and structure of the tools, (2) a pilot phase to test and revise the tools based on user input, and (3) a trial phase in which a randomized quasi-experimental design tested the tools’ effectiveness. This paper focuses on activities undertaken during the study’s pilot phase (phase 2), in which we employed mixed methods to collect data on tool usage (trackable action data extracted from the EHR) and user perceptions and experiences (qualitative data from interviews, and observations and interactions with pilot clinics). In this case study, we describe how we applied the realist principle of retroduction – an iterative form of inference-making (Mukumbang et al., 2021) – to the analysis of advisor and pilot data during the COHERE study’s pilot phase, a bounded, mid-study task, and then used the results to revise five CDS tools in response to pilot study findings.

Realist Principles

Realist evaluation is a theory-driven approach to program evaluation that may be applied alongside other theoretical frameworks (e.g., Normalization Process Theory, see Bunce et al., 2023a, 2023b; Dalkin et al., 2021; May et al., 2009; May & Finch, 2009) to enhance the explanatory power of research findings. Realism, a philosophy of science, provides the foundation of realist evaluation (Alderson, 2021; Bhaskar, 1975), which involves working backward from empirical data – e.g., provider statements about how CDS tools impacted clinic workflows – to propose program theories (Jagosh et al., 2015) that explain how and why an intervention may take hold under certain circumstances (Westhorp, 2014).

In realist logic, outcomes result from mechanisms—essentially, the processes through which interventions operate—involving interactions between resources employed in the intervention, cognitive responses of those involved, and contextual factors in which the intervention takes place (Dalkin et al., 2015; Saunders et al., 2009). Preliminary theories about why a specific intervention does or does not take hold are then investigated within a context-mechanism-outcome (CMO) heuristic. CMOs are propositions, hypotheses and educated guesses about the realist question “what works, for whom, in what context” (Jagosh et al., 2012; Westhorp, 2014). To understand how elements of a given CMO interact, qualitative methods are used to assess the actions of individuals involved in interventions in the context of interest, and quantitative data are used to affirm or dispute the strength of proposed CMOs and provide feedback during iterative qualitative analysis cycles (Pawson & Tilley, 1997; Westhorp, 2014). Answering realist questions (Pawson & Tilley, 1997; Wong et al., 2016) thus involves iteratively toggling between data and tentative theories (Haynes et al., 2021) and concurrently testing emergent theories against relevant prior research.

This repetitive back and forth of reflecting on data and developing CMOs, called retroduction in realist evaluation (Haynes et al., 2021), enables researchers to go “behind” and “beneath” observed data patterns to uncover the causal forces driving outcomes (Hoddy, 2019; Lewis-Beck et al., 2004). The focus on intangible forces is important because while seemingly obvious reasons for a specified outcome may be valid (e.g., time and number of required EHR “clicks” to make effective use of tools may inhibit tool desirability), they often do not completely explain outcomes (e.g., low tool uptake). Retroduction enables “trying on” multiple theories to explain the complex interactions of context, program features, and social actors.

For this study, we applied a well-defined set of retroduction steps to the discrete task of post-pilot tool revision. Steps include: (1) describe patterns of events; (2) imagine what might account for the observed patterns; (3) eliminate explanations that do not apply; (4) identify the most likely causal explanations; (5) revise earlier findings in light of new data analysis; and (6) remain ready to imagine new explanations (Alderson, 2021). Following these steps, we first quantitatively described patterns of tool use, then re-engaged with previously collected qualitative data to search for unstated clinical needs and preferences that might explain those patterns. This led us to notice certain tensions (e.g., seemingly contradictory but simultaneously held ideas about the impacts of using the EHR tools) present in provider and staff statements. In response to these tensions, we then framed subsequent data collection and analysis to gradually refine our understanding of the needs met by effective tools that led to their acceptance and use. The tools were then revised to meet these needs to the extent possible. This process is described in detail below and illustrated using one of the study-developed EHR tools as an example.

COHERE: Contextualized Care in cHcs’ Electronic Health REcords

The overarching goal of the COHERE study was to develop and test EHR-based CDS tools that suggest adjusting care plans in response to patient-reported financial, housing, food, transportation, and/or utilities insecurities. The site for data collection is Anonymous Inc., a nonprofit health technology organization offering a fully hosted and tailored instance of Anonymous Epic practice management and EHR solutions to community-based organizations across the United States. The study began with a six-month period in which clinic advisors guided the development of the EHR tools (Gunn et al., 2023). The resulting suite of five tools included alerts and pre-populated order shortcuts designed to facilitate adjustments to care plans. These adjustments were intended to tailor care plans to better support patients with specific social barriers in managing uncontrolled diabetes or hypertension, e.g., by providing support around obtaining relevant medications, adhering to prescribed medications, or attending follow-up appointments. These tools were pilot tested in three clinics, one from October 2021 to August 2022 and two from January to August 2022. The tools, which included electronic alerts for SDH screening, Z codes (social risk diagnosis codes, i.e., ICD-10-CM diagnosis codes used to document SDH), medication adherence, care adjustment suggestions, and medication ordering, were then revised (Pisciotta et al., 2025) based on insights derived from a realist analysis of data from the formative and pilot phases prior to the trial phase. Mixed methods data collection (EHR use and qualitative data) was used to assess implementation of the tools in the pilot clinics: to identify whether, how, and by whom the tools were being integrated into workflows, and to explore why specific tools were or were not being used by clinic staff. Patterns of tool use and qualitative statements emerged that suggested a need to revisit the tools’ design.
To that end we returned to pre-pilot advisory committee data for clues into what was overlooked or misunderstood about how tools could meet clinical needs. Tensions (described below) between perceived potentiality of tools and clinical values surrounding patient care appeared to be driving our early findings.

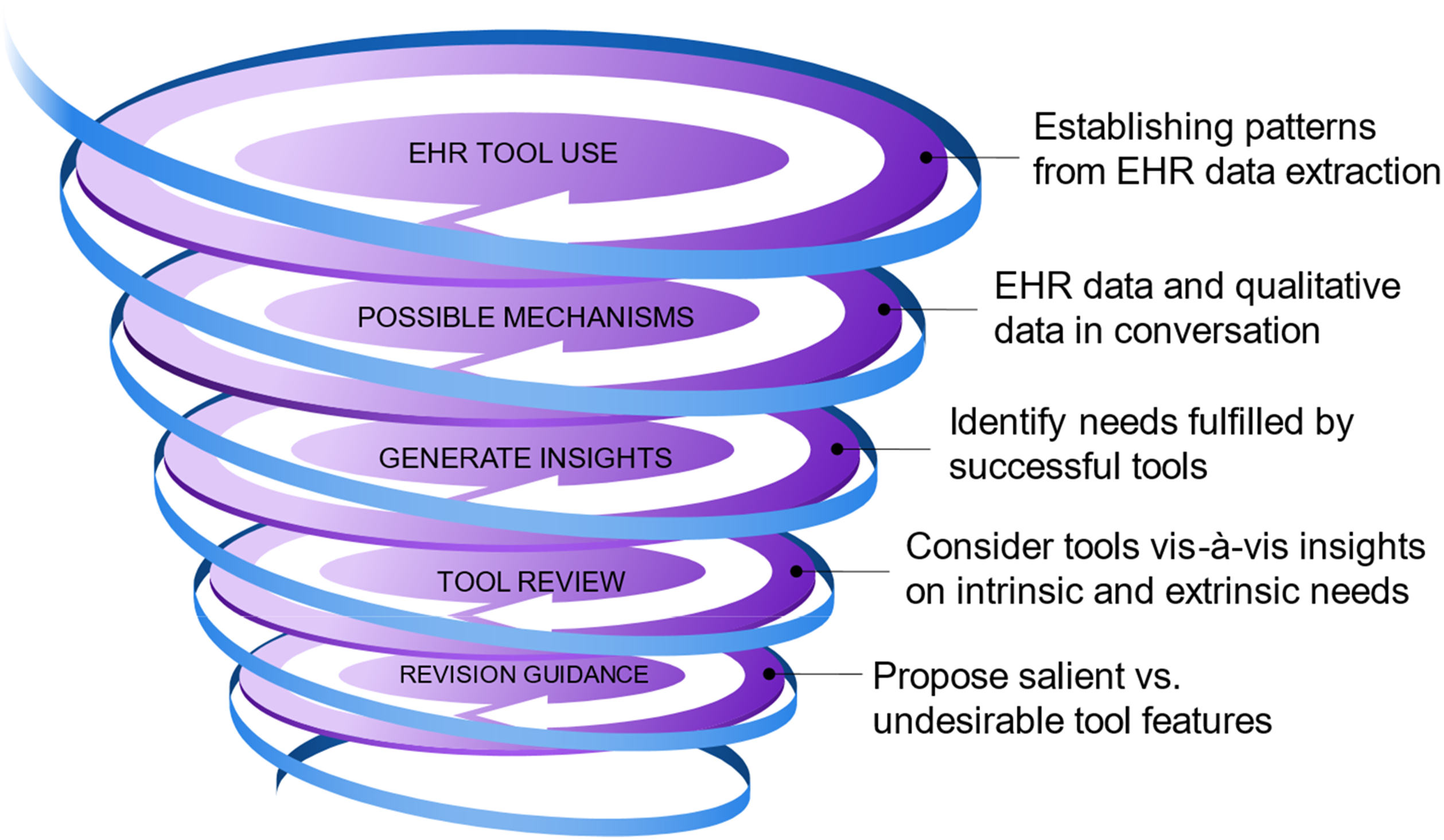

At the point of identifying conceptual tensions (mid-pilot stage), the retroductive analytic process to effect tool changes proved useful (Figure 1). EHR use data were extracted to track the frequency of tool alerts appearing to end users and actions taken in response to the alerts. Concurrently, initial qualitative data on user perceptions and reactions, experiences, and suggestions for improvements were analyzed. Together these data pointed to which tool elements were used and appreciated and why. Underlying, often unspoken needs and preferences now seemed central to the tools’ uptake and became the source of information for a second data coding system (see below). An iterative reengagement with the current pilot- and previous formative phase-data focused next on identifying the functions and needs fulfilled by the most-used tools, and considering whether these same needs were unmet by the other tools and could thus explain their lack of uptake. Retroductive Process Leading to Tool Revision Guidance.

Team Background & Data Collection

Members of the study team come to this work from varied academic backgrounds, including medical anthropology (SM, AB), sociology (MP), public health (SW), epidemiology (RG), statistics (BM, JD) and primary care (LG). We have years of experience studying social-medical integration in settings serving historically marginalized communities (Aceves et al., 2023; Bunce et al., 2023a, 2023b; Cottrell et al., 2019; De Marchis et al., 2020; Gold et al., 2017a, 2017b, 2018; Gottlieb & Alderwick, 2019; Gruß et al., 2021; Gunn et al., 2023; LaForge et al., 2018) and in implementation science in community-based clinics (Bunce et al., 2014, 2020, 2023a, 2023b; Gold et al., 2015a, 2015b, 2016, 2017a, 2017b, 2019, 2023; Gottlieb et al., 2016; Gruß et al., 2020; Haley et al., 2021; Torres et al., 2023). These experiences and perspectives informed our understanding of study data. Data collection involved mixed methods, combining qualitative interview transcripts and observation field notes with quantitative EHR data. The EHR data measured CDS tool use in the pilot clinics, while qualitative data explored the why and how behind these results as well as the tools’ impact on clinic workflow and communication among care teams and with patients. Following the realist approach, these data were iteratively engaged to explain tool use and generate insights into tool features that should be revised to meet user needs.

Data

Qualitative data collection took place over the course of 2 years, in parallel with preliminary EHR data extractions. (See Timeline 1: Qualitative Data Collection below.) Data sources were combined so that qualitative findings could enrich and explain quantitative data gaps and unanticipated findings (Figure 2).

Figure 2. Qualitative Data Collection Timeline.

Engagement Phase - Advisors

The study began with a six-month period of advisory input from OCHIN Inc. member clinic staff interested in guiding the CDS tools’ development. Staff were recruited at standing Anonymous Inc. member meetings and by directly contacting individuals known to be involved in social risk-related activities. Ultimately 12 individuals (Gunn et al., 2023) took part in advising the co-design of the study tools. At monthly meetings from November 2020–March 2021, attendance ranged from seven to 10 committee members plus study staff; two members attended only one meeting, and two others attended only two. The advisors were first presented with possible tool content and asked for feedback; later meetings’ discussions focused on where and how tool content could be most effectively displayed in the EHR (Gunn et al., 2023). Meetings were recorded with permission and key passages transcribed. Qualitative team members observed the meetings and documented committee interactions and reactions.

The committee meetings were intended to generate guidance on tool content and format. Individual interviews with 11 of the 12 committee members were also conducted to expand our understanding of the use of patient-specific social risk data in care planning, with a focus on how each staff member uses or would like to use such information in clinical decision-making. Interviews included: (1) a think-aloud regarding care decisions based on clinical vignettes; (2) current role of social risk data in care decisions and the decision-making process (perceived value and barriers to use); and (3) current or suggested care plan adaptations. The interviews were exploratory and semi-structured; specific questions and topics were tailored to the interviewee’s staff role and the content of committee meeting conversations. New ideas and cross-cutting themes (e.g., role of the tools in internal team communication) from the interviews were brought back to the committee for discussion.

Engagement Phase - Patients

The qualitative team conducted a 1-hour discussion with Anonymous Inc.’s Patient Engagement Panel, a group of individuals with experience receiving care at community-based health centers that consults on Anonymous Inc. research. The goal was to explore patient experiences and wishes around potential care adjustments due to social risks – how they feel about it, their prior experiences, how they would like their provider to raise the topic, and the type of adjustments to which they would be open. These conversations provided insight into how to support clinicians in conducting these conversations in ways that would be welcomed by patients.

Tool-specific suggestions and requests from all discussions and interviews were shared with the study’s Epic EHR programmer. Broader themes and ideas were brought to and debated at team meetings to inform the overall approach and decision-making that resulted in the pilot tools.

Pilot Phase

The CDS tools designed through the advisor process were tested in three pilot clinics for 8 months. Each clinic participated in a kick-off meeting, online trainings for providers and rooming staff, one formal mid-point check-in and ad hoc informal communications and meetings as the tools were rolled out. Quantitative data measured tool use patterns but there were limitations to what could be captured through the EHR. Data could tell if alerts had fired, indicating an existing need or desired action. Some alerts enabled clicking to accept, dismiss or take an action. Those clicks were trackable but rarely used, leaving no way to determine if users acted on the alert. Analysts attempted to match alerts to actions within the visit but were often unable to determine which actions were in direct response to the alerts.
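The matching limitation described above can be made concrete with a minimal sketch. It is illustrative only and is not the study's analytic code: the `Event` record, its field names, and the event names in the usage note are hypothetical, and real EHR extracts will be structured differently. The sketch pairs each alert with every action logged later in the same visit, which shows why such pairs remain candidates rather than confirmed responses.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One logged EHR event (hypothetical schema, for illustration)."""
    visit_id: str
    kind: str    # "alert" or "action"
    name: str    # hypothetical event name, e.g. "z_code_alert"
    minute: int  # minutes since the start of the visit


def candidate_matches(events):
    """Pair each alert with all actions logged later in the same visit.

    Returns a list of (visit_id, alert_name, [action_names]) tuples.
    The pairs are candidates only: temporal order within a visit
    cannot show that an action was taken because of the alert.
    """
    by_visit = defaultdict(list)
    for event in events:
        by_visit[event.visit_id].append(event)

    matches = []
    for visit_id, visit_events in by_visit.items():
        ordered = sorted(visit_events, key=lambda e: e.minute)
        for i, event in enumerate(ordered):
            if event.kind == "alert":
                later = [e.name for e in ordered[i + 1:] if e.kind == "action"]
                matches.append((visit_id, event.name, later))
    return matches
```

For example, a visit in which an alert fires at minute 2 and a problem-list addition is logged at minute 5 yields one candidate pair, while an alert with no later action yields an empty list. Neither result establishes whether the user acted on the alert, which is the gap the qualitative data were used to address.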

Qualitative data from the pilot clinics included: (i) field notes and transcripts from all meetings between the clinics and study team; (ii) text from all relevant email discussions; and (iii) transcripts from six interviews total at the three clinics (five providers and one patient care coordinator, all of whom would be expected to interact with the tools). The interviews asked about perceived value of knowing patients’ social needs in clinical decision-making, role of the EHR in supporting inclusion of SDH information in care planning, experience with and perception of the new tools, feedback on fledgling program theories, and reaction to initial ideas about how to revise the tools to better meet clinic needs.

Analysis: From Realism to Tool Revision

The COHERE study was designed to create and test EHR tools that support care teams in providing social risk-informed care (Gottlieb et al., 2019; Pisciotta et al., 2025). The first step was developing the tools through an advisory process; next, they were piloted to explore if, how and why the first iteration of the tools met clinic staff needs. Preliminary quantitative data from the pilot clinics indicated that only two of the tool components were used with any regularity: Z code alerts and medication adherence (Pisciotta et al., 2025). As the pilot tools were developed to meet the advisors’ stated wishes, we used a realist retroductive analytic process to clarify the intangible forces facilitating or challenging their uptake in practice. By identifying these underlying forces, we hoped to provide guidance on how to revise the tools to better meet staff needs.

The retroductive approach comprised the following steps: (1) Identify which elements of the tools were used and appreciated at the three pilot sites, using quantitative and qualitative data as described above. (2) Reengage with data (detailed below) to determine which functions and needs the effective tools fulfilled, as distinct from those with little or no uptake. (3) Revisit the unused tools to determine if they could be revised to meet the needed functions identified in step two. (4) Revise as possible or remove specific elements from the tools.

To identify the needs filled by the effective tools we first sought to understand the unseen forces underlying staff reactions to and use of the tools. To that end, during the study advisory phase we employed a traditional content analysis (MacQueen et al., 1998) approach to coding; codes included topics such as “Role responsibilities and divisions,” as well as workflow, EHR and data codes (see Appendix A for Content Codebook). Once the pilot EHR use and initial qualitative data indicated that many of the tools were not meeting clinic staff needs, we reengaged with the advisor phase and existing pilot qualitative data to look for elements potentially missed in that analysis. Reviewing the data with this new knowledge illuminated certain tensions between the views that clinic providers and staff held about the intent and potential impact of the tools and their values around good care (and how tools may impact that care). The tensions fell into three categories. The first tension was between a perceived need for EHR tools to facilitate SDH-related documentation for inter- and intra-team communication and funding versus a feeling that EHR prompts regarding care decisions threaten the user’s autonomy, sense of self as a care provider, and patient-driven care. The second was between a belief that social risk information is critical to care versus the sense that documenting this information (especially in the essentialized, categorical form required by the EHR) could bias care by placing potentially stigmatizing information in the patient’s chart. The third tension was between the desire to understand a patient’s life versus a desire to not experience information and documentation overload, as too much information becomes “noise”.

These tensions were the starting point for a retroductive approach to analysis, in which the data – and prior understandings from the advisor and pilot study phases – were repeatedly re-queried as framed by this new perspective. Re-querying took two forms. First, we revised the pilot interview guide to directly ask about the identified tensions. As clinic staff interviews were conducted toward the end of the pilot period, these new questions were asked of all interviewees (see Appendix B for Interview Guide). For example, related to the first tension between the perceived need for tools to help with documentation alongside concern about the impact on patient-centered care, we asked: “We think we’re hearing a concern about tools driving care rather than patient needs driving care. What would you want to see in the tools to facilitate your relationship with the patient and what potentially breaks the connection?” Interviewers then probed more deeply into possible tensions between the desire for pragmatic workflow and documentation support and provider autonomy and visions of what it means to provide good care. If these concepts arose at other points during the interview, they were explored at that time.

Concurrently, we created a new coding schema organized around the theorized tensions (see Appendix C for Tentative Theories Codebook). The new codes facilitated a more holistic, narrative approach to the data that enabled a greater understanding of the mechanisms underlying staff response to and use of the tools. We kept a few more traditional content codes (e.g., non-hypothetical discussion of pilot tools; clinic context) to enable retrieval of specific information. Following the example above, one of the new codes addressed the tension around documentation, communication, and patient-centered care:

Brief Definition: Good Care #2: CHC work as a calling among providers and paraprofessionals; contextualized care, patient-centered care and tools for ease without driving interactions.

When to Use: Can represent a tension between wanting and needing tools (for ease, for funding, for recording; info in right place at right time, better visualization) and sense of being as a provider (vision of care, of medicine and their role in it; supporting/encouraging patient autonomy). Tools for ease (reduce mental work)/tools to support adjustment; don’t need tools to know/act (threat to self): already doing the work of adjustment or it’s domain of others, need culture shift not tools; patient-centered care: focus on patient-clinician relationship as foundational with focus on patient priorities/autonomy and individualized care; humility around what provider can and cannot achieve. To flag data related to the distribution of work across care teams; acknowledges provider belief that including patient social risk in care is important yet doesn’t have to be them doing it; a way of sharing the load within a care paradigm that sees whole person care as important while protecting provider time and mental health.

All advisor and pilot phase data were then re-coded per the new schema alongside regular discussion among researchers of ideas that were surfacing. In this process, two new thoughts emerged about our data and analysis approach. First, during the initial analysis, even when a statement hinted at forces that might impact staff reactions to the new tools, we had inadequate information to translate those hints into guidance for tool development. Most data in this category were originally coded as “Patient” (an original content code that emphasized patient engagement and provider-patient relationship) and “Philosophical” (which focused on issues related to stigmatization, ethics and policy). Second, the original coding sometimes focused on a concrete aspect of the statement but missed the bigger idea beneath the surface. In many instances, re-coding the data with the broader, more theory-based codes helped bring the underlying tensions and trade-offs into focus, deepened understanding of the often-tacit needs of individuals and care teams in the CHC environment and pointed toward pragmatic recommendations that ultimately guided revision of the EHR tools for the trial phase.

Following the thread of the examples above, this statement from a CHC provider was originally coded at “Patient”:

And so kind of one approach I take with someone, with all patients really but particularly putting this thinking cap on for patients who I have suspicion for trauma is really that they’re the boss. I mean I tell this to my patients on the regular but “You’re the boss, what is it that I can do for you today?”

In another example, this statement from the director of a social risk screening program was originally coded at “Roles and Responsibilities” in reference to role distinctions within care teams:

I think there’s a lot of trust with most teams, … It’s like, “Oh I passed along the baton for the things that you can help with. I trust that you’re going to help with them. And if for some reason that’s not working you’ll let me know.” But otherwise I’m going to assume it’s done or at least in progress.

Once the advisory data were re-coded, and pilot data collection was complete and coded, we reviewed the three tension-based codes in their entirety for guidance on how best to revise the EHR tools. Our aim was to strike a balance between pragmatic staff needs and deeply held values about what it means to provide “good care.” We also cross-referenced these ideas with the explicit requests of staff from pilot clinics. The quotes above, for instance, spoke to the need for tools that did not appear to supersede patient and provider autonomy (quote #1) and the need for tools that supported teamwork and data sharing in an environment in which there is never enough time (quote #2). We took our data findings at face value (i.e., appreciated the congruence of EHR use data and qualitative statements as to why certain tools resonated with clinical staff) and chose not to ask research participants to theorize about non-useful tools. Instead, in our analytical process we reflected on tools that were not used to confirm and validate user experiences associated with effective tools. CDS tools that did not fulfill the needs and values of our participants were meaningful to our retroductive process insofar as they provided additional explanation of why certain tools were used. Ultimately, we generated the following tool revision guidance: CDS tools are useful because they support staff to document and communicate about patients’ social risk information and care adjustments already being made or considered. Rather than serving as reminders to make care adjustments (which some providers perceived as being told how to do their jobs), the tools should facilitate documentation of social risks in a way that each tool fulfills as many of the following functions as possible: 1) provides a standardized prompt/clue that acts as a shared shorthand and cue for action; 2) helps to distribute work; 3) facilitates ease and consistency of documentation; and 4) can be used for multiple purposes.

We again followed four retroductive steps to develop tool guidance: step 1, identify patterns in the form of used and appreciated electronic tools; step 2, engage and reengage with the data in search of the intangible needs and perceptions behind the tool use patterns; step 3, apply insights around the forces driving tool use to reassess and realign the lesser or unused tools to fulfill needs established in step 2; and, step 4, revise or remove elements based on findings (and prepare to imagine new explanations during the trial phase). In an example of how our learnings were applied, the tensions noted above proved useful as we explored why the “Z code alert” was popular, as follows. In retroduction step 1, quantitative data showed that clinic staff used alerts that facilitated a one-click addition of Z codes – ICD-10 diagnosis codes that identify non-medical influences on patient health, including social determinants of health – to the EHR problem list; interviewees enthusiastically endorsed this tool during interviews. Reviewing the formative and pilot data showed how the tool balanced competing values and needs, amplifying its utility (retroduction step 2). The Z codes relevant to each positive social risk were pre-populated in the alert and could be customized to a specific clinic’s needs; for example, in some cases a specific Z code was required for reimbursement purposes. This facilitated documentation by clinic support staff and removed the task from the provider workflow [distribution of work]. The one-click addition of Z codes expedited and simplified documentation, and their placement on the problem list ensured that providers would see them. The use of standardized Z codes avoided using time-consuming free text [ease and consistency of documentation], and the use of standardized diagnoses eased staff fears of using language that would negatively bias future care [standardized cues].

Once on the problem list, the Z codes acted as shorthand for challenges faced by the patient, facilitating communication between care team members and between provider and patient. The use of Z codes to make information visible rather than recommending specific care enabled flexible use by different care team members without imposing judgment regarding “proper” care [standardized cues]. The same Z codes could also be used to improve financial reimbursement (e.g., through risk stratification payments from Accountable Care Organizations) and to produce aggregate population-level data for advocacy [multiple purposes]. We applied a similar process to the second EHR tool from the study that participants used and appreciated and found that it fulfilled similar needs. These results were then translated into criteria by which we evaluated tool revisions and removal in preparation for the trial (retroduction steps 3 and 4).
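One way to picture how the four functions can serve as evaluation criteria is a simple checklist. The sketch below is illustrative and is not the procedure the study team used: the function labels paraphrase the guidance above, the `assess` helper is hypothetical, and no scoring threshold for revising or removing a tool is implied.

```python
# The four functions named in the tool revision guidance (labels paraphrased).
FUNCTIONS = (
    "standardized cue",
    "distribution of work",
    "ease and consistency of documentation",
    "multiple purposes",
)


def assess(fulfilled_by_tool):
    """Split the four functions into those a tool fulfills and those it does not.

    `fulfilled_by_tool` is a set of labels drawn from FUNCTIONS, as judged
    by the analysts; this helper only organizes that judgment.
    """
    fulfilled = [f for f in FUNCTIONS if f in fulfilled_by_tool]
    unmet = [f for f in FUNCTIONS if f not in fulfilled_by_tool]
    return fulfilled, unmet


# The Z code alert, as described above, fulfills all four functions.
z_code_alert = set(FUNCTIONS)
print(assess(z_code_alert))

# A hypothetical tool that only distributes work leaves three functions unmet.
print(assess({"distribution of work"}))
```

Listing unmet functions, rather than producing a score, keeps the output aligned with the guidance that each tool should fulfill as many of the functions as possible, and leaves the decision to revise or remove a tool to the research team.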

Concluding Discussion

In the context of conducting social risk-related CDS tool design in preparation for trial, we used a realist-informed approach that emphasized retroduction. Though realist evaluation is typically applied to understand how, why and for whom an intervention succeeds or fails overall (Van Belle et al., 2023), in this case study, we applied retroduction to a bounded, mid-study task. It proved useful in identifying below-the-surface forces that affected tool desirability and use, which then guided the revision of an EHR tool intervention prior to its formal trial.

The initial review of pilot data suggested that many of the tools designed in the advisory process were not used by staff in their daily workflows. Although the advisory process generated meaningful direction for the original tool design, and pilot study participants (some of whom participated in the advisory process) described being eager to use social risk data, the piloted tools were not embraced as expected. Realist methods enabled us to broaden our inquiry to how CDS uptake was shaped by the intersection of clinic context and culture (e.g., workflows and expectations around care team roles and responsibilities), care team relationships and modes of communication, patient burdens, facility with and desire to use EHR tools, and motivations to deliver social care.

Using realist retroductive analysis helped shift our attention beyond surface explanations for tool (non-)use (e.g., “not enough time” or “too many clicks”) toward the impact of staff values, motivations and expectations for “good” care on their reactions to the tools. Acknowledging these forces yielded insights that deepened understanding of the pragmatic needs of end-users. To test these theories, questions related to the new insights were added to interviews conducted as part of the full realist evaluation, and our codebook was revised to account for emerging understandings and program theories. By re-engaging with advisory and pilot data, we saw that care teams gravitated toward and appreciated tool elements that were more likely to elevate patient needs easily, effectively and consistently in the EHR, distribute clinical work, provide useful clues about patients’ social needs to inform care, and be used for multiple purposes.

A valuable piece of the retroductive process in this case was iteration from data content coding to theory coding. Data that had first been coded as “Patient,” “Roles & Responsibilities” and “Philosophical,” for example, took on greater depth in the new coding scheme. The concept of “CHC Calling” drew attention to previously unseen care team values about relationships and patient and provider autonomy, and the tensions between those values and having EHR tools guide care recommendations. The process did not simply involve applying new codes to old and incoming data but instead enabled a generative approach to re-exploring those data segments. Once we had done so, circumstantial and relational themes surrounding which tool features appealed and worked for users became clear enough to issue guidance and revise the tools for trial (as described in Pisciotta et al., 2025). We recommend that researchers engaging in realist evaluation, particularly across multiple phases of intervention implementation, intentionally apply retroduction to mid-study development tasks. In this way, realist principles can be applied in more traditional ways (for program synthesis and review) while simultaneously guiding ongoing intervention design and impact. Under real-world circumstances in which intervention studies place demands on already burdened clinical teams, the steps of retroduction can be harnessed to produce effective tools prior to implementation and, we hope, thereby contribute to higher tool acceptance and sustainment.

Supplemental Material

Supplemental Material - Applying Realist Retroduction to EHR-Based Clinical Decision Support Tool Development

Supplemental Material for Applying Realist Retroduction to EHR-Based Clinical Decision Support Tool Development by Suzanne Morrissey, Arwen Bunce, Jenna Donovan, Brenda McGrath, Laura Gottlieb, Maura Pisciotta, Shelby Watkins, and Rachel Gold in International Journal of Qualitative Methods

Footnotes

Acknowledgments

The research reported in this work was powered by PCORnet®. PCORnet has been developed with funding from the Patient-Centered Outcomes Research Institute® (PCORI®) and conducted with the Accelerating Data Value Across a National Community Health Center Network (ADVANCE) Clinical Research Network (CRN). ADVANCE is a Clinical Research Network in PCORnet® led by OCHIN in partnership with Health Choice Network, Fenway Health, University of Washington, and Oregon Health & Science University. ADVANCE’s participation in PCORnet® is funded through the PCORI Award RI-OCHIN-01-MC.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this publication was supported by the National Institute on Minority Health and Health Disparities of the National Institutes of Health under Award Number RO1MD012886. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Ethical Statement

Supplemental Material

Supplemental material for this article is available online.

References
