Abstract

The OPTIMUM trial is a multisite pragmatic randomized clinical trial of an adapted Mindfulness-Based Stress Reduction (MBSR) program for people with chronic low back pain in primary care settings, provided via telehealth group medical visits. Researchers conducted fifty-nine exit interviews at the end of the intervention to inform the ongoing conduct of the trial and to better understand patients’ experiences. This manuscript describes a pragmatic approach to the qualitative analysis of exit interviews within a pragmatic clinical trial. The analysis included three important pivots. First, researchers conducted a process evaluation using a rapid approach called the Lightning Report method. Second, team-based approaches to qualitative analysis were utilized to pair experienced and inexperienced qualitative researchers. Third, based upon principles from Big Qual methodology, a codebook was developed and applied to provide an aerial overview of the data in preparation for more in-depth exploration. Based upon these pivots, the process evaluation provided actionable results in a timely fashion, team members increased analytical skills, and multiple analyses are being applied to the data set. By describing the pragmatic decisions to pivot approaches to qualitative analysis, this manuscript contributes to existing literature regarding rapid qualitative analysis methods for process evaluation in pragmatic clinical trials, team-based mentorship in large trials, and applications from Big Qual for large data sets.

Keywords

Background/Introduction

The OPTIMUM trial (Optimizing Pain Treatment in Medical Settings Using Mindfulness) is a large, multisite pragmatic clinical trial of an online, adapted Mindfulness-Based Stress Reduction (MBSR) program for primary care patients with chronic low back pain (Greco et al., 2021). Pragmatic clinical trials (PCTs) are designed to measure the effectiveness of evidence-based interventions in real-world settings (Weinfurt, 2017). Ideally, PCTs are embedded in learning health systems designed to incorporate findings into practice and inform further research (Tuzzio & Larson, 2019). In this manner, PCTs embedded in learning health systems expedite implementation of evidence-based care in clinical settings. As opposed to randomized clinical trials, PCTs are conducted across varied settings, among more heterogeneous populations, and they are flexibly adherent to intervention protocols (Weinfurt, 2017). This variability is compounded by the often complex, multi-level nature of health services interventions.

This manuscript outlines the qualitative methods adopted in the OPTIMUM trial by a team of researchers based at three academic research centers and applied to exit interviews conducted with participants in the intervention arm of a large PCT (see Figure 1). The initial qualitative analysis plan was to conduct one thematic analysis of participant exit interviews to better understand participant experience. In this manuscript, we describe three pivots in our qualitative analysis plan. First, we describe why and how we applied the Lightning Report method to these data. Second, we provide details regarding the mentorship process and training plan for research staff. Third, we apply principles of Big Qual to the project so that the breadth and depth of the data can be more fully appreciated.

Figure 1. Three pivots to the analysis plan of participants’ exit interviews in the OPTIMUM trial.

Process Evaluation and the Development of Rapid Qualitative Analysis Methods

The first pivot to our qualitative analysis plan was the decision to conduct a process evaluation by means of a rapid qualitative analysis. Rapid qualitative analysis methods are relatively new forms of qualitative analysis, and they are designed to give timely, actionable feedback in a rigorous and reproducible manner (Johnson & Vindrola-Padros, 2017; Vindrola-Padros & Johnson, 2020). These methods have been utilized during public health emergencies to inform process evaluation in clinical trials (Horwood et al., 2022; Suchman et al., 2023). Given inherent variability and flexibility within PCTs, there is a need for process evaluation methods that characterize this variation, inform changes in intervention protocols, contextualize study outcomes and facilitate translation of research findings into practice. Standardized methods of defining, conducting, and reporting process evaluation within PCT designs are lacking (French et al., 2020). This manuscript contributes to the development of process evaluation methods in PCTs by describing the application of the Lightning Report method to participant exit interviews.

Team-Based Science

The second pivot to our analysis plan was the decision to mentor research team members in thematic analysis. The work of Fernald and Duclos, McAlearney and O’Cathain demonstrates that to maintain rigor and reproducibility, team-based qualitative methods require planning and training (Fernald & Duclos, 2005; McAlearney et al., 2023; O’Cathain et al., 2014). This manuscript contributes to the literature on team-based qualitative analysis methods by describing the process of mentoring team members throughout the analysis.

Lessons from Big Qual

The third pivot to our analysis plan was to apply lessons from Big Qual to summarize the breadth and depth of the data set (Brower et al., 2019; Chandrasekar et al., 2024; McAlearney et al., 2023). Brower defines Big Qual as “data sets containing either primary or secondary qualitative data from at least 100 participants analyzed by teams of researchers, often funded by a government agency or private foundation, conducted either as a stand-alone project or in conjunction with a large quantitative study” (Brower et al., 2019). Our study included fewer than 100 participants (59 total), but the method was still a helpful model for our work. Big Qual methods are based on the theory that when working with large data sets and large research teams, team members need to be able to understand and build on the qualitative analyses of their team members. In this manner, portions of the same dataset may be analyzed by different members of the team at multiple points in the life of the study and with varied research questions applied to the data. Qualitative data management software applications such as ATLAS.ti (version 24) can facilitate this type of team-based approach. This manuscript contributes to the literature on large qualitative analysis projects by describing the development and application of a codebook to a large data set in preparation for multiple further analyses.

In summary, this manuscript adds to existing methodological knowledge by outlining an iterative, team-based approach to the analysis of a large data set within an ongoing PCT. Initial findings, actionable items, codebook development, and how the process informed future qualitative analysis plans are delineated.

Methods

The Design of the Pragmatic Clinical Trial

In the OPTIMUM trial (4UHAT010621-02), there were 450 participants recruited from three primary care settings: a safety net hospital in Boston, Massachusetts; a federally qualified health center in central North Carolina; and a large academic health system in Pittsburgh, Pennsylvania. Participants were randomized to usual care or usual care plus an online, adapted MBSR program. The intervention was offered online via telehealth and included a medical provider and an MBSR instructor in each group. Groups met weekly for 8 weeks, and patient-reported outcome measures were collected at baseline, following the 8-week program, and at 6- and 12-month follow-up. The study was approved under a single Institutional Review Board of the University of Pittsburgh.

A Community Advisory Board consisting of nine individuals with lived experience with chronic low back pain, mindfulness instructors, pain advocacy group representatives, and individuals with experience working in primary care met monthly beginning in the second year of the trial (Morone et al., 2024). The Community Advisory Board provided ongoing feedback on study recruitment and retention procedures, data interpretation, dissemination of findings, and planning for next steps.

The standard MBSR program was adapted to a virtual group medical visit program. Adaptations included brief visits with a health care provider within each session, chair-based mindful movement, and the removal of a retreat day. In addition, the 8 weekly sessions were 2 hours long in place of the standard MBSR curriculum which is 2.5–3 hours long.

Initial Interview Guide Development

Researchers used an interview guide from a previous randomized controlled trial of MBSR as a first draft. Researchers from the three sites then met on multiple occasions to iteratively design the semi-structured interview guide (Appendix A). The exit interviews were part of the original trial design, as was the consent process. The purpose of the interviews was to gather information from intervention participants not captured by patient-reported outcome measures. Based on initial qualitative analysis, the team decided to modify the interview guide. The second guide (Appendix B) placed additional emphasis on the ways in which mindfulness was or was not incorporated into daily life.

Exit Interviews

Individual participants from the first six cohorts at each study site were invited by phone, text, and email to complete an exit interview via the videoconferencing platform Zoom upon completion of the intervention. Verbal consent was obtained to record the interviews. Trained researchers from the analysis team used a semi-structured interview guide to prompt participant reflection regarding their experiences learning mindfulness skills in an online group medical visit format. At one site, interviews were conducted by a staff member of a qualitative research service at the site. This interviewer was unknown to the participants (to mitigate potential bias) and was supervised by a study investigator. At the other sites, some participants knew their interviewer due to the interviewer’s role in study recruitment and retention. The different types of interviewers introduced variation in collection methods, which could affect results. However, in a large multi-site trial it was impractical for all exit interviews to be conducted in the same manner. Parts of the analysis occurred simultaneously with interview collection, which could bias interviewers. The interview guide helped to mitigate this influence. Interviews lasted between about 30 minutes and 1 hour. There were no repeat interviews. Researchers conducted 59 interviews. Interview recordings were transcribed by an outside company and uploaded to ATLAS.ti for analysis. Transcripts were not returned to participants, and participants did not provide feedback on the findings because there was concern about the frequency with which participants were already asked to provide data (monthly for 12 months). Interviews continued until data saturation was reached (Saunders et al., 2018).

Team Formation

From the OPTIMUM research team of about thirty members, a core team of six was assembled. The analysis team included three female Assistant Professors experienced in qualitative analysis (IR, CL, JLB) who all trained in research methods at the University of North Carolina at Chapel Hill and who have collaborated on previous projects. Three team members with no experience in qualitative analysis joined the team: a Research Specialist (EH), a Research Coordinator III (RR), and a Senior Research Project Manager (JB). No team members had prior relationships with any exit interview participants before they were enrolled in OPTIMUM.

First Pivot to Rapid Qualitative Analysis Method

One co-investigator (JLB) began by reading ten transcripts. She noted that the interviews contained information salient to the way the trial was being conducted, that commonalities existed in the ways participants from different study sites described practicing mindfulness, that a range of socio-economic statuses were represented, and that the ongoing exit interviews were a rich and expanding data source. After discussion with the team, it was decided to first conduct a rapid analysis of the exit interviews since they contained information that could inform the conduct of the clinical trial.

The Lightning Report Method

The team decided to utilize a rapid qualitative method called the Lightning Report method to analyze twenty-nine completed interviews and to provide timely feedback to the full research team (Brown-Johnson et al., 2020). The Lightning Report method utilizes a structured form (see Appendix C) to extract and synthesize data (Brown-Johnson et al., 2020). The Lightning Report structure includes three questions: What is working? What needs to be changed? What is next (i.e., recommendations)? While the original Stanford Lightning Report method was designed to be used before, during, and after data collection, the team adapted the method to be used with an existing data set. Rather than taking notes during the data collection episode, two team members were assigned to take notes using the Lightning Report format for each interview transcript.
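As a rough illustration of how notes structured around the three Lightning Report questions might be pooled across reviewers, the following Python sketch may be helpful. It is not the form in Appendix C or the team's actual workflow: the class name `LightningNote`, the function `synthesize`, the field names, and the example entries are all hypothetical placeholders chosen for this example.

```python
from dataclasses import dataclass, field


@dataclass
class LightningNote:
    """One reviewer's notes on one transcript (hypothetical structure)."""
    transcript_id: str
    reviewer: str
    working: list[str] = field(default_factory=list)       # What is working?
    needs_change: list[str] = field(default_factory=list)  # What needs to be changed?
    next_steps: list[str] = field(default_factory=list)    # What is next (recommendations)?


def synthesize(notes):
    """Pool notes into one report, dropping exact duplicates in first-seen order."""
    report = {"working": [], "needs_change": [], "next_steps": []}
    for note in notes:
        for section in report:
            for item in getattr(note, section):
                if item not in report[section]:
                    report[section].append(item)
    return report


# Illustrative entries only; these are not quotations from the study data.
notes = [
    LightningNote("T01", "reviewer_a", working=["welcoming staff"], needs_change=["more breaks"]),
    LightningNote("T01", "reviewer_b", working=["welcoming staff"], next_steps=["send text reminders"]),
]
report = synthesize(notes)
```

Keeping one record per reviewer per transcript preserves who noted what, which may make it easier for two reviewers of the same transcript to reconcile their notes before the pooled report is shared.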

Organizing the Team and Conducting the Rapid Analysis

Given the size of the dataset, the time sensitivity, and the interest of less experienced researchers, the team was structured in dyads pairing each experienced qualitative researcher with a researcher new to qualitative research. The three team pairs were assigned 10, 10, and 9 transcripts respectively. Team members were dispersed geographically and met via online teleconferencing technology.
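The manuscript does not state how transcripts were allocated to pairs. As one possible sketch, a simple round-robin deal of 29 transcripts across three dyads yields workloads of 10, 10, and 9. The function name `assign_transcripts`, the transcript IDs, and the dyad labels below are hypothetical.

```python
def assign_transcripts(transcript_ids, dyads):
    """Deal transcripts to dyads round-robin so workloads differ by at most one."""
    assignment = {dyad: [] for dyad in dyads}
    for i, transcript_id in enumerate(transcript_ids):
        assignment[dyads[i % len(dyads)]].append(transcript_id)
    return assignment


# 29 placeholder transcript IDs and three placeholder dyad labels (assumptions).
transcripts = [f"T{n:02d}" for n in range(1, 30)]
dyads = ["dyad_1", "dyad_2", "dyad_3"]

assignment = assign_transcripts(transcripts, dyads)
workloads = [len(assignment[d]) for d in dyads]
```

In practice a team might prefer to balance by study site or interview length rather than by count alone; the round-robin rule is used here only because it is easy to verify.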

Organization and Workflow of Rapid Qualitative Analysis.

Second Pivot to Thematic Analysis: Team Dyad Process

After completing the rapid qualitative analysis, team members consulted with an experienced qualitative researcher and educator at the Odum Institute at the University of North Carolina at Chapel Hill to plan further analysis in ATLAS.ti, and to provide qualitative analysis training for team members. Published examples of large team-based qualitative analysis projects also provided guidance (Fonteyn et al., 2008; Guise et al., 2017; Laditka et al., 2009; McAlearney et al., 2023; Schexnayder et al., 2023). The analysis team approached the dataset with the research question, “What was this experience like for participants?”, and they decided to conduct a thematic analysis (Braun et al., 2019).

The six team members (three pairs) met jointly via tele-conferencing technology to generate inductive codes by examining initial transcripts line by line. Then, each pair developed their own working style with a combination of joint analysis and individual analysis followed by joint discussion. Pairs generated inductive codes from their initial ten transcripts. The six team members then discussed and combined codes over multiple tele-conferencing sessions. The seventh team member, the Principal Investigator (NM), was kept abreast of the analysis. Ultimately, a codebook containing twenty-two codes was created. Three experienced researchers (IR, CL, JLB) double-coded five transcripts to confirm their consistent use of the codebook. At this point, the data set had grown from 29 to 59 transcripts, and the remaining transcripts were divided among dyads to complete coding. Once the researchers new to qualitative research were consistently applying the codebook correctly, they coded the remaining transcripts independently. Questions were brought to the team for discussion.
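The manuscript does not report an agreement statistic for the double-coded transcripts, and consistency was confirmed through discussion. Purely as an illustration of how a team might locate segments worth discussing, the sketch below compares two coders' code sets per segment using Jaccard overlap. The function names, the segment IDs, the code labels, and the 0.5 threshold are all assumptions and are not drawn from the study's codebook.

```python
def segment_agreement(codes_a, codes_b):
    """Jaccard overlap of two coders' code sets for one segment."""
    a, b = set(codes_a), set(codes_b)
    if not a and not b:
        return 1.0  # neither coder applied a code, so they agree
    return len(a & b) / len(a | b)


def flag_for_discussion(coder_a, coder_b, threshold=0.5):
    """Return segment IDs whose agreement falls below the threshold."""
    flagged = []
    for segment in sorted(coder_a.keys() | coder_b.keys()):
        score = segment_agreement(coder_a.get(segment, []), coder_b.get(segment, []))
        if score < threshold:
            flagged.append(segment)
    return flagged


# Hypothetical code labels applied by two coders to the same two segments.
coder_a = {"T01:s1": ["telehealth", "group support"], "T01:s2": ["pain coping"]}
coder_b = {"T01:s1": ["telehealth", "group support"], "T01:s2": ["daily practice"]}

flagged = flag_for_discussion(coder_a, coder_b)
```

A list of flagged segments is meant to prompt conversation rather than to replace it; in a mentorship-oriented process such as the one described here, the discussion itself is the point.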

Third Pivot: Lessons from Big Qual

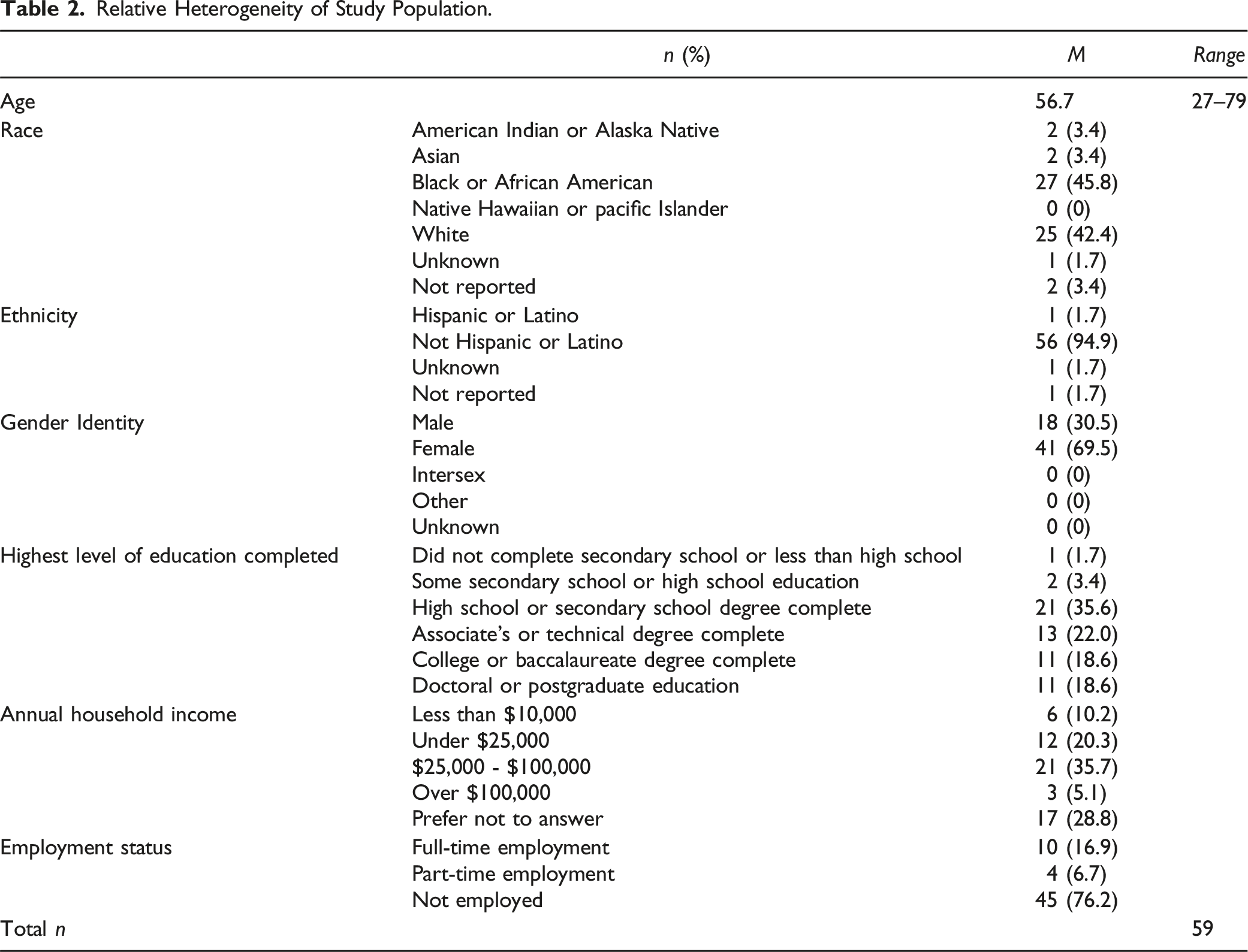

Relative Heterogeneity of Study Population.

To widen the analysis team, the codebook and coded transcripts were shared with the full research team and potential lines of further analysis discussed. To date, several small teams have formed to analyze different coded segments of the transcripts. In this manner, the team pivoted from a single thematic analysis of the data set to utilizing a codebook to first code the data and then invite multiple analyses.

When analysis revealed that data saturation had been reached, the team decided to stop collecting exit interviews. At the time the exit interviews ended, there were 59 transcripts. Remaining transcripts were divided among team members to code independently.

Results

The results of applying these three methodological approaches (rapid analysis, team-based training, and Big Qual) are presented here. The findings from the Lightning Report analysis are presented in Appendix D.

The use of a rapid qualitative method resulted in the extraction of actionable data that informed the ongoing clinical trial. For example, based upon these findings, the team implemented the following action items:

They explained the term mindfulness in more detail and shared the evidence base for mindfulness meditation and chronic low back pain. Additionally, they emphasized that mindfulness was an active treatment that required time and practice to see benefits. Questions and further discussion about how to describe mindfulness occurred during full team meetings and during joint research assistant meetings with the Project Manager. Other suggested modifications were discussed as a full team and implemented at each site.

The team welcomed increased sensitivity around the term “yoga”, the reminder to allow space for group member interaction, the importance of identifying researchers and their roles, and the value of building in break time.

The team agreed to emphasize that mindfulness is an active treatment that requires practice to see benefits. During full team meetings, the team discussed using the term “mindfulness meditation” to avoid confusion with the words medi-tation and medi-cation. Lastly, calendar invites and additional text reminders to participants of upcoming sessions were implemented.

Team-Based Thematic Analysis

Codebook Containing Program Components, Participant Characteristics and Intervention Impacts.

After applying the codebook to the complete dataset, the research team reflected on the experience. Members noted that the dyads helped form relationships early in the clinical trial, and that the members of each dyad learned from one another. Having dyads review coded transcripts together took additional time. However, that initial investment has continued to benefit the full team. The junior qualitative researchers applied the skills learned during this project to other datasets in the OPTIMUM trial.

Big Qual Theory Results

When the full team of approximately 30 researchers was presented with the codebook, they found it to be a helpful tool to conceptualize the breadth and depth of the data. Thoughtful conversation ensued, spawning further research questions. Some future research questions to explore within the exit interview dataset include: How has the intervention changed, or not changed, people’s perceptions and behaviors? Are participants learning and practicing mindfulness? What does that look like for them? In what way is telehealth mindfulness training different from in-person training? What are potential mechanisms of action in the relationship between mindfulness and chronic pain? What is the nature of people’s religious experience as compared to mindfulness? How does socioeconomic status influence people’s experiences with the intervention? Stemming from these discussions, more researchers have joined the analysis team, and several manuscripts are forthcoming. For example, researchers applied a reflexive thematic analysis (Braun et al., 2019; Nowell et al., 2017) to explore how online group mindfulness training differed from in-person training (Barnhill et al., 2025). Other researchers are applying a thematic analysis to examine quotes pertaining to confidentiality and the role of the provider in the group.

Next steps include importing demographic data into ATLAS.ti to enable sub-analysis by race, ethnicity, age, gender, and other characteristics. Future findings at the conclusion of the OPTIMUM trial will also present opportunities for mixed methods analysis.

Discussion

Our approach to the analysis of participants’ exit interview data in the OPTIMUM trial was pragmatic and it was built upon qualitative methods scholarship pertaining to rapid methods, team-based training, and lessons from Big Qual. The rapid analysis was an efficient means to review a large quantity of qualitative data and extract key information. This method is well suited for a process evaluation that seeks to understand what is working about the clinical trial, what is not working, and what needs to be changed. Key findings from the rapid qualitative analysis informed the conduct of the trial (clarifying expectations and trial details during the consent process, identifying and including research staff who are observing the intervention), and trial retention (the importance of respectful and welcoming research staff). Findings from the rapid analysis informed implementation of the trial such as sending additional reminders and creating additional breaks during the intervention. Since PCTs are a relatively new trial design, such feedback is necessary to better understand the ethical and implementation components of PCTs (French et al., 2020; Nicholls et al., 2018, 2019).

While Brower defines Big Qual as team-based analysis of data sets containing at least 100 participants, there are similarities between Big Qual studies and this analysis of 59 participants (Brower et al., 2019). For example, a structured approach including small teams and frequent communication allowed for the decentralization of the analysis. In contrast to large teams such as McAlearney’s, which contained 25 coders, the relatively small size of this project lent itself to one-on-one mentorship and co-creation of the codebook (McAlearney et al., 2023).

Future Analysis

White, employed, upper-middle-class participants have historically been overrepresented in clinical research (Kelsey et al., 2022; Yancey et al., 2006). The goal of a PCT is to test an intervention under usual conditions, including a more representative study sample (Tuzzio & Larson, 2019). In this context, the addition of qualitative methods provided insight into the experiences of underrepresented populations. This was evident in the OPTIMUM trial, which comprised patients from safety-net community clinics and academic centers. The exit interview sample consisted primarily of unemployed persons, of whom half completed high school and at least one third had a household income of less than $25,000 per year. Future qualitative analysis of these data will explore the role of socioeconomic status in MBSR training. Since half of the sample identified as Black or African American, there is an opportunity for further qualitative analysis to better understand the experiences of this historically underrepresented group.

Limitations

While the rapid analysis method was useful, it provided only a cursory understanding of the rich and complex information contained in the exit interviews. We conducted a rapid analysis of 29 transcripts. We did not conduct a second rapid analysis once the new interview guide was adopted. There could have been actionable data present in the remaining 30 transcripts that would have changed the conduct of the clinical trial, in particular regarding the inquiry into the virtual nature of the intervention. Regarding the team-based structure, including an analyst from all three sites (instead of two) would have strengthened the analysis. Adjusting the approach by rotating pairs of researchers would have given inexperienced researchers more varied experience and reduced potential bias. Additionally, the team-based approach to codebook development was time consuming. Ultimately, the full benefit of the Big Qual approach lies in the future analysis that it will support. The extent of that research is yet to be determined.

Conclusion

This manuscript adds to the body of knowledge on team-based qualitative analysis methods in large multi-site pragmatic clinical trials by describing a pragmatic stepwise approach to qualitative analysis involving three key pivots. The pivot to a rapid analysis method for the process evaluation resulted in changes to the ongoing clinical trial. The team-based training method built capacity among team members and generated additional insights. Lastly, Big Qual theory informed the creation of a coded dataset in preparation for multiple further analyses.

Supplemental Material

Supplemental Material - Pragmatic Approaches to Team-Based Qualitative Analysis of Study Participants’ Exit Interview Data in a Pragmatic Clinical Trial

Supplemental Material for Pragmatic Approaches to Team-Based Qualitative Analysis of Study Participants’ Exit Interview Data in a Pragmatic Clinical Trial by Jessica L. Barnhill, Natalia E. Morone, Christine Lathren, Elondra Harr, Jose E. Baez, Ruth Rodriguez, Paula Gardiner, Carol M. Greco, Holly N. Thomas, Susan A. Gaylord, and Isabel J. Roth in International Journal of Qualitative Methods

Footnotes

Acknowledgements

Dr Paul Mihas, Odum Institute, University of North Carolina at Chapel Hill for qualitative analysis consultation. The Community Advisory Board for the OPTIMUM study. The National Institute for Complementary and Integrative Health.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported within the National Institutes of Health (NIH) Pragmatic Trials Collaboratory through the NIH HEAL Initiative under award numbers UG3 AT010621 and UH3 AT010621 administered by the National Center for Complementary and Integrative Health (NCCIH). This work also received logistical and technical support from the PRISM Resource Coordinating Center under award number U24 AT010961 from NIH through the NIH HEAL Initiative, and from the NIH Pragmatic Trials Collaboratory Coordinating Center under award number U24 AT009676 from NCCIH, the National Institute of Allergy and Infectious Diseases (NIAID), the National Cancer Institute (NCI), the National Institute on Aging (NIA), the National Heart, Lung, and Blood Institute (NHLBI), the National Institute of Nursing Research (NINR), the National Institute of Minority Health and Health Disparities (NIMHD), the National Institute of Arthritis and Musculoskeletal and Skin Diseases (NIAMS), the NIH Office of Behavioral and Social Sciences Research (OBSSR), and the NIH Office of Disease Prevention (ODP). The content is solely the responsibility of the authors and does not necessarily represent the official views of [Institute, Center, or Office providing funding or oversight] or NCCIH, NIAID, NCI, NIA, NHLBI, NINR, NIMHD, NIAMS, OBSSR, or ODP, or NIH or its HEAL Initiative.

Ethical Statement

Supplemental Material

Supplemental material for this article is available online.

References
