Abstract

Pedagogical research on the learning experiences of students with severe speech and physical impairment (SSPI) is sparse. This may be due to a lack of research methodology literature concerning students with SSPI, as they are difficult to find and there are barriers to their participation in mainstream research. Hence, method development is especially important regarding these students, who cannot participate when traditional inquiry methods are used. This article’s objective is therefore to advance method development by means of a retrospective investigation. The empirical findings consist of documented experiences from a previous study of students with SSPI (henceforth, “literacy study”). A computer-assisted email dialogue technique was developed in the literacy study’s pilot study and eventually used in the main study to investigate the students’ experiences of their literacy development. The aim of this study is to retrospectively and critically examine the scientific trustworthiness of a methodological research approach based on an email dialogue technique used exploratively in the literacy study to investigate the students’ literacy development, grounded in their own experiences, and to contribute methodological experiences gained from that study regarding the relationship between the use of verification strategies and checking techniques. The computer-assisted email dialogue approach was necessary because the few participants were spread over great geographical distances. The approach was developed as an explorative and flexible inclusive research design and was used within the tradition of participatory research. The students in both the pilot and main studies (8–16 years of age) were treated as collaborators rather than research subjects. Both the verification strategies and the checking techniques related to the trustworthiness criteria were found to be important for trustworthiness.
The main conclusion, based on our experiences in this retrospective investigation, is that it is necessary to continuously and thoroughly focus on trustworthiness issues throughout the research process to obtain trustworthy findings.

Introduction and Background

Based on ideals of equity, there is an urgent need to develop research methods that involve young people with severe disabilities in research projects, especially when their chances of participating in traditional research are small. One such group is students with severe speech and physical impairment (SSPI) who use augmentative and alternative communication (AAC). It is often challenging for researchers to communicate with students who use AAC, making research with them demanding and jeopardizing data trustworthiness. Additionally, when research is being designed for this extremely small student group, which qualifies as belonging to the hard-to-reach research participation category (Chamberlain & Hodgetts, 2018), several other challenges must be addressed, especially in sparsely populated countries with great geographical distances, such as Sweden. Experiences documented from a previous study (Atterström et al., 2021), with a longitudinal research design and with participants in Sweden who have SSPI, serve as the empirical basis for the present article. The previous study (henceforth, “literacy study”) consists of the main study (Atterström et al., 2021) as well as the pilot study preceding it. Experiences from the pilot and main studies will to some extent be addressed together, but mostly separately. Based on the experiences from the pilot study and on Shacklock and Thorp’s (2005) work, a longitudinal research design was considered the best option for gathering data. One-to-one “physical” data collection occasions were deemed likely to negatively affect participants’ everyday life situations, increase the risk of study dropouts, and yield only a small amount of data from each interview. A traditional interview study with physical meetings, which would inevitably entail time-consuming travel and substantial costs, especially when longitudinal research was required, was impossible due to economic constraints.
Instead, the data collection was based on computer-assisted email dialogues (Dahlin, 2021; James, 2016), which would give the students opportunities to answer more independently and to give more nuanced answers as they were offered reflection time.

As emphasised above, there are several challenges to involving students with SSPI in pedagogical research studies. Interestingly, these students are often part of medical or psychological studies when they visit clinics for treatment (Sinclair, 2004). Sinclair (2004) suggested that this decreases their motivation to participate in other research due to “consultation fatigue”. In such health-related studies, the main focus is often on the participants’ impairment or disability. Furthermore, students with SSPI are often regarded as research subjects or even research objects in such research (Snelgrove, 2007), which may also negatively affect their motivation to participate and requires the use of well-thought-out strategies to motivate them to participate in a study. While medical and psychological research into their health issues is doubtless of great importance for this student population, these students are underrepresented in other studies. Indeed, there is a paucity of research focusing on other aspects of their everyday life situation (Feldman et al., 2013; Zascavage & Keefe, 2007), and of research in which students with disabilities are part of participatory research and viewed as collaborators in designing research. In particular, potential participants who do not use speech for communication, such as students with SSPI, are often excluded from involvement in research (Rabiee et al., 2005) and risk being marginalized and ignored (Upton, 2017; cf. Bagga-Gupta, 2017). Hence, little is known about the experiences of students with SSPI, for example, regarding their literacy development, as few studies have been designed to consider their perspective (Dada et al., 2020; Myers, 2007). 
According to our research review, there is an obvious need for more research in which participants with disabilities, such as students with SSPI, contribute experiences of importance for their life situation, and participatory research seems to be a viable way to obtain their views (Sigstad & Garrels, 2021; Sinclair, 2004). The literacy study was accordingly designed as inclusive research within the participatory research tradition (Nind, 2014).

This article draws, as described previously, on our methodological experiences from the literacy study of SSPI students’ experiences of their literacy development, based on both the pilot and main studies. This development is crucial in their lives, due to their communicative constraints (Smith, 2014). It should be noted that only a few students with SSPI fulfilled the inclusion criteria to be participants, as literacy skills were required (Atterström et al., 2021). More precisely, to participate, the students had to have progressed beyond the beginner’s phase without any arrest in their literacy development. Such an arrest has been demonstrated in psychological research among nine-year-old students (Dahlgren Sandberg, 2006). In the literacy study, we found it important to interview students who had actually demonstrated ongoing literacy development, without any sign of an arrest, about their progress. A research gap was revealed, as we found no such studies in our literature review and little methodological guidance in the method literature, despite the well-known challenges of including students with SSPI in research. Hence, an unorthodox data collection method had to be developed with which to investigate students’ experiences of literacy development. Here, “unorthodox” means an uncommon method that would not have been chosen had the participants not had severe physical impairments. With more traditional inquiry methods, these impairments would either have prevented them from participating or made it difficult for them to express their experiences without time constraints or negative physical strain. This method was also considered unorthodox as we found no references in the method literature to computer-assisted email dialogue with participants who require extensive individual adaptations for their communication, such as students with SSPI.
The explorative approach was chosen as it is considered especially useful when there is little prior scientific knowledge of a group (Stebbins, 2012).

This article addresses an important issue concerning how to conduct research with participants whose communication prerequisites differ from those of most of the student population in schools. As emphasized above, because researchers have difficulties communicating with students with SSPI, few studies have involved this group and little is known about their learning experiences in general, and even less about their literacy learning, especially in the case of a less opaque orthography, such as Swedish (Atterström et al., 2021). Due to the paucity of research, no specific recommendations addressing trustworthiness issues could be found for this kind of study. Taken together, this indicated that a study with SSPI students must be methodologically well planned to be trustworthy.

Here, we have focused on two phases of the research process of the literacy study that were important for trustworthiness. Phase one concerned verification strategies during the time that the study was being planned and conducted. The description of this phase is in agreement with that of Morse et al. (2002), who emphasized that such verification strategies should ensure reliable and valid data, that is, trustworthiness. Morse et al. (2002) criticized qualitative research for not taking all parts of the research process into critical consideration: in qualitative research, they argued, too much attention is paid to “post hoc criteria of ‘trustworthiness’” (p. 18); they described in the abstract how trustworthiness criteria are only used “once a study is completed” (p. 13), but then on the next page inconsistently stated that they are used “at the end of the study” (p. 14).

This description of a subsequent phase addressing trustworthiness, described here as phase two, is somewhat in agreement with Lincoln and Guba (1986). Lincoln and Guba’s suggested criteria for trustworthiness, credibility, transferability, dependability, confirmability, and authenticity have been accepted by many researchers working with qualitative data, including us. For example, we used trustworthiness criteria in the literacy study. Lincoln and Guba (1986) also suggested several techniques that may be used to check whether the credibility, transferability, dependability, and confirmability criteria have been met, and noted that these techniques may be used to increase the probability of meeting the criteria. Only to some extent did Lincoln and Guba (1986) suggest that checking techniques should be applied after something had been done. Morse et al.’s (2002) criticism seems more directed towards how researchers have applied the trustworthiness criteria, as something done late in the research process. The criticism that qualitative research may emphasize trustworthiness late rather than early in the research process has served as a two-phase structuring device for this retrospective study of our own literacy study. Phase one is what happened early on, and phase two, without a definite boundary between them, is what happened late in the study.

The aim of this study is to retrospectively and critically examine the scientific trustworthiness of a methodological research approach based on an email dialogue technique used exploratively in a previous study (the literacy study) of literacy development among students with SSPI grounded in their own experiences, and to contribute methodological experiences gained from that study regarding the relationship between the use of verification strategies and checking techniques. Three research questions have been formulated in pursuit of that aim:

1. What challenges were encountered by the researchers regarding issues related to the design, preparation, and realization of the literacy study in the endeavour to attain scientific trustworthiness, and with what verification strategies were these managed in phase one?
2. In the endeavour to attain scientific trustworthiness, what trustworthiness criteria were used and why, and what checking techniques were applied to confirm or check the chosen trustworthiness criteria in phase two?
3. Based on our experiences, what verification strategies and checking techniques were found to have support, so that they may be recommended to other researchers who want to develop research methodology for students with SSPI or other groups with similar prerequisites?

Phase One

This phase comprises the work on the literacy study that focused on verification strategies to ensure trustworthiness. The phase covers planning, encompassing the pilot study, as well as planning and conducting the main study. First, to guide the reader and provide context, we briefly describe the literacy study’s empirical focus. This is followed by the main methodological challenges encountered regarding the study’s research design, preparation, and realization. Next comes a description of the verification strategies tested and elaborated on during a pilot study to address the identified challenges, after which we describe the verification strategies used during the literacy study. The final part of the description of phase one concerns outcomes related to the use of verification strategies.

The Literacy Study’s Empirical Focus

There is a general lack of research on the experiences of students with SSPI, a group in which few learn to read and write (Sturm et al., 2006). A longitudinal study showed that these students’ literacy development stopped at the age of nine, followed by a decrease in IQ at the age of 12. Other studies have shown that only a small proportion of these students’ classroom time is used for literacy activities (Larsson, 2008; Pufpaff, 2008; Ruppar et al., 2011), which indicates an underestimation of their learning potential. The objective of the literacy study (Atterström et al., 2021) was to explore what five students with SSPI, who had gone beyond the beginner’s phase (i.e., phonetic phase) without any arrest in their literacy development, experienced as significant in their continued reading and writing development.

Methodological Challenges of the Literacy Study

At the start of the literacy study project, our initial understanding was that the endeavour to investigate the experiences of students with SSPI would involve several methodological challenges, many of which would include ethical aspects. To identify and better understand such challenges, and eventually decide on verification strategies, a pilot study was designed. Early in the work on the literacy study, based on our review of previous research and on experiences from the pilot study, several critical methodological challenges were identified:

1. Students with SSPI constitute an extremely small population in Sweden and qualify as a hard-to-reach group, which makes it difficult to recruit them as research participants.
2. The disabilities of these students are severe, requiring extensive individual adaptations for each participant.
3. It is difficult to find and contact students with SSPI due to the population size, and because the whole country must be the catchment area when finding participants.
4. It is difficult to contact these students due to a lack of national registers, as such registration is not allowed in Sweden, mainly due to ethical considerations and the GDPR.
5. Potential participants for the main study could not participate in the pilot study due to the greatly restricted number of students in the population.
6. If students are found and contacted, they may not be motivated to participate in a study due to “consultation fatigue” (Sinclair, 2004), which often means that they are already participants in many health science studies.
7. Dropouts are expected if participation is demanding in terms of the time or the physical or intellectual effort required for this “hard-to-retain” group.
8. Researcher–participant communication issues related to the participants’ SSPI may negatively affect the amount of data collected.
9. Researcher–participant communication risks being affected by secretarial support that may bias the participants’ answers.
10. The lived experience of the interviewer (i.e., the second author), as the mother of an adult daughter with SSPI, could unintentionally influence the research findings, given her preconceptions.

Many of the above challenges refer to broad and complex issues that demand verification strategies based on thorough consideration. Guidance from the research literature, however, was not found, probably because most research seems to be based on norms of participation, according to which participants are assumed not to differ from most people, for example, in terms of motor abilities and communication modes. Additionally, from our previous experiences in the field, we were fully aware that there are substantial individual differences between students who belong to the SSPI group. Hence, we considered it important to carefully design and conduct a pilot study to improve the quality of the main study (Malmqvist et al., 2019), addressing the above-mentioned challenges and threats to trustworthiness with several corresponding verification strategies.

Trying Out Verification Strategies in the Pilot Study

Given the many challenges identified, verification strategies were already tried out in the pilot study to address them. In conducting research with an extremely small hard-to-reach group, and in conducting a pilot study, we faced a complex challenge regarding both recruiting participants and securing their participation in order to answer our research questions. This may be viewed as a key challenge that needs to be addressed with an appropriate verification strategy when all parts of a research process are considered (cf. Morse et al., 2002). Hence, we needed to know how to design the main study to engage students and prevent dropouts. Meanwhile, this had to be done without affecting the group of potential participants who fulfilled the inclusion criteria. Our decision was to turn to students with SSPI who were too old to be participants in our main study. With this strategy, two students with SSPI at the upper secondary school level became participants in a pilot study using an inclusive research design (Nind, 2014). One of the students, Emil, used a joystick, while the other, Hanna, controlled the computer with a head mouse; both had assistance when they participated. The collaboration with them in the pilot study was of great value for the following main study. Having these two students as participants in the pilot study did not jeopardize the objective of finding participants for the main study.

In collaboration with these two students, Hanna and Emil, the pilot study focused on how to address the need for individual adaptations of AAC-based communication in a computer-assisted interview study involving large geographical interviewer–interviewee distances. For example, and based on Emil’s suggestion, a more detailed understanding of his literacy development was documented during one email dialogue, which served as a model in the main study. He also suggested how an interview in a physical meeting could be arranged, if this option were available. Hanna declared that she was content with the developed email dialogue format, and no changes were needed. Eventually, longitudinal researcher–participant computer-assisted dialogues focusing on the students’ experiences of literacy learning were further developed. The two students in the pilot study influenced the formulation of the interview questions (cf. Maguire, 1987), and a semi-structured interview manual with topics that had emerged during the pilot study guided each dialogue (Appendix 1).

The individual adaptations of AAC-based communication in themselves were deemed to enhance the motivation to participate as well as to prevent dropouts from the planned main study. However, the researcher found this strategy to be necessary but not sufficient, especially as dropouts from the main study would jeopardize its results. Hence, additional preventive strategies had to be developed. Based on the work on the pilot study, the participants’ own sense of “ownership” of the study was deemed crucial for the main study, especially as it was found to motivate the two participants in the pilot study. In his first email, Emil stated: “I think it is a very good idea that you want to know more about my reading and writing”. Therefore, a motivating verification strategy was eventually decided on, based on the participants’ suggestions: to initially explore, for each participant in the main study, what aspect of their own literacy learning process they were interested in examining. We also found from the pilot study that extensive consideration of the participants’ individual physical abilities was required, so that their whole life situation would not be negatively affected. In the contacts with the participants, the strategy was that the second author had to be highly responsive to everything communicated, to minimize the participants’ effort, energy, and time in the long-term study. To do this, personalized support had to be provided by the researcher and people around the participants. The two pilot study participants chose to alternate between different ways of communicating, such as writing by themselves, using a joystick for Bliss communication with an aide who translated this into writing, or writing using an on-screen QWERTY display with a head mouse. They explained that this variation gave them the rest they needed to pursue an email dialogue. Consequently, such alternation was suggested to the participants in the main study.
As assistance was sometimes needed, for example, when a student lacked sufficient strength and endurance to continue writing with a head mouse and/or joystick, thorough instructions were given to the interpreter not to intervene in what the participant wanted to express, as it would threaten trustworthiness. Although independent reading of the email dialogues was desirable, reading/secretarial help from a personal aide/assistant or teacher was permitted as long as the instructions were followed.

At the time of the pilot study, the interviewer had been studying in the postgraduate programme for some months and her research interest in literacy development among students with SSPI had become the starting point of the literacy study. Her work on the pilot study gave many opportunities to discuss the risk of unintentional bias with her supervisors, as they were aware that she was the mother of a daughter with SSPI. Hence, her understanding was continuously challenged by her supervisors throughout the literacy study, including the pilot study, and by other researchers and postgraduate students during research seminars, as a precaution against unintended bias. This precaution continued into and through the main study, though it will not be separately discussed in the next section on verification strategies used during the main study.

Verification Strategies Used During the Main Study

As described above, regarding the experiences from the pilot study, a flexible longitudinal inclusive research design was planned. The main study incorporated many verification strategies that were tried to some extent in the pilot study, and these strategies will be more thoroughly described in this section. The objective of these strategies, of course, was to ensure trustworthiness. The data collection in the main study, which in accordance with the pilot study had an inclusive research design, was planned to be conducted over a one-year period, but was completed after 18 months (Atterström et al., 2021). Our experiences from this work and the verification strategies used in it are categorized below into two delimited parts of the research process that we consider of special importance for research involving students with SSPI: verification strategies in recruiting participants and verification strategies in data collection.

Verification Strategies in Recruiting Participants

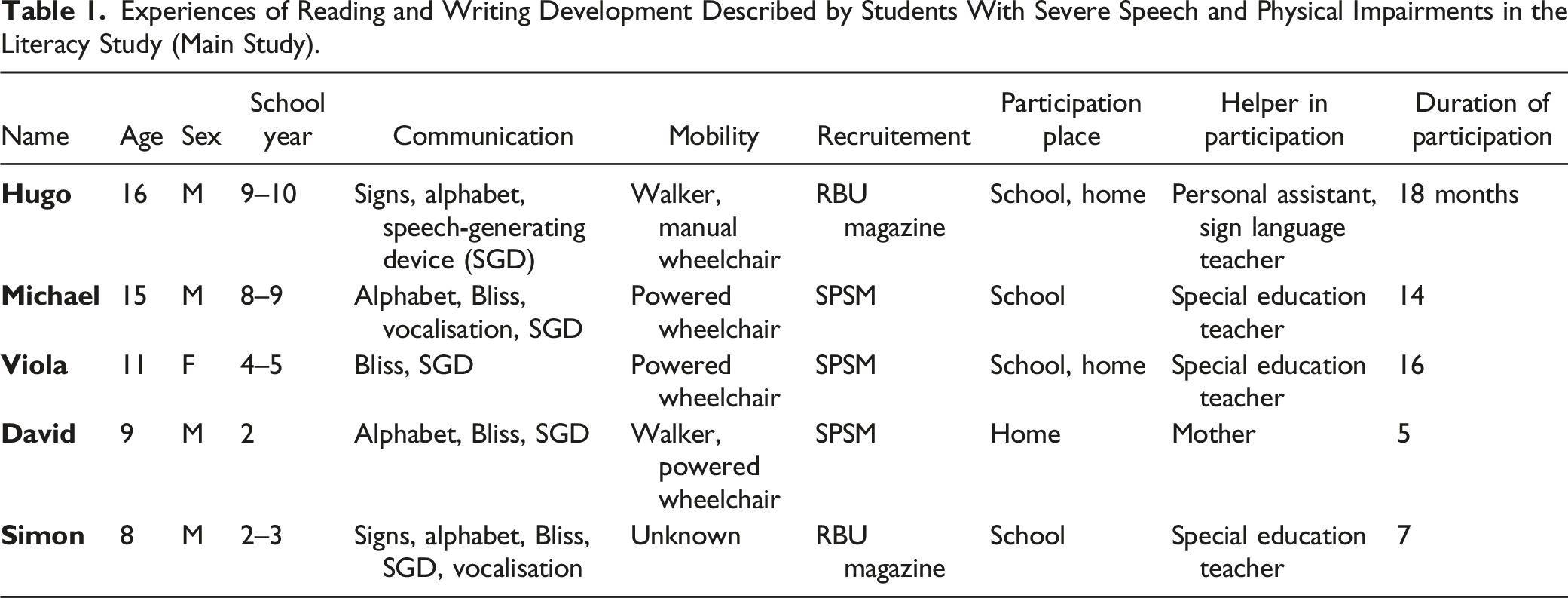

Experiences of Reading and Writing Development Described by Students With Severe Speech and Physical Impairments in the Literacy Study (Main Study).

Verification Strategies in Data Collection

To collect trustworthy data in the main study, several verification strategies were used, most of which were tried out to some extent in the pilot study. In the main study, the students’ whole life situation was considered. To the extent that the students shared information about their everyday life situation, sensitivity to what they shared was crucial when planning and, continuously, when conducting the data collection. For example, data collection was paused during periods when the students had demanding scheduled consultations as part of their habilitation services (Sinclair, 2004). As the data collection relied on email dialogues, chosen out of a desire to communicate directly with the students, one of the most important considerations was to find the right level of readability for each student within the applicable age range. One strategy for this was to continuously appraise how the answers were formulated in relation to the posed questions, while the main strategy was to formulate interview questions in an easy-to-read manner (Lundberg & Reichenberg, 2008). The second author’s position, working part-time as a teacher, gave rich opportunities to test the readability of the interview questions with children and youth (4–15 years of age); this was frequently done during data collection, and was an approach that had been partially tested during the pilot study. In the main study, which included one student only eight years old, the pilot study experiences regarding readability were less relevant. The second author also had a strategy of trying to establish a relationship with each participant via email dialogues that were not based on interview questions, in order to be sensitive to what was expressed in these emails and adapt the style of writing to each student.

Another verification strategy was to encourage the students to answer the interview questions as an integrated part of their education at school. For the two youngest students, it was even suggested that their classes could answer the questions as part of their regular schooling, so that these participants would not be doing something different from their peers. Hence, it was suggested that participation should mainly take place at each student’s school, for four reasons: (1) the interview questions addressed parts of the school curriculum in continued reading and writing, so it was assumed that they could be integrated into the students’ regular schooling; (2) to make participation easier for the student by decreasing the time and energy it required in their overall everyday life situation; (3) to ensure that the participants had personalized support from an aide, resource teacher, or personal assistant, when needed; and (4) to take advantage of digital equipment in schools (cf. Jaeger, 2012), as the participants’ time had to be prioritized for their literacy experiences and not technical issues. To limit technical issues and make it easier to respond to email questions, attachments were not used (cf. Mazzoni et al., 1999). If a student preferred to participate in the study in their home environment and had the digital equipment that was needed, this was accepted.

Another verification strategy was based on the idea of giving the students the right to determine the pace of the data-generating process. This strategy was guided by two motives: (1) to facilitate participation as a motivational measure, and (2) to empower the participants, meaning that they were given control of when, where, and how to respond (Bowker & Tuffin, 2004). From the researcher’s perspective, however, this strategy can be frustrating due to a perceived lack of control over the temporal course of the dialogues (James, 2016). One negative consequence of this flexibility was that the planned timeframe for data collection had to be extended from 12 to 18 months. However, this flexibility was necessary to collect data to answer the research questions and avoid the issue of thin data (Barbour, 2018), which was a persistent challenge, especially given the ever-present possibility of participants leaving the study.

The verification strategy concerning communicating reminders to the students to respond was a delicate matter related to the issue of dropouts. The main strategy in reminding students was to allow 14 days to respond, before again sending the same question or questions, while assuring them that it was understandable if they had been unable to respond. Simultaneously, the second author tried to encourage the students to continue to answer questions without putting pressure on them, as dropouts were expected if the students found participation stressful. If there still was no answer to a certain question, the interviewing process continued with the next in the series of interview questions. Eventually, interview questions that had not been answered were posed again. Almost all students answered when a reminder was sent. An exception was a student who twice ignored the reminders about a certain posed question. When he eventually responded, he declared that he found one of the questions too tough to answer, as it asked him to record his use of the alphabet for one day. He explained that, because of his vast use of the alphabet, he found it too demanding to document his complete use of it. This accounted for the only internal loss of data during the time the participants were involved in the study.

After long holidays, especially the nine-week summer holiday, it was assumed that an additional verification strategy would be required to re-establish the email dialogues. Hence, as a soft restart, an easy-to-complete questionnaire (Mazzoni et al., 1999) was developed that was restricted to multiple-choice questions.

It should be noted that, within the data collection timeframe, the students were reminded on several occasions that they could decide to end their participation at any time.

Verification Strategy-Related Outcomes

This section describes how the verification strategies contributed to the objective of ensuring trustworthiness. The intention is to provide a broad description that allows the reader to gauge the purposefulness of the verification strategies regarding the described challenges in phase one, which are related to the study aim and research questions 1 and 3.

It is crucial in any interview study that there are participants who can provide answers so that the research questions can be answered. Many of the verification strategies used in the literacy study relate to this issue, in terms of finding, recruiting, and retaining the participants throughout the study. Despite the verification strategies developed during the pilot study to find and recruit participants, it was a cumbersome process, taking over 12 months to recruit students for the main study. Finally, seven students aged 8–16 years were identified who both met the study’s inclusion criteria and agreed to participate. As they lived in different parts of the country and none of them lived near the researchers, the use of email dialogues was necessary. Although seven students agreed to participate in the study, two dropped out before data collection began. We were unable to clarify why they changed their minds: staff changes at one student’s school may have contributed to that student’s dropout, and we received no information about the other student. Of the remaining five students, the two youngest participated for only 5 months, that is, roughly half the planned data collection period. The mother of one of them explained that the wording of the email dialogues was not sufficiently adapted; despite our offering further adaptations, that student left the study. The other student’s family was contacted when the email dialogue stopped without any explanation. The father tried to encourage the student to continue, but the student did not respond to the subsequent emails. It is possible that difficulties adapting the interview questions and making them readable contributed to both dropouts. It is also possible that difficulties establishing researcher–interviewee relationships exacerbated the problems encountered in the email dialogues, despite the researchers’ attempts to approach the students in a personal and friendly way.

The dropouts happened despite the use of the several verification strategies described above. One of these was intended to prevent dropouts after a long summer holiday by means of an easy-to-complete reading motivation questionnaire, inspired by Marinak and Gambrell (2010). When the questionnaire was used, only three students remained in the study. The multiple-choice format was a good fit for one of the three, who answered the questions almost immediately and continued to participate in the dialogues in the same way as before the break. The strategy may not have worked as expected for the other two students, whose answers were delayed by more than a month. As they nevertheless did not drop out of the study, it is difficult to judge the possible benefit of the questionnaire. Importantly, they continued to participate for the whole data collection period, and their contribution to the data collection was ultimately substantial, partly because the data collection period was extended from 12 to 18 months.

To sum up, the verification strategies to identify and recruit students seemed to have worked, but they took a very long time and consumed a large share of the project’s resources. Furthermore, dropouts occurred both before and during the data collection, threatening trustworthiness. As dropouts are a serious problem in research, this requires further consideration of the data collection conditions under which the study was conducted and of the adaptations to the students’ individual prerequisites in relation to their life situation. These two issues, that is, the participation conditions and the individual prerequisites, are largely interwoven but are described separately in the following two sections.

Concerning the participation conditions during data collection, the verification strategies used by the researchers focused on what was deemed necessary for participation when email correspondence was required. One strategy developed in the pilot study was to offer various communication modes, such as using an on-screen QWERTY display (with a head mouse, eye tracking, or joystick) or letting an aide write what the student communicated using an AAC system, signs, or eye gazing at an alphabet board. The students in the main study, however, could not be assumed to have functional technical equipment at home that would work adequately for email correspondence. Hence, the students’ schools were encouraged to facilitate participation as part of their regular education, in view of its assumed benefits. Of the five students who participated, only one participated solely in school, whereas the other four preferred a mix of participating at school and at home. Additionally, the suggestion to let classmates answer the interview questions was never realized in any of the students’ classes, so the idea of using a more inclusive approach to data collection was not favoured in the schools. Moreover, the four students who chose to participate in the study partially from home seemed to have high-quality technical communication aids at home. There were also signs or information showing that the use of technical equipment at school did not work well for all students. For example, there were several delays in one student’s participation due to repeated technical issues at school. Problems with the email function, for example, resulting from changes in email settings, led to months of involuntary absence from the study. The school, which was for students with intellectual disabilities, eventually did not allow their students to have email accounts; instead, the student started to use a private email account that worked much better. 
In view of the technical issues he encountered, this student showed considerable perseverance and participated throughout the planned data collection period. According to his emails, he received support from one teacher to solve the technical issues that jeopardized his participation in the study. Overall, verification strategies addressing the participation conditions during data collection were difficult to administer, and often were merely recommendations to the student and the people around the student. Even if a student was motivated to participate in the study, the student depended on others’ assistance and ways of assisting. The assistance was obviously difficult to monitor, and therefore represented a possible threat to trustworthiness.

Regarding verification strategies to provide adaptations based on knowledge of individual prerequisites, taking the students’ life situation as a whole into consideration, these largely concerned accommodating different communication modes, as described above. The researchers’ knowledge of how participation could strongly affect the everyday life situation of students with SSPI was taken seriously. The second author was committed to developing sensitivity to each student’s individual prerequisites and overall life situation based on what was written in the email communication. Throughout the data collection period, there was a balancing act between encouraging continued participation and not stressing the students. This was evident every time a student did not respond within 14 days and a reminder was sent. One example of many illustrating this was when one student took a long time to reply after the reminder. Without our sending another reminder (as a precaution not to stress the student and to prevent dropout), the student responded that she was still having difficulties answering the question, which was complex and required a long time to think through. Our reply encouraged her to continue to participate and we apologized for any stress that the reminder may have caused. Such situations were obviously also stressful for the second author, who was responsible for the email communication, especially as there were few remaining participants. The strategy of acting in a careful way and being sensitive to anything that may have been expressed, or even not expressed, as with the delayed answers, may have motivated some of the students to continue participating for longer. The dropping-out of the two younger students indicates difficulties in taking students’ individual prerequisites into account, while the three older students’ participation throughout the study indicates the opposite.
They continued to answer the research questions and contributed to producing trustworthy results.

Phase 2

In Phase 2, while verification strategies were still being used in the main study, several aspects of checking whether the study met the criteria for trustworthiness in terms of credibility, dependability, confirmability, and transferability emerged to a greater extent. However, as some of these emerged quite early, depending on the issues focused on as threats to trustworthiness, it was not always easy to differentiate between the verification strategies used and the techniques suggested by Lincoln and Guba (1986), as they were mixed to some extent. One example is prolonged engagement as a technique to meet criteria for credibility, which has been described above as a verification strategy.

Apart from prolonged engagement, Lincoln and Guba (1986) suggested persistent observation, triangulation, peer debriefing, negative case analysis, and member checks as techniques to meet the criteria for credibility. As the literacy study was characterized by a longitudinal approach, it seems to fit well with the description of the prolonged engagement technique regarding the time dimension but not regarding intensity. The in-depth monitoring of anything that may have served as distortion was continuous. Once again, it was difficult to differentiate the verification strategy used from the prolonged engagement technique proposed by Lincoln and Guba (1986), as they seemed to overlap when it came to identifying and assessing distortion. One possible distortion that needed monitoring was the issue of third-party presence during the interviews (i.e., secretarial support as assistance), which may have had an unintended influence on the students’ answers. A verification strategy was decided on and used, as described above, including strict guidelines to the secretary, whose presence could have threatened credibility. We found no indications of anyone violating the guidelines, but we had no control over how the participants and secretaries acted when the questions were answered. This was a threat to trustworthiness.

Two of the other four suggested techniques for checking credibility were used to some extent through the use of verification strategies, although there may have been differences from what Lincoln and Guba (1986) described. For example, it eventually became an aim of the email dialogues to get participants’ perspectives as well as their feedback on whether the interviewer had understood their answers, although this verification strategy is not entirely the same as the member check technique described by Lincoln and Guba (1986). When it comes to peer debriefing, again, Lincoln and Guba’s (1986) description displays similarities to our work, which may be described as using a verification strategy, as there have been ongoing discussions of the quality of the answers, method, and results at research seminars with other researchers. The aim has always been to take peer debriefing seriously, although we cannot guarantee that all aspects of the longitudinal research approach have been scrutinized by a “disinterested professional peer” (Lincoln & Guba, 1986, p. 77).

Lincoln and Guba (1986) described dependability together with confirmability, noting that the criteria depend on an external auditing process, which may result in a confirmability judgement. This has been possible to some extent, as regular doctoral programme seminars were organized by this article’s first author with a group including four doctoral students (including the second author), who followed one another’s work over time, as well as other researchers, who participated on some occasions as readers. The focus of some of the seminars was the research process, but without making a formalized dependability judgement. In other seminars, the focus was on results based on the collected data and subsequent data analysis. This is not entirely the same as using external examiners to establish whether or not the study results have been proved in a confirmability judgement. The literacy study, however, was scrutinized as part of the peer-review process (Atterström et al., 2021), which may be regarded as similar in some respects to the confirmability judgement process (Stahl & King, 2020). Another ambition in the project has been to apply an audit perspective, in which transparency in all parts of the research is important (Bryman, 2012); however, this ambition has not conformed to the advanced description offered by Lincoln and Guba (1986). The more formalized auditing within the postgraduate education of the second author, via compulsory seminars in which the literacy study was scrutinized by researchers, is partly in agreement with Lincoln and Guba’s (1986) description of the external audit as resulting in dependability and confirmability judgements.

Regarding transferability, Lincoln and Guba (1986) stated that thick descriptions of qualitative data are required so they can be applied in other contexts. They also pointed out, however, that it is unclear exactly what “thick” means, a matter that only recently seems to have been clarified more thoroughly (Younas et al., 2023). Irrespective of how “thick description” is defined, given the methodological approach applied, in which data collection was based on computer-assisted email dialogues only and the researcher never visited the students, satisfying the criteria of thick descriptions of the students’ contexts was difficult despite the use of verification strategies. As described before, it was a constant challenge to avoid the issue of thin data (Barbour, 2018), which also applies to the issues of avoiding thin descriptions and of retaining the students in the literacy study. Given the longitudinal approach, the intention to provide the students with the time needed to give elaborated and reflective answers, and the aim of establishing a well-functioning researcher–student relationship as the most important verification strategy, thick data and descriptions were provided to some extent. However, two young students nevertheless dropped out of the study, and their answers to the questions were shorter than those of the three older students. Again, it was not easy to differentiate between the verification strategies used and the techniques proposed by Lincoln and Guba (1986).

Discussion of Trustworthiness Emphasizing the Literacy Study

This discussion will be based on the three research questions posed to address the aim of retrospectively and critically examining the trustworthiness of the literacy study. Inherent in the first two research questions is the two-phase structure of the research process: the use of verification strategies was emphasized in the first phase, whereas techniques checking that the trustworthiness criteria have been met were emphasized in the second. Regarding the third research question, with its focus on what we have experienced and recommended to other researchers, a more general discussion follows.

The first research question concerns the challenges we identified regarding the design, preparation, and realization of the literacy study to ensure scientific trustworthiness, as well as the verification strategies used in phase one. It should be noted that Morse et al. (2002) did not specify the strategies, instead emphasizing them broadly as ensuring trustworthiness in areas such as sampling and data collection. It should also be noted that we have concentrated on our use of the verification strategies in these two areas, as we found the challenges here to be closely related to the hard-to-reach group of participants in the literacy study.

The verification strategies used to engage enough participants proved to be very difficult to apply, and it was also difficult to counteract dropouts. Nevertheless, the three participants who continued to participate throughout the data collection period, including its half-year extension, contributed important experiences that gave us insights into how they could have continued their literacy development beyond the usual arrest at nine years of age. Our experience was that each verification strategy was intended to counteract a certain challenge. We also found that the verification strategies that advanced the study’s work and trustworthiness depended on broad research aspects such as researcher flexibility, researcher–participant collaboration, and responsiveness. These aspects represent the very opposite of the idea of research based on principles of standardization and distance from the research object.

Regarding an explicit and summative answer to the first research question, in the literacy study with its challenges of identifying students, contacting them, obtaining their agreement to participate, motivating them to continue participating, and providing data collection conditions that suited their individual prerequisites to get trustworthy data, a large number of verification strategies were used based on our pilot study and continuously developed in collaboration with the SSPI students. The extent to which the verification strategies worked was closely related to whether a trusting researcher–student relationship had been established. It is possible, or even plausible, that the two youngest students’ dropouts happened because the researcher–student relationship did not develop as intended. It was also found that trustworthiness in the literacy study was closely related to how personal assistance in the data collection context and technical solutions worked in relation to students’ individual prerequisites. The issue of assistance posed the greatest threat to trustworthiness, in our experience, as there was no way for the researcher to determine whether or not the strict guidelines for assistance had been followed.

Notably, the verification strategies were developed in response to the challenges encountered and were specifically designed for the literacy study. They were specific in that they addressed the identified characteristics of the SSPI students, who were regarded as a hard-to-reach group with specific demands regarding communication and regarding motivation to participate and to continue participating. They may also be characterized as a hard-to-retain group.

Concerning the second research question, whose purpose was to ensure trustworthiness using checking techniques in phase two, the techniques recommended by Lincoln and Guba (1986) were all used to some extent. The boundary between a checking technique and a verification strategy, however, was not always clear, as shown by the example of prolonged engagement in the previous section, and there was also no clear boundary between phases one and two, as they seemed to be intertwined when it came to certain checking techniques and verification strategies. The main characteristic of the checking techniques in the literacy study was that they were more oriented towards a broad and general level of trustworthiness, using some kinds of checking points, and were not specifically designed in relation to the characteristics of the participating group or the associated challenges. These points, often within the university tradition of research seminars, among other things, served as a trustworthiness check to determine whether the iterative research process based on verification strategies had worked as intended. Moreover, some of the checking techniques seem to be “institutional” in character, and many such techniques are part of structures established within universities in the effort to foster trustworthy research.

Our answer to the second question is that the four trustworthiness criteria – that is, credibility, dependability, confirmability, and transferability – proposed by Lincoln and Guba (1986) were applied, and several techniques were used with the objective of ensuring trustworthiness in the literacy study. When working on this article and retrospectively returning to our findings and documentation, we sometimes found it difficult to differentiate between the verification strategies used and the techniques recommended by Lincoln and Guba (1986). While the verification strategies more specifically addressed challenges related to conducting a study with the SSPI group, some of the techniques were more general in character.

The third research question concerns our experiences and what we can recommend to other researchers who want to conduct research with students who have SSPI, or other groups with similar prerequisites, when they are addressing trustworthiness issues. Our answer to this, and our recommendation, is that researchers who want to have SSPI students as participants in computer-assisted email dialogue need a thorough awareness of the challenges involved in conducting a study with this group. Several challenges are encountered in identifying them, engaging them, and retaining them in a study while also focusing on trustworthiness. Verification strategies need to be designed to address each challenge based on thorough knowledge of each student’s individual prerequisites and of the conditions necessary where and when data collection takes place, as extensive individual adaptations are required. To enable this, building trust and developing a researcher–participant relationship are the most essential aspects (cf. Dahlin, 2021), according to our experiences from the literacy study.

It is of utmost importance that students with SSPI participate in research, and not only in health-related studies. It is a group whose members are largely marginalized in the school context, for instance, in test situations such as PISA and other international standardized assessments of educational attainment, from which they are systematically excluded (Schuelka, 2013). They should be given fair chances not only to participate but also to show their knowledge in tests that are accommodating as well as to express themselves in accommodating evaluations (Malmqvist, 2001) and research. Because what is unknown remains unaddressed, necessary decisions will not be made regarding measures and resources for these students, who will continue to be marginalized in a vicious circle. In this respect, we hope that our experiences from the literacy study will support other researchers in conducting trustworthy studies with SSPI students and other marginalized groups.

Finally, our research process has been truly iterative in character, incorporating several verification strategies and checking techniques that have proved useful. Using the ideas presented by Lincoln and Guba (1986) and Morse et al. (2002) proved relevant to capturing the whole extent of the trustworthiness concept, as their methodological contributions cover the entire research process. Our main advice to other researchers conducting studies similar to our literacy study is to thoroughly consider trustworthiness throughout the research process, from planning to publishing. There simply cannot be either/or answers when it comes to verification strategies and what we rather simplistically have called “checking techniques”. Hopefully, Morse et al. (2002, p. 13) were wrong in their critique when they stated: “The emphasis on strategies that are implemented during the research process has been replaced by strategies for evaluating trustworthiness and utility that are implemented once a study is completed.”

We lack sufficient support from the literature to confirm that this shift has indeed taken place, or to say whether the negative consequences described by Morse et al. (2002) have taken place. Perhaps there has been a shift in the publishing of qualitative studies that has obscured the use of verification strategies? If so, there is a need to evaluate the publication format, especially the journal article format, among some publishers (Thomas, 2017), and to require article supplements that provide readers with important methodological information about the published study. This could lead to methodological development, which would be urgent for students with SSPI, who deserve more visibility in research, rather than their current marginalization.

Conclusions

According to our experiences in the literacy study, we put a lot more emphasis on verification strategies to address the challenges faced than on techniques to meet the trustworthiness criteria. Apart from the methodological contribution regarding how to work towards trustworthiness in a study with a marginalized hard-to-reach and hard-to-retain group in pedagogical research, our retrospective study with a focus on verification strategies and checking techniques and their roles in the literacy study will hopefully contribute to ongoing methodological discussion of trustworthiness in qualitative research. The question raised by Lincoln and Guba (1986) in their chapter “But is it rigorous?” has inspired us both to take part in this discussion and in our endeavour to attain scientific trustworthiness in the literacy study. If we have failed, our research is probably “worthless, becomes fiction, and loses its utility” (Morse et al., 2002, p. 14). It will not then help the hard-to-reach and hard-to-retain group of students with SSPI that we studied, or others with similar prerequisites and conditions, as we may not have gained knowledge that is useful for understanding their literacy development. On the other hand, if we have succeeded, our conclusion is that we may have contributed important knowledge based on these students’ own experiences of their literacy development by providing thorough descriptions of how the literacy study was conducted throughout the research process, and hopefully also to methodological development concerning trustworthiness. We hope that our studies, that is, the literacy study together with the present methodological study, will inspire other researchers to conduct research that includes population groups that risk being marginalized in research.

Supplemental Material

Supplemental Material for But was it Trustworthy? Methodological Experiences From a Study of a Hard-to-Reach Group of Students in Need of a Flexible Research Approach by Johan Malmqvist, Andrea Atterström, and Ann-Katrin Swärd in International Journal of Qualitative Methods

Acknowledgments

We want to express our sincerest thanks to all seven participants (including two pilot study participants), their families, aides, and teachers.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was financially supported by the Josef and Linnéa foundation, and the Jerring foundation.
