Abstract

Participant recruitment through social media platforms has been suggested as an effective method for sampling from specific populations; however, recent online recruitment attempts have been met with varying levels of success. In the current study, we targeted a specific social media population: those who advocate for the Black Lives Matter movement, delineating between those with high and with low follower counts on Twitter. We compared the outcomes of our recruitment methods, which include Facebook ads; unpaid, personalized tweets; emails to groups involved in community advocacy; and offline methods. Included in our analysis is the amount of effort involved in each recruitment method, as well as advertising costs. Based on our comparison, Facebook advertising was the most effective form of social media recruitment for our study. In contrast, unpaid, personalized tweets were time consuming and ineffective.

Optimizing Recruitment for Qualitative Research

Participant recruitment for in-person interviews through social media platforms, emails, and offline methods has had varying levels of success (Antoun et al., 2016; Ash et al., 2021; Gu et al., 2016; Nolte et al., 2015; Ramo & Prochaska, 2012). The most prevalent method appears to be Facebook, which is also well documented within the medical research community as an effective recruitment method for sampling participants (Ash et al., 2021; Kapp et al., 2013; Pedersen & Kurz, 2016; Ramo & Prochaska, 2012; Smith et al., 2021; van Gelder et al., 2019). However, more research is needed to understand the effectiveness of Facebook outside of health studies.

Moreover, other forms of digital recruitment should be explored for comparison, particularly because data scientists now rely on social media algorithms for psychometrics (Stark, 2018). Twitter, for example, seems like a natural fit for recruiting activists. Users on that platform tweeted #BlackLivesMatter over 3.7 million times per day between May 26 and June 7, 2018, up from 17,002 times per day a month earlier (Anderson, Barthel, et al., 2018; Anderson, Toor, et al., 2018). Several studies have explored recruitment via Twitter, but results suggest the method is relatively ineffective (Gu et al., 2016; Williamson et al., 2018; Yuan et al., 2014). Other researchers have compared convenience sampling by email to Google Advertising (Morgan et al., 2013), discussion boards (Koo & Skinner, 2005), mass media (Nguyen et al., 2012), and in-class announcements and postings (Norton et al., 2009). No studies outside of medical research (Smith et al., 2021) could be found to compare the effectiveness of social media platforms to offline methods.

The purpose of this study is to offer qualitative researchers additional data for decision making when choosing recruitment methods. This study is particularly helpful because it compares methods outside of a health communication context, explores methods beyond social media, and accounts for our estimation of costs, including advertising and the investment of time. Methods were compared for recruiting supporters of the Black Lives Matter movement (both students and non-students) for in-person, one-hour interviews on a university campus. Specifically, we compared the outcomes of Facebook Advertising, Twitter, emails to groups involved in community advocacy, email to university members, and offline methods for a qualitative study. We recruited participants for interviews that would probe for meaning-making and social media use during the Keith Lamont Scott shooting and the ensuing protests. Due to the phenomena we were studying, it was important to document the follower counts and posting counts on social media, which we encoded in the deidentified participant IDs.

Literature Review

Social media platforms are valuable tools for boosting participant recruitment numbers; however, some social media platforms may perform more efficiently than others, and research also exists about the offline methods of recruitment we examined. Here, we examine the state of the literature regarding successes and failures of social media platforms (such as Facebook and Twitter), email, and traditional offline recruitment methods, such as paper flyers.

Social Media Recruitment

In recent years, participant recruitment through online social platforms has become an increasingly viable option for qualitative researchers (Antoun et al., 2016; Ash et al., 2021; Gu et al., 2016; King et al., 2014). Because sampling in most qualitative studies is purposive, researchers gravitate toward tools like social media platforms to identify a population surrounding a specific phenomenon easily (Hamilton & Bowers, 2006). Social media platforms have different audience profiles with regard to the people who predominantly use them, as demonstrated through a Pew Center survey of 1502 U.S. adults (conducted via cell phones and landline phones). The survey was weighted to account for representativeness of the U.S. population based on gender, race, ethnicity, education, and other categories (Auxier & Anderson, 2021). According to this survey, more women than men use Facebook regularly, whereas slightly more men than women use Twitter. Facebook is used far more frequently than Twitter among all types of users. Specifically, most Facebook users report that they visit the site daily, whereas less than half of Twitter users expressed that they visit Twitter daily. Facebook also draws people with more life experience than Twitter does. In fact, half of people aged 65 or more use Facebook, in contrast to Twitter, which skews younger. A higher proportion of Black people than other races use both Facebook and Twitter. Hispanics are much more represented on Facebook, as opposed to Twitter. Audiences can be targeted through each social media platform based on social media ads, which allow researchers to provide micro socio-demographic criteria to drill down to a precise target population of interest (King et al., 2014).

Because of this ability, social media allows access to diverse populations, including those of low prevalence that are generally invisible (King et al., 2014). It is important to note that although Internet or cell phone service is common, recruitment by social media may overlook participants who do not have access (Leonard et al., 2014). Accordingly, the exclusion of particular socio-economic or demographic groups may cause their experiences, opinions, and emotional responses to be omitted from the study (Neves & Mead, 2021). It may be that populations without regular access to social media or access that is common to the community place a higher value on other forms of social communication, such as in-person community gatherings.

In addition, although the benefits of recruiting through social media are clear, it is important to consider that each social media platform has distinct traits (Jaidka et al., 2019; Kircaburun et al., 2020; Oz et al., 2017; Park et al., 2009; Raacke & Bonds-Raacke, 2008). For example, whereas Facebook is predominantly used for family and friends, Twitter is a microblogging site focused on informative updates that reaches various audiences, including those known and unknown to the individual. Each has its own set of benefits and disadvantages. This study compares a social networking platform (Facebook) with a microblogging platform (Twitter). When compared with the more traditional methods our researchers employed, the results of using these platforms provide additional context for choosing the most effective online recruitment methods.

Facebook Recruitment

Facebook has been the most prevalent social media platform for recruiting participants in recent years (Kapp et al., 2013; King et al., 2014; Pedersen & Kurz, 2016; Ramo & Prochaska, 2012; Thomson & Ito, 2014). In addition to the conventional benefits of online advertising described earlier, users can interact with Facebook ads as if they were normal Facebook posts (i.e., users can like, comment on, or share the post; Pedersen & Kurz, 2016). This functionality has the added benefit of automated virality, as compared with Google AdWords, email, and offline methods. In Facebook’s default setting, the friends of a targeted population member are notified when the targeted member interacts with an advertisement. Friends are given the opportunity to interact when the notification appears on their timelines.

This process can be thought of as the digital version of snowball sampling, which means that study participants recruit additional participants with a similar profile (Pedersen & Kurz, 2016). In addition, Facebook is an advantageous medium because researchers can identify other potential participants through public Facebook groups and fan pages.

In the field of health research, Facebook has served as a valuable tool in participant recruitment efforts. A study by Ramo and Prochaska (2012) examined Facebook as a mechanism to survey young adults about substance use. The researchers found that Facebook provided a cost-effective option to survey their target population of young adult smokers. At an average of $4.28 per completed survey over the course of 20 advertisements, Facebook allowed the researchers to gain over 14,000 ad clicks with minimal staff time devoted to designing and monitoring the campaign (Ramo & Prochaska, 2012). The results of Ramo and Prochaska’s study have limited application to the present study, however, because recruitment for survey completion differs from recruitment for in-person qualitative research. Similarly, in a study by van Gelder et al. (2019), Facebook was an effective secondary recruitment method for targeting pregnant women for a study that required online participation.

The authors of both studies, however, expressed concerns about internal validity, pointing to the fact that the representativeness of the sample could not be fully determined. In other words, people recruited via Facebook might fundamentally differ from those who are not using Facebook. Although Facebook reports the total number of accounts that could be targeted by a set of demographic characteristics, there is not a feature to compare the Facebook population with the non-Facebook population (Ramo & Prochaska, 2012).

Moreover, there are additional drawbacks to recruiting participants through Facebook. Studies have also suggested that the results of recruitment attempts may depend on study-specific factors, such as the wording of the advertisements and eligibility criteria. The characteristics of the target population (e.g., age, race, global vs. regional), the funding allotted to the advertisement campaign, and the incentives offered to participants also could influence recruitment success via Facebook (Pedersen & Kurz, 2016). In other words, the lack of success on Facebook might be more a function of the ad quality or similar factors related to effective Facebook use rather than the use of the channel itself. For example, extant research has demonstrated that users prefer social media content that includes visuals (e.g., Brubaker & Wilson, 2018; Galloway, 2017; Houts et al., 2006; Janoske, 2018; Liu et al., 2017). Another factor to consider is that researchers must take care to create an ad that will motivate clicks without biasing the sample.

Perhaps due to the wide variety of factors, recruitment results through Facebook have been inconsistent. For example, Pedersen and Kurz (2016) created a $300 advertising campaign via Facebook in hopes of recruiting women aged 35–49 but reported no success. Another study that targeted a different demographic, boys with Klinefelter syndrome, reported that traffic to the project Web site increased substantially following the Facebook advertisement (Pedersen & Kurz, 2016). Additional research is needed to assess the potential of Facebook as a qualitative recruitment tool, particularly for in-person interviews.

Twitter Recruitment

Twitter is another avenue of online recruitment that has been explored. Some researchers have described Twitter alongside Facebook groups as “essential in establishing and maintaining a rapport with the study population and community” (Yuan et al., 2014, p. 6). Nevertheless, other researchers reported that they struggled to recruit participants via the platform. A 2018 medical study (Williamson et al., 2018), for example, found Twitter to be an insufficient recruitment tool. The authors subsequently urged caution and reminded others about the importance of using key communication messages, as their tweets to pregnant women about telemedicine omitted the incentive. In that study, despite 55,700 Twitter impressions on the recruitment post, only seven respondents finished their first questionnaire and zero completed the second. Another study, targeting rural youth about online health, compared Facebook ads, Twitter, and postcards marked with QR codes (Gu et al., 2016). Those researchers found Twitter had the lowest response percentage and Facebook had the lowest cost per participant. Twitter could still be effective for recruitment, given that researchers have not reported the effectiveness of paid Twitter ads, which our study also did not test.

Researchers are exploring how to improve recruitment via Twitter. In one study (Yuan et al., 2014), researchers liked and followed content relevant to their participants and sent them personalized messages. They concluded that mass-produced messages were not as effective in recruitment as personalized messages sent directly to a community leader within the target population. Another advantage was that the leader could then share the post (Yuan et al., 2014), once again creating a snowball sampling effect. The researchers cited the phenomenon of reciprocity, an economic term used to describe the correlation between one’s likelihood of compliance and the familiarity of the one making the request. This insight suggests it may be more effective to approach leaders in the target population rather than to recruit en masse.

Email Recruitment

Participant recruitment via email has met with varying levels of success. One study found that email lists created specifically for the targeted population were more effective than sending emails via Yahoo! or Google groups (Morgan et al., 2013). The email list in this study was from an organization’s list of depressed patients. Another study found that participants recruited via email provided noticeably different results than participants who were recruited in person, suggesting that the recruitment method may affect results (Norton et al., 2009). Another study, by Koo and Skinner (2005), found that only 0.24% of recruitment emails resulted in participants who completed the questionnaire and were qualified to contribute. The researchers suggested that participants may have been challenged to differentiate trustworthy and legitimate messages (e.g., recruitment for the study) from spam and misinformation in emails. This would have hindered recruitment.

Offline Recruitment

While online recruitment efforts have been growing steadily, researchers continue to use and to assess the viability of traditional offline recruitment methods, which include distributing flyers, presenting public announcements in classrooms, and producing newspaper ads and newsletters (Nguyen et al., 2012; Nolte et al., 2015; Norton et al., 2009). Paper flyers have been used widely for over 30 years and are one of the oldest recruitment methods (Nolte et al., 2015, p. 533). Yet, Nolte et al. (2015) noted that appropriate placement of these flyers is necessary to maximize recruitment. They placed flyers in a high-traffic hospital environment and experienced significantly lower recruitment of young adults compared to other methods, noting that younger people are less likely to visit the hospital system. Although their flyers likely had the most visibility, as an estimated 800,000 people visited the hospital system while the flyers were posted, only 12% of their total recruitment came from this method. In addition to flyers, the researchers used email, Facebook, and an institutional Web site. They reported that the latter method was most successful at achieving a representative population. Facebook was helpful for recruiting young adults.

Recruitment through the college classroom has also been a prevalent method of gathering participants (Norton et al., 2009; Taylor et al., 2011). In these cases, students are typically invited to participate in the study in exchange for course credit and are notified of the study through in-class announcements (Norton et al., 2009; Taylor et al., 2011). Although this method of convenience sampling can be effective, it only produces populations of college students. Some researchers believe that findings based on the responses of college students are unlikely to be representative of non-college populations (Norton et al., 2009).

Finally, newspaper ads and newsletters have also been used as offline recruitment methods. The authors of one study found that local newspaper ads and school newsletters were more effective in their recruitment than city-wide newspaper ads (Nguyen et al., 2012). The same study found that local newspaper ads and newsletters were the most effective form of recruitment for an obese adolescent population. Conversely, in a study that targeted people who consider themselves smokers, two newspaper ads yielded only seven participants (Gordon et al., 2007).

Research Questions

Given the mixed results of social media platforms when recruiting for health studies, we believe that the effectiveness of various online and offline recruiting methods should also be considered within the social sciences, especially for studies involving in-person research. Accordingly, we asked questions about how recruitment efforts fared in a study about activism on social media:

RQ 1: Which recruitment method yielded the most respondents for an in-person study about activism?

We investigated the first research question by examining the following recruitment methods: Twitter messages, an internal university Listserv, email to community organizations, LinkedIn posts, and Facebook advertising.

RQ 2: Which recruitment method was the most cost effective for an in-person study about activism?

For this second research question, we considered the recruitment success of each method in relation to the effort and monetary cost. As with the initial research question, we include several measures of success, ranging from the number of people who attempted to qualify for the study to the number of qualified participants.
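One way to make the comparison in RQ 2 concrete is to express each method's advertising and labor costs as a single cost per qualified participant. The sketch below is illustrative only: the `RecruitmentMethod` fields, the hourly rate used to price staff time, and the `cost_per_qualified` helper are assumptions introduced here, not the calculation or the figures used in this study.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RecruitmentMethod:
    """Hypothetical summary of one recruitment method's inputs and yield."""
    name: str
    ad_spend: float      # advertising cost in dollars (0 for unpaid methods)
    staff_hours: float   # researcher time spent on the method
    qualified: int       # number of qualified participants recruited


def cost_per_qualified(method: RecruitmentMethod, hourly_rate: float) -> Optional[float]:
    """Return total cost (ads plus priced labor) per qualified participant.

    Returns None when the method produced no qualified participants,
    because a per-participant cost is undefined in that case.
    """
    if method.qualified == 0:
        return None
    total_cost = method.ad_spend + method.staff_hours * hourly_rate
    return total_cost / method.qualified
```

Pricing staff time with an assumed hourly rate lets unpaid but labor-intensive methods be compared with paid advertising on the same scale; a method with no qualified participants is reported as undefined rather than as zero cost.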

Methods

Recruitment for the qualitative stage of our study occurred during a 5-month window, between April 2019 and August 2019. Based on that experience, we address contextual factors that should be considered when judging the relevance of our study to future recruitment endeavors. We also share details about our recruitment efforts via social media, emails, and offline methods.

Several contextual factors in our study affect the extent to which the recruitment results relate to future research: the topic of the study and its significance based on geographic relevance, the eligibility criteria, and the incentives for community members and students relative to the effort required to participate. These factors are explained in greater depth below.

Study Topic and Geographic Relevance

We studied the Keith Lamont Scott shooting, which occurred in the highly volatile context of the Black Lives Matter movement. To ensure the emotional relevance of the shooting was relatively consistent, we limited recruitment to people living within 1 hour of the city where Scott was killed. This decision was based on the principle of psychological distance, a concept from construal-level theory that is important to consider when interpreting our recruitment.

Psychological distance refers to participants’ perceptions of (1) where an event happened, (2) when it happened, and (3) how relevant it is to themselves (Trope & Liberman, 2010). Regarding the first element of psychological distance, geographic proximity to the event deepens potential participants’ processing of it, possibly heightening interest in the study or making it too sensitive a topic. The second element of psychological distance could have been a cooling factor in the sense that 3 years had passed since the shooting. Finally, the third element, the extent to which participants identified with the Black Lives Matter movement, potentially influenced their level of interest.

Eligibility Criteria

Accordingly, we established qualifications for participants. First, they had to be at least 20 years old by September 1, 2018, so that they would be reporting only on activities that occurred during adulthood at the time of the September 2016 shooting. Second, participants had to have resided in or within 1 hour of Charlotte, NC, during the time of the Charlotte protests following the Keith Lamont Scott shooting. Finally, participants had to have used any form of social media during the time of the protests.

Thus, our participants had an appropriate level of psychological distance for a study of local adults who used social media in the context of local activism. Due to the unique nature of our study criteria, we also report the number of participants who attempted to qualify for our study because our recruitment materials did not specify eligibility criteria, except in the announcement on our Web site.
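The three eligibility criteria can be read as a simple conjunction of checks on a screening response. The following is a minimal sketch under stated assumptions: the `ScreeningResponse` record and its field names are hypothetical, and it assumes the screening survey captured a birth date, which the text does not specify.

```python
from dataclasses import dataclass
from datetime import date

# Cutoff and minimum age taken from the eligibility criteria described above.
AGE_CUTOFF_DATE = date(2018, 9, 1)
MINIMUM_AGE = 20


@dataclass
class ScreeningResponse:
    """Hypothetical record of one screening-survey response."""
    birth_date: date
    lived_within_one_hour_of_charlotte: bool  # during the 2016 protests
    used_social_media_during_protests: bool


def age_on(birth_date: date, on_date: date) -> int:
    """Return age in whole years on the given date."""
    had_birthday = (on_date.month, on_date.day) >= (birth_date.month, birth_date.day)
    return on_date.year - birth_date.year - (0 if had_birthday else 1)


def is_eligible(response: ScreeningResponse) -> bool:
    """Apply all three criteria; every one must hold."""
    return (
        age_on(response.birth_date, AGE_CUTOFF_DATE) >= MINIMUM_AGE
        and response.lived_within_one_hour_of_charlotte
        and response.used_social_media_during_protests
    )
```

A respondent who turned 20 on the cutoff date qualifies on age, whereas one born a day later does not.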

Participant Incentives and Effort Required

Our grant funding enabled us to offer incentives to our students and to our non-students (referred to as “community members”) for 1 hour of participation. We offered students $10 for an in-person interview on campus. Given the additional burdens of travel and parking, as well as the higher probability of childcare needs, we offered community members $40 for an in-person interview on campus.

Recruitment Tools

In this section, we share details about our efforts to recruit participants via our study’s Web site; unpaid, personalized tweets; Facebook Advertising; personal social media connections (such as LinkedIn); a campus email listserv; flyers; and the email addresses, Web site contact forms, and phone numbers of organizations that had self-identified as being supportive of the Black Lives Matter movement. All communication was written in English.

Web site

Recruitment efforts began with the creation of a project Web site. The landing page offered not only a welcoming introduction and description of the project, but also a clear association with a reputable university. It provided a narrative about how to participate, including eligibility descriptions, and the link to a screening survey. The site also offered a direct email link to the project’s lead researchers, and it described the multiple study stages with corresponding internal review board protocols.

Rounding out the efforts at establishing legitimacy and a degree of comfort for participants, the team’s photos and biographical material were provided. The team featured on the Web site included nine women and five men representing the following racial/ethnic backgrounds: seven Caucasians, three Asians, two African-Americans, one Caucasian-Native American, and one Caucasian-Hispanic. While the Web site itself was only an ancillary recruitment tool, it served the important purpose of establishing a secure and inviting project portal in a process that could otherwise seem sterile and anonymous.

Unpaid, Personalized Tweets

The study was designed to analyze the behavior on the social media platform Twitter during September 2016, the month of the Keith Lamont Scott shooting (Larimer, 2016). Our quantitative team members collected 1.36 million tweets posted from September 20, 2016, through September 26, 2016, using Twitter’s proprietary firehose, the Gnip Historical PowerTrack. Our screening data required that the participant had used at least one of eight related hashtags: #KeithLamontScott, #KeithScott, #CharlotteProtest, #KeithLamontScott, #PrayersforCharlotte, #JustinCarr, #CharlotteUprising, and #CharlotteRiots. We determined these hashtags by examining the trending Charlotte protest hashtags and by looking for other hashtags in those tweets and related tweets.
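The hashtag requirement amounts to checking whether a tweet contains at least one hashtag from the screening list. The sketch below is a hypothetical illustration rather than the team's actual pipeline: the `matching_hashtags` helper, the case-insensitive comparison, and the regular expression are assumptions, and the set holds each listed hashtag once.

```python
import re

# Hashtags from the screening list above, lowercased for comparison.
SCREENING_HASHTAGS = {
    "#keithlamontscott",
    "#keithscott",
    "#charlotteprotest",
    "#prayersforcharlotte",
    "#justincarr",
    "#charlotteuprising",
    "#charlotteriots",
}

# Assumption: a hashtag is "#" followed by letters, digits, or underscores.
HASHTAG_PATTERN = re.compile(r"#\w+")


def matching_hashtags(tweet_text: str) -> set:
    """Return the screening hashtags found in a tweet, ignoring letter case."""
    found = {tag.lower() for tag in HASHTAG_PATTERN.findall(tweet_text)}
    return found & SCREENING_HASHTAGS
```

A tweet satisfies the requirement when the returned set is non-empty.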

Additionally, the qualitative researchers collected user handles of Charlotte area residents who were highly embedded, meaning they had a higher than average follower count within our screening survey, and who had tweeted about the Keith Lamont Scott shooting frequently (at least four times). The screening survey was also used to understand if users were highly embedded with a moderate to low number of posts (at least three) or if users had low embeddedness and a moderate to low number of protest tweets.

After establishing a Twitter handle for the team to coordinate with the Web site email address, we sent individual messages to approximately 500 Twitter users (using the tagging @ function) over a 5-week period based on their influencer traits. Two general messages, addressed to the public, were labeled A and B, and seven slightly different recruitment messages (sent in two waves) were directed at people whose handles had been collected by the qualitative researchers. Team members also posted general messages from their own personal Twitter accounts to encourage virality.

Facebook Advertising

As the recruitment period drew to a close, our team turned to Facebook advertising to recruit the final interview participants for the targeted demographic (within an hour of Charlotte, North Carolina, and at least 20 years old, with an interest in politics, volunteering, community issues, charities, and causes; see Figure 1).

Figure 1. Facebook Ad. The post text accompanying the image states: “To find out if you’re eligible, complete this survey: <link>. Receive a $40 Amazon card (for non-students) or a $10 card (for students) after the interview. Protocol <number>.”

Note. This ad was targeted toward users who lived within an hour of Charlotte, North Carolina, were at least 20 years old and had an interest in politics, volunteering, community issues, charities, and causes based on Facebook’s data.

Targeted Community Email Invitations

The stipulation for addressing community members by email was that there was evidence of related social media activity or a mention in the news at the time of the shooting. An article in the local newspaper proved to be instrumental for identifying 225 organizations and individuals who had signed a “statement of commitment” to justice, using the social media hashtag #ThisIsOurCharlotte (Siner, 2016). Researchers then methodically and laboriously combed Internet resources for contact information, such as email addresses, phone numbers, and Web site contact forms. In the rare cases when such information was not available, social media handles were obtained. Leads received a personal email based on a customized template, a follow-up email if necessary, and a follow-up phone call. Each lead was invited to sign up for the study individually or to accept an invitation for the researchers to facilitate an organizational focus group. Most leads had the potential for more widespread access to the organization’s membership, had the invitation been shared among its membership to generate a snowball effect within their organizational network.

Most of the 89 organizations we were able to contact did not respond to our outreach; however, 10 organizations (11%) indicated that they might be interested, and only three confirmed that they had shared the recruitment email. Other organizations cited reasons why they ultimately declined. For example, one organization agreed to a focus group but ultimately could not participate due to an urgent organizational matter. Another organization expressed mild interest but later said that the members who had been involved in the statement of commitment no longer attended the congregation. The net result was that none of the individuals traced to this method through its Qualtrics tracking code qualified for the study.

Campus Email Recruitment

As is typical of studies hosted on campus, our researchers posted on several classroom and university listservs over the 2019–2020 academic year. The recruitment message was substantially similar to that which had been used in community email messages, with the exception that extra credit was offered to a portion of students who participated. In these situations, there was an additional caveat that all participant responses would be de-identified for their professors.

Flyers

Approximately 100 flyers were posted on two university campuses, on lamp posts where permitted, and at other demographically diverse locations that offered bulletin boards, such as grocery stores, office supply stores, libraries, municipal pools, and YMCAs.

Results

Yield by Recruitment Source.

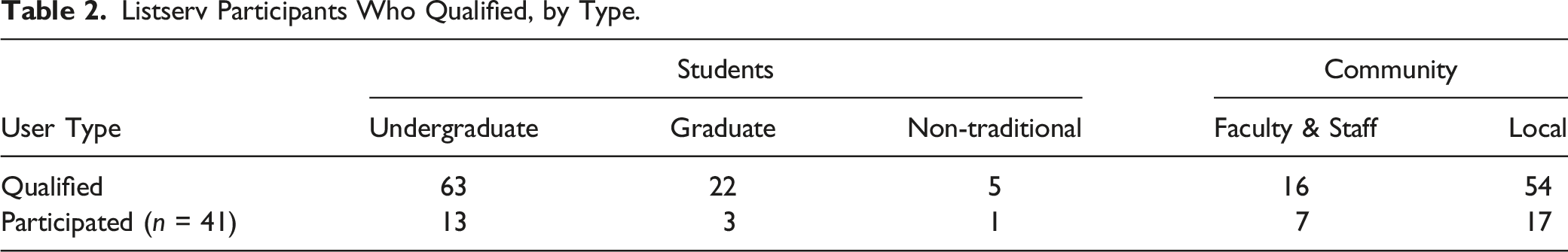

Listserv Participants Who Qualified, by Type.

Ultimately, 41 participants reported for an interview. An additional 15 people qualified and applied for an interview, but they declined to schedule an appointment. Other qualified respondents expressed a preference for a focus group, which we did not need to arrange.

RQ 1: Which Recruitment Method Yielded the Most Respondents for an in-Person Study About Activism?

As shown in Table 1, the recruitment method that yielded the highest number of qualified respondents (25) for our in-person study about activism was an email sent by the university’s internal listserv to students, staff, and faculty. Through this method, we identified qualified undergraduate students, graduate students, nontraditional students, and faculty/staff (see Table 2). Facebook advertising yielded nearly the same number of qualified participants, although the raw yield and return on investment were each vastly different among platforms.

RQ 2: Which Recruitment Method Was the Most Cost Effective for an in-Person Study About Activism?

With regard to the research team’s time, advertising budget, and labor, we determined that the internal listserv was the most cost-effective method to recruit from within the university. Email recruitment resulted in approximately 120 potential leads over a 7-week period, yet the net result was that the community email campaign did not yield a single participant. Social media was the most efficient method for recruiting qualified participants from the general public. Unpaid, personalized tweets yielded a much larger number of responses but few qualified or participated.

Compared Effectiveness of Recruitment Methods for an Online Survey.

Discussion

The purpose of this study was to contribute to the emerging literature regarding the best recruitment methods for an in-person, qualitative study in the social sciences. This study was innovative because it included a labor and cost analysis, resulting in recommendations based on a return on investment model. In the case of our study, a university listserv was the most cost-effective, time-saving approach to attracting not only undergraduate students but also graduate students, faculty, and staff to participate in a study that had nothing to do with university matters. Researchers who need to recruit participants from non-university populations can consider our results: Facebook advertising resulted in the most qualified non-university participants of any method, and Facebook advertising required far less of a labor investment than other methods, such as @mentions to Twitter users who had discussed the topic of our study.

Our findings support research from the medical community that considers social media to be a viable tool for study recruitment (Ash et al., 2021; Kapp et al., 2013; Pedersen & Kurz, 2016; Ramo & Prochaska, 2012; Smith et al., 2021; van Gelder et al., 2019). Certainly, we found it much more effective than emails for reaching the general community. Like other researchers (Gu et al., 2016; Williamson et al., 2018; Yuan et al., 2014), we found unpaid, personalized tweets to be the least effective recruitment method, and we can relate to the observation (Gu et al., 2016, p. 93) that message content may be a stronger indicator of virality than follower count (Cha et al., 2010). Future research could explore the effectiveness of paid tweets for recruitment and utilize social network theory to explore snowball sampling on social media.

Similar to Williamson et al. (2018), our team considered the importance of messaging by creating a variety of messages we thought delivered important points about the study. However, the fact that message content required Institutional Review Board (IRB) approval meant that messages did not necessarily reflect the natural patterns of online communication.

There are other limitations to consider when developing conclusions about the most effective recruitment methods. We did not pay for Twitter or LinkedIn ads, which may have provided a more direct comparison with the paid ads on Facebook. Our Facebook results also would have been more directly comparable had we started using the platform earlier in our recruitment process. Consequently, this study should not be used to develop conclusions about how ads across these channels perform for qualitative recruitment. Nevertheless, our study revealed a stark contrast between unpaid, personalized Twitter messages and Facebook advertising in the ratio of time and effort to recruitment success.

Our results are likely to be relevant to other studies that require participation in person to understand human behavior, so it is important to examine human behavior on different social media platforms. We relied on direct messaging to appeal to participants who had posted publicly and were influential on Twitter. It is possible that our messages did not employ enough emotional content to encourage virality, but adding such content would have introduced bias to the recruiting. In retrospect, even if all social media recruitment had occurred using paid, targeted marketing, Facebook advertisements seem more likely to offer the benefit of snowball sampling within a very local population. This is because many people use Facebook to share information with friends and families, and political ideology is fairly balanced, whereas Twitter networks tend to be more dispersed (Mobile App Daily, 2021; Vogels et al., 2021). Facebook appeals to 68% of Americans, of varied demographic characteristics, while Twitter appeals to 24% of Americans, has a partisan gap of about 23 percentage points, and has a user base more likely to include affluent, urban college graduates (Walton, 2021).

The findings of this study, of course, are contingent on the ability of each social media platform to be a conduit to targeted audiences. Social media platforms evolve in popularity, so recent studies of these platforms, such as the Pew Research Center’s regular reporting (e.g., Auxier & Anderson, 2021), can be consulted prior to developing a recruitment strategy. Nevertheless, researchers should consider the target audiences and reach of social media, which will continue to make it an efficient recruitment tool until perhaps the next societal technology shift.

As other researchers continue to study recruitment methods in studies about human behavior, the body of evidence should become more established. Our study in the social sciences suggests that not all recruitment methods are equally effective; in fact, an email to a listserv was the most effective method we used to recruit on-campus participants, and it cost no money and required minimal labor. The production of unpaid, personalized tweets was labor intensive and resulted in no participants. Meanwhile, Facebook was very effective for recruiting community members, particularly due to the use of ads. Researchers can consider these results when determining where to place their effort, possible budget, and limited time in the context of the audiences they want to reach.

Acknowledgments

We are grateful to our student research assistants for their contributions to this study. Michael Brunswick organized the recruitment tracking and interview scheduling. Kaila Addison, Bacarri Byrd, and Zachary Matheson helped to recruit participants. Matthew McCue contributed to the literature review, and Khyati Mahajan identified Twitter users to recruit from our Gnip dataset.

Declaration of Conflicting Interests

There are no conflicting interests to declare.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is funded by the Army Research Office of the Department of Defense, #72487-RT-REP, IRB approval 18-033.

Data Sharing

At this time, data sharing is constrained by project requirements. The data may become available for sharing at the conclusion of the research project.