Abstract

Large-scale, multi-organisational collaborations between researchers from diverse disciplinary backgrounds are increasingly recognised as important for investigating and tackling complex real-world problems. However, differing expectations, epistemologies, and preferences across these teams make it challenging to follow best practice for high-quality, rigorous qualitative research while maintaining goodwill and cohesion among team members. This article presents critical reflections from the real-world experiences of a team navigating the challenges of collaborating on a large-scale, cross-disciplinary interview study. Based on these experiences, we extend the literature on large-team qualitative collaboration by highlighting the importance of balancing autonomy and collaboration, and propose eight recommendations to support high-quality research and team cohesion. We identify how this balance can be achieved at different times: when centralised decision-making should be prioritised, and when autonomy can be allowed. We argue that it is paramount to prioritise time to develop shared understandings, build trust, and create positive environments that accept and support differing researcher perspectives on qualitative methods. By exploring and reflecting on these differences, teams can identify how and when to support autonomy in decision-making, when to move forward collaboratively, and how to ensure that shared processes reflect the needs of the whole team. These reflexive findings, emanating from practical experience, can inform large research teams undertaking qualitative studies of complex issues. We make an original contribution to qualitative methods research by arguing that balancing autonomy and collaboration is the key to promoting high-quality research and cohesion in large teams.

Keywords

Introduction

The aim of this article is to provide critical reflections on the experiences of a large-scale, disciplinary-diverse team collaborating to design and deliver an interview-based study. We respond to a challenge faced by large, disciplinary-diverse research teams: variations in preferred methodological processes may be perceived to threaten study quality and jeopardise the team’s ability to collaborate to produce meaningful findings (e.g. Laborde et al., 2019; Schikowitz, 2020). Drawing on our own experience of working in a large, multi-disciplinary team, we make an original contribution to the qualitative methods literature by identifying the need to balance autonomy and collaboration in large-team qualitative studies to promote high-quality research and team cohesion. More specifically, we identify when to prioritise collaboration and when to allow autonomy, and how this balance can be achieved. Our experience has led us to develop eight recommendations to enable higher-quality data collection and analysis, as well as more meaningful integration of insights from different disciplines. We first discuss the literatures on fostering large-team collaboration and delivering high-quality qualitative research, creating a foundation on which to discuss our own case. We then outline the methods our research team followed, to provide context for how we developed our critical reflections. Finally, we take an in-depth look at our eight recommendations, which we have developed to support other large teams to balance autonomy and collaboration in qualitative research studies.

Literature Review

Challenges and Best Practice in Large-Scale Research Teams

It is increasingly recognised that knowledge, expertise, and perspectives from a range of disciplines are needed to tackle complex ‘real world’ problems (Czajkowski et al., 2016; Hall et al., 2012; Pineo et al., 2021; UKPRP, 2017). Large-scale interview teams involving researchers from different disciplines have increased capacity to advance understanding of complex topics and ‘metaproblems’ that are beyond the capacity of any single discipline (Austin et al., 2008; Dalton et al., 2021; Hall et al., 2012; Kaufmann et al., 2020; O’Campo et al., 2011). They collaborate to design studies that look at challenging areas in new ways and collect and analyse data from a wide range of stakeholders representing multiple perspectives. These multi-, inter-, or trans-disciplinary collaborations, in which researchers from different disciplines actively work together, sometimes alongside non-academic actors, using shared methods to investigate and tackle problems, can lead to new understandings, insights, and innovative solutions (Czajkowski et al., 2016; Schaefer-McDaniel & Scott, 2011).

While disciplinary diversity in collaborations is increasingly valued, it can present challenges to researchers, for example relating to language and terminology, shared understandings, and variations in epistemology and methodological preferences (e.g. Black et al., 2019; Kaufmann et al., 2020; Schaefer-McDaniel & Scott, 2011; Stokols, Hall, et al., 2008). This reflects the fact that members of different disciplines follow discourses, practices, and rules that may not be familiar to or shared by others (Dalton et al., 2021; O’Rourke et al., 2019). Varying expectations about research goals, values, and methods can be difficult to overcome (Brister, 2016; Broto et al., 2009). Entrenched norms and practices can be key barriers to collaboration across disciplines, leading to challenge or distrust (Schikowitz, 2020). Some scholars argue against attempting to achieve epistemological consensus in these scenarios (Laborde et al., 2019). Indeed, collaboration can be threatened when researchers lose autonomy in decision-making or are concerned that their disciplinary or specialist knowledge is ignored or devalued in comparison to that of others (Garforth & Kerr, 2011; Graef et al., 2021; Stokols, Misra, et al., 2008; Thompson, 2009).

Additionally, many qualitative researchers work within an academic system that may promote individualism over collaboration, particularly in certain disciplines. Collaborative research may not fit well within an academic system that can promote and reward disciplinary specialism over inter- or trans-disciplinary partnership working (Bammer, 2017; Lyall, 2019). Supporting researcher autonomy is therefore important for enabling positive team dynamics, researcher motivation, career development, and sustained commitment to collaboration in large, diverse teams. However, large, disciplinary-diverse teams that use qualitative methods as the foundation for identifying collaborative insights and impacts face challenges in supporting this researcher autonomy.

Applying Standards of Qualitative Research in Large Collaborations

There are multiple recognised ways to conduct high-quality qualitative research, with guidance on conducting specific stages, such as sampling (Robinson, 2014), designing interview guides (Kallio et al., 2016), and coding data (Adu, 2019; Linneberg & Korsgaard, 2019; Williams & Moser, 2019). Concepts commonly associated with quality include credibility, transferability, dependability, and confirmability, which are promoted to ensure meaningful results (e.g. Guba, 1981; Guba & Lincoln, 1989). These are recognised across disciplines, although different scholars sometimes give them different emphasis and priority and summarise them under alternative terms, including trustworthiness (Nowell et al., 2017), rigour (Daniel, 2018) and validity (Morse, 2015). These concepts and procedures are thoroughly explored elsewhere in a range of frameworks that set out strategies for researchers to follow to ensure rigour (e.g. Morse, 2015; Nowell et al., 2017; O’Brien et al., 2014; Rendle et al., 2019; Shenton, 2004; Tong et al., 2007; Tracy, 2010). However, each of these concepts is underpinned by epistemological assumptions. Moreover, these frameworks presume that interview teams will share understandings about methods, processes, and good practice.

The variety of methods and strategies followed by researchers from different paradigms can achieve the same overall goal of high-quality research, as described by Tracy in her highly cited ‘Eight “Big Tent” Criteria for Excellent Qualitative Research’ (Tracy, 2010; Tracy & Hinrichs, 2017). However, the challenge for large, diverse research teams is that variation in preferred processes may be perceived to threaten study quality and jeopardise the team’s ability to collaborate to produce meaningful findings. These issues are less likely to be problematic when conducting qualitative studies with a single researcher or small groups of interviewers and coders. In such studies, team members will likely share common understandings about credible methods and good practice, and their expectations about procedures to demonstrate transparency and credibility are likely to align, or any differences can be managed more quickly. Autonomy is less likely to be threatened in these scenarios.

Despite a growing recognition of the importance of large-scale inter- or trans-disciplinary research, there is limited literature that examines the challenges of team collaboration and practical approaches to developing and following shared methods in large qualitative studies. Such approaches involve, for example, ensuring consistency in data collection and coding, developing codebooks, using shared analytical software, and gaining familiarity with large data sets (Abraham et al., 2021; Beresford et al., 2022; Giesen & Roeser, 2020; Hall et al., 2005; White et al., 2012). These challenges will likely be amplified with greater researcher diversity, as teams representing different disciplines and epistemological backgrounds are less likely to coalesce easily around examples of best practice when making the decisions needed to progress (Brister, 2016; Rendle et al., 2019).

Clearly, there are challenges for effective collaboration in qualitative research for large teams that go beyond those facing small teams or individual investigators. Additionally, the inclusion of greater numbers of researchers across more organisations will add complexity to the practical processes commonly used in interview-based studies to ensure high-quality research, such as data management, audit trails, and co-ordinating data collection periods. Large interview teams seeking to investigate complex problems from multiple disciplinary perspectives will therefore need to consider best practice for both qualitative research and collaboration. They need to collaborate effectively as a team to produce meaningful results and demonstrate the quality of their research.

Supporting Diverse Team Qualitative Research

Based on our experience of working as a large team of researchers representing multiple organisations and disciplines, this article offers recommendations to support the successful delivery of large-scale interview studies. We seek to extend knowledge on how large teams representing different disciplines can work together effectively on qualitative investigations. This builds on understandings of best practice in the implementation of qualitative methods (Tracy, 2010; Tracy & Hinrichs, 2017) by taking account of how core components of high-quality research, such as credibility and transferability, can be rigorously applied in epistemologically heterogeneous environments. We bring this together with understandings of best practice for inter- or trans-disciplinary collaboration and team science (Bammer, 2013; Hall et al., 2018; O’Rourke et al., 2019) to emphasise the need to balance researcher autonomy and team collaboration and, more importantly, to show how this balance can be achieved.

Our critical reflections and subsequent recommendations add to the currently limited literature on team approaches to collaborating in qualitative analysis (Giesen & Roeser, 2020; Milford et al., 2017; Jackson & Bazeley, 2019). This will support large teams to collectively produce new and meaningful insights, which are needed to tackle complex real-world problems, through rigorous qualitative research. Beyond the disciplinary challenges discussed, these insights will also have wider relevance to teams managing the complexities of multi-site interview studies at a time when collaborating in large, multi-institutional, and geographically spread teams is increasingly supported by technological developments and shifts towards remote working patterns.

Summary of the Large Research Team’s Study Methods

Before presenting our team’s critical insights, observations, and recommendations on how to manage the challenges of large-scale multidisciplinary qualitative research, we provide an overview of the approach we took in our study to give context to the challenges on which we go on to reflect.

Project Team Overview

The interview study team involved nine sub-teams of researchers from five universities working together to critically explore England’s complex urban development system as part of the TRUUD (‘Tackling the Root causes Upstream of Unhealthy Urban Development’) research project (Black et al., 2021). The study we reflect on here aimed to increase understanding of decision-making about health in this complex system. The team was tasked to interview a wide range of urban development stakeholders to understand the system and generate insights to support the co-production, with non-academic partners, of interventions.

The researchers came from a variety of disciplinary backgrounds of relevance to the public health impacts of urban development: urban planning, transport, public health, real estate, management, public policy, law, public involvement, and public administration. As well as academic experience, the team also had diverse multisectoral experience in public, private and third sector organisations. Two of the team also had roles during this study as ‘Researchers-in-Residence’ embedded part time in local government organisations.

Methodological Approach Adopted by the Large Research Team

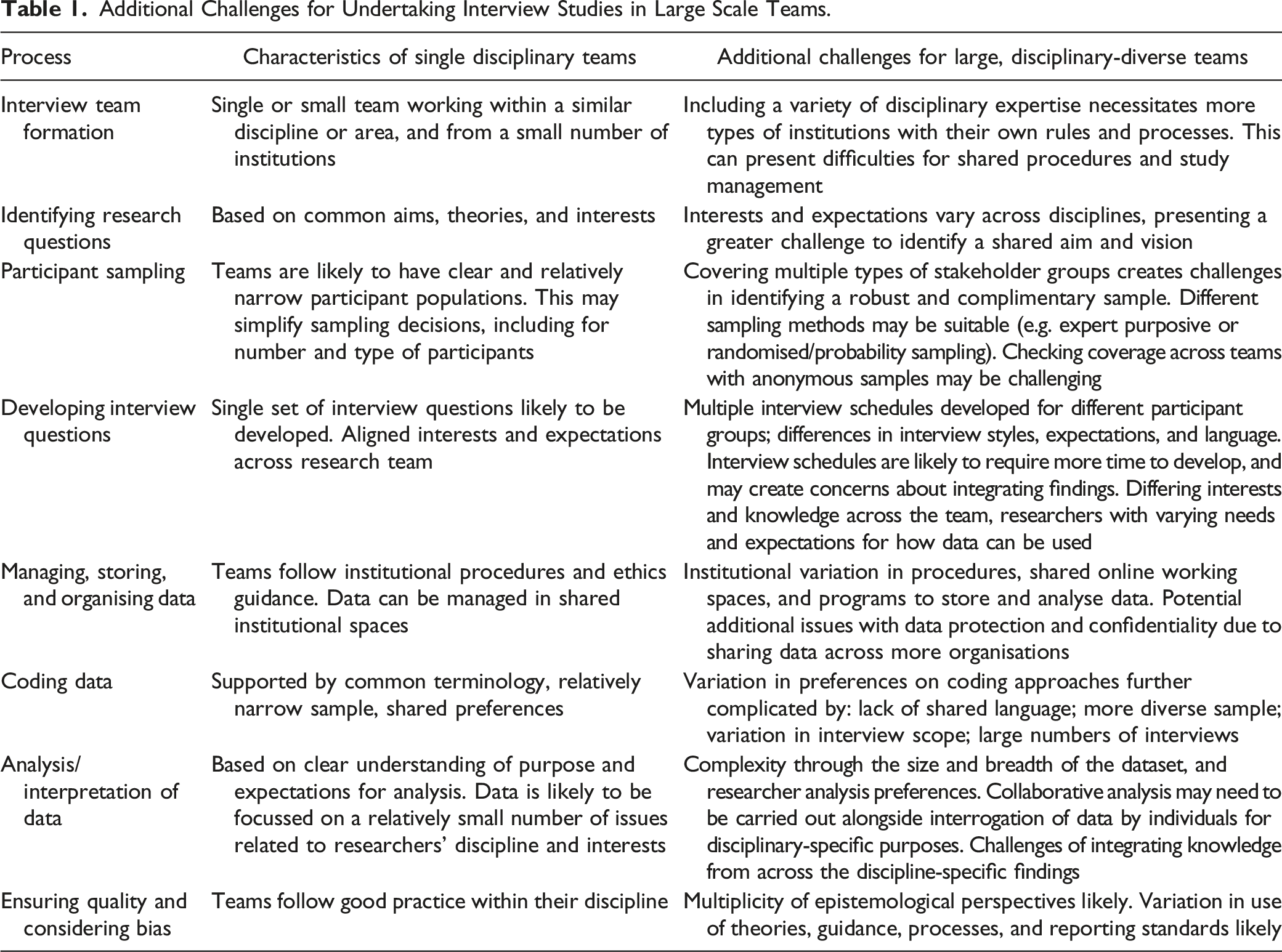

Table 1. Additional Challenges for Undertaking Interview Studies in Large-Scale Teams.

Study methods were designed to help the team overcome the challenges identified in Table 1 and ensure the efficient completion of a rigorous study. We ultimately needed to facilitate a collaborative analysis to support new insights through shared understandings of the complex system under investigation, while also offering the flexibility necessary to create and maintain a motivated, empowered, and cohesive team. The methodological approach that we followed to do this is summarised in Figure 1.

Figure 1. Stages for conducting our large, disciplinary-diverse team interview study.

Interviewing as a Large Research Team

Each of the interview sub-teams included one or two researchers with similar backgrounds and each targeted a different stakeholder group, related to their academic discipline, for example central government actors or private sector real estate actors. The research questions for the study were collaboratively developed to ensure shared direction and a cohesive dataset. These provided a basis from which each sub-team developed their own interview questions for different target stakeholder groups based on their expertise in interviewing these stakeholders.

Semi-structured interviews were conducted by seven interview sub-teams (range 13–24 interviews, mean 18 per sub-team) with a sample of 132 participants who varied greatly in type of expertise and sector. The team undertook an actor mapping exercise to identify important stakeholder groups that needed to be included for the team to understand the complex urban development system in the UK. The final sample was drawn from the stakeholders identified through this mapping exercise, and included private, public, and third sector actors at national, regional, and local levels with a variety of roles in areas such as urban planning, real estate, transport, public health, the environment, and policymaking. Sub-teams identified and recruited their own participants based on inclusion criteria relating to stakeholder influence and expertise. Two sub-teams did not carry out their own interviews; instead, they developed interview questions for the other teams to use, to capture perspectives across the whole sample on issues relevant to their cross-cutting focus, and analysed the data relating to these questions. Interviews were largely conducted online due to COVID-19 constraints. While we experienced some of the challenges associated with conducting qualitative research using online platforms, such as developing rapport with participants and technological barriers (Oliffe et al., 2021; Varma et al., 2021), the team found that conducting these interviews online was generally very successful. It facilitated the involvement of stakeholders in very senior roles, who benefited from the flexibility and convenience that the online format provided.

Large Team Coding and Interpretation

Transcripts were uploaded to a single NVivo file (QSR International Pty Ltd, 2018) for team members to use for coding. An initial set of deductive codes, grouped into categories, was included in the starting NVivo master file. Potential new inductive codes and their definitions were discussed by the whole team in weekly meetings and then agreed, merged, or rejected, creating new versions of the master NVivo file. This allowed sub-teams across different institutions to code transcripts relatively autonomously in their own timeframes, while the use of a shared coding framework required collaboration. This coding period spanned 16 weeks.

When coding was completed, data was analysed in stages designed to move the team from interview sub-teams’ summaries of their own data to team understandings and insights based upon collective analysis of the entire interview dataset. As Figure 1 and the subsequent text show, we worked to rapidly identify overall findings based upon the whole dataset in a collaborative process, which was needed to progress the wider research project within narrow timelines. We also enabled interview teams to undertake additional and in-depth analysis of data autonomously over a much longer period. Firstly, each sub-team produced their own summaries of findings, grouped by coding category. Small groups of researchers from different disciplinary backgrounds then reviewed these summaries and collaborated to develop insights based on the multiple stakeholder groups and researcher perspectives. The final stages of analysis involved different forms of thematic analysis, with flexibility for adaptation to the different paradigms and epistemologies within the team (Braun et al., 2018).

Critical Reflections

Regular team discussions were held both during and after the primary study where we discussed the challenges we encountered as a large, disciplinary-diverse team, and ways to overcome these. Based on our observations and critical reflections we identified a core issue for disciplinary-diverse teams working on large-scale qualitative research: the critical need to manage the balance between researcher autonomy and team collaboration. More specifically, we identified different ways that teams can manage this issue and prioritise collaboration or allow autonomy at different times during the research study. This led to our development of eight recommendations to support large teams to think strategically about balancing autonomy and collaboration, which we discuss below. These critical reflections were supported by our understanding of the literature which we explored during our process of reflexivity.

Eight Recommendations to Balance Autonomy and Collaboration in Large-Scale and Disciplinary-Diverse Team Interview Studies

In the previous section we outlined our approach to conducting a large-team, disciplinary-diverse interview study. Reflecting on our experiences we proceed to discuss the recommendations that we have developed, acknowledging that these arise from a single case study and additional learning could come from other contexts.

Our eight recommendations are presented in Box 1. They are designed to balance the competing needs of developing shared methods for qualitative inquiry and supporting researcher autonomy in methods and decision-making. This is in recognition that researchers from different disciplines will often have different priorities, standards, and expectations to adhere to. We provide examples of where centralised decision-making is needed to support rigour and how this can be informed by different perspectives across the team to maximise collaboration, as well as examples of where sub-teams can be supported to follow their own preferences. Taken together, these provide an approach to balancing collaboration and researcher autonomy. The examples are drawn from throughout our study, although our reflections identified coding and analysis as the stages where the need to balance autonomy and collaboration came through most prominently. These stages required the most team discussion and caused the most tension about how to proceed. This may be because they offered more scope to work in different ways, and so required additional consideration of how to achieve our multiple (individual and disciplinary) research goals.

Box 1. Recommendations to balance autonomy and collaboration in large-scale, diverse team interview studies

1. Make time to engage in ongoing reflexivity to understand differences
2. Acknowledge no single ‘right’ way
3. Create inclusive environments for regular team discussions
4. Empower researchers to make choices
5. Allow time to trial approaches and be prepared to change direction where necessary
6. Understand variation in publication requirements across disciplines
7. Provide clarity and guidance on procedures in working protocols
8. Agree terminology and create clear definitions to understand a shared language

Recommendation 1: Make Time to Engage in Ongoing Reflexivity to Understand Differences

It is critical to provide time and support for teams to discuss and reflect on their epistemological perspectives and research preferences to enable researchers to collaborate using shared processes, and to build trust and confidence to allow autonomy. Through this teams can identify where they share common ground, better understand and respect different perspectives, and identify strategies to overcome differences or to accommodate them in a study where it is possible to do so.

We came to the project with different experiences and knowledge about the subject, as well as with different epistemological backgrounds. We therefore included time at the start of our study to conduct and share literature reviews from each team’s disciplinary perspective on key concepts that we intended to explore in our interviews. These epistemological differences may come as no surprise, since our team came from different academic disciplines, but even researchers from the same discipline can hold differing assumptions, leaning to differing degrees towards positivism or interpretivism, for example. Mixed-methods researchers have described how challenging these differing world views can be for collaboration (Bazeley, 2016), but it is essential for any team qualitative project, especially large-scale inter- or trans-disciplinary teams, to confront and accept different perspectives (Pineo et al., 2021). The team collaborated to create an overall ‘study methods’ document, with team members leading on different sections. We found that agreeing this document supported the team to reflect on epistemology and to make the case for, and compromise on, individual preferences on methods. We suggest this be supported through facilitated team workshops to make these tacit epistemological differences explicit. Building in such opportunities is critical in large collaborations and, rather than using up scarce time, can save time later by reducing the confusion and disagreement brought about by a lack of understanding of others’ perspectives. Epistemological differences and differing world views and understandings can be a strength of large interdisciplinary teams; the aim is not to constrain this diversity of views but to understand it, improving trust and confidence in others and thereby supporting opportunities for autonomy.

Team leaders and managers, as well as research funders, must recognise that adequate time is needed to support disciplinary-diverse teams to form and develop shared understandings of research problems, language, and research methods, as well as to integrate discipline-specific learning and support researcher development. This time should be built into project planning. Grant funding for temporary roles should provide adequate time to complete and write up studies, so that short-term contracts do not break up a disciplinary-diverse team prematurely and prevent early career researchers from developing their profiles. Researchers on short-term contracts will be under pressure to publish and demonstrate their skills during the study period, while larger and more complex studies can take a long time to complete. Empowering researchers to develop their profiles by explicitly building in this time may benefit team morale and help maintain team cohesion and commitment. Empowerment may also come from senior team members, who are likely to have less time allocated to the project than early career researchers, delegating additional responsibility. Considering this during project planning may help to set expectations across the team about the role of each researcher in the study.

Reflexivity through team discussion can support rigour, develop team functionality, and allow issues to be identified (Barry et al., 1999; Rankl et al., 2021; Rettke et al., 2018). It can help teams to question their assumptions and values, and to bring together methods from multiple disciplines (Popa & Guillermin, 2017). In interview studies, we advise seeking to understand, through reflexive discussion, the assumptions and expectations of team members at an early stage, in order to support the development of processes, identify potential methodological challenges, and understand how to maximise the benefits of having a varied interview team. Clarifying our epistemological assumptions early on was important to align the direction of travel for our study and to identify processes that would prevent researcher autonomy from threatening rigour. For example, in early discussions some members of the team discussed sampling methods in relation to ‘reaching data saturation’, whereas others disputed the very concept of data saturation in qualitative research, except when used in Grounded Theory, where it was originally devised (Glaser & Strauss, 1999), and instead emphasised the need for broader exploratory research.

The need to allow some autonomy in procedures challenged some deeply held perspectives and at times met with resistance. However, as the study progressed, the team appeared to accept and understand others’ preferences. Developing team understanding was essential for our team to gain trust and confidence in others’ perspectives, and critical to allowing some autonomy in how methods were applied without losing confidence in the quality of our method. This required large amounts of discussion to develop our shared understanding, particularly at the start of the study.

It is critical that time and space for reflexive practice are explicitly built into studies to support effective collaboration in large and disciplinary-diverse teams. This discussion and team formation require time and energy, which should not be underestimated. Reflexive practice should be continued throughout the study to reflect on progress and on whether the needs and expectations of the team are being met. This was invaluable in our study to understand expectations and preferences, and to resolve challenges. Later in our study, discussing as a team our variations in preferences, such as in how interview questions were asked and in our coding styles, helped us to understand the specific reasons for the variation in our data and gave us confidence in our findings.

Recommendation 2: Acknowledge no Single ‘Right’ Way

Positive relations and trust between team members are important in large teams of qualitative researchers (Luciani et al., 2021). Creating a team environment that is accepting of different processes and treats different disciplinary preferences equally can help build the trust and good relations conducive to effective collaboration. An important step towards collaboration therefore is to be open towards processes and expectations of different disciplines, and to question one’s own assumptions about best practice. This will also improve team members’ confidence in how others approach qualitative research, which is important to enable autonomy in places, without raising concerns about losing rigour.

We followed the principle that the aim of our ongoing reflexivity was to understand variations and consider their potential impacts, rather than to promote the preferences of some of the team over others. There are many established methods for conducting interview-based studies, and different preferences are to be expected within a large team spanning different disciplines. There are many points of potential diversity, such as the format of interview questions, interview style, coding approach, and preferred methods of analysis, and where possible we sought to support researchers’ freedom to follow their preferences while considering how this might affect our whole-team method. We recognise that what seems normal and acceptable to one researcher in the team may be seen as poor practice by another, but this does not necessarily mean that either researcher’s preference is ‘wrong’. We recommend stating this clearly at the start of a collaboration.

Tension arose when team members advocated for a particular approach without considering that others might feel very differently. Variation in coding preferences is one example. The coding styles and preferences of researchers in our study were the subject of much team discussion and a common area of disagreement. All members of the team were experienced in qualitative research, and many had strong preferences about how they believed coding should be carried out. Examples included the number of codes applied to one section of text, the amount of text assigned to a code, and the extent to which new inductive codes should be added to the coding framework rather than applying or modifying pre-existing codes. Where strong voices in the team promoted their own preferences as the ‘right’ way that the whole team should follow, there was a risk that other perspectives could be overlooked.

We were able to resolve concerns about coding approaches by using NVivo data to clarify our differences, which helped us to discuss our approaches constructively. Figure 2 illustrates the variation in the number of codes that different interview sub-teams used per transcript, with boxes showing the median (centre line) and the lower and upper quartiles for each sub-team. A lack of consistency in code frequency may be considered a cause for concern. However, discussing this variation, supported by double coding of one or two transcripts per team, helped develop our understanding of how codes were being used and our confidence in the analysis. The double coding was not designed to replace coding by interview teams, but instead to review an already coded transcript and raise points for discussion.

Figure 2. Box plots showing the distribution of codes used per transcript by interview sub-team.
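As an illustration of the statistics summarised in Figure 2, the minimal Python sketch below computes the median and the lower and upper quartiles of codes per transcript for each sub-team. It assumes the counts have already been exported from the analysis software into a simple mapping; the sub-team labels, the counts, and the `box_summary` helper are hypothetical and do not reproduce our data or any NVivo functionality.

```python
import statistics

# Hypothetical data (not study data): number of codes applied to each
# transcript, grouped by interview sub-team.
codes_per_transcript = {
    "sub-team A": [12, 15, 18, 22, 25],
    "sub-team B": [30, 34, 41, 45, 52, 60],
}


def box_summary(counts):
    """Return (lower quartile, median, upper quartile) for a list of counts.

    method="inclusive" interpolates linearly between observed values,
    the same convention as NumPy's default percentile calculation.
    """
    q1, q2, q3 = statistics.quantiles(counts, n=4, method="inclusive")
    return q1, q2, q3


for team, counts in codes_per_transcript.items():
    q1, med, q3 = box_summary(counts)
    print(f"{team}: median={med}, quartiles={q1} to {q3}, IQR={q3 - q1}")
```

Other quartile conventions (for example `method="exclusive"`) place the box edges slightly differently for small samples, so it may be worth reporting which convention a figure uses when it is shared with the team.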

This method of analysing our data helped the team to understand why our study differed from other interview studies we had been involved in, and to become comfortable with these differences. Importantly, it aided our reflection on the meaning of our data, supporting (i) our collaborative interpretation and (ii) our understanding of others’ preferences on issues such as the creation of new codes, and it created accountability, transparency, and opportunities for contributions across the team. These are important for building and maintaining good relations (Brown et al., 2022), and they made it possible to allow autonomy in these processes by giving sub-teams freedom to code as they thought appropriate. Given the active role of the researcher, we would expect certain issues or topics to be identified by certain researchers because of their experience and background (Braun et al., 2016), and we did not want to constrain coding or enforce conformity. We scrutinised the use of specific codes and identified, for example, that codes relating to the category ‘governance’ were more commonly used by researchers from public policy and public administration backgrounds. This likely reflects both what their interviewees said and the researchers’ own interest in, and knowledge of, governance.

Reflecting on our experiences, we strongly encourage researchers in disciplinary-diverse teams to familiarise themselves with data from stakeholders in roles and sectors less familiar to them. This helps develop the shared understandings necessary to integrate insights drawn from different disciplinary perspectives (Crowley & O’Rourke, 2020). We found that debates on the inclusion of new codes in our coding framework improved when researchers had time to read and reflect on others’ transcripts. Reading and analysing transcripts from interviews with a large and varied group of stakeholders, which sometimes covered specialised and technical information, is a substantial task, and researcher time for familiarisation with the dataset should be built into study planning.

Recommendation 3: Create Inclusive Environments for Regular Team Discussions

It is important to create space for inclusive and reflexive discussion to give equal agency to different perspectives and avoid ‘political’ problems (Jackson & Bazeley, 2019). This empowers team members to promote their own preferences and ideas within the constraints of collaboration, thereby supporting some autonomy in decision-making. Team leaders can encourage a culture within team discussions of being open-minded about alternative approaches to carrying out interview studies, listening to other perspectives, and accepting that other views can be just as valid as one’s own. However, this takes time, and trust must be developed by prioritising space for regular discussion. In our case, some researchers had more experience than others in working across disciplines or accommodating the preferences of others. Interdisciplinarity may be more common in some disciplines than in others, and researchers with less prior experience of interdisciplinary work may need additional support to understand the needs and interests of others in a mixed researcher group. Some senior colleagues observed that early career researchers on the team were more ‘intellectually agile’ and ‘compromising’ than more established academics, whose extensive experience of working within their own disciplinary approaches had led to fixed ideas about what they deemed ‘best practice’. This challenges the idea that it is early career researchers who need support to work across disciplines; it appeared that senior academics can find it more challenging to incorporate new ways of working. For example, we encountered resistance in some sub-teams to including certain shared topics in their interview schedules; after discussion, we reflected that these topics may have been seen as less useful or important from their perspective.

Throughout our study, we were reminded of the importance of reflection in helping us understand where our perspectives differed and how those differences might affect study quality. Our experience highlighted the importance of creating positive environments in which to engage in this team reflection, similar to the recommendation by Brown and colleagues to ‘Create a safe and equitable space for all involved’ in their reflection on working in an international research team of academic women (Brown et al., 2022). Our discussions were primarily held in weekly two-hour online meetings, supplemented by monthly meetings with team members outside the core interview study team and ad hoc discussions when needed. To create space for debate while recognising the need to make progress, meeting agendas should contain fewer items, with clear boundaries between items for continued discussion and items requiring a decision.

Creating ‘psychological safety’ where team members feel confident and comfortable to speak out or voice disagreement is important (Hall et al., 2012; Newman et al., 2017). This can be supported through, for example, break out or small group meetings, debriefing and group reflection, accessible leaders within a team, and reducing hierarchies (Traylor et al., 2021). An inclusive environment for our regular team meetings was important to facilitate debate and open attitudes. This was aided by having a positive, well-respected peer to chair the team meetings. This ensured that everyone’s views were heard and respected, as equitable communication is highly valued in diverse teams (Steger et al., 2021). Senior members of the team with management responsibilities were present but tended to mainly observe and encourage discussion. They strongly emphasised the need to listen to other perspectives and encouraged others to lead the debate. They supported the team to engage in group decision-making processes, with differences resolved through negotiation. This supported individuals to contribute to decision-making and actively involved all researchers throughout the study, giving voice to their own preferences and individual goals.

A consequence of working in a large team is that team members may undertake the same study processes at different times. For example, in our study ethical procedures took longer to complete in some organisations than in others, researcher availability varied, and participant recruitment times differed. This reinforced the need to facilitate researcher participation in discussion: researchers who came later to tasks risked missing opportunities to voice their preferences, or feeling pressured to conform to the preferences of those further ahead. Coding is one example, as different sub-teams coded at different times. While most sub-teams were close to completing their coding by Round 9, sub-teams 5 and 6, which started coding later, continued to suggest new codes when they felt that their data was unique. Others, however, felt that expanding the already substantial framework further was unhelpful and were sometimes frustrated by later additions. We ensured there was opportunity to debate new codes in our weekly meetings, to give voice to views on both sides and alleviate tensions. Where there were strong feelings that data was unique and required new codes, these codes were often accepted to ensure that equal consideration was given to ideas from later coders.

Recommendation 4: Empower Researchers to Make Choices

Reflecting on differences and building trust creates the conditions in which teams can develop the understanding and confidence necessary to give individuals autonomy over some processes. Allowing researchers to make some choices according to their preferences can improve morale and help maintain goodwill, as well as supporting career aspirations. It can also help individuals meet needs specific to their own discipline or institution, such as those relating to publishing.

Our experiences highlighted the importance of facilitating some level of autonomy amongst researchers working towards common goals, where it is feasible to do so and where it will not limit the potential for successful collaboration and meaningful shared datasets. Requiring all researchers to undertake research processes in a consistent and rigid manner might commonly be seen as best practice in interview studies, particularly in more positivist research cultures. However, in large teams, offering flexibility (where doing so will not fundamentally damage the integrity of research findings or create significant challenges later for the team) is likely to be beneficial. In our study, we therefore sought to give some autonomy on key processes, albeit within the boundaries necessary to ensure consistency and facilitate our collaborative analysis.

An important stage in many research collaborations between disciplines is developing a shared vision and sense of purpose about the direction of the study, and a shared understanding of the problems being investigated (Hall et al., 2012). Our collaboratively agreed research questions provided such a framework, within which each interview sub-team then had autonomy to develop its own semi-structured interview guide. While we agreed to avoid closed or leading questions, sub-teams were given flexibility in question style. Through cross-checking of guides and team discussion, we ensured that each interview guide covered all of the research questions, and we identified where the number of questions relating to each research question varied. We did not aim to reduce this variation, but it was useful to reflect on later when we sought to understand differences in our data.

Interview sub-teams were given autonomy in identifying their own samples using the shared participant criteria, reflecting their expertise in their own sector. The whole team reviewed each sub-team’s participant list and could raise concerns about gaps and inconsistencies in the sample. While our study involved elite interviews, we suggest that similar delegation of recruitment could be advantageous for research with marginalised groups, where anonymity and trust may be key to participant involvement. Researchers with experience of working with specific groups, or with relevant lived experience themselves, may be best placed to use existing relationships, or build new ones, to involve such participants in a study and ensure greater representation in research. However, in our study, we reflect that while the final sample was appropriate for meeting our aims, it was not fully balanced across sectors and roles, and sampling decisions were critiqued less than decisions made later in the study. Sampling inevitably came relatively early in our team formation, when we were still developing the collaborative environment and ‘psychological safety’ essential for supporting comfortable and positive critique of others, and collaborative decision-making. This emphasises the importance of early team building, and of initiating processes designed to support effective communication in large teams.

Although we collected data in sub-teams based on shared disciplinary and institutional ties, we reflect that one benefit of working in a large and disciplinary-diverse team is exposure to perspectives, theories, and stakeholders that researchers might not normally encounter. Teams might consider empowering researchers to conduct interviews with, or analyse data from, populations outside their own discipline. On reflection, this may have helped our understanding of the complex system and of each other’s world views and preferences. Such exposure should help to develop the next generation of interdisciplinary researchers, while also supporting senior academics who may find interdisciplinary working more challenging.

Recommendation 5: Allow Time to Trial Approaches and be Prepared to Change Direction Where Necessary

We prioritised the need to collaborate in coding and interpretation of data using processes that we agreed together. Teams need to build in time for these processes to be developed and tested. Some processes in our study were more time-consuming than team members had previously experienced in smaller interview studies. This was due to the additional time required to identify and understand different perspectives, and to trial approaches, because proposals did not always work as well as anticipated the first time.

In our experience, agreed collaborative processes may need later refinement, sometimes substantial, after they have been trialled. This aligns with others’ recommendations (e.g. Jackson & Bazeley, 2019). We agreed an initial procedure for analysing the whole dataset by dividing the data between team members according to the categories in our coding framework. However, this proved very challenging because it was difficult to understand data from other interview teams without sufficient background knowledge of their sectors. We only identified this after trialling the process and discussing the experience. We addressed the problem by adopting the alternative multi-stage analysis approach described previously. While this was time-consuming and frustrating for researchers who were under pressure to complete the analysis, it was necessary to enable the collaborative analysis we were committed to. It highlights the importance of allowing more time than might normally be anticipated in an interview study to undertake important processes, and the need for constant reflection on decisions.

Recommendation 6: Understand Variation in Publication Requirements Across Disciplines

One challenge of working across disciplines in academia is meeting the publication requirements of journals with different research paradigms. Where researchers in a team need to meet certain standards or follow procedures to satisfy the expectations of their discipline, this supports the case for allowing some autonomy. In our study we needed to balance this against producing meaningful results for the later stages of our applied research project: the identification of practical solutions and opportunities for change in a large and complex system. These results had to rest on consistent processes of data collection and analysis that were meaningful and acceptable to our disciplinary-diverse team and project partners. By necessity, our analysis had to simplify some of the complexities of the system rapidly. We reflect that this may have reinforced our team’s drive for consistency in approach and reduced opportunities for divergence in data collection and analysis.

We expected our large dataset to provide opportunities for multiple publication outputs. In addition to our collaborative analysis and collective insights, some interview sub-teams intended to analyse their own data further and produce additional outputs. Therefore, we needed to follow processes that could provide evidence of study quality that would support (or at least not limit) researchers when they needed to demonstrate good practice as understood by different disciplines and epistemologies.

An example of varying perspectives in our team related to inter-coder reliability. We recognise that there is a spectrum of perspectives and assumptions in qualitative research that can influence the design of studies, and these need to be made explicit so that assumptions are clarified. Kidder and Fine (1987) described these differences in approach as ‘small q’ and ‘big Q’ qualitative research: ‘small q’ qualitative research follows a positivist or quantitative approach, with features such as ‘inter-rater reliability scores’ and an assumption that a single truth can be found in the data. In contrast, ‘big Q’ qualitative research follows a qualitative paradigm that recognises the active role of the researcher, who interprets the data to develop themes (Braun & Clarke, 2019). Despite differing opinions amongst team members about these paradigms, through discussion we agreed to enable articles to be presented through either a positivist or an interpretivist lens. Therefore, positivist concerns about ‘rigour’ and ‘bias’ that were expected in some preferred journals could be addressed by producing statistics in NVivo from double coding, even though such statistics are considered less relevant in a ‘big Q’ qualitative paradigm (Galdas, 2017).
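The ‘small q’ tradition typically reports an agreement statistic from double coding. The article does not specify which statistic was produced; Cohen’s kappa is one commonly reported measure, and the Python sketch below shows how it corrects raw percentage agreement for the agreement expected by chance. For simplicity it assumes that a transcript has been split into segments and that each coder records whether a given code was applied (1) or not (0) to each segment. This segment-level framing, the function name, and the example data are hypothetical, and the calculation is not a reproduction of NVivo’s own output.

```python
def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders' binary decisions (1 = code applied,
    0 = not applied) on the same ordered list of text segments."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same, non-empty set of segments")
    n = len(coder_a)
    # Observed agreement: share of segments on which the two coders agree.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement, from each coder's overall rate of applying the code.
    p_a = sum(coder_a) / n
    p_b = sum(coder_b) / n
    p_e = p_a * p_b + (1 - p_a) * (1 - p_b)
    if p_e == 1:
        # Both coders made the same single choice on every segment; kappa is
        # undefined here, so this sketch returns 1.0 by convention.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical double coding of ten segments for one code.
coder_a = [1, 1, 0, 1, 0, 0, 1, 0, 1, 1]
coder_b = [1, 0, 0, 1, 0, 1, 1, 0, 1, 1]
print(f"{cohens_kappa(coder_a, coder_b):.3f}")  # 0.583
```

Here the coders agree on 80% of segments, but because each applies the code to 60% of segments, 52% agreement would be expected by chance alone, so kappa is noticeably lower than raw agreement.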

Discussing publications also helped us to understand wider variations in expectations amongst the team. Researchers from different disciplines differed in their expectations about what the focus of outputs would be and what their audience would be interested in. For example, some researchers were more interested in publications that sought to influence policy and practice, whereas others were more focused on developing theory. Understanding variation in publication expectations helped us to recognise where autonomy was needed in how individuals presented data as they prepared their own publications and conducted additional analyses, following our collaborative analysis.

Recommendation 7: Provide Clarity and Guidance on Procedures in Working Protocols

One area where we prioritised centralised decision-making over autonomy, to ensure shared processes, was ethics, data management, and audit trails. It was important to comply with project protocols and data protection rules. These collaboratively agreed rules provided the structure within which to negotiate the use of data, and through discussion, individuals sought to understand the autonomy they had within this structure.

Establishing clear processes is important for quality control in qualitative research (Luciani et al., 2021), and clear audit trails are often cited as good practice for ensuring rigour (e.g. Guba, 1981; Nowell et al., 2017). Good quality guidance can help to avoid variations in procedures across a large team that can undermine study quality, particularly by creating challenges for team analysis and the synthesis of findings. For example, if there is significant variation in the style or content of interview questions, this could reduce confidence that the data can be analysed as one dataset. There may also be ethical consequences relating to secure data management and participant anonymity, particularly where multiple institutions are involved. Establishing team rules on these ethical issues can help to prevent inadvertent problems during the study.

While communication is important in any team project, communication difficulties can be a significant barrier to good collaboration for teams of researchers from different disciplines (Crowley & O’Rourke, 2020; Thompson, 2009; Wang et al., 2019). With greater numbers of researchers across multiple organisations, there is more opportunity for problems to emerge. Risks that we identified as heightened included non-attendance at meetings, misinterpretation of information, and institutional variation in procedures. Therefore, additional guidance and attention were needed to ensure consistency in, and understanding of, shared methods and processes that might threaten research quality if not followed. To mitigate these risks, during the early stages of the study the team produced a comprehensive procedural handbook containing a detailed set of rules that all sub-teams needed to follow. These rules were developed to ensure compliance with ethical considerations and data management processes, and consistent formatting of transcripts. Subsequent guidance included detailed information on methodological processes and key milestones.

Data management and storage were a substantial challenge owing to varying organisational ethical and procedural rules and requirements relating to data sharing and confidentiality, and to the shared use of project software, including for remote working. Having access to procedural documents proved critical to ensuring that processes were followed consistently. One member of the team took responsibility for updating the guidance and communicating any changes to the whole team. Senior researchers on the team were asked to approve key decisions, to secure their support, and to lead communication on key procedures so that these were adopted and understood.

Working in this collaborative way with little autonomy on procedural rules was sometimes challenging. The NVivo files were merged 16 times during the project and, particularly at the start of the analysis, there were instances of confusion about when merging was taking place, and of team members having to repeat coding because they had used an out-of-date NVivo file. While the guidance and protocols described previously were designed to reduce the risk of such incidents, our experience highlights that communicating detailed procedural steps is inherently challenging in large research teams needing to collaborate. It is an example of where limiting autonomy and emphasising centrally managed processes can lower the risk of compromising rigour.

Recommendation 8: Agree Terminology and Create Clear Definitions to Understand a Shared Language

Clear communication was also important with terminology. As linguistic differences can cause misunderstandings and reduce the respect and perceived value given to other disciplines in a team (Pineo et al., 2021), we prioritised the need to collaborate to agree and consistently use key terms. Different types of qualitative research can use terms interchangeably and disciplines often use jargon and understand terminology differently. Differences were identified through the extensive team discussions. Individuals were encouraged to question terms and to promote their own views, supporting autonomy, which the team built upon to agree shared language.

Autonomy of language development (i.e. allowing researchers to adhere to discipline-specific understandings of terms) may help to share concepts across disciplines, if differences in meaning are explicit and understood. This can help develop knowledge and understanding across the team and improve collaboration and interdisciplinarity. Even where it is not possible to arrive at a common definition, discussing it can help the team understand its differences. Encouraging the use of plain language without stifling the ability to express oneself, or oversimplifying concepts that may be less familiar to other disciplines, is an important balance. We identified instances where researchers were unaware that their language was discipline-specific, so even promoting the use of ‘plain English’ may be challenging, and we suggest that it may not be possible to enforce the use of common terms where no consensus is found. Therefore, additional time and support may be needed to understand tacit discipline-specific knowledge.

It was also apparent during early discussion of categories and deductive codes in the coding framework that these were interpreted differently across the team. What might have a clear and common interpretation to researchers within one discipline might be used very differently, or be meaningless, in another. This increased the challenge of developing a good understanding of others’ data and of using a shared coding framework. To promote collaboration, all deductive and inductive codes were accompanied by a definition. Researchers proposing new codes were asked to explain them in our weekly meetings to avoid misunderstanding and support cross-disciplinary respect (Pineo et al., 2021). If necessary, the definition was refined by the team to reflect their understanding.

While we sought to reduce autonomy in use of key terms, we learnt that differences in interpretation and use of terms remained. This may reflect how ingrained our use of language is and how challenging it can be to overcome, or that additional steps to create definitions earlier in the study would have been useful. However, we were aware that selecting one term as the ‘right’ one could imply that the definition within one discipline is somehow superior to others. We therefore agreed common definitions but accepted that language differences were likely to remain and gave time to explain and discuss differences, which supports improved team communication (Thompson, 2009).

Conclusions

In this article we provide critical reflections from our experiences of conducting a large-scale and disciplinary-diverse team interview-based study that sought to understand a complex system. Our reflections during and after the project led us to identify a central issue for researchers to consider in such contexts: balancing researcher autonomy with team collaboration to produce high-quality research. More specifically, we argue that this balance between autonomy and collaboration is not static; it needs to change according to the task. Some team tasks require high levels of autonomy, while at other times collaboration needs to be prioritised. Based on this central observation, we have developed eight recommendations for how future research teams might effectively manage this critical balance. These can help researchers from different epistemological and disciplinary perspectives to foster goodwill and maintain effective partnership working.

Collaboration is important for setting research questions, agreeing standard procedures, data management, developing deductive codes, and team analysis across the whole dataset. However, autonomy may be prioritised for sampling, interview question design for different stakeholders, developing inductive codes, conducting discipline-specific analysis, and meeting researchers’ publication needs. Therefore, there is a balance to strike between prioritising collaborative processes based on centralised decision-making and enabling autonomy. Additionally, it is important to support individuals to have a voice in centralised decision-making so that collaboration is based on, and reflects the needs of, the whole team.

Our recommendations reinforce the need to build in time for reflexive practice to understand differences and give voice to different perspectives to enable this balance. Providing sufficient time for discussion and reflection can add to the length of a project but is critical to support teams to be open minded about alternative approaches, to help build trust and confidence in the preferences of others, and to trial and reflect on collaborative processes. This should explicitly be built into projects from the start to ensure that sufficient time and strategies are included to encourage a team culture that is open and respectful to alternative ideas from different disciplines, and to incorporate these.

Our recommendations add to the literature exploring the challenges facing large teams working on qualitative investigations (Giesen & Roeser, 2020; Milford et al., 2017; Jackson & Bazeley, 2019). Our findings make an original contribution to the field by offering critical insights and recommendations for successfully balancing autonomy and collaboration across the different stages of the research process. We argue that large interview teams need to be mindful of the need to prioritise autonomy and collaboration differently, depending on the task they face. Approaching this delicate balance with strategic purpose will lead to the application of robust large-team qualitative methods and successful research outcomes.

Footnotes

Acknowledgments

The authors would like to thank and acknowledge colleagues in the TRUUD consortium for their support developing the study reflected on in this article: Daniel Black, John Coggon, Charles Larkin, Kathy Pain, Nick Pearce, and Cecilia Wong. They are also grateful to Shona Kelly and Judi Kidger for providing useful critique of the draft manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the UK Prevention Research Partnership (award reference: MR/S037586/1), which is funded by the British Heart Foundation, Cancer Research UK, Chief Scientist Office of the Scottish Government Health and Social Care Directorates, Engineering and Physical Sciences Research Council, Economic and Social Research Council, Health and Social Care Research and Development Division (Welsh Government), Medical Research Council, National Institute for Health Research, Natural Environment Research Council, Public Health Agency (Northern Ireland), The Health Foundation and Wellcome.