Abstract

This article presents a mixed methodology approach that was developed to analyze news coverage of four Canadian disasters. Here, we present the technical aspects of a mixed methods group research project that enabled analysis of 3,014 journalistic articles. We explain how alternating between inductive and deductive epistemological stances led to the quantification of characteristics of journalistic treatment not originating from a prior theoretical framework, but rather rooted in the data under study. Although our approach does not escape the difficulties and challenges posed by mixed methods and group research, in this article we present a way out of the usual methodological segmentation in journalistic discourse analysis by simultaneously exploiting the strengths of two approaches often perceived to be opposing.

Journalistic discourse is central to the work of researchers interested in various issues such as crisis communication (Bernier, 2018; Carignan & David, 2018; Yameogo, 2018), cultural communication (Champagne-Poirier, 2018; Schiele, 2018), political communication (Oger, 2009; Strömbäck & Dimitrova, 2006) or health communication (Niederdeppe et al., 2007; Radi, 2006). The news media is enmeshed in relationships of mutual influence with other societal actors and contributes to the co-construction of social perceptions and behaviors (Carignan & Huard, 2016; Paltridge, 2012). As such, analysis of journalistic discourse leads to a better understanding of the social context surrounding certain phenomena.

However, when it comes to planning a study of the journalistic coverage of a given phenomenon, researchers often face time constraints, particularly in keeping pace with current events. This pace accelerates in step with technological developments and the media’s commercial and competitive goals (Brin et al., 2004). The difficulty is compounded by the fact that time is chief among the criteria by which researchers themselves are evaluated. Indeed, the current context, by applying profitability and performance criteria, leads to a market evaluation of scholarly work (Laperche, 2003). This raises a methodological dilemma: How can journalistic discourse be examined within a reasonable lapse of time and in keeping with the current imperatives of the media and academic worlds?

Faced with this question, two contrasting approaches are generally adopted. According to discourse analysts, some will seek to identify topical units 1 while others will be open to non-topical units 2 (Maingueneau, 2011). From a methodological perspective, since this is the perspective adopted in this article, these two approaches are instead categorized as deductive or inductive analysis of journalistic discourse.

Deductive analysis is the most widely used by social science researchers and is the most commonly taught in university communications programs (de Bonville, 2006). This approach is based on preparatory principles enabling the researcher to “objectively decode the mediated narrative using a consistent standard of measurement” (Chartier, 2003, p. 70, freely translated). Prior to the empirical stages of a research project, this deductive analysis calls for an analytical framework that determines the “subjects to be traced, their definition and their categorization” (Chartier, 2003, p. 70, freely translated). Because it involves the treatment of specific elements, this hypothetico-deductive stance generally allows for analyzing a greater number of journalistic articles. As a result, it usually leads to quantitative analysis (Gunter, 2011).

In inductive analysis, conversely, the researcher’s lens is not forged in reference to academic texts, but rather through diligent exposure to the field (Luckerhoff & Guillemette, 2012). While this method is more rarely used by researchers interested in journalistic discourse, some note that there can be “no discourse analysis where the researcher excludes units that escape pre-established boundaries” (Maingueneau, 2011, p. 98, freely translated). This said, this refusal to approach discourse according to a theoretical framework makes journalistic discourse analysis significantly more complex. Openness to the unexpected—a posture inherent in induction—requires considerable attention and sensitivity, and consequently, inductive approaches generally allow for processing fewer journalistic articles.

In short, deductive analysis makes it possible to address issues that have generated substantial journalistic coverage by taking a theoretical and necessarily partial look at them, while inductive analysis allows for teasing out a richer understanding by examining the uniqueness of a phenomenon. Yet unless researchers have access to significant resources and time frames, they will be circumscribed to a more limited scope. What, then, is one to do when wishing to focus on a phenomenon that has generated a large volume of articles, if the goal is to develop a rich understanding of the meaning that journalists have given to the phenomenon at hand?

This article describes a methodological process used to garner a better understanding of the journalistic coverage of major Canadian disasters, 3 namely Hurricane Sandy, 4 the Lac-Mégantic rail accident, 5 the seniors’ residence blaze in L’Isle-Verte 6 and the forest fire that devastated Fort McMurray. 7 Our rationale for focusing on these four disasters is their considerable social, political and economic importance; they all attracted extensive media coverage, with 3,014 journalistic articles 8 across all four disasters having been identified. 9 Journalistic coverage of disasters is likely to have an impact on populations, especially those directly affected by them (Cauhopé, 2008). What is more, disaster victims will generally turn to the news media to stay current on disasters that affect them (David & Carignan, 2017): hence the need to properly understand the content of these articles as presented to readers.

In concrete terms, this text addresses a research approach in which we conducted a joint study by successively carrying out 1) a qualitative and inductive analysis and 2) a quantitative and deductive analysis of a corpus of journalistic articles. Starting with a qualitative and inductive approach, we were able to uncover the complexity and richness of the coverage that interested us. Our subsequent move toward a deductive stance then allowed us to go further. Indeed, an analyst who adopts a deductive posture toward textual data, such as the data that make up a journalistic article, generally benefits from the fact that “such studies can uncover features of language that are inaccessible to intuition or that cannot be discovered through the analysis of […] a few texts. This concerns patterning, typicality of usage, and quantification” (Bednarek, 2009, p. 20).

Therefore, our research design for this project made it possible to process a large volume of articles while revealing the human, technological and scientific complexity of journalistic coverage. As mentioned, this process consisted of two stages, each conducted according to a different epistemological stance. First, 220 articles per disaster (880 in total) were selected at random 10 for inductive analysis. This analysis led to theorizing on the many elements that make up these journalistic articles. Second, the inductive categories were used to devise an analytical grid. This grid was then systematically applied to the 3,014 articles comprising the corpus.
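The first stage, drawing 220 articles at random per disaster, can be sketched as a simple stratified draw. This is a minimal illustration rather than the procedure actually used: the function name, the article identifiers, the fixed seed and the per-disaster corpus sizes are all assumptions made for the example; only the sample size of 220 per disaster and the corpus total of 3,014 come from the text.

```python
import random


def draw_sample(corpus, per_disaster=220, seed=0):
    """Draw the same number of articles at random from each disaster's sub-corpus."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    sample = {}
    for disaster, article_ids in corpus.items():
        # random.sample draws without replacement, so no article is picked twice
        sample[disaster] = rng.sample(article_ids, per_disaster)
    return sample


# Hypothetical corpus: the per-disaster sizes are invented for illustration;
# only their total (3,014) is taken from the article.
corpus = {
    "Hurricane Sandy": [f"sandy-{i}" for i in range(700)],
    "Lac-Mégantic": [f"megantic-{i}" for i in range(1000)],
    "L'Isle-Verte": [f"isleverte-{i}" for i in range(514)],
    "Fort McMurray": [f"mcmurray-{i}" for i in range(800)],
}

sample = draw_sample(corpus)
total_sampled = sum(len(ids) for ids in sample.values())
print(total_sampled)  # 880
```

Sampling the same number of articles from each disaster, rather than sampling from the pooled corpus, keeps a heavily covered disaster from crowding out the others in the inductive phase.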

The rest of the text consists of the explanation and demonstration of the mixed method that we have just briefly described. While such an objective is unusual in scholarly literature, it is nonetheless relevant. Dörnyei (2007) noted that, in fact, explanations about mixed approaches to addressing textual data in the social sciences are rare. The vast majority of published studies “have not actually foregrounded the mixed methods approach and hardly any […] have treated mixed methodology in a principled way” (p. 44). To this end, by devoting this article to methodological considerations, we argue that mixed methods can be a comprehensive, complex and rich way to address issues related to journalistic coverage.

Inductive Analysis for Approaching Complex Research Subjects

Two methodological stances were successively adopted during this study, each with its own set of ontological and epistemological considerations. In terms of the inductive posture, we will discuss three elements that especially shaped our approach: 1—Openness to diversity and novelty, 2—Theoretical restraint and 3—Coding toward avenues of theorization. These elements support the purpose of an inductive analysis, which is to generate “theories rooted in and growing out of the field data” (Corbin, 2012, p. X, freely translated).

The Requirement of Seeking out Diversity and Complexity

Inductive analysis is based on the premise that there is no one truth. Rather, there are multiple truths depending on people’s definitions of a given phenomenon, and these definitions vary and evolve according to the time, place, perspective, observer and situation (Corbin, 2012). In our study, this consideration mainly translated into seeking out different perspectives on the journalistic coverage of the four disasters, in terms of both data sources and analysts.

Hence, in the inductive analysis of the journalistic coverage, each article constitutes a unique perspective on reality. For example, a journalist writing about the orphans created as a result of the Lac-Mégantic train explosion is providing a very specific perspective on the events. The same journalist could have taken other angles, such as first aid work, legal liability for the explosion’s repercussions or property damage, but decided otherwise. In our study, to obtain the most complete understanding possible of the coverage of the four disasters, an effort was made to bring together a maximum of such perspectives: the articles collected are from 125 different media.

While the journalists’ multiple perspectives contribute to the richness of the data, having the perspectives of several different researchers further helps uncover the full complexity of the meaning that can be given to news coverage. Indeed, “a major argument of this methodology is that multiple perspectives must be systematically sought during the research inquiry” (Strauss & Corbin, 1994, p. 280). In an inductive analysis, “analysts are interpreters and conveyors of meaning” (Corbin & Strauss, 2015, p. 67).

During the inductive part of this study, the 880 randomly selected articles were analyzed by a 26-member research group (made up of four principal investigators and 22 research assistants 11 ). There are several advantages to a group process of this nature: “benefits associated with conducting qualitative teamwork include addressing large, complex problems, incorporating a range of specialized knowledge […], sharing access to resources and producing work that is more creative and original” (Hall et al., 2005, p. 395).

Advantages and Challenges of Teamwork Research in Qualitative Settings

The creation of a research team made up of principal investigators familiar with the issue, as well as research assistants, kept the inductive work on track. While the principal investigators were responsible for ensuring the coherence of the approach, the research assistants, who had little or no theoretical background with which to address the issue, contributed a fresh, innovative and very close look at the data.

This approach made it possible to combine two perspectives that Lejeune (2014) calls “praising theoretical ignorance” (p. 23, freely translated) and “critiquing theoretical ignorance” (p. 24, freely translated). On the one hand, inductive researchers claim ignorance of the scholarly literature as a guarantee of the quality of their work. On the other hand, some believe that theoretical ignorance constitutes an abdication of the researcher’s craft and that research that sacrifices these requirements cannot claim to be scholarly (Lejeune, 2014).

Although these perspectives are seen as opposing, the approach adopted here capitalized on their advantages, without suffering from their disadvantages. Indeed, from the standpoint that “theories abuse data” (Lejeune, 2014, p. 23, freely translated), the participation of assistants—whose only background lay in methodological training in the inductive approaches—proved to be judicious. The assistants were able to uphold interpretations rooted in collected data. Unlike experienced researchers wishing to carry out an inductive study, they did not have to strive to adopt a tabula rasa in the face of existing theoretical knowledge on the phenomenon under study: their initial stance was (to the extent possible) free of theoretical influence.

On the flip side, an absence of theoretical knowledge can make the work overwhelming at times for the analyst, which can be discouraging (Lejeune, 2014). Having the process supervised by principal investigators helped avoid this adverse effect. Thanks to rigorous monitoring of the assistants’ work and progress, it was possible to redirect them when their interpretations took them too far away from the general research issue, to push them to more closely investigate certain elements that had come to light and were relevant to the analysis of the journalistic discourse, and to reassure them of the relevance of their analysis.

This collaborative—and pedagogical—approach promoting both theoretical ignorance and theoretical knowledge did, however, pose two challenges. First, the principal investigators had to be very careful in their supervision in order to guide the assistants, but naturally, without imposing theoretical knowledge upon them. Second, the assistants had to develop the ability to work autonomously in a flexible environment.

Faced with the reflections and empirical findings shared by the assistants, the principal researchers had to temporarily suspend the use of existing theoretical frameworks (Guillemette, 2006). Failure to do so would have created a hasty rise in abstraction and led to a deductive posture. In an inductive analysis, it is important not to “force” meaning on the data, but rather to help “tease” the meaning out of it (Glaser, 1992). Next, although the assistants’ theoretical ignorance enabled them to maintain close proximity with the data, the fact that they had no “theoretical sensitivity” 12 (Strauss & Corbin, 1994, p. 280) to the issue studied was a source of insecurity for them. Since they were not in a position to naturally compare their findings with what is known about the journalistic coverage of disasters, the assistants, at times, doubted the relevance of their analyses and the value of their contributions. As Corbin and Strauss (2015) note, “background, knowledge and experience not only enable researchers to be more sensitive to concepts in data but also enable them to see connections between concepts” (p. 79). For the assistants, as is generally the case in the first inductive steps (Lejeune, 2014), the work they did seemed fruitless and therefore excessive.

Since an inductive approach lacks precise goals or a pre-established analytical structure, and does not encourage researchers to answer specific questions, it can be difficult to find meaning during the analytic work. Hence, it was necessary for the principal investigators to emphasize the value of the assistants’ work in order to make their work meaningful yet without assigning it a meaning that would have ended up restricting analytical perspectives. Multiple meetings were held to support the research assistants in their roles, to explain to them that the discomfort they felt (about their unstructured findings) was normal and, if necessary, to give them general examples to illustrate the relevance of their contributions to the knowledge of the issue under study.

Helpful Practices for Open, Axial and Selective Coding

Reaching a grounded theory, in inductive approaches, generally requires a combination of three levels of coding: open, axial and selective (Corbin & Strauss, 2015; Guillemette & Lapointe, 2012; Labelle et al., 2012). The 880 articles about the four disasters were analyzed according to this three-tiered process, which in this group project involved rigorous planning and the adoption of facilitation techniques. This section presents the sequence of the three levels of coding before going on to discuss a practice considered to be especially helpful in axial coding, namely identifying relationships between the codes by striving to categorize them into the six “W’s” 13 (Charmaz, 2006).

Open coding

Open coding is a level of analysis that requires researchers to choose to get immersed in the material and discover the characteristics of the phenomena under analysis (Lejeune, 2014). Generally, this level takes the shape of an operation during which the researcher develops “a large number of codes from their empirical corpus, line by line, paragraph by paragraph, striving to stay close to the primary data” (Labelle et al., 2012, p. 77, freely translated).

In order to carry out this first process of developing concepts to stand for data (Corbin & Strauss, 2015), each of the 22 assistants started off analyzing their corpus of 40 articles with a floating reading of a single article. During this reading, the assistants had to limit themselves to identifying the “analytic pieces” (Corbin & Strauss, 2015, p. 221) likely to yield a better understanding of the issue. An analytic piece could be in the form of a word, a line or a paragraph: in short, any written element that appeared relevant.

Once this identification was complete, the assistant took a closer look at the identified units to determine what they might shed light on. This process of interpreting the analytic pieces constitutes the first rise in abstraction. The goal here is to conceptualize the units in codes. Hence, all the relevant analytic pieces of a first article were linked to codes. The assistant then turned to the second article, and so on, until all forty articles were openly coded. This is, of course, a very time- and attention-intensive activity. However, the density of open coding is expected to decrease over the course of observations. In inductive approaches, the focus is on uncovering the variety, rather than the recurrence, of a phenomenon’s characteristics. Once a characteristic has been understood, recoding all its subsequent occurrences becomes futile. While, for example, the open coding of a first article led some assistants to produce around thirty codes, the coding of the fortieth article sometimes resulted in identifying only one new code.
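The decreasing density of open coding described above can be made visible by counting, for each article in reading order, how many of its codes had not been met before. The sketch below is only an illustration: the function name and the example codes are hypothetical and are not drawn from the study's codebook.

```python
def new_codes_per_article(coded_articles):
    """Return, for each article in reading order, how many codes had not been seen before."""
    seen = set()
    counts = []
    for codes in coded_articles:
        fresh = set(codes) - seen  # codes appearing for the first time
        counts.append(len(fresh))
        seen |= fresh
    return counts


# Hypothetical open codes for three articles read one after the other.
coded_articles = [
    ["victims' testimony", "rail regulation", "first responders"],
    ["rail regulation", "political response"],
    ["victims' testimony", "first responders"],
]

print(new_codes_per_article(coded_articles))  # [3, 1, 0]
```

A run of zeros or near-zeros at the end of such a list is one informal sign that further open coding is adding little variety.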

Axial coding

Axial coding is a level of analysis in which the researcher examines the characteristics of open codes with a view to identifying systems by which these codes might be linked (Corbin & Strauss, 2015). Ultimately, the idea is to analyze the codes to see how they can be connected together. This process results in aggregating many open codes under a single axial code. The overarching goal is to end up with a manageable number of codes.

After identifying an open and, inevitably, messy conceptualization of their forty articles, many assistants found themselves with more than 150 codes. This point, at which the novice analysts were asked to raise their level of reflection to identify axial codes, was critical. Faced with a heap of codes from which it was impossible, in this form, to derive a general understanding—but which nevertheless required many hours of work—the assistants often wound up discouraged. The principal investigators therefore had to use a facilitation technique to pursue the rise in abstraction. The technique consisted in using the “families” of Anselm Strauss’ axial coding paradigm (Lejeune, 2014). To facilitate the operation of distancing toward theory that defines axial coding (Guillemette & Lapointe, 2012), the assistants were encouraged to search for the links uniting their codes by asking themselves the six questions set forth by Strauss (1987), namely: Who? What? When? Where? Why? and How? These questions, often called the “six W’s,” are suggested here not as theoretical orientations, but rather as tools to help along the emergence of systems linked to the context of the phenomenon under study (Charmaz, 2006).

Moreover, the Six W questions are particularly appropriate when studying a news story since, for many decades, journalism has abided by an ideal: “All well written news stories contain each of the 6 W elements” (Goehler et al., 2006, [online]). Most of the time, “journalistic writing answers very specific questions [the six Ws] that serve as rules” (De Coster, 2007, p. 17, freely translated). Hence, since the W’s are integral to the context of the production of meaning that underlies the articles analyzed in this project, it was appropriate to ask how the elements identified by the assistants could answer these six questions.

To facilitate the work of the assistants (who often faced a daunting number of open codes), the principal researchers recommended that they systematically re-approach each of the codes by asking themselves which W the code responded to. Next, when the open codes were divided into six “families,” it was much easier for the assistants to uncover links between the codes, according to the W aspects they answered. Indeed, the six W’s are not axial codes in themselves. A number of axial codes can be created to answer a W. The six questions are therefore guidelines only. For example, by asking themselves “how” journalists report their news, assistants formed axial codes relating to journalistic intention, the degree of vulnerability assigned to disaster-stricken communities, and the ways in which the journalists characterized the disasters. The elements likely to emerge from the 6 W questioning therefore depend on both the phenomena studied and the analysts.
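The sorting step described above, in which each open code is re-approached by asking which W it answers, amounts to grouping codes into six families before looking for links within each family. The following sketch assumes the analyst has already tagged each code with one question; the function name, the tags and the example codes are hypothetical.

```python
from collections import defaultdict

SIX_W = ("who", "what", "when", "where", "why", "how")


def group_codes_by_w(tagged_codes):
    """Sort (open_code, w_question) pairs into one list of codes per W question."""
    families = defaultdict(list)
    for code, w_question in tagged_codes:
        if w_question not in SIX_W:
            raise ValueError(f"unknown W question: {w_question!r}")
        families[w_question].append(code)
    return dict(families)


# Hypothetical open codes, each tagged by the analyst with the W it answers.
tagged_codes = [
    ("evacuated residents", "who"),
    ("nicknames the fire 'The Beast'", "how"),
    ("appeals to readers' emotions", "how"),
    ("eight months after the fire", "when"),
]

families = group_codes_by_w(tagged_codes)
print(families["how"])
```

The families are only containers: the axial codes themselves still have to be formulated by the analyst from the codes gathered under each question.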

For the assistants, axial coding was completed when, after individually “recoding” the codes from the open coding, they met with members of their analysis team (there were two groups of five assistants and two groups of six) to collate their results. During these meetings, the principal investigators were present to immerse themselves in the meaning given to the coding process. These meetings resulted in the formulation of axial codes representative of each team’s understanding. In other words, in each of the four teams, members had to present their axial codes and compare them with those of their teammates. If similar codes were presented, they were aggregated. If new characteristics of a code were suggested, they were added to further nuance the code’s meaning. If a brand new code was introduced, it was added. In short, the team’s final axial coding took into account all of what was found by the assistants on that team.
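The three collation rules applied in the team meetings (aggregate similar codes, add new characteristics to an existing code, add brand new codes) can be sketched as a merge of per-member codebooks. This is a simplification under a strong assumption: here two codes count as "similar" only when they carry the same label, whereas in the meetings similarity was a matter of discussion. The function name and the example characteristics are hypothetical.

```python
def merge_axial_codes(member_codebooks):
    """Merge each member's {axial code: set of characteristics} into one team codebook."""
    team = {}
    for codebook in member_codebooks:
        for code, characteristics in codebook.items():
            # A code already in the team codebook gains any new characteristics;
            # a code not yet present is added as a new entry.
            team.setdefault(code, set()).update(characteristics)
    return team


# Hypothetical codebooks from two members of the same team.
member_a = {
    "journalistic intention": {"informing"},
    "community vulnerability": {"devastated"},
}
member_b = {
    "journalistic intention": {"mobilizing"},
    "characterization of the disaster": {"metaphor"},
}

team = merge_axial_codes([member_a, member_b])
print(sorted(team))
```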

Selective coding

After the teams of research assistants arrived at a stabilized version of their axial coding, they presented the results of their interpretations to the principal investigators. Thus, the principal investigators were presented with four inductive analyses of disaster reporting. They then worked toward an understanding that integrated the four perspectives the teams had developed. To do so, they performed a new rise in abstraction and carried out selective coding.

While axial coding makes it possible to categorize the characteristics of a phenomenon, selective coding aims to pinpoint categories that can genuinely increase the analyst’s understanding. When moving to the level of selective coding, “the researcher ponders the role of these categories for the phenomenon under study” (Lejeune, 2014, p. 115, freely translated). Indeed, there is no guarantee that all of the categories discovered will be useful in leading to “the development of a theoretical interpretation that provides a better grasp and understanding of human phenomena” (Corbin, 2012, p. ix, freely translated). Especially in the context of a research project where the analysts in charge of open and axial coding are unfamiliar with the phenomenon under study, it is likely they will identify a few known elements which may not lead to identifying any new perspectives.

To maintain categories that would serve as components of a theoretical model explaining the phenomenon, and to eliminate superfluous categories, the principal researchers proceeded with the “general method of constant comparison with empirical data” (Guillemette, 2006, p. 35, freely translated). In selective coding, the analyst “refers to the academic literature for ideas to be juxtaposed with the emerging theory and incorporated into the final theoretical development” (id.). Thus, the principal researchers reviewed all the categories uncovered by the research assistants by tracing back their empirical origins. They then pondered how these new results might fit into what is already known about the phenomenon. By simultaneously comparing the categories with the data from which they were derived and with existing scholarly literature, the researchers made sure to maintain empirically sound and theoretically innovative elements.

Lejeune (2014) advises that selective coding should go hand-in-hand with a modelling effort. According to Lejeune, “modelling helps the analyst integrate the connections arising from the axial coding” (p. 119, freely translated). Since research is generally meant to be disseminated and communicated to the academic community, modelling helps refine the results so that only those that are truly consistent with the analysts’ understanding are retained. The modelling process yields a model rooted in the issue studied. In terms of the journalistic coverage of the four disasters, the research team’s inductive analysis of 880 articles led to an understanding of the phenomenon as being constructed around seven processes. These processes are illustrated in the following model (Figure 1):

Figure 1. Model of the coverage of the four disasters.

Although the focus of this article is methodological—as opposed to bearing on the study results—in order to illustrate the potential of such an approach, following is an overview of the meaning of the seven identified processes:

It was found that the journalistic articles form a narrative framework that features multiple players’ intertwined experiences. Journalistic publications thus give versions of disaster realities, with some versions highlighting the role of disaster victims, for example, and others emphasizing politicians’ experience of the phenomena.

The articles gain from being interpreted in their relation to time, in that the article’s time of publication positions it in relation to the disasters at hand. Consequently, the realities conveyed by the articles transform over time. For example, the articles published when the fire in Fort McMurray was still active showed few commonalities with those written 8 months later. While the former were, in some cases, about the evolution of the blaze and the steps taken to put it out, the latter were generally retrospective portraits focused on the reconstruction surrounding the disaster.

In their articles, the journalists will deliver their own diagnoses of the disasters’ impact on the affected communities. In doing so, these news professionals present realities characterized by highly variable potential for community resilience. For example, some journalists presented the Lac-Mégantic community as “devastated” by the railway accident, while others underscored its ability to “rise from its ashes.”

The articles feature processes known as “cognitive shortcuts” that journalists use to characterize the disasters. While some authors limit themselves to describing the events, others use metaphors, comparisons or personification to spark readers’ imagination. By nicknaming Hurricane Sandy “Frankenstorm” or referring to the Fort McMurray fire as “The Beast,” journalists make use of social heuristics to deliver their versions of the reality of the events.

Journalistic articles are position statements in relation to the professional context in which they are written, and evidence a spectrum of practices ranging from a neutral and factual stance to an activist and emotional one. Some argue their vision of reality by drawing on logos, and others, on pathos. The following excerpt, dealing with the fire in L’Isle-Verte, aptly illustrates this shift toward emotion: “The remains of the Résidence du Havre continue to be a scar on the village. This hideous disfigurement in the middle of main street is a reminder to the inhabitants that they lost a loved one here. A father, a sister, an uncle, a friend. Burned to death or asphyxiated. Killed while calling for help. Dead after jumping off a burning balcony” (La Presse, 2014, freely translated).

The analyses showed how disasters have countless facets and writing an article is a process in which journalists select and congeal only some of them. The themes featured in the articles are thus an outgrowth of these selective processes. For example, articles about Hurricane Sandy might portray the disaster as a manifestation of climate change, a financial issue for insurance companies, or a human tragedy, among other things.

Finally, journalists become agents of change and actively contribute to a return to “normal.” In doing so, they insert themselves into the phenomenon on which they are reporting. For example, to prevent a repeat of the Lac-Mégantic events, journalists call on governments to revise railway regulations to prevent trains carrying explosive products from passing through densely populated areas. In other cases, journalists will urge their readers to give to the Fort McMurray disaster victims to help them recover from the disaster as quickly as possible.

Analytical Grid Development and Deductive Coding Procedure

Inductive analysis of the 880 articles took approximately 1,000 hours of work. Hence, using this method for the 2,134 other articles (the total corpus being composed of 3,014 articles) seemed excessive and, moreover, futile. Continuing the analysis beyond the identified threshold of “theoretical saturation,” i.e., the point where a sufficiently complete understanding of the issue has been attained to judge that analyzing further data would not be relevant, cannot logically bring any significant improvement to the research process if it is limited to the inductive paradigm (Champagne-Poirier, 2016).
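To give a rough sense of scale for why extending the inductive analysis seemed excessive, the figures above can be extrapolated. The linear projection is purely our assumption for illustration: the article reports no such estimate, and because open coding becomes less dense as analysis proceeds, a constant cost per article probably overstates the real effort.

```python
analysed_articles = 880   # articles coded inductively (from the text)
hours_spent = 1_000       # approximate hours reported for those articles
total_articles = 3_014    # size of the whole corpus (from the text)

remaining_articles = total_articles - analysed_articles

# Assumption: each remaining article would cost as much as the first 880 did on average.
projected_hours = remaining_articles * hours_spent / analysed_articles

print(remaining_articles)      # 2134
print(round(projected_hours))  # 2425
```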

In concrete terms, by reaching theoretical saturation, we were able to identify the components of the journalistic coverage of these disasters. However, while these results allow a nuanced consideration of the complex components of the phenomenon, they say little about the positioning of these components in the system in which the journalistic articles were produced (the Canadian press system). Indeed, these qualitative results are accompanied by questions which are quantitative by nature, such as: What are the themes most documented by journalists? According to journalists, who are the actors most affected by disasters? How do journalists position themselves in relation to their ideals of practice when covering such disasters? To go further in our understanding, we had to change our objectives in terms of data analysis.

Hence, after having generated a grounded theory of the journalistic coverage of the four disasters, a shift was made to a new, hypothetico-deductive research paradigm. This new stance was adopted in order to be able to process the entire corpus using reasonable resources. This transition, during the second phase of the research, involved upholding new premises, namely those commonly associated with the neopositivist movement. The research objective therefore evolved toward “the use of statistical analyses that [allow] phenomena to be described, explained or predicted by means of observable and measurable variables (positivism), but with the understanding that the object of study may be influenced by the researcher and that what is observed remains a probability and not an absolute truth” (Corbière & Larrivière, 2014, p. 1, freely translated).

This being the case—and this is a major distinction between a purely deductive approach and a mixed one—the theoretical framework adopted here is directly linked to the issue under analysis. Thus, armed with an understanding rooted in the journalistic coverage of the four disasters, the principal researchers undertook to develop an analytical grid based on the results of the inductive phase. Specifically, they used the categories from the model presented earlier and, taking into account the properties identified at the different levels of coding, transformed them into quantifiable variables.

Therefore, the result of the qualitative analysis, namely the grounded theory, was used as a theoretical framework. Just as all researchers conducting hypothetical-deductive research use theoretical models (or theory) to guide their analyses, the grounded theory presented earlier was used for this purpose. In concrete terms, the seven components of the model were revisited and turned into measurable variables. Depending on the nature of the components, binary or nominal variables were created.

Presentation of the 23-Variable Deductive Analytical Grid

The categories led to the creation of an analytical grid with 23 variables in total. To provide an overview of this grid, the following portion of the text presents the grid components according to the category from which they are drawn.

Category 1: The actor-staging process

The observation of a process of staging actors led to forming fourteen “families” of actors who took part in the journalistic coverage of the four disasters in one article or another. This process resulted in the development of 14 binary variables measuring the presence or absence of one or more actors from these families in the articles (see Table 1).

Table 1. 14 Families of Actors.

Category 2: The temporal positioning process

The inductive analyses of the articles showed that the writing of the articles is underpinned by a temporal positioning process. Analysts noted, through the concentration of treatment, references to specific actors and the uniformity in the coverage of certain themes, that journalistic coverage seems to hinge on four periods. Coverage shows certain particularities according to whether it is “before the onset of the disaster” (for example, before Hurricane Sandy hit the coast), “during the course of the disaster” (for example, in the 3 months it took to fully extinguish the Fort McMurray blaze), “from 0 to 6 months after the end of the disaster” (for example, for the fire in L’Isle-Verte, from January 25 to June 25, 2014) and “more than 6 months after the end of the disaster” (for example, for the Lac-Mégantic explosion, from January 8, 2014 to the time of data collection in March 2016). The “time of publication” variable was integrated into the analytical grid and the values for this variable (whose meanings are adapted according to the unfolding of the various disasters) are made up of these four key periods.
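The classification logic behind the “time of publication” variable can be sketched in a few lines. This is only an illustration, assuming that each disaster has a known onset date and end date and that the 6-month boundary is counted in calendar months; the function names, the handling of boundary days and the dates in the usage note are hypothetical and are not taken from the study, in which the assistants coded this variable by hand and its meaning was adapted to each disaster.

```python
import calendar
from datetime import date

# The four values of the "time of publication" variable, as worded in the text.
PERIODS = (
    "before the onset of the disaster",
    "during the course of the disaster",
    "from 0 to 6 months after the end of the disaster",
    "more than 6 months after the end of the disaster",
)


def add_months(d: date, months: int) -> date:
    """Shift a date by whole calendar months, clamping the day if needed."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def time_of_publication(published: date, onset: date, end: date) -> str:
    """Return the period in which an article was published.

    Assumption: boundary days (onset, end, end + 6 months) are treated as
    inclusive of the earlier period; the source does not specify this.
    """
    if published < onset:
        return PERIODS[0]
    if published <= end:
        return PERIODS[1]
    if published <= add_months(end, 6):
        return PERIODS[2]
    return PERIODS[3]
```

For a hypothetical disaster running from March 1 to March 10, 2020, `time_of_publication(date(2020, 6, 1), date(2020, 3, 1), date(2020, 3, 10))` falls in the “from 0 to 6 months after the end of the disaster” period.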

Category 3: The community-resilience diagnosing process

The category of community resilience diagnosing process takes the form of a variable that breaks down into six values (see Table 2). However, it should be noted that not all articles contain such a diagnosis.

Table 2. Types of Diagnoses.

While this nominal variable does not yield a unit of measurement for this process, it does allow for a classification of articles so as to measure journalists’ propensity for these ways of describing the resilience of disaster-affected communities.

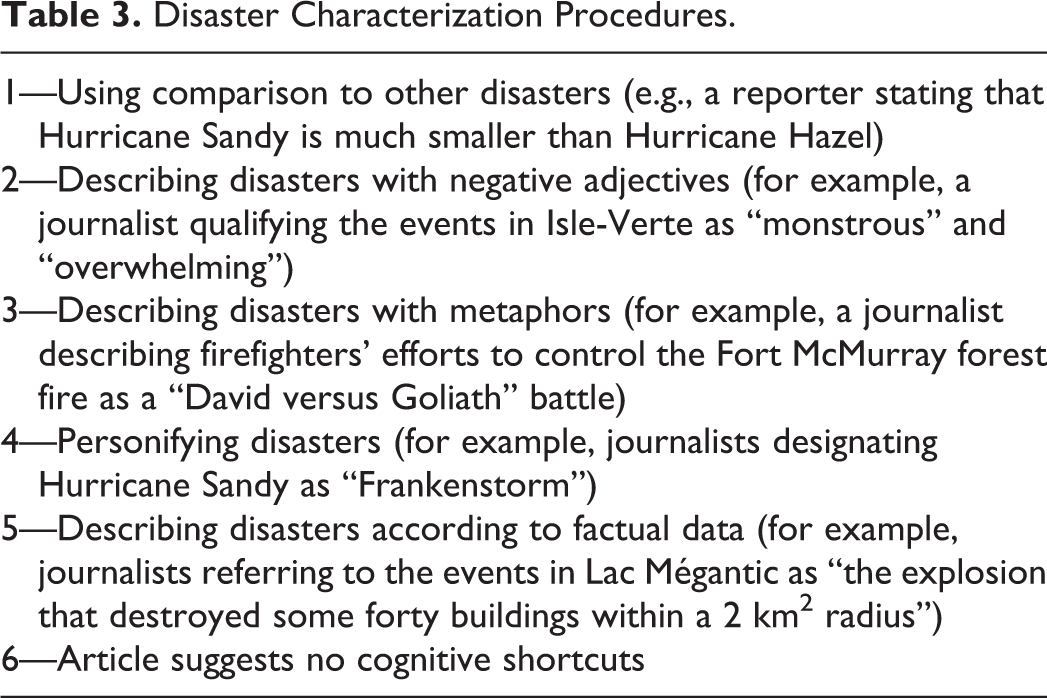

Category 4: Cognitive-shortcut suggestion process

A process of suggesting cognitive shortcuts was observed during the inductive analyses. Several procedures were used in this respect and it is not uncommon for the same article to contain more than one. To account for this process, two nominal variables were included in the grid: one to identify the main procedure and one to identify the secondary procedure used by the journalists. The values are the same for both nominal variables. When this process is present in an article (not all journalists use these procedures), the grid classifies usages into five procedures (see Table 3).

Table 3. Disaster Characterization Procedures.

Category 5: Journalistic-ideals positioning process

Journalists will express their positioning with respect to journalistic ideals by writing articles with different intentions; the same article may reflect several. Two nominal variables were created to identify the primary and secondary journalistic intentions behind the articles. Inductive analyses identified six values for these variables (see Table 4).

Table 4. Journalistic Intentions.

It should be noted, however, that the variable classifying secondary intentions may be assigned a seventh value, “not applicable,” since some articles express no secondary intention.

Category 6: Facet selection process

Through the themes they address in their articles, the journalists illustrate a process of selecting facets of reality. As such, one of the goals of the deductive analytical grid is to offer up a portrait of the facets of disasters foregrounded by journalists. Two nominal variables were included for this purpose: one identifies the main theme of an article; the other, the secondary theme. The inductive analyses identified 18 “thematic families” that make up the disaster coverage in question. The grid therefore helps classify the articles according to what they deal with (see Table 5).

Table 5. Article Themes.

It should be noted, however, that the variable aiming to classify secondary themes can be assigned a nineteenth value, “not applicable,” since some articles have no secondary theme.

Category 7: Back-to-normal process

Finally, the grid takes into account the fact that the articles contain traces of a process by which journalists help bring about a return to normalcy in various ways. As noted in the first part of this study, journalists will use their articles to express what they believe are the avenues to be taken to restore daily life in disaster-affected communities, to prevent similar disasters from happening again, or to minimize the negative effects of subsequent disasters. A nominal variable in the grid therefore relates to the arguments made by journalists. Five “families of recommendations” were identified and serve as the values for this variable (see Table 6). It is important to note, however, that some articles bear no traces of this process. Hence, this variable may also be assigned a value of “not applicable.”

Table 6. Proposed Recommendations.
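As a recap of the seven categories above, the composition of the grid can be written down as a small data structure and its size checked against the stated total of 23 variables. This is a minimal sketch: the variable names are hypothetical placeholders (the study itself transposed the grid into SPSS), and `n_values` records only the number of substantive values stated in the text, leaving aside the “not applicable” value that some variables may also take.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Variable:
    name: str      # hypothetical identifier, not the authors' label
    category: int  # category number (1 to 7) from the grounded theory
    kind: str      # "binary" or "nominal"
    n_values: int  # substantive values stated in the text


def build_grid() -> list[Variable]:
    # Category 1: one presence/absence variable per family of actors.
    grid = [Variable(f"actor_family_{i:02d}", 1, "binary", 2) for i in range(1, 15)]
    grid += [
        Variable("time_of_publication", 2, "nominal", 4),
        Variable("resilience_diagnosis", 3, "nominal", 6),
        Variable("shortcut_main", 4, "nominal", 5),
        Variable("shortcut_secondary", 4, "nominal", 5),
        Variable("intention_primary", 5, "nominal", 6),
        Variable("intention_secondary", 5, "nominal", 6),
        Variable("theme_main", 6, "nominal", 18),
        Variable("theme_secondary", 6, "nominal", 18),
        Variable("recommendation", 7, "nominal", 5),
    ]
    return grid


GRID = build_grid()
variables_per_category = Counter(v.category for v in GRID)

print(len(GRID))                             # 23
print(sorted(variables_per_category.items()))
```

Counting per category gives 14 variables for the actors, two each for cognitive shortcuts, intentions and themes, and one each for the remaining three categories, which adds up to the 23 variables of the grid.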

Coding of the Articles

Once the analytical grid was drawn up by the principal investigators, it was time to proceed with the coding of the 3,014 articles. The objective of this second part of the study was to tease out statistical observations from the corpus of articles. For this purpose, a team of five research assistants was put together. During a 6-hour training session, the assistants were trained on the content of the grid and its application. To make sure the assistants understood and applied the grid in a similar fashion (identical would have been impossible given the qualitative nature of the variables), part of the training was devoted to a supervised coding workshop. The purpose of the workshop was to get the assistants to develop, verbalize and negotiate their coding reflexes. The training was done in a group setting to maximize the chances of arriving at uniform coding; the assistants contributed individually to a collective understanding.

Once the training was completed, the assistants were each assigned a batch of articles to be coded using the grid, which had previously been transposed into SPSS software. Using this deductive method, the 3,014 articles were analyzed in less than 300 hours of coding.
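The difference in effort between the two phases can be made explicit with a short calculation based on the figures reported in the text. This is a back-of-the-envelope sketch only: it assumes that the inductive pace would have stayed constant over the whole corpus, and it treats the “less than 300 hours” of deductive coding as an upper bound.

```python
INDUCTIVE_ARTICLES = 880
INDUCTIVE_HOURS = 1_000     # "approximately 1,000 hours"
CORPUS_ARTICLES = 3_014
DEDUCTIVE_HOURS_MAX = 300   # "less than 300 hours", used as an upper bound

# Average inductive effort per article, in hours.
hours_per_article_inductive = INDUCTIVE_HOURS / INDUCTIVE_ARTICLES

# Effort the whole corpus would have required at the same inductive pace.
hours_full_corpus_inductive = hours_per_article_inductive * CORPUS_ARTICLES

# Upper bound on the deductive effort per article, in minutes.
minutes_per_article_deductive = DEDUCTIVE_HOURS_MAX / CORPUS_ARTICLES * 60

print(round(hours_per_article_inductive, 2))    # 1.14
print(round(hours_full_corpus_inductive))       # 3425
print(round(minutes_per_article_deductive, 1))  # 6.0
```

Under these assumptions, coding the full corpus inductively would have taken roughly 3,400 hours, against at most about 6 minutes per article with the deductive grid.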

Discussion. A Mixed Approach for a Thick and Operational Understanding of Journalistic Coverage in Times of Crisis

As Corbière and Larrivière (2014) have noted, the splits between the empirical-inductive and the hypothetical-deductive “tend to blur or even disappear, given that qualitative methods are commonly used in conjunction with quantitative methods and vice versa” (p. 2, freely translated). That said, there is a certain logic to the prerequisite use of inductive and qualitative methods since they “seem to lie at the very core of the development of a concept or theory” (Corbière & Larrivière, 2014, p. 3, freely translated). The use of mixed methods presented in this article highlights the complementarity between the inductive and deductive paradigms: the waning relevance of continued inductive analyses (owing to theoretical saturation) marked the beginning of the relevance of deductive analyses (due to the emergence of a theory that could serve as a framework). The results of our mixed approach borrow from both paradigms, in that they sketch a quantitative portrait of qualitative elements that are well rooted in the phenomenon under study.

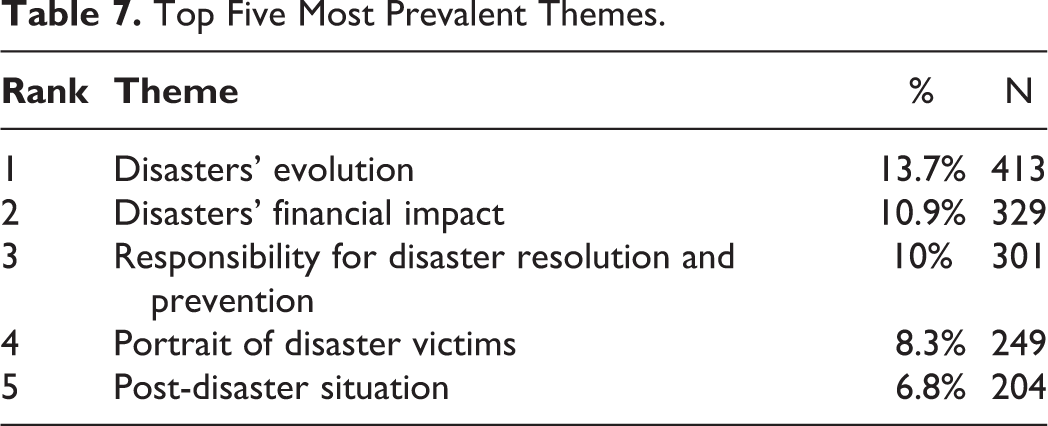

To illustrate the potential advantages of such an approach, we now turn to some of the results of the mixed-methods study of the media coverage of the four disasters in question. First, among the 18 themes discovered during the inductive analysis, the five most prevalent in the journalistic coverage are listed in Table 7.

Table 7. Top Five Most Prevalent Themes.

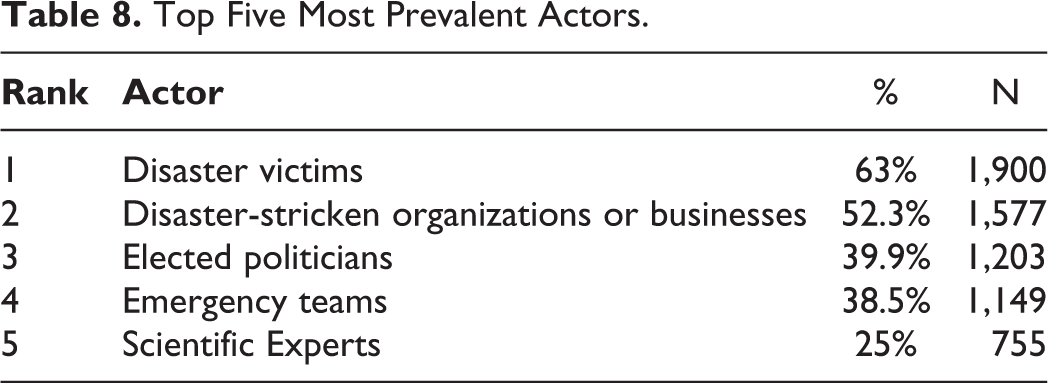

Then, among the 14 actors identified during the inductive part and likely to feature in the articles, the most commonly found are listed in Table 8.

Table 8. Top Five Most Prevalent Actors.

Also, while 74.8% of the articles (n: 2,255) have an informative primary journalistic intention (57.4% seek to inform and 17.4%, to relate testimony), 9.2% (n: 276) of the articles try to reassure or sympathize with the affected populations. In 7.6% (n: 228), the aim is to speak out against a situation deemed problematic. In 5.5% (n: 167), a shift is made to emotion in an attempt to dramatize or shock. In 2.9% (n: 88) of the articles, the journalists used the platform of the newspaper to make an appeal, especially for the disaster victims.
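The counts reported above for the primary journalistic intention can be cross-checked against the size of the corpus. The sketch below uses only the figures given in the text. The labels are shortened paraphrases, and the informative category is kept as a single entry because the text gives one combined count for it.

```python
TOTAL_ARTICLES = 3_014

# Counts (n) reported in the text for the primary journalistic intention.
primary_intention_counts = {
    "inform or relate testimony": 2_255,
    "reassure or sympathize": 276,
    "speak out": 228,
    "dramatize or shock": 167,
    "make an appeal": 88,
}


def shares(counts: dict[str, int], total: int) -> dict[str, float]:
    """Convert raw counts into percentages rounded to one decimal."""
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


intention_shares = shares(primary_intention_counts, TOTAL_ARTICLES)

for label, pct in intention_shares.items():
    print(f"{label}: {pct}%")
```

The five counts add up to the 3,014 articles of the corpus, and the recomputed percentages match those reported (74.8%, 9.2%, 7.6%, 5.5% and 2.9%), which is consistent with every article being assigned exactly one primary intention.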

The inductive analyses also revealed that journalists use different methods to characterize the disasters addressed in their articles (see Table 9).

Table 9. Characterization Procedures, in Order of Occurrence.

Moreover, while one of the results of the inductive part of our analysis was that journalists will propose solutions in their articles to help foster a return to normalcy, the quantitative analyses show that such instances are rather rare. Less than a quarter of the articles (24.8%, n: 747) set forth solutions. The two most popular deal with revising prevention standards and measures (11.8%, n: 356) and government subsidies and grants (4.5%, n: 136).

In addition, journalists will present disaster-affected communities according to different standards. While some articles report on communities as being prepared for disasters (2.3%, n: 69) and able to recover from them autonomously (5.9%, n: 178) or with outside help (18.3%, n: 553), others portray communities as vulnerable (26.2%, n: 787) or completely destroyed (14.3%, n: 431).

In the face of these few statistics, one could wonder about the added value of these additional (quantitative) analyses. Besides being more evocative for those who prefer to approach social reality using numbers, what does this mixed method really allow? Here, our desire to approach disaster coverage through a mixed method, in addition to the methodological criteria discussed above, is rooted in the problem of contemporary journalism.

While the qualitative results of our research are relevant in themselves to understanding the composition of the phenomenon, quantitative findings on this composition are essential in a context where journalistic coverage is not always impartial [despite journalistic ideals valuing the opposite (Grevisse, 2010)]. Indeed, Leray (2008) found that “the press takes a position four times out of 10 on average, which means that 40% of the media content is oriented” (p. 10, freely translated). Also, according to the author, “of this bias expressed in various forms by the media emerges a general trend that is important to grasp and evaluate in order to better understand what is conveyed” (p. 10, freely translated). Thus, while it is relevant to know that journalists can cover the Canadian disasters by focusing, for example, on the economic consequences of disasters or on the disaster victims, it is equally interesting to know that journalists, in their subjective coverage, will address economic issues 31% more often than the disaster victims (N = 329 vs. N = 249). Moreover, even though the most commonly used procedure to characterize disasters is the use of factual data (which is coherent with the prevalent journalistic ideal), the other procedures we collected (negative adjectives; metaphor or metonymy; personification; comparisons to other disasters) indicate that most of the articles aim to link disasters with other elements. Our quantification, in this case, allows us to position the results of our research within the system that produced the data used. This confirms that the journalistic coverage of the selected disasters is worth addressing as an oriented process. Thus, combining qualitative and quantitative analyses allows for a much better picture of what is conveyed, and to what extent.

In sum, in this mixed process, the qualitative results provide an interpretive framework for the quantitative results and, in turn, the quantitative results enrich the meaning of the qualitative results. The approach we have described thus appears optimal in the context of a process with the overall objective of better understanding the complexity of journalistic coverage in general, as well as in the context of natural and industrial disasters and catastrophes.

Conclusions

The mixed-method approach adopted in this project offers a way to overcome the dilemma between comprehensive and descriptive study. It opens up an avenue for researchers to gain a thick and detailed understanding of how journalists treat certain issues, while at the same time making it possible to measure the presence of characteristics of this treatment. Moreover, it allows researchers to be attentive to phenomenon-specific elements, even during a study involving thousands of journalistic articles.

As also concluded by Dörnyei (2007), our experience leads us to argue that mixed methods research may make it possible to increase the strengths of both qualitative and quantitative research, study different levels of complex phenomena, improve the scope and strength of research findings and address a more diverse pool of researchers. That said, while this approach seems to narrow the gap between two paradigms, it requires considerably greater resources than automated analyses generated by lexicometric software. In addition, researchers who adhere to the positivist criterion of scientificity, according to which valid social science research must eliminate analyst bias, will hardly find this approach to be a satisfying alternative. Although a concern for uniformity is inherent in the process of coding articles during the deductive phase, the fact remains that the use of a fundamentally subjective and qualitative grid precludes complete control over analyst bias. This is even more complex in processes involving multiple coders.

On the other hand, researchers who are more interested in the substance than in the form of articles will benefit from this methodological approach. Also, by taking a plural and flexible approach to the concept of “truth,” this method allows the creation of analytical tools that can be adapted to the complexity of social reality: “grounded theories can be revised and updated as new knowledge is acquired” (Corbin & Strauss, 2015, p. 11). Indeed, in subsequent stages of research, the understanding developed to date regarding journalistic coverage of Canadian disasters will be enriched. Future efforts will include introducing greater sensitivity to the grid. In concrete terms, the grid will be tested and improved during analysis of journalistic articles written in the aftermath of other Canadian disasters, specifically the floods that affected several regions of Manitoba in 2011, the tornado that wreaked havoc on the Ontario town of Goderich in 2011, the forest fires that struck the Slave Lake community in 2011, the floods that swept several Alberta towns in 2013, the flooding that hit the neighboring cities of Ottawa and Gatineau in 2017 and 2019 and the tornado that devastated several parts of Ottawa and Gatineau in 2018.

The objective is to build an improved understanding of the journalistic coverage of these events over time and [unfortunately] as disasters occur.

Acknowledgments

The authors would like to thank the research assistants from the undergraduate program in applied communication at Université de Sherbrooke for their role in the research project. It could not have taken this form without their professionalism, thirst for learning and determination. The authors would also like to thank the research assistants from the University of Ottawa who helped create the database. Many thanks to Zobaida Al-Baldawi, Nilani Ananthamoorthy, Vanessa Bournival, Amanda Mac, Camille Mageau, Lyric Oblin-Moses, Karen Paik, Christina Pickering and Sydney Ruller.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is part of a broader project entitled “Supporting disaster resilience through community engagement and social participation” and funded by the SSHRC (Dossier #: 435-2016-1260).