Abstract

Interviewee Transcript Review (ITR), a form of Respondent Validation, is a way to share and check interview transcripts with research participants. To date, the literature has considered how these practices affect data quality, focusing on the ability of a participant to correct, add or remove data. Less considered is the extent to which ITR might enable sensitive research. Reporting on research examining the experiences and perspectives of different stakeholders involved in Domestic Homicide Reviews, 40 participants who took part in semi-structured interviews were offered the opportunity to review their transcripts. This paper contributes to the understanding of the use of ITR, demonstrating how it can be used to increase participant confidence by providing assurance about, and indeed active involvement in, the steps being taken to preserve their anonymity.


As is the case for many qualitative researchers, interviews are a central part of my research practice and a primary data-collection tool. Reflecting their central role, there is extensive guidance on interviewing (e.g. Patton, 2002; Flick, 2007; Creswell, 2013; Bryman, 2016). Meanwhile, a broader literature examines specific issues in interviewing, such as the risks and benefits to participants (Buchanan & Wendt, 2018; Hamberger et al., 2020), researcher experience (Lavis, 2010), and reflections on ethical considerations (Mannell & Guta, 2018).

Any researcher designing and undertaking interview-based research must make various decisions. In my research, a major consideration was that I would be undertaking semi-structured interviews in the context of domestic abuse, a field that is considered sensitive (Buchanan & Wendt, 2018). Sensitive research can be defined as any research that poses a potential risk to those being researched and/or the researcher in terms of data collection and management, as well as dissemination of findings (Lee & Renzetti, 1993). One decision to be made related to the collection and analysis of data is if and how to share this with participants. This practice, known as Respondent Validation (RV) (also known as ‘Informant Feedback’, ‘Member Checking’ or ‘Participant Verification’), involves offering participants the opportunity to review and then respond to an interview transcript and/or findings (i.e. by agreeing or disagreeing, clarifying or proposing changes to the same) (Goldblatt et al., 2011; Thomas, 2017; McGrath et al., 2019). The form of RV where transcripts are shared is known as ‘Interviewee Transcript Review’ (ITR) (Hagens et al., 2009).

When I began my research, I anticipated sharing transcripts with participants. However, I had a scant understanding of this practice, which I would not have described as either a form of RV or specifically ITR. My desire to share transcripts reflected my previous experience and some of the unquestioned assumptions I brought from professional practice, something which will be discussed later in this paper. Thus, as I developed my methodology and then began to undertake interviews, I had to consider and engage with the practice of RV (and, as I will describe, ITR specifically). This led me to identify a gap in the literature in terms of the potential benefits of using ITR when conducting sensitive research. It is this gap that this paper addresses. I first introduce RV/ITR, before summarising my research and why it was sensitive. I then set out my rationale for using ITR specifically, with this account also serving as a discussion of the benefits and concerns related to this research practice. Next, I describe my method, including the decisions I made and the issues I encountered. Thereafter, I report findings that show how, if they chose to do so, participants used ITR and the impact on them in doing so. I also address implications for myself as a researcher. Finally, I discuss the findings, reflecting on them and identifying limitations and areas for further research.

Respondent Validation and Interviewee Transcript Review

The popularity of RV has been attributed to Lincoln and Guba (Varpio et al., 2017), who described RV (which they referred to as member checking) as ‘the most crucial technique for establishing credibility’ and as a means to check data and findings with participants (Lincoln & Guba, 1985, p.314). In contemporary use, RV has been identified as one way of assessing research quality (Birt et al., 2016). Although Lincoln and Guba did not elaborate on the specifics of this research practice, they described RV as being an ongoing process. However, in practice, RV has come to be used post hoc, with the sharing of transcripts or findings with participants being used as a means of verification (Morse et al., 2002; Varpio et al., 2017). While some research handbooks provide a useful summary (e.g. Bryman, 2016, p.385), in others, some form of RV is mentioned but little explicated (Patton, 2002, p.384; Flick, 2007, p.66; Creswell, 2013, p.54).

When RV is used, the rationale, procedures or outcomes are reported with varying degrees of specificity, and sometimes not at all (Goldblatt et al., 2011; Thomas, 2017). Some examples, selected as illustrating the use of ITR as a form of RV specifically, typify these variances.

In their account of using member checks, Rager described returning transcripts to participants (2005, p.26). Rager’s study is illustrative for two reasons. First, although participants are reported as reacting positively, no information is offered on the process or if any specific feedback was provided. Second, despite initially understanding this practice as about accuracy, Rager identified a broader impact, including it being an opportunity for (the researcher’s) closure and self-care.

In another study, Valentine reflected on their decision to share transcripts, describing how they made a ‘point of checking out the accuracy of the transcript with interviewees’ (2007, p.165). Although Valentine does not provide any further information, nor do they name this practice, they do provide a rationale: In addition to enhancing accuracy, this was a way of acknowledging the contribution of participants. Valentine also provided an example of an outcome, reporting that a participant identified misspellings in their transcript. Correcting these misspellings improved accuracy but, significantly, Valentine also suggested this had a broader meaning because the research was an examination of bereavement. Specifically, the interview included an account of the participant’s loved one, so Valentine suggested that a concern with accuracy was also a way of discharging a responsibility towards the dead.

In another example, Pascoe Leahy also identified the sharing of transcripts as being a means to ensure accuracy (described as an invitation ‘to correct any mistakes’). As with the preceding examples, little detail was provided. However, Pascoe Leahy located this practice, which they do not name, within a broader concern with reciprocity. Thus, for Pascoe Leahy, sharing transcripts allowed participants to ‘consider whether they are still comfortable with the kind of interview they have co-created’ (2021, p.10) although it is not reported if and how participants responded to this offer.

Finally, and offering an example of ITR in domestic abuse research, Heron et al. (2021) described providing copies of interview transcripts to participants so they could give feedback on accuracy. Like the other examples given, the account offered by Heron et al. is fleeting and they do not name this practice. However, they do report that none of the participants made any changes. Yet it is noticeable that this report lacks specificity (it is not clear whether all participants responded, or how) and is not reflective (there is no consideration as to whether the absence of any uptake is significant).

These examples illustrate how the rationale, procedures and outcomes of RV (in the examples provided, ITR) may be only partially reported and can have multiple purposes and outcomes. To address this partiality and multiplicity, when undertaking any form of RV, several steps are recommended. These include providing clear information about the process and what participants will receive, including the form information will take (e.g. whether it will be edited or verbatim), what participants can or cannot do (e.g. editing for accuracy or grammar, through to changing or adding text), as well as why they might want to take part (e.g. as any text may be directly quoted) (Carlson, 2010). Implicit in being able to offer such information is that the researcher has a rationale for using RV and so has made clear decisions about how they intend to operationalise this research practice.

Clarity of rationale is important because using RV (including ITR) raises several issues (Buchbinder, 2011; Bryman, 2016, p.385; DeCino & Waalkes, 2019). Specifically for ITR, Forbat and Henderson (2005) argue that these issues arise because transcription is emblematic of the sometimes-unrecognised complexity of the research process. They identify three areas of particular concern: the experience of seeing a transcript; participant perception of the transcript; and the effect on the research relationship.

First, the impact of receiving and reading a transcript. Sharing a transcript can be justified as a way of operationalising a commitment to the leavening of asymmetric power relations and/or as an effort to recognise the agency of participants. However, sharing a transcript can have unintended consequences because people rarely see their speech in writing (Birt et al., 2016). At a minimum, this practice needs to be understood and agreed to by prospective participants. Moreover, even having been agreed, participants may find reading a transcript demanding, particularly if it is lengthy (Hagens et al., 2009; Goldblatt et al., 2011) and/or they feel obliged to participate (Thomas, 2017). Reading a transcript may also be emotive, and while any form of RV may potentially be a positive experience, it might also be distressing (Birt et al., 2016). For example, several researchers have described sharing transcripts only to encounter unexpected and adverse participant reactions (Carlson, 2010; DeCino & Waalkes, 2019; Jackson, 2021). In the context of domestic abuse, sharing a transcript raises a specific concern about participant safety (Bender, 2017). This risk might arise from the participant’s interaction with a transcript (i.e. because an interview may mean recalling experiences of abuse, reading the transcript may be distressing) or it might be externalised (i.e. if the transcript is discovered, a perpetrator could use it to justify further abuse).

Second, having received a transcript, a participant is drawn into dialogue with their written speech. Forbat and Henderson (2005) suggest that this has epistemological implications, as a participant’s perspective may change across time and space, perhaps reflecting distance from the interview itself (Goldblatt et al., 2011) and/or the impact of reading a transcript (as described above). Indeed, if a participant makes changes to a transcript, the revised transcript could be considered as new or at least different data (Hagens et al., 2009), or the researcher may face a dilemma if valuable data is subsequently changed or removed (Mero-Jaffe, 2011). Conversely, if participant response is low, there are implications in terms of if/how to follow-up, and data quality (Goldblatt et al., 2011).

Third, the sharing of a transcript affects the participant-researcher relationship. Forbat and Henderson (2005, p.1125) refer to this as the ‘power paradox’, which arises because ultimately participant contributions are synthesised and put to use by the researcher. While sharing a transcript may go some way to addressing power differentials, this is only partial, illustrated perhaps if a researcher uses ITR but does not use other RV practices such as sharing research findings (a decision that I made, and which I address below).

In summary, scholarship and practice related to RV (including ITR) are mixed, recognising its potential while cautioning against unconsidered use. Thus, the decision to use RV/ITR requires a researcher to reflect on what they are seeking to achieve (Varpio et al., 2017). Such reflections should be based on several factors, including relevance and value, as well as noting epistemological, methodological and ethical complications.

It is noticeable that many of the concerns about the use of RV/ITR are anchored around the perceived benefit to the researcher. For example, in a review of literature related to RV, Thomas focused on the contribution such practices make to research credibility or trustworthiness. Summarising three studies that reported specifically on outcomes where interviews were shared with participants, Thomas emphasised that ‘in these studies, the changes respondents made to interview transcripts would not have affected findings in studies using qualitative analyses’ [Emphasis added] (Thomas, 2017, p.36). Arguably, this presents a rationale for sharing transcripts as based principally on the benefit to the researcher. In this way, participant involvement is merely instrumental (a concern that has parallels in a critique by Morse et al. (2002, p.19) who, in a discussion of RV more broadly, question its value if it means ‘relegating rigor to one section of a post hoc reflection on the finished work’).

In contrast and building on the examples discussed above (Rager, 2005; Valentine, 2007; Heron et al., 2021; Pascoe Leahy, 2021), what has not been substantially explored is how RV/ITR can be of benefit to participants. One way that RV/ITR may be of benefit to participants is to afford greater control and assurance over the use of sensitive data. There may also be associated advantages for a researcher despite the potential risk that data may be changed or withdrawn. Specifically, participants may be more willing to share sensitive information in an interview, particularly information that could identify them, if they have assurance as to its use and their role in that determination. To better examine this potential, in the following section, I introduce my research, describing both the context and rationale for using ITR.

Using ITR

Research Context

My doctoral research investigates Domestic Homicide Review (DHR), a type of statutory review that is undertaken into domestic abuse-related deaths in England and Wales, including homicides and increasingly deaths by suicide. In an international context, DHRs are a form of what is known as Domestic Violence Fatality Review (DVFR) (Dawson, 2017). DHRs are a process of collaborative, multi-agency enquiry. Led by an independent chair, DHRs bring together representatives from multiple agencies to form a review panel. These agencies are involved either because they had contact with a victim, any children and/or the perpetrator or because they have expertise that is relevant to the case. Among others, those represented can include criminal justice, children and/or adult services, and health agencies. Review panels also routinely include domestic abuse services, as well as local domestic abuse coordinators (DACs). 1 DHRs may also involve members of a victim or perpetrator’s testimonial network (Rowlands & Cook, 2021), that is family members, as well as others who might provide accounts like friends, neighbours and colleagues. Drawing on these different sources of information and perspectives, DHRs build a case history to try and understand what happened before a death. A DHR aims to learn lessons by identifying gaps in professional, agency or system responses, as well as what might have helped or hindered access to, or the receipt of, support (Home Office, 2016). Consequently, an outcome of a DHR may be any number of single or multi-agency recommendations. In this way, DHRs can be conceptualised as a preventative tool, with the learning and recommendations generated being used to improve responses to domestic abuse and therefore, perhaps, reduce future deaths (Websdale, 2020). Following a DHR, an anonymised report is usually published (for a summary, see Payton et al. (2017) and Rowlands (2020)).

The focus of my research is the understanding of the purpose, doing and use of DHRs. Specifically, I am interested in DHRs as a dialogical process of meaning-making, given they bring together stakeholders to make sense of a domestic abuse-related death. I am particularly interested in the experience of those participating in DHRs, including their interaction with others and their perspective on the outcome of the process. Thus, I used semi-structured interviews to explore participant experiences of DHRs. In designing my study, I recognised that participants might, indeed ideally would, draw on specific examples when sharing their experiences and perspectives of DHRs. These examples might be positive, negative or some mix of the two. Moreover, in sharing any examples, participants might disclose information that could directly identify them (e.g. information about their role or actions) or could do so indirectly (e.g. information about a specific case or their local area). This presented a tension that, if not recognised and managed, might have impeded participant confidence to share their experiences and perspectives and so affected the quality of data collected. My desire to recognise this possibility, and mitigate this tension, led to my interest in ITR. Given this, it is to my rationale for the use of ITR that I now turn.

A Rationale for Using ITR

As noted in the introduction, my initial decision to use ITR was little considered. Upon reflection, my desire to share transcripts was in part because of my social work training. In social work, client records have a complex history: access to records is recognised as challenging because of the competing rights and responsibilities of practitioners and recipients of care, yet also important because of the potential significance to the latter’s identity (Hoyle et al., 2019). The sharing of transcripts also mirrored my ongoing practice, in which I lead DHRs as an independent chair. In that capacity, when interviewing testimonial network members, I routinely share accounts of meetings for participant approval.

With these reflections in mind, I decided to use ITR for three reasons. First, I wanted to ensure that participants had the opportunity to confirm, if they wished, that their transcript was accurate (Hagens et al., 2009). A pursuit of accuracy could be seen as problematic as it assumes a ‘static truth’ (Angen, 2000, p.383). Indeed, ITR can be described in quasi positivistic terms, for example as a means of enhancing validity (Mero-Jaffe, 2011). Yet concern with accuracy can also be placed within a social constructionist epistemology (Birt et al., 2016; DeCino & Waalkes, 2019). In this light, I understood ITR as being a way to be assured that the transcript I had produced was representative of a participant’s experience as they understood it (Thomas, 2017; McGrath et al., 2019). In this context, a response confirming a participant was satisfied with the transcript could be judged as being as meaningful as a request for changes. Second, I felt that offering ITR was consistent with my feminist research ethic, being one way to try to flatten power relations (Buchbinder, 2011). Third, I approached ITR as an extension of informed consent, particularly as a way to address the risk of participants being identified (Wolgemuth et al., 2015). Offering ITR was a way for participants to share their experiences and perspectives during the interview, in the knowledge that they could verify for themselves the steps being taken to protect their anonymity and decide, if needed, to change or withdraw any data.

Taken together then, I felt that ITR could contribute to the trustworthiness of my research, as well as its ethical foundations (Goldblatt et al., 2011). Having contextualised my decision, and set out my rationale, I now turn to my methods in using ITR.

Method

Sample

The data presented here is from an Economic and Social Research Council (ESRC) funded doctoral research study which included semi-structured interviews with 40 DHR participants. These included independent chairs and review panel members (DACs, as well as domestic abuse service and other agency representatives). Interviews were also conducted with testimonial network members (in this study, all family members), and family advocates (who provide specialist and expert advice to families involved in DHRs 2 ). Ethical approval was provided by Sussex University.

Procedure

Participants were recruited through a web-based survey. Having completed an online questionnaire, participants were asked if they would be willing to take part in a follow-up interview. Participants who consented were sent an information sheet with further information. Those who indicated they were willing to take part in an interview were then sent a consent form which they were asked to return (38 were returned in advance of interviews, with two participants providing verbal consent). Most interviews, all conducted by me, lasted between 60 and 90 minutes, with the shortest being under an hour and the longest extending to almost 2 hours. Due to the COVID-19 pandemic, all the interviews were conducted by phone or video conferencing software. Each interview was audio-recorded, and a verbatim transcript was then prepared.

As my interest was in meaning, I used a denaturalised approach, removing, for example, involuntary vocalisation (Oliver et al., 2005). As part of the anonymisation process, pseudonyms (either selected by the participant, or selected by me and then participant-approved) were used. Identifiable information was password-protected, stored separately and connected to the transcripts by way of a Unique Identification Number.

The information sheet and consent form included information about how, with participant consent, interviews would be recorded and transcribed. Participants were also told that they could receive a copy of the draft transcript if they wished, to check and comment on it. Specifically, participants were informed they could ‘amend, elaborate on or remove anything you said at the interview’. This offer was time limited. Participants were asked to respond within 2 weeks of receiving the transcript and also told that if I did not hear from them, I would assume that they were content with the draft transcript. (Two weeks was also the timeframe used by Hagens et al. (2009) and Birt et al. (2016)). However, if a participant asked for more time, this was agreed. Additionally, this timeframe did not affect their overall right of withdrawal.

At the start of each interview, participants were reminded about the information sheet and consent form, and there was a discussion as to whether they wanted to receive the draft transcript. Where appropriate, in this discussion I gave examples of why a participant might want to review the draft transcript (e.g. because direct quotes may be used). I also explained that receiving a draft transcript was an opportunity to ensure that any sensitive information that they shared during the interview, which could potentially compromise their anonymity (e.g. information about their role or actions, a specific case, or their local area), had been identified. Participants were informed that they could ask to remove this information from the transcript entirely if they wished, but that another option was to ensure that it was identified and marked as sensitive. Being marked as sensitive meant that the information would be available to me as a researcher but would not be used directly (i.e. it would not be quoted) or in an identifiable way (i.e. it would only be described in general terms and in aggregate). To reduce the likelihood that participants might feel that producing a transcript was a burden, I normalised the offer by explaining that I would produce a transcript regardless of their decision.

During interviews, several participants identified specific information as sensitive (e.g. where they referred to case-specific or local area information) and stated that they did not want it to be attributed to them. Where this happened, I clarified if and how I could use the data (in most cases, participants were happy for me to use the information if this was indirect and non-identifiable). In addition to ensuring a shared understanding in the moment, this then acted as a prompt during transcription.

After each interview, I provided verbal confirmation of what would happen next, including that I would send a follow-up email that would, among other things, confirm the participant’s decision about the receipt of a draft transcript. When sent, this email repeated the information previously provided about what participants could do (‘amend, elaborate on or remove anything’) and explained how sensitive information would be presented (‘anything in square brackets [like this] is something that you have either asked me not to share or which I think I should anonymise because it could identify you or your area’).

During transcription, where the participant had said that they did not want something attributed to them, or where I identified text that was potentially identifying, this was marked as sensitive using [square brackets].
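The square-bracket convention lends itself to a simple mechanical check. The following is a minimal sketch only, not the procedure used in this study (where marking and review were done by hand): it assumes that square brackets in a transcript are used solely to mark sensitive text, and the function names, the placeholder and the example sentence are all hypothetical.

```python
import re

# Assumption: square brackets are used ONLY to mark sensitive text and are
# never nested. Editorial insertions in brackets would also be matched.
SENSITIVE = re.compile(r"\[([^\[\]]*)\]")


def sensitive_spans(transcript: str) -> list[str]:
    """Return each piece of text that has been marked as sensitive."""
    return [match.group(1) for match in SENSITIVE.finditer(transcript)]


def quotable(transcript: str, placeholder: str = "[removed]") -> str:
    """Return a version that is safe to quote, with marked text replaced."""
    return SENSITIVE.sub(placeholder, transcript)


# Invented example sentence, not taken from any interview.
example = "I sat on a review panel in [Anytown] for [the housing team]."
print(sensitive_spans(example))
print(quotable(example))
```

A listing of this kind could be sent alongside a draft transcript so that a participant can see at a glance everything that has been marked, although any such automation would still depend on the marking itself being complete.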

Participants who had agreed to receive the draft transcript were then sent it (the majority within 2 weeks, with a small number being sent within 4 to 6 weeks) and invited to respond. To try and manage the potential surprise of reading their spoken word in written form, the email included the following statement: ‘I have captured how you spoke in the moment, which means sometimes the grammar or sentence structure may read a little different to how you would expect. Some people worry about that, but please don’t feel you need to: it is how we all speak in real life!’

ITR Process.

Note. Adapted from Hagens et al. (2009).

Data Analysis

Content analysis was conducted (Hsieh & Shannon, 2005; Erlingsson & Brysiewicz, 2017). This was performed deductively, using a coding frame to capture edits, additions, omissions, and notifications made by participants on the draft transcripts. The coding frame was derived from the 6-category framework developed by Hagens et al. (2009). This framework captures any edits/additions/omissions (i.e. changes) to draft transcripts as part of the process of ITR, reporting on transcription errors/omission corrected; specific details added to transcript; specific transcription details corrected/changed; grammatical changes or minor clarifications made to transcript; statements removed from transcript; and statements added to transcript. Given my rationale for using ITR, I added a further category relating to sensitive data: ‘Category 7. Confirmation and/or identification of sensitive information’.
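The coding frame described above can be represented as a small data structure. The sketch below is illustrative only: the analysis in this study was conducted in NVivo, not in code. The numbers for Categories 1, 4 and 7 follow the text, while those for the remaining categories are assumed to follow the order in which they are listed; the participant identifiers and coded changes are invented.

```python
from collections import Counter
from enum import IntEnum


class ChangeCategory(IntEnum):
    """Six categories after Hagens et al. (2009), plus the added Category 7."""
    TRANSCRIPTION_ERROR_OR_OMISSION_CORRECTED = 1
    SPECIFIC_DETAILS_ADDED = 2            # number assumed from listing order
    SPECIFIC_DETAILS_CORRECTED = 3        # number assumed from listing order
    GRAMMAR_OR_MINOR_CLARIFICATION = 4
    STATEMENT_REMOVED = 5                 # number assumed from listing order
    STATEMENT_ADDED = 6                   # number assumed from listing order
    SENSITIVE_INFORMATION = 7


def tally_changes(coded_changes):
    """Count coded changes per category, reporting zero for unused categories.

    coded_changes is an iterable of (participant_id, ChangeCategory) pairs.
    """
    counts = Counter(category for _, category in coded_changes)
    return {category: counts.get(category, 0) for category in ChangeCategory}


# Invented codes for two hypothetical participants.
example_codes = [
    ("P01", ChangeCategory.GRAMMAR_OR_MINOR_CLARIFICATION),
    ("P01", ChangeCategory.GRAMMAR_OR_MINOR_CLARIFICATION),
    ("P02", ChangeCategory.SENSITIVE_INFORMATION),
]
for category, count in tally_changes(example_codes).items():
    print(category.value, category.name, count)
```

Reporting zero counts for unused categories keeps the full frame visible in any summary, so that a category in which no changes were made is shown as a finding and does not simply disappear.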

The analysis was conducted in a data analysis application (NVivo); this facilitated the management of the data but did not replace my role as the researcher and I remained responsible for the analysis. Descriptive statistics were generated using SPSS.

If participants commented on their experience of the sharing of the draft transcript, this was either in the returned transcript itself or in a covering email. Where this was by way of a covering email, identifiable information was removed from participant responses, which were then stored alongside the anonymised transcripts. Quotations from these responses are used to illustrate the discussion. However, as the original information sheet and consent form did not explicitly cover the use of this data, participants were approached for their consent and quotes are only used if this was provided.

Results

Participant Roles and Invitation to ITR Response Rate.

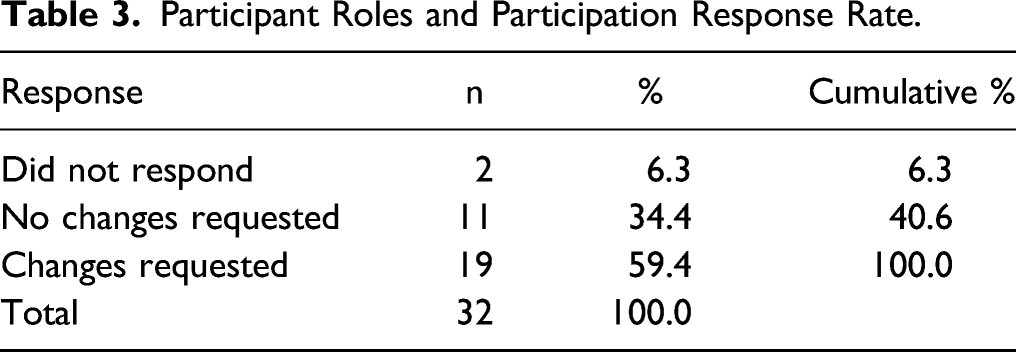

Of the 32 participants who were sent a transcript, 30 (93.8%) responded. This return rate compares favourably to the relatively low response rate reported in other studies (Goldblatt et al., 2011; Thomas, 2017).

Participant Roles and Participation Response Rate.

Of the 19 participants who did respond, the draft transcripts could be categorised broadly into two groups.

Three participants (an independent chair, a review panel member from a domestic abuse service, and a family advocate) made extensive changes to their draft transcript. In effect, these changes rendered the transcript more formally spoken. As a result of the extensive changes that these participants made to their draft transcripts, they were excluded from the analysis conducted for this (although not the larger) study. This decision was both pragmatic (it would have required extensive coding) and related to the viability of the analysis (the volume of changes made would have meant the coded data from these three participants would have overwhelmed the data from the other participants). While the changes made by these participants were not analysed, they are discussed below.

Edits, Additions, Omissions and Notifications Made by Participants, by Category.

Note. Adapted from Hagens et al. (2009).

Discussion

As a way of engaging participants in the research process, ITR proved to be a useful tool; as evidenced by the high response rate, most participants responded positively to the offer of ITR. A high response rate was also seen across the sample, including from participants with different associations with DHRs. Although data was not captured systematically on those who did not take up the offer of ITR, where participants expressed a view, this was for one of two reasons. Some felt that they would not have sufficient time to do so, echoing concerns in other studies about the expectations that ITR might place on participants (Goldblatt et al., 2011). Others though expressed confidence in the research process as a reason they did not feel they needed to see a transcript.

Impact of ITR on the Participants, the Transcripts and the Data

Many of the participants who asked for a draft subsequently responded to confirm that they did not want to make any changes. While the reasons that participants did not want changes are unknown, it appears that for many, this was because they felt the transcript had accurately captured the interview. For some, their examination of the transcript may have been superficial, and/or enough merely to be satisfied. Illustrating this, Louise said: ‘I’ve had a flick though and all is fine’. For other participants, there was perhaps a more substantive engagement, with some participants commenting on the quality of the transcript. For example, Owen said, ‘Thank you for this, all very accurate’, while Claire said: ‘Thank you for the transcript. I am very pleased with it and [there is] nothing I want changing’.

Several participants reflected on their experience of reading their written speech, something I had tried to prepare them for when sending the transcripts by explaining that ‘the grammar or sentence structure may read a little different to how you would expect’. Louise said it was ‘very strange reading back a conversation’, while Jade reported ‘cringing’ on reading the transcript. This supports Forbat and Henderson’s (2005, p.1116) observation that people may ‘express surprise’ upon encountering their spoken speech in written form. For most, while this encounter was perhaps a surprise, it did not appear to have any wider repercussions. Indeed, some were positive about the experience. Olivia made a few minor grammatical changes before noting that, those aside, ‘I was surprised I made sense!’ Grace was also positive: ‘It was good to take part in the research. Something like that does make you think about your own practice which is always useful!’

However, for three participants this experience prompted considerable concern. As noted earlier, such was their concern that these three participants extensively edited their draft transcripts. This reflected discomfort with the content: Emma observed, ‘I’ve edited this so at least it is understandable’, adding they felt that in capturing their spoken word the transcript ‘obscured what I was trying to say in many places’. Meanwhile, Marie was worried ‘the transcript… makes me sound quite illiterate’. Mia summarised a shared concern with how the transcripts would be read, saying she thought ‘it [the transcript] reads terrible… (not as a professional if that makes sense)’.

This mirrors observations in the literature that, for some participants, receipt of a transcript may cause some distress. Fortunately, unlike one example provided by Carlson (2010), while this prompted considerable changes to the transcripts, it did not appear to affect rapport. Thus, all three participants remained in communication until they were satisfied with the changes. Nonetheless, for researchers intending to use ITR, it highlights both the importance of explaining the purpose of ITR and the need to prepare participants to encounter their spoken speech in written form and to engage in a dialogue about this.

The extent of these changes illustrates a further challenge with ITR, which is that the resultant transcript may lose some of its richness if the text is revised and formalised (and so becomes new data, as discussed above). My experience of the changes made by these three participants was that while the text was substantively revised, this did not affect the data per se. For example, in describing an encounter with a specific agency, one of the participants amended a longer, more elaborate account into a shorter more factual statement. As my interest was in the encounter and its outcome, rather than how this was specifically described, the effect was minimal. However, the extent to which such a change is an issue for different researchers will depend on the subject being studied and the analytical strategy being used.

Despite these three participants making significant changes, overall, this study replicated others that have found that most of the changes to draft transcripts were largely superficial (Thomas, 2017). Thus, grammatical changes or minor clarifications (Category 4) were the largest group of responses. For other participants, ITR was an opportunity to correct transcription errors/omissions (Category 1). In both scenarios, this meant participants could be happy with what had been captured, like Hannah who said: ‘I have clarified something on the attached and the rest is fine. Few typos but it is fine.’

However, ITR can change the data collected in several ways. In this study, ITR enabled participants to change their transcript, with a number adding specific details (Category 2) or correcting/changing transcript details (Category 3). In some cases, participants added new information (Category 6). For example, Isabella observed: ‘I’ve also added in a couple of comments too which I hope are ok’. Where this happened, these additions built on comments in the transcripts and were thus a source of additional data.

There were also some requests to remove statements (Category 5). These requests related to case details or other identifiable information. For example, Emily asked for ‘the removal of the following to avoid the risk of jigsaw identification for the victim, family and professionals involved’. While this specific example was coded under Category 5, clearly this could also be interpreted as a desire to ensure sensitive data was managed appropriately.

Concerning sensitive data, most noticeably, the second largest category of changes to the draft transcripts related to the confirmation and/or identification of sensitive information (Category 7), a category I added to the framework developed by Hagens et al. (2009). This suggests that, for many participants, the knowledge that information would be marked as sensitive – and would therefore not be used directly or in an identifiable way – appears to have meant they were confident that it could be left in the transcripts and therefore be of use to the researcher. As a result, at least for this study, the quality of the data was not compromised. William noted in his response that he had ‘marked the cases I would not want included’ in any findings, while Chloe sought specific assurance around sensitive information, highlighting that ‘the transcript contains reference to an internal disagreement which I would prefer is not identifiable’. Emma made a similar point, highlighting ‘two pieces of information that could make the agencies more identifiable’.

This suggests that, as a means to manage sensitive information, ITR can enable participants to participate freely in an interview and, thereafter, have assurance around how any sensitive data is used. It also offers a potential for an ongoing relationship between a researcher and the participant. The result is a minimisation of the loss of data, albeit it may have to be presented or used differently (often by way of generalisation). Perhaps Charlotte articulated the potential benefit of ITR in terms of collaboration between a participant and a researcher best, saying:

I have made some minor amendments, the main point is removing anything that makes it identifiable to the area or region, as I have not sought permission to engage but want to support this important piece of work. If you find there is something critical that you want to retain but is identifiable, please come back to me and I will see how I can support.

Impact of ITR on the Researcher

As summarised previously, much of the concern about ITR (or more broadly RV) relates to the opportunity cost and the management of participant responses (Hagens et al., 2009). Here, the opportunity cost was marginal. This was a result of the study design. First, as I was already producing interview transcripts, the offer to share a draft with participants did not incur additional time or effort. Second, in developing my research plan, I had intended to send a follow-up email to participants, thus I could simply add information on ITR into this planned communication. Third, the use of a 2-week return window was beneficial, as it set out clear expectations for participants (although, as noted above, if more time was requested, this was agreed). It also provided a built-in timeframe for transcription which, as a novice researcher, required me to carefully consider project planning and assisted with my motivation. As noted above, I was also able to return the majority of transcripts within 2 weeks and the remainder in between 4 and 6 weeks. This meant that participant engagement with the transcripts was timely, reducing the risk of changes to recall over time (Goldblatt et al., 2011).

In addition to the opportunity cost in offering ITR, there is also an opportunity cost incurred in responding to participant feedback. For the most part, the time required to make changes to the transcripts was minimal, with one round of changes usually being sufficient. However, for some of the participants, including the three participants who made extensive changes, several rounds of changes were necessary.

These reflections serve as a reminder that ITR needs to be a conscious decision, not least so that researchers can identify and plan for the resource implications (for both timely transcription and then response to any feedback) from the start.

The knowledge that some participants had chosen to see the transcripts meant I was conscious that their transcripts would be read. Such awareness may be an issue for researchers: at the very least, it might mean that transcription is prioritised for those participants who had asked to see a transcript (as was the case for me). It might also mean that more care is paid to these same transcripts (while I hope that transcription quality and accuracy did not vary because of this, I cannot rule this out).

A further reflection relates to my sense of responsibility for sensitive information. Where participants requested a transcript, I was able to confirm with them that I had recognised sensitive information and/or gain assurance that anything I had not marked as such had been identified by them. This was of considerable benefit. For example, Caroline was able to identify two specific pieces of information that she felt could identify her that I then marked up as sensitive. Conversely, of course, this meant for those who did not ask for the transcript, no such confirmation or assurance was available.

A final benefit of the use of ITR was that it meant that, at least for those participants who chose to take up the offer, a line of communication was kept open. As for Valentine (2007), this was a way of acknowledging the collaborative nature of our encounter. Notably, when I contacted the participants quoted in this paper for their consent to use their responses to ITR, all responded positively.

In sum, I experienced ITR to be a useful tool, one that was beneficial in terms of data quality but, critically, enhanced my ability to be responsive to participants. Yet, notably, although I included ITR within the ethics application for my research, I provided no detail about my intentions because, as I discussed earlier, I had at that point little considered this practice. Despite including ITR, during the ethical review process I was not asked to justify my decision to use this practice or indeed describe my rationale and procedures. That silence is perhaps indicative of the extent to which ITR is under-scrutinised as a research practice. Yet, simply requiring ethical review boards to interrogate the use of ITR may not necessarily be helpful given this may add yet another barrier to sensitive research (Buchanan & Wendt, 2018). Perhaps more usefully, as Pascoe Leahy (2021) has observed, we might attend to the distinction between the ‘explicit’ ethics required by the formalities of research, and more ‘subtle’ ethics that are negotiated in practice, including in an interviewer–interviewee relationship. Here, and in that spirit, ITR allowed me, like Pascoe Leahy, to demonstrate a commitment to an ethical praxis.

Limitations

This study was conducted with a specific cohort who were highly motivated, having been recruited via an online survey and then agreeing to take part in a semi-structured interview. This may have affected the participation rate as much as the procedures described in this paper. So too the specific topic, about participant experience and perspectives on DHRs, might have encouraged uptake (this of course is the argument offered here, that ITR is a useful research tool when conducting sensitive research). However, participant experience was not examined directly, so further research might examine participant motivation, experience, and perception of the outcomes of ITR. Additionally, ITR could be understood as a form of accountability that leads to increased accuracy and so improved data quality. Thus, a comparison of the accuracy of the transcripts that were shared with that of those that were not may shed some light on the transcription process.

A further limitation is that this study only examined ITR rather than other forms of RV, for example, sharing draft findings with respondents (Bryman, 2016, p.385) or undertaking a member check interview or focus group (Birt et al., 2016). Thus, although I used ITR to reduce power differentials, I retained the privilege of research design. While I did offer participants the opportunity to receive updates about the research data (by notifying them of any publications), I did not involve them in the interpretative process. This reflected the practical limitations of a doctoral research project. However, in preparing this paper, I offered those participants who I had quoted an opportunity to see and comment on a draft. Although a number asked to see the article either pre- or post-publication, only one took up the offer for a further discussion. It is not possible to say why uptake was so low, although this could reflect the topic (while methodologically interesting, understandably perhaps, ITR may appear to many – both without and within academia – as somewhat dry), the limited data used from participants about the topic they had been interviewed about (i.e. DHRs), or the unexpected and relatively late nature of this offer.

Conclusion

ITR has been reported to bring marginal gains for the time taken, particularly as changes to transcripts are often minor, or to raise epistemological concerns about the data collection. This study has in some ways echoed earlier findings: grammatical changes/minor clarifications were the single largest response category, although there were also changes to the transcripts which, in a small number of cases, were substantial. However, the inclusion of an additional category related to sensitive information is a novel contribution to the field. Attention to participant concerns about sensitive information identified that this was the second largest category of responses. ITR appears to have offered participants the opportunity to take part more freely in the research while retaining the ability to ensure that sensitive information, particularly if it was potentially identifying, could be identified and managed to protect their anonymity. This had benefits for me as a researcher too, given it may have meant participants felt more able to talk openly about their experiences and perceptions of the DHR process within interviews. This suggests that Hagens et al.’s (2009) observation that the decision to use ITR is a question of weighing its potential advantages and disadvantages to a specific study is a pertinent one. The findings here suggest that, at least for sensitive research, the scales may tip towards the use of ITR.

Footnotes

Acknowledgements

With thanks to the study participants for their contribution, and to Althea Cribb and my supervisors Alison Phipps and Tanya Palmer for comments on early drafts of this paper.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Economic and Social Research Council (grant number: ES/P00072X/1).