Abstract

Background:

Video-stimulated recall (VSR) is a method whereby researchers show research participants a video of their own behavior to prompt and enhance their recall and interpretation after the event, for example, in a postconsultation interview. This article describes a process evaluation that aimed to understand what VSR may have added to the findings and to describe participants’ responses to, and the acceptability of, VSR.

Method:

This evaluation took place in the context of a United Kingdom study concerning the discussion of osteoarthritis in primary care consultations. Postconsultation VSR interviews were conducted with 13 family physicians and 17 patients. Thematic analysis of these interviews and the 17 matched consultations was undertaken.

Results:

VSR appeared to add value by enabling a deeper understanding of participants’ reactions to specific parts of the consultation dialogue, by helping participants to express concerns and possibly speak more candidly, and by eliciting a more multilayered narrative from participants. The method was broadly acceptable to participants; however, mild anxiety and/or distress was reported or observed in both doctor and patient participants, and this may partly explain why some participants reported behavior change as a result of the video. Any reported behavior change was used to inform the analysis.

Conclusions:

This study demonstrates how VSR may enable a more critical, more specific, and more in-depth response from participants to events of interest and, in doing so, generate multiple layers of narrative. VSR thus goes beyond fact finding and description to generate more meaningful explanations of consultation events.

What is already known?

Video-stimulated recall (VSR) may be particularly useful for exploring consultation topics that are routine and easily overlooked and for exploring nonspoken and nonverbal behavior.

In primary care studies, VSR has been shown to be particularly useful for exploring clinicians’ perceptions, as differences between self-reported and observed behavior can be explored.

There is very little empirical evidence that video recording changes behavior in consultations.

What does this paper add?

VSR appeared to have added value in patient interviews, empowering patients to express what was important to them and to divulge more emotional or reflective responses to the consultation.

VSR is broadly acceptable but has the potential to elicit anxiety and distress in participants.

Participants perceive altered behaviors in themselves and their consultation counterpart when video recorded; these perceptions can be used to positively inform analysis.

Background

The consultation has long been a subject of interest for researchers seeking to gain further understanding of the doctor–patient relationship and interaction. The consultation is the essential unit of medical practice: the context in which data are gathered, diagnoses and plans are made, adherence is achieved, and treatment and support are provided. In 1969, Byrne and Long (1976) audio recorded over 2,500 consultations to research verbal behaviors between doctors and patients; since then, there has been increasing use of video recordings to facilitate observational consultation research (Coleman, 2000). Separately, insight into consultations has also been gained from participants’ own accounts of consultation events, obtained through interviews, focus groups, or workshops (Fischer & Ereaut, 2012).

One theoretical concern with using video recorded consultations for research is that video recording may alter “natural” behavior. The evidence on the extent to which video recording alters the behavior of general practitioners (GPs) is limited to self-report (Coleman, 2000) and to one study that compared behavior in covert and overt recordings using a coding scale of verbal and physical behaviors (Pringle & Stewart-Evans, 1990). The existing literature suggests little or no effect of video recording on GP behavior; however, there is a lack of empirical evidence supporting this assertion (and, to our knowledge, no studies have investigated the effect on patient behavior), and there is little prospect of furthering this evidence base in the absence of randomized studies with covert recording.

Beyond serving as data for analysis of consultation content, video recorded consultations can provide the stimulus for VSR. This is a method whereby researchers show research participants a video of their own behavior (in this article, a doctor–patient consultation) to prompt and enhance their recall and interpretation after the event, for example, in a postconsultation interview. This addresses a key problem with postconsultation interviews, which rely on an individual’s recall of the event: GPs may see in excess of 50 patients a day, and patients are thought to forget 40%–80% of the information from a consultation immediately (Kessels, 2003). Providing a “stimulus” of the consultation, whether written, audio, or visual, is therefore important for eliciting participants’ perceptions of the consultation in its entirety.

Beyond enhancing the postconsultation interview, VSR enables the findings of consultation content analysis to be integrated with data about participants’ associated thoughts, beliefs, and emotions (Henry & Fetters, 2012). The method is also described as video elicitation interviewing or video reflexive ethnography; in the latter, the video may be edited extensively to demonstrate emergent themes and promote reflexivity and problem-solving in participants (usually clinicians), to the extent that the technique is considered as much an intervention as a method of data collection (Carroll, Iedema, & Kerridge, 2008).

VSR is described as resource intensive; Henry and Fetters (2012) called for its use to be restricted to research questions that cannot be answered using standard observation or interview methods. However, the question remains as to what VSR adds over and above these “standard” methods. To address this question, we conducted a systematic review of 28 studies within family medicine research that had used the method (Paskins, McHugh, & Hassell, 2014). We identified VSR as useful for exploring specific events within the consultation, “mundane” or routine occurrences that might easily be overlooked, and nonspoken events (Paskins et al., 2014). For example, in a study of discussion around smoking cessation, doctor participants showed great surprise at their actions on video; the findings presented made it apparent that the videos had uncovered aspects of behavior to which the GPs had not previously given any thought, such as the impact of the computer on discussion of smoking cessation, which was considered a “routine” topic of dialogue (Coleman & Murphy, 1999). In addition, VSR was identified as particularly useful for exploring clinicians’ perceptions, as differences between self-reported and observed behavior can be explored. However, none of the included studies provided empirical evidence or a process evaluation from which to draw further conclusions about how stimulated recall added value over and above nonstimulated recall, particularly with patient participants. Furthermore, none of the included studies directly addressed participants’ responses to viewing their own consultations or referred to any ethical issues arising during data collection. The only findings relating to the acceptability of the method came from one study that excluded patients from the VSR component, asserting that doctors may not find it acceptable for patients to participate in VSR (Blakeman, Bower, Reeves, & Chew-Graham, 2010).

The process evaluation described in this article took place in the context of a study of primary care consultations in which osteoarthritis (OA) was discussed, which produced practical results with important implications for policy (Paskins, Sanders, Croft, & Hassell, 2015). The overarching aim of the evaluation was to fill the gaps identified by our previous systematic review and to provide insights that would inform further use of VSR in health-care research. We used the term “process evaluation,” as we aimed to evaluate the research method, to identify its strengths and weaknesses, and to identify areas where it could be improved. Specifically, we aimed to explore how VSR may have contributed to the findings of our study (the “added value”), to describe participants’ responses to, and the acceptability of, VSR, and to explore whether participants felt that behavior changed in front of the video camera.

Method

Data Collection and Context

This evaluation took place in the context of a United Kingdom (UK) study concerning the discussion of OA in primary care consultations (Paskins et al., 2015). The aim of the original study was to understand what happens when OA is discussed in consultations from the perspective of both patient and doctor. Family physicians (GPs) in seven practices were invited to participate and offered remuneration for their time. The study was approved by North West 8 Research Ethics Committee (11/H1013/3), and all participants gave full written consent. GPs were told the researcher was interested in long-term musculoskeletal conditions and that the purpose of the study was to explore aspects of communication, patient prioritization of symptoms, and doctor experience of the consultation. Patients were told the study concerned communication and patient experience of the consultation.

Fifteen GPs agreed to participate from seven general practices (two general practices declined after being visited about the study). Each consenting GP nominated two half-day clinics to be video recorded between August 2012 and August 2013. All eligible patients were asked to give consent for video recording on three occasions: before the consultation, immediately after the consultation, and 48 hr later by telephone. Of the 252 patients approached, 200 (79.4%) agreed to participate in the initial phase of the study (having their consultation video recorded). Before the consultation, patients completed a brief questionnaire about their demographics and consultation agenda (not presented here). One hundred and ninety-five unselected video recorded consultations were collected from 190 participants. Within 48 hr of each consultation, the first author viewed the video recording once to determine, using predetermined inclusion and exclusion criteria, whether the consultation contained reference to OA (Paskins et al., 2015). Patients and GPs participating in relevant consultations (about OA) were then invited for interview, where possible within 2 weeks of the consultation. The final sample consisted of 19 consultations (i.e., those in which OA was discussed) and subsequent VSR interviews with 13 GPs and 17 patients (2 patients declined the invitation to a postconsultation interview; 4 GPs each consulted with 2 patients). Note that, because this article reports a process evaluation of the method, the sampling framework was designed to answer the research question of the main study rather than that of this evaluation.

Postconsultation VSR interviews were conducted and audio recorded by the first author (a rheumatologist with postgraduate training in qualitative methods and education). For both patients and GPs, interviews comprised three parts. First, a short semistructured interview took place before the video was played. GPs were asked to describe a typical OA consultation before being shown relevant consultations of their own, either whole or as selected clips. Patients were asked about their recollections of the consultation, including the advice and management given by the doctor, prior to video playback. Second, the video was played; at the onset of playback, patients and doctors were shown how to stop the recording in order to comment on anything of interest, anything they were thinking during the consultation, and anything that the researcher may not know about talk in the consultation. Finally, after the video, differences between recalled and observed events were explored. Both doctors and patients were asked about their experience of being video recorded and of viewing the video during VSR. The interview topic guides for both GPs and patients contained a number of questions designed to evaluate the acceptability of the method. After the first few patient interviews, it became clear that these questions did not discriminate between participants, as all patients reported favorable experiences; the decision was therefore made to reduce the number of questions on this topic and to evaluate acceptability using other data, namely observations and responses to other questions within the interview. Interview guides are available as Online Supplementary Data.

Analysis

The main study used thematic analysis (Paskins et al., 2015). The analysis in this article relates to the evaluation of the method and used data primarily from the postconsultation (VSR) interviews (transcripts, audio recordings, and field notes), supported by the video recorded consultation data. Analysis was in part deductive, in that the key overarching themes of “utility of VSR” (added value), “participant responses to VSR” (acceptability), and “reported and observed behavior change in response to video” were integral to our research questions, with the first two having been identified as important in our previous systematic review. Within these themes, an inductive thematic approach was taken. NVivo 9 was used to aid analysis.

Under the overarching theme of “utility,” the starting point was to record and code the comments made during playback in the VSR interviews and to group them into themes. The themes were agreed by discussion between all authors, and simple frequency counts were made. Following this, the discussion between the interviewer and participant during and immediately after playback was analyzed. Sections of talk in which participants reflected on observed events (from the video playback) were analyzed in more depth, and emergent themes were identified. Next, talk after playback was compared and contrasted with talk before the video was played, and, again, emergent themes were identified. Finally, for the theme of utility, the main study findings and NVivo analysis file (concerning how OA is discussed in the consultation) were reviewed to identify any other examples of VSR contributing to the results. Under the overarching themes of “participant responses” and “behavior change in response to video,” VSR interview transcripts were reviewed specifically for participants’ comments relating to being video recorded and/or participating in VSR, in addition to their answers to the more direct questions about their experiences. Field notes were also examined. Author 1 coded all transcripts, with Author 2 coding a sample alongside. The aim of independent coding was to understand cross-disciplinary perspectives on the data and to come to an agreement on shared meanings and interpretations. For this reason, statistically calculating levels of agreement was deemed too simplistic a means of assessing reliability; reliability was instead addressed in a more nuanced manner through detailed discussion. In practice, disagreements were few and were resolved through discussion between Authors 1 and 2.
A coding framework across the three overarching themes was discussed by all coauthors (Author 4, a professor of medical education; Author 2, a social scientist; and Author 3, a professor of epidemiology and primary care). Following these discussions relating to the first phase of analysis, transcripts and consultations were reviewed to examine consultation events in light of participants’ responses to the method and to consider how these responses, and reported behavior change, may have influenced the findings.

Results

Participant Characteristics

Three of the 15 GPs were female, 7 were GP trainers (experienced in using video), and the median number of years worked as a GP was 17 (range 3–29). Eleven of the 17 patient participants were female, all were Caucasian, 12 were retired, and the median age was 67 (range 49–85).

The Results section is laid out as follows: How VSR contributed to the findings is discussed first, under the overarching theme of utility of VSR. Participant responses to VSR are then described, followed by an account of how these responses informed the study findings.

Utility of VSR

In general, the VSR component of the research underpinned findings in the main study (Box 1) relating to patient agendas and concerns, GP assumptions, and a fuller understanding of the construct of OA. The areas in which VSR may have added value are described here under three headings: spontaneous comments during playback, probing (microrecall), and changes in narrative after playback.

Box 1. Contextual Information for Method: Summary Findings From the Primary Study Regarding How Osteoarthritis (OA) Is Discussed in Primary Care Consultations (Paskins et al., 2015).

The study reported that the topic of osteoarthritis arises in the consultation in complex contexts of multimorbidity and multiple, often not explicit, patient agendas.

Dissonance between patient and doctor was frequently observed and reported; this occurred when general practitioners (GPs) normalized symptoms of osteoarthritis as part of life and reassured patients who were not seeking reassurance. GPs subconsciously made assumptions that patients did not consider OA a priority and that symptoms raised late in the consultation were not troublesome. GPs used “wear and tear” in preference to “OA” or didn’t name the condition at all; the lack of a clear illness profile results in confusion between patients and doctors about what osteoarthritis is and its priority in the context of multimorbidity.

Spontaneous comments during playback

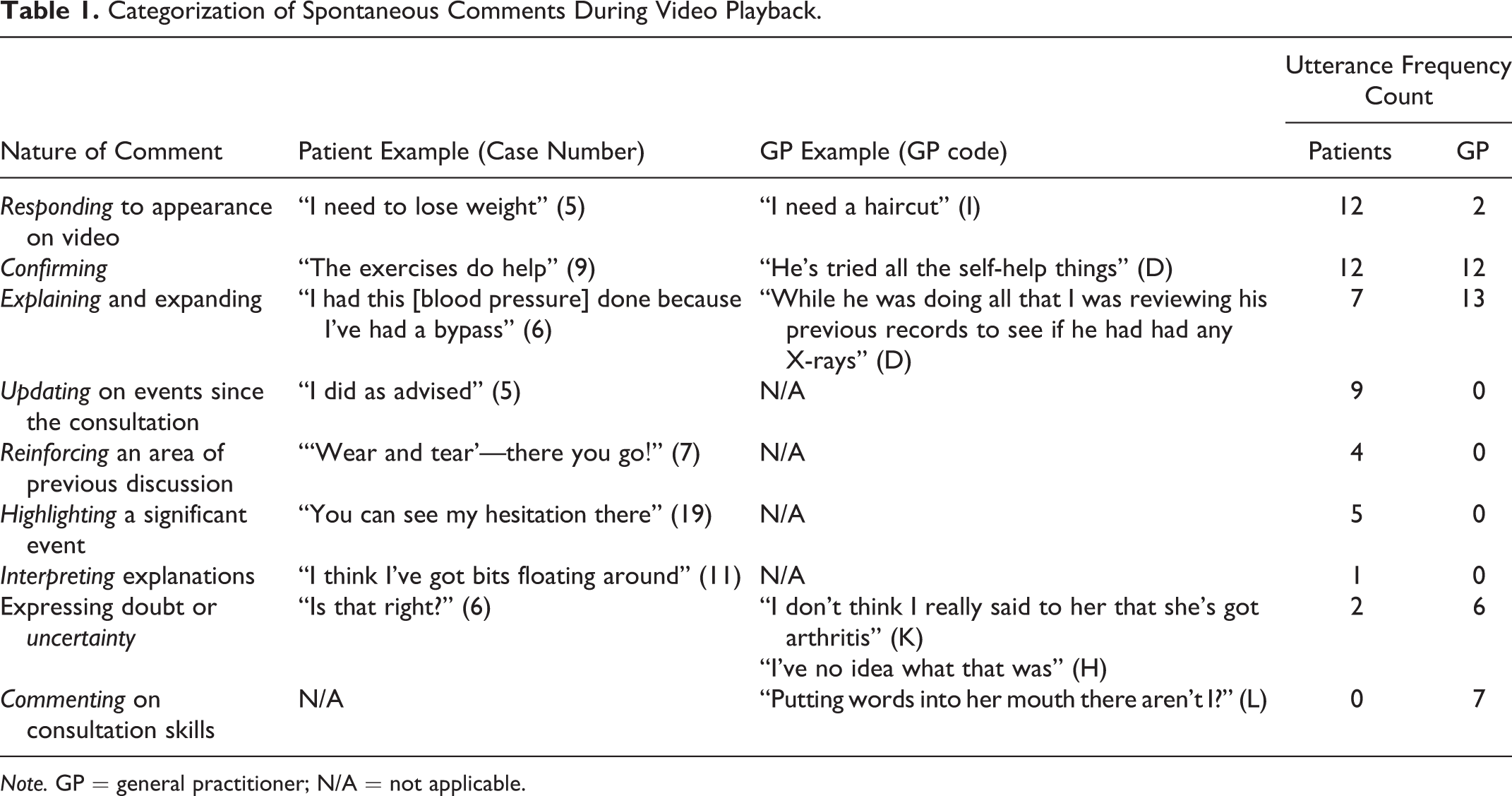

Although both patients and GPs commented infrequently during playback (a mean of 3 times per playback), these spontaneous comments proved very useful in highlighting, in the absence of interviewer prompting, what was important to participants and what concerned them. It is difficult to see how this would have been achieved without VSR. The comments were categorized into nine themes, as shown in Table 1.

Table 1. Categorization of Spontaneous Comments During Video Playback.

Note. GP = general practitioner; N/A = not applicable.

For patients, particularly useful comments were those “highlighting significant events” and “reinforcing” areas of previous discussion in the consultation. These interjections allowed patient participants to demonstrate what was important to them. On two occasions, patients identified a key event in the consultation that might otherwise have been overlooked and that was then explored further in the interview. One example was a psychological concern that the patient had raised during the consultation and to which the GP had not responded.

In the interviews with GPs, comments expressing uncertainty or doubt were particularly useful. For example, GP H questioned their own explanation of the cause of a flare of OA; this was the only point in the interview at which the GP suggested that their knowledge may not be up to date, suggesting that GPs were possibly more candid when commenting during playback. This added to the analysis of how doctors construct OA as a condition.

During playback, Patient 15 commented on the GP’s apparent failure to pick up on their joint problems. This was out of keeping with all of their other statements about the GP in the interview, which were extremely complimentary, and was a further example of possibly more candid responses during playback.

Probing—“micro” recall

Following video playback, the immediacy of the stimulus (the video recorded consultation) was useful for “microrecall,” that is, for the researcher to ask questions about a specific part of the dialogue where the participant’s intentions or thoughts were not altogether clear. The video facilitated interrogation of small sections of talk that might otherwise have been forgotten by the participant. For example, Patient 1 was asked why they were silent after the GP suggested physiotherapy. The patient then described a significant previous experience with physiotherapy in which they felt they had had to fight to have their OA addressed. This added to the analysis of patient-perceived dissonance between doctor and patient; in this example, the patient felt that the management of their OA was not active enough.

Other examples included asking patients what they had meant by a certain phrase or what they had been going to say when they tailed off halfway through a sentence. This enabled direct comparison of the unspoken assumptions of doctor and patient in the consultation, as the following quotes from Consultation 14 and the matched postconsultation interviews illustrate:

GP: Yeah, I—I’m not sure there’s anything realistic we can do about it. Um, I think if you’ve used your knees that hard, then they’re actually doing very well and if you can cycle as much as you like and you can walk as much as you like, then I wouldn’t interfere, I wouldn’t suggest we start doing things to your knees

Patient: No, I speak to people and they say “oh, no, start messing around and things might get worse mate”.

GP: Yeah, yeah. (Extract from Consultation 14)

Interviewer: So you said “people say stop messing around because things might get worse.” What did you mean by that? I wasn’t sure if you meant doing too much exercise, or if you meant having surgery, messing around with surgery.

Patient: Oh no, not messing around with surgery, no, it was just messing around with doing too much—I tend to do…[sighs] too much I suppose. And people are saying I’m getting older now.

He’s referring to people having some sort of intervention, medical intervention, not to people saying you must stop running or exercising I’m sure. (GP I)

This misunderstanding would not have been evident without VSR. The patient took this part of the discussion to mean that the GP was endorsing his view that he was doing too much exercise, which was not the GP’s intention. This episode of dissonance also added to the primary analysis relating to a lack of active management for OA; the quote demonstrated part of a rationalization on the part of the GP that they were right not to “interfere.”

Changes in narrative following playback

Following video playback, participants frequently expressed viewpoints different from those they had expressed previously.

When patients were initially asked about the consultation, most tended to report factual events, in addition to a general level of satisfaction with the consultation. After playback, more reflective responses were elicited:

He didn’t offer anything, “Is it, is it stopping you from doing anything?” And I said, “No.” He said, “Well, carry on then,” er, because maybe if they start doing any intervention it might, sort of, start affecting, what I can do, or could do, so, yeah…it [the consultation] was very good actually. (Patient 7, before playback)

I just wished I could have been taken a bit more seriously and gone into what was the problem with my knee. (Patient 7, after playback)

But it did remind me that, [they] had mentioned really, an explanation, which I’d obviously dismissed at that point. (Patient 1, after playback)

The stimulus of the video provided a way of gently probing GP statements in real time, during the interview, in a neutral way. This had the potential to result in more detailed reflection on the part of the GP. For example, the researcher noted a recurring behavior in GP K of giving management advice without giving a diagnosis. Clips from several consultations were shown to illustrate this observation without directly questioning the GP about this behavior. The GP recognized the pattern, of which they reported having been unaware, and was then able to reflect on it in more detail, giving reasons for their reluctance to give a diagnosis, including a wish not to promote a “sick role.”

In a further example, GP E was asked about their explanations for OA. They replied that they did not have a “standard patter” and would personalize explanations depending on the needs of the patient. However, in the VSR interview, they observed themselves using the term “wear and tear,” with a similar form of words, for two patients. The GP had previously denied using the term. In this example, the GP constructed an explanation for their use of the term on this occasion, stating that they were echoing the patient’s words. However, in these cases, the doctor had used the term first.

Participant Responses to VSR

In this section, the impact of the method on participants is discussed in terms of both responses to being video recorded and responses to viewing the video in the postconsultation interview. Responses are discussed in terms of expressed emotions, under the subthemes of acceptance, anxiety and distress, boredom and disinterest, and feeling vulnerable or threatened, followed by a description of reported and observed behavior change in the consultation.

Acceptance

When patients were asked about being video recorded, most said they had either been unaware of the camera or had forgotten it was there. In general, patients were positive about the VSR component, particularly when asked directly about their experience of viewing their own consultation:

It reminded me of a friend that I think is a bit eccentric, and, I think I’m getting just like her! (Patient 1)

It was, you know, you think, “Ooh, what, how did I sound, what did I look like?” But, yeah, it was not a problem at all, no. (Patient 7)

All GPs reported the VSR component to be acceptable, although with varying degrees of comfort. No GPs objected to patients watching the consultations, although they recognized that this was novel:

I mean that’s gotta be okay really, if I can view the video of them, they can view the video of me. They’re sitting there anyway, so they should only hear and see the same things that they can see in the consultation, as long as I’m not pulling faces behind their back or anything like that.…but I’ve—I’ve not seen that before. (GP I)

Anxiety and distress

Three patients remarked that they had been conscious of trying not to say anything “silly” or “stupid” during the consultation, suggesting that the presence of the video camera may have evoked some anxiety. Some patient participants reported being uncomfortable viewing themselves during VSR:

Slightly embarrassed. I don’t really like seeing it. I thought I wish I’d worn some better clothes, rather than just my old jeans. It was alright. (Patient 18)

I didn’t like it…The whole experience. I don’t like to think that, you know, my words are taped and things, because I might say something stupid or foolish—or personal. (Patient 15)

GP A initially reported that they had not noticed the camera:

I didn’t really notice it being on, to be honest, and patients didn’t either, I don’t think. (GP A)

Table 2. GP Behavior Change as a Result of Video as Perceived by Self and Patients.

Note. GP = general practitioner.

However, later in the interview, while reflecting on a complex consultation, GP A said:

I remember, sort of, thinking, “Oh, no, the video’s on and I’ve not got all these results back and I can’t remember what we did,” and just talking. And I want to listen, I want to be seen to listen, but I want to know what stage we’re, we’re coming from, and so I was, kind of, kicking myself about that. (GP A)

Boredom and disinterest

Some patient participants questioned the purpose of viewing the video during the VSR interview or found it boring:

Is this getting us anywhere, getting me anywhere me watching this now? I know what’s coming next and how long it takes and it doesn’t seem important that we watch it now. (Patient 2)

That was boring wasn’t it? (Patient 14)

Feeling vulnerable or threatened

The two GPs who reported feeling embarrassed or uncomfortable during VSR appeared to do so because they were not entirely happy with their consultation skills and possibly felt vulnerable about their practice:

Well, I felt slightly embarrassed, really. I thought…because I’m concentrating on the medical thing, and blah, blah, blah and then she’s added on…[her joints] so yeah. I haven’t really explored it. (GP J)

Ooh, it’s horrible watching yourself on video, isn’t it? I used a lot more medical jargon than I realized I did. (GP A)

Other GPs expressed unease about who was viewing their consultations and why particular questions were being asked:

You’re a professional, so it doesn’t matter. If it’s a stranger, then you worry…A social scientist would look at behavioral patterns and all that isn’t it? So that would make me uncomfortable. (GP F)

I’m trying to understand why you’re asking some of the questions. (GP E)

Reported and observed behavior change in the consultation

Patient and doctor perceptions of whether the doctor’s behavior was affected by the video camera are listed in Table 2. In four consultations, both GPs and patients reported that there may have been a modest change in GP behavior. Patients talked about being given slightly more time than usual or expected, and also about encountering GP attitudes to their joint problem that differed from those they usually encountered. GPs L and H may have been particularly mindful of being “professional” and of performing a “model consultation”: one was a GP trainer, and the other disclosed a bad experience with the video component of their professional exams. Interestingly, the GPs who consulted with the two patients who reported significant GP behavior change denied any influence of the camera.

Most of the GPs perceived that patients were comfortable with being video recorded, some even reporting that patients were “performing” for the camera, described by one GP as being more “joking and jovial.” In contrast, GPs J and K felt that patients might be “more formal” and more careful about their choice of language. For example, one described how a patient would always ask after the GP’s children but had not asked these sorts of more personal questions when video recorded. However, these remarks related to one or more of the 178 consultations collected in the study that were not about OA and were not part of the in-depth analysis; no GP reported believing that any of the 17 patients in the study had behaved differently.

How Participant Responses to VSR Informed Study Findings

Reported behavior changes were used to inform study findings and questioning during VSR. Where GPs suggested more consultation time had been given as a result of the study, issues of prioritization could be discussed, and prioritization emerged as a key theme in the main study analysis (Box 1). Doctors talked about the “optional” parts of the consultation that would have normally been omitted; this revealed attitudes to prioritization of joint pain in the context of comorbidity that would not otherwise have been apparent. Furthermore, the way additional time was used was informative; in two examples where GPs reported the consultation to be longer than usual, the additional time was used for screening of comorbid conditions rather than spending more time on the presenting complaint (joint pain).

Two patients reported that they had perceived a more positive GP attitude (to their OA) in response to the video; their perceptions of GP attitudes could then be explored in more depth in the interview. Patient participants who reported perceived GP behavior change were able to contrast the GP’s attitude to their joint pain during the video recorded encounter with the attitude they felt they normally experienced, and the exact nature of the difference could be explored.

The influence on study findings of the more negative emotions expressed in response to VSR is harder to disentangle and is subject to some speculation. One interview with a participant reporting some anxiety had to be terminated early. There was some evidence that the interviews with patients expressing disinterest were less productive than others, one being very short and the other containing little talk on the subject of interest. It is not possible to determine the extent to which these observations were attributable to the VSR component of the research.

Discussion

This study set out to determine both the specific added value of VSR, in the context of health-care research, and the impact of the method on participants. The findings demonstrate VSR adds value by enabling a deeper understanding of participants’ thoughts and reactions to specific parts of consultation dialogue, by facilitating participants to express concerns and possibly speak more candidly, and by eliciting a more multilayered narrative from participants.

This study is, to the authors’ knowledge, the first to report participants’ responses to the method. The method was broadly acceptable to participants; however, levels of mild anxiety and/or distress were reported or observed by both doctor and patient participants, and this may explain in part why some participants reported behavior change as a result of the video. In the main, the responses of participants to the method were instrumental in understanding key themes in analysis.

Added Value of VSR

In our findings, we have reported that comments during playback are useful for highlighting significant events that may be overlooked by the researcher. Pomerantz (2005) also notes this advantage, although she warns about the limitations of relying solely on comments made during playback for analysis, as these may be intended for the researcher or may have no bearing on the events during the video recorded interaction. The finding that VSR is useful for recalling events that may have been forgotten is also not new and has been described previously (Coleman, Murphy, & Cheater, 2000; Epstein et al., 1998).

However, the “before” and “after” design of this study has led to interesting and novel findings relating to the change in narrative produced following playback. Before video playback, GPs described typical OA consultations in which OA might present as a sole complaint. The reality of the observed consultations, which contained fragments of discussion about OA interspersed with talk on multiple comorbid conditions, prompted more discussion on the prioritization of OA relative to other long-term conditions; this in turn uncovered deep-seated attitudes to the condition that were not evident in the interview before video playback was introduced. GPs also justified their actions in relation to their views or professional norms. The video challenged these “moral” accounts, and this contributed to greater critical reflection by doctors on their actions, motives, and beliefs. Checkland, Harrison, and Marshall (2007) and Pope and Mays (2009), among others, have previously described the limitations of standard interviews, suggesting that health professionals, in particular, may construct explanations for their behaviors during interviews that do not chime with findings from observations. VSR is useful both for challenging such explanations and for prompting discussion of behaviors and events that doctors do not immediately recognize or disclose. VSR moves analysis from a GP’s generalized response to a specific, empirically situated focus, where the observed reality challenges the tendency to provide moral or ideal accounts.

A previous systematic review of the use of this method failed to identify any benefit of using VSR with patients (Paskins et al., 2014). However, this empirical study demonstrates that the video appeared to empower patients to express what was important to them and to divulge more emotional or reflective responses to the consultation, moving from “contingent” fact-based accounts to “core” narratives with deeper cultural meaning (Bury, 2001), for example, frustration with the normalization of joint pain associated with aging. These changes in narrative emphasize the changing and dynamic nature of people’s perceptions; the same reality viewed from different vantage points can be interpreted in contrasting ways by the same person.

Participants’ Responses to VSR

In general, both patients and doctors reported being video recorded and participating in VSR to be acceptable. GPs did not object to patients participating in VSR, despite this being reported as a possible barrier in previous research using this method (Blakeman et al., 2010). However, the finding that some GPs appeared to be unaware of this component of the study suggests that, in their haste to sign up, some GPs were not fully aware of the study details; this illustrates the difficulty of gaining informed consent from time-pressed health professionals.

Despite the method being broadly acceptable, participants did describe various responses to either the video or VSR, including anxiety, distress, self-consciousness, and boredom. Among patient participants, the response to the method was highly variable. To our knowledge, the finding that patients may find viewing their consultation distressing or even boring has not been previously reported. It is possible that some of these emotions hindered participants from opening up in the postconsultation interviews, although it proved difficult to provide any empirical evidence to confirm or refute this hypothesis.

In this study, there was evidence that the researcher’s status as a health professional put GP participants at ease but may also have led some of them to feel challenged. Coar and Sim (2006) suggest that a social scientist interviewer may have the advantage of not making a doctor feel they are giving the “right or wrong” answer in an interview; however, the account of one participant in this study suggests that GPs may prefer to conduct VSR with a peer. Whether the study rheumatologist was perceived as a peer is not clear, with some evidence of GPs possibly feeling threatened or challenged in this study. One explanation for this may be that the researcher was considered not as a peer but as a specialist in the research topic. Another explanation is that the GPs did feel threatened by the visual challenge to their reported behavior.

Health professionals using VSR in educational contexts should be aware of the possible anxiety and distress that may result from this method.

Behavior Change in Front of the Video Camera

A theoretical concern regarding the use of video recordings to study the consultation has been the extent to which the video camera may influence participant behavior. The results of this study provide evidence to suggest that video does influence behavior, with doctors (and patients) making efforts to behave better, consciously or otherwise. Alternatively, behavior change may have been a response to anxiety about the study. In the case of more time being given, this may have been a logistical impact of the study, as slightly fewer patients were booked per half-day surgery to allow for the consent process. Increased consultation time could also have led the patient to perceive that the GP was more prepared to listen or more interested in their problems.

However, an important question is to what extent this made a difference to the findings. First, evidence suggested that, although behavior was modified, it was not changed substantially. There were several occasions where GPs expressed surprise at their actions or language and where the observed consultation did not match the hypothetical typical consultation they had described. In all but one of the GP interviews, GPs were critical of their behavior in some way. Furthermore, there was great variability in the findings, again evidence that GPs were not following a “model” consultation. Second, the reported behavior change could be used positively to inform findings. VSR affords the advantage of not studying the video recorded consultation in isolation; the postconsultation stimulated interview provides an opportunity to explore with participants whether they perceive that they, or the person with whom they are consulting (doctor or patient), are being influenced by the video process, and the nature of this influence. In our example, the reported GP behavior change mostly related to time management and attitudes to OA; this was instrumental in understanding key themes relating to prioritization and GP attitudes in the primary analysis.

Lomax and Casey (1998) have previously described the central importance of participant responses to video (not VSR) in their study of body taboos and midwifery; in that study, the circumstances in which midwives chose to turn the video on or off, and talk about the video recorder, revealed insights into cultural beliefs about body exposure and intimate examination. Thus, rather than being considered a threat to validity, altered behavior can provide further stimulus for reflexive analysis. Previous studies using VSR have not capitalized on the opportunity either to identify behavior change or to include it in analysis.

Study Limitations

This evaluation is subject to a number of limitations. It is an evaluation of one relatively small study. The characteristics of the lead researcher, a specialist in the subject of interest who conducted the interviews and led the analysis, are likely to have influenced the findings. However, this is a limitation of all qualitative studies, and steps were taken to counter it in analysis by having all authors agree the themes and two authors code the data. Questions by the researcher on the acceptability of the method may not have elicited participants’ true feelings about the study, as participants may have been reluctant to disclose them; for this reason, analysis paid careful attention to observations and field notes in addition to patient interview responses. GPs in the study were arguably a self-selected cohort who were comfortable with being video recorded. Furthermore, the demographics of our patient sample may affect our findings because of the social meanings and processes attached to ethnicity and gender; the selection of consultations in which OA was discussed resulted in a study sample that was older and included more retired and female participants than the original population of consenters to video from which the sample was selected. Future studies using this methodology may find it useful to build in an evaluation of the VSR process by a third party to explore the level of distress, if any, that arises as a result of participation. A further consideration is the extent to which the findings can be transferred to other conditions or to settings other than primary care. The findings relating to the primary aim of the study, understanding the OA consultation, revealed clinical uncertainty surrounding OA, which may have contributed to the findings in this article; specifically, anxiety and discomfort in doctors may have been in part a product of the subject being studied rather than of the methodology.
Finally, we have described the added value of VSR in the absence of a “control,” and for some of our reported findings, it is not possible to determine whether the added value or participant response would have occurred in a non-VSR interview. However, the ability to compare interview responses before and after video playback provided a form of within-person control.

Implications for Researchers Using VSR

This study identified a number of advantages of using VSR in health-care research, as summarized in the section on the added value of VSR. Disadvantages of the method revealed in this study include the potential for either video recording or video playback to feel intrusive and/or to induce anxiety. There are also important additional ethical considerations, including the potential for the video to be seen or heard by family and friends during replay interviews in patients’ homes. Although we found no evidence of this in our study, the potential for the method to affect the ongoing doctor–patient relationship must also be considered.

VSR is used for education as well as research and is also used in other disciplines such as psychology and second language research (Gass & Mackey, 2000). We feel that researchers and educationalists using VSR should be aware of the possible anxiety and distress that may result from this method. In either research or educational contexts, participants need to be informed of the potential for distress as part of full informed consent. Behavior change in front of the video should be considered not a threat to validity but a stimulus for reflexive analysis. Further studies using VSR need to consider the role of the researcher in the process. Specifically, our findings suggest that the doctor participants afforded “insider” status to the researcher because of their shared status as health-care practitioners; ensuring similar professional backgrounds of researcher and participant may engender trust and may be important across other disciplines. Finally, acceptability of the method needs further evaluation, and researchers employing the method may consider third-party evaluation to explore acceptability further.

Conclusion

In summary, this study adds to the existing literature on VSR in health-care research by describing specifically how this method enables a more critical, more specific, and more in-depth response from participants to events of interest and, in doing so, generates multiple layers of narrative. This results in a method that, in our view, goes beyond fact finding and description and generates more meaningful explanations of consultation events and the meanings associated with these events; in essence, it gets straight to the core of what is salient to participants. The benefits of VSR need to be considered in conjunction with the important ethical considerations and the potential for this method to be intrusive; characteristics of the researcher are likely to be important in managing this careful balance.

Footnotes

Authors’ Note

At the time this study was conducted, Peter Croft was a UK National Institute for Health Research Senior Investigator. Dr. Tom Sanders was supported in the preparation/submission of this article by the Translating Knowledge into Action Theme of the National Institute for Health Research Collaboration for Leadership in Applied Health Research and Care Yorkshire and Humber (NIHR CLAHRC YH). www.clahrc-yh.nir.ac.uk. The views expressed are those of the authors and not necessarily those of the NHS, the NIHR, or the Department of Health. The Centre has established data sharing arrangements to support joint publications and other research collaborations. Applications for access to anonymized data from our research databases are reviewed by the Centre’s Data Custodian and Academic Proposal (DCAP) Committee, and a decision regarding access to the data is made subject to the NRES ethical approval first provided for the study and to new analysis being proposed. Further information on our data sharing procedures can be found on the Centre’s website or by e-mailing the Centre’s data manager.

Acknowledgments

We wish to thank and acknowledge the help of Chan Vohara, Debbie D’Cruz, and Charlotte Purcell for administrative support; Professor R. K. McKinley, Professor Chris Main, and Professor Krysia Dziedzic for input in study design; and the participating GPs and patients.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This article presents independent research funded by the National Institute for Health Research (NIHR) under its Programme Grants for Applied Research Programme (Grant Reference Number RP-PG-0407-10386). This paper also presents independent research funded by the Arthritis Research UK Centre in Primary Care grant (Grant Number 18139).

References

Supplementary Material
