Abstract

Detailed here is the creation and application of a replicable method bricolage that brings together Discourse Analysis, discourse analysis, and the theory of reasoned action to examine attitudes and beliefs of university science professors toward the discipline of education. The method used a two-phase analysis. The first phase looked for phrases that could be defined as either an attitude or a belief based on definitions taken from the social psychology and communication studies literature. The second phase interpreted the overall data to explore the influences on the formation of the attitudes and beliefs as well as to support or refute the findings from Phase 1. The need for a replicable Discourse Analysis method is apparent in the education literature, as is the need for a solid definition of what constitutes an attitude or a belief. The method outlined here provides concrete definitions for attitudes and beliefs, offers a method for extracting both constructs from the data, and incorporates an internal crystallization process for looking at and comparing emergent themes from both phases of analysis.

Two ideas are presented in this article. The first addresses the continued desire by many researchers to examine attitudes and beliefs as they relate to a variety of topics. The second relates to approaches used to analyze such data. There is a rich base of research on which to draw when attitudes and beliefs are the focus of research.

As interest in and use of qualitative and emerging mixed-methods approaches increases, new ways to think about data analysis are also emerging, including a reliance on expertise drawn from multiple disciplines. As an example of this cross-disciplinary work, the methodology outlined here draws from several disciplines, including communication studies, science education, rhetoric, and social psychology. Additionally, while this methodology is in keeping with more traditional qualitative research, elements of quantitative research are incorporated throughout, primarily in the use of the theory of reasoned action (TRA).

Method bricolage (Fogelberg, 2014a) was introduced in a study of zoo signs and further developed in a study of university professors (Fogelberg, 2014b). It introduces a potentially replicable analytic method that uses discourse analysis and Discourse Analysis in discrete, but still qualitative, manners to specifically discern attitudes and beliefs, as defined by Fishbein and Ajzen’s (1975) TRA.

The term bricolage was introduced into qualitative research by Claude Levi-Strauss (1962/1966) and has subsequently been expanded by a variety of scholars (Denzin & Lincoln, 2011; Wetherell, 1998). This article describes the creation of a replicable analytic model focused on extracting attitudes and beliefs about the discipline of education. By discussing the concepts of attitudes and beliefs, the TRA, method bricolage, and the specific analytic technique, it is hoped that science educators interested in attitudes and beliefs may use this method to produce research that is well grounded in both theory and practice as well as comparable across studies.

Defining Attitudes and Beliefs

Attitudes and beliefs are defined both conceptually and structurally. While conceptually there is significant agreement within the research community, structurally there is ongoing debate. The conceptual definition of attitudes emerged very early in research covering this construct and evolved over time, ultimately resulting in the accepted idea that they are one part of the mental domain of humans, and specifically contained within the domain of affect (Ajzen & Fishbein, 1977; Allport, 1967; Droba, 1932; Eagly & Chaiken, 1993; Fishbein, 1967a, 1967b, 1967c; O’Keefe, 2002; Thomas & Znaniecki, 1918).

Structurally, some researchers view attitudes as multidimensional (Eagly & Chaiken, 1993, 2005; Rokeach, 1968; Rosenberg & Hovland, 1960), while others view them as being unidimensional (Ajzen, 1991, 2012; Ajzen & Fishbein, 1977; Osgood, Suci, & Tannenbaum, 1957). For the purposes of this article, attitudes are viewed structurally as being unidimensional. Thus, taking into account the accepted concept of attitudes and using a unidimensional structural view of attitudes, the definition of attitudes for this article is in keeping with the currently accepted definition: a learned personal evaluation of a specified object, with the term object being a general designator representing anything from a value to a belief, person, event, product, policy, institution, or other identifiable “thing” (Eagly & Chaiken, 1993, 2005; O’Keefe, 2002; Rokeach, 1968; Thomas & Znaniecki, 1918).

Early discussions about beliefs are virtually nonexistent in the scholarly literature, and unlike attitudes, they are always paired with another idea (see Krech & Crutchfield, 1948; Rokeach, 1968; Scheibe, 1970; Thorndike, 1934). In contrast to attitudes, beliefs attract more controversy about their conceptual definition and more uniformity with regard to their structure.

Today what is generally accepted in conceptually defining beliefs is that attitudes are a result of or predicated upon the beliefs salient to a particular attitude (Ajzen, 1991, 2012; Albarracin, Johnson, Zanna, & Kumkale, 2005; Eagly & Chaiken, 1993; Fishbein, 1967a, 1967b, 1967c; Fishbein & Raven, 1967; Rokeach, 1968), and structurally beliefs do not exist in isolation but are intimately connected with one another as well as with other distinct mental constructs. With significant support for the idea that beliefs are multidimensional, or more spatially complex, the separation between attitudes (unidimensional) and beliefs (multidimensional) provides a specific way to delineate between the two, thus addressing one of the ongoing challenges throughout the years of research into these constructs (see Dewey, 1922; Eagly & Chaiken, 1993; Fishbein, 1967a; McGuire, 1960; Rokeach, 1968; Thorndike, 1934).

Measuring Attitudes and Beliefs

The predictive value of attitudes has been proposed, challenged, and subsequently supported within given parameters (Ajzen, 1991, 2012; Ajzen & Fishbein, 1972, 1977, 2005; Festinger, 1964; Fishbein, 1967a, 1967b, 1967c; O’Keefe, 2002; Wicker, 1969); it is these parameters that helped form the theory used for the basis of the research upon which this methodology is built. Currently, the most commonly employed measures of attitudes may be broken into three overarching categories: direct (asking a participant for an evaluative judgment of the attitude object), quasi-direct (getting at attitudes by measuring responses that are attitude relevant and offer a basis for assessing the attitude), and indirect (neither direct nor quasi-direct assessment) techniques (O’Keefe, 2002).

The methodology outlined here called for an indirect assessment, as questions constructed for the semi-structured interviews were meant to elicit, without soliciting, attitudes and beliefs of the participants. This is the qualitative equivalent of the indirect assessments discussed above but is in keeping with a seemingly inconsequential reference by Droba (1932), who detailed the case method, a qualitative approach to studying attitudes. He was not convinced this method was a good approach, however: “Some writers … have tried to analyze attitudes by the use of this method. However, an analysis of this type is subject to crude errors since it is made by a single individual” (Droba, 1932, p. 311). He later allowed for some utility, citing a single advantage: It could explain attitudes previously measured, allowing one to trace the “development of attitudes in one individual or in a smaller group” (p. 311).

Beliefs have been less directly measured in the research. They tend to be associated with, and emerge from, sets of attitudes, but most discussions in the literature focus on measuring attitudes. The method outlined here views attitudes and beliefs as individual, yet connected, constructs. Thus, beliefs are measured in parallel with attitudes. It is important to separate the two constructs to interpret them within the context of the TRA.

The TRA

The TRA was instrumental in the development of this methodological approach to determining attitudes and beliefs. In spite of its roots as a quantitative tool, it provides the best theoretical fit for helping define and subsequently identify the differences between attitudes and beliefs during the analytic process.

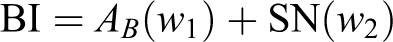

The basic premise of the TRA is that “attitudes develop reasonably from the beliefs people hold about the object of the attitude” (Ajzen, 1991, p. 191). The TRA also recognizes that any number of beliefs about an object may be held, but those most salient to the particular situation have the most influence on the subsequently formed attitudes (Ajzen, 1991, 2012). Finally, the TRA puts forth the concept that behavioral intention is a function of both the attitude toward performing the specified behavior and the individual’s beliefs about what specific others (normative referents) think should be done (Ajzen, 2012; Ajzen & Fishbein, 1972). Later refinements of the TRA identified normative behaviors as subjective norms and divided subjective norms into injunctive norms, or those beliefs based on inferences made about what “important others want us to do,” and descriptive norms, or beliefs based on “the observed or inferred actions of those important social referents” (Ajzen, 2012, p. 17). Thus, each behavioral intention is based on a grouping of a set of beliefs and attitudes that are distinct from each other. Mathematically, it appears as follows (Ajzen, 2012, p. 16; O’Keefe, 2002, pp. 102–103):

$$BI = A_B\,w_1 + SN\,w_2,$$

with

$$A_B = \sum_i b_i e_i \quad \text{and} \quad SN \propto \sum_i n_i m_i,$$

where $BI$ = behavioral intention, $A_B$ = attitude toward performing the behavior, $w_1$, $w_2$ = factor weights, $SN$ = subjective norms, $n_i$ = normative belief, $m_i$ = motivation to comply with referent $i$, $b_i$ = strength with which each belief is held, and $e_i$ = evaluation of each belief.

Within the context of the TRA, different attitudes stem from different beliefs, and there is a linear move from beliefs to attitudes to behavioral intentions that runs each “set” parallel to other sets that might fall under the same category but maintain their own identities. In other words, to change an attitude, one must first change the beliefs (whether through changing the belief strength, the belief salience, or the belief in) associated with that attitude (O’Keefe, 2002), and one cannot change a parallel attitude to achieve the same goal (e.g., changing a teacher’s attitude toward student assessments will not have an effect on a teacher’s attitude toward reading education research).
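As an illustration of how the TRA terms combine, the following minimal Python sketch computes a behavioral intention under the standard weighted-sum form of the theory, BI = w1·A_B + w2·SN. It is a sketch under stated assumptions, not part of the original study: the function names, the numeric scales, the weights, and the sample values are all hypothetical, and the proportionality between SN and the summed normative terms is assumed to have a constant of 1.

```python
# Minimal sketch of the TRA weighted-sum form (all names and values are hypothetical).

def attitude_toward_behavior(beliefs):
    """A_B: sum of b_i * e_i over (belief strength, belief evaluation) pairs."""
    return sum(b * e for b, e in beliefs)


def subjective_norm(norms):
    """SN: sum of n_i * m_i over (normative belief, motivation to comply) pairs.

    The source states only proportionality; a constant of 1 is assumed here.
    """
    return sum(n * m for n, m in norms)


def behavioral_intention(beliefs, norms, w1, w2):
    """BI = w1 * A_B + w2 * SN, with w1 and w2 as factor weights."""
    return w1 * attitude_toward_behavior(beliefs) + w2 * subjective_norm(norms)


# Invented example: two salient beliefs and one normative referent.
beliefs = [(3, 2), (1, -1)]   # (b_i, e_i) pairs
norms = [(2, 3)]              # (n_i, m_i) pairs
bi = behavioral_intention(beliefs, norms, w1=0.5, w2=0.5)
print(bi)
```

Because each attitude is built only from its own salient beliefs, changing the pairs in `beliefs` is the only way to move `A_B` in this sketch, which mirrors the point that a parallel attitude cannot be substituted to achieve the same change.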

The Need for a Replicable Analytic Model

Rogers et al.’s (2005) review of 46 articles in the education literature using critical discourse analysis (CDA) appears to use this term interchangeably with both discourse analysis and Discourse Analysis. Definitions of CDA, the research/focus question, study context, data sources, and data analysis methods for each article were provided. In their analysis of the analytic methods used across the articles, three main frameworks were identified, including Fairclough’s three-tiered method, poststructural discourse, and discourse analysis. The authors note, however, “the actual analytic procedures of CDA were carried out and reported on (or not reported) in a vast range of ways” (Rogers et al., 2005, p. 380). For instance, one author looked at specific patterns of language use, another used denotative microanalysis and connotative analysis of the data, and yet another looked for the recurrence of specific terms (Rogers et al., 2005). It can be seen here, then, that although falling under the large umbrella of CDA, this methodology is either unclear or unable to meet the needs of many researchers.

Kane, Sandretto, and Heath’s (2002) review attempted to define the included authors’ data analysis techniques with little success. For example, Dunkin’s 1995 elicitations of participants’ “‘concepts of teaching effectiveness’ … [were] revealed through ‘careful analysis of the responses’” (as cited in Kane, Sandretto, & Heath, 2002, p. 198). Willcoxson’s (1998) technique consisted of acquiring clarity of information gained from analysis of the transcribed interview data. Menges and Rando (1989), as cited by Kane et al. (2002), used “an inspection of responses that took context and voice inflection into account” (p. 198). However, upon reviewing the original article, no such analytic technique is offered; in fact, no analytic technique is outlined at all (Menges & Rando, 1989). The authors of the review were critical of the value of the conclusions drawn from these works, as their methods of analysis were unclear and poorly defined (Kane et al., 2002). It is a fair assessment; the two reviews included here illustrated not only the method bricolage previously discussed but also the inconsistency and difficulty of using such a broadly defined method in general.

What emerged from the contrast of these two meta-analyses from educational research is that researchers have embraced the theoretical underpinnings of CDA in particular (whether wittingly or not), but the methodology tends to be fractured and ill-defined, if defined at all (Rogers et al., 2005). For those education researchers interested in searching for evidence and application of the social psychology constructs of beliefs, discourse analysis seems to be used in some form or fashion but not recognized as such by the researchers; hence, while potentially valuable information has been published, its validity—even within the context of qualitative research—is generally questionable.

Method Bricolage

Wetherell (1998) has laid out a formal argument for a “method bricolage” (Fogelberg, 2014a) when analyzing discourse. A bricoleur puts together a “set of representations that are fitted to the specifics of a complex situation” (Denzin & Lincoln, 2011, p. 4); in essence, a bricoleur is a quilter, carefully selecting pieces of research (in this case, methods) and combining them purposefully to create a new and useful tool. In keeping with this idea, Fogelberg’s (2014a) combination of Gee’s word analysis technique, Fairclough’s ideological-discursive formations, and Foucault’s object-creating discourse theory provided a Discourse Analysis (DCA) framework for analysis and interpretation of interview data focused on extracting attitudes and beliefs of undergraduate science professors. The method produced by this method bricolage encompassed the entire research process, from question formulation to the implications of the participants’ answers, both individually and within the context of the greater social institutions in which each participant is enveloped.

The methodology outlined here is anchored by Gee’s (2011) work, which provided the most detailed outline over a broad range of components. Fogelberg’s (2014b) reinterpretation and addition of one of Fairclough’s (2010) big ideas combined with one of Foucault’s (1972) main concepts provided additional levels of nuance that filled out this methodology.

Analytic Process

The analytic process requires two phases, each designed to elicit themes independently of one another. Phase 1 is intended to produce a quantifiable measure of attitudes and beliefs, whereas Phase 2 is intended to provide a more interpretive view of the data. Both are important for gaining the fullest picture of the data.

Extracting Attitudes and Beliefs

Transcripts were analyzed after each set of interviews was completed and fully transcribed. In this study, transcriptions were completed by the researcher, but they could be completed by a member of the research team. The act of transcribing helped confirm the field notes and memos and helped bring back to mind any nuances that may have been missed during the actual interview. It is important to note that if transcription had been completed by an outside source, rich field notes would have become even more important. It is also strongly recommended that the researcher(s) listen to the original audio recordings at some point during the analytic process for the reasons addressed above.

The initial reading of each interview provided a chance to look for clauses containing an attitude or belief statement; these clauses were underlined or otherwise marked for ease of recognition during revisits of the data. Subsequent readings of each interview looked more closely at each marked clause to determine whether it actually contained an attitude or belief statement as defined by the TRA.

Phase 1 Analysis and Identification of Explicit Themes

Part 1

For consistency, it was important to follow the definitions of attitude and belief as concretely as possible. This made Phase 1 analysis quite literal, as it took phrases or words from the clauses to support both the evaluative and strength assignments. Although a complete list of words and phrases indicating evaluation and strength was not possible, Tables 1 and 2 provide some examples from research using this analytic process to illustrate how this portion of the analysis was approached.

Table 1. Examples of Evaluative Words and Phrases.

Table 2. Examples of Words and Phrases Indicating a Strength Component.

For organization and ease of access, responses were recorded in Microsoft Excel, although any program designed to organize large amounts of data could easily be used. Each participant was assigned his or her own individual worksheet with seven columns: clause, page number, code, evaluation, strength, object, and notes. Marked clauses were transferred to the appropriate worksheet, justified using the appropriate definition, coded for the evaluative portion (positive, negative, or neutral), and assigned a strength (very low, low, moderate, high, very high) as necessary. If a clause contained only an evaluative portion, it was designated as an attitude. If a clause contained both an evaluative portion and a strength indicator, it was designated a belief. Notes were recorded as needed, for instance, if a contextual clue was missing from the original clause (Table 3).
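The coding rule described above can be sketched in a few lines of Python. This is an illustrative sketch only, assuming one record per marked clause with the seven worksheet columns named in the text; the class name, field names, and the two sample clauses are invented for illustration and are not taken from the study data.

```python
from dataclasses import dataclass
from typing import Optional

# Category labels as listed in the text.
EVALUATIONS = ("positive", "negative", "neutral")
STRENGTHS = ("very low", "low", "moderate", "high", "very high")


@dataclass
class CodedClause:
    """One worksheet row: clause, page number, evaluation, strength, object, notes.

    The seventh column, "code", is derived from the other fields.
    """
    clause: str
    page: int
    evaluation: str
    strength: Optional[str]   # None when the clause has no strength indicator
    obj: str
    notes: str = ""

    def __post_init__(self):
        if self.evaluation not in EVALUATIONS:
            raise ValueError(f"unknown evaluation: {self.evaluation!r}")
        if self.strength is not None and self.strength not in STRENGTHS:
            raise ValueError(f"unknown strength: {self.strength!r}")

    @property
    def code(self) -> str:
        # Evaluation only -> attitude; evaluation plus strength -> belief.
        return "attitude" if self.strength is None else "belief"


# Invented sample rows (not study data).
rows = [
    CodedClause("I enjoy teaching the intro course", 3, "positive", None, "teaching"),
    CodedClause("I am absolutely certain labs matter", 7, "positive", "very high", "labs"),
]
for row in rows:
    print(row.obj, row.code)
```

The sketch does not decide whether a word is evaluative or indicates strength; that judgment remains with the researcher, and the code only records and labels the outcome.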

Table 3. Example of the First Coding.

Note. RU/VH = Research University/Very High activity; n/a = not applicable.

Part 2

To better manage the data, attitude/belief objects were analyzed for repetition. Each participant’s coded interviews were revisited and every unique object listed. This part of the analysis did not look for repetition of objects within every participant’s data but instead looked for unique objects across the data sets. This secondary analysis of Phase 1 produced a variety of individually coded objects from each set of interviews and also produced some objects that overlapped (Table 4).

Table 4. Determining Explicit Themes.

Note. DE = discipline of education; PD = professional development.

The next step was to compare the lists to determine which objects, if any, were common to both sets of data. Once overlapping objects were identified, a count of the number of times each object was coded provided insight into which objects appeared to have the most commonality between the interviews. For example, in this study, the object “teaching” was common to both sets of interviews, and counting every instance of the object being coded produced a relatively high number (175). However, another common object, “communication,” produced a low number (6). Thus, teaching is coded as an object in both sets of interviews and is clearly more common than communication.

It is important to remember that the total count did not describe whether an object was solely contained within a single participant or coded across multiple participants. The total count reflected only how many individual times the object was coded across all interviews.
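The comparison and counting steps above can be sketched as follows. This is a minimal illustration, assuming each set of interviews is reduced to a flat list of object labels with one entry per coded clause; the function name and the sample labels are hypothetical and do not reproduce the study's counts.

```python
from collections import Counter


def explicit_themes(first_set, second_set):
    """Return objects common to both interview sets with their total coded counts.

    Each argument is a list of object labels, one per coded clause.
    The count is the total across all interviews and says nothing about
    how many participants contributed to it.
    """
    common = set(first_set) & set(second_set)
    totals = Counter(first_set) + Counter(second_set)
    return {obj: totals[obj] for obj in sorted(common)}


# Invented labels (not study data).
first_interviews = ["teaching", "teaching", "communication", "tenure"]
second_interviews = ["teaching", "communication", "students"]

themes = explicit_themes(first_interviews, second_interviews)
print(themes)
```

Objects that appear in only one set, such as the invented "tenure" and "students" labels here, are dropped, which matches the requirement that an explicit theme be common to both sets of data.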

The common objects identified in this phase of the analytic process were termed “explicit themes” because they emerged as discrete concepts from a concrete, literal process that required minimal interpretation and looked solely at the discourse of the data; they were quantifiable, clear, and overt, or explicit. This process also provided the basis for comparison to the implicit themes that emerged from Phase 2 analysis discussed below.

Phase 2 Analysis and Identification of Implicit Themes

The implicit themes are so named because they were hidden in the data and found through interpretation that came from an understanding of the entire Discourse. In other words, the implicit themes were not determined by an obvious process involving discrete clauses and assigned objects but rather through interpretation of the data set in its entirety.

Although it may appear that the analytic process occurred in a linear fashion, the attitude/belief extraction (Phase 1 analysis) and DCA (Phase 2 analysis) overlapped quite a bit. Research notes in the margins of each participant’s transcript were extremely helpful, and rereading each interview while coding attitude/belief clauses was imperative to ensure the nuances were captured as completely as possible. Research memos helped identify implicit themes, as they tended to reference responses from other participant(s) that were similar to other phrases contained within the data sets. Each reading increased familiarity with the data, facilitated the memory of statements from other participants, allowed for notation of the similarities, and used the similarities to move through Phase 2 analysis.

At the completion of the Phase 1 analysis for each set of interviews, Phase 2 analysis was already well under way. At this point, it was helpful to revisit the research memos for each data set; they were then compiled into a single list of consistent concepts that appeared to be emerging across the interviews. This provided an initial set of comments that later became the basis of the implicit themes. Examples 1a, 1b, and 1c below illustrate the evolution from memo to implicit theme.

Example 1a

List of actual memos taken from interview margins

- lab being such an important assessment opportunity but so many profs don’t teach the labs! (lack of continuity?)
- most seemed to not believe the [tenure] system could/would ever change
- friction between admin and teachers
- again, the hands-on lab/research experience is VERY influential
- again, experience
- most see a benefit to having research and education
- open to learning about teaching, and so on, but no reward, time constraints
- institutional constraints
- consistent (comment about the statement “I do NOT like administrative work”)
- reflects the themes from the first interviews
- teaching doesn’t gain you status the same way research does
- using student evals for reflection and change of teaching?
- student evals again

Example 1b

Summarizing of research memos

- distraction of service appears fairly constant/universal: dislike of and frustration with the amount of time taken up by administrative tasks
- almost all (8–9/10) mention at least one to two mentors/professors that strongly influenced them to teach, most at the graduate level, one at the undergraduate level; most also mention the importance of hands-on/lab experience
- many specifically mention that teaching poorly or OK gets you the same benefit as teaching well, but the latter takes much more time, which is time away from their research (but even lecturers mention time as a constraint)

Example 1c

Grouping of memos into emergent themes

Challenges to (good) teaching

- “distraction” of service/workload outside of the classroom
- disconnects between administrators and professors
- large class sizes leading to lack of relationships with students
- too much material to be covered in a short amount of time
- nerves about teaching: confidence/lack of confidence
- 9/10 discuss the general lack of time leading to a number of things, including lack of ability to establish good relationships with students and inability to balance teaching, research, and service
- increasing number of students working outside the classroom, leaving both students and professors frustrated because students are unable to focus as well

Influences

- many had family (usually parents) who were teachers
- 8–9/10 mention at least one to two mentors/professors who strongly influenced them to teach, most at grad level, one at undergrad (but LAB experience as an UNDERGRAD was singularly important in influencing them to pursue SCIENCE)
- both lecturers mentioned the presentation of quantitative data as being the driving force behind either their own or a colleague’s change in pedagogy/teaching style
- student evals are referred to by almost all (if not all) participants as helping them measure their teaching ability/effectiveness

As with Phase 1 analysis, once the themes from Phase 2 were identified for each set of interviews, they were cross compared to determine if any overlapped. This required some interpretive tweaking to reimagine themes that were very close but not replicated exactly. For Phase 2, the overlapping emergent themes ended up being the final implicit themes (Table 5).

Table 5. Example of Emerging Implicit Themes.

Note. DE = discipline of education; PD = professional development.

Identification of Overarching Themes

Once the explicit and implicit themes were identified, they were compared to see if there were any parallels. Any of the explicit and implicit themes that were parallel or similar became the final overarching themes (Table 6).

Table 6. Determining Overarching Themes.

Note. DE = discipline of education.

In this research study example, there were 11 matching objects found during Phase 1 and three matching emergent themes during Phase 2. The three implicit themes were also found among the 11 explicit themes, thus providing a nice point of crystallization for the analysis. Had there been no overlap between the explicit and implicit themes, it would have prompted a return to the interview questions, a possible need for additional interviews of participants, and/or a reevaluation of the initial coding processes.
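The crystallization check described above can be sketched in Python. Deciding which implicit themes parallel which explicit objects is an interpretive judgment, so this sketch assumes the researcher supplies that mapping by hand; the function name, the mapping, and the sample theme labels are hypothetical and do not reproduce the study's tables.

```python
def overarching_themes(explicit, implicit, parallels):
    """Keep each implicit theme that parallels at least one explicit theme.

    explicit:  iterable of explicit theme labels from Phase 1
    implicit:  iterable of implicit theme labels from Phase 2
    parallels: dict mapping an implicit theme to the explicit themes the
               researcher judged parallel or similar (a manual, interpretive step)
    """
    explicit = set(explicit)
    result = {}
    for theme in implicit:
        matched = sorted(set(parallels.get(theme, ())) & explicit)
        if matched:
            result[theme] = matched
    return result


# Invented labels and judgments (not study data).
explicit = ["teaching", "communication", "student evaluations"]
implicit = ["challenges to teaching", "influences", "funding"]
parallels = {
    "challenges to teaching": ["teaching"],
    "influences": ["student evaluations", "mentors"],
}

themes = overarching_themes(explicit, implicit, parallels)
if not themes:
    # No overlap: revisit the questions, the interviews, or the initial coding.
    print("no crystallization; revisit earlier steps")
print(themes)
```

An empty result corresponds to the case with no overlap between explicit and implicit themes, which would prompt a return to the interview questions, additional interviews, or a reevaluation of the coding.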

Once the overarching themes were determined, it was noticed that each overarching theme had some common threads, or subthemes, that occurred throughout. These subthemes were derived from an overall familiarity with the data, a deep understanding of the attitudes and beliefs that emerged from the analysis, and some interpretive license being applied through an overall analysis of the Discourse. Consistent revisitations of the research memos also helped with recognizing subthemes. For example, the overarching theme of personal and professional influences above might have had the subtheme of student evaluations, which was referenced both during Phase 1 (Table 3) and Phase 2 (Example 1c).

It was through exploration of the subthemes that the overarching themes were ultimately supported. The use of a two-phase analytic system that abided by specific but fluid guides helped to organize the data, presented opportunities for themes to emerge separately and be cross checked internally (crystallization), and presented the researcher an opportunity to incorporate the entire Discourse into the analysis.

Summary

Although the analytic phases overlapped in the middle, each phase was a distinctly different analytical process that produced themes independently of each other. Phase 1 provided explicit, concrete evidence of the attitudes and beliefs being investigated. It also provided a less interpretive analysis of the attitudes and beliefs identified in the data, which helped reconcile the use of a traditionally quantitatively analyzed theory, such as the TRA, with a more qualitative approach to analysis. Because the majority of work in attitudes and beliefs has been quantitative, this reconciliation of the data provided a substantial base for the research.

Phase 2 provided implicit information that corroborated, was distinctly different from, or was in opposition to the attitudes and beliefs findings that emerged from Phase 1. Because this phase was more interpretive, the nuances of the participants’ responses were more fully reflected in the analysis, sometimes producing contradictory information with regard to the attitudes expressed by the participants as a whole. It was this phase of analysis where it became evident that taking in the entire Discourse was important, as in some cases it confirmed the Phase 1 findings, and in others it contradicted them.

Discussion

Educational researchers are increasingly accepting qualitative research as rigorous and important. One of the methods often used, but rarely in a consistent manner, is Discourse Analysis. This method has been applied on numerous occasions to education research focused specifically on determining attitudes and/or beliefs of teachers at all levels (Ballantyne, Bain, & Packer, 1999; Kane et al., 2002; Rogers et al., 2005). The problem with lacking a replicable analytic model is further compounded by the fact that attitudes and beliefs have not been concretely defined by the education researchers (Pajares, 1992). This article attempts to provide a concrete definition of attitudes and beliefs, as taken from the social psychology and communication studies literature, and provide an analytic methodology that is clear enough to be replicable but not presented in such a lockstep manner that individual researchers have no interpretive freedom.

Through method bricolage, a way to use Discourse Analysis to determine attitudes and beliefs toward a specified object that is accomplished in a two-phase manner has been developed. The methodology’s strengths lie in the reliance on a concrete definition of the construct(s) being investigated, built-in crystallization of the data (or an ability to see that the data is flawed), and adaptability to evaluate a variety of constructs. The weaknesses lie in its youth, having only been used in one research study by one researcher, and its time-intensive processes.

Methodological Considerations

While it may not be practical to use this methodological approach for all forms of discourse analysis or Discourse Analysis, it could certainly be adapted to a number of them. Use of a concrete and fully accepted definition for the construct being investigated allows for a more objective approach to analyzing the discourse. This, if appropriately applied, can then support the interpretive analysis of the Discourse that occurs during Phase 2. Combined, the explicit and implicit themes are easy to compare to see if the emerging themes overlap; with overlap, crystallization is achieved. Without overlap, perhaps the research questions, the analysis, or some other aspect of the research study needs to be revisited. Or perhaps no overlap is a finding in and of itself.

Most importantly, this method bricolage allows both the micro (individual words) and macro (entire Discourse) pictures contained within the data to be seen. At the micro level, looking at and considering each individual word forces an understanding of the general connotation of the word in everyday English as well as an understanding of the context that might override that connotation within the clause itself. At the macro level, the researcher must determine whether a gesture, a snort, a sigh, or a pause is important to how each word and each phrase is interpreted within the sentence, the individual answer, the full interview, and the complete data set. The biggest strength of this methodology is that it allows a full view of the discourse and Discourse, integrates them fully, and provides an internal crystallization process that helps provide a richer and more complex view of the data.

Limitations

For DCA to be properly achieved, individual contact with each participant is necessary. This means that at least one of the interviews should be completed in person (ideally) or, minimally, through a real-time audiovisual aid (e.g., Skype, FaceTime), which is time intensive and potentially costly.

The determination of what is positive, negative, or neutral is a challenge. Short of coming up with a list of words that fit each category, this limitation will be a continual issue. While Phase 1 is intended to be more concrete because it is based on a concrete definition of the constructs being investigated, there is still an interpretive aspect that cannot be fully eliminated. The difficulty lies in the nuances associated with each utterance; discourse analysis seems relatively concrete, but we are often incapable of separating out the literal and implied connotations of words, and each researcher brings a unique lens and perspective on language and Discourse. However, it is this room for interpretation that also allows each researcher to obtain a fuller grasp of the language within which she or he is working.

Future Work

A method bricolage is presented in an attempt to provide a replicable analytic model incorporating Discourse Analysis. Here, attitudes and beliefs are concretely defined, extracted from the data, and compared on both the micro (discourse) and macro (Discourse) levels. The current education literature demonstrates interest in attitudes and beliefs of students and teachers at all levels but lacks a coherent definition of either construct and lacks a replicable model of analysis, leading to concerns about the reliability of the conclusions drawn by previous researchers.

The methodology outlined here lays the groundwork for future scholars to investigate attitudes and beliefs using a framework that may be refined with use but still allow comparisons across studies. It is meant to delineate a discrete technique that combines with an interpretive analysis to produce independent themes that support each other through internal crystallization.

Continued research using this methodology is in progress. Application of the model has been proposed for use in a National Institutes of Health grant submission, which will use the two-phase model and search for word similarity between medical and public health students and faculty. The versatility and adaptability of the model are demonstrated by this application, and it is hoped that other researchers will find much use for the method bricolage provided.

Footnotes

Acknowledgments

The author would like to thank Dr. Molly Weinburgh, Dr. Adam Richards, and Dr. Sarah Robbins for their advice, editing, and mentorship throughout the writing of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Scholarship and research assistantship funding provided through the Andrews Institute for Mathematics and Science for the duration of my doctoral studies.