Abstract

In our work, we systematize and analyze implicit ontological commitments in the responses generated by large language models (LLMs), focusing on ChatGPT 3.5 as a case study. We investigate how LLMs exhibit implicit ontological categorizations reflected in the texts they generate, despite having no explicit ontology. The article proposes an approach to understanding the ontological commitments of LLMs by defining ontology as a theory that provides a systematic account of the ontological commitments of some text. We investigate the ontological assumptions of ChatGPT and present a systematized account, that is, GPT’s top-level ontology. This includes a taxonomy, which is available as an OWL file, as well as a discussion about ontological assumptions (e.g., about its mereology or presentism). We show that in some aspects GPT’s top-level ontology is quite similar to existing top-level ontologies. However, significant challenges arise from the flexible nature of LLM-generated texts, including ontological overload, ambiguity, and inconsistency.

Introduction

Large language models (LLMs) internalize knowledge about the world, which is reflected in the texts they generate. For example, questions like

ChatGPT Uses Categories Like “Living Organism” and “Inanimate Object.”

In this article, we will analyze these ontological commitments and present a top-level ontology that represents ontological distinctions that are made by ChatGPT 3.5 (Section 4). As we will discuss in Section 3 in more detail, because of the inherent differences between the technologies, the step from LLM to ontology is methodologically quite problematic. In Section 5 we focus on the differences between ChatGPT and top-level ontologies from the literature (e.g., BFO, DOLCE, UFO). While terms in an ontology are associated with fixed compositional, model-theoretic semantics, LLMs are trained to produce tokens stochastically depending on context. Thus, while ontologies resolve ambiguities, LLMs reproduce them. The mercurial nature of the terms used by LLMs is a significant obstacle to the investigation and the use of LLMs’ top-level ontologies.

Nevertheless, we believe they are interesting to study for two reasons. First, since the ontological distinctions made by LLMs are a distillation of the ontological distinctions made by the authors of the millions of texts that the LLMs are trained on, this top-level ontology may be considered an approximation of the common-sense ontology that underpins everyday discourse. Studying this common-sense ontology is of interest in itself. Second, there are already various efforts to use LLMs in the ontology development process (Ciatto et al., 2024), and these tools will likely become a staple in ontology engineering. Understanding the ontological assumptions of LLMs will make it easier to integrate the output they produce within the context of top-level ontologies like BFO, DOLCE, or UFO.

We aim to develop the underlying top-level ontology of ChatGPT that covers the most important and basic categories. There are already numerous manually created top-level ontologies of high quality. Partridge et al. (2020) present and compare 37 different TLOs in their overview. Some well-known TLOs are BFO, DOLCE, GFO, UFO, and GMO. These top-level ontologies differ methodologically and philosophically. BFO by Arp et al. (2015) and Smith et al. (2015), for example, embraces ontological realism, while DOLCE by Gangemi et al. (2002) is more focused on conceptual and linguistic aspects of ontology engineering, and GMO is only linguistically motivated (Bateman et al., 1995). Other approaches, such as UFO, attempt to synthesize the ideas of the TLOs (Guizzardi et al., 2015). UFO emerged from a synthesis of DOLCE and GFO, a multicategorial approach by Herre (2010). TLOs can ultimately form a basis for specific domain ontologies, which is why SUMO, for example, is very broadly diversified to offer as many points of contact as possible (Niles & Pease, 2001). While the different ontologies represent different approaches, these TLOs are characterized by the fact that they were developed manually, are of high quality, and are philosophically sound.

Our work differs from the existing work on TLOs since our goal is not to manually create a new TLO, but to investigate the top-level ontology of ChatGPT. ChatGPT is based on the GPT-3.5 model, a kind of LLM or, more precisely, a generative pre-trained transformer (OpenAI, 2024). Other LLMs are, for example, Microsoft Copilot (Spataro, 2023), Google Bard (Pichai, 2023), and Claude (Anthropic, 2023).

LLMs are already used for ontology development, and there are many different high-quality and well-founded research approaches, for example, using them for combining terms for representing domain entities (Lopes et al., 2023), enriching ontologies with a fine-tuned GPT-3 model as a tool (Mateiu & Groza, 2023), or exploring the facilitation of (semi-)automatic construction of knowledge graphs through open-source LLMs (Kommineni et al., 2024). There are many other projects and approaches to support ontology development using LLMs (Babaei Giglou et al., 2023; Caufield et al., 2023; Chen et al., 2023; Hertling & Paulheim, 2023; Langer et al., 2024; Pan et al., 2023; Zhao et al., 2024). The existing research is about using LLMs to support the development of domain ontologies. In contrast, we focus on the top-level ontology of ChatGPT.

Methodology

The responses of LLMs may contain ontological commitments. For example, the response of ChatGPT in Figure 1 uses ontological categories of living organisms, inanimate objects, characteristics, abilities, and (social) behavior. Further, while it is not stated explicitly, the phrasing seems to indicate that living organisms and inanimate objects are disjoint, and ChatGPT asserts an “is used for” relationship between a kind of inanimate object and its function. In Section 4 we will systematize these assumptions and present the result as “GPT’s top-level ontology.” However, this goal raises several important methodological concerns. Most importantly, is there such an ontology in any meaningful sense of the word?

To address this question, we need to be aware that the term “ontology” is used ambiguously in the literature: An ontology

Strictly speaking, an LLM uses an ontology in neither of these senses. LLMs have no access to reality beyond the documents that are in their training corpora and, thus, have no access to ontology

Further, LLMs are like stochastic parrots, which produce without any comprehension texts that are “not grounded in communicative intent, any model of the world, or any model of the reader’s state of mind” (Bender et al., 2021). A parrot may be trained to say “There are possible worlds!,” but we would not consider it a modal realist, since it lacks both the communicative intent as well as the understanding of the concepts involved. The same is true for LLMs, and, thus, it would be a category mistake to attribute a conceptualization or an ontology

One could argue that while the parrot lacks understanding of its utterances, they reflect the ontology

In the remainder of this article, we use “ontology” in a fourth sense, namely as a theory of ontological commitments. More precisely:

Let

Definition 1 is based on a variant of an entailment account of ontological commitment (Bricker, 2016). An ontology is a theory (i.e., set of sentences) that provides a systematic account of the ontological commitments of some text

Definition 1 has the benefit of being applicable to the texts generated by LLMs without having to assume that LLMs can form concepts, although LLMs produce inconsistent texts. The goal of this article is to provide a systematic account of important ontological commitments in the text generated by ChatGPT.

This task is complicated by the fact that the user may influence the ontological commitments in generated texts by the prompt that is used. For example, if one asks ChatGPT to explain the difference between particularized properties and tropes, it will generate a text that contains ontological commitments to particularized properties and tropes. Analogously, by providing the appropriate prompts, one can induce ChatGPT to provide a Platonic or an Aristotelian account of the nature of change. Since LLMs are trained on text corpora that contain philosophical texts, a user may prompt ChatGPT to generate texts reflecting a vast array of philosophical perspectives, which contain equally diverse ontological commitments. However, for the purpose of our article, we are not interested in the ontological commitments of texts that reflect philosophical positions in the literature. Since our goal is to study ChatGPT’s ontological commitments and assumptions, which may influence its usefulness as a tool for ontology engineering, we use prompts that are designed to reveal ontological commitments without priming ChatGPT to respond based on the philosophical literature. Another difficulty is that, in contrast to a human, ChatGPT has no introspection about its ontological commitments. For example, a prompt like “What are the most general ontological categories that you use to organize your knowledge?” will prompt it to generate an answer about ontological categories from the literature, but according to our observation, these are not necessarily the ones it actually uses.

Therefore, to study ChatGPT’s ontological commitments, we use a more indirect approach by asking questions like the one in Figure 1, which elicit responses that contain ontological categories (e.g., animate object and inanimate object). We use these categories in follow-up questions about similarities of entities in these new categories (e.g., “What is the difference between the monkey and monkey’s behavior?”) and other possible instances of these categories (e.g., “Are shadows inanimate objects?”). We also use more theoretical questions about the relations of the categories (e.g., “Are there objects that are both animate and inanimate?”). However, these sometimes lead to responses by ChatGPT that are inconsistent with its answers to follow-up questions. For example, in the same session, when asked whether there are entities that are both physical and abstract, ChatGPT claimed that national flags exhibit both physical and abstract characteristics. However, when asked whether a national flag is a physical object or an abstract object, it responded that it is physical and not abstract. This example illustrates the points made above: the texts that are generated by ChatGPT are neither logically consistent nor informed by introspection. For the same reason, asking ChatGPT for definitions is not as helpful as one might hope. For example, it defines “physical entity” as “entities that have a tangible, material existence” and “abstract entity” as “entities that lack a tangible, material existence.” Thus, according to these definitions, the categories should provide a partition of entities. However, as just mentioned, it sometimes claims that some entities are both abstract and physical. Further, it also claims (at least sometimes) that shadows lack tangible, material existence but are not abstract entities.

These examples illustrate that to understand the ontology of ChatGPT, asking it for definitions of the categories it uses frequently leads to misleading responses. For this reason, we study ChatGPT’s ontology primarily by prompting it to use categories for the classification of examples or distinguishing categories.

A particular challenge for studying the ontology of LLMs is that even minor changes to prompts may lead to different results. To ensure that the ontology we present in the next section reflects the typical ontological commitments of texts produced by ChatGPT, we studied the results of asking similar questions in different variations. We only included categories that were used consistently in different contexts. However, since ChatGPT generates text based on a stochastic process, it may produce texts that are inconsistent with the ontology we present in the next section. This is a challenge for the verifiability of our claim that the ontology presented in Section 4 reflects the ontology of ChatGPT. Thus, to ensure at least transparency, we published the transcripts of our interactions with ChatGPT, on which our claims are based.
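The idea of keeping only categories that recur across prompt variations can be illustrated with a minimal sketch. It is an illustration under stated assumptions, not the procedure used to produce the ontology: it assumes that the category labels in each response have already been identified by hand, and the function name `stable_categories`, the `min_share` threshold, and the sample labels are hypothetical.

```python
from collections import Counter

def stable_categories(responses, min_share=1.0):
    """Return categories that occur in at least `min_share` of the responses.

    `responses` is a list of sets; each set holds the category labels that
    were identified (by hand, in this sketch) in one generated text.
    """
    if not responses:
        return set()
    # Each set contributes a label at most once, so counts are per response.
    counts = Counter(label for labels in responses for label in labels)
    needed = min_share * len(responses)
    return {label for label, n in counts.items() if n >= needed}

# Hypothetical labels from three variants of the same question.
variants = [
    {"living organism", "inanimate object", "behavior"},
    {"living organism", "inanimate object"},
    {"living organism", "inanimate object", "phenomenon"},
]
print(sorted(stable_categories(variants)))
```

With the default threshold, only labels that appear in every variant survive; lowering `min_share` admits categories that are used less consistently.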

Hierarchy

In this section, we present the ontological categories that we have isolated in the conversations with ChatGPT. As already discussed in Section 3, ChatGPT does not use fixed definitions of its terms, and it sometimes provides what seem to be conflicting or outright contradictory responses. However, there is a stable core of ontological commitments that are consistently made by ChatGPT (with few exceptions).

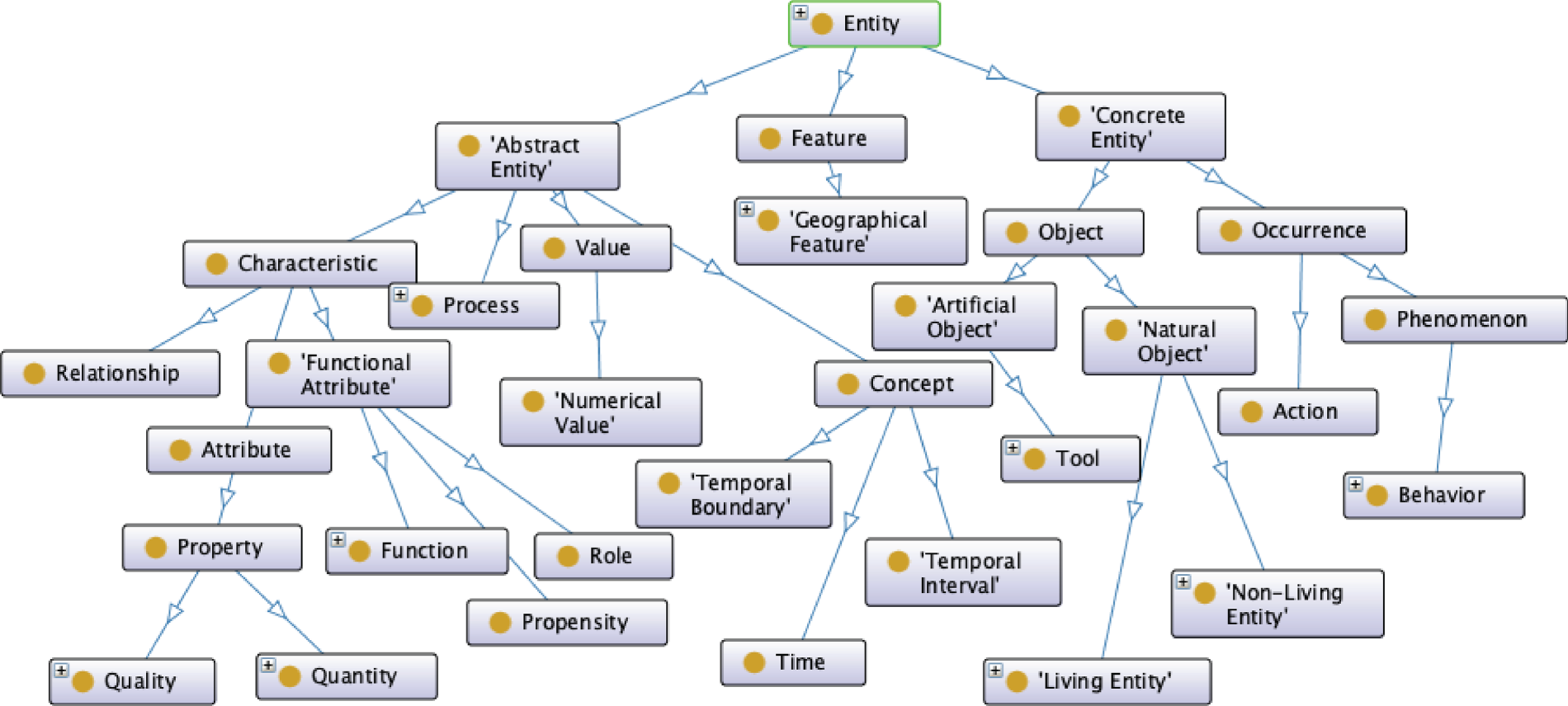

This stable core is the source that we used to construct the ontology that we present in this section. Figure 2 shows the subsumption hierarchy of the top-level categories and some additional classes, which illustrate the meaning of the higher-level categories. The ontology is available at https://w3id.org/gptto/v1.0.0/. We will discuss the limits of this approach in Section 5.

Hierarchy of ChatGPT’s Top-Level-Ontology.

The most general category ChatGPT uses is

In contrast,

The category concrete entity is divided into

Another subclass of occurrence is

While concrete entities primarily comprise objects and occurrences, the class of abstract entities is subdivided into concepts, values, processes, and characteristics.

Apart from the classes, we have also tried to recognize

In

Since ChatGPT does not use a formal ontology that includes axioms, and its use of terminology is fluid, it is difficult to identify the formal properties of these relationships as discussed in Partridge et al. (2020). One criterion that ChatGPT meets is that the subsumption hierarchy is upward bounded in the sense that “entity” is the top category that subsumes all other categories.
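The upward-boundedness criterion can be checked mechanically. The following sketch uses a small fragment of the hierarchy built from category names mentioned in the text; the exact edges are an assumption made for illustration (the OWL file remains the authoritative source), and the single-parent dictionary is a simplification that does not cover multiple inheritance. The names `PARENT`, `ancestors`, and `is_upward_bounded` are hypothetical.

```python
# Child -> parent edges; an illustrative fragment, not the full taxonomy.
PARENT = {
    "concrete entity": "entity",
    "abstract entity": "entity",
    "object": "concrete entity",
    "occurrence": "concrete entity",
    "concept": "abstract entity",
    "value": "abstract entity",
    "characteristic": "abstract entity",
}

def ancestors(category, parent):
    """Follow child -> parent links and return the chain of superclasses."""
    chain = []
    seen = {category}
    while category in parent:
        category = parent[category]
        if category in seen:
            raise ValueError(f"cycle at {category!r}")
        seen.add(category)
        chain.append(category)
    return chain

def is_upward_bounded(parent, top="entity"):
    """True if every category other than `top` has `top` as an ancestor."""
    categories = set(parent) | set(parent.values())
    return all(c == top or top in ancestors(c, parent) for c in categories)

print(is_upward_bounded(PARENT))  # True
```

Adding a category whose chain of superclasses never reaches "entity" makes the check fail, which is how an unbounded hierarchy would show up.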

If ChatGPT is asked about dinosaurs, it answers that they existed in the past. Hence, ChatGPT uses tensed language to distinguish between past and present. However, since ChatGPT is trained to generate well-written English sentences, it follows linguistic conventions. Thus, it is quite difficult to determine whether the tensed language is just a stylistic choice or an ontological commitment to presentism. For example, if asked whether (the late) Kirk Douglas is the father of Michael Douglas, ChatGPT answers affirmatively. If asked whether this entails that Kirk Douglas exists, it provides conflicting responses: first, it claims that this fact entails that Kirk Douglas exists, then it claims that he “no longer exists in the physical sense, but his legacy lives on through his work in film and the memories of those who knew him.” It seems to us that ChatGPT’s notion of “existence” is quite mercurial, and it switches back and forth between a presentist and an eternalist point of view.

Discussion

In the previous section, we presented ChatGPT’s top-level ontology, that is, a hierarchy of categories (represented in an OWL file) and ontological assumptions. However, as pointed out in Section 3, ChatGPT does not use an ontology (in the sense of a file); what we presented is an attempt by the authors to provide a systematic account of the ontological commitments that we identified in texts that were generated by ChatGPT. In this section, we discuss the similarities and differences to “normal” TLOs.

In some aspects ChatGPT’s top-level ontology is surprisingly traditional: it contains a hierarchy of categories, which are instantiated by instances. The distinction between living and non-living entities has been established since Aristotle’s Categories (Barnes, 1984). Many of the other categories are similar to the ones used in established ontologies. For example, similarly to BFO, the ontology contains objects that may participate in occurrences, that have properties (qualities in BFO), may play roles, and may have propensities (dispositions in BFO) or functions. ChatGPT embraces dualism since it is ontologically committed to physical entities and mental entities, like mental constructs, mental actions, or mental processes. With this comparison, we do not wish to suggest that ChatGPT’s top-level ontology is similar to BFO. Quite the contrary, there are major differences with respect to the categories, their definitions, and their organization in the subsumption hierarchy. For example, ChatGPT’s primary distinction is between abstract and concrete entities, while BFO does not include a category for abstract entities. We only want to point out that somebody familiar with established ontologies is able to recognize familiar ontological distinctions in ChatGPT’s responses.

The major difference between ontologies and ChatGPT is that ontologies are usually carefully constructed in a way that they resolve ambiguities by distinguishing different concepts and providing clear definitions. This is particularly important in the case of polysemes, where humans rely on context to disambiguate (if necessary). For example, an ontology of cattle will introduce two different terms for “cow,” one in the sense of bovine and the other for female bovine. Similarly, ontologists will distinguish between hammer-as-object, hammer-as-process, and hammer-as-function. In contrast, LLMs generate texts based on a given prompt and a function that maps tokens to probability distributions of tokens. Hence, LLMs do not disambiguate words but rather learn to use them appropriately in a given context. This mercurial use of language is a great benefit for the task of generating natural language texts, but it is an obstacle for using LLMs for the task of creating ontologies.

Firstly, it leads to a kind of “ontological overload.” For example, while an ontology typically would distinguish between a coin and the value it represents, ChatGPT treats the same entity as both concrete and abstract. In this example, ChatGPT states that fact explicitly; often, however, it is vaguer and claims that some entities have both physical and abstract characteristics. For example, the roundness of an apple is both a concrete feature of the apple and, at the same time, a generic characteristic (an abstract entity). This phenomenon is not limited to the distinction between concrete and abstract entities: the entities in ChatGPT’s ontology may be instances of different ontological categories that one expects to be disjoint. Depending on the context, they are treated as one or the other.
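One way to make such overload visible is to collect the classifications found in generated texts and to check them against the category pairs one expects to be disjoint. The sketch below is an illustration only: the function name `overloaded`, the input format, and the sample assertions are hypothetical, and the disjointness of concrete and abstract entities is an assumption of the analyst, not something ChatGPT states.

```python
from itertools import combinations

def overloaded(assertions, disjoint_pairs):
    """Map each entity to the expected-disjoint category pairs it falls under.

    `assertions` is an iterable of (entity, category) pairs collected from
    generated texts; `disjoint_pairs` lists category pairs that the analyst
    expects to be disjoint.
    """
    by_entity = {}
    for entity, category in assertions:
        by_entity.setdefault(entity, set()).add(category)
    disjoint = {frozenset(pair) for pair in disjoint_pairs}
    result = {}
    for entity, categories in by_entity.items():
        clashes = [
            pair
            for pair in combinations(sorted(categories), 2)
            if frozenset(pair) in disjoint
        ]
        if clashes:
            result[entity] = clashes
    return result

# Hypothetical classifications gathered from several responses.
found = [
    ("coin", "concrete entity"),
    ("coin", "abstract entity"),
    ("apple", "concrete entity"),
]
print(overloaded(found, [("abstract entity", "concrete entity")]))
```

Here only the coin is reported, because it was classified under both categories of a pair that is expected to be disjoint.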

Secondly, it leads to inconsistent responses. In two separate conversations, ChatGPT may answer the same question in exactly the opposite way. For example, a shadow is classified as a concrete entity in Figure 3 because, according to ChatGPT, it is an observable and tangible aspect of the physical world. However, in Figure 4 exactly the same question is answered in the opposite way because, according to ChatGPT, shadows, while observable, are not objects. One possible explanation for the different answers is that in Figure 3 “entity” is used by ChatGPT for the most generic category in the ontology, but in Figure 4 it seems to be used as a synonym for “object.” However, explanations like these are a kind of semantic pareidolia: we cannot help but read texts by generative AI as if they had a communicative intent. In fact, the different answers to the same question are a purely stochastic phenomenon.

Classification of a Shadow as a Concrete Entity.

Classification of a Shadow Distinct From a Concrete Entity.

By choosing the settings of GPT 3.5 appropriately, one can ensure that the same prompts will always produce the same output. However, this does not address the concern: while it ensures that the same prompt leads to the same output, even minute changes that do not change the meaning of the prompt may lead to inconsistent results. Logically inconsistent responses are not rare and are not limited to the distinction between concrete and abstract entities. For example, “litter” is sometimes classified by ChatGPT as an artifact and sometimes not. Interestingly, the inconsistent responses sometimes seem to be inherited along the taxonomies. For example, in ChatGPT’s TLO, shadows are a kind of phenomenon. Phenomena are also sometimes classified as concrete and sometimes as abstract.

While in most cases ChatGPT’s responses are consistent and do not change significantly if the prompts are rephrased, these examples illustrate that there is a significant number of cases where this does not hold. Thus, if one attempts to use LLMs for ontology development, it is important to cross-validate the classifications of the LLMs with the help of a variety of prompts to ensure that the results are robust. Further, there is likely a significant number of cases where the LLM will provide inconsistent results, and, thus, one needs to account for that possibility in the design of the workflow.
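A workflow of this kind could, for instance, accept a classification only when rephrased prompts agree often enough and otherwise hand the case to a human reviewer. The following is a minimal sketch under that assumption; the function name `cross_validate`, the agreement threshold of 0.8, and the sample answers are hypothetical, and collecting the answers from an LLM is outside the scope of the sketch.

```python
from collections import Counter

def cross_validate(answers, min_agreement=0.8):
    """Return (label, agreement); label is None when the answers diverge.

    `answers` holds the classification obtained for one entity from several
    rephrasings of the same question.
    """
    if not answers:
        raise ValueError("no answers to compare")
    label, hits = Counter(answers).most_common(1)[0]
    agreement = hits / len(answers)
    return (label if agreement >= min_agreement else None, agreement)

# Hypothetical answers to five rephrasings of "Is a shadow a concrete entity?"
shadow = ["concrete", "not concrete", "concrete", "not concrete", "concrete"]
print(cross_validate(shadow))  # (None, 0.6)
```

Returning `None` instead of the majority label keeps divergent cases out of the ontology until somebody has looked at them.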

There is significant research interest in the potential use of LLMs in ontology engineering. The development of such tools would be easier if the texts that are generated by LLMs shared a top-level ontology, that is, if they were committed to the existence of the same kinds of entities and shared the same ontological assumptions. Thus, the first question is whether there is such a top-level ontology, and, if there is, what it looks like and whether it is suitable for the purposes of ontology engineers. In this article, we investigated these questions as a case study for ChatGPT 3.5.

As we discussed in Section 3, ChatGPT does not use a top-level ontology (TLO) in the sense of an OWL file that may be downloaded. However, there is a stable core of ontological commitments and assumptions, which are repeatable across many interactions with the system. In this sense, ChatGPT uses a TLO. In Section 4 we presented the taxonomy of that ontology and some important ontological assumptions. (The taxonomy is also available as an OWL file.)

ChatGPT’s ontological hierarchy of categories contains some distinctions that are familiar from the ontological literature. However, it differs significantly from popular existing top-level ontologies. This may be an obstacle to the use of LLM-based tools within ontology engineering projects that reuse existing TLOs (e.g., DOLCE or BFO). For example, any effort to use the GPT 3.5 model to extend a BFO-based ontology will run into the issue that GPT 3.5 uses a different ontological categorization than BFO. (The differences are so significant that no simple ontology alignment is possible.) Thus, without some effort to ensure compatibility with BFO (e.g., by choosing the prompts accordingly), the responses of the LLM will be incompatible with the existing BFO-based taxonomy of the ontology, and, therefore, the result of the ontology extension is going to be incoherent and, possibly, even logically inconsistent.

A different and more challenging issue is the fact that LLMs do not learn to disambiguate terms, but rather to use terms appropriately in a given context. As we discussed, this results in a kind of “ontological overload,” where entities are classified as members of different, supposedly disjoint categories. For ChatGPT the same entity may be abstract and concrete, depending on the context. Further, minute changes to the prompt or even the same prompt may lead to contradictory results. Thus, any attempt to use LLMs for ontology development needs to involve some strategy to manage “ontological overload” and inconsistent responses; otherwise, the resulting ontology will be of poor quality. Any good ontology provides clear and unambiguous definitions, which enable the consistent usage of its terms. On their own, LLMs deliver neither.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.