Abstract

Oral and Maxillofacial Surgery (OMFS) is a surgical specialty that serves as a bridge between medicine and dentistry, focusing on the diagnosis and treatment of diseases affecting the mouth, jaw, face, and neck. Large Language Models (LLMs), which first appeared in 2019, are trained on extensive text collections and can process language with high quality. Although OMFS is a hands-on surgical specialty, LLMs have been increasingly used for patient education, research, and training purposes. This study aimed to explore the capabilities of LLMs in the field of OMFS by investigating the most recent literature. Seven peer-reviewed online repositories, namely PubMed, Scopus, Association for Computing Machinery (ACM), IEEE, Embase, Cumulative Index to Nursing and Allied Health Literature (CINAHL), and Google Scholar, were searched for relevant articles. Adhering to the PRISMA-ScR guidelines, we conducted a systematic search across these libraries to select articles that incorporated LLMs into OMFS. The forward and backward reference lists of the included articles were checked to retrieve missing articles. After the final screening process, a total of 20 studies were selected for this review. The selected studies demonstrated applications of LLMs in OMFS such as patient education, clinical decision support, and guidance for specific procedures. The results showed variability in LLM response accuracy: accuracy was lower in citation generation, whereas open-ended questions achieved higher accuracy rates. Advanced versions of LLMs, such as ChatGPT4, showed improved accuracy and reliability compared with older GPT versions, although some studies reported that LLM responses lacked complete details and exhibited only moderate accuracy. This variability in performance emphasizes the need for continuous refinement of LLMs, further research, and verification by experts, and highlights the importance of human oversight in clinical applications.

Introduction

Oral and Maxillofacial Surgery is a surgical specialty that serves as a bridge between medicine and dentistry, focusing on the diagnosis and treatment of diseases affecting the mouth, jaw, face, and neck. The scope of the field is broad and includes the diagnosis and management of facial injuries, head and neck cancers, salivary gland diseases, facial disproportion, facial pain, impacted teeth, cysts, and tumors of the jaws, as well as various issues affecting the oral mucosa, such as mouth ulcers and infections. 1 Specialists in this field are unique in that their training often requires dual degrees in medicine and dentistry, followed by specialty residency training, and the specialty is recognized worldwide.

The field of Oral and Maxillofacial Surgery (OMFS) includes various subspecialties, such as head and neck oncology, dentoalveolar surgery, orthognathic surgery, cleft lip and palate, craniofacial surgery, facial esthetic surgery, and craniofacial trauma.1,2 The International Association of Oral and Maxillofacial Surgeons (IAOMS) has acknowledged the transformative potential of Information Technology in global oral and maxillofacial training. This led to the formation of a committee and initiatives aimed at leveraging IT to disseminate education worldwide. 3

The advent of large language models (LLMs), which first appeared in 2019, represents a significant technological development. These models, trained on extensive text collections, can process and generate language with exceptional quality. 4 Among the most well-known LLMs, ChatGPT, developed by OpenAI in San Francisco, CA, USA, was launched in November 2022. It quickly gained recognition, amassing 1 million users within 5 days of its release. Accessible via web browsers or mobile apps, ChatGPT facilitates queries, communication, and word-based tasks. Although OMFS is a hands-on surgical specialty with practitioners often engaged in clinical or surgical settings, LLMs have found utility in areas such as diagnosis and education for both patients and dental students.5,6 Cufuna et al. 7 explored the integration of Augmented Reality (AR) and LLMs to enhance future teachers’ digital competencies, revealing that these technologies boost student engagement, problem-solving skills, and interactive learning. The findings highlighted the potential of AR and LLMs to transform education by fostering dynamic participatory teaching methodologies that prepare educators for real-world challenges. Similarly, Askarbekuly and Aničić 8 automated outcome-based assessment in informal e-learning using ChatGPT, addressing the challenge of evaluating learning trajectories. A case study and two evaluation stages showed that instructor oversight, a high-quality knowledge base, and well-crafted prompts are key to ensuring assessment quality. Distance simulation is transforming surgical education by providing scalable, high-quality training through effective hardware, validated programs, and timely feedback. With AI-enhanced assessment tools and remote feedback, it optimizes learning, mentorship, and faculty efficiency, making surgical training more accessible and sustainable. 9

While there have been several narrative reviews on the use of Artificial Intelligence in OMFS, there is a notable lack of reviews focused on LLMs in this area.4,5 This scoping review aims to fill this gap by exploring the most recent applications of LLMs in OMFS and providing an overview of language model input and output. The research questions for this review are as follows.

These research questions will help us identify the subspecialties of OMFS that are most amenable to digital innovation and highlight the fields where further development might be needed. They also help to explore the various roles that LLMs play in OMFS, thus helping us to assess their impact on clinical outcomes and patient care. Comparing LLM responses with those of experts helps us to evaluate their reliability and accuracy, which are important for the use of LLMs in clinical settings. Understanding the methods used to evaluate the quality of responses from LLMs is essential for ensuring consistency and reliability in their application.

This scoping review provides an understanding of the role of LLMs in OMFS, aiding researchers and practitioners in developing new models or chatbots that can enhance patient and resident education. The remainder of the paper is organized as follows: Section 2 discusses the study protocol and methodology, Section 3 outlines the research findings, Section 4 discusses these findings, Section 5 examines the strengths and limitations of this review, and Section 6 concludes the paper.

Methods

This scoping review followed the PRISMA extension for scoping reviews (PRISMA-ScR) guidelines. 7 The search process and study selection are described in detail below.

Search process

A systematic literature search was performed on February 2, 2024, across seven electronic databases: PubMed, Scopus, ACM, IEEE, Embase, CINAHL, and Google Scholar. The search focused on articles published between November 2022 and January 2024. This period was selected because ChatGPT was launched on November 30, 2022. Because Google Scholar returned a large number of results of mixed relevance, only the first 100 were considered in order to keep the search focused on the study objectives. The reference lists of the articles selected for inclusion were carefully examined to identify additional relevant studies. The search keywords were as follows.

The search strategy is further detailed in the Supplemental file Search Results.pdf.

Inclusion and exclusion criteria

The studies included in this scoping review focused on populations who underwent OMFS procedures, with no restrictions on age, sex, or ethnicity. The interventions considered were LLMs used in the OMFS field, encompassing applications in diagnosis, treatment planning, post-operative care, student education, research, and other relevant areas. Only studies published in English between 2022 and 2024 were included. The types of publications included were peer-reviewed articles, theses, dissertations, conference proceedings, and preprints. Reviews, conference abstracts, proposals, editorials, and commentaries were excluded. No constraints were placed on the publication country, comparators, or outcomes of the LLMs.

Study selection

The articles retrieved from the search were uploaded to the Rayyan Intelligent Review Application developed by Rayyan Systems Inc. 11 This application facilitates efficient collaboration among researchers and expedites the review process. Reviewers can work individually or collaboratively, making independent decisions regarding the inclusion or exclusion of articles. 11 Duplicates were identified and removed from the list, and the remaining studies were evaluated based on their titles and abstracts. The reviewers (SM, MR, and SK) independently assessed the eligibility of each article. Any discrepancies were resolved through mutual consultation and discussion between reviewers.

Data extraction

A data extraction sheet was created using Microsoft Excel, and relevant information was extracted from the final articles included. The following variables were included in the extraction process: the first author’s name, year of publication, month of publication, type of publication, venue (conference or journal name), country, study design, setting, aim, duration or date of study, LLM model, type of disease or subspecialty, subcategory of disease, reasoning mechanism, LLM application, comparison, input to LLM, source of questions, output from LLM, input type, number of questions/input, number of answers/output, fine-tuned or not, number of reviewers, scoring of LLM answers, data analysis tools, inter-rater reliability, statistical analysis, performance values, completeness, accuracy or references, reported outcomes, identified gaps, limitations, future recommendations, and additional comments. A detailed description of the extraction information is provided in the Supplemental file Data Extraction sheet.xlsx. The data extraction process was conducted by the authors (SM and MR), and the extracted data were subsequently reviewed and verified by other authors (SK and ZS).

Data synthesis

The collected data were analyzed and presented using narrative synthesis. The included studies and results are summarized and detailed in the Supplemental file Data Extraction sheet.xlsx.

Results

Search results

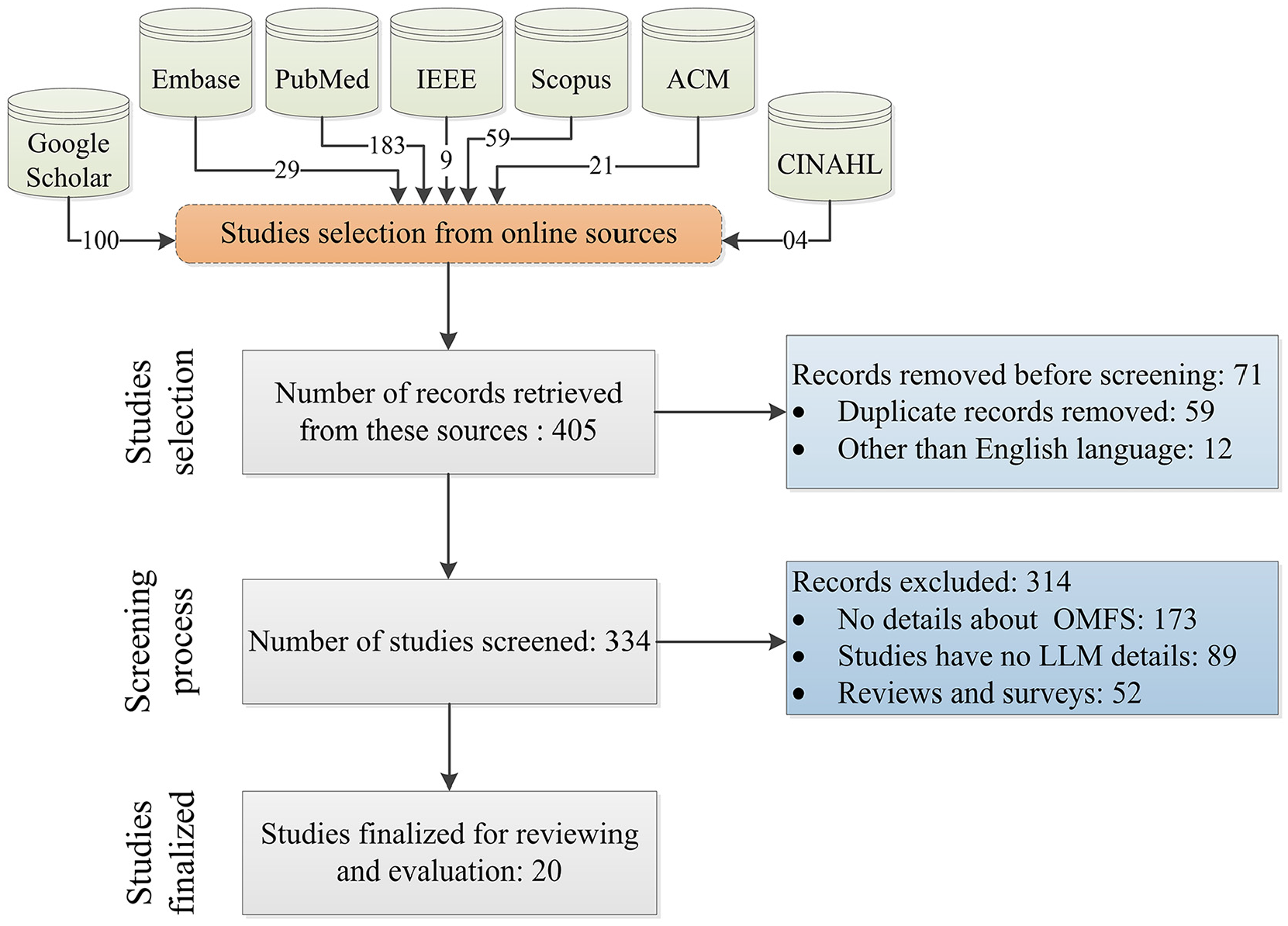

In the initial search across the seven databases, 405 articles were retrieved: PubMed (183), Scopus (59), ACM (21), IEEE (9), Embase (29), CINAHL (4), and Google Scholar (100). After removing 71 duplicate articles, the remaining 334 were screened based on their titles and abstracts. Subsequently, 312 articles were excluded: 173 due to different outcomes, 87 due to different populations, and 52 due to not meeting the inclusion/exclusion criteria regarding publication type. Following this screening process, the remaining 22 studies were sought for retrieval, out of which the full-text PDFs of two studies could not be obtained. Finally, 20 studies were assessed for eligibility, and all 20 studies were included in our final review, as they aligned with our inclusion and exclusion criteria, as shown in Figure 1.

Figure 1. Research protocol followed to execute this scoping review.
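The screening counts reported above can be checked with simple arithmetic. The following is a minimal sketch that only re-uses the numbers stated in the text; the variable names are illustrative and not part of the original study.

```python
# Record counts per database, as reported in the text.
retrieved = {
    "PubMed": 183, "Scopus": 59, "ACM": 21, "IEEE": 9,
    "Embase": 29, "CINAHL": 4, "Google Scholar": 100,
}
duplicates = 71
# Exclusions at title/abstract screening, by reason given in the text.
excluded = {
    "different outcomes": 173,
    "different populations": 87,
    "publication type": 52,
}
not_retrieved = 2  # full texts that could not be obtained

total = sum(retrieved.values())
screened = total - duplicates
sought = screened - sum(excluded.values())
included = sought - not_retrieved

print(total, screened, sought, included)  # 405 334 22 20
```

Each stage matches the figures given in the paragraph: 405 retrieved, 334 screened, 22 sought for retrieval, and 20 included.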

Demographics of included studies

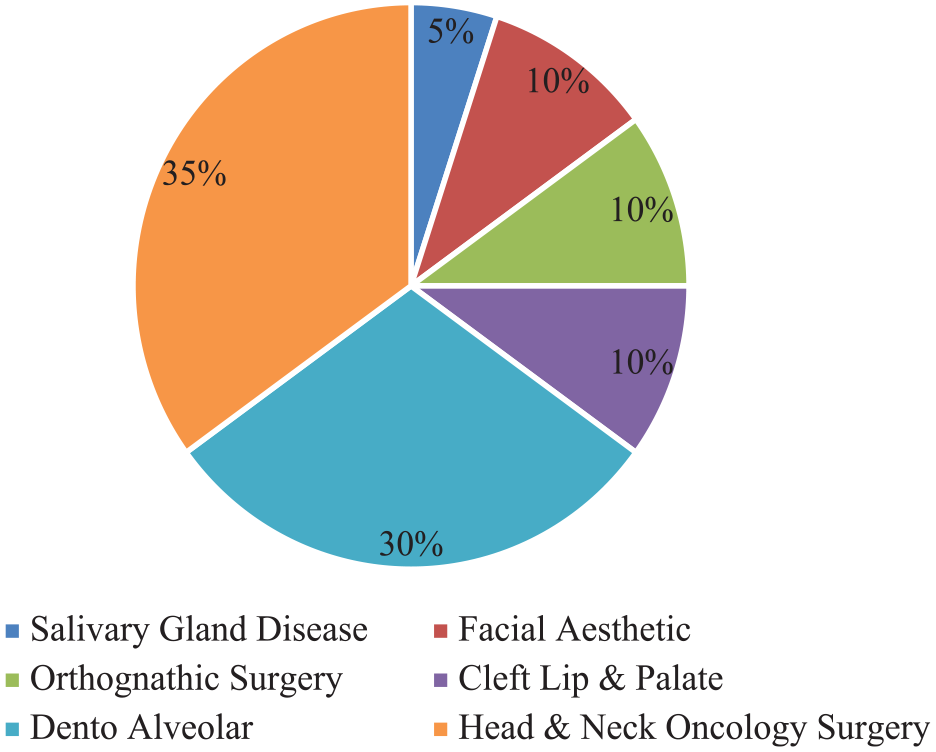

Detailed demographics of the included studies are presented in Table 1. All 20 articles were published in journals.6,9–30 Eighty-five percent of the articles were published in 2023 (n = 17).9–20,22–28,30 Three articles were published in 2024,6,19,27 indicating a growing interest in research in this field. The included studies were published in nine countries, with the USA having the highest number (n = 5, 25%),6,15,17,19,24 followed by Turkey (n = 4, 20%).16,20,27,30 Three studies were published in Italy,13,14,28 and two each in Australia22,23 and Spain;25,26 one study each was published in Brazil, 29 France, 18 Germany, 21 and Taiwan. 12

Table 1. Demographics of included studies.

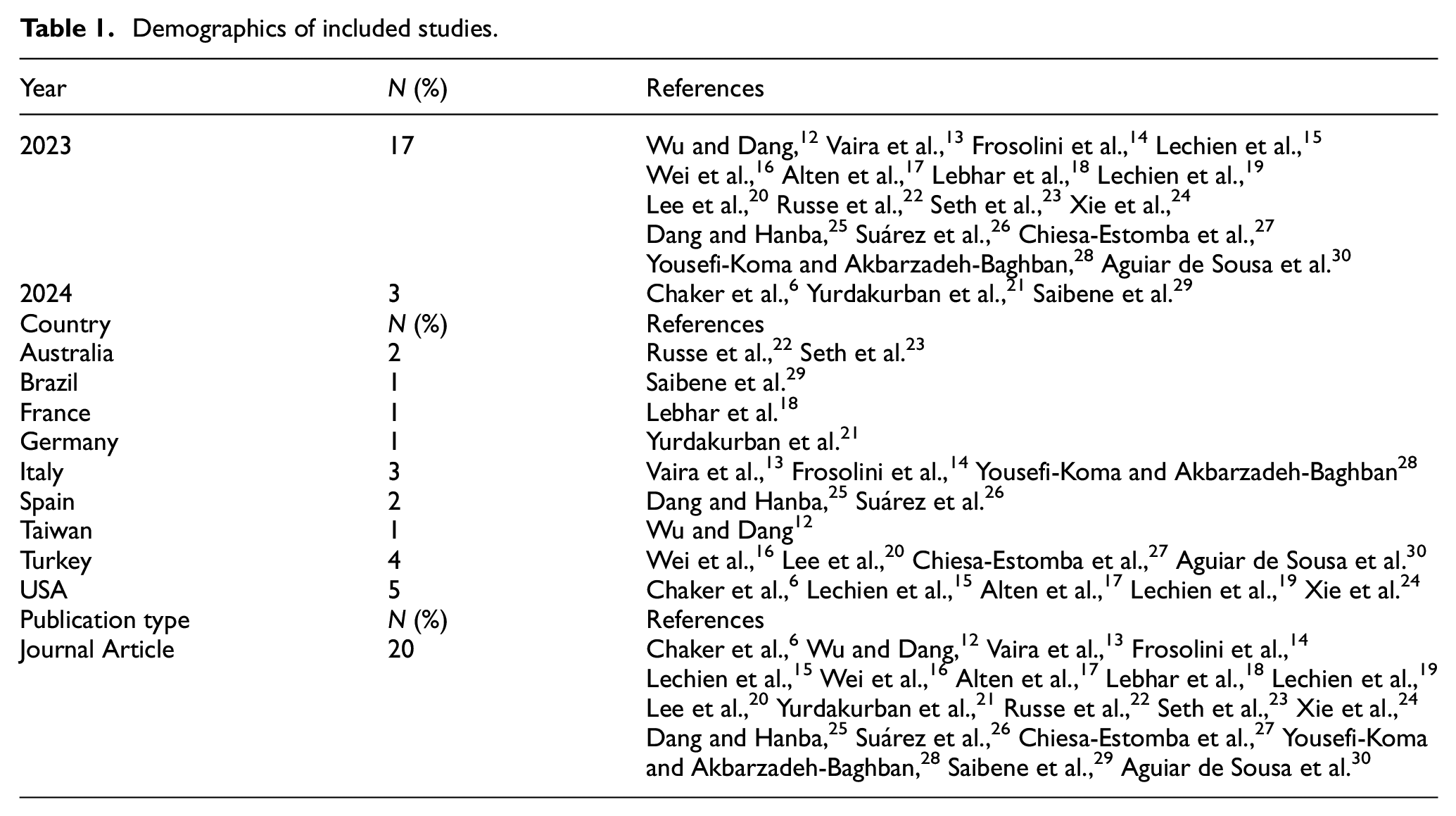

Subspecialty of OMFS

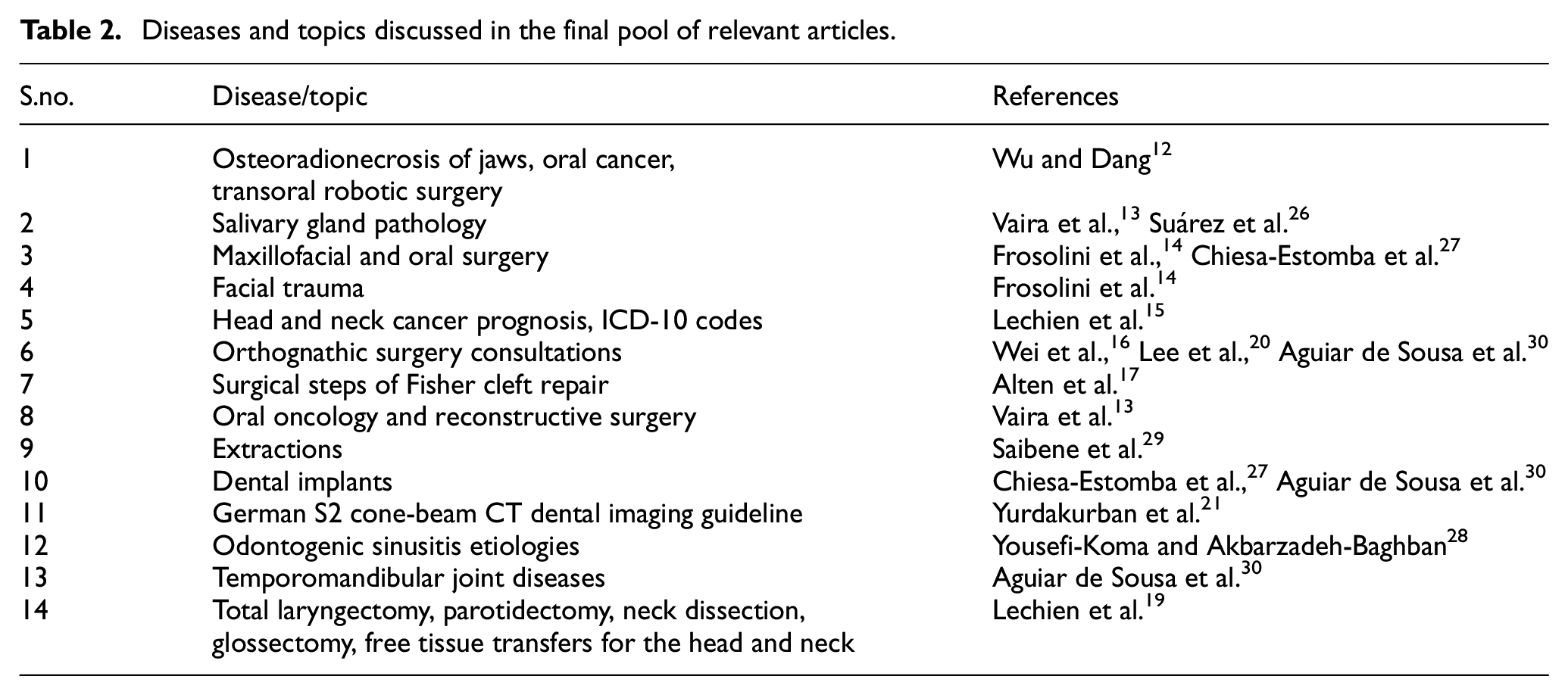

Seven studies (35%) were in the field of head and neck oncology,12–15,18,19,24 while orthognathic surgery and facial esthetic surgery each accounted for 2 (10%) studies.16,20,22,23 Thirty percent (n = 6) of the studies were in the field of dentoalveolar surgery,21,25,27–30 and only 1 (5%) study was in the field of salivary gland disease. 26 The distribution of these studies is illustrated in the pie chart shown in Figure 2. The various diseases, procedures, and surgical topics discussed by the authors include osteonecrosis of the jaw, oral cancer, transoral robotic surgery, 12 salivary gland pathology,13,26 oral oncology and reconstructive surgery, 13 maxillofacial and oral surgery,14,27 facial trauma, 14 head and neck cancer prognosis, ICD-10 codes, 15 orthognathic surgery consultation,16,20,30 steps of Fisher Cleft Lip repair, 17 extractions, 29 German S2 cone beam dental imaging guidelines, 19 odontogenic sinusitis etiologies, 28 dental implants,27,30 temporomandibular joint diseases, 30 and total laryngectomy, parotidectomy, neck dissection, glossectomy, and free tissue transfer for the head and neck, 19 as shown in Table 2.

Figure 2. Evolution of OMFS subspecialty.

Table 2. Diseases and topics discussed in the final pool of relevant articles.

LLM models reported with applications

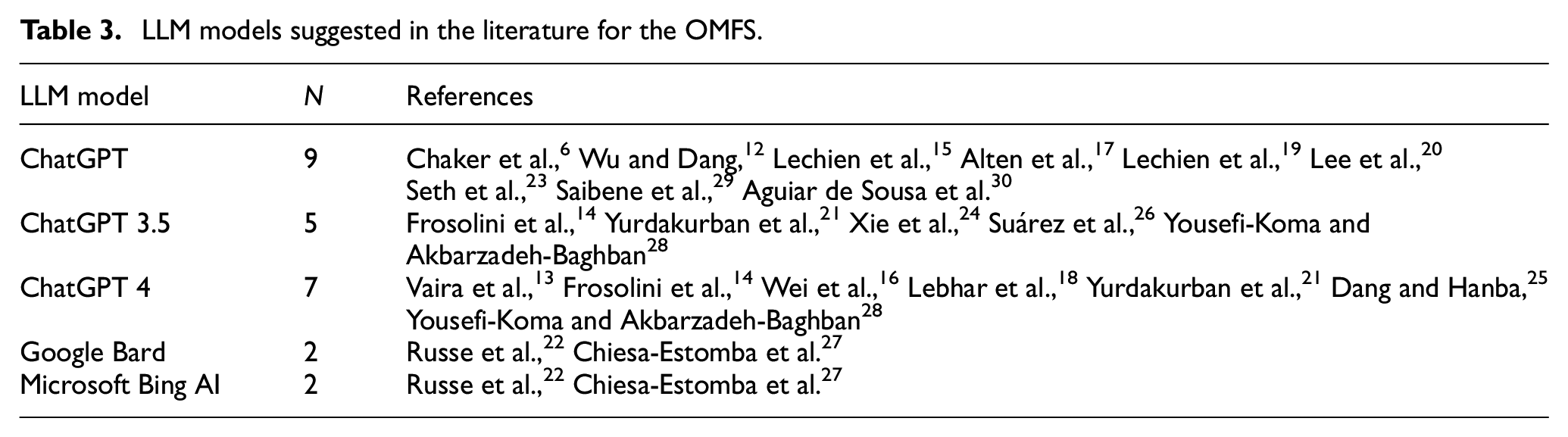

Seven studies (35%) utilized the latest version, ChatGPT4,13,14,16,18,21,25,28 whereas five studies (25%) employed ChatGPT 3.5.14,21,24,26,28 Nine studies did not specify the version of ChatGPT used.6,12,15,17,19,20,23,29,30 Additionally, two articles used Microsoft Bing AI and Google Bard,22,27 as shown in Table 3.

Table 3. LLMs suggested in the literature for OMFS.

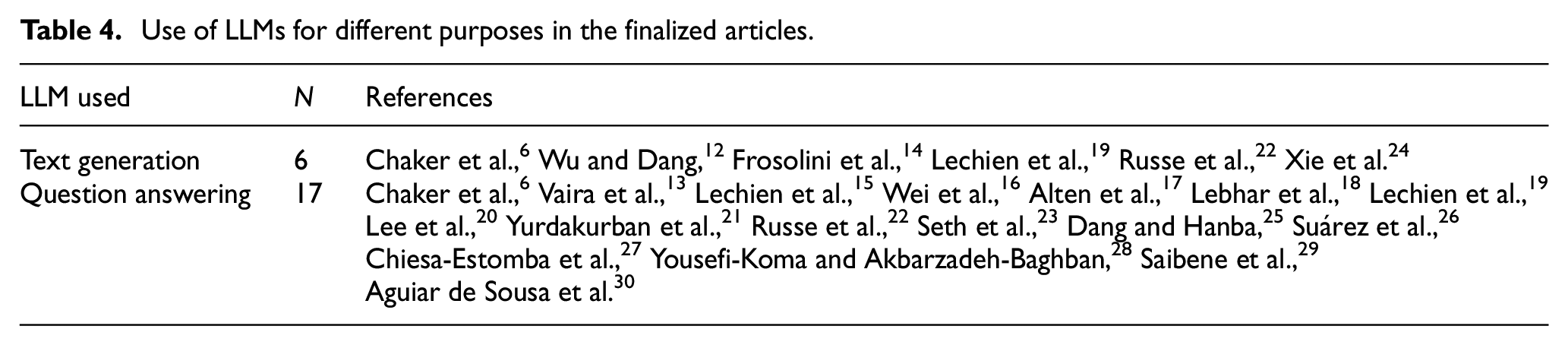

Six studies (30%) utilized LLMs for text generation,6,12,14,19,22,24 whereas 17 of the included studies used them for answering questions,6,13,15–23,25–30 as shown in Table 4.

Table 4. Use of LLMs for different purposes in the finalized articles.

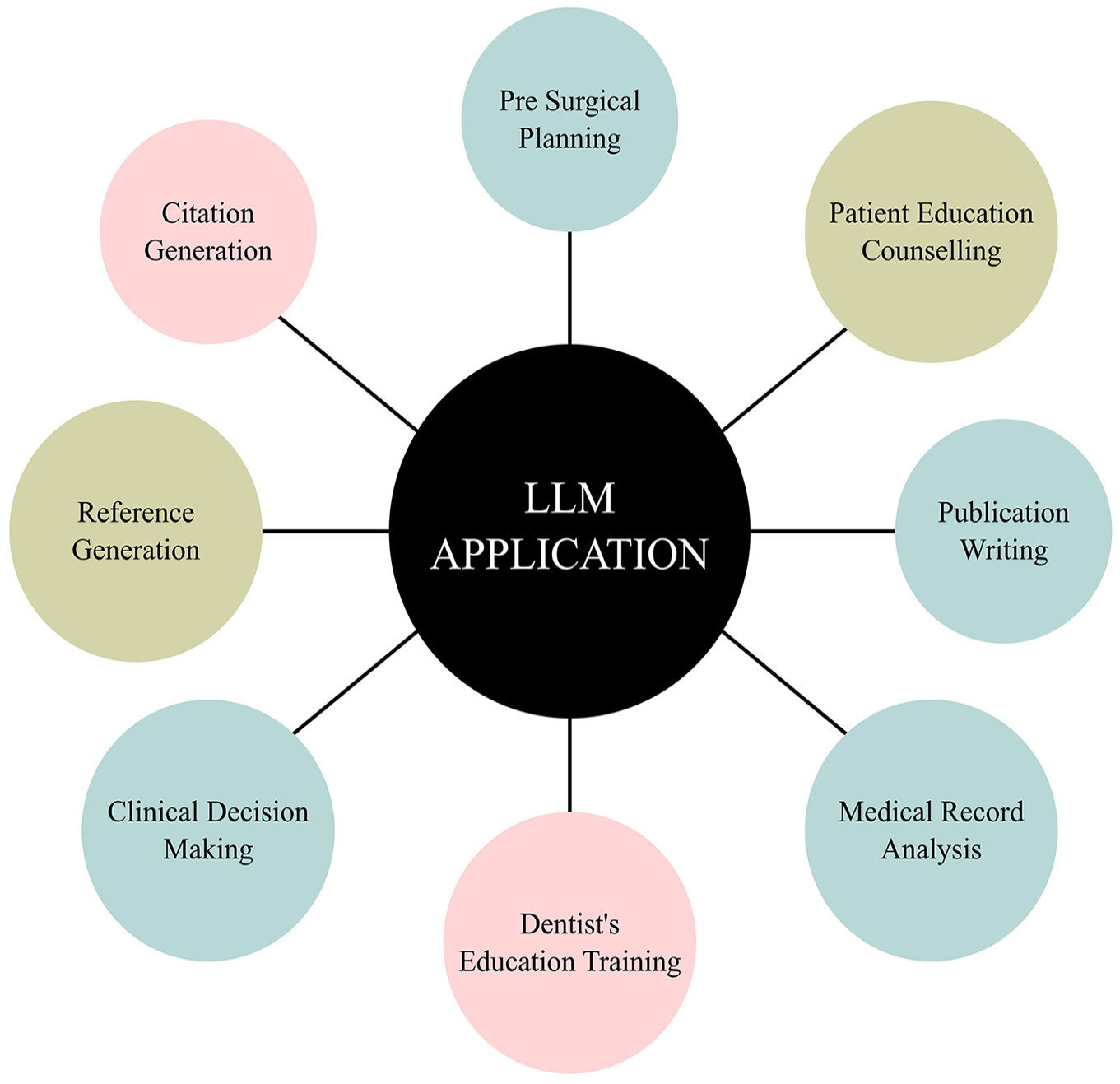

In the selected studies, LLMs were utilized for the following purposes: citation generation, 10 reference generation, 14 clinical decision-making,13,25,26,28 patient education and counseling,6,15,16,19,20,22,23,27,29,30 medical record analysis, 16 dentists’ education and training,17,30 use as a supportive tool, 16 use as a virtual assistant for general dentists, 25 pre-surgical planning, 22 aiding publication writing, and creating a framework for assessing publications. 24 These applications demonstrate the versatile use of LLMs across various aspects of patient care and medical education, as shown in Figure 3.

Figure 3. Applications of LLMs in OMFS.

LLM input and output

The 20 finalized studies posed text-based questions to the LLM. The input to the LLM varied from keywords, 12 to detailed clinical questions and clinical scenarios,13,14,17,18,21,22,25–28 to patients’ questions (preoperative, consultation, and postoperative).6,15,16,19,20,23,29,30 The number of questions posed to the LLM ranged from 1 17 to 159. 13

The sources of questions asked to the LLM varied: they were either commonly searched or frequently asked questions,6,12,15,20,29,30 set by researchers,13,14,17,22,26,28 derived from medical records or examination results, 18 obtained from platforms such as Quora, 27 based on guidelines from the American Society of Plastic Surgeons website,16,23 sourced from the Spanish Society of Oral Surgery, 25 derived from the German S2 guideline for CBCT, 21 or taken from publications in the AHNS Head Neck Fellowship Curriculum. 24

The output from the LLM varied across studies and included references and citations,12,14 surgical steps, 17 treatment options, 26 procedure information, complications,20,23,29 post-extraction symptoms, 27 and publication scoring systems. 24 The number of responses from the LLM ranged from 1 17 to 900. 25 Prompt engineering of LLM responses was conducted in two studies,17,25 whereas a zero-shot learning approach was employed in one study. 21 The LLM answers were compared to answers by experts in seven studies,6,13,17,18,21,26,29 to answers from Google in two studies,15,19 and to MedSearch and Open Evidence in one study. 20

Performance metrics used

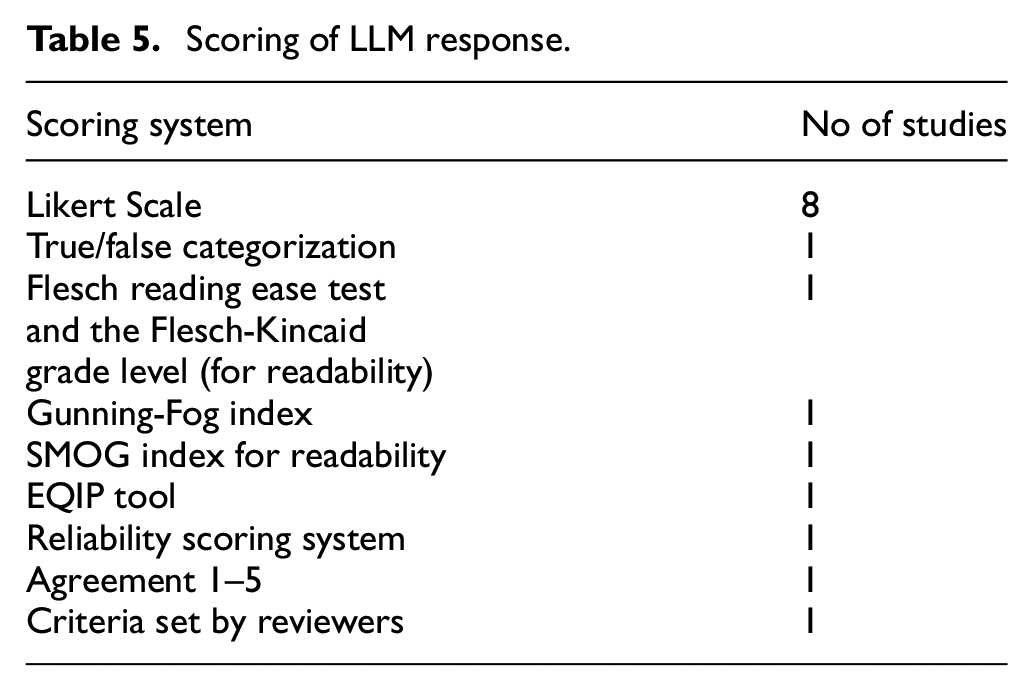

To score LLM answers, Likert scale-based evaluations were used in eight studies.13,15,17,21,25–28 True/false categorization was employed to assess content correctness, 14 while readability was evaluated using the Flesch Reading Ease Test and Flesch-Kincaid Grade Level. 15 Additional assessments included the Gunning-Fog Index, 19 the SMOG index for readability, the EQIP tool, and a reliability scoring system. 20 One study used scoring criteria set by the reviewers, 23 and one study used an agreement score of 1–5, 22 as shown in Table 5.

Table 5. Scoring of LLM responses.
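For readers unfamiliar with the readability indices named above, the sketch below implements their standard published formulas. It is illustrative only: the included studies do not report their implementations, and the word, sentence, and syllable counts are assumed to be supplied by the caller (the function and parameter names are hypothetical).

```python
import math


def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Flesch Reading Ease: higher scores indicate easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level: approximate US school grade."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def gunning_fog(words: int, sentences: int, complex_words: int) -> float:
    """Gunning-Fog Index; complex_words = words of three or more syllables."""
    return 0.4 * ((words / sentences) + 100 * (complex_words / words))


def smog_index(polysyllables: int, sentences: int) -> float:
    """SMOG index; polysyllables = count of words with three or more syllables."""
    return 1.0430 * math.sqrt(polysyllables * (30 / sentences)) + 3.1291


# Hypothetical counts for a short LLM answer (not taken from any study).
words, sentences, syllables, complex_words = 100, 5, 150, 10
print(round(flesch_reading_ease(words, sentences, syllables), 3))
print(round(flesch_kincaid_grade(words, sentences, syllables), 2))
print(round(gunning_fog(words, sentences, complex_words), 1))
print(round(smog_index(complex_words, sentences), 2))
```

Counting syllables reliably is the hard part in practice, which is why the sketch takes counts as inputs rather than raw text.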

The number of reviewers who assessed the answers ranged from 2 to 35. Inter-rater reliability was high in one study, with a Cohen’s kappa of 0.878, 12 indicating almost perfect agreement among raters. Agreement rates of 90% 15 and 89.7% 25 were reported in two other studies.
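As a reminder of how Cohen’s kappa relates to raw agreement, the following minimal sketch computes kappa for two raters from first principles. The ratings are hypothetical and do not come from any included study; on the commonly used Landis and Koch scale, values of 0.81 to 1.00 are labeled "almost perfect".

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty item set")
    n = len(rater_a)
    # Observed proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category
    return (observed - expected) / (1 - expected)


# Hypothetical ratings: 1 = response judged accurate, 0 = inaccurate.
rater_1 = [1, 1, 1, 0, 0, 1, 0, 1, 1, 0]
rater_2 = [1, 1, 1, 0, 0, 1, 0, 1, 0, 0]
print(round(cohens_kappa(rater_1, rater_2), 3))  # 0.8
```

In this toy example the raters agree on 90% of items, but kappa is 0.8 because half of that agreement would be expected by chance, which is why kappa and raw agreement rates are not directly comparable across studies.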

The accuracy of the LLM responses varied across studies. One study 12 found that only 10% of the responses for citation generation contained all correct details. Another study 13 observed higher accuracy for open-ended clinical questions (87.2%) than for closed ones (84.7%). Bibliographic references were poorly executed, with 46.4% being nonexistent. 13 One study 14 reported that ChatGPT version 4 outperformed version 3.5 in providing true answers (74.2% vs 16.6%). Another 15 indicated a preference for Google over ChatGPT, with Google demonstrating a higher quality score. Accuracy rates of 71.7% 25 and 69% 6 were reported in two further studies. One study 21 found that ChatGPT 4 gave 100% correct recommendations, whereas ChatGPT 3.5 gave only 92.5% correct recommendations. The quality of the explanations was also higher for version 4 than for version 3.5 (87.5% and 57.5%, respectively).

Discussion

Principal findings

This scoping review identified the diverse applications of LLMs in OMFS. The evidence collected demonstrates that LLMs, particularly advanced models such as ChatGPT4, are increasingly being used in OMFS with satisfactory results, particularly in patient education and clinical decision-making, and as a supportive tool for practitioners and students. The wide geographic distribution of the studies, with the USA and Turkey contributing the largest number of publications, and most studies published in 2023, indicates the increasing interest in and global relevance of the research topic. The focus on diverse subspecialties within OMFS, notably head and neck oncology and dentoalveolar surgery, reflects the broad applicability of our research findings.

Capabilities of LLM

LLMs have been effective in synthesizing information and generating accurate responses, making them valuable in educational settings for both patients and health care professionals. 8 Their applications range from generating citations and supporting clinical decision-making to enhancing patient education through accessible explanations of medical conditions and treatments related to OMFS, such as cleft lip repair. The use of LLMs for unique purposes, such as medical record analysis and presurgical planning, indicates the surgical community’s growing confidence in the precision and reliability of LLMs. 25 The varied input to the LLMs, from keywords to detailed clinical scenarios, shows the ability of LLMs to handle diverse medical data. This variety also poses challenges in standardizing responses and ensuring consistency among studies. Comparison with answers from experts, Google, MedSearch, and Open Evidence is an effective method to validate LLM responses.

Accuracy and reliability

Despite their high utility, the overall accuracy of LLM responses, especially in complex clinical scenarios, raises concerns, particularly in head and neck surgery and in medical imaging. 30 While some studies have reported high accuracy rates for open-ended questions, the accuracy of bibliographic references was notably poor, which could lead to the dissemination of incorrect information if not properly verified. ChatGPT 4 outperformed version 3.5 by providing more true answers and correct recommendations, and the quality of its explanations was also superior, indicating improved natural language processing abilities. There are significant concerns regarding the “academic hallucination” observed in references related to head and neck oncology, where ChatGPT often produced erroneous or nonexistent references, albeit at a decreased rate with newer versions such as ChatGPT4. The limited use of other LLM tools, such as Google Bard, Gemini, Claude AI, and Microsoft Bing AI, indicates their low popularity in the OMFS community. Some authors noted that LLM responses lacked complete details and exhibited only moderate accuracy. This variability in performance emphasizes the need for the continuous refinement of LLMs and highlights the importance of human oversight in clinical applications.

Challenges in using LLM in OMFS

During our review, we identified several challenges in the application of LLMs in OMFS and academia.

LLMs such as ChatGPT may invent data when responding to niche topic inquiries in which verified information is scarce. This tendency can lead to the dissemination of unverified or fabricated information, which is particularly problematic in surgical and academic contexts, where accuracy is crucial.

The processing and storage of sensitive health information by LLMs pose significant privacy risks. Ensuring the security of patient data and compliance with medical confidentiality laws are essential.

There needs to be clear communication about the medicolegal aspects of using LLMs, such as ChatGPT. Patients and health care providers must understand the legal implications of relying on AI-generated advice.

Patients should be explicitly informed that they are interacting with an LLM tool and not with a human, which is crucial for maintaining trust and managing expectations.

The current inability of LLMs to reliably cite sources and provide evidence-based responses limits their academic feasibility.

Implications for clinical practices

The integration of LLMs into clinical settings could potentially streamline workflow and enhance the efficiency of patient care. For example, their use in patient education and counseling, as demonstrated in salivary gland clinics for sialendoscopy treatment, suggests that LLMs can effectively communicate complex medical information. Integrating AI-based chatbots into dental imaging workflows can potentially enhance the standardization and quality of medical imaging practices. However, the reliance on LLMs for critical tasks, such as clinical decision-making and pre-surgical planning, should be approached with caution because of the variability in accuracy and the critical nature of these tasks.

Gaps and future recommendations

There is a clear need for further studies that compare the responses of LLMs, such as ChatGPT, against those of experts from the head and neck surgical community to validate the accuracy and applicability of LLM-generated information in clinical settings. Longitudinal studies are needed to understand the impact of LLM use over time, and large database studies specific to OMFS could enhance the comprehensiveness and reliability of LLM tools. Rigorous testing protocols and replication studies should be implemented to ensure the consistency, reliability, and generalizability of LLM applications for both patients and dentists.

No intelligent framework has been identified as implemented on mobile devices in the selected finalized studies. The high computational demands of LLMs and the substantial memory needed for OMFS imaging data may explain the limited adaptation of these applications for mobile use. Future advancements are expected to enable the deployment of these techniques on mobile platforms, integrating them with servers to facilitate diagnoses at the patient’s location. 31 This could alleviate pressure on healthcare facilities while supporting medical professionals in delivering home-based care and prescribing appropriate treatments.

There is a notable absence of a standardized scoring system for evaluating LLM responses and assessing their accuracy, highlighting the need for a standardized approach in LLM research. Additionally, there is a lack of specialized LLMs tailored specifically for physicians and other professionals in the medical field.

Strengths and limitations

The strengths and limitations of this review are discussed below.

This review explores the diverse applications of LLMs in OMFS, providing a broad understanding of their uses and benefits in this surgical specialty. It highlights the versatility of LLM tools for improving healthcare delivery, training, and education. The scoping review was conducted with a clear objective, and the research questions were stated explicitly. Furthermore, the study identifies gaps in current research and applications, offering valuable insights for future researchers to address these shortcomings.

Although every effort has been made to ensure the validity of the review, there are some limitations to consider. First, this review included only seven databases, which may have resulted in the omission of relevant studies from other databases. Second, only a limited number of the included studies examined LLMs other than ChatGPT. In addition, the decision to include only papers in English may have led to the exclusion of relevant articles published in other languages.

Conclusion

This review aimed to explore the role of large language models in oral and maxillofacial surgery. We analyzed 20 articles published between 2023 and 2024, with the largest share originating in the USA. The findings highlight the potential of LLMs to enhance various aspects of OMFS practice by offering timely, accessible, and accurate information that can aid in clinical decision-making and patient care. However, it is essential to acknowledge the limitations and challenges associated with their use. Advanced versions of ChatGPT, such as ChatGPT 4, outperformed earlier versions (ChatGPT 3.5 and others). Other LLMs, such as Google Bard, Gemini, Claude AI, and Microsoft Bing AI, were rarely used in the included studies, suggesting that they are less popular in the OMFS community. Some studies reported that LLM responses lacked complete details and exhibited only moderate accuracy. This variability in performance emphasizes the need for the continuous refinement of LLMs and highlights the importance of human oversight in clinical applications. Future research should address the identified gaps by developing specialized LLM applications tailored to OMFS, improving content generation accuracy, establishing a standard scoring system for LLM responses, and ensuring the ethical use of LLMs.

Supplemental Material

sj-zip-1-mac-10.1177_00202940251344491 – Supplemental material for Exploring the capabilities of large language models in oral and maxillofacial surgery

Supplemental material, sj-zip-1-mac-10.1177_00202940251344491 for Exploring the capabilities of large language models in oral and maxillofacial surgery by Sulaiman Khan, Shahira Padinharepattel Mohamed, Md. Rafiul Biswas and Zubair Shah in Measurement and Control

Footnotes

Acknowledgements

Open Access funding provided by the Qatar National Library.

Author contributions

S.K., M.R.B., and Z.S. developed the concept and crafted a research question aimed at retrieving articles from online repositories. S.K. and S.P.M. orchestrated the study’s design and spearheaded the analysis, with assistance from M.R.B. and Z.S. S.K. and S.P.M. authored and refined the original manuscript. All authors participated in the analysis, contributed intellectually, made critical revisions to the paper drafts, and endorsed the final version.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Open Access funding provided by the Qatar National Library.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data used and/or analyzed during the current study is available from the corresponding author on reasonable request.

Supplemental material

Supplemental material for this article is available online.

References
