Abstract

The current system of radiological protection relies on the linear no-threshold (LNT) hypothesis of cancer risk from human exposure to ionizing radiation (IR). Under this tenet, effects of low doses (i.e. those not exceeding 100 mGy for acute exposures, or 0.1 mGy/min of X- or γ-rays for chronic exposures) are evaluated by downward linear extrapolation from regions of higher doses and dose rates where harmful effects are actually observed. However, evidence accumulated over many years clearly indicates that exposure of humans to low doses of radiation does not cause any harm and often promotes health. In this review, we discuss results of some epidemiological analyses, clinical trials and controlled experimental animal studies. Epidemiological data indicate the presence of a threshold and departure from linearity at the lowest dose ranges. Experimental studies clearly demonstrate the qualitative difference between biological mechanisms and effects at low and at higher doses of IR. We also discuss the genesis and the likely reasons for the persistence of the LNT tenet, despite its scientific implausibility and deleterious social consequences. It is high time to replace the LNT paradigm with a scientifically based dose-effect relationship where realistic quantitative hormetic or threshold models are exploited.

Introduction

This work is dedicated to the late Professor Ludwik Dobrzyński, our dear friend and mentor, with whom we held many discussions on the topics we present below. We made no effort to systematically cover those topics at any depth, but by listing them and by presenting some of the views we exchanged, we wished to acknowledge Ludwik’s extensive range and depth of knowledge in these fields. His strong background in nuclear and solid-state physics and his outstanding mathematical skills allowed him to apply the nuclear physicist’s approach to analyse biological effects of ionizing radiation. We shared and enjoyed his clear-cut arguments with bio-medical scientists and statisticians and hope that some of the spirit of these discussions is reflected here. Ludwik often wondered how low doses of ionizing radiation could possibly contribute to any health risk. Instead, he expected such low doses to elicit positive health stimulation – adaptive response or hormesis – at dose ranges somewhat exceeding natural background radiation, due to the very different biological mechanisms operating at low and high dose levels. He also expected complex-system behaviour of biological mechanisms at low doses, thus excluding linearity over that dose range. Like Ludwik, we hope that in radiation protection, the present linear no-threshold (LNT) paradigm will soon be replaced by a more realistic threshold or hormetic dose-effect relationship.

Natural and Man-Made Sources of Low-Dose Exposures

On average, the yearly human exposure to natural sources of ionizing radiation (IR) is estimated at 2.5–3.5 mSv, in terms of equivalent dose. 1 Since, apart from radon and its progeny, most of the natural terrestrial human exposures are from γ-rays, and since currently the predominant man-made sources of human exposures are medical procedures, also involving X- or γ-rays, in what follows, we present most of our discussion in terms of absorbed dose (in Gy) in order to avoid confusion with equivalent and effective doses, the last two being only predictive estimates of individual risk.

Individual absorbed dose of X or γ-rays from various activities.

We note the disparity between the legal dose limits established by the International Commission on Radiological Protection (ICRP) for the general public (1 mSv/y) or for radiation workers (20 mSv/y) and the well-confirmed good health and apparent longevity of residents of high natural background areas, such as Guarapari in Brazil or Ramsar in Iran, where individuals accumulate yearly doses exceeding 200 mSv. 2 Tissues and cells in the human organism are not able to differentiate between the unavoidable exposure due to natural background (say, 3 mGy/y) and the additional milligrays from other sources, such as those due to diagnostic medical exposures. We do not expect any health hazard to result from exceeding the legally enforced yearly limit of 1 mSv by one or two orders of magnitude.

Hence, the following questions arise: Can such low-dose exposures be in any way harmful to the individual? Should we strive to avoid any additional (i.e. other than the unavoidable natural background) radiation that we may be confronted with in our daily activities? In popular opinion, unfortunately also shared by many medical practitioners and some professionals, the unequivocal answer to both these questions is ‘yes’. But does such an exaggerated fear of radiation (‘radiophobia’) result from any rational or evidence-based arguments or merely from blind acceptance of an outdated scientific paradigm? Is radiophobia intentionally stimulated to limit the proliferation of nuclear weapons or the development of nuclear power for civilian purposes? Does promulgation of the LNT paradigm serve any vested interests of international commerce (such as producers and suppliers of energy) or is it promulgated by misconception – or even fraud – perpetrated by some dishonest, ill-informed, and/or politically or ideologically driven individual scientists or regulatory bodies?

The Origin and Development of the LNT Hypothesis

In an attempt to respond to some of these questions and concerns, let us recall the 1920s, the time when Hermann Joseph Muller (1890–1967), a renowned American geneticist, studied heritable phenotypical changes in the offspring of the fruit fly (Drosophila melanogaster) irradiated with very high (lethal for humans) doses of X-rays. Even though Muller 3 was unable at that time to directly demonstrate the presence of any alterations in the fruit fly DNA, he unequivocally concluded that the phenotype changes he observed in the offspring of the flies must have resulted from radiation-induced mutations in the parents’ genetic material. In fact, Muller confused observation (i.e. transgenerational phenotype changes) with mechanism (i.e. gene mutations). Muller also insisted that the frequency of such gene mutations depended linearly and without any threshold on the X-ray dose. He may have based his conviction on the ‘one-hit’ target theory developed in the 1920s (as reviewed in Ref. 4). This theory offered a statistical interpretation and a quantitative model of the biological action of ionizing radiation – namely, that biological effects of such radiation resulted from single statistical ‘hits’ of radiative energy to a biological target – the cell nucleus or its genetic material. The ‘one-hit’ target theory indeed predicts a linear response with no threshold. Muller wished to be recognized as a pioneer in the claim that gene mutations, being the underlying mechanisms of evolution, are induced by the omnipresent ionizing radiation. Yet, he never admitted that he was unable to observe any of his ‘mutations’ after low doses of radiation, such as those universally present in the environment. 5 Muller was awarded the 1946 Nobel Prize in Physiology or Medicine. In his Nobel lecture, he nevertheless professed that the number of radiation-induced mutations increased linearly with dose without any threshold – including the low-dose range.
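
The linearity predicted by the ‘one-hit’ target theory can be made explicit with a short numerical sketch. It assumes the standard single-hit formulation, in which hits on the target are Poisson-distributed with a mean proportional to dose, so that the probability of at least one hit is 1 − exp(−αD). The sensitivity coefficient `ALPHA_PER_GY` and the dose values are hypothetical, chosen only for illustration, and the function names are ours rather than taken from the cited literature.

```python
import math


def one_hit_probability(dose_gy: float, alpha_per_gy: float) -> float:
    """Probability of at least one hit on the target, assuming hits are
    Poisson-distributed with mean alpha_per_gy * dose_gy."""
    return 1.0 - math.exp(-alpha_per_gy * dose_gy)


def linear_term(dose_gy: float, alpha_per_gy: float) -> float:
    """First-order (low-dose) approximation of the one-hit probability."""
    return alpha_per_gy * dose_gy


# Hypothetical sensitivity coefficient; not a measured value.
ALPHA_PER_GY = 0.05

for dose_gy in (0.0, 0.01, 0.1, 1.0, 10.0):
    exact = one_hit_probability(dose_gy, ALPHA_PER_GY)
    approx = linear_term(dose_gy, ALPHA_PER_GY)
    print(f"D = {dose_gy:5.2f} Gy  one-hit = {exact:.6f}  linear = {approx:.6f}")
```

In this model the response is zero only at zero dose and is practically indistinguishable from a straight line at low doses, which is why the theory yields a linear relation without a threshold. The model contains no repair or adaptive processes; whether such a description applies to living organisms is precisely what is disputed in this review.
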
Muller’s Nobel Prize came shortly after the nuclear attacks on Hiroshima and Nagasaki, followed by the onset of the Cold War and the nuclear weapons race. These events were to dominate international politics in the years to come. Eisenhower’s Atoms for Peace initiative of 1953, which promised nuclear energy ‘too cheap to meter’, was soon overshadowed by the terrifying image of terminal destruction of humanity from a global nuclear conflict. Thus, Muller’s position and statements not only laid the foundations for the LNT dogma, but also for the resulting radiophobia – perhaps with some intent to limit the proliferation of nuclear weapons. The antinuclear ‘Ban-the-Bomb’ social movement arose at that time, later to oppose nuclear energy on ‘environmental’ grounds (the ‘Green Movement’). But the oil and automobile industries may have also had a vested interest in further promoting this ‘anti-nuclear’ position – to weaken or eliminate the impact of nuclear energy as a competitor. It is perhaps no coincidence that the Rockefeller Foundation hand-picked and sponsored the activities of the first (set up in 1955) U.S. National Academy of Sciences (NAS) Committee called Biological Effects of Atomic Radiation (BEAR). In 1960, the Ford Foundation donated $250,000 (at that time a very significant sum) to the ICRP.5-7 These donations could have affected the deliberations and decisions of the members of the BEAR I Genetics Panel. In 1956, this Panel recommended basing the estimation of the risk of radiogenic cancer on the assumption that the risk is linearly proportional to the dose of radiation, without any threshold, and that even a minute absorbed dose may be carcinogenic. This meant that the 500 mGy/y dose limit for nuclear workers, in place since 1934, had to be significantly reduced.
The 1957 Science paper by Edward B. Lewis, suggesting that spontaneous incidence of leukaemia may result from exposure to natural background radiation, 8 was most influential in fortifying the acceptance of the LNT model. Although the paper was later criticized as erroneous, it became a scientific ‘stalking horse’ for the BEAR I Panel. 9 Indeed, the last mutation of BEAR, the NAS’s Biological Effects of Ionizing Radiation (BEIR) Committee to Assess Health Risks from Exposure to Low Levels of Ionizing Radiation in its 2005 report 10 continues to support the LNT hypothesis for radiation protection purposes – despite ample evidence of its scientific failure. 11 Hence, the modern LNT-based system of radiation protection, which by now has been globally implemented within the legal systems of all the United Nations and European Union member countries, was jointly developed by successive BEAR/BEIR committees and the ICRP.

Also in the year 2005, a Joint Report of the French National Academy of Medicine and the French Academy of Sciences appeared. 12 Using the available epidemiological evidence, it concluded that the LNT relationship ‘is not based on biological concepts consistent with our current knowledge’ and that it should not be employed ‘for assessing by extrapolation the risk associated with low and even more so, with very low doses of ionizing radiation’.

In what follows, we review some examples of epidemiological, clinical and experimental studies to support our view that the position of the French Academies is indeed scientifically justified. We also reflect on the societal impact of promulgating the LNT paradigm, especially through legal enforcement of the LNT-based system of radiation protection.

Research and Data Invalidating the LNT Paradigm

Evidence from epidemiology

Epidemiological analyses of morbidity and mortality among survivors of the atomic bombings of Hiroshima and Nagasaki are considered to be the most important data for evaluating risk of exposures to low-LET radiation at low to moderate doses. Since 1950, the inhabitants of those cities present at the time of these attacks, and their offspring, have been carefully monitored throughout their lives within the Life Span Study (LSS) project. The LSS cohort includes survivors who were located within 2.5 km of the hypocentres at the time of the bomb explosions (‘irradiated’ subjects whose absorbed colon doses 2 were estimated to exceed 5 mSv), and a similar-sized sample of survivors (‘controls’) who at the time of explosions were located at distances of 3–10 km from the hypocentres, and whose absorbed doses were considered to be negligible (below 5 mSv). Altogether, as of 2003, there were 49,111 ‘irradiated’ subjects, of whom, 31,650 (64.4%) absorbed low doses (5–100 mSv). 13 Revised evaluations of the LSS cohort revealed an elongated lifespan and reduced cancer mortality after exposure to low-dose radiation from A-bombs in 'irradiated subjects', relative to their 'control' counterparts. Also, mortality from all causes among women present in Nagasaki on the day of the bombing who had incurred low doses of IR, was lower compared to Japanese women exposed to no other radiation than natural.14-20 Finally, within this 62-year prospective cohort study, no deleterious health effects could be observed in children of people who were exposed to the nuclear bombs in Hiroshima and Nagasaki and whose gonads accumulated on average 264 mGy of radiation. 21

Results of the repeatedly revised analyses of the health outcome of the Chernobyl nuclear power plant disaster in 1986 also question the LNT hypothesis. As reviewed by the United Nations Scientific Committee on the Effects of Atomic Radiation (UNSCEAR), until 2012, acute health effects of ionizing radiation released from the burning reactor affected no more than seventy persons. Within this number were forty-seven fire fighters and other rescuers who died of lethal doses and fewer than twenty persons who died due to acute radiation syndrome (ARS) or to other concurrent diseases exacerbated by absorption of ionizing radiation.2,22,23 Notably, in 2000, the mortality rate from all causes among 134 ARS survivors was lower than that in the ‘control’, unexposed population (1.09% vs 1.4%). In fact, no increase in the general mortality rates was observed in the ‘post-Chernobyl populations’ evacuated from the most contaminated areas in Ukraine, Belarus and Russia, as compared to the general populations of these countries. The only elevated radiation-associated morbidity was the enhanced incidence of thyroid cancer in individuals who were children or adolescents at the time of the accident. To explain this enhancement, a number of factors have been proposed, including (a) high susceptibility of the thyroid gland during childhood to the carcinogenic consequences of absorption of radiation, especially over iodine-deficient areas, (b) increased spontaneous incidence of thyroid cancer between 35 and 65 years of age, (c) psychological stress due to the perceived cancer risk after the accident among the exposed population who voluntarily reported for diagnostic examinations and (d) qualitative and quantitative improvement of diagnostic methods (i.e. better equipment provided by some Western countries and a massive screening effort) which resulted in increased detection of microtumours (or incidentalomas) which are harmless and are usually discovered only at the time of autopsy. 24 In addition, not all of the detected thyroid cancers should be associated with radioiodine since, as noted by UNSCEAR, ‘in the absence of a biomarker, it is impossible to distinguish a radiation-related thyroid cancer from one that develops from other causes’. 25 Fortunately, most thyroid tumours are either inactive (dormant) or can be effectively treated. Consequently, within about nineteen thousand cases of thyroid tumours detected between 1991 and 2015 over all areas of Belarus and Ukraine, and over the four most contaminated oblasts of the Russian Federation, the mortality rate was less than 1%.24,26

In the early decades of the last century, tuberculosis (TB) patients were treated with artificial pneumothorax, and their lungs were frequently checked by fluoroscopy. In their detailed analysis, Anthony B. Miller and co-workers 27 examined breast cancer mortality in a cohort of 31 710 women treated for TB at Canadian sanatoria between 1930 and 1952. Breast cancer mortality (expressed as the standardized mortality ratio, SMR) among those patients who received lung doses ranging between 100 and 190 mGy was markedly lower than among those who received X-ray doses below 90 mGy or above 200 mGy. Strangely, the authors failed to notice this difference and concluded that their results were ‘most consistent with a linear dose-response relation’.
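
For readers unfamiliar with the measure, the standardized mortality ratio can be sketched as follows. This is a minimal illustration of indirect standardization, in which observed deaths in a cohort are divided by the deaths expected had the cohort experienced the reference-population rates. The age strata, rates and counts below are invented for the example and are not data from Ref. 27; the function names are likewise ours.

```python
def expected_deaths(person_years_by_stratum, reference_rate_by_stratum):
    """Deaths expected in the cohort if each stratum (e.g. age group)
    had the death rate of the reference population."""
    return sum(
        person_years_by_stratum[stratum] * reference_rate_by_stratum[stratum]
        for stratum in person_years_by_stratum
    )


def standardized_mortality_ratio(observed_deaths, expected):
    """SMR = observed / expected; values below 1 mean fewer deaths than expected."""
    if expected <= 0:
        raise ValueError("expected deaths must be positive")
    return observed_deaths / expected


# Hypothetical cohort: person-years of follow-up per age stratum.
person_years = {"40-49": 12000.0, "50-59": 8000.0}
# Hypothetical reference-population death rates per person-year.
reference_rates = {"40-49": 0.0005, "50-59": 0.0010}

expected = expected_deaths(person_years, reference_rates)
smr = standardized_mortality_ratio(7, expected)
print(f"expected deaths = {expected:.1f}, SMR = {smr:.2f}")
```

An SMR computed in this way for each dose group is what allows mortality in the different dose ranges to be compared against a common reference.
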

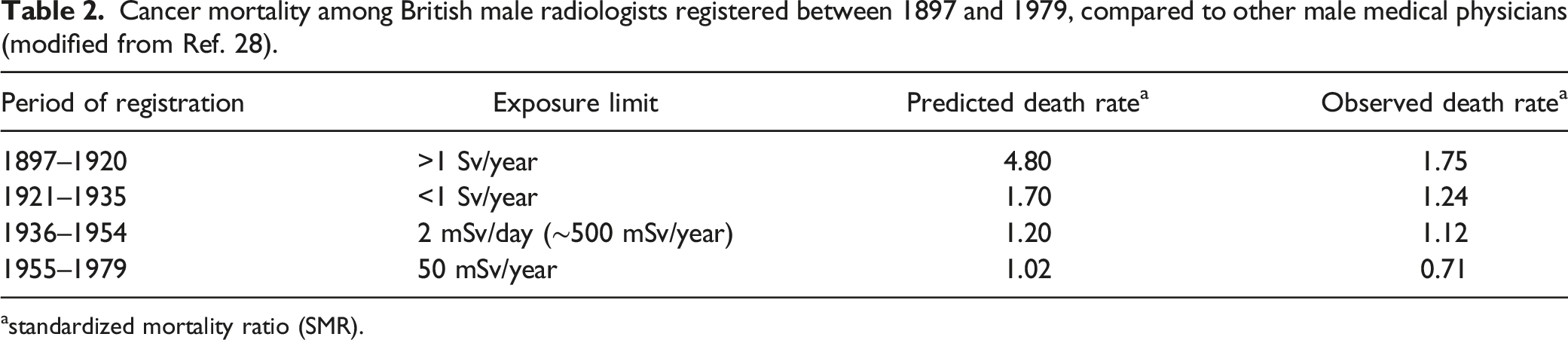

Cancer mortality among British male radiologists registered between 1897 and 1979, compared to other male medical physicians (modified from Ref. 28).

a Standardized mortality ratio (SMR).

Deaths from cancer among various groups of nuclear workers from the USA, Canada and Great Britain (modified, after Ref. 29).

These and other such data are typically either ignored by the LNT proponents or explained by the so-called ‘healthy worker’ effect. 30 A recent large-scale analysis (INWORKS) of over 300 000 workers in the nuclear industries of France, the United Kingdom and the USA suggests a linear increase in the rate of cancer deaths in this cohort with increasing radiation exposure, including the 0–100 mGy dose range. 31 However, valid objections with respect to the applied methodology and to the missing evaluations of biological responses to the irradiation were raised by several authors who supplied a detailed critique of the INWORKS study design and of its published results, indicating that these results do not support the LNT dose-effect relationship.20,32-34

Incidence of lung cancer vs concentration of radon in breathing air among inhabitants of Worcester County, USA (modified, after Ref. 41).

a Odds ratios and 95% confidence intervals (CI) obtained from univariate conditional logistic regression.

**P ≤ .05.

The results of these radon studies were later confirmed by a group of investigators from the Polish National Centre of Nuclear Studies led by Professor Ludwik Dobrzyński, who in their sequential analyses of the available data demonstrated that breathing air containing even several hundred Bq/m³ of radon is unlikely to cause lung cancer.42,43 Despite these clear indications, which pertain to indoor and outdoor radon concentrations in the air, Rn-222 and its progeny continue to be considered as one of the leading causes of lung cancer. 36

Recently, a large-scale statistical study to evaluate the impact of natural background radiation on human longevity and cancer mortality has been published. 44 Individual values of dose equivalents (in mrems or mSv) from terrestrial and cosmic sources (including radon), averaged over a 5-year period (2011–2015), were evaluated for the entire population of all 3139 U.S. counties (involving over 320 million inhabitants) using the ‘radiation dose calculator’ 4 of the U.S. Environmental Protection Agency (EPA). Data on cancer statistics over the same period were collected from the U.S. Cancer Statistics (USCS), the official federal statistics on cancer incidence and deaths, produced by the Centers for Disease Control and Prevention (CDC) and the National Cancer Institute (NCI). The results of this analysis showed that life expectancy, the most integrative index of population health, was extended by some 2.5 years for individuals residing in areas with relatively higher levels of background radiation (≥1.8 mSv/year), compared with those receiving lower (≤1 mSv/year) yearly doses from this source. As indicated by the authors, such lifespan extension, established with high statistical significance (p < .005), could be associated with a decrease in cancer mortality from common malignancies, such as lung, pancreas and colon cancers for either sex, and brain and bladder cancers, for males only. 44 While the findings of this study could be influenced by the inherent assumptions in the EPA model applied in the calculation of background radiation doses, such results are in accord with and complement earlier observations from some of the world’s high background natural radiation areas in India, Brazil, China, and Iran where no, or a reverse, relationship was observed between exposure to elevated (up to 50 mSv/y) natural background radiation and the rate of cancer mortality (reviewed by Ref. 1).

The results of David et al. are exceptional in that they reliably (i.e. with adequate statistical significance) demonstrate the ‘negative’ trend between low-level background exposure and its health outcome. In most epidemiological studies, the radiation-exposed cohorts for which absorbed doses could be established with any credibility are too small to yield a convincing dose-effect relationship. Indeed, to establish with reasonable certainty any relationship between induction of cancer and exposure to small doses of ionizing radiation, the investigated cohorts of irradiated subjects must be very large.45-47 Yet, in most of such statistical analyses, only a one-sided (i.e. ‘positive’) trend is considered. Before accepting a ‘negative’ trend, proponents of the LNT hypothesis demand that a statistically significant ‘negative’ dose-effect association be demonstrated. Thus, they neglect the general principle that statistics should be applied to disprove and not to prove a hypothesis – that is, to judge how unlikely either of these trends is, the less unlikely winning the argument. Naturally, a ‘zero’ (i.e. non-existent or ‘flat’) dose-effect hypothesis should also be considered over the lowest dose ranges, to test the likelihood of a threshold response over these ranges. In such analyses performed by pro-LNT authors, invariably, the pre-assumed downward-extrapolated linear dependence is drawn directly through the dose = 0, effect = 0 point. In view of the unavoidable natural background, there are no experimental data to support such a procedure. Also, the choice of data ‘binning’ in order to reduce the number of data points in such an analysis may significantly affect the slope of the resulting dependence. 43 Proponents of LNT often resort to studies biased ab initio (i.e. those in which the validity of the LNT model is never contested), or where circular reasoning, ‘doctoring’ of data in statistical analyses or other methodological shortcomings are apparent. 48 At the same time, any evidence of thresholds or adaptive/hormetic responses to radiation is played down or totally ignored. In general, studies claiming adverse effects are still more likely to gain recognition (and funding) than those which consider the whole spectrum of possible outcomes of exposure to radiation, including no effect.
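
The point about forcing the fitted line through the origin, and about testing a ‘flat’ hypothesis at the lowest doses, can be illustrated with a small numerical sketch. The data below are entirely hypothetical (excess effect in arbitrary units, zero within scatter up to 100 mGy), and the comparison by sum of squared residuals is a deliberately simple stand-in for a proper statistical test; none of this reproduces any analysis cited here.

```python
def slope_through_origin(doses, effects):
    """Least-squares slope of effect = slope * dose, i.e. a straight line
    forced through the (dose = 0, effect = 0) point."""
    return sum(d * e for d, e in zip(doses, effects)) / sum(d * d for d in doses)


def sum_squared_error(doses, effects, model):
    """Sum of squared residuals of model(dose) against the observed effects."""
    return sum((e - model(d)) ** 2 for d, e in zip(doses, effects))


# Hypothetical observations: dose in mGy, excess effect in arbitrary units.
doses_mgy = [10, 20, 50, 100, 200, 500, 1000]
excess_effect = [-0.2, 0.1, -0.1, 0.0, 1.0, 4.0, 9.0]

# Fit the through-origin line on all points, as in a downward extrapolation.
lnt_slope = slope_through_origin(doses_mgy, excess_effect)

# Restrict the comparison to the low-dose range (here taken as <= 100 mGy).
low_doses = [d for d in doses_mgy if d <= 100]
low_effects = [e for d, e in zip(doses_mgy, excess_effect) if d <= 100]

sse_lnt_low = sum_squared_error(low_doses, low_effects, lambda d: lnt_slope * d)
sse_flat_low = sum_squared_error(low_doses, low_effects, lambda d: 0.0)

print(f"through-origin slope: {lnt_slope:.5f} per mGy")
print(f"low-dose SSE, extrapolated line: {sse_lnt_low:.3f}")
print(f"low-dose SSE, flat (zero) model: {sse_flat_low:.3f}")
```

With these invented numbers the high-dose points determine the slope, and the extrapolated line describes the low-dose points worse than the flat model does. A real analysis would also have to handle uncertainties, confounders and the sensitivity of the result to the chosen dose bins.
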

Evidence from low-dose whole-body irradiation in clinical conditions

Early in the 20th century, soon after Röntgen’s discovery, external X-ray irradiations were applied to treat patients with systemic and/or advanced neoplasms. For technical reasons, at that time only whole-body or half-body irradiations could be performed. As recently reviewed, 49 by 2020 some fifty such procedures had been reported, involving over 2000 patients. These trials, in which the majority of patients were exposed to low-level radiation, offered a unique opportunity to study low-dose effects in humans. The earlier enthusiasm to perform such cancer therapy soon ended with the advent of chemotherapy in the 1940s and has never returned. Most of the treated radiotherapy patients presented with advanced-stage lymphomas and leukaemias, but metastasizing solid tumours were also treated by whole- or half-body exposures to X- or γ-rays. Typically, these were applied in fractions of 0.1–0.25 Gy several times a week to total doses ranging from 1.5 to 2.0 Gy (reviewed in Ref. 49). There were complete remissions in over 50% and sometimes in over 90% of the treated patients – results comparable to, or better than, those obtained with chemotherapy. In contrast to the latter, low-level whole-body radiotherapy proved to be almost totally devoid of serious side effects, was less expensive, and the remissions occurred earlier and lasted longer. Unfortunately, the LNT-based rules of radiation protection (quite inappropriate in the case of radiotherapy) along with the LNT-based concern that new cancers would be generated, and the associated radiophobia – which still affects so many medical practitioners – have prevented any further testing and application of such low-dose radiotherapy on a larger scale. Another factor was (and still is) the fairly evident pressure from the pharmaceutical industry, naturally promoting chemotherapy. Thus, the opportunity has been lost of providing a firm evidence-based initiative to standardize the procedures of whole-body low-level exposures to low-LET IR as a treatment modality for cancer and other systemic diseases. 49

Evidence from controlled experimental animal studies

To arrive at a mechanistic base for the epidemiological observations, one must resort to well designed and fully controlled experiments performed on mice and rats, even though results of such experiments cannot be directly translated to humans. Rodents are generally more radio-resistant than we are. 34 Among the earliest reports of cancer control and life-prolonging effects in mice exposed to low doses of IR was the work of Lorenz’s group, published in the 1950s. 50 More recently, Japanese investigators from the Medical Faculty of the Tokyo University demonstrated that single or multi-fractional irradiation of mice at total doses ranging between 0.1 and 0.5 Gy of X-rays suppressed the development of lung metastases from a fibrosarcoma (a malignant tumour of the connective tissue) transplanted 12 days earlier to the legs of the animals. 51 Somewhat later, Hosoi and Sakamoto 52 of Tohoku University School of Medicine in Sendai, Japan, showed that both spontaneous and artificially induced metastases of squamous cell carcinoma in the murine lungs were effectively suppressed by whole-body irradiation of mice with X-rays at doses ranging between 0.15 and 0.20 Gy; this procedure was effective if irradiations were performed between nine hours prior to and up to three hours after implanting the mice with the carcinoma cells. These results were confirmed by the Polish group in Warsaw, at the Department of Radiobiology and Radiation Protection of the Military Institute of Hygiene and Epidemiology, using different cancer cells and different strains of mice: here, the animals were irradiated with X-rays at single doses of 0.1 or 0.2 Gy and, for comparison, at a single dose of 1 Gy. Several repetitions of this experiment consistently demonstrated that, in contrast to the 1.0 Gy-irradiation, single low-dose exposures significantly inhibited the development of neoplastic colonies in the lungs of the mice intravenously injected with syngeneic cancer cells (Figure 1). 53 Similar results were obtained under a fractionation regime: ten daily fractions of 0.1 Gy to a total dose of 1 Gy suppressed the development of tumour colonies. 54

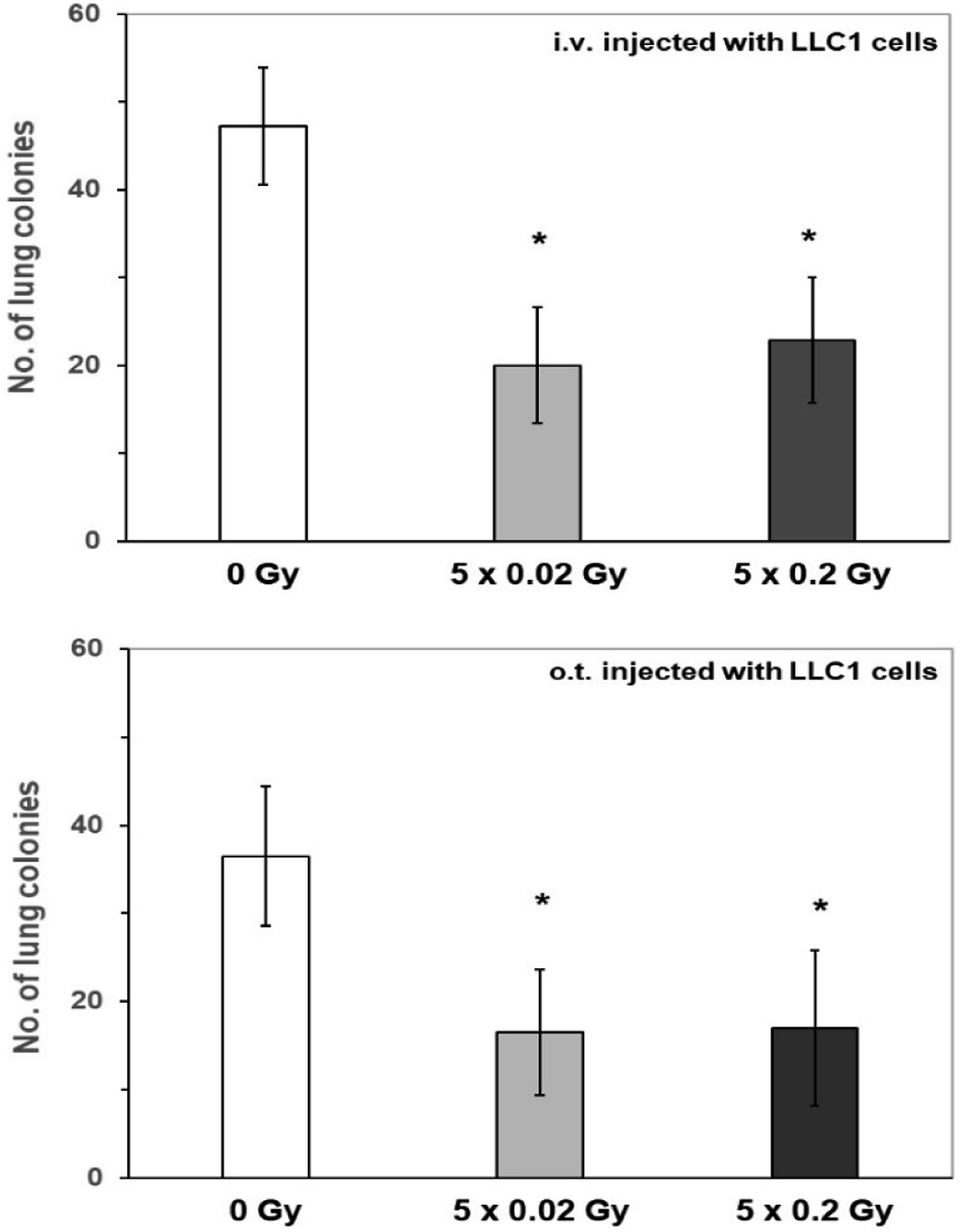

Recently, these observations were further substantiated in an experimental model in which syngeneic lung cancer cells were injected into mice by three different routes: intravenously, orthotopically (i.e. directly into the pulmonary tissue), or subcutaneously. In all these cases, five consecutive irradiations of mice at 0.02 or 0.2 Gy of X-rays per day significantly suppressed the growth of murine tumour cells, either in the lungs (Figure 2) or under the skin (data not shown). 55

Figure 1. Development of neoplastic colonies in the lungs of mice treated with single exposures of X-rays at 0.1, 0.2 or 1.0 Gy, compared to the control rate (100%) observed in the unexposed mice. Shown are results of three independent experiments; * indicates significant (p < 0.05) difference against the control value (modified, after Ref. 53).

Figure 2. Tumour colonies in the lungs of mice after intravenous (i.v.) or orthotopic (o.t.) injection of LLC1 cells followed by five daily irradiations with X-rays at 0.02 or 0.2 Gy/day. * indicates statistically significant (p < 0.05) difference against the result obtained in the control (0 Gy) group (modified, after Ref. 55).

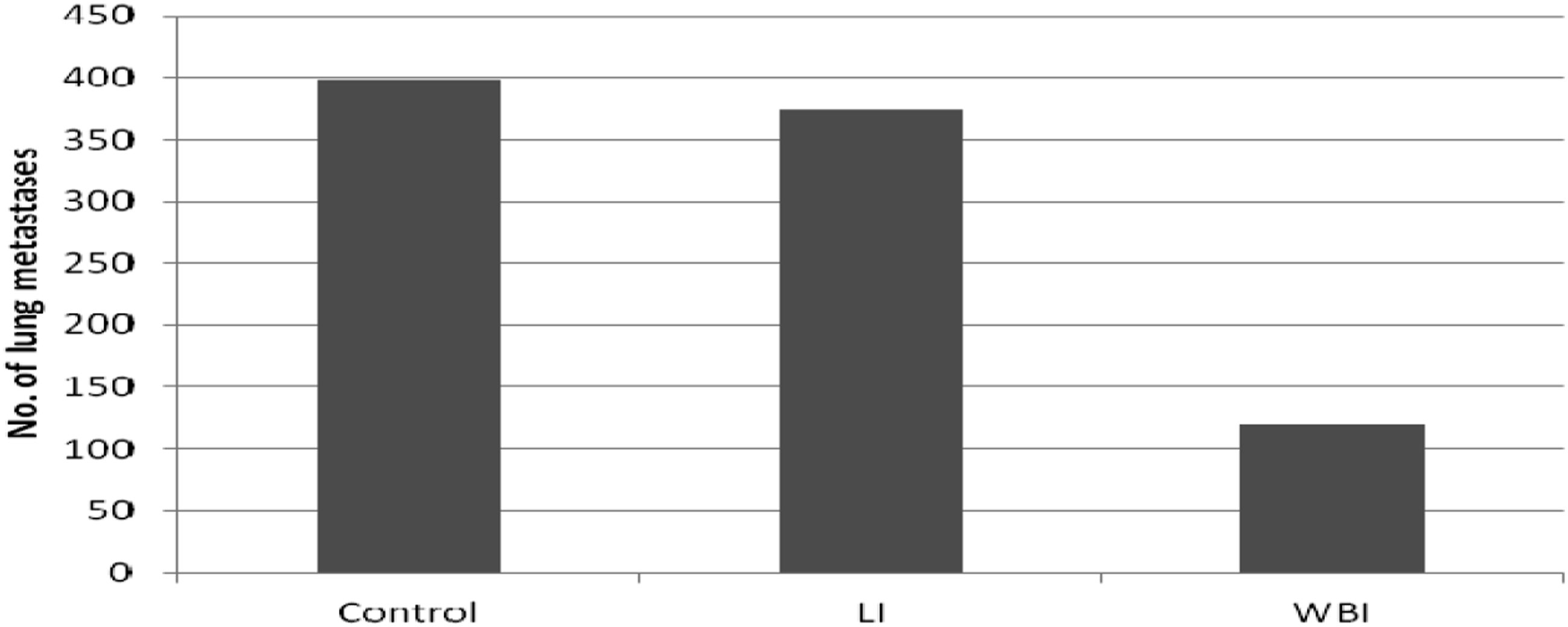

Similar results were also obtained in tumour-bearing rats. In a study conducted at the Hokkaido University School of Medicine in Sapporo, Japan, WKHA rats were subcutaneously implanted with allogeneic KDH-8 hepatoma cells (which metastasize to the lungs) and 14 days later irradiated with X-rays at 0.2 Gy; the radiation was delivered either to the whole body or only to the site of tumour implantation. Notably, only the former mode of exposure appeared to significantly inhibit the development of pulmonary metastases, detected 50 days after implantation of the tumour cells (Figure 3). That result suggested that systemic anti-neoplastic reactions had been triggered. In fact, whole-body, but not local irradiation, was associated with enhanced accumulation of CD8+ (cytotoxic) lymphocytes in the spleen and the tumour, together with elevated expression of genes coding for anti-cancer cytokines, IFN-γ and TNF-α. 56 Indeed, as indicated by the results of several studies, including those performed in Warsaw, Poland, stimulation of anti-neoplastic immunity is among the most important factors in eliciting tumour-inhibitory effects of low-level exposures to IR (reviewed in Refs. 57–59).

Figure 3. Development of lung metastases 50 days after subcutaneous implantation of KDH-8 hepatoma cells in WKHA rats which, 14 days after the implantation, were exposed to either whole-body (WBI) or local (LI) irradiation with X-rays at 0.2 Gy. While the ±SD bars are not shown, the differences between the WBI column and the Control and LI columns are statistically significant (p < 0.01) (modified, after Ref. 56).

Low-level radiation can also ‘prime’ the organism for receiving higher, ‘challenging’ doses of radiation. Such ‘adaptive response’ was discovered in the early 1980s and was repeatedly confirmed in the following years (reviewed in Ref. 60). Adaptive response with respect to radio-carcinogenicity has also been demonstrated in a number of studies: the carcinogenic effect of a high dose of X- or γ-radiation was inhibited by an earlier exposure to low-level X-rays. In one such study, 61 if mice susceptible to induction of thymic lymphoma by four 1.8 Gy irradiations with X-rays (Figure 4, column B) were pre-exposed each time to a low X-ray dose of 75 mGy, the lymphoma incidence was significantly suppressed (i.e. decreased from about 90% to about 63%) (Figure 4, column C). These authors also demonstrated that chronic low-level irradiation could suppress the carcinogenic effect of the high-dose exposure: if mice were continuously irradiated with γ-rays at a low dose rate of 1.2 mGy/h for 450 days, commencing 35 days before the challenging irradiation, the rate of the developing lymphomas dropped further, to 43% (Figure 4, column D). Finally, if mice were subjected solely to chronic, low-level γ-ray exposure for up to 450 days, no thymic lymphoma could be detected in any of these animals (Figure 4, column E). Notably, the health condition of the continuously irradiated mice was better (e.g. no loss of hair with age was observed) and their body mass was greater than in non-irradiated mice (controls). As suggested by Ina and co-workers, 61 these results could be associated with stimulation of immune activity by continuous low-level irradiation, as indicated by the enhanced numbers of CD4+ T cells, CD40+ B cells and antibody-producing cells in the murine spleen.

Figure 4. Incidence of thymic lymphoma in thymic lymphoma-susceptible mice exposed to single and/or continuous irradiations with X- and/or γ-rays. A – control mice not exposed to radiation. B – mice exposed four times at week-long intervals to X-rays at 1.8 Gy/dose. C – mice exposed as in group B and additionally irradiated at 75 mGy of X-rays given 6 h before each 1.8-Gy irradiation. D – mice exposed as in group B and additionally continuously γ-irradiated for 450 days at 1.2 mGy/h dose rate. E – mice exposed only to the continuous irradiation as in group D. The figure combines data shown in two panels of the figure from the original publication, where SD bars or error bars had not been plotted (modified, after Ref. 61).

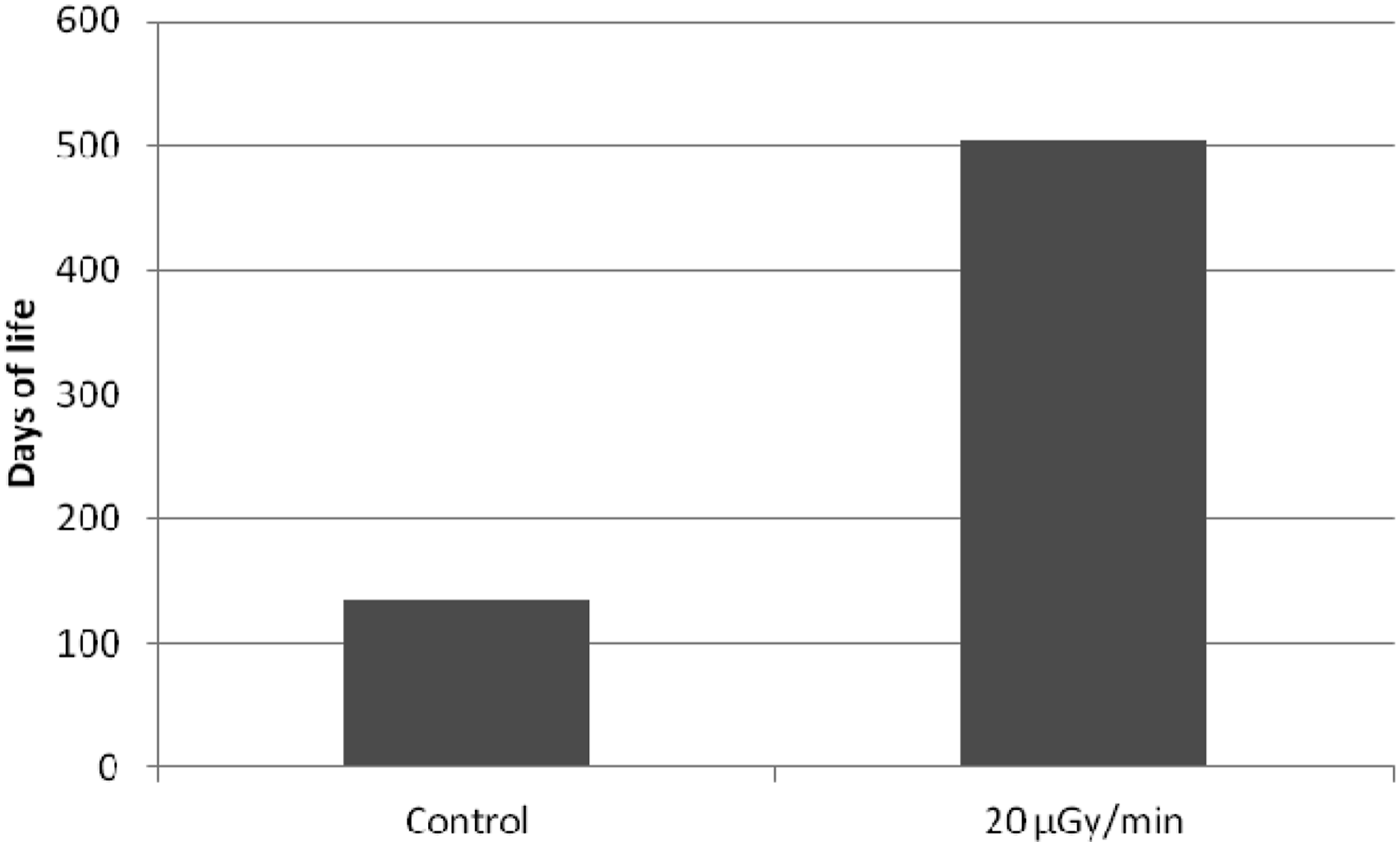

Beneficial effects of chronic exposures at low dose rate were also demonstrated by Ina and Sakai in MRL-lpr/lpr mice which carry a deletion in the apoptosis-regulating Fas gene – markedly shortening their life span due to development of multiple autoimmune disorders. In this experiment, mice irradiated throughout their lives with γ-rays at a dose rate of 20 μGy/min lived almost four times longer (504 vs 134 days) compared to their unexposed counterparts (Figure 5). The increased lifetime of the animals was due to amelioration of the total-body lymphadenopathy, splenomegaly and other autoimmune pathologies, in line with activation of health-promoting immunological functions, such as significant increase in CD4+CD8+ T cells in the thymus and CD8+ T cells in the spleen, and significant decrease in CD3+CD45R/B220+ cells and CD45R/B220+CD40+ cells in the spleen. 62

Figure 5. Prolongation of longevity of autoimmune-prone MRL-lpr/lpr mice irradiated throughout their lives with γ-rays at 20 μGy/min dose rate. While the ±SD bars are not indicated, the difference is statistically significant (p < 0.01) (data after Ref. 62).

Qualitative difference between biological effects of high-level and low-level exposures to ionizing radiation

The quoted examples of epidemiological, clinical, and experimental studies – a mere fraction of those published but not discussed here – clearly attest to the existence of qualitative differences between the biological effects of absorption of high vs. low doses of ionizing radiation by an organism. By concentrating our discussion on biological effects of exposures of biological systems to X- and γ-rays, we consciously avoid issues related to the effects of charged particles heavier than electrons (i.e. high-LET radiation) where additional factors, such as Relative Biological Effectiveness (RBE), as described by microdosimetry or track structure models, play a role and need to be considered. Obviously, the dose response of different cells or organs will depend on their radiosensitivity, but the biological response of an organism at its different systemic levels may also be significantly affected by the dose rate of the delivered IR. As the time scales for generation of radiation-produced species, for biological repair of radiation-induced damage and inter-cellular communication, or for cancer development, are quite different, varying dose rates and exposure modes of IR may significantly affect the overall dose-response of the organism as a whole.

Qualitative differences between the effects of high and low doses of low-LET ionizing radiation on biological systems.

Radiation hormesis (in Ancient Greek hormáein means ‘to set in motion, to urge on’) may be graphically presented as a U-shaped dose-response relationship, that is, ‘a low-dose stimulation and a high-dose inhibition’ effect. 63 The phenomenon of hormesis was first observed by Rudolf Virchow in 1852 and described in 1888 by a German pharmacologist Hugo Schulz who, however, erroneously interpreted it as homeopathy (reviewed by Ref. 64). In spite of its ubiquitous nature, in scientific literature, hormesis was first termed as such in 1943 65 and has since been demonstrated in a multitude of instances (reviewed in, for example, Refs. 5,66,67). Yet even today, hormesis is often confused with the pseudoscientific homeopathy, perhaps partly explaining why radiation hormesis tends to be questioned, downplayed or ignored. Arguably, indoctrination of generations of scientists and regulators with the LNT paradigm over the last 70 years has created a fertile ground for such reservations.14,66,68,69

Persistence of the LNT Paradigm and its Consequences

In fulfilling the three fundamental principles of radiological protection (justification, optimization and the application of dose limits), the ICRP consistently adheres to the LNT dose-response model. As explained in their Publication 103, 70 this model ‘is based on the assumption that, in the low dose range, radiation doses greater than zero will increase the risk of excess cancer and/or heritable disease in a simple proportionate manner’. Here, ‘simple’ must mean no effect at no (zero) dose, and ‘proportionate manner’ must mean linearity of response (or risk of excess cancer) above zero dose (i.e. no threshold), which further implies that this response (or risk) will add linearly and cumulatively with consecutive exposures. Consequently, any capability of repair of radiation damage by an irradiated human organism at low doses is excluded. Assumption of linearity in the ICRP system of radiation protection extends to cumulative additivity of absorbed dose, equivalent dose, or effective dose with their corresponding ICRP-established multiplicative factors, and to cumulative calculations of collective effective dose, dose commitment, or collective equivalent dose, all at low doses. In this context, LNT also underpins the distinction between stochastic and deterministic effects 5 of radiation in humans, declaring effects of low doses to be stochastic, the domain where LNT applies. Various categories of dose limits or dose constraints have been incorporated within the ICRP radiation protection system; these are applicable only to the stochastic, that is, LNT-governed range.
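The cumulative additivity implied by this assumption can be illustrated with a minimal Python sketch. The slope `RISK_PER_SV`, the dose values and the function name are hypothetical and chosen only for illustration; they are not ICRP coefficients.

```python
import math

# Hypothetical slope of the LNT line (excess risk per sievert).
# Illustrative value only, not an ICRP coefficient.
RISK_PER_SV = 0.05


def lnt_excess_risk(dose_sv: float) -> float:
    """Excess risk under LNT: proportional to dose, zero only at zero dose."""
    return RISK_PER_SV * dose_sv


# Ten consecutive exposures of 1 mSv each versus one exposure of 10 mSv.
fractions_sv = [0.001] * 10
risk_summed = sum(lnt_excess_risk(d) for d in fractions_sv)
risk_single = lnt_excess_risk(sum(fractions_sv))

# Under LNT the two values agree (up to floating-point rounding):
# the model leaves no room for repair between exposures.
print(risk_summed, risk_single, math.isclose(risk_summed, risk_single))
```

In this sketch, how the dose is spread over time makes no difference to the predicted risk, which is exactly the property that excludes any role for biological repair.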

Over the years, the LNT model has been consistently endorsed by several American and international advisory bodies and regulatory agencies, including the U.S. NAS BEAR Committee (in 1956), the U.S. National Committee on Radiation Protection and Measurements (in 1958), the International Commission on Radiological Protection (in 1959) and the U.S. Environmental Protection Agency (in 1976). This trend was reflected in Publications 60 71 and 103 70 of the ICRP, which upheld the validity of the LNT model for risk assessment of radiogenic cancer, and the model was reaffirmed in 2018 by NCRP Commentary 27. 72 Thus, LNT has been supported as the fundamental law of radiation protection throughout the history of ICRP, despite some much earlier discussions on the existence of dose thresholds in the response (occurrence of cancer) in humans exposed to ionizing radiation, as reviewed, for example, in the first UNSCEAR report. 73 To address the inconsistency of the LNT-based system with even some earlier data on low-dose effects, ICRP has resorted to the ‘precautionary principle’: when an activity raises threats of harm to human health or the environment, precautionary measures should be taken even if some cause-and-effect relationships are not fully established scientifically.74,75 In line with this principle, ICRP also adopted the ALARA 6 principle with its ramifications, for example, in diagnostic radiology, now termed ‘Image Softly’. The ICRP-recommended system of radiation protection has by now been legally implemented in all UN member countries. At face value, it would appear that this system is fairly simple and well-defined in legal terms: exceeding a particular dose limit, or evidence that any of the recommended procedures (such as ALARA) have not been undertaken with respect to exposure of humans (or of the environment) to ionizing radiation, will entail legal consequences, thus maintaining the highest possible safety measures, even if grossly over-protective.
However, within this system there are several conceptual issues, such as the incompatibility between the physically measurable unit of absorbed dose (in Gy) and the concept of equivalent dose (in Sv). Absorbed dose, an intensive quantity, is the ratio of two extensive quantities: energy (in joules) of radiation and mass (in kg) of absorber where this energy is deposited. Collective effective dose (in man-Sv), the sum of equivalent doses over a population of individuals is, by its definition, an extensive quantity (if not represented by its per caput value, a population average). The numerical individual value of equivalent dose results from a linear composition of absorbed dose value multiplied by the ICRP-established multiplicative factors, and together with an ICRP-established risk factor, represents some projection of risk for an individual human organism. The sievert has no reference unit of risk, and the numerical equivalence of 1 Sv = 1 Gy (or, better, 1 mSv = 1 mGy) for gamma rays over the ‘stochastic region’ adds to this confusion. A high value of collective dose may be accumulated either by adding minute values of individual equivalent doses over a large number of individuals, or by high values of such doses added over a small population. If these two values of collective dose are multiplied by the ICRP-established risk factor value, ‘projected deaths’ result, both equal, and both equally meaningless. This seriously confuses any judgement as to the effects of radiation (the respective per caput values being perhaps a bit more helpful, yet rarely quoted), contributing to the general fear of radiation – radiophobia. 
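
The collective-dose arithmetic described above can be made concrete with a short Python sketch. The risk factor, the population sizes and the individual doses are hypothetical values picked for illustration, and the function names are assumptions of this sketch.

```python
import math

# Hypothetical risk factor (projected deaths per man-Sv); illustrative only.
RISK_PER_MAN_SV = 0.05


def collective_dose(individual_dose_sv: float, n_people: int) -> float:
    """Collective dose (man-Sv) for a population receiving equal individual doses."""
    return individual_dose_sv * n_people


def projected_deaths(collective_dose_man_sv: float) -> float:
    """'Projected deaths' obtained by multiplying collective dose by the risk factor."""
    return RISK_PER_MAN_SV * collective_dose_man_sv


# Scenario 1: 0.1 mSv to each of one million people.
many_small = collective_dose(0.0001, 1_000_000)
# Scenario 2: 1 Sv to each of one hundred people.
few_large = collective_dose(1.0, 100)

# Both scenarios yield about 100 man-Sv and hence the same 'projected deaths',
# although the per caput doses differ by four orders of magnitude.
print(many_small, few_large)
print(projected_deaths(many_small), projected_deaths(few_large))
print(many_small / 1_000_000, few_large / 100)  # per caput doses in Sv
```

Only the last line of output, the per caput dose, distinguishes the two scenarios; the collective figure and the number derived from it do not.
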
Another source of confusion is the social perception of risk – for instance, after the Fukushima nuclear power accident the neighbouring Japanese population was seriously concerned and evacuated from areas where their predicted yearly cumulative doses would be no higher than doses typically received by patients undergoing CT or nuclear medicine diagnostic procedures. The benefit of exposure from medical procedures appears to offset the fear of radiation due to other types of exposure, especially accidental. This social perception of risk is further aggravated by social media which profit from spreading fear rather than from mitigating it.

Currently, there is no scientific support for LNT as a predictive and quantitative model. While even the lowest values of absorbed dose of ionizing radiation may induce alterations in the DNA structure, development of potentially carcinogenic mutations does not follow directly, nor does the number of such mutations increase linearly or cumulatively with increasing dose. Any change in the genome will directly trigger either its repair or, if such repair is not possible or not cost-effective, apoptotic cell death. Thus, large numbers of DNA alterations, constantly produced in all replicating cells, do not transform into life-threatening mutations. Importantly, the relatively small number of alterations in the DNA induced by absorption of a low dose of ionizing radiation also triggers repair which, in addition to rectifying the ‘radiation damage’, handles the much more frequent physiological (metabolic) alterations. The net result is a decrease in the rate of spontaneous cancers in a population exposed to low-level ionizing radiation.

In view of its evident lack of biological plausibility and the accumulated evidence to the contrary, the motives behind continuing to base radiation protection on the LNT hypothesis are not easily discernible, but they may include ethical improbity, dogmatic policy assumptions, institutional inertia and comfort, the love of money and power, conventional respect, and even corruption and fraud (reviewed in Refs. 6,9,11,76,77). Apart from the scientists, policy makers and advisory bodies, the blame is certainly shared by the radiation protection community, which has recently been bluntly accused of ‘choosing not to speak up against the excessiveness that has come to define the public conversation surrounding radiation’, 78 a decision that assures continued radiophobia.

Consequences.

The LNT model and the LNT-based radiation protection system, apart from generating radiophobia, lead to grave medical, economic and societal consequences. These may include (a) highly restrictive application of ionizing radiation in diagnostic and therapeutic medicine, and in particular, elimination of clinical trials of low-level, whole-body irradiation in treating cancer and other systemic diseases, despite this modality being clearly superior to chemotherapy; (b) grossly overestimated and unnecessary costs of implementing the ALARA (or ‘Image Softly’) principles or the excessive and unreasonably expensive safety regulations required in the design, construction, operation and decommissioning of nuclear power facilities, making nuclear power uncompetitive against other sources of electricity generation, despite its ‘greenest’ environmental footprint; (c) induction, rather than prevention, of casualties among the evacuees from regions affected by nuclear plant (or other) radiation accidents, due to unnecessary relocations.

In view of the foregoing, one may wonder how abandoning the LNT paradigm would affect the present system of radiation protection. Indeed ‘…when the concept of a universal initial slope is not supported by experiment, this house of cards collapses. And carries with it that scientific aberration called the sievert’. 79 As an initial remedy, LNT could be replaced by a linear-with-threshold dependence based on a suitably established dose threshold value, thus maintaining linearity and additivity above such a threshold, and still retaining some features of the present system, even if false in principle. Such a decision would immediately relieve us of the ALARA burden and dramatically reduce the costs of applying ionizing radiation in medicine, industry, and nuclear power generation. In the longer term, application of a more realistic non-linear (power-law or U-type hormetic) dose-effect relationship, also incorporating a suitable dose-rate dependence, would eliminate simple cumulative additivity of the predicted outcome and also require selecting suitable time schedules for collecting and reporting results, both attuned to rates of biological repair of radiation damage. Such a radiation protection system would necessarily apply to individual rather than to collective risk and be reported for a population via a distribution of individual values rather than by an average or cumulative population value. 7 Within such a distribution, cases of individual radiosensitivity (observed, for example, in radiotherapy patients) could be accounted for. In all, a much more elaborate non-linear evaluation of radiation hazard needs to be devised, also accounting for the beneficial effects of low-level radiation exposures, perhaps aided by modern machine learning technology.
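To make the three dose-effect shapes mentioned above easier to compare, the following Python sketch evaluates toy versions of each. All parameter values (slope, threshold, zero-equivalent point, curvature) and the quadratic form chosen for the hormetic curve are hypothetical assumptions made for illustration; none is taken from the cited literature, and no dose-rate dependence is modelled.

```python
def lnt(dose_gy: float, slope: float = 0.05) -> float:
    """Linear no-threshold: excess risk proportional to dose from zero upward."""
    return slope * dose_gy


def linear_threshold(dose_gy: float, threshold_gy: float = 0.1,
                     slope: float = 0.05) -> float:
    """Linear-with-threshold: no excess risk below the (assumed) threshold."""
    return max(0.0, slope * (dose_gy - threshold_gy))


def hormetic(dose_gy: float, zero_equivalent_gy: float = 0.1,
             curvature: float = 0.5) -> float:
    """Toy U-type curve: negative excess risk (benefit) below the
    zero-equivalent point, positive excess risk above it."""
    return curvature * dose_gy * (dose_gy - zero_equivalent_gy)


# Compare the three toy models at a few doses (in Gy).
for dose in (0.0, 0.05, 0.1, 0.2, 0.5):
    print(f"{dose:5.2f} Gy  LNT={lnt(dose):+.5f}  "
          f"threshold={linear_threshold(dose):+.5f}  "
          f"hormetic={hormetic(dose):+.5f}")
```

At 0.05 Gy, the toy LNT model predicts a positive excess risk, the threshold model predicts none, and the hormetic model predicts a negative one; the curves differ only in their assumptions, which is why the choice of model has to be settled by data rather than by convention.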

Conclusion

The ever-accumulating results of solid epidemiological, experimental, and clinical studies make it clear that the answer to our title question is an emphatic NO: exposures to low-level ionizing radiation will not harm us and instead will in many instances improve our health and general condition. Indeed, the present system of radiation protection with its LNT foundation, as applied to the low dose and low dose-rate region of exposures, is more harmful than beneficial to society. Hence, as succinctly expressed by Golden, Bus and Calabrese, ‘the time is ripe, if not long overdue, to place cancer risk assessment on sounder, more biologically based and fully transparent foundations’. 7 Professor Ludwik Dobrzyński, like many other scientists, postulated and substantiated through research the need to abandon the LNT paradigm and to base the radiation regulatory policy on the present knowledge of low-dose radiation effects.33,42,80-82 In this context, one is tempted to quote Charles Sanders, who in his recently published book entitled Radiobiology and Radiation Hormesis, 67 writes: ‘The number of lives in the world that can be saved and prolonged by low dose ionizing radiation in one year is considerably greater than all the American combat losses in our entire history’. Let us then allay fear and apply low levels of ionizing radiation more broadly, to save and prolong the lives of those who should never die in combat.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors acknowledge the financial support for publication of this article obtained from the National Centre of Nuclear Research (NCBJ), Świerk, Poland.