Abstract

Background:

Pediatric scaphoid fractures can be challenging to diagnose on plain radiographs. Rates of missed scaphoid fractures can be as high as 30% to 37% on initial imaging, with overall sensitivity ranging from 21% to 97%. Few studies, however, have examined the reliability of radiographs in the diagnosis of scaphoid fractures, and none are specific to the pediatric population. Reliability, both between different specialists and for individual raters, may elucidate some of the diagnostic challenges.

Methods:

We conducted a 2-iteration survey of pediatric orthopedic surgeons, plastic surgeons, radiologists, and emergency physicians at a tertiary children’s hospital. Participants were asked to assess 10 series of pediatric wrist radiographs for evidence of scaphoid fracture. Inter-rater and intrarater reliability were calculated using the intraclass correlation coefficient, ICC(2,1).

Results:

Forty-two respondents were included in the first iteration analysis. Inter-rater reliability between surgeons (0.66; 95% confidence interval, 0.43-0.87), radiologists (0.76; 0.55-0.92), and emergency physicians (0.65; 0.46-0.86) was “good” to “excellent.” Twenty-six respondents participated in the second iteration for intrarater reliability (0.73; 0.67-0.78). Sensitivity (0.75; 0.69-0.81) and specificity (0.78; 0.71-0.83) of wrist radiographs for diagnosing scaphoid fractures were consistent with results in other studies.

Conclusions:

Both inter-rater and intrarater reliability for diagnosing pediatric scaphoid fractures on radiographs were good to excellent. No significant difference was found between specialists. Plain radiographs, while useful for obvious scaphoid fractures, cannot reliably rule out subtle fractures. Our study demonstrates that poor sensitivity stems from the test itself, and not rater variability.

Introduction

Pediatric scaphoid fractures often result from a fall on an outstretched hand (FOOSH) and present with pain over the scaphoid tubercle or the anatomical snuffbox.1,2 Although they represent only 0.34% of fractures in children, they carry important clinical significance: Missed fractures may lead to pain, avascular necrosis, nonunion, and degenerative arthritis.3-5

Consequently, prompt diagnosis of these injuries is essential. At most institutions, wrist radiographs remain the primary choice of imaging for suspected fractures due to ease of access and low cost. However, studies on scaphoid fractures and wrist radiographs in adults have shown widely variable sensitivity, ranging from 21% to 97%, which is consistently lower than that of other imaging modalities. 2,6-10 In children, scaphoid fractures may be occult on 30% to 37% of initial radiographs.2,6,9,11 We previously demonstrated that, even after a second set of plain radiographs 10 to 14 days after injury, scaphoid fractures remained occult in 5.5% of cases. 12 Incomplete ossification of the pediatric wrist and the low prevalence of scaphoid fractures in children have been proposed as further challenges in radiographic diagnosis.1,2

To determine the optimal management for suspected scaphoid injuries, it is essential to understand the reliability of wrist radiographs in children. Reliability differs from accuracy in that accuracy is the measure of a diagnostic test’s ability to give the correct (true) result, whereas reliability serves to demonstrate whether the results from a diagnostic test can be obtained consistently. Accordingly, reliability can measure the consistency either between separate observers (inter-rater) or between successive recordings for an individual observer (intrarater). 13 Poor reliability could indicate that differences between raters may contribute to the occult nature of scaphoid fractures on radiographs. However, strong reliability would appear to confirm that poor sensitivity is derived from the test itself, when compared with other imaging modalities.1,2,10,14 Reliability for scaphoid fractures and wrist radiographs has previously been reported solely in the adult population. 10,15-19 The goal of this study was to compare evaluations between surgeons, radiologists, and emergency physicians using inter-rater and intrarater reliability to determine the validity of wrist radiographs in the diagnosis of pediatric scaphoid fractures. Secondary objectives included comparing sensitivity, specificity, and accuracy.

Methods

We conducted a 2-iteration survey of physicians at a tertiary children’s hospital. Participants were asked to assess 10 series of pediatric wrist radiographs for evidence of scaphoid fracture.

Radiograph Selection

Anonymized wrist radiographs for children with a suspected scaphoid fracture were identified through a previous study at our institution. 12 Radiographs of patients meeting the following criteria were considered for inclusion: (1) patients younger than 15 years at the time of injury; (2) radiographs taken 7 to 15 days following the initial injury; (3) clinical histories consistent with FOOSH and clinical suspicion of scaphoid fracture; and (4) all radiographs consisting of 4 views (posteroanterior, lateral, oblique, and pronated scaphoid views).

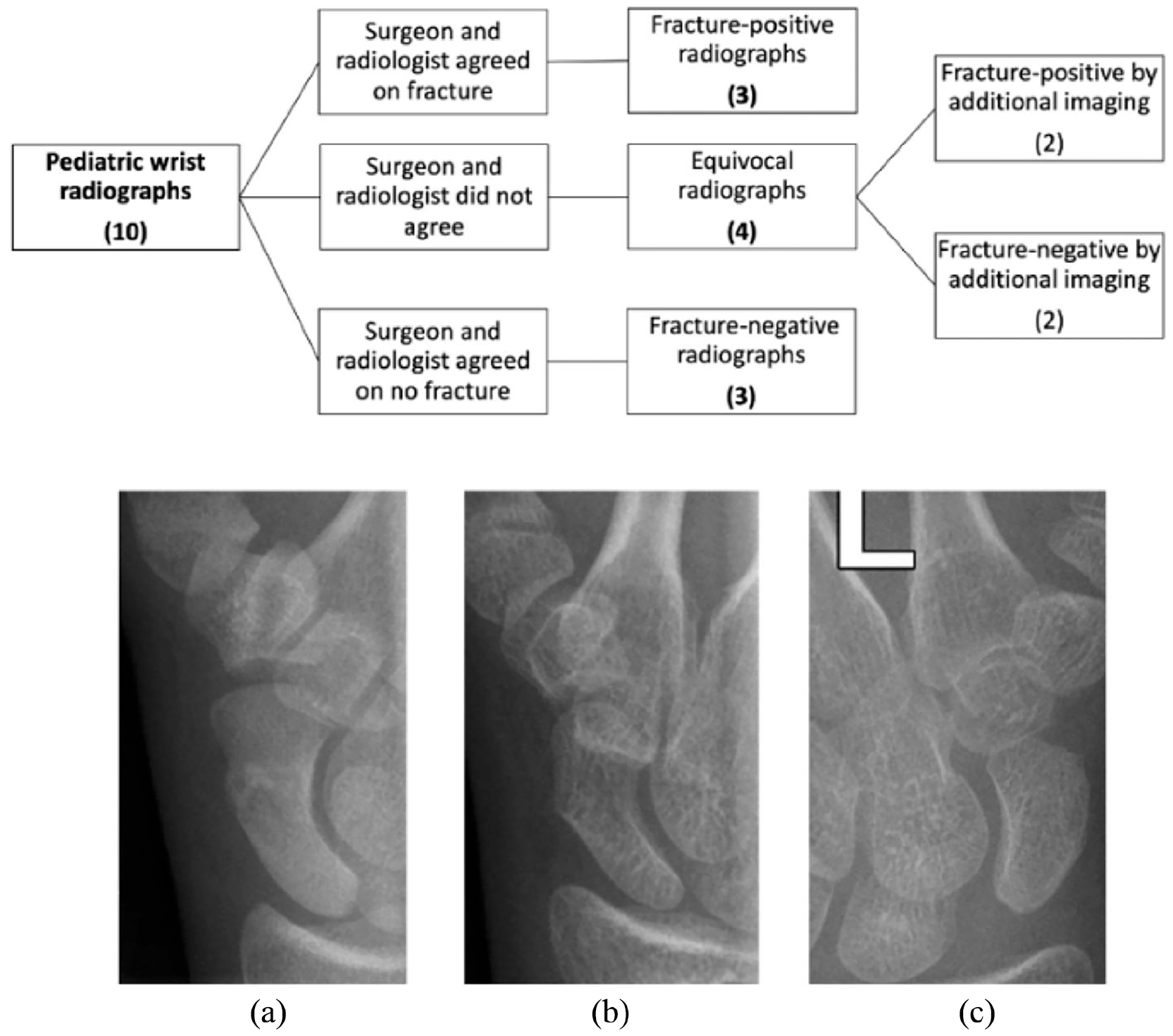

Radiographs meeting the above criteria were then classified as fracture-positive or fracture-negative according to the patient’s final diagnosis, based on their clinical course and all available imaging (including 6-week radiographs, computed tomography [CT], and/or magnetic resonance imaging [MRI]). In our analysis, this became our fracture-truth (true positives and negatives) against which raters’ responses were compared. In addition, we included radiographs where there was disagreement between the treating surgeon and reading radiologist; these radiographs were classified as equivocal. Ultimately, these equivocal radiographs were also classified as fracture-positive or fracture-negative depending on additional imaging during the patient’s clinical course (radiographs at 6 weeks, CT, or MRI). Ten cases were selected for inclusion in the survey. Three were fracture-positive, 3 were fracture-negative, and 4 were equivocal (of which 2 were ultimately fracture-positive and 2 were fracture-negative) (Figure 1). A sample size calculation, assuming an intraclass correlation coefficient (ICC) of 0.75 with 10 cases and 40 participants, indicated an achievable confidence interval (CI) full width of 0.35.

Figure 1. Radiograph selection for survey inclusion by fracture-truth. Radiograph examples: (a) fracture-positive radiograph, (b) fracture-negative radiograph, and (c) equivocal radiograph.

Survey

An electronic survey was created through Research Electronic Data Capture (REDCap) consisting of the wrist radiographs from the 10 cases. Cases were randomized prior to survey distribution. We piloted the survey among members of our research team to ensure an optimal user interface when viewing radiographs. Following refinement, the survey was distributed to all emergency physicians, radiologists, and plastic and orthopedic surgeons at our institution. Participants rated their perceived likelihood of a fracture, given the available radiographs, on a 6-point Likert scale (extremely unlikely [“1”] to almost certain [“7”], with no mid-point [“4”] [Figure 2]). No mid-point or decimal values were allowed, forcing participants to commit to the presence or absence of a fracture. The survey was distributed a second time, 4 weeks later, with the case order again randomized, to allow us to calculate intrarater reliability. All other statistical analyses were performed on the first iteration data. Information regarding demographics and standard clinical practice of participants was also collected.

Figure 2. Example of survey arrangement with radiograph displayed and Likert scale to determine fracture likelihood.

Analysis

Results were collected and analyzed using R software (R Core Team [2020], version 3.6.3). The ICC was used to evaluate both inter-rater and intrarater reliability. The ICC(2,1) was chosen for both measures to represent a 2-way random, single measure, agreement model. Adult studies on wrist radiograph reliability for scaphoid fractures have been conducted with kappa statistics (Cohen’s κ, Siegel and Castellan’s κ, or an unspecified κ). 10,15-19 However, as our study involved a Likert scale, we did not use the nominal variable–based kappa but chose instead the ICC. The ICC was interpreted according to the Cicchetti and Sparrow guidelines, where clinical significance is interpreted as “poor” (ICC < 0.40), “fair” (0.40-0.59), “good” (0.60-0.74), or “excellent” (≥0.75). 20 Accuracy was calculated and compared across fracture-truth categories. Specificity and sensitivity were calculated and compared between specialists based on fracture-truth.
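
For readers who wish to reproduce this type of analysis, the following is a minimal sketch of a 2-way random, single measure, absolute agreement ICC computed from a radiograph-by-rater table of Likert scores, together with the Cicchetti and Sparrow categories listed above. It is written in Python for illustration only (the study analysis itself was performed in R), and the function names `icc_2_1` and `cicchetti_label` and the example ratings are hypothetical, not study data.

```python
from statistics import mean


def icc_2_1(ratings):
    """Two-way random effects, single rater, absolute agreement ICC.

    `ratings` is a list of rows, one per radiograph, each row holding
    one score per rater, with raters in the same order in every row.
    """
    n = len(ratings)      # number of radiographs (subjects)
    k = len(ratings[0])   # number of raters
    grand = mean(x for row in ratings for x in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]

    # Mean squares from the two-way ANOVA decomposition.
    ms_rows = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_cols = n * sum((m - grand) ** 2 for m in col_means) / (k - 1)
    ss_err = sum(
        (ratings[i][j] - row_means[i] - col_means[j] + grand) ** 2
        for i in range(n)
        for j in range(k)
    )
    ms_err = ss_err / ((n - 1) * (k - 1))

    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


def cicchetti_label(icc):
    """Map an ICC value to the categories quoted in the text."""
    if icc < 0.40:
        return "poor"
    if icc < 0.60:
        return "fair"
    if icc < 0.75:
        return "good"
    return "excellent"


# Hypothetical scores: 5 radiographs (rows) rated by 3 raters (columns)
# on the 1-7 scale with the mid-point 4 unavailable.
example = [
    [7, 6, 7],
    [2, 1, 3],
    [5, 6, 5],
    [1, 2, 1],
    [3, 5, 2],
]
value = icc_2_1(example)
print(round(value, 2), cicchetti_label(value))
```

The same function can serve for intrarater reliability by passing, for each rater-radiograph pair, a row containing the first and second iteration scores, although whether the study structured its intrarater calculation this way is an assumption.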

Results

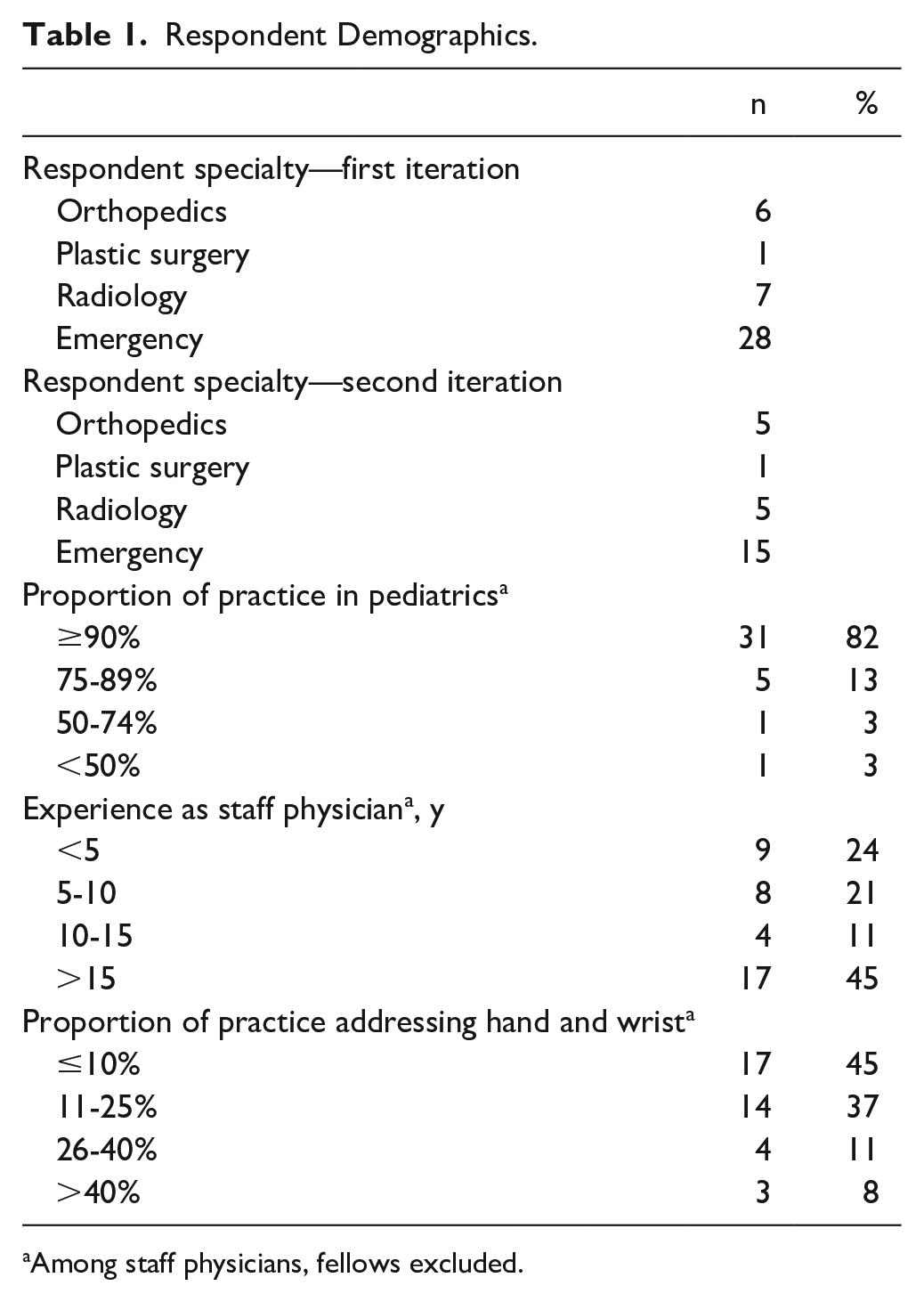

Forty-two board-certified physicians participated in the first iteration (43% response rate): 6 orthopedic surgeons, 1 plastic surgeon, 7 radiologists, and 28 emergency physicians. Twenty-six participated again in the second iteration: 5 orthopedic surgeons, 1 plastic surgeon, 5 radiologists, and 15 emergency physicians. Additional participant demographics are noted in Table 1.

Table 1. Respondent Demographics.

Among staff physicians, fellows excluded.

Inter-Rater Reliability (ICCinter)

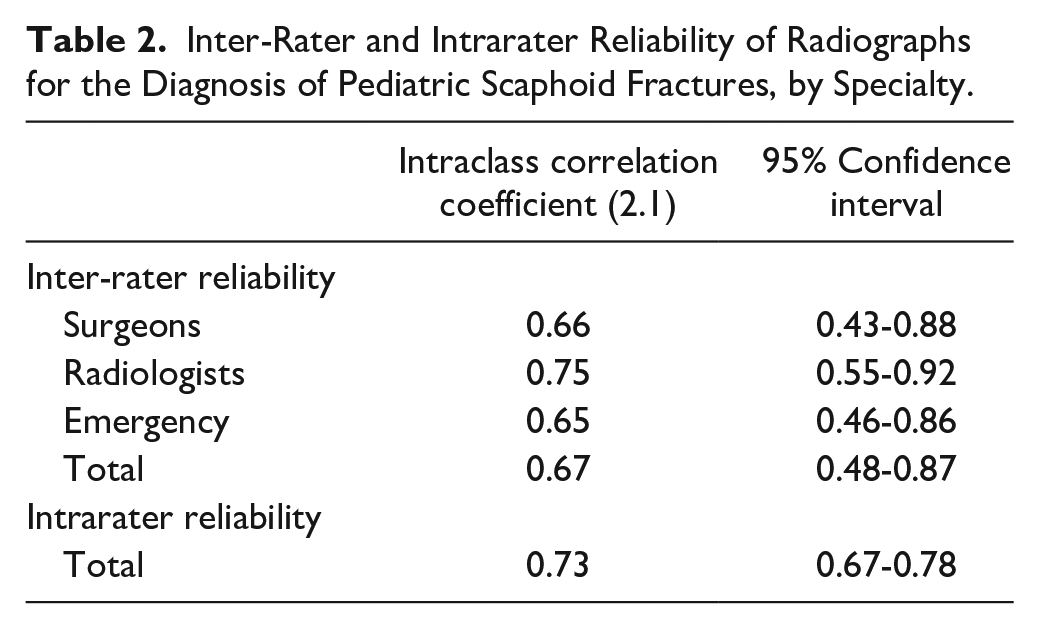

Inter-rater reliability was evaluated with the ICC(2,1). Likelihood ratings for the 10 radiographs were compared between all raters within a specialty to evaluate agreement for each group. Inter-rater ICC was 0.66 (95% CI, 0.43-0.88) for surgeons, 0.76 (0.55-0.92) for radiologists, and 0.65 (0.46-0.86) for emergency physicians. Pooled ICCinter for all respondents was 0.67 (0.48-0.87) (Table 2). Individual responses were plotted to demonstrate inter-rater reliability by grouping responses according to the fracture-truth (fracture-positive, equivocal-positive, equivocal-negative, fracture-negative) (Figure 3a-3c).

Table 2. Inter-Rater and Intrarater Reliability of Radiographs for the Diagnosis of Pediatric Scaphoid Fractures, by Specialty.

Figure 3. Survey responses (extremely unlikely to almost certain) distributed based on radiograph fracture-truth.

Intrarater Reliability (ICCintra)

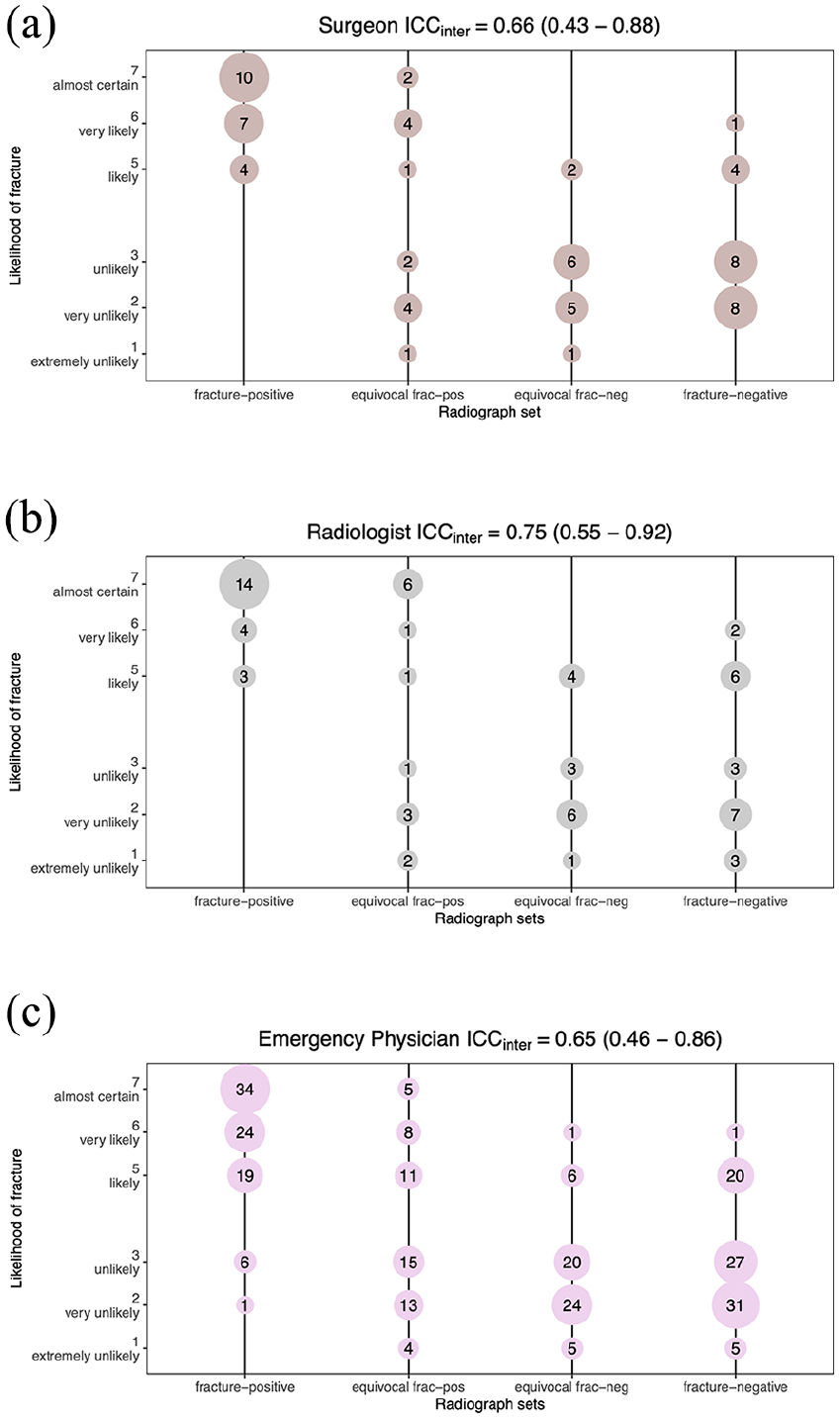

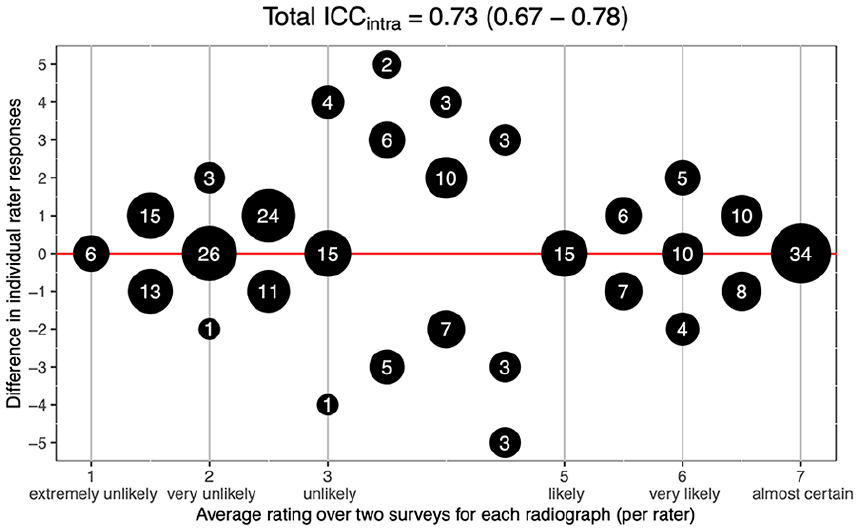

Intrarater reliability was calculated with the ICC(2,1). We compared individuals’ first iteration rating with their second for the same radiographs. Pooled ICCintra for all respondents was 0.73 (95% CI, 0.67-0.78) (Table 2). A Bland-Altman plot of intrarater responses is presented comparing the difference between the 2 ratings for the same radiograph (Figure 4).

Figure 4. Bland-Altman plot, comparing raters’ responses when viewing the same radiograph over 2 surveys.
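
The quantities usually displayed in a Bland-Altman plot can be sketched as follows: for each pair of ratings, the mean of the 2 ratings is plotted against their difference, with horizontal lines at the mean difference (bias) and at the limits of agreement. This is an illustrative Python sketch, not the study code; the 1.96 multiplier for the limits of agreement is the conventional choice and is an assumption here, and the function name `bland_altman` and the example ratings are hypothetical.

```python
from statistics import mean, stdev


def bland_altman(first, second, z=1.96):
    """Return plot coordinates, bias, and limits of agreement.

    `first` and `second` are paired ratings of the same radiographs by
    the same rater in the first and second survey iterations.
    """
    diffs = [a - b for a, b in zip(first, second)]
    means = [(a + b) / 2 for a, b in zip(first, second)]
    bias = mean(diffs)
    spread = z * stdev(diffs)  # sample standard deviation of differences
    return {
        "points": list(zip(means, diffs)),  # (x, y) pairs for plotting
        "bias": bias,
        "lower": bias - spread,
        "upper": bias + spread,
    }


# Hypothetical paired ratings for one rater across 6 radiographs.
iteration_1 = [7, 2, 5, 1, 3, 6]
iteration_2 = [6, 2, 5, 2, 5, 6]
summary = bland_altman(iteration_1, iteration_2)
print(summary["bias"], summary["lower"], summary["upper"])
```

A bias near 0 with narrow limits of agreement would indicate that raters tended to give the same score on both occasions.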

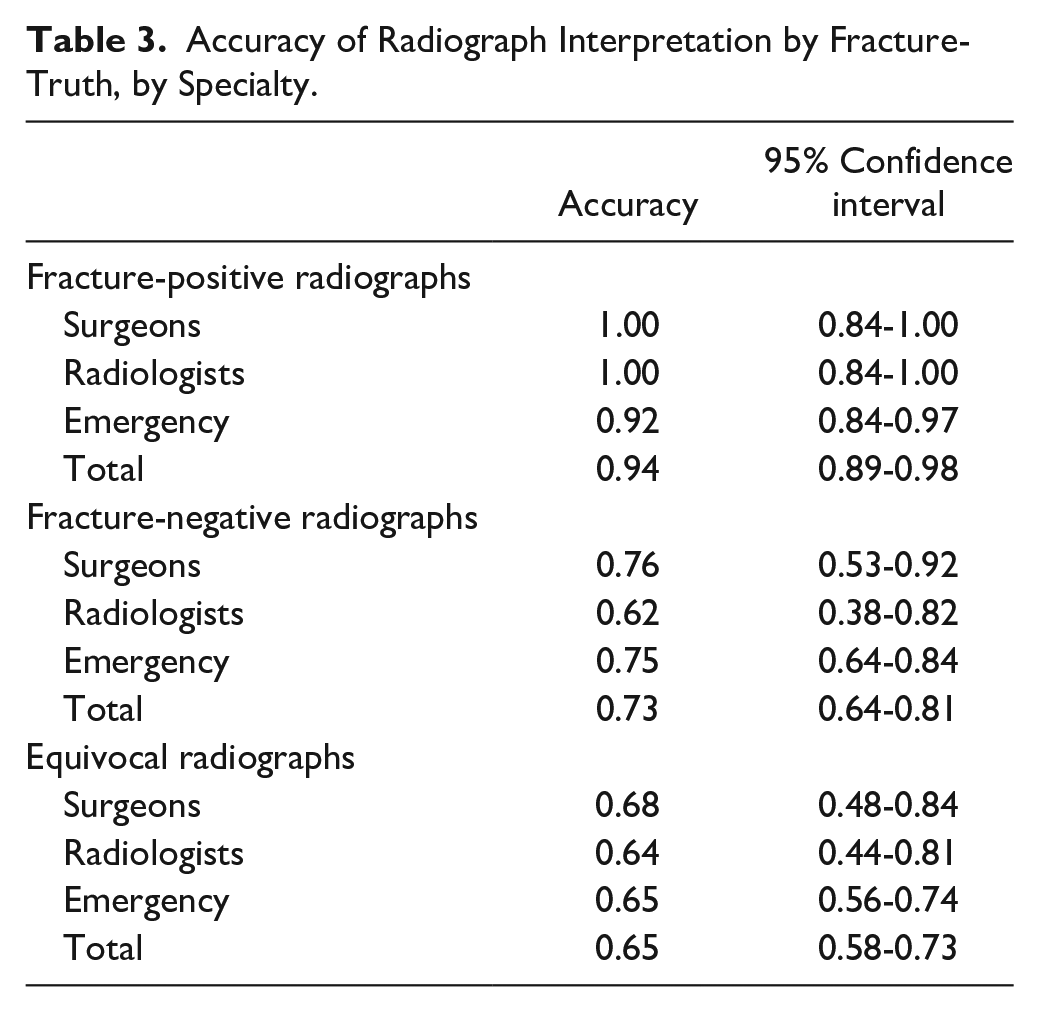

Accuracy

Accuracy was used to show the proportion of correct results (both true positives and true negatives) for all fracture-truths. Accuracy for fracture-positive radiographs of 0.94 (95% CI, 0.89-0.98) was significantly greater when compared with accuracy of fracture-negative (0.73; 0.64-0.81), equivocal-positive (0.46; 0.35-0.57), and equivocal-negative radiographs (0.85; 0.75-0.91). By specialty, accuracy of surgeons, radiologists, and emergency physicians was 0.80 (0.69-0.87), 0.74 (0.62-0.84), and 0.76 (0.71-0.81), respectively. There was no significant difference between different groups of specialists (Table 3).

Table 3. Accuracy of Radiograph Interpretation by Fracture-Truth, by Specialty.

Sensitivity and Specificity

Sensitivity and specificity for detecting a scaphoid fracture by wrist radiograph were calculated by comparing respondent Likert scoring with the fracture-truth determined prior to the survey. By specialty, sensitivity was 0.80 (95% CI, 0.63-0.92) for surgeons, 0.83 (0.66-0.93) for radiologists, and 0.72 (0.64-0.79) for emergency physicians. Specificity was 0.80 (0.63-0.92), 0.66 (0.48-0.81), and 0.80 (0.72-0.86) for surgeons, radiologists, and emergency physicians, respectively. Pooled sensitivity and specificity were 0.75 (0.69-0.81) and 0.78 (0.71-0.83), respectively. No significant difference was noted between specialties.
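
The comparison of Likert scores with fracture-truth can be illustrated with a short Python sketch. It assumes that a score of 5 to 7 is counted as a call of “fracture” and a score of 1 to 3 as “no fracture”; this cutoff follows from the absence of a mid-point but is an assumption, as are the function name `diagnostic_summary` and the example data, which are hypothetical and not study results.

```python
def diagnostic_summary(ratings, truths, cutoff=5):
    """Sensitivity, specificity, and accuracy from Likert ratings.

    `ratings` are scores on the 1-7 scale (no 4); `truths` are booleans,
    True when the radiograph is fracture-positive by fracture-truth.
    Assumes at least one positive and one negative case are present.
    """
    tp = fp = tn = fn = 0
    for rating, truth in zip(ratings, truths):
        called_fracture = rating >= cutoff  # assumed dichotomization
        if truth and called_fracture:
            tp += 1
        elif truth:
            fn += 1
        elif called_fracture:
            fp += 1
        else:
            tn += 1
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }


# Hypothetical rater: 10 radiographs, 5 fracture-positive, 5 negative.
scores = [7, 6, 2, 5, 1, 3, 5, 2, 6, 1]
truth = [True] * 5 + [False] * 5
print(diagnostic_summary(scores, truth))
```

Pooled values would be obtained by concatenating the scores of all raters, each paired with the fracture-truth of the radiograph being rated, before calling the function.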

Discussion

The management of children presenting with a suspected scaphoid fracture is challenging. When a fracture is not readily apparent on plain radiographs, children may be immobilized until symptoms resolve. This may result in overtreatment, unnecessary health care visits, and increased health care utilization.11,12 To understand the role that radiographs should play, we examined their reliability in children with suspected scaphoid fractures.

Reliability

Reliability has not been previously reported in the pediatric population. We found “good” inter-rater reliability with a pooled ICC of 0.67, and no statistically significant difference between specialty groups. Radiographic reliability of suspected scaphoid fractures in the adult population has been reported with mixed results. In an 8-rater cadaver study, Temple et al 10 also found excellent “interobserver” reliability for radiologists (0.86), hand surgeons (0.86), and emergency physicians (0.76). Tiel-van Buul et al found varied reliability in 2 studies involving immediate, 2-week, and 6-week radiographs. In 1 study, 2 radiologists had interobserver reliability of 0.76 on initial radiographs and 0.50 on 2- and 6-week radiographs. 18 These values are consistent with our own CIs. Conversely, in the other study, interobserver reliability between 2 radiologists and 2 surgeons was reported to be less than 0.40. 19 Low and Raby 16 also found poor interobserver reliability of 0.18 to 0.53 in their study between 4 raters evaluating follow-up radiographs. Mallee et al, 17 similarly, found low interobserver reliability in a study involving 2 groups of surgeons: 0.15 for a group of 53 surgeons and 0.14 for a separate group of 28. Importantly, the prevalence of fractures in this study was only 18%, which may have affected the interpretation of the kappa value. 21

In our study, intrarater reliability was good (0.73) for the 26 respondents who answered both iterations of the survey. In adult literature, Temple et al 10 found excellent (≥0.75) intraobserver reliability in their study involving 2 radiologists, hand surgeons, and emergency physicians, ranging from 0.76 to 0.86. Dias et al, 15 however, found only fair reliability for 5 orthopedic surgeons (0.53) but excellent reliability for 5 radiologists (0.80).

The heterogeneity of results across these studies may be attributed to differing methodologies and radiographs under evaluation. Studies by Tiel-van Buul et al, 18 Low and Raby, 16 and Mallee et al 17 demonstrated both low reliability and poor accuracy suggesting that their studies depended primarily on challenging “equivocal” radiographs. The comparison of adult and pediatric wrist radiographs could also provide a potential source of discrepancy, although we would expect in fact more difficulty interpreting pediatric radiographs as they present their own unique challenges, as discussed in the “Introduction” section. More studies, with uniform methodologies, could contribute to more consistent data.

Interpretation of Reliability

High reliability can often be erroneously interpreted as high validity—however, validity is dependent not only on reliability but also on a test’s sensitivity and specificity. In reality, reliability is simply a demonstration of consistency in interpretation, even if that interpretation is incorrect. 16 Thus, high inter-rater reliability could simply mean that 2 raters will make the same mistake when ruling out a fracture, or vice versa. The same observation can be made with high intrarater reliability, where a single rater can be wrong, but consistent, nonetheless. In our study, “good”-to-“excellent” reliability was likely partly due to consistent (but incorrect) diagnoses by raters. Consequently, our findings of strong reliability between individuals and groups of specialists demonstrate that radiographs’ inferior sensitivity is due to the test itself, rather than the interpreters, despite the large variety of physicians who may interpret and manage pediatric wrist radiographs. That is, rater variability does not appear to account for the radiographs’ poor sensitivity when compared with other imaging modalities.

Accuracy, Sensitivity, and Specificity

Sensitivity of radiographs in the diagnosis of pediatric scaphoid fractures has been reported to range from 0.21 to 0.97. 2,6-10 Pediatric data for the specificity of plain radiographs is not widely reported; however, adult data suggest that this also varies greatly, ranging from 0.40 to 1.00.7,15,22 Our overall sensitivity for all raters was 0.75, with no significant difference observed between specialists. While this value suggests moderate sensitivity, other imaging modalities such as MRI have been shown to have sensitivities approaching 100%.1,2,10,14 Interestingly, we found a pooled specificity of 0.78, challenging the supposition that false positive radiographs are rare.

When we examined the accuracy of radiographs based on fracture-truth, radiographs with fractures (fracture-positive radiographs) had a significantly higher accuracy (0.94) compared with fracture-negative and equivocal radiographs. This suggests that clear and obvious fractures will be detected by most physicians, consistent with what is reported in the literature. 18 Fracture-negative radiographs, however, had poorer accuracy (0.73), and a wider distribution was seen in terms of fracture likelihood (Figure 3): More raters incorrectly saw a fracture in fracture-negative radiographs. Equivocal radiographs fared even worse, with an aggregate accuracy of 0.65. This was independent of clinician specialty and consistent with expected results: Radiographs that were identified as equivocal before the survey remained equivocal when viewed by more raters. These findings continue to reinforce what is seen in practice: Scaphoid fractures may be missed on radiographs.

Limitations

Some limitations to our study include institutional bias, as our study was limited to a single tertiary center. Our study was also affected by our survey design. To garner a high number of raters, we limited our study to just 10 radiographs, as we estimated that a longer survey would lead to unfinished questionnaires or even noninitiation of the survey. A strength of our study is in fact the large sample size compared with other reliability studies; however, the nature of ICC dictates that high numbers of raters are necessary to obtain satisfactory CIs. Even when resampling (bootstrapping) with up to 300 raters, our CIs remained large. Per specialty, we received a disproportionate number of emergency physician respondents (28 of 48), but this is not surprising, given the number of emergency physicians at our institution. Radiographs 7 to 15 days after injury were used in our study as it is well recognized that a proportion of scaphoid fractures will be occult on initial radiograph.2,6,9,11 Consequently, our results may not be applicable to typical radiographs taken within the first 24 to 48 hours of an acute wrist injury, although we expect no notable difference in reliability as raters would likely still consistently misdiagnose fractures. In addition, by distributing the survey through REDCap, images were not preserved in their original Digital Imaging and Communications in Medicine (DICOM) format, but rather as Joint Photographic Experts Group (JPEG) images, which has previously been noted to affect interpretation of imaging. 17 Finally, it is important to note that the gold standard for fracture diagnosis is not well defined. 17 For most of our cases, no advanced imaging was performed (MRI, CT, or bone scan). True-positive fractures were defined by evidence of fracture on subsequent radiographs and then advanced imaging if necessary. If occult fractures were in fact present despite normal radiographs throughout the course of the patient’s care, including 6-week follow-up, the results of this study may be affected. The clinical significance of these occult fractures, however, is unknown.
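
The resampling mentioned above can be illustrated with a minimal Python sketch that draws raters with replacement from the observed pool, recomputes a 2-way random, single measure, absolute agreement ICC for each resample, and reports a percentile interval. The exact bootstrap procedure used in the study is not described, so the resampling scheme, the percentile method, the function names (`icc_2_1`, `bootstrap_icc_ci`), and the example ratings are all assumptions made for illustration.

```python
import random
from statistics import mean


def icc_2_1(ratings):
    """Two-way random, single rater, absolute agreement ICC.

    Rows are radiographs; columns are raters.
    """
    n, k = len(ratings), len(ratings[0])
    grand = mean(x for row in ratings for x in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]
    ms_rows = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_cols = n * sum((m - grand) ** 2 for m in col_means) / (k - 1)
    ss_err = sum(
        (ratings[i][j] - row_means[i] - col_means[j] + grand) ** 2
        for i in range(n)
        for j in range(k)
    )
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


def bootstrap_icc_ci(ratings, n_raters, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for the ICC, resampling raters.

    `n_raters` is the size of each simulated rater pool (for example,
    300), drawn with replacement from the observed raters (columns).
    """
    rng = random.Random(seed)
    k = len(ratings[0])
    estimates = []
    for _ in range(n_boot):
        picked = [rng.randrange(k) for _ in range(n_raters)]
        resampled = [[row[j] for j in picked] for row in ratings]
        estimates.append(icc_2_1(resampled))
    estimates.sort()
    lower = estimates[int(n_boot * alpha / 2)]
    upper = estimates[int(n_boot * (1 - alpha / 2)) - 1]
    return lower, upper


# Hypothetical scores: 5 radiographs (rows) rated by 4 raters (columns).
example = [
    [7, 6, 7, 5],
    [2, 1, 3, 2],
    [5, 6, 5, 7],
    [1, 2, 1, 3],
    [3, 5, 2, 6],
]
print(bootstrap_icc_ci(example, n_raters=30, n_boot=500))
```

Because the number of radiographs stays fixed at 10 in such a scheme, the interval reflects only rater sampling, which may be one reason wide CIs persist even with many simulated raters.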

Conclusion

This study represents the first pediatric scaphoid fracture wrist radiograph reliability study and provides important context regarding the reliability of their clinical interpretation. We found good to excellent inter-rater reliability for surgeons, radiologists, and emergency physicians in the interpretation of these images. We also found good intrarater reliability for raters examining these same radiographs. No significant differences were noted between specialist groups.

The use of plain radiographs in the management of children with suspected scaphoid fractures remains a mainstay of practice due to availability and low cost. It is essential, however, to understand the strengths and limitations of radiographs in this diagnosis. Our study, consistent with other literature, demonstrates that both the accuracy and the sensitivity of wrist radiographs, especially equivocal ones, remain poor when detecting scaphoid fractures. This was consistent across all specialties and practitioners, indicating that the limitation derives from the test itself and not from the clinicians. These findings speak to the validity of wrist radiographs as an imaging test to detect subtle pediatric scaphoid fractures. Consequently, while radiographs in the emergency department may remain useful as a screening tool to identify obvious fractures on initial presentation, their utility for equivocal situations or sequential evaluation requires reevaluation. Advanced imaging should be considered when suspicion persists despite seemingly normal or equivocal radiographs.2,12 The routine use of advanced imaging requires investigation, including consideration of availability, cost, and the clinical significance of a radiographically occult scaphoid fracture. Accordingly, our findings support that clinicians should consider alternative imaging modalities if subtle scaphoid fractures in children are suspected.

Footnotes

Authors’ Note

No patient identifying information was included in this study or the distributed survey. Physician participant demographic details were obtained through our survey, which included a detailed consent.

Ethical Approval

This study was approved by our institutional review board.

Statement of Human and Animal Rights

This article does not contain any studies with human or animal subjects.

Statement of Informed Consent

No identifying patient information is included in this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.