Abstract

Informed consent is a guiding ethical principle when conducting research involving human participants. Yet, consent forms are often skimmed or ignored, jeopardizing informed consent. In two experiments, we test four interventions designed to encourage participants to read online consent forms more carefully. Experiment 1 employed a 2 (length: short or long) by 2 (timing: fixed or free) by 2 (quiz: present or absent) between-participants design. We measured instruction-following and comprehension of the consent form. Results showed that fixed timing and a quiz led to greater instruction-following, but consent form length had no effect. Experiment 2 employed a 2 (length: short or long) by 3 (delivery format: live, audiovisual, standard written) between-participants design. Once again, length had no effect, but both live and audiovisual formats increased instruction-following and comprehension. We recommend that researchers consider using fixed timing, adding a quiz, and/or using alternative delivery formats to help participants make an informed decision.

Keywords

Informed consent is of utmost importance when conducting research involving human participants. Indeed, it is the guiding mechanism for respecting participants’ autonomy in research (Canadian Institutes of Health Research [CIHR] et al., 2022). Before agreeing to participate in research, participants should understand enough information about the research to make an informed decision. According to ethics codes (e.g., American Psychological Association, 2017; CIHR et al., 2022), participants should understand (1) the purpose, duration, and procedure, (2) their right to decline participation and to withdraw, (3) the consequences of declining or withdrawing, (4) risks and benefits, (5) limits of confidentiality, (6) incentives for participation, and (7) whom to contact for questions about the research and participants’ rights. Prospective participants should also be able to ask questions and receive answers about the research.

To indicate consent, participants are typically asked to read and sign a written form detailing the above information and are given the opportunity to ask questions (Ripley et al., 2018; Wogalter, 1999). Evidence suggests that participants do not read these consent forms carefully, thereby jeopardizing this ethical principle. For example, in one study, fewer than 10% of participants reported reading the entire consent form (Perrault & Keating, 2018). When participants do not read the consent form, they cannot comprehend the key information that ethics codes state is required to make an informed decision about participation. They may then consent to participation without understanding what the study entails, the risks involved, what will be done with their personal information, or what they should do if they decide to withdraw from the study. This lack of comprehension can put participant well-being at serious risk.

To address this problem, researchers have employed a multitude of interventions with varying levels of success. For instance, researchers have tried making forms shorter (Knepp, 2018; Perrault & McCullock, 2019; Perrault & Nazione, 2016), using bullet points (Antonacopoulos & Serin, 2016), using language that is easier to understand (Ittenbach et al., 2015), employing varying colors and fonts (Ripley et al., 2018), having participants sign different sections of the consent form (Antonacopoulos & Serin, 2016; Ripley et al., 2018), and testing participants’ knowledge of the consent form (Geier et al., 2021; Ripley et al., 2018). Of the above interventions, two that appear to hold considerable promise are shortening the consent form and quizzing participants about consent form content.

Consent Form Length

One factor that may be contributing to participants’ failure to read consent forms is that consent forms have become longer over time (Albala et al., 2010). When faced with a long form, participants may skip right to the signature box to get on with the next step (i.e., the study itself). Moreover, participants prefer shorter consent forms (Perrault & Keating, 2018). Empirical tests of consent form length are mixed, however, with some researchers finding that shorter consent forms produce greater comprehension (Kim & Kim, 2015; Perrault & Nazione, 2016), and others finding that a shorter form had no effect on comprehension (Matsui et al., 2012; Schmaltz, 2007). Thus, the first goal of our research is to test the effect of shortening consent forms.

Fixed Timing

To mitigate issues associated with consent form length, another solution may be to prevent participants from skipping ahead by forcing them to remain on the consent form page. Although we located one study that employed fixed timing (Krishnamurti & Argo, 2016), no control group was used, so it is unknown whether fixed timing has any effect on comprehension of the consent form. To our knowledge, no study has tested this potential intervention empirically. Thus, the second goal of this research is to provide an empirical test of the effect of fixed timing on consent form comprehension.

Quizzing Consent Form Comprehension

Another factor that may lead to participants’ failure to read consent forms is the passive nature of a written form. Without a researcher present, there are often no interactive elements to the consent process other than checking “yes” or providing an electronic signature. In online settings, quizzing participants on elements of the consent forms may be one way to get participants to be more actively engaged with the consent form process. This intervention shows some initial promise. For example, Geier et al. (2021) found that, out of six interventions, quizzing participants yielded the highest levels of comprehension, presumably because of the high interactivity of this intervention. Thus, a third goal of this research is to provide an additional test of the effectiveness of quiz questions.

Although many attempts to improve the consent process have been conducted, very few studies have tested multiple interventions in a single study with a fully crossed design. Thus, it is unclear whether interventions interact with each other or have an additive effect. A fourth goal of this research is to explore whether our intervention variables interact with each other to influence comprehension.

Experiment 1

In Experiment 1, we test three interventions that may improve the consent process as well as any potential interactions between these interventions. Our first intervention is shortening the consent form. We hypothesize that, consistent with prior research, short consent forms should produce greater comprehension than long ones. Our second intervention is fixed timing such that participants will be unable to advance beyond the consent form screen until a reasonable amount of time has passed (i.e., the time it takes to read the entire consent form based on average reading speed). We hypothesize that fixed timing should produce greater comprehension compared to a control group without fixed timing. Finally, our third intervention is quizzing participants on their knowledge of the consent form using multiple choice questions. We hypothesize that, consistent with prior research, participants who are quizzed about elements of the consent form will have greater comprehension than those who are not quizzed. Our tests of the interactive effects of these variables are exploratory; thus, no formal hypotheses were made.

Method

Participants

Participants were recruited from introductory psychology classes at a mid-sized Canadian university (N = 182) and from Qualtrics (N = 349). Data from 21 participants who did not click “Submit my responses” at the end of the experiment were excluded. Because results were similar when the two samples were analyzed separately and together, we report analyses of the combined sample only, which comprised 510 participants (154 men, 343 women, 11 non-binary/third gender, 2 not reported).

Design

We used a 2 (consent form length: short or long) by 2 (timing: fixed or free) by 2 (quiz: present or absent) between-participants design.

Procedure

Upon signing up for the study, titled “Adult Temperament Differences,” participants received a link to one of eight randomly assigned versions of the consent form.

The long form condition consisted of the standard consent form recommended by the university's Research Ethics Board (752 words). The short form condition consisted of what we deemed to be the most crucial elements of the consent form: the project title, researcher names, what participants would be asked to do, the level of risk involved, how they could withdraw, and what would happen to the results of the study (141 words). The short form also included a link that participants could click to view the full consent form.

In the fixed timing condition, participants were unable to continue to the next screen until a reasonable time had passed, based on an average reading speed of 250 words per minute (165 s for the long form and 25 s for the short form). In the free timing condition, participants could advance to the next screen whenever they wished to do so. To ensure that participants in the fixed timing condition did not think that the program had malfunctioned, they were informed that they would not be able to advance until a certain length of time had passed.

In the quiz condition, three multiple choice questions were presented at the end of the consent form. Specifically, participants were quizzed on the study's purpose, the researchers’ names, and how they could withdraw if they wished to do so. These questions appeared on the same screen as the consent form, so participants were able to consult it when answering the questions. In the no quiz condition, no questions were presented.

All versions of the consent form contained the following instructions that appeared in the middle of the consent form: “To ensure that people are paying attention, when you see the question ‘What animal should universities bring onto campuses to reduce anxiety?’, please answer with ‘I am paying attention to the study.’” Following the consent form, participants responded to the dependent measures.

Measures

Behavioral Measure

Compliance with the embedded instructions was coded by a research assistant who was blind to condition. Answers were coded as correct (1 if the participant approximated our instructions in their answer to the key question described above) or incorrect (0 for other answers, such as “dogs”).
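In this study, a blind research assistant coded responses by hand. For larger online samples, researchers might pre-screen responses automatically before human review; the Python sketch below is one hypothetical way to do so, not the procedure used here. It treats an answer as compliant when its normalized text is sufficiently similar to the target phrase, using the standard library's `difflib.SequenceMatcher`. The function names and the 0.8 similarity threshold are illustrative assumptions.

```python
import difflib
import re

# Target phrase from the embedded instruction in the consent form.
TARGET = "I am paying attention to the study"


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def code_compliance(answer: str, threshold: float = 0.8) -> int:
    """Return 1 if the answer approximates the target phrase, else 0.

    Hypothetical helper: the 0.8 threshold is an assumption, not a value
    taken from the study, and borderline cases still need a human coder.
    """
    ratio = difflib.SequenceMatcher(
        None, normalize(answer), normalize(TARGET)
    ).ratio()
    return 1 if ratio >= threshold else 0


responses = [
    "I am paying attention to the study.",
    "i am paying attention to this study",
    "dogs",
]
print([code_compliance(r) for r in responses])  # [1, 1, 0]
```

A fixed threshold will reject some legitimate paraphrases (a shortened answer such as "I'm paying attention" falls below 0.8), so an automated pass like this could only flag clear cases before blind human coding.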

Comprehension

Comprehension was assessed with two multiple choice questions. Specifically, participants were asked about the risks involved (no foreseeable risks) and what would happen to the results of the study (they would be presented at conferences and published in a scholarly journal). Correct answers were summed such that comprehension scores ranged from 0 to 2.

Demographics

Participants were asked to report their gender identity and other demographics. 1

Results

To check the consent form length manipulation, we combined participants’ agreement with two items, “The consent form I read at the beginning of the study was long” and “The consent form I read at the beginning of the study was short” (reverse-coded), which were correlated, r(509) = .50, p < .001. An independent samples t-test on this composite confirmed that participants who received the long form rated it as longer (M = 4.16, SD = 1.69) than did those who received the short form (M = 3.14, SD = 1.41), t(507) = 7.39, p < .001, d = .66.
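The effect size for this manipulation check can be approximately recovered from the reported summary statistics. The sketch below computes Cohen's d with the pooled standard deviation taken as the root mean square of the two group SDs. This assumes roughly equal group sizes; because the group ns are not reported here, it is an approximation rather than the authors' exact calculation.

```python
import math


def cohens_d(m1: float, sd1: float, m2: float, sd2: float) -> float:
    """Cohen's d using the root mean square of the two group SDs.

    Assumes roughly equal group sizes; with unequal n, each variance
    should be weighted by its degrees of freedom.
    """
    pooled_sd = math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)
    return (m1 - m2) / pooled_sd


# Experiment 1 manipulation check: long form vs. short form.
d = cohens_d(4.16, 1.69, 3.14, 1.41)
print(round(d, 2))  # 0.66
```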

We used PROCESS Model 3 for the remaining analyses, with consent form length (short or long) as the predictor and timing (fixed or free) and quiz (present or absent) as moderators. First, we tested whether our independent variables affected participants’ compliance with the embedded instructions (i.e., to state “I am paying attention to the study”). We predicted that short consent forms would produce greater instruction-following than long consent forms. Although the pattern was consistent with this prediction (45% correct in the short form condition vs. 30% in the long form condition), the effect was not significant, b = −.71, SE = .43, t = −1.64, p = .10, 95% CI [−1.55, 0.14]. As predicted, the presence of a quiz increased instruction-following, b = 1.10, SE = .38, p = .004, 95% CI [0.36, 1.84] (45% in the quiz present condition vs. 30% in the quiz absent condition). Similarly, fixed timing increased instruction-following, b = .78, SE = .38, t = 2.07, p < .05, 95% CI [0.04, .14] (43% in the fixed timing condition vs. 33% in the free timing condition). There were no significant interactions among the independent variables.
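Assuming these coefficients come from a logistic model with normal-theory (Wald) intervals, each 95% confidence interval should be approximately b ± 1.96 × SE. The minimal sketch below applies this to the quiz effect; it is a quick consistency check on reported intervals, not a reimplementation of PROCESS, and small discrepancies are expected because b and SE are rounded to two decimals.

```python
def wald_ci(b: float, se: float, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wald confidence interval for a coefficient."""
    return (b - z * se, b + z * se)


# Quiz effect on instruction-following, Experiment 1.
low, high = wald_ci(1.10, 0.38)
print(f"[{low:.2f}, {high:.2f}]")  # [0.36, 1.84]
```

The same check also shows the sign a coefficient must carry: an interval centred below zero implies a negative b.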

Next, we tested whether our independent variables had any effect on comprehension. Contrary to our predictions, no effects of length, quiz, or timing were found.

Summary and Discussion

In sum, Experiment 1 provided evidence for two relatively easy interventions that improved informed consent. First, controlling how much time participants spent on the consent form page appeared to affect how carefully they read it. When participants were not able to continue to the next page until a reasonable amount of time had passed, they were more likely to follow the embedded instructions. Second, providing a short quiz at the end of the consent form increased compliance with our embedded instructions.

We expected that making the consent form shorter would improve consent form comprehension, but results did not support this hypothesis. Shorter consent forms did not appear to lead to more “informed” participants relative to long consent forms. Given that prior research has shown that short consent forms are more likely to be read (e.g., Perrault & Nazione, 2016) and that participants themselves report preferring short consent forms (Perrault & Keating, 2018), the fact that length had no effect on our dependent measures was surprising.

Although two of our interventions were successful according to our key dependent measure, one could certainly argue that participants were still not as informed as researchers (and research ethics boards) would like them to be. For example, the proportion of participants who correctly followed our embedded instructions did not exceed 45%. Moreover, only 28% of our participants were able to correctly answer the two comprehension questions. Thus, it appears that the majority of participants did not carefully read the consent form despite our interventions. Given the large room for improvement, other interventions should be explored.

Experiment 2

We suspect that the quizzing intervention was successful because it encouraged participants to actively engage with the consent form. Indeed, previous research has shown that high interactivity via quizzing led to greater comprehension (Geier et al., 2021; Knepp, 2018). Another way to promote interactivity in the consent process is to have a researcher read the consent form with the participant and answer their questions. Douglas et al. (2021) found that having a researcher present led to greater recall of an embedded phrase in the consent form than when the researcher was absent. In online settings, a researcher can easily be present virtually such that participants can see and interact with them. Although Wang et al. (2021) found success with a live online consent process in a clinical trial, no control group was used. To our knowledge, no empirical tests of researcher presence exist in online settings. Thus, in Experiment 2, we provide the first test of virtual researcher presence (using videoconferencing) compared to a standard written consent form condition.

We recognize that having a researcher present is labor-intensive and may undermine the convenience of online research. Therefore, we also sought to test whether simply listening to an audio recording of a researcher reading the consent form, in addition to viewing the consent form on the screen, would improve comprehension. Although participants would not easily be able to ask questions of the researcher in this situation, the presentation of audio (in addition to the visual consent form) should encourage information processing. According to Penney's notion of separate processing streams (1975, 1989), audiovisual formats should encourage individuals to process information in both audio and visual streams of short-term memory. Thus, in Experiment 2, we employed an audiovisual condition in which participants listened to a recording of a researcher reading the consent form while viewing the written form.

Given our unexpected finding that consent form length had no impact on our dependent measures, another goal of Experiment 2 was to test consent form length a second time. In Experiment 2, we attempted to strengthen the length manipulation by making the short consent form even easier to digest through the use of bullet points rather than paragraphs. We also sought to improve our dependent measures in two ways. First, we increased the number of multiple-choice questions from two to five to produce a more sensitive measure of comprehension. Second, we recorded the amount of time participants spent on the consent form screen to explore its relationship with our manipulations and other dependent measures.

Given these improvements, we expected a consent form length effect to emerge in Experiment 2, such that shorter consent forms would produce greater comprehension than long consent forms. We also hypothesized that delivery format would affect instruction-following and comprehension: the live condition should produce the greatest comprehension because it is the most interactive, followed by the audiovisual condition and, finally, the standard written condition. Lastly, we hypothesized that, if length does increase comprehension, this effect may depend on delivery format. Specifically, if the audiovisual and live delivery formats cause participants to process the consent form more deeply, then consent form length may have less impact in those conditions than in the standard written condition, where processing is shallower.

Method

Preregistration

Before data collection, this study was preregistered on the Open Science Framework with the following information: target sample size, variables, hypotheses, procedures, and planned analyses.

Participants

Participants consisted of 293 introductory psychology students aged 17 to 62 (M = 21.14, SD = 4.91). The sample consisted of 229 women, 47 men, 8 non-binary or gender fluid, and 9 unreported responses. Data from two participants were withdrawn and therefore excluded from analysis.

Design

We used a 2 (consent form length: short or long) by 3 (delivery format: live, audiovisual, standard written) between-participants design.

Procedure

Experiment 2, titled “Adult Temperament Differences 2,” was administered online via Qualtrics. Upon opening the survey link, participants were randomly assigned to one of six conditions. The long and short consent forms were identical to those of Experiment 1, except that the short form was formatted as bullet points of one to two sentences each.

In the live delivery condition, participants entered a one-on-one videoconference with a research assistant. Participants were instructed to follow along with the written form in the survey as the research assistant read the consent form aloud. Before consenting, participants were prompted to ask any questions they had.

In the audiovisual condition, participants listened to a test recording and were encouraged to adjust their device volume as desired. They then listened to a voice recording of the consent form (6 min and 42 s for the long consent form, 1 min and 15 s for the short consent form). The same research assistant delivered the consent form in both the videoconference and audiovisual conditions.

Participants in the standard written condition were instructed to read the consent form independently. After providing consent, participants responded to the dependent measures.

Measures

Experiment 2 employed the same dependent measures as those in Experiment 1, with the addition of recording the time, in seconds, that participants spent on the consent form screen. Three multiple choice questions (i.e., those used in the Experiment 1 quiz) were also added to the comprehension measure to improve its sensitivity.

Results

Manipulation Check

As in Experiment 1, the two manipulation check items were moderately correlated and combined, r(283) = .59, p < .001. Participants perceived the long form as longer (M = 3.60, SD = 1.57) than the short form (M = 2.63, SD = 1.35), t(285) = −5.62, p < .001, d = 1.47.

Behavioral Measure

A binary logistic regression was conducted to assess the effects of delivery format and length on compliance with the embedded instructions. A likelihood ratio test indicated that the overall model was significant, χ2(5) = 23.57, p < .001. Nagelkerke R2 was .105, and the model correctly classified 63.4% of cases. As expected, a main effect of delivery format emerged, χ2(2) = 21.48, p < .001. The odds of following instructions were 3.98 times higher in the audiovisual condition (55.1% correct) than in the standard written condition (30.5% correct; b = 1.38, SE = 0.40, p < .001, 95% CI for the odds ratio [1.81, 8.75]), and 3.61 times higher in the live condition (60.7% correct) than in the standard written condition (b = 1.28, SE = 0.47, p = .006, 95% CI for the odds ratio [1.45, 9.00]).
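In a logistic regression, the odds ratio is exp(b), and its confidence interval is obtained by exponentiating the bounds of the coefficient's interval. The sketch below assumes Wald intervals (b ± 1.96 × SE) and applies the formula to the audiovisual versus standard written contrast. Because b and SE are rounded to two decimals, its output differs slightly from the reported odds ratio of 3.98 and interval of [1.81, 8.75].

```python
import math


def odds_ratio_ci(b: float, se: float, z: float = 1.96) -> tuple[float, float, float]:
    """Exponentiate a logit coefficient and its Wald CI bounds."""
    return (math.exp(b), math.exp(b - z * se), math.exp(b + z * se))


# Audiovisual vs. standard written condition, Experiment 2.
or_, low, high = odds_ratio_ci(1.38, 0.40)
print(f"OR = {or_:.2f}, 95% CI [{low:.2f}, {high:.2f}]")
```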

We expected that shortening the consent form length would increase the proportion of participants who correctly followed instructions, but like Experiment 1, no effect of length emerged, χ2(1) = 0.54, p = .462.

We also expected that delivery format and length would interact such that the impact of shorter consent forms would be greatest with the written delivery format and would diminish as the delivery format encourages more processing. However, no significant interaction effect emerged, χ2(2) = 1.56, p = .460.

Comprehension

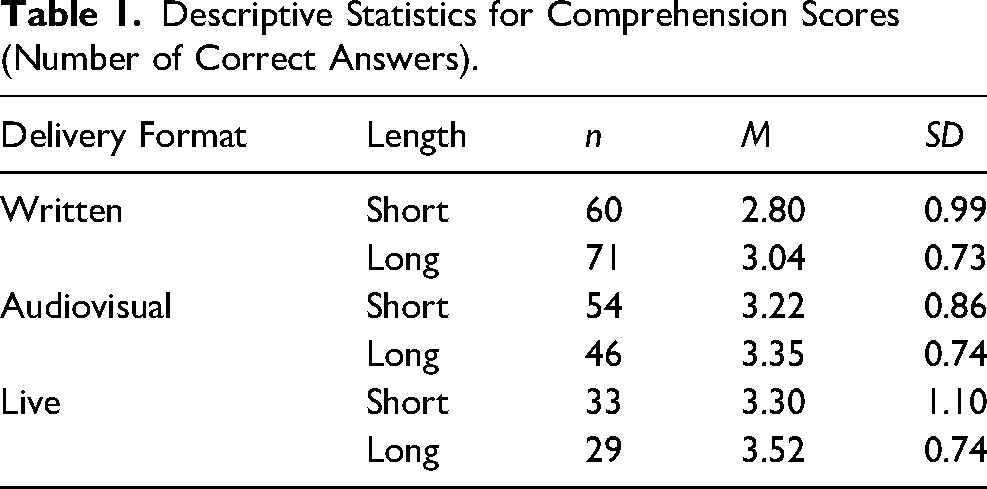

Correct answers to the four comprehension questions 2 were summed and analyzed using a 2 (length: short or long) × 3 (delivery format: live, audiovisual, standard written) analysis of variance (ANOVA). Overall, 39.9% (n = 117) of participants correctly answered all four questions (M = 3.15, SD = 0.88). See Table 1 for descriptive statistics.

Table 1. Descriptive Statistics for Comprehension Scores (Number of Correct Answers).

Results revealed a main effect of delivery format, F(2, 287) = 8.65, p < .001, ηp2 = .06. As predicted, Tukey's HSD post-hoc comparisons showed that comprehension scores were higher in both the live condition (M = 3.41, SE = 0.11, p = .001, 95% CI [0.16, 0.78]) and the audiovisual condition (M = 3.29, SE = 0.09, p = .007, 95% CI [0.08, 0.62]) than in the written condition (M = 2.92, SE = 0.08). Once again, the predicted main effect of length was not significant, F(1, 287) = 3.37, p = .067, ηp2 = .01, nor was the interaction between delivery format and length, F(2, 287) = 0.13, p = .875, ηp2 = .001.
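Partial eta squared can be recovered from a reported F statistic and its degrees of freedom via the standard identity ηp2 = (F × df_effect) / (F × df_effect + df_error), which offers a quick check on the effect sizes reported in this section. The function name below is illustrative.

```python
def partial_eta_squared(f: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F statistic and its dfs."""
    return (f * df_effect) / (f * df_effect + df_error)


# Main effect of delivery format on comprehension, F(2, 287) = 8.65.
print(round(partial_eta_squared(8.65, 2, 287), 2))  # 0.06
```

Applied to the length effect on time spent, F(1, 287) = 69.01, the same function gives .19, matching the value reported for that analysis.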

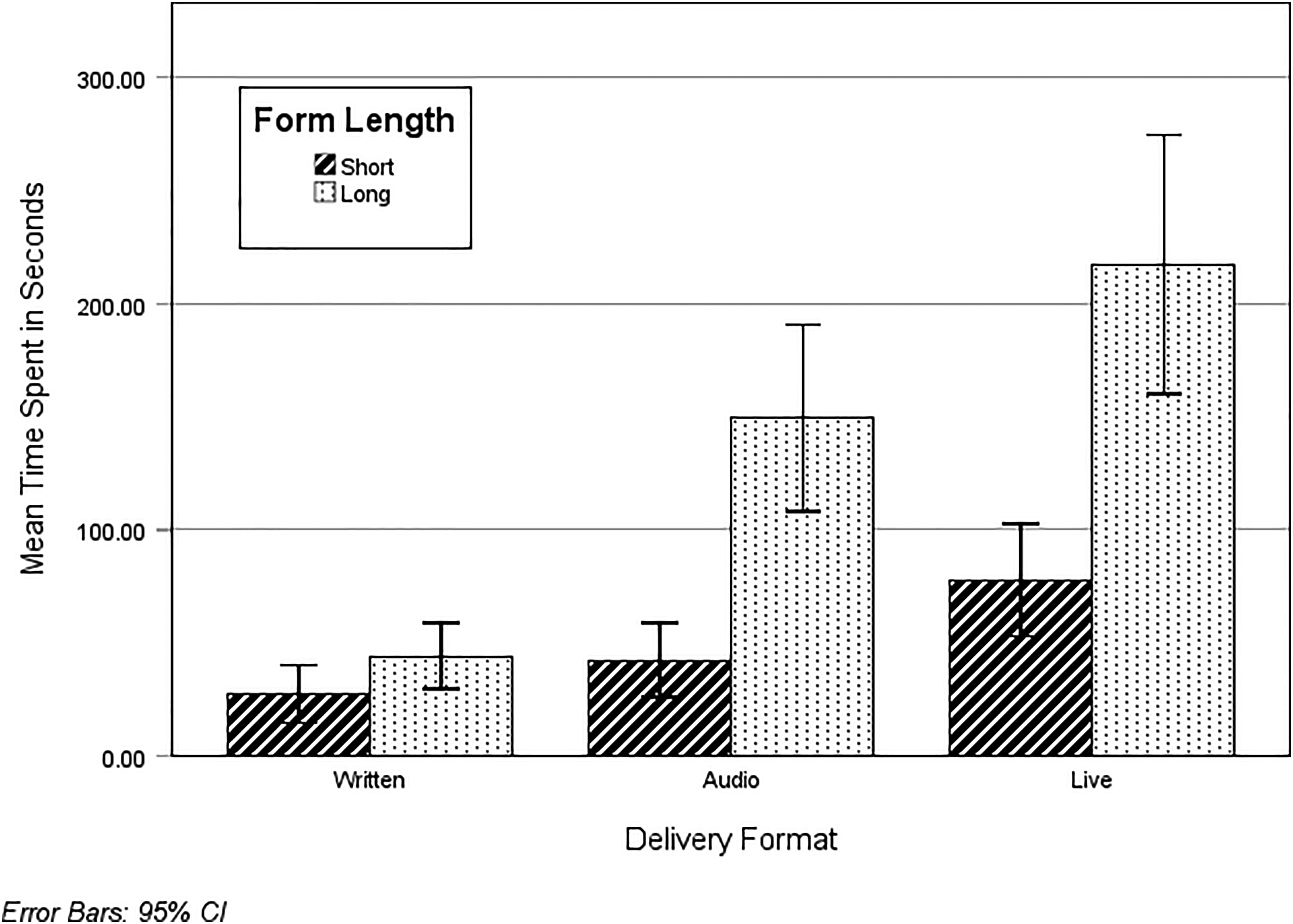

Time Spent on Consent Form

A 2 (length: short or long) × 3 (delivery format: live, audiovisual, standard written) ANOVA was conducted on time spent on the consent form (in seconds). A main effect of delivery format emerged, F(2, 287) = 39.29, p < .001, ηp2 = .22: those in the live condition (M = 148.85, SE = 10.73) spent more time than those in the audiovisual (M = 90.58, SE = 8.45; p < .001, 95% CI [31.39, 85.14]) and standard written conditions (M = 35.73, SE = 7.39; p < .001, 95% CI [87.48, 138.75]). Those in the audiovisual condition also spent more time on the consent form than those in the standard written condition (p < .001, 95% CI [32.75, 76.95]).

There was also a main effect of length, F(1, 287) = 69.01, p < .001, ηp2 = .19: those who received the long form (M = 134.72, SE = 7.45) spent more time on it than those who received the short form (M = 48.73, SE = 7.19). The interaction between delivery format and length was also significant, F(2, 287) = 12.44, p < .001, ηp2 = .08.

Simple effects analyses (see Figure 1) indicated that, in the live condition, participants spent more time on the long form (M = 219.81, SE = 15.65) than on the short form (M = 77.89, SE = 14.67), t(287) = 6.62, p < .001, 95% CI [99.70, 184.13]. In the audiovisual condition, participants likewise spent more time on the long form (M = 138.49, SE = 12.43) than on the short form (M = 42.68, SE = 11.47), t(287) = 5.67, p < .001, 95% CI [62.53, 129.09]. In contrast, in the standard written condition, form length had no effect on time spent, t(287) = 1.37, p = .172, 95% CI [−8.84, 49.33].

Figure 1. Time spent (in seconds) on consent forms as a function of delivery format and form length.

Time spent on the consent form was positively associated with correctly following instructions, r(287) = .38, p < .001, and comprehension scores, r(293) = .27, p < .001.

Summary and Discussion

We hypothesized that live delivery of consent forms would produce the most comprehension, followed by audiovisual delivery and, lastly, standard written delivery. Consistent with this hypothesis, participants in the live condition were more likely to correctly follow instructions and scored higher on comprehension than those in the standard written condition. Unexpectedly, however, those in the audiovisual condition performed nearly as well. These results suggest two meaningful yet simple steps toward achieving informed consent: live presentations of consent forms are effective, and audio recordings, which are less labor-intensive, inexpensive, and easy to implement, can improve participants’ comprehension and compliance with instructions to levels on par with live presentations.

Despite the improved manipulations and measures, the unexpected null effect for consent form length replicated in Experiment 2; participants’ comprehension scores and compliance with embedded instructions were similar for both the long and short consent forms. Although shortened consent forms (Geier et al., 2021) and formatted bullet points (Perrault & Nazione, 2016) are popular suggestions among research participants, they do not consistently improve comprehension (see Antonacopoulos & Serin, 2016; Matsui et al., 2012; Schmaltz, 2007). Given that short consent forms are more concise, simple, and consequently, easier to read (Perrault & Nazione, 2016; Whitney et al., 2008), this null effect is indeed surprising and deserves further inquiry.

In all, Experiment 2 shows that changing the delivery format of consent information is an easy way to improve informed consent. However, as in Experiment 1, results suggest there is still room for improvement. In the live condition, for example, 61% of participants correctly followed instructions, and 60% correctly answered all comprehension questions. Further, participants spent less time on the consent forms than was warranted. For instance, participants in the audiovisual condition who received the long form spent, on average, 2 min and 18 s on the consent form, far less than the length of the recording (6 min and 42 s). This warrants future research on other interventions that could further improve the informed consent process.

Overall Discussion

Previous research has found that consent forms are typically ineffective at meeting their intended aims, namely to ensure participants understand enough information about the research to make an informed decision about their participation (Perrault & Keating, 2018; Perrault & Nazione, 2016). The current studies contribute to the literature examining interventions to improve the consent process, using a rigorous design that tests multiple interventions in single studies with fully crossed experimental designs including control groups.

Results from the current studies add to the growing body of research examining whether consent form length influences comprehension. Across two studies, shorter consent forms did not significantly affect comprehension or compliance with instructions. Although this finding seems counterintuitive, it is consistent with some past research (Antonacopoulos & Serin, 2016; Matsui et al., 2012; Schmaltz, 2007). Investigating potential moderators of the length effect (e.g., study type, setting, or domain) may help to resolve these mixed findings.

Experiment 1 provides the first empirical test of the effect of fixed timing on consent form comprehension. Importantly, results demonstrate that controlling how much time participants spent on the consent form improved their ability to correctly follow instructions embedded in it. These results extend the findings of Krishnamurti and Argo (2016), who also had success imposing a 5-min minimum reading time on participants but did not include a control group. Results from Experiment 1 also illustrate that including a short quiz at the end of a consent form improves compliance with embedded instructions. This finding replicates research by Geier et al. (2021), who suggested that the effectiveness of quizzing stems from its inherent interactivity.

Experiment 2 illustrated that, compared to standard written delivery of consent forms, live delivery of consent forms leads to greater comprehension and instruction following. Interestingly, the audiovisual condition performed nearly as well. This finding extends research by Flory and Emanuel (2004) and Farrell et al. (2014) who have examined how various types of consent form delivery formats can improve comprehension over traditional written consent forms.

Looking across the interventions, our findings suggest that increasing participants’ engagement with consent forms improves comprehension and compliance with instructions. Despite incorporating multiple interventions to improve the consent process, however, comprehension and instruction-following remained relatively low. Only 28% (Experiment 1) to 42% (Experiment 2) of participants correctly answered all comprehension questions, and only about 45% (Experiment 1) to 60% (Experiment 2) of participants followed instructions. As other scholars have argued (e.g., Holland et al., 2013), consent processes are not meeting their moral obligations. Results from the current studies contribute to the growing body of literature suggesting that there is a need to continue to find ways to improve consent processes.

Best Practices

Our results support three cost-effective interventions that show promise for improving the consent process: 1) controlling how much time participants spend on virtual consent forms by preventing them from moving ahead until they have spent a sufficient amount of time on the webpage, 2) briefly quizzing participants about the content of the consent form, and 3) delivering consent form content live via virtual conference call (or via audio recording), in addition to the written form. Fixed timing and quizzing increased instruction-following but not comprehension scores, whereas live and audiovisual delivery increased both. In the current study, delivery format was tested only with a sample of undergraduate students, limiting generalizability. Future researchers are encouraged to replicate these findings with other samples.

Limitations/Future Research Agenda

One limitation of the current studies is that we did not test the interactive effects of fixed timing, quiz, and delivery format simultaneously. Future researchers may benefit from using a fully crossed experimental design to see whether these three variables interact with each other. Moreover, because the current experiments were of minimal risk, future researchers are encouraged to extend these findings into domains in which informed consent is more vital (e.g., medical interventions).

Our behavioral measure was compliance with instructions embedded in the consent form. Although we assume that this measure indicates how carefully participants are reading, and thus understanding, the consent form, participants may have read and understood these instructions but forgotten what they were supposed to do by the time they came to our dependent measures. We believe this to be unlikely because the dependent measures directly followed the consent form, but it remains a possibility, and thus, a limitation of this research. Future researchers might benefit from incorporating eye tracking software into their laboratory studies to examine whether participants actually read consent forms and to identify and record participants’ visual attention during the consent process. We also encourage researchers to consider more robust behavioral measures, perhaps by asking participants to do something more substantive, such as visiting a website (online study) or placing their completed survey in a certain color of box (in-person study).

Educational Implications

Our findings add to a burgeoning body of literature suggesting that, in their current form, consent forms are ineffective and fail to meet the obligations required by ethics codes (APA, 2017; CIHR et al., 2022). Echoing Perrault and Nazione (2016), this leads to a critical question for Institutional Review Boards: Do universities care whether prospective participants comprehend the content of consent forms? There seems to be a disconnect among ethics codes, Institutional Review Boards, and participants. Ethics codes state that participants should understand enough about the research to make an informed decision (APA, 2017; CIHR et al., 2022); Institutional Review Boards continue to lengthen consent forms to include extensive information linked to institutional risk; and participants seem to inherently trust universities when it comes to minimal risk social science research, choosing to skim consent forms when possible.

Altogether, this suggests a need to reevaluate institutional consent form templates and processes and to incorporate evidence-based modifications, including those tested here, into the informed consent process. This is especially needed as technology (including privacy agreements and cookie policies) evolves rapidly (Perrault & Nazione, 2016). Regardless of whether Institutional Review Boards take up current promising practices for improving consent processes, researchers themselves can continuously consider and improve their own practices (Perrault & Keating, 2018). Finally, educators can embark on campaigns to help the general public learn about the importance of understanding consent forms, particularly in high-stakes contexts (e.g., medicine). Future researchers should continue to work to improve consent form comprehension so that participants can actually make informed decisions.

Footnotes

Data Availability Statement

Data are available upon request by contacting the first author.

Declaration of Conflicting Interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethical Approval Statement

This research has been approved by the Human Research Ethics Board, Mount Royal University.