Abstract

Esports have become increasingly popular as naturalistic experimental settings. In large part, this popularity is due to esports helping researchers balance ecological validity and experimental control; esports provide situations in which people are naturally motivated to learn and act in a complex yet restricted environment. Since players often learn and act collaboratively, many researchers have used esports as a setting in which to study communication. However, most of this research has focused on optimizing team performance or player experience, with less work examining fundamental questions of psycholinguistics. Esports offer unique opportunities in this regard, particularly for studying psycholinguistics in the context of prior knowledge, emergent expertise, and emergent culture. The present paper describes a case study that demonstrates the benefits of using an esport as a microcosm for studying psycholinguistics and points to opportunities for further exploration.

Keywords

Introduction

A classic joke tells of a farmer whose prized hen stops laying eggs. The farmer seeks out the smartest person in town, a scientist, for advice. The scientist conducts a variety of experiments before she is satisfied that she fully understands the situation. She then goes to the farmer with the good news, saying, “I figured out how to fix your problem, so long as we assume your hen is perfectly spherical and in a vacuum.”

While this joke specifically references assumptions made by physicists, it also speaks to the broader tension between experimental control and ecological validity. For experiments to be interpretable, they must be controlled. But we often want to learn things about the real, uncontrolled world. The tension between experimental control and ecological validity arises frequently in psycholinguistics, which examines how language and psychological processes influence and relate to each other. Both phenomena are highly complex, and interactions between the two are even more so. Psycholinguists, like other scientists, often need to sacrifice some ecological validity in order to create well-controlled experiments. For example, if researchers wanted to learn about how people think and talk about a category of birds, a common approach would be to invent a fictional species of bird and give participants limited, carefully controlled information about its features (e.g., Chopra et al., 2019).

Researchers hope that participants’ behavior in such experiments yields some insight about real life. But in reality, people think and talk about birds because the topic has some relevance to them. Along the way, they naturally develop varying degrees of expertise about birds, as well as a shared cultural understanding of them. By creating a novel, fully controlled environment that focuses on an isolated instance of communication and is of minimal significance to participants, researchers risk creating an environment that bears little resemblance to how people think and talk in the real world.

In the present paper, we explain how esports provide an alternative to both traditional experiments and fully naturalistic, real-world settings, providing a unique combination of experimental control and ecological validity. In essence, this approach is a modern adaptation of the line of research that has used games, particularly chess (e.g., Chase & Simon, 1973), as naturalistic experimental settings.

Researchers have already begun to use esports as experimental settings for studying communication. For example, game design researchers have used esports environments to investigate which communication mechanisms are effective and satisfying (e.g., Dabbish et al., 2012; Wuertz et al., 2017). Similarly, industrial/organizational psychologists have used these environments to investigate how high-performing teams communicate (e.g., Richter & Lechner, 2009; Leavitt et al., 2016). Here, we argue that esports can also be used as a setting in which to study fundamental questions of psycholinguistics.

In the present paper, we focus on the opportunities that esports provide for basic research in psycholinguistics. First, we describe an example experiment in the artificial world of League of Legends, investigating how people use prior knowledge to interpret generalizations (Coon et al., 2021). We then discuss the potential of esports to provide interpretable yet ecologically valid experimental data. Finally, we consider the implications of using esports as experimental settings for psycholinguistics research in particular and cognitive science research in general.

Background: A Case Study of Esports as an Experimental Setting

Generalizations such as “mosquitoes fly” and “mosquitoes carry malaria” are a common way of conveying knowledge, but their interpretation can vary widely.

In Coon et al. (2021), we investigated whether people's level of prior knowledge systematically changes the way they interpret generalizations. We hypothesized that (1) experienced participants would use their prior knowledge to interpret generalizations more flexibly and (2) naive participants would apply generalizations more broadly than experienced participants. We investigated this question within the environment of the esport League of Legends, where players with varying levels of authentic and quantifiable expertise naturally use generalizations in communicating about the environment.

Players in League of Legends are divided into different tiers based on their performance in ranked matches. As players learn about the game, they can perform better and rise through the ranks:

Iron < Bronze < Silver < Gold < Platinum < Diamond < Master < Grandmaster < Challenger

For this experiment, we used participants’ skill tier to measure their expertise, operationally defining League of Legends expertise in terms of players’ proficiency.

League of Legends is a team game where coordination and strategy can be just as important as individual skill. Players often discuss strategy with each other and refine their knowledge by consulting experts via guides, tutorials, or individualized coaching. Generalizations are fundamental to such discussions. The primary objective of the game is to destroy the opposing team's base, but there are also secondary objectives. To prioritize these secondary objectives appropriately, players need some sense of how a given game will unfold. Such predictions are often based on generalizations.

For example, a particularly impactful strategic consideration is the characters that players select, because characters differ in important ways that determine what players can do in the game environment. Players choose their characters from a standardized set of options prior to each game. Characters have preset attributes, so players need to combine characters strategically when constructing their team. As one particularly relevant example, some characters tend to be more useful in the early stages of the game; others are more useful in the later stages. By understanding characters’ capabilities, players can get a general sense of how a game will play out and plan accordingly.

The League of Legends game environment resets to the same initial state before each new match, and the characters that players control are also reset. Because they start from the same initial state, any League of Legends match featuring a specific set of characters is exchangeable with any other match featuring that set of characters, at least before the match starts. We can thus measure how broadly participants think a given generalization applies by asking them how often they think it would apply across a series of iterations of the game.

Methods

We recruited experienced participants (n = 49) from League of Legends online forums and naive participants (n = 33) from an undergraduate participant pool. To qualify as experienced, participants needed to be ranked in the Silver tier (27th percentile; Milella, 2021) of players or higher. To qualify as naive, participants needed to have had no prior experience with League of Legends. While these samples were similar in terms of education and first language, there were considerable gender disparities. The sample of experienced participants was heavily male-dominated (89% male), whereas the sample of naive participants was heavily female-dominated (91% female).

All participants were shown six League of Legends teams (see Figure 1) with different combinations of characters. The participant's task was to interpret generalizations made by a hypothetical knowledgeable speaker about how each team would behave. Through pilot testing, we identified 12 combinations of characters that varied in terms of how often experts think those team compositions would excel in the early or late game. In this pilot phase of the study, candidate compositions were randomly generated according to the rate at which characters are used in League of Legends and evaluated by at least 10 experienced players. Specifically, we selected two compositions for each of the six types: strong early game (denoted by E+), middling early game (E0), weak early game (E-), strong late game (L+), middling late game (L0), and weak late game (L-). Participants were asked to interpret a generalization (“this team composition excels in the early game” or “this team composition excels in the late game”) about one composition per type for a total of six trials per participant. Compositions were chosen at random, counterbalanced across participants, and presented in randomized order.
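The assignment of stimuli to a participant can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the alternation rule used for counterbalancing, the variant labels 0 and 1, and the function name `build_trials` are hypothetical, since the text reports only that compositions were chosen at random, counterbalanced, and presented in randomized order.

```python
import random
from typing import List, Tuple

# The six composition types named in the text.
COMPOSITION_TYPES = ["E+", "E0", "E-", "L+", "L0", "L-"]


def build_trials(participant_index: int, rng: random.Random) -> List[Tuple[str, int]]:
    """Return six (type, variant) trials in randomized order.

    Assumption: each type has two pilot-tested compositions (variant 0 or 1),
    and variants alternate across participants so that each is used equally often.
    """
    trials = [
        (ctype, (participant_index + k) % 2)
        for k, ctype in enumerate(COMPOSITION_TYPES)
    ]
    rng.shuffle(trials)  # randomize presentation order in place
    return trials


# Example: trial list for the first participant, with a fixed seed for repeatability.
example_trials = build_trials(0, random.Random(1))
```

Under this hypothetical rule, consecutive participants see opposite variants of every type, which is one simple way to balance the two compositions per type across the sample.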

Figure 1. An example of the team compositions shown to participants. Each icon indicates the specific team role being fulfilled by that character.

Our dependent variable was how broadly participants interpreted the generalizations. We measured participants’ interpretations by first telling them that a knowledgeable speaker who had seen the team play 100 times against varying opposition had said, “this team composition excels in the [early/late] game.” We then asked participants what they thought the speaker had seen in those 100 games. The more broadly participants interpreted the generalization, the more positive examples they should expect the speaker to have seen. We recorded participants’ responses as proportions, ranging from 0 to 1. A response of 1 would mean that the participant guessed that the knowledgeable speaker had seen positive examples of the generalization in all 100 games they watched.

We also asked both experienced and naive participants whether they would themselves endorse the generalizations (a yes/no question) and to make their own prediction of how broadly the generalization would be true in a sample of 100 games. These additional questions confirmed that naive participants had no direct prior knowledge and that experienced participants’ prior knowledge varied as expected. The questions also served as a way to check ecological validity by confirming that experienced players endorsed such generalizations.

Results

We analyzed participants’ responses using a Bayesian mixed-effects analysis (Gelman & Hill, 2006), which included three considerations. First, we included components accounting for how participants’ interpretations tended to vary depending on which team the generalization was referring to and the participants’ expertise. This describes how an average participant, given their expertise and the team composition under discussion, would interpret a generalization. Second, we used a participant-level offset to account for variations in the tendencies of individual participants. For example, perhaps one participant consistently uses the higher end of the response scale, while another consistently uses the lower end. Finally, we included a component to account for trial-to-trial noise.
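One way to write down a model with these three components is sketched below. The symbols and the Gaussian forms are assumptions made for illustration, not the specification reported in Coon et al. (2021); because responses are bounded between 0 and 1, the published analysis may well use a different likelihood or a transformed scale.

```latex
y_{ij} = \mu_{g(i),\,c(i,j)} + u_i + \varepsilon_{ij},
\qquad u_i \sim \mathcal{N}(0, \sigma_u^2),
\qquad \varepsilon_{ij} \sim \mathcal{N}(0, \sigma_\varepsilon^2)
```

Here $y_{ij}$ is the response of participant $i$ on trial $j$, $g(i)$ is that participant's expertise group, $c(i,j)$ is the composition shown on that trial, $\mu_{g,c}$ is the average interpretation for that group and composition, $u_i$ is the participant-level offset, and $\varepsilon_{ij}$ is trial-to-trial noise.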

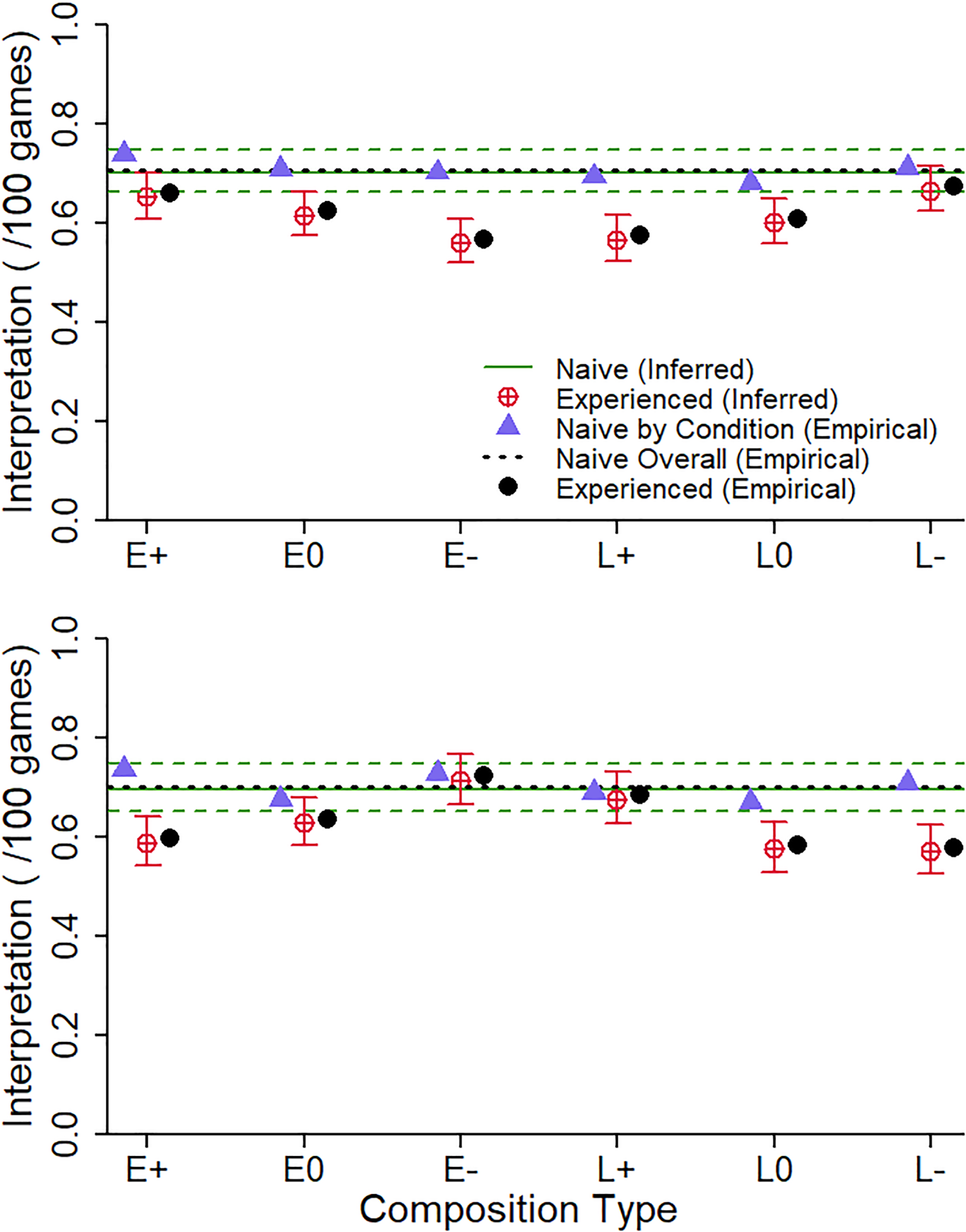

As predicted, the observed data (see Figure 2) showed that only experienced participants varied their responses systematically by composition, indicating that they drew on their prior knowledge of the game to situate the generalizations and interpret them appropriately. Experienced participants’ responses varied systematically, following characteristic U-shaped patterns, while naive participants’ responses fluctuated unsystematically around the overall mean. The data also showed that, when experienced and naive participants’ interpretations diverged, it was because experienced participants interpreted generalizations as applying more narrowly.

Figure 2. Empirical results and model-based inferences for how experienced and naive participants interpreted early game (top) and late game (bottom) generalizations by composition type (x-axis). Error bars represent 95% credible intervals.

For complete data and analyses, see Coon et al. (2021).

Discussion

We conducted this experiment to test whether experienced and naive listeners differ in how they interpret the same generalizations, and whether such differences might lead to miscommunication. Consistent with recent work from other labs (e.g., Tessler & Goodman, 2019), we found that experienced participants interpreted generalizations flexibly, varying their interpretations based on the context. In contrast, naive participants interpreted generalizations as broadly applicable regardless of the context.

It makes sense that only experienced participants would vary their interpretations in the context of their prior knowledge of the game. Naive participants were told that the team compositions can vary in terms of their propensity to excel in the early/late game, but only experienced participants have prior knowledge to help them distinguish among specific team compositions. Accordingly, only experienced participants adjusted their interpretations. Naive participants, having no basis for modulating interpretations, applied generalizations broadly across the board.

One limitation of this study is the gender disparity between the samples of naive and experienced participants. Linguistically, there is no reason to expect gender to influence our results, but gender could interact with game-specific knowledge in a number of ways. Male and female players develop their gaming skills (and presumably their knowledge about the game) at the same rate (Ratan et al., 2015), but female players disproportionately use female characters and may thus have expertise about different aspects of the game than males do (Ratan et al., 2019). Gender may also influence social aspects of both expertise and communication, particularly since there has historically been considerable gender discrimination in the esports community (Kuznekoff & Rose, 2013; Ruvalcaba et al., 2018). While this experiment can be replicated with gender-matched samples, it is a limitation of the esports setting that the gender of participants is so skewed. Notably, the imbalance between our samples is equally a product of our university participant pool being skewed toward women.

Further research can help clarify when differences between experienced and naive listeners’ interpretations of generalizations might lead to miscommunication. Our results suggest that miscommunication might arise from naive and experienced listeners interpreting the same generalization differently. Expert speakers could mitigate misinterpretations by tailoring their use of generalizations to fit their audience's expertise. However, experts are biased toward overestimating their audience's expertise (the curse of knowledge; Camerer et al., 1989), so it seems unlikely that they would adjust sufficiently.

Rather than different interpretations being a case of misunderstanding, it may actually be adaptive for naive listeners to err on the side of overestimating the applicability of generalizations. Such overestimation may be a rational way for naive listeners to make the most of what little information they have (Griffiths et al., 2019). For example, if all you know about mosquitoes is that “mosquitoes carry malaria,” avoiding mosquitoes whenever possible is rational. This perspective on rationality may point to a general learning trajectory wherein people apply new knowledge broadly and narrow their interpretations as they learn more.

The Value of Esports as a Research Tool

This League of Legends study demonstrates how esports can help researchers balance ecological validity with experimental control. Esports provide an environment that is complex and yet has well-defined characteristics. They also provide a setting in which prior knowledge, expertise, and culture emerge naturally.

Complex yet Well-Defined Environment

The case study above takes advantage of a complexity bottleneck in League of Legends. During each game of League of Legends, there is a practically unlimited number of ways in which players can behave. Prior to each game, however, players must choose which character they will use from a clearly delineated set of options. In making this choice, players factor in how they expect a given game to play out, with all the complexity that reasoning entails. Furthermore, at last count, there are 170 characters for players to choose from. With one team of five players choosing characters, there are more than one billion possible combinations, although in practice, this number is somewhat reduced by some characters having relatively specialized roles in a team. By setting the experiment in this phase, we can better understand what players are thinking and how they share those thoughts with others.
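The "more than one billion" figure can be checked with a short calculation. The sketch below assumes that a team is an unordered set of five distinct characters drawn from the 170 quoted above, and that role assignments are ignored.

```python
from math import comb

NUM_CHARACTERS = 170  # count quoted in the text ("at last count")
TEAM_SIZE = 5         # one team of five players

# Number of unordered five-character teams with no character repeated.
unordered_teams = comb(NUM_CHARACTERS, TEAM_SIZE)

print(f"{unordered_teams:,}")  # prints 1,115,034,284
```

Counting role assignments as distinct would raise this number, while excluding compositions that ignore specialized roles would lower it, as the text notes.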

Importantly, this complexity can help us study the impact of expertise because it leaves plenty of room for differences in prior knowledge. However, the complexity also raises the difficulty of how to go about selecting experimental stimuli from more than a billion options. We chose not to rely on purely random generation because we wanted to ensure that our stimuli would elicit a full range of responses. In other words, we wanted some compositions that clearly excelled in the early/late game, some that clearly did not, and some that were borderline. Also, not all of the one billion possible options are equally likely or even equally sensible choices. Fortunately, there are publicly available statistics about the rate at which each character is chosen. We used these statistics to select sensible compositions more efficiently.
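Generating candidate compositions in proportion to how often characters are picked can be sketched as weighted sampling without replacement. The character names and pick rates below are placeholders rather than real game statistics, the function name `sample_composition` is hypothetical, and the sketch ignores team roles for simplicity.

```python
import random
from typing import Dict, List


def sample_composition(
    pick_rates: Dict[str, float], team_size: int, rng: random.Random
) -> List[str]:
    """Draw distinct characters, weighting each draw by its pick rate."""
    remaining = dict(pick_rates)  # copy so the caller's table is untouched
    team: List[str] = []
    for _ in range(team_size):
        names = list(remaining)
        weights = [remaining[name] for name in names]
        choice = rng.choices(names, weights=weights, k=1)[0]
        team.append(choice)
        del remaining[choice]  # a character cannot appear twice on a team
    return team


# Placeholder pick rates (hypothetical values, not taken from the paper).
pick_rates = {
    "char_a": 0.12, "char_b": 0.10, "char_c": 0.08, "char_d": 0.07,
    "char_e": 0.05, "char_f": 0.04, "char_g": 0.03, "char_h": 0.01,
}

candidate = sample_composition(pick_rates, 5, random.Random(0))
```

Candidates produced this way would still need to be rated by experienced players, as in the pilot phase, before being sorted into composition types.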

Another benefit of studying generalizations in this environment is that it helped us delimit the topic of the relevant generalizations. In the real world, it can be difficult to know what entity people are referring to when they use generalizations. Statements like “mosquitoes carry malaria” refer to mosquitoes as a general concept rather than a specific set of mosquitoes. But what is the category of things in the world that this concept picks out? There are many species of mosquitoes, some of which carry malaria and some of which do not. Within those species, every individual is different. When we say “mosquitoes,” we refer to some idealized essence of “mosquito-ness” that may not actually exist (Gelman, 2003). In contrast, the characters in League of Legends get reset to the same initial state before each game. When discussing a specific set of characters in that initial state, we know precisely what entity we are talking about, because the characters are defined by properties assigned in the underlying computer code.

Using League of Legends as an experimental setting allowed us to select an authentic situation that balances complexity and experimental control. Our stimuli were sufficiently complex to provide authentic examples of generalizations and associated higher-level reasoning. The environment was sufficiently well-defined for us to (1) ensure that the stimuli were ecologically valid, (2) understand the strategic context (i.e., available choices) in which the generalizations were being considered, and (3) specify the entities being referenced by those generalizations.

Studying Prior Knowledge and Expertise Using Esports

Our experimental question focused on how familiarity with a domain, or lack thereof, influences how people interpret generalizations about that domain. We thus needed to establish whether a given participant was familiar with League of Legends. We could have relied on common approximations, such as asking participants to demonstrate their knowledge on a test or report the extent of their experience, but League of Legends provides a more convenient option in the form of a metric for players' skill. As with many zero-sum games, this metric is based on the Elo rating system (Elo, 2008), which is an objective measure of performance originally created for chess. Players’ Elo rating increases if they win and decreases if they lose, with bigger changes after surprising results (i.e., winning against a strong opponent or losing against a weak one). In the case of League of Legends, Elo rating is used to divide players into different tiers, which is how we measured expertise in the case study.
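The update rule behind this behavior can be illustrated with the standard two-player Elo formula. The scale constant of 400 and the step size `k = 32` are conventional chess values used here as assumptions; this is not a description of the rating system that League of Legends actually runs, which must also handle five-player teams.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability-like expected score of player A against player B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update_rating(rating: float, opponent: float, score: float, k: float = 32.0) -> float:
    """Return the new rating after one game.

    score is 1.0 for a win and 0.0 for a loss; k controls the step size.
    """
    return rating + k * (score - expected_score(rating, opponent))


# Evenly matched players: the winner gains half of k.
print(update_rating(1500, 1500, 1.0))  # prints 1516.0

# An upset win (lower-rated player beats higher-rated one) earns a larger gain
# than an expected win, matching the "surprising results" described above.
upset_gain = update_rating(1400, 1600, 1.0) - 1400
expected_gain = update_rating(1600, 1400, 1.0) - 1600
```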

By using these tiers as a metric for expertise, we defined expertise in terms of proficiency; you are an expert if you are good at the game. This is far from the only way to define expertise. Players can know a lot about the game without being particularly skillful or having much social authority in the community. However, these three considerations are presumably correlated in most situations. Higher-ranked players are more likely to know more about the game and to have more authority when talking about it. And while players may have their own notions of how to have fun, being good at the game is surely a central goal.

We also implicitly assumed that higher-ranked players know more about the game than lower-ranked players. For subtle distinctions in rank, such an assumption may be problematic, but for the case study, we simply needed to divide participants into two groups based on whether or not they had directly applicable prior knowledge. For those who did, we recruited players who ranked as Silver level (27th percentile; Milella, 2021) or higher. For those who did not, we recruited participants who had no prior experience with the game. Since we can safely assume that players ranked in the Silver tier or higher are well-acquainted with League of Legends, this operational definition was satisfactory for our purposes.
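This two-group operational definition is simple enough to state as code. The sketch below encodes the tier ordering listed earlier and the Silver cutoff; the names `Tier` and `assign_group`, and the choice to return `None` for players who fit neither group, are illustrative assumptions.

```python
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    """Ranked tiers in ascending order, as listed in the text."""
    IRON = 1
    BRONZE = 2
    SILVER = 3
    GOLD = 4
    PLATINUM = 5
    DIAMOND = 6
    MASTER = 7
    GRANDMASTER = 8
    CHALLENGER = 9


def assign_group(tier: Optional[Tier], has_played: bool) -> Optional[str]:
    """Map a recruit to "naive", "experienced", or None (not eligible)."""
    if not has_played:
        return "naive"  # no prior experience with the game
    if tier is not None and tier >= Tier.SILVER:
        return "experienced"  # Silver tier or higher
    return None  # has played but is unranked or below Silver


print(assign_group(Tier.GOLD, True))    # prints experienced
print(assign_group(None, False))        # prints naive
print(assign_group(Tier.BRONZE, True))  # prints None
```

Because the tiers are ordered integers, the same structure would support finer-grained comparisons within the experienced group if a larger sample were available.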

In focusing solely on the difference between experienced and naive participants, we only scratched the surface of the opportunities afforded by the experimental setting. Our experienced sample included players ranging in skill from Silver to Grandmaster (99.96th percentile; Milella, 2021). By placing them in a single group, we ignored a wide range of expertise, which could be explored with a larger sample. We also could have recruited players ranked lower than Silver level to provide insight into the transition from novice to expert. Such players are difficult to recruit because they are less likely to seek out game-specific forums. Nevertheless, examining more nuanced distinctions in expertise is an intriguing option.

Studying Emergent Culture Using Esports

In our case study, we needed to make sure that our communication scenarios were properly situated in an authentic culture. League of Legends provides such a culture because players often work collaboratively and can tap into a wealth of pre-existing knowledge accumulated by the larger gaming community. In the process, the community naturally develops a shared system of meaning. Fortunately, this culture is narrow in scope since players are focused on a game environment, which is itself restricted.

We made sure that each statement was representative of how players communicate in this cultural context by situating the statements in the time between games. Without a specific match to reference, players discuss the strengths and weaknesses of various characters in an abstract sense. Situational variables, such as the relative skill of opponents, get subsumed within the generalizations. For example, a team composition might generally do well in the early game, but skilled opponents might be able to prevent this from happening. Such a consideration is part of why “this composition excels in the early game” is a generalization rather than a deterministic statement of fact. If players do not have a specific opponent in mind, the potential impact of relative skill gets factored into their thought process about generalizations as a source of exceptions to the general trend.

To simplify our experiment, we used predetermined statements. Such a setup is still realistic because players often learn from noninteractive sources like forum posts and expert guides. However, it does artificially restrict the choices available to participants. In principle, we could have studied open-ended and dynamic interactions, particularly since the structure of League of Legends would be conducive to such an experimental design. As with other team-based computer games, players often speak to each other. In League of Legends, there is a phase before each game in which players are deciding which characters to select. During this phase, players need to align their expectations about how characters are likely to perform during the forthcoming game, presenting an excellent opportunity to observe generalizations in action.

Limitations

There are some limitations to using esports as experimental settings for psycholinguistics. First, while we hope to have argued successfully that esports strike a nice balance between ecological validity and experimental control, any attempt to make an experiment more ecologically valid introduces the possibility of increased noise relative to a controlled experimental setting, and esports are no exception. Second, what we ask participants to do needs to make sense in the context of the structure and culture of the game. Unlike laboratory-controlled experiments, we cannot design a situation to our exact specifications. Instead, we must work with what is already provided by each game, carving out procedures and stimuli from what would happen naturally.

There are also limitations in generalizing results to a broader population. Most fundamentally, players are self-selecting. People decide whether, and how seriously, they want to play, so there may be consistent differences between players and nonplayers beyond experience with the game. Comparisons between existing players and nonplayers or between experts and non-experts are only correlational. Experimenters can recruit nonplayers and randomly assign some to undergo training (e.g., Richter & Lechner, 2009), but such an approach is labor-intensive. Furthermore, findings from such an experiment may not generalize to people who would choose to play the game on their own and are therefore ineligible for such a study.

On the bright side, the results of our case study indicate that experienced players and undergraduates have similar demographics, at least along some dimensions (i.e., age, education, languages spoken). However, the sample of experienced players was heavily male dominated. Such a disparity is unfortunately symptomatic of a lack of gender diversity in the esports community at large (Kuznekoff & Rose, 2013; Ruvalcaba et al., 2018). This situation may improve, but, in the meantime, researchers must be cognizant of potential gender disparities when extending findings to the broader population.

Conclusion

The features which made League of Legends so suitable for studying our specific research question also apply to a variety of esports and a variety of related questions. Esports provide environments in which people are highly motivated to think and talk. The environments are rich, and yet they are self-contained and have well-defined characteristics, opening up exciting possibilities for designing experiments. As with any experimental setting, esports have limitations. However, they provide unusual opportunities and shortcuts for balancing ecological validity and experimental control.

One of the main reasons for esports’ popularity is that they present rewarding challenges to the people who play and follow them. Yet for all their complexity, the environment is founded on a set of explicit rules specified by the underlying computer code. Games in general are useful settings for studying the impact of prior knowledge because the games’ rules help restrict both the learner's goals and the relevant experiences they might have had.

We are not the first to use game environments to study expertise. Already in the 1970s, researchers were using chess for this purpose (e.g., Chase & Simon, 1973). This research famously showed, for example, that chess masters’ remarkable memory for piece positions only applies to sensible game states (i.e., those that have been produced through play rather than randomization). Chess masters are no better than novices at recalling nonsensical details, suggesting that narrative and/or causal reasoning may be relevant to their encoding of those memories. Work on analogous questions in esports has already begun (Bonny & Castaneda, 2016; Lindstedt & Gray, 2019).

Esports can also help us study the hierarchical nature of prior knowledge (Kemp et al., 2007). This hierarchical nature is hinted at by the behavior of naive participants in our case study (Figure 2). Naive participants interpreted generalizations as being more broadly applicable than experienced participants did, but not absurdly so. They likely had the sense that no game would be designed such that the generalizations would be true in virtually every situation. Thus, even naive participants used prior knowledge, albeit rather general prior knowledge: too much certainty would make outcomes too easy to predict for the game to be fun. Experienced participants also had this very general knowledge about games, but within that knowledge of games, they also had a sense of how this specific game works, of how characters within the game work, and maybe even how a specific combination of characters works.

Esports offer unique opportunities to study hierarchical prior knowledge through games which share their general structure but differ in their specific features. For example, Defense of the Ancients (DOTA) is identical to League of Legends in its basic structure but different in its specifics. By recruiting experienced DOTA players for a League of Legends study, researchers could tease apart the effects of general prior knowledge (common to both DOTA and League of Legends) from those of game-specific knowledge. Given the landscape of video games, this opportunity is not unique to League of Legends. Sequels create games with similar general mechanics but different specifics. Games are also readily divided into genres as developers borrow mechanics from each other. In all of these situations, players with experience in similar games already have general prior knowledge that may help inform and accelerate their acquisition of more specific prior knowledge.

Esports also offer unique opportunities to study expertise. Prior knowledge and expertise are related concepts, and indeed the two are often used interchangeably because experts can simply be defined as those who have accumulated more prior knowledge (e.g., Helton, 2007). Yet experts are also expected to be particularly effective at using their prior knowledge, which is not necessarily the same thing as having a lot of it. Expertise is thus often characterized by a fundamental shift in how prior knowledge is organized (Chi et al., 1981). Moreover, expertise can be thought of as a social phenomenon (Mieg & Evetts, 2018): someone is an expert if others recognize their authority as an expert.

The standard approach to studying expertise in the lab is to create a simplified approximation, allowing researchers to manipulate or control participants’ expertise. Researchers can then precisely quantify expertise, but they must also commit to a specific definition of expertise. For example, one common approximation of expertise is for researchers to vary the amount of information given to participants (e.g., Hawthorne-Madell & Goodman, 2019). With such a procedure, researchers implicitly assume that expertise is a function of the quantity of one's experience. In committing to a particular definition of expertise, researchers risk creating an invalid approximation.

Choosing how to define expertise is less problematic in a naturalistic experimental setting because such a setting guarantees that the underlying expertise is at least authentic to that setting. However, researchers do still need to commit to a metric for expertise. How would we go about establishing whether someone is a mosquito expert? If we think of expertise as a social phenomenon, an appropriate operationalization might be whether others have conferred credentials upon a participant, such as prestigious degrees or publications. The credentials we identify as relevant would be largely subjective. Is it more important for an expert to have a PhD or numerous publications? If expertise is instead considered a cognitive phenomenon, it might be more appropriate to test knowledge or skill. In this case, the contents of the test would often be subjectively selected. Is it more important for an expert to be able to identify different species of mosquitoes or to explain their physiology?

Esports provide situations in which expertise develops naturally and yet researchers have a reasonable chance of measuring it objectively. As such, considerable research has already been conducted looking at how players become experts (e.g., Bonny & Castaneda, 2017; Röhlcke et al., 2018), how their expertise impacts the way they engage with a given game (e.g., Reeves et al., 2009; Bonny & Castaneda, 2016), and whether the skills they develop transfer to other domains (e.g., Green & Bavelier, 2006; Seya & Shinoda, 2016). Players are naturally self-motivated to find information that is relevant to their goals and ignore that which is not. In the process, they have the time and motivation to reorganize and grow their knowledge. They also interact socially with other players who have varying levels of expertise. As players become more accomplished within the environment, there is typically a metric tracking their progress, making it relatively simple to measure their expertise. While such a metric is not perfect, given that it exclusively measures performance rather than knowledge or social authority, it is far more convenient than the approximations (e.g., tests, surveys, observations, interviews) that would typically be available for measuring naturally emerging expertise.

Esports also provide situations in which players are communicating in the context of a naturally developing culture. That culture is focused on learning about an environment with well-defined characteristics and a unified goal, making it simpler to ensure that experimental materials are appropriately situated within that culture. The environment in esports restricts the decisions that players can make in response to cultural factors, and it also provides a standardized reward structure. Researchers have already begun to use esports as microcosms in which to observe a variety of cultural phenomena, such as stereotype threat (Kaye et al., 2018) and social systems for regulating behavior (Kou & Nardi, 2013; Kou & Nardi, 2014). Numerous studies have also examined the cultural dynamics of cooperative play, both in terms of stable teams developing a system of shared meaning specific to themselves (e.g., Richter & Lechner, 2009; Dabbish et al., 2012) and the formation of ad-hoc teams in the context of the general culture of the game (e.g., Kou & Gui, 2014; Kim et al., 2016).

Esports are particularly useful for studying communication in these naturalistic cultural contexts. Some games provide players with a set of streamlined communication options, which allows researchers to observe how players use a communication system that is already controlled (e.g., “pings”; Wuertz et al., 2017). Players will also sometimes communicate verbally, allowing researchers to observe players’ use of language in a controlled context: typically a single audio channel where people discuss a shared objective reality, which happens to be defined and recorded by a computer. As esports become increasingly popular, they offer researchers a way to study how players communicate with each other within and about the games. In doing so, they present opportunities to (re)examine fundamental questions in psycholinguistics.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.