Abstract

Lethal Autonomous Weapon Systems (LAWS) have the capability to survey the environment, determine which objects should be targeted, and act on that determination, improving the likelihood of achieving mission objectives. There is a risk that the inappropriate deployment of LAWS will result in significant, unintended collateral damage. A scoping review was undertaken to identify research concerning the factors that impact operators’ decisions to deploy LAWS. Understanding these factors could help to avoid inappropriate deployments, enabling small military forces to safely and effectively contend with larger forces. Databases used for a primary scan included Scopus, ProQuest, and Web of Science. Of 642 screened publications, none directly examined the decision-making processes of field operators tasked with deploying LAWS. Rather, the majority of publications referred to the governance, design, and development of LAWS. Drawing on the Socio-Technical Feedback (SOTEF) loop, gaps relating to operators’ decisions to deploy LAWS were identified, including organisational culture, training, system characteristics, human factors, and operational demands. These gaps in the literature provide opportunities for future research in the field.

Keywords

Introduction

Uncrewed vehicles have proven useful in a range of industries, including the military (Ayamga et al., 2021; Karber & Thibeault, 2016; Khalilzada, 2022). Historically, uncrewed vehicles, including lethal variants, have been piloted remotely by operators located outside the battlefield (Dowd, 2013; Kindervater, 2016). While these uncrewed vehicles have assisted in the completion of mission objectives, they are not without challenges.

Uncrewed vehicles that require real-time teleoperation, typically referred to as ‘drones’ (e.g. General Atomics’ ‘MQ-9 Reaper’), are associated with significant cognitive demands. For example, one or several operators are required to coordinate flight paths, alter altitude, scan for hostiles, and release weaponised payloads. The cognitive demands associated with uncrewed vehicles that require real-time teleoperation are thought to contribute to the high rates of burnout amongst uncrewed vehicle operators (Chappelle et al., 2014).

The cognitive demands associated with the teleoperation of uncrewed vehicles could be alleviated with Artificial Intelligence (AI). It should be noted that a universal definition of AI is still developing (see Schraagen, 2023). However, there appears to be an emerging consensus that AI refers to a computerised system performing tasks that are otherwise associated with intelligent beings (Copeland, 2023). Expanding this definition, there are five characteristics, identified by the United States Department of Defence (2019), as being common to AI-enabled machine agents.

First, AI-enabled machine agents perform tasks in varying and unpredictable circumstances without significant human oversight. Second, AI-enabled machine agents can learn from experience and improve performance when exposed to data sets. Third, machine agents can solve tasks that require human-like perception, cognition, planning, learning, communication or physical action. Fourth, AI-enabled machine agents are capable of thinking or acting like a human (e.g. neural networks), and finally, machine agents can act rationally to achieve goals using perception, planning, reasoning, learning, communicating, decision-making, and acting.

Uncrewed vehicles with weaponised payloads that are equipped with AI are referred to as ‘Lethal Autonomous Weapon Systems’ or LAWS. A current, close approximation of LAWS is the Close-In Weapon System (CIWS; Horowitz, 2021). A human operator can set this system to ‘automatic’ mode, which allows the CIWS to autonomously intercept missiles that it detects.

Lethal systems that use AI and that are currently used by operators in the field, such as AeroVironment’s ‘Switchblade’, require human oversight and can operate as a semi-autonomous system. However, Wahid Nawabi, the Chief Executive Officer of AeroVironment, has stated that the Switchblade system could operate fully autonomously (Bode & Watts, 2023). Therefore, there is a possibility that operators in the field could be deploying LAWS in the future, with the potential that human oversight may be removed once the operator decides to launch the system. This gives significance to the global effort to establish principles of Meaningful Human Control (MHC).

While a universally accepted definition of MHC is still emerging, the common principle underpinning MHC is that human judgement should be preserved. However, this does not necessitate complete human control over AI-enabled machine agents. Rather, a universal definition of MHC will likely involve a balance between using AI-enabled machine agents, such as LAWS, effectively and avoiding unintended negative consequences.

Santoni de Sio and van den Hoven (2018) describe two characteristics that AI-enabled machine agents should exhibit to preserve human judgement and, therefore, MHC. First, the actions/decisions of AI-enabled machine agents should be traceable to at least one human in the chain of design and operations. Second, the impending action/decision of AI-enabled machine agents should be trackable so that a human can intervene, especially for moral reasons or where an error is likely to occur.

The Socio-Technical Feedback Loop

Despite the requirements for MHC, human judgement in the actions/decisions of AI-enabled machine agents is likely to manifest differently at different life-cycle stages of the machine agent. For example, a Head of State might consider legislation as underpinning trackable, newly purchased, AI-enabled machine agents, whereas an engineer might consider a warning indicator as crucial to a trackable agent during development. The Socio-Technical Feedback (SOTEF) loop (Schraagen, 2023) is a theoretical framework that considers relevant stakeholders during different life-cycle stages of AI-enabled machine agents and their association with MHC.

The Governance Loop

The SOTEF consists of four hierarchical, inter-related loops. The overarching loop relates to governance, with the key stakeholders comprising policy-makers or legislators, whose key artefacts to support MHC include laws, policy, and defined ethical goals. For example, the United States Department of Defense (2019) has proposed five principles with the intention of assuring ethical ideation and development in the use of AI-based weapons.

The first two principles require that humans exercise deliberate steps that produce ethically sound judgement in the development, use, and outcomes of AI-based weapons, so that inadvertent harm to society does not occur through unintended biases. Principles three and four stipulate that AI-based weapon development should be transparent and auditable, and that the safety, security, and durability of the system are tested and assured across the entire life cycle within the domain of use. Finally, the AI-based weapon should possess the ability to detect and avoid unintended harm, including the ability to disengage or deactivate weapons when operational circumstances change.

The Design Loop and the Development Loop

The loops subordinate to governance are the design loop and the development loop, respectively. These loops have been combined as they share an inherent chronology regarding ideation, design, prototype testing, and manufacture. The key stakeholders in these loops include system architects, engineers, subject matter experts, operational users, and manufacturers. The key outputs in the design and development loops relating to MHC are an analysis of ethical, legal, and societal requirements, setting constraints, and ethical goal function.

Public perception has an important role in the development and adoption of ethical AI. Members of the public require that authorities and organisations actively seek and incorporate societal values (Crawford, 2021), which can impact whether the public engages positively with AI-driven ventures (Sartori & Bocca, 2022).

Sengupta et al. (2024) report key public perceptions and sentiments regarding AI-based systems through an analysis of an internet discussion forum on the social media platform, Reddit. The discussion occurred in a forum specific to AI ethics, suggesting that the participants who were offering opinions on AI ethics were actively interested in the topic.

Sengupta et al. (2024) observed that machine consciousness is a pertinent concern for members of the public. That is, members of the public queried how an AI-based system could feel and understand pain. Without being able to experience pain, members of the public were doubtful that an AI-based system could correctly reason in an ethical dilemma, such as choosing whether to run over an animal or swerve, thereby placing the vehicle occupant in danger.

Sengupta et al. (2024) also noted the public perception that AI-enabled machine agents should embody context-specific, ethical reasoning. For example, an AI-based surgical robot will embody different ethical reasoning in comparison to an AI-based system controlling a motor vehicle. This suggests that there cannot be a universal ‘ethical AI algorithm’, and that designers will need input from subject matter experts concerning the ethical dilemmas in their specific domain (Omrani et al., 2022). Overall, it appears that there will be an important link between societal perception, ethics, and eventually, the legal requirements associated with the adoption and use of AI-enabled machine agents, like LAWS.

The Operation Loop

The final loop in the SOTEF is the operation loop, in which operational end-users are a key stakeholder. Typically, remotely located pilots are the operational end-users of uncrewed vehicles. This is likely to change with the advent of LAWS because contemporary cyber, space, and electronic weapons can disrupt the global communications required to remotely pilot uncrewed vehicles (April et al., 2021). One solution is to consign the authority to deploy LAWS to the operator in the field, removing the reliance on global communications and providing greater assurance of operational support to operators in the field. If this occurs, it is important to understand operators’ perspectives on LAWS so that LAWS can, and will, be deployed appropriately, even during high-stress operational events.

Galliott and Wyatt (2020, 2022a, 2022b) investigated the potential operational constraints of LAWS perceived by operators in the field. Australian Defence Force trainee officers were presented with humanitarian/non-conflict and wartime/conflict operational scenarios in which lethal (i.e. LAWS) and non-lethal uncrewed vehicles were deployed. Trainees were aware that the uncrewed vehicles were ‘self-governing’, having the autonomy to survey the environment, determine an appropriate action, and then perform that action.

In these scenarios, the trainees were not responsible for deciding to deploy the uncrewed vehicle. Rather, trainees were asked if they were willing to conduct a military operation while the uncrewed vehicle was present. Trainees were also asked to consider the perceived risks and benefits that uncrewed vehicles could provide to each scenario. The findings revealed a contrast in the preference to operate alongside uncrewed vehicles, depending on whether the system was weaponised.

Trainees were generally willing to deploy alongside non-lethal uncrewed vehicles in humanitarian/non-conflict scenarios. However, the majority of trainees expressed an unwillingness to deploy alongside LAWS, even during wartime scenarios, since LAWS were perceived to be associated with a high risk of collateral damage. Further investigation revealed that this perception typically originated from concerns that LAWS could malfunction, leading to a loss of human control, inadequate safety controls, and/or inaccurate targeting.

The outcomes of Galliott and Wyatt (2020, 2022a, 2022b) suggest that future operational end-users are greatly concerned with unintended negative outcomes arising from the use of LAWS. Ultimately, the perception that unintended negative outcomes could occur from using LAWS could prevent LAWS from being deployed, even when it is appropriate. Instead, it may be preferable to deploy military personnel to achieve mission objectives, placing humans in circumstances that carry an unnecessarily high risk.

Despite the concerns identified by Galliott and Wyatt (2020, 2022a, 2022b), LAWS present a number of advantages in comparison to alternative in-service explosive systems. For example, LAWS provide greater assurances for safe engagement as they can be aborted once initiated, in contrast to current in-service explosive systems. LAWS also have the potential to reduce human casualties and increase precision on the battlefield, since the deployment is based on timely and field-oriented intelligence (MacIntosh et al., 2021). LAWS could also significantly assist small military forces to contend with, and potentially defeat, a larger hostile force (e.g. see the hypothetical scenarios in de Boisboissel, 2015).

In summary, there is a clear need to identify the critical factors that operators in the field might consider in their decision to deploy LAWS during complex and dangerous operations. An understanding of these factors could assure that LAWS are considered appropriately as an option to achieve military objectives. Latent risks associated with the inappropriate use of LAWS will remain unidentified in the absence of an understanding of the critical factors in the decision to deploy LAWS. Further, the inappropriate use of LAWS could lead to LAWS being disavowed or disused unilaterally, which would negatively impact the safety and effectiveness of military personnel during military operations.

The Current Study

There are currently no systematic or scoping literature reviews that detail the factors that aid or hinder a tactical operator’s willingness to deploy LAWS. Therefore, the aim of the present scoping review was to address the following research questions: (1) What factors aid tactical operators’ decisions to deploy LAWS? (2) What factors hinder tactical operators’ decisions to deploy LAWS?

Method

This scoping review was conducted in adherence with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) extension for scoping reviews (PRISMA-ScR; Tricco et al., 2018). It was registered with the Open Science Framework (OSF) on the 1st of March 2024.

Eligibility Criteria

Inclusion and exclusion parameters were developed by the primary researcher prior to database searches. These parameters were applied as part of an initial screen (title and abstract) to determine whether a publication yielded by the database search qualified for a full-text review. Inclusion and exclusion parameters were formatted according to the PRISMA 2020 framework for abstracts (Page et al., 2021). This framework requires setting inclusion and exclusion criteria for the following categories: Populations, Interventions, Comparisons, Outcomes, and Study Characteristics (PICOS; Page et al., 2021).

Populations

Population is defined as the group of participants characterised by specific demographic or clinical characteristics.

Interventions

The intervention that is relevant for the present scoping review relates to an experience, rather than an experimenter-controlled independent variable.

Comparisons

The comparison is the baseline or reference point against which the experience is being evaluated. For example, this might be a control or placebo group in an intervention-based study. It was decided to remove any comparison criteria since LAWS are nascent and therefore, their application in warfighting is also emerging. In addition, foregoing the requirement for a baseline comparator was considered suitable for capturing a wider pool of publications associated with LAWS (e.g. observational studies, trial reports).

Outcomes

Outcomes refer to the results or effects measured or assessed because of the experience.

Study Characteristics

Study characteristics refer to the research methodology used to answer the research question.

Information Sources

Gusenbauer and Haddaway (2020) compared 28 commonly used databases on 27 measures of quality (e.g. primary subject matter, size, accuracy of search based on search terms). The results of this comparison suggest that Scopus, ProQuest, and Web of Science databases are suitable for a primary scan of publications for the purpose of a scoping review (see also Chadegani et al., 2013; Schotten et al., 2017).

Consistent with Gusenbauer and Haddaway (2020), Google Scholar was also used as a supplementary database search. Specifically, Google Scholar’s forward citation search (i.e. ‘cited by’) was used to investigate the existence of newly published standalone articles that cited publications identified in the primary database scan, especially those that appeared to be highly relevant to the research question.

Search Strategy

The following search terms were used: (((uncrew* or autonomous or remote* or unmanned or UAV or LAWS or UXV or RPV) NEAR/5 (lethal or weapon*)) AND (deploy* or command or authority or dec* or willing*)). These search terms represent three information conditions. The first condition, which relates to the uncrewed vehicle, was necessary to capture the wide range of terminology associated with uncrewed vehicles. The second information condition was that the uncrewed vehicle was weaponised (i.e. lethal). The final information condition required that lethal uncrewed vehicles be related to the decision, authority or willingness to deploy a LAWS.
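As a minimal sketch, the three information conditions can be approximated in Python against a title or abstract string. The term lists are copied from the search string above; the function names are hypothetical, and treating NEAR/5 as "at most five token positions apart" is an assumption, since each database implements proximity operators slightly differently. Matching is case-insensitive, so the term LAWS also matches the ordinary word "laws", which is one plausible reason for a broad initial yield.

```python
import re

# Term lists copied from the search string; a trailing * marks a truncation wildcard.
VEHICLE_TERMS = ["uncrew*", "autonomous", "remote*", "unmanned", "UAV", "LAWS", "UXV", "RPV"]
LETHAL_TERMS = ["lethal", "weapon*"]
DECISION_TERMS = ["deploy*", "command", "authority", "dec*", "willing*"]


def matches(token, term):
    """Case-insensitive match; a trailing * matches any word with that prefix."""
    term = term.lower()
    if term.endswith("*"):
        return token.startswith(term[:-1])
    return token == term


def positions(tokens, terms):
    """Indices of tokens that match at least one term."""
    return [i for i, tok in enumerate(tokens) if any(matches(tok, t) for t in terms)]


def satisfies_query(text, window=5):
    """Approximate the three information conditions on a title/abstract string."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    vehicle = positions(tokens, VEHICLE_TERMS)
    lethal = positions(tokens, LETHAL_TERMS)
    decision = positions(tokens, DECISION_TERMS)
    # Assumption: NEAR/5 is approximated as "at most `window` token positions apart".
    near = any(abs(v - l) <= window for v in vehicle for l in lethal)
    return near and bool(decision)
```

For example, `satisfies_query("Operators' willingness to deploy lethal autonomous systems")` satisfies all three conditions, whereas a title with no weapon-related term does not.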

Publication Selection

All publications identified by the search terms were initially screened by the online application, Covidence, for title and abstract duplicates. After removing duplicates, the primary researcher screened the title and abstract of the remaining publications. Publications that met the PICOS inclusion and exclusion criteria at the initial screen were then selected for full-text review.

Data Extraction

A framework for data extraction was developed to include: 1. Reference source: publication title, abstract, publication year, and journal of publication. 2. Study participants: military personnel (Yes / No), LAWS operator (Yes / No). 3. Relevance to the SOTEF: (1) Governance loop, (2) Design loop and Development loop, and (3) Operation loop.
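The extraction framework above can be sketched as a simple record type. This is an illustrative Python structure only, assuming one SOTEF classification per publication; the class and field names are hypothetical and are not taken from the extraction tool used in the review.

```python
from dataclasses import dataclass
from enum import Enum


class SotefLoop(Enum):
    """The three SOTEF categories used for coding publications."""
    GOVERNANCE = 1
    DESIGN_AND_DEVELOPMENT = 2
    OPERATION = 3


@dataclass(frozen=True)
class ExtractionRecord:
    # 1. Reference source
    title: str
    abstract: str
    publication_year: int
    journal: str
    # 2. Study participants
    military_personnel: bool
    laws_operator: bool
    # 3. Relevance to the SOTEF (assumption: a single loop per publication)
    sotef_loop: SotefLoop


# Placeholder values for illustration only; this is not a publication from the review.
example = ExtractionRecord(
    title="Placeholder title",
    abstract="Placeholder abstract",
    publication_year=2020,
    journal="Placeholder journal",
    military_personnel=True,
    laws_operator=False,
    sotef_loop=SotefLoop.OPERATION,
)
```

Freezing the record makes each coded publication immutable once entered, which suits an audit trail in which coding disagreements are resolved by creating a new, agreed record rather than silently editing an old one.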

Bias

The primary author screened and coded all publications for eligibility for full-text review (i.e. the initial screen). The two other researchers each independently screened and coded a randomly selected sample (10%) of publications during the initial screen. Any disagreements concerning whether publications progressed to full-text review were resolved through discussion and mutual agreement by the full research team.
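A reproducible way to draw such a 10% sample is sketched below in Python. The function name, the seeds, the integer publication identifiers, and the choice to round the sample size up are all assumptions made for illustration; the review does not report how the sample was generated.

```python
import random


def draw_review_sample(publication_ids, percent=10, seed=None):
    """Return a random sample of publication IDs for independent re-screening."""
    ids = list(publication_ids)
    # Assumption: round up, so a non-empty pool always yields at least one publication.
    sample_size = (len(ids) * percent + 99) // 100
    rng = random.Random(seed)
    return sorted(rng.sample(ids, sample_size))


# Hypothetical IDs 1..642, one per publication in the initial screen.
initial_screen_pool = range(1, 643)
reviewer_two = draw_review_sample(initial_screen_pool, seed=2)
reviewer_three = draw_review_sample(initial_screen_pool, seed=3)
```

Fixing a seed per reviewer keeps the two samples independent of each other while allowing either sample to be regenerated later for audit.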

Results

Publication Selection

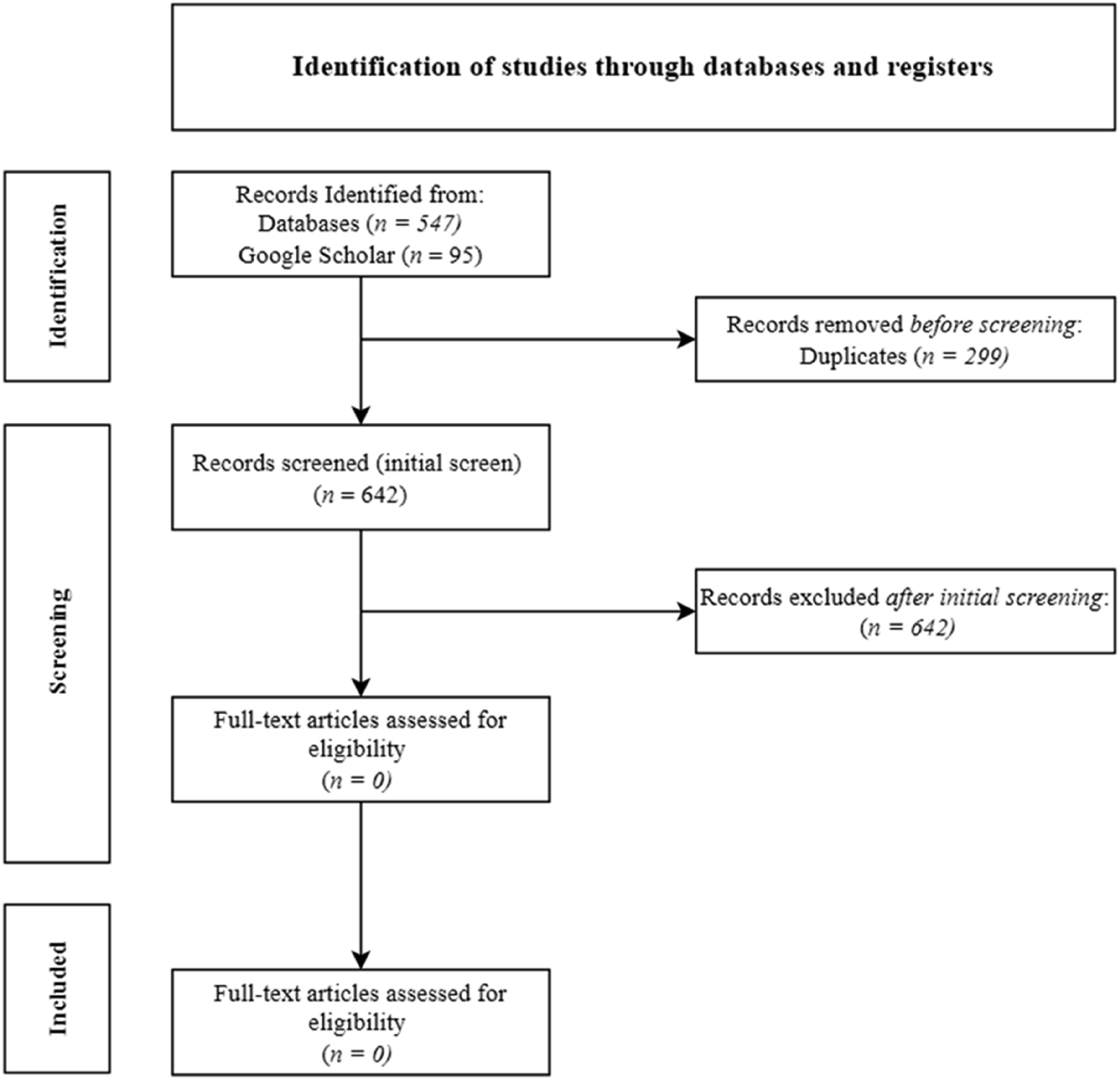

Figure 1 shows the decision process from initial publication yield to final inclusion. A total of 941 publications were retrieved from the primary databases, Scopus (n = 377), Web of Science (n = 187), and ProQuest (n = 370), and from the supplementary database, Google Scholar (n = 7). Covidence removed 299 duplicates, resulting in 642 publications that were included in the initial screen. During the initial screen, no publications immediately qualified for full-text screening. That is, no publications directly investigated the critical factors in operators’ decision making in deciding to deploy LAWS in combat.

Figure 1. PRISMA-ScR flowchart for the identification of studies.
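The publication counts reported for the identification stage can be cross-checked with a few lines of Python. The figures are taken directly from the paragraph above; the variable names are illustrative.

```python
# Records retrieved per source, as reported for the identification stage.
retrieved = {
    "Scopus": 377,
    "Web of Science": 187,
    "ProQuest": 370,
    "Google Scholar": 7,
}
duplicates_removed = 299

total_retrieved = sum(retrieved.values())
initial_screen = total_retrieved - duplicates_removed

print(f"Retrieved: {total_retrieved}, screened after de-duplication: {initial_screen}")
```

The totals reproduce the 941 retrieved publications and the 642 publications carried into the initial screen.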

Details of the Publications at Initial Screening

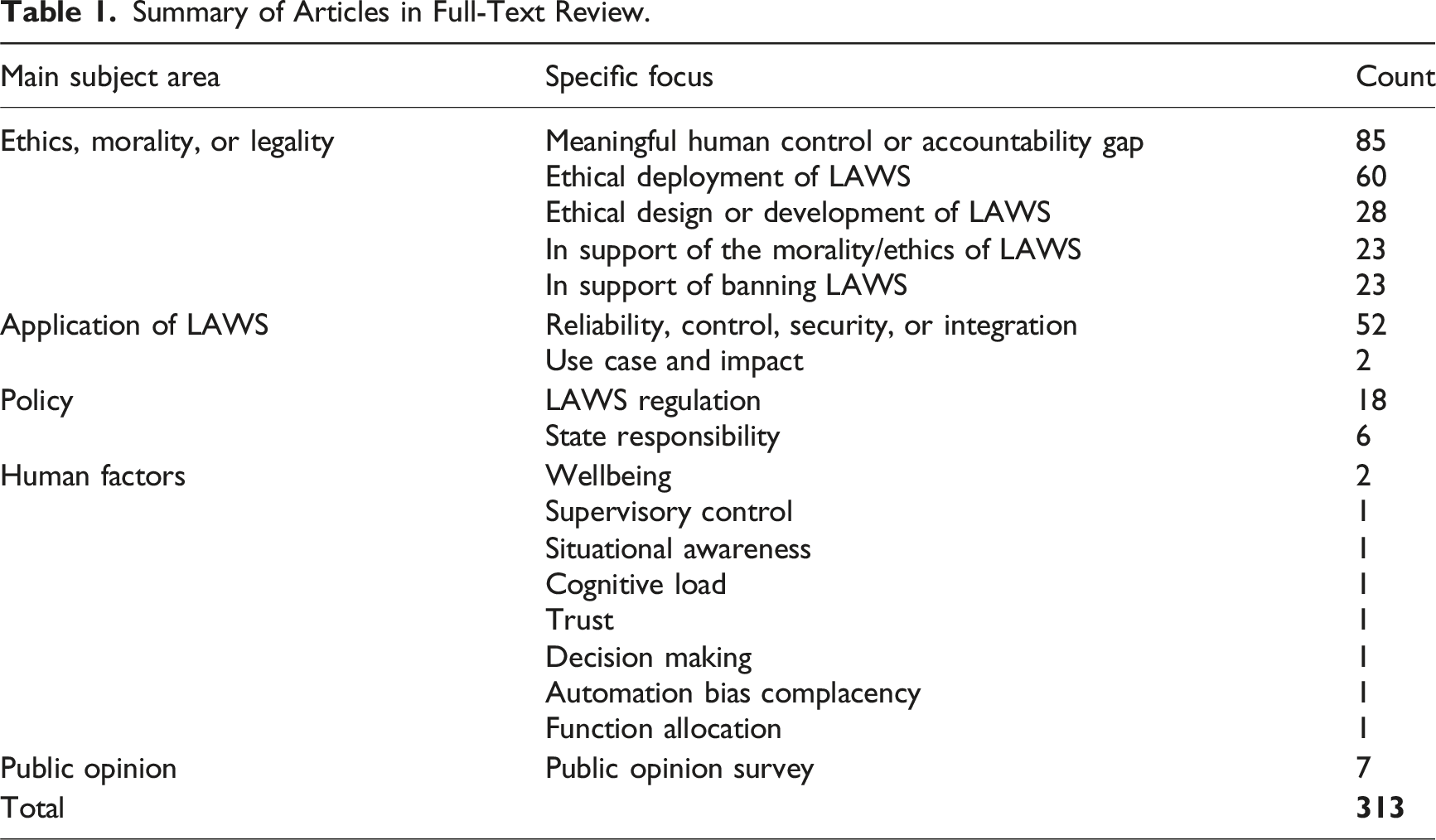

Summary of Articles in Full-Text Review.

The Inclusion of Military Personnel and Current Uses of LAWS

Verdiesen et al. (2019) examined military operators’ perceptions of the moral values associated with the effects produced by uncrewed vehicles in a hypothetical scenario. In the scenario, a vehicle with suspected insurgents is engaged by the uncrewed vehicle, preventing a potential attack on the operator and their team. Operators are either not told about the uncrewed vehicle’s level of autonomy (i.e. reference to its decision-making process is excluded; unclear if the system is LAWS), told the level of autonomy of the uncrewed vehicle (e.g. it deliberates and decides; clearly LAWS), or told that the uncrewed vehicle is human-operated (i.e. an operator guides the uncrewed vehicle onto the threat; a drone). The operators were advised that, in the scenario, they would only observe the strike and that the authority to deploy the uncrewed vehicle was consigned to their fictional commander.

Operators were told that the strike on the vehicle carrying suspected insurgents resulted in the collateral death of nearby children. Operators were then asked to provide their perception of the morality of the strike. Verdiesen et al. (2019) observed a clear distinction in the perception of morals relating to human dignity, trust, blame, and anxiety between human-operated drones and LAWS. That is, operators placed more blame on human-operated uncrewed vehicles for collateral deaths, but perceived greater dignity when a lethal outcome was the result of a human-operated system, rather than LAWS. Further, operators were more trusting, and less anxious, when lethal outcomes were delivered by human-operated uncrewed vehicles rather than by LAWS.

Two additional publications included military operators in their study design but were unrelated to the research questions. Viktorovna et al. (2023) interviewed uncrewed vehicle operators regarding mental health disorders. A full-text review of this publication indicated that the weapon systems were piloted remotely and that the vehicles did not have the autonomy to release weaponised payloads (i.e. not LAWS). The other publication was an unpublished doctoral dissertation investigating how nations make choices about the military weapons that they design and use (Tannenwald, 2019). A full-text review revealed that this publication involved senior military procurement personnel who would not normally be expected to operate in forward-deployed tactical conditions.

Two publications relating to LAWS use-cases and impact were extracted from the search. One of these publications outlined hypothetical use-case examples while the other outlined the history of LAWS use-cases prior to the 2014 Ukraine-Russia war. In contrast, 52 publications were extracted relating to technical developments that improve the resilience, control, or integration of LAWS. The underrepresentation of LAWS use-cases and impact could be due to the nascence of LAWS and, therefore, the sensitivity associated with contemporary operations. That is, States may be withholding trial reports and technical specifications related to their LAWS to protect a sensitive military capability.

SOTEF: Governance Loop

Overall, 209 publications (66.8%) were classified as relevant to the governance loop described by SOTEF. These publications referred to legal processes, the responsibility/accountability gap, regulatory policy, public opinion, and the international debate on banning or supporting LAWS. Commonly, these publications commenced by acknowledging the lack of a universal rule for accountability (the so-called ‘accountability gap’; Verdiesen et al., 2021), and/or MHC, if LAWS operations result in disastrous outcomes.

Legal Processes

A total of 112 publications were identified that examined legal literature. These examinations resulted in two major positions. The first challenges the adequacy of current legislation to authorise a deployment of LAWS (78 publications, e.g. Kwik & van Engers, 2023; Sotoudehfar & Sarkin, 2023). The other position proposes laws or agreements that legally obligate individuals or organisations to refrain from acting unlawfully when using LAWS (e.g. Acquaviva, 2023; Forrest, 2021; Weigend, 2023).

The Responsibility/Accountability Gap

A total of 30 publications were identified that address the responsibility/accountability gap. Broadly, this gap is noted as unclear or ill-defined processes and responsibilities in the authority to deploy LAWS. These publications contain a discourse on identifying accountable persons for the deployment of LAWS, especially if punitive actions are required for illegal or unethical outcomes associated with the deployments of LAWS.

Several of the publications called on the United Nations’ (UN) ruling body to provide clear guidance as to who will bear accountability in the case of a negative outcome (da Silva & Antônio, 2018; Marauhn, 2018; Reed, 2023; Riebe et al., 2020; Vanderborght, 2021). Other publications explain who could be held accountable according to the military’s chain-of-command, ranging from the head of State to the operator in the field (e.g. Gunawan et al., 2019; Häyry, 2021). Publications also contained proposals to review acceptable collateral damage estimates (e.g. Diederich, 2021).

Other proponents demand that State figures not allow lethal effects and outcomes to be autonomous (e.g. Gillespie, 2021). These proponents have suggested severing the autonomy between decision-making and performing the related action. For example, in the case of striking a target, regulation could result in the LAWS incorporating the autonomy to determine whether a target should be attacked, but the human operator may still be required to initiate the attack (Hoffberger-Pippan, 2021). Allocating the final decision to use lethal effects against a target to a human operator is seen as upholding responsible and/or accountable actions and outcomes.

Regulatory Policy

Publications relating to policy provide several perspectives concerning regulating the production, distribution, or deployment of LAWS (14 publications). One perspective is to regulate the quantities of LAWS production (e.g. Bogdanov & Yevtodyeva, 2022). Since LAWS are more mobile and expendable than soldiers, the failure to regulate quantities of LAWS production (i.e. over-production) could bias States to initially opt for military options, rather than diplomacy. Therefore, the ultimate effect of failing to regulate production quantities of LAWS could undermine global peace and security.

Other publications are focused on States placing appropriate controls on the use of LAWS to avoid initiating or escalating inter-state conflict. For example, one publication recounts international crises arising from LAWS that strayed into another State’s airspace (Hwang, 2019). From an intra-State perspective, Falletti and Gallese (2022) propose that the State places strict controls on the sale of technologies that could allow a non-expert user to create a LAWS, which could have implications in the case of terrorism.

Cases are also made against the regulation of LAWS. Kralingen (2016) argues that regulations make a State predictable, so that its actions can be pre-empted. Wallach (2013) argues that many States are developing, testing, and refining LAWS without regulation. Consequently, regulating LAWS before their full potential is realised could produce global power imbalances.

Public Opinion

Seven publications related to the public’s perceptions of LAWS. Approximately half of these publications surveyed public opinion and how it could impact political persuasion and dissent in a democratic nation. For example, Whetham (2015) suggested that the public may view LAWS strikes as akin to assassination, which may contrast with a narrative to the effect that a State is committed to global peace and security. The remaining publications sought public opinion to validate a position concerning the global debate regarding the ban on autonomous weapons systems.

The International Debate on Banning or Supporting LAWS

An equal number of publications (46 in total) were identified that either promote banning LAWS or advocate that LAWS could uphold greater moral/ethical outcomes on the battlefield. Proponents of banning LAWS provide arguments using hypothetical scenarios where unacceptably high civilian casualties occur (e.g. Asaro, 2019; Cernea, 2017; Solovyeva & Hynek, 2023). Similarly, proponents who posit LAWS as morally/ethically superior to humans apply ethical, moral, or legal standards to hypothetical scenarios (e.g. Matiz Rojas & Fernández Camargo, 2023; Thurnher, 2015; Young, 2021). Neither case can yet be substantiated.

SOTEF: Design and Development Loops

The remaining publications (104; 33.2%) relate to design and/or development considerations for LAWS from the perspectives of engineering or human factors. For example, engineering publications contain an examination of different machine learning strategies for specific actions such as target searching or weapon engagement (e.g. Zhang et al., 2023; Zhao & Zhang, 2023). Other engineering publications discuss the prohibitions and permissions that should be incorporated into the LAWS decision-making process (e.g. Boulanin & Lewis, 2023; Brutzman et al., 2018; Cronin, 2022; Lavazza & Farina, 2023).

Publications relating to human factors contain suggestions for optimising performance or ensuring the safe and accountable use of LAWS. These publications make reference to automation bias (Coco, 2024), cognitive load (Osterreicher & Krakorova, 2016), decision making (Wu et al., 2020), function allocation (Canellas & Haga, 2015), situational awareness (Helle et al., 2022), supervisory control (Sharkey, 2014), and trust (Rastogi & Nygard, 2019). While these publications are useful for human-machine design considerations, they do not directly relate to the use of LAWS in the context of field operations.
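The proportions reported for the SOTEF loops can be reproduced from the publication counts. The short Python check below uses only figures stated in the governance and design and development sections; the variable and function names are illustrative.

```python
# Governance-loop publications by topic, as reported in each subsection.
governance_topics = {
    "Legal processes": 112,
    "Responsibility/accountability gap": 30,
    "Regulatory policy": 14,
    "Public opinion": 7,
    "International debate": 46,
}
governance = sum(governance_topics.values())
design_and_development = 104
classified = governance + design_and_development


def share(count, total):
    """Percentage of `total` represented by `count`, to one decimal place."""
    return round(100 * count / total, 1)


governance_share = share(governance, classified)
design_share = share(design_and_development, classified)
governance_ratio = round(governance / design_and_development, 2)
```

The topic counts sum to the 209 governance publications, the two shares match the reported 66.8% and 33.2%, and the ratio of governance publications to design and development publications is approximately 2.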

Discussion

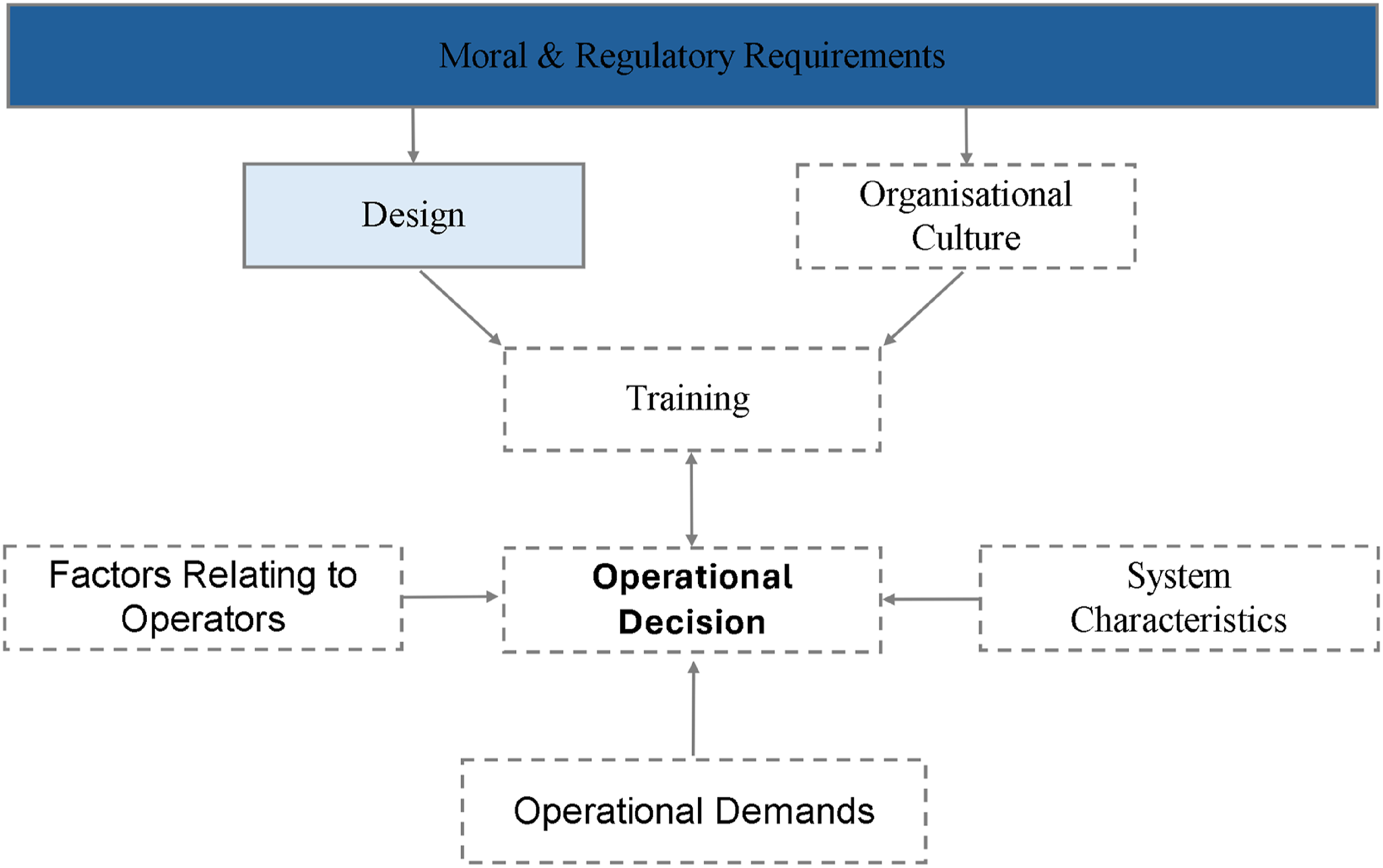

This scoping review has provided an overview of the concerns that the international research community considers important prior to the implementation of LAWS. Publications identified in this scoping review are largely aligned to the SOTEF’s governance, design, and development loops. Approximately twice as many publications relate to the SOTEF’s governance loop as to the design and development loops combined. This suggests that moral and regulatory requirements are expected to strongly influence the design, development, and operation of LAWS.

A small number of publications align with the SOTEF’s operation loop, including actual use-cases of LAWS and interviews with military operators who have experience with LAWS-like systems. These publications do not, however, directly investigate the factors that operators might consider in deciding whether LAWS could or should be deployed. Therefore, there is an evident gap in the literature concerning the perspective of operators who may be charged with deploying LAWS. Consequently, there is a latent potential for LAWS to be used inappropriately and/or ineffectively.

The SOTEF Operation Loop: A Proposal for Investigating LAWS

It should be noted that the primary function of LAWS, unlike most autonomous machine agents, is to deliver lethal effects in support of military objectives. This function might explain the greater weight of publications focussing on moral and regulatory requirements. That is, interested parties are likely to seek assurances that LAWS will be used in morally and legally appropriate ways to achieve military outcomes. However, without investigations using actual operators in realistic settings, the interaction between moral/legal and cognitive requirements in the decision-making process for deploying LAWS in combat is not yet understood.

A model of possible interactions between moral/legal and cognitive requirements is shown in Figure 2. Lightly shaded boxes indicate a lesser weight of publications focused on the topic as it relates to operators’ decision-making process for deploying LAWS. Boxes with a dashed outline represent no existing literature. In total, Figure 2 is a representation of factors that are likely to impact operators’ operational decisions as to whether to deploy LAWS during combat.

Figure 2. Factors that could impact operators in the operational decision to deploy LAWS.

Moral and regulatory requirements constitute a key factor that is posited as an over-arching framework that potentially impacts the design of LAWS, the organisational culture within which LAWS are deployed, training for the use of LAWS, operational experience in deploying LAWS, and ultimately, the operational decision to deploy LAWS. Several papers were identified during the present scoping review that focus on moral and regulatory requirements in the design of LAWS (e.g., designing for lawful use, Brutzman et al., 2018; Galliott et al., 2021; Kajander et al., 2020; Thurnher, 2013; avoiding biases in autonomous target recognition, Limata, 2023; Narayanan, 2020; the use of transparent autonomous decision-making processes, Knuckey, 2016; McFarland & Assaad, 2023; and the development of engineering requirements based on International Humanitarian Law, Gillespie, 2019; George Jain, 2023).

Organisational Culture

No publications were identified that investigated the impact of moral and regulatory requirements on organisational culture relating to LAWS. Organisational culture will likely influence the acceptance and the use-case for LAWS, together with the threshold for deployment in the field (Davis, 1989). Overtly supportive leadership and systems that are designed to align with established organisational processes and values can directly increase workers’ engagement with newly introduced technological systems (Cameron & Quinn, 2011; Ismail Al-Alawi et al., 2007). Therefore, overt leadership support for the use of LAWS may ameliorate operators’ perceived risks, such as being court-martialled for collateral damage, thereby reducing the threshold for deployment (Galliott & Wyatt, 2020, 2022a, 2022b).

Organisational culture can also impact the selection of prospective operators. An organisation’s values have a significant impact on the ethical conduct and performance of its workers (Ardichvili et al., 2009). This may be because prospective operators are drawn to an organisation’s values, which determine whether there is a ‘fit’ with their personal values (Backhaus & Tikoo, 2004; Cable & Judge, 1996). In this case, understanding a military unit’s values could be important in determining the types of prospective operators who are selected, and who are likely to make decisions to deploy LAWS legally and/or morally.

Training and Experience

Training is an important source of experience, providing knowledge and behaviours that operators can use to make operational decisions. This is especially true for ethical conduct in military contexts. For example, Warner et al. (2011) reviewed the effectiveness of an ethical training package administered to soldiers in a combat military unit prior to a 15-month high-intensity combat deployment. The ethical training package involved viewing excerpts of popular movies depicting warlike scenarios, in which ethically questionable behaviour was occurring or could occur. This was followed by leader-led discussions of these scenarios using standardised questions.

Questions focused on identifying risk and protective factors in breaches of ethical behaviours. This led to discussions on military rules that are situationally dependent, such as the point when the right to self-defence becomes excessive force, constituting a war crime. This training package was administered sequentially, whereby each level of leadership provided the training to their immediate subordinates with the guidance of a battlefield-ethics training team from an external military unit.

Warner et al. (2011) observed a reduction in breaches of ethical conduct and an increased willingness to report unethical conduct from military operators who had undertaken the ethical training package. Nearly all operators expressed an increased clarity in the decision-making process in the treatment of non-combatants during ethically ambiguous situations. Warner et al. (2011) also noted that combat frequency and intensity were positively associated with breaches of ethical behaviour.

Ethical training packages using LAWS could achieve similar outcomes to those reported by Warner et al. (2011). Given the infancy of the technology, there is a notable lack of operational experience with LAWS. In the absence of operational experience, a training package using hypothetical scenarios could assist current and prospective operators to form decisions regarding the deployment of LAWS in actual future battlefield scenarios, supporting moral and legal compliance.

Operational Decision

In addition to training and experience, the operational decision to deploy LAWS can also be influenced by the characteristics of LAWS, operational demands, and human factors relevant to operators.

System Characteristics of LAWS

Automation involves a series of algorithms that allows a machine agent to detect, understand, and respond to inputs from the environment (Endsley & Kaber, 1999; Lee & See, 2004; Parasuraman et al., 2000). Automation may be applied to one or a combination of several machine agent functions: information acquisition, information analysis, decision/action selection, and action implementation. There are also different Levels of Automation (LOAs) that can be applied to machine agents.

LOAs can range from ‘low’, whereby all functions are performed by operators, to ‘high’, whereby all functions are performed by the machine agent without requiring human intervention (Parasuraman et al., 2000; Simmler & Frischknecht, 2021). LAWS have been presented as fully autonomous machine agents, implying that they will have high LOAs. A high LOA presents potential benefits and challenges to operators who will be required to determine whether LAWS are deployed during combat.

High LOAs have been associated with a reduction in cognitive demands, resulting in improved performance and safety (Cummings et al., 2013; Manzey et al., 2012; Onnasch et al., 2014). However, this may not be the case for LAWS, which is distinct from typical machine agents given that the primary function of LAWS is to deliver lethal effects to hostile targets. The LOA of LAWS could present several potential challenges for operators based on the reliability of the automation, and the likelihood that LAWS will perform its intended function without failure (Parasuraman et al., 2000).

The reliability of LAWS can determine the level of trust that operators place in LAWS. Trust, in the context of automation, is ‘the attitude that an agent will help achieve an individual’s goals in a situation characterised by uncertainty and vulnerability’ (Lee & See, 2004, p. 54). Inappropriate levels of trust, whether excessive or insufficient, can impact performance and safety.

Parasuraman et al. (2000) note that highly reliable automated machine agents can be ‘misused’ by operators. That is, operators may become highly reliant on, and trusting of, automated machine agents, resulting in an ‘automation bias’. An automation bias could result in operators favouring suggestions or information from autonomous machine agents while discounting contradictory information from other sources, including their own judgement (Goddard et al., 2012; Parasuraman & Manzey, 2010; Wickens & Dixon, 2007).

The misuse of LAWS could be problematic if signals from the operational environment indicate that it is inappropriate to deploy LAWS. That is, operators may perform risky tactical actions while assuming that they will receive appropriate operational support from LAWS. As an example, operators might cross open terrain while a sniper is present in a residential building, which LAWS cannot strike because it cannot confirm the absence of civilians.

Inconsistently performed functions or multiple errors can lead to a reduction in trust of LAWS (Parasuraman et al., 2000). This could result in the ‘disuse’ of LAWS, including under-utilisation, or the refusal to deploy LAWS. For example, collision avoidance systems with high false alarm rates are eventually ignored, even disabled, by operators (Getty et al., 1995; Parasuraman et al., 1997; Satchell, 2016). In this case, if LAWS are found to perform actions that are contrary to operators’ intended outcomes, the resulting under-utilisation could leave operators at greater risk.

Changing the LOA of LAWS could assist in the completion of mission objectives and/or reduction of errors, but it may prove challenging for operators. The LOA of LAWS could be changed so that the decision or action to apply lethal force is transferred to operators in ambiguous situations (e.g. visual obscuration or the potential presence of civilians). However, if this occurs, one challenge is the need to maintain operators’ manual skills.

A meta-analysis conducted by Onnasch et al. (2014) examined the effect that different LOAs had on manual performance when the machine agent fails, termed ‘return-to-manual skill performance’. The outcomes of this meta-analysis suggested that return-to-manual skill performance suffers with the use of machine agents, and that performance decrements increase in severity as LOAs increase. This could be attributed to increased LOAs providing operators with insufficient opportunities to practise manual skills, leading to skill decay. Therefore, the LOA of LAWS, and the potential transition to manual takeover, requires investigation to ensure operators are appropriately trained and confident in using LAWS to complete mission objectives.

Operational Demands

Temporal pressure refers to situations where operators will have limited time to make a decision. Decreasing the time for the decision-making process can lead to operators perceiving or processing less information than is otherwise necessary for their decision (Kerstholt, 1994; Ordonez & Benson, 1997; Zakay & Wooler, 1984). Temporal pressure can also change decision-making strategies. That is, operators may shift from effortful, deliberate decision-making to making decisions based on whether the current situation ‘matches’ a previous event stored in memory (Klein, 2014; Ross et al., 2014). This is a type of naturalistic decision-making process observed in workplaces associated with temporally demanding, high-risk, high consequence conditions, such as firefighting (Klein et al., 1993) and the military (Kaempf et al., 1996; Pascual & Henderson, 2014).

Temporal pressure in situations requiring operators to decide whether to deploy LAWS can have a significant impact on design, training, and/or organisational culture. With respect to design, decision support systems are used in air traffic control, an environment with significant time pressure, workload, unpredictability, and safety-critical conditions. A decision support system can assist air traffic controllers by anticipating potential conflicts and alerting the controller to intervene with sufficient response time. A similar system may need to be developed for LAWS, which will be operated in similarly unpredictable, high workload, and safety-critical conditions.

Temporal pressure in training can be an important tool in assisting operators to become aware of their propensity for mistakes. Keith and Frese (2008) demonstrated, through a meta-analysis, that training focussing on error management can produce better outcomes than training that focuses on error avoidance. Further, error management training can provide learners with skills and strategies that transfer to different real-world situations, which would benefit prospective LAWS operators given the lack of actual LAWS use-cases identified.

From an organisational cultural perspective, screening prospective operators for their ability to perform under temporal pressure is likely to be important in implementing LAWS successfully. Several authors note the relevance of specific traits that may be linked to performance under temporal pressure (e.g. working memory capacity, Beilock & Carr, 2005; personality, Barrick & Mount, 1991, Mosley & Laborde, 2016). This represents an important avenue of research so that military units establish an initial cadre of LAWS operators, who will be responsible for training future operators to ethically and effectively deploy LAWS under a range of conditions.

The characteristics of military operations could also determine whether operators elect to deploy LAWS. In conditions where task demands exceed operators’ resources, Biros et al. (2004) demonstrated that operators continue to use machine agents despite trust in the machine agent being rightfully low (i.e., the agent showed signs of sabotage by an adversary). Operators who perceive tasks as carrying excessive risks have been shown to lower their trust in machine agents, resulting in disuse (Ezer et al., 2008; Rajaonah & Sarraipa, 2018), especially if the machine agent has a higher LOA (Lyons & Stokes, 2012). Ultimately, the misuse or disuse of LAWS in either condition could be detrimental to the achievement of military objectives.

Factors Relating to Operators

This scoping review identified several ‘human factors’ considerations in the context of LAWS. However, apart from Mental Workload (MWL), these considerations have been largely addressed by the other factors affecting the operational decision. MWL refers to the cognitive demands imposed on operators’ mental resources by the task and the system with which they are interacting (Young et al., 2015). Sources of cognitive demand include: (1) the amount and complexity of information that needs to be perceived, processed, and acted upon; (2) the amount of information required to be held and manipulated in memory; (3) switching attention between tasks and the level of focused attention required for each task; (4) the difficulty and number of choices associated with decision-making; and (5) analysing and solving problems.

There is evidence to indicate that when cognitive demands exceed cognitive resources, performance is impaired and the likelihood of errors increases (Chen & Cowan, 2009; Helton & Russell, 2011; Monsell, 2003; Morrison et al., 2015). In the context of LAWS, errors in the decision to deploy LAWS could be catastrophic. Further investigation of LAWS with operators in realistic settings could identify the MWL associated with tasks or operators’ physiological measures and the associated risk of an inappropriate use of LAWS. This could result in system designs that guide operators’ deployment of LAWS through a process aligned to ethical or moral considerations.

Limitations

The key limitation for the present scoping review is that no publications were identified that related directly to the research question. Therefore, there is a need to commence an exploratory investigation of the factors that operators use when deciding whether to deploy LAWS.

The sample size captured by individual publications represents another limitation. Many publications from the present review were extracted from non-scientific journals. These journals may not require authors to utilise an optimal sample size to collect data. Consequently, the outcomes reported in this review could reflect publications with three or fewer subjects and, therefore, may not reflect the true extent of the reported variables.

The sensitivity of the topic presents further challenges. LAWS are a nascent capability supporting state security and geopolitics. This makes LAWS a sensitive capability, for which operational security will be paramount. As a result, the lack of publications could partly reflect operational security measures, which will influence the volume of evaluations.

Conclusion and Theoretical Implications

The aim of the present scoping review was to identify the scope of investigation into the factors that operators use in the decision to deploy LAWS. Operators are a central stakeholder in the SOTEF’s operation loop. Operators are also likely to be the final stakeholder responsible for deploying LAWS against hostile targets and are therefore likely to have important interactions with the other loops in the SOTEF (governance, design, and development; Schraagen, 2023).

This scoping review found no publications that directly investigate the factors that operators consider in the decision to deploy LAWS. Instead, two-thirds of the publications in the scoping review consider how authoritative bodies should act and/or how ethics or laws could be upheld with the use of LAWS. The remaining publications related to design and/or developmental considerations for LAWS from the perspectives of engineering or human factors.

While these perspectives are important in the context leading up to the deployment of LAWS, their interaction with operators’ decision-making process as to whether to deploy LAWS, especially in demanding situations such as combat, is yet to be investigated. Investigating this interaction could provide assurance to all stakeholders encapsulated within the SOTEF that LAWS will be used effectively and ethically. Without this assurance, the potential misuse or disuse of LAWS in future conflicts could result in excessive risks to operators or significant, unintended collateral damage. Therefore, an investigation of the factors used by operators in deciding whether to deploy LAWS should commence.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The principal author receives a monetary scholarship from the NSW Defence Innovation Network (20213921), which aims to facilitate collaboration between Universities and the Defence sector.