Abstract

Those responsible in organisations for the design and delivery of training require a practical method for the analysis and prediction of skills retention. To address this, a taxonomy of nine psychological domains was developed specifically to provide a finer-grained approach to analysis of the skills required in the performance of trained tasks. An extant predictive model relevant to five of the domains was applied to produce a set of domain retention curves for physical/lower-order cognitive skills. These curves informed the development of a novel Competence Retention Analysis Technique (CRA-T) that incorporates a simple ‘traffic light’ approach indicating workforce proficiency following a period without practice. CRA-T simplifies the process of understanding skill retention for practitioners by providing an alternative to separate empirical studies. By identifying the psychological domains involved in task performance, insights can be gained into the acquisition and retention of these components, allowing the determination of those most at risk of decay. CRA-T is suitable for the analysis of a range of physical/cognitive tasks across sectors, where systematic approaches to training analysis/design for skill retention optimisation are required. CRA-T considers complex cognitive skills, but as no predictive models currently exist for them, longitudinal research is required to define their retention levels.

Introduction

Skill decay reflects the failure to retrieve knowledge and skills after a period of non-use (Arthur et al., 1998; Linde et al., 2018). The mitigation of skill degradation is a particular challenge for infrequently applied skills, such as those required in non-routine situations like automation failure (Grindley et al., 2024; Vlasblom et al., 2020). Moreover, a loss of skill proficiency in high-risk, safety-critical roles or reversionary tasks may result in adverse consequences impacting not only individuals and their organisations but also society (Klostermann et al., 2022; Volz et al., 2016; Woodman et al., 2021). This has important implications for organisations in terms of training delivery, risk management and operational readiness (Brouwers & Joung, 2024).

The psychological literature demonstrates that knowledge and different types of skills decay at different rates (e.g. Linde et al., 2018; Stothard & Nicholson, 2001; Vlasblom et al., 2020; Volz & Dorneich, 2020; Wang et al., 2013; Wisher et al., 1999; Woodman et al., 2021). Therefore, to deliver cost-effective training, organisations need an understanding of the knowledge and skill types that underpin the performance of tasks (Woodman et al., 2021). They also need guidance in identifying which components of tasks will decay more than others and thus require more frequent refresher training (Brouwers & Joung, 2024) and more practice during initial training to ensure competence (Kim et al., 2013).

This paper addresses the current gap in the provision of an approach to support training practitioners in understanding how knowledge and different types of skills underpinning tasks are retained over time. Such an approach identifies the task components most at risk of decay, supporting targeted training design and delivery for skill retention optimisation. This is important as improved retention of skills over time can enhance transfer of training to the workplace (Velada et al., 2007). A critical overview of extant literature relevant to skills analysis and the prediction of skills retention is provided first. The paper then presents the rationale behind the development of the Competence Retention Analysis Technique (CRA-T), its underpinning taxonomy and how the CRA-T can be exploited by training practitioners, using examples from UK Defence and wider industry. The work included the development of guidance on competence retention for individual training as defined in Joint Service Publication (JSP) 822 (UK MOD, 2024). JSP 822 sets out the Ministry of Defence (MOD) policy, direction and guidance for all learning and development across Defence.

Background Literature

Skills Analysis and Taxonomies

A key aspect of the Training Needs Analysis (TNA) process that supports skill analysis is Hierarchical Task Analysis (HTA). Accurate HTA is essential in the design and delivery of effective training and education. It determines the task components of job roles and is used as a framework to systematically identify the requisite Knowledge, Skills and Attitudes (KSA) to ensure proficient task performance (UK MOD, 2024). Bloom’s revised taxonomy (BRT) of educational objectives (Anderson & Krathwohl, 2001; Krathwohl, 2002) is a well-established model supporting KSA analysis. It categorises knowledge and cognitive processes within a two-dimensional hierarchical structure, designed to show progression from lower- to higher-order thinking. Figure 1 summarises the cognitive processes. Although BRT is hierarchical in structure, the levels are interconnected (Krathwohl, 2002); learning can involve movement between levels reflecting the use of multiple skills (Agarwal, 2019).

Figure 1. Bloom’s revised taxonomy levels for the cognitive processes (Anderson & Krathwohl, 2001).

Whilst the BRT is one of the most frequently applied taxonomies within training and education (Larsen, 2022), aspects of the model limit its practical application in real-world contexts when addressing competence retention. Since the boundaries between cognitive processes can overlap (Agarwal, 2019), this creates ambiguity. For instance, decision-making involves analysis and evaluation but at differing levels of complexity. Whilst simple decision-making often involves binary choices, multiple alternatives must be considered when making more complex decisions where there is not always a right answer (Chen et al., 2019). The analysing and evaluating processes thus do not support practitioners in distinguishing between these two types of decision-making. The apparent overlap between cognitive processes also does not address the integration of skills, where multiple cognitive processes are engaged concurrently. Hence, a psychological skills category reflecting such integration is required.

The psychomotor taxonomy focuses on reflex movements and basic, fundamental movements, moving on to perceptual and physical abilities before skilled movements are achieved (Anderson & Krathwohl, 2001). This classification renders the distinction between different types of physical skills ambiguous, for example, discrete versus continuous psychomotor skills. Ultimately, these limitations present a challenge for military practitioners who are focused on training personnel to complete practical skills, which can include both cognitive and psychomotor processes, therefore making the specific psychological skills that need to be trained more challenging to identify.

In a similar way to BRT, Miller’s pyramid of clinical competence (Miller, 1990; Ramani & Leinster, 2008) aligns learning objectives with what clinicians are expected to be able to do at each phase of learning. It focuses on the learning progression novices undergo to become experts. The high-level description of KSA provided by BRT and Miller’s taxonomy limits their practical application.

Practitioners in training design and delivery roles within the military are not always well-versed in education theory. To effectively consider skills retention, they require guidance in aligning what they already know from policy regarding task-related KSA analysis with different types of physical and cognitive skills. Consequently, a skills taxonomy that makes a clear distinction between knowledge and different physical and cognitive skills, and the psychological processes that underpin them is required.

Predictive Models of Skills Retention

The psychological literature was reviewed to identify the availability of scientifically derived and validated predictive skills retention methods. Pertinent examples are described and then evaluated.

Task-Rating Methods

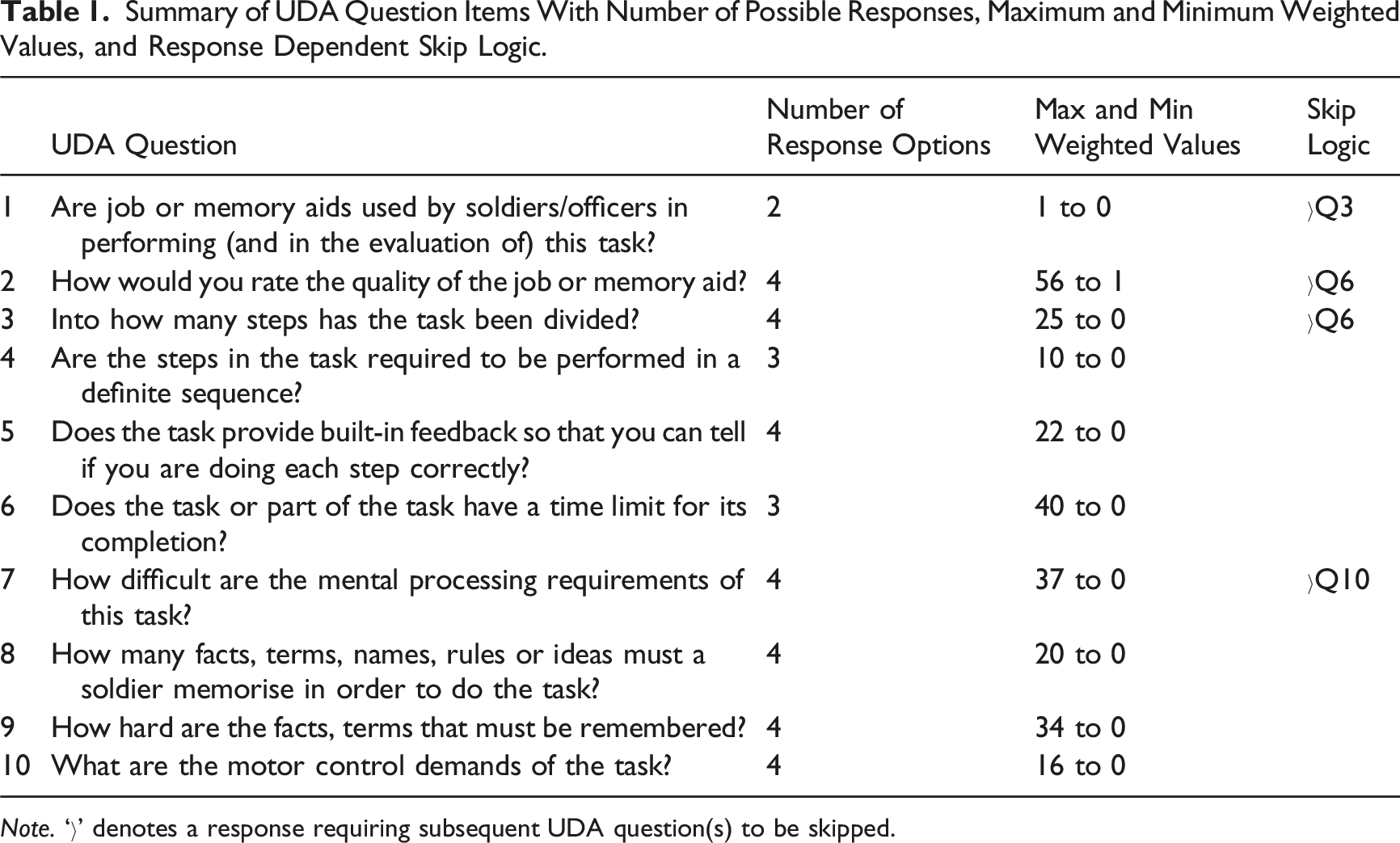

Table 1. Summary of UDA Question Items With Number of Possible Responses, Maximum and Minimum Weighted Values, and Response-Dependent Skip Logic.

Note. ‘›’ denotes a response requiring subsequent UDA question(s) to be skipped.

The sum of the weighted rating values is calculated. A formula is then applied to this sum to produce indicative retention curves out to 12 months, indicating the point at which any given percentage of the workforce is predicted to no longer be proficient in the task. The model was developed from three years of extensive trials and data collection, with its formula generated using aggregated individual performance data from 78 military procedural tasks. It has been widely applied, determining projected skill retention for digital skills (Sanders, 1999), air defence missile crews, field artillery tasks and radio operators (Wisher et al., 1999). More recently, it has been applied to predicting retention of procedural skills required in other domains, for example, virtual learning environment content production tasks (Cahillane et al., 2019) and a safety-critical task performed by rail workers (Brouwers & Joung, 2024).

The UDA has been validated within military contexts and found to have high validity and reliability (Macpherson et al., 1989; Rose, Czarnolewski, et al., 1985; Rose et al., 1984). Rose, Czarnolewski, et al. compared UDA predicted scores to actual performance scores across different time intervals (two, five and seven months) and found the UDA to be effective at predicting retention across several military tasks within a field artillery context. The projected retention curves for these tasks were found to be reasonably consistent with actual performance data, obtained through observation during live task performance. However, for some retention intervals, such as two months, the predictions were found to be pessimistic, with predicted retention being lower than actual performance; for some tasks, Rose, Czarnolewski, et al. observed this difference to be as large as 30%. This suggests that the UDA model may overpredict skill fade across varying time intervals.

The potential underestimation of the level of skills retention has important implications for the application of the UDA to military tasks when evaluating training intervals. Rose, Czarnolewski, et al. (1985) therefore view the UDA as pessimistic in its projected predictions, such that the decay rate is potentially predicted to be somewhat worse than might be observed if actual performance data were collected. Any perceived overestimation of skills fade may in fact be due to the UDA not considering practice to consolidate skills (Anderson, 1982; Kim et al., 2013) and the level of skill acquisition during initial training (Stothard & Nicholson, 2001), both additional factors known to influence skills retention (Cianciolo et al., 2010; Klostermann et al., 2022; Linde et al., 2018; Swezey & Llaneras, 1997).

A revised version of the UDA, known as the Trainer’s Decision Aid (TDA), was developed by Cianciolo et al. (2010) to incorporate the influence of practice and training-related factors. With regard to the latter, the TDA considers how training is delivered, the similarity between the training context and the operational environment, and the impact of technology on performance. Like the UDA, the TDA questions have several possible responses. However, a limitation of the TDA is that weightings for the response options have not been developed (Wood et al., 2021). Consequently, the relative importance of each factor is not reflected in the score (NATO STO, 2023). In contrast to the UDA, for which empirical data were collected through extensive controlled experimentation, the data gathered for the TDA came from live exercises. This less controlled approach likely produced difficulties in collecting the necessary performance data to generate and validate projections of skill decay.

Computational Models

More recent predictive models are focused on the individual level of retention for specific tasks. ACT-R is an established complex computational model that considers the mechanisms determining learning and memory, including procedural and declarative knowledge, and working memory, to predict knowledge and skills retention (Anderson, 2007; Ritter et al., 2018). However, the model’s performance is currently primarily determined by declarative memory-based learning and retrieval mechanisms. The Dismal complex spreadsheet task, comprising 14 activities, is an example of a specific task where the ACT-R model has been used to measure the degradation of knowledge and skills (Kim & Ritter, 2015; Oury et al., 2018).

Tehranchi et al. (2021) show that ACT-R’s decay model does not accurately predict decay for the Dismal spreadsheet task when the model is applied across multiple days (i.e. 1–4 days, with extension to 10, 16 and 22 day decay periods). Rather, it exaggerates the decay observed in human performance data for tasks trained over successive days. With the intention of enhancing the model’s predictions, Tehranchi et al. manipulated several parameters (one at a time) within ACT-R that alter the declarative memory component. These individual manipulations, however, did not produce a better model fit compared to the default parameters, leading Tehranchi et al. to suggest that parameter combinations may lead to a better model fit. Notwithstanding the suggested tuning of parameters to fit the model to human retention data, the observed performance evidence is only relevant to a very specific type of context, in this case retention of a spreadsheet task. This means ACT-R currently has reduced utility for broad application to different types of highly realistic tasks.

Van Leeuwen et al. (2023) highlight how current predictive models aimed at the individual level of skill retention are limited due to a requirement for large data sets. Examining the application of Extreme Gradient Boosting (XGBoost) decision tree models, Van Leeuwen et al. found that while such computational models can trace individual retention curves, more data are required to attain sufficient reliability and accuracy for them to be a useful predictive retention model.

The Predictive Performance Equation (PPE) is a computational model of learning and forgetting that predicts learning and retention based on past performance. It combines the effects of recency and the temporal distribution of practice (spacing effects) on performance (Jastrzembski & Gluck, 2009; Walsh et al., 2018). Empirical evaluation of the PPE demonstrates it can make accurate predictions of individual performance over an extended period (Peebles, 2024). However, the PPE does not consider task-related factors nor the nature of the cognitive processes underpinning different skills, including mental processing requirements and motor control demands.

Evaluation of Extant Predictive Models

Overall, there is evidence supporting the ability of computational predictive models to track some individual level retention changes over a period of days (e.g. Peebles, 2024; Van Leeuwen et al., 2023). However, they lack the broad scope of relevance to highly realistic environments that would allow training practitioners to capture changes in workforce level proficiency. Computational models also require large amounts of data to obtain a sufficiently accurate retention curve for specific tasks (Van Leeuwen et al., 2023).

The UDA model appears to offer an empirically based, pragmatic approach of relevance to the military, given it was developed for military task analysis (Rose, Radtke, et al., 1985). Rather than predicting task retention for individual personnel, its decay curves predict task proficiency at the population level. While validation of its algorithm and decay curve predictions stems from older empirical research (Macpherson et al., 1989; Rose, Czarnolewski, et al., 1985; Rose et al., 1984), it considers task characteristics and duration of non-use. Meta-analyses and empirical work indicate these are two of the best predictors of skill retention (Arthur et al., 1998; Park et al., 2022; Wang et al., 2013), rendering the UDA applicable in real-world contexts (Brouwers & Joung, 2024). Although UDA task retention curves may be pessimistic, they indicate the ‘worst-case scenario’, predicting the lowest level of retention for a task as it fades over time without practice (Cahillane et al., 2019).

Despite the UDA being deemed a practical method (Brouwers & Joung, 2024; Macpherson et al., 1989), it can still require considerable time to administer in consultation with multiple SMEs to reach consensus. Although an average of between 10 and 12 minutes to rate a task is estimated, the actual time needed differs and is contingent on the experience of the raters and nature of the task (Rose, Radtke, et al., 1985). A larger amount of time would be required for tasks comprising multiple subtasks. Administration involves ensuring SMEs understand the definitions for the factors considered to obtain the most accurate judgement concerning weighted response option selection. Furthermore, the UDA produces a task decay curve. This serves only to indicate the point at which task proficiency declines to an unacceptable level, indicating the requirement for refresher training to uplift proficiency. Therefore, the UDA does not enable practitioners to consider retention for different types of psychological skills underpinning tasks, so that these can be targeted during training design to optimise retention.

Whilst the UDA is applicable to procedural-based roles involving explicit knowledge, physical and simple (i.e. lower-order) cognitive skills, it is inappropriate for the type of complex (i.e. higher-order) cognitive skills and underpinning implicit knowledge required for future operating environments (Cahillane et al., 2020; NATO STO, 2023). Personnel operating in these environments will face a range of increasingly complex and cognitively demanding information processing and decision-making tasks. Such tasks will require job holders to multitask and adapt to new situations (MacLean et al., 2015; NATO STO, 2023). For complex cognitive skills, unlike simple ones, attentional control is considered a more fundamental psychological mechanism underlying performance than working memory function (Draheim et al., 2022).

Simple cognitive skills are known to decay more rapidly (Cahillane & Morin, 2012; Landman et al., 2023; Vlasblom et al., 2020), whilst scant available evidence to date indicates complex cognitive skills are better retained (NATO STO, 2023; Villado et al., 2013; Wang et al., 2013), with one study suggesting retention up to six months (Sanli & Carnahan, 2018). The improved retention of complex cognitive skills has been explained with reference to them being meaningful, mostly open-looped and the requirement for elaborative processing (Skinner, 2014; Wang et al., 2013). In the absence of a predictive model of retention applicable to tasks underpinned by complex cognitive skills, evaluation of their retention requires longitudinal empirical evidence (Cahillane et al., 2020; Cahillane et al., 2022; NATO STO, 2023).

CRA-T Development

Extant skills taxonomies do not provide the granular approach to analysis required to enable practitioners to identify knowledge and different types of skills underpinning tasks. Current predictive skills retention models focus the analysis at the task level. To be of value to training practitioners, a model needs to be practical to apply, offering guidance on the likely retention levels for the knowledge and skills underpinning tasks. Furthermore, the approach should neither rely on multiple SMEs or skilled facilitators for application nor be time consuming to apply. The UDA does not meet these practical criteria for practitioner application. Commissioned by the MOD, the CRA-T and its underpinning taxonomy were developed in response to these practical limitations (Cahillane et al., 2013, 2022). The work aimed to provide generic principles and guidance for the optimisation of what the MOD termed ‘competence retention’. Before proceeding, it was agreed with the MOD (via their research agency, the Defence Science and Technology Laboratory (Dstl)) that the following definition of competence would be used: ‘A competence refers to the knowledge, skills and underpinning attitudinal dispositions that must be acquired and maintained by individuals and teams in order to effectively perform tasks to a pre-defined standard of proficiency’ (Cahillane et al., 2013, p. 1).

Taxonomy of Psychological Domains

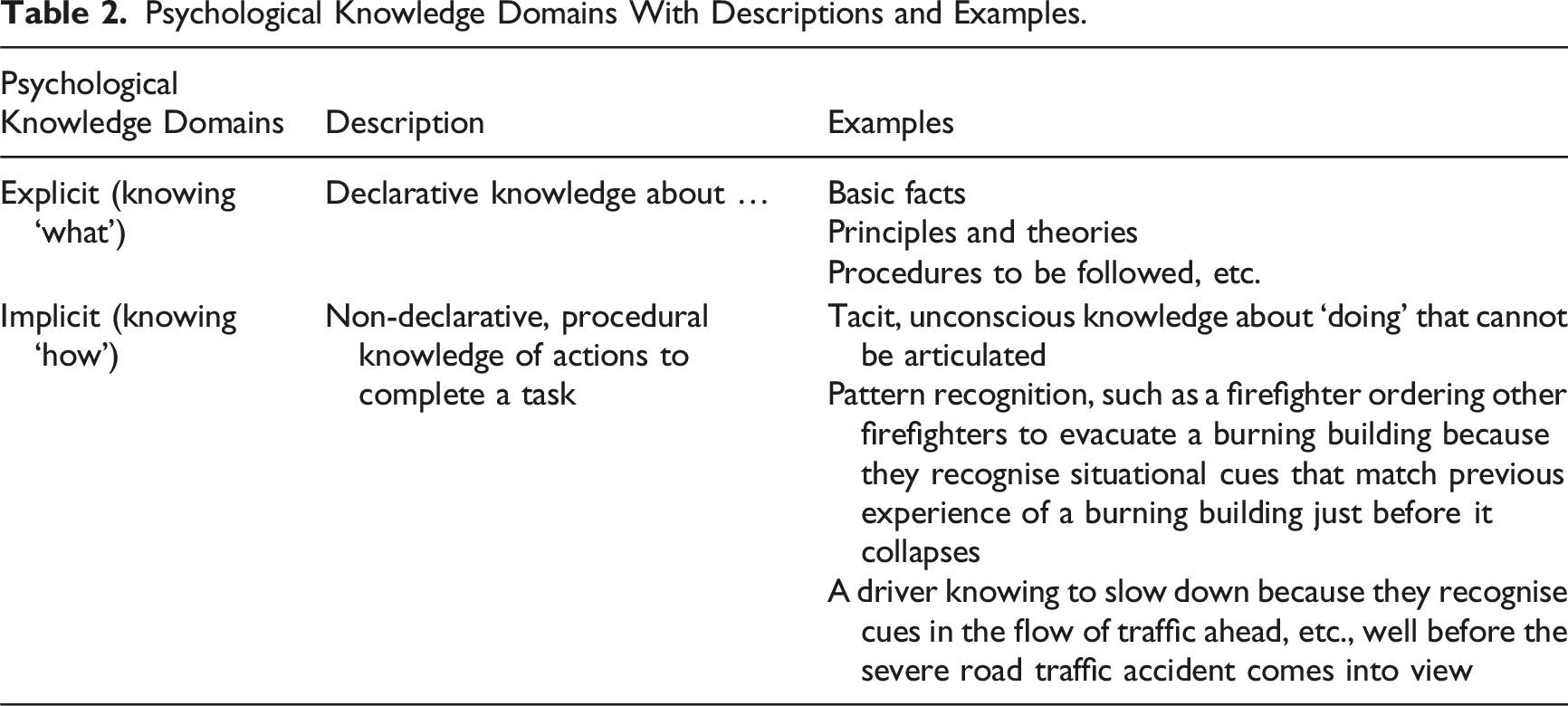

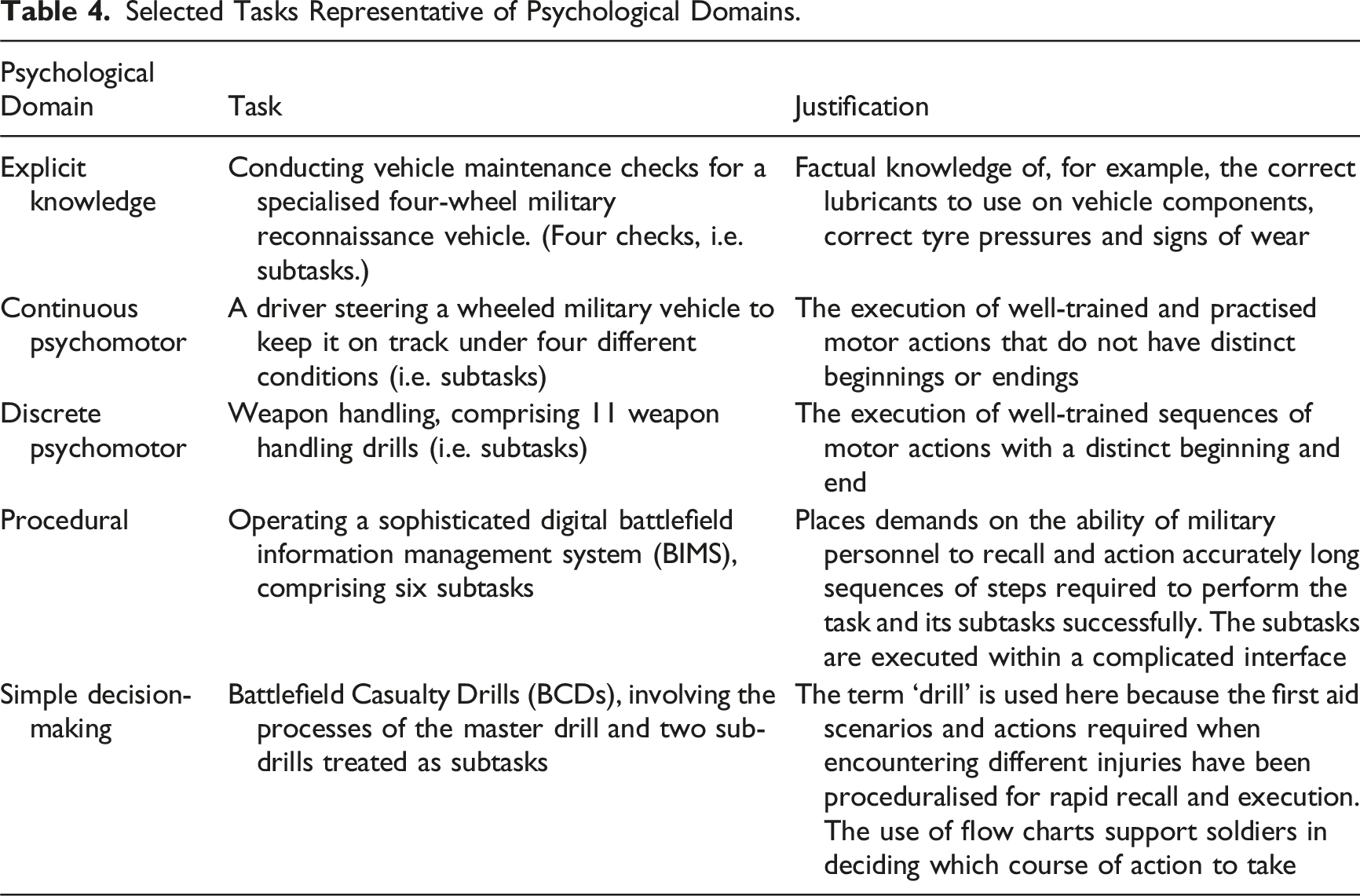

Table 2. Psychological Knowledge Domains With Descriptions and Examples.

Table 3. Psychological Skill Domains With Descriptions and Examples.

Although many of the examples in Table 3 are military tasks, the taxonomy is equally applicable to non-military tasks because it was derived from psychological principles. Note that no attitudinal domains were developed. While attitudes can decay, for example, when not based on emotion (Rocklage & Luttrell, 2021), any decay in attitudes over time is manifested in observable shifts in behaviour (Gagné et al., 2005).

Psychological Domain Retention Curve Generation

The UDA was deemed the most appropriate empirical model to advance into the CRA-T. However, this applied only to explicit knowledge and the lower-order physical and simple cognitive skills (i.e. the explicit knowledge, continuous psychomotor, discrete psychomotor, simple decision-making and procedural domains), since the UDA is not applicable to complex cognitive skills (Cahillane et al., 2020; NATO STO, 2023). The task decay curves produced by the UDA do not enable practitioners to identify retention levels for the different psychological skills underpinning tasks. The UDA was therefore advanced by applying it to tasks representative of the different domains, thus exploiting the taxonomy, to generate novel psychological domain curves instead.

Selected Tasks Representative of Psychological Domains.

A single task was selected to represent each psychological domain, where each task was broad in scope (i.e. comprising several subtasks) and associated with a large pool of SMEs, increasing the chance of SME access to support the study. Military tasks are often underpinned by more than one psychological skill domain (Cahillane et al., 2020; NATO STO, 2023), whereas only tasks of sufficient breadth and depth (i.e. comprising multiple subtasks) that could be aligned to a single psychological skill domain were of relevance to the development of CRA-T. Consequently, the number of available broad, representative tasks was limited. However, because each task comprised several subtasks, their UDA scores could be averaged.

The UDA task rating method (Rose, Radtke, et al., 1985) was applied to the five tasks selected by military SMEs to develop novel psychological domain retention curves for five of the domains (Cahillane et al., 2013). Application of the UDA requires the rating of task characteristics by individuals with expert knowledge of the task; it is not a psychometric measure of individual characteristics (Cahillane et al., 2019). Therefore, the accuracy of UDA ratings is not determined by having a specific number of SMEs (i.e. sample size) but rather by access to SMEs with the appropriate level of task knowledge (Rose, Radtke, et al., 1985). The number of SMEs rating each task, all of whom were military training instructors, was based on SME availability and whether taking part would impact on training course delivery. The UDA task rating was completed with between four and 10 SMEs for each task, with the exception of the BCD task, for which only one SME was available. However, this SME was a senior instructor who had played a key role in developing BCD training and the soldier aide memoire. Therefore, the researchers were confident in the accuracy of the ratings obtained for this task.

Before consulting with SMEs, the proposed protocol for data gathering was reviewed by Dstl against JSP 536 guidance. Dstl confirmed that an ethics application to the Ministry of Defence Research Ethics Committee (MODREC) was not required as the work fitted within the ‘service evaluation’ category of JSP 536 (UK MOD, 2022). It evaluated existing processes (i.e. tasks) by gathering SME views on factual, task-related information, presenting no significant ethical issues. It did not involve the collection of human performance data or information that could lead to the identification of individuals. At the time the work was undertaken, an ethics application was not required by Cranfield University for consultancy. This was in accordance with ethics processes in place at the time. Whilst ethical approval was not required, the conduct of the work adhered to JSP 536 ethical principles and was overseen by Dstl. Note: if this work went through Cranfield University’s current processes for the review of consultancy research, the risk level would be graded low (CURES/21003/2023).

The researchers were present to brief the SMEs on the UDA method and then facilitate a consensus on task ratings. Before completing the UDA task rating method, the SMEs were informed about the aims and objectives of the study. They voluntarily agreed to give their inputs by completing an informed consent form, which provided information on research ethics and data protection procedures. Each consultation required SMEs to gather as a group and involved a two-stage procedure described below.

Task Ratings – Stage 1

At Stage 1, SMEs completed the UDA questions individually and recorded their responses by hand on a printed task rating form. For each task, the 10 question items were applied at the subtask level. Having rated all subtasks, the SMEs were then asked to add the individual weighted rating values for each subtask.
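The Stage 1 arithmetic can be sketched as follows. This is a minimal illustration rather than the UDA rating form itself: the question labels, the weighted values and the use of `None` to mark a question skipped through conditional branching are all hypothetical, chosen only to show the summation one SME performs for one subtask.

```python
from typing import Optional


def subtask_total(weighted_ratings: dict[str, Optional[int]]) -> int:
    """Sum the weighted rating values one SME recorded for one subtask.

    Assumption of this sketch: a value of None marks a question that was
    skipped through conditional branching and contributes nothing.
    """
    return sum(value for value in weighted_ratings.values() if value is not None)


# Hypothetical weighted values for the 10 question items of one subtask.
example_ratings = {
    "Q1": 10, "Q2": 25, "Q3": None, "Q4": 20, "Q5": 15,
    "Q6": 10, "Q7": 20, "Q8": 10, "Q9": 15, "Q10": 10,
}

example_total = subtask_total(example_ratings)
```

How skipped items are actually scored is defined by the UDA form, so the treatment of `None` here should be checked against the form before any real use.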

To aid selection of the most appropriate responses, the UDA task rating form includes a ‘Definitions’ section for each question to enable SMEs to select the response which best matches their knowledge and experience of the task. The definitions help to increase the method’s reliability when multiple analysts are used. To illustrate, the definitions section for the question on built-in feedback explains that, in selecting an answer, the most important thing to consider is whether feedback to the job holder indicates correctness of performance at each step. Examples provided include cases where equipment does not allow subtasks and their elements to be performed out of sequence, or cues the steps required. The SMEs were asked to review each question and its associated ‘Definitions’ section, along with any available reference material for the task, for example, training documentation or aide memoires. To mitigate potential bias and variability, the researchers ensured the SMEs had a sufficiently firm understanding of the question items and available responses to enable them to apply their expert judgement to the rating of tasks. The researchers explained the definitions for each possible response in turn. The researchers also explained the presence of conditional branching for some items, that is, responses to some questions would determine whether an answer was required for the next question in the sequence or whether this should be skipped.

Task Ratings – Stage 2

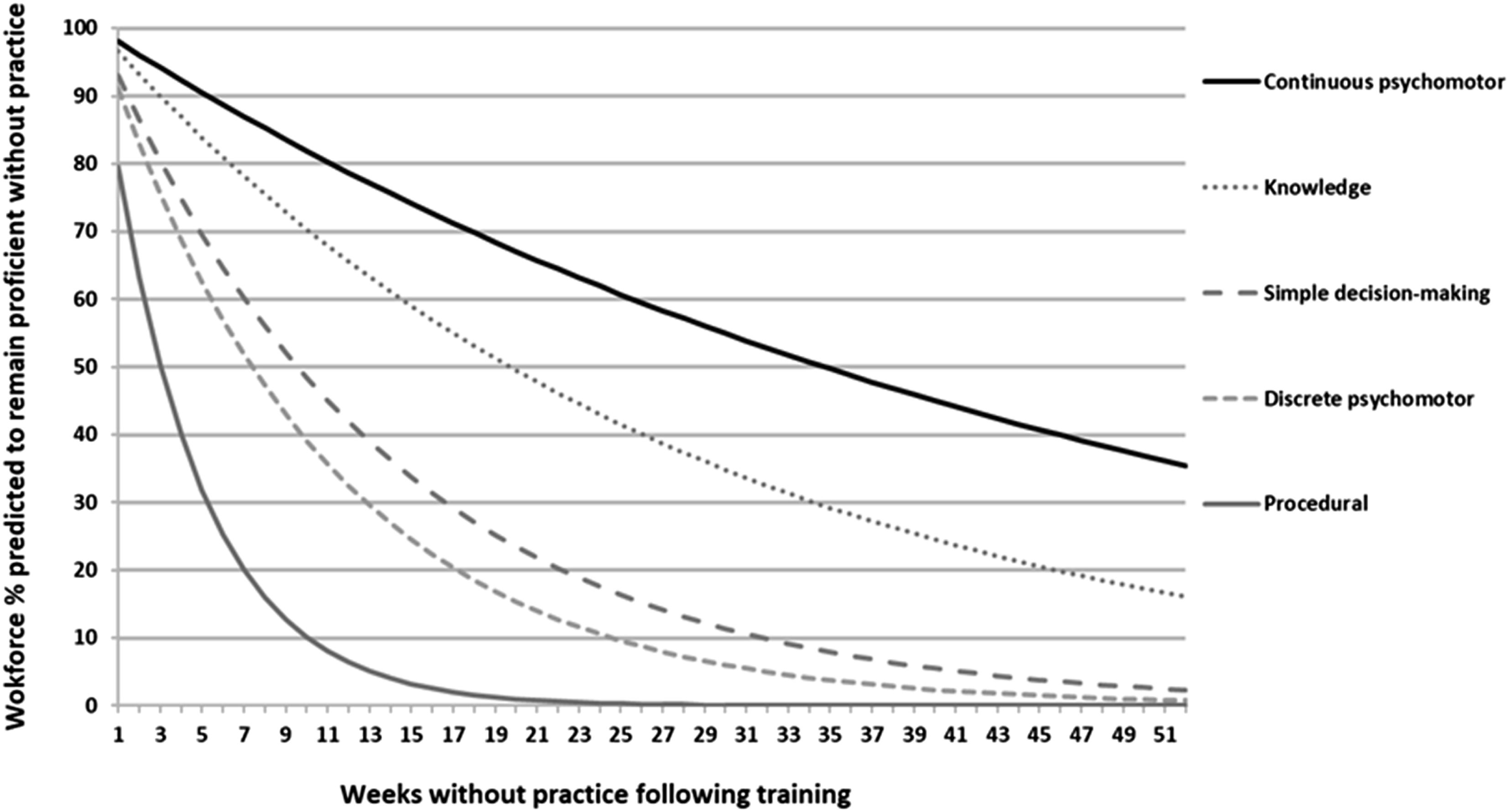

During the second stage, any differences in SME rating values assigned to the subtasks were identified and resolved through group discussion, facilitated by the researchers, to arrive at consensual agreement on the ratings for each of the five tasks. For each subtask, the agreed rating value for each question was recorded by the researcher. The agreed rating values were then totalled, and the average score calculated to produce a single score for each of the five psychological skill domains. The UDA formula was then applied to each domain score to produce indicative decay curves out to 12 months of non-practice for each psychological domain (see Figure 2).

Figure 2. Predicted decay curves for five psychological domains.
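The two Stage 2 calculations, finding where SME ratings differ and averaging the agreed subtask totals into a domain score, can be sketched as below. This is an illustration under stated assumptions: the SME names, question labels and all numeric values are hypothetical, and the UDA formula that converts a domain score into a decay curve is deliberately not reproduced because it is not given in this paper.

```python
from statistics import mean


def items_needing_discussion(sme_ratings: dict[str, dict[str, int]]) -> list[str]:
    """Return the question items on which the SMEs' rating values differ."""
    questions = next(iter(sme_ratings.values())).keys()
    return [
        question
        for question in questions
        if len({ratings[question] for ratings in sme_ratings.values()}) > 1
    ]


def domain_score(agreed_subtask_totals: list[int]) -> float:
    """Average the agreed (consensus) subtask totals into one domain score."""
    return float(mean(agreed_subtask_totals))


# Hypothetical individual ratings for one subtask from two SMEs.
ratings_by_sme = {
    "SME A": {"Q1": 10, "Q2": 25, "Q3": 15},
    "SME B": {"Q1": 10, "Q2": 20, "Q3": 15},
}
to_discuss = items_needing_discussion(ratings_by_sme)

# Hypothetical consensus totals for the three subtasks of one task.
score = domain_score([130, 140, 150])
```

In this sketch only the flagged items would go to group discussion; the consensus values themselves still come from the SMEs, not from any calculation.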

The indicative decay curves for the five psychological domains (see Figure 2) demonstrated differences in their predicted retention rate. This indicates that the psychological domains underpinning a task influence the extent to which skilled performance of that task is retained over 12 months. Since the UDA algorithm is based on the aggregation of individual performance data, the psychological domain decay curves represent the percentage of a workforce predicted to retain the skill (i.e. remain proficient) after a period without practice.

Retention Level Category Development

Those responsible in organisations such as the military for the design and delivery of training require a practical method for the analysis and prediction of skills retention; this is what the CRA-T, underpinned by the UDA algorithm (for explicit knowledge, physical and lower-order cognitive skills), was created to do. The taxonomy, together with the decay curves generated for five of the nine psychological domains, provided the foundation for the development of the CRA-T.

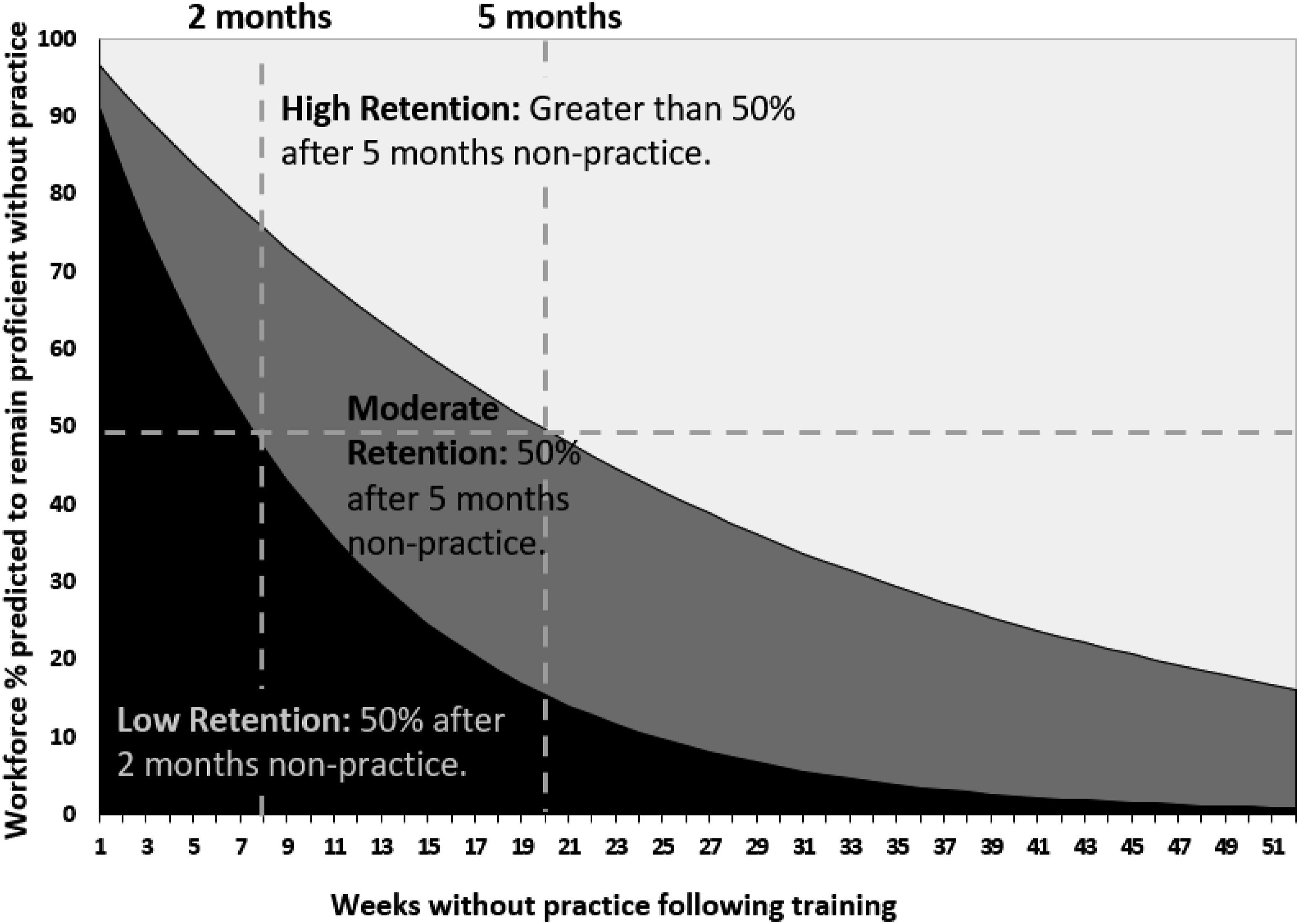

The decay curves for the psychological domains in Figure 2 were categorised into a practical traffic light system to generate three retention level categories. These retention categories were based on a UDA score range, derived from that which was obtained through applying the UDA to tasks representative of five psychological domains. The low retention (red) category represented a UDA score between 80 and 120. The moderate retention (amber) category represented a UDA score between 125 and 150 and the high retention (green) category represented skills that are easy to retain with a UDA score falling between 155 and 180.
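The traffic light categorisation described above can be expressed as a simple lookup. The sketch below uses only the score ranges stated in the text; the function and constant names are hypothetical, and because the text does not assign scores that fall between the stated ranges (for example 122), the sketch returns `None` for them rather than guessing.

```python
from typing import Optional

# Score bands as stated in the text; (lower, upper) bounds are inclusive.
RETENTION_BANDS = {
    "low (red)": (80, 120),
    "moderate (amber)": (125, 150),
    "high (green)": (155, 180),
}


def retention_category(uda_score: float) -> Optional[str]:
    """Map a UDA score to its retention level category, if one is defined."""
    for label, (lower, upper) in RETENTION_BANDS.items():
        if lower <= uda_score <= upper:
            return label
    return None  # score lies outside every stated band
```

For instance, `retention_category(140)` falls in the moderate (amber) band, whereas `retention_category(122)` is left unclassified.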

Using the UDA scores, Figure 3 translates the above retention level categories into indicative retention category ‘zones’: high (light grey, in place of green), moderate (dark grey, in place of amber) and low (black, in place of red). The indicative decay curves for each psychological domain in Figure 2 are aligned to these zones. These ‘generic retention zones’ provide a practical evidence base which can inform training policy decisions and be implemented by those who manage training requirements. The rationale behind generating the retention category zones in Figure 3, and therefore the range of UDA scores behind each category, is that the decay curves produced by the UDA algorithm have been found to be pessimistic when compared to actual performance data (Cianciolo et al., 2010; Rose, Czarnolewski, et al., 1985).

Figure 3. Retention category zones for the psychological domains.

The point at which 50% of the workforce are predicted to remain proficient was used to define the three retention level category zones (high, moderate and low) in Figure 3. It is entirely coincidental that these intervals are the same as two of the three retention test intervals (two and five months) used to gather data during the original UDA research, as reported by Rose, Czarnolewski, et al. (1985).
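Reading the 50% point off a decay curve can be sketched with linear interpolation between plotted points. This is an illustrative assumption about how such a point might be located, not the authors’ procedure; the function name is hypothetical and the curve values below are invented, not taken from Figure 2.

```python
from typing import Optional


def months_until_threshold(
    curve: list[tuple[float, float]], threshold: float = 50.0
) -> Optional[float]:
    """Estimate when the percentage proficient falls to the threshold.

    `curve` is a list of (month, percent proficient) points sorted by month.
    The crossing is found by linear interpolation between the two points
    that bracket the threshold. Returns None if the curve never crosses it.
    """
    for (m0, p0), (m1, p1) in zip(curve, curve[1:]):
        if p0 >= threshold > p1:
            return m0 + (p0 - threshold) * (m1 - m0) / (p0 - p1)
    return None


# Hypothetical decay curve out to 12 months of non-practice.
example_curve = [(0, 100), (3, 85), (6, 60), (9, 30), (12, 10)]
crossing = months_until_threshold(example_curve)
```

With these invented values the curve passes 50% between months 6 and 9, so the interpolated estimate is 7 months.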

A recent study (Peebles, 2024) assigned participants to one of three training schedule conditions (massed, spaced or mixed) to test PPE predictions of human performance. In addition to this main analysis, three subtasks from the Air Force Multi-Attribute Task Battery (AF-MATB: Miller et al., 2014) were each mapped to one of three CRA-T skill domains: a tracking task (continuous psychomotor domain), a resource management task (simple decision-making domain) and a communications subtask (procedural domain). The pattern of differences in the retention profiles for these subtasks was not in line with the CRA-T categorisation. For instance, in all three conditions, participants rapidly attained and retained very high accuracy levels in the communications subtask mapped to the procedural domain. However, the present authors assert that the AF-MATB is more representative of CRA-T’s integrative skill domain. It is a multitasking environment (Miller et al., 2014) involving the integration of coordinated skill domains underpinning the subtasks. Thus, the subtasks are performed concurrently rather than separately. The literature suggests such complex cognitive skills are better retained due to the deeper processing requirements needed to generate the task mental models underpinning performance (Sanli & Carnahan, 2018; Villado et al., 2013; Wang et al., 2013). Moreover, beyond the small sample size (n = 27), the study’s focus on individual performance (i.e. not workforce capability levels) means its results are not directly comparable with CRA-T.

The CRA-T Process – A Guide for Practitioners

The CRA-T is included within JSP 822 as an approach to the specification of job-related refresher training intervals (UK MOD, 2024). Application of the CRA-T involves five steps. Steps 1 to 4 of the CRA-T are required if individuals wish to know more about the retention of knowledge and skills within subtasks and wish to specify evidence-based refresher training intervals and priorities. Step 5 is relevant if individuals wish to apply the CRA-T output to identify relevant training design and delivery options to improve competence acquisition and retention. The five steps are described below to assist training practitioners in applying CRA-T.

Step 1 – Establish a Clear Understanding of the Task

A clear understanding of the task should be acquired or developed. The level of granularity required is determined by that information which provides the clearest understanding of the task or job, in terms of identifying the requisite knowledge and skills. Wherever possible, reference should be made to any key sources of information such as job scalars and role performance statements that are typically collected as part of a TNA. Other relevant training documentation could include a competence framework. These should provide a hierarchical description of all the elements and sub-elements of a job or role. For example, a learning scalar decomposes the Training Objective (TO)/task into Enabling Objectives (EOs)/subtasks and Key Learning Points (KLPs)/task elements. As more than one type of knowledge and/or skill can underpin an EO/subtask, the KLPs/task elements will provide the granularity needed to perform CRA and articulate the knowledge and skills required to complete the EOs/subtasks.

Step 2 – Match Subtasks/EOs to Psychological Domains

With a clear understanding of the skills and knowledge required for the job or role, the task elements/KLPs and subtasks/EOs which form the main task can then each be mapped to the nine psychological domains of the taxonomy. The mapping of knowledge and skills, represented by the task elements/KLPs and subtasks/EOs, to one of these psychological domains is important because the ability to retain these types of knowledge and skills varies. It is important to read and understand the psychological knowledge domain definitions in Table 2 and the psychological skill domain definitions in Table 3. This supports the matching of each task element/KLP and subtask/EO to the psychological domain that best represents the knowledge or type of skill required to perform it.

Considering the action verb used to describe the task elements/KLPs and subtask/EOs will help in mapping to the correct psychological domains. For example, the action verb ‘Describe’ indicates the explicit knowledge domain underpins performance. However, the action verb may not always provide a clear and practical indication of the psychological knowledge or skill domain being applied in the conduct of the task element/KLP or subtask/EO. For example, ‘Manage’ could indicate the procedural skills or simple decision-making skills domain. Therefore, SME knowledge of the task/job role is needed in order to best match task elements/KLPs and subtasks/EOs to the psychological domains. In some cases, it may also be necessary to look at the steps (i.e. the next level of granularity below the task element/KLP) to better inform understanding of the domains required at the task element/KLP level.

Mapping should start with the task elements/KLPs, as this provides the necessary granularity to inform the subsequent mapping of the subtasks/EOs and, where appropriate, tasks/TOs. Where all task elements/KLPs are mapped to the same psychological domain, then this domain should also be mapped to the subtask/EO. Where task elements/KLPs are mapped to a combination of psychological domains, but they are performed separately, then the psychological domain that is most susceptible to decay should be mapped to the subtask/EO. Where task elements/KLPs match a combination of two or more different psychological skill domains that are performed concurrently (i.e. integrated), the integrative domain is matched to the subtask/EO. Here, the integrative domain is responsible for managing attention to effectively coordinate and integrate the other psychological skill domains that underpin performance of this subtask/EO.

Where the subtasks are not all mapped to the same psychological domain, the task/TO cannot be mapped to a psychological domain because a combination of psychological domains is involved in performance of the task/TO. In such cases, only the task elements/KLPs and then the subtasks/EOs can be mapped to a psychological domain.
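The roll-up rules described in this step can be sketched in Python as follows. This is an illustration under stated assumptions rather than a definitive implementation: the function names and the `DECAY_RANK` table are hypothetical, the ranking is inferred from the indicative retention levels (procedural low; discrete psychomotor and simple decision-making moderate; knowledge and continuous psychomotor high), and the sketch does not check that concurrently performed domains are skill domains rather than knowledge domains. In practice, SME judgement remains necessary.

```python
from typing import Optional, Sequence

# Assumed susceptibility ranking (higher = more susceptible to decay).
# Inferred from indicative retention levels; ties are illustrative.
# Complex cognitive domains are omitted because their retention is not known.
DECAY_RANK = {
    "explicit knowledge": 0,
    "continuous psychomotor": 0,
    "discrete psychomotor": 1,
    "simple decision-making": 1,
    "procedural": 2,
}


def map_subtask(klp_domains: Sequence[str], concurrent: bool = False) -> str:
    """Roll task element/KLP domains up to a single subtask/EO domain."""
    if not klp_domains:
        raise ValueError("at least one task element/KLP domain is required")
    if len(set(klp_domains)) == 1:
        # All task elements share one domain: the subtask takes that domain.
        return klp_domains[0]
    if concurrent:
        # Different domains performed together: the integrative domain applies.
        return "integrative"
    # Different domains performed separately: take the most decay-prone one.
    # On a tie, the first listed domain wins (an arbitrary choice); a domain
    # missing from DECAY_RANK raises KeyError.
    return max(klp_domains, key=lambda domain: DECAY_RANK[domain])


def map_task(subtask_domains: Sequence[str]) -> Optional[str]:
    """Return the shared domain, or None when subtasks span several domains."""
    if len(set(subtask_domains)) == 1:
        return subtask_domains[0]
    return None


print(map_subtask(["explicit knowledge", "procedural"]))
```

Returning `None` at the task/TO level mirrors the point that a task whose subtasks span several domains is not itself assigned a domain.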

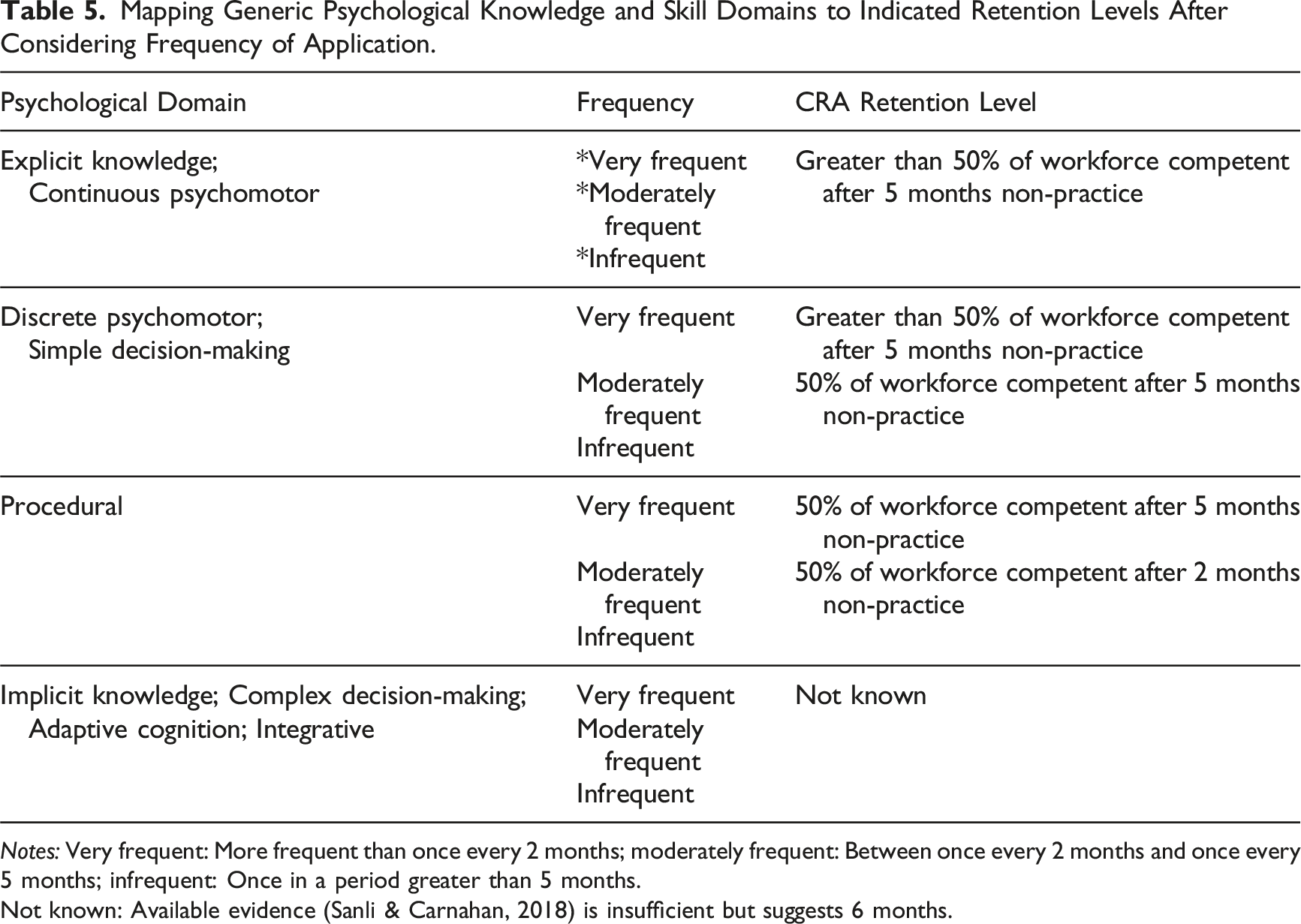

Step 3 – Consider Frequency of Application and Assign Retention Level

Mapping Generic Psychological Knowledge and Skill Domains to Indicated Retention Levels After Considering Frequency of Application.

Notes: Very frequent: More frequent than once every 2 months; moderately frequent: Between once every 2 months and once every 5 months; infrequent: Once in a period greater than 5 months.

Not known: Available evidence (Sanli & Carnahan, 2018) is insufficient but suggests 6 months.

The UDA model that underpins the CRA-T does not consider the frequency with which knowledge and skills are practised following training, which can moderate the rate at which they fade over time (Bryant & Angel, 2000; Cianciolo et al., 2010). Therefore, the potential moderating role of practice on the alignment of psychological domains with retention levels (Cahillane et al., 2020) can only be considered in terms of what would be expected based on the literature. Importantly, individuals with low and high general mental abilities benefit equally from practice to maintain cognitive skills (Frank & Kluge, 2018a). To illustrate, although procedural skills decay rapidly (Cahillane & Morin, 2012), if they are performed very frequently (i.e. more often than once every two months), a moderate rather than a low level of retention would be expected (Larsen, 2018). Where discrete psychomotor skills are performed very frequently, a high rather than moderate level of retention would be expected. This is because frequent practice of the motor element helps to bind the cognitive component (i.e. sequence of actions) in memory (Clawson et al., 2001; Wickens et al., 2013). Where simple decision-making skills are performed very frequently, this supports the development of expertise and a sensitivity to cues, patterns and expectancies (Klein, 1998). Therefore, a high rather than moderate level of retention would be expected.

The knowledge and continuous psychomotor domains are mapped to the high retention level regardless of frequency of practice, that is, the frequency of performance selected. Table 5 defines high retention as ‘greater than’ 50% of the workforce being competent after 5 months non-practice. However, in the high (light grey) retention zone in Figure 3, there is a large area of space above the 50% workforce proficiency point out to 12 months. This suggests 50% or more of a workforce could remain competent in subtasks underpinned by the knowledge and continuous psychomotor domains out to 12 months.

As no predictive model exists that could be applied to define a retention interval for the four complex cognitive skill domains, these are currently aligned to the ‘Not known’ interval. Available evidence of their retention is insufficient but suggests up to 6 months (Sanli & Carnahan, 2018).
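A minimal Python sketch of Step 3 follows. It encodes the frequency bands from the table notes and the effect of very frequent performance described above. The names `BASELINE`, `UPLIFT`, `frequency_band` and `indicated_retention` are hypothetical. Because the text describes only the very frequent case, the sketch deliberately does not model the moderately frequent and infrequent bands, and it treats any domain without a baseline as a complex cognitive domain (an assumption that would also hide misspelt domain names).

```python
# Baseline (no practice) retention levels as described in the text.
BASELINE = {
    "explicit knowledge": "high",
    "continuous psychomotor": "high",
    "discrete psychomotor": "moderate",
    "simple decision-making": "moderate",
    "procedural": "low",
}

# Effect of very frequent performance: one level up, capped at high.
UPLIFT = {"low": "moderate", "moderate": "high", "high": "high"}


def frequency_band(months_between_performances: float) -> str:
    """Band a performance interval using the thresholds in the table notes."""
    if months_between_performances <= 0:
        raise ValueError("interval must be positive")
    if months_between_performances < 2:
        return "very frequent"
    if months_between_performances <= 5:
        return "moderately frequent"
    return "infrequent"


def indicated_retention(domain: str, very_frequent: bool) -> str:
    """Return the indicated retention level for a psychological domain."""
    baseline = BASELINE.get(domain)
    if baseline is None:
        # Assumption: domains without a baseline are complex cognitive domains.
        return "not known"
    return UPLIFT[baseline] if very_frequent else baseline


is_very_frequent = frequency_band(1) == "very frequent"
print(indicated_retention("procedural", is_very_frequent))
```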

Step 4 – Consider Subtask/EO criticality

The output of Step 4 is an SME judgement about subtask/EO criticality. Criticality in this context refers to the impact of an inadequately performed subtask/EO on operational capability and safety. Subtask/EO criticality does not affect retention levels; however, subtask/EO criticality analysis should be completed to identify the risks carried with regards to the susceptibility of the subtasks/EOs to knowledge and skills fade. Considering the criticality of successful performance of subtasks/EOs can support training designers by informing the prioritisation of subtasks/EOs when making decisions regarding the allocation of resources to refresher training and/or the design and delivery of training. A typical criticality analysis might consider each subtask against the following three levels:

• Very Critical: Failure is likely to have a severe impact upon operational capability or the wellbeing of personnel or equipment.
• Moderately Critical: Failure is likely to have a moderate impact upon operational capability or the wellbeing of personnel or equipment.
• Not Critical: Failure is unlikely to have an impact upon operational capability or the wellbeing of personnel or equipment.
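One possible way to combine criticality with indicated retention when ordering subtasks/EOs for refresher training is sketched below in Python. The ordering rule (criticality first, then retention risk), the placement of ‘not known’ next to ‘low’ as a conservative choice, the `Subtask` class and the example names are all illustrative assumptions; CRA-T itself leaves prioritisation to SME and training designer judgement.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Lower number = higher priority. Both orderings are assumptions.
CRITICALITY_ORDER = {"very critical": 0, "moderately critical": 1, "not critical": 2}
RETENTION_ORDER = {"low": 0, "not known": 1, "moderate": 2, "high": 3}


@dataclass(frozen=True)
class Subtask:
    name: str          # hypothetical subtask/EO label
    criticality: str   # SME judgement from Step 4
    retention: str     # indicated retention level from Step 3


def prioritise(subtasks: Iterable[Subtask]) -> List[Subtask]:
    """Order subtasks by criticality, then by susceptibility to decay."""
    return sorted(
        subtasks,
        key=lambda s: (CRITICALITY_ORDER[s.criticality], RETENTION_ORDER[s.retention]),
    )


example = [
    Subtask("EO 1", "not critical", "low"),
    Subtask("EO 2", "very critical", "high"),
    Subtask("EO 3", "very critical", "low"),
]
for subtask in prioritise(example):
    print(subtask.name, subtask.criticality, subtask.retention)
```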

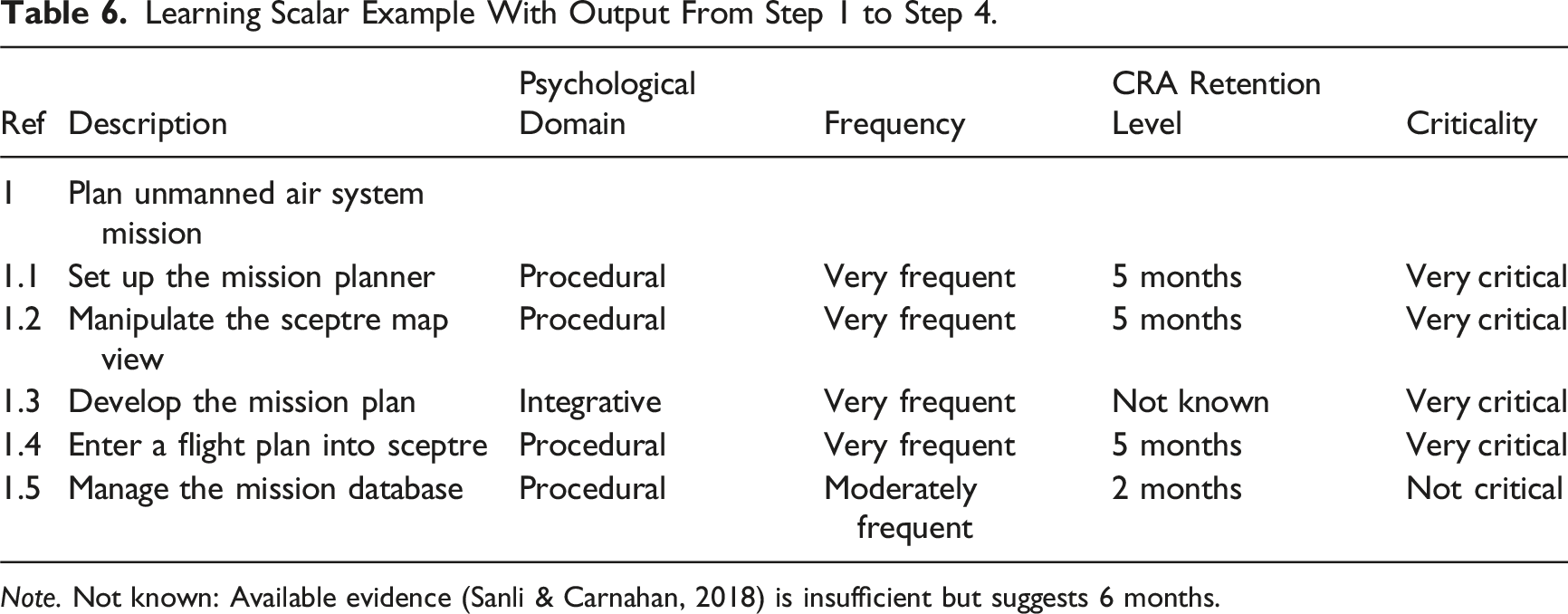

Learning Scalar Example With Output From Step 1 to Step 4.

Note. Not known: Available evidence (Sanli & Carnahan, 2018) is insufficient but suggests 6 months.

Step 5 – Use of CRA-T Output in Training Design and Delivery

This step considers factors other than task frequency that may influence the retention of the knowledge and skills underpinning the very critical and/or moderately critical subtasks/EOs identified in Step 4. Considering how these influencing factors can be addressed within training design and/or delivery supports the management and retention of knowledge and skill.

In addition to general influencing factors such as initial training conditions (Cianciolo et al., 2010; Vlasblom et al., 2020), there are specific influencing factors relevant to explicit knowledge, physical and simple cognitive skills and those which are applicable only to implicit knowledge and complex cognitive skills. In terms of the former, factors include part-task training (Angel et al., 2012); well-designed human-machine interfaces, such as the provision of recognition cues (Stothard & Nicholson, 2001; Xu et al., 2008), guiding an operator’s attention to significant areas on the screen (Frank & Kluge, 2018b), appropriate simulation fidelity (Cianciolo et al., 2010), task-oriented training (Sabol & Wisher, 2001) and interleaved practice (Pusic et al., 2012). Factors applicable only to complex cognitive skill domains include understanding task complexity (Stasielowicz, 2019; Wood, 1986), the development of task mental models (Wang et al., 2013) and introducing whole-task practice (Wickens et al., 2013).

Conclusions

UK MOD training and education policy recommends the CRA-T as a process grounded in psychological principles, specifically created to support the acquisition and retention of knowledge and skills (UK MOD, 2024). It provides training practitioners with a broad framework for considering competence retention at the workforce level. It makes general assertions and projections about psychological domain retention and can be used to inform training management decisions regarding the design and delivery of refresher training. The CRA-T has also been incorporated into a practical handbook for military training practitioners (UK MOD, 2024). It has successfully been applied to identify the psychological domains and retention levels for a range of military tasks (NATO STO, 2023) involving physical and lower-order cognitive skills. Examples include the use of digital BIMS (Cahillane et al., 2015a), military annual training tests (Cahillane et al., 2015b) and the maintenance and operation of military vehicles (Webb et al., 2013). CRA-T could also be applied to identify the psychological domains and retention levels for safety critical tasks in other industries. Examples include, but are not limited to, tasks performed by nuclear plant and safety workers, oil riggers and uncrewed system operators.

The CRA-T is internationally recognised as a significant evolution of the long-standing empirically based UDA (NATO STO, 2023). It simplifies the process of understanding skill retention for practitioners by removing the need to apply the UDA, a resource-consuming rating method requiring consensus among multiple SMEs. By identifying the psychological domains involved in task performance, insights can be gained into the retention of these components, allowing the determination of those requiring extra attention. Task decay curves generated by the UDA do not provide this level of granularity.

The CRA-T is intended to assist defence personnel with responsibility for making decisions concerning the management, development and design of training in reducing the impact of skill decay and enhancing competence retention. Although it was developed for Defence training purposes, the principles of CRA-T are equally applicable to practitioners in any large organisation where systematic approaches to training analysis and design are required. Specifically, it is relevant to two audiences. Training analysts can use CRA-T outputs during the TNA process to justify training solutions and the training budget. Training designers can use CRA-T outputs to inform the specification of training priorities (initial and refresher) and the scheduling of refresher training or practice intervals. The outputs can also be used during the development of training to mitigate subsequent knowledge and skills decay. Furthermore, the CRA-T can be applied retrospectively to evaluate and improve extant training designs.

A limitation of the CRA-T is the requirement for extensive longitudinal empirical data to define retention levels for the complex cognitive skill domains. The focus on lower-order physical and cognitive skills and their retention thus limits the framework’s generalisability. However, CRA-T does have applicability to complex real-world tasks, given these will increasingly involve skill combinations and dependencies between simple and complex cognitive skills. It may be that any decay in lower-order simple cognitive skills impacts complex cognitive skill performance. Application of CRA-T’s taxonomy can support training practitioners in identifying cognitive complexity within tasks. To date, it has served to identify complex cognitive domains in a range of military tasks, including responding to an incident and carrying out decision-making in an emergency (Cahillane et al., 2020). Note that such complex tasks are carried out in contexts other than the military.

A further limitation is the use, during development, of a single task to represent each psychological domain. Only tasks of sufficient breadth and depth (i.e. comprising multiple subtasks) that could be aligned to a single psychological skill domain were of relevance to the development of CRA-T, constraining the choice of tasks. The number of tasks was further restricted by resource constraints associated with applying the UDA with multiple SMEs to develop CRA-T’s retention categories. Such constraints are typically experienced in the conduct of applied research in the field and within highly realistic environments. Nevertheless, multiple tasks under each psychological domain would afford the generation of multiple retention curves to assess the variability among UDA scores. This would enable identification of the extent to which tasks representative of the same domain generate similar predictions of retention. As derived from the subtask UDA scores for each of the five tasks used to develop CRA-T, an average retention curve could be calculated for multiple whole tasks and used as the basis for CRA-T predictions. Future work should obtain observational performance data to validate CRA-T’s retention categories and the underpinning psychological decay curves derived from application of the UDA.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research was funded by the Defence Science and Technology Laboratory (DSTL) through the Defence Human Capability Science and Technology Centre (contract number: DSTLX-1000069524) and the Human and Social Sciences Research Capability (contract number: DSTL/AGR/01035/01).

Data Availability Statement

Research data are not shared. Data supporting this study cannot be made available due to commercial restrictions and the nature of the research (i.e. classification of the military tasks and subtasks used to represent the psychological domains).