Abstract

Plastic surgeons routinely use 3D models in their clinical practice, ranging from 3D photography and surface imaging to 3D segmentations of radiological scans. However, these models are still viewed on flat 2D screens, which do not convey 3D relationships intuitively and make collaboration with colleagues difficult. The Metaverse has been proposed as a new generation of applications that build on modern Mixed Reality headset technology. These applications allow real-time remote collaboration on virtual 3D models in a shared physical-virtual space. We demonstrate the first use of the Metaverse in reconstructive surgery, focusing on preoperative planning discussions and trainee education. Using a HoloLens headset with the Microsoft Mesh application, we performed planning sessions for four DIEP flaps in our reconstructive metaverse. The sessions used virtual patient models segmented from routine CT angiography. In these sessions, surgeons discussed perforator anatomy and perforator selection strategies while comprehensively assessing the respective models. We demonstrate the workflow for a one-on-one interaction between an attending surgeon and a trainee in a video showing both viewpoints as seen through the headset. We believe the Metaverse will provide novel opportunities to use the 3D models already created in everyday plastic surgery practice in a more collaborative, immersive, accessible, and educational manner.

Background & Need

“The Metaverse” has become omnipresent in recent technology news and can be defined as the “convergence of virtually-enhanced physical reality and physically persistent virtual space”. 1 Recent advances in Augmented and Mixed Reality headsets have now made this technically feasible: an experience that blends virtuality and reality.

Elmer et al. 2 proposed potential applications of the Metaverse in plastic surgery, such as global outreach, patient education, resident training, and preoperative planning. Shafarenko et al. 3 highlighted multiple advantages of model-based simulation in Mixed Reality for plastic surgery planning and education.

Plastic surgeons already routinely use 3D photography to create digital patient representations. 4 They also use 3D segmentation models from radiological imaging data for preoperative virtual surgical planning in complex reconstructions.

However, these models are still viewed on 2D screens, which greatly diminishes their potential to make three-dimensional anatomical relationships easier to comprehend. Bringing these existing high-fidelity 3D models into the Metaverse requires little additional effort. It could facilitate communication within the surgical team, for example in resident education on patient-specific 3D anatomy and in cross-specialty case conferences. It could also improve communication with adjacent disciplines such as pathology or radiology, enabling remote, visual, and intuitive discussion of 3D models rather than relying on written reports alone. 5

Methodology and Technique Description

Here, we present our vision of the reconstructive metaverse for two purposes: preoperative surgical planning across the surgical team, and concurrent education of trainees in surgical decision-making strategies.

Virtual models of the patient’s skin surface, rectus abdominis muscle, and deep inferior epigastric artery (DIEA) vascular tree were created from CT angiography (CTA; high-resolution mixed arterial-venous phase), as previously reported. 6 CTA has been established as the standard preoperative imaging modality for optimal perforator differentiation in breast reconstruction. 7,8 The models were color-coded as follows: skin in translucent grey, the rectus abdominis muscle in translucent blue, and arteries and perforators in opaque red. Different intensities of red distinguish supra-fascial, intramuscular, and submuscular vascular segments.

We imported these models into Microsoft Mesh on Microsoft HoloLens 2® (Microsoft, Redmond, WA, USA). HoloLens is a Mixed Reality headset that superimposes digital models directly onto the user’s real-world surroundings and supports natural hand interaction. Mesh is Microsoft’s interpretation of a Metaverse application for professional collaboration on digital models. The models can be annotated with different markings and positioned freely in space, creating a shared physical-virtual space for all users. Each user is represented by an avatar, which is placed according to that user’s actual viewpoint relative to the model, allowing life-like interaction. Because each avatar appears where the corresponding user is actually standing, users can physically walk around the model and, most importantly, see everyone’s hands interacting with it. Accurate hand tracking in particular makes interaction with digital models very intuitive: one simply points at an anatomical structure of interest. Mesh also runs a conference call in the background, permitting real-time verbal communication among users.

Preliminary Results

We performed four preoperative planning discussions for deep inferior epigastric artery perforator (DIEP) flaps in our reconstructive metaverse.

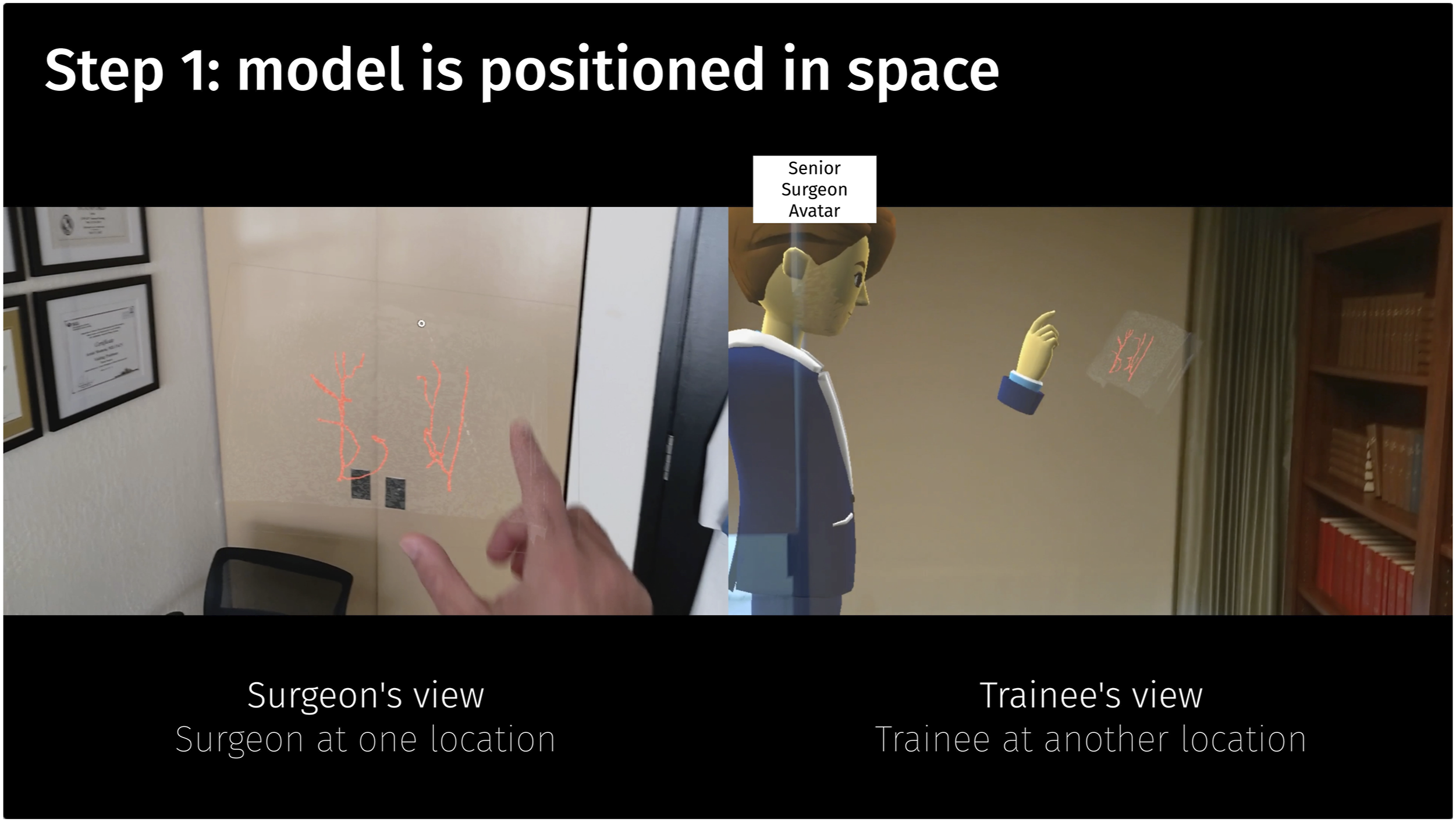

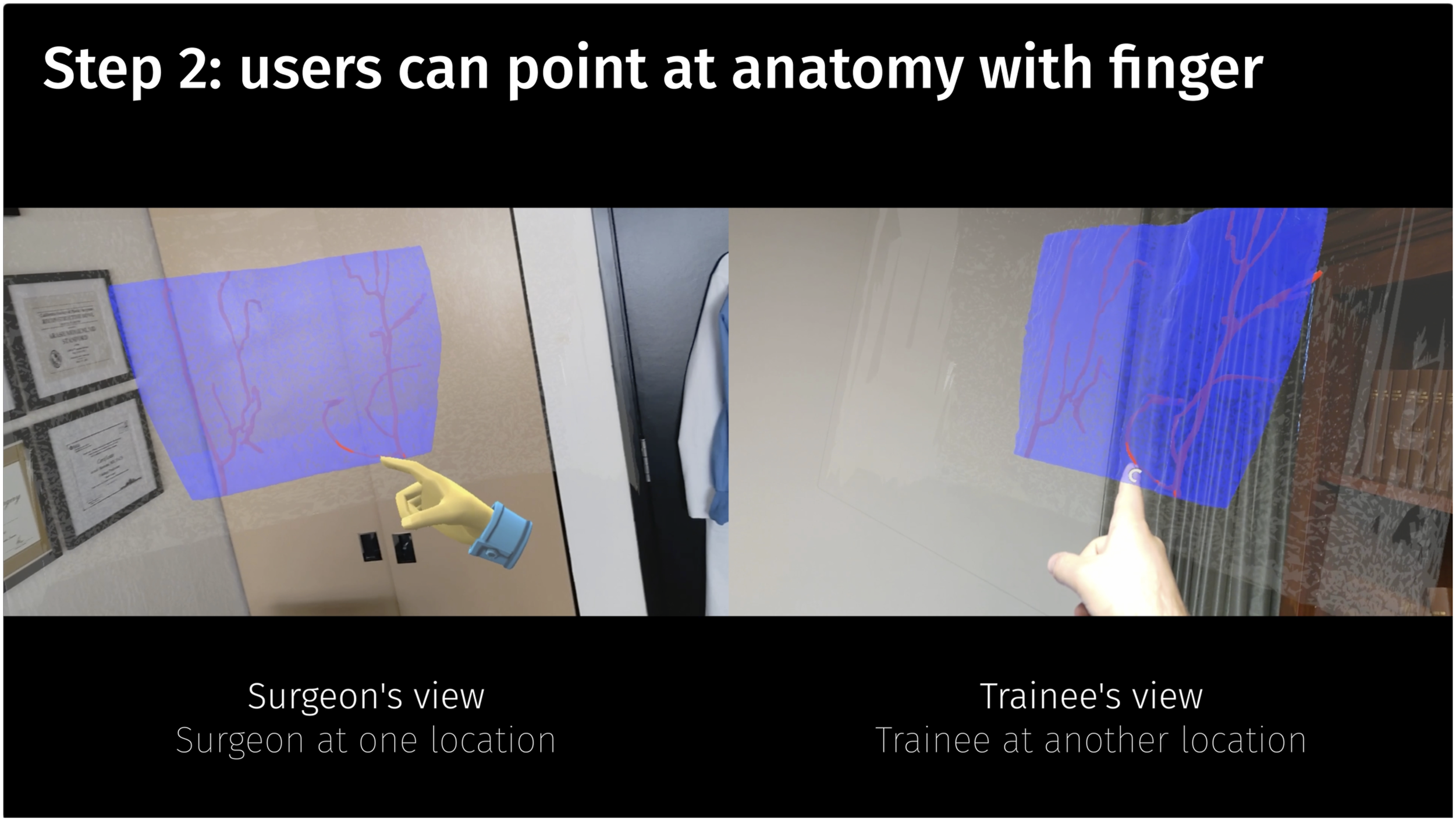

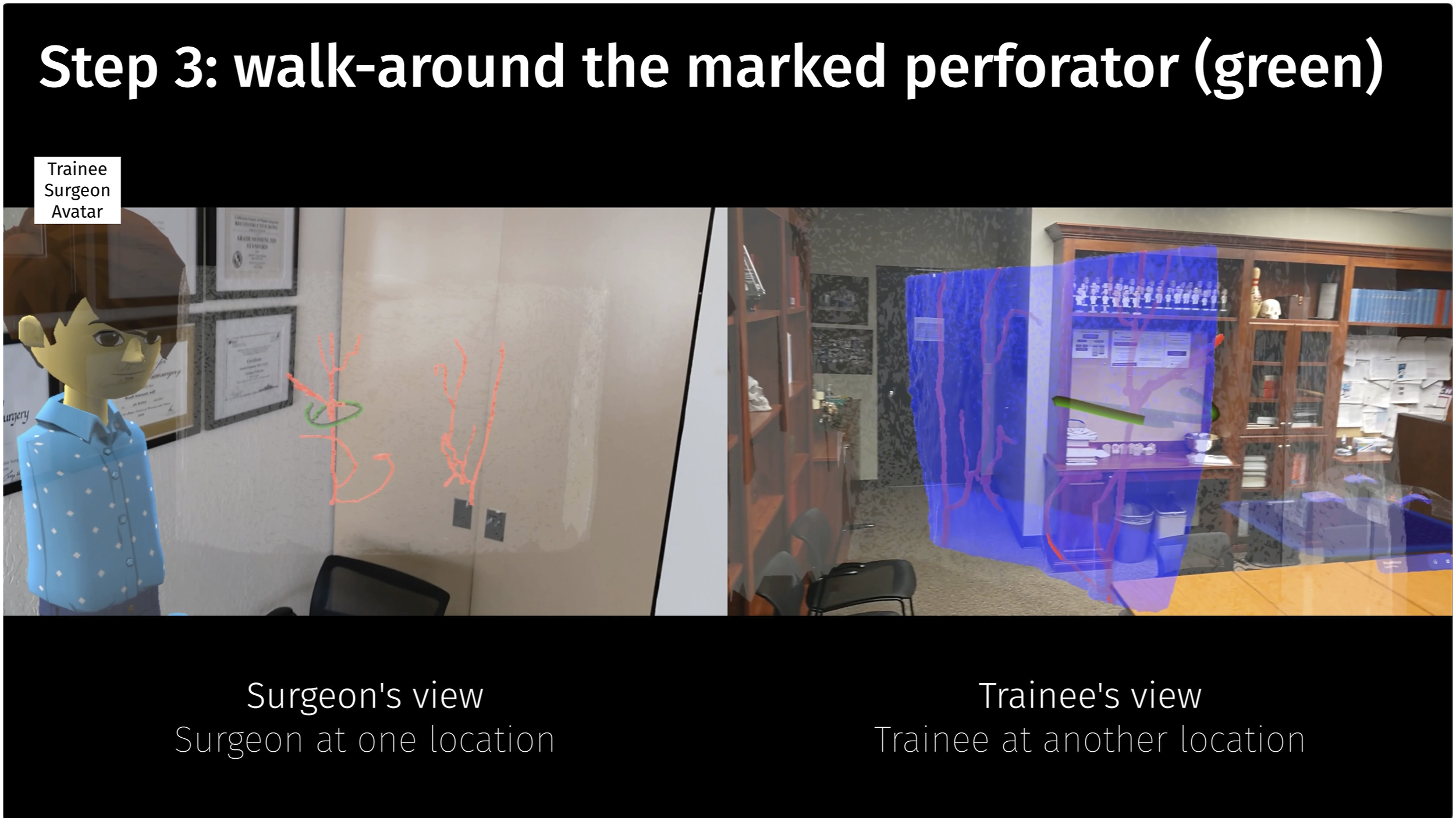

For simplicity, Video 1 demonstrates the entire workflow as a one-on-one interaction between an attending surgeon and a trainee. The surgeon (avatar in a suit) picks one of the cases from a table and positions it in space for easy viewing (Step 1, Figure 1). The model is then rotated to a posterior-anterior view, which makes the perforators embedded in the blue muscle visible. This view allows, for example, a discussion of eccentricity as a consideration in perforator selection. The trainee uses a finger to point out parts of the DIEA and perforators of interest (Step 2, Figure 2). Finally, the model is rotated back to an anterior-posterior view, and the perforator to be harvested is marked with a green 3D pencil stroke. Meanwhile, the trainee (avatar wearing a shirt) can walk around the model during the discussion. This lets the trainee observe the marked perforator and gain a full 3D spatial understanding of its location within the surrounding anatomy (Step 3, Figure 3).

Multiple users, here a senior attending surgeon and a junior resident surgeon, have joined a session in the reconstructive metaverse from completely independent locations. First, a case is picked and can be positioned freely in the shared space. Note that the senior surgeon’s hands and avatar position correspond directly to their real position relative to the model. Owing to highly accurate hand tracking, users can simply use their fingers to point at anatomical structures. Avatars are represented correctly relative to the model in the shared cyber-physical space. As a result, users can walk around the model and are shown to fellow participants in their true location, matching their perspective on the abdominal model.

Current Status

To our knowledge, this is the first demonstration of preoperative plastic surgery planning in the Metaverse. In it, an attending surgeon and a trainee, at independent physical locations, discuss a DIEP flap harvest entirely remotely. They work on a digital abdominal model derived from CT angiography in a shared physical-virtual space. The demonstration shows how easily the patient’s anatomy can be studied collaboratively. It is an example of how technology can be leveraged to improve collaboration and interaction between team members, regardless of their physical location.

Using these models in the metaverse, we observed several benefits. First, because team members did not have to meet in the same location, spontaneous scheduling of preoperative discussions with the entire team became much easier. Second, trainees felt more confident and comfortable during flap dissection. They attributed this to a more intuitive understanding of the anatomy, having become familiar with the patient-specific anatomy in a more natural way. Third, the senior surgeon felt that pedicle dissection was faster owing to 3D preplanning. Furthermore, residents particularly appreciated being able to discuss the intramuscular and submuscular course of the vascular pedicle preoperatively, which is normally challenging when the anatomy is unknown. Finally, all team members highly appreciated the 3D visualization and interactivity compared with conventional 2D greyscale slice display. Future studies are needed to investigate the impact on surgical performance and outcomes.

As can be seen in the video, the semitransparent blue muscle is visible from some perspectives but not from others. We suspect this reflects a limitation of the rendering shaders currently used in Microsoft Mesh, which may be resolved in future versions of the software. We believe the Metaverse will open up many new opportunities. A 3D representation of a patient’s true anatomy allows easier understanding and collaboration than a 2D depiction, from anywhere, by anyone on the team, and in real time.

Acknowledgments

We would like to thank Chris Le Castillo, RT, MS, from the 3D and Quantitative Imaging Laboratory (3DQ Lab) at the Department of Radiology, Stanford University School of Medicine, for his support in creating the 3D models for this study. The present work was performed in fulfillment of the requirements for obtaining the degree “Dr. med.” for Fabian Necker. We would like to thank BaCaTeC, Bavaria California Technology Center, Erlangen, Germany, for funding Fabian Necker and Michael Scholz for this project.

Author Contributions

F. Necker, D. Cholok, M. Shaheen, M. Fischer, K. Gifford, T. Chemaly, C. Leuze, M. Scholz, B. Daniel, A. Momeni.

1. Made a substantial contribution to the concept or design of the work; or acquisition, analysis or interpretation of data.

2. Drafted the article or revised it critically for important intellectual content.

3. Approved the version to be published.

4. Each author should have participated sufficiently in the work to take public responsibility for appropriate portions of the content.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: F. Necker is a part-time research student at Siemens Healthineers (Erlangen, Germany). Dr. C.W. Leuze is a co-founder and owner of Nakamir Inc (Menlo Park, CA). Dr. A. Momeni is a consultant for AxoGen, Gore, RTI, and Sientra. The remaining authors have no conflict of interest to disclose.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: F. Necker and Dr. M. Scholz have kindly been supported by BaCaTeC – Bavaria California Technology Center, Erlangen, Germany (funded by the Bavarian State Ministry for Science and Art). Project title: Patient-specific visualization of (vascular) anatomy in mixed reality for interventional and surgical planning; Start of funding: 01/01/2022. F. Necker is a scholarship holder at the graduate center of the Bavarian Research Institute for Digital Transformation (Munich, Germany) funded by the Bavarian State Ministry for Science and Art.

Supplemental Material

Supplemental material for this article is available online.

References
