Abstract

Learning surgical skills requires critical visual-spatial motor skills. Current learning methods rely on costly and limited in-person teaching, supplemented by videos, textbooks, and cadaveric labs. Increasingly limited healthcare resources and in-person training have led to growing concerns about trainees' skills acquisition. Recent Mixed Reality (MR) devices offer an attractive solution to these resource barriers by providing three-dimensional holographic representations of reality that mimic in-person experiences in a portable, individualized, and cost-effective form. We developed and evaluated two holographic MR models to explore the feasibility of visual-spatial motor skill acquisition from technical development, learning, and usability perspectives. In the first, a pair of holographic hands was created and projected in front of the trainee, and participants were evaluated on their ability to learn complex hand motions in comparison to traditional video and apprenticeship-based learning. The second displayed a 3D holographic model of the middle and inner ear with labeled anatomical structures that users could explore, and user experience feedback was obtained. Our studies demonstrated that scores for MR and apprenticeship learning were comparable. All participants felt MR was an effective learning tool, and most noted that the MR models were better than existing didactic methods of learning. Identified advantages of MR included the ability to provide true 3D spatial representation, improved visualization of smaller structures in detail by upscaling the models, and improved interactivity. Our results demonstrate that holographic learning is able to mimic in-person learning for visual-spatial motor skills and could be a new effective form of self-directed apprenticeship learning.

Introduction

Learning anatomy and hands-on surgical skills are core components of surgical education. Such visual spatial motor skills are particularly important in fields such as plastic surgery, which involve complex reconstructions of delicate components like the hands and face and require advanced anatomical spatial understanding and fine hand-eye motor coordination.1 To gain proficiency in anatomy and surgical skills, the current gold standard is in-person “you see one, you do one” apprenticeship learning in the operating room and in cadaveric labs on human or animal specimens.2,3 However, increasingly limited healthcare resources and restricted work hour policies have led to progressively less in-person training time, with growing concern about trainees having to acquire increasing amounts of skills and knowledge within the same time.4,5 This problem was highlighted during the COVID-19 pandemic, which saw a 50-75% reduction in elective surgical OR time for all plastic surgery residents, placing emphasis on the need for effective self-directed learning methods outside of the hospital.6

Currently, surgical residents still predominantly use lectures, textbooks, and videos as their self-study tools, yet most perceive these traditional tools to be inadequate or insufficient to prepare them for real-life operations.6 To improve upon these self-directed methods, a variety of digital innovations have been developed, ranging from high-fidelity interactive 3D computer models for learning anatomy, to 3D apps on tablets that illustrate the steps of a surgical procedure, to sophisticated computer workstations where trainees use external controllers/instruments with motion tracking to practice procedures in a virtual environment.7–10 Many studies have demonstrated the effectiveness of these digital tools for learning anatomy and surgical skills compared to traditional methods, including enhanced learner motivation, improved scores and confidence, transfer of skills to reality, longer retention times, and shorter learning curves.2,11,12

However, despite all these benefits, studies have also shown a lack of adoption of digital self-study tools across all levels of medical training. For example, a 2020 survey of 115 plastic surgery residents in Italy showed that only 13% actively used virtual/digital tools in their independent learning, while another study showed that the majority of medical students still preferred cadaveric dissection and traditional 2D media over digital learning tools for learning anatomy.6,13 Common barriers included lack of accessibility, lack of portability, and high cost, with many of the more sophisticated simulators ranging from $80,000 to $200,000.14 For the more accessible technologies like interactive 3D anatomical models, students found that viewing 3D models on a 2D display lacked true three-dimensional representation compared to real life, preventing widespread adoption and use.13

In recent years, new mixed reality (MR) technology headsets such as the Microsoft HoloLens may overcome existing barriers by providing true three-dimensional holographic representations of reality that could mimic in-person experiences in a portable and cost-effective form. Unlike virtual reality (VR) technology where everything you see is virtual, these MR headsets have transparent lenses that allow you to see life-like 3D holograms within your physical space. With integrated tracking sensors, you can manipulate these 3D holograms naturally using your own hands or physical tools and walk around the holograms as if they were truly objects in your real space (Figure 1).14,15 Early development versions of such advanced MR headsets already retail from $500 to $3500 USD. Furthermore, a handful of studies are already showing that holographic learning of anatomy using the Microsoft HoloLens can mimic in-person cadaveric learning experiences.16 This ability to mimic and interact naturally with life-like objects in a portable, accessible, cost-effective manner has spurred much excitement for the potential use of MR headsets like the HoloLens in self-directed education.

Figure 1. Learner wearing a Microsoft HoloLens headset to view and interact with 3D holograms in her physical space. The above image “Augmented Reality for Medicine” by Valery Heritier is licensed under CC BY-NC-ND 2.0.

Self-directed education in innovative and highly technical fields such as plastic surgery could benefit significantly from MR technology such as the HoloLens,17 which raises the question: can we use holograms for visual spatial skills acquisition and mimic in-person training experiences? To date, no studies have explored the feasibility of doing so. To move toward that reality, we developed holographic models that can be displayed through the HoloLens and, through two pilot studies, explored the feasibility of visual spatial motor skill acquisition from technical development, learning, and usability perspectives. Specifically, pilot study one examines the feasibility and effectiveness of learning visual spatial motor skills such as hand motions from a pair of holographic hands compared to traditional methods of apprenticeship and video-based learning. Pilot study two explores medical trainees' perspectives on the feasibility of learning visual spatial anatomy from a holographic model of the middle and inner ear.

Methods

Pilot Study 1: Holographic Hand Motions

Concept

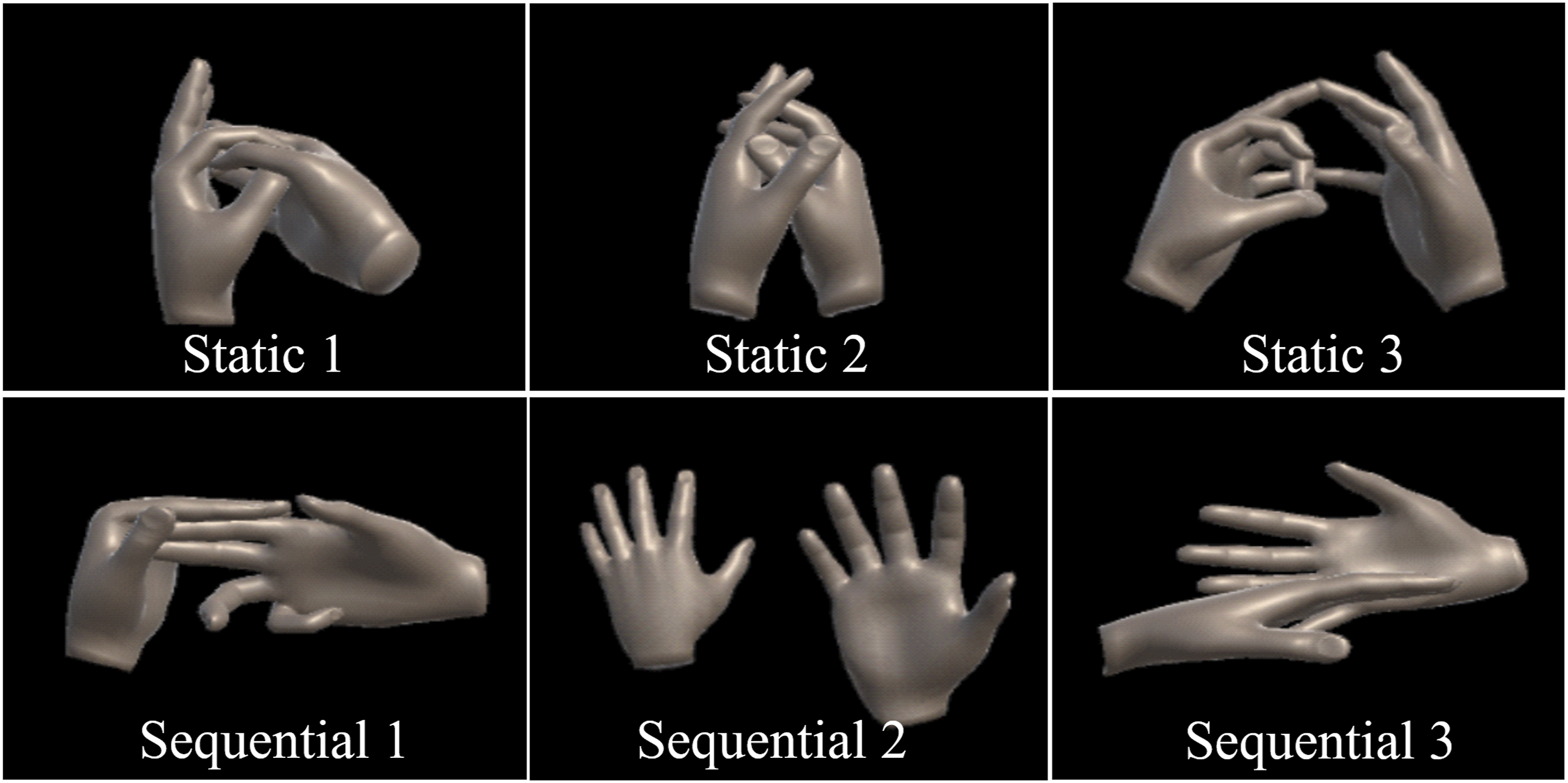

A pair of holographic hands was created and projected in front of the trainee using the Microsoft HoloLens (Figure 2). The holographic hands performed a variety of custom-made hand motions involving dexterity and hand-eye coordination, such as precise positioning of the fingers of one hand with respect to the other hand in a specific sequence (Figure 3). The trainee could choose to learn the skill by observing, or they could overlay their hands on top of the holographic hands for guidance to mimic the apprenticeship learning experience.

Figure 2. Left: Trainee wearing HoloLens learning hand motions. Right: First person view of trainee’s hands and holographic hands seen through the HoloLens. Trainee can overlay their hands on top of holographic hands for guidance in learning hand motions.

Figure 3. Six hand motions learned by participants through video, apprenticeship, or holographic hands. Static hand motions (top row) consist of a single pose whereas sequential hand motions (bottom row) consist of a sequence of hand poses.

Implementation

The 3D holographic hands and hand motions displayed on the HoloLens were created using MakeHuman, Blender, and Unity (2017 LTS version) software. MakeHuman was used to create static 3D meshes of a pair of hands. Blender, an open-source animation software, was then used to animate the 3D hand meshes to create hand motions. Six custom hand motion animations were created, as illustrated in Figure 3. Three of the animations are classified as static, indicating a single hand pose, and the other three are classified as sequential, indicating a sequence of hand poses. These animations were then imported into Unity to be either played directly on the laptop for video-based learning or streamed remotely to the HoloLens, where the user would see the 3D holographic hand animations playing in front of them in space (Figure 2). For apprenticeship learning, the hand motions modeled by the animations were demonstrated to the participant in person by the author of this study. The hand motion animations and live demonstrations were shown in a continuous loop for all three learning modalities.

Validation

We explored the feasibility and effectiveness of learning hand motions from holographic hands in comparison to traditional methods of video and apprenticeship-based learning. Nine (9) participants in total with no prior experience using the HoloLens were recruited for the study. All participants received training with the HoloLens at the start of their session. Each participant learned a total of six hand motions (Figure 3) from 3 different modalities (video, apprenticeship, and holographic hands with the HoloLens) split in the following way: a set of static hand motions (1 video, 1 apprenticeship, 1 HoloLens) followed by a set of sequential hand motions (1 video, 1 apprenticeship, 1 HoloLens). The hand motions to be learned for each modality were assigned randomly to each participant, and no limits were placed on the amount of time allowed to learn each motion. Successful completion, defined as demonstrating the motion to the author without correction, was recorded. Upon completion of learning each set of hand motions (static or sequential), the participant was asked to fill out a questionnaire asking them to rate the effectiveness of each learning modality on a scale of 1-5 (5 highest), to state their preferred learning method, and to give their opinion on whether learning from holographic hands was comparable to learning from apprenticeship. General comments/feedback were also collected.
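The assignment scheme described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the procedure actually used in the study: the motion labels are hypothetical placeholders for the six motions in Figure 3, the fixed order in which modalities are presented within a set is assumed, and the function names and seed are invented for the example.

```python
import random

MODALITIES = ["video", "apprenticeship", "hololens"]

# Hypothetical labels; the paper identifies the six motions only through Figure 3.
STATIC_MOTIONS = ["static_1", "static_2", "static_3"]
SEQUENTIAL_MOTIONS = ["sequential_1", "sequential_2", "sequential_3"]


def assign_motions(rng):
    """Randomly pair each modality with one static and one sequential motion.

    The static set comes first, then the sequential set, as in the study design.
    The order of modalities within a set is an assumption of this sketch.
    """
    schedule = []
    for motion_set in (STATIC_MOTIONS, SEQUENTIAL_MOTIONS):
        shuffled = rng.sample(motion_set, k=len(motion_set))
        schedule.extend(zip(shuffled, MODALITIES))
    return schedule


def build_schedules(n_participants=9, seed=0):
    """Build one randomized schedule per participant (seeded for repeatability)."""
    rng = random.Random(seed)
    return {pid: assign_motions(rng) for pid in range(1, n_participants + 1)}


if __name__ == "__main__":
    for pid, plan in build_schedules().items():
        print(pid, plan)
```

Each participant's schedule contains six (motion, modality) pairs, with every modality used once per set, which matches the split of one video, one apprenticeship, and one HoloLens motion per set.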

Pilot Study 2: Holographic Anatomy

Concept

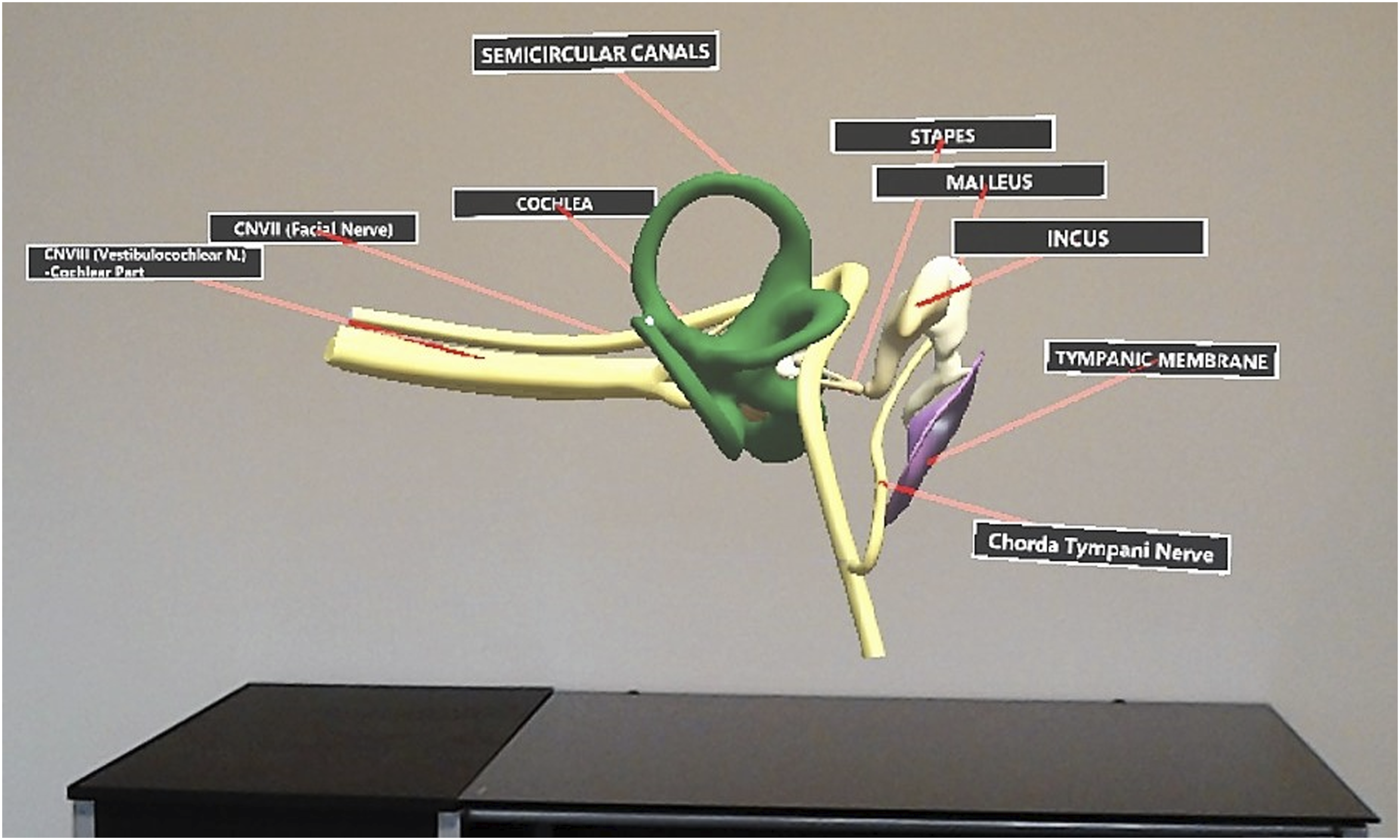

A 3D holographic model of the middle and inner ear with labeled anatomical structures is projected in front of the user using the Microsoft HoloLens (Figure 4). The user can choose to observe the holographic model from afar or walk around the model for spatial exploration as if the model existed in their real physical space. The user can also see a visual cursor controlled by their gaze to point at structures. No gesture interactions were implemented for the current model.

Figure 4. Holographic model of the middle and inner ear seen by the trainee through the HoloLens.

Implementation

The object data for the holographic model used in this study originated from Campbell’s 3D computer model of the inner ear,18 which was derived from Funnell’s segmented MRI data of a human cadaver, “3D Ear”, at McGill University.19 Both Campbell’s and Funnell’s data models are publicly available and were used under CC BY-NC-SA 1.0 and CC BY-NC-SA 4.0 licenses respectively. Multiple Ear, Nose, and Throat (ENT) surgeons at Queen’s University were also consulted on the accuracy of the model. The 3D object data of the middle and inner ear was imported into Unity to design and develop the holographic model displayed on the Microsoft HoloLens in this study.

Validation

To explore trainees’ perspectives on using a holographic model for learning middle and inner ear anatomy, twenty-six (26) second year undergraduate medical students at Queen’s University were recruited to demo the holographic model using the Microsoft HoloLens. Each student was trained to use the HoloLens, shown the holographic model, and given as much time as needed to explore and learn. Feedback on feasibility and effectiveness as a learning tool was collected through a questionnaire using Likert scale ratings (1-5). Freeform comments were also collected to further explore students’ unrestricted perceptions of HoloLens hologram learning in comparison to existing methods (e.g., didactic lecture, textbook, 3D computer models) and areas of improvement. Participants were encouraged to provide such comments, although doing so was not mandatory.

Results

Pilot Study 1: Holographic Hand Motions

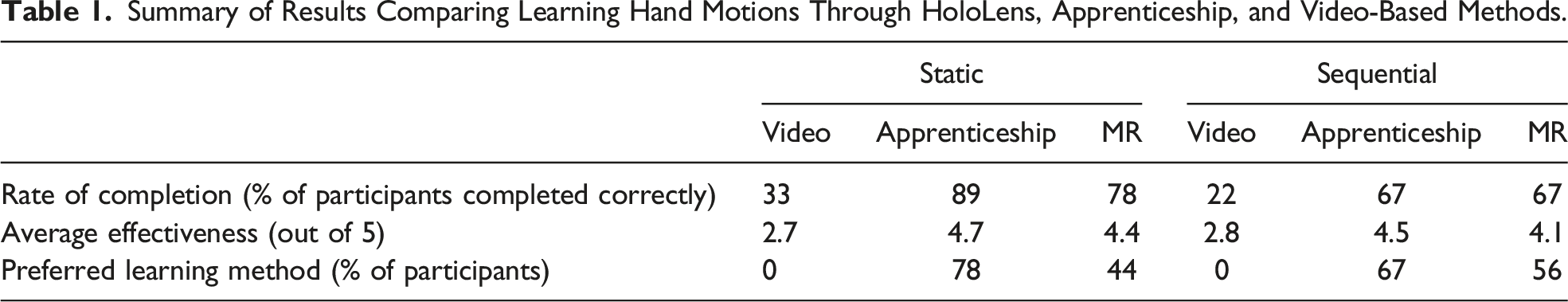

Summary of Results Comparing Learning Hand Motions Through HoloLens, Apprenticeship, and Video-Based Methods.

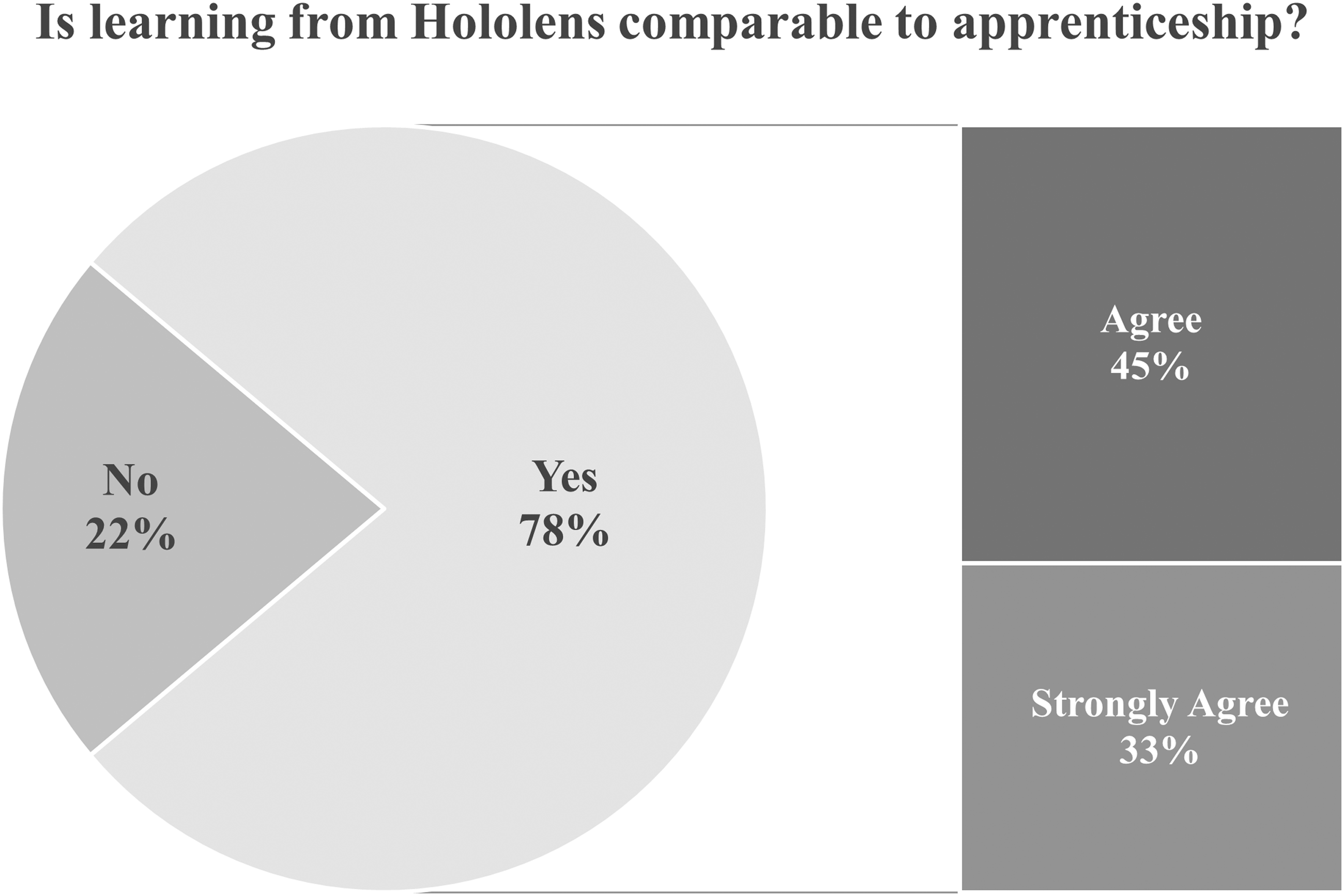

In contrast to video-based learning, scores for HoloLens and apprenticeship learning were quite comparable. For learning static hand motions, 89% (8/9) of apprenticeship participants learned the motions correctly compared to 78% (7/9) of HoloLens participants. For learning sequences of hand motions, learning by HoloLens was equivalent to apprenticeship, with both having a 67% success rate. In terms of average effectiveness, participants found both apprenticeship and HoloLens learning to be effective (both >4.0), although average apprenticeship ratings tended to be slightly higher (4.7 vs 4.4 for static and 4.5 vs 4.1 for sequential). Participants tended to choose apprenticeship as their preferred learning method, although a substantial fraction also chose HoloLens (78% apprenticeship vs 44% HoloLens for static motions). This difference in preference became smaller when learning sequential motions (67% apprenticeship vs 56% HoloLens). The combined percentages exceed 100% because some participants chose both HoloLens and apprenticeship as their preferred learning method. Overall, a large proportion of participants (78%) thought that learning from HoloLens was comparable to learning by apprenticeship (Figure 5).

Figure 5. Participant feedback in comparing HoloLens learning versus apprenticeship learning.
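The arithmetic behind these percentages can be checked with a short Python sketch. The completion counts (8/9 and 7/9) are taken from the text; the preference counts are not reported and are only inferred here from the rounded percentages, so the helper names and the overlap calculation should be read as an illustration rather than as study data.

```python
N_PARTICIPANTS = 9  # sample size reported for pilot study 1


def percent(count, total=N_PARTICIPANTS):
    """Whole-number percentage, matching how results are reported in the text."""
    return round(100 * count / total)


def implied_count(pct, total=N_PARTICIPANTS):
    """Participant count implied by a rounded percentage (an inference, not reported data)."""
    return round(pct / 100 * total)


def min_overlap(pct_a, pct_b, total=N_PARTICIPANTS):
    """Fewest participants who must have chosen both options (inclusion-exclusion)."""
    return max(0, implied_count(pct_a, total) + implied_count(pct_b, total) - total)


if __name__ == "__main__":
    # Static completion rates: apprenticeship (8/9) vs HoloLens (7/9).
    print(percent(8), percent(7))
    # Preference percentages that sum past 100% imply some participants chose both.
    print(min_overlap(78, 44))  # static motions
    print(min_overlap(67, 56))  # sequential motions
```

With nine participants, a single participant shifts any percentage by roughly 11 points, so the one-participant gap between 89% and 78% is the smallest difference the sample can show.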

Pilot Study 2: Holographic Anatomy

Questionnaire

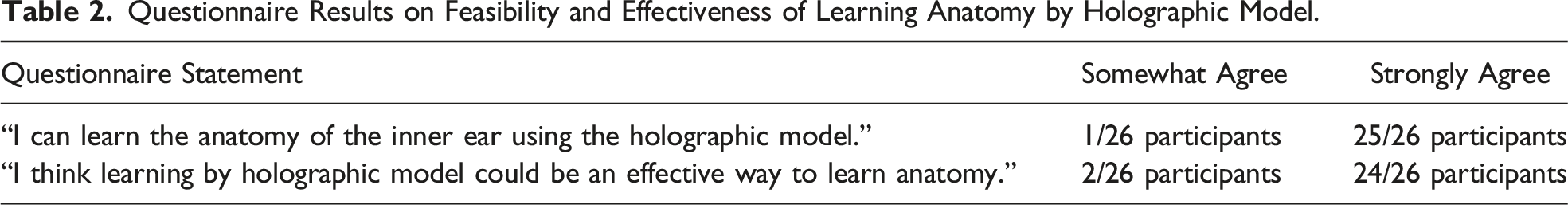

Questionnaire Results on Feasibility and Effectiveness of Learning Anatomy by Holographic Model.

Free-Form Feedback Comments

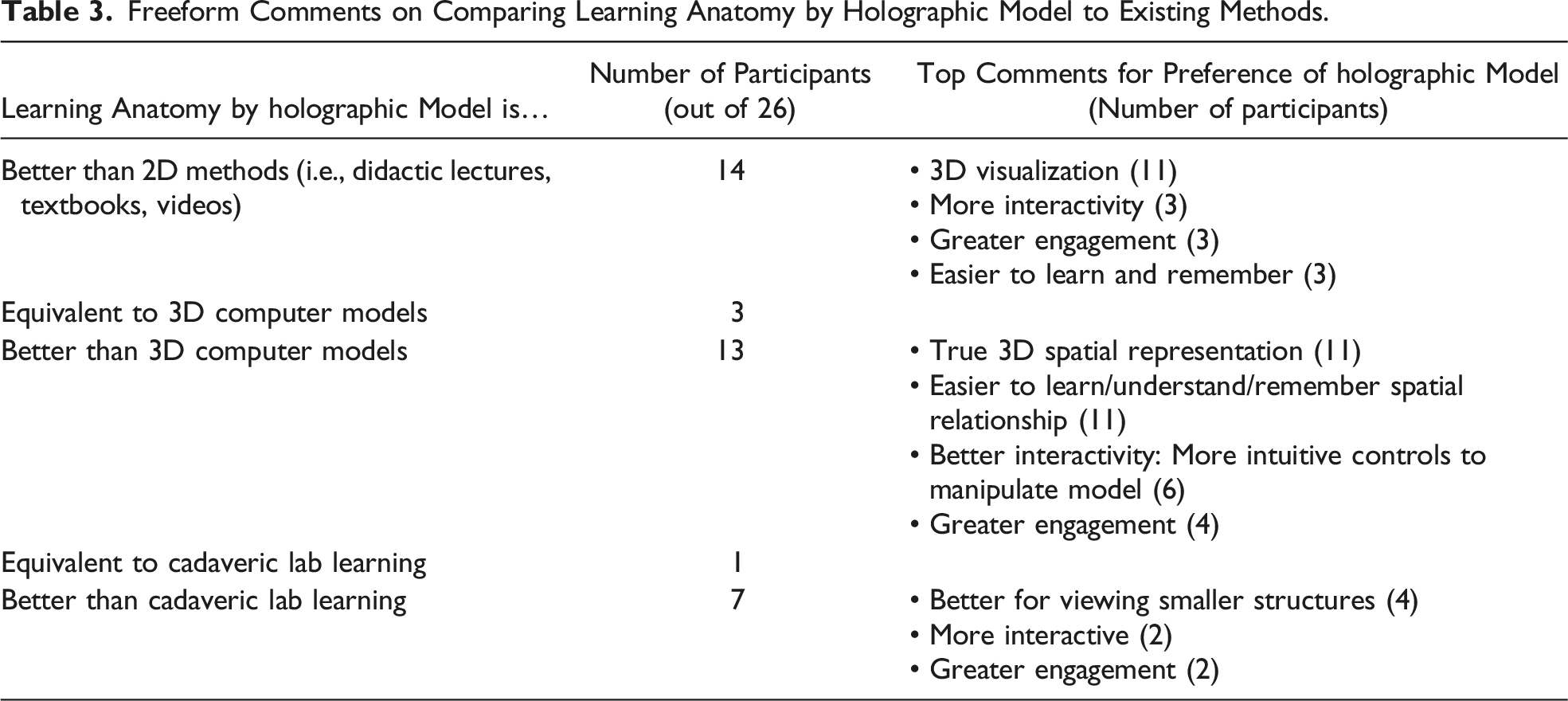

Freeform Comments on Comparing Learning Anatomy by Holographic Model to Existing Methods.

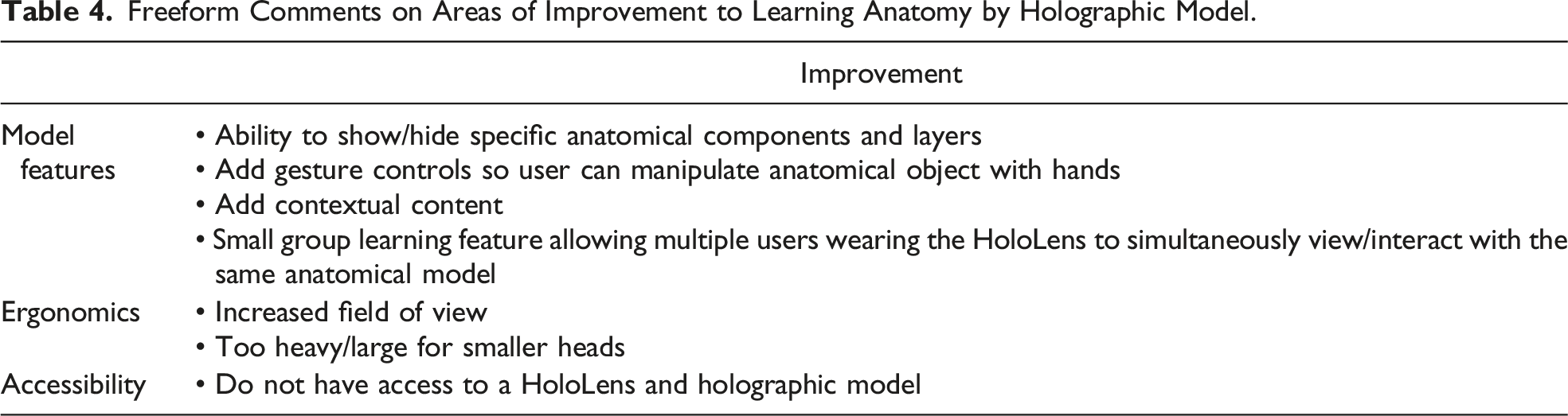

Freeform Comments on Areas of Improvement to Learning Anatomy by Holographic Model.

Discussion

Technical Development Feasibility

New technologies can present significant technical development difficulties due to their immature state. However, the author found the process of developing holograms for the HoloLens to be feasible, with a well-documented software development process. With a programming background but no prior experience in the specific HoloLens hologram development tools (Unity, C#, 3D modelling/animation), each hologram took approximately 1 month of development time, from learning the appropriate tools to finished product. The programming background helped significantly with learning Unity and C#; however, 3D modelling/animation required a completely different skillset and posed more of a challenge, with a significant learning curve. This may be a common scenario in academic research teams, where software developers often do not have significant animation/3D modelling experience, which may pose a barrier to more complex hologram development. In an ideal scenario, hologram development teams should include at least an animator/3D modeller, a programmer, and a content expert (in this case, educator surgeons).

Learning Feasibility

Learning Hand Motions

Our initial results suggest that it is feasible to learn visual spatial motor skills like hand motions from a pair of holographic hands and that doing so may even be as effective as in-person apprenticeship learning. Both HoloLens and apprenticeship learning outperformed video-based learning on all performance metrics, with zero participants preferring video. This was expected, as video-based learning could not provide the depth/spatial orientation information required to visualize all parts of the different hand poses, whereas HoloLens and apprenticeship allowed for full three-dimensional visualization of the hands in space.

Between HoloLens and apprenticeship learning, the similarities in all performance ratings (completion rate, average perceived effectiveness) are encouraging and suggest that learning hand motions by holograms has the potential to mimic the apprenticeship learning experience. This was further reinforced by 78% of the participants agreeing that the two learning modalities were comparable. With similar performance ratings, it is likely that the discrepancy in perceived comparability is related to the usability factors discussed below. It was also interesting to note that the performance ratings were most similar for the sequential hand motions (equivalent completion rates of 67%), which were more complex in nature than the static hand motions. This could suggest that the benefits of learning by holographic hands become even more apparent or useful for more complex skills, which merits further investigation/development.

Learning Anatomy

All 26 participants agreed that learning middle/inner ear anatomy from a 3D holographic model was feasible and effective. We explored why this might be so through participants’ free-form comments comparing HoloLens hologram learning to existing 2D, 3D, and in-person cadaveric methods. In general, many thought holographic learning was effective because of enhanced interactivity and engagement, a reduced learning curve, and increased retention. This was expected and aligns with known benefits of existing 3D digital methods.2,11,12 However, these known benefits have also been insufficient to drive adoption of existing 3D learning tools, with the lack of true 3D representation (i.e., displaying a 3D model on a 2D display) a likely barrier.13 We hypothesized that HoloLens learning could overcome this through projection of life-like 3D holograms in the trainee's space. Encouragingly, in our collected feedback, 19/26 participants in this pilot study already perceived HoloLens hologram learning to provide a true 3D spatial representation, boding well for future adoption. Specifically, of those 19, 11 thought holographic learning provided a true 3D representation that could enhance their understanding/learning of spatial anatomical relationships beyond existing 3D models, and 8 even thought holographic learning was equivalent to or better than cadaveric learning. Given the early-stage holographic model developed with limited interactions, it was perhaps most surprising that, of those 8 participants, the majority (7) noted holographic learning could be even better than cadaveric learning. These participants cited enhanced digital capabilities beyond cadaveric learning, such as the ability to view smaller structures and greater interactivity. Although this is a small but encouraging number in our study, a growing number of other recent studies have also begun to show equivalent learning efficacy between holographic anatomy and cadaveric learning. These positive perceptions and growing external evidence highlight the potential of holographic learning to overcome existing challenges of 3D methods and to combine their advantages, providing a true representation of in-person training experiences with digital enhancements.

User Usability Feasibility, Adoption, and Technologic Limitations

Usability factors are important for user adoption and were explored through free-form comments in this study. Commonly reported potential barriers to holographic learning included uncomfortable HoloLens headset ergonomics (i.e., too heavy/large for smaller heads and limited field of view), lack of accessibility, and lack of holographic model features. With regard to these potential barriers, significant industry and academic interest in advanced MR technology like the HoloLens has accelerated technical development in this field, with multiple large (Microsoft, Lenovo, Apple) and small (MagicLeap, Nreal) industry competitors already addressing and overcoming many of them. For example, since the completion of this study, Microsoft released an updated HoloLens 2 headset in 2020 with significantly improved wearability/comfort for hours of use and double the field of view, amongst other technical improvements.20 Other MR headset competitors such as Nreal are also coming into the market with similar features, including a lighter design that uses portable glasses instead of a headset and is much more comfortable for general consumers.21 With respect to additional holographic model features, we started with a simple holographic model with limited interactions by design to gauge initial feasibility. The features requested in feedback comments, such as additional gesture control, the ability to show/hide layers of the anatomical model, and contextual content, are all technically feasible and will help guide the next iteration of implementation.

A common concern for the accessibility of technology is cost. Various MR hardware exists, including the Microsoft HoloLens; however, prices still typically start at around $2000 USD, with the HoloLens 2 at $3500 USD. Given that this is new technology for most organizations, there will be high upfront costs for each unit, in addition to the cost of training the IT department for technical upkeep. However, the per-unit cost of these technologies is expected to decrease with increased uptake, following in the footsteps of other technologies such as computers and e-learning in medical education. For example, Nreal’s new MR product has driven costs down to as low as $500 USD per headset, making it much more accessible for general consumers.21 Additionally, unlike cadaveric teaching of anatomy, this technology is reusable: both the software and hardware can be reused year after year, with some upkeep costs, after the institution's initial investment. In the long term, this form of reusable technology will be cost-effective for institutions. In the interim, however, it is not yet feasible to expect learners to pay for their own units. Institutions will therefore need to set up rental systems that allow learners to borrow the hardware and return it for other students’ use, which will help ensure equitable access.

A further common criticism of extended reality technologies is their lack of haptic feedback, which is considered crucial for teaching certain procedural skills within surgery. We have only explored their use for teaching complex anatomic knowledge and complex 3D visuospatial hand motions, which do not intuitively require haptics for learning. However, for future expansion into procedural or surgical simulations, researchers will need to consider the lack of haptics as a barrier. Several solutions have been proposed or implemented. First, without using any additional hardware, visual maps of pressure or sensation can be displayed on screen as a proxy for the actual haptic sensation. One example is a colonoscopy simulator in which an on-screen pressure meter turns red when the endoscopist applies too much force to the colon wall. A second solution is mixed physical and extended reality simulators. These include a physical patient model and/or physical instruments that learners use while wearing an MR headset, which can read the physical models and project on top of them to walk learners through simulations. A third solution is the incorporation of external haptic hardware, such as gloves and bodysuits, which can sync with the MR holograms and simulate physical pressure in response to the user’s movements. These solutions are still in their infancy in medical and surgical education and will require further research and a more streamlined development process before they can be implemented for teaching learners.

Overall, with such rapid development in MR headset technology and continued exponential growth in industry, it is encouraging that the current adoption barriers in usability, accessibility, and features revealed in this study either have already been addressed or are likely to be addressed in the near future.

Limitations and Next Steps

The current holographic models developed in this study have limited interactive capabilities with no voice/gesture control and thus may not show the full potential or natural experience of interacting with HoloLens holographic objects. Despite this limitation, the participants in our pilot studies could still learn skills like hand motions as effectively as with apprenticeship learning. They could also already perceive holographic learning as a feasible and effective learning method, comparable to in-person learning experiences, for acquiring visual spatial motor skills and knowledge such as hand motions and anatomy. These limitations may, however, account for participants' continued preference for in-person training experiences and the lower numbers for perceived comparability between holographic and in-person learning. We expect that future holographic models with voice/gesture interactions will further close this gap between in-person and HoloLens training experiences. In addition, as this was an initial pilot study, we did not use a validated scale to measure the user experience. In follow-up studies, we will consider using scales such as the Game User Experience Satisfaction Scale (GUESS-18) and Enjoyment Scale (ENJOY) from the gaming literature to examine the application and the technology itself in a rigorous fashion.

Overall, these positive initial results from two small pilot studies prompt further development and wider exploration of holographic training applications specific to plastic surgery. For visual spatial motor skills acquisition, we imagine applications ranging from basic skills such as knot tying to applications that walk trainees through anatomically complex procedures such as cleft palate surgery. To provide tactile feedback, holographic models and instructional content can also be projected on top of 3D-printed training models. To further enhance self-directed learning experiences, holographic models should also incorporate the hand/tool tracking capabilities of advanced MR headsets to provide immediate feedback and skill assessment. By integrating such components, we imagine a future where trainees have access to a low-cost skills simulation center in their own home that mimics their in-person training experiences. Before then, however, larger validation studies are needed on the effectiveness of such tools and the transfer of skills to reality.

Conclusion

This initial work has demonstrated the feasibility, across the domains of technical development, learning effectiveness, and usability, of using holograms to learn visual spatial motor skills such as hand motions and inner ear anatomy. Furthermore, these initial results suggest that holographic learning has the potential to mimic in-person learning experiences in both learner effectiveness and spatial representation. The results are encouraging and suggest that holographic learning could be a new effective form of self-directed apprenticeship learning for visual spatial motor skills acquisition that not only combines advantages of existing learning modalities but may also overcome known barriers to adoption of 3D digital tools. Holographic learning can be applied to a wide variety of visual spatial motor skills and should be further explored for applications within specific surgical domains.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.