Abstract

This article is a scholarly reflection on using the AI tools Transkribus and AntConc during a digital humanities PhD Placement at the British Library to extract metadata from printed catalogues for the online catalogue. The project focuses on BMC XI, the catalogue of English incunabula at the British Library published in 2007. Transkribus is a “comprehensive platform for the digitization, AI-powered text recognition, transcription, and searching of historical documents” while AntConc is a “freeware corpus analysis toolkit for concordancing and text analysis”. Together, these tools can be used to extract information en masse for upload to the specialist databases MEI (Material Evidence Incunabula) and the ISTC (Incunabula Short Title Catalogue), as well as to pick out patterns and trends within incunabula descriptions. This project followed FRAIM (Framing Responsible AI Implementation and Management) principles.

Introduction

As a PhD student interested in digital humanities and their applications, I was thrilled to apply for a PhD Placement at the British Library. My PhD focuses on the history of catalogues becoming museum collection databases and on their use and accessibility for users. As such, “Computational analysis of early printed book descriptions”, a project to use AI, specifically Transkribus, to extract and prepare metadata for use in library catalogues, immediately caught my attention. While many catalogues have been published in print, they are not accessible online, and the information that they contain about the collection is not included in the online database. Once Transkribus has performed text recognition on the image scans and key information has been tagged, that information can be extracted with code to enrich the collection database and make it accessible online, not only in person. This placement has given me the exciting opportunity to apply, in a tangible way, the digital humanities skills I have learned over the first two years of my research, and to continue my goal of gaining experience in digitization and accessibility within the galleries, libraries, archives, and museums (GLAM) sector.

Purpose

My work builds on the AHRC-RLUK (Arts and Humanities Research Council-Research Libraries UK) professional practice fellowship (Research Libraries UK 2024) of my supervisor, Rossitza Atanassova, in which she “investigate[d] the legacies of curatorial voice in the descriptions of incunabula collections at the British Library and their future reuse” (Atanassova 2022). While she worked on BMC (British Museum Catalogue) volumes I–X (published 1908–2007), my work continues where she left off, with volume XI, published in 2007 (Incunabula Printed Catalogue Dataset Metadata 2023). Transkribus was used for text analysis and to create a website with the extracted information (British Library Incunabula Catalogue Data 2026). AntConc was used to pick out patterns in the writing style of each volume in order to identify how many cataloguers worked on these catalogues over the one hundred years in which they were published (Atanassova 2023a). Over the nine months of my placement, I have used the same two platforms as Rossitza to investigate BMC XI.

Tools Used: Transkribus and AntConc

Transkribus

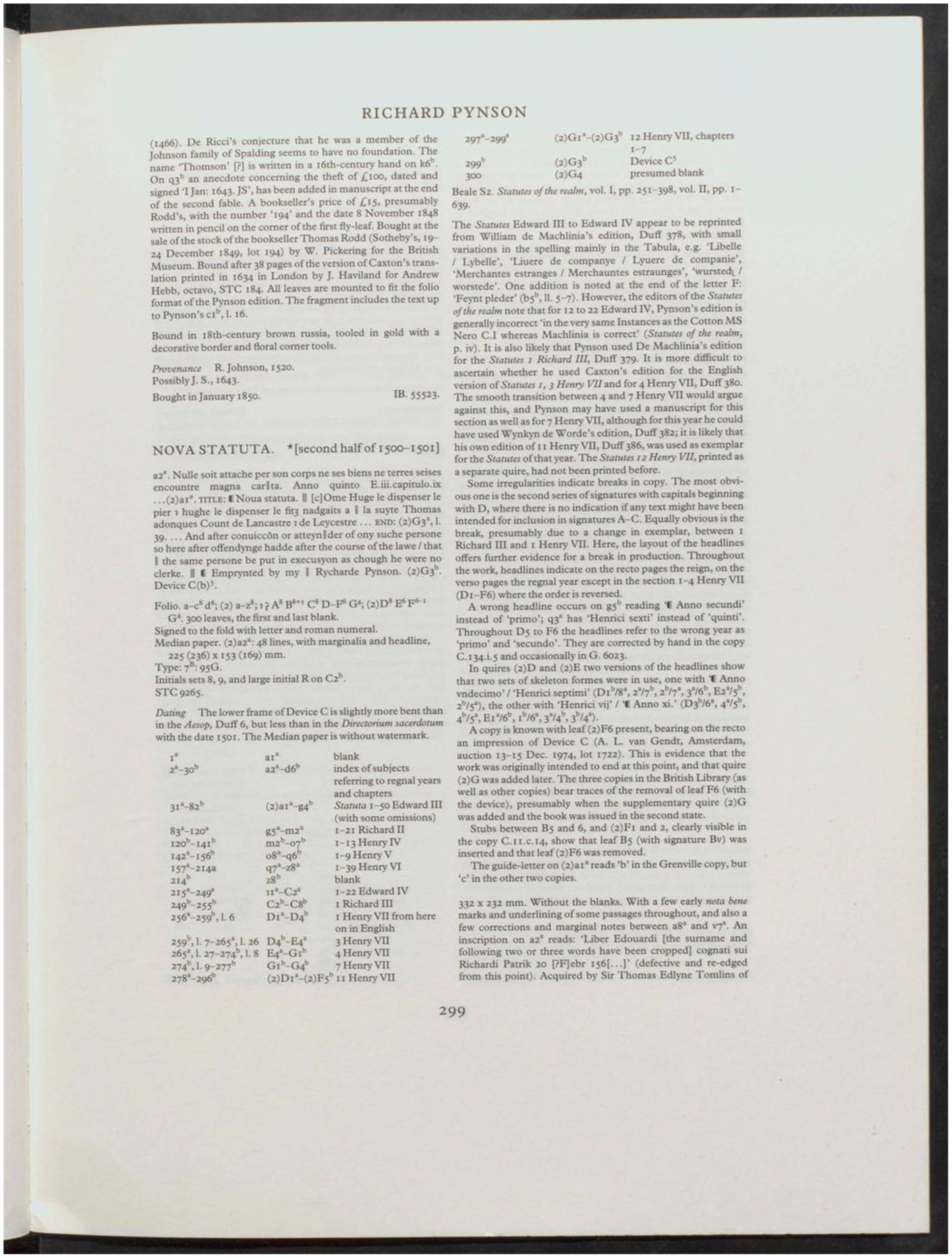

Transkribus is the platform on which I have done the most work so far in my placement. It is “a comprehensive platform for the digitization, AI-powered text recognition, transcription, and searching of historical documents” (READ-COOP 2026) and was chosen for its optical character recognition (OCR) capabilities. Because it was created to work with scans of historical documents, and the British Library had already scanned BMC XI, the tool is designed for exactly this sort of project (Transkribus 2026a, 2026b). Its tools are therefore specific to the task, and it does not require extensive training to extract the data needed. For this project, layout recognition and OCR were the primary tools relied on. As BMC Volume XI was published in 2007 and is a typeset document, OCR was relatively easy. One issue is that the text frequently includes superscripts, which the OCR is currently unable to recognize. The BMCs contain data in both paragraph and table format, with the tables covering a lot of specific information such as page numbers for illustrations and content. Unfortunately, these tables are not placed at regular intervals within the text, nor do they contain ruled lines that would mark them as tables for the Transkribus software. The tables also run across columns and pages (Figures 1–3).

Image of table in BMC Volume XI demonstrating lack of structure to tables included within the catalogue.

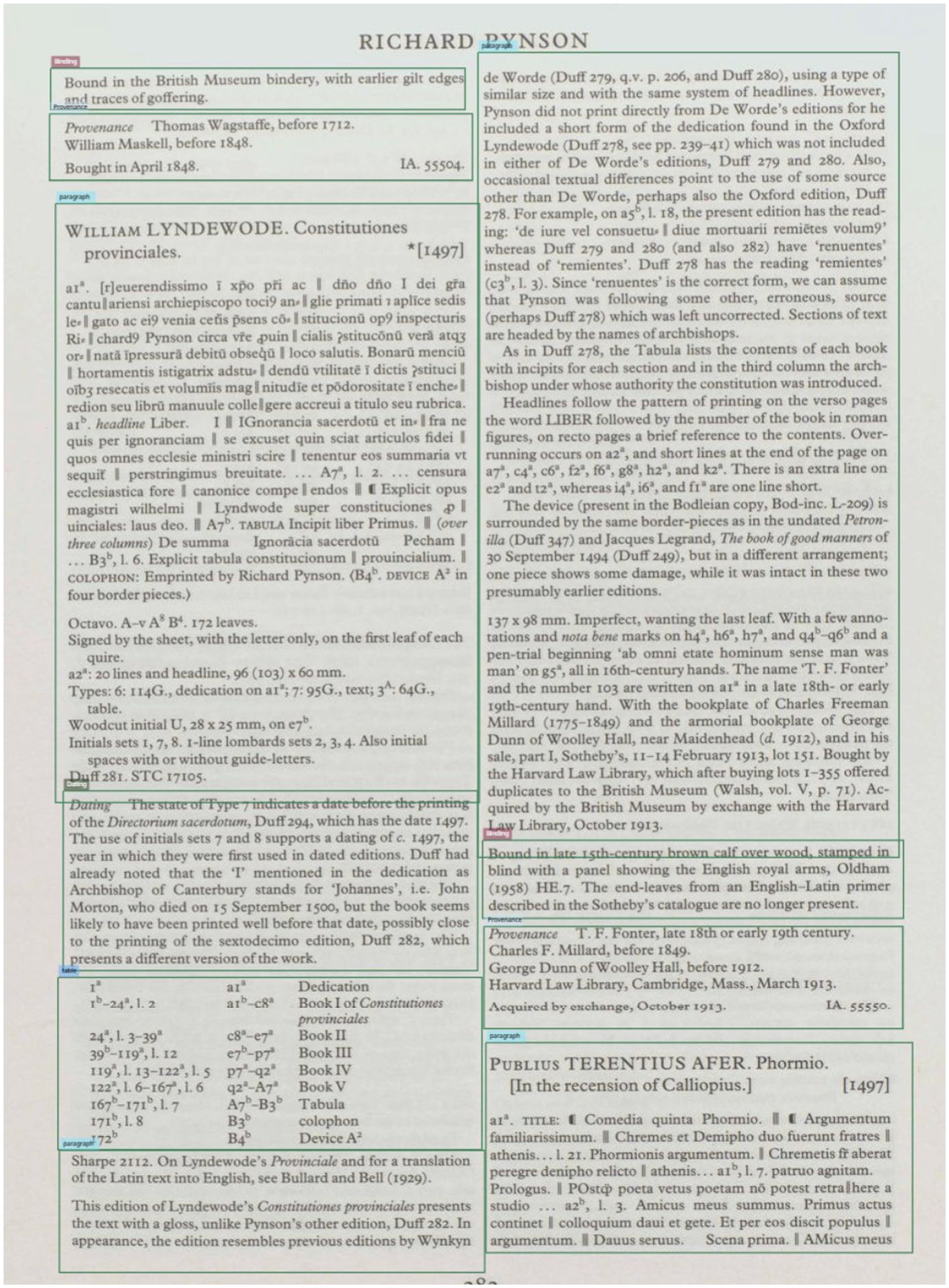

Image demonstrating overlap of bounding boxes with the second field model on BMC Volume XI.

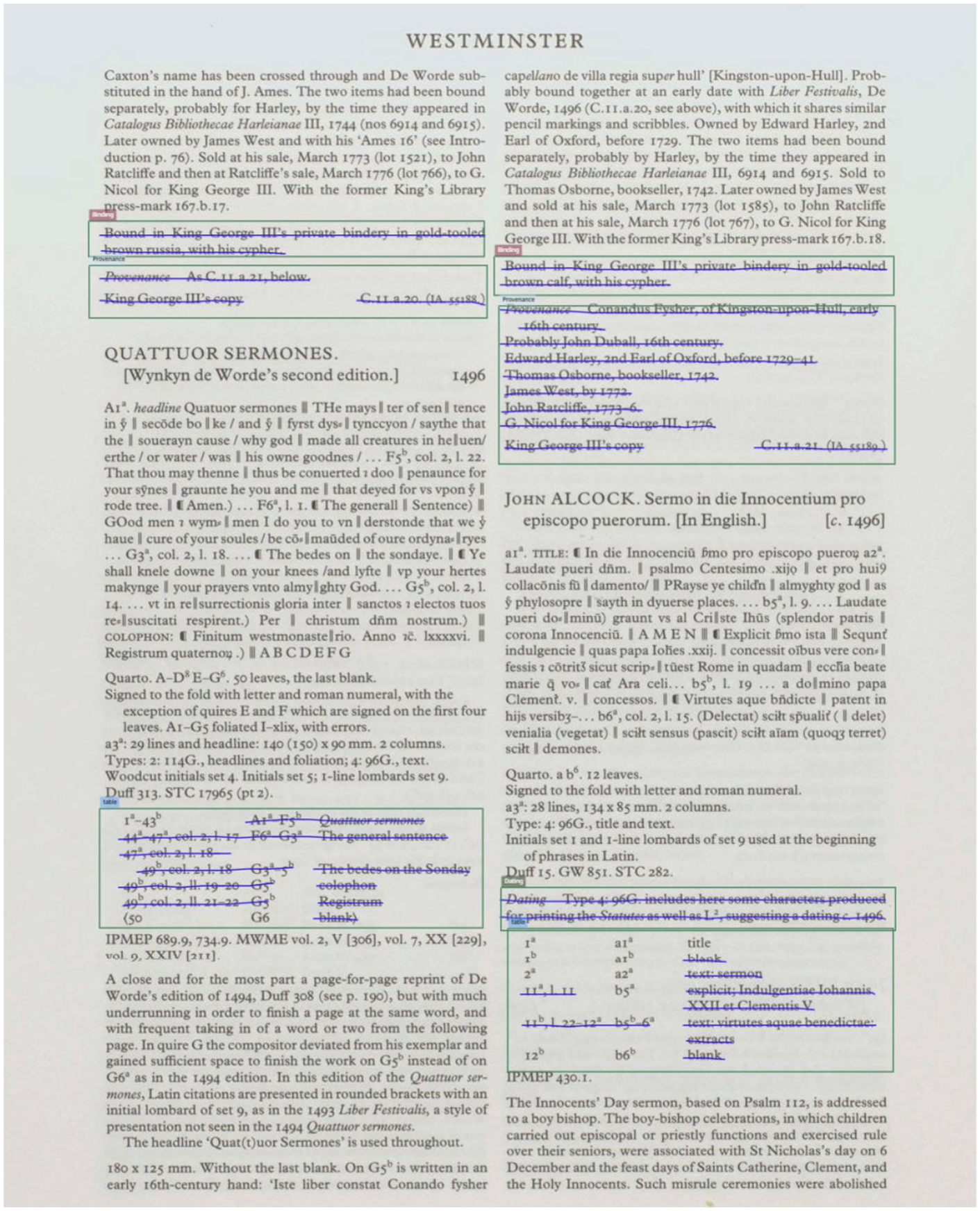

Successful use of field model 3 on BMC Volume XI.

The table model, which is specific to layout recognition of tables, was attempted first. Unfortunately, it was unable to detect the tables, so we then ran field models, a more general layout recognition option (Help Center 2025). With field models, the user can manually tag sections on a page such as tables or charts or, in this case, dating, provenance and binding regions. Once these tags are taken as ground truth, the model can be trained to recognize these regions and tag them on the remaining pages of the catalogue. This then makes it possible to extract specific regions with bespoke Python code written for this project.
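The extraction step can be illustrated with a minimal sketch. It is not the code written for this project. It assumes that the tagged pages are exported as PAGE XML and that each region's tag is stored in the `custom` attribute in the form `structure {type:...;}`; the namespace version, the sample page and the function name `extract_regions` are all assumptions made for illustration.

```python
import re
import xml.etree.ElementTree as ET

# PAGE XML namespace (2013-07-15 schema). Assumed to match the export in use.
NS = {"pc": "http://schema.primaresearch.org/PAGE/gts/pagecontent/2013-07-15"}

# Invented miniature page: two tagged regions and one untagged region.
SAMPLE_PAGE = """
<PcGts xmlns="http://schema.primaresearch.org/PAGE/gts/pagecontent/2013-07-15">
  <Page imageFilename="sample.jpg" imageWidth="1000" imageHeight="1500">
    <TextRegion id="r1" custom="readingOrder {index:0;} structure {type:provenance;}">
      <TextEquiv><Unicode>Example provenance text.</Unicode></TextEquiv>
    </TextRegion>
    <TextRegion id="r2" custom="readingOrder {index:1;} structure {type:binding;}">
      <TextEquiv><Unicode>Example binding text.</Unicode></TextEquiv>
    </TextRegion>
    <TextRegion id="r3" custom="readingOrder {index:2;}">
      <TextEquiv><Unicode>Untagged text.</Unicode></TextEquiv>
    </TextRegion>
  </Page>
</PcGts>
"""

# Captures the tag name from e.g. "structure {type:provenance;}".
STRUCTURE_TYPE = re.compile(r"structure\s*\{[^}]*?type:([^;}]+)")


def extract_regions(page_xml: str) -> dict[str, list[str]]:
    """Group region-level text by the structure tag on each TextRegion."""
    root = ET.fromstring(page_xml.strip())
    regions: dict[str, list[str]] = {}
    for region in root.iterfind(".//pc:TextRegion", NS):
        match = STRUCTURE_TYPE.search(region.get("custom", ""))
        if match is None:
            continue  # region carries no structure tag, so skip it
        # The TextEquiv that is a direct child of the region holds its full text.
        unicode_el = region.find("pc:TextEquiv/pc:Unicode", NS)
        text = ""
        if unicode_el is not None and unicode_el.text:
            text = unicode_el.text.strip()
        regions.setdefault(match.group(1).strip(), []).append(text)
    return regions


if __name__ == "__main__":
    for tag, texts in extract_regions(SAMPLE_PAGE).items():
        print(tag, texts)
```

Grouping by tag keeps each kind of information (provenance, binding, dating) separate, so that each can later be checked and uploaded on its own.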

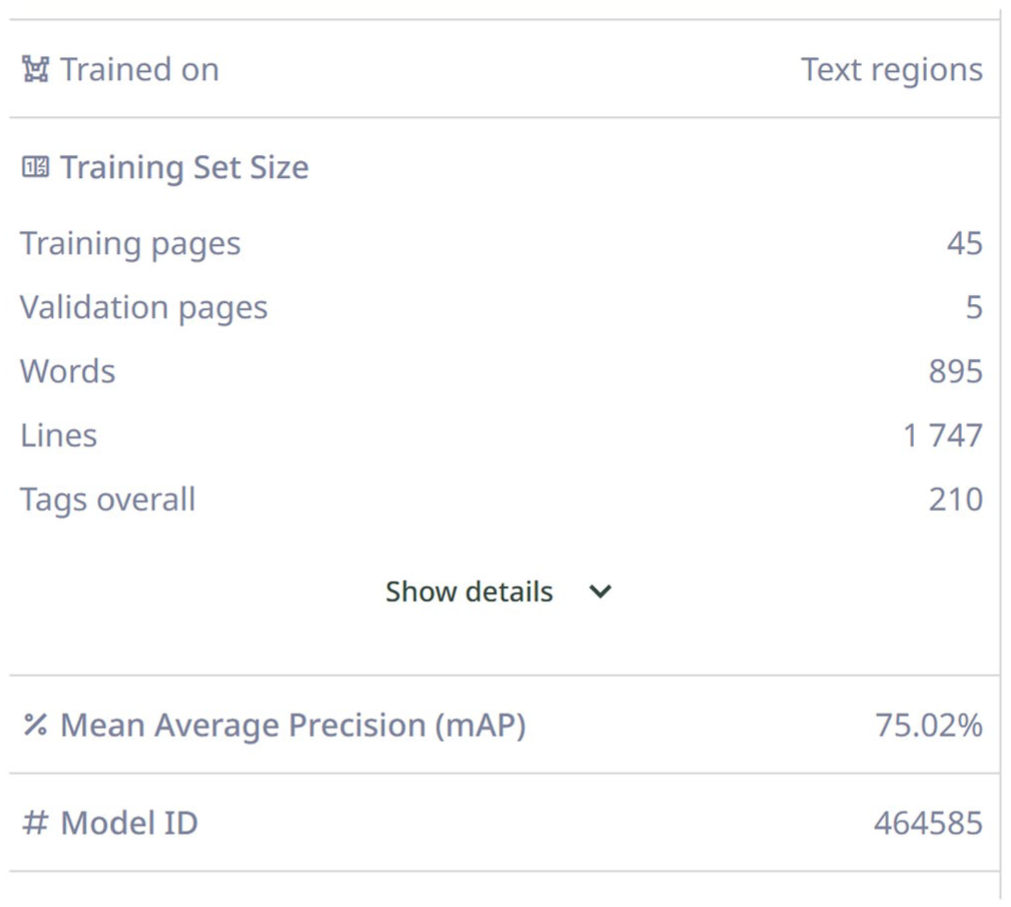

Three iterations of the field model were created to ensure optimal extraction of data. The first field model included paragraphs, dating, binding, provenance, illustration tables and tables as regions for the AI to recognize. Fifty pages were tagged manually, with the first forty-five pages used as training data and the last five as validation data. The sections were outlined as shown below, with boxes of varying widths because the table sections are often narrower than the rest of the text. Transkribus reported a high mAP (mean average precision) of 75% for this model, and since anything over 60% is meant to indicate good results, it seemed that the model had worked (Figure 4). However, mAP only measures whether the regions were detected and how well their size and shape match the validation data. Since all the boxes for this model are rectangles of various dimensions, it is not the best measure of accuracy here. Despite the high mAP, bounding boxes overlapped other regions, which would make it quite difficult to extract data from individual regions.
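Because mAP compares each predicted region with the validation data, it does not directly show whether two predicted regions on the same page overlap each other. A complementary check can be sketched as follows. This is an illustrative sketch only, not part of Transkribus or of the project code; the `Box` type, the example coordinates and the function names are hypothetical.

```python
from itertools import combinations
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned bounding box in pixel coordinates (hypothetical format)."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def area(box: Box) -> float:
    return (box.x_max - box.x_min) * (box.y_max - box.y_min)


def intersection_area(a: Box, b: Box) -> float:
    """Area shared by two boxes; 0 when they do not overlap."""
    width = min(a.x_max, b.x_max) - max(a.x_min, b.x_min)
    height = min(a.y_max, b.y_max) - max(a.y_min, b.y_min)
    return max(width, 0) * max(height, 0)


def iou(a: Box, b: Box) -> float:
    """Intersection over union, the overlap ratio that underlies mAP."""
    inter = intersection_area(a, b)
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0


def overlapping_pairs(boxes: list[Box]) -> list[tuple[str, str]]:
    """Labels of every pair of predicted boxes that share any area."""
    return [
        (a.label, b.label)
        for a, b in combinations(boxes, 2)
        if intersection_area(a, b) > 0
    ]


# Invented example: the dating and binding boxes share a 10-pixel strip.
EXAMPLE_BOXES = [
    Box("dating", 0, 0, 100, 50),
    Box("binding", 0, 40, 100, 90),
    Box("provenance", 0, 100, 100, 150),
]

if __name__ == "__main__":
    print(overlapping_pairs(EXAMPLE_BOXES))
```

Running a check like this on a handful of pages gives a direct count of overlapping regions, which can be read alongside the mAP figure when comparing models.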

Training model data for the 3rd field model created.

This led to a second field model, in which all region boxes were the same width, in the hope that the AI would find this less confusing and produce more consistent results. This resulted in a lower mAP of around 61%, but the boxes were slightly more accurate. Unfortunately, regions still overlapped significantly.

This led to the creation of the third field model, which removed the paragraph region tag. As paragraphs were the largest region, this removed a significant amount of area that the AI had to tag, which improved results. The mAP went up to 75% and the issues with overlapping regions were largely resolved.

All three models were initially run on only ten pages each, enough to judge performance while limiting unnecessary runs and energy waste. Once the final model was determined, it was run on the pages of the catalogue that had not been manually tagged, around 150 pages. After the regions were tagged with the final field model, OCR needed to be run on them so that the text could be extracted. OCR was left as the last step so that it would not need to be run multiple times with models that would not end up being used. I experimented with several language models, including Transkribus Print M1 and Text Titan I ter. Both handle English detection and transcription well. However, Text Titan I ter was chosen as the final OCR model, as exact correctness is important for this project: the goal is for the final sections to be uploaded with little human intervention.

Unfortunately, none of these models is very good at detecting superscripts within the text. Superscripts are key within the BMC as they denote which part of the quire (folded part of parchment bound together to create an incunabulum) an important object such as an illustration is on and, in that way, function as page numbers. This is key information to add to the catalogue so that researchers can identify key pages without needing to sift through an entire incunabulum. While the table regions are tagged, they will not be extracted until the language models can detect these superscripts, so that only accurate information is added to the database. The current file with its tagged regions can be reused, with only OCR rerun on it to capture this data, which avoids creating new field models or rerunning existing ones and so limits the environmental footprint of future work.

AntConc

AntConc is “a freeware corpus analysis toolkit for concordancing and text analysis created by Laurence Anthony” (Anthony 2026). This innovative suite of tools allows a researcher to identify patterns and language usage in texts that would otherwise be too large to study in this way. It was used heavily in Rossitza’s research (Atanassova 2023b). Once OCR is complete and the text has been extracted from Transkribus and processed with code, it is uploaded to AntConc. The software has been used to detect the most common words in the text, which include names such as Caxton, a prolific English printer, and King George III, whose library is where much of the collection originates (Figure 5). Further research will be conducted on how the language of this volume of the BMC differs from that of previous volumes.
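The idea behind a word list with stop words removed can be sketched in a few lines of Python. This is not how AntConc works internally, and its tokenization may differ; the stop word list, the sample sentence and the function name `word_frequencies` are invented for illustration.

```python
import re
from collections import Counter

# A deliberately tiny, hypothetical stop word list.
STOP_WORDS = frozenset(
    {"the", "of", "and", "a", "in", "to", "by", "is", "on", "with"}
)

# Invented sample text, not a quotation from the catalogue.
SAMPLE_TEXT = (
    "Printed by Caxton. The Caxton type is worn. "
    "Bound in the library of George III."
)


def word_frequencies(text: str, stop_words: frozenset[str] = STOP_WORDS) -> Counter:
    """Count lower-cased word tokens, ignoring stop words."""
    tokens = re.findall(r"[a-z]+(?:'[a-z]+)?", text.lower())
    return Counter(token for token in tokens if token not in stop_words)


if __name__ == "__main__":
    for word, count in word_frequencies(SAMPLE_TEXT).most_common(3):
        print(word, count)
```

Counting in code is useful mainly as a cross-check on a small sample; the concordance and comparison tools in AntConc remain the better fit for exploring the whole volume.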

AntConc word cloud without stop words.

Final Reflections

Overall, these programs enable work that would otherwise be nearly impossible for a researcher to perform because of the immense scale and amount of labor it would require. Although AI is used, it is not creating anything new: it pulls directly from the original paper catalogue and simply transcribes it into a machine-readable format that can then be drawn on and added to the online catalogue. This is a key difference between AI and generative AI: AI can process and analyze what is given to it, while generative AI can create something new (MITxPro 2024). This distinction has been considered by the British Library in staff research as part of its participation in FRAIM (Framing Responsible AI Implementation & Management; University of Sheffield 2026; Ridge 2024). The work will enable researchers who may not be able to access the BMC in person to perform research that is not currently possible through the online catalogue. Where possible, existing Transkribus models were used to recognize text and layout, which uses less energy and is therefore more ethically and environmentally conscious. Where this was not possible and new models were created, they will be published on Transkribus at the end of the placement so that others can use them without expending additional energy to create similar models.

As outlined in my descriptions of tasks, this project involves heavy human oversight, guidance and correction. These tools are certainly not infallible and require very specific input and structure to work as needed. In the context of this project, the use of AI is not taking away entry-level roles but rather changing what these roles entail. Previously, this work might have meant transcribing by hand; now it involves AI and coding. After the models are run, purpose-written code checks that the entries contain expected keywords, to ensure that the regions are correctly labelled before their eventual upload to the specialist databases MEI (Material Evidence Incunabula 2026) and the ISTC (Incunabula Short Title Catalogue 2026). The ability to upload this knowledge and work en masse will expand who this research can reach and who can perform research, by allowing the information to move from printed text into the online catalogue. The lack of digitization is a pressing concern within libraries, museums and archives, and this is a way to accelerate the process. It does not eliminate the need for images of the individual incunabula within the collection to be taken and uploaded, as there is much that can be learned from the font, binding and watermarks of books, but it helps other research to proceed while the expensive, time-consuming and labor-intensive process of high-quality photography takes place. Just as digital humanities evolved from humanities research, with the two fields working together to further knowledge, so too could the thoughtful and ethical use of AI be part of this growth. AI, in combination with coding and other measures, can make digitization and the upload of information much faster than was previously possible.
While there are unfortunately many instances of AI being used unethically, such as for creative images where it has been trained on artists' work without permission, that does not negate the fact that it can be used ethically, especially for collection care and expanding access.
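The keyword check mentioned above can be sketched as follows. This is a minimal illustration, not the code written for the project: the keyword lists, the sample entries and the function names are all hypothetical, and a real check would draw its vocabulary from the catalogue itself.

```python
import re

# Hypothetical keywords that a correctly labelled region might contain.
EXPECTED_KEYWORDS = {
    "provenance": frozenset({"provenance", "bought", "library", "owned"}),
    "binding": frozenset({"binding", "bound", "calf", "morocco"}),
    "dating": frozenset({"dated", "date", "printed"}),
}


def has_expected_keyword(label: str, text: str) -> bool:
    """True when the text contains at least one whole-word keyword for its label."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    # Unknown labels have no keywords, so they always fail the check.
    return bool(words & EXPECTED_KEYWORDS.get(label, frozenset()))


def flag_for_review(entries: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return the (label, text) entries that a person should look at again."""
    return [entry for entry in entries if not has_expected_keyword(*entry)]


# Invented entries: the second and third carry labels their text does not support.
SAMPLE_ENTRIES = [
    ("binding", "Bound in brown calf."),
    ("provenance", "Bound in brown calf."),
    ("dating", "Undated fragment."),
]

if __name__ == "__main__":
    for label, text in flag_for_review(SAMPLE_ENTRIES):
        print(f"Check manually: {label!r} -> {text!r}")
```

A check of this kind does not replace human review. It only narrows down which entries need a second look before upload.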

Footnotes

Acknowledgements

I would like to thank my supervisors at the British Library, Rossitza Atanassova, Harry Lloyd and Alyssa Steiner, for their help and guidance with this project. I would also like to thank my supervisors at the University of Lincoln, Kate Hill and Sarah Longair, for their help in securing this project and with my PhD research.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Ethical Considerations

As part of this project, the author has taken a careful and measured approach to AI use, at times choosing coding or other means to achieve the desired results. Where AI was needed, less costly models were used when possible, and models created as part of the project will be made available for public use to reduce the need to create new models, the most environmentally taxing part of the process.