Abstract

Deduplication has been widely applied to save storage overhead in the cloud server. Data integrity verification with deduplication can not only save space of the cloud server but also ensure security of the stored data. In the existing integrity verification scheme, deduplications are implemented by the cloud server. The signatures of all data blocks are generated and sent to the cloud server. Once receiving the data blocks and signatures, the cloud server compares the received signatures with the stored signatures. If there is a signature that has the same value as some stored signature, the received signature and data block will not be stored by the cloud server. Otherwise, the cloud server stores all received signatures and data blocks. In fact, these operations bring a lot of computational costs. To solve this problem, we propose a data integrity verification scheme with deduplication. In this scheme, the deduplication is performed by the cloud users, which can avoid additional communicational and computational costs. The experiment evaluation indicates that our scheme is practical for real application scenario. We demonstrate that the proposed scheme satisfies signature unforgeability, and the malicious users cannot obtain any legitimate file from the cloud server in the form of deception.

Keywords

Introduction

Cloud service platform is becoming more and more popular because it is regarded as a promising technique to relieve burden of the cloud users’ local hardware and software maintenance. 1 However, remote storage does not come without security issues. The essence of cloud storage is to delegate validation steps to a public auditor. It brings the security problems in terms of integrity, availability, and privacy of file. Therefore, it is especially crucial for the cloud users to guarantee that their data blocks are kept correctly since they do not hold these data locally.

To address security issues of the remote data, a number of cryptographic mechanisms have been proposed, such as proof of data possession,2–5 proof of storage (POS), 6 and proof of retrievability (POR).7–11 These schemes allow the cloud users to validate integrity of remote data blocks that are stored on the cloud server. The users divide their files into multiple data blocks and store these blocks on the cloud server. Periodically, the cloud users initiate integrity verification challenges. Then, the cloud server makes responses for the challenges and sends the responses back to the cloud users. These schemes are mainly to improve the security of cloud storage.

For cloud storage efficiency, Harnik et al.

12

introduced a deduplication technique to save the storage space. The deduplication mechanism has become a popular practice for cloud service providers (CSPs). This is necessary when there are multiple data copies in the cloud server (only

The main purpose of the deduplication scheme is to improve storage efficiency of the cloud server, and the main task of the integrity verification is to ensure the security of the stored data. In order to adapt to the needs of cloud users, the deduplication and the integrity verification are combined. In the existing integrity verification scheme with deduplication, the deduplication operation is performed by the cloud server. The data owner generates the signatures of all data blocks and stores the signatures and blocks on the cloud server. The cloud server checks whether a data block is a duplicate according to signature of this data block. If the signature of the data block is the same as a previously stored signature, then the data block is a duplicate. The cloud server does not store the data block and its signature. If the signature of the data block is not the same as all previously stored signatures, the cloud server would store the data block and its signature. In fact, the data owner needs to generate signatures of all the data blocks and sends the signatures and data blocks on the cloud server, which needs additional communication consumption and computation consumption.

To solve this problem, we propose an integrity verification scheme with deduplication. In this scheme, the deduplication operation is performed by the data owner. The signatures of data blocks which are stored for the first time are generated by data owner. For the duplicates, the data owner does not need to generate their signatures. Thus, performing deduplication on the user side does not need to compute signature of the duplicate and transfer the signatures and duplicate, which can save a lot of communication and computation costs.

Related work

In the cloud storage platform, the users’ data are outside their control. In order to obtain more benefits, the selfish cloud server may hide the accident of data loss or damage. Many security models had been established to deal with this issue. Ateniese et al. 2 first proposed provable data possession (PDP) model. In this scheme, the third verifier was allowed to check correctness of the stored data blocks. A fully dynamic PDP model had been established by Erway et al. 14 In this scheme, the data owner was allowed to modify the stored data. In 2012, a proxy PDP scheme and security model had been presented by Wang. 15 At the same time, a cooperative PDP scheme which deals with multicloud storage issue had been proposed by Zhu et al. 16

The first POR scheme 17 had been proposed by Shacham et al. in 2008. In their scheme, the integrity of cloud users’ data can be checked by file owner at any time. In the next year, Ateniese et al. 6 showed how to establish a POS scheme according to public-key homomorphic linear authenticator. In order to save computing resources of local users, the cloud users usually transfer the correctness validation steps to the third-party auditor (TPA) who can learn nothing about data information. The third-party auditing has wide application in cloud storage.4,18–20 In Xu and Chang’s 21 scheme, a private POR scheme was presented by Xu and Zhang. Compared with the mechanism proposed in the scheme, 3 this scheme saved communication overheads greatly using polynomial commitment technique introduced in Kate et al.’s 22 scheme.

Zheng and Xu

23

showed how to remove the extra copies of the same file in PDP scheme. Data deduplication is an ideal method to eliminate redundant data and minimize storage and network overhead. A private data deduplication protocol had been proposed in 2012.

24

This protocol can be regarded as a complement of Halevi et al.’s

25

scheme, and it was constructed on the basis of the standard cryptographic assumptions. Yang et al.

26

used the technique of spot checking to reduce the computational complexity for users. A secure deduplication storage system that can support keyword search had been presented by Li et al.

27

Miao et al.

28

proposed a secure multi-server-aided data deduplication protocol, but the protocol did not work when the valid key server was less than

In order to realize data security storage and deduplication, Yuan et al. 29 presented an integrity auditing scheme which was equipped with polynomial commitment. Although this scheme had constant communication complexity and computational cost, the data block update was not considered in this scheme.

Contributions

Motivated by the security storage and data deduplication, we propose a public data integrity verification scheme which supports deduplication. Considering that the data block deduplication is more meaningful than the file deduplication, we choose the data blocks to implement integrity verification and deduplication.

The existing integrity verification scheme with deduplication can support dynamic operation of data block and public verification. In addition to these properties, the proposed scheme achieves the following functions: (1) The secret keys of all data blocks are generated by the group manager according to content of each data block. Thus, signature results for the same data blocks are identical. (2) The deduplication is performed by group manager and group users, which avoids additional computation costs and communication costs. (3) The proposed scheme also supports batch checking, which greatly reduces the validation complexity. (4) The group user independently uses the secret key of a data block to compute signature of this data block without interacting with other group users.

One of the biggest challenges of this scheme is to prevent dishonest users or malicious users from cheating the server. These users attempt to obtain some files that do not belong to them from the cloud server. We establish a challenge–response interaction between the users and cloud server. Once a user sends a file request to the cloud server, the server asks the user to provide information to prove that the file belongs to him. If the information can pass verification of the cloud server, the server creates an access link to the file for the user. Otherwise, the server refuses to create the file link.

Organizations

The remainder of this article is organized as follows: section “Related work” shows some preliminaries used in the proposed scheme. Section “Preliminary” introduces the system model and security goals of our scheme. The detailed construction of the scheme is illustrated in section “System model and security goals.” The security analysis of the scheme is evaluated in Section “Our scheme.” Performance analysis of the proposed scheme is given in section “Security analysis.” Finally, section “Performance analysis” concludes our article.

Preliminary

Preliminary and notation

Denote

Bilinearity:

Non-degeneracy:

Computability:

Definition 1. Computational Diffie–Hellman assumption 30

For a triple

Definition 2. Homomorphic verifiability 30

We say that a signature mechanism

There are two computable functions

There exists an verification function

The function

Definition 3. Homomorphic composability 30

We say that a signature mechanism

where

Wang et al. 30 presented two exemplary signature schemes, Boneh–Boyen scheme 31 and Gennaro–Halevi–Rabin scheme, 32 which hold the Definitions 2 and 3. Combined with the actual scene, we choose the Boneh–Boyen signature as the secret key generation algorithm in our scheme.

System model and security goals

System model

The data integrity verification scheme with deduplication involves in four participants, and the system model is shown in Figure 1. The participants include the following: (1) group manager, (2) cloud storage service provider, (3) group members, and (4) the TPA.

System model.

The specific functions of each component in system model are as follows: (1) the group manager is responsible for generating signature secret key of each data block that is not repeated according to data block’s content. At the same time, the identities of these data blocks are recorded by the group manager. Once a new data block is sent to the manager, the group manager compares the identity of this data block with identities of the stored data blocks. When the group manager finds that this data block is the same as some stored data block, the group manager will inform the data owner that these data block has been stored and he/she does not need to store it again. (2) The cloud storage server has two main functions: (a) the cloud server is responsible for storing the data blocks and their signatures, and there is no similar data block in these blocks; (b) the cloud server accomplishes the integrity verification by the challenge–response interaction with the TPA. That is to say, the cloud server provides the proof information of the stored data blocks for the auditor. (3) The group member is responsible for generating signatures of the data blocks that are not repeated according to signature key assigned by the group manager. For the data replicas, the signature keys are not sent to the group members. Therefore, the group member does not need to calculate signatures of the data replicas. If the group member wants to access the stored data, an access link of the data block for the members will be created by the cloud server. When the group members modify their data blocks, a modification command will be sent to the cloud server. Thus, the stored data blocks and signatures can be updated timely. (4) The TPA is responsible for making a challenge–response interaction with the cloud server to check whether or not the stored data blocks are correct.

Security goals

In our scheme, the following three security goals should be considered: (1) Unforgeability of secret key—the attacker cannot successfully forge the signature secret key. (2) Unforgeability of signature—the attacker or the cloud server may collude to obtain a forged signature when the TPA initiates a challenge to the cloud server. (3) Due to curiosity, the dishonest group users always want to spy other honest users’ data information. Assume that honest user

Our scheme

In this section, we present the data possession checking protocol with deduplication as follows. Let

Setup

Denote

KeyGen

For user

and the secret key

If the equation holds, receive it, otherwise abandon it.

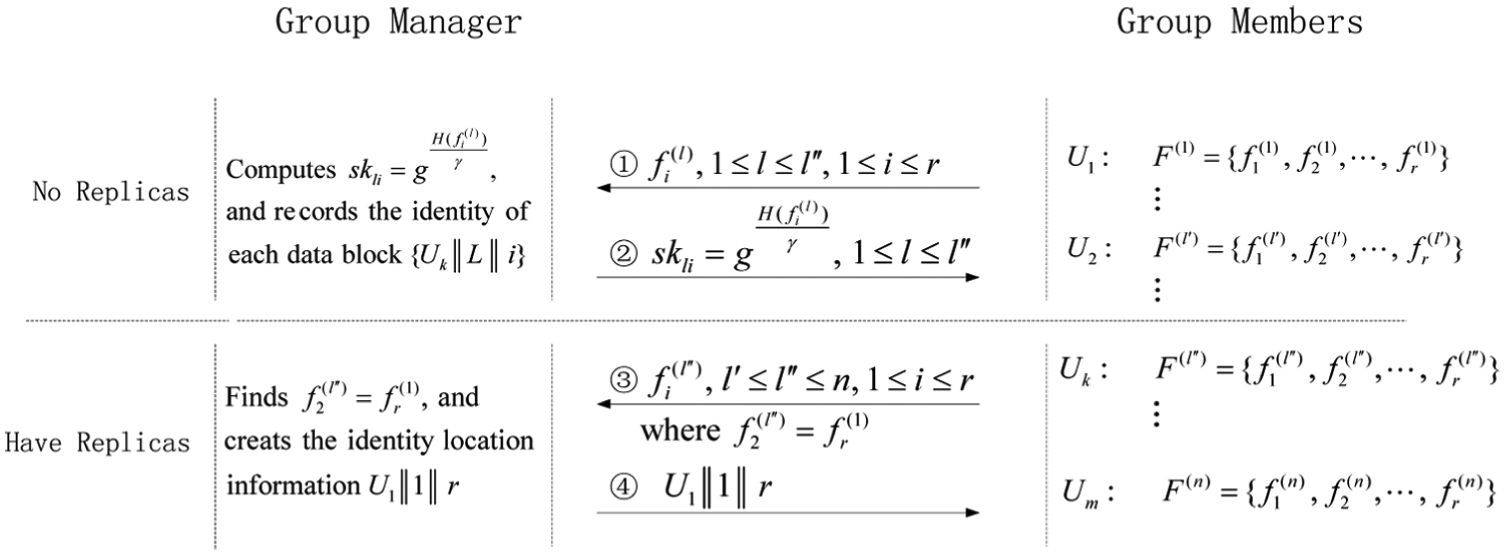

When the group manager computes the signature secret key of data blocks, he/she records the identity of each data block. If the group manager finds that a data block is the same as some stored data block, he/she informs the data block owner that there is no need to store the data block and does not send the signature secret key to the owner. The detailed process is shown in Figure 2. If there is no replica in the data blocks, the group manager generates the signature secret key

The generation of signature secret key.

Sign

If there is no duplicate in the data blocks, the group users compute signature of the data blocks and store them on the CSP. The user

Then, the user

If a data block

Challenge

The verifier performs the challenge algorithm for file

Choose

Select a random integer

Send the challenge vector

Response

Once obtaining the challenge vector from the verifier, the CSP makes a response to the challenge.

Aggregate the data blocks

Aggregate the data signature

Then, the CSP returns the proof vector

Verify

The TPA checks whether or not the following equality holds

where

Deduplication

In order to save storage space, the same data block is stored only once on the CSP in our scheme. That is, the CSP stores a data block only when it receives the first upload request for this data block. For the subsequent storage request for this data block, the CSP creates an access pointer to this data block. However, some dishonest group users may deceive the CSP and attempt to obtain the stored data blocks that do not belong to them.

Assume that a group user

When the user

Once receiving the set

Then,

The CSP performs the verification operation as follows

And checks whether or not the following equality holds

where

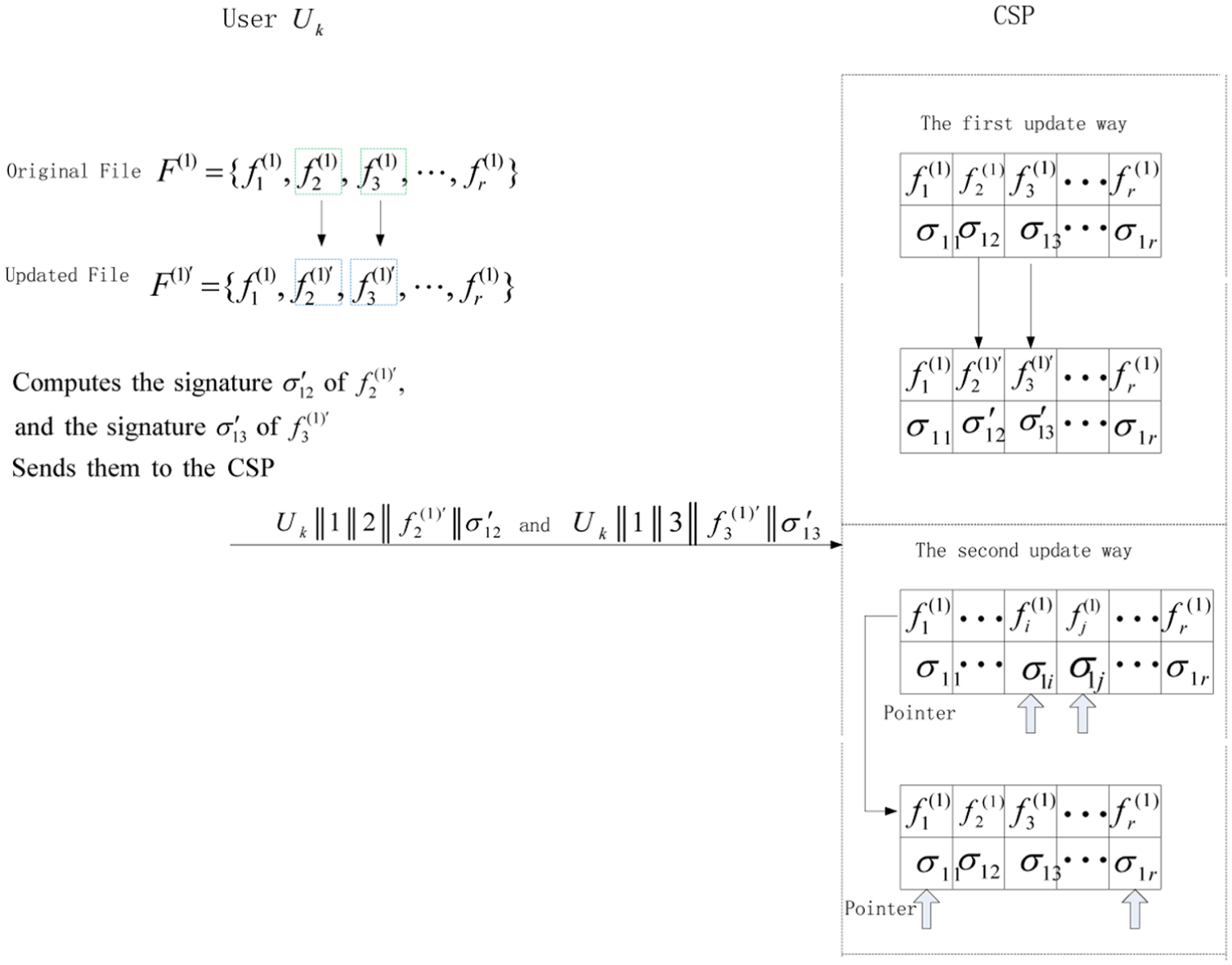

Update

The stored data blocks will be updated when the group users compile their files. The data block owner sends the update command that includes identity information of updated data block to the CSP. Then, the CSP updates the stored data blocks: (1) if the data block to be updated is stored on the CSP for the first time, the CSP replaces the original data block and its signature with the updated data block and signature. (2) If the data block to be updated is a copy of the stored data block, the CSP adjusts the pointer of this data block to the stored data block that is identical with the updated data block. Figure 3 provides us with an example of data block update.

The data block update process.

In Figure 3, we assume that the user

Security analysis

Theorem 1

Our data integrity verification protocol and deduplication checking protocol are correct.

Proof

The correctness of integrity verification protocol

where

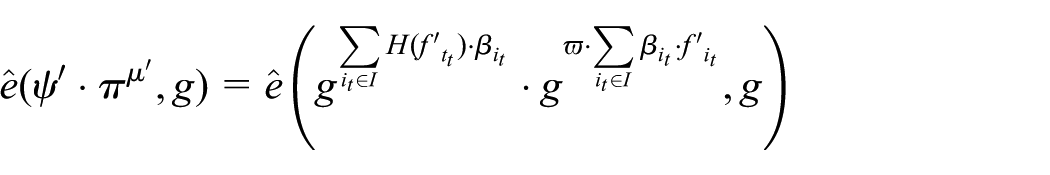

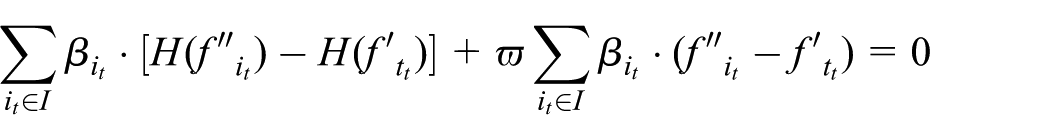

The correctness of deduplication checking protocol

where

Theorem 2

In our scheme, the response proof is unforgeable for any PPT adversary

Proof

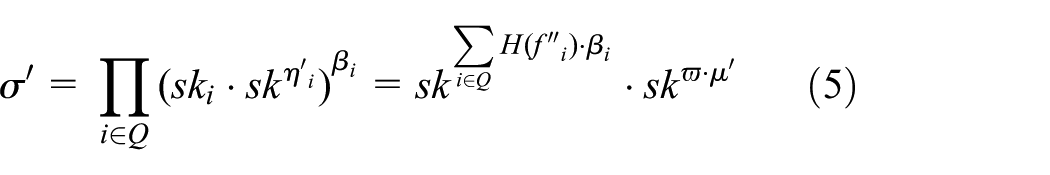

Referring to Wang et al., 30 the generation algorithm of secret key is a secure signature protocol. Hence, we mainly discuss security of signature scheme.

We assume that a PPT adversary

Query

Assume that

Challenge

The challenger

End

The adversary

where

For the correct proof information

According to equations (5) and (6), we can obtain

Since

Notice that

Theorem 3

The group user

Proof

Assume that a user

The CSP sends to the group user

Computes the signature secret key of each block of

Generates signature

Then,

and the stored data blocks, the indexes which belong to

Then, the CSP verifies the aggregation information as

If the result in equation (8) holds the verification equation, then

thus

That is

Thus, for

Therefore,

We have completed the proof of theorem.

Performance analysis

Numerical analysis

In order to evaluate the performance of our scheme, we first show computation efficiency in each step. Since the exponentiation operations and pairing operations in

Setup. In this stage, the group manager creates the group public key

KeyGen. For each file, the group manager needs one EXP to obtain the signature secret key of file. After receiving the signature secret, the group user needs two Pair operations to verify whether the secret key is correct or not.

Sign. For one data block, the user needs one Mult and one EXP operations to generate its signature. Response. Once receiving the challenge from the verifier, the CSP makes a response. He/she needs

Verify. The verifier needs one EXP, one Mult, and two Pair operations to verify whether the proof information is correct or not.

Deduplication. This process is completed by the interaction of group user and CSP. The group user needs

Update. The group user computes signature of updated data block and sends the updated data block and its signature to the CSP. For each updated data block, the group user needs one Mult and one EXP operations to generate its signature.

The communication costs are discussed in this section. Our scheme involves eight interactions that are shown in Figure 4. In the first interaction, the group manager sends the signature secret key and master secret key to the group user. Since the signature secret key and master secret key are elements in

The interaction of scheme.

Experimental analysis

To illustrate that the proposed scheme is efficient, we perform the experiments on a computer with Intel (R) Core (TM) i5-2400 CPU @ 3.10 GHz processor and 4 GB memory on the Win7 system and use Pairing Based Cryptography (PBC) library

33

to perform cryptographic operations in our scheme. In the following experiment, we assume that the number of file changes from 10 to 100, and each files consist of 64 data blocks. Let the size of an element of

As shown in section “System model and security goals,” we just generates the group public/secret key pair with one exponentiation operation in

Secret key generation time on the data blocks.

As shown in Figure 5, when there is no replica in the data blocks, the group manager needs to compute secret key of each data block, and the cost of secret key generation is approximately linear growth with the increase in data blocks. When there are 20% replicas in the data blocks, the group manager only needs to compute the secret key of 80% data blocks, and the cost of secret key generation is also approximately linear growth with the increase in data blocks, and the growth rate is smaller than no replica. When there are 40% replicas in the data blocks, the group manager only needs to compute the secret key of 60% data blocks, and the cost of secret key generation is linear growth with the increase in data blocks, and the growth rate is smaller than 20% replicas. When there are 60% replicas in the data blocks, the group manager only needs to compute the secret key of 40% data blocks, and the cost of secret key generation is also linear growth with the increase in data blocks, and the growth rate is smaller than 40% replicas. Since the group manager is only responsible for generating the signature secret key of single data block, the computational efficiency of secret key is higher and higher with the increase in replicas.

The computational cost of signature is shown in Figure 6. Because the group users are only responsible for generating the signature of single data block, the generation efficiency will be improved when there are replicas in the data blocks. We make the efficiency analysis with no replica, 20% replicas, 40% replicas and 60% replicas in the data blocks.

Signature generation time on the group users.

From Figure 6, when there is no replica in the data blocks, the group users need to compute signature of each data block, and the cost of signature is increased with the increase in data blocks. When there are 20% replicas in the data blocks, the group users only need to compute the signature of 80% data blocks, and the cost of signature is increased with the increase in data blocks, and the growth rate is smaller than no replica. When there are 40% replicas in the data blocks, the group users only need to compute the signature of 60% data blocks, and the cost of signature is also increased with the increase in data blocks and the growth rate is smaller than 20% replicas. When there are 60% replicas in the data blocks, the group users only need to compute the signature of 40% data blocks, and the cost of signature is approximately linear growth with the increase in data blocks, and the growth rate is smaller than 40% replicas. Since the group users are only responsible for generating the signature of single data block, the computational efficiency of signature is higher and higher with the increase in replicas. Thus, the deduplication can avoid additional computational cost in user side, communication cost in channel, and storage cost in CSP. Therefore, it is crucial for the group users and CSP to combine deduplication with the integrity validation scheme.

The efficiency of verification is represented in Figure 7. The number of data blocks that are chosen by the verifier changes from 400 to 4000. The batch verification and verification one by one are tested. From Figure 7, we can see that the computation cost of batch verification is constant and the computation time of verification one by one increases with the increase in verified data blocks. Although the efficiency difference of the two verification algorithms is small when the number of verified data blocks is 400, the computation time of verification one by one is about six times of the batch verification when the number of verified data blocks is 4000. In our scheme, the verifier chooses the batch verification.

Verification time of verifier.

In this section, we analyze the deduplication efficiency. We show the computation cost of group users and the CSP in Figures 8 and 9, respectively. Here, we choose the number of challenged data blocks changing from 20 to 200. For the group users, they need to compute the signature for the data blocks that are challenged by the CSP. Actually, this is a signature process. Therefore, the computation efficiency of group user in this process has a similar trend with signature generation. As the challenged blocks are only a small part of the whole data blocks, the computation time of response for group users in this process is also less than signature generation. As shown in Figure 8, when the number of data blocks is 20, it is about 0.68 s to generate signatures of challenged data blocks. When the number of challenged data blocks is 200, it is about 2.68 s to generate their signatures.

Signature time on the group users in deduplication.

Verification time of CSP in deduplication.

In deduplication, the CSP is responsible for checking whether or not the response of group user is correct. Here, the CSP first needs to aggregate the signature sent by the group user and then proves the verification equation. Although the computation cost of verification process for the CSP is constant, the aggregation cost of signature increases with the increase in data blocks. Because the aggregation involves exponentiation and multiplication operations in

In the update stage, the group user needs to compute signature of updated data block. For the group user, the computation cost mainly comes from the signature computation. Therefore, we evaluate the efficiency of update by referring signature cost and no longer make a detailed efficiency analysis.

Conclusion

In this article, we propose a data possession–checking protocol with deduplication. Our scheme is featured by a special signature secret key. Any illegal group users cannot generate a valid signature secret key. Since deduplication operations are performed by group users and group manager, additional communicational and computational costs are saved. Our scheme supports data block update and efficient deduplication, which guarantees that an illegal group user cannot obtain the file information of other valid group user. The provided security analysis and experiment evaluation show that our scheme is secure and practical for real application scenario.

Footnotes

Acknowledgements

The authors are very grateful to the editor and reviewers for their suggestions to improve the quality of paper.

Academic Editor: Luca Foschini

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National key Research and Development Program of China (2016YFB 0800903), the NSF of China (U1636212, U1636112).