Abstract

This article presents a distributed Bayesian algorithm for reconstructing sparse signals in wireless sensor networks, based on variational sparse Bayesian learning and a consensus filter. The proposed approach targets wireless sensor network applications that have no fusion center. Each node first calculates local information quantities from its own measurement matrix and measurements. A consensus filter then diffuses these local quantities to the other nodes, so that every node obtains an approximation of the global information. Finally, the signals are reconstructed by variational approximation using the resulting global information. Simulation results demonstrate that the proposed distributed approach converges to its centralized counterpart and achieves good recovery performance.

Introduction

Ongoing concerns about the environment and global warming pose additional challenges in meeting the increasing demand for wireless communication networks.1,2 Green communication (GC) has become a new trend in the design and operation of such networks. In future communication systems, the Internet of Things (IoT) will play an important role. As a key enabling technology of IoT, wireless sensor networks (WSNs) help IoT to flourish by fusing sensing and wireless communication.3,4 Generally, a WSN consists of a large number of sensor nodes with low processing capability, limited power, low storage capacity, and unreliable communication over short-range radio links.5,6 Based on WSNs, the potential applications of IoT in industrial automation, habitat monitoring, and smart cities are numerous and diverse.7–10 However, given the widespread interest in IoT and its adoption by various organizations, its energy demand will increase dramatically in the near future, leading to a higher carbon footprint and other environmental issues.

The recently developed compressive sensing (CS) theory11,12 is a new sampling paradigm that can acquire the information contained in large-scale data using far fewer samples than are required by the Nyquist sampling theorem. By exploiting sparsity, which is an inherent characteristic of many natural signals, CS enables a signal to be stored in a few samples and subsequently recovered accurately. CS has been extensively applied in WSNs, since the signals in many applications exhibit sparsity. Sparse Bayesian learning (SBL) was introduced in Tipping 13 and has become a popular method for sparse signal recovery in CS.14,15 In SBL, the sparse signal recovery problem is formulated from a Bayesian perspective, and the sparsity information is exploited by assuming a hierarchical sparse prior on the signal of interest. To recover the values of the model parameters, an inference algorithm is derived based on the well-known Type-II maximum likelihood, which assumes that the hyperpriors are uninformative.16 In contrast, a fully Bayesian treatment was introduced in Bishop and Tipping 17 with the variational rendition of sparse Bayesian learning (VSBL), in which posterior distributions over both the model parameters and the hyperparameters are estimated.16

On the other hand, owing to its high fault tolerance and scalability, distributed processing is becoming increasingly popular in WSN applications. Unlike the centralized approach, which relies on a fusion center (FC), distributed processing requires no central coordinator and only single-hop communications among neighbors, which aim to achieve consensus on local estimates. However, most sparse signal recovery algorithms operate in a centralized manner. Recently, distributed processing for CS applications has received considerable attention.18–21 The VSBL algorithm, in particular, is a centralized method and cannot be used directly for sparse signal reconstruction in a distributed WSN.

Average-consensus algorithms have lately been investigated as a family of low-complexity iterative distributed algorithms, in which the sensors in a group communicate with each other to reach a consensus.22 In more detail, each sensor receives information from others and adjusts its own information state with the goal of reaching an agreement in a scalable and fault-tolerant manner.23 Consensus was initially elaborated in Tsitsiklis et al. 24 and has received considerable attention in many fields owing to its wide range of applications, such as load balancing in parallel computing,25 coordination of autonomous agents, distributed control,26 and data fusion.27–29

In this article, we develop a distributed sparse signal reconstruction algorithm using probabilistic graphical models in the Bayesian framework. First, three global information quantities are designed for distributed sparse Bayesian inference by inspecting the centralized update equations. Then, in each local variational Bayesian (VB) step, several average-consensus iterations are run to reach a consensus on these global information quantities. In comparison with the centralized VSBL algorithm, the proposed algorithm allows all sensors to reconstruct the sparse signal in parallel using local information and moderate inter-node communication.

The rest of this article is structured as follows. Section “Background” reviews the fundamentals of compressive sampling and introduces sparse signal recovery using SBL. The system model is described in section “Problem statement and system model.” The centralized variational Bayesian inference for the system model is developed in section “Variational approximation.” Then, the proposed distributed sparse signal reconstruction is presented in section “Distributed variational SBL algorithm.” Numerical results are provided in section “Simulations,” followed by conclusions in the final section.

Notation

Throughout this article, we use b,

Background

In the following, we briefly review the principles of CS and of SBL,13,30 which is a centralized sparse signal recovery algorithm.

Let

where

where

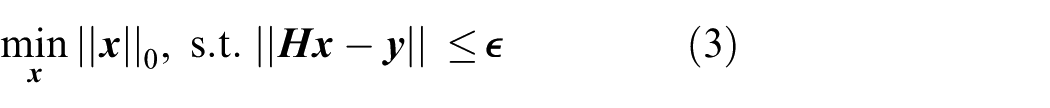

In order to recover

where

where

From a Bayesian perspective, the CS problem can also be formulated by SBL whose close relationship to non-convex

where

As per the analysis in Tipping, 13 solving equation (6) is equivalent to minimizing the following cost function

where

In Wipf and colleagues,30,34 authors provide the theoretical justification for applying SBL to sparse signal recovery and demonstrate superior performance compared with other algorithms.

Problem statement and system model

A network with K nodes modeled by an undirected graph

An example of network structure.

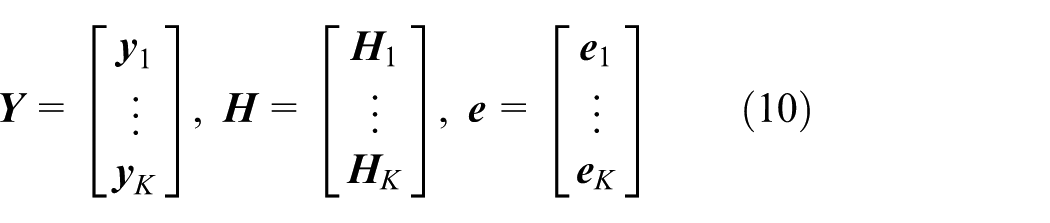

Assume that each node observes a linear combination of the unknown sparse signal

where

Then the global observation model is given by

where

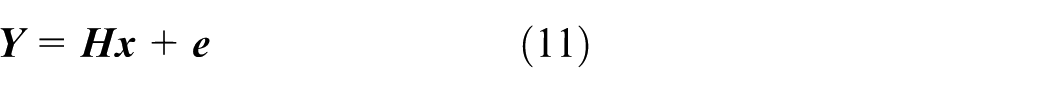

Moreover, an appropriate conjugate prior with respect to equation (12) is further placed on the parameter

To reflect our knowledge about sparsity of

where

So far, the system model has been developed. For the Bayesian model described above, we illustrate the directed acyclic graph (DAG) in Figure 2, where

DAG of the hierarchical Bayesian model.

Variational approximation

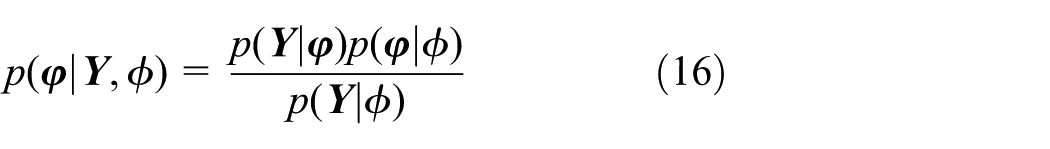

According to Bayes’ theorem

where

where F is the free energy given by the following expression

and

where KL is the Kullback–Leibler divergence between the true posterior

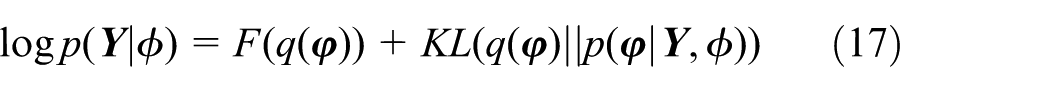

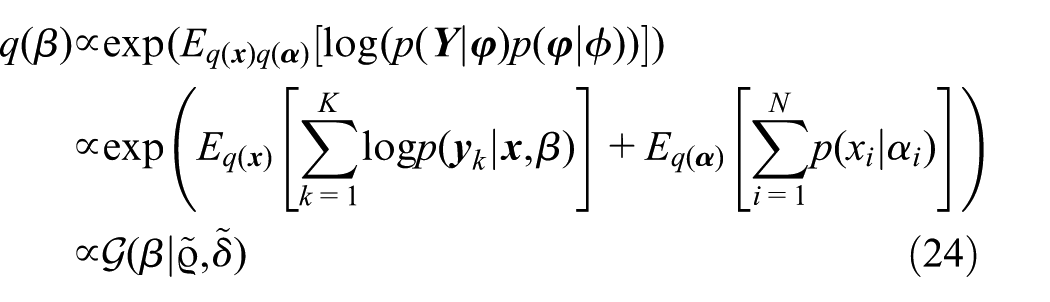

Based on the mean-field theory from statistical physics,

that is, all model parameters are assumed to be a posteriori independent. This fully factorized form of distribution

where

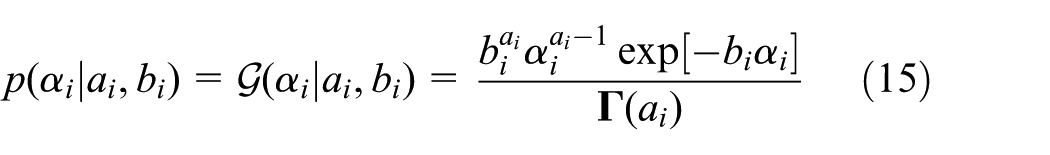

The log joint distribution of Y and

Due to the conjugacy properties of the chosen distributions, as mentioned previously, a general solution (21) can be derived analytically as follows

where

The required moments can be easily evaluated using the following results

From the above, the derived formulas can be used to compute the model parameters in a centralized manner when all the measurements can be gathered at an FC. In the distributed scenario, however, there is no FC in the network, and the formulas derived above cannot be implemented directly. To develop the distributed algorithm for VSBL, these formulas are reformulated in the following section so that the VSBL can be used for distributed sparse learning in a WSN.
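For reference, the centralized computation described above can be sketched in Python as follows. This is a minimal sketch of the standard variational SBL iteration, written under assumptions of ours rather than copied from the equations of this article: a Gaussian likelihood with noise precision beta, zero-mean Gaussian priors on the coefficients with individual precisions alpha_i, and Gamma hyperpriors with parameters `a`, `b` (for alpha_i) and `c`, `d` (for beta). The function name `vsbl` and all variable names are illustrative.

```python
import numpy as np


def vsbl(Phi, y, n_iter=100, a=1e-6, b=1e-6, c=1e-6, d=1e-6):
    """Centralized variational SBL sketch (assumed model, see lead-in).

    Phi : (M, N) measurement matrix gathered at the fusion center
    y   : (M,) stacked measurements
    Returns the posterior mean and covariance of the signal and the
    posterior means of the precisions alpha (per coefficient) and beta.
    """
    M, N = Phi.shape
    G = Phi.T @ Phi          # Gram matrix, reused in every iteration
    h = Phi.T @ y            # correlation of the measurements with Phi
    e_alpha = np.ones(N)     # E[alpha_i], initial guess
    e_beta = 1.0             # E[beta], initial guess

    for _ in range(n_iter):
        # Gaussian factor q(w): covariance and mean
        Sigma = np.linalg.inv(e_beta * G + np.diag(e_alpha))
        mu = e_beta * Sigma @ h

        # Gamma factors q(alpha_i): shape a + 1/2, rate b + E[w_i^2] / 2
        e_alpha = (a + 0.5) / (b + 0.5 * (mu**2 + np.diag(Sigma)))

        # Gamma factor q(beta): shape c + M/2, rate d + E||y - Phi w||^2 / 2
        resid = y - Phi @ mu
        expected_sq_err = resid @ resid + np.trace(G @ Sigma)
        e_beta = (c + 0.5 * M) / (d + 0.5 * expected_sq_err)

    return mu, Sigma, e_alpha, e_beta
```

Note that the loop touches the data only through `Phi.T @ Phi`, `Phi.T @ y`, and the residual energy, which is why a reformulation in terms of a few aggregated quantities is possible.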

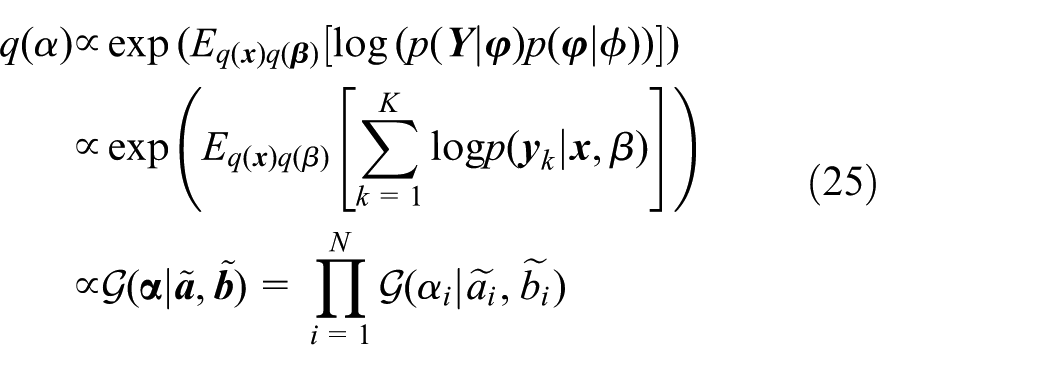

Distributed variational SBL algorithm

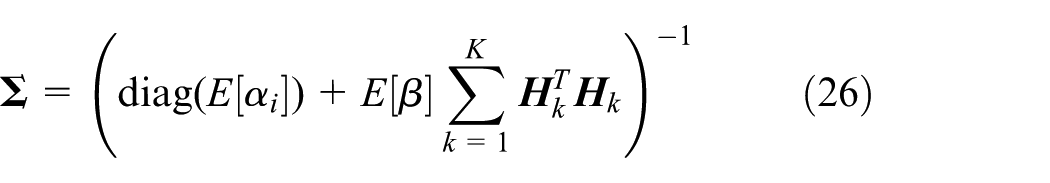

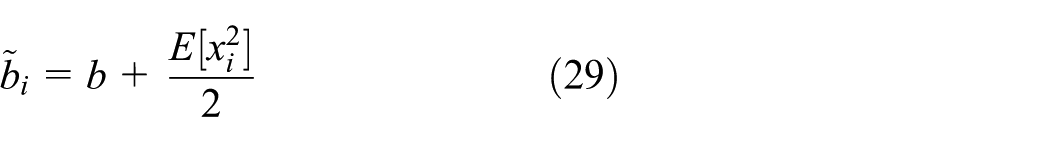

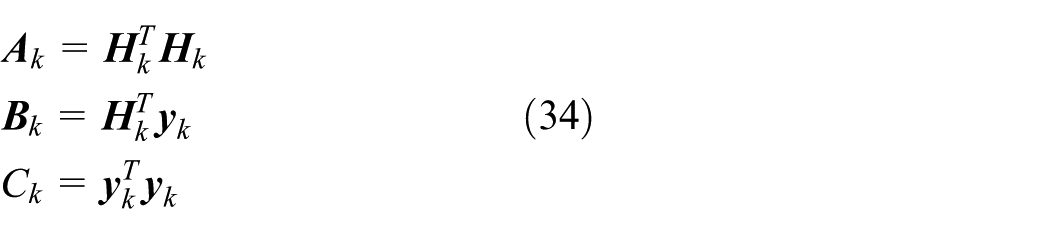

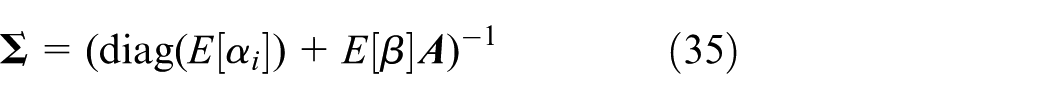

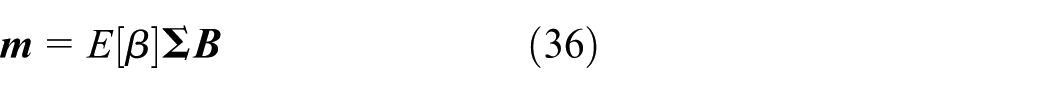

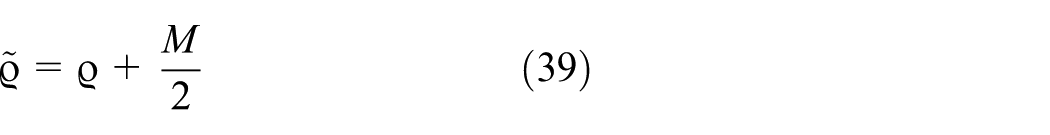

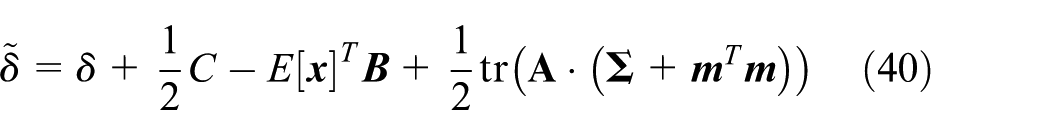

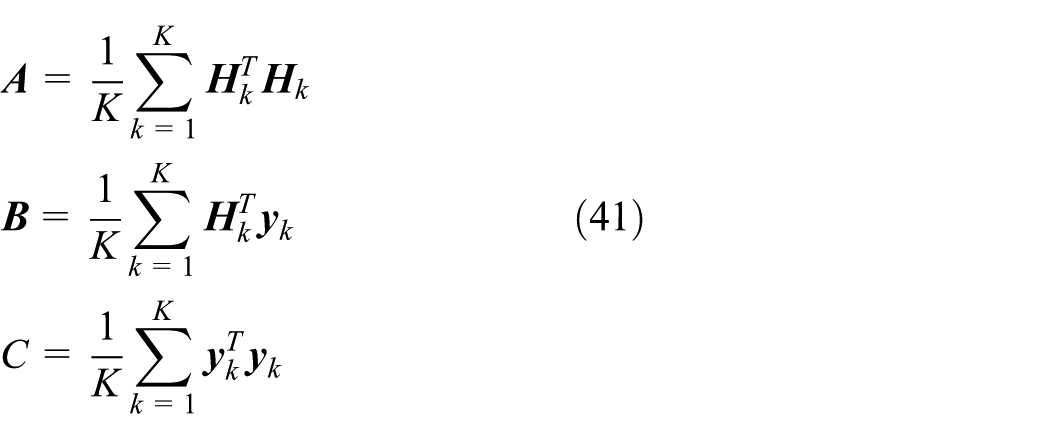

Since the measurements are distributed across K different nodes, the following global quantities can be defined by inspecting equations (26)–(31)

where

Hence, equations (26)–(31) can be reformulated as follows

It should be noted that all the required parameters can be computed with the variational approximation method using the global quantities. For distributed calculation, each sensor communicates with its neighbors and operates accordingly. In the distributed variational sparse Bayesian learning (DVSBL) algorithm, the global quantities

It is easy to see that the above redefinition has no impact on the parameter approximations in equations (26)–(31). Inspecting the averaging formulas in equation (41), we see that the average-consensus filter suggested in Kingston and Beard 36 can be employed to approximate the global information quantities. In particular, each sensor exchanges its local information quantities with its neighbors and then updates its estimate of the global information quantities from the received values using the consensus filter. Hence, the DVSBL algorithm can be developed by employing such an average-consensus filter.
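The relation between local and global quantities can be illustrated with a short Python sketch. It assumes, as our reading of the text rather than a transcription of the lost equations, that the three global information quantities are the Gram matrix, the measurement correlation, and the measurement energy of the stacked model, and that each is the sum over the nodes of the corresponding local quantity. The function names are hypothetical.

```python
import numpy as np


def local_quantities(Phi_k, y_k):
    """Information quantities a node can compute from its own data only."""
    return Phi_k.T @ Phi_k, Phi_k.T @ y_k, float(y_k @ y_k)


def global_from_local(local_list):
    """Recover the global quantities from the network-wide averages.

    An average-consensus filter delivers averages, so each average is
    multiplied by the number of nodes K to obtain the corresponding sum.
    """
    K = len(local_list)
    G_avg = sum(q[0] for q in local_list) / K
    h_avg = sum(q[1] for q in local_list) / K
    s_avg = sum(q[2] for q in local_list) / K
    return K * G_avg, K * h_avg, K * s_avg
```

With exact averages, the returned values coincide with those computed from the stacked measurement matrix and measurement vector at a fusion center; with a finite number of consensus iterations, each node holds only an approximation of them.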

According to Kingston and Beard, 36 a consensus filter can be formulated in compact continuous-time form as follows

where

The discrete-time form of consensus filter suggested in Kingston and Beard 36 is as follows

where

It can be noted from the above equations that each node must communicate with its neighbors several times before performing each variational approximation step. However, this iterative exchange of messages among the nodes inevitably consumes time and energy, and it is hard to know how many iterations are necessary to achieve consensus in different application scenarios. These are the main limitations of the proposed distributed VSBL.
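The repeated neighbor exchange can be sketched in Python as follows. The sketch uses the standard discrete-time average-consensus rule, in which every node moves its state toward the states of its neighbors by a fixed step size, as a stand-in for the filter of Kingston and Beard 36; the exact weights used in this article are not reproduced here. The state is a scalar for brevity, and each entry of a vector or matrix quantity would be processed in the same way. The names `average_consensus`, `neighbors`, and `step` are illustrative.

```python
def average_consensus(values, neighbors, step, n_iter):
    """Run n_iter rounds of synchronous average consensus.

    values    : list of initial local values, one per node
    neighbors : dict mapping node index -> iterable of neighbor indices
                (undirected graph, so the relation must be symmetric)
    step      : step size; assumed smaller than 1 / (maximum node degree)
    """
    x = list(values)
    for _ in range(n_iter):
        # Every node uses only the previous-round values of its neighbors.
        x = [
            xi + step * sum(x[j] - xi for j in neighbors[i])
            for i, xi in enumerate(x)
        ]
    return x


# Example: six nodes on a ring, each starting from a different local value.
ring = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}
estimates = average_consensus([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], ring, 0.3, 100)
```

On a connected undirected graph, a step size below the reciprocal of the maximum degree preserves the network-wide sum and drives every node toward the average of the initial values. The number of rounds needed for a given accuracy depends on the topology, which is the difficulty noted above.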

Another concern is the design of the weight matrix

Moreover, it has been shown that the second smallest eigenvalue of

The performance of the proposed algorithm is validated using simulations, and the results are presented in the next section. The complete algorithm is given in Algorithm 1 (Figure 3).

Algorithm architecture.

Simulations

First, a sensor network with six nodes is used to verify the performance of the proposed algorithm for distributed WSNs (Figure 4). Without loss of generality, the considered six-node network is represented by an undirected graph

Random topology.

In the considered example, the signal

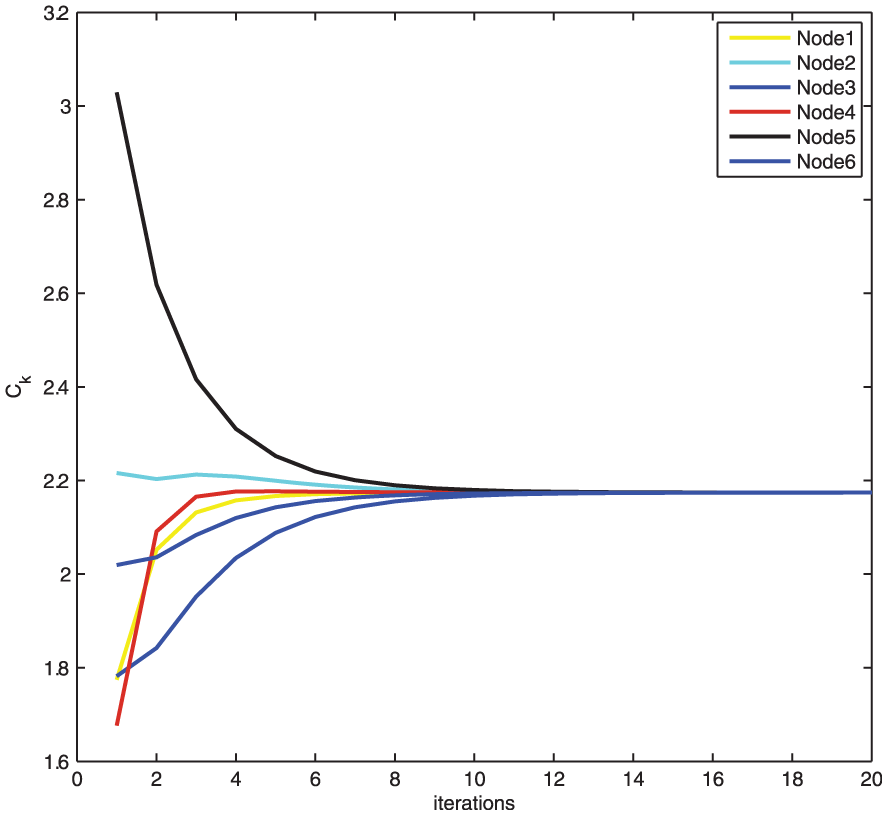

The convergence rate of the proposed algorithm is shown in Figures 5 and 6. It can be seen from the figures that both the local information quantity

Convergence for local information quantity

Normalized MSE versus iteration.

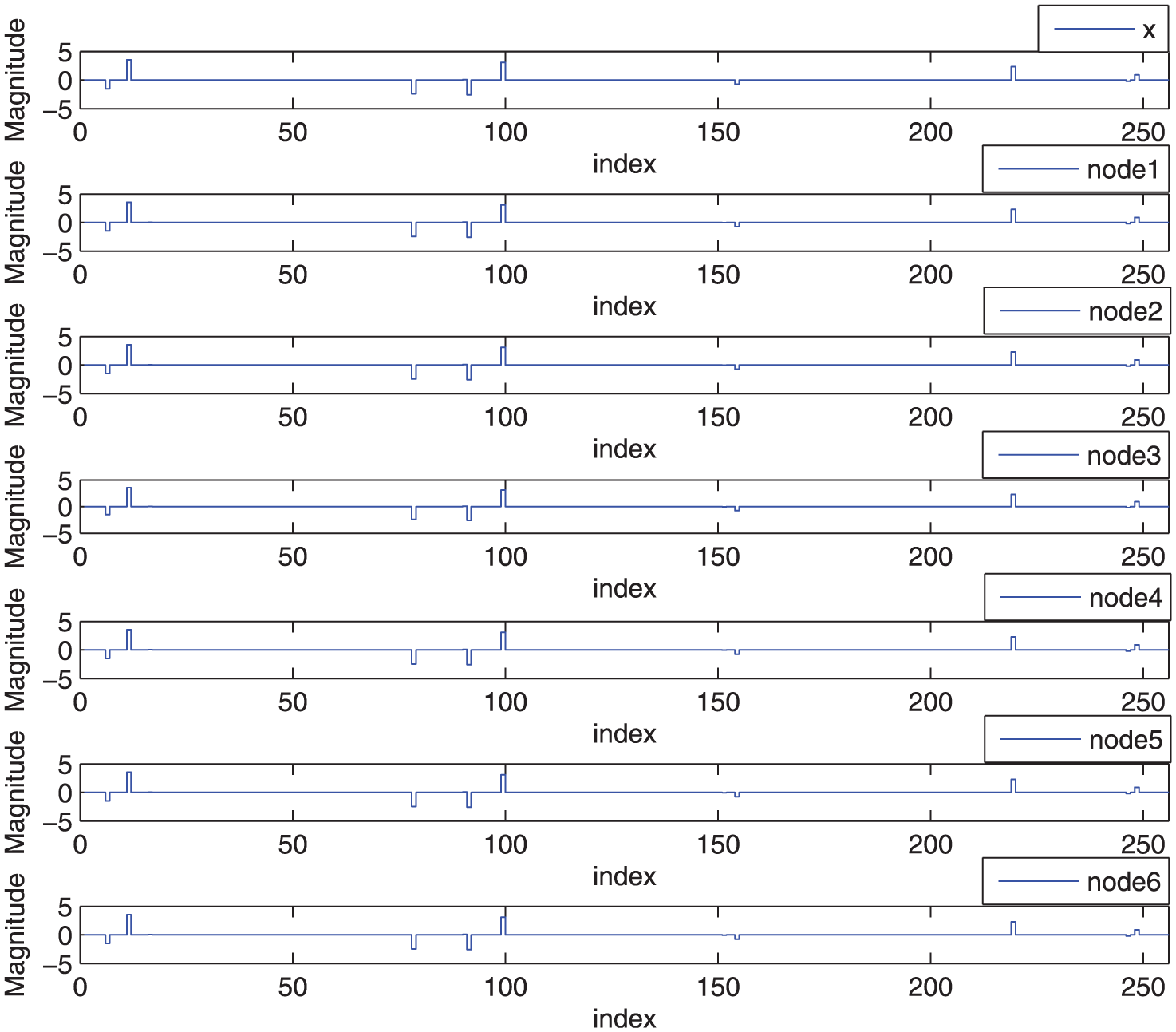

The estimated

Estimation of

To demonstrate the scalability of the proposed distributed algorithm, we consider two networks of different sizes: an L-connected Harary graph with 24 nodes and an L-connected Harary graph with 72 nodes. Here, L denotes the number of neighbors of each node, and L is set to 3 in the considered example. The error performance is shown in Figures 8 and 9. Irrespective of network size, both networks achieve reconstruction performance comparable to that of the centralized VSBL.
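Such a topology can be generated with a few lines of Python. The sketch below assumes the standard Harary construction for connectivity 3 and an even number of nodes, in which every node is linked to its two ring neighbors and to the diametrically opposite node; the construction actually used in the simulations is not stated in the text. The function name is hypothetical.

```python
def harary_3_connected(n):
    """Neighbor sets of the 3-connected Harary graph on n nodes (n even).

    Node i is linked to i - 1 and i + 1 on a ring and to the opposite
    node i + n/2, so every node has exactly three neighbors.
    """
    if n < 4 or n % 2 != 0:
        raise ValueError("this sketch only covers even n >= 4")
    half = n // 2
    return {
        i: {(i - 1) % n, (i + 1) % n, (i + half) % n}
        for i in range(n)
    }


small_network = harary_3_connected(24)
large_network = harary_3_connected(72)
```

Both graphs have the same node degree, so the per-node communication cost of one consensus round is the same for 24 and 72 nodes; only the number of rounds needed to reach agreement changes with the network size.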

Comparison of Normalized MSE (24 nodes).

Comparison of Normalized MSE (72 nodes).

The average relative error (ARE) for the 72-node network is presented in Figure 10. The relative error after two iterations is negligible, which demonstrates that the proposed algorithm is scalable.

ARE versus iteration.

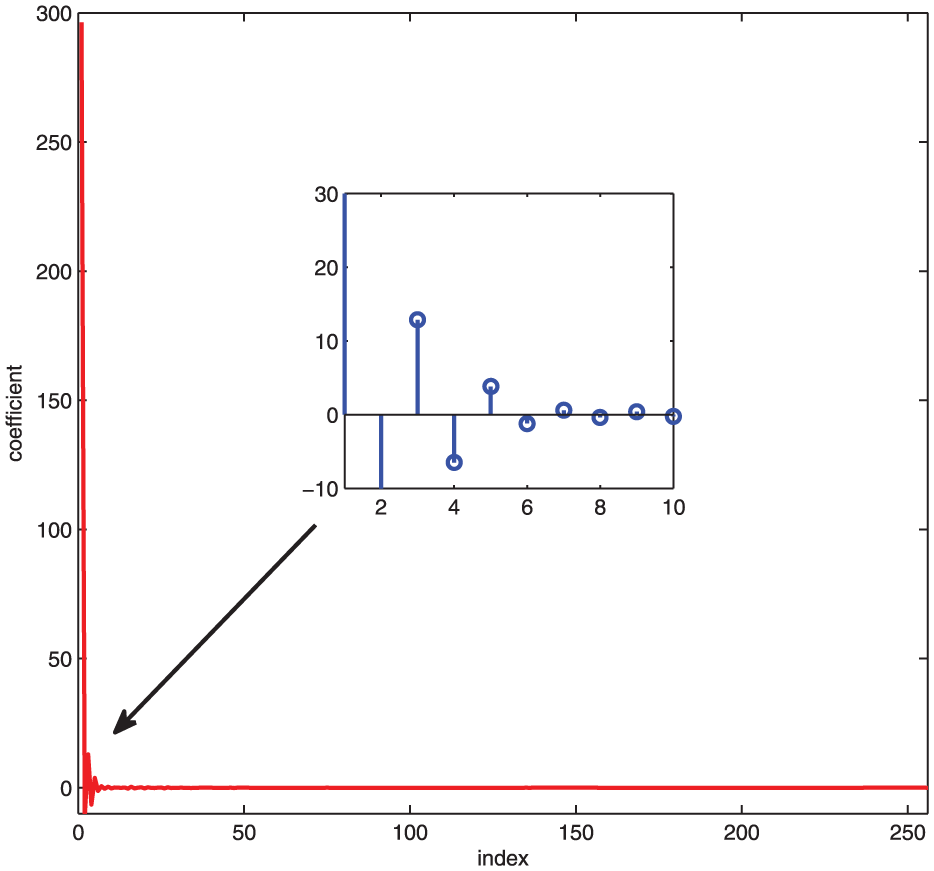

Finally, real temperature signals obtained from the Intel Berkeley Research laboratory are used to test the effectiveness of the proposed algorithm. The temperature signals are represented in the discrete cosine transform (DCT) domain, as shown in Figure 11. In this context, a three-connected Harary graph with 24 nodes is employed. The effectiveness of our distributed algorithm is demonstrated in Figure 12, where both the original signal and the signal reconstructed at one node are provided. It can be observed from Figure 12 that both the proposed distributed algorithm and the centralized VSBL successfully reconstruct the temperature signals.
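The role of the DCT as a sparsifying basis can be illustrated with the following Python sketch. It builds the orthonormal DCT-II matrix directly from its definition and keeps only the largest coefficients of a signal. The function names and the choice of the orthonormal DCT-II variant are assumptions made for illustration, and the temperature data themselves are not reproduced.

```python
import numpy as np


def dct_matrix(n):
    """Orthonormal DCT-II matrix C, so that coeffs = C @ x and x = C.T @ coeffs."""
    k = np.arange(n)[:, None]   # frequency index (rows)
    t = np.arange(n)[None, :]   # sample index (columns)
    C = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * t + 1) * k / (2 * n))
    C[0, :] = np.sqrt(1.0 / n)  # the constant row needs its own scaling
    return C


def keep_largest(coeffs, s):
    """Zero all but the s largest-magnitude coefficients."""
    out = np.zeros_like(coeffs)
    idx = np.argsort(np.abs(coeffs))[-s:]
    out[idx] = coeffs[idx]
    return out
```

For a slowly varying signal such as a temperature record, most of the energy is expected to fall into a few low-frequency coefficients, so the sparse vector to be recovered is the coefficient vector and the effective measurement matrix is the product of the sensing matrix and `C.T`.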

The coefficients of DCT.

Reconstructed results of temperature signal.

In summary, the results presented above demonstrate the effectiveness of the distributed sparse signal reconstruction algorithm proposed in this article.

Conclusion

In this article, a new distributed variational SBL algorithm for sparse signal reconstruction in WSNs is presented. By combining VSBL with an average-consensus algorithm, the DVSBL is consistent with the centralized VSBL, in which all data are available at an FC. Experimental results demonstrate the good recovery performance and convergence properties of the proposed distributed algorithm.

Footnotes

Academic Editor: Wei Yu

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work is supported by “Qing Lan Project” and “the National Natural Science Foundation of China under Grant 61572172”; “the Fundamental Research Funds for the Central Universities, No. 2016B10714”; “The Basic Research Plan in Shenzhen City under Grant (No. JCYJ20130401100512995. JCYJ20140418100633654)”; “the National Natural Science Foundation of China (No. 61001125)”; “PAPD”; and “CICAEET.”