Abstract

Communication sits at the heart of any coordination within organizations. Yet, what are the consequences when employees use Artificial Intelligence (AI) to copilot, i.e., support, their communication? While AI support in human interactions holds much promise for improving communication quality at work, it also fundamentally challenges how much people trust that communication. We, therefore, ask how organizations should introduce AI. In particular, we focus on the responsibility of leaders as stewards of workplace communication. Accordingly, we offer a set of specific hands-on recommendations on how employees should be guided to use AI copiloting effectively so that they do not give in to the temptation of letting go of the “steering wheel” (i.e., allowing AI to [auto]pilot intraorganizational communication).

Introduction

With artificial intelligence (AI) entering employees’ daily work (Bankins et al., 2023; Brynjolfsson et al., 2023; Dell’Acqua et al., 2023), the dominant focus of present scientific and practical inquiry is whether and how generative AI can make employees more efficient, productive, and creative (Jia et al., 2024). Surprisingly, to date, stakeholders have engaged far less with the implications AI holds for human-to-human communication in organizations, even though these interactions represent a critical prerequisite for performance and innovation.

Interpersonal communication constitutes up to 50% of daily work for the average employee, with numbers rising to 80% for managers (Mintzberg, 2013). But with people feeling more and more resource-depleted (American Psychological Association, 2023), communication suffers (Trougakos et al., 2015). This results in higher levels of disrespect and incivility (Pearson & Porath, 2004), with recent reports suggesting experiences of incivility to be already at 70% within US workplaces (Yao et al., 2022). Next to creating a host of negative outcomes (e.g., turnover, physical illness; Cortina et al., 2013; Nielsen & Einarsen, 2012), such increased incivility undermines human relations at work (Cortina et al., 2013) and as such also impairs worker productivity and creativity (Byron et al., 2010; Gilboa et al., 2008). Unless people get more time for their communication and become more deliberate about it, this trend is likely to continue.

Herein lies the promise of AI copiloting, i.e., the augmentation of workplace interactions by a continuously available generative AI tool that combines Large Language Models and conversational interfaces (Hancock et al., 2020): to (co)produce more respectful communication in less time. But at what price? Will we still trust such communication? Indeed, given the increasing ubiquity and performance benefits of AI copiloting, the question is not whether AI will become an integral part of future human workplace communication. Instead, the main concern needs to be how to best go about its implementation so that workplace communication can become more civil and respectful but does not lose its relational signaling function.

Against that background, we discuss what leaders as role models and stewards of organizational communication can do so that AI adoption helps improve workplace communication while also retaining people's trust in the communication itself. To this end, in this commentary, we suggest three interrelated domains where organizational leaders need to counter the currently too-simplistic notion that “things will sort themselves out” when copiloting is activated: (1) How to encourage adoption of AI to improve interpersonal communication, (2) How to ensure human ownership of AI-supported interpersonal communication, and (3) How to inject learning from and into AI-supported interpersonal communication.

Considerations Concerning the Adoption of AI for Interpersonal Communication

A plethora of research shows that, objectively speaking, AI-based chatbots can quite effectively communicate or support human communication (including emotional and complex interpersonal matters; e.g., Jakesch et al., 2023; Yin et al., 2024). For example, scholars have now repeatedly found that AI-generated advice or answers are rated as more empathic than human responses (Li et al., 2024). People also feel more heard by AI (Ayers et al., 2023) and feel that their emotional understanding and awareness are enhanced with AI support (Fu et al., 2023). A set of recent studies even showed that AI-supported communication can also improve experiences of trust and a sense of closeness between humans interacting with each other (Hohenstein et al., 2023; Hohenstein & Jung, 2020). Additionally, more generally, using AI may allow us to break the otherwise all-too-often occurring negative spiral of heated discussions and in-the-moment aggressive replies by providing the opportunity to pause and intentionally reflect on communication alternatives (Costello et al., 2024). Thus, empirically speaking, humans communicating with the support of AI holds great potential for people to experience workplace communication as more civil and respectful.

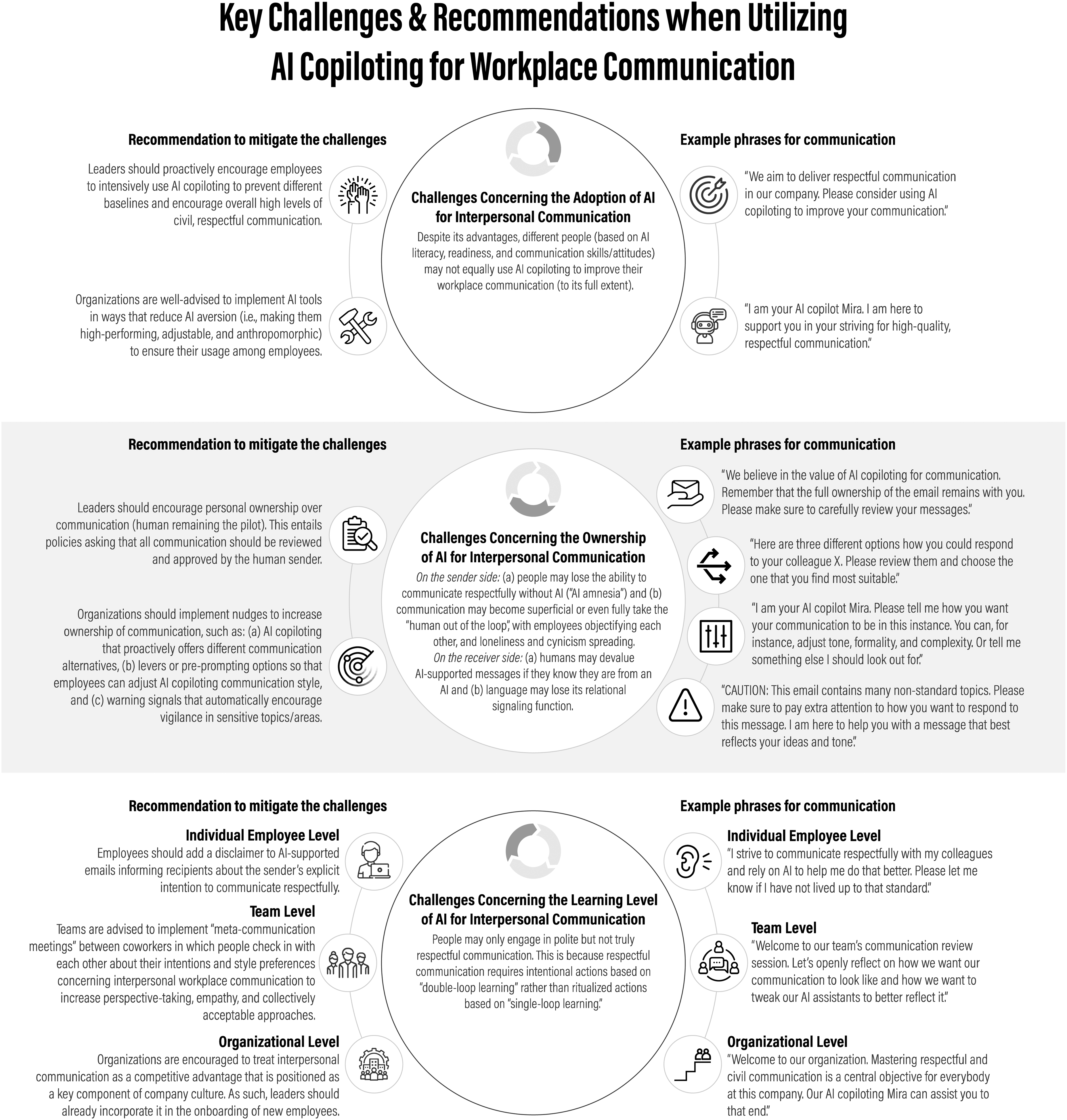

Nonetheless, individual differences in AI literacy, technological openness, as well as general communication skills and attitudes may play a role in how quickly, easily, and effectively people can and will rely on AI copiloting for their communication (Noy & Zhang, 2023; Park et al., 2024). For example, among sales agents, the gap between better and worse salespeople was shown to widen with AI support because better workers made more and better use of AI (Jia et al., 2024). Yet, as that study and another field experiment with customer service agents show, this gap can be closed when AI support is more thoughtfully introduced (Brynjolfsson et al., 2023). Taking such AI divide patterns (Wang et al., 2024) seriously, it seems organizations are well-advised not only to make AI assistance available but also to have their leaders encourage broad adoption among employees. When doing so, organizations should particularly implement AI tools that are transparent or rather transparently used (Purcell et al., 2024) and somewhat anthropomorphic (i.e., more human-like; for review see, e.g., Li & Suh, 2022) as well as give users a feeling of autonomy and control because all of these factors have been shown to help overcome different AI aversion tendencies (Dietvorst et al., 2018, 2015). The top panel within Figure 1 summarizes the general challenge of AI adoption in the context of workplace communication and illustrates what leaders are advised to do in order to respond to it.

Figure 1. Key challenges and recommendations when utilizing AI copiloting for workplace communication.

Considerations Concerning the Ownership of Interpersonal Communication

Whereas we have outlined above that the content of workplace communication has the potential to generally become more respectful under AI copiloting, the literature also highlights risks for both the sender and receiver sides. On the sender side, employees may be tempted to outsource too much of their communication to AI. Indeed, research shows that AI tools, once readily available at the fingertips of workers, are heavily and often blindly utilized to perform key workplace tasks and activities (Brynjolfsson et al., 2023; Jia et al., 2024; Park et al., 2024). In extrapolation to AI-assisted communication, a pessimistic outlook for the future is that employees’ communication skills will therefore deteriorate. This aligns with work on “digital amnesia,” where constant use of technology impaired human skills normally used for respective behaviors (e.g., effects of GPS on spatial navigation or Google on memory; Dahmani & Bohbot, 2020; Sparrow et al., 2011). So, as humans come to rely on AI copiloting for their communication, a deskilling process may happen such that they eventually become worse at the underlying communication skills (“use it or lose it” hypothesis; Dietvorst et al., 2018, 2015). In fact, the dynamics may become worse as people are also more likely to detach themselves from their communication and cut themselves some more slack regarding transgressions when using AI (Dong & Bocian, 2024). Unsurprisingly then, using AI support for one's communication may also prompt feelings of having more superficial connections at work (Tang et al., 2023; Yam et al., 2022). In this confluence, it then also does not help that humans generally seem to overestimate how much others are using AI for their communication (Purcell et al., 2024).

As for the risks on the receiver side, studies suggest that although AI objectively demonstrates enhanced communicative capabilities, as of now, humans still tend to devalue AI-generated responses if they know a message comes from an AI. Partially, this is because they tend to trust AI-generated or -supported text less than human-generated content (see the example of feedback: Tong et al., 2021 or on ethical decisions: Jago, 2019). As such, the current research shows that once aware of AI involvement, humans display negative emotions toward content fully or partially generated with AI support (e.g., Yin et al., 2024). This negative evaluation so far seems particularly strong for contexts that are, for example, directly relevant to a person's identity (e.g., creative work), concern higher- rather than lower-construal topics (e.g., why vs. how to do something), and for positive vs. negative decisions (Kim & Duhachek, 2020; Morewedge, 2022; Yalcin et al., 2022). That said, given that people tend to habituate to technology (Filiz et al., 2021; Turel & Kalhan, 2023), we expect that such devaluing tendencies against AI-supported human-to-human communication at work may only be an intermediate phenomenon. In fact, more recent studies already suggest that humans, at least explicitly, are more accepting of others' use of AI technology when it is done openly (Purcell et al., 2024). However, the more general risk remains that language's relational signaling function is severely reduced when AI enters the mix, as people may mistrust the content and start to objectify others more (Granulo et al., 2024).

Against that backdrop, we suggest that human leaders need to make it resoundingly clear for senders and receivers alike: AI is a tool, but the senders and therefore owners of this communication are the humans. Humans need to remain the pilot. The second panel in Figure 1 outlines various proposals on how to achieve that. For these, we draw on domains where technological temptations are high but human ownership is paramount. For example, to prevent users from blindly following GPS advice, newer versions present users with alternative route choices. Even though some of these route options are clearly inferior, this simple nudge helps users to assume more responsibility (Anagnostopoulou et al., 2017). Correspondingly, AI copiloting for communication should be structured in a way that such tools proactively offer alternative formulations that users may choose from, again underlining that the final decision rests with the sender. An additional nudge for more ownership may come from a signaling system that, like weather warnings for pilots, automatically parses incoming information for emotionality or idiosyncrasy of the topic and accordingly recommends increased human vigilance. Lastly, giving people more agency over the tools they operate with also renders the use of these tools more intentional (Derakhshan et al., 2024; Zhang et al., 2022). This works better than simply providing dashboard information on AI use, which, as the literature on social media use shows, may be appreciated (Zimmermann, 2021) but does not effectively help change behavior (Loid et al., 2020). Taking inspiration from this research, the advice would be to implement AI copiloting with levers (or educate users in pre-prompt options) via which they can tweak, for instance, the tone, formality, or complexity of AI formulations. All of these measures are to make clear to organizational members that communication ownership of any message lies with the human sender. Notably, if no measures to ensure human ownership are taken, language exchanges via a computer will likely lose their relational function, including any signals of interpersonal respect, no matter how good the content is.

Considerations Concerning the Learning Level of Interpersonal Communication

Given the underlying probabilistic characteristics of Large Language Models, the type of support that AI copiloting offers comes in the form of socialized, ritualized communication, which one may call generally polite or civil. Yet, we want to highlight that herein lies a danger of AI copiloting that organizational leaders need to tackle. Instead of only encouraging a generally more civil communication, the ultimate objective should be to foster “truly” respectful communication. Such communication entails appreciating a receiver's individuality in feelings, needs, and rights (Buss, 1999; Rudolph et al., 2021). To illustrate: An AI may advise men to pick up the bill when on a date with a woman because, on average, that is considered the polite thing to do. In the individual case, however, the woman in question may not want to be invited and may even feel that such behavior is not truly respectful. Thus, instead of blindly relying on a heuristic provided by AI copiloting, as good as it may be for the average case, the goal of workplace communication should be to consider the individual receiver's perspective (Argyris, 2003; Buss, 1999).

Qualifying our previous recommendation, employees are thus to be encouraged to not only assume content ownership but also to understand to what end they are to own their communication, i.e., they are to reflect on the attitude they want to bring to the conversation. This is even more true as some “AI-like” communication patterns may spill over into human face-to-face interactions. To this end, we suggest that leaders deliberately encourage double-loop rather than single-loop learning with regard to AI copiloting.

“Single-loop” or surface-level learning refers to the adjustment of actions based on feedback without questioning or modifying the underlying beliefs that led to the original actions (Argyris, 1977). When following this approach, people may change their communication by merely reproducing the politeness they learned from their AI copilot (Hohenstein & Jung, 2020). In contrast, “double-loop learning” represents a more thorough engagement with a topic (Argyris, 2003). This form of learning involves scrutinizing fundamental principles.

Against that background, our final recommendation for leaders is thus to provide encouragement and opportunities for double-loop learning (see the third panel of Figure 1 for an overview of suggestions). At the level of the individual employee, a starting point to signal such a learning attitude could be to add a disclaimer at the end of one's AI-supported emails that explicitly addresses the wish to communicate respectfully. This represents a clear invitation to correct the sender if disrespect is perceived, and the ensuing discussion can help the sender to communicate more respectfully. At the team level, organizations may want to institutionalize structured “meta-communication meetings,” i.e., interactions where team members talk about how they [want to] communicate with each other (cf. after-action reviews; Keiser & Arthur Jr, 2021). In such meetings, employees should learn about and discuss personal communication preferences. That is, employees may meta-communicate about how they otherwise communicate and, for example, come to an agreement that they prefer short and fact-oriented conversation and actually feel uncomfortable with interpersonal “chit-chat.” As a result of that discussion, they may (pre)prompt their AI copilot to keep future emails to the respective addressees more concise and to the point (cf. personalization of LLMs; Kirk et al., 2024). At the organizational level, we advise companies to prominently feature mastery of interpersonal communication as a key desired facet within the onboarding process, thereby reinforcing its importance in the company culture and encouraging continuous improvement in that domain.

Conclusion

With AI on the rise, humanity in general and leadership specifically are on the cusp of a critical transition (Van Quaquebeke & Gerpott, 2023). It is these transitory periods that need to be proactively managed because they represent sensitive periods that pave the way for later, more steady states (Lewin, 1947). With the rollout of powerful Large Language Models in office communication software, the question is not whether AI copiloting will be employed in organizational interpersonal communication but how (Sundar & Lee, 2022). Given that good and trusted interpersonal communication is key for almost any organizational process ranging from strategizing to operations (Cooren et al., 2011; Mintzberg, 1973), it seems high time for human leaders to step up to the plate and be more deliberate about the implementation of AI for workplace communication.

Footnotes

Acknowledgments

Special thanks to Roman Briker and Brooke Gazdag for their input on earlier versions of the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.